Automated Whitebox Fuzz Testing Patrice Godefroid Microsoft Research

Automated Whitebox Fuzz Testing
Patrice Godefroid (Microsoft Research), Michael Y. Levin (Microsoft Center for Software Excellence), David Molnar (UC Berkeley, visiting MSR)
Presented by Yuyan Bao

Acknowledgement
This presentation is extended and modified from:
• the presentation by David Molnar, "Automated Whitebox Fuzz Testing"
• the presentation by Corina Păsăreanu (Kestrel), "Symbolic Execution for Model Checking and Testing"

Agenda
• Fuzz Testing
• Symbolic Execution
• Dynamic Test Generation
• Generational Search
• SAGE Architecture
• Conclusion
• Contribution
• Weakness
• Improvement

Fuzz Testing
• An effective technique for finding security vulnerabilities in software
• Apply invalid, unexpected, or random data to the inputs of a program
• If the program fails (crashes, raises an access violation exception), record the defect

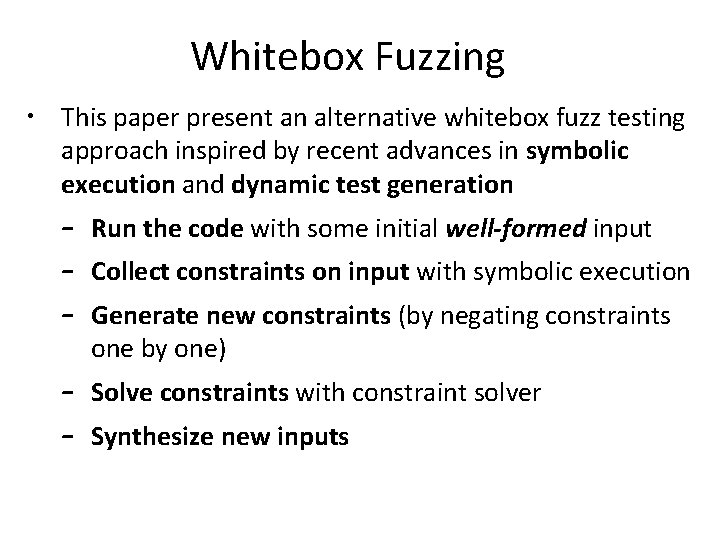

Whitebox Fuzzing
• This paper presents an alternative whitebox fuzz testing approach inspired by recent advances in symbolic execution and dynamic test generation:
  – Run the code with some initial well-formed input
  – Collect constraints on the input with symbolic execution
  – Generate new constraints (by negating the constraints one by one)
  – Solve the constraints with a constraint solver
  – Synthesize new inputs

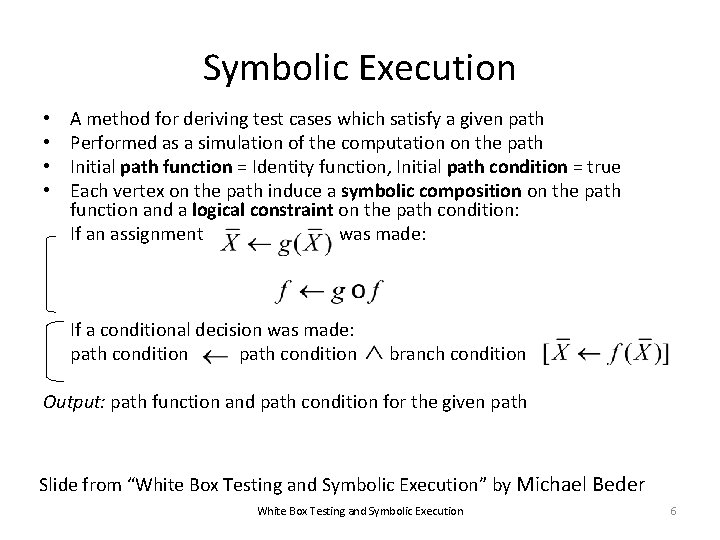

Symbolic Execution
• A method for deriving test cases that satisfy a given path
• Performed as a simulation of the computation along the path:
  – Initial path function = identity function; initial path condition = true
  – Each vertex on the path induces a symbolic composition on the path function and a logical constraint on the path condition:
    – If an assignment was made: the path function is composed with the assignment
    – If a conditional decision was made: path condition ← path condition ∧ branch condition
  – Output: the path function and path condition for the given path
Slide from "White Box Testing and Symbolic Execution" by Michael Beder
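The two update rules can be written out as below. The notation is a standard formulation of our own choosing (f is the path function from inputs to the current symbolic state, PC the path condition, v := e an assignment, c a branch condition) and is not necessarily the notation of the original slide.

```latex
\begin{aligned}
&\text{initially:} && f = \mathrm{id}, \quad PC = \mathit{true}\\
&\text{assignment } v := e: && f \leftarrow [\,v \mapsto e\,] \circ f\\
&\text{branch with condition } c: && PC \leftarrow PC \wedge (c \circ f)
\end{aligned}
```

Applied to the swap code in the next slide, the three assignments turn f into (X, Y) ↦ (x: Y, y: X), so the inner branch contributes (x - y > 0) ∘ f, that is Y - X > 0.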

Symbolic Execution
"Simulate" the code using symbolic values instead of program numeric data (PC = "path condition").
Code: int x, y; if (x > y) { x = x + y; y = x - y; x = x - y; if (x - y > 0) assert(false); }
Symbolic execution tree:
• x: X, y: Y; PC: true
  – false branch of x > y: x: X, y: Y; PC: X <= Y
  – true branch of x > y: x: X, y: Y; PC: X > Y
    – after x = x + y: x: X+Y, y: Y; PC: X > Y
    – after y = x - y: x: X+Y, y: X; PC: X > Y
    – after x = x - y: x: Y, y: X; PC: X > Y
      – true branch of x - y > 0: PC: X > Y ∧ Y - X > 0, which is FALSE! Not reachable
      – false branch of x - y > 0: x: Y, y: X; PC: X > Y ∧ Y - X <= 0

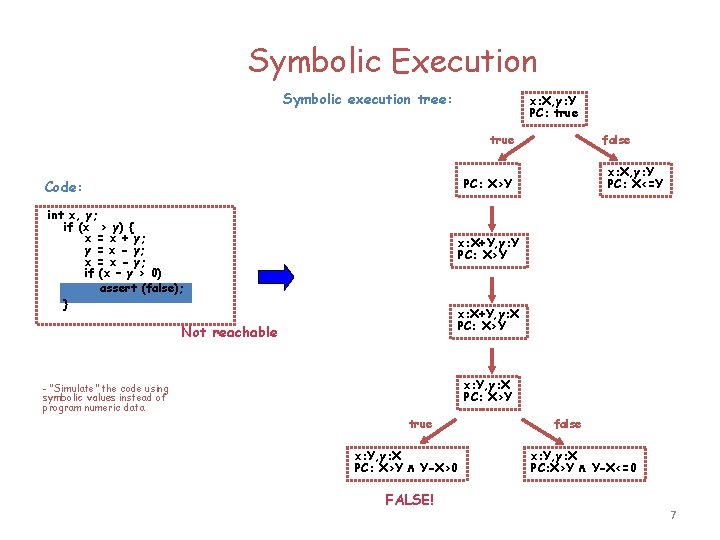

Dynamic Test Generation
• Execute the program starting with some initial inputs
• Perform dynamic symbolic execution to collect constraints on the inputs
• Use a constraint solver to infer variants of the previous inputs that steer the next executions


Dynamic Test Generation

    void top(char input[4]) {
        int cnt = 0;
        if (input[0] == 'b') cnt++;
        if (input[1] == 'a') cnt++;
        if (input[2] == 'd') cnt++;
        if (input[3] == '!') cnt++;
        if (cnt >= 3) crash();
    }

• input = "good"
• Running the program with random values for the 4 input bytes is unlikely to discover the error: only a tiny fraction of the 2^32 possible inputs reach crash().
• Traditional fuzz testing has difficulty generating input values that drive the program through all its possible execution paths.

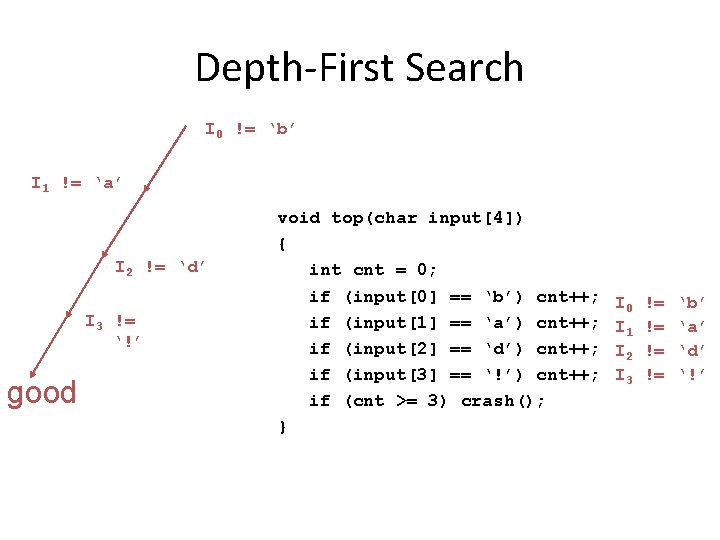

Depth-First Search
Running top() on the seed input "good" yields the path constraint I0 != 'b', I1 != 'a', I2 != 'd', I3 != '!' (I0 to I3 denote the four input bytes).


Depth-First Search
Negating the last constraint gives I0 != 'b', I1 != 'a', I2 != 'd', I3 == '!', which the solver satisfies with the new input "goo!".


Practical limitation
Backtracking, the search next negates I2 instead: I0 != 'b', I1 != 'a', I2 == 'd', I3 != '!', giving the new input "godd". Every time, only one constraint is expanded: low efficiency!

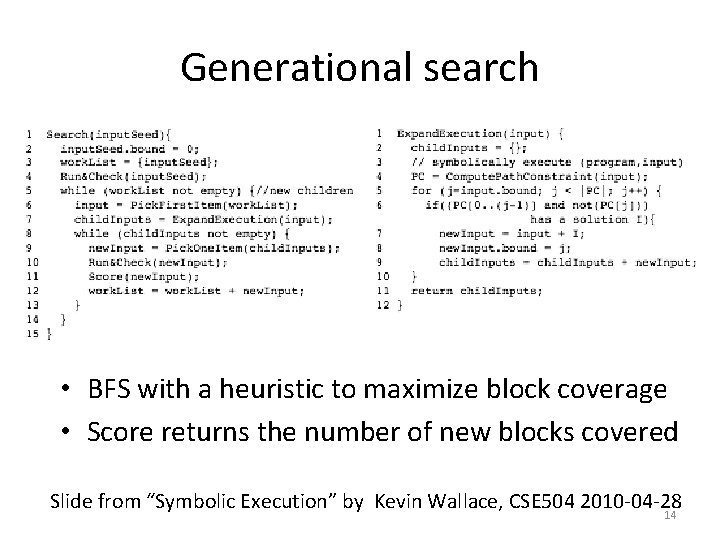

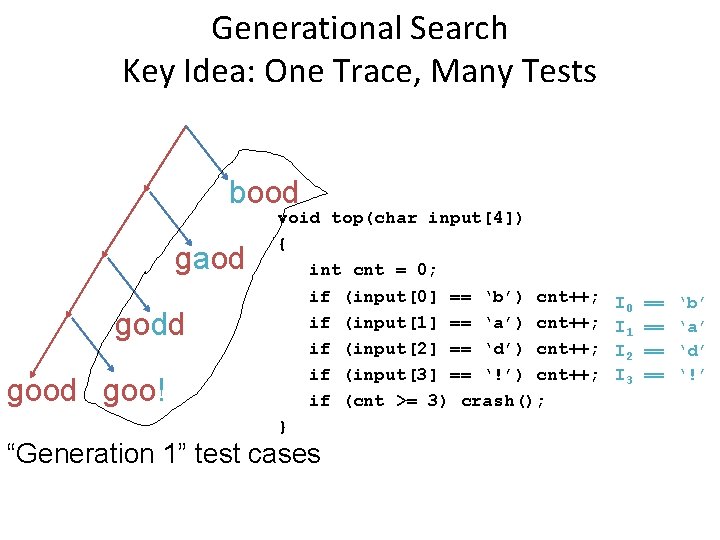

Generational Search
• BFS with a heuristic to maximize block coverage
• Score returns the number of new blocks covered
Slide from "Symbolic Execution" by Kevin Wallace, CSE 504, 2010-04-28

Generational Search
Key idea: one trace, many tests. From the single trace of "good" (I0 != 'b', I1 != 'a', I2 != 'd', I3 != '!'), negating each constraint in turn yields the "Generation 1" test cases bood, gaod, godd, and goo!.


The Generational Search Space
Starting from "good", the search space contains good, goo!, godd, god!, gaod, gao!, gadd, gad!, bood, boo!, bodd, bod!, baod, bao!, badd, and bad!.

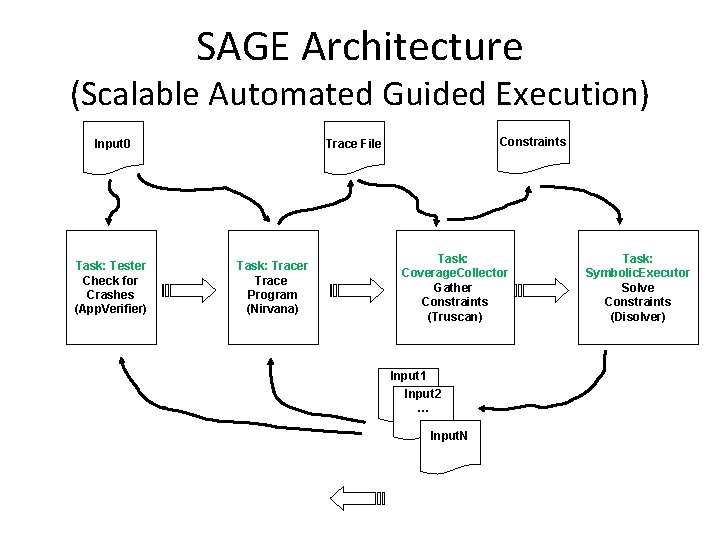

SAGE Architecture (Scalable Automated Guided Execution)
Input 0 → Task: Tester, Check for Crashes (AppVerifier) → Task: Tracer, Trace Program (Nirvana) → Trace File → Task: CoverageCollector, Gather Constraints (TruScan) → Constraints → Task: SymbolicExecutor, Solve Constraints (Disolver) → Input 1, Input 2, …, Input N → back to the Tester

Initial Experiences with SAGE
• Since the first MS internal release in April 2007: dozens of new security bugs found (most missed by blackbox fuzzers and static analysis)
• Apps: image processors, media players, file decoders, …
• Many of the bugs found were rated "security critical, severity 1, priority 1"
• Now used regularly by several teams as part of their QA process

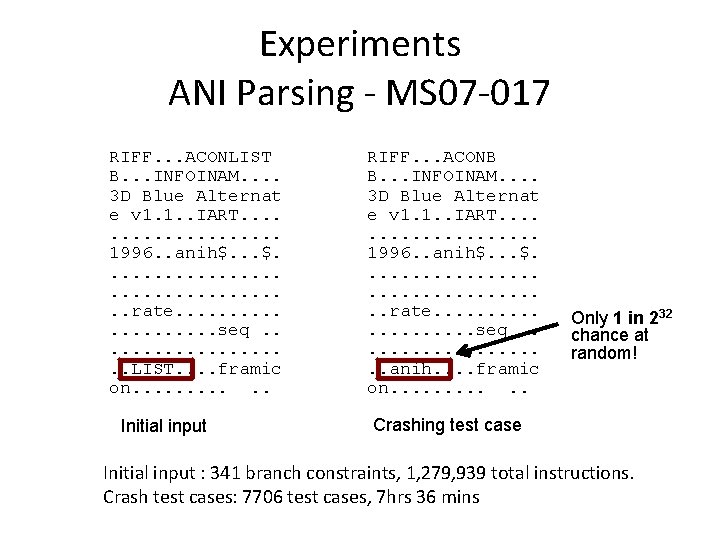

Experiments: ANI Parsing (MS07-017)
• Initial input: RIFF...ACONLIST B...INFOINAM..3D Blue Alternate v1.1..IART.....1996..anih$.....rate.....seq.....LIST..framicon...
• Crashing test case: RIFF...ACONB B...INFOINAM..3D Blue Alternate v1.1..IART.....1996..anih$.....rate.....seq.....anih..framicon...
• Only 1 in 2^32 chance at random!
• Initial input: 341 branch constraints, 1,279,939 total instructions
• Crashing test case: 7,706 test cases, 7 hrs 36 mins

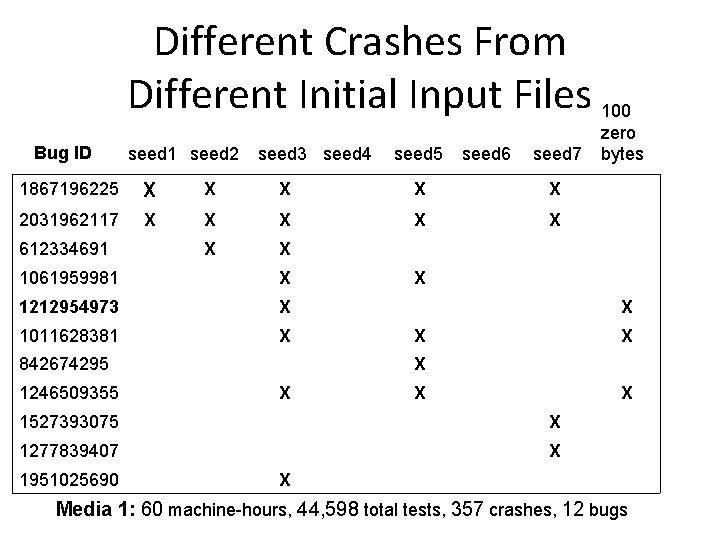

Table: Different Crashes From Different Initial Input Files
Columns: Bug ID, seed 1 to seed 7, and 100 zero bytes; an X marks each initial file from which the bug was found.
Bug IDs: 1867196225, 2031962117, 612334691, 1061959981, 1212954973, 1011628381, 842674295, 1246509355, 1527393075, 1277839407, 1951025690
Media 1: 60 machine-hours, 44,598 total tests, 357 crashes, 12 bugs

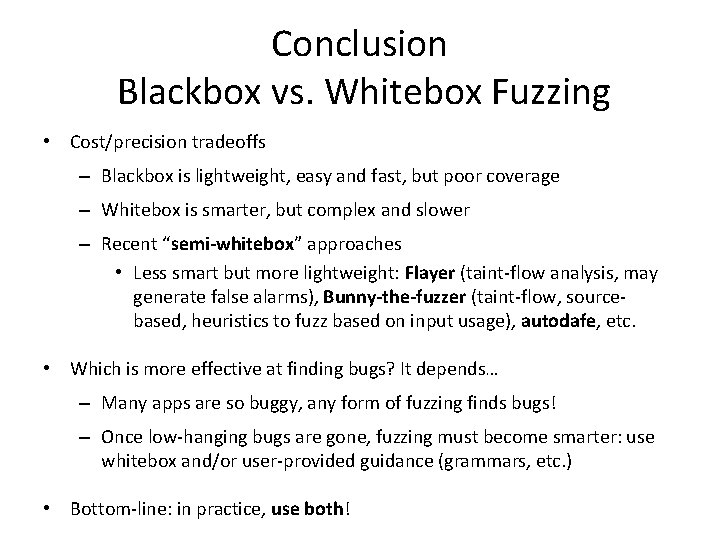

Conclusion: Blackbox vs. Whitebox Fuzzing
• Cost/precision tradeoffs
  – Blackbox is lightweight, easy, and fast, but has poor coverage
  – Whitebox is smarter, but complex and slower
  – Recent "semi-whitebox" approaches are less smart but more lightweight: Flayer (taint-flow analysis, may generate false alarms), Bunny-the-Fuzzer (taint-flow, source-based, heuristics to fuzz based on input usage), autodafe, etc.
• Which is more effective at finding bugs? It depends…
  – Many apps are so buggy that any form of fuzzing finds bugs!
  – Once the low-hanging bugs are gone, fuzzing must become smarter: use whitebox and/or user-provided guidance (grammars, etc.)
• Bottom line: in practice, use both!

Contribution
• A novel search algorithm (generational search) with a coverage-maximizing heuristic
• Symbolic execution of program traces at the x86 binary level
• Tests larger applications than previously done in dynamic test generation

Weakness
• Gathering initial inputs
• The class of inputs characterized by each symbolic execution is determined by the dependence of the program's control flow on its inputs
• Not a general solution
• Only for x86 Windows applications
• Only focuses on file-reading applications
• Nirvana simulates a given processor's architecture

Improvement
• Portability
• Translate collected traces into an intermediate language
• Reproduce bugs on different platforms without re-simulating the program
• A better way to find initial inputs efficiently

Thank you! Questions?
