Automated Scoring in NDSA Writing

Copyright © 2020 Cambium Assessment, Inc. All rights reserved.

Introduction of Presenters
Cambium Assessment, Inc.
• Sue Lottridge, Cambium Assessment Senior Director, Automated Scoring

Agenda
I. Questions on scores produced by the engine
II. Questions on how automated scoring works
III. Wrap-up Q&A

Automated Scoring of Writing Prompts

Questions
• Why did my student get this score?
  – It’s a great essay, and they got a zero!
  – Sally’s essay is better than Billy’s but Billy got a higher score!
  – I don’t think that this essay deserved that score!
• How does the automated scoring engine work?
• How should I instruct my students to get higher scores?

Answers
• Interpreting the scores
  – First, let’s understand what the scores mean.
• How does the automated essay scoring work?

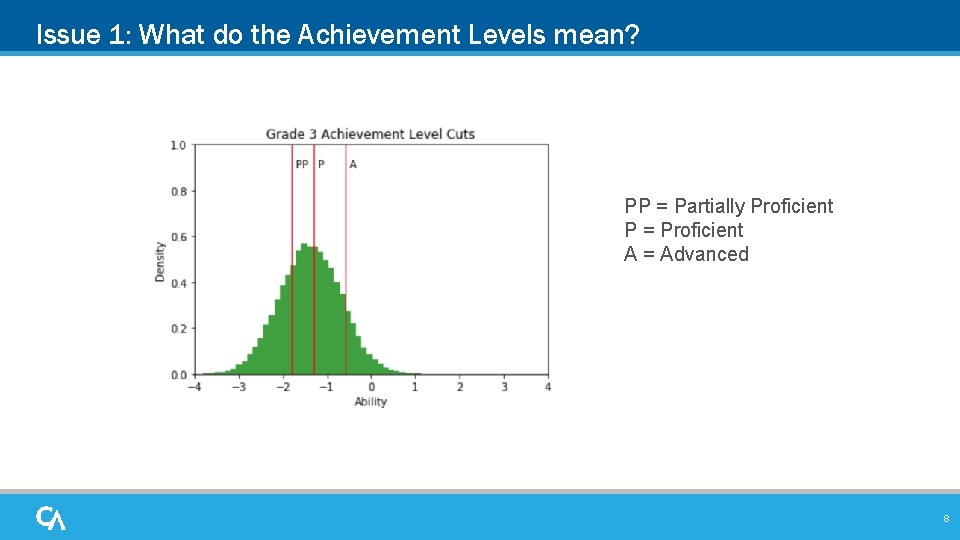

Interpreting Scores
• Issue 1: What do the Achievement Levels mean?
• Issue 2: Why are high scores on the writing prompts rare?

Issue 1: What do the Achievement Levels mean?
PP = Partially Proficient, P = Proficient, A = Advanced

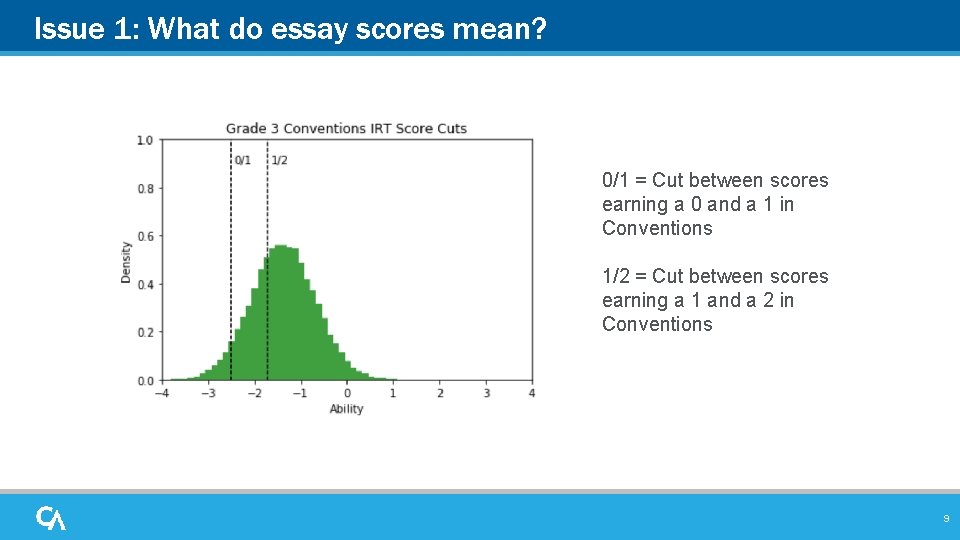

Issue 1: What do essay scores mean?
0/1 = Cut between scores earning a 0 and a 1 in Conventions
1/2 = Cut between scores earning a 1 and a 2 in Conventions

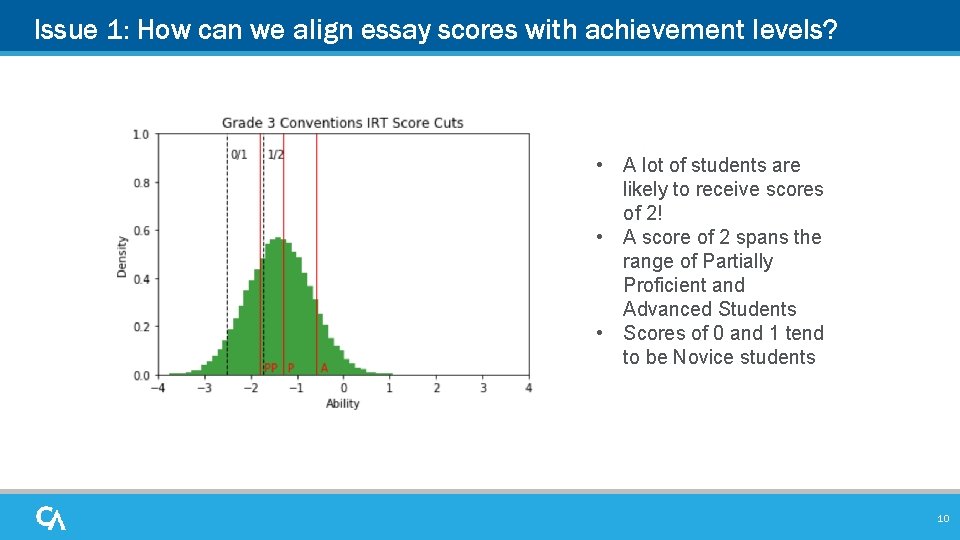

Issue 1: How can we align essay scores with achievement levels?
• A lot of students are likely to receive scores of 2!
• A score of 2 spans the range from Partially Proficient to Advanced students
• Scores of 0 and 1 tend to be Novice students

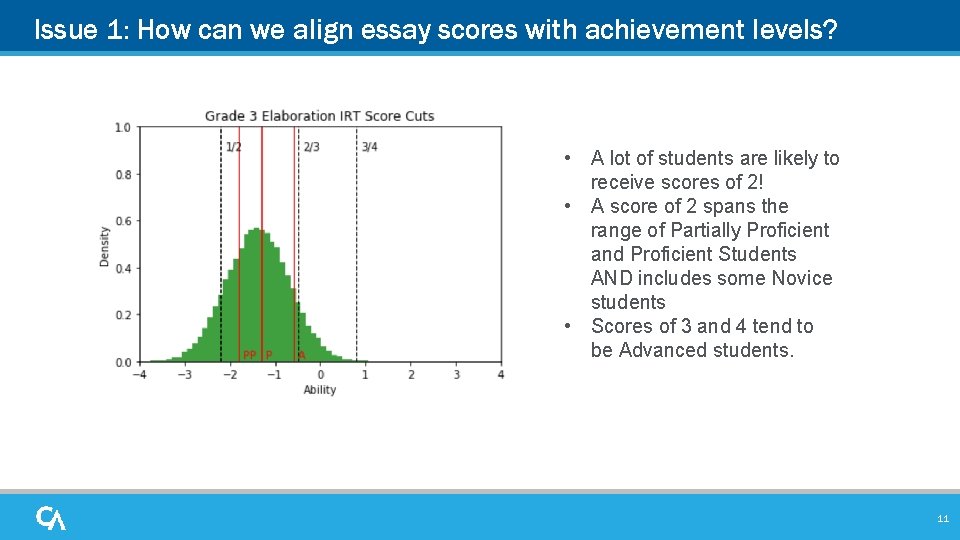

Issue 1: How can we align essay scores with achievement levels?
• A lot of students are likely to receive scores of 2!
• A score of 2 spans the range of Partially Proficient and Proficient students AND includes some Novice students
• Scores of 3 and 4 tend to be Advanced students.

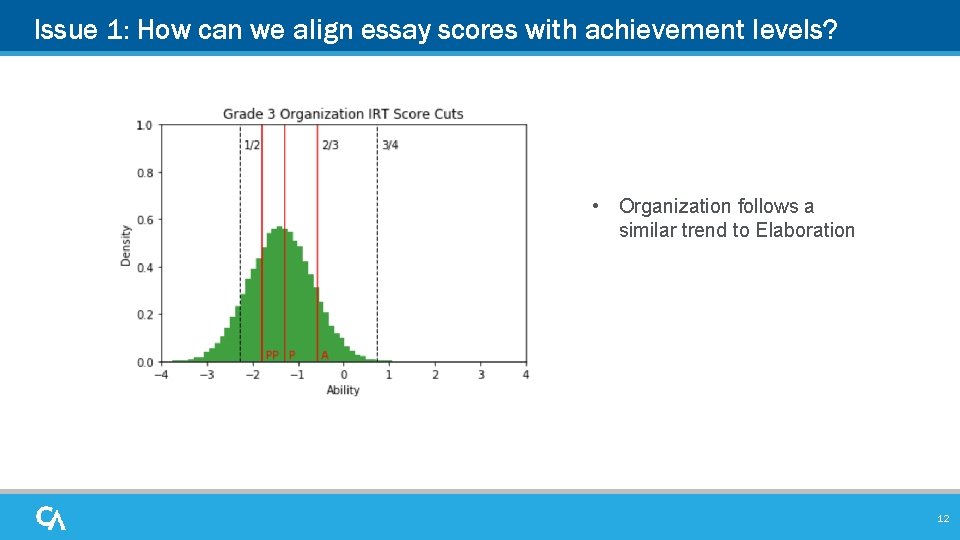

Issue 1: How can we align essay scores with achievement levels?
• Organization follows a similar trend to Elaboration

Some implications for Issue 1
• In grade 3,
  o An essay score of ‘2’ spans a broad range of student proficiency levels from Novice to Proficient and sometimes Advanced.
  o A lot of students are likely to fall in the ‘2’ category in grade 3.
• In general,
  o Organization and Elaboration scores of 4 are very rare because they are associated with students who are very high performers relative to even the Advanced threshold.
  o Organization and Elaboration scores of 3 tend to be associated with Advanced students.
• These trends can vary by grade.

Why does the engine rarely assign high scores?
Because humans rarely assign high scores…

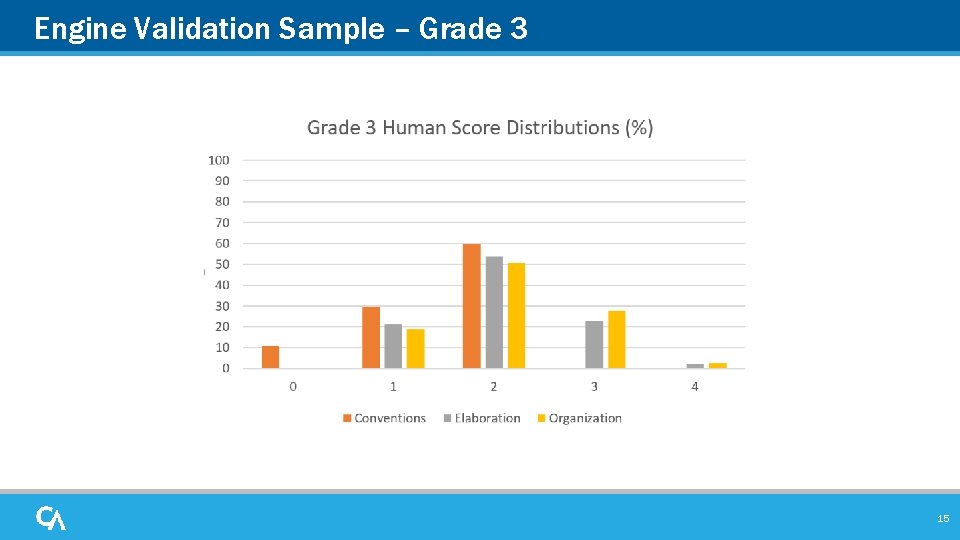

Engine Validation Sample – Grade 3

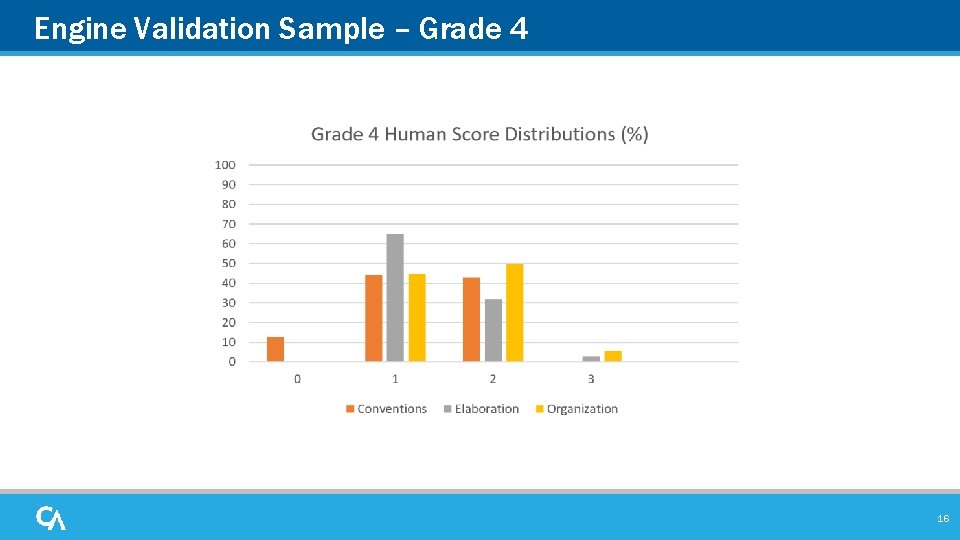

Engine Validation Sample – Grade 4

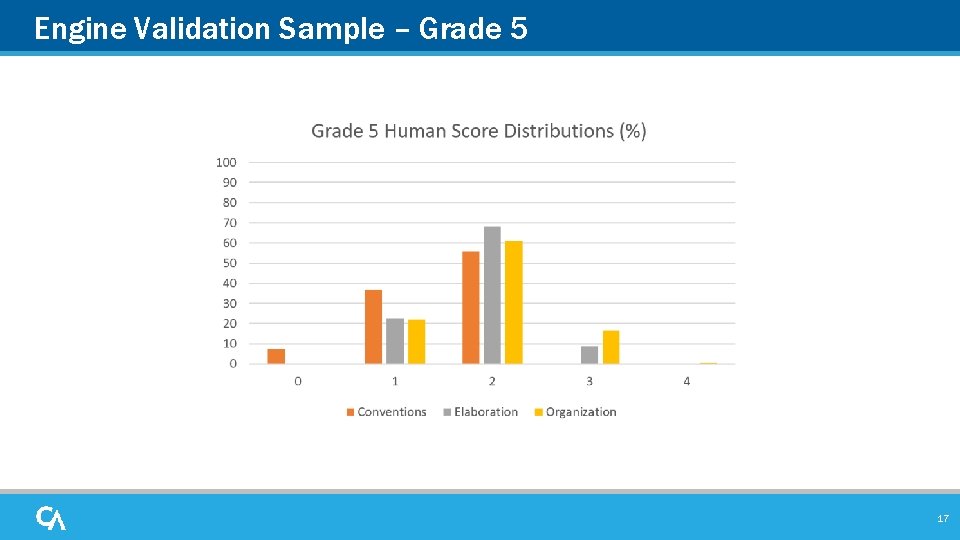

Engine Validation Sample – Grade 5

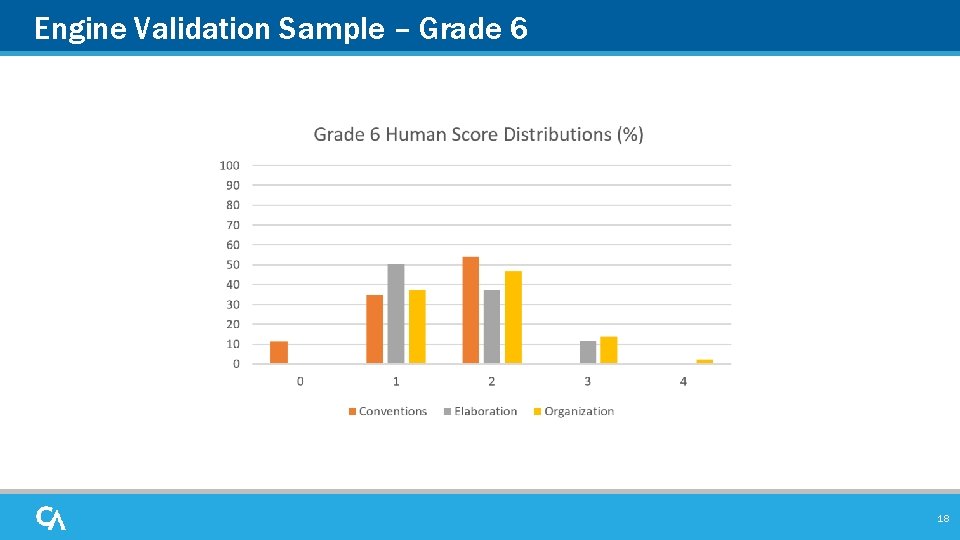

Engine Validation Sample – Grade 6

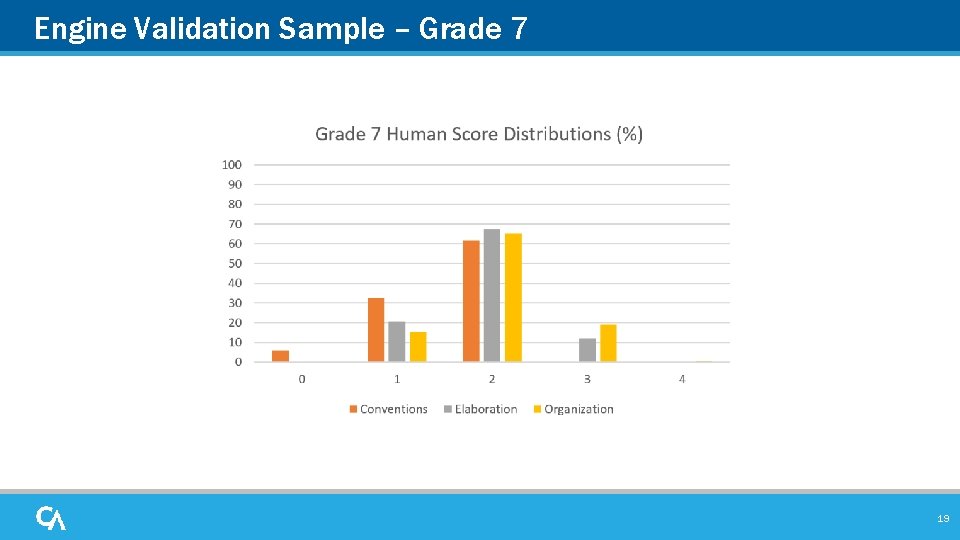

Engine Validation Sample – Grade 7

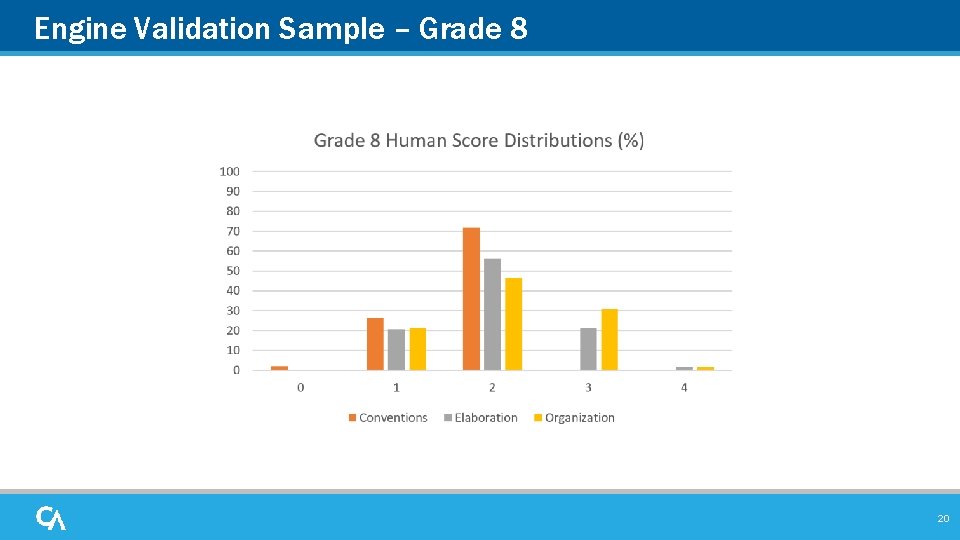

Engine Validation Sample – Grade 8

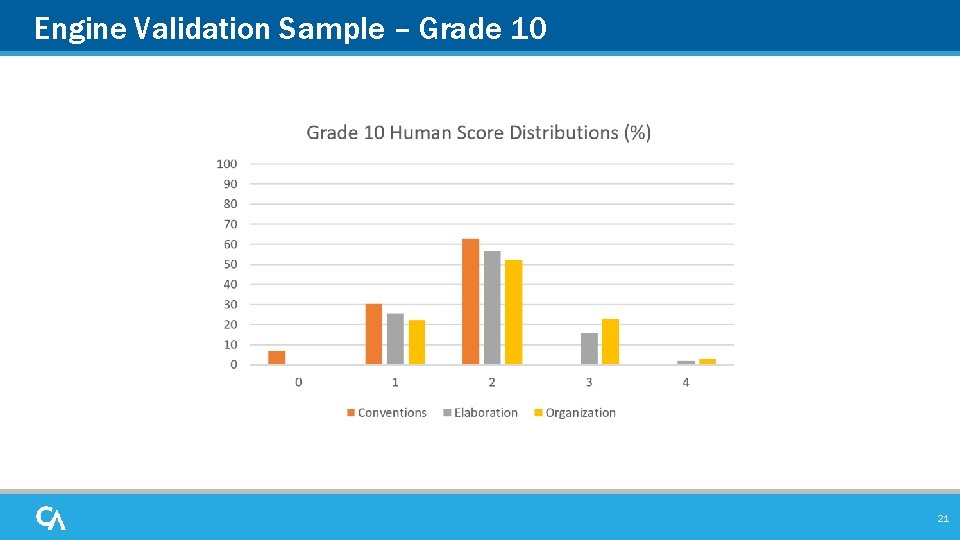

Engine Validation Sample – Grade 10

Implications
• Very high scores are rare because human scorers assign them rarely
  – We do see them assigned by the engine to students, but they are rare
• Not getting the highest score has a very limited impact on students’ final scores
  – The statistical models recognize that getting a 4 on a dimension means super-extraordinary performance
  – Most student writing is not super-extraordinary (yet).
  – Getting a 4 on a dimension corresponds to a very high scale score

How do the distributions look now?
• Final essay scores are a combination of human and engine scores
  – First 500 are routed for human scoring with adjudication.
  – 15% are routed for human scoring.
  – Non Specific codes are routed for human scoring.
  – Approximately 20% are scored by humans and 80% by the machine.

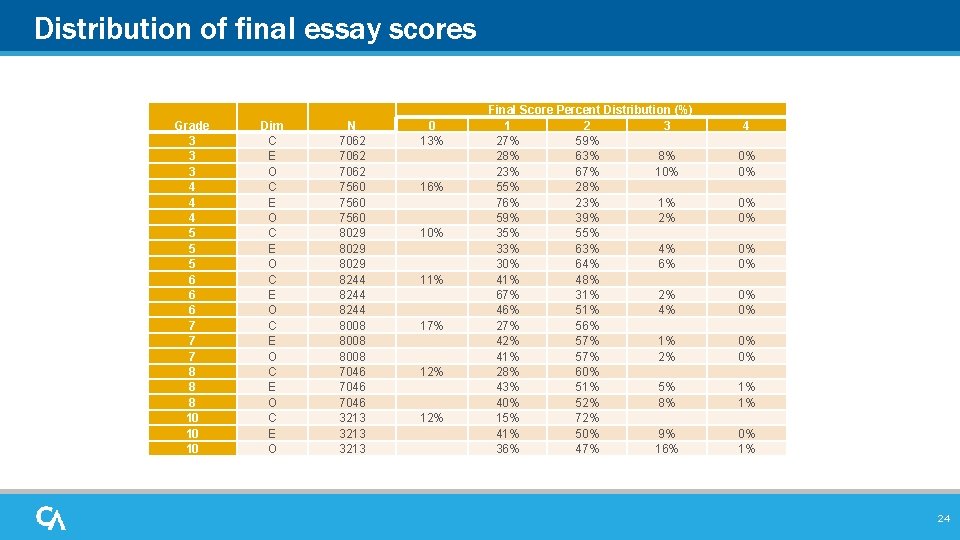

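Distribution of final essay scores

Final Score Percent Distribution (%)

| Grade | Dim | N | 0 | 1 | 2 | 3 |
| --- | --- | --- | --- | --- | --- | --- |
| 3 | C | 7062 | 13% | 27% | 59% | |
| 3 | E | | | 28% | 63% | 8% |
| 3 | O | | | 23% | 67% | 10% |
| 4 | C | 7560 | 16% | 55% | 28% | |
| 4 | E | | | 76% | 23% | 1% |
| 4 | O | | | 59% | 39% | 2% |
| 5 | C | 8029 | 10% | 35% | 55% | |
| 5 | E | | | 33% | 63% | 4% |
| 5 | O | | | 30% | 64% | 6% |
| 6 | C | 8244 | 11% | 41% | 48% | |
| 6 | E | | | 67% | 31% | 2% |
| 6 | O | | | 46% | 51% | 4% |
| 7 | C | 8008 | 17% | 27% | 56% | |
| 7 | E | | | 42% | 57% | 1% |
| 7 | O | | | 41% | 57% | 2% |
| 8 | C | 7046 | 12% | 28% | 60% | |
| 8 | E | | | 43% | 51% | 5% |
| 8 | O | | | 40% | 52% | 8% |
| 10 | C | 3213 | | 15% | 72% | |
| 10 | E | | | 41% | 50% | 9% |
| 10 | O | | | 36% | 47% | 16% |

Score 4 percentages, in slide order (their rows are not recoverable from the slide text): 0%, 0%, 0%, 0%, 1%, 1%, 0%, 1%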

Automated Scoring Engine

Automated Essay Scoring
• Specialized software designed to predict how trained raters would assign scores to essays.
• The engine is trained on specific questions using writing samples and scores from trained raters.
• After initial training is completed, the engine is run through an extensive quality control process by professional psychometricians.
• Trained human raters are considered to be the ‘gold standard’.

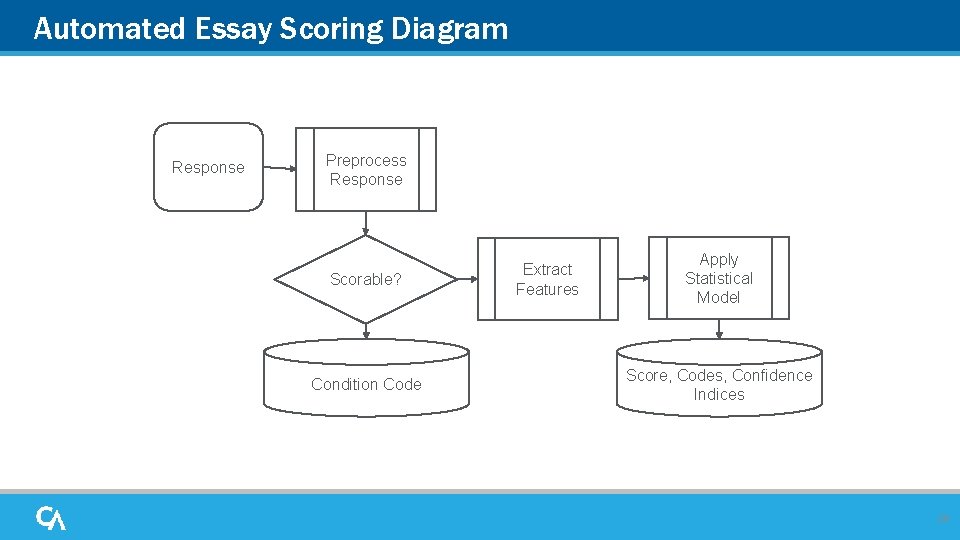

Automated Essay Scoring
• Preprocessing: The response text is prepared for the scoring engine. During this phase, blank responses are flagged, as are responses that have too little original text to be scored by humans or the engine.
• Feature Extraction: The processed response is analyzed using functions built to reflect common evaluations of writing quality. Features include: grammar and spelling errors, elements of sentence variety and complexity, elements of voice and word choice, and discourse or organizational elements, in addition to the words and phrases used.
• Score Modelling: The values from the feature extraction phase are combined with prediction weights to produce a score and a confidence level.
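The three phases above can be sketched in Python as a minimal, purely illustrative pipeline. The feature set, the `WEIGHTS` and `INTERCEPT` values, and the `MIN_WORDS` cutoff are hypothetical and are not taken from the engine, which uses many more features and a fitted statistical model. The confidence level is left out of this sketch.

```python
import re
from dataclasses import dataclass
from typing import Optional

MIN_WORDS = 5  # hypothetical cutoff for "too little text"; the engine's value is not stated

# Hypothetical prediction weights; a real model estimates these from human-scored essays.
INTERCEPT = 1.0
WEIGHTS = {
    "word_count": 0.01,
    "mean_sentence_length": 0.02,
    "type_token_ratio": 1.0,
}


@dataclass
class ScoringResult:
    score: Optional[int]           # None when a condition code is assigned
    condition_code: Optional[str]  # None when the response is scored


def preprocess(raw: str) -> str:
    """Phase 1: collapse runs of whitespace so later phases see clean text."""
    return " ".join(raw.split())


def extract_features(text: str) -> dict:
    """Phase 2: compute a few toy stand-ins for writing-quality features."""
    words = re.findall(r"[a-z']+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return {
        "word_count": len(words),
        "mean_sentence_length": len(words) / max(len(sentences), 1),
        "type_token_ratio": len(set(words)) / max(len(words), 1),
    }


def score_response(raw: str, min_score: int = 1, max_score: int = 4) -> ScoringResult:
    """Phase 3: combine feature values with weights, then clamp to the rubric range."""
    text = preprocess(raw)
    if not text:
        return ScoringResult(None, "No Response")
    features = extract_features(text)
    if features["word_count"] < MIN_WORDS:
        return ScoringResult(None, "Not Enough Data")
    raw_score = INTERCEPT + sum(WEIGHTS[name] * value for name, value in features.items())
    return ScoringResult(min(max(round(raw_score), min_score), max_score), None)
```

For example, `score_response("   ")` returns a result whose `condition_code` is `"No Response"`, while a response of a few sentences returns an integer score within the rubric range.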

Automated Essay Scoring Diagram
Response -> Preprocess Response -> Scorable?
• Not scorable -> Condition Code
• Scorable -> Extract Features -> Apply Statistical Model -> Score, Codes, Confidence Indices

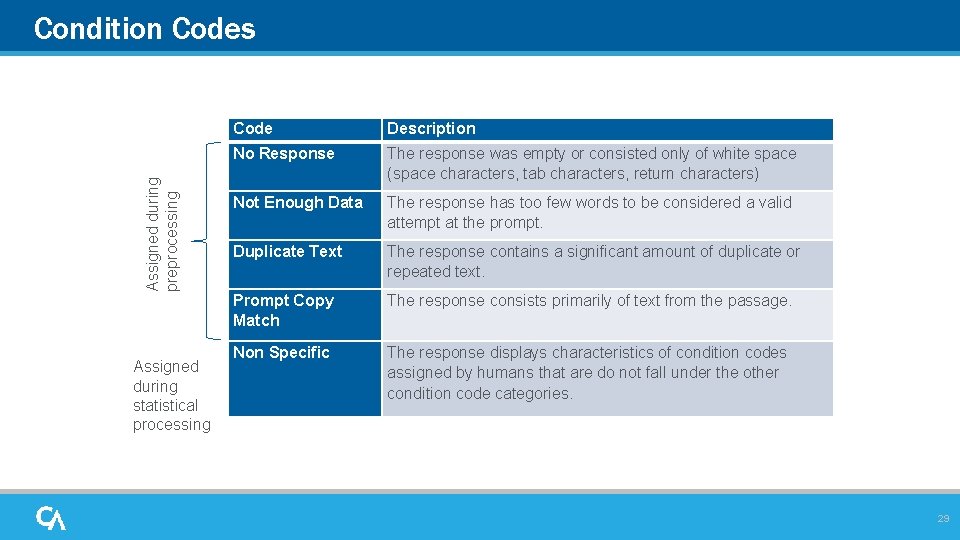

Condition Codes (assigned during preprocessing or during statistical processing)

| Code | Description |
| --- | --- |
| No Response | The response was empty or consisted only of white space (space characters, tab characters, return characters). |
| Not Enough Data | The response has too few words to be considered a valid attempt at the prompt. |
| Duplicate Text | The response contains a significant amount of duplicate or repeated text. |
| Prompt Copy Match | The response consists primarily of text from the passage. |
| Non Specific | The response displays characteristics of condition codes assigned by humans that do not fall under the other condition code categories. |
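The rule-like codes in this table could be checked along the following lines. This is a sketch under stated assumptions, not the engine's logic: the function name, the use of word trigrams to measure repetition and copying, and every threshold (`min_words`, `max_duplicate_share`, `max_copy_share`) are hypothetical. The Non Specific code is not covered here because it comes from a statistical model rather than a fixed rule.

```python
def trigrams(words):
    """Return every run of three consecutive words as a tuple."""
    return [tuple(words[i:i + 3]) for i in range(len(words) - 2)]


def preprocessing_condition_code(response, passage, min_words=5,
                                 max_duplicate_share=0.5, max_copy_share=0.8):
    """Return a condition code string, or None if the response looks scorable.

    All thresholds are illustrative assumptions.
    """
    # No Response: empty or whitespace only.
    if not response.strip():
        return "No Response"

    # Not Enough Data: too few words (at least 3 are needed to form a trigram).
    words = response.lower().split()
    if len(words) < max(min_words, 3):
        return "Not Enough Data"

    # Duplicate Text: a large share of trigrams repeat earlier trigrams.
    grams = trigrams(words)
    duplicate_share = 1 - len(set(grams)) / len(grams)
    if duplicate_share > max_duplicate_share:
        return "Duplicate Text"

    # Prompt Copy Match: most trigrams also occur in the passage.
    passage_grams = set(trigrams(passage.lower().split()))
    copy_share = sum(g in passage_grams for g in grams) / len(grams)
    if copy_share > max_copy_share:
        return "Prompt Copy Match"

    return None
```

The checks run from the cheapest to the most expensive, so a blank response never reaches the trigram comparisons.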

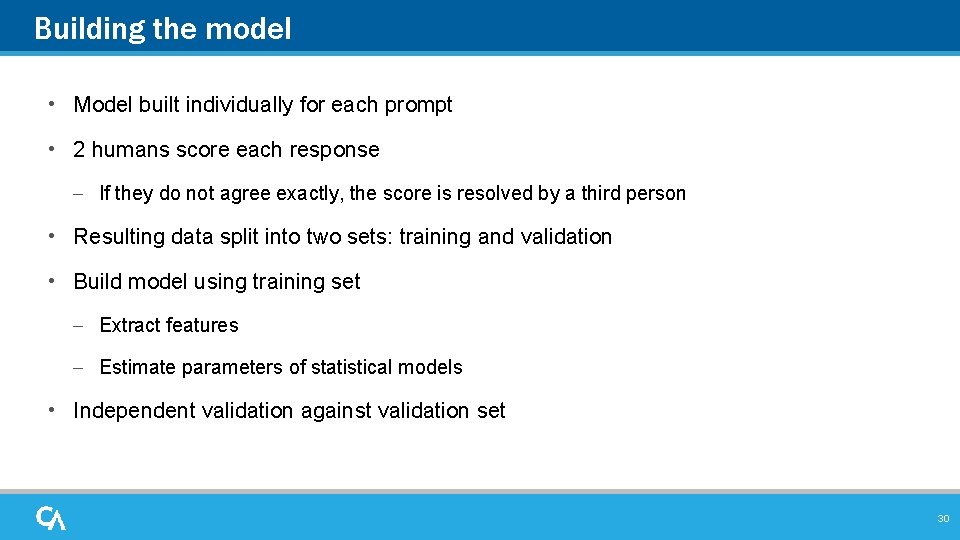

Building the model
• Model built individually for each prompt
• 2 humans score each response
  – If they do not agree exactly, the score is resolved by a third person
• Resulting data split into two sets: training and validation
• Build model using training set
  – Extract features
  – Estimate parameters of statistical models
• Independent validation against validation set
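The data preparation steps above (double scoring with third-rater resolution, then a training/validation split) can be sketched as follows. The function names and the 30% validation share are hypothetical, and treating the third rater's score as final is an assumption about how "resolved" is applied.

```python
import random


def resolve_score(rater1, rater2, rater3=None):
    """Return the score of record for one response.

    Assumption: when the first two raters disagree, the third rater's
    score is taken as final.
    """
    if rater1 == rater2:
        return rater1
    if rater3 is None:
        raise ValueError("raters disagree and no third score was supplied")
    return rater3


def split_train_validation(records, validation_share=0.3, seed=0):
    """Shuffle a copy of the records and split it into (training, validation).

    validation_share is a hypothetical value; the deck does not state the split.
    """
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_validation = int(len(shuffled) * validation_share)
    return shuffled[n_validation:], shuffled[:n_validation]
```

Holding out the validation set before any features are extracted or parameters estimated keeps the later validation independent of the model fit.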

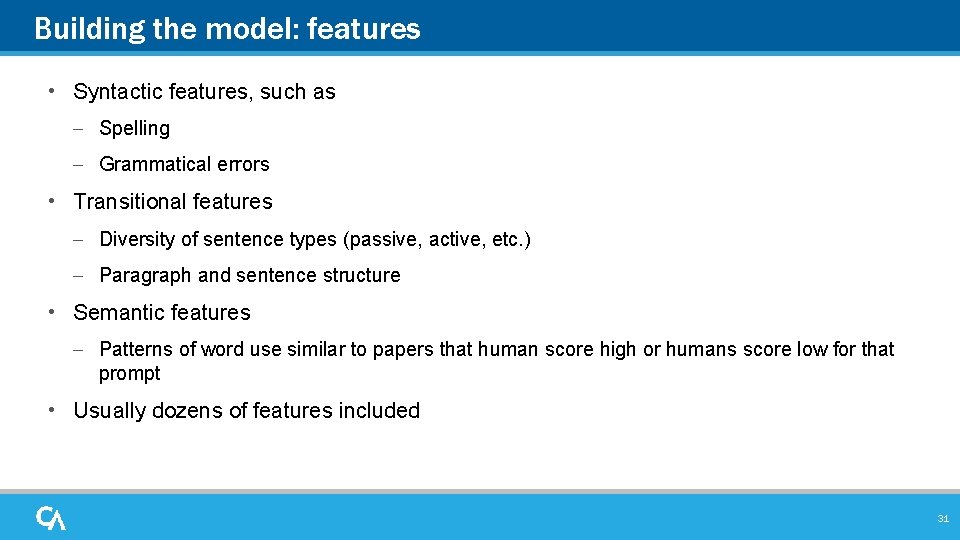

Building the model: features
• Syntactic features, such as
  – Spelling
  – Grammatical errors
• Transitional features
  – Diversity of sentence types (passive, active, etc.)
  – Paragraph and sentence structure
• Semantic features
  – Patterns of word use similar to papers that humans score high or low for that prompt
• Usually dozens of features included
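One way to picture a semantic feature of this kind is to compare an essay's word counts with the pooled word counts of high-scored and low-scored papers for the same prompt. The sketch below uses cosine similarity over raw word counts; that choice, and the function names, are assumptions made for illustration and are not the engine's actual method.

```python
import math
from collections import Counter


def word_counts(text):
    """Count lowercase whitespace-separated words."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_feature(essay, high_scored_essays, low_scored_essays):
    """Positive when the essay's word use looks more like high-scored papers."""
    essay_counts = word_counts(essay)
    high = sum((word_counts(e) for e in high_scored_essays), Counter())
    low = sum((word_counts(e) for e in low_scored_essays), Counter())
    return cosine(essay_counts, high) - cosine(essay_counts, low)
```

A value like this would be one input among the dozens of features mentioned above, not a score on its own.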

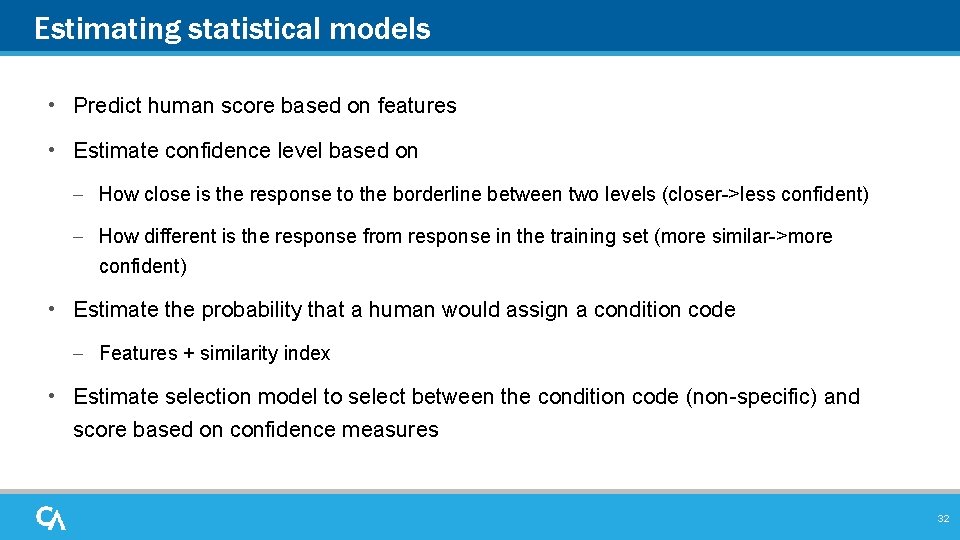

Estimating statistical models
• Predict human score based on features
• Estimate confidence level based on
  – How close is the response to the borderline between two levels (closer->less confident)
  – How different is the response from responses in the training set (more similar->more confident)
• Estimate the probability that a human would assign a condition code
  – Features + similarity index
• Estimate selection model to select between the condition code (non-specific) and score based on confidence measures
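The two confidence ingredients and the selection step can be illustrated with a toy calculation. Everything here is an assumption for the sake of the example: borderlines are placed at half-points between adjacent score levels, the two ingredients are multiplied together, and the selection rule simply compares the condition-code probability with the score confidence. The engine's actual models are not described at this level of detail.

```python
def boundary_confidence(raw_score, min_score=1, max_score=4):
    """1.0 far from any borderline, 0.0 exactly on one.

    Assumption: borderlines sit halfway between adjacent score levels.
    """
    borderlines = [k + 0.5 for k in range(min_score, max_score)]
    distance = min(abs(raw_score - b) for b in borderlines)
    return min(distance / 0.5, 1.0)


def score_confidence(raw_score, similarity, min_score=1, max_score=4):
    """Combine closeness-to-borderline with similarity to the training set.

    similarity is assumed to be a number in [0, 1]; multiplying the two
    terms is an illustrative choice.
    """
    return boundary_confidence(raw_score, min_score, max_score) * similarity


def select_output(raw_score, similarity, p_condition_code, min_score=1, max_score=4):
    """Return ("Non Specific" or an integer score, confidence).

    Hypothetical selection rule: prefer the condition code when its
    estimated probability exceeds the score confidence.
    """
    confidence = score_confidence(raw_score, similarity, min_score, max_score)
    if p_condition_code > confidence:
        return "Non Specific", confidence
    score = min(max(round(raw_score), min_score), max_score)
    return score, confidence
```

With these assumptions, a predicted value of 3.0 on a response that closely resembles the training set is scored confidently, while a predicted value of 2.45 sits next to the 2/3 borderline and receives low confidence.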

Score Agreement
• The process predicts human judgment. Humans do not always agree with each other when applying rubrics.
• Humans tend to agree with each other 60-70% of the time on scores and 80-95% of the time on condition codes.
• Humans (and the engine) are almost never off by more than one point (about 1% of the time).
• As part of the engine training process, the human-to-engine agreement must be similar to the human-to-human agreement.
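The agreement statistics used in this part of the deck, exact agreement and quadratic weighted kappa (QWK), can be computed from two lists of scores as sketched below. The function names are illustrative; the QWK formula is the standard one, with disagreement weights of (i - j)² / (k - 1)² for k score levels.

```python
def exact_agreement(scores_a, scores_b):
    """Share of responses on which the two raters gave the same score."""
    return sum(a == b for a, b in zip(scores_a, scores_b)) / len(scores_a)


def adjacent_agreement(scores_a, scores_b):
    """Share of responses on which the two raters differ by at most one point."""
    return sum(abs(a - b) <= 1 for a, b in zip(scores_a, scores_b)) / len(scores_a)


def quadratic_weighted_kappa(scores_a, scores_b, min_score, max_score):
    """QWK: 1 minus the ratio of weighted observed to weighted chance disagreement."""
    k = max_score - min_score + 1
    n = len(scores_a)

    # Observed confusion matrix between the two raters.
    observed = [[0] * k for _ in range(k)]
    for a, b in zip(scores_a, scores_b):
        observed[a - min_score][b - min_score] += 1

    # Marginal score counts for each rater.
    hist_a = [sum(row) for row in observed]
    hist_b = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    numerator = denominator = 0.0
    for i in range(k):
        for j in range(k):
            weight = (i - j) ** 2 / (k - 1) ** 2
            numerator += weight * observed[i][j]
            denominator += weight * hist_a[i] * hist_b[j] / n
    if denominator == 0:
        raise ValueError("QWK is undefined when both raters use a single score level")
    return 1.0 - numerator / denominator
```

The same functions apply whether the second list comes from a second human rater (H1-H2) or from the engine (HS-AS), which is what makes the two rows directly comparable.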

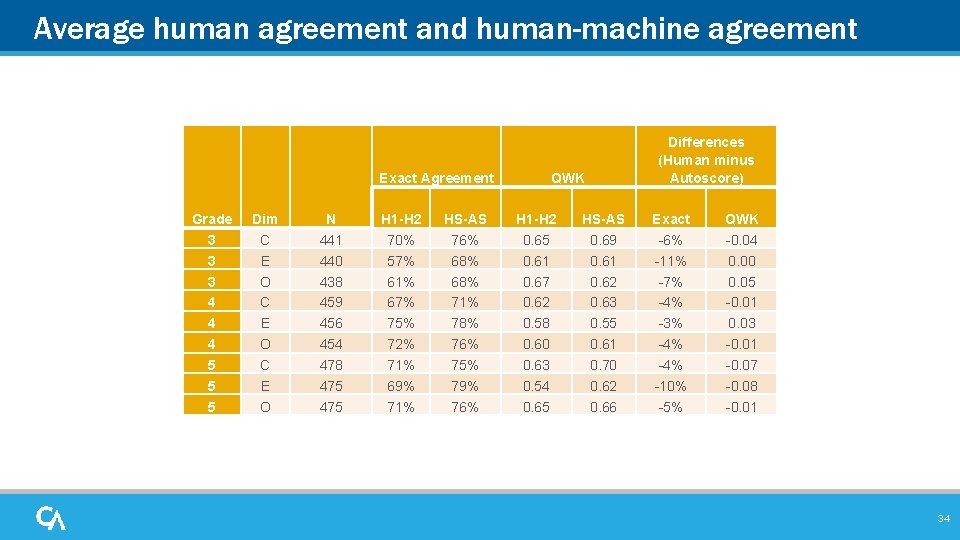

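Average human agreement and human-machine agreement

Differences are Human minus Autoscore.

| Grade | Dim | N | Exact H1-H2 | Exact HS-AS | Exact difference | QWK H1-H2 | QWK HS-AS | QWK difference |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 3 | C | 441 | 70% | 76% | -6% | 0.65 | 0.69 | -0.04 |
| 3 | E | 440 | 57% | 68% | -11% | 0.61 | 0.61 | 0.00 |
| 3 | O | 438 | 61% | | -7% | 0.67 | 0.62 | 0.05 |
| 4 | C | 459 | 67% | 71% | -4% | 0.62 | 0.63 | -0.01 |
| 4 | E | 456 | 75% | 78% | -3% | 0.58 | 0.55 | 0.03 |
| 4 | O | 454 | 72% | 76% | -4% | 0.60 | 0.61 | -0.01 |
| 5 | C | 478 | 71% | 75% | | 0.63 | 0.70 | -0.07 |
| 5 | E | | 69% | 79% | -10% | 0.54 | 0.62 | -0.08 |
| 5 | O | 475 | 71% | 76% | -5% | 0.65 | 0.66 | -0.01 |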

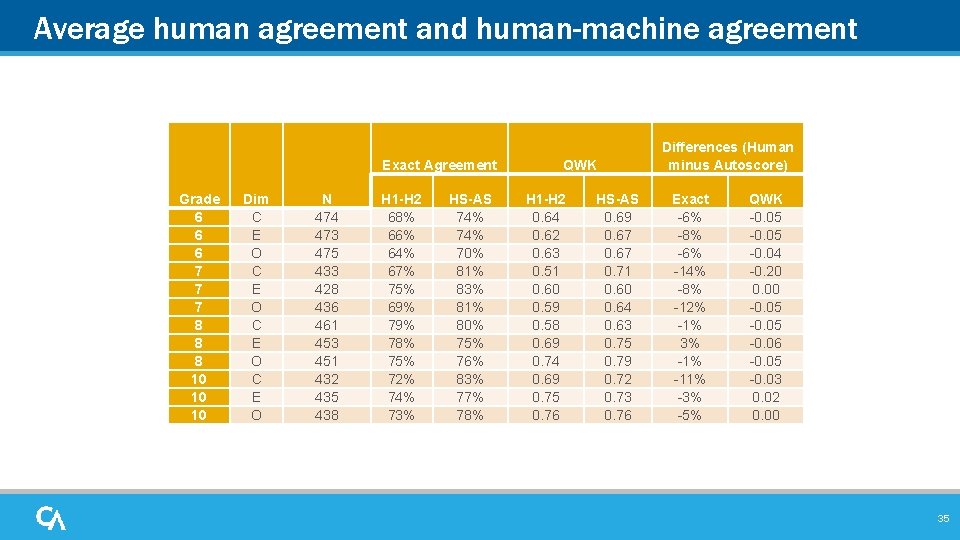

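Average human agreement and human-machine agreement

Differences are Human minus Autoscore.

| Grade | Dim | N | Exact H1-H2 | Exact HS-AS | Exact difference | QWK H1-H2 | QWK HS-AS | QWK difference |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 6 | C | 474 | 68% | 74% | -6% | 0.64 | 0.69 | -0.05 |
| 6 | E | 473 | 66% | | -8% | 0.62 | | |
| 6 | O | 475 | 64% | 70% | -6% | 0.63 | 0.67 | -0.04 |
| 7 | C | 433 | 67% | 81% | -14% | 0.51 | 0.71 | -0.20 |
| 7 | E | 428 | 75% | 83% | -8% | 0.60 | 0.60 | 0.00 |
| 7 | O | 436 | 69% | 81% | -12% | 0.59 | 0.64 | -0.05 |
| 8 | C | 461 | | 80% | -1% | 0.58 | 0.63 | |
| 8 | E | 453 | 78% | 75% | 3% | 0.69 | 0.75 | -0.06 |
| 8 | O | 451 | 75% | 76% | | 0.74 | 0.79 | -0.05 |
| 10 | C | 432 | 72% | 83% | -11% | 0.69 | 0.72 | -0.03 |
| 10 | E | 435 | 74% | 77% | -3% | 0.75 | 0.73 | 0.02 |
| 10 | O | 438 | 73% | 78% | -5% | 0.76 | 0.76 | 0.00 |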

Human Scoring
• The engine is tuned specifically to model the human scores on the writing prompts appearing in the summative assessment.
• The responses that the engine deems it cannot score with confidence are routed for expert human scoring.
• The responses receiving the “Non Specific” code are routed for human scoring.
• Approximately 15% of the responses will be routed for human scoring.
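The routing rules on this slide, together with the earlier note that the first 500 responses go to human scoring, can be written as a small decision function. This is a sketch only: the function name, the `confidence_threshold` value, and the zero-based `response_index` are hypothetical, and in practice a threshold would be tuned so that roughly the stated share of responses is routed.

```python
def route(response_index, condition_code, confidence,
          confidence_threshold=0.3, initial_human_count=500):
    """Return "human" or "engine" for one response.

    response_index: zero-based arrival order of the response (assumption).
    confidence_threshold: hypothetical cutoff below which the engine's
    score is not trusted.
    """
    if response_index < initial_human_count:
        return "human"  # the first responses are human scored
    if condition_code == "Non Specific":
        return "human"  # Non Specific codes are reviewed by humans
    if confidence < confidence_threshold:
        return "human"  # the engine cannot score with confidence
    return "engine"
```

For instance, `route(10, None, 0.9)` returns `"human"` because the response is among the first 500, while `route(5000, None, 0.9)` returns `"engine"`.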

Q and A
