Auditory Perception
Rob van der Willigen, http://~robvdw/cnpa 04/coll 1/Aud.Perc_2007_P 8.ppt

Today’s goal: understanding the problem of Auditory Scene Analysis (ASA):
- Higher-level principles of organization
- Complex waveform analysis
- Neural Activity Pattern (NAP) analysis

OBJECTS?

Psychoacoustics The Problem of Auditory Scene Analysis (ASA) “Conversion of auditory sensory input into an adequate representation of reality.” ASA allows an organism to extract information from, and react appropriately to, complex sounds in the environment. “Only by being aware of how sound is created and shaped in the world can we know how to use it to derive the properties of the sound-producing events around us” Albert S. Bregman (Auditory scene analysis, 1999; p. 1)

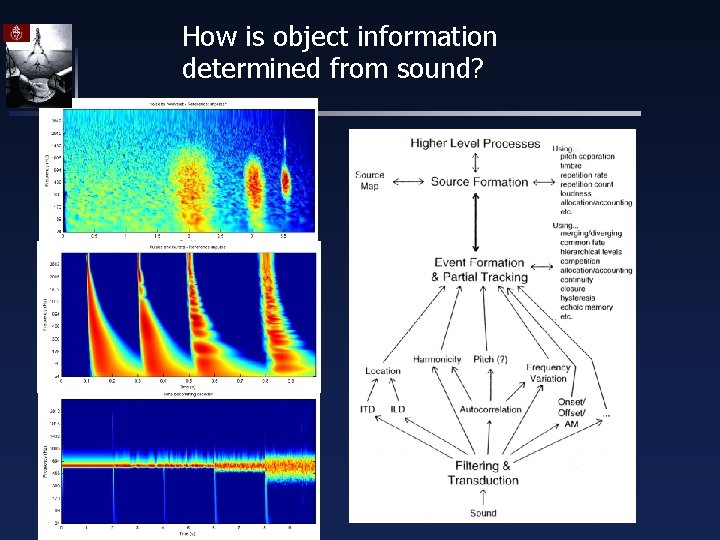

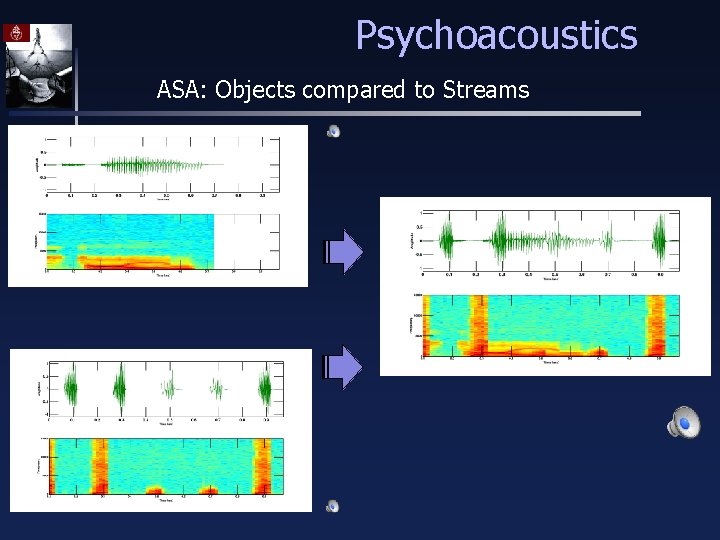

Psychoacoustics ASA: Objects compared to Streams In vision we intuitively focus on objects. In fact, the visual system uses light reflections to form separate descriptions of the individual objects. These descriptions include the object’s shape, size, distance, color, etc. But how is object information determined from sound?

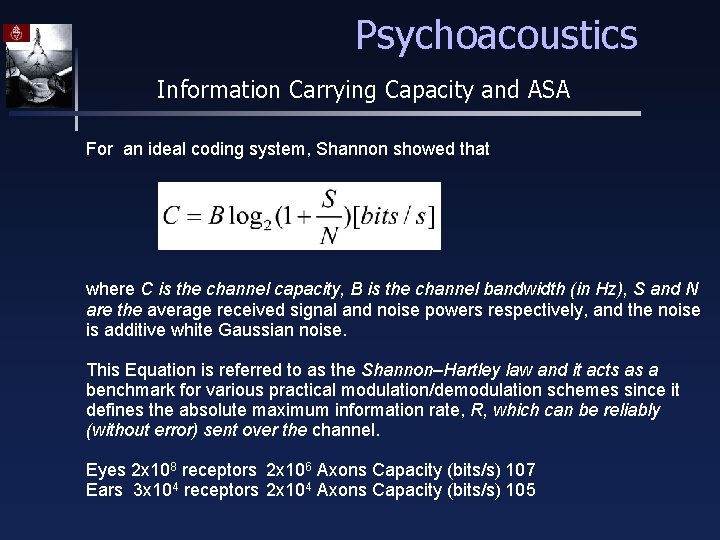

Psychoacoustics Information Carrying Capacity and ASA For an ideal coding system, Shannon showed that

$$C = B \log_2\left(1 + \frac{S}{N}\right)$$

where C is the channel capacity (in bits/s), B is the channel bandwidth (in Hz), S and N are the average received signal and noise powers, respectively, and the noise is additive white Gaussian noise. This equation is referred to as the Shannon–Hartley law. It acts as a benchmark for practical modulation/demodulation schemes because it defines the absolute maximum information rate R that can be sent reliably (with an arbitrarily small error probability) over the channel.

| | Receptors | Axons | Capacity (bits/s) |
|---|---|---|---|
| Eyes | $2 \times 10^{8}$ | $2 \times 10^{6}$ | $10^{7}$ |
| Ears | $3 \times 10^{4}$ | $2 \times 10^{4}$ | $10^{5}$ |
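As a quick numerical check of the Shannon–Hartley law C = B log2(1 + S/N), the sketch below evaluates it for a hypothetical channel. The 1 kHz bandwidth and 30 dB signal-to-noise ratio are illustrative assumptions, not values from the slides, and the helper names are made up for this example.

```python
import math

def shannon_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity C = B * log2(1 + S/N) in bits/s (AWGN channel)."""
    if bandwidth_hz < 0 or snr_linear < 0:
        raise ValueError("bandwidth and SNR must be non-negative")
    return bandwidth_hz * math.log2(1.0 + snr_linear)

def db_to_linear(snr_db: float) -> float:
    """Convert a power ratio in dB to a linear power ratio."""
    return 10.0 ** (snr_db / 10.0)

# Hypothetical channel: 1 kHz bandwidth at 30 dB SNR (S/N = 1000)
c = shannon_capacity(1000.0, db_to_linear(30.0))
print(f"{c:.0f} bits/s")  # 9967 bits/s
```

Doubling the bandwidth doubles C, whereas doubling S/N adds only about B extra bits/s, which is why bandwidth (number of independent channels) dominates such capacity estimates.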


Psychoacoustics Information Carrying Capacity and ASA Ears: $3 \times 10^{4}$ receptors, $2 \times 10^{4}$ axons, capacity about $10^{5}$ bits/s. Intensity differences of 1 dB can be discriminated over a range of about 120 dB; these 120 levels can be encoded with 7 bits ($2^7 = 128$). 24 non-overlapping frequency bands.
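The arithmetic behind the 7-bit figure can be made explicit. A minimal sketch, assuming (as a simplification not stated on the slide) that the 24 bands are independent and each contributes one 7-bit level per "snapshot"; turning this into bits/s would need a sampling rate the slide does not give. The helper name is hypothetical.

```python
import math

def bits_for_levels(n_levels: int) -> int:
    """Smallest number of bits whose 2**bits distinct codes cover n_levels."""
    return math.ceil(math.log2(n_levels))

dynamic_range_db = 120   # range stated on the slide
step_db = 1              # discriminable intensity step stated on the slide
levels = dynamic_range_db // step_db     # 120 distinguishable levels
bits_per_band = bits_for_levels(levels)  # 7, since 2**7 = 128 >= 120
bands = 24                               # non-overlapping frequency bands
print(levels, bits_per_band, bands * bits_per_band)  # 120 7 168
```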

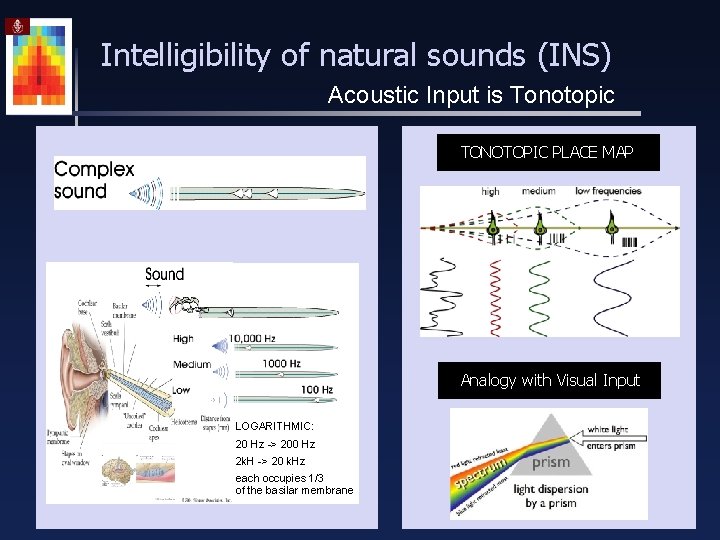

Intelligibility of natural sounds (INS) Acoustic Input is Tonotopic: TONOTOPIC PLACE MAP (analogy with visual input). The map is LOGARITHMIC: each decade (20 Hz to 200 Hz, 200 Hz to 2 kHz, 2 kHz to 20 kHz) occupies about 1/3 of the basilar membrane.
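The "each decade occupies one third" statement follows directly from an idealized, purely logarithmic place map. The sketch below assumes exactly that idealization over 20 Hz to 20 kHz; the real human map departs from a pure logarithm, particularly at low frequencies, and the function name is hypothetical.

```python
import math

F_LOW, F_HIGH = 20.0, 20000.0  # audible range assumed by the slide

def place_from_frequency(f_hz: float) -> float:
    """Relative position along the basilar membrane (0 = apex, 1 = base)
    under an idealized, purely logarithmic place map."""
    if not F_LOW <= f_hz <= F_HIGH:
        raise ValueError("frequency outside the assumed 20 Hz-20 kHz range")
    return math.log10(f_hz / F_LOW) / math.log10(F_HIGH / F_LOW)

for f in (20, 200, 2000, 20000):
    print(f, round(place_from_frequency(f), 3))
```

Because 20 Hz to 20 kHz spans three decades, every factor of 10 in frequency moves the place by the same 1/3 of the membrane length.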

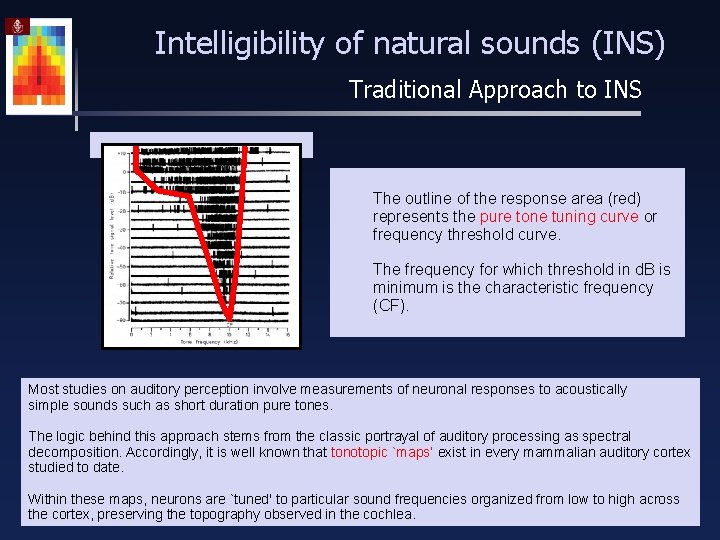

Intelligibility of natural sounds (INS) Traditional Approach to INS The outline of the response area (red) represents the pure-tone tuning curve, or frequency threshold curve. The frequency at which the threshold (in dB) is minimal is the characteristic frequency (CF). Most studies on auditory perception involve measurements of neuronal responses to acoustically simple sounds such as short-duration pure tones. The logic behind this approach stems from the classic portrayal of auditory processing as spectral decomposition. Consistent with this view, tonotopic ‘maps’ exist in every mammalian auditory cortex studied to date. Within these maps, neurons are ‘tuned’ to particular sound frequencies, organized from low to high across the cortex, preserving the topography observed in the cochlea.
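Reading the CF off a measured tuning curve amounts to finding the frequency with the lowest threshold. A minimal sketch; the tuning-curve values below are hypothetical, chosen only to give a V shape, and the helper name is made up.

```python
def characteristic_frequency(freqs_hz, thresholds_db):
    """Return the frequency at which the threshold (dB) is lowest.
    On ties, the first such frequency in the list is returned."""
    if len(freqs_hz) != len(thresholds_db) or not freqs_hz:
        raise ValueError("need equal-length, non-empty inputs")
    best = min(range(len(freqs_hz)), key=lambda i: thresholds_db[i])
    return freqs_hz[best]

# Hypothetical V-shaped tuning curve, for illustration only
freqs = [500, 1000, 2000, 4000, 8000]
thresholds = [70, 45, 20, 40, 75]
print(characteristic_frequency(freqs, thresholds))  # 2000
```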

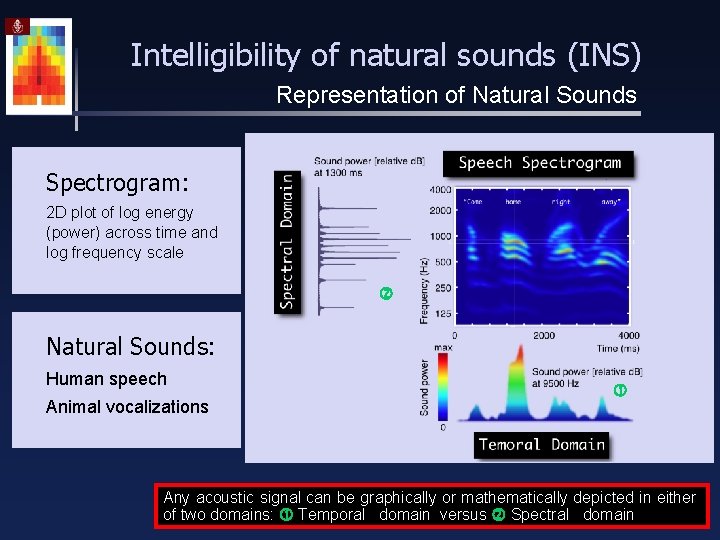

Intelligibility of natural sounds (INS) Representation of Natural Sounds Spectrogram: 2-D plot of log energy (power) across time, on a log frequency scale. Natural sounds: human speech, animal vocalizations. Any acoustic signal can be depicted, graphically or mathematically, in either of two domains: the temporal domain or the spectral domain.
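A spectrogram links the two domains by taking spectra of short successive segments. The sketch below is a plain short-time Fourier analysis with a naive DFT, assuming an 8 kHz sampling rate, 64-sample Hann-windowed frames and a 1 kHz test tone (all illustrative choices). It uses a linear frequency axis; a log-frequency display would only remap the bin axis.

```python
import cmath
import math

def spectrogram_db(x, frame_len, hop):
    """Short-time power spectrum in dB. Returns a list of frames (time axis);
    each frame lists bins 0..frame_len//2 on a linear frequency axis."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_len)
              for n in range(frame_len)]  # periodic Hann window
    frames = []
    for start in range(0, len(x) - frame_len + 1, hop):
        seg = [x[start + n] * window[n] for n in range(frame_len)]
        row = []
        for k in range(frame_len // 2 + 1):
            # Naive DFT: O(N^2) per frame, acceptable for a small demo
            X = sum(seg[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                    for n in range(frame_len))
            row.append(10 * math.log10(abs(X) ** 2 + 1e-12))  # log energy
        frames.append(row)
    return frames

fs = 8000  # assumed sampling rate (Hz)
x = [math.sin(2 * math.pi * 1000 * n / fs) for n in range(1024)]  # 1 kHz tone
S = spectrogram_db(x, frame_len=64, hop=32)
peak_bin = max(range(len(S[0])), key=lambda k: S[0][k])
print(len(S), len(S[0]), peak_bin * fs / 64)  # 31 33 1000.0
```

The frame length sets the trade-off: 64 samples at 8 kHz give 8 ms of time resolution but 125 Hz frequency bins.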

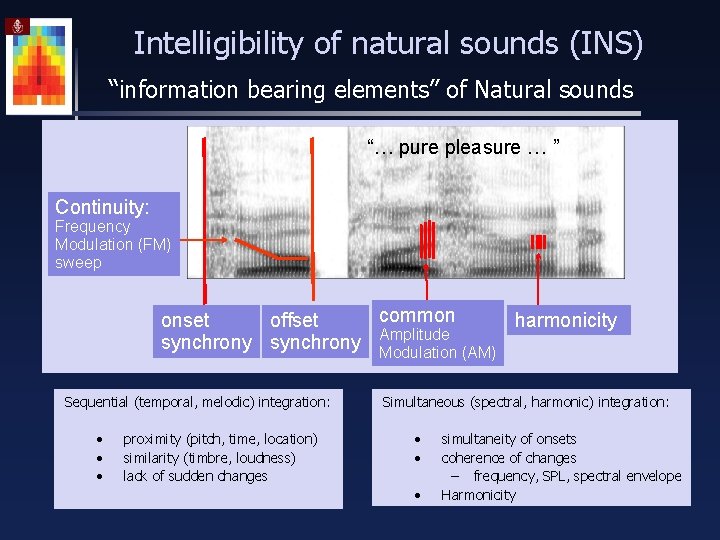

Intelligibility of natural sounds (INS) “Information-bearing elements” of natural sounds
Example utterance “… pure pleasure …” (figure labels: continuity, frequency modulation (FM) sweep, common onset/offset, harmonicity, synchrony, amplitude modulation (AM))
Sequential (temporal, melodic) integration:
• proximity (pitch, time, location)
• similarity (timbre, loudness)
• lack of sudden changes
Simultaneous (spectral, harmonic) integration:
• simultaneity of onsets
• coherence of changes – frequency, SPL, spectral envelope
• harmonicity

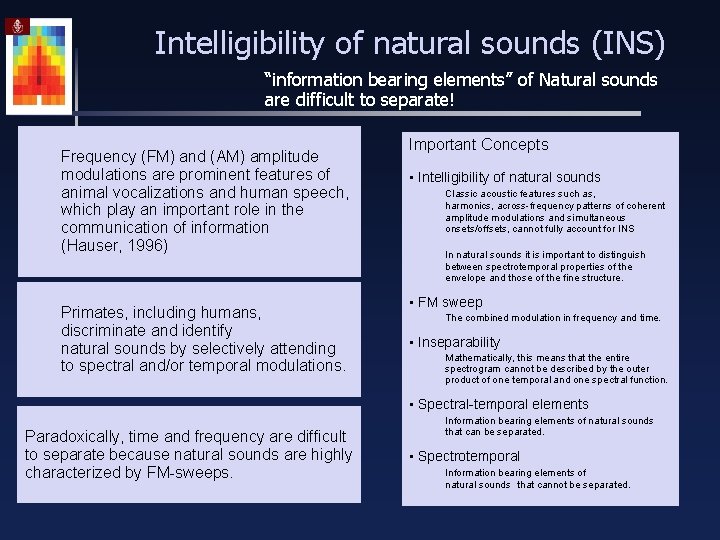

Intelligibility of natural sounds (INS) “Information-bearing elements” of natural sounds are difficult to separate! Frequency modulations (FM) and amplitude modulations (AM) are prominent features of animal vocalizations and human speech, and they play an important role in the communication of information (Hauser, 1996). Primates, including humans, discriminate and identify natural sounds by selectively attending to spectral and/or temporal modulations.
Important Concepts
• Intelligibility of natural sounds: classic acoustic features, such as harmonics, across-frequency patterns of coherent amplitude modulation, and simultaneous onsets/offsets, cannot fully account for INS. In natural sounds it is important to distinguish between the spectrotemporal properties of the envelope and those of the fine structure.
• FM sweep: the combined modulation in frequency and time.
• Inseparability: mathematically, this means that the entire spectrogram cannot be described by the outer product of one temporal and one spectral function. Paradoxically, time and frequency are difficult to separate because natural sounds are highly characterized by FM sweeps.
• Spectral-temporal elements: information-bearing elements of natural sounds that can be separated.
• Spectrotemporal elements: information-bearing elements of natural sounds that cannot be separated.
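The definition of (in)separability can be checked directly: a spectrogram S[f][t] is an outer product of one spectral and one temporal function exactly when the matrix has rank at most 1, i.e. when every 2x2 minor vanishes. The sketch below uses that exact test on toy matrices (hypothetical values); in practice a singular value decomposition is often used to quantify how close a measured spectrogram or STRF is to separable, and the minor test here is only a simple stand-in.

```python
def outer(temporal, spectral):
    """Separable spectrogram: S[f][t] = spectral[f] * temporal[t]."""
    return [[s * t for t in temporal] for s in spectral]

def is_separable(S, tol=1e-9):
    """True if S has (numerical) rank at most 1, i.e. it is an outer product
    of one spectral and one temporal function. Tests every 2x2 minor."""
    rows, cols = len(S), len(S[0])
    for i in range(rows):
        for k in range(i + 1, rows):
            for j in range(cols):
                for l in range(j + 1, cols):
                    if abs(S[i][j] * S[k][l] - S[i][l] * S[k][j]) > tol:
                        return False
    return True

# Onset-then-decay envelope times a fixed spectral profile: separable
S_sep = outer(temporal=[0.0, 1.0, 0.6, 0.3], spectral=[0.2, 1.0, 0.5])
# Upward FM sweep: energy moves to a higher channel at each time step
S_fm = [[1.0 if f == t else 0.0 for t in range(3)] for f in range(3)]
print(is_separable(S_sep), is_separable(S_fm))  # True False
```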

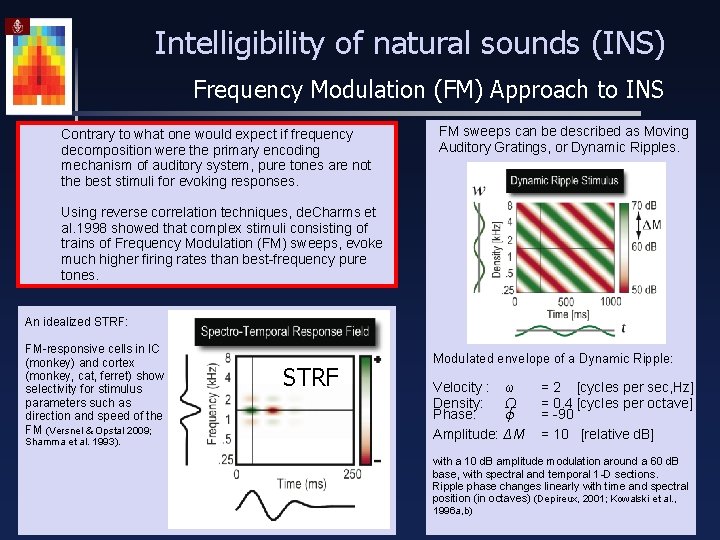

Intelligibility of natural sounds (INS) Frequency Modulation (FM) Approach to INS
Contrary to what one would expect if frequency decomposition were the primary encoding mechanism of the auditory system, pure tones are not the best stimuli for evoking responses. FM sweeps can be described as moving auditory gratings, or dynamic ripples. Using reverse-correlation techniques, deCharms et al. (1998) showed that complex stimuli consisting of trains of FM sweeps evoke much higher firing rates than best-frequency pure tones.
An idealized STRF: FM-responsive cells in IC (monkey) and cortex (monkey, cat, ferret) show selectivity for stimulus parameters such as the direction and speed of the FM (Versnel & Opstal 2009; Shamma et al. 1993).
STRF: modulated envelope of a dynamic ripple:
• Velocity ω = 2 cycles per second (Hz)
• Density Ω = 0.4 cycles per octave
• Phase ϕ = -90°
• Amplitude ΔM = 10 dB (relative)
That is, a 10 dB amplitude modulation around a 60 dB base, shown with spectral and temporal 1-D sections. Ripple phase changes linearly with time and spectral position (in octaves) (Depireux, 2001; Kowalski et al., 1996a, b).
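The ripple parameters can be read as a level that varies sinusoidally in time and in spectral position. A minimal sketch, assuming the common form L(t, x) = L0 + ΔM sin(2π(ωt + Ωx) + ϕ) with x in octaves and ϕ in degrees; sign conventions for the drift direction differ between studies, so this is an illustration rather than the exact stimulus definition used in the cited work. The function name is hypothetical.

```python
import math

def ripple_level_db(t_s, x_oct, base_db=60.0, depth_db=10.0,
                    velocity_hz=2.0, density_cpo=0.4, phase_deg=-90.0):
    """Level (dB) of a dynamic ripple envelope at time t (s) and spectral
    position x (octaves above the lowest component)."""
    phase = 2 * math.pi * (velocity_hz * t_s + density_cpo * x_oct)
    return base_db + depth_db * math.sin(phase + math.radians(phase_deg))

print(round(ripple_level_db(0.0, 0.0), 6))   # 50.0 (trough: sin(-90 deg) = -1)
print(round(ripple_level_db(0.25, 0.0), 6))  # 70.0 (half a cycle later: peak)
```

The pattern repeats every 1/ω = 0.5 s and every 1/Ω = 2.5 octaves. With this sign convention the phase is constant along x = const - (ω/Ω)t, so the ripple drifts toward lower spectral positions at 5 octaves/s; flipping the sign of ω reverses the sweep direction.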

How is object information determined from sound?

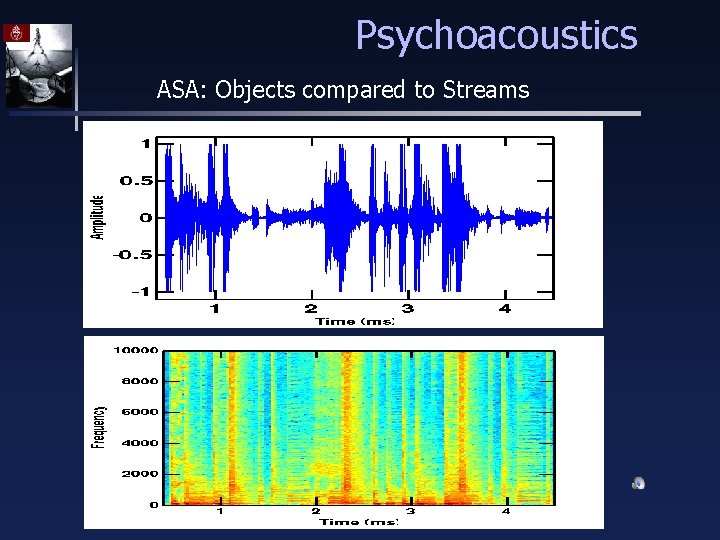

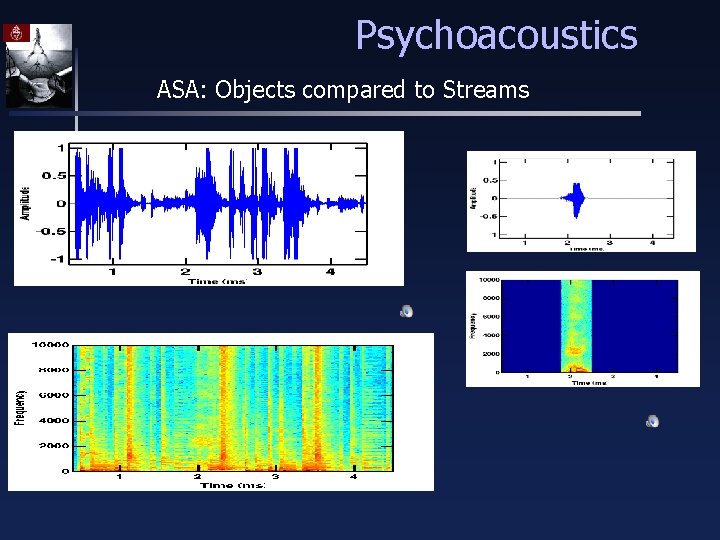

Psychoacoustics ASA: Objects compared to Streams



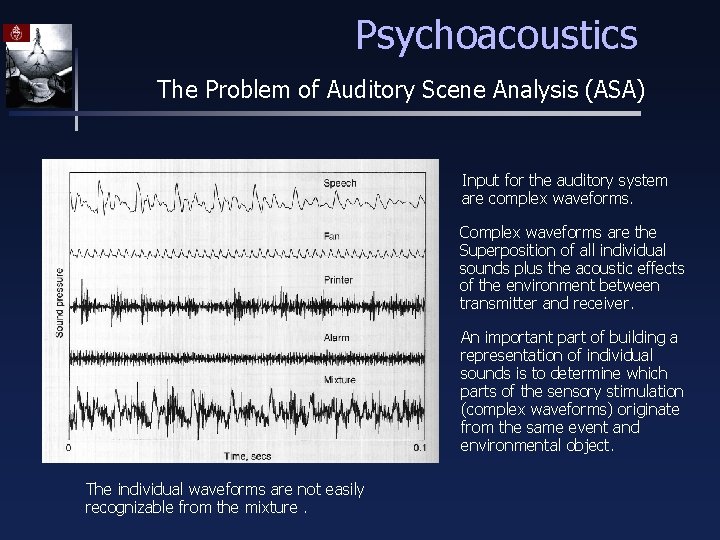

Psychoacoustics The Problem of Auditory Scene Analysis (ASA) The input to the auditory system consists of complex waveforms. A complex waveform is the superposition of all individual sounds plus the acoustic effects of the environment between transmitter and receiver. An important part of building a representation of individual sounds is to determine which parts of the sensory stimulation (the complex waveform) originate from the same event and environmental object. The individual waveforms are not easily recognizable from the mixture.
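The "superposition plus environment" description can be written as y = (s1 + s2) * h, a sum of sources convolved with the path's impulse response h. A minimal sketch with two hypothetical tonal sources and a hypothetical room consisting of a direct path plus one echo; it shows that, by linearity, the ear receives one summed waveform that carries no labels saying which sample came from which source.

```python
import math

def convolve(x, h):
    """Full linear convolution of two finite sequences."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y

fs = 8000  # assumed sampling rate (Hz)
n = range(400)
voice = [math.sin(2 * math.pi * 220 * k / fs) for k in n]          # source 1
whistle = [0.5 * math.sin(2 * math.pi * 1800 * k / fs) for k in n]  # source 2
mixture = [a + b for a, b in zip(voice, whistle)]                   # superposition

# Hypothetical room: direct path plus one echo 10 ms (80 samples) later at 40 %
room_ir = [0.0] * 81
room_ir[0], room_ir[80] = 1.0, 0.4
at_ear = convolve(mixture, room_ir)

# Linearity: filtering the sum equals summing the filtered sources
separately = [a + b for a, b in
              zip(convolve(voice, room_ir), convolve(whistle, room_ir))]
print(max(abs(a - b) for a, b in zip(at_ear, separately)) < 1e-12)  # True
```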

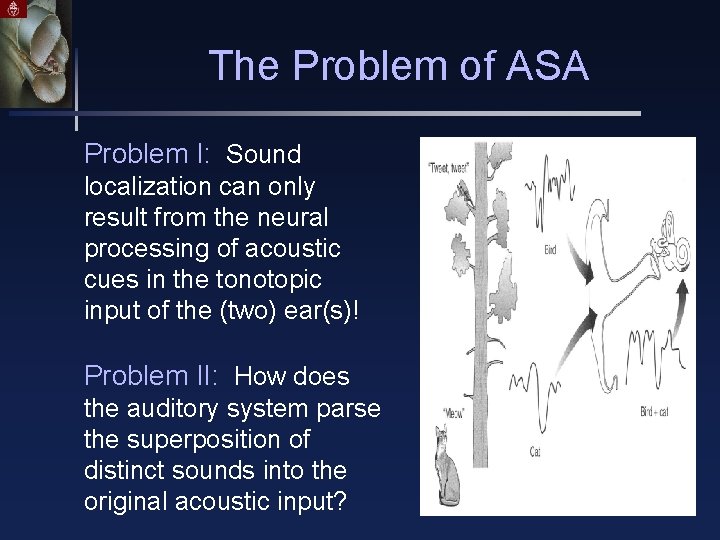

The Problem of ASA
Problem I: Sound localization can only result from the neural processing of acoustic cues in the tonotopic input of the (two) ear(s)!
Problem II: How does the auditory system parse the superposition of distinct sounds back into the original individual sounds?

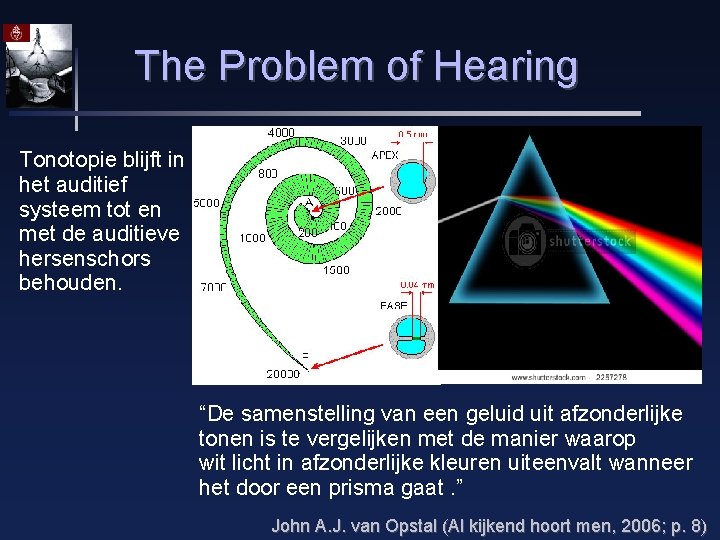

The Problem of Hearing Tonotopy is preserved throughout the auditory system, up to and including the auditory cortex. “The composition of a sound from separate tones can be compared to the way white light splits into separate colours when it passes through a prism.” John A. J. van Opstal (Al kijkend hoort men, 2006; p. 8)

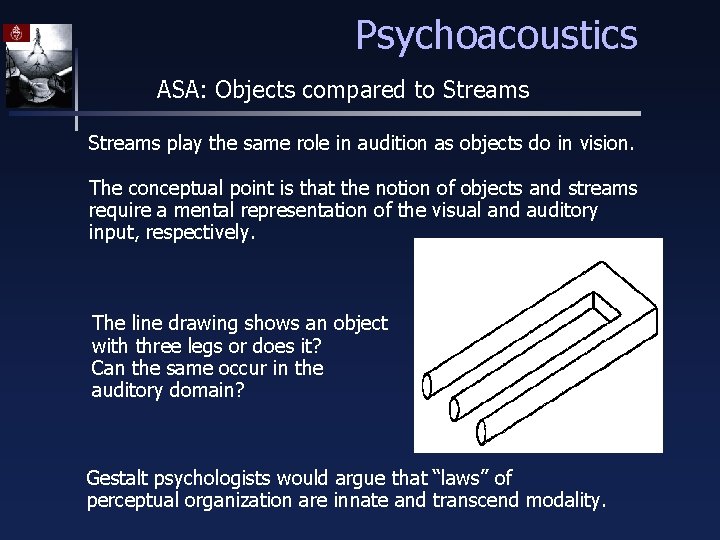

Psychoacoustics ASA: Objects compared to Streams Streams play the same role in audition as objects do in vision. The conceptual point is that the notions of objects and streams require a mental representation of the visual and auditory input, respectively. The line drawing shows an object with three legs, or does it? Can the same occur in the auditory domain? Gestalt psychologists would argue that the “laws” of perceptual organization are innate and transcend modality.

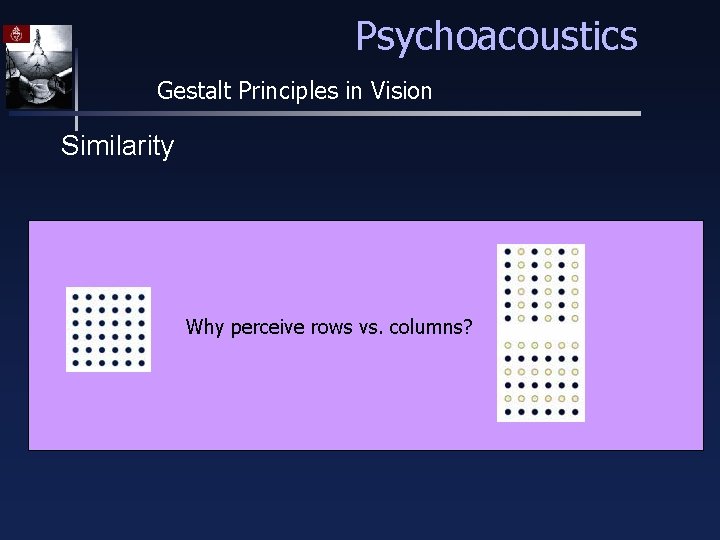

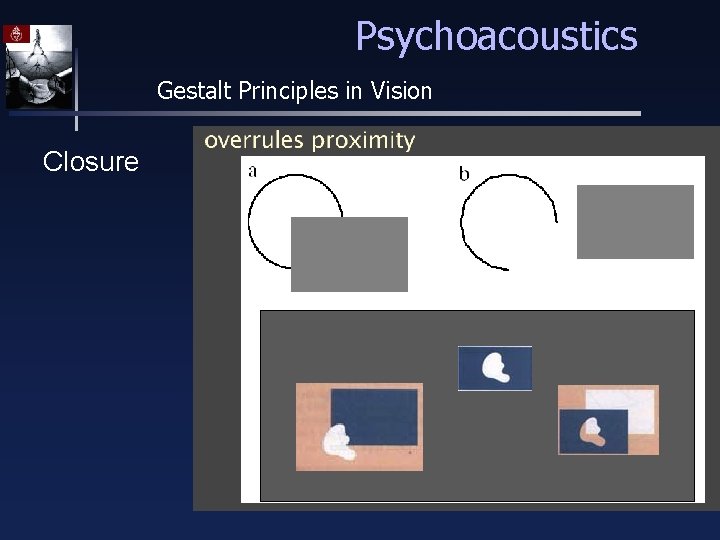

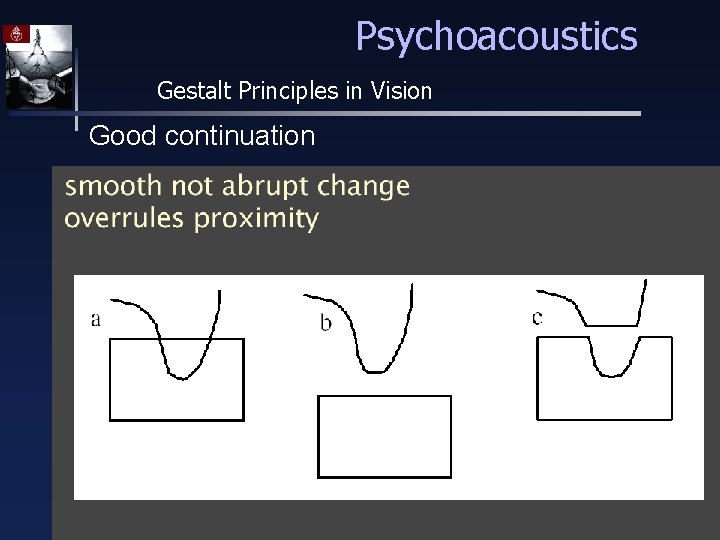

Psychoacoustics Gestalt Principles in Vision
• Proximity: grouping of nearby dots
• Similarity: grouping of similar dots
• Closure: recognition of incomplete patterns
• Good continuation: e.g. two crossing lines

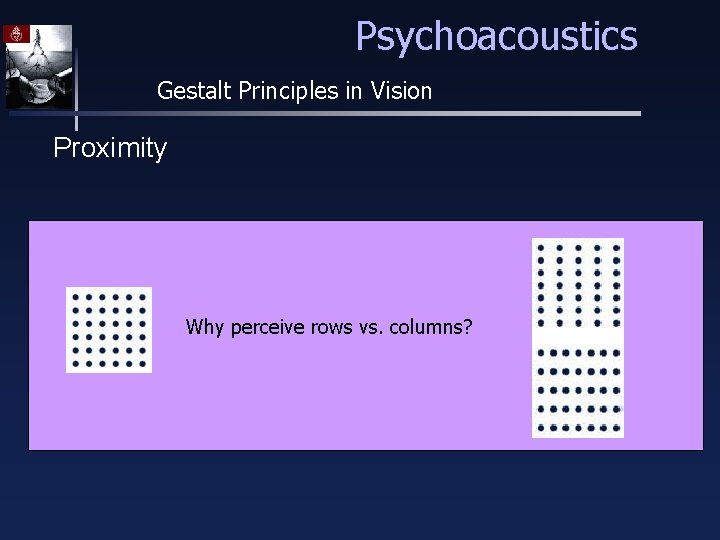

Psychoacoustics Gestalt Principles in Vision Proximity Why perceive rows vs. columns?

Psychoacoustics Gestalt Principles in Vision Similarity Why perceive rows vs. columns?

Psychoacoustics Gestalt Principles in Vision Closure

Psychoacoustics Gestalt Principles in Vision Good continuation

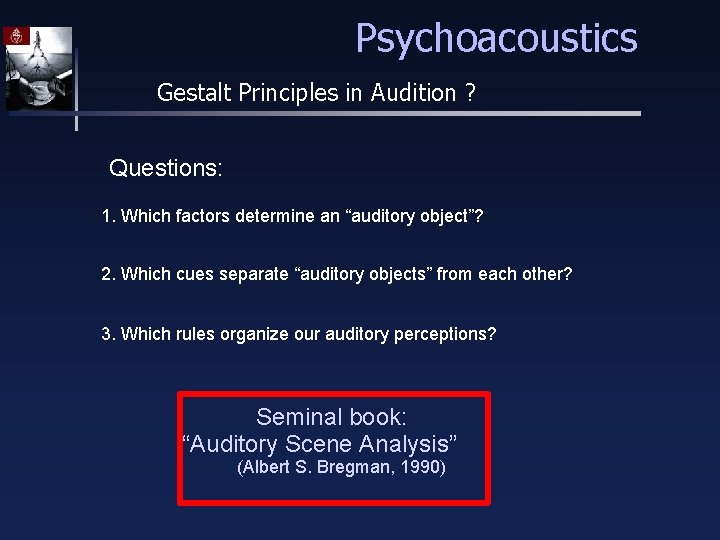

Psychoacoustics Gestalt Principles in Audition? Questions:
1. Which factors determine an “auditory object”?
2. Which cues separate “auditory objects” from each other?
3. Which rules organize our auditory perceptions?
Seminal book: “Auditory Scene Analysis” (Albert S. Bregman, 1990)

Psychoacoustics Gestalt Principles in Audition? Listeners are capable of parsing an acoustic scene (a complex sound) to form a mental representation of each sound source (a stream) in the perceptual process of auditory scene analysis (Bregman, 1990): from events to streams.
Two conceptual processes of ASA:
• Segmentation: decompose the acoustic mixture into sensory elements (segments).
• Grouping: combine segments into streams, so that segments in the same stream originate from the same source.
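The grouping step can be illustrated with harmonicity, one of the simultaneous-grouping cues listed in these slides. The sketch below is a toy under strong assumptions: segmentation is taken as already done (each segment is just a spectral peak frequency), the candidate fundamentals are given rather than estimated, and the 3 % mistuning tolerance and the helper name are hypothetical choices.

```python
def group_by_harmonicity(components_hz, f0_candidates_hz, tol=0.03):
    """Grouping step (hypothetical helper): assign each segment, here a spectral
    component frequency, to the candidate F0 whose nearest harmonic it matches
    best, within a relative mistuning tolerance `tol`. Components that fit no
    candidate stay ungrouped. On an exact tie the later candidate wins."""
    streams = {f0: [] for f0 in f0_candidates_hz}
    ungrouped = []
    for f in components_hz:
        best_f0, best_dev = None, tol
        for f0 in f0_candidates_hz:
            harmonic = max(1, round(f / f0))   # nearest harmonic number
            dev = abs(f - harmonic * f0) / f   # relative mistuning
            if dev <= best_dev:
                best_f0, best_dev = f0, dev
        if best_f0 is None:
            ungrouped.append(f)
        else:
            streams[best_f0].append(f)
    return streams, ungrouped

# Segmentation assumed done: peak frequencies of a hypothetical two-source mixture
segments = [200, 330, 400, 600, 660, 800, 990, 1000, 1150]
streams, rest = group_by_harmonicity(segments, f0_candidates_hz=[200, 330])
print(streams)  # {200: [200, 400, 600, 800, 1000], 330: [330, 660, 990]}
print(rest)     # [1150]
```

Choosing the best-fitting candidate (rather than the first acceptable one) matters here: 990 Hz is within 3 % of 1000 Hz, the 5th harmonic of 200 Hz, but it is an exact harmonic of 330 Hz.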

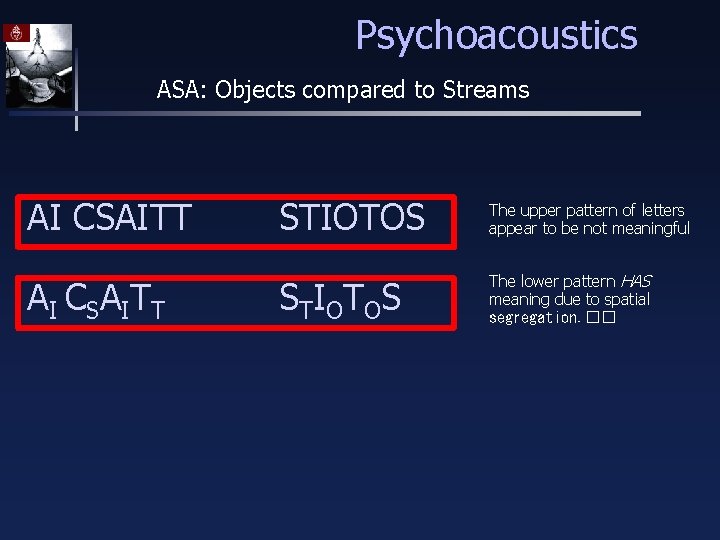

Psychoacoustics ASA: Objects compared to Streams AI CSAITT A I C S A IT T STIOTOS The upper pattern of letters appears not to be meaningful. S T IO T O S The lower pattern HAS meaning due to spatial segregation.

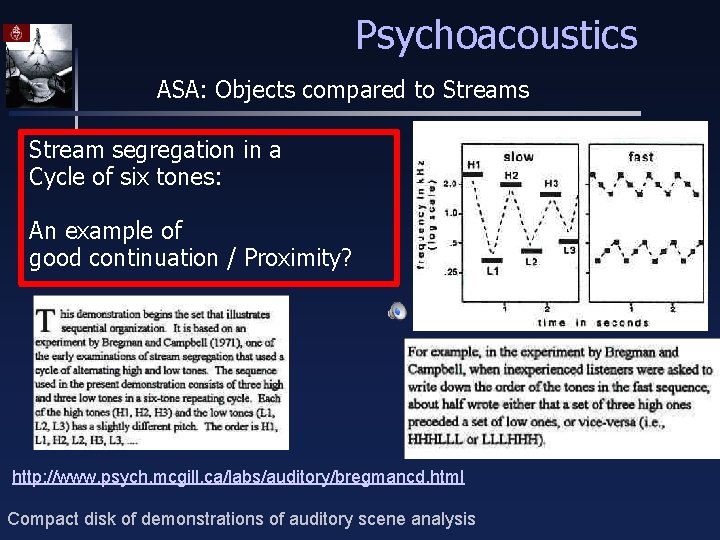

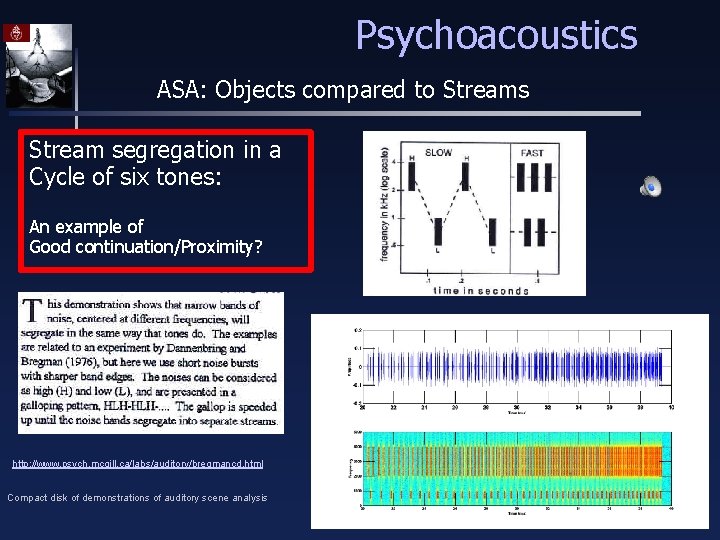

Psychoacoustics ASA: Objects compared to Streams Stream segregation in a cycle of six tones: an example of good continuation / proximity? http://www.psych.mcgill.ca/labs/auditory/bregmancd.html Compact disc of demonstrations of auditory scene analysis

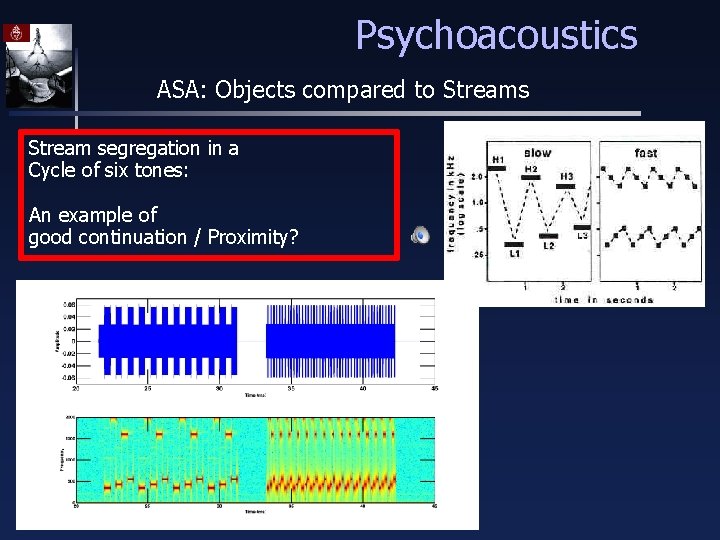

Psychoacoustics ASA: Objects compared to Streams Stream segregation in a Cycle of six tones: An example of good continuation / Proximity?

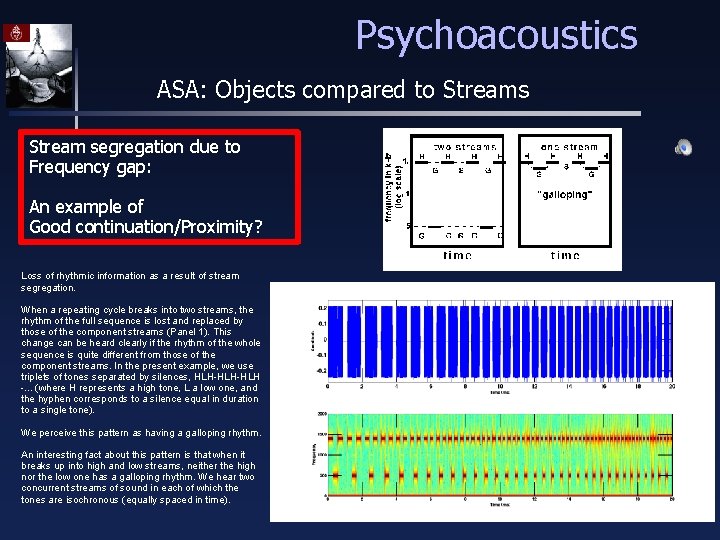

Psychoacoustics ASA: Objects compared to Streams Stream segregation due to a frequency gap: an example of good continuation/proximity?
Loss of rhythmic information as a result of stream segregation. When a repeating cycle breaks into two streams, the rhythm of the full sequence is lost and replaced by those of the component streams (Panel 1). This change can be heard clearly if the rhythm of the whole sequence is quite different from those of the component streams. In the present example, we use triplets of tones separated by silences, HLH-HLH-... (where H represents a high tone, L a low one, and the hyphen corresponds to a silence equal in duration to a single tone). We perceive this pattern as having a galloping rhythm. An interesting fact about this pattern is that when it breaks up into high and low streams, neither the high nor the low stream has a galloping rhythm. Instead, we hear two concurrent streams, in each of which the tones are isochronous (equally spaced in time).
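Why the gallop disappears can be seen from the onset times alone. A minimal sketch, assuming 100 ms tones that follow each other without gaps (the tone duration is an illustrative assumption): the full HLH- sequence has uneven inter-onset intervals, while the high-only and low-only streams are each perfectly regular. Function names are hypothetical.

```python
def gallop_onsets(n_triplets, tone_ms=100):
    """Onset times (ms) and labels for HLH-HLH-..., where '-' is a silence
    lasting one tone duration."""
    events = []
    slot = 0
    for _ in range(n_triplets):
        for label in ("H", "L", "H", "-"):
            if label != "-":
                events.append((slot * tone_ms, label))
            slot += 1
    return events

def inter_onset_intervals(onsets):
    """Differences between successive onset times."""
    return [b - a for a, b in zip(onsets, onsets[1:])]

events = gallop_onsets(3)
whole = [t for t, _ in events]
high = [t for t, lab in events if lab == "H"]
low = [t for t, lab in events if lab == "L"]
print(inter_onset_intervals(whole))  # [100, 100, 200, 100, 100, 200, 100, 100]
print(inter_onset_intervals(high))   # [200, 200, 200, 200, 200]
print(inter_onset_intervals(low))    # [400, 400]
```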

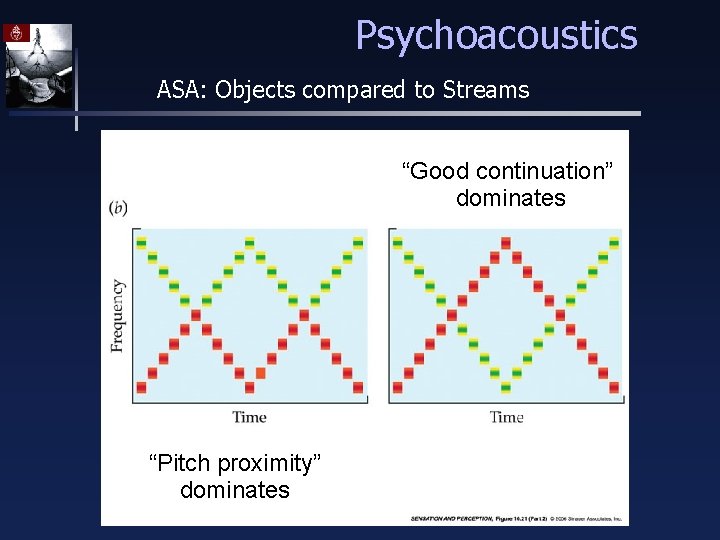

Psychoacoustics ASA: Objects compared to Streams (panels: “Good continuation” dominates; “Pitch proximity” dominates)


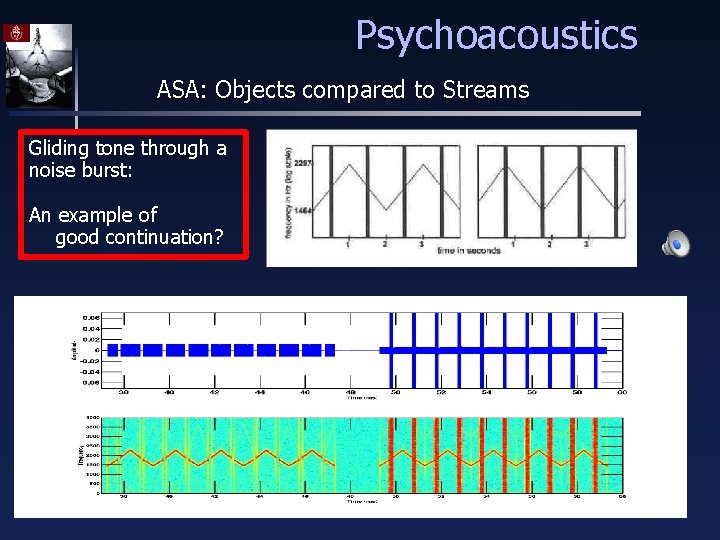

Psychoacoustics ASA: Objects compared to Streams Gliding tone through a noise burst: An example of good continuation?

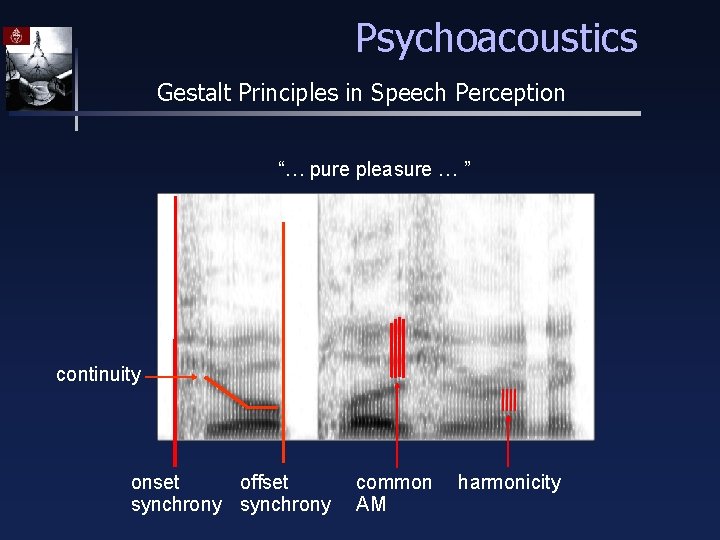

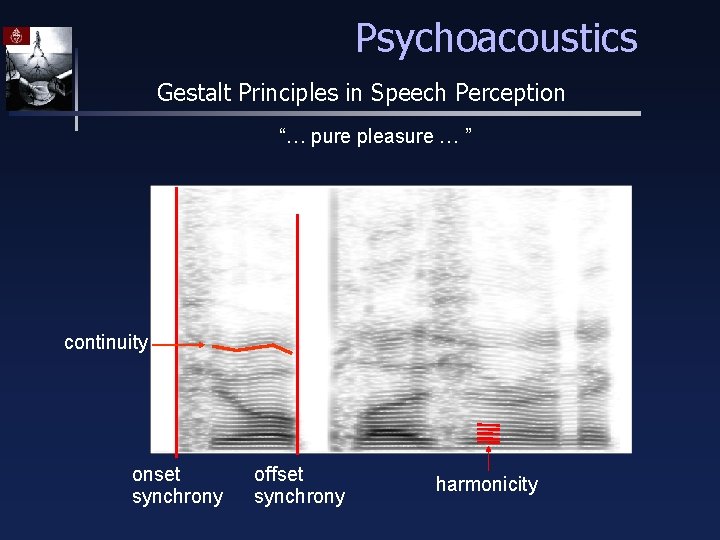

Psychoacoustics Gestalt Principles in Speech Perception “… pure pleasure …” (figure labels: continuity, onset/offset synchrony, common AM, harmonicity)

Psychoacoustics Gestalt Principles in Speech Perception “… pure pleasure …” (figure labels: continuity, onset synchrony, offset synchrony, harmonicity)

Psychoacoustics Gestalt Principles in Audition Auditory peripheral processing amounts to a decomposition of the acoustic signal. ASA cues essentially reflect the structural coherence of a sound source. A subset of cues believed to be strongly involved in ASA:
• Simultaneous organization: periodicity, temporal modulation, onset.
• Sequential organization: location, pitch contour and other source characteristics (e.g. vocal tract).

Psychoacoustics Gestalt Principles in Audition Sequential (temporal, melodic) integration • proximity (pitch, time, location) • similarity (timbre, loudness) • lack of sudden changes Simultaneous (spectral, harmonic) integration • simultaneity of onsets • coherence of changes – frequency, SPL, spectral envelope • harmonicity

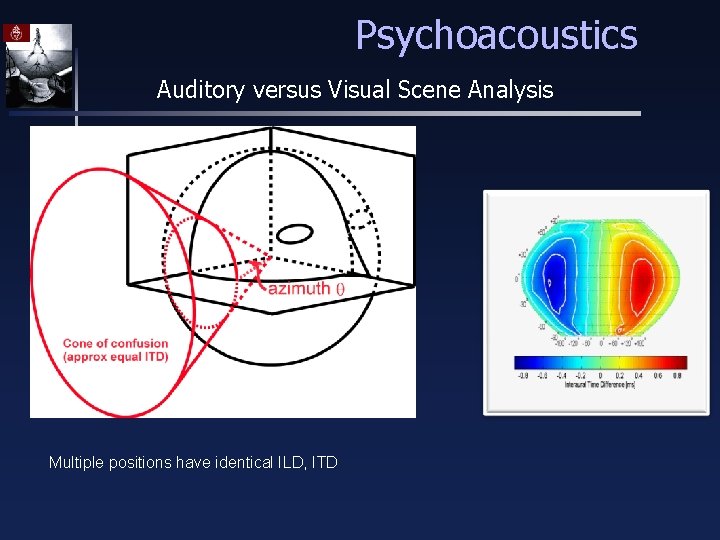

Psychoacoustics Auditory versus Visual Scene Analysis Multiple positions have identical ILDs and ITDs (the so-called cone of confusion).
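A simple way to see why different positions share the same ITD is Woodworth's spherical-head approximation, ITD = (a/c)(θ + sin θ), where θ is the lateral angle. This formula is a swapped-in textbook model, not taken from the slides, and the 8.75 cm head radius is an assumption; ILDs are ignored. A source 30° to the front-right and one 150° (behind, on the right) have the same lateral angle and therefore the same ITD.

```python
import math

HEAD_RADIUS_M = 0.0875   # assumed spherical-head radius
SPEED_OF_SOUND = 343.0   # m/s in air at about 20 degC

def woodworth_itd(azimuth_deg):
    """ITD (s) from Woodworth's spherical-head formula. Azimuth: 0 = straight
    ahead, 90 = right, 180 = behind. Only the lateral angle matters."""
    az = math.radians(azimuth_deg)
    lateral = math.asin(math.sin(az))  # fold front/back onto -90..90 deg
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (lateral + math.sin(lateral))

front, back = woodworth_itd(30), woodworth_itd(150)
print(f"{front * 1e6:.0f} us vs {back * 1e6:.0f} us")  # 261 us vs 261 us
```

Resolving such front/back ambiguities therefore requires other cues, such as the elevation- and direction-dependent spectral shaping by the pinna.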

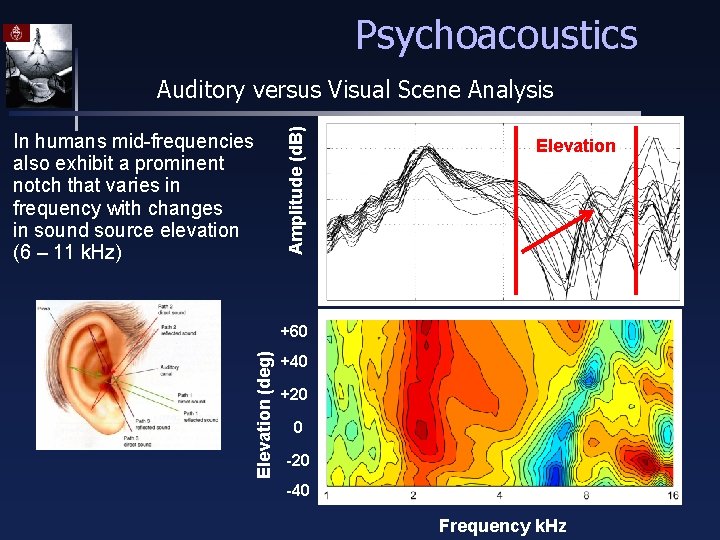

Psychoacoustics Auditory versus Visual Scene Analysis In humans, mid-frequencies also exhibit a prominent spectral notch (6–11 kHz) whose frequency varies with sound-source elevation.
[Figure: amplitude (dB) versus frequency (kHz), for elevations from -40° to +60°]

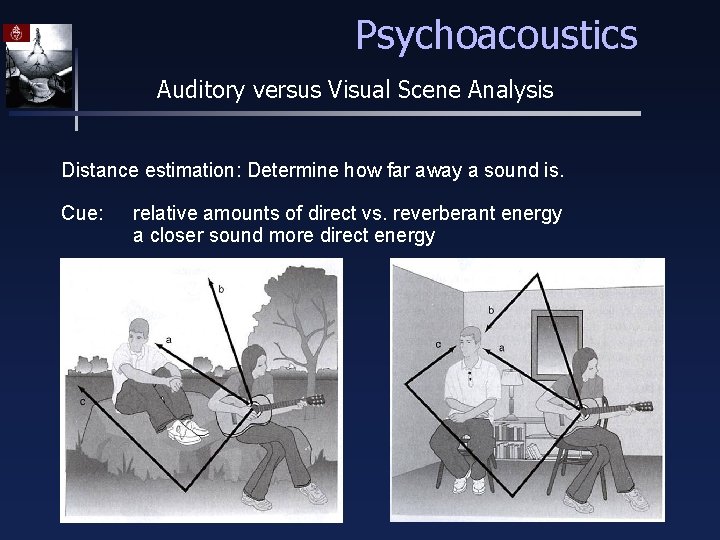

Psychoacoustics Auditory versus Visual Scene Analysis Distance estimation: determine how far away a sound is. Cue: the relative amounts of direct vs. reverberant energy; a closer sound has more direct energy.

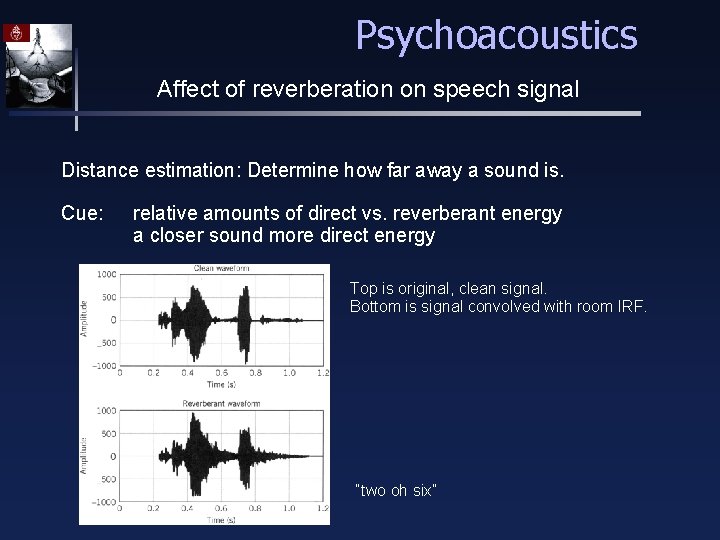

Psychoacoustics Effect of reverberation on a speech signal Distance estimation: determine how far away a sound is. Cue: the relative amounts of direct vs. reverberant energy; a closer sound has more direct energy. Top: the original, clean signal. Bottom: the signal convolved with the room impulse response (IRF). Utterance: “two oh six”.
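The direct-versus-reverberant cue can be quantified as a direct-to-reverberant energy ratio (DRR) of the room impulse response. The sketch below uses a hypothetical toy impulse response: direct sound whose amplitude falls as 1/distance, plus a reverberant tail assumed to be the same everywhere in the room. The 2.5 ms direct window, tail shape and sampling rate are illustrative assumptions. Under these assumptions, moving the source from 1 m to 4 m lowers the DRR by 20·log10(4) ≈ 12 dB.

```python
import math

FS = 8000  # assumed sampling rate (Hz)

def toy_room_ir(distance_m, tail_start_ms=10.0, tail_len=2000):
    """Hypothetical impulse response: direct sound with 1/distance amplitude,
    then a fixed, exponentially decaying reverberant tail (the diffuse field is
    assumed to be equally strong everywhere in the room)."""
    start = int(round(tail_start_ms * 1e-3 * FS))
    ir = [0.0] * (start + tail_len)
    ir[0] = 1.0 / distance_m
    for n in range(tail_len):
        ir[start + n] = 0.05 * (0.998 ** n) * (1.0 if n % 2 == 0 else -1.0)
    return ir

def direct_to_reverberant_db(ir, direct_window_ms=2.5):
    """Energy up to `direct_window_ms` after the strongest peak vs. all later energy."""
    peak = max(range(len(ir)), key=lambda i: abs(ir[i]))
    split = peak + int(round(direct_window_ms * 1e-3 * FS))
    direct = sum(v * v for v in ir[:split])
    reverberant = sum(v * v for v in ir[split:])
    return 10.0 * math.log10(direct / reverberant)

near = direct_to_reverberant_db(toy_room_ir(1.0))
far = direct_to_reverberant_db(toy_room_ir(4.0))
print(f"near: {near:.1f} dB, far: {far:.1f} dB, difference: {near - far:.1f} dB")
```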

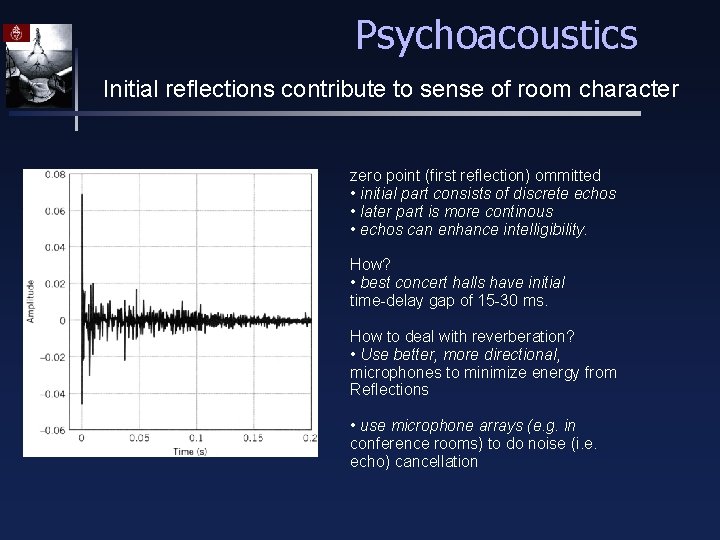

Psychoacoustics Initial reflections contribute to the sense of room character (figure: zero point (first reflection) omitted)
• the initial part consists of discrete echoes
• the later part is more continuous
• echoes can enhance intelligibility. How?
• the best concert halls have an initial time-delay gap of 15-30 ms
How to deal with reverberation?
• use better, more directional microphones to minimize energy from reflections
• use microphone arrays (e.g. in conference rooms) to do noise (i.e. echo) cancellation

Psychoacoustics Auditory versus Visual Scene Analysis Gestalt principles focus on similarities between different modalities such as vision and audition, but there are differences as well, due to differences in physical properties. In audition, the sound-emitting properties rather than the sound-reflecting properties of the environment are important. Sound is used to discover the time and frequency pattern of the source, not its spatial shape. In other words, acoustic events are transparent; they do not occlude energy from what lies behind them. Echoes (reflections) obscure the original properties of sounds. Although echoes are delayed copies (containing all the original information), the superposition of the original sound and its echoes creates redundant information. Acoustic information about the environment can only be obtained effectively from large objects such as rooms or mountains; only then are the effects of the environment between transmitter and receiver noticeable.

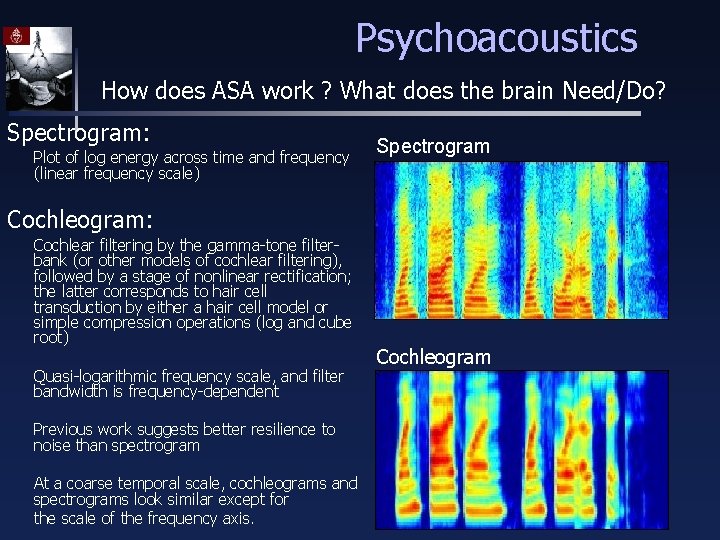

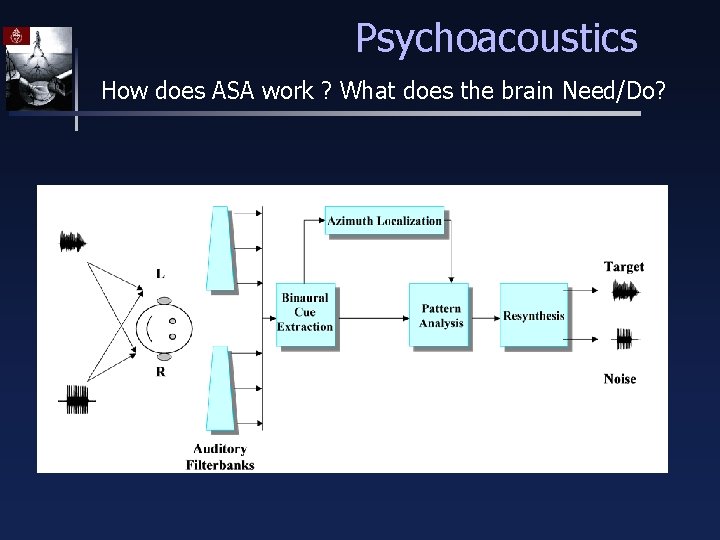

Psychoacoustics How does ASA work? What does the brain need/do?
Spectrogram: plot of log energy across time and frequency (linear frequency scale).
Cochleogram: cochlear filtering by the gammatone filterbank (or other models of cochlear filtering), followed by a stage of nonlinear rectification; the latter corresponds to hair cell transduction, modeled either by a hair cell model or by simple compression operations (log and cube root).
• Quasi-logarithmic frequency scale, and the filter bandwidth is frequency-dependent.
• Previous work suggests better resilience to noise than the spectrogram.
At a coarse temporal scale, cochleograms and spectrograms look similar except for the scale of the frequency axis.
[Figure panels: Spectrogram, Cochleogram]
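The frequency-dependent bandwidth of a gammatone filterbank is commonly set with the equivalent rectangular bandwidth (ERB) of Glasberg and Moore (1990), ERB = 24.7(4.37 f/1000 + 1) Hz. The sketch below samples one gammatone impulse response t^(n-1) exp(-2π b ERB t) cos(2π fc t); the order n = 4 and b = 1.019 are widely used values assumed here, not parameters given on the slide, and the function names are hypothetical.

```python
import math

def erb_hz(fc_hz):
    """Equivalent rectangular bandwidth (Glasberg & Moore, 1990) in Hz."""
    return 24.7 * (4.37 * fc_hz / 1000.0 + 1.0)

def gammatone_ir(fc_hz, fs, duration_s=0.025, order=4, b=1.019):
    """Sampled impulse response t^(n-1) * exp(-2*pi*b*ERB(fc)*t) * cos(2*pi*fc*t),
    normalized to a peak magnitude of 1."""
    bw = b * erb_hz(fc_hz)
    g = []
    for k in range(int(round(duration_s * fs))):
        t = k / fs
        g.append(t ** (order - 1) * math.exp(-2 * math.pi * bw * t)
                 * math.cos(2 * math.pi * fc_hz * t))
    peak = max(abs(v) for v in g)
    return [v / peak for v in g]

# Bandwidth grows with centre frequency: narrow low-frequency, broad high-frequency filters
for fc in (250, 1000, 4000):
    print(fc, round(erb_hz(fc), 1))
ir = gammatone_ir(1000, fs=16000)
```

Feeding each channel's output through half-wave rectification and log or cube-root compression would give the cochleogram stage described above.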

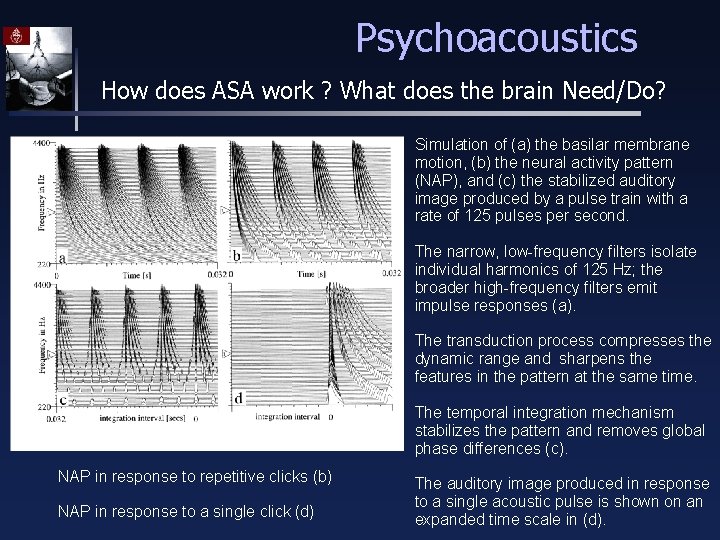

Psychoacoustics How does ASA work? What does the brain need/do? Simulation of (a) the basilar membrane motion, (b) the neural activity pattern (NAP), and (c) the stabilized auditory image produced by a pulse train with a rate of 125 pulses per second. The narrow, low-frequency filters isolate individual harmonics of 125 Hz; the broader high-frequency filters emit impulse responses (a). The transduction process compresses the dynamic range and sharpens the features in the pattern at the same time (b: NAP in response to repetitive clicks). The temporal integration mechanism stabilizes the pattern and removes global phase differences (c). The auditory image produced in response to a single acoustic pulse is shown on an expanded time scale in (d) (NAP in response to a single click).
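The periodicity that the stabilized image makes visible can be recovered from a single high-frequency channel of the NAP. A rough sketch under explicit assumptions: the broad auditory filter is replaced by a hypothetical damped cosine at 3 kHz, transduction is half-wave rectification plus cube-root compression, and the model's temporal-integration stage is not reproduced; instead a plain autocorrelation is swapped in as a simpler periodicity measure. For the 125 pulses/s train, the strongest non-zero lag should be the 8 ms pulse period.

```python
import math

FS = 16000  # assumed sampling rate (Hz)

def pulse_train(rate_hz, duration_s):
    """Unit impulses every 1/rate_hz seconds (128 samples for 125 Hz)."""
    period = int(round(FS / rate_hz))
    return [1.0 if k % period == 0 else 0.0 for k in range(int(round(duration_s * FS)))]

def channel_response(x, fc_hz, decay_s=0.001, ir_len=200):
    """Stand-in for one broad high-frequency auditory filter: convolution with a
    damped cosine (an assumption, not a calibrated cochlear model)."""
    h = [math.exp(-k / (decay_s * FS)) * math.cos(2 * math.pi * fc_hz * k / FS)
         for k in range(ir_len)]
    y = [0.0] * len(x)
    for i, xi in enumerate(x):
        if xi:  # only impulses contribute
            for j, hj in enumerate(h):
                if i + j < len(y):
                    y[i + j] += xi * hj
    return y

def nap(y):
    """Hair-cell stage: half-wave rectification followed by cube-root compression."""
    return [max(v, 0.0) ** (1.0 / 3.0) for v in y]

def best_period_ms(signal, min_lag, max_lag):
    """Lag (ms) with the largest autocorrelation within [min_lag, max_lag] samples."""
    def ac(lag):
        return sum(signal[k] * signal[k + lag] for k in range(len(signal) - lag))
    lag = max(range(min_lag, max_lag + 1), key=ac)
    return 1000.0 * lag / FS

activity = nap(channel_response(pulse_train(125, 0.1), fc_hz=3000))
print(best_period_ms(activity, min_lag=32, max_lag=200))  # 8.0
```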


Psychoacoustics Fundamental problem of ASA
Auditory scene analysis requires:
• analysis over long time windows
• analysis over broad spectral widths
A sensitive auditory system requires:
• analysis over very short time windows
• analysis over narrow frequency bands
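Part of the tension on this slide is a time-frequency resolution limit: in a single linear analysis stage, frequency resolution is roughly the reciprocal of the window length (Δf ≈ 1/T, the DFT bin spacing for a window of length T), so very short windows and very narrow bands cannot both be had at once. A minimal sketch of that rule of thumb; the exact constant depends on the window shape and on how resolution is defined, and the function name is hypothetical.

```python
def frequency_resolution_hz(window_s: float) -> float:
    """Approximate frequency resolution (Hz) of one linear spectral analysis over
    a window of length window_s seconds: delta_f ~ 1 / T."""
    if window_s <= 0:
        raise ValueError("window length must be positive")
    return 1.0 / window_s

for window_ms in (2, 20, 200):
    df = frequency_resolution_hz(window_ms / 1000.0)
    print(f"{window_ms:>3} ms window -> about {df:.0f} Hz resolution")
```

A 2 ms window tracks clicks and onsets but only resolves about 500 Hz; resolving 5 Hz requires about 200 ms, over which fast temporal detail is averaged away.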

