ATLAS Grid Computing and Data Challenges Nurcan Ozturk

- Slides: 16

ATLAS Grid Computing and Data Challenges
Nurcan Ozturk, University of Texas at Arlington
Recent Progresses in High Energy Physics, Bolu, Turkey. June 23-25, 2004

Outline

• Introduction
  • ATLAS Experiment
  • ATLAS Computing System
  • ATLAS Computing Timeline
• ATLAS Data Challenges
  • DC2 Event Samples
  • Data Production Scenario
• ATLAS New Production System
  • Grid Flavors in Production System
  • Windmill-Supervisor
  • An Example of XML Messages
  • Windmill-Capone Screenshots
• Grid Tools
• Conclusions

Introduction

Why Grid Computing:
• Scientific research is becoming more and more complex, and international teams of scientists are growing larger and larger
• Grid technologies enable scientists to use remote computers and data storage systems to retrieve and analyze data around the world
• Grid computing power will be a key to the success of the LHC experiments
• Grid computing is a challenge not only for particle physics experiments but also for biologists, astrophysicists and gravitational wave researchers

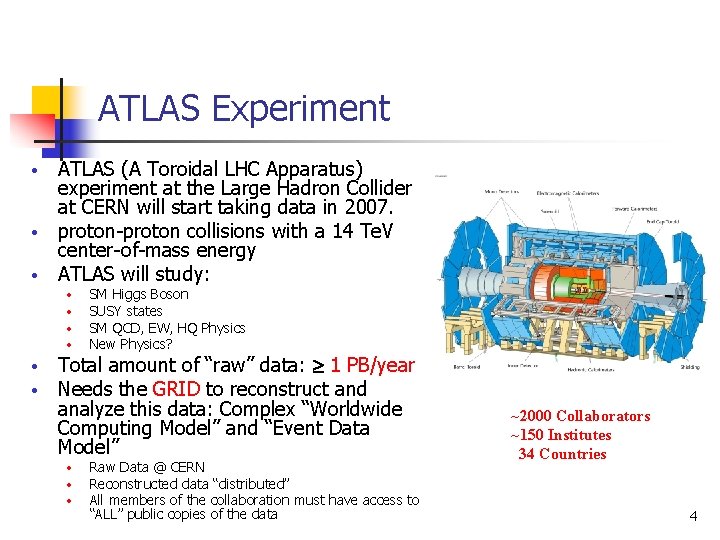

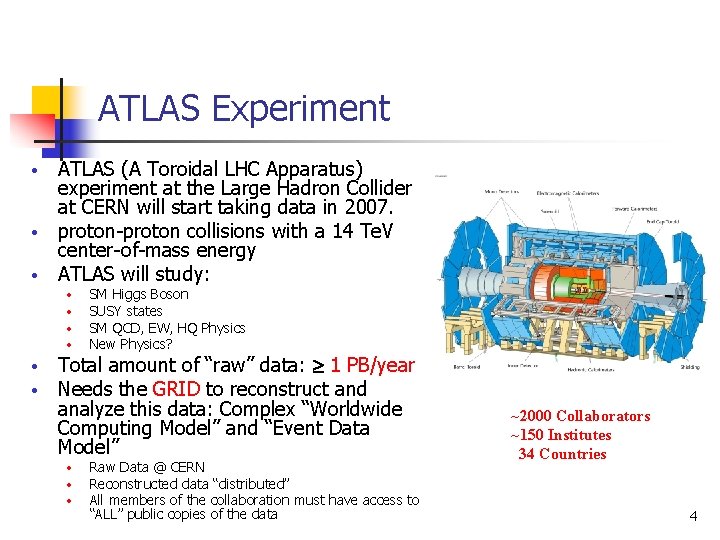

ATLAS Experiment

The ATLAS (A Toroidal LHC Apparatus) experiment at the Large Hadron Collider at CERN will start taking data in 2007.
• proton-proton collisions with a 14 TeV center-of-mass energy
• ATLAS will study:
  • SM Higgs Boson
  • SUSY states
  • SM QCD, EW, HQ Physics
  • New Physics?
• Total amount of "raw" data: 1 PB/year
• Needs the GRID to reconstruct and analyze this data: complex "Worldwide Computing Model" and "Event Data Model"
  • Raw Data @ CERN
  • Reconstructed data "distributed"
  • All members of the collaboration must have access to "ALL" public copies of the data
• ~2000 Collaborators, ~150 Institutes, 34 Countries
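To put the 1 PB/year raw data figure in perspective, the short calculation below converts it to an average sustained rate. It is only a back-of-the-envelope sketch: it assumes a decimal petabyte (10^15 bytes) and spreads the data evenly over a full calendar year, which the slide does not state. Since the accelerator does not run continuously, the rate during actual data taking would be higher. The function name is made up for this illustration.

```python
# Assumption: 1 PB = 1e15 bytes, averaged over a 365-day calendar year.
SECONDS_PER_YEAR = 365 * 24 * 3600   # 31,536,000 s
RAW_BYTES_PER_YEAR = 1e15            # "1 PB/year" from the slide


def average_rate_mb_per_s(bytes_per_year):
    """Average sustained rate in MB/s (decimal megabytes)."""
    return bytes_per_year / SECONDS_PER_YEAR / 1e6


print(round(average_rate_mb_per_s(RAW_BYTES_PER_YEAR), 1))  # 31.7
```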

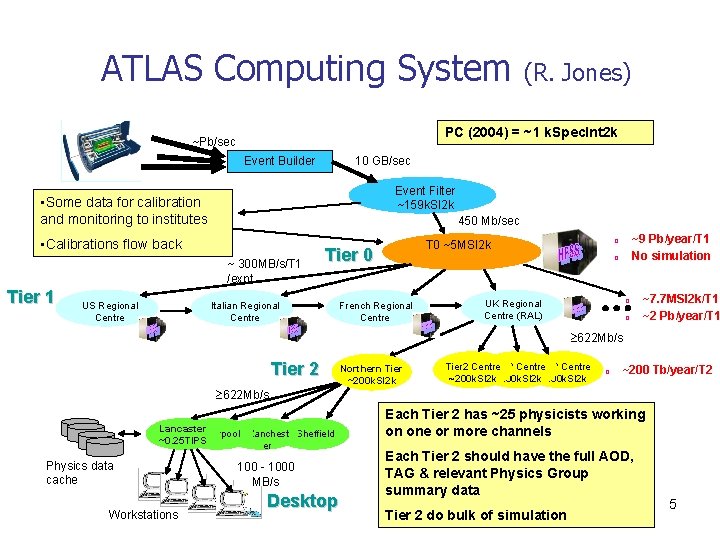

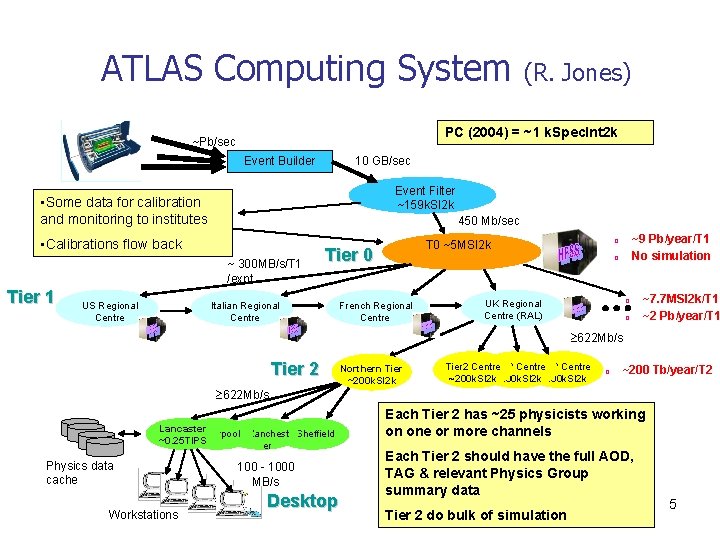

ATLAS Computing System (R. Jones)

[Diagram: tiered computing model, from the Event Builder and Event Filter through Tier 0, Tier 1 and Tier 2 centres to physicists' workstations. Labels recovered from the figure:]
• PC (2004) = ~1 kSpecInt2k
• Event Builder and Event Filter (~159 kSI2k); data rates ~Pb/sec, 10 GB/sec, 450 Mb/sec
• Tier 0: T0 ~5 MSI2k
• ~9 Pb/year/T1, no simulation
• Tier 1: US Regional Centre, Italian Regional Centre, French Regional Centre, UK Regional Centre (RAL); ~7.7 MSI2k/T1, ~2 Pb/year/T1, ~300 MB/s/T1/expt
• Tier 2: Tier 2 Centres of ~200 kSI2k each, including the Northern Tier (Lancaster, Liverpool, Manchester, Sheffield); ~200 Tb/year/T2; 622 Mb/s links
• ~0.25 TIPS
• Physics data cache, workstations and desktops: 100 - 1000 MB/s

Notes on the diagram:
• Some data for calibration and monitoring go to institutes; calibrations flow back
• Each Tier 2 has ~25 physicists working on one or more channels
• Each Tier 2 should have the full AOD, TAG & relevant Physics Group summary data
• Tier 2s do the bulk of simulation

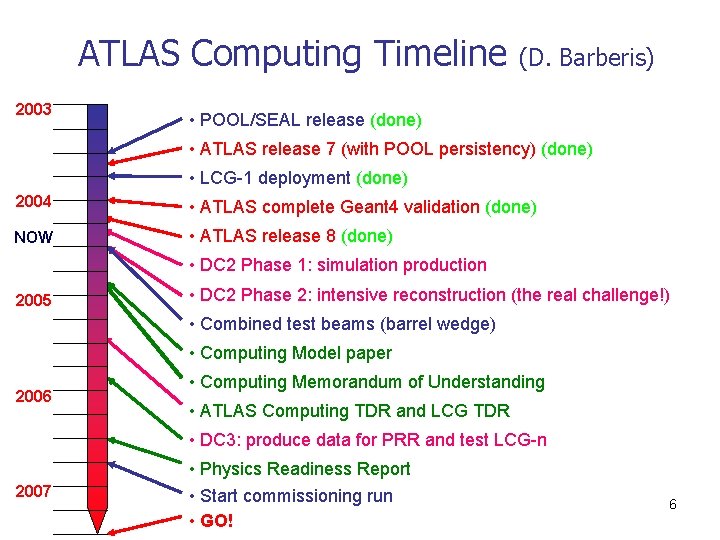

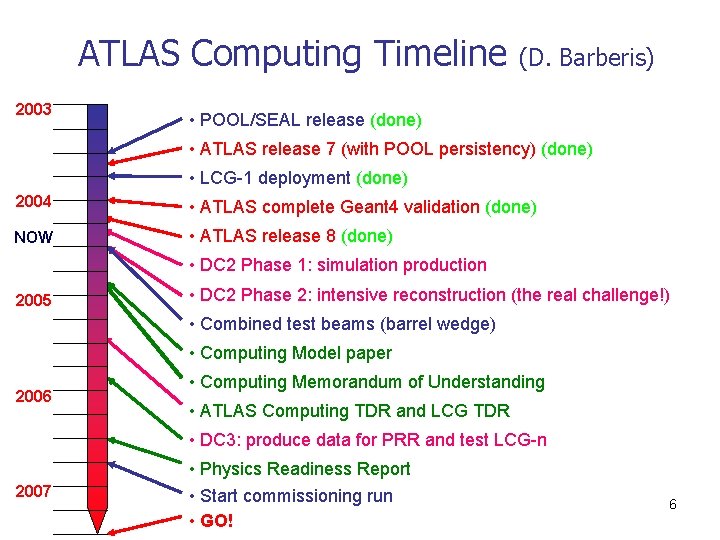

ATLAS Computing Timeline (D. Barberis)

2003
• POOL/SEAL release (done)
• ATLAS release 7 (with POOL persistency) (done)
• LCG-1 deployment (done)

2004 (NOW)
• ATLAS complete Geant4 validation (done)
• ATLAS release 8 (done)
• DC2 Phase 1: simulation production

2005
• DC2 Phase 2: intensive reconstruction (the real challenge!)
• Combined test beams (barrel wedge)
• Computing Model paper

2006
• Computing Memorandum of Understanding
• ATLAS Computing TDR and LCG TDR
• DC3: produce data for PRR and test LCG-n

2007
• Physics Readiness Report
• Start commissioning run
• GO!

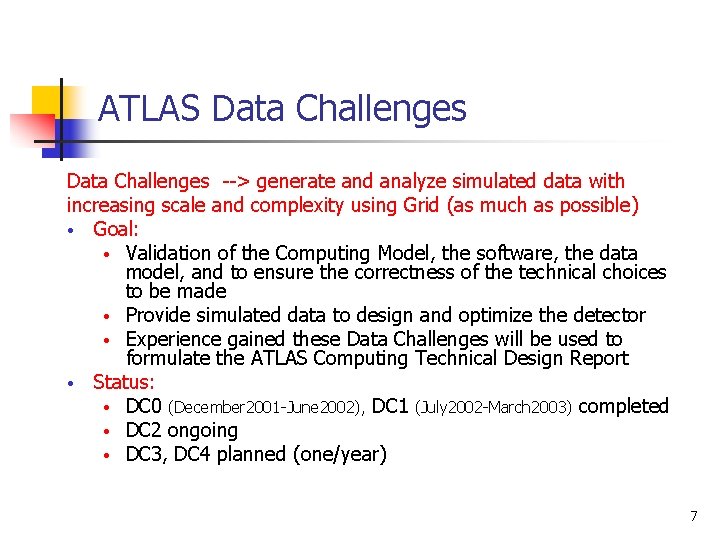

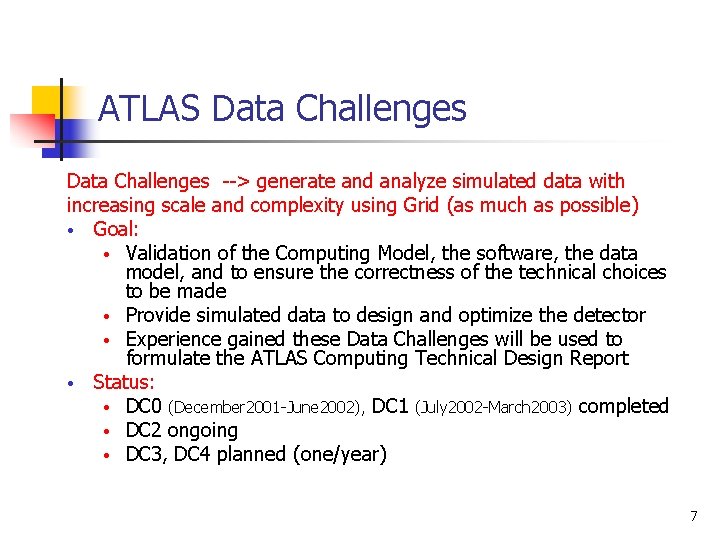

ATLAS Data Challenges

Generate and analyze simulated data with increasing scale and complexity, using the Grid as much as possible.

Goal:
• Validate the Computing Model, the software and the data model, and ensure the correctness of the technical choices to be made
• Provide simulated data to design and optimize the detector
• Experience gained in these Data Challenges will be used to formulate the ATLAS Computing Technical Design Report

Status:
• DC0 (December 2001 - June 2002) and DC1 (July 2002 - March 2003) completed
• DC2 ongoing
• DC3, DC4 planned (one/year)

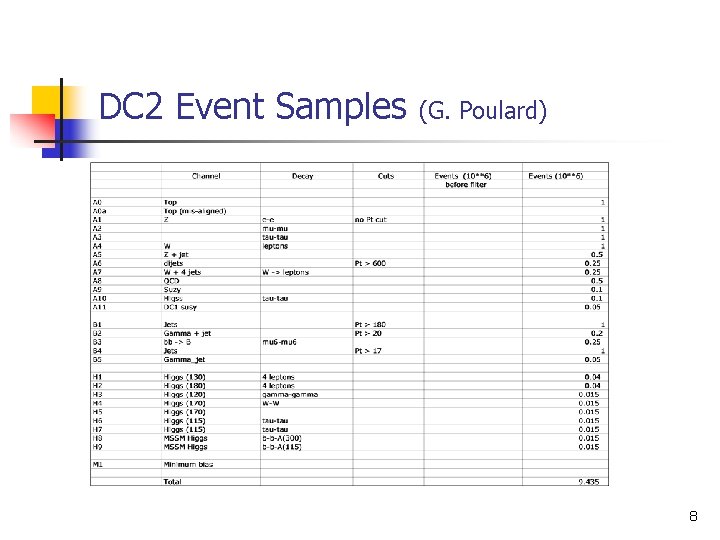

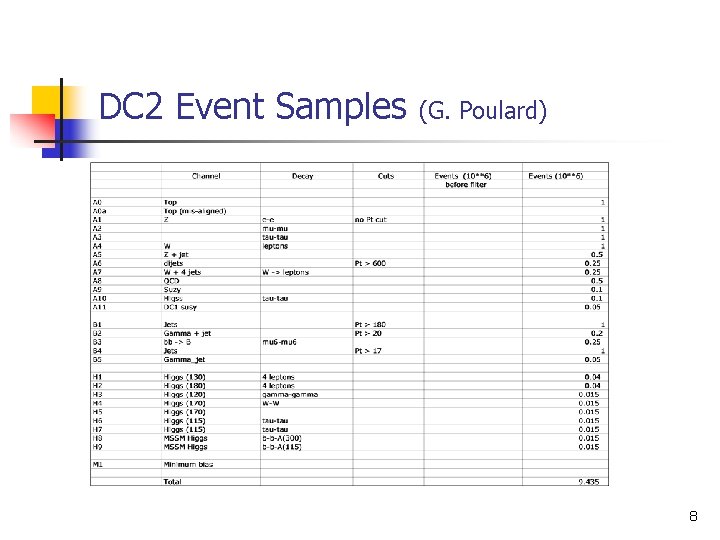

DC2 Event Samples (G. Poulard)

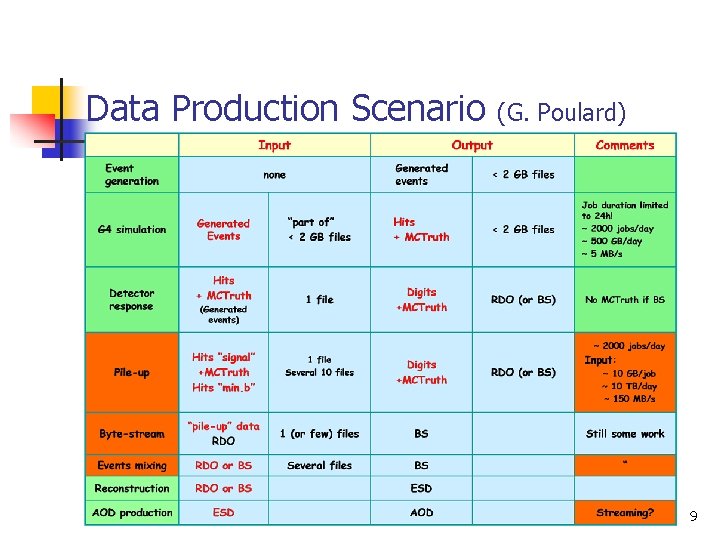

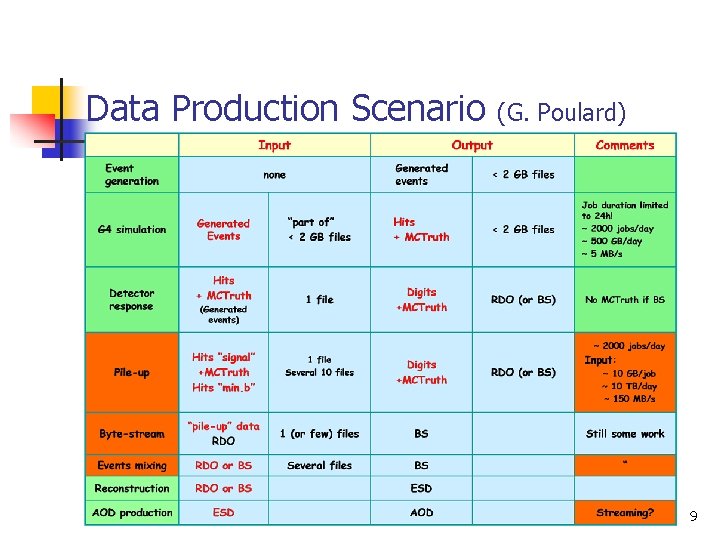

Data Production Scenario (G. Poulard)

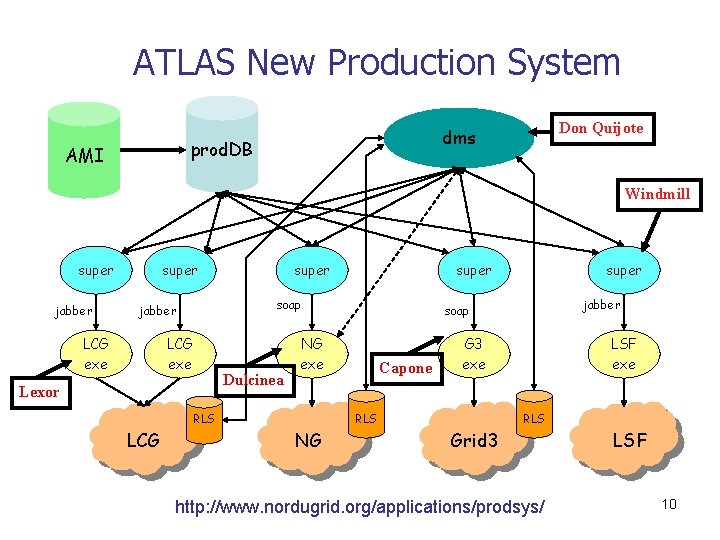

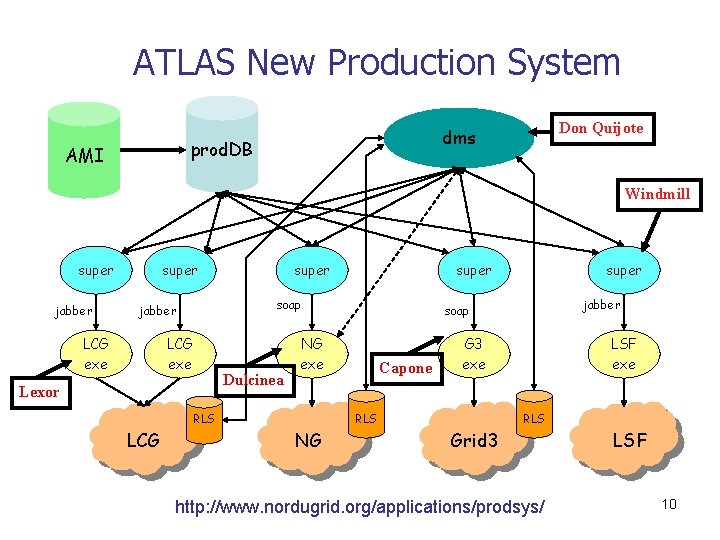

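ATLAS New Production System

[Diagram: architecture of the production system. Labels recovered from the figure:]
• Shared services: production database (prodDB), AMI, Don Quijote data management system (dms)
• Windmill supervisor instances (super), talking to the executors via jabber or soap
• Executors (exe): Lexor (LCG), Dulcinea (NG), Capone (Grid3), and an LSF executor
• An RLS for each of LCG, NG and Grid3

http://www.nordugrid.org/applications/prodsys/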

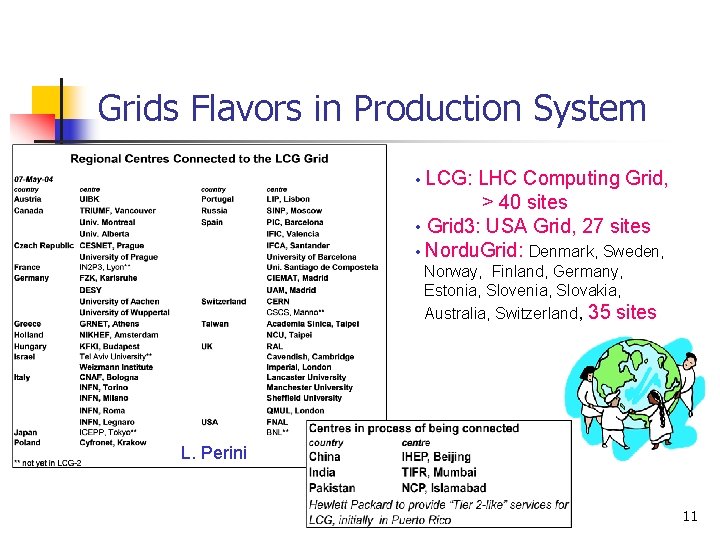

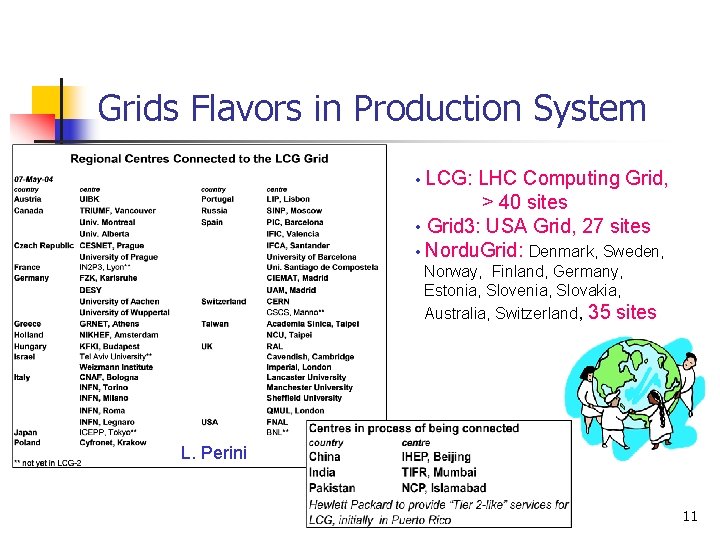

Grid Flavors in Production System (L. Perini)

• LCG: LHC Computing Grid, > 40 sites
• Grid3: USA Grid, 27 sites
• NorduGrid: Denmark, Sweden, Norway, Finland, Germany, Estonia, Slovenia, Slovakia, Australia, Switzerland, 35 sites

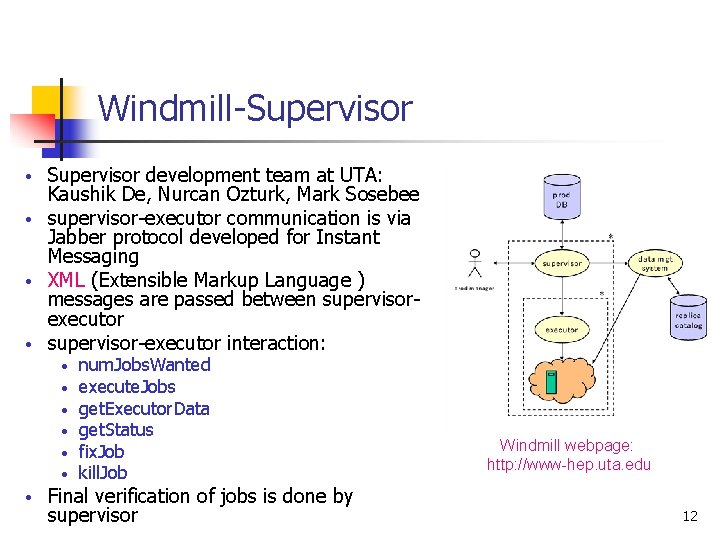

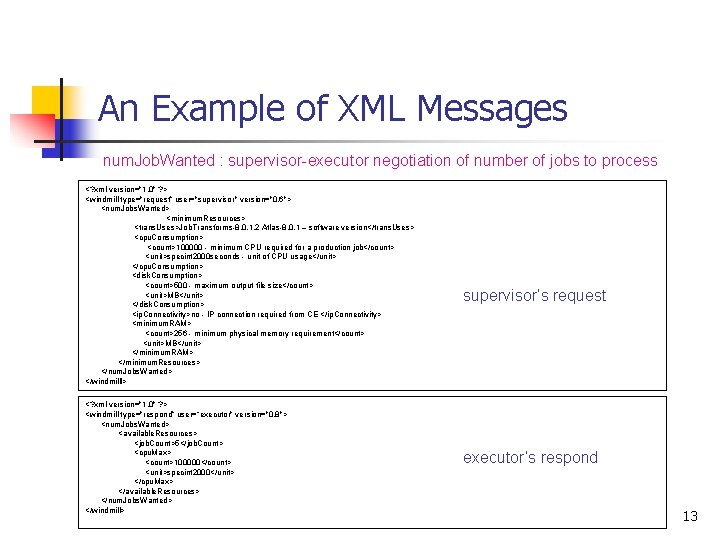

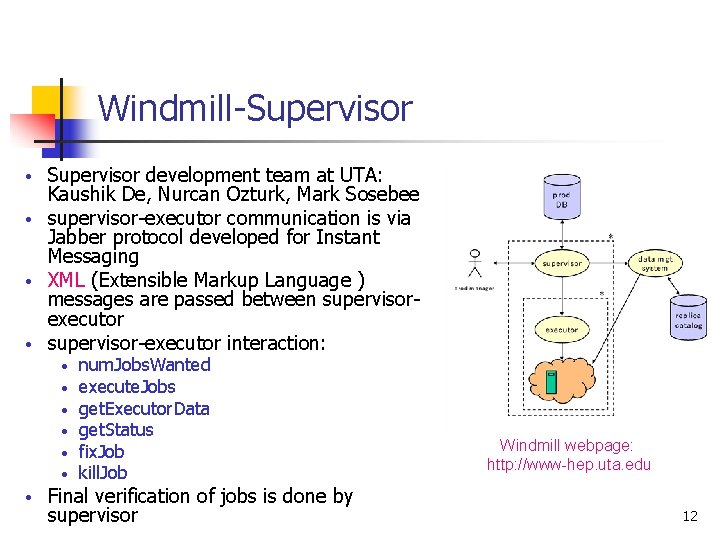

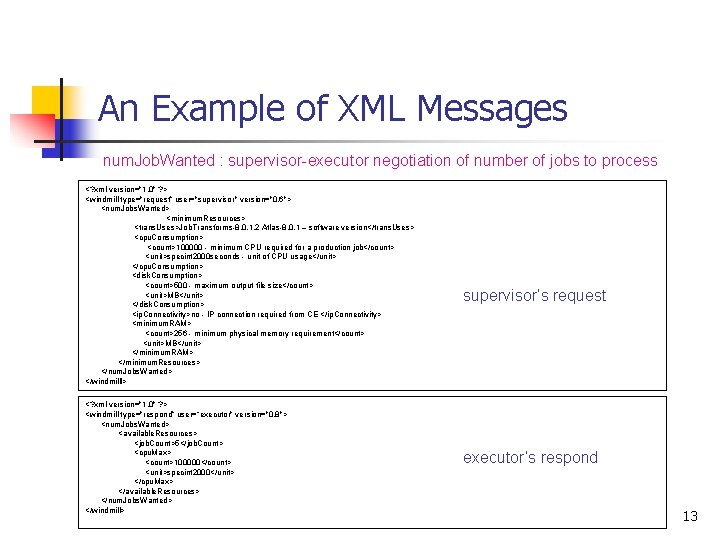

Windmill-Supervisor

• Development team at UTA: Kaushik De, Nurcan Ozturk, Mark Sosebee
• Supervisor-executor communication is via the Jabber protocol, which was developed for instant messaging
• XML (Extensible Markup Language) messages are passed between supervisor and executor
• Supervisor-executor interaction:
  • numJobsWanted
  • executeJobs
  • getExecutorData
  • getStatus
  • fixJob
  • killJob
• Final verification of jobs is done by the supervisor
• Windmill webpage: http://www-hep.uta.edu
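The six supervisor-executor messages can be pictured as a small dispatch table on the executor side. The sketch below is a toy illustration only: the class `ToyExecutor`, the dictionary payloads and the behaviour given to each message are assumptions guessed from the message names, not Windmill's actual code, and the Jabber transport and XML encoding are left out entirely.

```python
# Message names taken from the slide; everything else is hypothetical.
MESSAGES = ("numJobsWanted", "executeJobs", "getExecutorData",
            "getStatus", "fixJob", "killJob")


class ToyExecutor:
    """Toy executor that answers the six supervisor messages in memory."""

    def __init__(self, free_slots):
        self.free_slots = free_slots
        self.jobs = {}  # job id -> state string

    def handle(self, message, payload=None):
        # Dispatch by message name, rejecting anything not in the list.
        if message not in MESSAGES:
            raise ValueError(f"unknown message: {message}")
        return getattr(self, message)(payload or {})

    def numJobsWanted(self, payload):
        return {"jobCount": self.free_slots}

    def executeJobs(self, payload):
        # Accept only as many jobs as there are free slots.
        accepted = payload.get("jobs", [])[: self.free_slots]
        for job_id in accepted:
            self.jobs[job_id] = "running"
        self.free_slots -= len(accepted)
        return {"accepted": accepted}

    def getExecutorData(self, payload):
        return {"free_slots": self.free_slots, "known_jobs": len(self.jobs)}

    def getStatus(self, payload):
        return {j: self.jobs.get(j, "unknown") for j in payload.get("jobs", [])}

    def fixJob(self, payload):
        # Toy behaviour: restart a failed job; slot accounting is ignored here.
        job_id = payload["job"]
        if self.jobs.get(job_id) == "failed":
            self.jobs[job_id] = "running"
        return {job_id: self.jobs.get(job_id, "unknown")}

    def killJob(self, payload):
        # Killing a running job frees its slot.
        job_id = payload["job"]
        if self.jobs.get(job_id) == "running":
            self.jobs[job_id] = "killed"
            self.free_slots += 1
        return {job_id: self.jobs.get(job_id, "unknown")}
```

A supervisor-side exchange would then look like `ex = ToyExecutor(2)`, followed by `ex.handle("numJobsWanted")` to negotiate and `ex.handle("executeJobs", {"jobs": ["a", "b"]})` to submit.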

An Example of XML Messages

numJobsWanted: supervisor-executor negotiation of the number of jobs to process

Supervisor's request:

    <?xml version="1.0" ?>
    <windmill type="request" user="supervisor" version="0.6">
      <numJobsWanted>
        <minimumResources>
          <!-- software version -->
          <transUses>JobTransforms-8.0.1.2 Atlas-8.0.1</transUses>
          <cpuConsumption>
            <!-- minimum CPU required for a production job -->
            <count>100000</count>
            <!-- unit of CPU usage -->
            <unit>specint2000 seconds</unit>
          </cpuConsumption>
          <diskConsumption>
            <!-- maximum output file size -->
            <count>500</count>
            <unit>MB</unit>
          </diskConsumption>
          <!-- IP connection required from CE -->
          <ipConnectivity>no</ipConnectivity>
          <minimumRAM>
            <!-- minimum physical memory requirement -->
            <count>256</count>
            <unit>MB</unit>
          </minimumRAM>
        </minimumResources>
      </numJobsWanted>
    </windmill>

Executor's response:

    <?xml version="1.0" ?>
    <windmill type="respond" user="executor" version="0.8">
      <numJobsWanted>
        <availableResources>
          <jobCount>5</jobCount>
          <cpuMax>
            <count>100000</count>
            <unit>specint2000</unit>
          </cpuMax>
        </availableResources>
      </numJobsWanted>
    </windmill>
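The numJobsWanted exchange can be reproduced with Python's standard `xml.etree.ElementTree` module. This is a minimal sketch rather than Windmill's own code: the element and attribute names follow the example messages, while the function names `parse_request` and `build_response` and the returned dictionary keys are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Supervisor's request, as in the example (slide annotations omitted).
REQUEST = """<?xml version="1.0" ?>
<windmill type="request" user="supervisor" version="0.6">
  <numJobsWanted>
    <minimumResources>
      <transUses>JobTransforms-8.0.1.2 Atlas-8.0.1</transUses>
      <cpuConsumption><count>100000</count><unit>specint2000 seconds</unit></cpuConsumption>
      <diskConsumption><count>500</count><unit>MB</unit></diskConsumption>
      <ipConnectivity>no</ipConnectivity>
      <minimumRAM><count>256</count><unit>MB</unit></minimumRAM>
    </minimumResources>
  </numJobsWanted>
</windmill>"""


def parse_request(xml_text):
    """Extract the minimum resource requirements from a request message."""
    root = ET.fromstring(xml_text)
    res = root.find("numJobsWanted/minimumResources")
    return {
        "type": root.get("type"),
        "release": res.findtext("transUses"),
        "cpu": int(res.findtext("cpuConsumption/count")),
        "disk_mb": int(res.findtext("diskConsumption/count")),
        "needs_ip": res.findtext("ipConnectivity").strip() == "yes",
        "ram_mb": int(res.findtext("minimumRAM/count")),
    }


def build_response(job_count, cpu_max):
    """Build the executor's reply with the number of jobs it can accept."""
    root = ET.Element("windmill",
                      {"type": "respond", "user": "executor", "version": "0.8"})
    wanted = ET.SubElement(root, "numJobsWanted")
    avail = ET.SubElement(wanted, "availableResources")
    ET.SubElement(avail, "jobCount").text = str(job_count)
    cpu = ET.SubElement(avail, "cpuMax")
    ET.SubElement(cpu, "count").text = str(cpu_max)
    ET.SubElement(cpu, "unit").text = "specint2000"
    return ET.tostring(root, encoding="unicode")


requirements = parse_request(REQUEST)
reply = build_response(job_count=5, cpu_max=requirements["cpu"])
```

Here `reply` holds the same structure as the executor's message in the example, serialized on a single line without the XML declaration.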

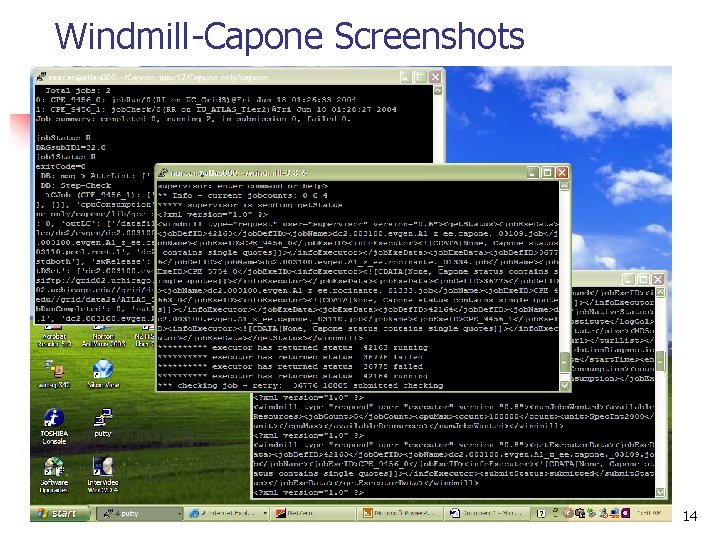

Windmill-Capone Screenshots

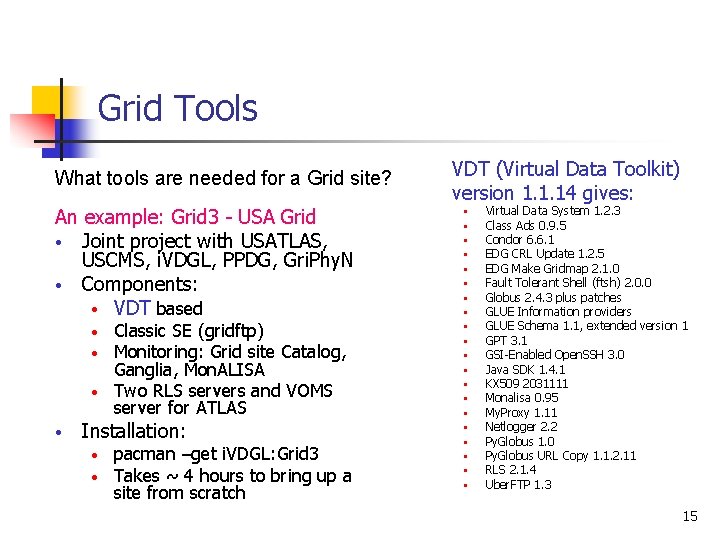

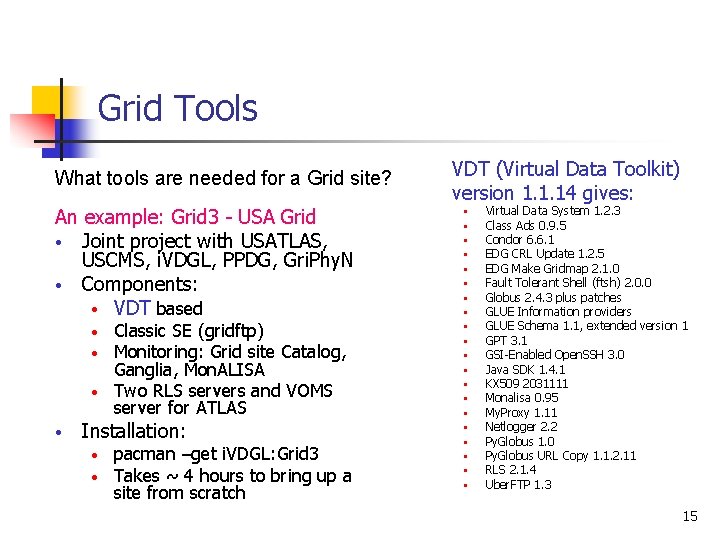

Grid Tools

What tools are needed for a Grid site? An example: Grid3 - USA Grid
• Joint project with USATLAS, USCMS, iVDGL, PPDG, GriPhyN
• Components:
  • VDT based
  • Classic SE (gridftp)
  • Monitoring: Grid site Catalog, Ganglia, MonALISA
  • Two RLS servers and VOMS server for ATLAS
• Installation:
  • pacman -get iVDGL:Grid3
  • Takes ~4 hours to bring up a site from scratch

VDT (Virtual Data Toolkit) version 1.1.14 gives:
• Virtual Data System 1.2.3
• ClassAds 0.9.5
• Condor 6.6.1
• EDG CRL Update 1.2.5
• EDG Make Gridmap 2.1.0
• Fault Tolerant Shell (ftsh) 2.0.0
• Globus 2.4.3 plus patches
• GLUE Information providers
• GLUE Schema 1.1, extended version 1
• GPT 3.1
• GSI-Enabled OpenSSH 3.0
• Java SDK 1.4.1
• KX509 2031111
• Monalisa 0.95
• MyProxy 1.11
• Netlogger 2.2
• PyGlobus 1.0
• PyGlobus URL Copy 1.1.2.11
• RLS 2.1.4
• UberFTP 1.3

Conclusions

• The Grid paradigm works: opportunistic use of existing resources; run anywhere, from anywhere, by anyone...
• Grid computing is a challenge and needs worldwide collaboration
• Data production using the Grid is possible, and successful so far
• Data Challenges are the way to test the ATLAS computing model before the real experiment starts
• Data Challenges also provide data for Physics groups
• Data Challenges are a learning and improving experience