
ATLAS DDM Operations Issues
Alexei Klimentov, BNL
US ATLAS DDM and Production Workshop
BNL, Sep 29th 2006
Sep 29, 2006 US ATLAS DDM WS. A. Klimentov

DDM Operations issues
• DDM Operations (highlights from ATLAS SW week)
• DDM T1/T2 functional test
• Conclusions and Action items

ATLAS Distributed Data Management Operations Note (ATL-COM-SOFT-2006-008)
ATLAS Distributed Data Management Operations
D. Barberis, J. Chudoba, S. Jezequel, J. Kennedy, A. Klimentov, D. Liko, P. Nevski, A. Olszewski, L. Perini, G. Poulard
• DDM day-by-day operations
• Operations team organization, T2 reps joined DDM ops team
• Roles and responsibilities of Tier-1s and Tier-2s coordinators
https://twiki.cern.ch/twiki/bin/viewfile/Atlas/DDMOperations?rev=2;filename=DDMops_Note.pdf

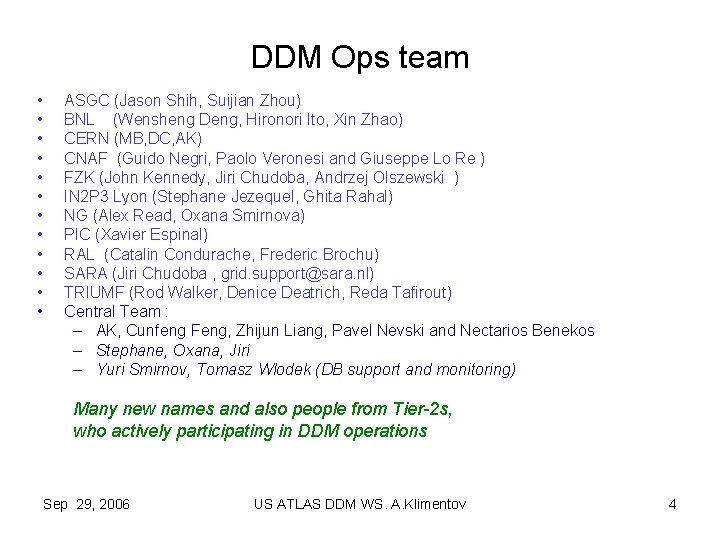

DDM Ops team
• ASGC (Jason Shih, Suijian Zhou)
• BNL (Wensheng Deng, Hironori Ito, Xin Zhao)
• CERN (MB, DC, AK)
• CNAF (Guido Negri, Paolo Veronesi and Giuseppe Lo Re)
• FZK (John Kennedy, Jiri Chudoba, Andrzej Olszewski)
• IN2P3 Lyon (Stephane Jezequel, Ghita Rahal)
• NG (Alex Read, Oxana Smirnova)
• PIC (Xavier Espinal)
• RAL (Catalin Condurache, Frederic Brochu)
• SARA (Jiri Chudoba, grid.support@sara.nl)
• TRIUMF (Rod Walker, Denice Deatrich, Reda Tafirout)
Central Team:
– AK, Cunfeng Feng, Zhijun Liang, Pavel Nevski and Nectarios Benekos
– Stephane, Oxana, Jiri
– Yuri Smirnov, Tomasz Wlodek (DB support and monitoring)
Many new names, including people from Tier-2s, who are actively participating in DDM operations

DDM Operations (US ATLAS)
• Alexei Klimentov and Wensheng Deng
– BNL: Wensheng Deng, Hironori Ito, Xin Zhao
– GLTier2: UM: Shawn McKee
– NETier2: BU: Saul Youssef
– WTier2: Wei Yang, Stephen Gowdy
– SWTier2: Patrick McGuigan
  – OU: Horst Severini, Karthik Arunachalam
  – UTA: Patrick McGuigan, Mark Sosebee
– MWTier2: Dan Schrager
  • IU: Kristy Kallback-Rose, Dan Schrager
  • UC: Robert Gardner, Greg Cross
http://www.usatlas.bnl.gov/twiki/bin/view/Projects/ATLASDDM

DDM Operations Team Responsibilities (Central Team)
• Day-by-day data transfer between Tier-0 and Tier-1s
– data transfer monitoring and control
– data rerouting in case of Tier-1/Tier-2 or transfer channel instability
– resolving data transfer errors and providing first-line expertise
– reporting data transfer problems to the Computing Operations Coordinator
• Deployment of DDM/DQ2 releases
• 24/7 support of central DDM operations facilities
– production server at CERN
– DQ2 client
– central databases
• Support the DDM Savannah user requests portal
• Keep data integrity, in particular clean obsolete dataset and file entries from LFC/LRC and DDM catalogues
• Help ATLAS users to transfer data
• Communication with service developers and providers (both ATLAS and WLCG) and CERN Networking personnel (NetOps)

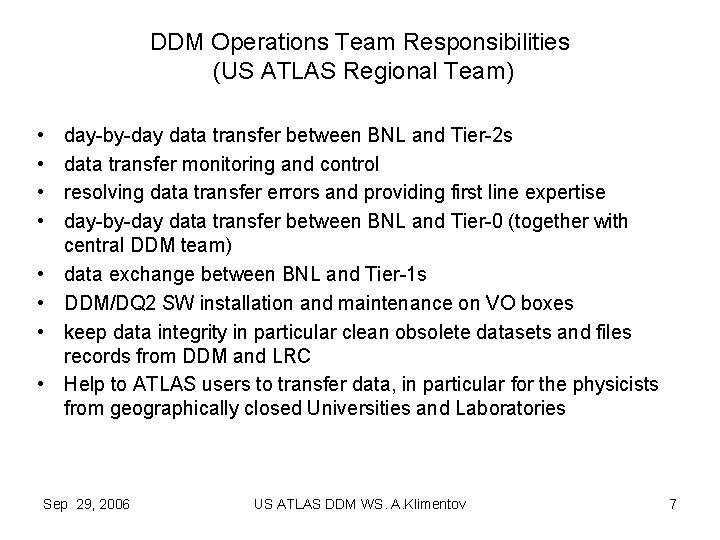

DDM Operations Team Responsibilities (US ATLAS Regional Team)
• Day-by-day data transfer between BNL and Tier-2s
• Data transfer monitoring and control
• Resolving data transfer errors and providing first-line expertise
• Day-by-day data transfer between BNL and Tier-0 (together with central DDM team)
• Data exchange between BNL and Tier-1s
• DDM/DQ2 SW installation and maintenance on VO boxes
• Keep data integrity, in particular clean obsolete dataset and file records from DDM and LRC
• Help ATLAS users to transfer data, in particular physicists from geographically close Universities and Laboratories

LYON CLOUD
List of T1/T2/T3
• Tier-1: CCIN2P3 Lyon
• Tier-2: GRIF: CEA/DAPNIA, LAL, (LLR), LPNHE, (IPNO)
• Tier-2: (Subatech Nantes)
• Tier-2: LPC Clermont-Ferrand
• Centre d’Analyse Lyon
• Tier-3: LAPP Annecy
• Tier-3: CPPM Marseille
• Non-French sites: TOKYO, BEIJING (upgraded SE end August)
Ready since end August
Preparation and follow-up
● Meeting every 3-4 weeks of French sites + Tokyo (I. Ueda)
● Fast and effective interactions with the responsible people in each T2/T3
● Tests of plain FTS made before SC4
S. Jezequel, G. Rahal: Computing Operations Session

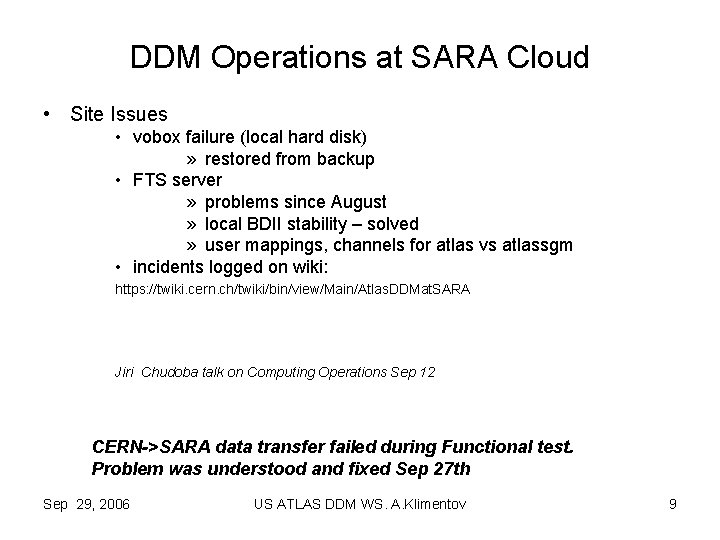

DDM Operations at SARA Cloud
• Site Issues
• vobox failure (local hard disk)
» restored from backup
• FTS server
» problems since August
» local BDII stability – solved
» user mappings, channels for atlas vs atlassgm
• incidents logged on wiki: https://twiki.cern.ch/twiki/bin/view/Main/AtlasDDMatSARA
Jiri Chudoba, talk on Computing Operations, Sep 12
CERN->SARA data transfer failed during Functional test. Problem was understood and fixed Sep 27th

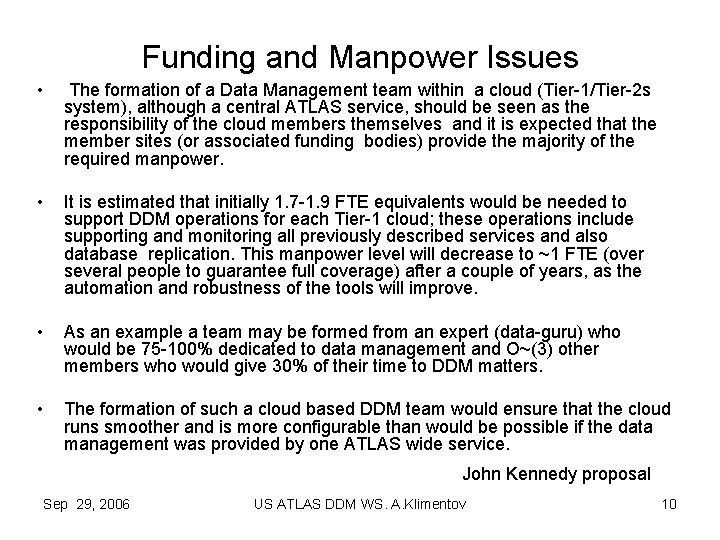

Funding and Manpower Issues
• The formation of a Data Management team within a cloud (Tier-1/Tier-2s system), although a central ATLAS service, should be seen as the responsibility of the cloud members themselves, and it is expected that the member sites (or associated funding bodies) provide the majority of the required manpower.
• It is estimated that initially 1.7-1.9 FTE would be needed to support DDM operations for each Tier-1 cloud; these operations include supporting and monitoring all previously described services and also database replication. This manpower level will decrease to ~1 FTE (spread over several people to guarantee full coverage) after a couple of years, as the automation and robustness of the tools improve.
• As an example, a team may be formed from an expert (data-guru) who would be 75-100% dedicated to data management and O(3) other members who would give 30% of their time to DDM matters.
• The formation of such a cloud-based DDM team would ensure that the cloud runs more smoothly and is more configurable than would be possible if data management were provided by one ATLAS-wide service.
John Kennedy proposal
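The example team can be checked against the 1.7-1.9 FTE estimate with a few lines of arithmetic. This is only a sketch: reading O(3) as exactly three part-time members is an assumption, and the function name is illustrative.

```python
# Worked arithmetic for the example team described on the slide.
# Assumption: "O(3) other members" is taken as exactly 3 people.

def team_fte(expert_fraction: float, n_members: int, member_fraction: float) -> float:
    """Total FTE for one expert plus n part-time members."""
    return expert_fraction + n_members * member_fraction

low = team_fte(0.75, 3, 0.30)   # expert dedicated 75%
high = team_fte(1.00, 3, 0.30)  # expert dedicated 100%
print(f"{low:.2f} - {high:.2f} FTE")
```

Under this reading the team adds up to roughly 1.65-1.90 FTE, in line with the quoted estimate.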

DDM Ops Main activities since June 1/2
✓ ATLAS Distributed Data Management Operations Note: ATL-COM-SOFT-2006-008 (https://twiki.cern.ch/twiki/bin/viewfile/Atlas/DDMOperations?rev=2;filename=DDMops_Note.pdf)
✓ Opening up FTS site-to-site access – Dan Schrager (https://twiki.cern.ch/twiki/bin/viewfile/Atlas/DDMOperations?rev=1;filename=D. S)
✓ FTS channels set up (BNL-Tier-1s) – Hironori Ito
✓ DDM ops Savannah page – Z. Liang et al
✓ DDM ops (and DDM developers) HyperNews
✓ US ATLAS DDM operations team as a part of DDM ops
✓ DQ2 installation procedure (Patrick, Horst, Dan)
✓ OSG-specific datasets/files cleaning script (Wensheng, Patrick)

DDM Ops Main activities since June 2/2
✓ DDM/ProdSys integration (also part of SWING activity) – AK, PN
✓ CSC11 AOD datasets consolidation (together with D. Liko et al)
  at CERN – Cunfeng Feng, Zhijun Liang, Pavel Nevski
  at LYON – Stephane Jezequel
  at BNL – David Adams, Wensheng Deng, Xin Zhao
  at FZK – John Kennedy
  But the goal of consolidating all AODs at 3 centers has not been reached yet.
✓ Nordic Grid/DQ2 integration – Alex Read
  Automatic datasets book-keeping
  Automatic datasets copying to CERN
  1st step to full integration
✓ T1/T2 data transfer functional test – AK, Pavel Nevski

ATLAS Tier1-Tier2s Data Transfer Functional Test (Sep 11 - Sep 21)
Alexei Klimentov, Pavel Nevski

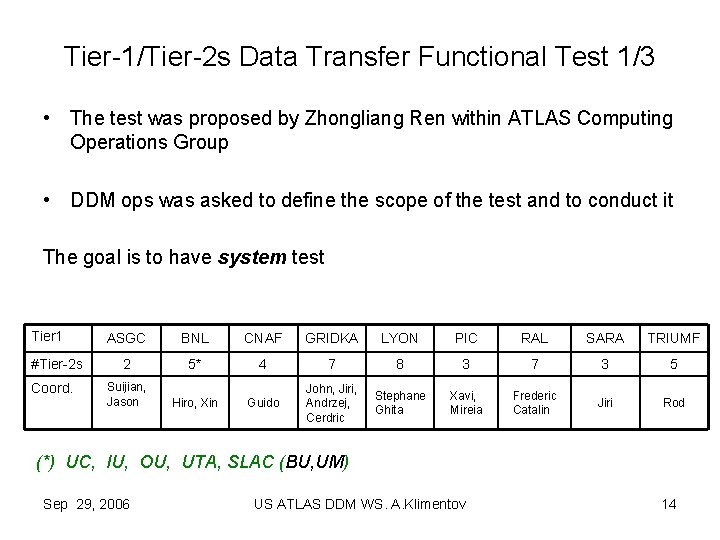

Tier-1/Tier-2s Data Transfer Functional Test 1/3
• The test was proposed by Zhongliang Ren within the ATLAS Computing Operations Group
• DDM ops was asked to define the scope of the test and to conduct it
The goal is to have a system test

| Tier-1 | # Tier-2s | Coordinators |
|---|---|---|
| ASGC | 2 | Suijian, Jason |
| BNL | 5* | Hiro, Xin |
| CNAF | 4 | Guido |
| GRIDKA | 7 | John, Jiri, Andrzej, Cerdric |
| LYON | 8 | Stephane, Ghita |
| PIC | 3 | Xavi, Mireia |
| RAL | 7 | Frederic, Catalin |
| SARA | 3 | Jiri |
| TRIUMF | 5 | Rod |

(*) UC, IU, OU, UTA, SLAC (BU, UM)

Tier-1/Tier-2s Data Transfer Functional Test 2/3
• The functional test is the first phase of a regular DDM system check-up
• Sites have been asked to run the test without any special actions.
– Exceptions:
  • ASGC (the site was used by developers just before the test to debug DDM software)
  • LYON and RAL (the latest version of DQ2 was installed. CC & AK)
• A coherent data transfer test between Tier-1 and Tier-2s for all ‘clouds’, using existing SW to generate data, replicate them to ATLAS sites, monitor and control data transfer.
– Data generated by ATLAS MC production SW
– Organized in DQ2 datasets
Performance evaluation is not the goal of the test
We want to know how functional our system is

Tier-1/Tier-2s Data Transfer Functional Test 3/3
– Step 1
  • 1a: Data distribution from Tier-0 to Tier-1
  • 1b: Data distribution from Tier-1 to Tier-2s within the cloud
  • 1c: Bulk data transfer from CERN to Tier-1
– Step 2
  • For Tier-2s that passed step 1b, consolidate data from Tier-2s on Tier-1
  • Step 2a – Access data located at Tier-2s in a “foreign cloud” from Tier-1 (BNL). Opening up FTS site-to-site access (Dan’s proposal)
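The only explicit gating rule in the steps above is that Step 2 applies to Tier-2s that passed step 1b. A minimal sketch of that selection follows; the site names and results are made up for illustration and are not test data.

```python
from __future__ import annotations

# Hypothetical sketch of the Step 2 selection rule.
# Site names and pass/fail values below are invented placeholders.

def tier2s_eligible_for_step2(step1b_results: dict[str, bool]) -> list[str]:
    """Return the Tier-2s that passed step 1b, sorted by name."""
    return sorted(site for site, passed in step1b_results.items() if passed)

example = {"T2_A": True, "T2_B": False, "T2_C": True}
print(tier2s_eligible_for_step2(example))
```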

Test Statistics
• 8 sites passed Step 1a (CERN -> Tier-1)
• 3.5 sites passed Step 1b (BNL, CNAF, GRIDKA and LYON)
– Severe SW errors were found during Step 1b
• The test for several sites was postponed:
– ASGC, GRIDKA, PIC and RAL
• DDM developers started investigating the problems
• Step 1c was executed for 4 sites (CNAF, BNL, GRIDKA and LYON)
• 2 sites completed the test (LYON and CNAF)
– The bulk data transfer from CERN to BNL did not succeed (slow subscription). The problem is understood and fixes will be provided by the DDM core team.

Typical DDM problems observed
• Extremely long subscription servicing cycle (20 or more hours between the dataset subscription request and the first action to process it)
• Obscure subscription history, lack of monitoring and control
• DDM/DQ2 SW and Computing Facilities interference (DQ2/Storage and DQ2/FTS interference: data replication requests from PIC and ASGC blocked the BNL storage system. LYON was also blocked by T2 data transfer)
• Inconsistencies in DDM monitoring
• Site FTS related errors: ‘0’ length files on CASTOR, file overwrites on dCache, keeping FTS/LFC/etc busy and blocking data transfer to the particular site
• Dataset location: a site is shown as a dataset replica holder even though there are no data on the site or the data transfer failed.
• 100% data replication is rare, and there is no way to check, control and retransfer automatically.

Errors observed: Site related
• HW failures and power outages
• FTS misconfiguration
• Authentication failures
• Problems with local SE
• Problems with SW components (non-ATLAS, e.g. ORACLE)
It takes from 2 h to 3 days to locate and fix problems. All sites have people responding to error reports

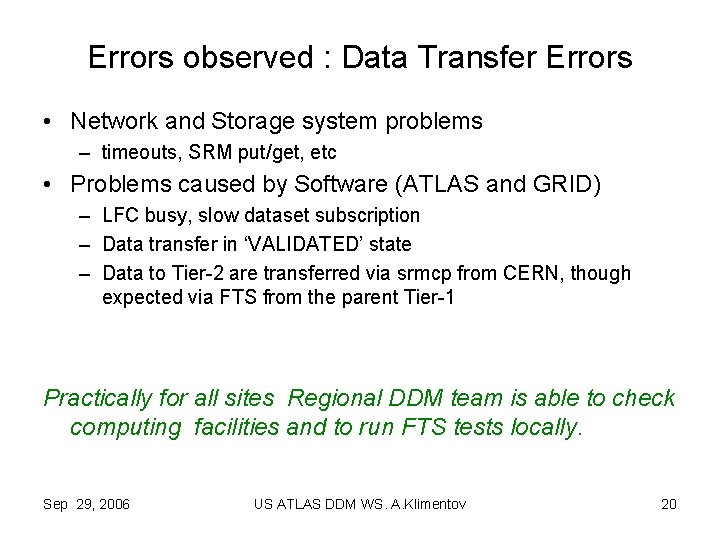

Errors observed: Data Transfer Errors
• Network and Storage system problems
– timeouts, SRM put/get, etc
• Problems caused by Software (ATLAS and GRID)
– LFC busy, slow dataset subscription
– Data transfer in ‘VALIDATED’ state
– Data to Tier-2 are transferred via srmcp from CERN, though expected via FTS from the parent Tier-1
For practically all sites, the Regional DDM team is able to check computing facilities and to run FTS tests locally.

Errors observed: Data Transfer Failures
• No data transfer to and/or from site
The most severe errors. REQUIRE ACTION FROM DDM DEVELOPERS
Typical cases:
– file transfer is in ‘ASSIGNED’ state for days
– dataset subscription isn’t processed
– data transfer agent crashed
Impossible to reliably monitor the above errors from DDM monitoring. To find information one must dig in local log files.
Fixes required manual intervention and manipulation of the local DQ2 database and VO box.
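A check for the first typical case (files sitting in one state for days) could look roughly like the sketch below. The record layout `(file_name, state, state_since)` and the one-day default threshold are assumptions for illustration; this is not the DQ2 database schema or any DQ2 API.

```python
from datetime import datetime, timedelta

def stuck_transfers(transfers, state="ASSIGNED", max_age=timedelta(days=1), now=None):
    """Return names of files that have been in `state` for at least `max_age`.

    transfers: iterable of (file_name, current_state, state_since) tuples.
    The tuple layout is hypothetical, chosen only for this example.
    """
    now = now if now is not None else datetime.now()
    return [name for name, current, since in transfers
            if current == state and now - since >= max_age]

# Invented sample rows; timestamps are placeholders, not test logs.
rows = [
    ("file_1", "ASSIGNED", datetime(2006, 9, 17, 12, 0)),
    ("file_2", "ASSIGNED", datetime(2006, 9, 20, 6, 0)),
    ("file_3", "VALIDATED", datetime(2006, 9, 10, 0, 0)),
]
print(stuck_transfers(rows, now=datetime(2006, 9, 20, 12, 0)))
```

Passing `now` explicitly keeps the check reproducible when it is run against saved records.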

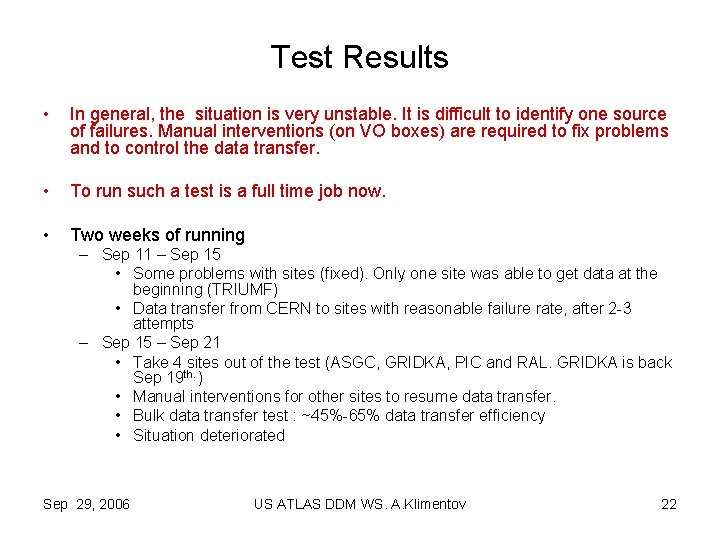

Test Results
• In general, the situation is very unstable. It is difficult to identify one source of failures. Manual interventions (on VO boxes) are required to fix problems and to control the data transfer.
• To run such a test is a full-time job now.
• Two weeks of running
– Sep 11 – Sep 15
  • Some problems with sites (fixed). Only one site was able to get data at the beginning (TRIUMF)
  • Data transfer from CERN to sites with reasonable failure rate, after 2-3 attempts
– Sep 15 – Sep 21
  • 4 sites taken out of the test (ASGC, GRIDKA, PIC and RAL. GRIDKA is back Sep 19th.)
  • Manual interventions for other sites to resume data transfer.
  • Bulk data transfer test: ~45%-65% data transfer efficiency
  • Situation deteriorated
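The slides do not define "data transfer efficiency". One common reading, assumed here, is the share of attempted file transfers that succeeded. The counts in the example are invented to reproduce the percentages, not taken from the test.

```python
# Assumed definition: efficiency = succeeded / attempted, as a percentage.
# The slides quote only the resulting percentages, not the underlying counts.

def transfer_efficiency(succeeded: int, attempted: int) -> float:
    """Percentage of attempted transfers that succeeded."""
    if attempted <= 0:
        raise ValueError("attempted must be positive")
    return 100.0 * succeeded / attempted

# Hypothetical counts chosen to match the quoted range.
print(f"{transfer_efficiency(45, 100):.0f}%")
print(f"{transfer_efficiency(65, 100):.0f}%")
```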

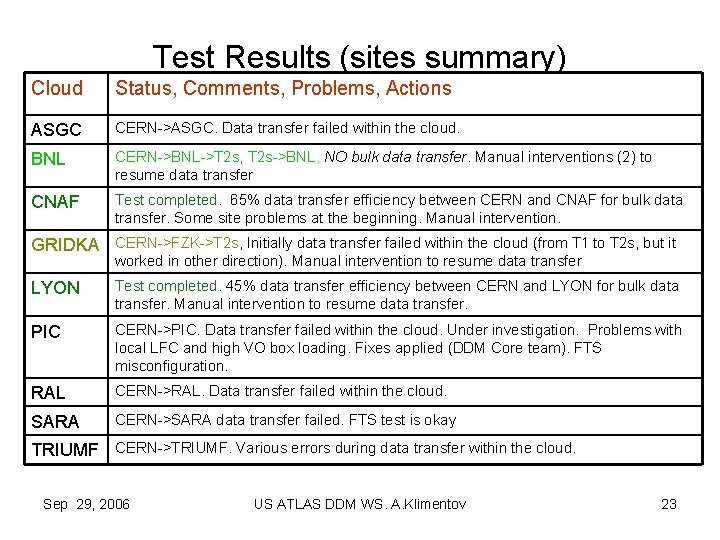

Test Results (sites summary)

| Cloud | Status, Comments, Problems, Actions |
|---|---|
| ASGC | CERN->ASGC. Data transfer failed within the cloud. |
| BNL | CERN->BNL->T2s, T2s->BNL, NO bulk data transfer. Manual interventions (2) to resume data transfer |
| CNAF | Test completed. 65% data transfer efficiency between CERN and CNAF for bulk data transfer. Some site problems at the beginning. Manual intervention. |
| GRIDKA | CERN->FZK->T2s. Initially data transfer failed within the cloud (from T1 to T2s, but it worked in the other direction). Manual intervention to resume data transfer |
| LYON | Test completed. 45% data transfer efficiency between CERN and LYON for bulk data transfer. Manual intervention to resume data transfer. |
| PIC | CERN->PIC. Data transfer failed within the cloud. Under investigation. Problems with local LFC and high VO box loading. Fixes applied (DDM Core team). FTS misconfiguration. |
| RAL | CERN->RAL. Data transfer failed within the cloud. |
| SARA | CERN->SARA data transfer failed. FTS test is okay |
| TRIUMF | CERN->TRIUMF. Various errors during data transfer within the cloud. |

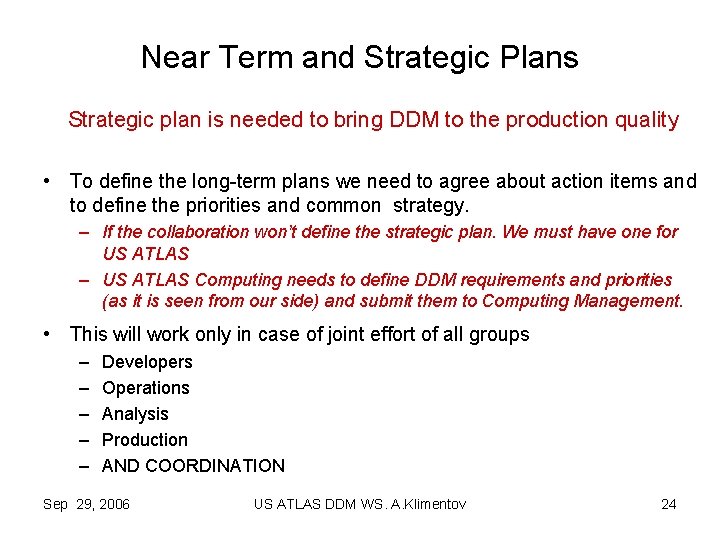

Near Term and Strategic Plans
A strategic plan is needed to bring DDM to production quality
• To define the long-term plans we need to agree on action items and to define the priorities and common strategy.
– If the collaboration does not define a strategic plan, we must have one for US ATLAS
– US ATLAS Computing needs to define DDM requirements and priorities (as seen from our side) and submit them to Computing Management.
• This will work only with a joint effort of all groups
– Developers
– Operations
– Analysis
– Production
AND COORDINATION

Near Term Plans (DDM ops)
• DDM Operations Issues
– AOD and ESD datasets consolidation at CERN, BNL, LYON, FZK
  C. Feng, S. Jezequel, D. Adams, X. Zhao, P. Nevski, J. Kennedy
– Check Data Integrity
  • BNL (Wensheng) – generic script to check LRC/”storage”/DQ2
  • Pre-DQ data consolidation (Cunfeng)
  • Check for obscure subscriptions, files, datasets and start clean up (TBD: Dietrich, Cunfeng, Liang, Pavel, AK)
– Database replication to ATLAS sites (P. Nevski together with A. Vanyashine et al)
– Support users’ requests for data transfer
  • Hiro, Xin, Tadashi, Pavel, David, AK
– Maintain DDM facilities
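The data integrity check between LRC, storage and DQ2 comes down to comparing three file listings. The sketch below shows the idea with plain set differences. It is not the BNL script: how each listing is obtained is left out, and the function and key names are illustrative.

```python
# Hypothetical three-way comparison of file identifiers.
# Inputs are plain iterables of names; obtaining them from LRC, the storage
# system, or DQ2 is outside the scope of this sketch.

def compare_catalogs(lrc, storage, dq2):
    """Report files present in one listing but missing from another."""
    lrc, storage, dq2 = set(lrc), set(storage), set(dq2)
    return {
        "in_lrc_not_on_storage": sorted(lrc - storage),
        "on_storage_not_in_lrc": sorted(storage - lrc),
        "in_dq2_not_in_lrc": sorted(dq2 - lrc),
        "in_lrc_not_in_dq2": sorted(lrc - dq2),
    }

# Invented file names for illustration.
report = compare_catalogs(
    lrc=["a", "b", "c"],
    storage=["b", "c", "d"],
    dq2=["a", "b", "e"],
)
for kind, files in report.items():
    print(kind, files)
```

Entries under `in_lrc_not_on_storage` would be candidates for catalogue clean-up, and `on_storage_not_in_lrc` for orphaned files, but any clean-up policy is a decision for the operations team.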

Near Term Plans (Functional Test)
• Continue the test after the SC4 test (mid-October). The test scope will be updated.
• Include the functional test as a part of scheduled tests coordinated by Gilbert Poulard.
• Consider the functional test as a part of DDM ops activity and run the test regularly (GP)

Near Term Plans (installation and deployment)
• DQ2 new releases and deployment
– DDM ops and T1/T2 site coordinators will take responsibility for DDM deployment.
– Major releases should be beta-tested by the DDM ops group (as was done for 0.2.9).
– GRID-specific features must be tested in advance
– Coherent deployment for all sites
– Priority must be given to ATLAS production needs
– DDM/DQ2 “full-scale” test bed (anyway we will need it very soon)

Near Term Plans (integration with Prodsys)
• DDM/ProdSys Integration
– NordicGrid/DQ2 Integration (A. Read, Z. Liang, AK)
– ‘Final’ Job/Files state (P. Nevski, AK)
– “input” files repository (problems with inter-grid files replication)
– Missing files in MC datasets (P. Nevski)
  • Need to discuss with Rod, Kaushik, Simone (probably can be done within ‘Final’ Job check).
– Production datasets tables, task definitions, integration with AKTR (P. Nevski, AK)

Conclusions 1/2
• Improvements during the last 3 months are evident
– DDM operations team is set up
– More people are involved in DDM operations and technical discussion
– GRID and Prodsys integration with DQ2
– Users work with datasets and the DQ2 subscription mechanism
• DQ2 release 0.2.1x and monitoring are a dramatic step forward. We need to keep it running for a while to find as many correlations and bottlenecks of computing elements and DDM/DQ2 SW as possible
– Some bottlenecks are already observed even for the modest data rates we have now (many of them are known from Panda experience, many are new and related to inter-site communication)

Conclusions 2/2
Many problems are related not only to DQ2, but also to the late DQ2/prodsys integration, GRID tools, local settings, computing storage facilities and site file catalogs
For the end-user it is a problem with ATLAS DDM SW
WE NEED TO DRAW UP A PLAN FOR HOW TO IMPROVE THE SITUATION

Action Items
• SWING, DDM ops and DDM developers must discuss and prepare the list of priorities for the next DQ2 release(s) to bring the ATLAS DDM system to production quality
• DDM production and DDM developers' computing facilities must be kept separate. The SW upgrade on production facilities can be done only after testing and debugging. No last-minute upgrades.
• GRID tools have to be evaluated. We cannot rely on the belief that the next release of XYZ will satisfy our needs. We have to select and use tools we can run in production today. A clear understanding of all problems is needed.
– LFC performance and robustness look like the most critical for the whole system. LFC and LRC evaluation is one of the first priorities.
• ATLAS dedicated DDM functional and performance tests are required. The tests must be considered as a part of computing facilities commissioning and the participation must be mandatory for ATLAS sites.
• We need more people to work on DDM operations and monitoring. Probably the DDM ops model needs to be tuned, and the central team will assign people to help particular Tier-1s.
– DDM Operational model must be reviewed after October’s tests
• DDM priorities and strategy need to be defined

DDM Operations (US ATLAS)
• Alexei Klimentov and Wensheng Deng
– BNL: Wensheng Deng, Hironori Ito, Xin Zhao
– GLTier2: UM: Shawn McKee
– NETier2: BU: Saul Youssef
– WTier2: Wei Yang, Stephen Gowdy
– SWTier2: Patrick McGuigan
  – OU: Horst Severini, Karthik Arunachalam
  – UTA: Patrick McGuigan, Mark Sosebee
– MWTier2: Dan Schrager
  • IU: Kristy Kallback-Rose, Dan Schrager
  • UC: Robert Gardner, Greg Cross
What fraction of your time can you dedicate to DDM Operations?
Which task are you interested in?
