ATCA based Compute Node as Backend DAQ for sBelle DEPFET Pixel Detector

Andreas Kopp, Wolfgang Kühn, Johannes Lang, Jens Sören Lange, Ming Liu, David Münchow, Johannes Roskoss, Qiang Wang (Tiago Perez, Daniel Kirschner)
II. Physikalisches Institut, Justus-Liebig-Universität Giessen

Colleagues involved in the project, but not (s)Belle members: Dapeng Jin, Lu Li, Zhen'An Liu, Yunpeng Lu, Shujun Wei, Hao Xu, Dixin Zhao (IHEP, Beijing)

DEPFET Backend DAQ, Giessen Group

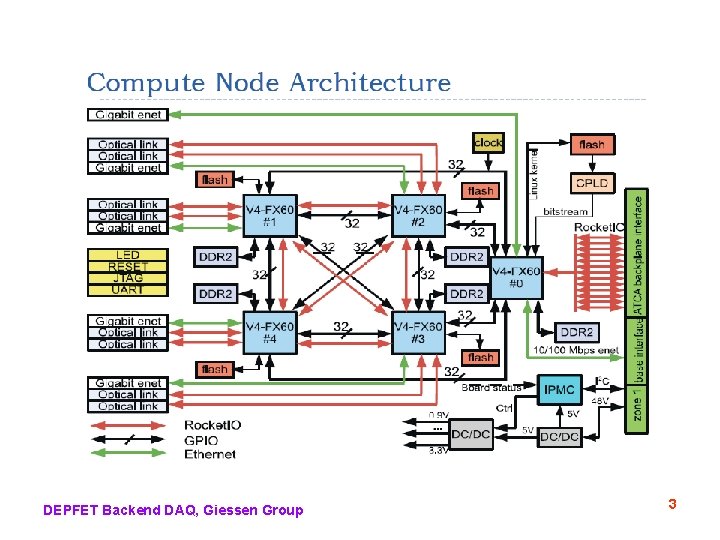

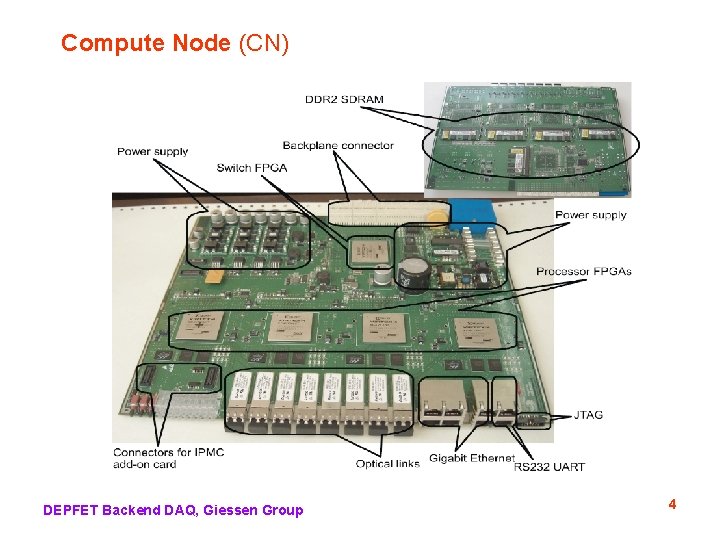

Compute Node (CN) Concept

- 5 x Virtex-4 FX60 FPGAs
  - each FPGA has 2 x 300 MHz PowerPC
  - Linux 2.6.27 (open source version), stored in FLASH memory
  - algorithm programming in VHDL (Xilinx ISE 10.1)
- ATCA (Advanced Telecommunications Computing Architecture) with full mesh backplane (point-to-point connections on the backplane from each CN to every other CN, i.e. no bus arbitration)
- optical links (connected to RocketIO at the FPGA)
- Gigabit Ethernet
- ATCA management (IPMI) by add-on card

Compute Node (CN)

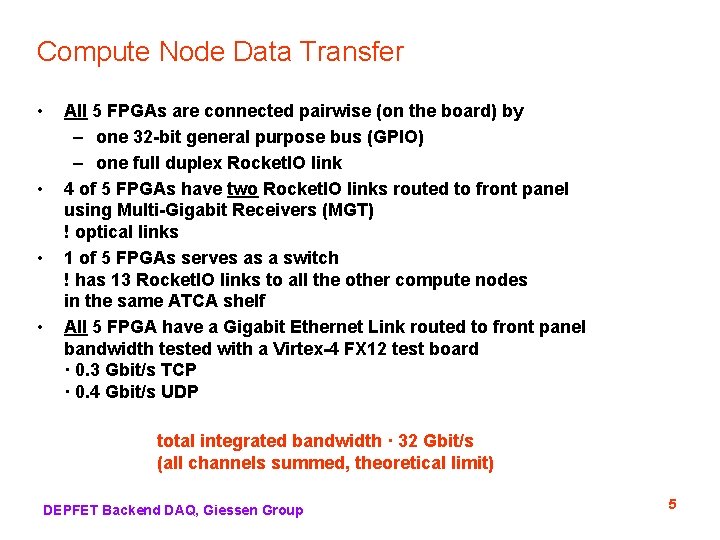

Compute Node Data Transfer

- All 5 FPGAs are connected pairwise (on the board) by
  - one 32-bit general purpose bus (GPIO)
  - one full duplex RocketIO link
- 4 of 5 FPGAs have two RocketIO links routed to the front panel using Multi-Gigabit Transceivers (MGT) → optical links
- 1 of 5 FPGAs serves as a switch → has 13 RocketIO links to all the other compute nodes in the same ATCA shelf
- All 5 FPGAs have a Gigabit Ethernet link routed to the front panel
  - bandwidth tested with a Virtex-4 FX12 test board: ~0.3 Gbit/s TCP, ~0.4 Gbit/s UDP
- total integrated bandwidth ~32 Gbit/s (all channels summed, theoretical limit)
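The link counts on this slide follow from simple combinatorics. The sketch below recomputes them, assuming 14 compute nodes per shelf (the number quoted on the system-size slide); all variable names are illustrative and not taken from the project.

```python
from itertools import combinations

FPGAS_PER_CN = 5     # from the slide
CNS_PER_SHELF = 14   # assumption: one fully populated shelf, as on the sizing slide

# Every pair of FPGAs on a board shares one GPIO bus and one RocketIO link.
onboard_pairs = list(combinations(range(FPGAS_PER_CN), 2))

# 4 of the 5 FPGAs each drive two front-panel optical links.
optical_links_per_cn = 4 * 2
optical_links_per_shelf = optical_links_per_cn * CNS_PER_SHELF

# The switch FPGA has one backplane link to every other CN in the shelf.
backplane_links_per_cn = CNS_PER_SHELF - 1

# A full mesh needs one point-to-point connection per pair of CNs.
backplane_links_per_shelf = CNS_PER_SHELF * (CNS_PER_SHELF - 1) // 2

print(len(onboard_pairs), optical_links_per_cn, backplane_links_per_cn)
```

The 13 backplane links per switch FPGA are exactly what a full mesh of 14 boards requires, which is why no bus arbitration is needed.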

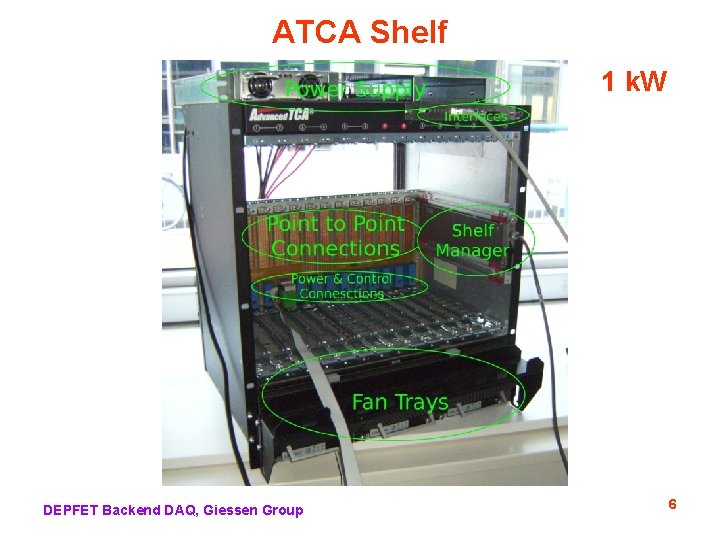

ATCA Shelf, 1 kW

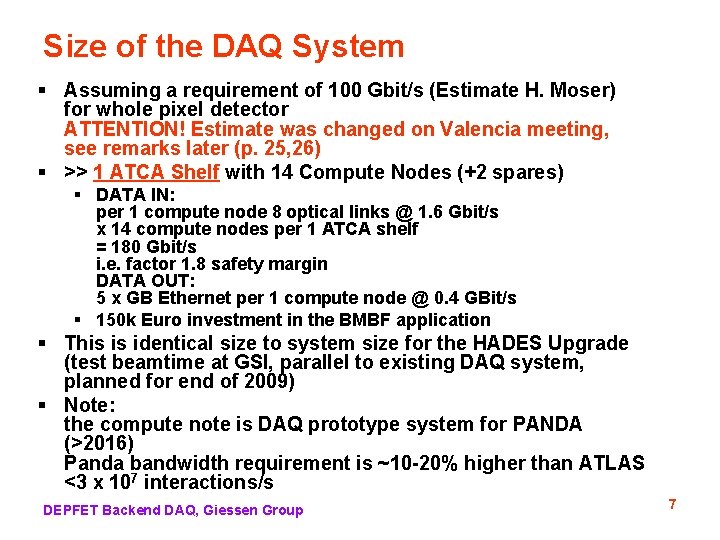

Size of the DAQ System

- Assuming a requirement of 100 Gbit/s (estimate by H. Moser) for the whole pixel detector. ATTENTION: the estimate was changed at the Valencia meeting, see remarks later (p. 25, 26)
- → 1 ATCA shelf with 14 compute nodes (+2 spares)
- DATA IN: 8 optical links per compute node @ 1.6 Gbit/s x 14 compute nodes per ATCA shelf = 180 Gbit/s, i.e. a safety margin of factor 1.8
- DATA OUT: 5 x Gigabit Ethernet per compute node @ 0.4 Gbit/s
- 150 k Euro investment in the BMBF application
- This is identical to the system size for the HADES upgrade (test beamtime at GSI, parallel to the existing DAQ system, planned for end of 2009)
- Note: the compute node is the DAQ prototype system for PANDA (>2016). The PANDA bandwidth requirement is ~10-20% higher than ATLAS, <3 x 10^7 interactions/s
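The input and output bandwidth figures can be checked with a few lines of arithmetic. This is only a sketch using the numbers quoted on the slide; the constant names are made up for illustration.

```python
# Numbers quoted on the slide
REQUIRED_GBITS = 100.0       # estimated pixel detector data rate
OPTICAL_LINKS_PER_CN = 8
OPTICAL_LINK_GBITS = 1.6     # tested optical link rate
ETHERNET_PORTS_PER_CN = 5
ETHERNET_GBITS = 0.4         # measured UDP throughput per port
CNS_PER_SHELF = 14

# Aggregate optical input of one shelf and the resulting safety margin
data_in_gbits = OPTICAL_LINKS_PER_CN * OPTICAL_LINK_GBITS * CNS_PER_SHELF
safety_margin = data_in_gbits / REQUIRED_GBITS

# Aggregate Ethernet output per compute node and per shelf
data_out_per_cn_gbits = ETHERNET_PORTS_PER_CN * ETHERNET_GBITS
data_out_gbits = data_out_per_cn_gbits * CNS_PER_SHELF

print(f"in:  {data_in_gbits:.1f} Gbit/s (margin {safety_margin:.2f})")
print(f"out: {data_out_gbits:.1f} Gbit/s")
```

The input side comes out at about 179 Gbit/s, which the slide rounds to 180 Gbit/s. The Ethernet output of a shelf is well below the input, which is one reason the data reduction algorithms discussed later matter.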

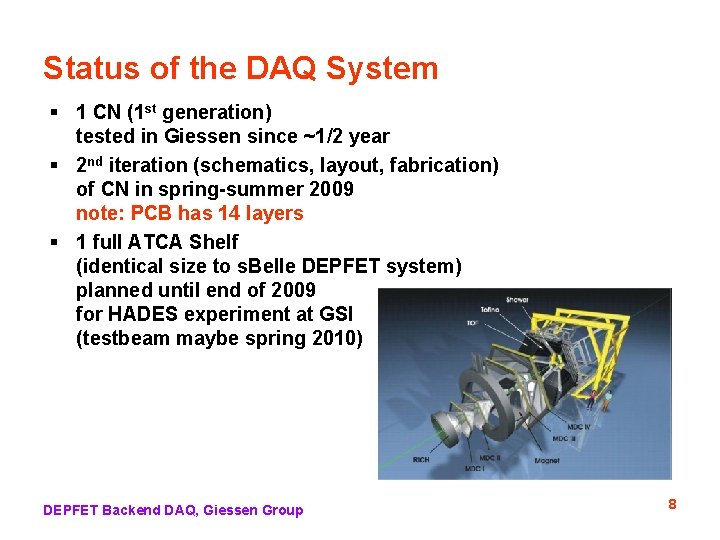

Status of the DAQ System

- 1 CN (1st generation) has been under test in Giessen for about half a year
- 2nd iteration (schematics, layout, fabrication) of the CN in spring-summer 2009; note: the PCB has 14 layers
- 1 full ATCA shelf (identical in size to the sBelle DEPFET system) planned by end of 2009 for the HADES experiment at GSI (test beam maybe spring 2010)

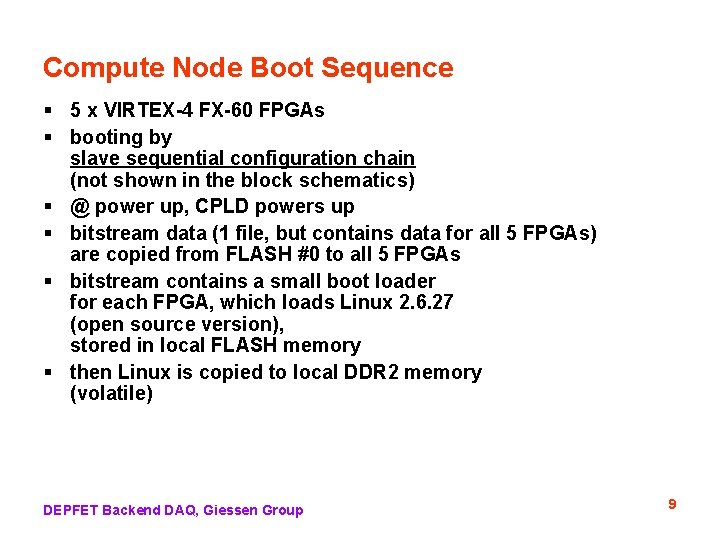

Compute Node Boot Sequence

- 5 x Virtex-4 FX60 FPGAs
- booting by slave sequential configuration chain (not shown in the block schematics)
- at power-up, the CPLD powers up
- bitstream data (1 file, but containing data for all 5 FPGAs) are copied from FLASH #0 to all 5 FPGAs
- the bitstream contains a small boot loader for each FPGA, which loads Linux 2.6.27 (open source version), stored in local FLASH memory
- Linux is then copied to local DDR2 memory (volatile)

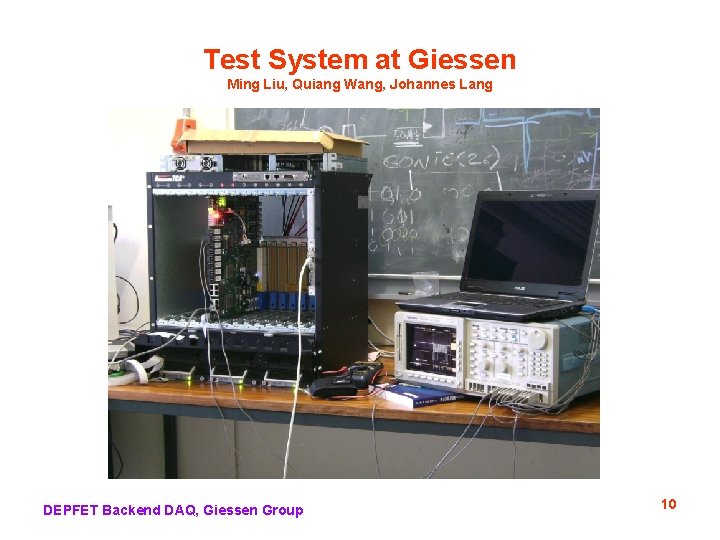

Test System at Giessen

Ming Liu, Qiang Wang, Johannes Lang

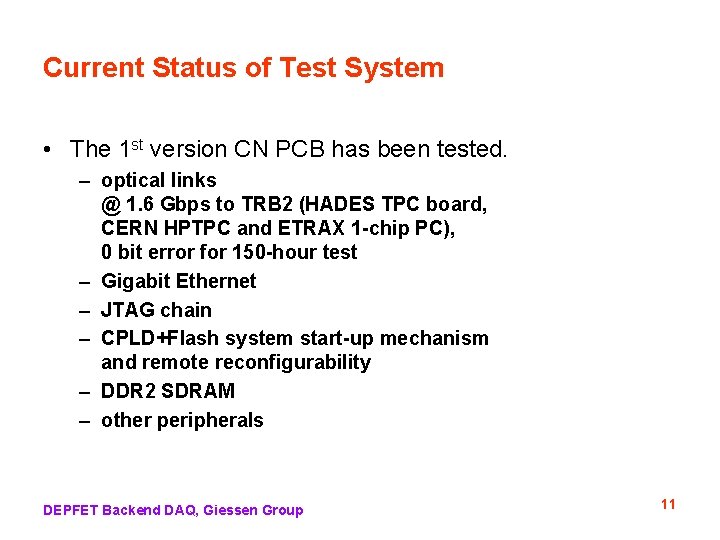

Current Status of Test System

- The 1st version CN PCB has been tested:
  - optical links @ 1.6 Gbit/s to TRB2 (HADES TDC board, CERN HPTDC and ETRAX 1-chip PC), 0 bit errors in a 150-hour test
  - Gigabit Ethernet
  - JTAG chain
  - CPLD+Flash system start-up mechanism and remote reconfigurability
  - DDR2 SDRAM
  - other peripherals

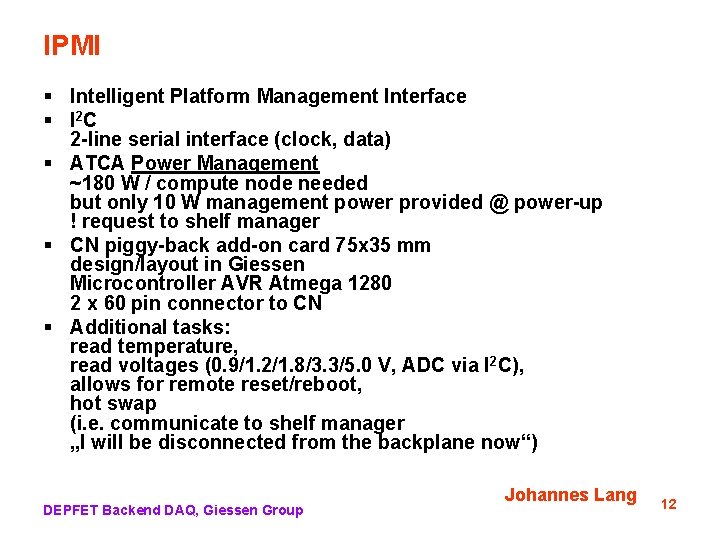

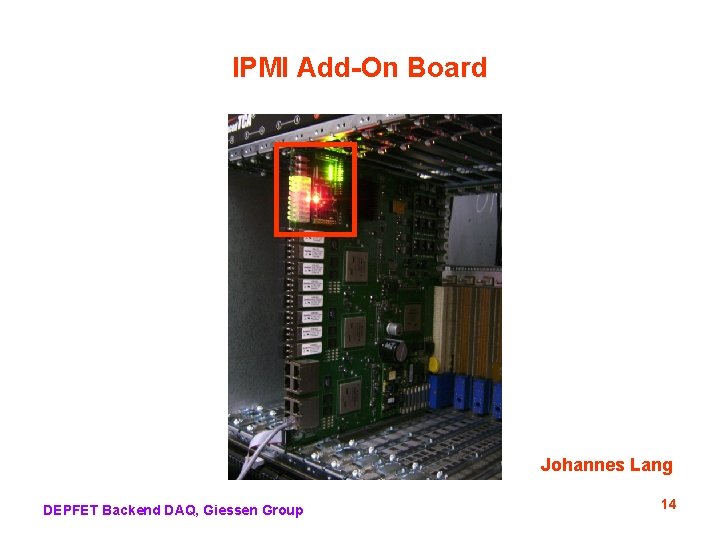

IPMI

- Intelligent Platform Management Interface
- I2C 2-line serial interface (clock, data)
- ATCA power management: ~180 W per compute node needed, but only 10 W management power provided at power-up → request to shelf manager
- CN piggy-back add-on card
  - 75 x 35 mm
  - design/layout in Giessen
  - microcontroller AVR ATmega1280
  - 2 x 60 pin connector to CN
- Additional tasks: read temperature, read voltages (0.9/1.2/1.8/3.3/5.0 V, ADC via I2C), remote reset/reboot, hot swap (i.e. communicate to the shelf manager "I will be disconnected from the backplane now")

Johannes Lang

IPMI Add-On Board

Johannes Lang

Algorithms to run on the CN?

1. pixel subevent building
2. data reduction (?)
   If the rate estimate is correct, we must achieve a data reduction of factor ~20 on the CN. Preliminary idea:
   1. receive CDC data (from COPPER)
   2. receive SVD data (from COPPER)
   3. track finding and track fitting
   4. extrapolation to pixel detector
   5. matching to pixel hits
   6. identify synchrotron radiation hits (i.e. no track match) and discard
3. data compression (?)
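The matching and discard steps of the preliminary data reduction idea can be illustrated with a toy model. The sketch below assumes that track finding and extrapolation have already produced impact points on the pixel plane; the `Point` type, the local `(u, v)` coordinates and the circular matching `window` are assumptions made for illustration, not part of the proposal.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Point:
    """Position on the pixel plane in hypothetical local coordinates."""
    u: float
    v: float


def select_matched_hits(extrapolated_tracks, pixel_hits, window):
    """Keep pixel hits that lie within `window` of any extrapolated track.

    Hits without a track match are treated as background (e.g. synchrotron
    radiation) and are dropped.
    """
    kept = []
    for hit in pixel_hits:
        if any(hypot(hit.u - t.u, hit.v - t.v) <= window
               for t in extrapolated_tracks):
            kept.append(hit)
    return kept


# Toy event: two extrapolated tracks, four pixel hits
tracks = [Point(1.0, 2.0), Point(5.0, 5.0)]
hits = [Point(1.1, 2.1), Point(9.0, 9.0), Point(5.0, 4.8), Point(0.0, 7.0)]

kept = select_matched_hits(tracks, hits, window=0.5)
reduction_factor = len(hits) / len(kept)
print(len(kept), reduction_factor)
```

In this toy event half of the hits survive. The reduction actually achievable depends on the background occupancy and on the window size, neither of which is specified here.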

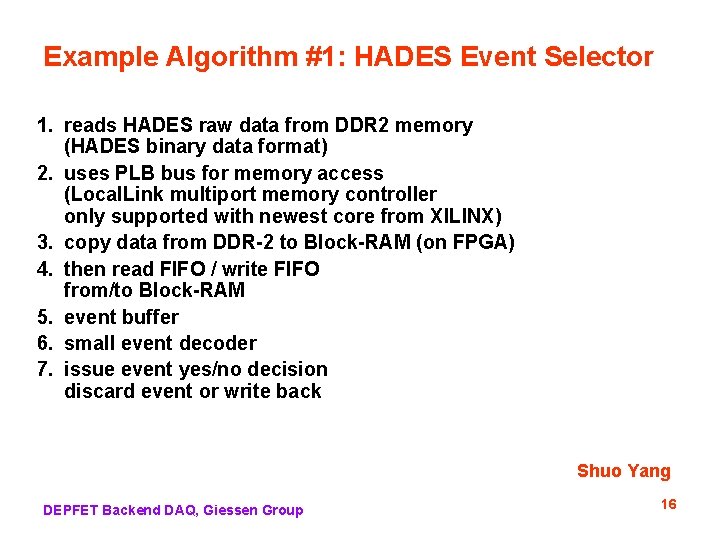

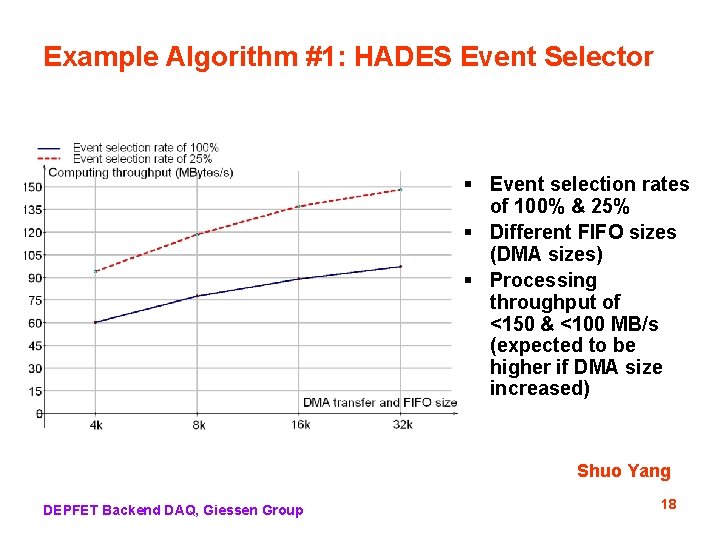

Example Algorithm #1: HADES Event Selector

1. reads HADES raw data from DDR2 memory (HADES binary data format)
2. uses PLB bus for memory access (LocalLink multiport memory controller only supported with newest core from Xilinx)
3. copies data from DDR2 to Block RAM (on FPGA)
4. then read FIFO / write FIFO from/to Block RAM
5. event buffer
6. small event decoder
7. issues event yes/no decision: discard event or write back

Shuo Yang
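The data flow of the event selector can be mimicked in software. The sketch below is a behavioural model only: the event layout (a header with total size and a trigger tag) is a hypothetical stand-in, not the HADES binary data format, and the function names are invented for illustration.

```python
import struct
from collections import deque

# Hypothetical event header: total event size in bytes, trigger tag
HEADER = struct.Struct("<II")


def pack_event(tag, payload):
    """Build one toy event: header followed by payload bytes."""
    return HEADER.pack(HEADER.size + len(payload), tag) + payload


def split_events(memory):
    """Walk a memory image and yield one event at a time (event buffer)."""
    offset = 0
    while offset < len(memory):
        size, _tag = HEADER.unpack_from(memory, offset)
        if size < HEADER.size:
            raise ValueError("corrupt event header")
        yield memory[offset:offset + size]
        offset += size


def select_events(memory, accepted_tags):
    """Read FIFO -> decode -> yes/no decision -> write FIFO."""
    read_fifo = deque(split_events(memory))
    write_fifo = deque()
    while read_fifo:
        event = read_fifo.popleft()
        _size, tag = HEADER.unpack_from(event)   # small event decoder
        if tag in accepted_tags:                 # yes: write back
            write_fifo.append(event)
        # no: the event is discarded
    return b"".join(write_fifo)


memory = pack_event(1, b"aaaa") + pack_event(2, b"bb") + pack_event(1, b"c")
selected = select_events(memory, accepted_tags={1})
print(len(memory), len(selected))
```

In the FPGA the two FIFOs sit between DDR2 memory and Block RAM; here they are plain `deque` objects, which keeps the control flow visible without modelling bus timing.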

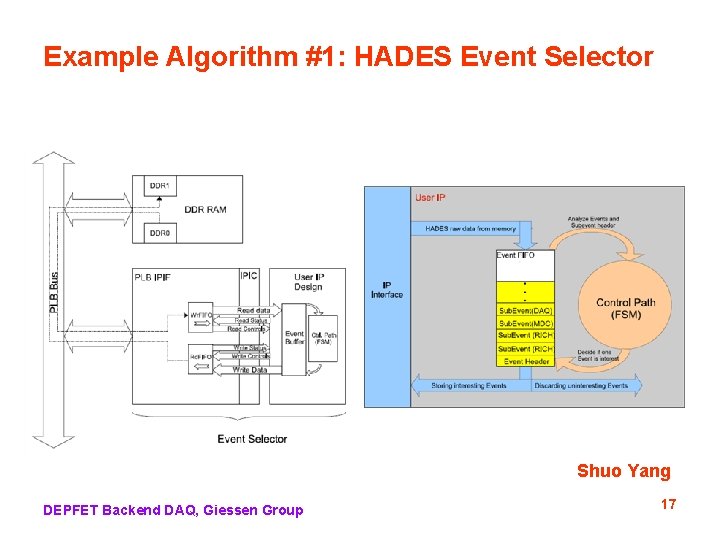

Example Algorithm #1: HADES Event Selector

Shuo Yang

Example Algorithm #1: HADES Event Selector

- Event selection rates of 100% & 25%
- Different FIFO sizes (DMA sizes)
- Processing throughput of <150 & <100 MB/s (expected to be higher if the DMA size is increased)

Shuo Yang

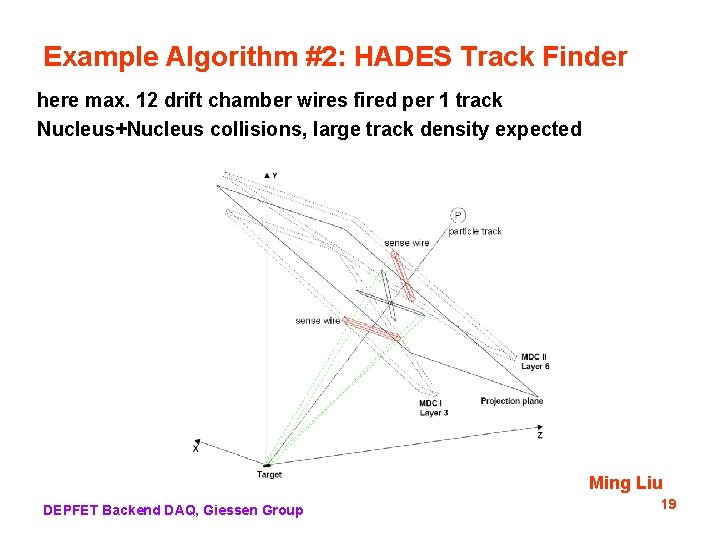

Example Algorithm #2: HADES Track Finder

- here max. 12 drift chamber wires fired per track
- nucleus+nucleus collisions, large track density expected

Ming Liu

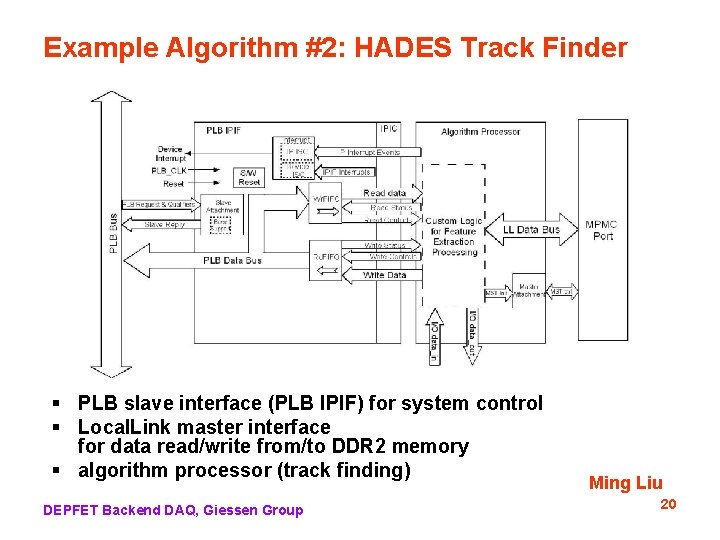

Example Algorithm #2: HADES Track Finder

- PLB slave interface (PLB IPIF) for system control
- LocalLink master interface for data read/write from/to DDR2 memory
- algorithm processor (track finding)

Ming Liu

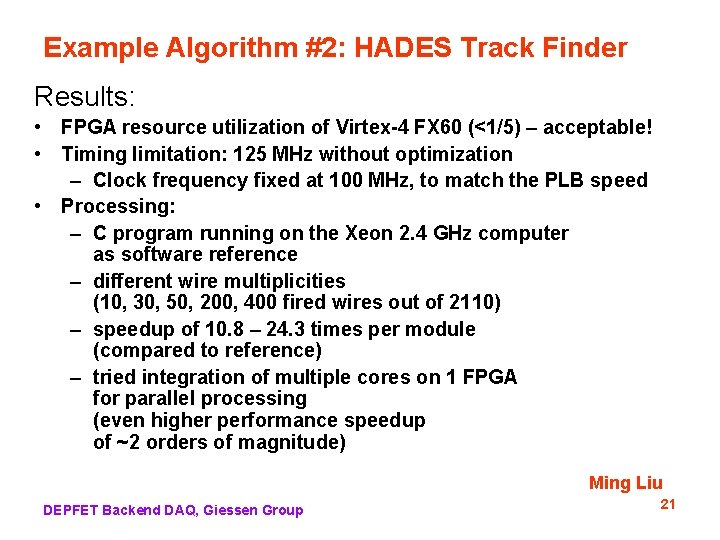

Example Algorithm #2: HADES Track Finder

Results:

- FPGA resource utilization of Virtex-4 FX60 (<1/5): acceptable!
- Timing limitation: 125 MHz without optimization
  - clock frequency fixed at 100 MHz, to match the PLB speed
- Processing:
  - C program running on a Xeon 2.4 GHz computer as software reference
  - different wire multiplicities (10, 30, 50, 200, 400 fired wires out of 2110)
  - speedup of 10.8 to 24.3 times per module (compared to the reference)
  - tried integration of multiple cores on 1 FPGA for parallel processing (even higher performance, speedup of ~2 orders of magnitude)

Ming Liu

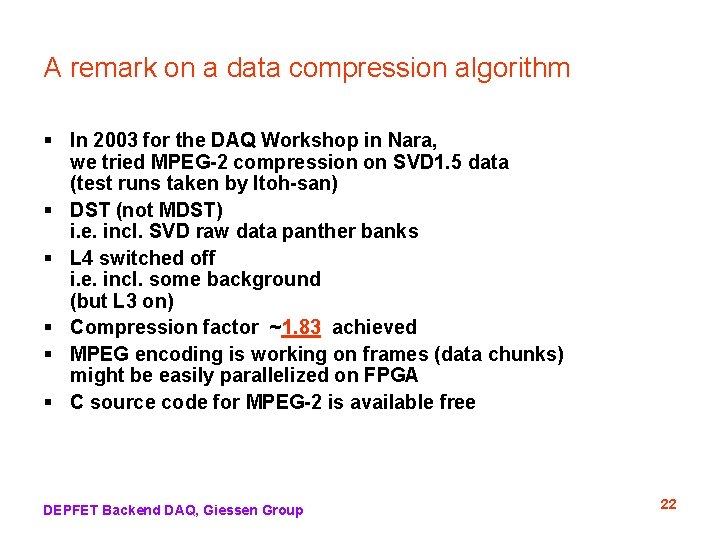

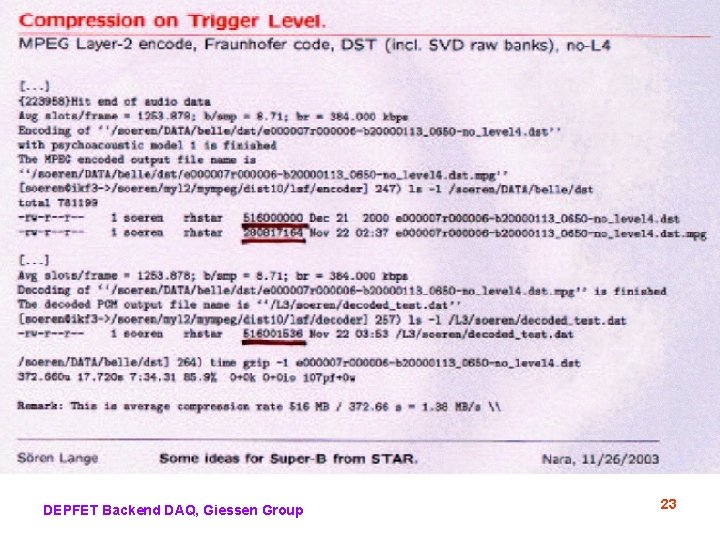

A remark on a data compression algorithm

- In 2003, for the DAQ Workshop in Nara, we tried MPEG-2 compression on SVD 1.5 data (test runs taken by Itoh-san)
- DST (not MDST), i.e. incl. SVD raw data panther banks
- L4 switched off, i.e. incl. some background (but L3 on)
- Compression factor ~1.83 achieved
- MPEG encoding works on frames (data chunks) and might be easily parallelized on an FPGA
- C source code for MPEG-2 is freely available

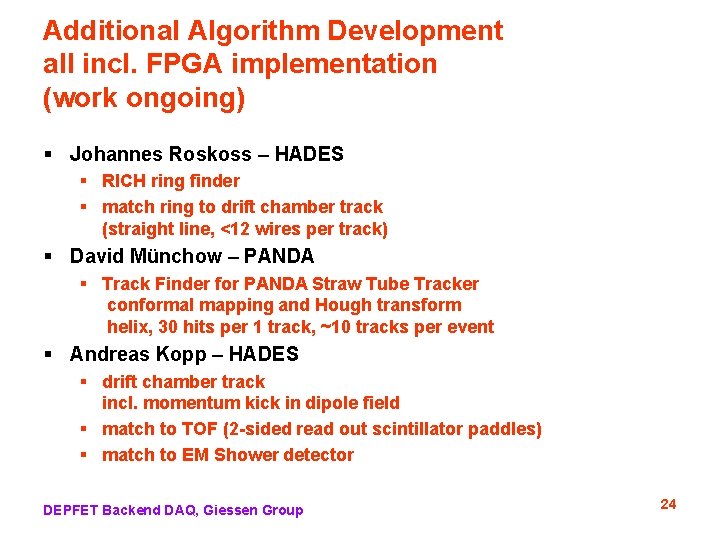

Additional Algorithm Development

All incl. FPGA implementation (work ongoing)

- Johannes Roskoss (HADES)
  - RICH ring finder
  - match ring to drift chamber track (straight line, <12 wires per track)
- David Münchow (PANDA)
  - track finder for the PANDA Straw Tube Tracker
  - conformal mapping and Hough transform
  - helix, 30 hits per track, ~10 tracks per event
- Andreas Kopp (HADES)
  - drift chamber track incl. momentum kick in dipole field
  - match to TOF (2-sided read-out scintillator paddles)
  - match to EM shower detector
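The combination of conformal mapping and Hough transform mentioned for the PANDA track finder can be sketched in a few lines. This is a generic textbook version for the transverse plane only, assuming circular tracks that pass through the origin; it is not the group's FPGA implementation, and the bin sizes and helper names are illustrative assumptions. The mapping (x, y) → (x, y)/(x² + y²) turns such a circle into a straight line, which the Hough transform then finds by voting in (θ, ρ) space.

```python
import math
from collections import Counter


def conformal_map(x, y):
    """Map a hit so that circles through the origin become straight lines."""
    r2 = x * x + y * y
    return x / r2, y / r2


def find_circle(hits, n_theta=180, rho_step=0.005):
    """Hough vote over lines u*cos(theta) + v*sin(theta) = rho."""
    votes = Counter()
    for x, y in hits:
        u, v = conformal_map(x, y)
        for i in range(n_theta):
            theta = math.pi * i / n_theta
            rho = u * math.cos(theta) + v * math.sin(theta)
            votes[(i, round(rho / rho_step))] += 1
    (i, j), count = votes.most_common(1)[0]
    if j == 0:
        raise ValueError("straight track: radius is infinite")
    theta, rho = math.pi * i / n_theta, j * rho_step
    radius = 1.0 / (2.0 * abs(rho))          # rho = 1 / (2R)
    sign = 1.0 if rho > 0 else -1.0
    centre = (sign * radius * math.cos(theta), sign * radius * math.sin(theta))
    return centre, radius, count


def make_hits(centre, n_hits=30):
    """Toy hits on a circle through the origin, leaving the origin outward."""
    a, b = centre
    radius = math.hypot(a, b)
    phi0 = math.atan2(-b, -a)                # angle of the origin on the circle
    return [(a + radius * math.cos(phi0 + math.radians(30 + 120 * k / (n_hits - 1))),
             b + radius * math.sin(phi0 + math.radians(30 + 120 * k / (n_hits - 1))))
            for k in range(n_hits)]


centre, radius, count = find_circle(make_hits((3.0, 4.0)))
print(centre, radius, count)
```

The recovered centre is only as precise as the angular binning, so a real implementation would refine the result with a fit. The voting loop is independent per hit, which is what makes the method attractive for parallel hardware.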

Open Questions on Data Reduction

- Can the compute nodes get the CDC and SVD data from COPPER, and then run a track finder algorithm?
  - by Gigabit Ethernet
  - if yes, what is the latency? data size? rate? protocol?
- At the input of the event builder or at the output of the event builder (i.e. input of L3)?
- Is it acceptable for the DAQ group? etc.

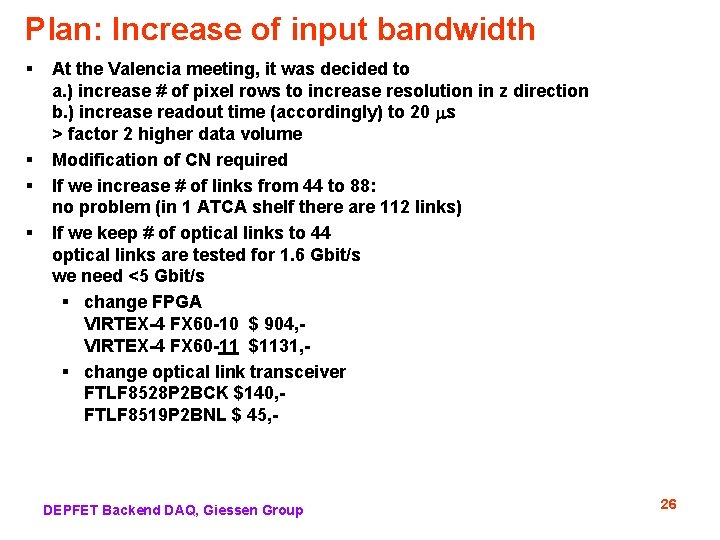

Plan: Increase of input bandwidth

- At the Valencia meeting, it was decided to
  - a) increase the # of pixel rows to increase resolution in z direction
  - b) increase the readout time (accordingly) to 20 µs
- → factor 2 higher data volume
- Modification of CN required
- If we increase the # of links from 44 to 88: no problem (in 1 ATCA shelf there are 112 links)
- If we keep the # of optical links at 44: optical links are tested for 1.6 Gbit/s, we need <5 Gbit/s
  - change FPGA: Virtex-4 FX60-10 $904, Virtex-4 FX60-11 $1131
  - change optical link transceiver: FTLF8528P2BCK $140, FTLF8519P2BNL $45
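The two options can be compared numerically. The sketch below assumes that the 44 links are currently loaded up to the tested rate of 1.6 Gbit/s, which the slide does not state explicitly; under that assumption a factor 2 in data volume means either twice the links or twice the rate per link.

```python
TESTED_LINK_GBITS = 1.6      # tested optical link rate
LINKS_NOW = 44
LINKS_PER_SHELF = 14 * 8     # 14 compute nodes with 8 optical links each
VOLUME_FACTOR = 2            # data volume increase decided in Valencia

# Option 1: keep the link rate, double the number of links
links_needed = LINKS_NOW * VOLUME_FACTOR
spare_links = LINKS_PER_SHELF - links_needed

# Option 2: keep 44 links, raise the rate per link
# (assumption: each link currently carries at most the tested rate)
rate_needed_gbits = TESTED_LINK_GBITS * VOLUME_FACTOR

print(links_needed, spare_links, rate_needed_gbits)
```

Option 1 fits into one shelf with links to spare. Option 2 stays below the quoted 5 Gbit/s but exceeds the tested rate, which is why faster FPGAs and transceivers are listed.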

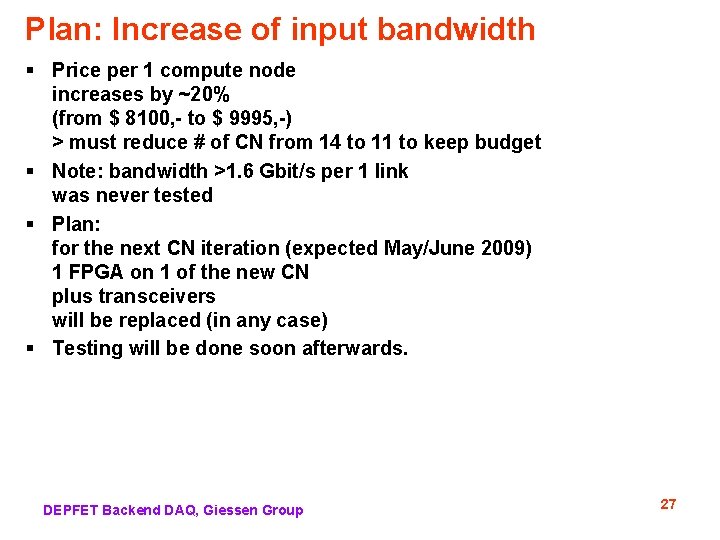

Plan: Increase of input bandwidth

- Price per compute node increases by ~20% (from $8100 to $9995) → must reduce the # of CN from 14 to 11 to keep the budget
- Note: bandwidth >1.6 Gbit/s per link has never been tested
- Plan: for the next CN iteration (expected May/June 2009), 1 FPGA on 1 of the new CN plus transceivers will be replaced (in any case)
- Testing will be done soon afterwards.
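The quoted prices are mutually consistent, as a short calculation shows. It assumes that all 5 FPGAs move from the $904 part to the $1131 part and all 8 transceivers from the $45 part to the $140 part; the slides list the prices without stating which part is old and which is new, so this direction is an assumption. It reproduces the quoted new price exactly.

```python
FPGAS_PER_CN = 5
TRANSCEIVERS_PER_CN = 8
FPGA_PRICE_OLD, FPGA_PRICE_NEW = 904, 1131    # USD, assumed direction
XCVR_PRICE_OLD, XCVR_PRICE_NEW = 45, 140      # USD, assumed direction
CN_PRICE_OLD = 8100                           # USD per compute node
CNS_PLANNED = 14

# Extra cost per compute node if every FPGA and transceiver is swapped
upgrade_cost = (FPGAS_PER_CN * (FPGA_PRICE_NEW - FPGA_PRICE_OLD)
                + TRANSCEIVERS_PER_CN * (XCVR_PRICE_NEW - XCVR_PRICE_OLD))
cn_price_new = CN_PRICE_OLD + upgrade_cost
relative_increase = upgrade_cost / CN_PRICE_OLD

# Number of upgraded compute nodes that fit into the original budget
budget = CNS_PLANNED * CN_PRICE_OLD
cns_affordable = budget // cn_price_new

print(cn_price_new, round(100 * relative_increase, 1), cns_affordable)
```

The increase is about 23%, in line with the "~20%" on the slide, and the original budget for 14 nodes covers 11 upgraded nodes.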

BMBF Application, Details

Manpower (applied for)

- 1 postdoc
- 2 Ph.D. students

Manpower (other funding sources)

- Wolfgang Kühn 20%
- Sören Lange 35%
- 1 Ph.D. student (funded by EU FP7) 50%

Travel budget

- 2 trips to KEK per year (2 persons)
- 2 trips inside Germany per year (3 persons)
- in 2011: 6 months at KEK for one person
- in 2012: 3 months at KEK for one person

Our share of the workshops (electronics and fine mechanics) is 1:1:1 for PANDA : HADES : Super-Belle, but the electronics workshop is not involved in the compute node
