Asynchronous Memory Access Chaining

Onur Kocberber (EPFL, Oracle Labs), Babak Falsafi (EPFL), Boris Grot (University of Edinburgh)

Copyright © 2016, Oracle and/or its affiliates. All rights reserved.

Safe Harbor Statement

The following is intended to outline our general product direction. It is intended for information purposes only, and may not be incorporated into any contract. It is not a commitment to deliver any material, code, or functionality, and should not be relied upon in making purchasing decisions. The development, release, and timing of any features or functionality described for Oracle’s products remains at the sole discretion of Oracle.

Our World is Data-Driven!
• Data resides in huge databases
  – Most frequent task: find data
• Reliance on pointer-intensive data structures
  – Used for fast lookup
• Lookup efficiency is critical
  – Many requests, abundant parallelism
  – Power-limited hardware
Need high-throughput and energy-efficient data lookups

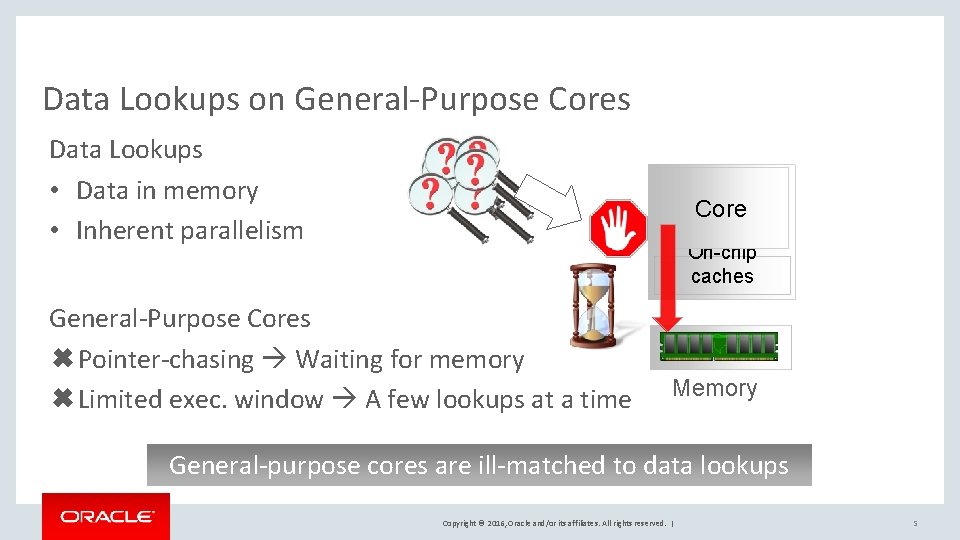

Data Lookups on General-Purpose Cores
Data Lookups
• Data in memory
• Inherent parallelism
General-Purpose Cores
✖ Pointer-chasing: waiting for memory
✖ Limited exec. window: a few lookups at a time
[Figure: core, on-chip caches, memory]
General-purpose cores are ill-matched to data lookups

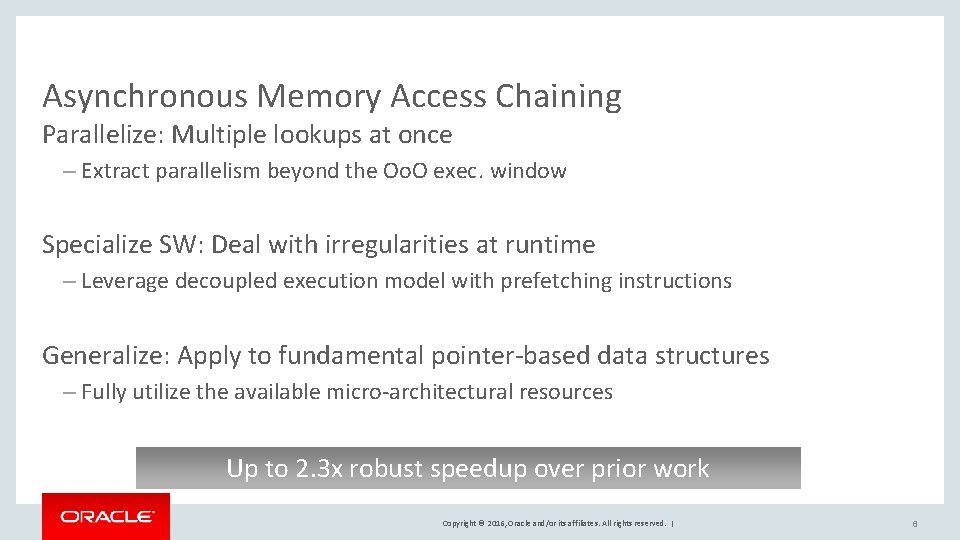

Asynchronous Memory Access Chaining
• Parallelize: Multiple lookups at once
  – Extract parallelism beyond the OoO exec. window
• Specialize SW: Deal with irregularities at runtime
  – Leverage decoupled execution model with prefetching instructions
• Generalize: Apply to fundamental pointer-based data structures
  – Fully utilize the available micro-architectural resources
Up to 2.3x robust speedup over prior work

Outline
1. Introduction
2. Irregularities in data structure lookups
3. Hiding Memory Access Latency
4. Asynchronous Memory Access Chaining (AMAC)
5. Evaluation

Pointer-Intensive Data Structures
• Essential for many database operators
  – Allow for fast data lookup
• Can be created on demand or exist as an index
• Hash join: Build and probe a hash table
• Group-by: Build a hash table
• Indexed join: Probe a tree or hash table

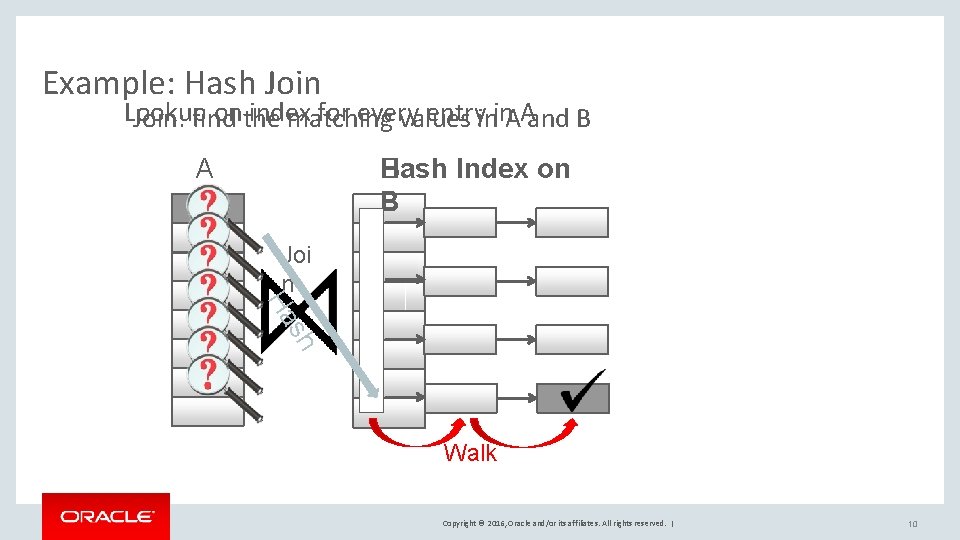

Example: Hash Join
• Join: find matching values in A and B
• Lookup on the index for every entry in A
[Figure: tables A and B joined through a hash index on B; each lookup hashes the key, then walks the bucket chain]

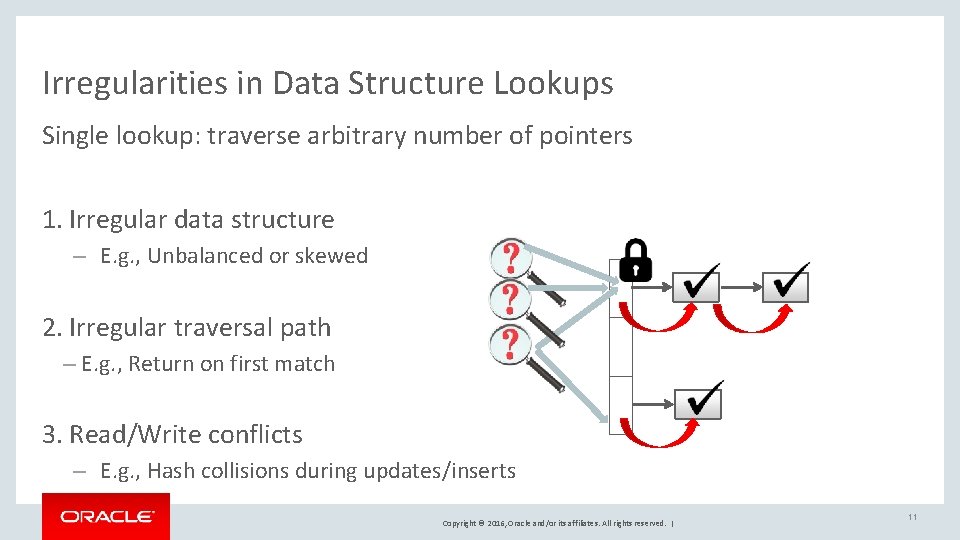

Irregularities in Data Structure Lookups
Single lookup: traverse arbitrary number of pointers
1. Irregular data structure
  – E.g., unbalanced or skewed
2. Irregular traversal path
  – E.g., return on first match
3. Read/Write conflicts
  – E.g., hash collisions during updates/inserts

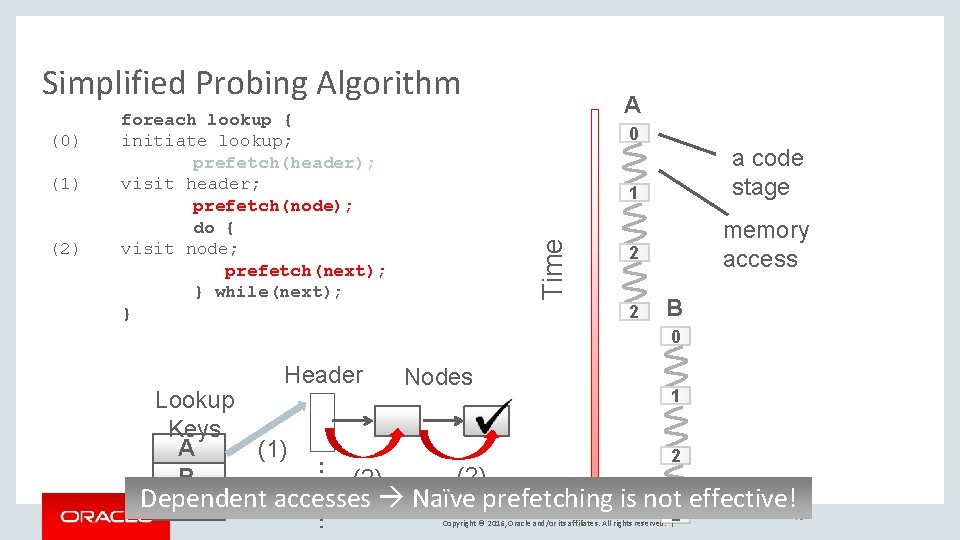

Simplified Probing Algorithm

    foreach lookup {
        (0) initiate lookup; prefetch(header);
        (1) visit header; prefetch(node);
        (2) do { visit node; prefetch(next); } while(next);
    }

[Figure: timeline of lookup keys A, B, C over the header and nodes; numbered boxes are code stages, the gaps between them are memory accesses]
Dependent accesses: naïve prefetching is not effective!
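
The probing loop on this slide can be sketched in C as follows. This is a minimal illustration, not the authors' code: the `node_t`/`table_t` layout, the `MISS` sentinel, the masking hash, and the function names are all assumptions, and `__builtin_prefetch` is the GCC/Clang builtin standing in for `prefetch(...)`. Each prefetch is followed almost immediately by the dependent load, which is why it hides little latency.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MISS UINT64_MAX            /* hypothetical "no match" sentinel */

/* Hypothetical chained hash table; layout is illustrative only. */
typedef struct node {
    uint64_t key, payload;
    struct node *next;
} node_t;

typedef struct {
    node_t **headers;              /* one chain header per bucket */
    size_t nbuckets;               /* assumed to be a power of two */
} table_t;

/* Prepend a caller-owned node to its bucket chain. */
static void table_insert(table_t *t, node_t *n) {
    size_t b = n->key & (t->nbuckets - 1);
    n->next = t->headers[b];
    t->headers[b] = n;
}

/* Probe one key at a time; out[i] receives the payload or MISS. */
static size_t probe_naive(const table_t *t, const uint64_t *keys,
                          size_t n, uint64_t *out) {
    assert(t->nbuckets && (t->nbuckets & (t->nbuckets - 1)) == 0);
    size_t matches = 0;
    for (size_t i = 0; i < n; i++) {
        out[i] = MISS;
        /* (0) initiate lookup; prefetch(header) */
        node_t *const *hdr = &t->headers[keys[i] & (t->nbuckets - 1)];
        __builtin_prefetch(hdr);
        /* (1) visit header; prefetch(node): the load below depends on the
           address just prefetched, so the core still waits for memory */
        const node_t *cur = *hdr;
        __builtin_prefetch(cur);
        /* (2) visit node; prefetch(next), returning on the first match */
        while (cur) {
            if (cur->key == keys[i]) {
                out[i] = cur->payload;
                matches++;
                break;
            }
            __builtin_prefetch(cur->next);
            cur = cur->next;
        }
    }
    return matches;
}
```

Only one lookup is in flight at any time here, so the memory accesses of different lookups never overlap.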

Group Prefetching (GP) [Chen '04] [Chen '07]

    foreach group of lookup {
        foreach lookup in group {
            (0) initiate lookup; prefetch(header);
        }
        foreach lookup in group {
            (1) visit header; prefetch(next);
        }
        foreach lookup in group {
            (2) visit node;
        }
    }

[Figure: timeline of the in-flight lookups A, B, C of one group, each passing through stages 0, 1, 2]
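
A C sketch of the group prefetching loop above, under stated assumptions: the table layout, `GROUP`, `DEPTH`, `MISS`, and all function names are hypothetical, and `__builtin_prefetch` is the GCC/Clang builtin. The number of node-visit stages (`DEPTH`) is fixed at compile time, so longer chains fall into a sequential cleanup loop and finished lookups execute no-op stages.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MISS  UINT64_MAX   /* hypothetical "no match" sentinel */
#define GROUP 4            /* lookups per group (tuning parameter) */
#define DEPTH 2            /* node-visit stages compiled in (static guess) */

typedef struct node { uint64_t key, payload; struct node *next; } node_t;
typedef struct { node_t **headers; size_t nbuckets; } table_t;

/* Prepend a caller-owned node to its bucket chain. */
static void table_insert(table_t *t, node_t *n) {
    size_t b = n->key & (t->nbuckets - 1);
    n->next = t->headers[b];
    t->headers[b] = n;
}

/* Visit one node: record a match and finish (NULL), or advance and prefetch.
   A NULL input is a finished lookup and is treated as a no-op. */
static const node_t *visit(const node_t *cur, uint64_t key,
                           uint64_t *res, size_t *matches) {
    if (!cur) return NULL;
    if (cur->key == key) { *res = cur->payload; (*matches)++; return NULL; }
    __builtin_prefetch(cur->next);
    return cur->next;
}

static size_t probe_gp(const table_t *t, const uint64_t *keys,
                       size_t n, uint64_t *out) {
    assert(t->nbuckets && (t->nbuckets & (t->nbuckets - 1)) == 0);
    size_t matches = 0;
    for (size_t base = 0; base < n; base += GROUP) {
        size_t g = (n - base < GROUP) ? n - base : GROUP;
        node_t *const *hdr[GROUP];
        const node_t *cur[GROUP];
        for (size_t j = 0; j < g; j++) {          /* (0) initiate; prefetch(header) */
            out[base + j] = MISS;
            hdr[j] = &t->headers[keys[base + j] & (t->nbuckets - 1)];
            __builtin_prefetch(hdr[j]);
        }
        for (size_t j = 0; j < g; j++) {          /* (1) visit header; prefetch(next) */
            cur[j] = *hdr[j];
            __builtin_prefetch(cur[j]);
        }
        for (int d = 0; d < DEPTH; d++)           /* (2) visit node, DEPTH times */
            for (size_t j = 0; j < g; j++)
                cur[j] = visit(cur[j], keys[base + j], &out[base + j], &matches);
        for (size_t j = 0; j < g; j++)            /* cleanup: chains longer than DEPTH */
            while (cur[j])
                cur[j] = visit(cur[j], keys[base + j], &out[base + j], &matches);
    }
    return matches;
}
```

The cleanup loop runs one lookup at a time, so any chain that exceeds `DEPTH` loses the overlap that the grouped stages provide.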

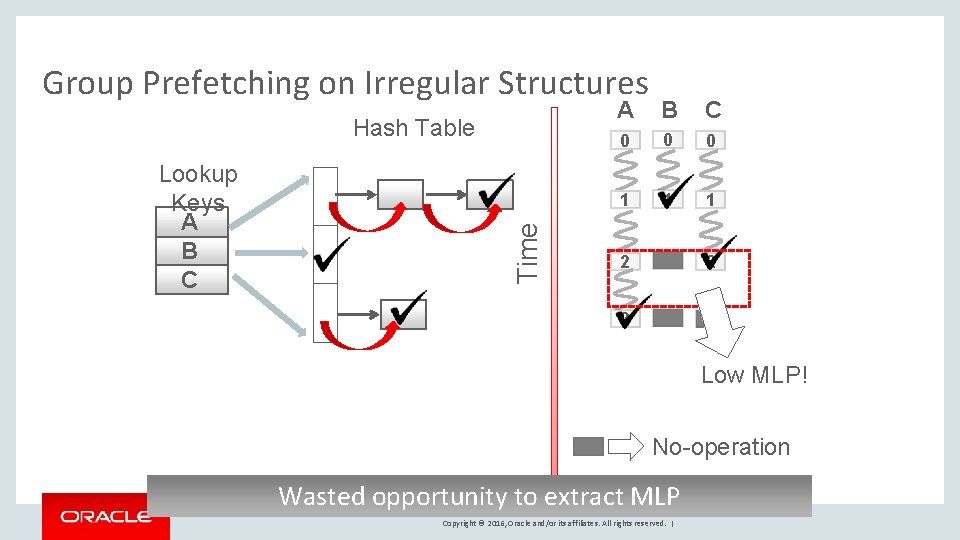

Group Prefetching on Irregular Structures
[Figure: timeline of lookup keys A, B, C probing the hash table through stages 0, 1, 2, with no-operation slots marked]
Low MLP!
Wasted opportunity to extract MLP

Software-Pipelined Prefetching (SPP) [Chen '04] [Chen '07] [Kim '10]

    for k=0 to N-3 do {
        lookup k+3: (0) initiate lookup; prefetch(header);
        lookup k+2: (1) visit header; prefetch(next);
        lookup k+1: (2) visit node; prefetch(next);
        lookup k:   (2) visit node;
    }

[Figure: timeline of in-flight lookups A, B, C, D, each one stage behind the previous lookup]
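
The software pipeline above might look like this in C. It is a sketch under assumptions, not the cited implementations: the table layout, `STAGES`, `MISS`, and the function names are hypothetical, and `__builtin_prefetch` is the GCC/Clang builtin. Each iteration runs one stage for each of four consecutive lookups; the last stage bails out sequentially when a chain is longer than the pipeline was built for.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MISS   UINT64_MAX  /* hypothetical "no match" sentinel */
#define STAGES 4           /* pipeline depth fixed at compile time */

typedef struct node { uint64_t key, payload; struct node *next; } node_t;
typedef struct { node_t **headers; size_t nbuckets; } table_t;

/* Prepend a caller-owned node to its bucket chain. */
static void table_insert(table_t *t, node_t *n) {
    size_t b = n->key & (t->nbuckets - 1);
    n->next = t->headers[b];
    t->headers[b] = n;
}

/* Visit one node: record a match and finish (NULL), or advance and prefetch. */
static const node_t *visit(const node_t *cur, uint64_t key,
                           uint64_t *res, size_t *matches) {
    if (!cur) return NULL;
    if (cur->key == key) { *res = cur->payload; (*matches)++; return NULL; }
    __builtin_prefetch(cur->next);
    return cur->next;
}

static size_t probe_spp(const table_t *t, const uint64_t *keys,
                        size_t n, uint64_t *out) {
    assert(t->nbuckets && (t->nbuckets & (t->nbuckets - 1)) == 0);
    node_t *const *hdr[STAGES];
    const node_t *cur[STAGES];
    size_t matches = 0;
    /* Iteration `it` runs stage s on lookup it - s; slot = lookup % STAGES. */
    for (size_t it = 0; it < n + STAGES - 1; it++) {
        if (it < n) {                          /* (0) initiate; prefetch(header) */
            size_t s = it % STAGES;
            out[it] = MISS;
            hdr[s] = &t->headers[keys[it] & (t->nbuckets - 1)];
            __builtin_prefetch(hdr[s]);
        }
        if (it >= 1 && it - 1 < n) {           /* (1) visit header; prefetch(next) */
            size_t s = (it - 1) % STAGES;
            cur[s] = *hdr[s];
            __builtin_prefetch(cur[s]);
        }
        if (it >= 2 && it - 2 < n) {           /* (2) visit first node; prefetch(next) */
            size_t i = it - 2, s = i % STAGES;
            cur[s] = visit(cur[s], keys[i], &out[i], &matches);
        }
        if (it >= 3) {                         /* (2) visit second node, then bail out */
            size_t i = it - 3, s = i % STAGES;
            while (cur[s])
                cur[s] = visit(cur[s], keys[i], &out[i], &matches);
        }
    }
    return matches;
}
```

A lookup that finishes early still occupies its pipeline slot until the slot's turn comes around again, which is the lost overlap the following slide points at.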

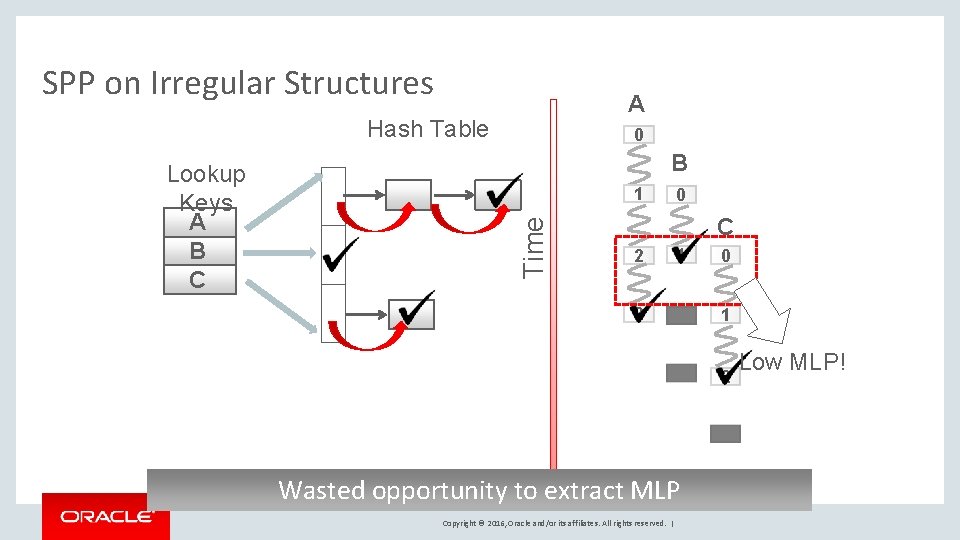

SPP on Irregular Structures
[Figure: timeline of lookup keys A, B, C probing the hash table through stages 0, 1, 2]
Low MLP!
Wasted opportunity to extract MLP

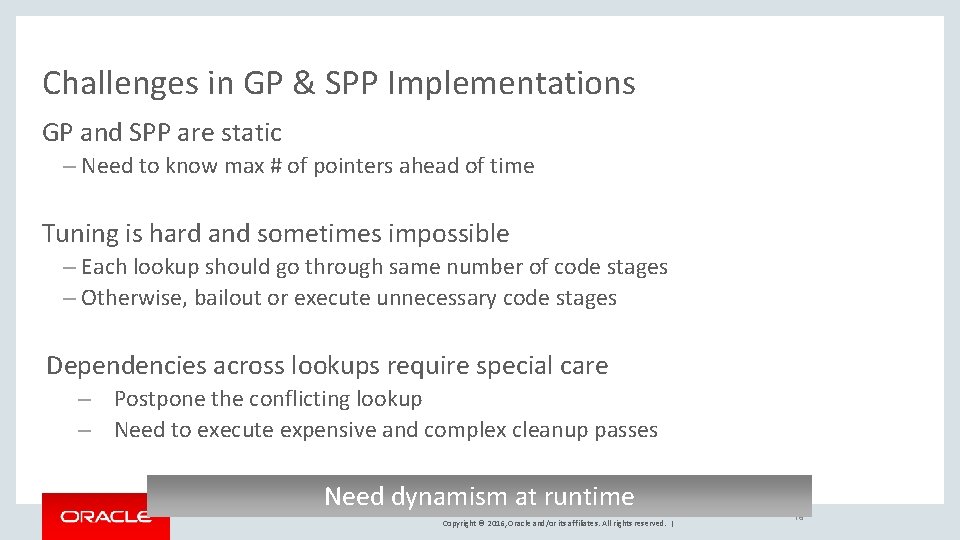

Challenges in GP & SPP Implementations
• GP and SPP are static
  – Need to know max # of pointers ahead of time
• Tuning is hard and sometimes impossible
  – Each lookup should go through same number of code stages
  – Otherwise, bailout or execute unnecessary code stages
• Dependencies across lookups require special care
  – Postpone the conflicting lookup
  – Need to execute expensive and complex cleanup passes
Need dynamism at runtime

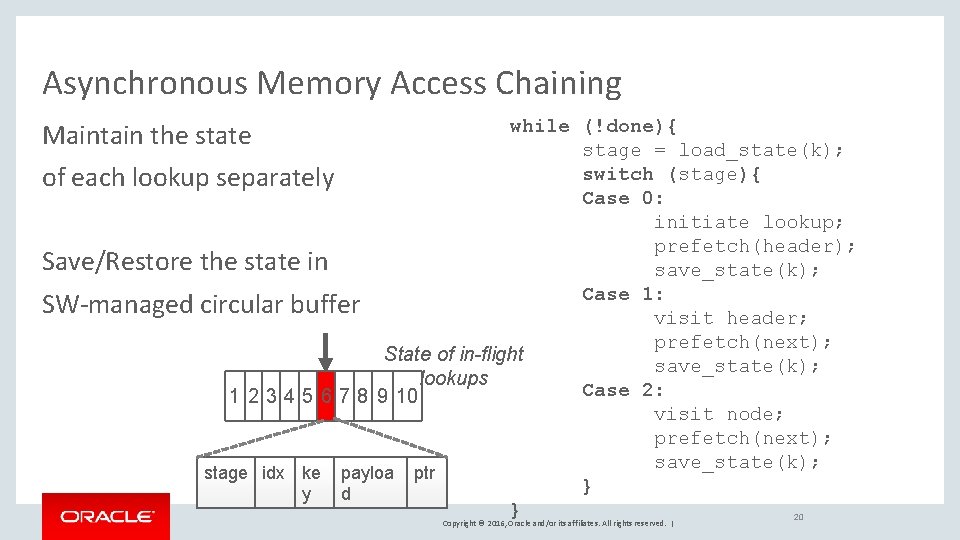

Asynchronous Memory Access Chaining
• Maintain the state of each lookup separately
• Save/Restore the state in SW-managed circular buffer

    while (!done) {
        stage = load_state(k);
        switch (stage) {
        Case 0:
            initiate lookup; prefetch(header);
            save_state(k);
        Case 1:
            visit header; prefetch(next);
            save_state(k);
        Case 2:
            visit node; prefetch(next);
            save_state(k);
        }
    }

[Figure: state of in-flight lookups, a buffer of 10 entries with fields stage, idx, key, payload, ptr]
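
The state machine on this slide can be sketched in C as below. This is an illustration under assumptions rather than the authors' implementation: the table layout, `INFLIGHT`, `MISS`, the extra idle stage, and every identifier are hypothetical, and `__builtin_prefetch` is the GCC/Clang builtin. Each slot of the circular buffer holds one lookup's state; every visit to a slot executes exactly one stage, issues the next prefetch, and moves on to the next slot.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MISS     UINT64_MAX  /* hypothetical "no match" sentinel */
#define INFLIGHT 10          /* number of in-flight lookups (buffer slots) */

typedef struct node { uint64_t key, payload; struct node *next; } node_t;
typedef struct { node_t **headers; size_t nbuckets; } table_t;

/* Prepend a caller-owned node to its bucket chain. */
static void table_insert(table_t *t, node_t *n) {
    size_t b = n->key & (t->nbuckets - 1);
    n->next = t->headers[b];
    t->headers[b] = n;
}

/* Per-lookup state kept in the software-managed circular buffer. */
typedef struct {
    int stage;          /* 0 initiate, 1 visit header, 2 visit node, 3 idle */
    size_t idx;         /* index of the lookup key this slot is serving */
    uint64_t key;
    const void *ptr;    /* address prefetched by the previous stage */
} state_t;

static size_t probe_amac(const table_t *t, const uint64_t *keys,
                         size_t n, uint64_t *out) {
    assert(t->nbuckets && (t->nbuckets & (t->nbuckets - 1)) == 0);
    state_t s[INFLIGHT] = {0};               /* every slot starts in stage 0 */
    size_t next = 0, done = 0, matches = 0, k = 0;
    while (done < n) {
        state_t *st = &s[k];                 /* load_state(k) */
        switch (st->stage) {
        case 0:                              /* initiate lookup; prefetch(header) */
            if (next == n) { st->stage = 3; break; }   /* no keys left */
            st->idx = next;
            st->key = keys[next++];
            out[st->idx] = MISS;
            st->ptr = &t->headers[st->key & (t->nbuckets - 1)];
            __builtin_prefetch(st->ptr);
            st->stage = 1;
            break;
        case 1:                              /* visit header; prefetch(next) */
            st->ptr = *(node_t *const *)st->ptr;
            __builtin_prefetch(st->ptr);
            st->stage = 2;
            break;
        case 2: {                            /* visit node; prefetch(next) */
            const node_t *cur = st->ptr;
            if (cur && cur->key != st->key) {
                st->ptr = cur->next;         /* no match: stay in stage 2 */
                __builtin_prefetch(st->ptr);
                break;
            }
            if (cur) { out[st->idx] = cur->payload; matches++; }
            done++;
            st->stage = 0;                   /* slot is free for a new lookup */
            break;
        }
        default:                             /* idle slot */
            break;
        }
        k = (k + 1) % INFLIGHT;              /* advance around the circular buffer */
    }
    return matches;
}
```

In this sketch a lookup that finishes frees its slot at once, regardless of how long the neighbouring chains are, so no stage count has to be fixed ahead of time. The `state_t` here is about 32 bytes on a typical 64-bit ABI, so ten slots stay well under a kilobyte.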

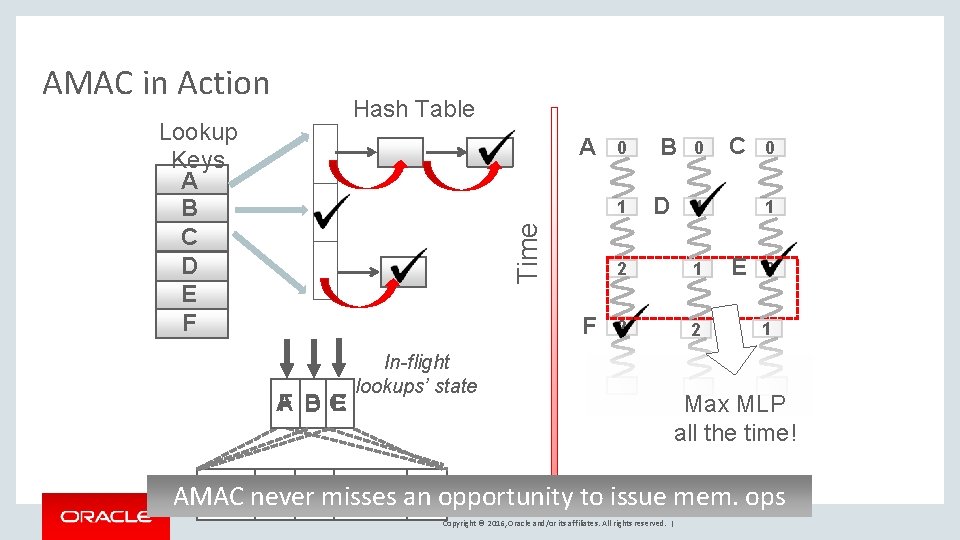

AMAC in Action
[Figure: timeline of lookup keys A to F probing the hash table, with the in-flight lookups' state (stage, idx, key, payload, ptr) updated as stages 0, 1, 2 of different lookups interleave]
Max MLP all the time!
AMAC never misses an opportunity to issue mem. ops

Benefits of AMAC Execution
• Dynamic execution to maximize MLP
  – Can handle variable-sized ptr. chains at runtime
• Negligible space overhead
  – The entire state occupies less than a KB
• Dependent lookups do not require special handling
  – If the lock acquire fails, try again later
• Ordered output w/o redundant work
  – Lookups’ progress is not lost and results are ordered
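
The "try again later" point can be illustrated with a small C11 sketch. Everything here is an assumption made for illustration: the `bucket_t` latch, the two-stage `op_state_t`, and `step_update` are hypothetical names, not part of the presented system. When the latch is held, the step returns without changing the saved stage, so the same stage simply runs again the next time the slot is visited.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical bucket: a latch guarding a counter that updates modify. */
typedef struct {
    atomic_flag latch;
    uint64_t count;
} bucket_t;

/* Hypothetical per-lookup state: stage 0 = latch and update, 1 = finished. */
typedef struct {
    int stage;
    bucket_t *bucket;
} op_state_t;

/* Run one step of an in-flight update; returns true once it has completed. */
static bool step_update(op_state_t *st) {
    assert(st != NULL && st->bucket != NULL);
    if (st->stage == 1)
        return true;
    if (atomic_flag_test_and_set_explicit(&st->bucket->latch,
                                          memory_order_acquire)) {
        /* Latch is held by a conflicting lookup: keep the saved stage as is
           and let the caller move on to the next slot. */
        return false;
    }
    st->bucket->count++;                     /* the guarded update */
    atomic_flag_clear_explicit(&st->bucket->latch, memory_order_release);
    st->stage = 1;
    return true;
}
```

Because the retry is just "leave the stage unchanged", a conflicting lookup needs no postponement list or cleanup pass in this sketch.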

Methodology
• Codebase
  – Hash Join workload from ETHZ [Balkesen '13]
  – Extended with Unbalanced Tree Search Lookups (BST)
• Dataset
  – Tuples: 8 B key, 8 B payload
  – 2^27 Relations
  – Random uniform and Zipf-skewed (z=1) distribution
• HW Platform
  – Xeon X5670 (Westmere)

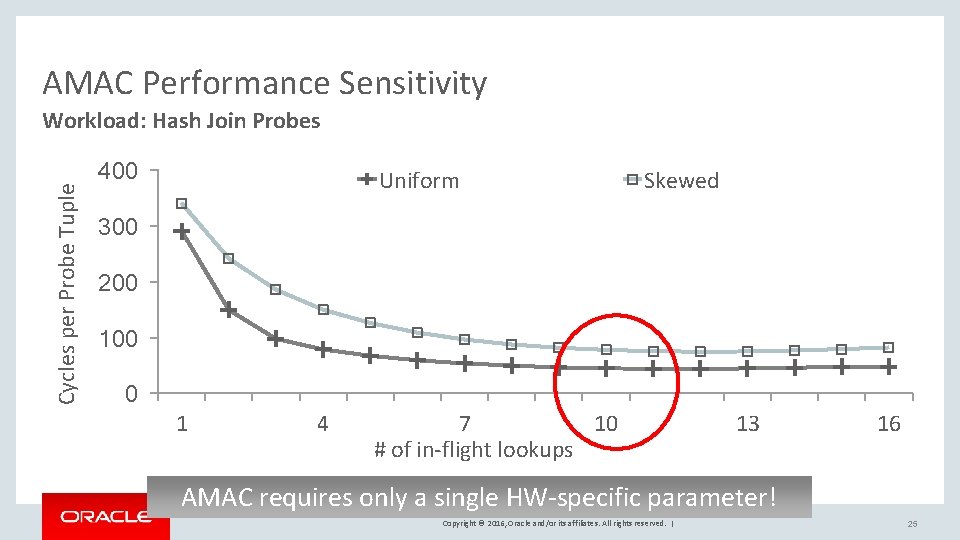

AMAC Performance Sensitivity
[Figure: cycles per probe tuple (y-axis, 0 to 400) vs. # of in-flight lookups (x-axis, 1 to 16) for Uniform and Skewed data; workload: hash join probes]
AMAC requires only a single HW-specific parameter!

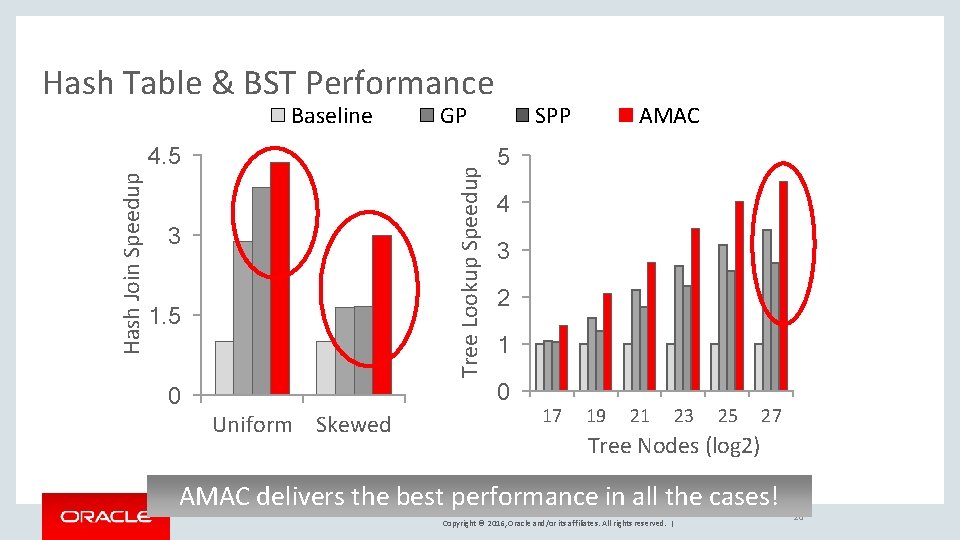

Hash Table & BST Performance
[Figure, two panels comparing Baseline, GP, SPP, and AMAC: hash join speedup for Uniform and Skewed data; tree lookup speedup vs. tree nodes (log2, 17 to 27)]
AMAC delivers the best performance in all the cases!

Conclusions
• Pointer-intensive structures are essential in DBMSs
• Modern CPUs spend significant time in data lookups
  – Not efficient & fall short of extracting parallelism
• AMAC: Extract inter-lookup parallelism at runtime
• More analysis in the paper:
  – Memory bottleneck analysis
  – Additional data structures & platforms
