
Associations Between Categorical Variables • Case where both the explanatory (independent) variable and the response (dependent) variable are qualitative (Chapter 7 covers the case where both are binary, i.e. 2 levels) • Association: The distributions of responses differ among the levels of the explanatory variable (e.g. party affiliation by gender)

Contingency Tables • Cross-tabulations of frequency counts where the rows (typically) represent the levels of the explanatory variable and the columns represent the levels of the response variable. • Numbers within the table represent the numbers of individuals falling in the corresponding combination of levels of the two variables • Row and column totals are called the marginal distributions for the two variables

Example - Cyclones Near Antarctica • Period of Study: September 1973 - May 1975 • Explanatory Variable: Region (40-49, 50-59, 60-79 degrees South latitude) • Response: Season (Aut(4), Wtr(5), Spr(4), Sum(8)) (Number of months in parentheses) • Units: Cyclones in the study area • Treating the observed cyclones as a “random sample” of all cyclones that could have occurred Source: Howarth (1983), “An Analysis of the Variability of Cyclones around Antarctica and Their Relation to Sea-Ice Extent”, Annals of the Association of American Geographers, Vol. 73, pp. 519-537

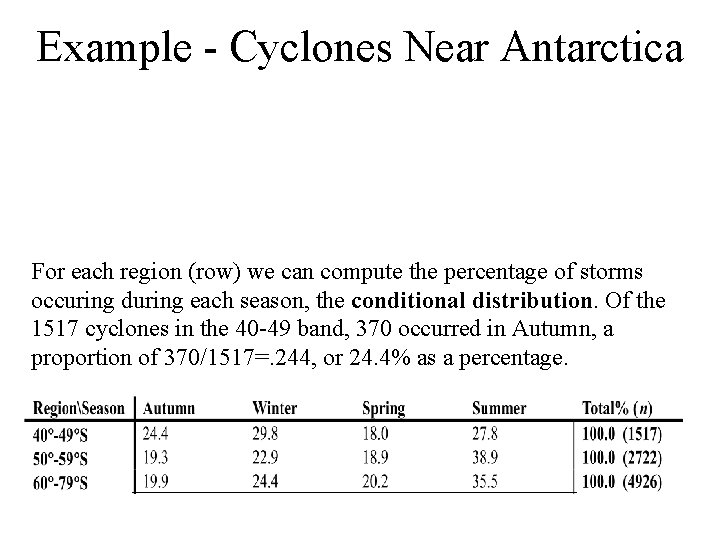

Example - Cyclones Near Antarctica For each region (row) we can compute the percentage of storms occurring during each season: the conditional distribution. Of the 1517 cyclones in the 40-49 band, 370 occurred in Autumn, a proportion of 370/1517 = .244, or 24.4%.

Example - Cyclones Near Antarctica Graphical Conditional Distributions for Regions

Guidelines for Contingency Tables • Compute percentages for the response (column) variable within the categories of the explanatory (row) variable. Note that in journal articles, rows and columns may be interchanged. • Divide each cell count by its row (explanatory category) total and multiply by 100 to obtain a percent; the row percents will add to 100 • Give a title and clearly define variables and categories. • Include row (explanatory) total sample sizes
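The row-percent guideline above can be sketched in a few lines of Python. This is only an illustration: the function name `row_percents` and the counts in `example` are hypothetical and are not the cyclone data.

```python
def row_percents(table):
    """Return row percentages for a contingency table given as a list of rows."""
    result = []
    for row in table:
        total = sum(row)  # row (explanatory category) sample size
        result.append([100.0 * count / total for count in row])
    return result

# Hypothetical counts: 2 explanatory levels (rows) x 3 response levels (columns)
example = [[20, 30, 50],
           [10, 10, 20]]

percents = row_percents(example)
for row, pct in zip(example, percents):
    # Print the row total next to the percentages, as the guidelines suggest
    print(sum(row), [round(p, 1) for p in pct])
```

Each printed row shows the row sample size followed by percentages that sum to 100.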

Independence & Dependence • Statistically Independent: Population conditional distributions of one variable are the same across all levels of the other variable • Statistically Dependent: Conditional Distributions are not all equal • When testing, researchers typically wish to demonstrate dependence (alternative hypothesis), and wish to refute independence (null hypothesis)

Pearson’s Chi-Square Test • Can be used for nominal or ordinal explanatory and response variables • Variables can have any number of distinct levels • Tests whether the distribution of the response variable is the same for each level of the explanatory variable (H0: No association between the variables) • r = # of levels of explanatory variable • c = # of levels of response variable

Pearson’s Chi-Square Test • Intuition behind test statistic – Obtain marginal distribution of outcomes for the response variable – Apply this common distribution to all levels of the explanatory variable, by multiplying each proportion by the corresponding sample size – Measure the difference between actual cell counts and the expected cell counts in the previous step

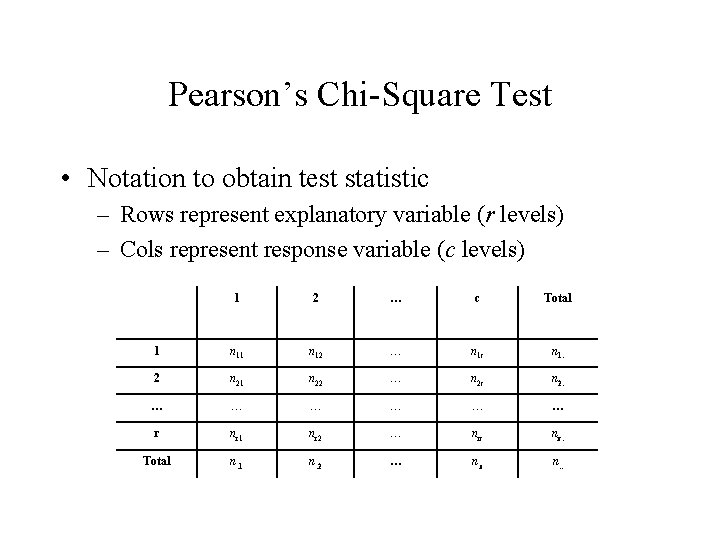

Pearson’s Chi-Square Test • Notation to obtain test statistic – Rows represent explanatory variable (r levels) – Cols represent response variable (c levels)

| | 1 | 2 | … | c | Total |
|---|---|---|---|---|---|
| 1 | n11 | n12 | … | n1c | n1. |
| 2 | n21 | n22 | … | n2c | n2. |
| … | … | … | … | … | … |
| r | nr1 | nr2 | … | nrc | nr. |
| Total | n.1 | n.2 | … | n.c | n.. |

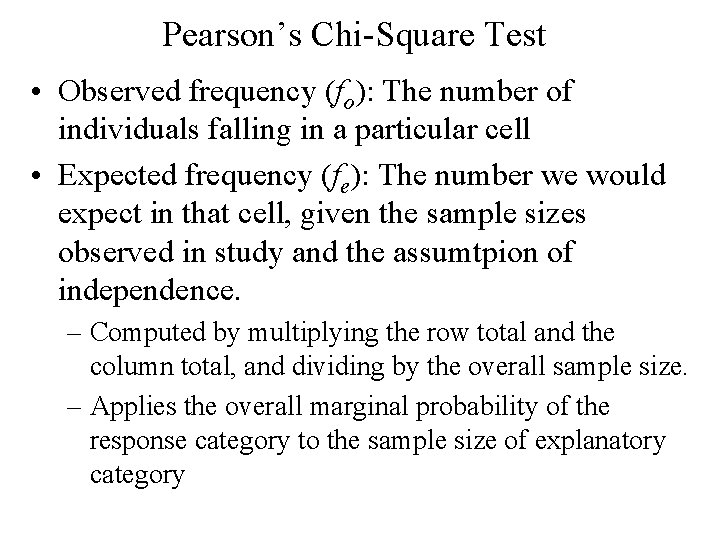

Pearson’s Chi-Square Test • Observed frequency (fo): The number of individuals falling in a particular cell • Expected frequency (fe): The number we would expect in that cell, given the sample sizes observed in the study and the assumption of independence. – Computed by multiplying the row total and the column total, and dividing by the overall sample size. – Applies the overall marginal probability of the response category to the sample size of the explanatory category
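As a small check of the expected-frequency rule, the sketch below applies it to the totals quoted in the cyclone example (1517 cyclones in the 40-49 S band, 1876 Autumn cyclones, 9165 cyclones overall). The function name `expected_count` is a hypothetical helper introduced here.

```python
def expected_count(row_total, col_total, n):
    """Expected cell count under independence: row total * column total / n."""
    return row_total * col_total / n

# Totals quoted in the cyclone example (40-49 S band, Autumn, overall)
fe_first_cell = expected_count(1517, 1876, 9165)
print(round(fe_first_cell, 1))  # 310.5
```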

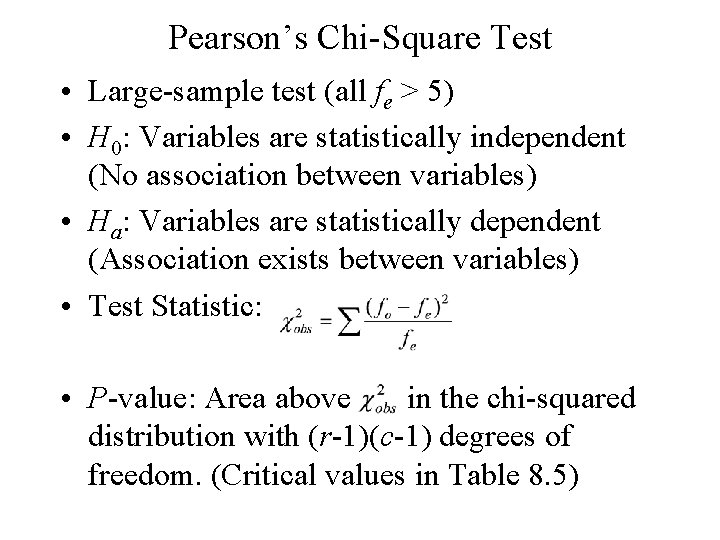

Pearson’s Chi-Square Test • Large-sample test (all fe > 5) • H0: Variables are statistically independent (No association between variables) • Ha: Variables are statistically dependent (Association exists between variables) • Test Statistic: χ² = Σ (fo − fe)² / fe, summed over all r × c cells • P-value: Area above the observed χ² in the chi-squared distribution with (r-1)(c-1) degrees of freedom. (Critical values in Table 8.5)
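A minimal sketch of the computation, assuming the usual Pearson form (sum over cells of (fo − fe)²/fe) with (r-1)(c-1) degrees of freedom. The function name `pearson_chi_square` and the 2 x 2 counts are hypothetical, for illustration only.

```python
def pearson_chi_square(observed):
    """Return (chi-squared statistic, degrees of freedom, expected counts)."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    # Expected count for each cell: row total * column total / overall n
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    stat = sum((fo - fe) ** 2 / fe
               for obs_row, exp_row in zip(observed, expected)
               for fo, fe in zip(obs_row, exp_row))
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return stat, df, expected

# Hypothetical counts, not taken from the slides
example = [[30, 20],
           [20, 30]]
stat, df, expected = pearson_chi_square(example)

# Large-sample condition from the slide: every expected count above 5
large_sample_ok = all(fe > 5 for row in expected for fe in row)
print(stat, df, large_sample_ok)
```

The P-value is then the upper-tail area of the chi-squared distribution with `df` degrees of freedom above `stat`, read from a table or from statistical software.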

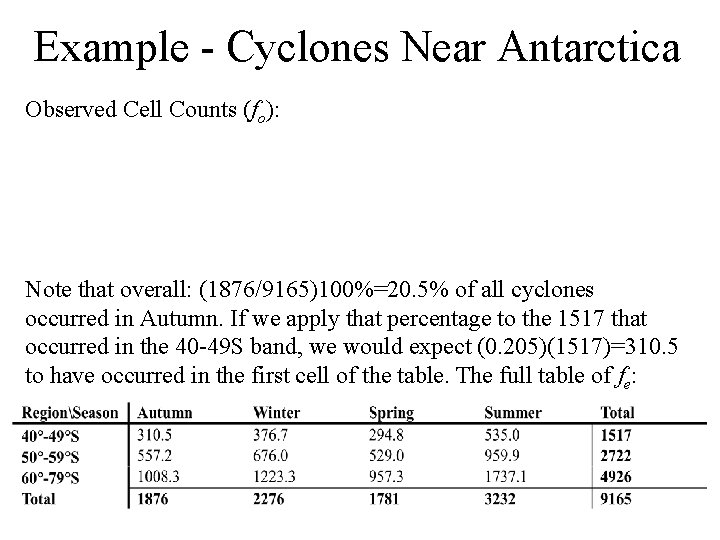

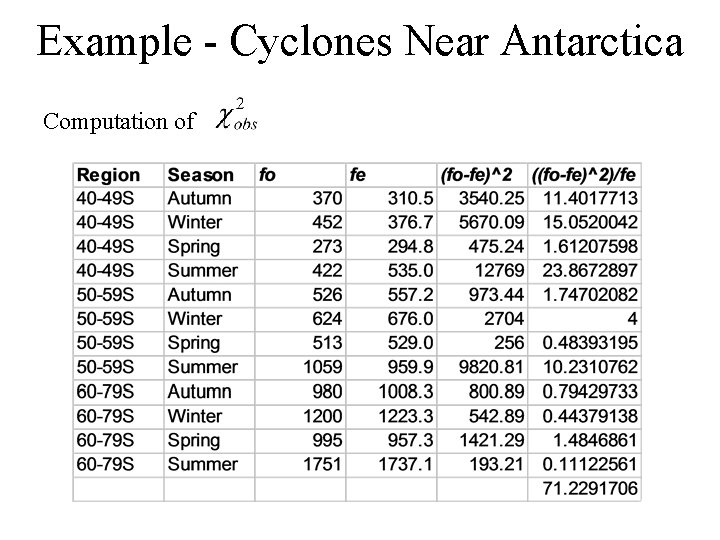

Example - Cyclones Near Antarctica Observed Cell Counts (fo): Note that overall, (1876/9165)100% = 20.5% of all cyclones occurred in Autumn. If we apply that proportion (0.2047 before rounding) to the 1517 that occurred in the 40-49 S band, we would expect (0.2047)(1517) = 310.5 to have occurred in the first cell of the table. The full table of fe:

Example - Cyclones Near Antarctica Computation of the Chi-Squared Statistic

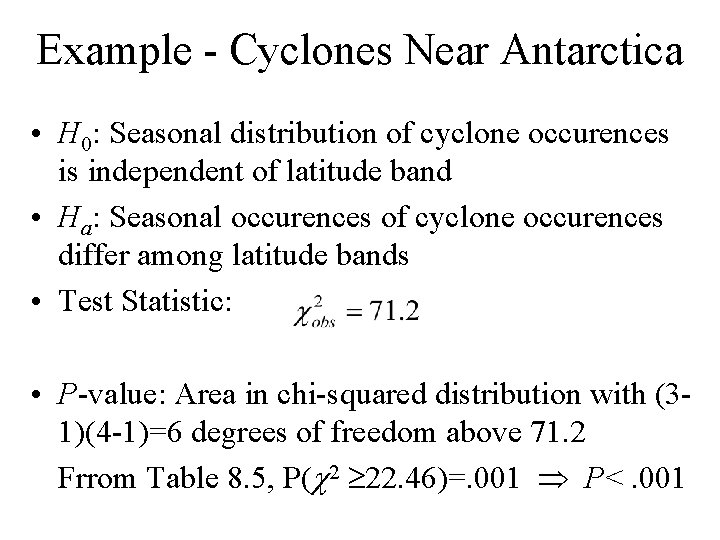

Example - Cyclones Near Antarctica • H0: Seasonal distribution of cyclone occurrences is independent of latitude band • Ha: Seasonal distributions of cyclone occurrences differ among latitude bands • Test Statistic: χ² = 71.2 • P-value: Area in the chi-squared distribution with (3-1)(4-1) = 6 degrees of freedom above 71.2. From Table 8.5, P(χ² ≥ 22.46) = .001, so P < .001
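The tail areas quoted above can be checked without a table. The sketch below relies on a closed form for the chi-squared upper tail that holds only when the degrees of freedom are even (here df = 6). The function name `chi2_upper_tail_even_df` is hypothetical; for general df, statistical software would be used instead.

```python
import math

def chi2_upper_tail_even_df(x, df):
    """P(chi-squared with df degrees of freedom > x); valid only for even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form requires a positive even df")
    half = x / 2.0
    # For df = 2m: exp(-x/2) * sum_{k=0}^{m-1} (x/2)^k / k!
    terms = sum(half ** k / math.factorial(k) for k in range(df // 2))
    return math.exp(-half) * terms

p_critical = chi2_upper_tail_even_df(22.46, 6)  # table value quoted on the slide
p_observed = chi2_upper_tail_even_df(71.2, 6)   # observed statistic from the slide
print(p_critical, p_observed)
```

The first value is close to .001, matching the table entry, and the second is far smaller, consistent with P < .001.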

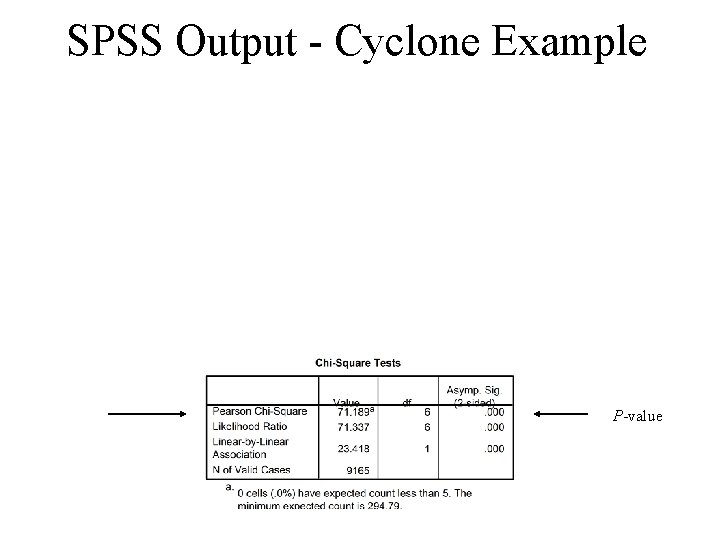

SPSS Output - Cyclone Example P-value

Misuses of chi-squared Test • Expected frequencies too small (all expected counts should be above 5; this is not necessary for the observed counts) • Dependent samples (the same individuals are in each row, see McNemar’s test) • Can be used for nominal or ordinal variables, but more powerful methods exist for when both variables are ordinal and a directional association is hypothesized

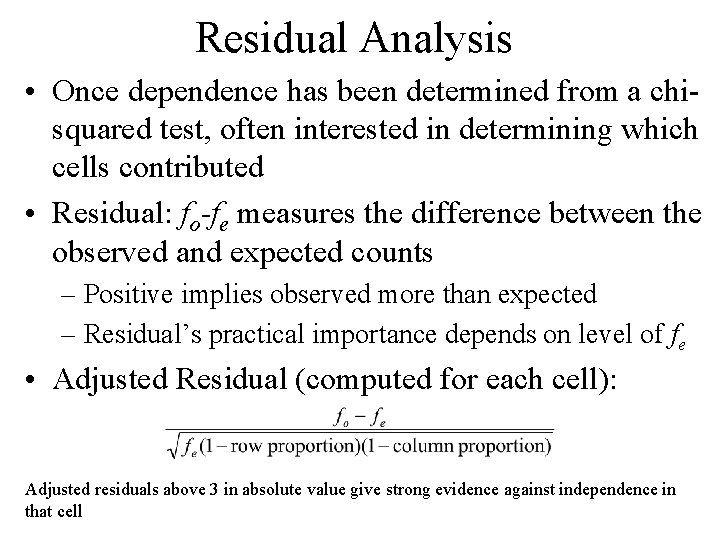

Residual Analysis • Once dependence has been determined from a chi-squared test, we are often interested in determining which cells contributed • Residual: fo − fe measures the difference between the observed and expected counts – Positive implies observed more than expected – Residual’s practical importance depends on level of fe • Adjusted Residual (computed for each cell): (fo − fe) / sqrt[fe (1 − row proportion)(1 − column proportion)]. Adjusted residuals above 3 in absolute value give strong evidence against independence in that cell

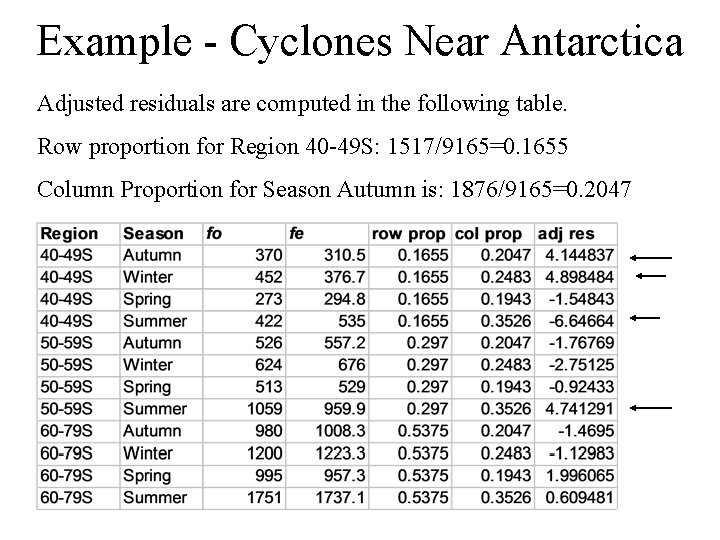

Example - Cyclones Near Antarctica Adjusted residuals are computed in the following table. Row proportion for Region 40-49 S: 1517/9165 = 0.1655. Column proportion for Season Autumn: 1876/9165 = 0.2047
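As a sketch, the adjusted residual for the first cell (40-49 S, Autumn) can be computed from the counts quoted in the example. This assumes the commonly used form (fo − fe) / sqrt[fe (1 − row proportion)(1 − column proportion)]; the function name `adjusted_residual` is hypothetical.

```python
import math

def adjusted_residual(fo, row_total, col_total, n):
    """Adjusted residual for one cell, assuming the standard form noted above."""
    fe = row_total * col_total / n   # expected count under independence
    row_prop = row_total / n         # e.g. 1517/9165
    col_prop = col_total / n         # e.g. 1876/9165
    se = math.sqrt(fe * (1 - row_prop) * (1 - col_prop))
    return (fo - fe) / se

# Counts quoted in the cyclone example: 370 observed, row total 1517,
# column total 1876, overall total 9165
adj = adjusted_residual(370, 1517, 1876, 9165)
print(round(adj, 2))
```

The result is a little above 4, which exceeds 3 in absolute value: more Autumn cyclones were observed in the 40-49 S band than independence would predict.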

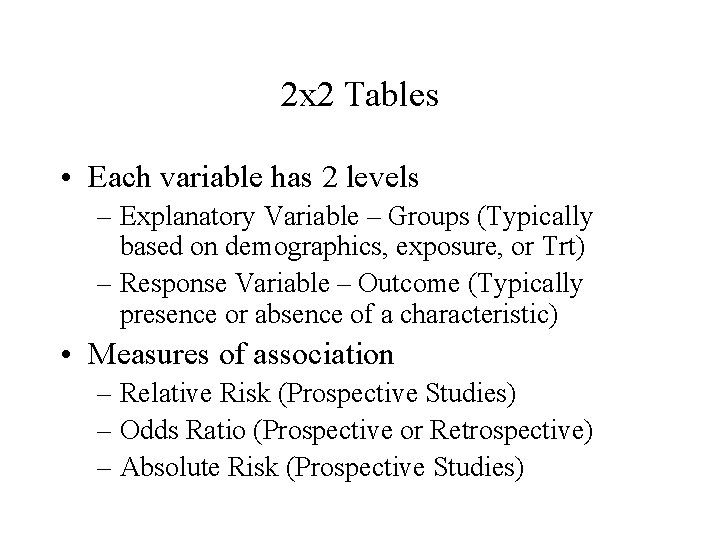

2 x 2 Tables • Each variable has 2 levels – Explanatory Variable – Groups (Typically based on demographics, exposure, or treatment) – Response Variable – Outcome (Typically presence or absence of a characteristic) • Measures of association – Relative Risk (Prospective Studies) – Odds Ratio (Prospective or Retrospective) – Absolute Risk (Prospective Studies)

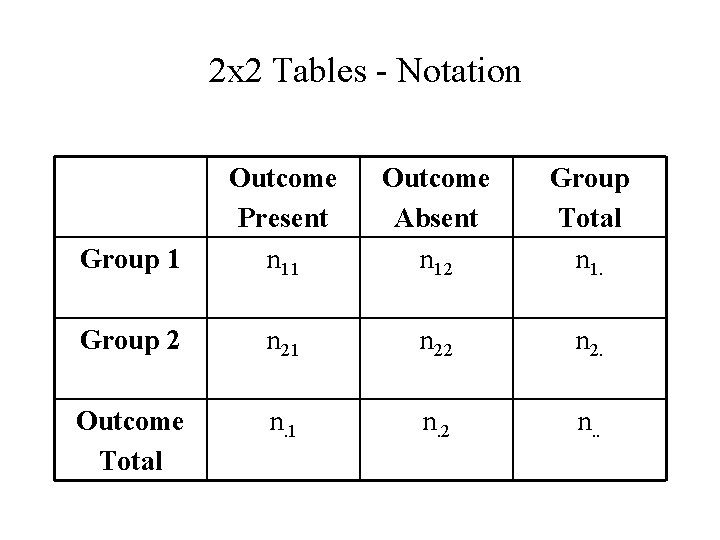

2 x 2 Tables - Notation

| | Outcome Present | Outcome Absent | Group Total |
|---|---|---|---|
| Group 1 | n11 | n12 | n1. |
| Group 2 | n21 | n22 | n2. |
| Outcome Total | n.1 | n.2 | n.. |

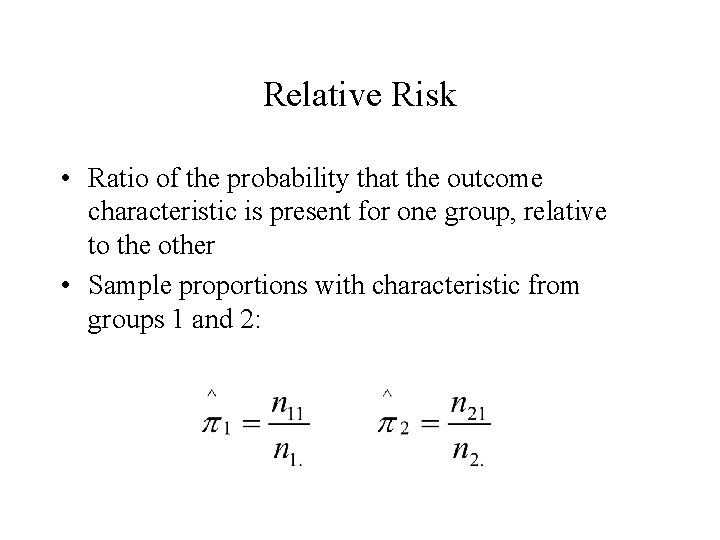

Relative Risk • Ratio of the probability that the outcome characteristic is present for one group, relative to the other • Sample proportions with characteristic from groups 1 and 2:

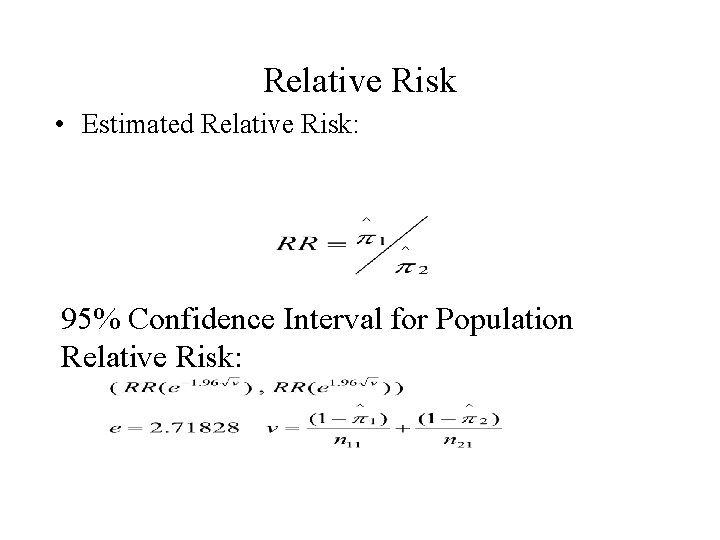

Relative Risk • Estimated Relative Risk: 95% Confidence Interval for Population Relative Risk:

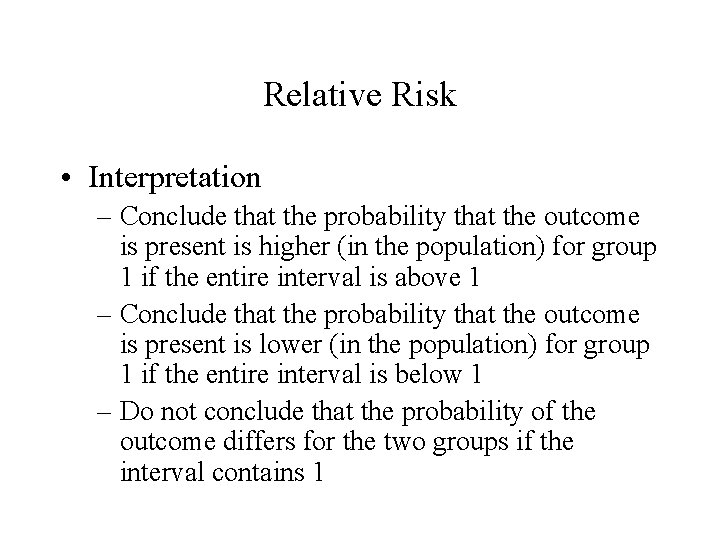

Relative Risk • Interpretation – Conclude that the probability that the outcome is present is higher (in the population) for group 1 if the entire interval is above 1 – Conclude that the probability that the outcome is present is lower (in the population) for group 1 if the entire interval is below 1 – Do not conclude that the probability of the outcome differs for the two groups if the interval contains 1
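A minimal sketch of the relative risk and an approximate 95% confidence interval. It assumes the common large-sample interval built on the log scale, with standard error sqrt[(1 − p1)/n11 + (1 − p2)/n21]; the slides' own formula may differ in presentation. The function name and the counts are hypothetical.

```python
import math

def relative_risk_ci(n11, n1_total, n21, n2_total, z=1.96):
    """Return (RR, lower, upper) using a log-scale large-sample interval."""
    p1 = n11 / n1_total   # sample proportion with outcome, group 1
    p2 = n21 / n2_total   # sample proportion with outcome, group 2
    rr = p1 / p2
    se_log = math.sqrt((1 - p1) / n11 + (1 - p2) / n21)
    return rr, rr * math.exp(-z * se_log), rr * math.exp(z * se_log)

# Hypothetical counts: 20 of 100 with the outcome in group 1, 10 of 100 in group 2
rr, rr_lower, rr_upper = relative_risk_ci(20, 100, 10, 100)
print(round(rr, 2), round(rr_lower, 2), round(rr_upper, 2))
```

With these illustrative counts the estimate is 2, but the interval includes 1, so by the interpretation rules above we would not conclude that the probabilities differ.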

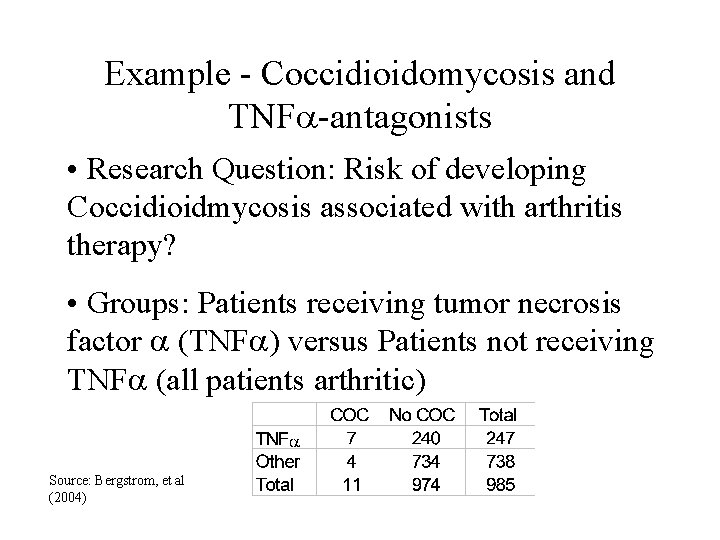

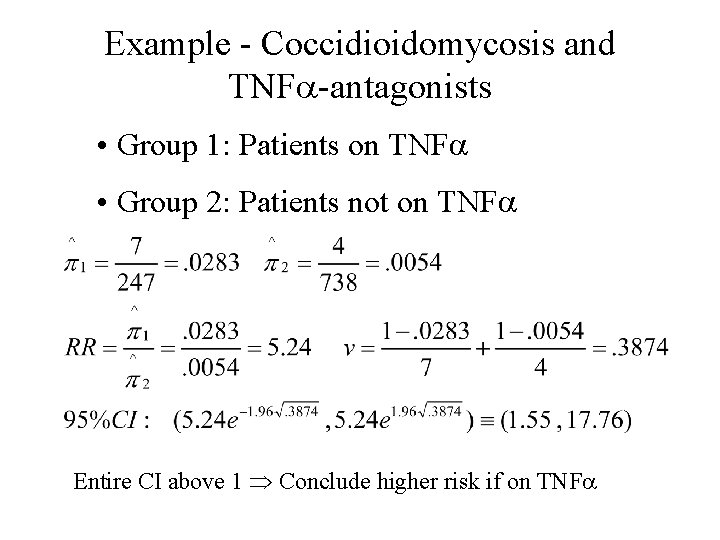

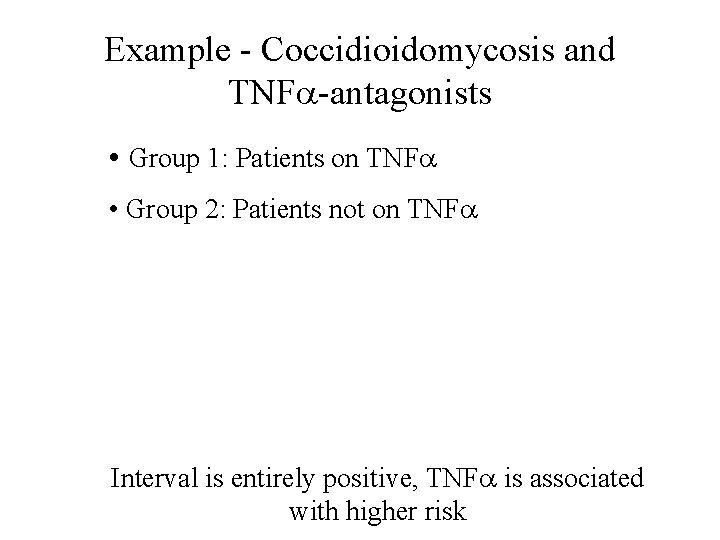

Example - Coccidioidomycosis and TNFα-antagonists • Research Question: Is there a risk of developing coccidioidomycosis associated with arthritis therapy? • Groups: Patients receiving tumor necrosis factor α (TNFα) versus patients not receiving TNFα (all patients arthritic) Source: Bergstrom, et al (2004)

Example - Coccidioidomycosis and TNFα-antagonists • Group 1: Patients on TNFα • Group 2: Patients not on TNFα • Entire CI is above 1: conclude higher risk if on TNFα

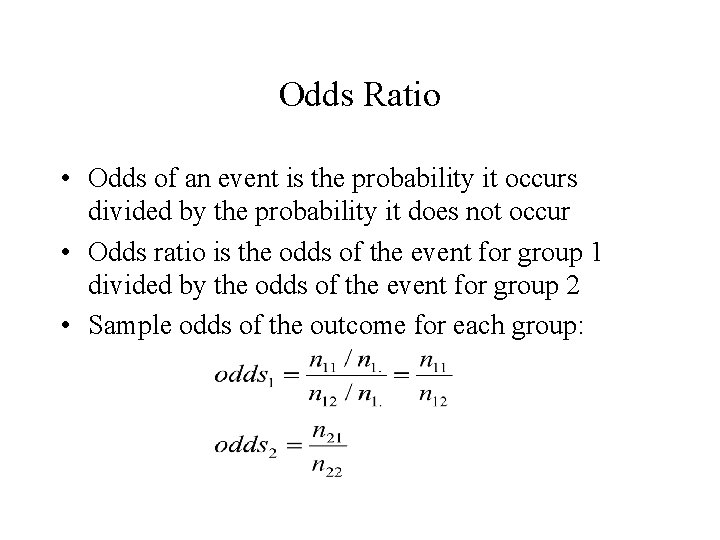

Odds Ratio • Odds of an event is the probability it occurs divided by the probability it does not occur • Odds ratio is the odds of the event for group 1 divided by the odds of the event for group 2 • Sample odds of the outcome for each group:

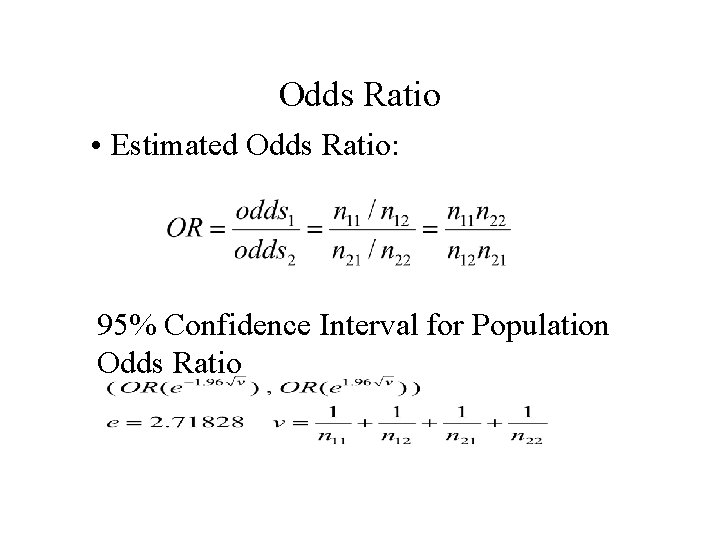

Odds Ratio • Estimated Odds Ratio: 95% Confidence Interval for Population Odds Ratio

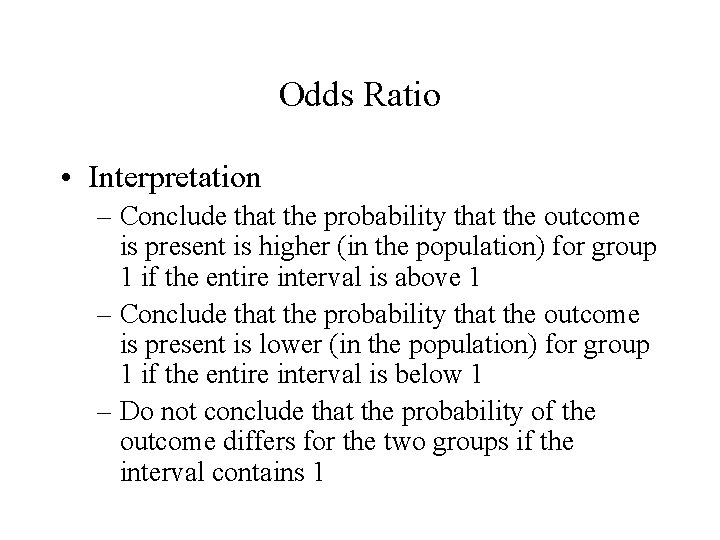

Odds Ratio • Interpretation – Conclude that the probability that the outcome is present is higher (in the population) for group 1 if the entire interval is above 1 – Conclude that the probability that the outcome is present is lower (in the population) for group 1 if the entire interval is below 1 – Do not conclude that the probability of the outcome differs for the two groups if the interval contains 1
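The odds ratio and its interval can be sketched in the same way. This assumes the standard large-sample interval on the log scale, with standard error sqrt(1/n11 + 1/n12 + 1/n21 + 1/n22). The function name and the counts are hypothetical.

```python
import math

def odds_ratio_ci(n11, n12, n21, n22, z=1.96):
    """Return (OR, lower, upper) using a log-scale large-sample interval."""
    odds_ratio = (n11 * n22) / (n12 * n21)   # (n11/n12) / (n21/n22)
    se_log = math.sqrt(1 / n11 + 1 / n12 + 1 / n21 + 1 / n22)
    lower = odds_ratio * math.exp(-z * se_log)
    upper = odds_ratio * math.exp(z * se_log)
    return odds_ratio, lower, upper

# Hypothetical 2 x 2 counts: group 1 = (40 present, 160 absent),
# group 2 = (20 present, 180 absent)
or_hat, or_lower, or_upper = odds_ratio_ci(40, 160, 20, 180)
print(round(or_hat, 2), round(or_lower, 2), round(or_upper, 2))
```

Here the whole interval lies above 1, so under the rules above the outcome would be judged more likely in group 1.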

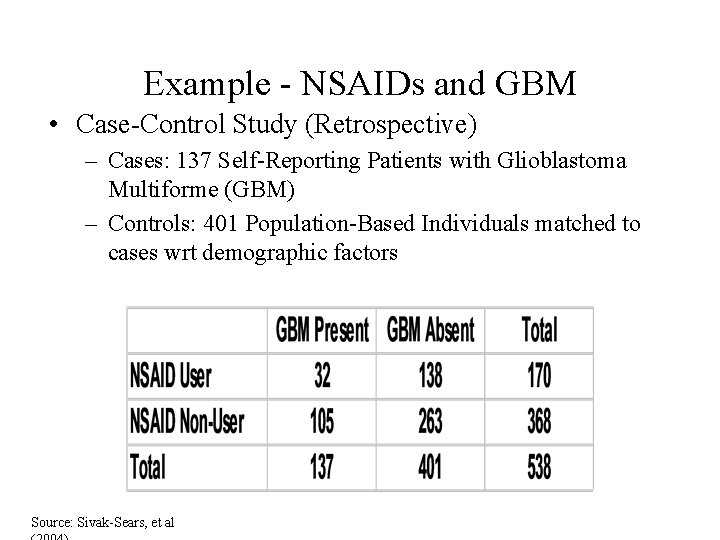

Example - NSAIDs and GBM • Case-Control Study (Retrospective) – Cases: 137 Self-Reporting Patients with Glioblastoma Multiforme (GBM) – Controls: 401 Population-Based Individuals matched to cases with respect to demographic factors Source: Sivak-Sears, et al

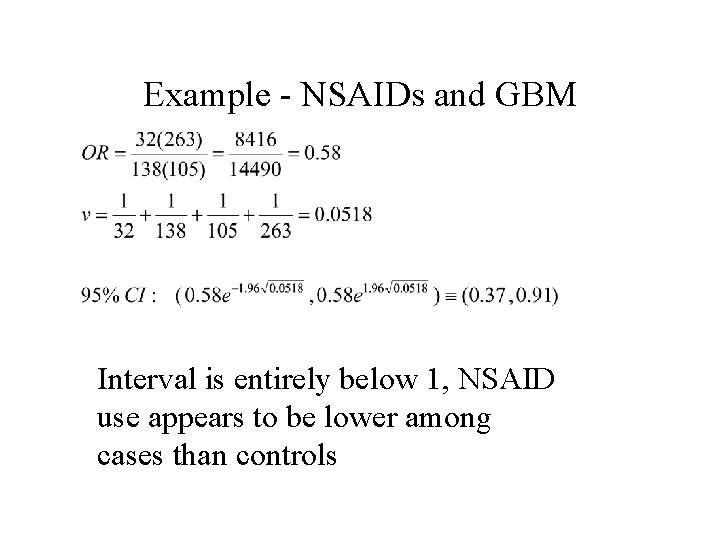

Example - NSAIDs and GBM Interval is entirely below 1; NSAID use appears to be lower among cases than controls

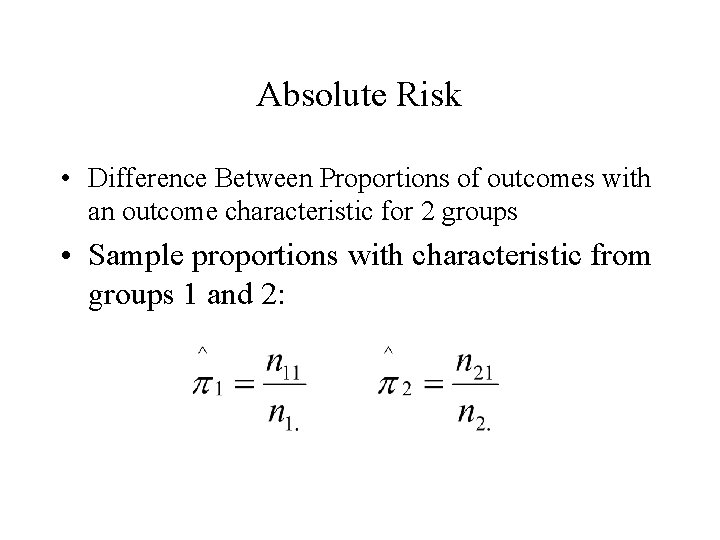

Absolute Risk • Difference between the proportions of individuals with an outcome characteristic in the 2 groups • Sample proportions with characteristic from groups 1 and 2:

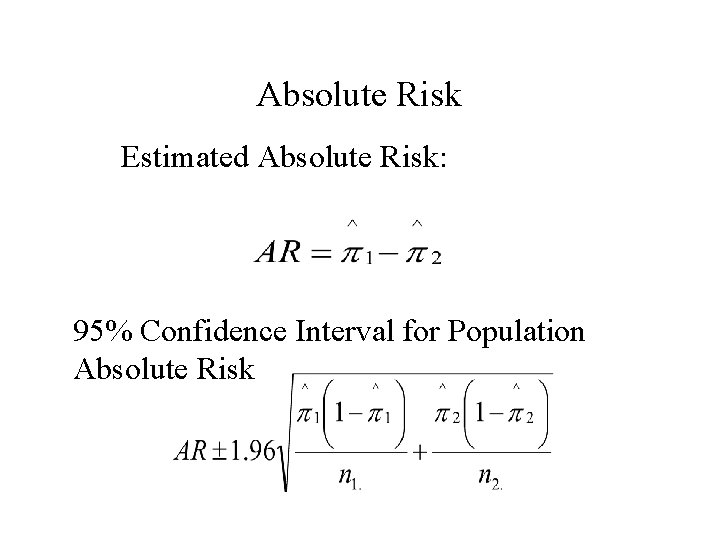

Absolute Risk Estimated Absolute Risk: 95% Confidence Interval for Population Absolute Risk

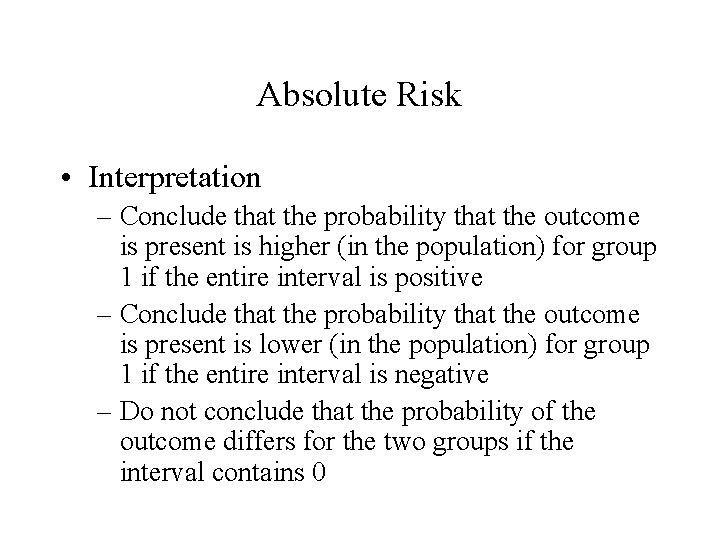

Absolute Risk • Interpretation – Conclude that the probability that the outcome is present is higher (in the population) for group 1 if the entire interval is positive – Conclude that the probability that the outcome is present is lower (in the population) for group 1 if the entire interval is negative – Do not conclude that the probability of the outcome differs for the two groups if the interval contains 0
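A sketch of the absolute risk (difference in proportions) with an approximate 95% interval, assuming the usual large-sample standard error sqrt[p1(1 − p1)/n1. + p2(1 − p2)/n2.]. The function name and the counts are hypothetical.

```python
import math

def risk_difference_ci(n11, n1_total, n21, n2_total, z=1.96):
    """Return (p1 - p2, lower, upper) using the large-sample interval."""
    p1 = n11 / n1_total
    p2 = n21 / n2_total
    diff = p1 - p2
    se = math.sqrt(p1 * (1 - p1) / n1_total + p2 * (1 - p2) / n2_total)
    return diff, diff - z * se, diff + z * se

# Hypothetical counts: 40 of 200 with the outcome in group 1, 20 of 200 in group 2
diff, diff_lower, diff_upper = risk_difference_ci(40, 200, 20, 200)
print(round(diff, 3), round(diff_lower, 3), round(diff_upper, 3))
```

The interval for these illustrative counts is entirely positive, which under the rules above points to a higher probability of the outcome in group 1.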

Example - Coccidioidomycosis and TNFα-antagonists • Group 1: Patients on TNFα • Group 2: Patients not on TNFα • Interval is entirely positive: TNFα is associated with higher risk

Ordinal Explanatory and Response Variables • Pearson’s Chi-square test can be used to test associations among ordinal variables, but more powerful methods exist • When theories exist that the association is directional (positive or negative), measures exist to describe and test for these specific alternatives to independence: – Gamma – Kendall’s τb

Concordant and Discordant Pairs • Concordant Pairs - Pairs of individuals where one individual scores “higher” on both ordered variables than the other individual • Discordant Pairs - Pairs of individuals where one individual scores “higher” on one ordered variable and the other individual scores “higher” on the other • C = # Concordant Pairs, D = # Discordant Pairs – Under Positive association, expect C > D – Under Negative association, expect C < D – Under No association, expect C ≈ D

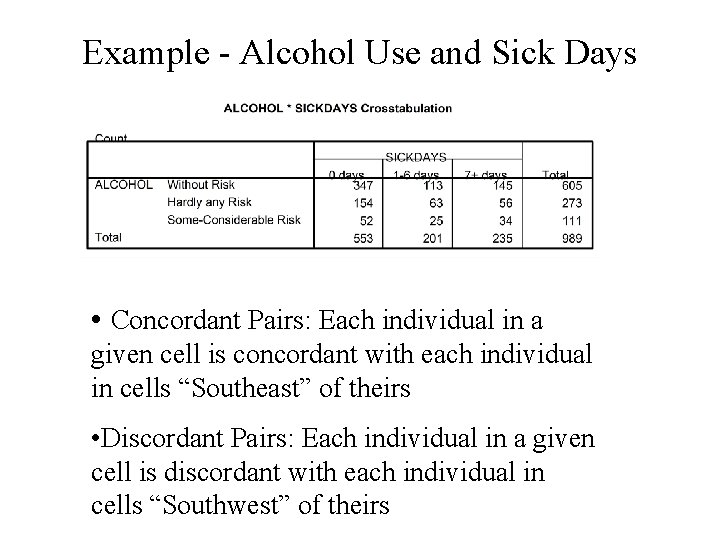

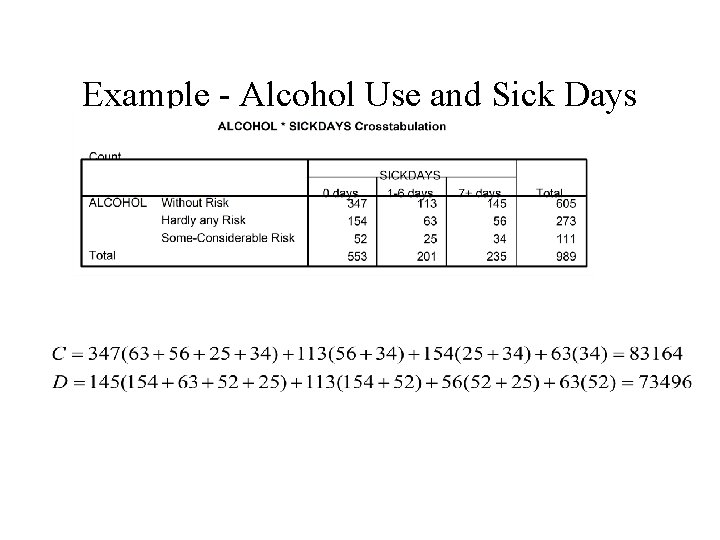

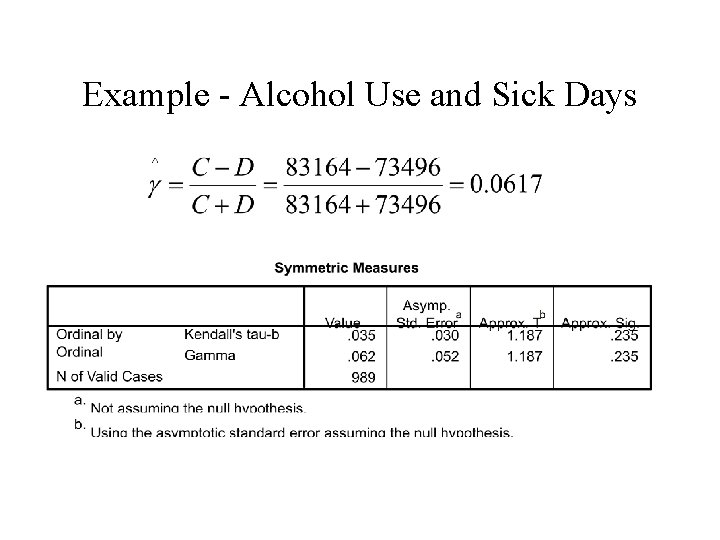

Example - Alcohol Use and Sick Days • Alcohol Risk (Without Risk, Hardly any Risk, Some to Considerable Risk) • Sick Days (0, 1-6, 7) • Concordant Pairs - Pairs of respondents where one scores higher on both alcohol risk and sick days than the other • Discordant Pairs - Pairs of respondents where one scores higher on alcohol risk and the other scores higher on sick days Source: Hermansson, et al (2003)

Example - Alcohol Use and Sick Days • Concordant Pairs: Each individual in a given cell is concordant with each individual in cells “Southeast” of theirs • Discordant Pairs: Each individual in a given cell is discordant with each individual in cells “Southwest” of theirs
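The “Southeast” and “Southwest” counting rule can be sketched as follows, with rows and columns both listed from lowest to highest category. The function name `concordant_discordant` and the 3 x 3 counts are hypothetical; they are not the alcohol-risk data.

```python
def concordant_discordant(table):
    """Count concordant (C) and discordant (D) pairs in an ordered r x c table."""
    n_rows, n_cols = len(table), len(table[0])
    concordant = discordant = 0
    for i in range(n_rows):
        for j in range(n_cols):
            for k in range(i + 1, n_rows):      # only rows below cell (i, j)
                for l in range(n_cols):
                    if l > j:                   # below and to the right: "Southeast"
                        concordant += table[i][j] * table[k][l]
                    elif l < j:                 # below and to the left: "Southwest"
                        discordant += table[i][j] * table[k][l]
    return concordant, discordant

# Hypothetical counts for illustration only
example = [[5, 2, 1],
           [3, 4, 2],
           [1, 3, 6]]
C, D = concordant_discordant(example)
print(C, D)  # 142 31
```

Pairs tied on either variable (same row or same column) are counted as neither concordant nor discordant.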

Example - Alcohol Use and Sick Days

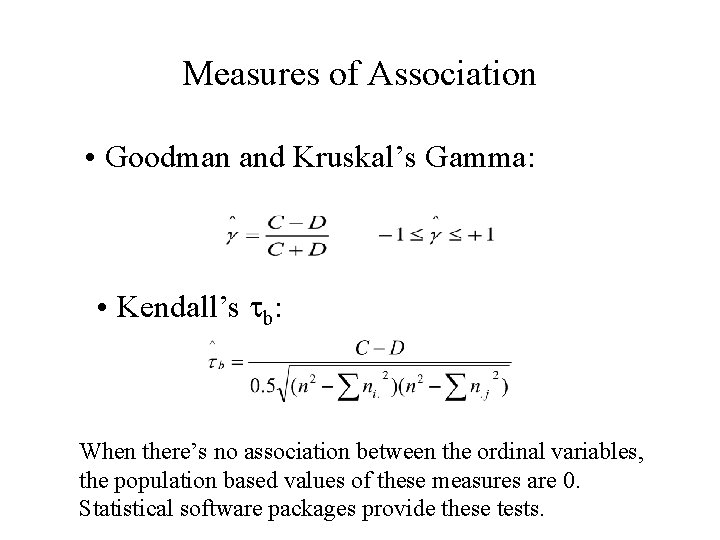

Measures of Association • Goodman and Kruskal’s Gamma: γ = (C − D) / (C + D) • Kendall’s τb: also based on C − D, with a denominator that adjusts for tied pairs • When there is no association between the ordinal variables, the population values of these measures are 0. Statistical software packages provide tests based on these measures.
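A sketch of both measures computed from a table of counts. It assumes gamma = (C − D)/(C + D) and the common tie-corrected form of Kendall’s τb, (C − D)/sqrt[(n0 − n1)(n0 − n2)], where n0 is the total number of pairs and n1, n2 are the numbers of pairs tied on the row and column variable respectively. The function name and the counts are hypothetical.

```python
import math

def gamma_and_tau_b(table):
    """Return (gamma, tau_b) for an ordered r x c table of counts."""
    n_rows, n_cols = len(table), len(table[0])
    concordant = discordant = 0
    for i in range(n_rows):
        for j in range(n_cols):
            for k in range(i + 1, n_rows):
                for l in range(n_cols):
                    if l > j:
                        concordant += table[i][j] * table[k][l]
                    elif l < j:
                        discordant += table[i][j] * table[k][l]
    n = sum(sum(row) for row in table)
    total_pairs = n * (n - 1) / 2
    # Pairs tied on the row variable / on the column variable
    row_ties = sum(t * (t - 1) / 2 for t in (sum(row) for row in table))
    col_ties = sum(t * (t - 1) / 2 for t in (sum(col) for col in zip(*table)))
    gamma = (concordant - discordant) / (concordant + discordant)
    tau_b = (concordant - discordant) / math.sqrt(
        (total_pairs - row_ties) * (total_pairs - col_ties))
    return gamma, tau_b

# Hypothetical 2 x 2 ordered table for illustration only
gamma, tau_b = gamma_and_tau_b([[2, 1], [1, 2]])
print(round(gamma, 3), round(tau_b, 3))
```

Because τb keeps tied pairs in its denominator, its magnitude is never larger than that of gamma for the same table.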

Example - Alcohol Use and Sick Days
