Association Rules: Mining Association Rules in Large Databases

Association Rules

Mining Association Rules in Large Databases

- Association rule mining
- Mining single-dimensional Boolean association rules from transactional databases
- Mining multilevel association rules from transactional databases
- Mining multidimensional association rules from transactional databases and data warehouse
- From association mining to correlation analysis
- Summary

- Where should detergents be placed in the store to maximize their sales?
- Are window cleaning products purchased when detergents and orange juice are bought together?
- Is soda typically purchased with bananas? Does the brand of soda make a difference?
- How are the demographics of the neighborhood affecting what customers are buying?

What Is Association Mining?

- Association rule mining:
  - Finding frequent patterns, associations, correlations, or causal structures among sets of items or objects in transaction databases, relational databases, and other information repositories.
- Applications:
  - Basket data analysis, cross-marketing, catalog design, loss-leader analysis, clustering, classification, etc.
- Examples:
  - Rule form: "Body → Head [support, confidence]"
  - buys(x, "diapers") → buys(x, "beers") [0.5%, 60%]
  - position(x, "DM") ^ takes(x, "MSDM") → get(x, "Promotion") [1%, 75%]

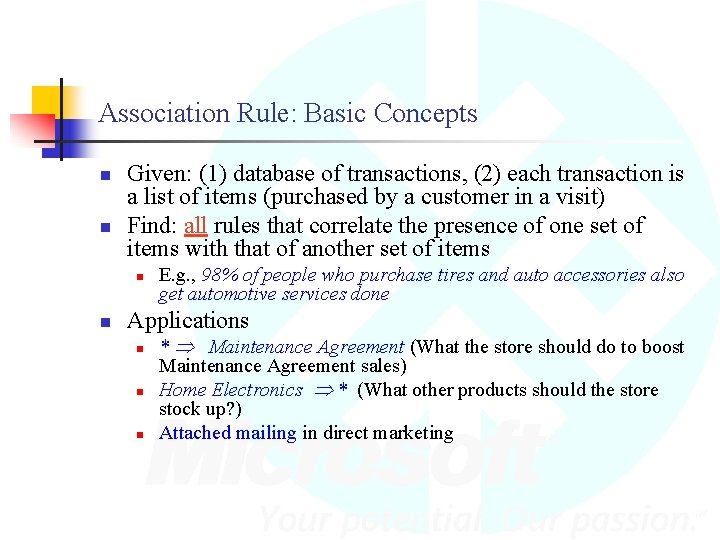

Association Rule: Basic Concepts

- Given: (1) database of transactions, (2) each transaction is a list of items (purchased by a customer in a visit)
- Find: all rules that correlate the presence of one set of items with that of another set of items
  - E.g., 98% of people who purchase tires and auto accessories also get automotive services done
- Applications
  - * => Maintenance Agreement (What should the store do to boost Maintenance Agreement sales?)
  - Home Electronics => * (What other products should the store stock up on?)
  - Attached mailing in direct marketing

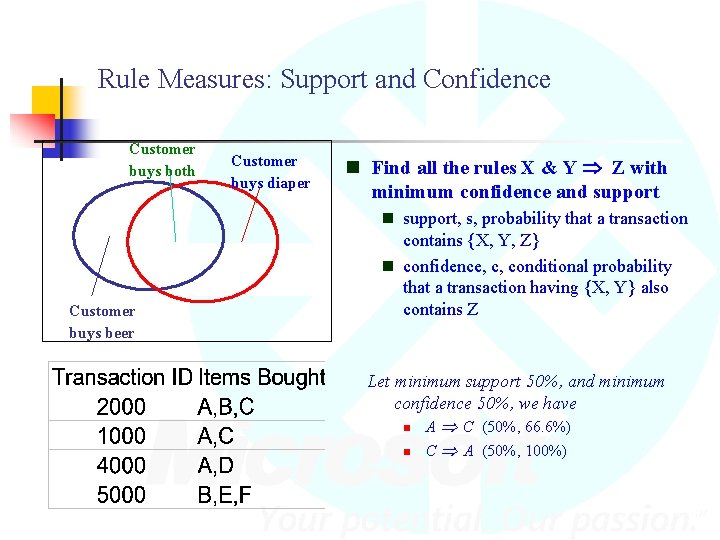

Rule Measures: Support and Confidence

(Venn diagram: customer buys beer, customer buys diaper, customer buys both)

- Find all the rules X & Y => Z with minimum confidence and support
  - support, s: probability that a transaction contains {X, Y, Z}
  - confidence, c: conditional probability that a transaction having {X, Y} also contains Z
- With minimum support 50% and minimum confidence 50%, we have
  - A => C (50%, 66.6%)
  - C => A (50%, 100%)

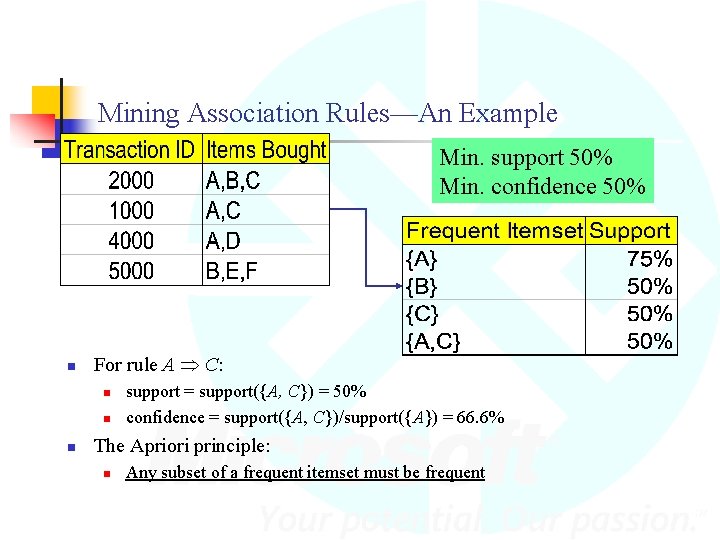

Mining Association Rules: An Example

Min. support 50%, min. confidence 50%

- For rule A => C:
  - support = support({A, C}) = 50%
  - confidence = support({A, C}) / support({A}) = 66.6%
- The Apriori principle:
  - Any subset of a frequent itemset must be frequent
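The two formulas on this slide can be checked with a few lines of Python. This is a minimal sketch: the four-transaction database below is a hypothetical toy example (the slide's own table is only in the image), chosen so that it reproduces the 50% and 66.6% figures, and the function names `support` and `confidence` are illustrative.

```python
from typing import FrozenSet, Iterable, List

# Hypothetical toy database (an assumption, not taken from the slide text).
TRANSACTIONS: List[FrozenSet[str]] = [
    frozenset({"A", "B", "C"}),
    frozenset({"A", "C"}),
    frozenset({"A", "D"}),
    frozenset({"B", "E", "F"}),
]


def support(itemset: Iterable[str], transactions: List[FrozenSet[str]]) -> float:
    """Fraction of transactions that contain every item in `itemset`."""
    items = frozenset(itemset)
    return sum(1 for t in transactions if items <= t) / len(transactions)


def confidence(body: Iterable[str], head: Iterable[str],
               transactions: List[FrozenSet[str]]) -> float:
    """Estimate P(head | body) as support(body + head) / support(body)."""
    body_support = support(body, transactions)
    if body_support == 0:
        raise ValueError("rule body never occurs in the database")
    return support(set(body) | set(head), transactions) / body_support


if __name__ == "__main__":
    for body, head in (({"A"}, {"C"}), ({"C"}, {"A"})):
        s = support(body | head, TRANSACTIONS)
        c = confidence(body, head, TRANSACTIONS)
        print(f"{sorted(body)} => {sorted(head)}: support={s:.1%}, confidence={c:.1%}")
```

On this toy data, A => C has support 2/4 and confidence (2/4)/(3/4), while C => A has the same support but confidence 1, which shows that confidence is not symmetric.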

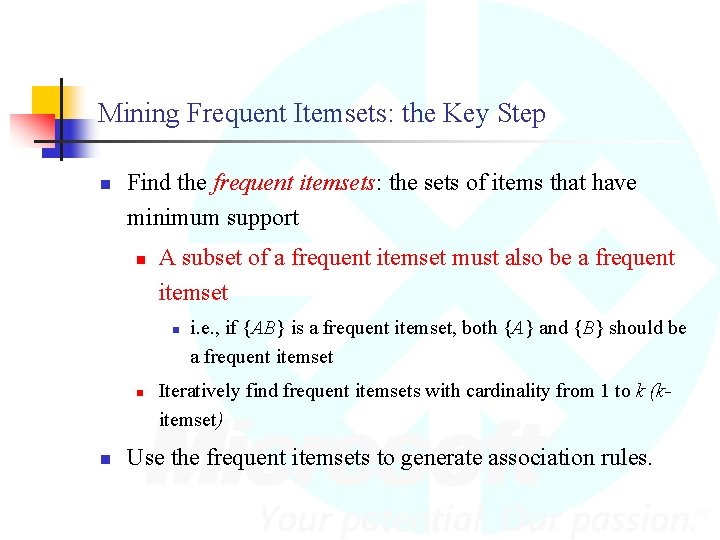

Mining Frequent Itemsets: the Key Step

- Find the frequent itemsets: the sets of items that have minimum support
  - A subset of a frequent itemset must also be a frequent itemset
    - i.e., if {AB} is a frequent itemset, both {A} and {B} should be frequent itemsets
  - Iteratively find frequent itemsets with cardinality from 1 to k (k-itemsets)
- Use the frequent itemsets to generate association rules.
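The final step, turning frequent itemsets into rules, might look like the sketch below. It assumes the frequent itemsets are already available as a dictionary from itemset to support; the name `generate_rules` and the sample supports are hypothetical. Because every subset of a frequent itemset is itself frequent, the support of each rule body can be looked up directly.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List, Tuple

Itemset = FrozenSet[str]
Rule = Tuple[Itemset, Itemset, float, float]  # (body, head, support, confidence)


def generate_rules(frequent: Dict[Itemset, float], min_confidence: float) -> List[Rule]:
    """Split each frequent itemset into body => head and keep confident rules."""
    rules: List[Rule] = []
    for itemset, sup in frequent.items():
        # Try every non-empty proper subset of the itemset as the rule body.
        for size in range(1, len(itemset)):
            for body_items in combinations(sorted(itemset), size):
                body = frozenset(body_items)
                head = itemset - body
                # The body is frequent too, so its support is in the dictionary.
                conf = sup / frequent[body]
                if conf >= min_confidence:
                    rules.append((body, head, sup, conf))
    return rules


if __name__ == "__main__":
    # Hypothetical supports for illustration only.
    frequent = {
        frozenset({"A"}): 0.75,
        frozenset({"B"}): 0.50,
        frozenset({"C"}): 0.50,
        frozenset({"A", "C"}): 0.50,
    }
    for body, head, sup, conf in generate_rules(frequent, min_confidence=0.5):
        print(f"{sorted(body)} => {sorted(head)} [{sup:.0%}, {conf:.1%}]")
```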

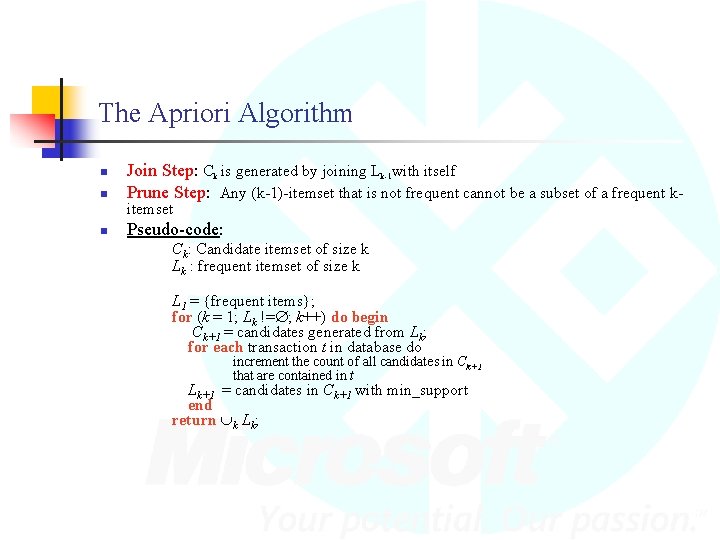

The Apriori Algorithm

- Join Step: C_k is generated by joining L_{k-1} with itself
- Prune Step: Any (k-1)-itemset that is not frequent cannot be a subset of a frequent k-itemset
- Pseudo-code:

      C_k: candidate itemsets of size k
      L_k: frequent itemsets of size k

      L_1 = {frequent items};
      for (k = 1; L_k != ∅; k++) do begin
          C_{k+1} = candidates generated from L_k;
          for each transaction t in database do
              increment the count of all candidates in C_{k+1} that are contained in t
          L_{k+1} = candidates in C_{k+1} with min_support
      end
      return ∪_k L_k;
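The pseudo-code can be turned into a short runnable Python sketch. This is an illustrative, unoptimized version rather than a reference implementation: the function name `apriori`, the representation of transactions as sets of strings, and the simple pairwise join are all assumptions made for the example.

```python
from itertools import combinations
from typing import Dict, FrozenSet, Iterable, List, Set

Itemset = FrozenSet[str]


def apriori(transactions: Iterable[Iterable[str]], min_support: float) -> Dict[Itemset, float]:
    """Return every frequent itemset mapped to its support (a fraction in [0, 1])."""
    db: List[Itemset] = [frozenset(t) for t in transactions]
    n = len(db)

    def frequent(candidates: Set[Itemset]) -> Dict[Itemset, float]:
        # One scan of the database: count each candidate contained in each transaction.
        counts = {c: 0 for c in candidates}
        for t in db:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        return {c: cnt / n for c, cnt in counts.items() if cnt / n >= min_support}

    # L_1: frequent single items.
    level = frequent({frozenset([item]) for t in db for item in t})
    result = dict(level)
    k = 1
    while level:
        prev = set(level)
        # Join step: unions of two frequent k-itemsets that have exactly k + 1 items.
        candidates = {a | b for a in prev for b in prev if len(a | b) == k + 1}
        # Prune step: drop candidates that have an infrequent k-subset.
        candidates = {
            c for c in candidates
            if all(frozenset(sub) in prev for sub in combinations(c, k))
        }
        level = frequent(candidates)  # L_{k+1}
        result.update(level)
        k += 1
    return result


if __name__ == "__main__":
    # Hypothetical toy database for illustration.
    toy_db = [{"A", "B", "C"}, {"A", "C"}, {"A", "D"}, {"B", "E", "F"}]
    for itemset, sup in sorted(apriori(toy_db, 0.5).items(), key=lambda kv: sorted(kv[0])):
        print(sorted(itemset), f"{sup:.0%}")
```

The prune step is what the Apriori principle buys: a candidate is never counted against the database if one of its subsets is already known to be infrequent.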

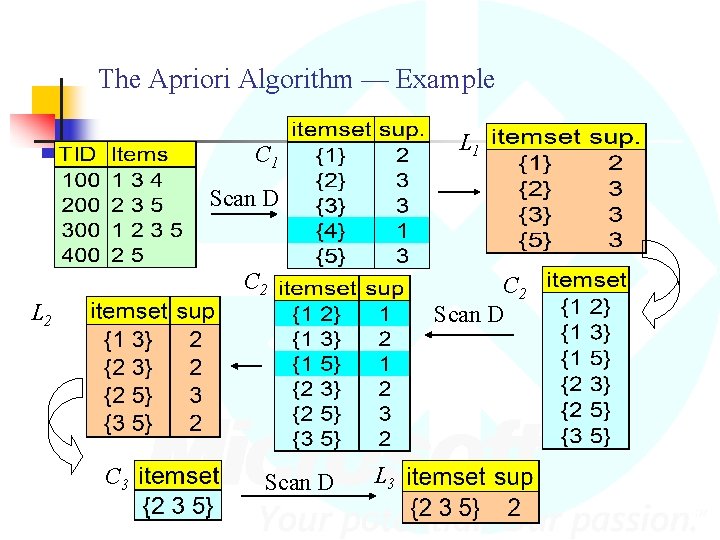

The Apriori Algorithm: Example

(Figure: database D is scanned repeatedly. Scan D gives C1 and then L1; C2 is built and Scan D gives L2; C3 is built and Scan D gives L3.)

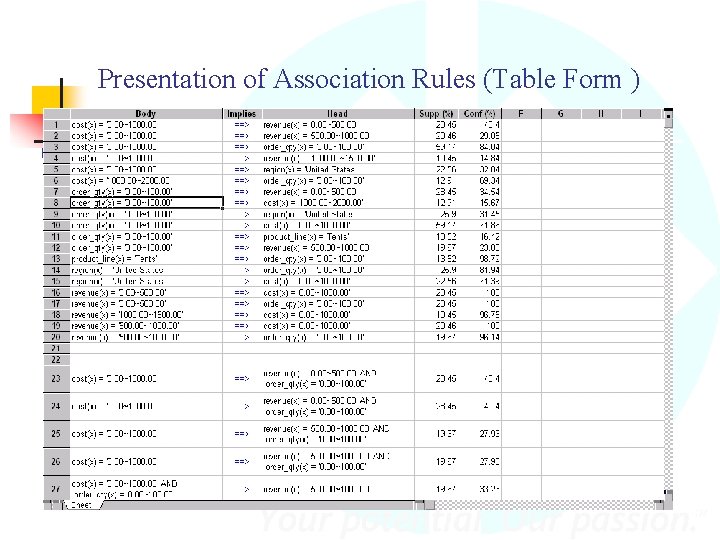

Presentation of Association Rules (Table Form)

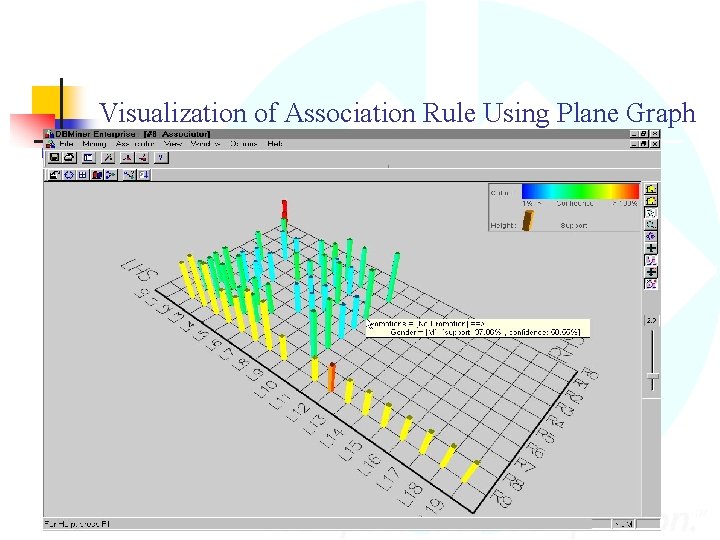

Visualization of Association Rule Using Plane Graph

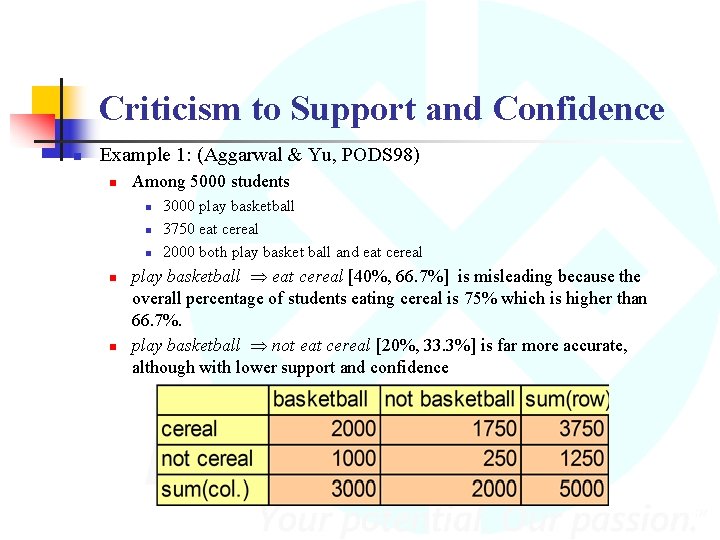

Criticism to Support and Confidence

- Example 1: (Aggarwal & Yu, PODS 98)
  - Among 5000 students
    - 3000 play basketball
    - 3750 eat cereal
    - 2000 both play basketball and eat cereal
  - play basketball => eat cereal [40%, 66.7%] is misleading because the overall percentage of students eating cereal is 75%, which is higher than 66.7%.
  - play basketball => not eat cereal [20%, 33.3%] is far more accurate, although it has lower support and confidence

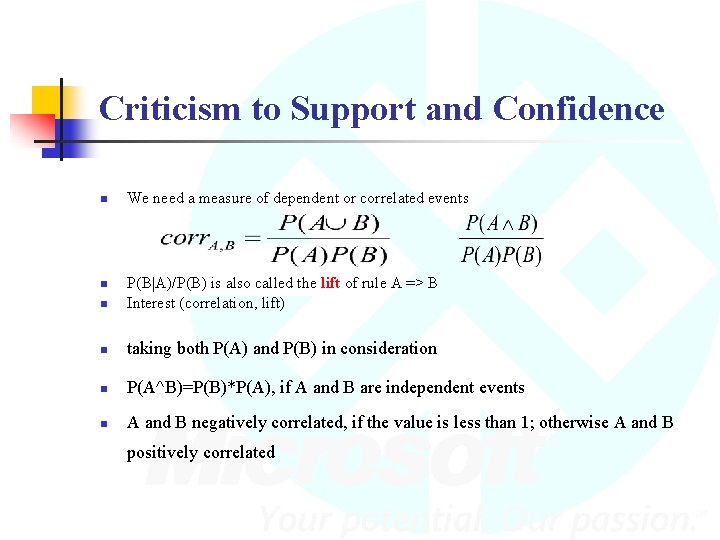

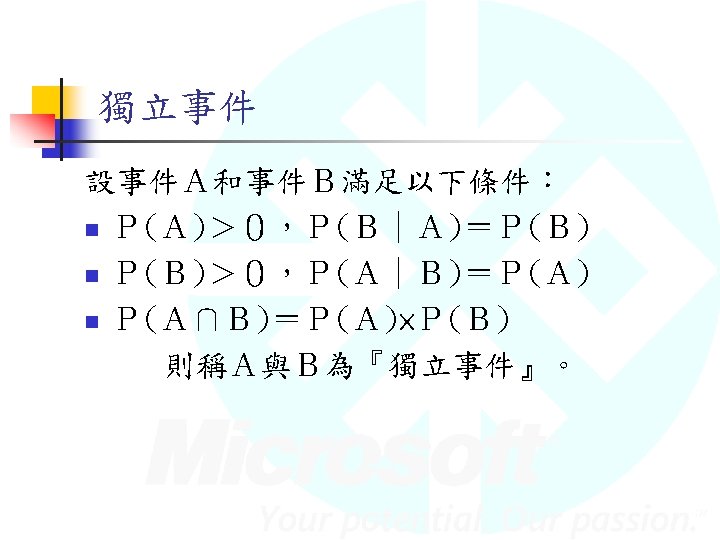

Criticism to Support and Confidence

- We need a measure of dependent or correlated events
- P(B|A)/P(B) is also called the lift of rule A => B
- Interest (correlation, lift)
  - takes both P(A) and P(B) into consideration
  - P(A^B) = P(B)*P(A) if A and B are independent events, in which case the value is 1
  - A and B are negatively correlated if the value is less than 1, and positively correlated if it is greater than 1
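The two ways of writing this measure are the same quantity. As a short derivation using only the definition of conditional probability, P(B|A) = P(A^B)/P(A):

```latex
\[
\mathrm{lift}(A \Rightarrow B)
  = \frac{P(B \mid A)}{P(B)}
  = \frac{P(A \wedge B)/P(A)}{P(B)}
  = \frac{P(A \wedge B)}{P(A)\,P(B)}
\]
```

Under independence the numerator equals P(A)P(B), so the ratio is exactly 1. The expression is also symmetric in A and B, so A => B and B => A have the same lift even when their confidences differ.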

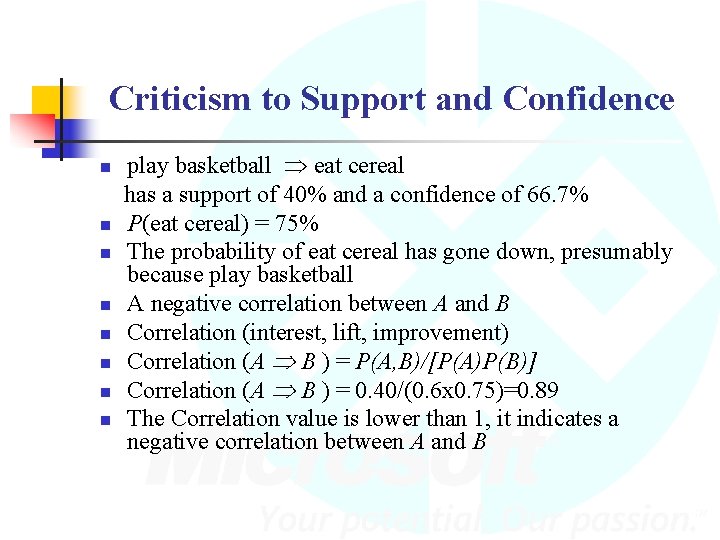

Criticism to Support and Confidence

- play basketball => eat cereal has a support of 40% and a confidence of 66.7%
- P(eat cereal) = 75%
- The probability of eating cereal goes down, from 75% to 66.7%, once we know that the student plays basketball
- This is a negative correlation between A and B
- Correlation (interest, lift, improvement)
  - Correlation(A => B) = P(A, B) / [P(A)P(B)]
  - Correlation(A => B) = 0.40 / (0.6 x 0.75) = 0.89
- The correlation value is lower than 1, which indicates a negative correlation between A and B
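The arithmetic can be reproduced from the raw counts of the student example (5000 students, 3000 play basketball, 3750 eat cereal, 2000 do both). A minimal sketch, in which the function name `lift` and its parameter names are illustrative:

```python
def lift(n_total: int, n_a: int, n_b: int, n_ab: int) -> float:
    """Compute P(A, B) / (P(A) * P(B)) from raw counts."""
    if n_total <= 0 or n_a <= 0 or n_b <= 0:
        raise ValueError("counts must be positive")
    p_a = n_a / n_total
    p_b = n_b / n_total
    p_ab = n_ab / n_total
    return p_ab / (p_a * p_b)


if __name__ == "__main__":
    # play basketball => eat cereal
    print(f"{lift(5000, 3000, 3750, 2000):.2f}")
    # play basketball => not eat cereal: 1250 students do not eat cereal,
    # and 1000 of the basketball players are among them.
    print(f"{lift(5000, 3000, 1250, 1000):.2f}")
```

The first value is below 1 (negative correlation) and the second is above 1 (positive correlation), which matches the slide's point that the lower-confidence rule is the more informative one.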

Measuring Quality of Rules

- Support: s(A => B) = P(A, B)
- Confidence: a(A => B) = P(B|A)
- Correlation (interest, lift, improvement): Correlation(A => B) = P(A, B) / [P(A)P(B)]

Summary

- Association rule mining
  - probably the most significant contribution from the database community in KDD
  - A large number of papers have been published
- Many interesting issues have been explored
- An interesting research direction
  - Association analysis in other types of data: spatial data, multimedia data, time series data, etc.

Naïve Bayes Classifier

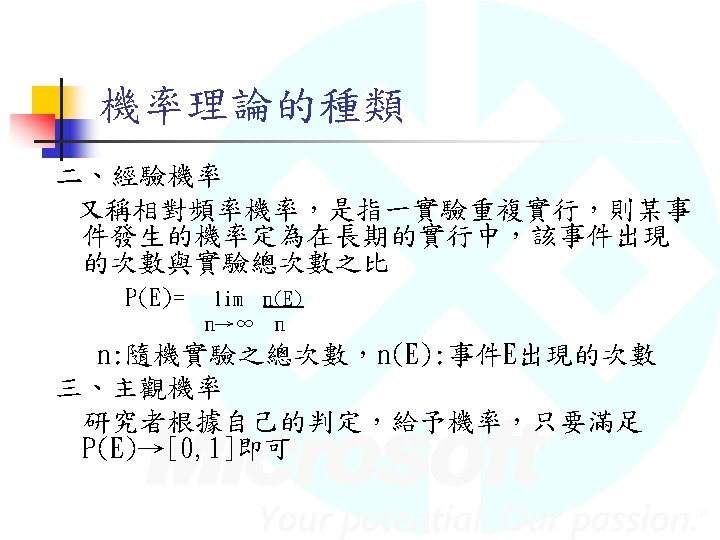

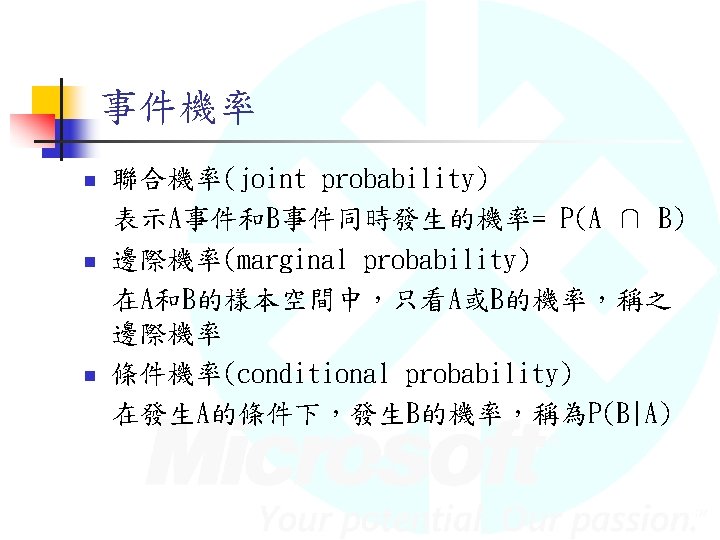

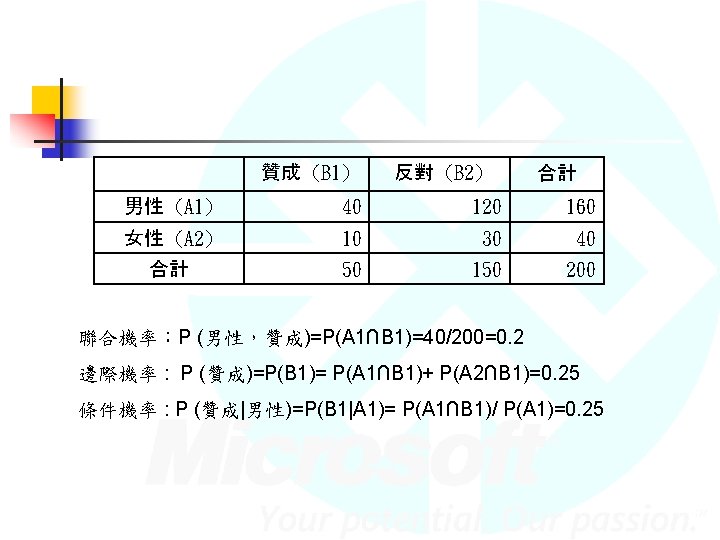

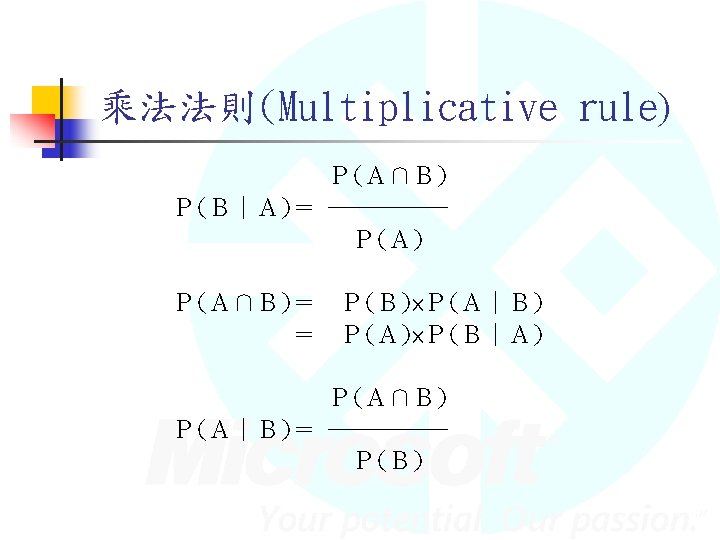

Agenda

- What is a Naïve Bayes Classifier?
- Probability and Bayes' theorem
- Naïve Bayes Classifier
- Questions and discussion

What Is a Naïve Bayes Classifier?

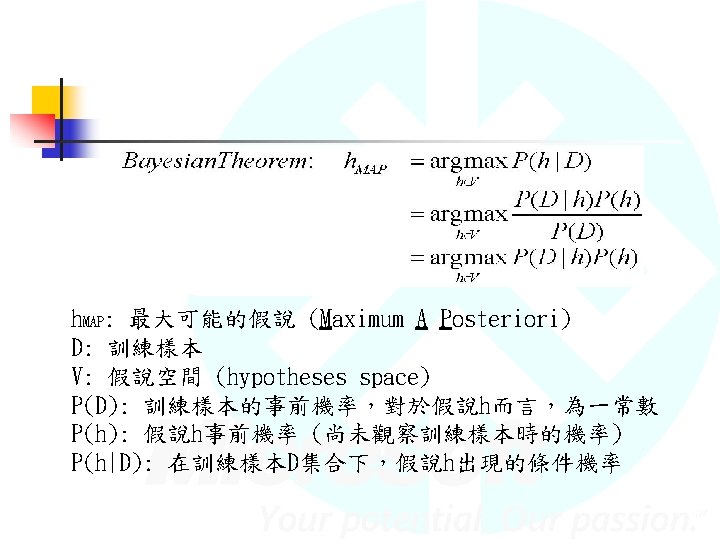

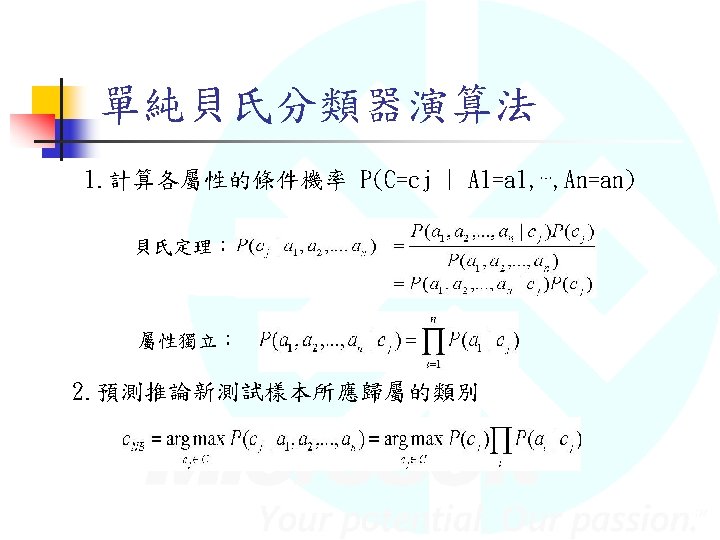

Naïve Bayes Classifier

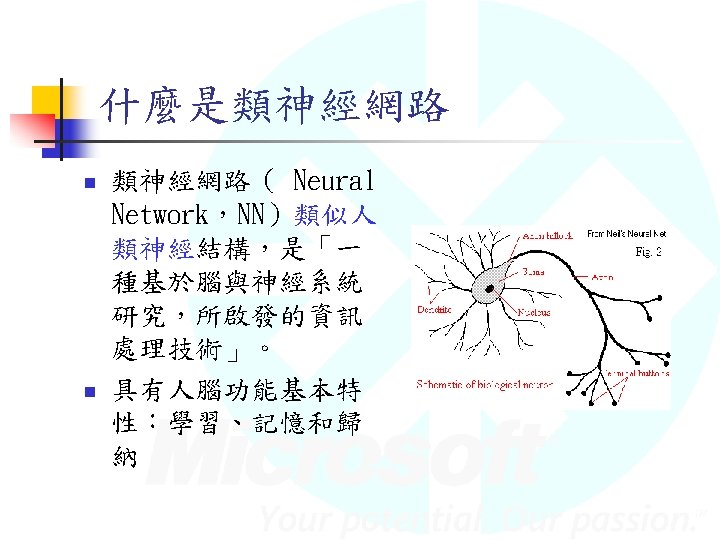

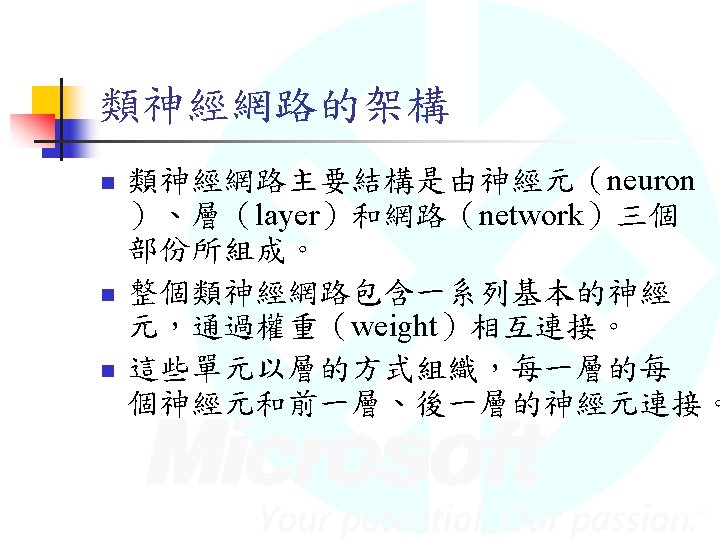

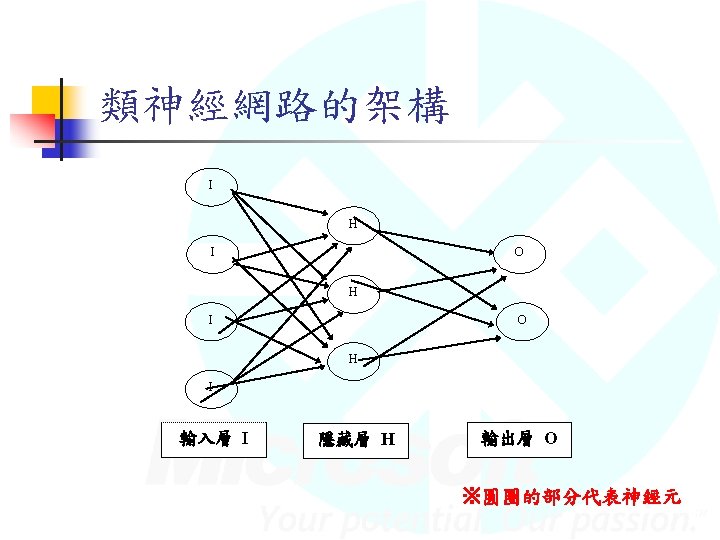

Neural Network

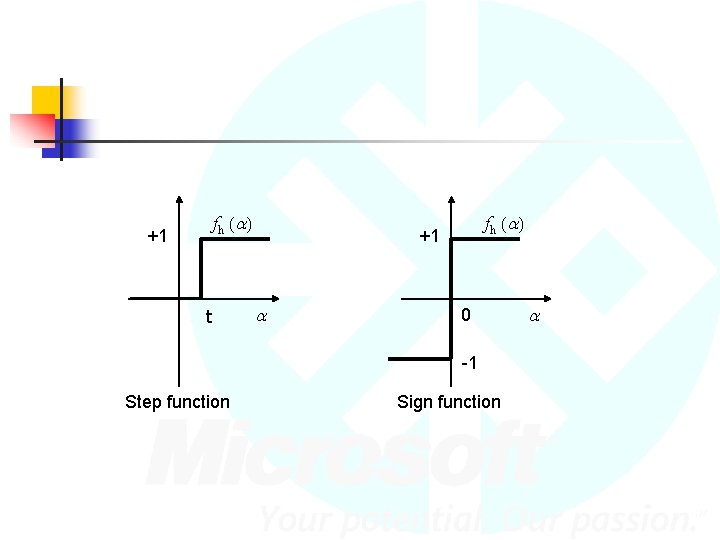

(Plots: step function f_h(a) against a, with tick labels +1 and t; sign function f_h(a) against a, with tick labels +1, 0 and -1)

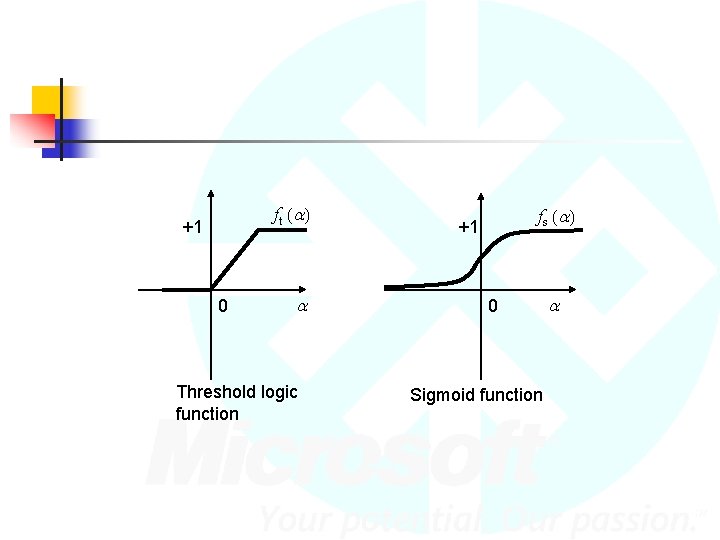

(Plots: threshold logic function f_t(a) against a, with tick labels +1 and 0; sigmoid function f_s(a) against a, with tick labels +1 and 0)
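The four activation functions named on these slides can be sketched in Python. The exact definitions are only in the plots, so the forms below are assumptions based on common textbook conventions: the step function outputs 0 or +1 around a threshold t, the sign function outputs -1 or +1, the threshold logic function is taken to be a linear ramp clipped to [0, 1], and the sigmoid is the logistic function.

```python
import math


def step(a: float, t: float = 0.0) -> float:
    """Step function (assumed form): +1 once the activation reaches threshold t, else 0."""
    return 1.0 if a >= t else 0.0


def sign(a: float) -> float:
    """Sign function (assumed form): +1 for non-negative activation, else -1."""
    return 1.0 if a >= 0 else -1.0


def threshold_logic(a: float) -> float:
    """Threshold logic function (assumed form): linear ramp clipped to [0, 1]."""
    return min(1.0, max(0.0, a))


def sigmoid(a: float) -> float:
    """Logistic sigmoid 1 / (1 + exp(-a)), written to avoid overflow for large |a|."""
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


if __name__ == "__main__":
    for a in (-2.0, 0.0, 0.5, 2.0):
        print(a, step(a, t=1.0), sign(a), threshold_logic(a), round(sigmoid(a), 3))
```

The first three functions are piecewise and not differentiable everywhere, whereas the sigmoid is smooth, which is the usual reason it is preferred when training with gradient-based methods.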

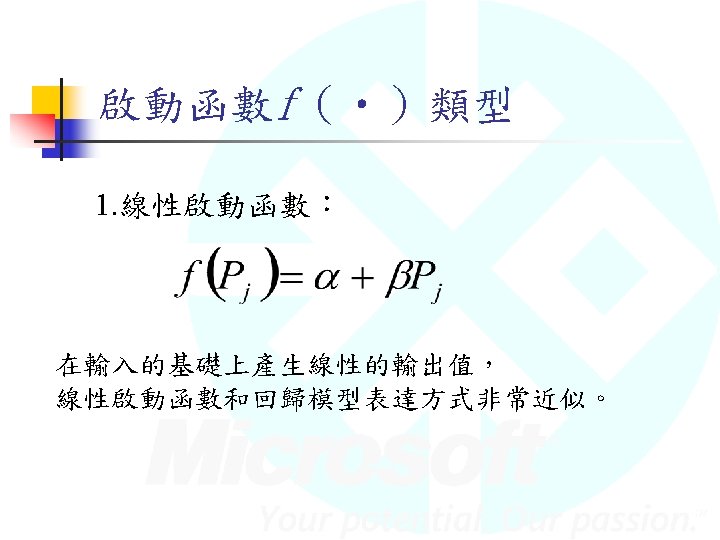

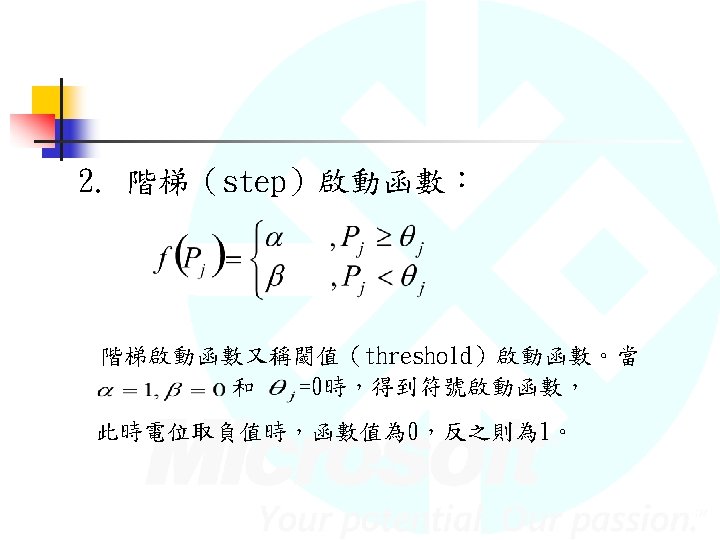

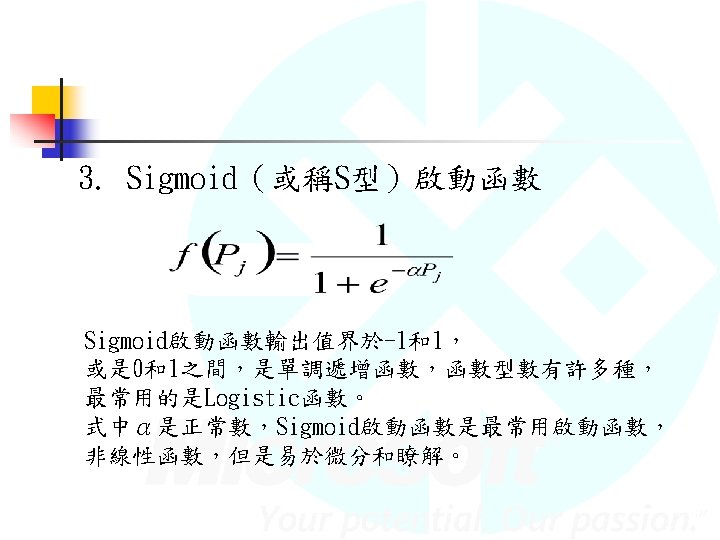

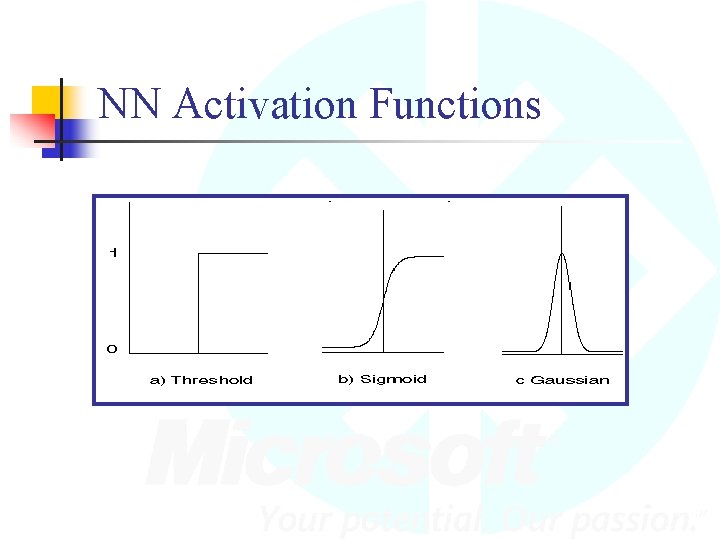

NN Activation Functions

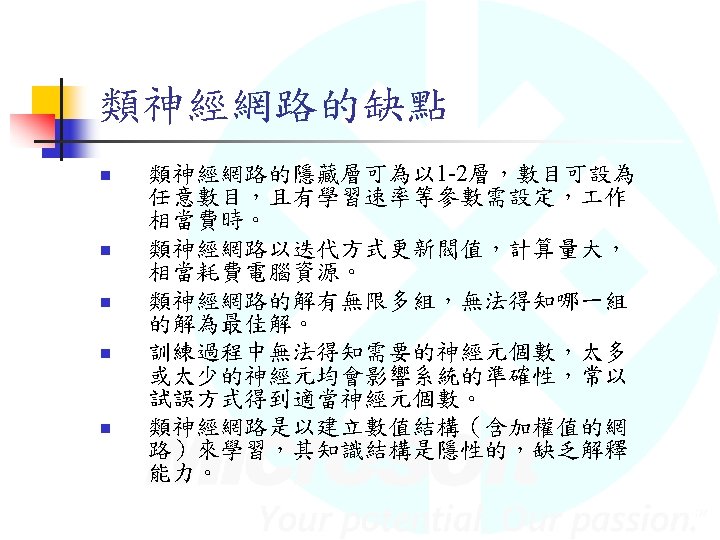

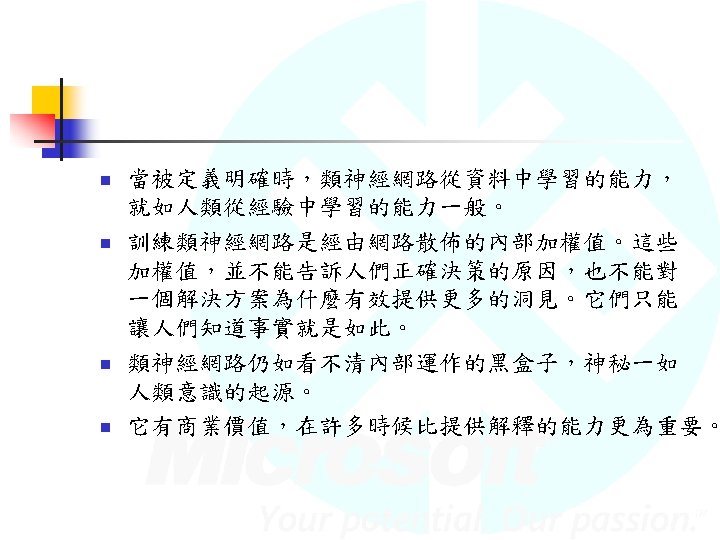
