Association Rules: Market Baskets, Frequent Itemsets, A-Priori Algorithm


The Market-Basket Model
- A large set of items, e.g., things sold in a supermarket.
- A large set of baskets, each of which is a small set of the items, e.g., the things one customer buys on one day.

Market-Baskets – (2)
- Really a general many-many mapping (association) between two kinds of things.
  - But we ask about connections among “items,” not “baskets.”
- The technology focuses on common events, not rare events.

Support
- Simplest question: find sets of items that appear “frequently” in the baskets.
- Support for itemset I = the number of baskets containing all items in I.
  - Sometimes given as a percentage.
- Given a support threshold s, sets of items that appear in at least s baskets are called frequent itemsets.

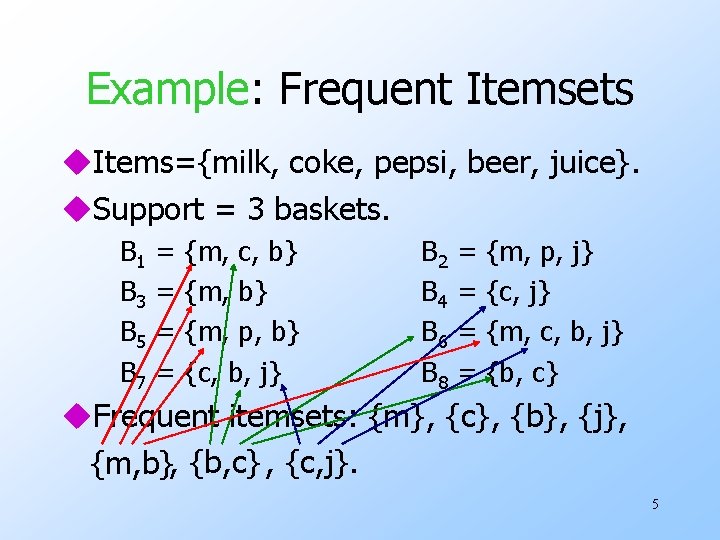

Example: Frequent Itemsets
- Items = {milk, coke, pepsi, beer, juice}.
- Support threshold = 3 baskets.
- Baskets:
  - B1 = {m, c, b}, B2 = {m, p, j}
  - B3 = {m, b}, B4 = {c, j}
  - B5 = {m, p, b}, B6 = {m, c, b, j}
  - B7 = {c, b, j}, B8 = {b, c}
- Frequent itemsets: {m}, {c}, {b}, {j}, {m, b}, {b, c}, {c, j}.
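
The frequent itemsets of this example can be checked by brute force. The sketch below is only an illustration: the names `BASKETS`, `support`, and `frequent_itemsets` are made up for it, B3 = {m, b} is taken from the confidence example later in these slides, and testing every subset of the items is feasible only because there are five items.

```python
from itertools import combinations

# The eight example baskets (m=milk, c=coke, p=pepsi, b=beer, j=juice).
BASKETS = [
    {"m", "c", "b"}, {"m", "p", "j"}, {"m", "b"}, {"c", "j"},
    {"m", "p", "b"}, {"m", "c", "b", "j"}, {"c", "b", "j"}, {"b", "c"},
]

def support(itemset, baskets):
    """Number of baskets that contain every item of `itemset`."""
    items = set(itemset)
    return sum(1 for basket in baskets if items <= basket)

def frequent_itemsets(baskets, s):
    """Brute force: test every non-empty subset of the item universe."""
    universe = sorted(set().union(*baskets))
    result = {}
    for k in range(1, len(universe) + 1):
        for candidate in combinations(universe, k):
            count = support(candidate, baskets)
            if count >= s:
                result[frozenset(candidate)] = count
    return result

freq = frequent_itemsets(BASKETS, s=3)
for itemset, count in sorted(freq.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

The rest of the deck is about avoiding exactly this exhaustive enumeration when the number of items is large.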

Applications – (1)
- Items = products; baskets = sets of products someone bought in one trip to the store.
- Example application: given that many people buy beer and diapers together:
  - Run a sale on diapers; raise the price of beer.
- Only useful if many buy diapers & beer.

Applications – (2)
- Baskets = sentences; items = documents containing those sentences.
- Items that appear together too often could represent plagiarism.
- Notice items do not have to be “in” baskets.

Applications – (3)
- Baskets = Web pages; items = words.
- Unusual words appearing together in a large number of documents, e.g., “Brad” and “Angelina,” may indicate an interesting relationship.

Scale of the Problem
- WalMart sells 100,000 items and can store billions of baskets.
- The Web has billions of words and many billions of pages.

Association Rules
- If-then rules about the contents of baskets.
- $\{i_1, i_2, \ldots, i_k\} \to j$ means: “if a basket contains all of $i_1, \ldots, i_k$ then it is likely to contain $j$.”
- Confidence of this association rule is the probability of $j$ given $i_1, \ldots, i_k$.

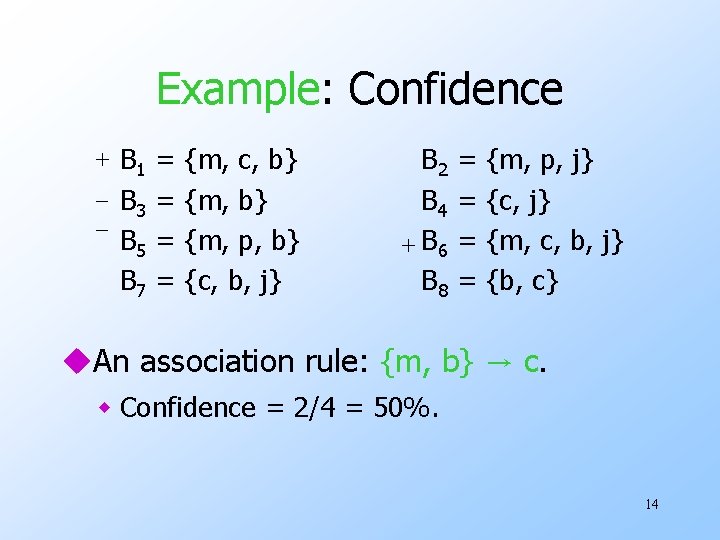

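Example: Confidence
- Baskets, where (+) marks a basket containing m, b and c, and (–) marks a basket containing m and b but not c:
  - B1 = {m, c, b} (+), B2 = {m, p, j}
  - B3 = {m, b} (–), B4 = {c, j}
  - B5 = {m, p, b} (–), B6 = {m, c, b, j} (+)
  - B7 = {c, b, j}, B8 = {b, c}
- An association rule: {m, b} → c.
  - Confidence = 2/4 = 50%.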
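
As a quick check of the 2/4 figure, confidence can be computed as a ratio of two supports. This is a minimal sketch; the function names are illustrative and not part of the slides.

```python
# The eight example baskets (m=milk, c=coke, p=pepsi, b=beer, j=juice).
BASKETS = [
    {"m", "c", "b"}, {"m", "p", "j"}, {"m", "b"}, {"c", "j"},
    {"m", "p", "b"}, {"m", "c", "b", "j"}, {"c", "b", "j"}, {"b", "c"},
]

def support(itemset, baskets):
    """Number of baskets that contain every item of `itemset`."""
    items = set(itemset)
    return sum(1 for basket in baskets if items <= basket)

def confidence(lhs, rhs_item, baskets):
    """Fraction of the baskets containing `lhs` that also contain `rhs_item`."""
    lhs = set(lhs)
    lhs_count = support(lhs, baskets)
    if lhs_count == 0:
        raise ValueError("left-hand side never occurs in the baskets")
    return support(lhs | {rhs_item}, baskets) / lhs_count

conf = confidence({"m", "b"}, "c", BASKETS)
print(conf)  # 0.5
```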

Finding Association Rules
- Question: “find all association rules with support ≥ s and confidence ≥ c.”
  - Note: the “support” of an association rule is the support of the set of items on the left.
- Hard part: finding the frequent itemsets.
  - Note: if $\{i_1, i_2, \ldots, i_k\} \to j$ has high support and confidence, then both $\{i_1, i_2, \ldots, i_k\}$ and $\{i_1, i_2, \ldots, i_k, j\}$ will be “frequent.”

Computation Model
- Typically, data is kept in flat files rather than in a database system.
  - Stored on disk.
  - Stored basket-by-basket.
  - Expand baskets into pairs, triples, etc. as you read baskets.
    - Use k nested loops to generate all sets of size k.

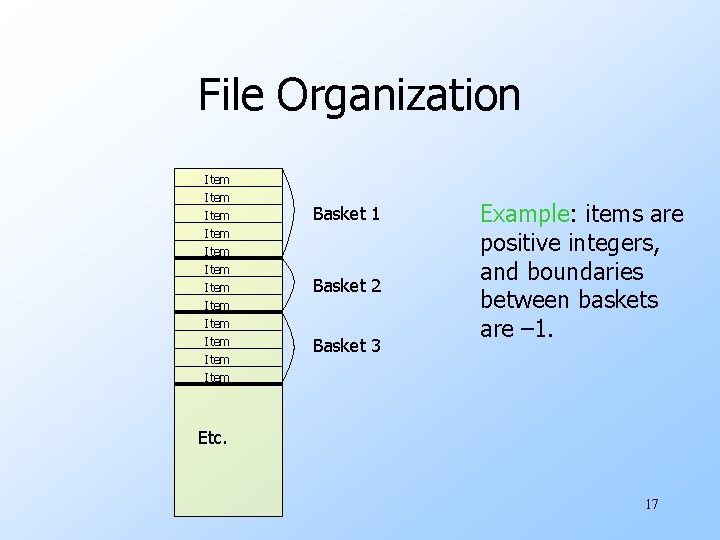

File Organization
- Figure: the file is a sequence of items, stored basket by basket (Basket 1, Basket 2, Basket 3, etc.).
- Example: items are positive integers, and boundaries between baskets are -1.
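
A minimal sketch of reading such a file and expanding each basket into pairs. It assumes the integers arrive as whitespace-separated text tokens, which the slides do not specify; `read_baskets` and `pairs_in` are illustrative names.

```python
from itertools import combinations

def read_baskets(tokens):
    """Yield one basket (a list of ints) per run of item numbers ending in -1."""
    basket = []
    for token in tokens:
        value = int(token)
        if value == -1:          # basket boundary
            yield basket
            basket = []
        else:
            basket.append(value)
    if basket:                   # tolerate a missing final -1
        yield basket

def pairs_in(basket):
    """All unordered pairs of distinct items in one basket, smaller item first."""
    return combinations(sorted(set(basket)), 2)

# Hypothetical file contents, already split into tokens.
data = "3 7 12 -1 7 9 -1 3 12 -1".split()
baskets = list(read_baskets(data))
for b in baskets:
    print(b, list(pairs_in(b)))
```

`itertools.combinations(items, k)` plays the role of the k nested loops mentioned under the computation model.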

Computation Model – (2)
- The true cost of mining disk-resident data is usually the number of disk I/O’s.
- In practice, association-rule algorithms read the data in passes, with all baskets read in turn.
- Thus, we measure the cost by the number of passes an algorithm takes.

Main-Memory Bottleneck
- For many frequent-itemset algorithms, main memory is the critical resource.
  - As we read baskets, we need to count something, e.g., occurrences of pairs.
  - The number of different things we can count is limited by main memory.
  - Swapping counts in/out is a disaster (why?).

Finding Frequent Pairs
- The hardest problem often turns out to be finding the frequent pairs.
  - Why? Often frequent pairs are common, frequent triples are rare.
    - Why? Probability of being frequent drops exponentially with size; number of sets grows more slowly with size.
- We’ll concentrate on pairs, then extend to larger sets.

Naïve Algorithm
- Read file once, counting in main memory the occurrences of each pair.
  - From each basket of n items, generate its $n(n-1)/2$ pairs by two nested loops.
- Fails if $(\#\text{items})^2$ exceeds main memory.
  - Remember: #items can be 100K (WalMart) or 10B (Web pages).

Example: Counting Pairs
- Suppose $10^5$ items.
- Suppose counts are 4-byte integers.
- Number of pairs of items: $10^5(10^5 - 1)/2 \approx 5 \times 10^9$.
- Therefore, $2 \times 10^{10}$ bytes (20 gigabytes) of main memory needed.

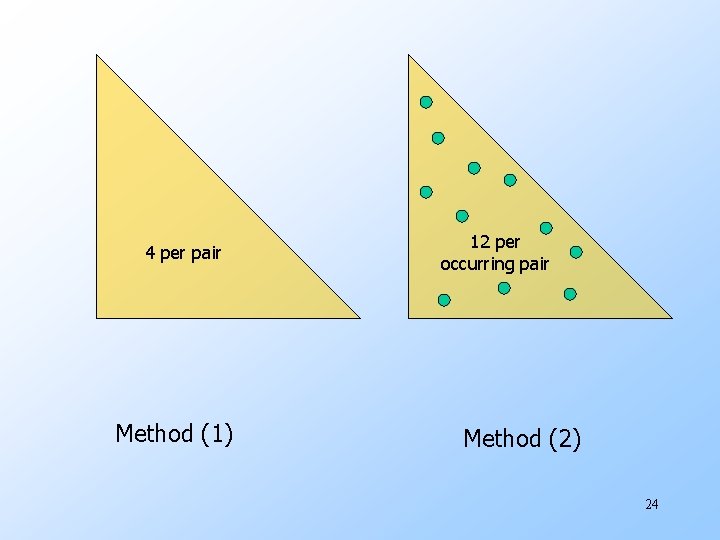

Details of Main-Memory Counting
- Two approaches:
  1. Count all pairs, using a triangular matrix.
  2. Keep a table of triples [i, j, c] = “the count of the pair of items {i, j} is c.”
- (1) requires only 4 bytes/pair.
  - Note: always assume integers are 4 bytes.
- (2) requires 12 bytes, but only for those pairs with count > 0.

Figure: Method (1) uses 4 bytes per pair; Method (2) uses 12 bytes per occurring pair.

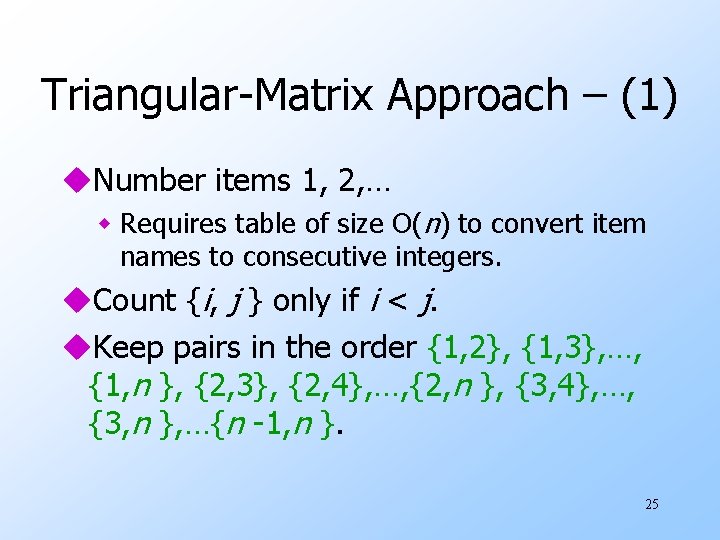

Triangular-Matrix Approach – (1)
- Number items 1, 2, …
  - Requires table of size O(n) to convert item names to consecutive integers.
- Count {i, j} only if i < j.
- Keep pairs in the order {1, 2}, {1, 3}, …, {1, n}, {2, 3}, {2, 4}, …, {2, n}, {3, 4}, …, {3, n}, …, {n-1, n}.

Triangular-Matrix Approach – (2)
- Find pair {i, j} at the position $(i - 1)(n - i/2) + j - i$.
- Total number of pairs $n(n - 1)/2$; total bytes about $2n^2$.
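
The position formula can be checked against the stated pair order. The sketch below uses 1-based positions as on the slide; `pair_index` is an illustrative name, and the formula is rewritten as $(i-1)(2n-i)/2 + j - i$ so that it stays in integer arithmetic.

```python
def pair_index(i, j, n):
    """1-based position of pair {i, j}, with 1 <= i < j <= n, in the order
    {1,2}, {1,3}, ..., {1,n}, {2,3}, ..., {n-1,n}."""
    if not 1 <= i < j <= n:
        raise ValueError("need 1 <= i < j <= n")
    # (i - 1)(n - i/2) + j - i; the product (i - 1)(2n - i) is always even.
    return (i - 1) * (2 * n - i) // 2 + j - i

n = 5
order = [(i, j) for i in range(1, n) for j in range(i + 1, n + 1)]
positions = [pair_index(i, j, n) for i, j in order]
print(positions)  # consecutive positions 1 .. n(n-1)/2
```

A flat array of $n(n-1)/2$ four-byte counters indexed this way (minus one for 0-based arrays) is the triangular matrix.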

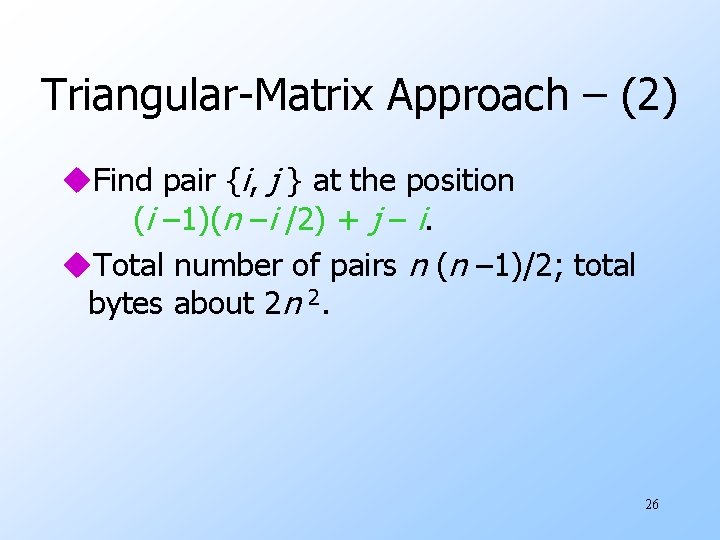

Details of Approach #2
- Total bytes used is about 12p, where p is the number of pairs that actually occur.
  - Beats triangular matrix if at most 1/3 of possible pairs actually occur.
- May require extra space for retrieval structure, e.g., a hash table.
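
The 1/3 break-even point follows from comparing 4 bytes for every possible pair with 12 bytes for every occurring pair. A small sketch of that arithmetic, ignoring the extra space for a hash table (function names are illustrative):

```python
def triangular_bytes(n, bytes_per_count=4):
    """Memory for one counter per possible pair of n items."""
    return bytes_per_count * n * (n - 1) // 2

def triples_bytes(p, bytes_per_triple=12):
    """Memory for one [i, j, c] triple per pair that actually occurs."""
    return bytes_per_triple * p

def triples_win(n, p):
    """True if the triples table is strictly smaller than the triangular matrix."""
    return triples_bytes(p) < triangular_bytes(n)

n = 100_000
possible = n * (n - 1) // 2
print(triangular_bytes(n))            # about 2 * 10**10 bytes
print(triples_win(n, possible // 4))  # a quarter of the pairs occur
print(triples_win(n, possible // 2))  # half of the pairs occur
```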

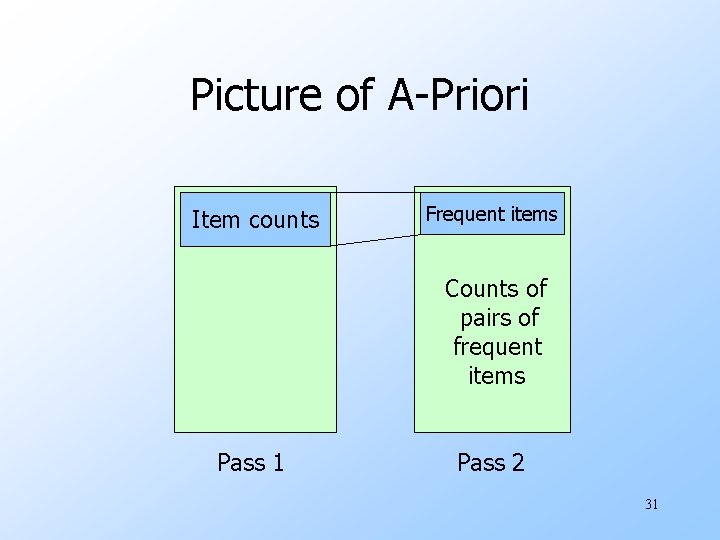

A-Priori Algorithm for Pairs
- A two-pass approach called a-priori limits the need for main memory.
- Key idea: monotonicity: if a set of items appears at least s times, so does every subset.
  - Contrapositive for pairs: if item i does not appear in s baskets, then no pair including i can appear in s baskets.

A-Priori Algorithm – (2)
- Pass 1: Read baskets and count in main memory the occurrences of each item.
  - Requires only memory proportional to #items.
- Items that appear at least s times are the frequent items.

A-Priori Algorithm – (3)
- Pass 2: Read baskets again and count in main memory only those pairs both of whose items were found in Pass 1 to be frequent.
  - Requires memory proportional to the square of the number of frequent items only (for counts), plus a list of the frequent items (so you know what must be counted).
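
The two passes can be sketched as follows. This is an in-memory illustration only: `baskets` is a Python list that is iterated twice, standing in for two reads of the file, and a `Counter` keyed by pairs stands in for the triangular matrix or triples table. The name `apriori_pairs` is made up for the example.

```python
from collections import Counter
from itertools import combinations

def apriori_pairs(baskets, s):
    """Return ({frequent item: count}, {frequent pair: count}) for threshold s."""
    # Pass 1: count individual items.
    item_counts = Counter()
    for basket in baskets:
        item_counts.update(set(basket))
    frequent_items = {i for i, c in item_counts.items() if c >= s}

    # Pass 2: count only pairs whose two items are both frequent.
    pair_counts = Counter()
    for basket in baskets:
        kept = sorted(set(basket) & frequent_items)
        pair_counts.update(combinations(kept, 2))

    items = {i: item_counts[i] for i in frequent_items}
    pairs = {p: c for p, c in pair_counts.items() if c >= s}
    return items, pairs

# The eight baskets of the earlier frequent-itemset example.
BASKETS = [
    {"m", "c", "b"}, {"m", "p", "j"}, {"m", "b"}, {"c", "j"},
    {"m", "p", "b"}, {"m", "c", "b", "j"}, {"c", "b", "j"}, {"b", "c"},
]
items, pairs = apriori_pairs(BASKETS, s=3)
print(items)
print(pairs)
```

Because pepsi is not frequent, no pair containing it is ever counted in the second pass.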

Picture of A-Priori
- Figure: in Pass 1, main memory holds the item counts; in Pass 2, it holds the frequent items and the counts of pairs of frequent items.

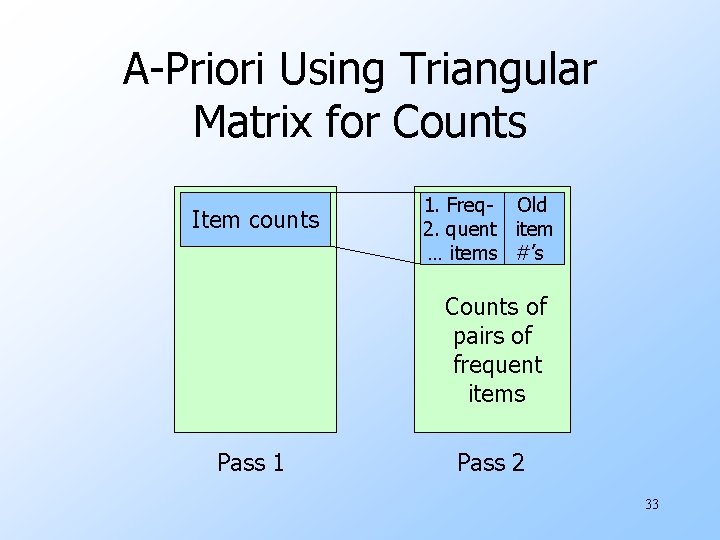

Detail for A-Priori
- You can use the triangular matrix method with n = number of frequent items.
  - May save space compared with storing triples.
- Trick: number frequent items 1, 2, … and keep a table relating new numbers to original item numbers.

A-Priori Using Triangular Matrix for Counts
- Figure: in Pass 1, main memory holds the item counts; in Pass 2, it holds the frequent items (renumbered 1, 2, …), the table of old item numbers, and the counts of pairs of frequent items.

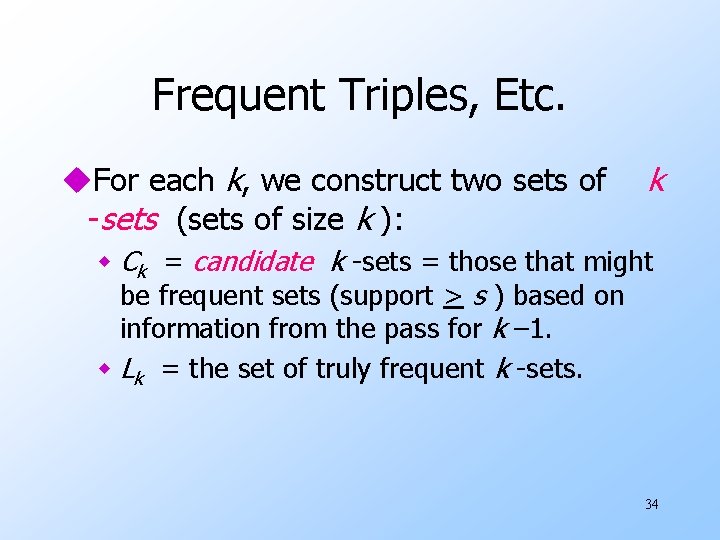

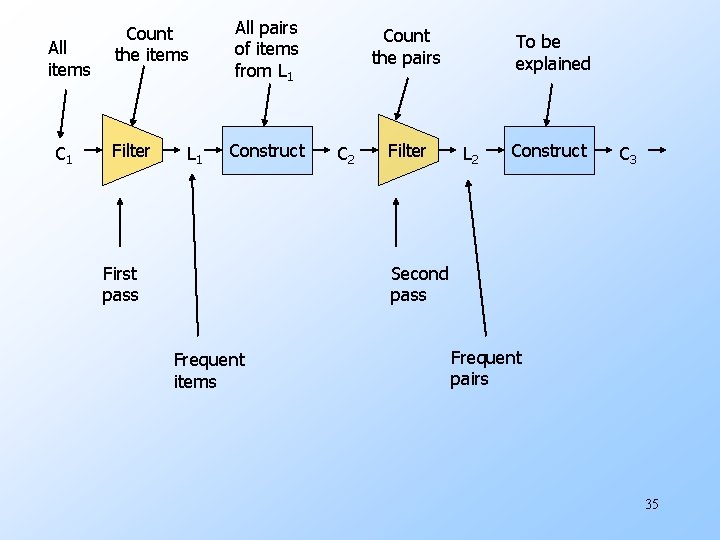

Frequent Triples, Etc.
- For each k, we construct two sets of k-sets (sets of size k):
  - $C_k$ = candidate k-sets = those that might be frequent sets (support ≥ s) based on information from the pass for k-1.
  - $L_k$ = the set of truly frequent k-sets.

Figure: the A-Priori pipeline. All items ($C_1$) → count the items → filter → $L_1$ (frequent items) → construct $C_2$ (all pairs of items from $L_1$) → count the pairs → filter → $L_2$ (frequent pairs) → construct $C_3$ (to be explained). Counting the items is the first pass; counting the pairs is the second pass.

A-Priori for All Frequent Itemsets
- One pass for each k.
- Needs room in main memory to count each candidate k-set.
- For typical market-basket data and reasonable support (e.g., 1%), k = 2 requires the most memory.

Frequent Itemsets – (2)
- $C_1$ = all items.
- In general, $L_k$ = members of $C_k$ with support ≥ s.
- $C_{k+1}$ = (k+1)-sets, each of whose k-subsets is in $L_k$.

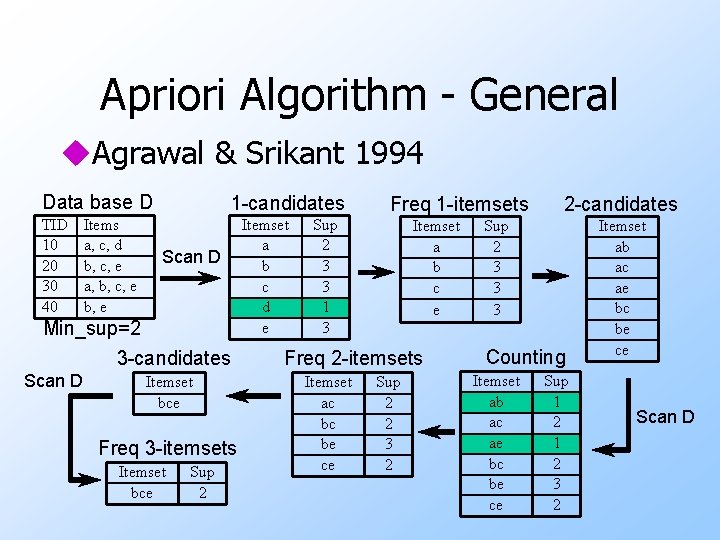

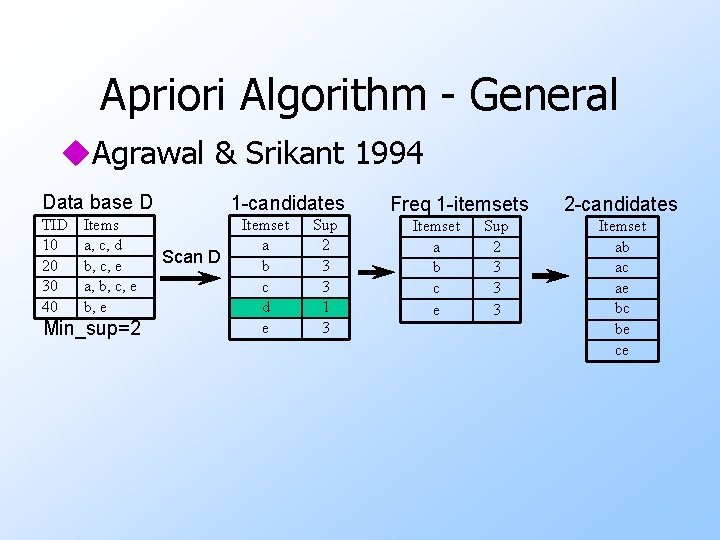

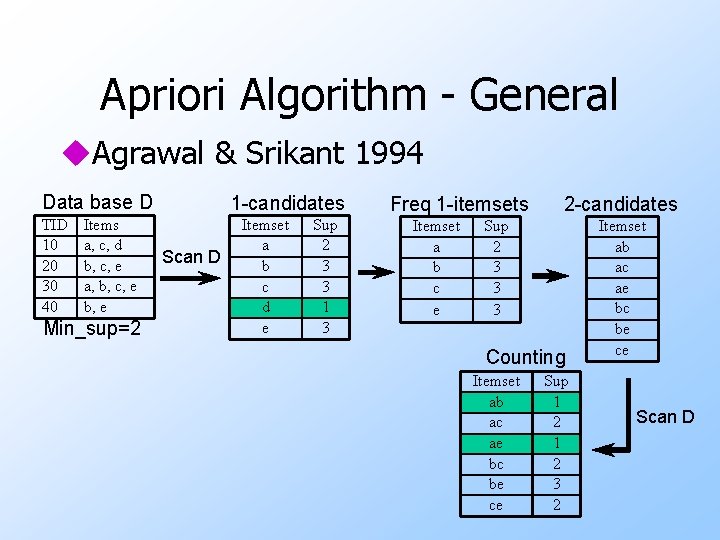

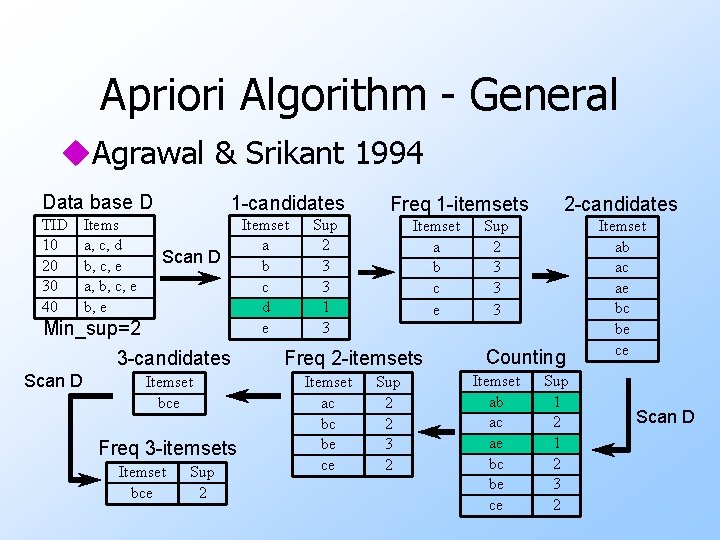

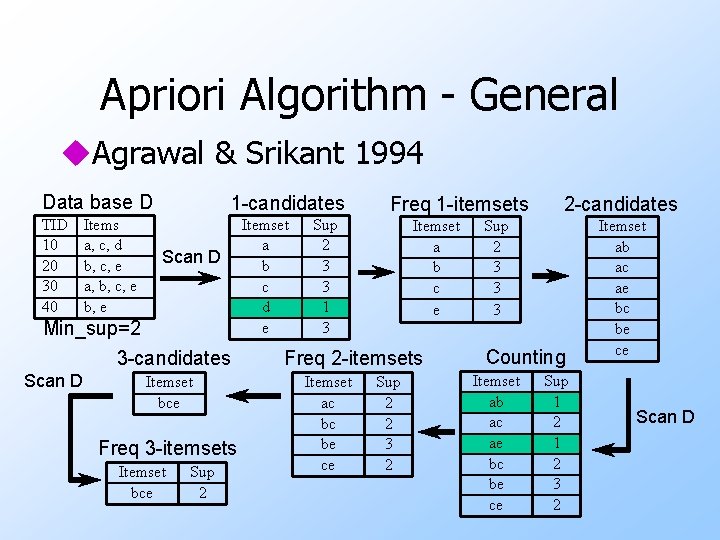


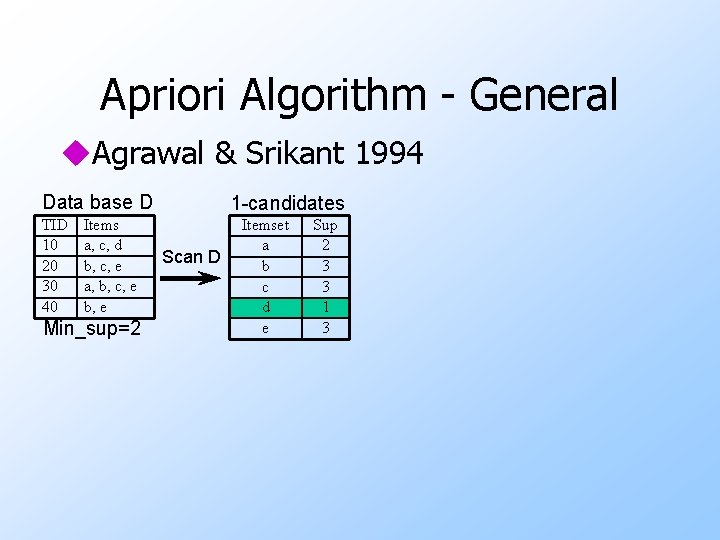


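Apriori Algorithm - General
- Agrawal & Srikant 1994
- Min_sup = 2

Database D:

| TID | Items |
| --- | --- |
| 10 | a, c, d |
| 20 | b, c, e |
| 30 | a, b, c, e |
| 40 | b, e |

Scan D to count the 1-candidates, then keep the frequent 1-itemsets:

| Itemset | Sup | Frequent? |
| --- | --- | --- |
| a | 2 | yes |
| b | 3 | yes |
| c | 3 | yes |
| d | 1 | no |
| e | 3 | yes |

2-candidates: ab, ac, ae, bc, be, ce. Scan D to count them, then keep the frequent 2-itemsets:

| Itemset | Sup | Frequent? |
| --- | --- | --- |
| ab | 1 | no |
| ac | 2 | yes |
| ae | 1 | no |
| bc | 2 | yes |
| be | 3 | yes |
| ce | 2 | yes |

3-candidates: bce. Scan D to count it:

| Itemset | Sup | Frequent? |
| --- | --- | --- |
| bce | 2 | yes |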


Important Details of Apriori
- How to generate candidates?
  - Step 1: self-joining $L_k$
  - Step 2: pruning
- How to count supports of candidates?

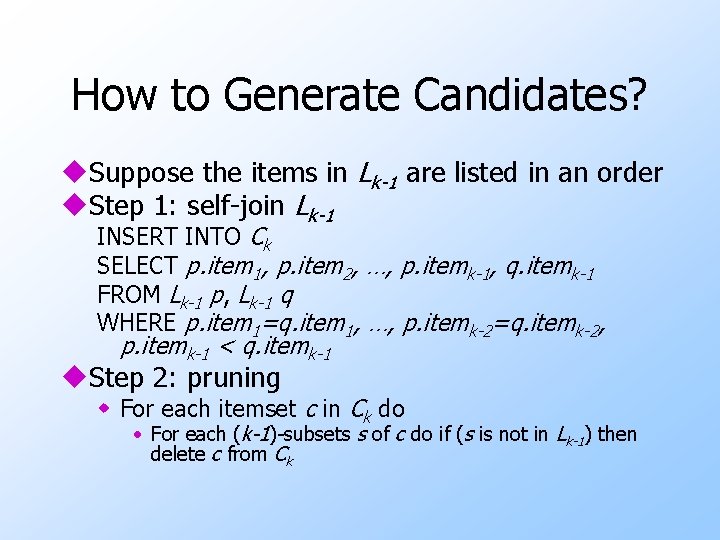

How to Generate Candidates?
- Suppose the items in $L_{k-1}$ are listed in an order.
- Step 1: self-join $L_{k-1}$
  - INSERT INTO $C_k$
  - SELECT p.item$_1$, p.item$_2$, …, p.item$_{k-1}$, q.item$_{k-1}$
  - FROM $L_{k-1}$ p, $L_{k-1}$ q
  - WHERE p.item$_1$ = q.item$_1$ AND … AND p.item$_{k-2}$ = q.item$_{k-2}$ AND p.item$_{k-1}$ < q.item$_{k-1}$
- Step 2: pruning
  - For each itemset c in $C_k$ do
    - For each (k-1)-subset s of c do: if (s is not in $L_{k-1}$) then delete c from $C_k$

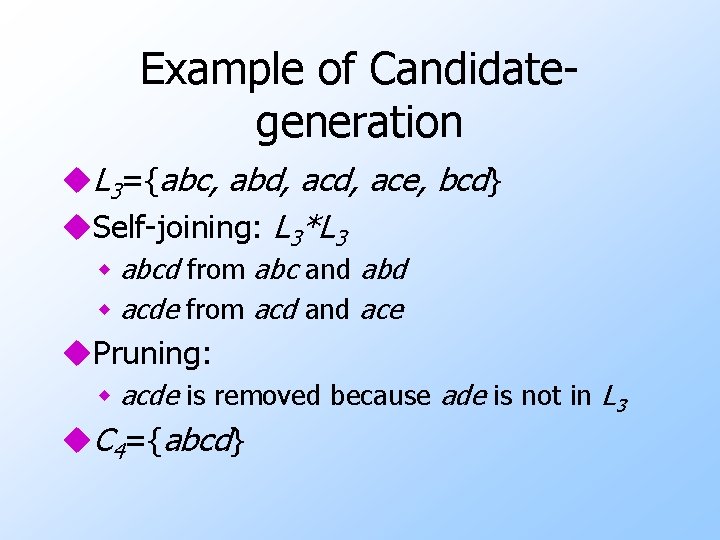

Example of Candidate Generation
- $L_3$ = {abc, abd, acd, ace, bcd}
- Self-joining: $L_3 * L_3$
  - abcd from abc and abd
  - acde from acd and ace
- Pruning:
  - acde is removed because ade is not in $L_3$
- $C_4$ = {abcd}
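
The join and prune steps can be sketched in a few lines. This is an illustration rather than the authors' implementation: itemsets are represented as sorted tuples, `generate_candidates` is a made-up name, and $L_3$ is taken to include acd, which the join "acde from acd and ace" presupposes.

```python
from itertools import combinations

def generate_candidates(prev_frequent):
    """Build C_k from L_{k-1}; itemsets are sorted tuples of items."""
    prev = sorted(set(prev_frequent))
    prev_set = set(prev)
    candidates = []
    # Step 1, self-join: same first k-2 items, last items in increasing order.
    for idx, p in enumerate(prev):
        for q in prev[idx + 1:]:
            if p[:-1] == q[:-1] and p[-1] < q[-1]:
                candidates.append(p + (q[-1],))
    # Step 2, prune: every (k-1)-subset of a candidate must be in L_{k-1}.
    return [
        c for c in candidates
        if all(sub in prev_set for sub in combinations(c, len(c) - 1))
    ]

L3 = [tuple(s) for s in ("abc", "abd", "acd", "ace", "bcd")]
C4 = generate_candidates(L3)
print(C4)  # [('a', 'b', 'c', 'd')]
```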

How to Count Supports of Candidates?
- Why is counting supports of candidates a problem?
  - The total number of candidates can be very large.
  - One transaction may contain many candidates.
- Method:
  - Candidate itemsets are stored in a hash-tree.
  - Leaf node of hash-tree contains a list of itemsets and counts.
  - Interior node contains a hash table.
  - Subset function: finds all the candidates contained in a transaction.

Apriori: Candidate Generation-and-Test
- Any subset of a frequent itemset must also be frequent (an anti-monotone property).
  - A transaction containing {beer, diaper, nuts} also contains {beer, diaper}.
  - {beer, diaper, nuts} is frequent → {beer, diaper} must also be frequent.
- No superset of any infrequent itemset should be generated or tested.
  - Many item combinations can be pruned.

The Apriori Algorithm
- $C_k$: candidate itemsets of size k
- $L_k$: frequent itemsets of size k
- $L_1$ = {frequent items};
- for (k = 1; $L_k \ne \emptyset$; k++) do
  - $C_{k+1}$ = candidates generated from $L_k$;
  - for each transaction t in database do
    - increment the count of all candidates in $C_{k+1}$ that are contained in t
  - $L_{k+1}$ = candidates in $C_{k+1}$ with min_support
- return $\bigcup_k L_k$;
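
A compact, runnable sketch of this level-wise loop, applied to the four-transaction database D (TIDs 10 to 40) used in the worked example. Two simplifications are assumptions of the sketch: candidates are formed by taking unions of two frequent k-sets and then pruning (rather than the prefix-based self-join), and supports are counted by a plain loop over the candidates instead of a hash-tree.

```python
from itertools import combinations

def apriori(transactions, min_support):
    """Return {frozenset itemset: support count} for all frequent itemsets."""
    transactions = [frozenset(t) for t in transactions]

    def count(candidates):
        """One scan of the database; keep candidates meeting min_support."""
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        return {c: n for c, n in counts.items() if n >= min_support}

    items = {item for t in transactions for item in t}
    level = count({frozenset([item]) for item in items})    # L_1
    frequent = dict(level)
    k = 1
    while level:
        # C_{k+1}: unions of two frequent k-sets that have exactly k+1 items ...
        candidates = {a | b for a in level for b in level if len(a | b) == k + 1}
        # ... all of whose k-subsets are frequent.
        candidates = {
            c for c in candidates
            if all(frozenset(sub) in level for sub in combinations(c, k))
        }
        level = count(candidates)                           # L_{k+1}
        frequent.update(level)
        k += 1
    return frequent

D = [{"a", "c", "d"}, {"b", "c", "e"}, {"a", "b", "c", "e"}, {"b", "e"}]
result = apriori(D, min_support=2)
for itemset, sup in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print("".join(sorted(itemset)), sup)
```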

Challenges of FPM
- Challenges
  - Multiple scans of transaction database
  - Huge number of candidates
  - Tedious workload of support counting for candidates
- Improving Apriori: general ideas
  - Reduce number of transaction database scans
  - Shrink number of candidates
  - Facilitate support counting of candidates

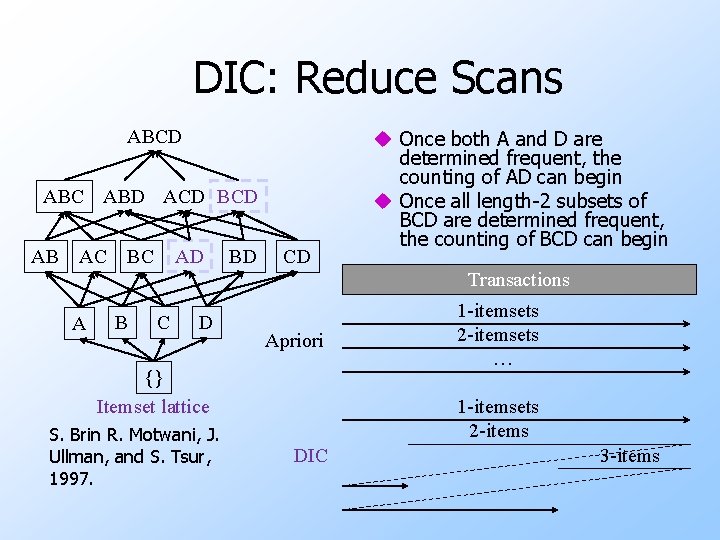

DIC: Reduce Scans
- Once both A and D are determined frequent, the counting of AD can begin.
- Once all length-2 subsets of BCD are determined frequent, the counting of BCD can begin.
- Figure: the itemset lattice over {A, B, C, D} (from {} through A, B, C, D; AB, AC, BC, AD, BD, CD; ABC, ABD, ACD, BCD; up to ABCD), and a timeline over the transactions comparing Apriori (1-itemsets, then 2-itemsets, …) with DIC (1-itemsets, 2-itemsets, 3-itemsets).
- S. Brin, R. Motwani, J. Ullman, and S. Tsur, 1997.

Partition: Scan Database Only Twice
- Partition the database into n partitions.
- Any itemset X that is frequent in the whole database must be frequent in at least one partition.
  - Scan 1: partition database and find local frequent patterns.
  - Scan 2: consolidate global frequent patterns.
- A. Savasere, E. Omiecinski, and S. Navathe, 1995
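
A rough sketch of the two scans, under stated assumptions: partitions are contiguous slices of an in-memory list, the local miner is a brute-force stand-in for running Apriori on one partition, and the local threshold is the global threshold s scaled to the partition size (checked in integer arithmetic). All names are illustrative.

```python
from itertools import combinations
from math import ceil

def local_frequent(part, s, total):
    """Itemsets whose share of `part` is at least s/total (brute-force miner)."""
    counts = {}
    for t in part:
        items = sorted(t)
        for k in range(1, len(items) + 1):
            for combo in combinations(items, k):
                counts[combo] = counts.get(combo, 0) + 1
    # count / len(part) >= s / total, written without division.
    return {c for c, n in counts.items() if n * total >= s * len(part)}

def partition_mine(transactions, s, n_partitions):
    """Two-scan mining; s is the global support threshold (a basket count)."""
    total = len(transactions)
    size = ceil(total / n_partitions)
    parts = [transactions[i:i + size] for i in range(0, total, size)]

    # Scan 1: the union of the locally frequent itemsets is the candidate set.
    candidates = set()
    for part in parts:
        candidates |= local_frequent(part, s, total)

    # Scan 2: count every candidate over the whole database.
    result = {}
    for c in candidates:
        n = sum(1 for t in transactions if set(c) <= set(t))
        if n >= s:
            result[c] = n
    return result

D = [{"a", "c", "d"}, {"b", "c", "e"}, {"a", "b", "c", "e"}, {"b", "e"}]
result = partition_mine(D, s=2, n_partitions=2)
print(sorted(result.items(), key=lambda kv: (len(kv[0]), kv[0])))
```

No globally frequent itemset is missed: if an itemset fell below the scaled threshold in every partition, summing over the partitions would put it below s overall.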

Sampling for Frequent Patterns
- Select a sample of the original database; mine frequent patterns within the sample using Apriori.
- Scan the database once to verify the frequent itemsets found in the sample; only the borders of the closure of frequent patterns are checked.
  - Example: check abcd instead of ab, ac, …, etc.
- Scan the database again to find missed frequent patterns.
- H. Toivonen, 1996

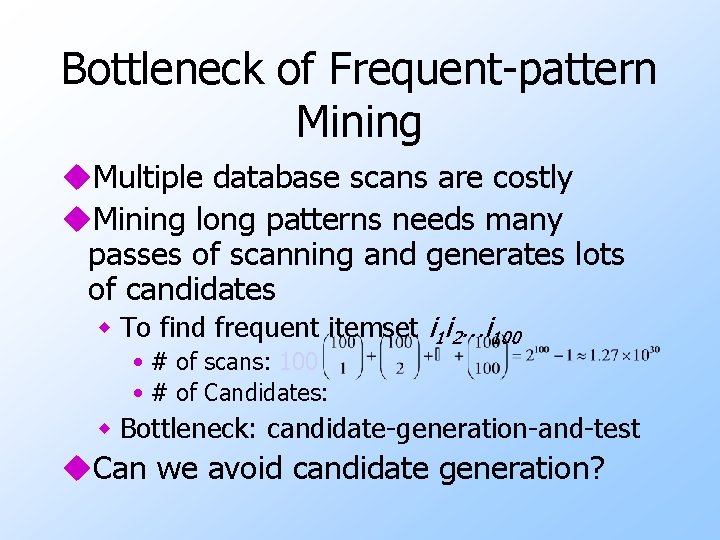

Bottleneck of Frequent-pattern Mining
- Multiple database scans are costly.
- Mining long patterns needs many passes of scanning and generates lots of candidates.
  - To find frequent itemset $i_1 i_2 \ldots i_{100}$:
    - # of scans: 100
    - # of candidates: $\binom{100}{1} + \binom{100}{2} + \cdots + \binom{100}{100} = 2^{100} - 1 \approx 1.27 \times 10^{30}$
  - Bottleneck: candidate-generation-and-test
- Can we avoid candidate generation?
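
Every non-empty subset of a frequent 100-itemset is itself frequent, so a level-wise search has to generate and count all of them. A short check of how many that is (the function name is illustrative):

```python
from math import comb

def subsets_to_test(k):
    """Number of non-empty subsets of a k-itemset, summed level by level."""
    return sum(comb(k, size) for size in range(1, k + 1))

total = subsets_to_test(100)
print(total)            # equals 2**100 - 1
print(f"{total:.3e}")   # about 1.268e+30
```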

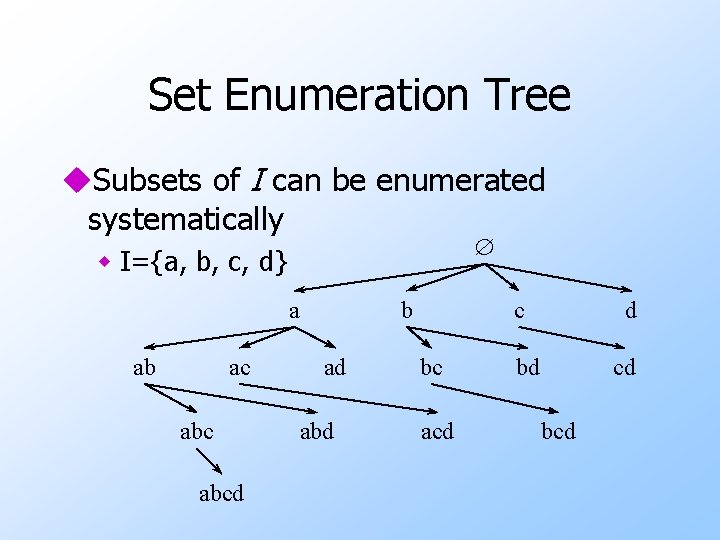

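Set Enumeration Tree
- Subsets of I can be enumerated systematically.
  - I = {a, b, c, d}
- Figure (set-enumeration tree; each node is extended only with items that come later in the order):
  - a: ab (abc (abcd), abd), ac (acd), ad
  - b: bc (bcd), bd
  - c: cd
  - d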

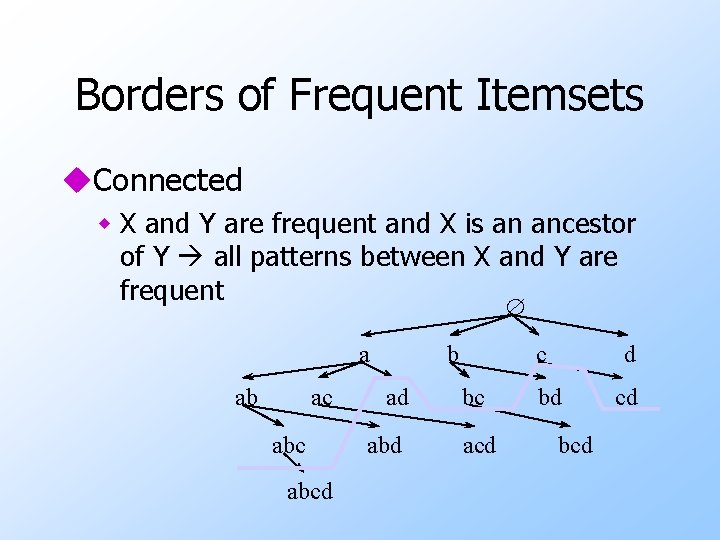

Borders of Frequent Itemsets
- Connected
  - If X and Y are frequent and X is an ancestor of Y, then all patterns between X and Y are frequent.
- Figure: the set-enumeration tree over {a, b, c, d}.

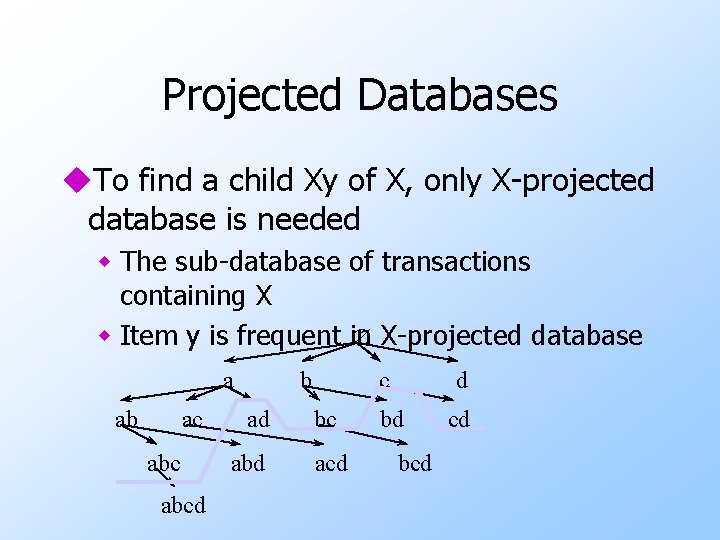

Projected Databases
- To find a child Xy of X, only the X-projected database is needed.
  - The sub-database of transactions containing X.
  - Xy is frequent if item y is frequent in the X-projected database.
- Figure: the set-enumeration tree over {a, b, c, d}.
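
A minimal sketch of projection, reusing the four-transaction database from the Apriori example. The function names are illustrative, and for simplicity every item outside X is considered as an extension, whereas the set-enumeration tree would only try items that come after those of X in the order.

```python
def project(transactions, x):
    """X-projected database: the transactions that contain every item of x."""
    x = set(x)
    return [set(t) for t in transactions if x <= set(t)]

def frequent_extensions(transactions, x, min_support):
    """Items y outside x such that x plus y is frequent, counted in the projection only."""
    x = set(x)
    counts = {}
    for t in project(transactions, x):
        for y in t - x:
            counts[y] = counts.get(y, 0) + 1
    return {y: n for y, n in counts.items() if n >= min_support}

D = [{"a", "c", "d"}, {"b", "c", "e"}, {"a", "b", "c", "e"}, {"b", "e"}]
ext = frequent_extensions(D, {"b"}, min_support=2)
print(ext)  # items that extend {b} to a frequent 2-itemset
```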

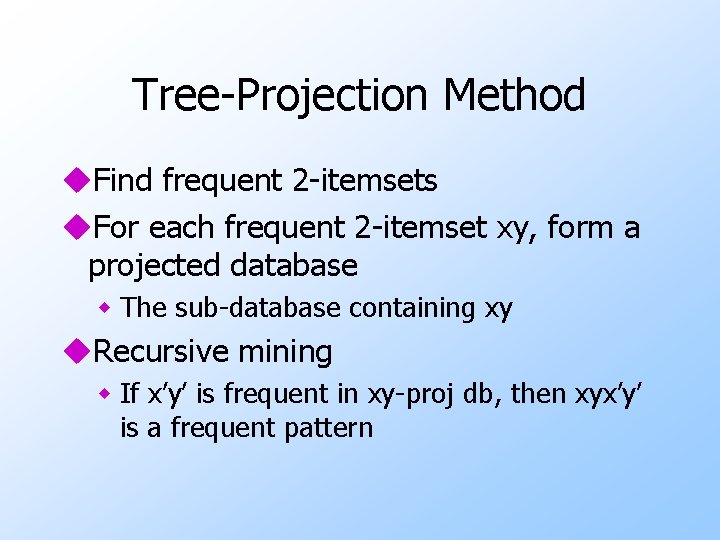

Tree-Projection Method
- Find frequent 2-itemsets.
- For each frequent 2-itemset xy, form a projected database.
  - The sub-database containing xy.
- Recursive mining
  - If x’y’ is frequent in the xy-projected database, then xyx’y’ is a frequent pattern.

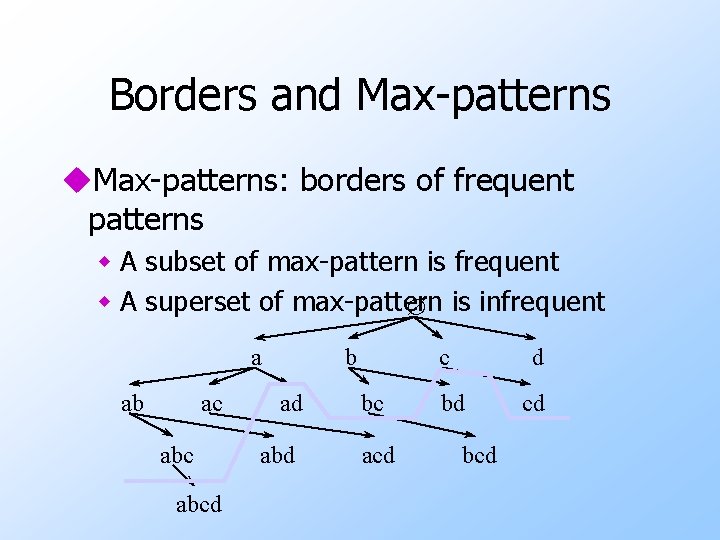

Borders and Max-patterns
- Max-patterns: borders of frequent patterns
  - Every subset of a max-pattern is frequent.
  - Every proper superset of a max-pattern is infrequent.
- Figure: the set-enumeration tree over {a, b, c, d}.

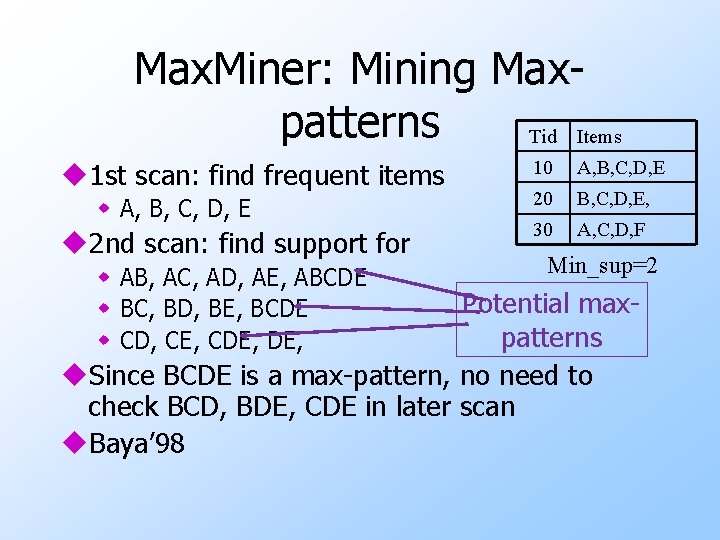

MaxMiner: Mining Max-patterns
- Min_sup = 2

| Tid | Items |
| --- | --- |
| 10 | A, B, C, D, E |
| 20 | B, C, D, E |
| 30 | A, C, D, F |

- 1st scan: find frequent items
  - A, B, C, D, E
- 2nd scan: find support for
  - AB, AC, AD, AE, ABCDE
  - BC, BD, BE, BCDE
  - CD, CE, CDE
  - Potential max-patterns: ABCDE, BCDE, CDE
- Since BCDE is a max-pattern, no need to check BCD, BDE, CDE in later scan.
- Bayardo ’98

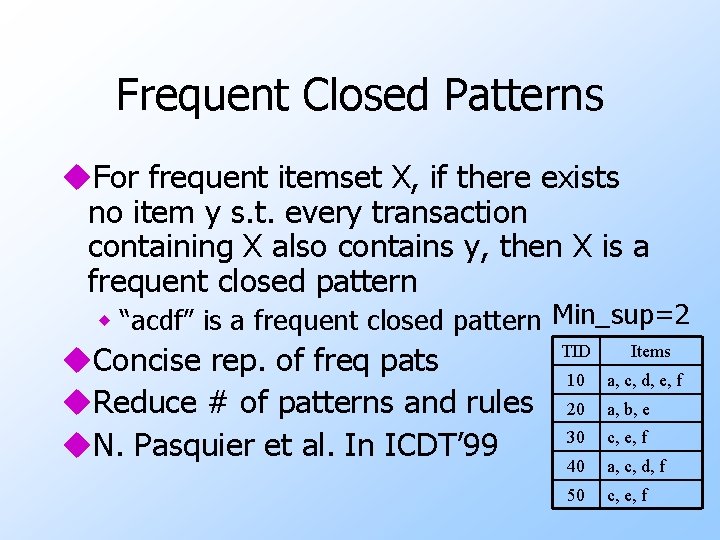

Frequent Closed Patterns
- For frequent itemset X, if there exists no item y (outside X) s.t. every transaction containing X also contains y, then X is a frequent closed pattern.
  - “acdf” is a frequent closed pattern (Min_sup = 2).
- Concise representation of frequent patterns.
- Reduces the number of patterns and rules.
- N. Pasquier et al., in ICDT ’99

| TID | Items |
| --- | --- |
| 10 | a, c, d, e, f |
| 20 | a, b, e |
| 30 | c, e, f |
| 40 | a, c, d, f |
| 50 | c, e, f |
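
The definition can be tested directly: an itemset is closed when the intersection of all transactions containing it is the itemset itself. A small sketch on the five-transaction table above (function names are illustrative):

```python
DB = {
    10: set("acdef"),
    20: set("abe"),
    30: set("cef"),
    40: set("acdf"),
    50: set("cef"),
}

def cover(itemset, db):
    """Transactions (as item sets) that contain every item of `itemset`."""
    items = set(itemset)
    return [t for t in db.values() if items <= t]

def is_frequent_closed(itemset, db, min_support):
    """True if `itemset` is frequent and no extra item occurs in all its transactions."""
    covering = cover(itemset, db)
    if not covering or len(covering) < min_support:
        return False
    common = set.intersection(*covering)   # the closure of the itemset
    return common == set(itemset)

print(is_frequent_closed("acdf", DB, 2))  # True
print(is_frequent_closed("d", DB, 2))     # False: a, c, f always accompany d
```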

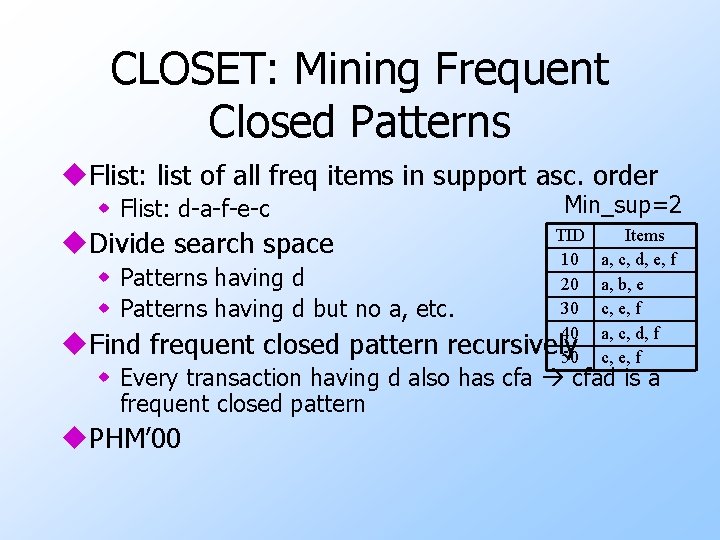

CLOSET: Mining Frequent Closed Patterns
- Min_sup = 2

| TID | Items |
| --- | --- |
| 10 | a, c, d, e, f |
| 20 | a, b, e |
| 30 | c, e, f |
| 40 | a, c, d, f |
| 50 | c, e, f |

- Flist: list of all frequent items in support-ascending order
  - Flist: d-a-f-e-c
- Divide search space
  - Patterns having d
  - Patterns having a but no d, etc.
- Find frequent closed patterns recursively
  - Every transaction having d also has cfa → cfad is a frequent closed pattern
- PHM’00

Closed and Max-patterns
- Closed pattern mining algorithms can be adapted to mine max-patterns.
  - A max-pattern must be closed.
- Depth-first search methods have advantages over breadth-first search ones.
