Association Analysis: Apriori © Tan, Steinbach, Kumar

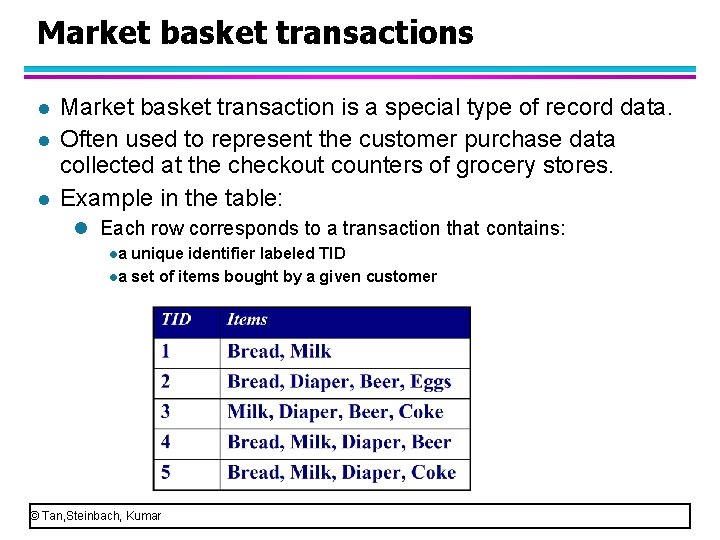

Market basket transactions
- A market basket transaction is a special type of record data, often used to represent customer purchase data collected at the checkout counters of grocery stores.
- Example in the table: each row corresponds to a transaction that contains:
  - a unique identifier, labeled TID
  - the set of items bought by a given customer

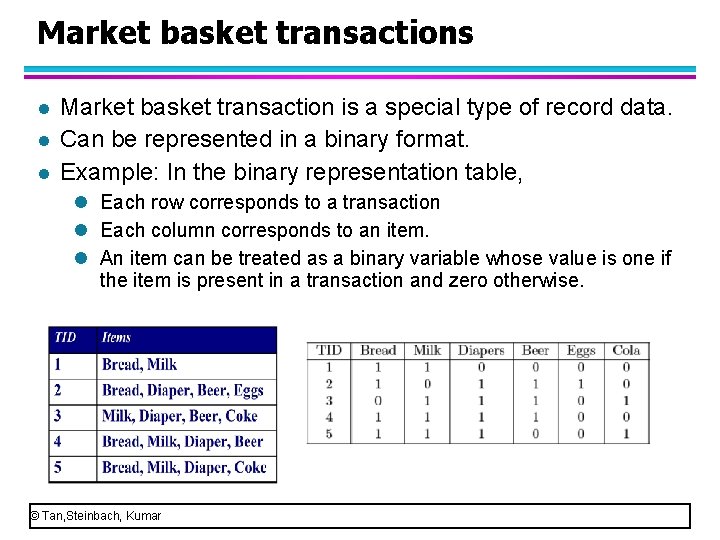

Market basket transactions
- Market basket transactions can also be represented in a binary format.
- In the binary representation table:
  - Each row corresponds to a transaction.
  - Each column corresponds to an item.
  - An item is treated as a binary variable whose value is one if the item is present in a transaction and zero otherwise.
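The binary representation can be sketched in a few lines of Python. The five baskets below are an assumption: the table itself is only available as an image, so they are reconstructed from statements made elsewhere in these slides (for example t1 = {Bread, Milk}). The variable names are illustrative only.

```python
# Assumed example baskets (reconstructed; the slide table is an image).
transactions = {
    1: {"Bread", "Milk"},
    2: {"Bread", "Diaper", "Beer", "Eggs"},
    3: {"Milk", "Diaper", "Beer", "Coke"},
    4: {"Bread", "Milk", "Diaper", "Beer"},
    5: {"Bread", "Milk", "Diaper", "Coke"},
}

# One column per item, in alphabetical order.
items = sorted(set().union(*transactions.values()))

# One row per transaction: 1 if the item is present, 0 otherwise.
binary = {
    tid: [1 if item in basket else 0 for item in items]
    for tid, basket in transactions.items()
}

print("TID", items)
for tid, row in binary.items():
    print(tid, row)
```

Each row of `binary` lines up with the column order in `items`, which is what makes the 0/1 vectors comparable across transactions.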

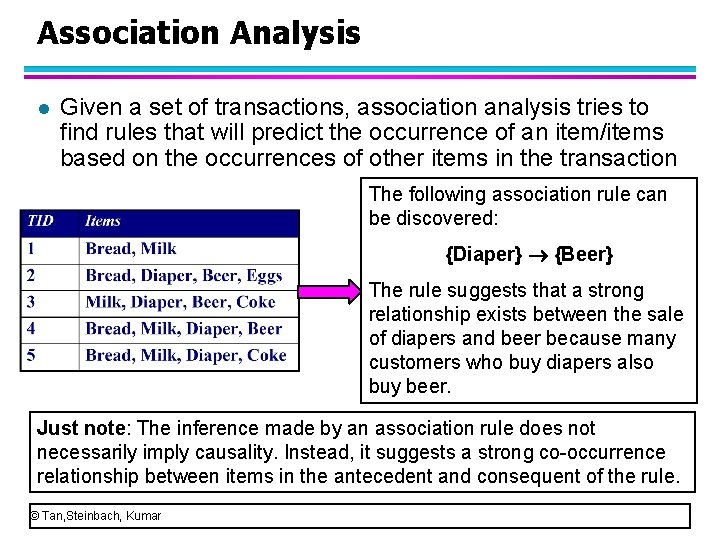

Association Analysis
- Given a set of transactions, association analysis tries to find rules that predict the occurrence of one or more items based on the occurrences of other items in the transaction.
- The following association rule can be discovered: {Diaper} → {Beer}
- The rule suggests that a strong relationship exists between the sale of diapers and beer, because many customers who buy diapers also buy beer.
- Note: the inference made by an association rule does not necessarily imply causality. Instead, it suggests a strong co-occurrence relationship between the items in the antecedent and the consequent of the rule.

Association Analysis
- Association analysis can help retailers identify new opportunities for cross-selling their products to customers.
- Example: place diapers and beer near each other to promote sales.
- Association analysis is also applicable to other domains such as bioinformatics, medical diagnosis, Web mining, and scientific data analysis.

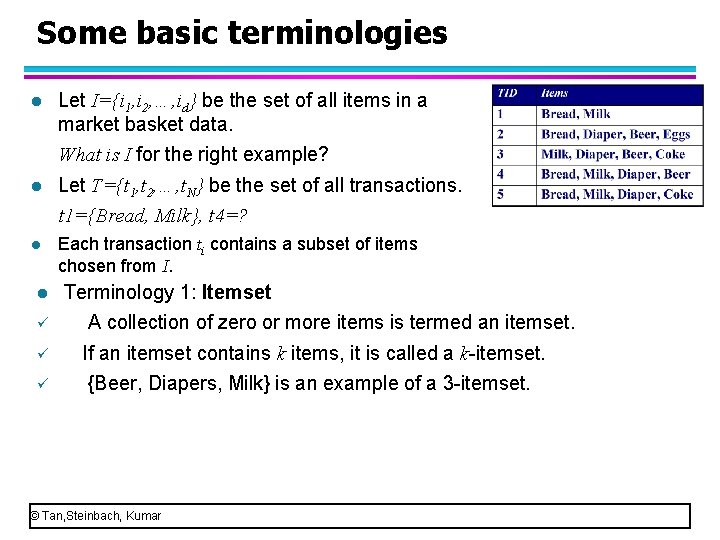

Some basic terminologies
- Let I = {i_1, i_2, …, i_d} be the set of all items in a market basket data set. What is I for the example on the right?
- Let T = {t_1, t_2, …, t_N} be the set of all transactions. t_1 = {Bread, Milk}; t_4 = ?
- Each transaction t_i contains a subset of items chosen from I.
- Terminology 1: Itemset
  - A collection of zero or more items is termed an itemset.
  - If an itemset contains k items, it is called a k-itemset.
  - {Beer, Diapers, Milk} is an example of a 3-itemset.

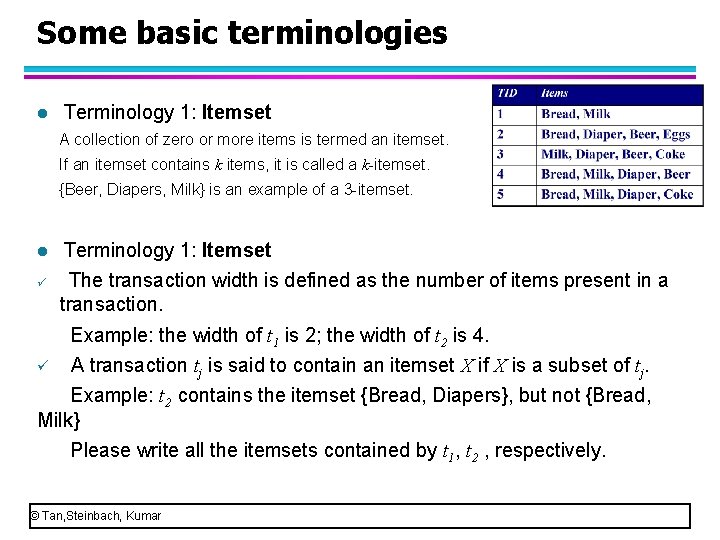

Some basic terminologies
- Terminology 1: Itemset
  - A collection of zero or more items is termed an itemset. If an itemset contains k items, it is called a k-itemset. {Beer, Diapers, Milk} is an example of a 3-itemset.
  - The transaction width is defined as the number of items present in a transaction. Example: the width of t_1 is 2; the width of t_2 is 4.
  - A transaction t_j is said to contain an itemset X if X is a subset of t_j. Example: t_2 contains the itemset {Bread, Diapers}, but not {Bread, Milk}.
- Exercise: write all the itemsets contained in t_1 and in t_2, respectively.

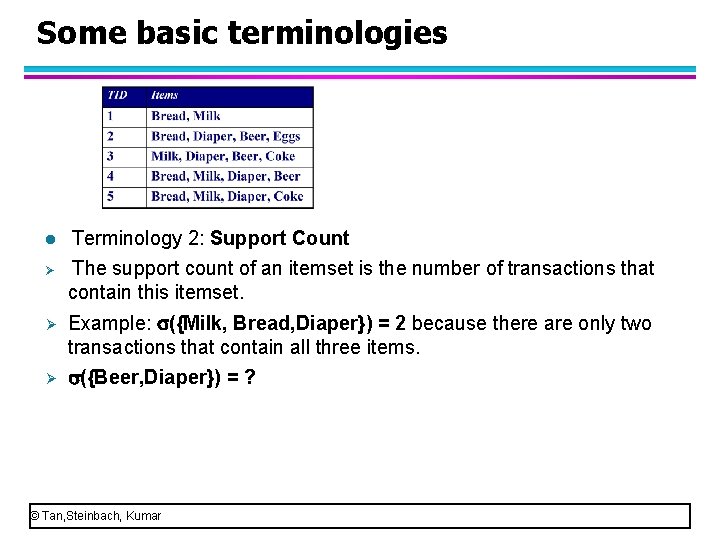

Some basic terminologies
- Terminology 2: Support Count
  - The support count σ(X) of an itemset X is the number of transactions that contain this itemset.
  - Example: σ({Milk, Bread, Diaper}) = 2, because there are only two transactions that contain all three items.
  - σ({Beer, Diaper}) = ?
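A minimal sketch of the support count, assuming the same five baskets as the slide table (reconstructed from the text, since the table is an image). The function name `support_count` is illustrative.

```python
# Assumed example baskets (reconstructed; the slide table is an image).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support_count(itemset, transactions):
    """Number of transactions that contain every item of `itemset`."""
    itemset = set(itemset)
    return sum(1 for basket in transactions if itemset <= basket)

print(support_count({"Milk", "Bread", "Diaper"}, transactions))  # 2
```

The subset test `itemset <= basket` is exactly the "transaction contains itemset" relation from the itemset terminology.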

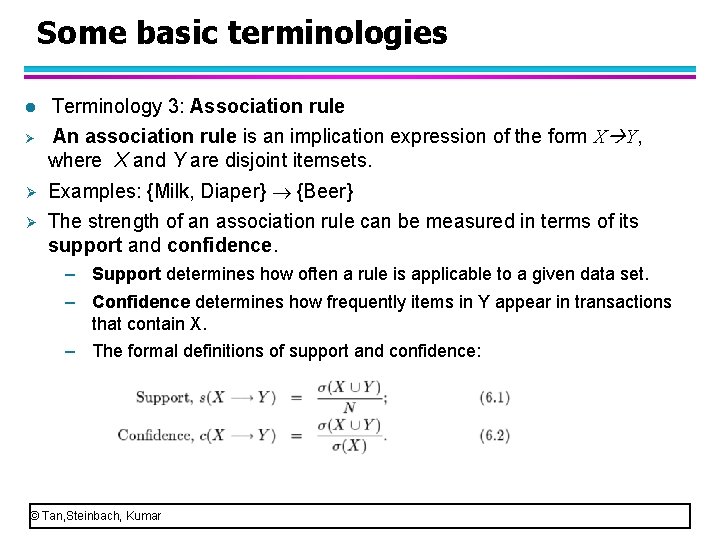

Some basic terminologies
- Terminology 3: Association rule
  - An association rule is an implication expression of the form X → Y, where X and Y are disjoint itemsets.
  - Example: {Milk, Diaper} → {Beer}
  - The strength of an association rule can be measured in terms of its support and confidence.
    - Support determines how often a rule is applicable to a given data set.
    - Confidence determines how frequently items in Y appear in transactions that contain X.
    - The formal definitions of support and confidence:

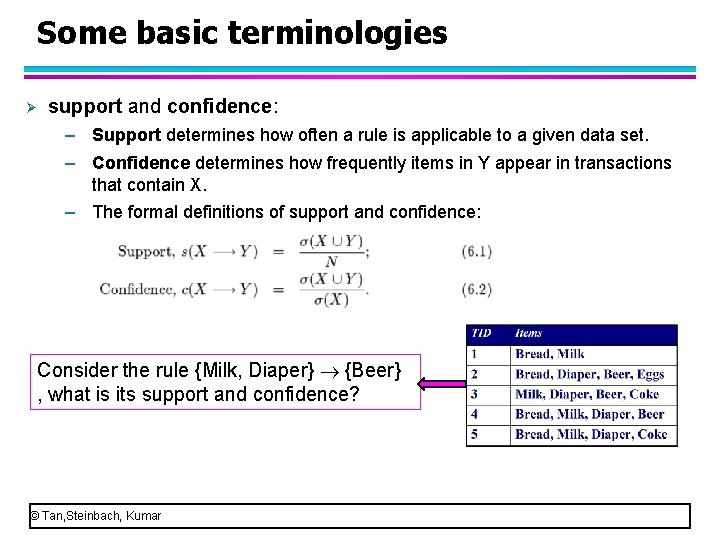

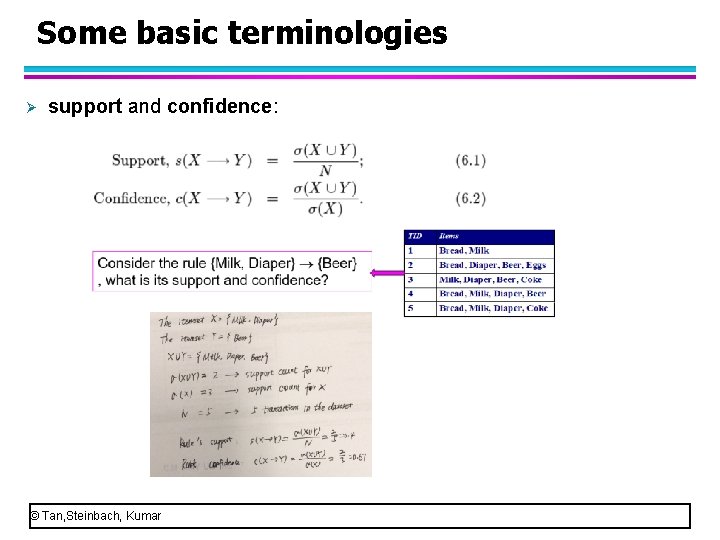

Some basic terminologies
- Support and confidence:
  - Support determines how often a rule is applicable to a given data set.
  - Confidence determines how frequently items in Y appear in transactions that contain X.
- Consider the rule {Milk, Diaper} → {Beer}. What are its support and confidence?
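As a hedged illustration, the sketch below computes both measures for a rule X → Y, assuming the usual definitions s(X → Y) = σ(X ∪ Y) / N and c(X → Y) = σ(X ∪ Y) / σ(X), and assuming the five baskets reconstructed from the slide text. The helper names are hypothetical.

```python
# Assumed example baskets (reconstructed; the slide table is an image).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support_count(itemset):
    """Number of transactions containing every item of `itemset`."""
    return sum(1 for basket in transactions if set(itemset) <= basket)

def rule_metrics(x, y):
    """Return (support, confidence) of the rule x -> y."""
    both = support_count(set(x) | set(y))
    support = both / len(transactions)   # sigma(X u Y) / N
    confidence = both / support_count(x)  # sigma(X u Y) / sigma(X)
    return support, confidence

s, c = rule_metrics({"Milk", "Diaper"}, {"Beer"})
print(round(s, 2), round(c, 2))  # 0.4 0.67
```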

Some basic terminologies: support and confidence

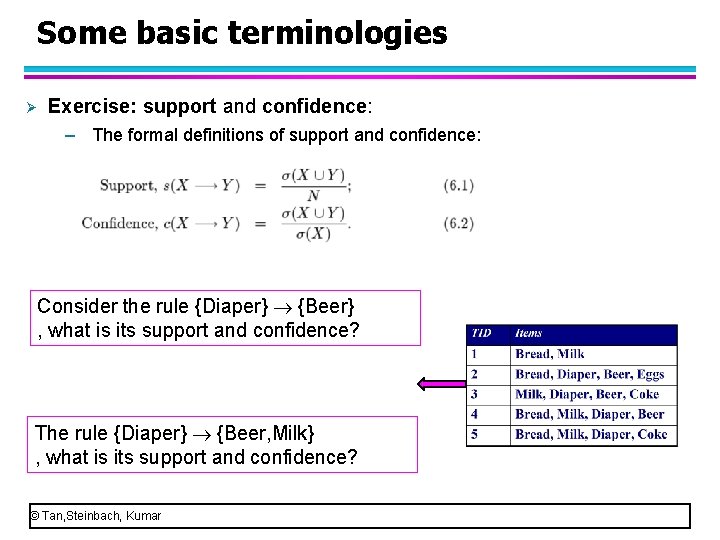

Some basic terminologies
- Exercise: support and confidence
  - Consider the rule {Diaper} → {Beer}. What are its support and confidence?
  - Consider the rule {Diaper} → {Beer, Milk}. What are its support and confidence?

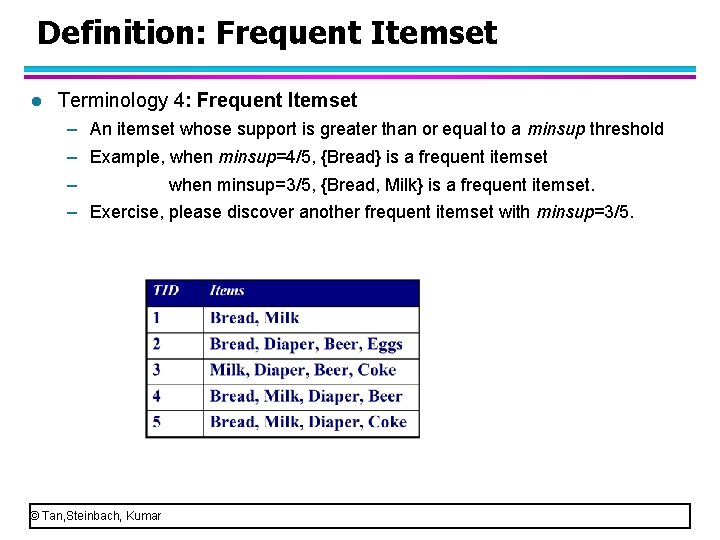

Definition: Frequent Itemset
- Terminology 4: Frequent Itemset
  - An itemset whose support is greater than or equal to a minsup threshold.
  - Example: when minsup = 4/5, {Bread} is a frequent itemset.
  - Example: when minsup = 3/5, {Bread, Milk} is a frequent itemset.
  - Exercise: find another frequent itemset with minsup = 3/5.

Association Rule Mining Task
- Given a set of transactions T, the goal of association rule mining is to find all rules having
  - support ≥ minsup threshold
  - confidence ≥ minconf threshold

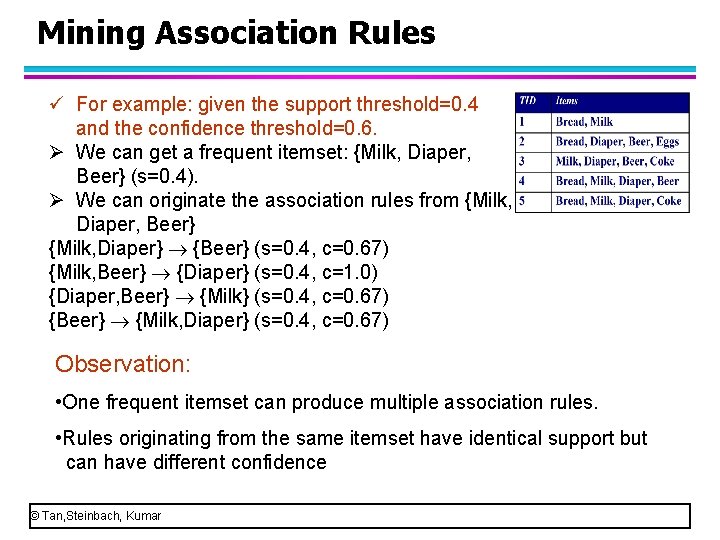

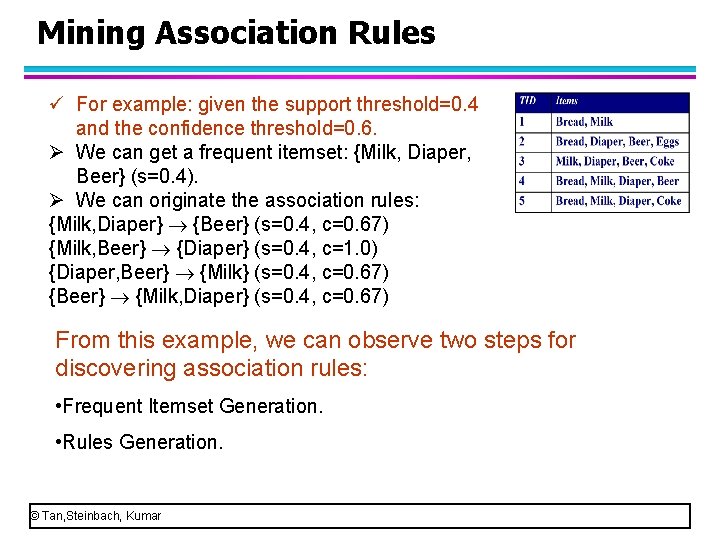

Mining Association Rules
- Example: let the support threshold be 0.4 and the confidence threshold be 0.6.
- We can get a frequent itemset: {Milk, Diaper, Beer} (s = 0.4).
- We can derive the following association rules from {Milk, Diaper, Beer}:
  - {Milk, Diaper} → {Beer} (s = 0.4, c = 0.67)
  - {Milk, Beer} → {Diaper} (s = 0.4, c = 1.0)
  - {Diaper, Beer} → {Milk} (s = 0.4, c = 0.67)
  - {Beer} → {Milk, Diaper} (s = 0.4, c = 0.67)
- Observations:
  - One frequent itemset can produce multiple association rules.
  - Rules originating from the same itemset have identical support but can have different confidence.
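The four rules listed here can be reproduced with a small sketch. It assumes the five baskets reconstructed from the slide text and enumerates every split of the frequent itemset into a non-empty antecedent and a non-empty consequent; `rules_from` is a hypothetical helper name.

```python
from itertools import combinations

# Assumed example baskets (reconstructed; the slide table is an image).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support_count(itemset):
    return sum(1 for basket in transactions if set(itemset) <= basket)

def rules_from(itemset, minconf):
    """All rules X -> Y with X u Y = itemset and confidence >= minconf."""
    itemset = frozenset(itemset)
    rules = []
    for r in range(1, len(itemset)):
        for antecedent in combinations(sorted(itemset), r):
            x = frozenset(antecedent)
            y = itemset - x
            conf = support_count(itemset) / support_count(x)
            if conf >= minconf:
                rules.append((sorted(x), sorted(y), round(conf, 2)))
    return rules

for x, y, conf in rules_from({"Milk", "Diaper", "Beer"}, minconf=0.6):
    print(x, "->", y, conf)
```

Because every rule uses the same σ({Milk, Diaper, Beer}) in the numerator, only the antecedent's support count changes the confidence, which is why the supports are identical and the confidences differ.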

Association Rule Mining Task
- Brute-force approach to discovering association rules:
  - List all possible association rules.
  - Compute the support and confidence for each rule.
  - Prune rules that fail the minsup and minconf thresholds (that is, select the rules that meet both thresholds).
- Computationally prohibitive!
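To give a feel for why listing all rules is prohibitive, the sketch below counts the possible rules X → Y (X and Y disjoint and non-empty) over d items by enumeration. Each item is assigned to X, to Y, or to neither. The closed form 3^d − 2^(d+1) + 1 used for comparison is a standard counting result, not something stated in the slide text; `count_rules` is a hypothetical name.

```python
from itertools import product

def count_rules(d):
    """Count rules X -> Y over d items with X, Y disjoint and non-empty."""
    count = 0
    # Each item goes to the antecedent (X), the consequent (Y), or neither (N).
    for assignment in product("XYN", repeat=d):
        if "X" in assignment and "Y" in assignment:
            count += 1
    return count

for d in (3, 6):
    print(d, count_rules(d), 3**d - 2**(d + 1) + 1)
```

Even with only six items, as in the grocery example, there are already several hundred candidate rules, and the count grows roughly as 3^d.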

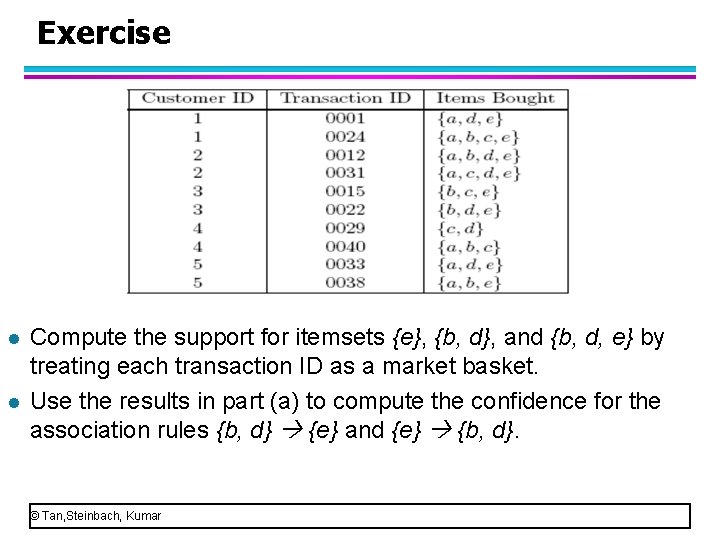

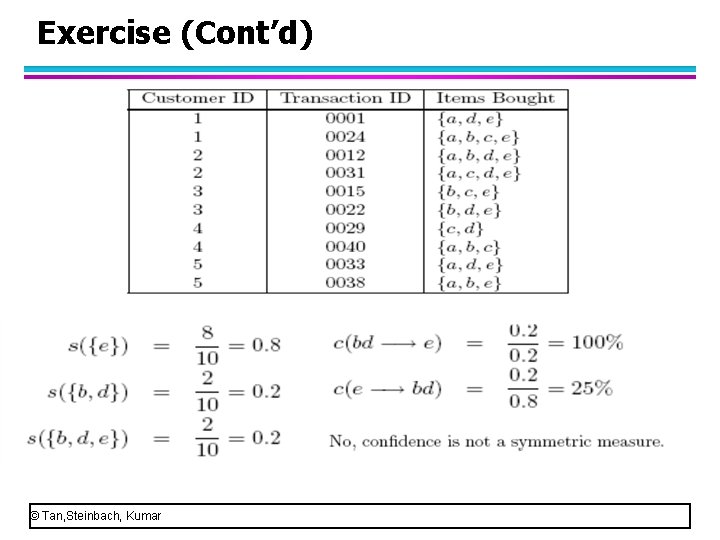

Exercise
- (a) Compute the support for itemsets {e}, {b, d}, and {b, d, e} by treating each transaction ID as a market basket.
- (b) Use the results in part (a) to compute the confidence for the association rules {b, d} → {e} and {e} → {b, d}.

Exercise (Cont’d)

Mining Association Rules
- Example: let the support threshold be 0.4 and the confidence threshold be 0.6.
- We can get a frequent itemset: {Milk, Diaper, Beer} (s = 0.4).
- We can derive the association rules:
  - {Milk, Diaper} → {Beer} (s = 0.4, c = 0.67)
  - {Milk, Beer} → {Diaper} (s = 0.4, c = 1.0)
  - {Diaper, Beer} → {Milk} (s = 0.4, c = 0.67)
  - {Beer} → {Milk, Diaper} (s = 0.4, c = 0.67)
- From this example, we can observe two steps for discovering association rules:
  - Frequent itemset generation
  - Rule generation

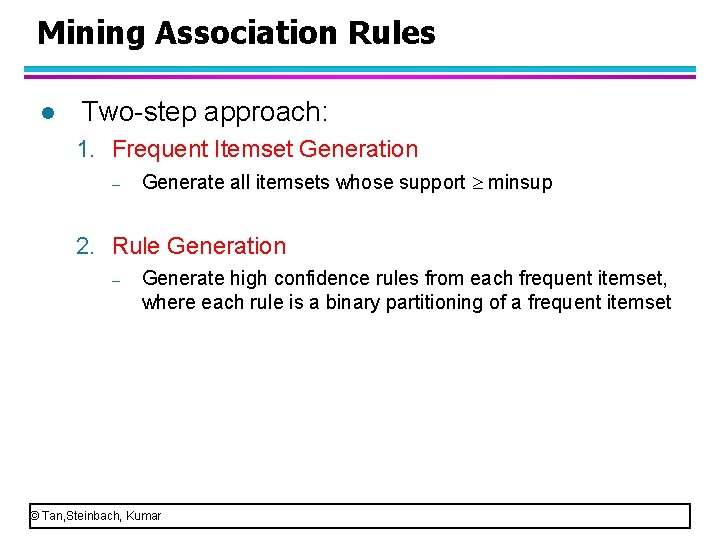

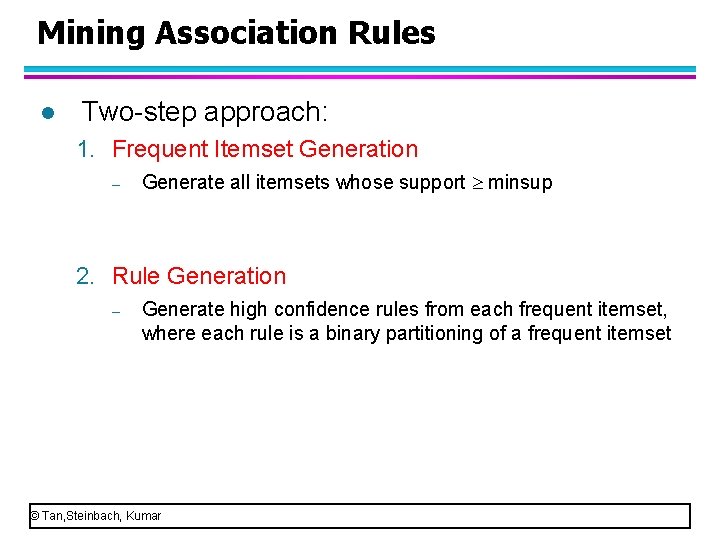

Mining Association Rules
- Two-step approach:
  1. Frequent Itemset Generation: generate all itemsets whose support ≥ minsup.
  2. Rule Generation: generate high-confidence rules from each frequent itemset, where each rule is a binary partitioning of a frequent itemset.

Association Analysis
- The most typical algorithm for association analysis: Apriori
  - Section 1: Frequent itemset generation in Apriori
  - Section 2: Rule generation in Apriori

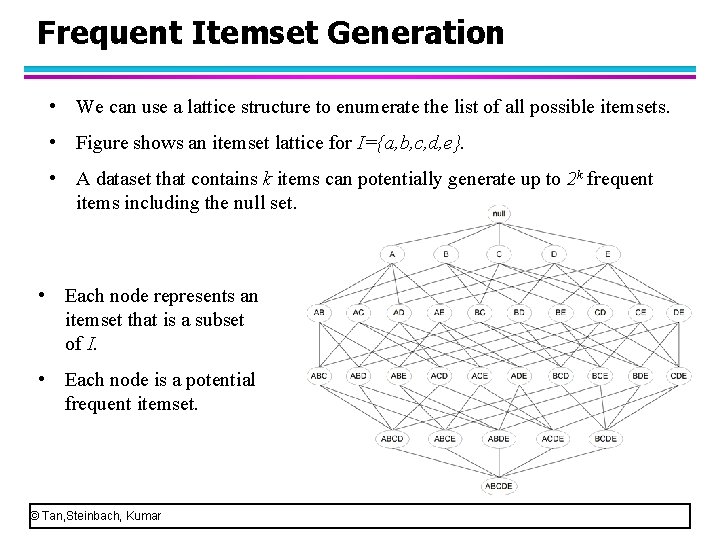

Frequent Itemset Generation
- We can use a lattice structure to enumerate all possible itemsets.
- The figure shows an itemset lattice for I = {a, b, c, d, e}.
- A data set that contains k items can potentially generate up to 2^k frequent itemsets, counting the null set.
- Each node represents an itemset that is a subset of I.
- Each node is a potential frequent itemset.

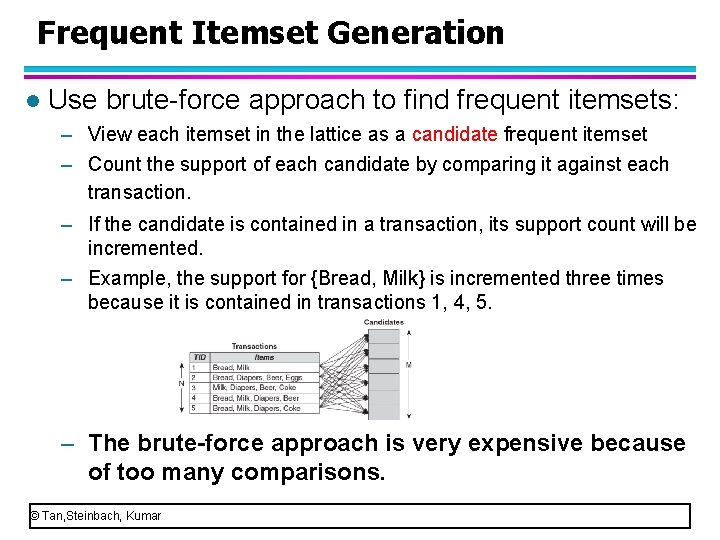

Frequent Itemset Generation
- Brute-force approach to finding frequent itemsets:
  - View each itemset in the lattice as a candidate frequent itemset.
  - Count the support of each candidate by comparing it against each transaction.
  - If the candidate is contained in a transaction, its support count is incremented.
  - Example: the support count for {Bread, Milk} is incremented three times, because it is contained in transactions 1, 4, and 5.
  - The brute-force approach is very expensive because it requires too many comparisons.
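The brute-force procedure can be sketched as follows, again assuming the five baskets reconstructed from the slide text and a minimum support count of 3. The counter `comparisons` is added only to show how much work the approach does.

```python
from itertools import combinations

# Assumed example baskets (reconstructed; the slide table is an image).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]
items = sorted(set().union(*transactions))
min_count = 3

comparisons = 0
frequent = {}
# Every non-empty node of the lattice is treated as a candidate.
for k in range(1, len(items) + 1):
    for candidate in combinations(items, k):
        count = 0
        for basket in transactions:
            comparisons += 1  # one candidate-vs-transaction comparison
            if set(candidate) <= basket:
                count += 1
        if count >= min_count:
            frequent[candidate] = count

print(len(frequent), "frequent itemsets after", comparisons, "comparisons")
```

With 6 items there are 2^6 − 1 = 63 non-empty candidates, each matched against all 5 transactions, so the number of comparisons grows as (number of candidates) × (number of transactions).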

Frequent Itemset Generation Strategies
To improve the efficiency, we can:
- Reduce the number of candidates
  - The Apriori principle is an effective way to eliminate some of the candidate itemsets without counting their support values (discussed later).
- Reduce the number of comparisons
  - Instead of matching each candidate itemset against every transaction, we reduce the number of comparisons by using more advanced data structures.

Frequent Itemset Generation Strategies
- To improve the efficiency, we can:
  - Reduce the number of candidates
  - Reduce the number of comparisons

Reducing Number of Candidates
- Apriori principle: if an itemset is frequent, then all of its subsets must also be frequent.
- An example of the Apriori principle:
  - Suppose {Milk, Diaper, Beer} is frequent (its support count is 2; it appears in transactions 3 and 4).
  - Clearly, any transaction that contains {Milk, Diaper, Beer} must also contain its subsets {Milk, Diaper}, {Milk, Beer}, {Diaper, Beer}, {Milk}, {Diaper}, and {Beer}.
  - Other transactions may also contain its subsets; for example, transaction 5 contains {Milk, Diaper} and transaction 2 contains {Diaper, Beer}.
  - So the support count of each subset must be greater than or equal to the support count of the itemset, for example σ({Milk, Diaper}) = 3 > 2.
  - So all of its subsets are frequent.

Reducing Number of Candidates
- Apriori principle: if an itemset is frequent, then all of its subsets must also be frequent.
- Conversely, if an itemset is infrequent, then all of its supersets must be infrequent too.
  - Suppose {Bread, Milk, Diaper} is infrequent (its support count is 2; it appears in transactions 4 and 5).
  - Consider one of its supersets: {Bread, Milk, Diaper, Coke}.
  - A transaction that does not contain {Bread, Milk, Diaper} cannot contain {Bread, Milk, Diaper, Coke}.
  - A transaction that contains {Bread, Milk, Diaper} may still not contain {Bread, Milk, Diaper, Coke} (for example, transaction 4).
  - Hence σ({Bread, Milk, Diaper, Coke}) ≤ σ({Bread, Milk, Diaper}), so the superset is infrequent as well.
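The argument above says that support never increases when an item is added to an itemset. A small sketch can check this exhaustively on the example data (the five baskets are assumed, reconstructed from the slide text); it collects every case where a subset has a smaller support count than its superset, and the list is expected to stay empty.

```python
from itertools import combinations

# Assumed example baskets (reconstructed; the slide table is an image).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]
items = sorted(set().union(*transactions))

def support_count(itemset):
    return sum(1 for basket in transactions if set(itemset) <= basket)

violations = []
for k in range(2, len(items) + 1):
    for itemset in combinations(items, k):
        # Compare with every subset obtained by dropping one item.
        for subset in combinations(itemset, k - 1):
            if support_count(subset) < support_count(itemset):
                violations.append((subset, itemset))

print("violations:", violations)
```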

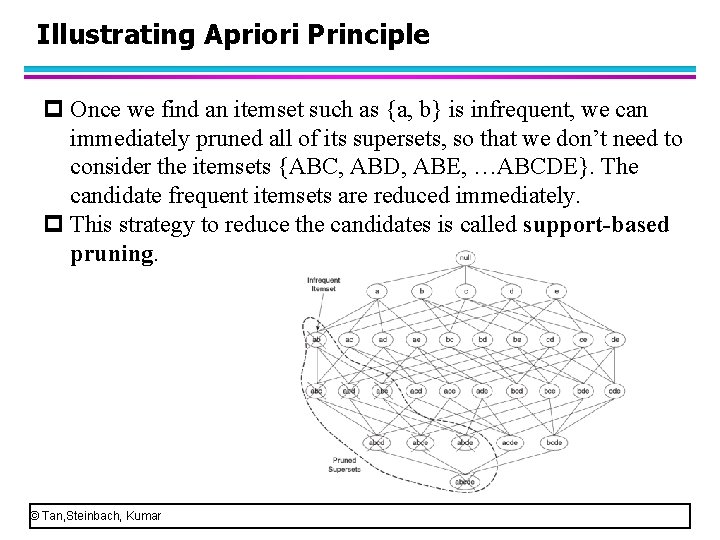

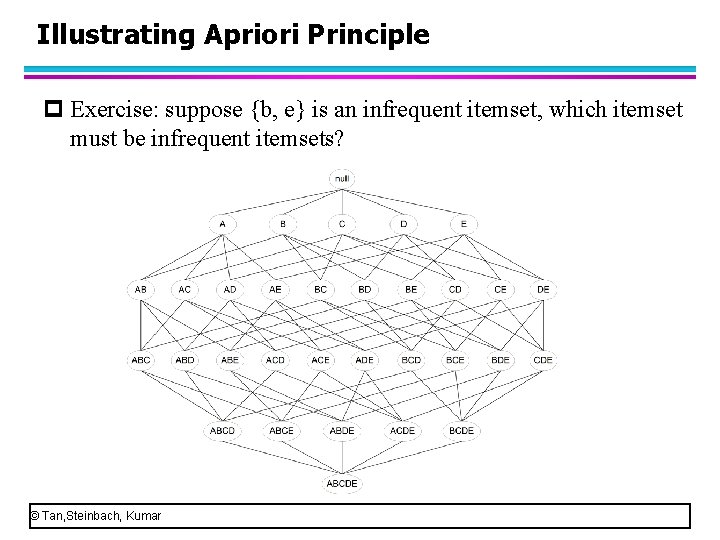

Illustrating Apriori Principle
- Once we find that an itemset such as {a, b} is infrequent, we can immediately prune all of its supersets, so we do not need to consider the itemsets {a, b, c}, {a, b, d}, {a, b, e}, …, {a, b, c, d, e}. The set of candidate frequent itemsets shrinks immediately.
- This strategy for reducing the number of candidates is called support-based pruning.

Illustrating Apriori Principle
- Exercise: suppose {b, e} is an infrequent itemset. Which itemsets must also be infrequent?

Frequent Itemset Generation in Apriori
- The Apriori algorithm for association rule mining has two steps:
  - Frequent itemset generation
  - Rule generation
- The Apriori algorithm uses support-based pruning in the frequent itemset generation step.

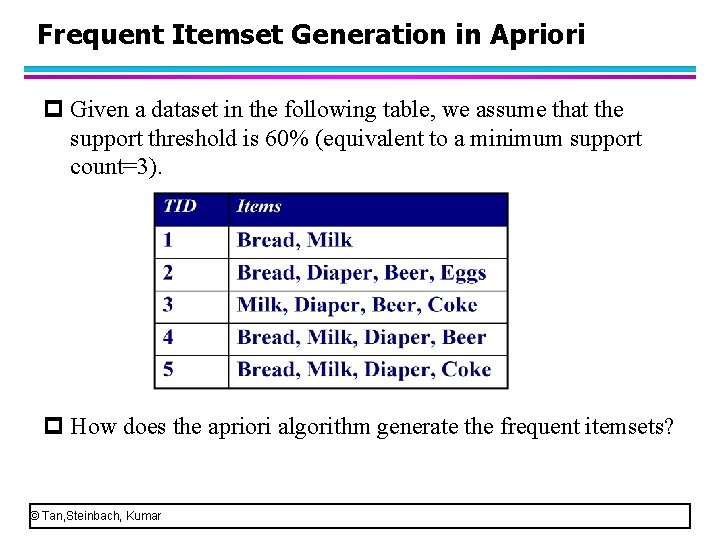

Frequent Itemset Generation in Apriori
- Given the data set in the following table, we assume that the support threshold is 60% (equivalent to a minimum support count of 3).
- How does the Apriori algorithm generate the frequent itemsets?

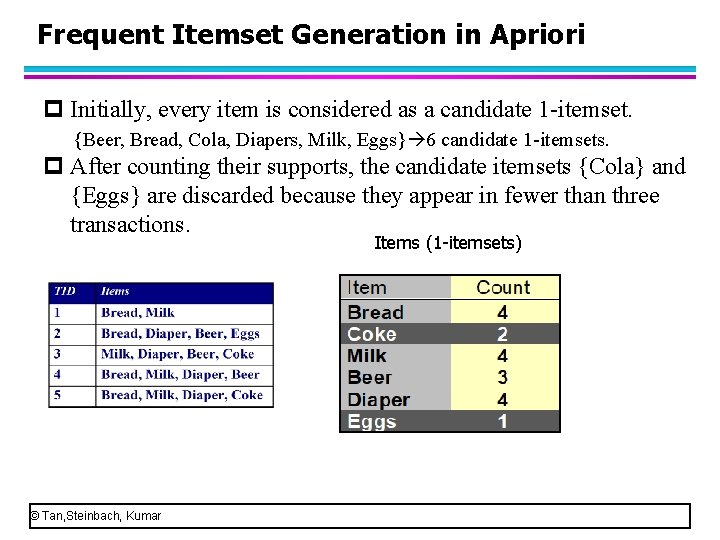

Frequent Itemset Generation in Apriori
- Initially, every item is considered as a candidate 1-itemset: {Beer, Bread, Cola, Diapers, Milk, Eggs} gives 6 candidate 1-itemsets.
- After counting their supports, the candidate itemsets {Cola} and {Eggs} are discarded because they appear in fewer than three transactions.

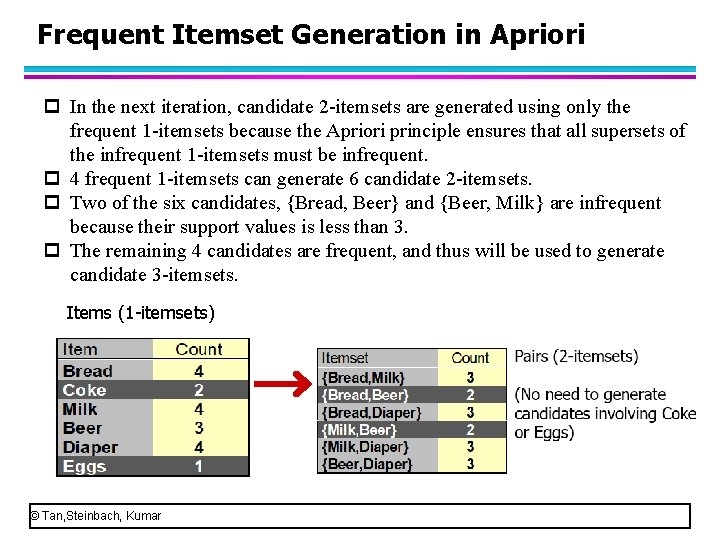

Frequent Itemset Generation in Apriori
- In the next iteration, candidate 2-itemsets are generated using only the frequent 1-itemsets, because the Apriori principle ensures that all supersets of the infrequent 1-itemsets must be infrequent.
- The 4 frequent 1-itemsets generate 6 candidate 2-itemsets.
- Two of the six candidates, {Bread, Beer} and {Beer, Milk}, are infrequent because their support counts are less than 3.
- The remaining 4 candidates are frequent and are therefore used to generate candidate 3-itemsets.

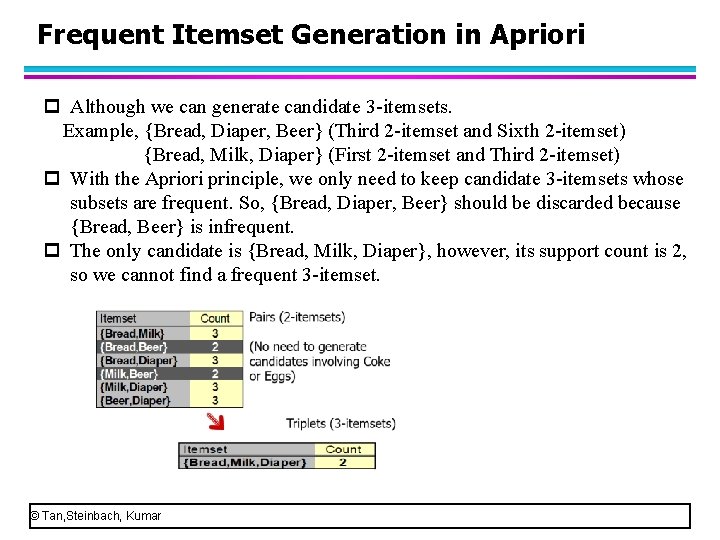

Frequent Itemset Generation in Apriori
- We can generate candidate 3-itemsets by combining frequent 2-itemsets, for example:
  - {Bread, Diaper, Beer} (from the third and the sixth 2-itemset)
  - {Bread, Milk, Diaper} (from the first and the third 2-itemset)
- With the Apriori principle, we only need to keep candidate 3-itemsets whose subsets are all frequent. So {Bread, Diaper, Beer} is discarded because {Bread, Beer} is infrequent.
- The only remaining candidate is {Bread, Milk, Diaper}. However, its support count is 2, so there is no frequent 3-itemset.

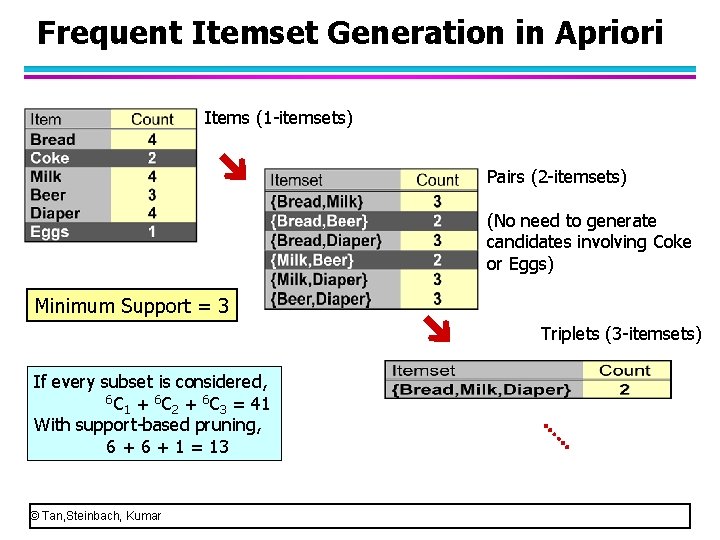

Frequent Itemset Generation in Apriori
- Tables shown in the figure: Items (1-itemsets), Pairs (2-itemsets), Triplets (3-itemsets); Minimum Support = 3.
- There is no need to generate candidates involving Coke or Eggs.
- If every subset is considered: $\binom{6}{1} + \binom{6}{2} + \binom{6}{3} = 6 + 15 + 20 = 41$ candidates.
- With support-based pruning: $6 + 6 + 1 = 13$ candidates.

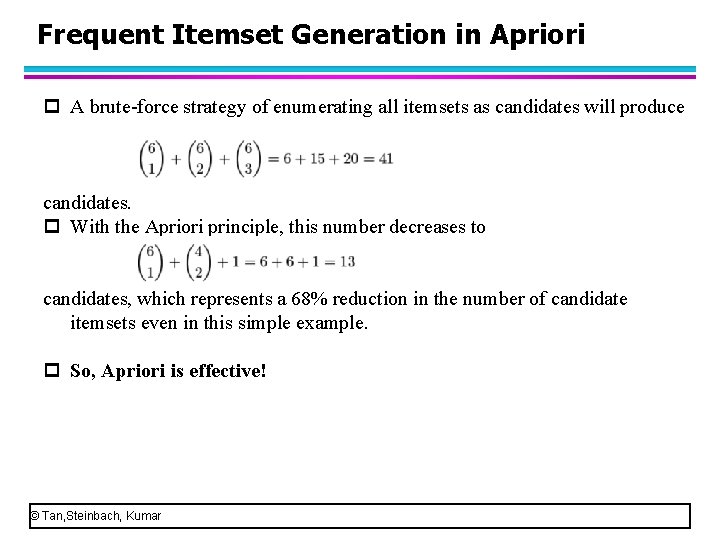

Frequent Itemset Generation in Apriori
- A brute-force strategy of enumerating all itemsets (up to size 3) as candidates produces 41 candidates.
- With the Apriori principle, this number decreases to 13 candidates, which represents a 68% reduction in the number of candidate itemsets even in this simple example.
- So Apriori is effective!
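The candidate counts in this example can be checked with a few lines of arithmetic. The sketch assumes, as in the walk-through, 6 items, 4 frequent 1-itemsets, and a single candidate 3-itemset left after pruning.

```python
from math import comb

# Brute force: every itemset of size 1, 2 or 3 over 6 items is a candidate.
brute_force = comb(6, 1) + comb(6, 2) + comb(6, 3)

# Apriori: 6 candidate 1-itemsets, pairs built only from the 4 frequent
# items, and the single candidate 3-itemset that survives pruning.
pruned = comb(6, 1) + comb(4, 2) + 1

reduction = 1 - pruned / brute_force
print(brute_force, pruned, round(100 * reduction))  # 41 13 68
```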

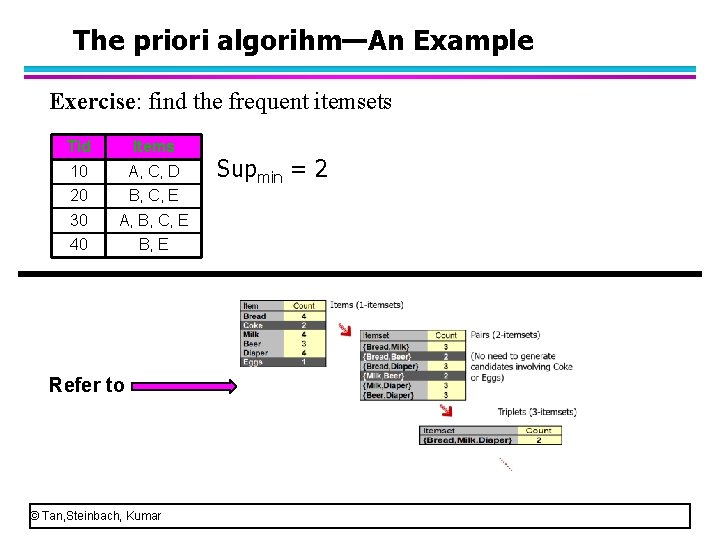

The Apriori Algorithm: An Example
Exercise: find the frequent itemsets (Supmin = 2).
- Tid 10: A, C, D
- Tid 20: B, C, E
- Tid 30: A, B, C, E
- Tid 40: B, E

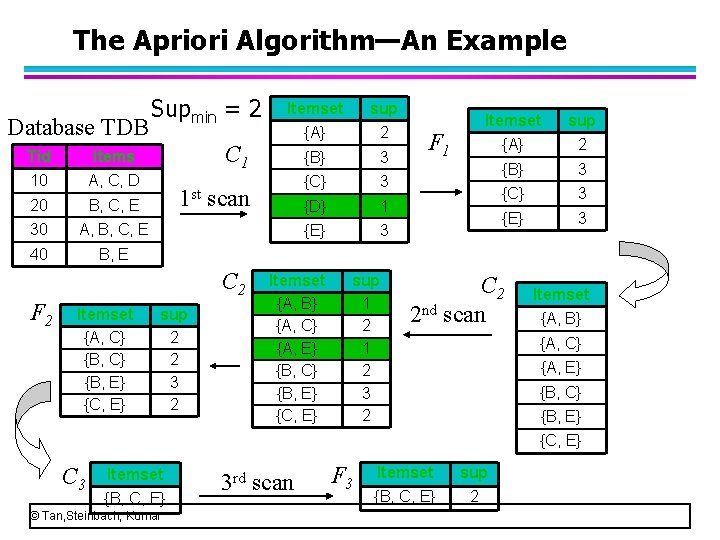

The Apriori Algorithm: An Example
Database TDB (Supmin = 2):
- Tid 10: A, C, D
- Tid 20: B, C, E
- Tid 30: A, B, C, E
- Tid 40: B, E

Itemsets with their support counts (sup):
- 1st scan, C1: {A}: 2, {B}: 3, {C}: 3, {D}: 1, {E}: 3
- F1: {A}: 2, {B}: 3, {C}: 3, {E}: 3
- C2 (generated from F1): {A, B}, {A, C}, {A, E}, {B, C}, {B, E}, {C, E}
- 2nd scan, C2 with counts: {A, B}: 1, {A, C}: 2, {A, E}: 1, {B, C}: 2, {B, E}: 3, {C, E}: 2
- F2: {A, C}: 2, {B, C}: 2, {B, E}: 3, {C, E}: 2
- C3 (generated from F2): {B, C, E}
- 3rd scan, F3: {B, C, E}: 2

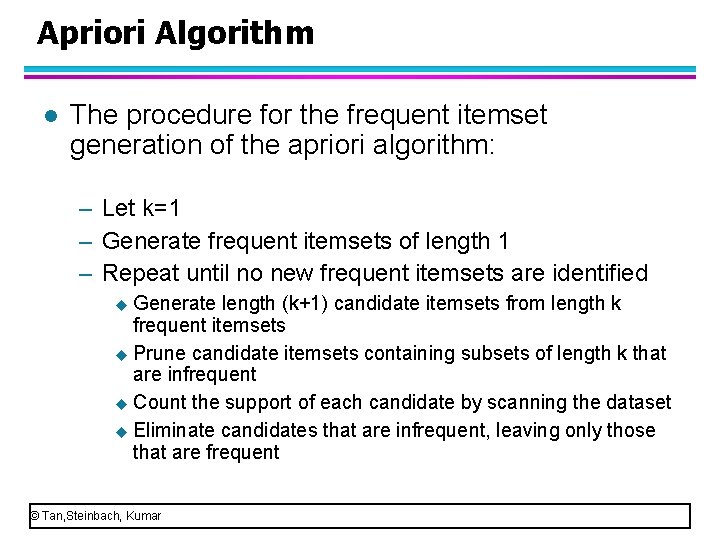

Apriori Algorithm
- The procedure for frequent itemset generation in the Apriori algorithm:
  - Let k = 1.
  - Generate frequent itemsets of length 1.
  - Repeat until no new frequent itemsets are identified:
    - Generate length (k+1) candidate itemsets from length k frequent itemsets.
    - Prune candidate itemsets containing subsets of length k that are infrequent.
    - Count the support of each candidate by scanning the data set.
    - Eliminate candidates that are infrequent, leaving only those that are frequent.
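The procedure above can be sketched compactly in Python. This is a minimal illustration rather than an optimized implementation: supports are counted by a plain scan instead of a hash tree, candidates are formed by merging frequent k-itemsets that share their first k−1 items, and the function name `apriori` and its parameters are illustrative. It is run on the four-transaction database TDB with a minimum support count of 2.

```python
from itertools import combinations

def apriori(transactions, min_count):
    """Return {itemset (sorted tuple): support count} for all frequent itemsets."""
    transactions = [frozenset(t) for t in transactions]
    items = sorted({item for t in transactions for item in t})

    # k = 1: frequent itemsets of length 1.
    current = {}
    for item in items:
        count = sum(1 for t in transactions if item in t)
        if count >= min_count:
            current[(item,)] = count
    frequent = dict(current)

    k = 1
    while current:
        prev = sorted(current)
        candidates = []
        # Generate length (k+1) candidates from pairs sharing the first k-1 items.
        for a, b in combinations(prev, 2):
            if a[:-1] == b[:-1]:
                cand = a + (b[-1],)
                # Prune candidates that have an infrequent subset of length k.
                if all(sub in current for sub in combinations(cand, k)):
                    candidates.append(cand)
        # Count supports by scanning the data set; keep the frequent ones.
        current = {}
        for cand in candidates:
            count = sum(1 for t in transactions if set(cand) <= t)
            if count >= min_count:
                current[cand] = count
        frequent.update(current)
        k += 1
    return frequent

tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
for itemset, count in sorted(apriori(tdb, min_count=2).items(), key=lambda kv: (len(kv[0]), kv[0])):
    print(itemset, count)
```

Keeping every itemset as a sorted tuple means the merge step always produces a sorted candidate, so the subset lookups in the pruning step can use the tuples directly as dictionary keys.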

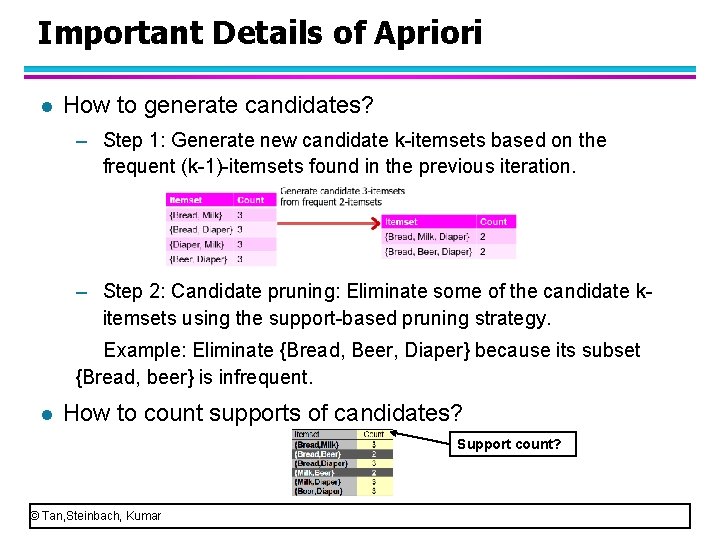

Important Details of Apriori
- How to generate candidates?
  - Step 1: Candidate generation: generate new candidate k-itemsets based on the frequent (k−1)-itemsets found in the previous iteration.
  - Step 2: Candidate pruning: eliminate some of the candidate k-itemsets using the support-based pruning strategy. Example: eliminate {Bread, Beer, Diaper} because its subset {Bread, Beer} is infrequent.
- How to count the supports of candidates?

Important Details of Apriori
- How to generate candidates?
  - Step 1: Candidate generation: F_{k-1} × F_{k-1}
  - Step 2: Candidate pruning
- How to count the supports of candidates?

Important Details of Apriori
- How to generate candidates?
- With the Apriori algorithm, the items in each candidate itemset and in each transaction must be kept in increasing lexicographic order. For example, the transaction {Bread, Milk, Diaper, Beer, Eggs, Cola} should be ordered as {Beer, Bread, Cola, Diaper, Eggs, Milk}.
- Once the items are ordered, we can use numbers or letters to represent them for convenience. For example, we can represent {Beer, Bread, Cola, Diaper, Eggs, Milk} as {1, 2, 3, 4, 5, 6}. In this case, the candidate itemset {Bread, Diaper, Milk} is represented as {2, 4, 6}.
- Exercise: use numbers to represent the candidate itemset {Bread, Diaper}.
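A short sketch of the ordering and numbering step, using the six items from the example. The names `code` and `encode` are illustrative.

```python
# The transaction as given, in arbitrary order.
transaction = ["Bread", "Milk", "Diaper", "Beer", "Eggs", "Cola"]

# Increasing lexicographic order.
ordered = sorted(transaction)

# Number the items 1..6 following that order.
code = {item: number for number, item in enumerate(ordered, start=1)}

def encode(itemset):
    """Represent an itemset by the sorted numbers of its items."""
    return sorted(code[item] for item in itemset)

print(ordered)
print(encode({"Bread", "Diaper", "Milk"}))  # [2, 4, 6]
```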

Important Details of Apriori
- The Apriori algorithm uses the F_{k-1} × F_{k-1} method to generate candidate k-itemsets by merging pairs of frequent (k−1)-itemsets.
- When using the F_{k-1} × F_{k-1} method, the items in each frequent (k−1)-itemset must be kept in lexicographic order. Example: {Bread, Diaper, Beer} → {Beer, Bread, Diaper}
- Specifically, a pair of frequent (k−1)-itemsets is merged only if their first k−2 items are identical.

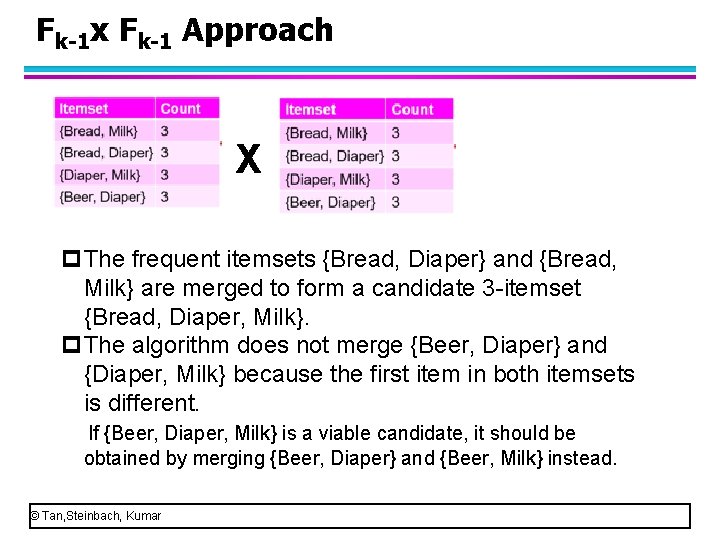

F_{k-1} × F_{k-1} Approach
- The frequent itemsets {Bread, Diaper} and {Bread, Milk} are merged to form the candidate 3-itemset {Bread, Diaper, Milk}.
- The algorithm does not merge {Beer, Diaper} and {Diaper, Milk}, because the first items of the two itemsets differ. If {Beer, Diaper, Milk} is a viable candidate, it should be obtained by merging {Beer, Diaper} and {Beer, Milk} instead.

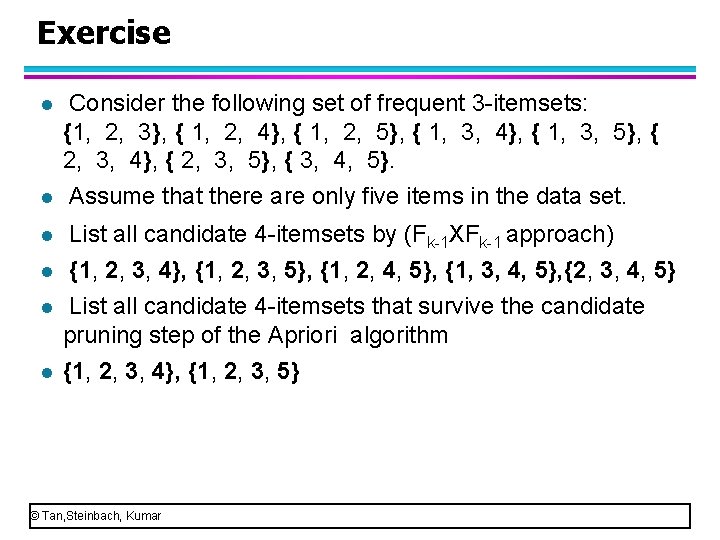

Exercise
- Consider the following set of frequent 3-itemsets: {1, 2, 3}, {1, 2, 4}, {1, 2, 5}, {1, 3, 4}, {1, 3, 5}, {2, 3, 4}, {2, 3, 5}, {3, 4, 5}. Assume that there are only five items in the data set.
- List all candidate 4-itemsets obtained by the F_{k-1} × F_{k-1} approach:
  - {1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 4, 5}, {1, 3, 4, 5}, {2, 3, 4, 5}
- List all candidate 4-itemsets that survive the candidate pruning step of the Apriori algorithm:
  - {1, 2, 3, 4}, {1, 2, 3, 5}
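The two steps of this exercise can be sketched as two small functions: one merges pairs of frequent (k−1)-itemsets whose first k−2 items are identical, the other drops candidates that have an infrequent (k−1)-subset. The function names are illustrative.

```python
from itertools import combinations

def generate_candidates(frequent_prev):
    """Merge pairs of (k-1)-itemsets that agree on their first k-2 items."""
    prev = sorted(tuple(sorted(s)) for s in frequent_prev)
    merged = []
    for a, b in combinations(prev, 2):
        if a[:-1] == b[:-1]:
            merged.append(a + (b[-1],))
    return merged

def prune(candidates, frequent_prev):
    """Keep only candidates whose (k-1)-subsets are all frequent."""
    prev = {tuple(sorted(s)) for s in frequent_prev}
    return [
        cand for cand in candidates
        if all(sub in prev for sub in combinations(cand, len(cand) - 1))
    ]

f3 = [(1, 2, 3), (1, 2, 4), (1, 2, 5), (1, 3, 4),
      (1, 3, 5), (2, 3, 4), (2, 3, 5), (3, 4, 5)]
c4 = generate_candidates(f3)
print(c4)
print(prune(c4, f3))
```

For instance, {1, 2, 4, 5} is generated but then pruned because its subset {1, 4, 5} is not among the frequent 3-itemsets.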

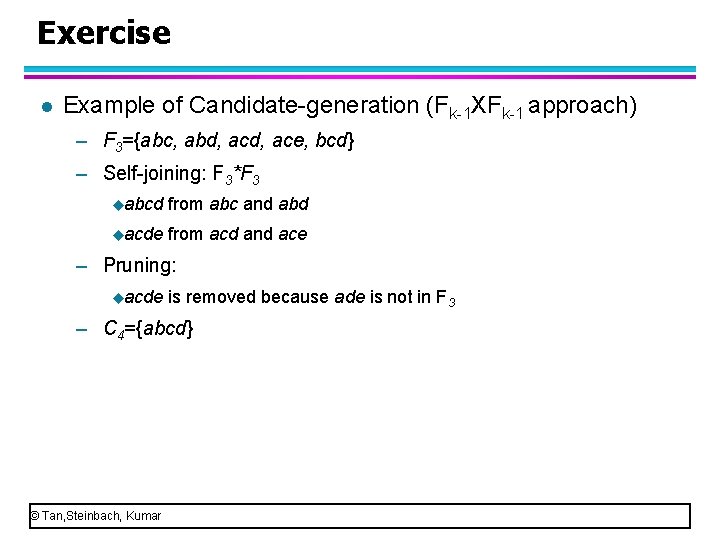

Exercise
- Example of candidate generation (F_{k-1} × F_{k-1} approach):
  - F3 = {abc, abd, acd, ace, bcd}
  - Self-joining F3 × F3:
    - abcd from abc and abd
    - acde from acd and ace
  - Pruning: acde is removed because ade is not in F3.
  - C4 = {abcd}

Important Details of Apriori
- How to generate candidates?
  - Step 1: Candidate generation: F_{k-1} × F_{k-1}
  - Step 2: Candidate pruning
- How to count the supports of candidates?

How to Count Supports of Candidates?
- Why is counting the supports of candidates a problem?
  - The total number of candidates can be very large.
  - One transaction may contain many candidates.
- Method: hash tree
  - Candidate itemsets are partitioned into different buckets and stored in a hash tree.
  - The itemsets contained in each transaction are also hashed into their appropriate buckets.
  - Instead of comparing each itemset in the transaction with every candidate itemset, it is matched only against candidate itemsets that belong to the same bucket.

How to Count Supports of Candidates?
- Three issues in counting the supports of candidates:
  - How to construct a hash tree for the candidate itemsets?
  - How to enumerate the itemsets contained in each transaction?
  - How to use each transaction to update the support counts?

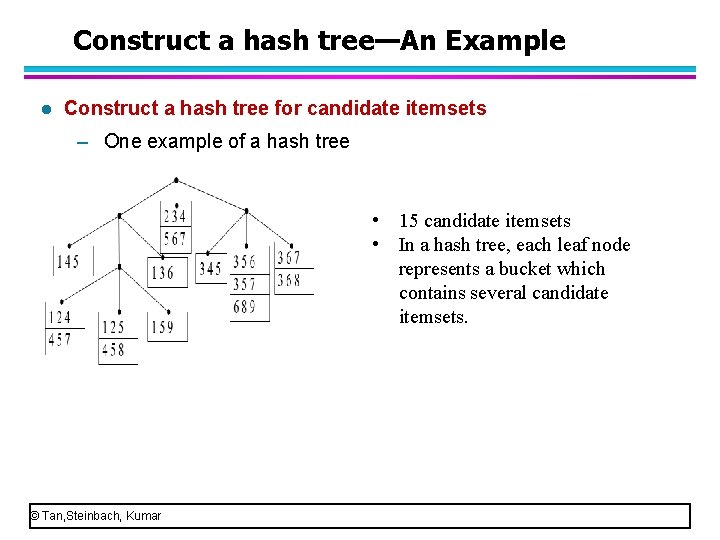

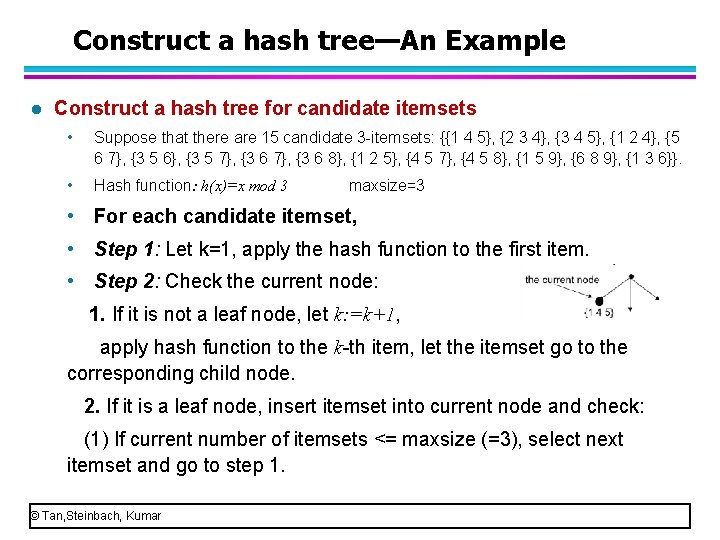

Construct a hash tree: An Example
- Construct a hash tree for candidate itemsets
  - One example of a hash tree with 15 candidate itemsets
  - In a hash tree, each leaf node represents a bucket that contains several candidate itemsets.

Construct a hash tree: An Example
- Construct a hash tree for candidate itemsets
  - Suppose that there are 15 candidate 3-itemsets: {1 4 5}, {2 3 4}, {3 4 5}, {1 2 4}, {5 6 7}, {3 5 6}, {3 5 7}, {3 6 7}, {3 6 8}, {1 2 5}, {4 5 7}, {4 5 8}, {1 5 9}, {6 8 9}, {1 3 6}.
  - To construct a hash tree, we need to settle the following questions:
    - What hash function is used? The hash function determines (1) how many child nodes the root node and each internal node can have at most, and (2) which child node each candidate goes to.
    - How many candidate itemsets can a leaf node hold at most?
  - Both are usually chosen depending on the specific task.

Construct a hash tree: An Example
- Construct a hash tree for candidate itemsets. To construct it, settle the following questions:
  - What hash function is used?
    - The modulo function is the most widely used hash function: h(x) = x mod n is the remainder after dividing x by n.
    - Suppose that we use h(x) = x mod 3 as the hash function. (With h(x) = x mod n, each non-leaf node has at most n child nodes.)
    - Example: h(4) = 4 mod 3 = 1. h(5) = ? h(6) = ?
  - How many candidate itemsets can a leaf node hold at most?
    - Suppose that maxsize = 3 (a leaf node can contain at most three candidates).

Construct a hash tree: An Example
- Construct a hash tree for candidate itemsets
  - Suppose that there are 15 candidate 3-itemsets: {1 4 5}, {2 3 4}, {3 4 5}, {1 2 4}, {5 6 7}, {3 5 6}, {3 5 7}, {3 6 7}, {3 6 8}, {1 2 5}, {4 5 7}, {4 5 8}, {1 5 9}, {6 8 9}, {1 3 6}.
  - Suppose that we use h(x) = x mod 3 as the hash function.
  - Suppose that maxsize = 3 (a leaf node can contain at most three candidates).
- In this case, how do we construct a hash tree?
- Note: the items in each itemset have been put in lexicographic order.

Construct a hash tree: An Example
- We use h(x) = x mod 3 as the hash function for the 15 candidate 3-itemsets. Because of this choice, each non-leaf node has at most 3 child nodes:
  1. In the first figure, there are 3 child nodes: left, middle, and right.
  2. In the second figure, there are 2 child nodes: left and right.
  3. In the third figure, there is only one child node: the left child node.

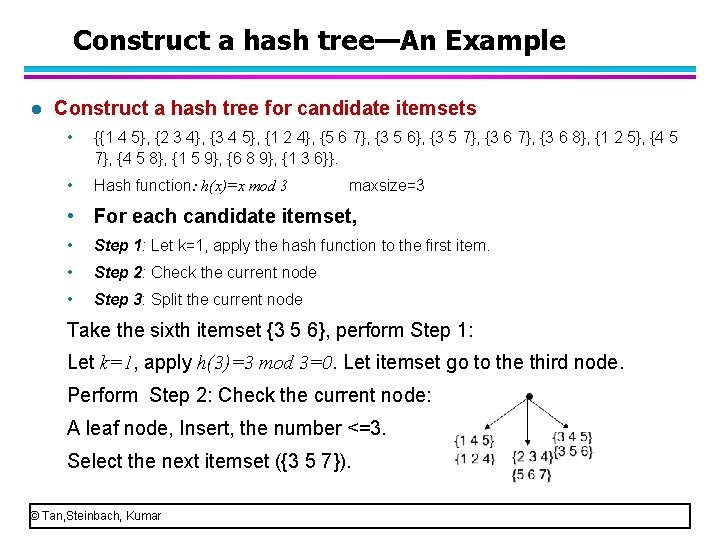

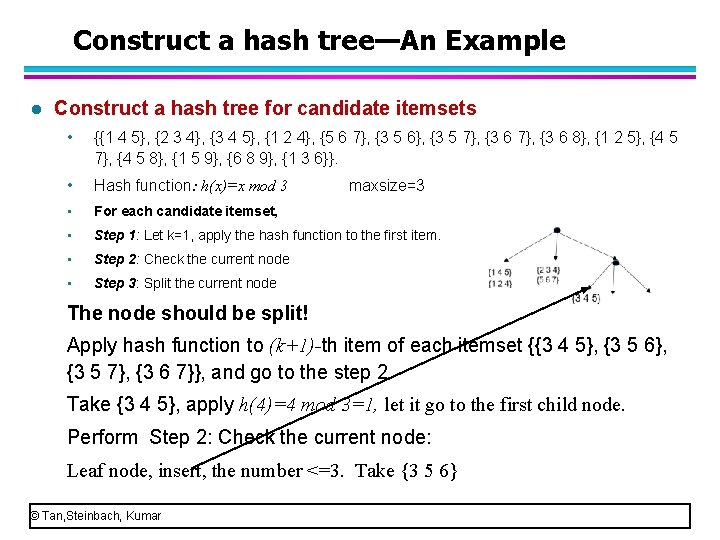

Construct a hash tree: An Example
- Hash function: h(x) = x mod 3; maxsize = 3.
- Initially, we set up a root node with three child nodes. The three child nodes start out as leaf nodes.
- For each candidate itemset:
  - Step 1: Let k = 1, indicating that we apply the hash function to the first item of the itemset.
  - Take the first itemset {1 4 5}. Its first item is 1, and h(1) = 1 mod 3 = 1, so {1 4 5} goes to the first child node.

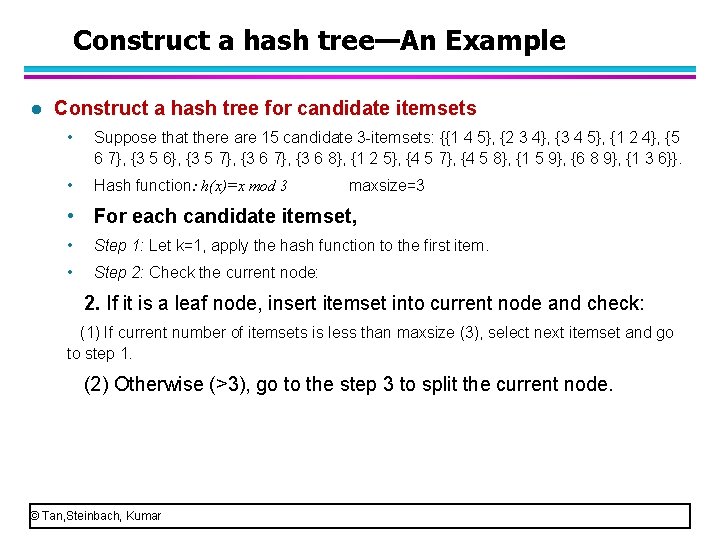

Construct a hash tree: An Example
- Hash function: h(x) = x mod 3; maxsize = 3.
- For each candidate itemset:
  - Step 1: Let k = 1 and apply the hash function to the first item.
  - Step 2: Check the current node:
    1. If it is not a leaf node, let k := k + 1, apply the hash function to the k-th item, and let the itemset go to the corresponding child node.
    2. If it is a leaf node, insert the itemset into the current node and check:
       (1) If the current number of itemsets ≤ maxsize (= 3), select the next itemset and go to Step 1.

Construct a hash tree: An Example
- Hash function: h(x) = x mod 3; maxsize = 3.
- For each candidate itemset:
  - Step 1: Let k = 1 and apply the hash function to the first item.
  - Step 2: Check the current node:
    2. If it is a leaf node, insert the itemset into the current node and check:
       (1) If the current number of itemsets ≤ maxsize (= 3), select the next itemset and go to Step 1.
       (2) Otherwise (> 3), go to Step 3 to split the current node.

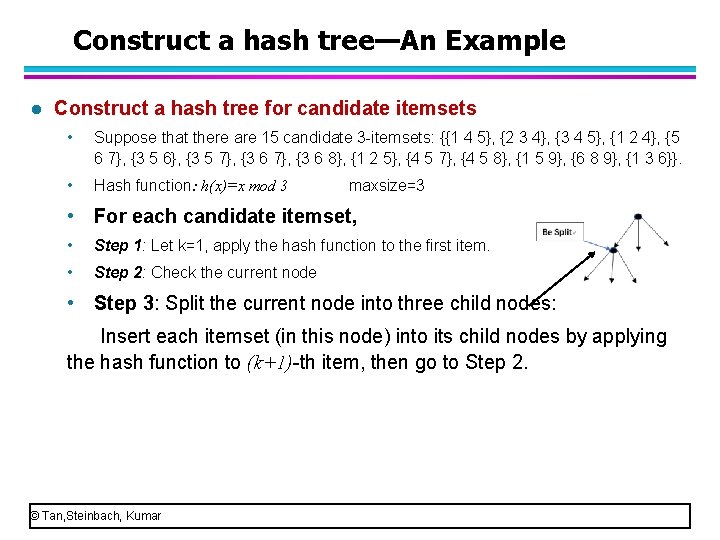

Construct a hash tree: An Example
- Hash function: h(x) = x mod 3; maxsize = 3.
- For each candidate itemset:
  - Step 1: Let k = 1 and apply the hash function to the first item.
  - Step 2: Check the current node.
  - Step 3: Split the current node into three child nodes: insert each itemset in this node into one of the child nodes by applying the hash function to its (k+1)-th item, then go to Step 2.
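Steps 1 to 3 can be sketched as a small Python class. This is an illustrative sketch, not a reference implementation: the class and function names are hypothetical, children are keyed by hash value (1, 2, 0 correspond to the first, second, and third child), and the candidate list is assumed to include {3 6 7}, which the walk-through uses as the eighth itemset.

```python
N = 3        # h(x) = x mod 3, so at most 3 children per internal node
MAXSIZE = 3  # a leaf holds at most 3 candidate itemsets

def h(x):
    return x % N

class Node:
    def __init__(self, leaf=True):
        self.itemsets = [] if leaf else None  # used by leaf nodes
        self.children = None if leaf else {v: Node() for v in range(N)}

    @property
    def is_leaf(self):
        return self.children is None

def split(node, k):
    """Step 3: turn a full leaf into an internal node, hashing on item index k."""
    pending, node.itemsets = node.itemsets, None
    node.children = {v: Node() for v in range(N)}
    for itemset in pending:
        node.children[h(itemset[k])].itemsets.append(itemset)
    for child in node.children.values():
        if len(child.itemsets) > MAXSIZE and k + 1 < len(child.itemsets[0]):
            split(child, k + 1)

def insert(root, itemset):
    node, k = root, 0
    # Steps 1 and 2.1: hash successive items until a leaf is reached.
    while not node.is_leaf:
        node = node.children[h(itemset[k])]
        k += 1
    # Step 2.2: insert, then split if the leaf now exceeds maxsize.
    node.itemsets.append(itemset)
    if len(node.itemsets) > MAXSIZE and k < len(itemset):
        split(node, k)

candidates = [(1, 4, 5), (2, 3, 4), (3, 4, 5), (1, 2, 4), (5, 6, 7),
              (3, 5, 6), (3, 5, 7), (3, 6, 7), (3, 6, 8), (1, 2, 5),
              (4, 5, 7), (4, 5, 8), (1, 5, 9), (6, 8, 9), (1, 3, 6)]

root = Node(leaf=False)  # root starts with three leaf children
for c in candidates:
    insert(root, c)

def show(node, path=()):
    if node.is_leaf:
        print(path, node.itemsets)
    else:
        for value, child in node.children.items():
            show(child, path + (value,))

show(root)
```

The printed path of each leaf is the sequence of hash values that leads to it, so a path such as (0, 2) means "third child of the root, then second child".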

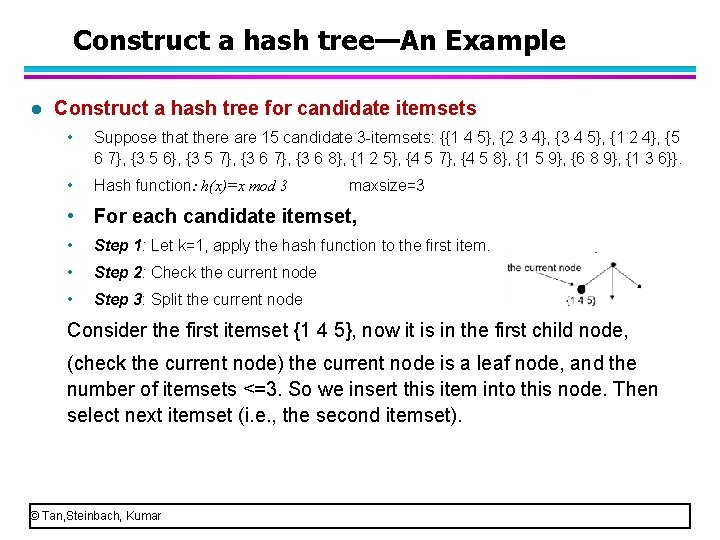

Construct a hash tree: An Example
- Hash function: h(x) = x mod 3; maxsize = 3. For each candidate itemset, apply Step 1 (hash the first item), Step 2 (check the current node), and Step 3 (split the current node if needed).
- Consider the first itemset {1 4 5}, which has been sent to the first child node.
- Step 2: the current node is a leaf node and holds no more than 3 itemsets after insertion, so we insert the itemset into this node.
- Then select the next itemset, that is, the second itemset.

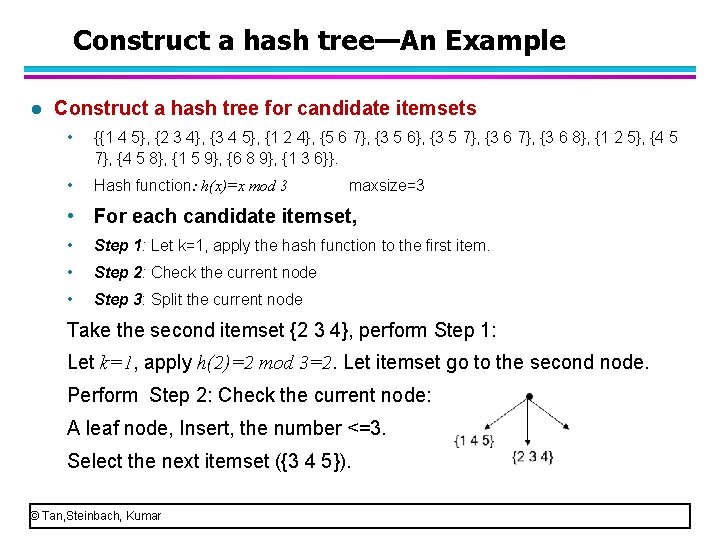

Construct a hash tree: An Example
- Take the second itemset {2 3 4}.
- Step 1: let k = 1 and apply h(2) = 2 mod 3 = 2, so the itemset goes to the second node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {3 4 5}.

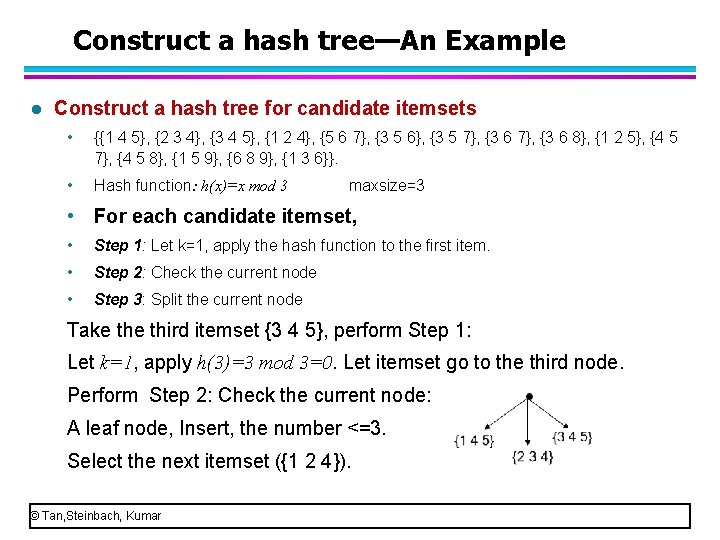

Construct a hash tree: An Example
- Take the third itemset {3 4 5}.
- Step 1: let k = 1 and apply h(3) = 3 mod 3 = 0, so the itemset goes to the third node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {1 2 4}.

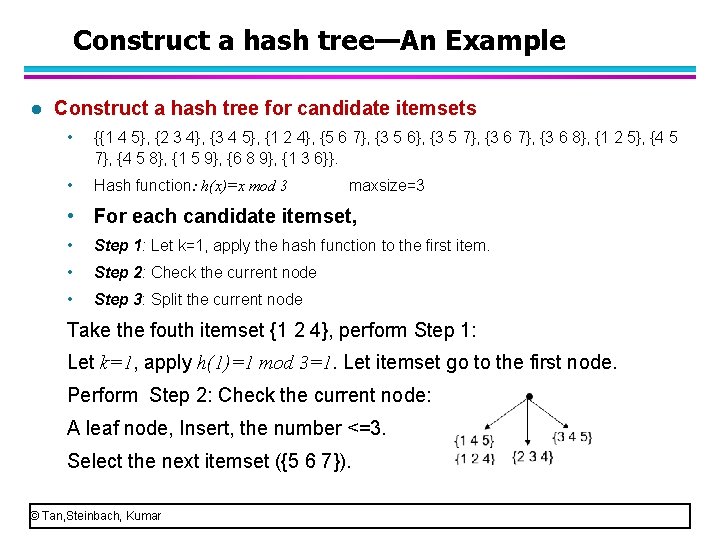

Construct a hash tree: An Example
- Take the fourth itemset {1 2 4}.
- Step 1: let k = 1 and apply h(1) = 1 mod 3 = 1, so the itemset goes to the first node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {5 6 7}.

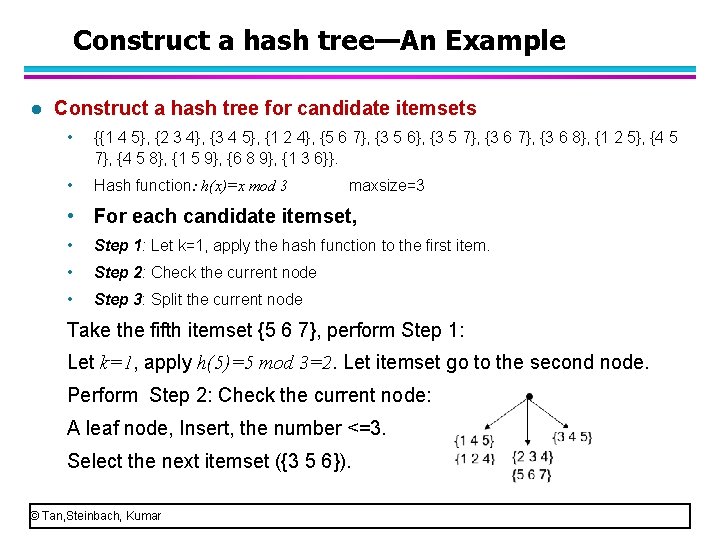

Construct a hash tree: An Example
- Take the fifth itemset {5 6 7}.
- Step 1: let k = 1 and apply h(5) = 5 mod 3 = 2, so the itemset goes to the second node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {3 5 6}.

Construct a hash tree: An Example
- Take the sixth itemset {3 5 6}.
- Step 1: let k = 1 and apply h(3) = 3 mod 3 = 0, so the itemset goes to the third node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {3 5 7}.

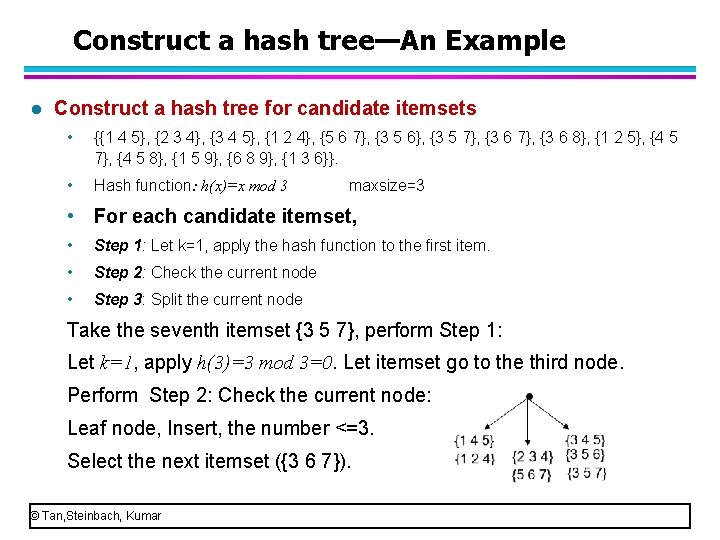

Construct a hash tree: An Example
- Take the seventh itemset {3 5 7}.
- Step 1: let k = 1 and apply h(3) = 3 mod 3 = 0, so the itemset goes to the third node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3, so select the next itemset, {3 6 7}.

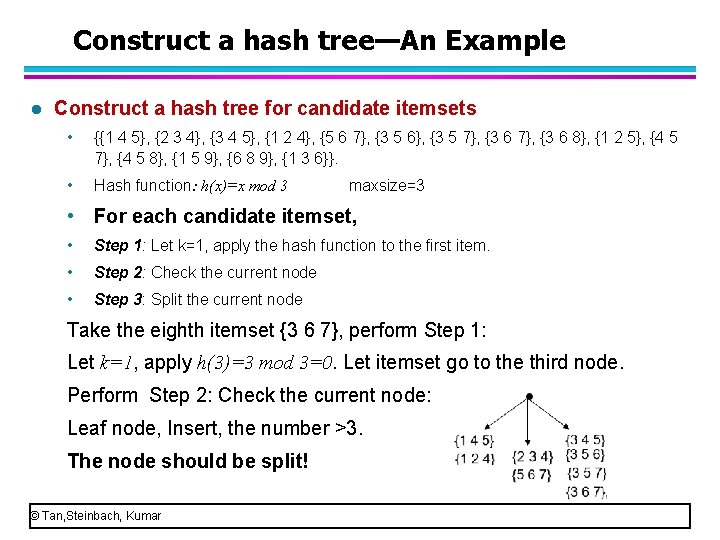

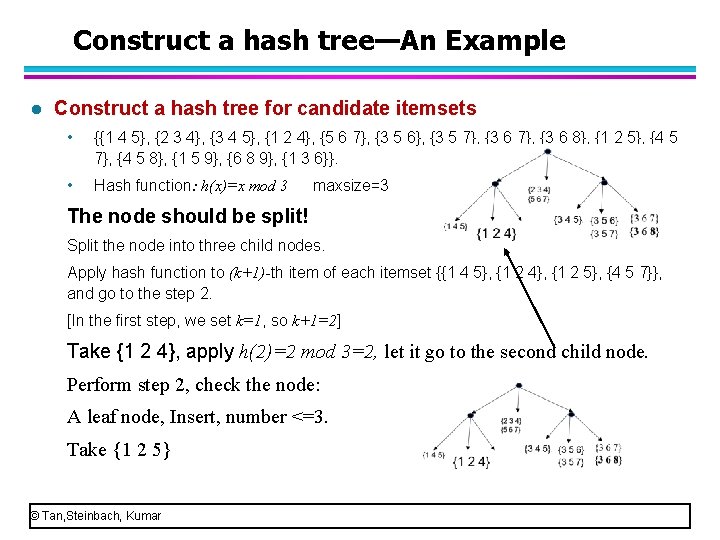

Construct a hash tree: An Example
- Take the eighth itemset {3 6 7}.
- Step 1: let k = 1 and apply h(3) = 3 mod 3 = 0, so the itemset goes to the third node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is now > 3, so the node must be split.

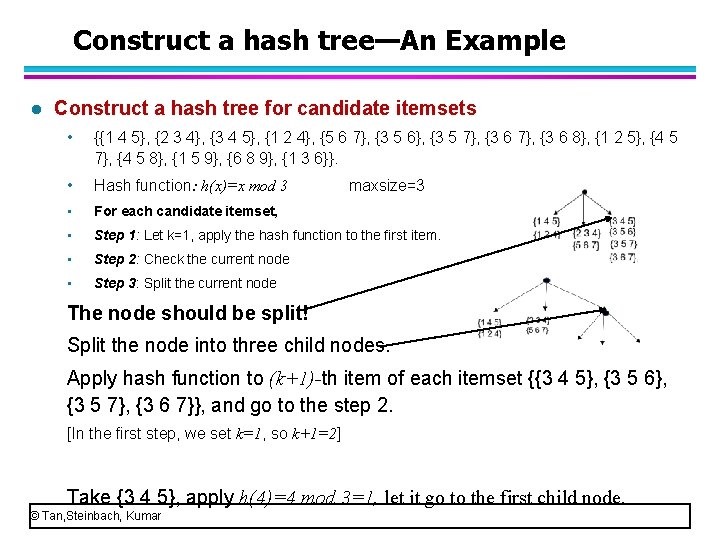

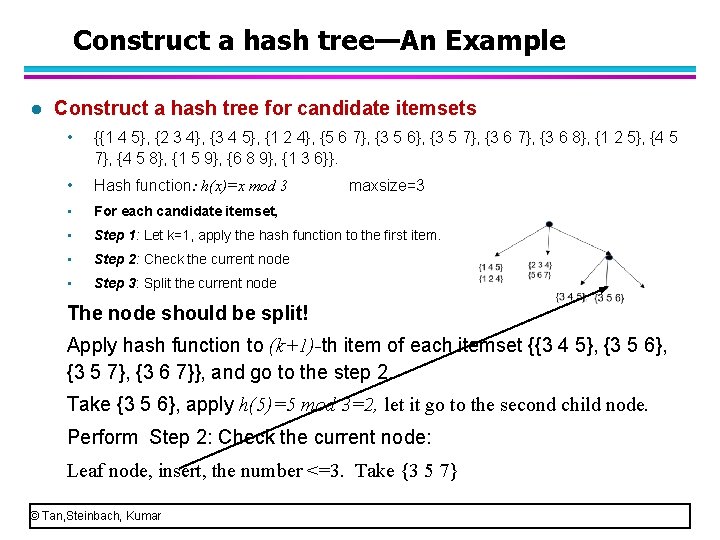

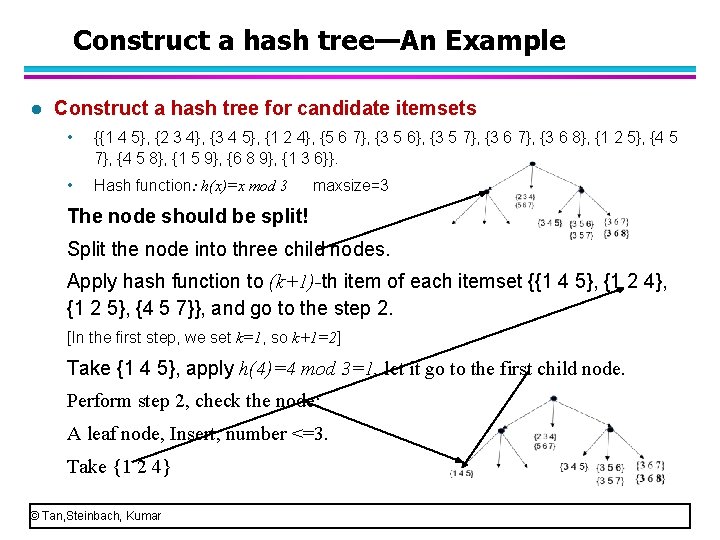

Construct a hash tree: An Example
- Step 3: the node must be split, so split it into three child nodes.
- Apply the hash function to the (k+1)-th item of each itemset in {3 4 5}, {3 5 6}, {3 5 7}, {3 6 7}, and go to Step 2. (In Step 1 we set k = 1, so k + 1 = 2.)
- Take {3 4 5}: h(4) = 4 mod 3 = 1, so it goes to the first child node.

Construct a hash tree: An Example
- Splitting continues: apply the hash function to the (k+1)-th item of each itemset in {3 4 5}, {3 5 6}, {3 5 7}, {3 6 7}, and go to Step 2.
- Take {3 4 5}: h(4) = 4 mod 3 = 1, so it goes to the first child node.
- Step 2: the current node is a leaf node; insert the itemset. The number of itemsets is ≤ 3. Take {3 5 6} next.

Construct a hash tree: An Example. Splitting the third node, continued. Take {3 5 6}: h(5) = 5 mod 3 = 2, so it goes to the second child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. Take {3 5 7} next.

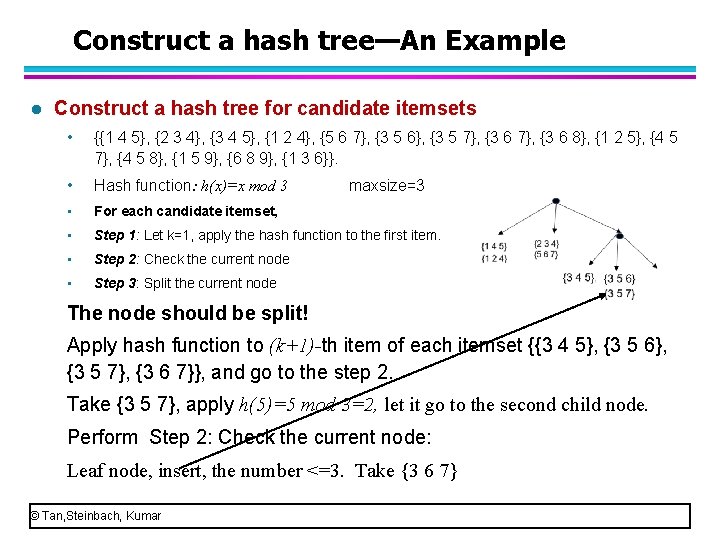

Construct a hash tree: An Example. Splitting the third node, continued. Take {3 5 7}: h(5) = 5 mod 3 = 2, so it goes to the second child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. Take {3 6 7} next.

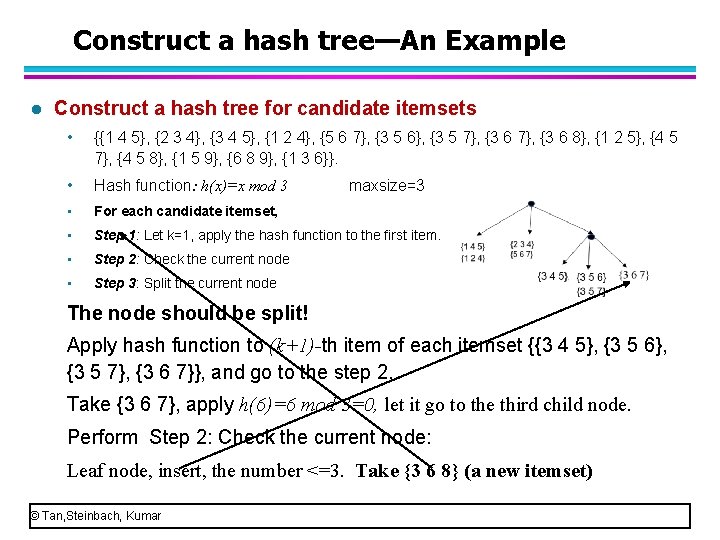

Construct a hash tree: An Example. Splitting the third node, continued. Take {3 6 7}: h(6) = 6 mod 3 = 0, so it goes to the third child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. The split is complete. Take {3 6 8} (a new itemset).

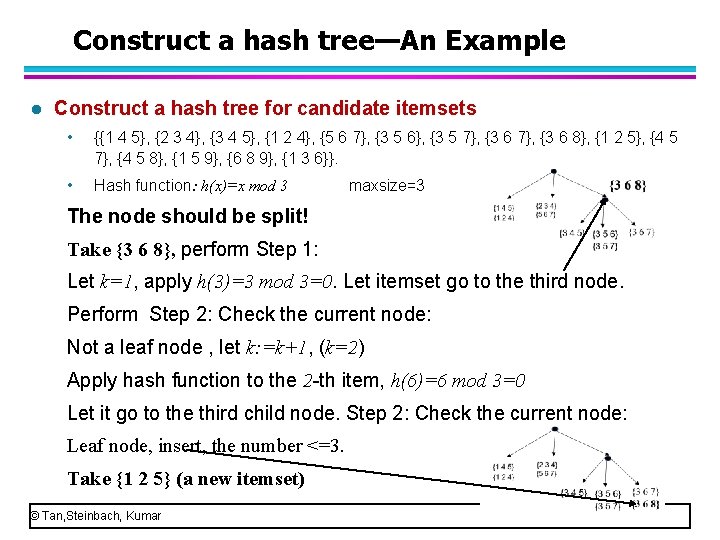

Construct a hash tree: An Example. Take {3 6 8} and perform Step 1: with k=1, h(3) = 3 mod 3 = 0, so the itemset goes to the third node. Perform Step 2: the current node is not a leaf node, so let k := k+1 (k=2) and apply the hash function to the 2nd item: h(6) = 6 mod 3 = 0, so the itemset goes to the third child node. Step 2 again: the current node is a leaf node, so insert; it holds no more than 3 itemsets. Take {1 2 5} (a new itemset).

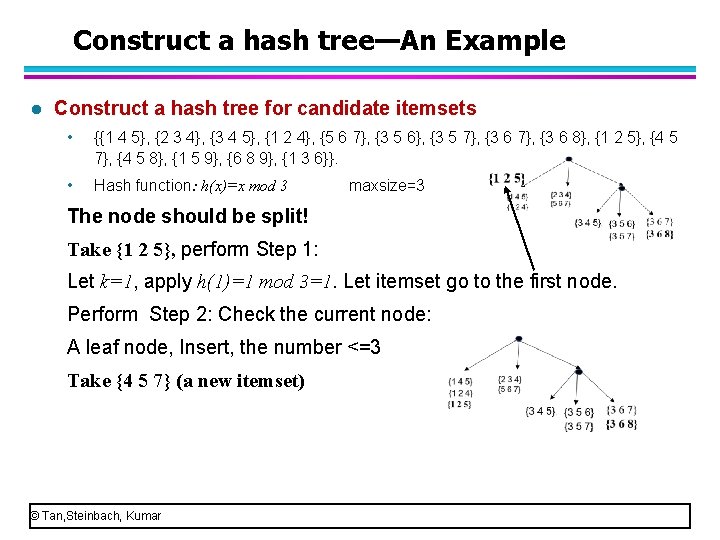

Construct a hash tree: An Example. Take {1 2 5} and perform Step 1: with k=1, h(1) = 1 mod 3 = 1, so the itemset goes to the first node. Perform Step 2: the current node is a leaf node, so insert; it holds no more than 3 itemsets. Take {4 5 7} (a new itemset).

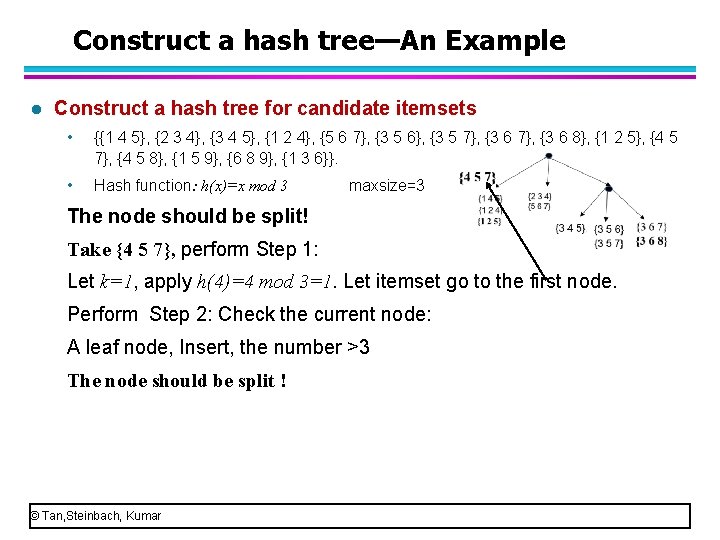

Construct a hash tree: An Example. Take {4 5 7} and perform Step 1: with k=1, h(4) = 4 mod 3 = 1, so the itemset goes to the first node. Perform Step 2: the current node is a leaf node, so insert. The node now holds more than 3 itemsets, so it should be split.

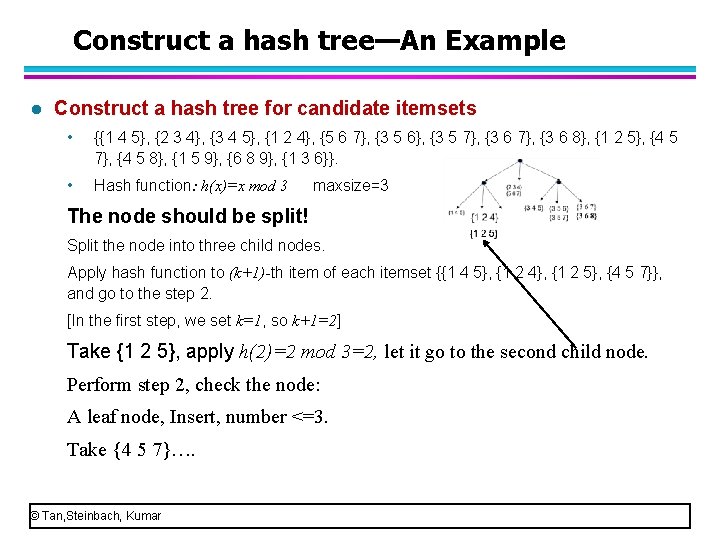

Construct a hash tree: An Example. Step 3 for {4 5 7}: split the first node into three child nodes. Apply the hash function to the (k+1)-th item of each of its itemsets, {1 4 5}, {1 2 4}, {1 2 5}, {4 5 7}, and go to Step 2. (In Step 1 we set k=1, so k+1 = 2.) Take {1 4 5}: h(4) = 4 mod 3 = 1, so it goes to the first child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. Take {1 2 4} next.

Construct a hash tree: An Example. Splitting the first node, continued. Take {1 2 4}: h(2) = 2 mod 3 = 2, so it goes to the second child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. Take {1 2 5} next.

Construct a hash tree: An Example. Splitting the first node, continued. Take {1 2 5}: h(2) = 2 mod 3 = 2, so it goes to the second child node. Perform Step 2: the child is a leaf node, so insert; it holds no more than 3 itemsets. Take {4 5 7} next, and continue in the same way.

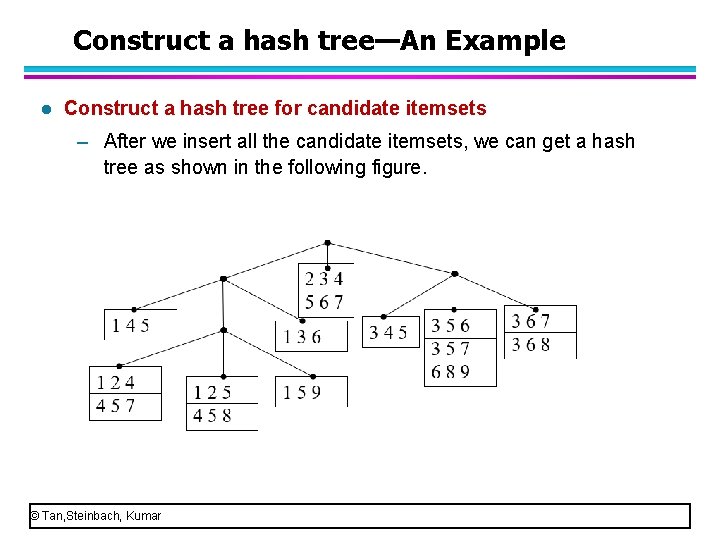

Construct a hash tree: An Example. After all the candidate itemsets have been inserted, we obtain the hash tree shown in the following figure.

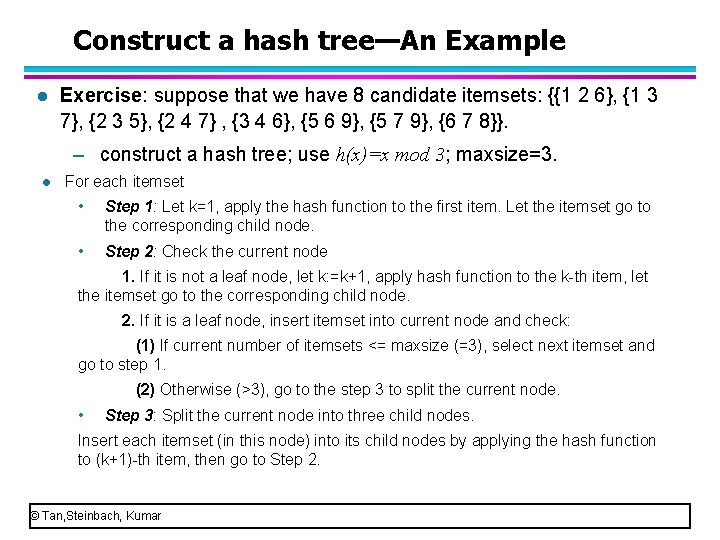

Construct a hash tree: An Example. Exercise: suppose that we have 8 candidate itemsets: {{1 2 6}, {1 3 7}, {2 3 5}, {2 4 7}, {3 4 6}, {5 6 9}, {5 7 9}, {6 7 8}}. Construct a hash tree using h(x) = x mod 3 and maxsize = 3. For each itemset:
Step 1: Let k=1 and apply the hash function to the first item. Let the itemset go to the corresponding child node.
Step 2: Check the current node. (1) If it is not a leaf node, let k := k+1, apply the hash function to the k-th item, let the itemset go to the corresponding child node, and repeat Step 2. (2) If it is a leaf node, insert the itemset into the current node and check: if the number of itemsets in the node is <= maxsize (= 3), select the next itemset and go to Step 1; otherwise (> 3), go to Step 3 to split the current node.
Step 3: Split the current node into three child nodes. Insert each itemset of this node into one of the child nodes by applying the hash function to its (k+1)-th item, then go to Step 2.
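The three steps above can be sketched in Python. This is a minimal illustration rather than a reference implementation: the names `Node`, `insert`, `build_hash_tree` and `leaves` are made up for this sketch, and it assumes that a leaf reached after all three items have been hashed is never split, however many itemsets it holds. Leaves are reported by their path of hash values; in the slides, hash value 1 is drawn as the first child, 2 as the second and 0 as the third.

```python
MAXSIZE = 3  # a leaf is split once it holds more than MAXSIZE itemsets


def h(x):
    """Hash function used on the slides."""
    return x % 3


class Node:
    def __init__(self):
        self.itemsets = []    # contents while the node is a leaf
        self.children = None  # {hash value: Node} once the node has been split


def insert(node, itemset, k):
    """Insert itemset below node; k is the 0-based index of the next item to hash."""
    if node.children is not None:
        # Step 2, case 1: not a leaf, so hash the next item and descend.
        insert(node.children[h(itemset[k])], itemset, k + 1)
        return
    # Step 2, case 2: leaf node, so store the itemset here.
    node.itemsets.append(itemset)
    if len(node.itemsets) > MAXSIZE and k < len(itemset):
        # Step 3: split the leaf and redistribute its itemsets on the next item.
        pending, node.itemsets = node.itemsets, []
        node.children = {0: Node(), 1: Node(), 2: Node()}
        for s in pending:
            insert(node.children[h(s[k])], s, k + 1)


def build_hash_tree(candidates):
    root = Node()
    root.children = {0: Node(), 1: Node(), 2: Node()}  # Step 1 always hashes the first item
    for c in candidates:
        insert(root, tuple(c), 0)
    return root


def leaves(node, path=()):
    """Return {path of hash values: itemsets stored in that leaf}."""
    if node.children is None:
        return {path: list(node.itemsets)}
    out = {}
    for value, child in node.children.items():
        out.update(leaves(child, path + (value,)))
    return out


# The first eight itemsets of the walkthrough, up to the split caused by {3 6 7}.
first_eight = [(1, 4, 5), (2, 3, 4), (3, 4, 5), (1, 2, 4),
               (5, 6, 7), (3, 5, 6), (3, 5, 7), (3, 6, 7)]
for path, itemsets in sorted(leaves(build_hash_tree(first_eight)).items()):
    print(path, itemsets)
```

Running `build_hash_tree` on the eight exercise itemsets and printing `leaves(...)` is one way to check a hand-built tree.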

How to Count Supports of Candidates? Three issues for counting supports of candidates: (1) How to construct a hash tree for the candidate itemsets. (2) How to enumerate the itemsets contained in a transaction. (3) How to use each transaction to update the support counts.

Enumerate the itemsets. A transaction {1 2 3 4} with 4 items contains four 3-itemsets: {1 2 3}, {1 2 4}, {1 3 4}, {2 3 4}. Given a transaction t = {1 2 3 5 6} with 5 items, is there an efficient method to enumerate all of its 3-itemsets? Yes. The Apriori algorithm uses a systematic tree to enumerate them.

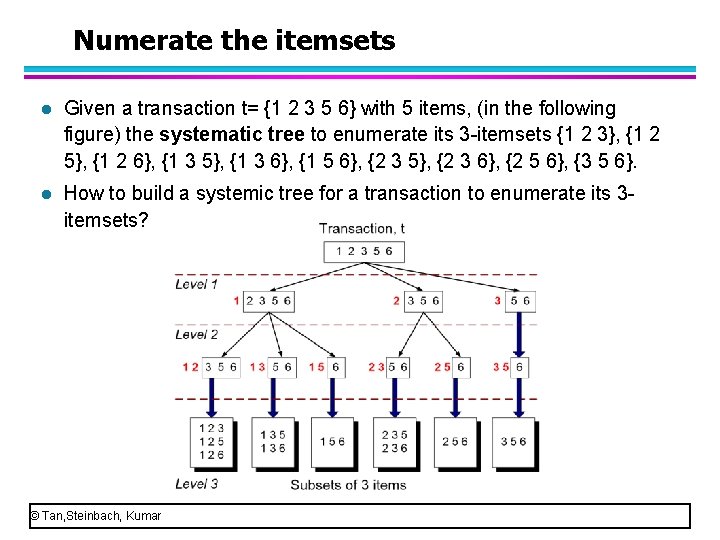

Enumerate the itemsets. Given a transaction t = {1 2 3 5 6} with 5 items, the systematic tree in the following figure enumerates its 3-itemsets: {1 2 3}, {1 2 5}, {1 2 6}, {1 3 5}, {1 3 6}, {1 5 6}, {2 3 5}, {2 3 6}, {2 5 6}, {3 5 6}. How do we build a systematic tree for a transaction to enumerate its 3-itemsets?

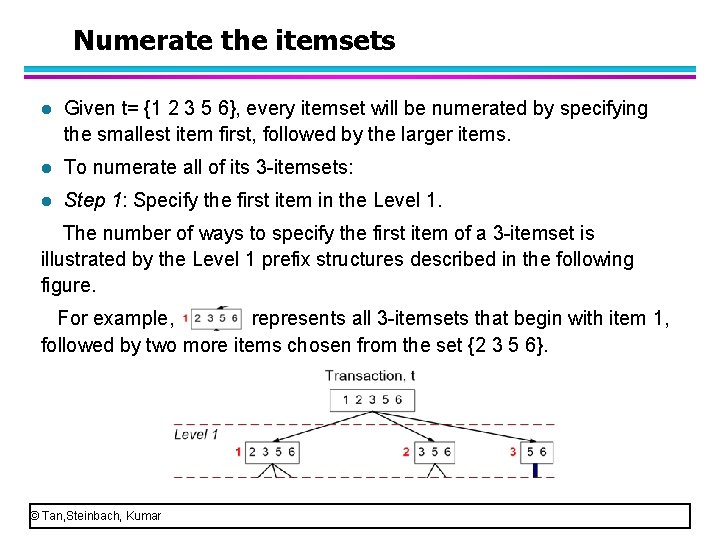

Enumerate the itemsets. Given t = {1 2 3 5 6}, every itemset is enumerated by specifying the smallest item first, followed by the larger items. To enumerate all of its 3-itemsets: Step 1: Specify the first item at Level 1. All the 3-itemsets must begin with item 1, 2, or 3. It is not possible to construct a 3-itemset that begins with item 5 or 6 because there are only two items in t whose labels are greater than or equal to 5. The number of ways to specify the first item of a 3-itemset is illustrated by the Level 1 prefix structures in the following figure.

Enumerate the itemsets. Given t = {1 2 3 5 6}, every itemset is enumerated by specifying the smallest item first, followed by the larger items. To enumerate all of its 3-itemsets: Step 1: Specify the first item at Level 1. The number of ways to specify the first item of a 3-itemset is illustrated by the Level 1 prefix structures in the following figure. For example, the Level 1 structure with prefix 1 represents all 3-itemsets that begin with item 1, followed by two more items chosen from the set {2 3 5 6}.

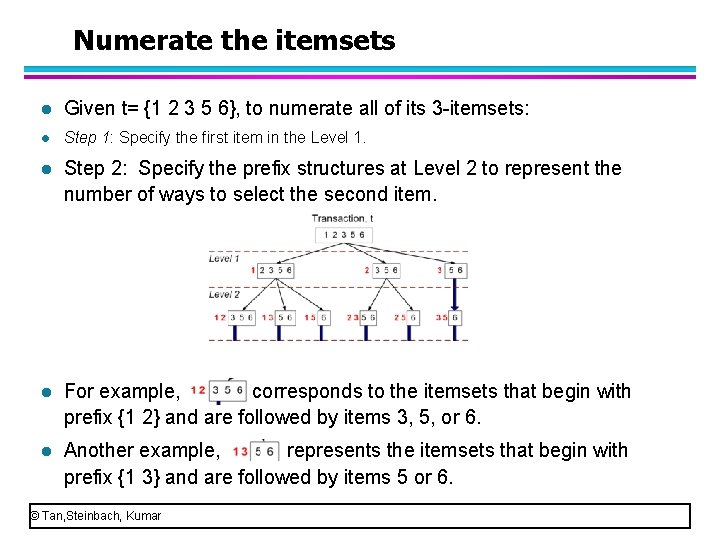

Enumerate the itemsets. Given t = {1 2 3 5 6}, to enumerate all of its 3-itemsets: Step 1: Specify the first item at Level 1. Step 2: Specify the prefix structures at Level 2 to represent the number of ways to select the second item. For example, one Level 2 structure corresponds to the itemsets that begin with prefix {1 2} and are followed by item 3, 5, or 6. Another represents the itemsets that begin with prefix {1 3} and are followed by item 5 or 6.

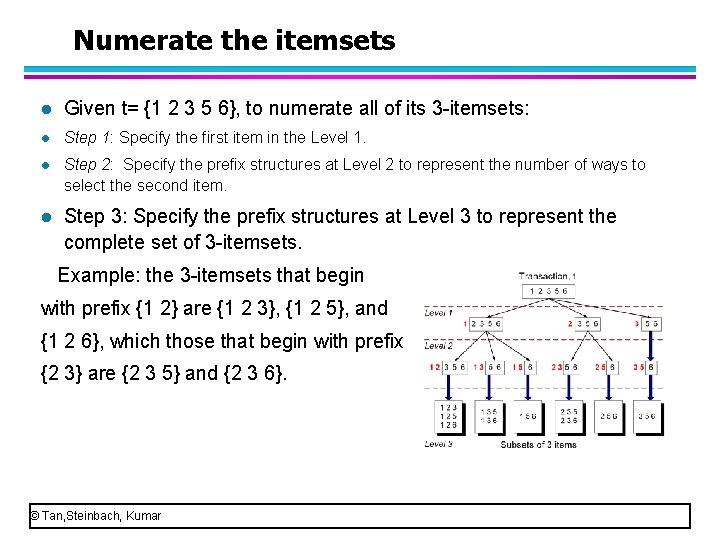

Enumerate the itemsets. Given t = {1 2 3 5 6}, to enumerate all of its 3-itemsets: Step 1: Specify the first item at Level 1. Step 2: Specify the prefix structures at Level 2 to represent the number of ways to select the second item. Step 3: Specify the prefix structures at Level 3 to represent the complete set of 3-itemsets. Example: the 3-itemsets that begin with prefix {1 2} are {1 2 3}, {1 2 5}, and {1 2 6}, while those that begin with prefix {2 3} are {2 3 5} and {2 3 6}.

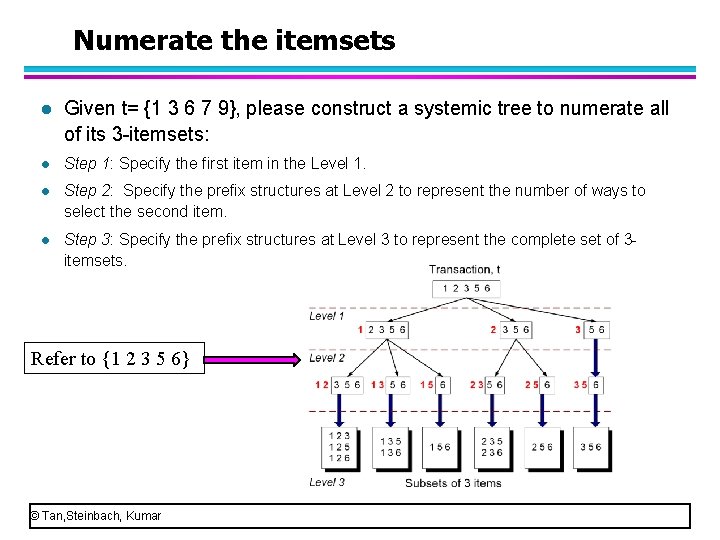
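The level-by-level enumeration can be sketched as a short recursive generator. This is an illustrative sketch, not the slides' own code; the function name `enumerate_itemsets` is an assumption, and the transaction is assumed to be given in sorted order. Each recursion level fixes one more item of the prefix, and an item is only tried if enough larger items remain to complete the itemset (which is why no 3-itemset of {1 2 3 5 6} starts with 5 or 6).

```python
def enumerate_itemsets(t, k, prefix=()):
    """Yield all k-itemsets of the sorted transaction t, smallest item first."""
    need = k - len(prefix)           # how many items are still missing
    if need == 0:
        yield prefix
        return
    # t[i] can extend the prefix only if at least need-1 larger items follow it.
    for i in range(len(t) - need + 1):
        yield from enumerate_itemsets(t[i + 1:], k, prefix + (t[i],))


for itemset in enumerate_itemsets((1, 2, 3, 5, 6), 3):
    print(itemset)
```

The itemsets come out in the same order as the leaves of the systematic tree, read left to right.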

Enumerate the itemsets. Exercise: given t = {1 3 6 7 9}, construct a systematic tree to enumerate all of its 3-itemsets: Step 1: Specify the first item at Level 1. Step 2: Specify the prefix structures at Level 2 to represent the number of ways to select the second item. Step 3: Specify the prefix structures at Level 3 to represent the complete set of 3-itemsets. Refer to the example for {1 2 3 5 6}.

How to Count Supports of Candidates? Three issues for counting supports of candidates: (1) How to construct a hash tree for the candidate itemsets. (2) How to enumerate the itemsets contained in a transaction. (3) How to use each transaction to update the support counts.

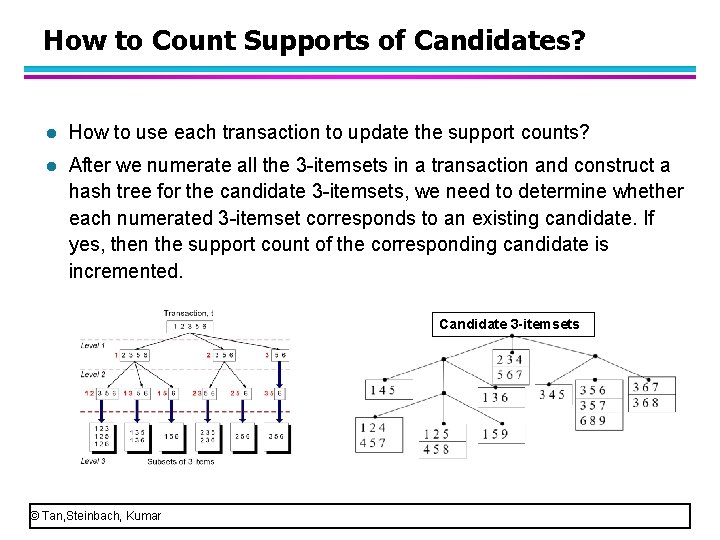

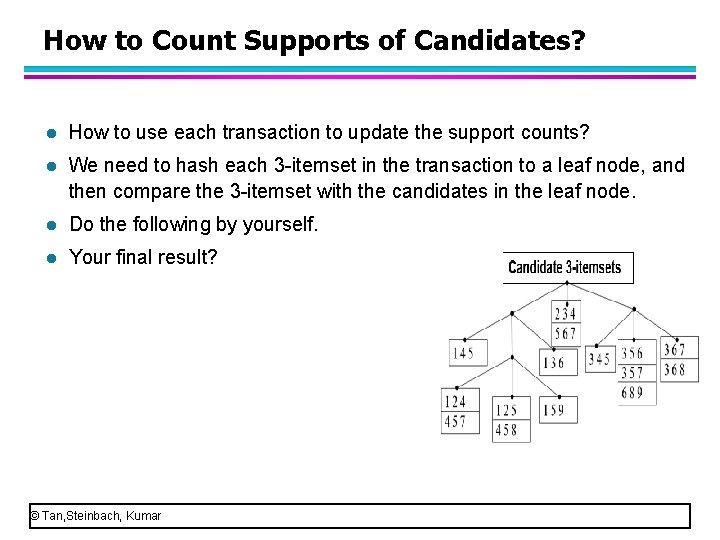

How to Count Supports of Candidates? How to use each transaction to update the support counts? After we enumerate all the 3-itemsets in a transaction and construct a hash tree for the candidate 3-itemsets, we need to determine whether each enumerated 3-itemset corresponds to an existing candidate. If it does, the support count of the corresponding candidate is incremented.

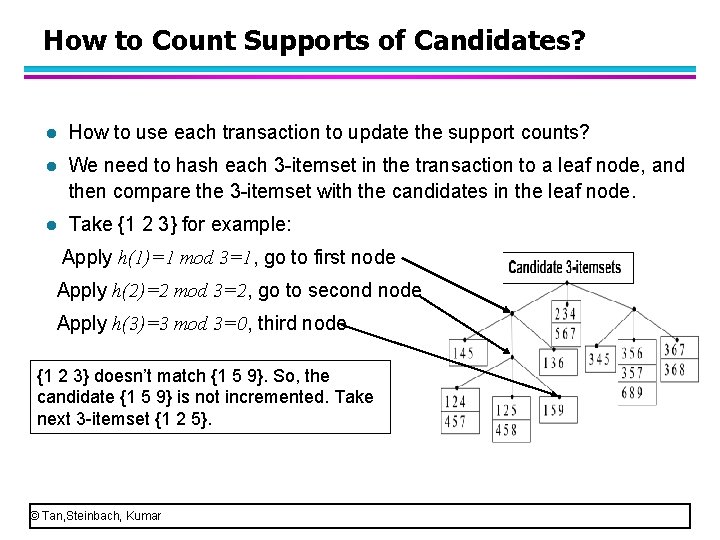

How to Count Supports of Candidates? How to use each transaction to update the support counts? We need to hash each 3-itemset in the transaction to a leaf node, and then compare the 3-itemset with the candidates in that leaf node. Take {1 2 3} for example: h(1) = 1 mod 3 = 1, go to the first node; h(2) = 2 mod 3 = 2, go to the second node; h(3) = 3 mod 3 = 0, go to the third node. The leaf reached contains {1 5 9}. {1 2 3} does not match {1 5 9}, so the support count of the candidate {1 5 9} is not incremented. Take the next 3-itemset, {1 2 5}.

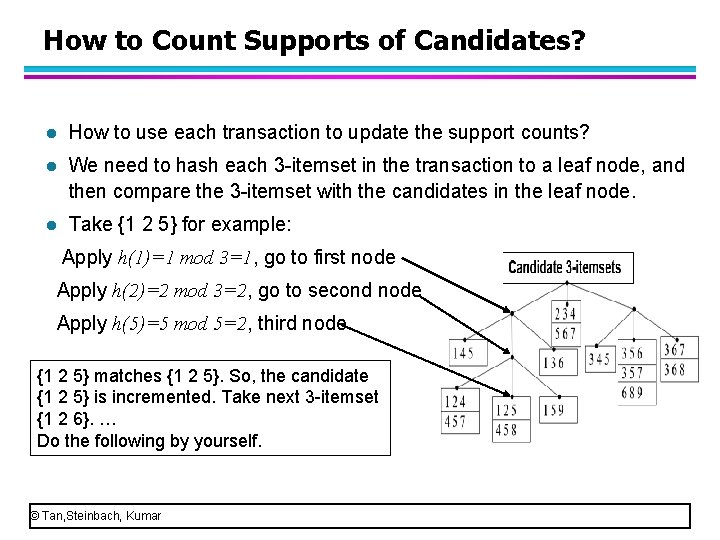

How to Count Supports of Candidates? How to use each transaction to update the support counts? We need to hash each 3-itemset in the transaction to a leaf node, and then compare the 3-itemset with the candidates in that leaf node. Take {1 2 5} for example: h(1) = 1 mod 3 = 1, go to the first node; h(2) = 2 mod 3 = 2, go to the second node; h(5) = 5 mod 3 = 2, go to the second node. {1 2 5} matches the candidate {1 2 5}, so the support count of {1 2 5} is incremented. Take the next 3-itemset, {1 2 6}, and process the remaining 3-itemsets yourself.

How to Count Supports of Candidates? How to use each transaction to update the support counts? We need to hash each 3-itemset in the transaction to a leaf node, and then compare the 3-itemset with the candidates in that leaf node. Process the remaining 3-itemsets yourself. What is your final result?

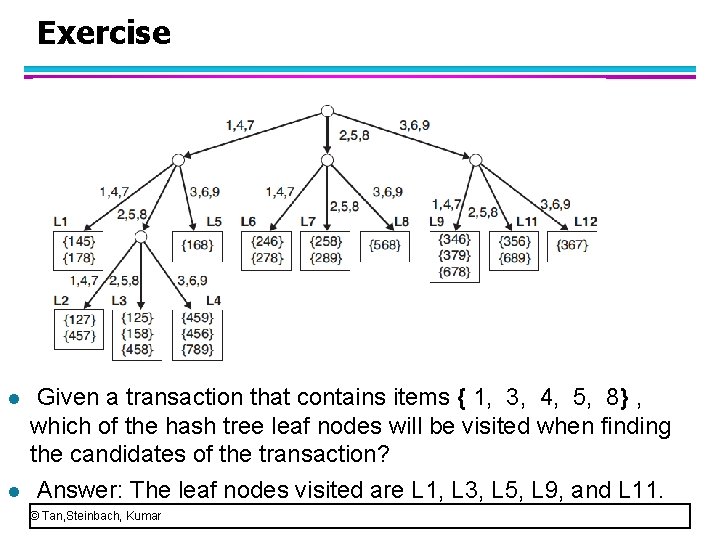
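The hash-then-compare idea can be sketched in Python. Note the simplification, which is an assumption of this sketch and not the slides' data structure: instead of a real hash tree with leaves at different depths, candidates are grouped into flat buckets keyed by the hash values of all three items. A 3-itemset from the transaction is still compared only against the candidates that hash the same way, which is the point of the technique. The function name `count_supports` and the shortened candidate list are illustrative.

```python
from collections import defaultdict
from itertools import combinations


def h(x):
    return x % 3


def count_supports(candidates, transactions, k=3):
    """Count how many transactions contain each candidate k-itemset."""
    # Group candidates by the hash values of their items (a flattened hash tree).
    buckets = defaultdict(list)
    for c in candidates:
        buckets[tuple(h(x) for x in c)].append(c)

    support = {c: 0 for c in candidates}
    for t in transactions:
        # Enumerate the k-itemsets of the transaction in sorted order.
        for s in combinations(sorted(t), k):
            # Compare s only with the candidates in the bucket it hashes to.
            for c in buckets.get(tuple(h(x) for x in s), ()):
                if c == s:
                    support[c] += 1
    return support


# A few of the candidate 3-itemsets from the hash tree example.
candidates = [(1, 4, 5), (1, 2, 4), (1, 2, 5), (1, 5, 9), (1, 3, 6),
              (2, 3, 4), (3, 5, 6), (3, 5, 7), (5, 6, 7)]
support = count_supports(candidates, [(1, 2, 3, 5, 6)])
for c in candidates:
    print(c, support[c])
```

With a real hash tree the lookup stops at whatever leaf the itemset reaches, so fewer items may need to be hashed; the counts are the same.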

Exercise: Given a transaction that contains items {1, 3, 4, 5, 8}, which of the hash tree leaf nodes will be visited when finding the candidates of the transaction? Answer: The leaf nodes visited are L1, L3, L5, L9, and L11.

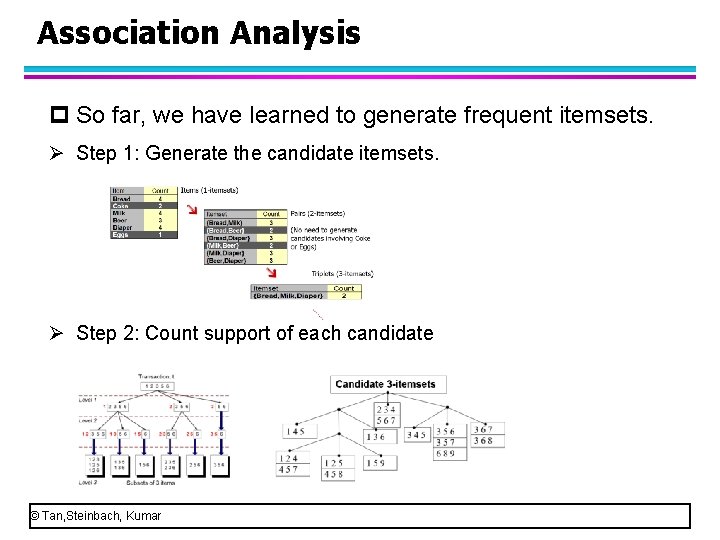

Association Analysis. So far, we have learned how to generate frequent itemsets. Step 1: Generate the candidate itemsets. Step 2: Count the support of each candidate.

Association Analysis. The most typical algorithm for association analysis is Apriori. Section 1: Frequent itemset generation in Apriori. Section 2: Rule generation in Apriori. We have learned how to generate frequent itemsets. Now let's move to Section 2, rule generation.

Mining Association Rules. Two-step approach: 1. Frequent Itemset Generation: generate all itemsets whose support ≥ minsup. 2. Rule Generation: generate high-confidence rules from each frequent itemset, where each rule is a binary partitioning of a frequent itemset.

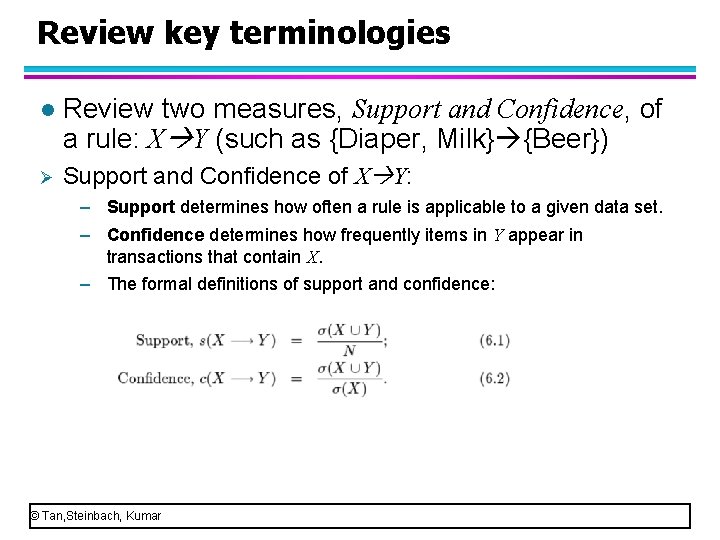

Review key terminologies. Review two measures, Support and Confidence, of a rule X → Y (such as {Diaper, Milk} → {Beer}). Support determines how often a rule is applicable to a given data set. Confidence determines how frequently items in Y appear in transactions that contain X. The formal definitions of support and confidence:

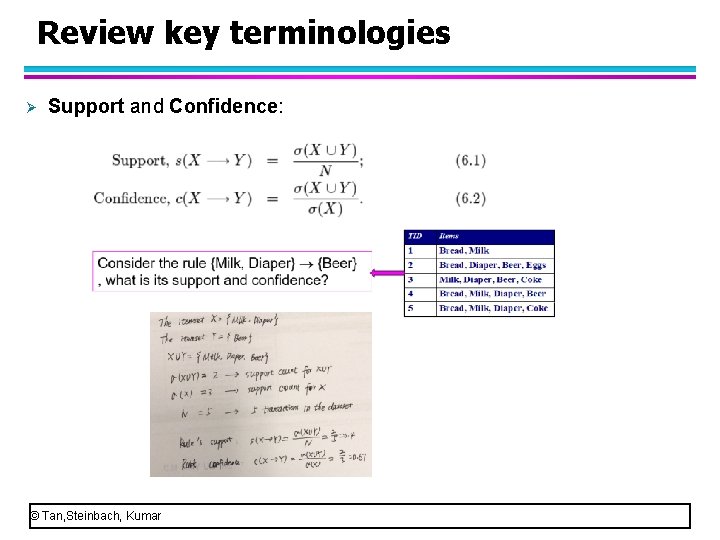
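For reference, the standard definitions can be written out as follows. The notation is an assumption of this note: σ(·) denotes the support count of an itemset (the number of transactions that contain it) and N the total number of transactions.

```latex
s(X \rightarrow Y) = \frac{\sigma(X \cup Y)}{N},
\qquad
c(X \rightarrow Y) = \frac{\sigma(X \cup Y)}{\sigma(X)}
```

Both measures share the numerator σ(X ∪ Y); they differ only in whether it is divided by all transactions or by those containing the antecedent X.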

Review key terminologies. Support and Confidence:

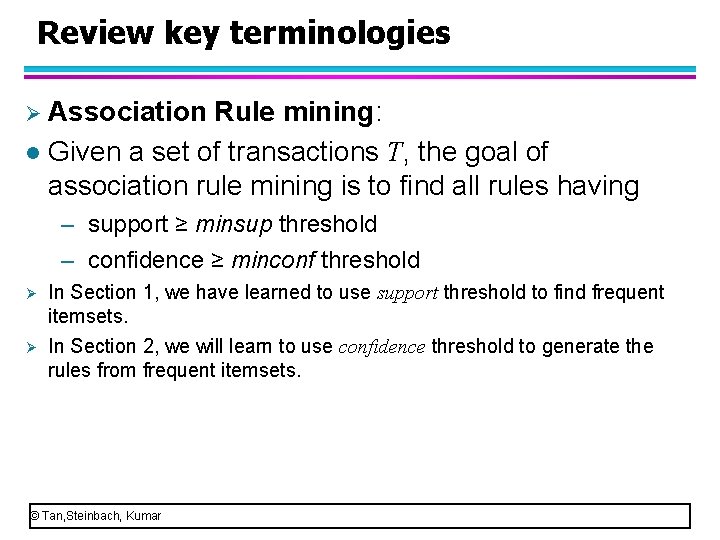

Review key terminologies. Association rule mining: given a set of transactions T, the goal of association rule mining is to find all rules having support ≥ minsup threshold and confidence ≥ minconf threshold. In Section 1, we learned to use the support threshold to find frequent itemsets. In Section 2, we will learn to use the confidence threshold to generate rules from the frequent itemsets.

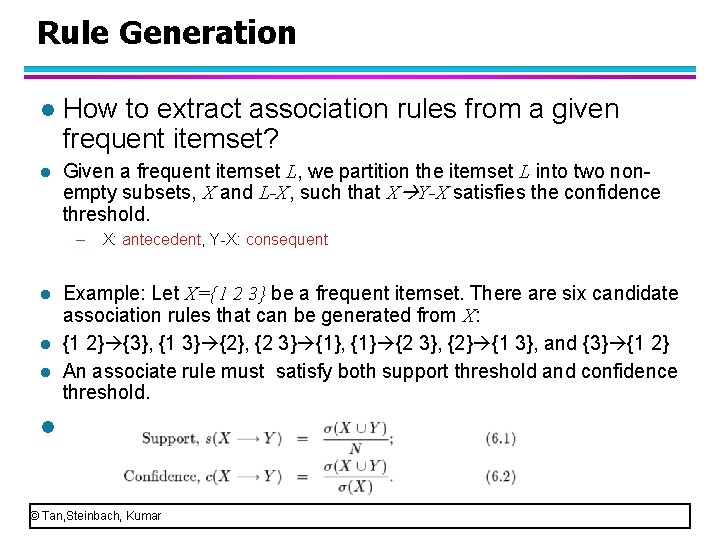

Rule Generation. How do we extract association rules from a given frequent itemset? Given a frequent itemset L, we partition L into two non-empty subsets, X and L-X, such that X → L-X satisfies the confidence threshold. X is the antecedent and L-X is the consequent. Example: Let X = {1 2 3} be a frequent itemset. There are six candidate association rules that can be generated from X: {1 2} → {3}, {1 3} → {2}, {2 3} → {1}, {1} → {2 3}, {2} → {1 3}, and {3} → {1 2}. An association rule must satisfy both the support threshold and the confidence threshold.

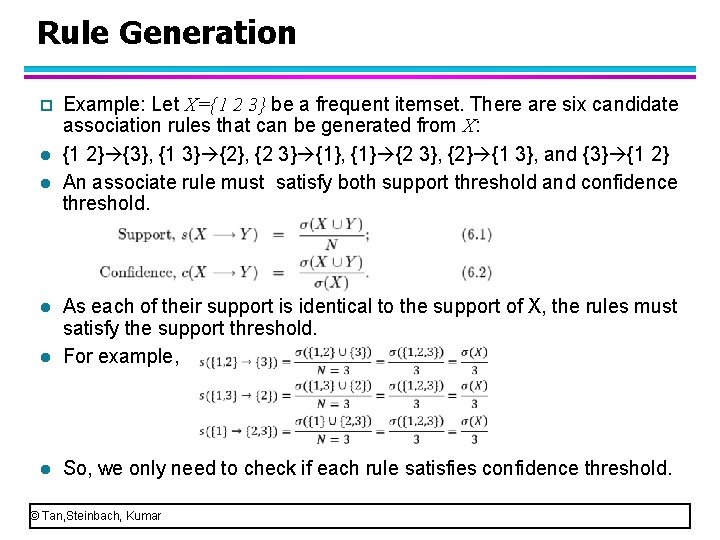

Rule Generation. Example: Let X = {1 2 3} be a frequent itemset. There are six candidate association rules that can be generated from X: {1 2} → {3}, {1 3} → {2}, {2 3} → {1}, {1} → {2 3}, {2} → {1 3}, and {3} → {1 2}. An association rule must satisfy both the support threshold and the confidence threshold. The support of each of these rules is identical to the support of X, so all of them already satisfy the support threshold. For example, the support of {1 2} → {3} is the support of {1 2 3}. So we only need to check whether each rule satisfies the confidence threshold.

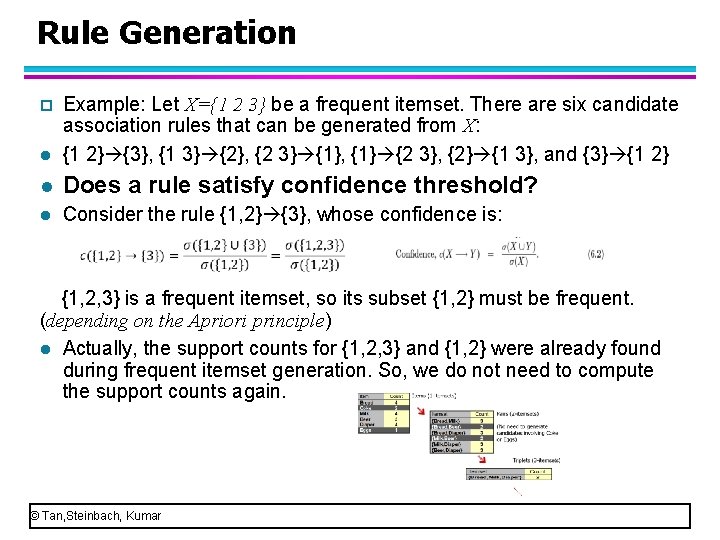

Rule Generation. Example: Let X = {1 2 3} be a frequent itemset. There are six candidate association rules that can be generated from X: {1 2} → {3}, {1 3} → {2}, {2 3} → {1}, {1} → {2 3}, {2} → {1 3}, and {3} → {1 2}. Does a rule satisfy the confidence threshold? Consider the rule {1, 2} → {3}, whose confidence is σ({1, 2, 3}) / σ({1, 2}), the ratio of the two support counts. {1, 2, 3} is a frequent itemset, so its subset {1, 2} must be frequent as well (by the Apriori principle). The support counts for {1, 2, 3} and {1, 2} were therefore already found during frequent itemset generation, and we do not need to compute them again.

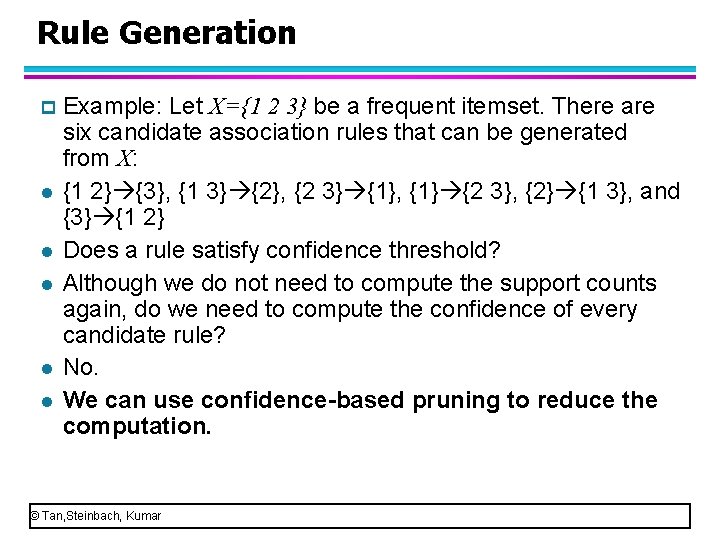

Rule Generation. Example: Let X = {1 2 3} be a frequent itemset. There are six candidate association rules that can be generated from X: {1 2} → {3}, {1 3} → {2}, {2 3} → {1}, {1} → {2 3}, {2} → {1 3}, and {3} → {1 2}. Does a rule satisfy the confidence threshold? Although we do not need to compute the support counts again, do we need to compute the confidence of every candidate rule? No. We can use confidence-based pruning to reduce the computation.

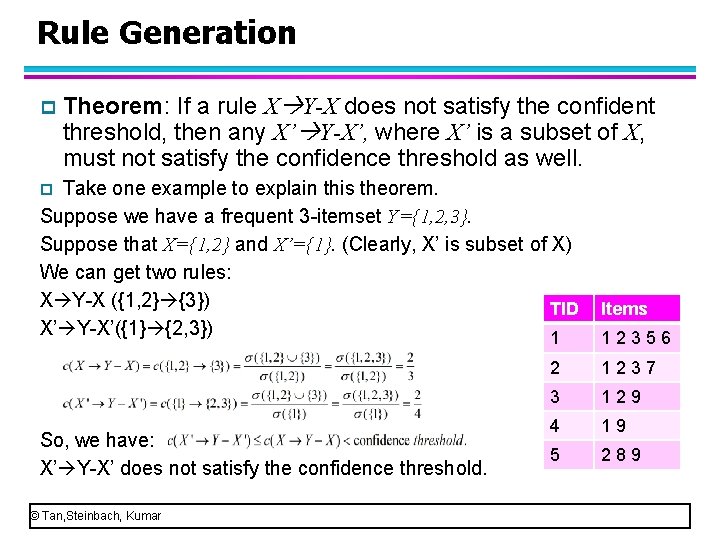

Rule Generation. Theorem: If a rule X → Y-X does not satisfy the confidence threshold, then any rule X' → Y-X', where X' is a subset of X, does not satisfy the confidence threshold either. The reason: X' ⊆ X implies σ(X') ≥ σ(X), so c(X' → Y-X') = σ(Y)/σ(X') ≤ σ(Y)/σ(X) = c(X → Y-X). Take one example to explain this theorem. Suppose we have a frequent 3-itemset Y = {1, 2, 3}, X = {1, 2}, and X' = {1} (clearly, X' is a subset of X), and the following transactions:
TID 1: {1 2 3 5 6}
TID 2: {1 2 3 7}
TID 3: {1 2 9}
TID 4: {1 9}
TID 5: {2 8 9}
We can get two rules: X → Y-X ({1, 2} → {3}) and X' → Y-X' ({1} → {2, 3}). Here c(X → Y-X) = 2/3 and c(X' → Y-X') = 2/4, so whenever X → Y-X does not satisfy the confidence threshold, X' → Y-X' does not satisfy it either.
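The two confidences in this example can be checked with a few lines of Python. This is only a small sketch over the five transactions listed on the slide; the helper names `support_count` and `confidence` are illustrative.

```python
from fractions import Fraction

# The five transactions from the example table (TID 1 to 5).
transactions = [
    {1, 2, 3, 5, 6},
    {1, 2, 3, 7},
    {1, 2, 9},
    {1, 9},
    {2, 8, 9},
]


def support_count(itemset):
    """Number of transactions that contain every item of itemset."""
    return sum(1 for t in transactions if itemset <= t)


def confidence(antecedent, consequent):
    """c(X -> Y-X) = sigma(X union (Y-X)) / sigma(X), kept as an exact fraction."""
    return Fraction(support_count(antecedent | consequent), support_count(antecedent))


c_x = confidence({1, 2}, {3})        # X  -> Y-X
c_x_prime = confidence({1}, {2, 3})  # X' -> Y-X'
print(c_x, c_x_prime)
assert c_x_prime <= c_x              # shrinking the antecedent cannot raise the confidence
```

The numerator is the same for both rules, and the denominator can only grow when items are removed from the antecedent, which is what the theorem states.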

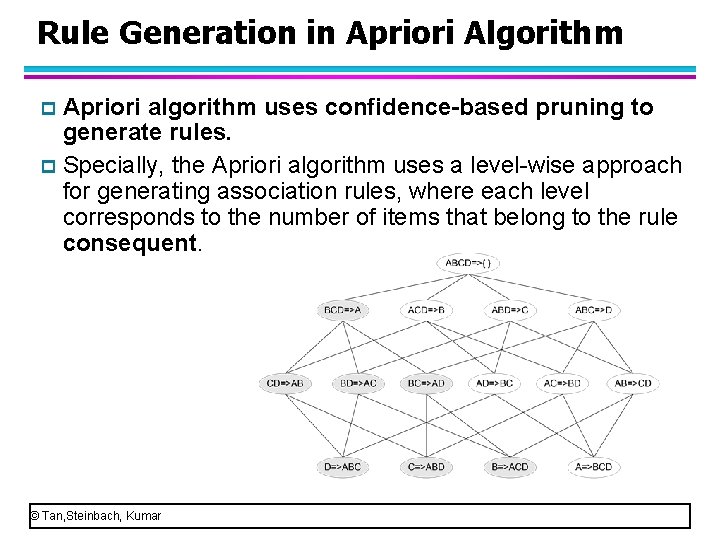

Rule Generation in Apriori Algorithm. The Apriori algorithm uses confidence-based pruning to generate rules. Specifically, it uses a level-wise approach for generating association rules, where each level corresponds to the number of items that belong to the rule consequent.

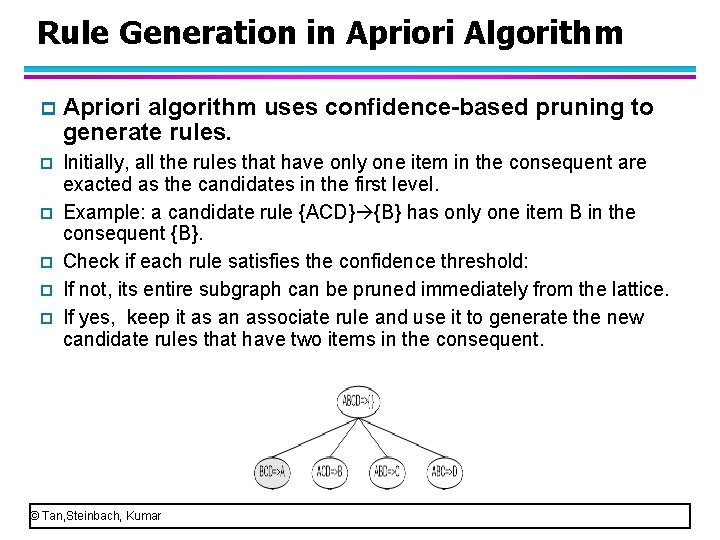

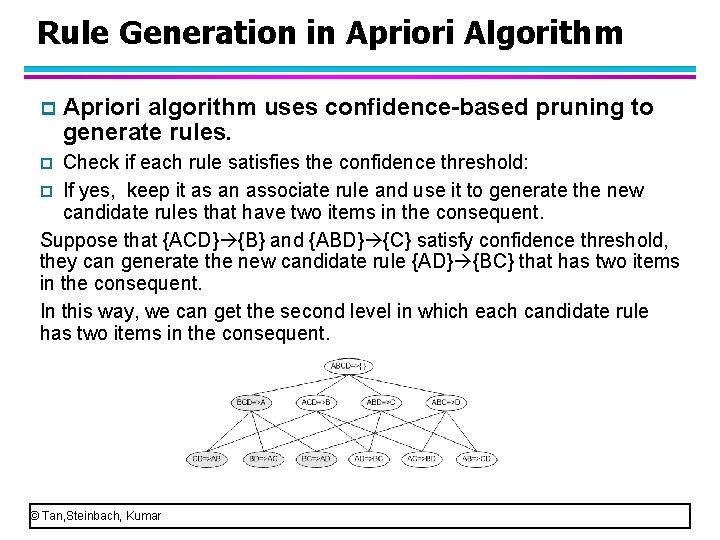

Rule Generation in Apriori Algorithm. The Apriori algorithm uses confidence-based pruning to generate rules. Initially, all the rules that have only one item in the consequent are extracted as the candidates of the first level. Example: the candidate rule {ACD} → {B} has only one item, B, in the consequent {B}. Check whether each rule satisfies the confidence threshold. If not, its entire subgraph can be pruned immediately from the lattice. If yes, keep it as an association rule and use it to generate the new candidate rules that have two items in the consequent.

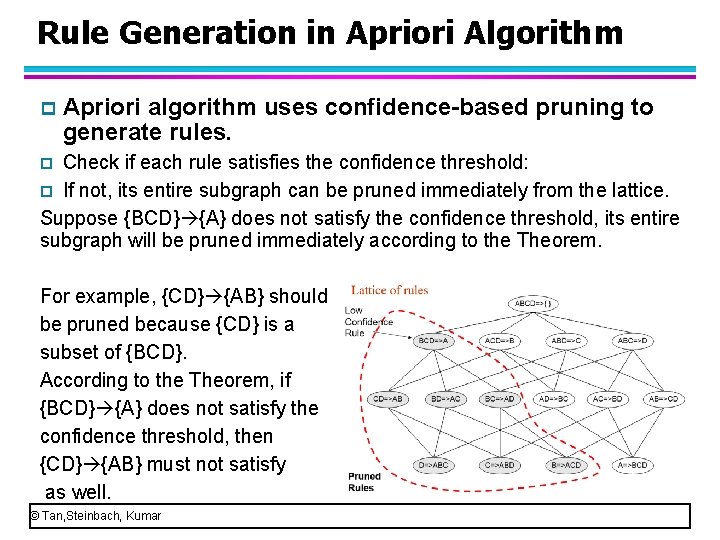

Rule Generation in Apriori Algorithm. The Apriori algorithm uses confidence-based pruning to generate rules. Check whether each rule satisfies the confidence threshold. If not, its entire subgraph can be pruned immediately from the lattice. Suppose {BCD} → {A} does not satisfy the confidence threshold; then its entire subgraph is pruned immediately according to the Theorem. For example, {CD} → {AB} should be pruned because {CD} is a subset of {BCD}. According to the Theorem, if {BCD} → {A} does not satisfy the confidence threshold, then {CD} → {AB} does not satisfy it either.

Rule Generation in Apriori Algorithm. The Apriori algorithm uses confidence-based pruning to generate rules. Check whether each rule satisfies the confidence threshold. If yes, keep it as an association rule and use it to generate the new candidate rules that have two items in the consequent. Suppose that {ACD} → {B} and {ABD} → {C} satisfy the confidence threshold; they generate the new candidate rule {AD} → {BC}, which has two items in the consequent. In this way, we get the second level, in which each candidate rule has two items in the consequent.

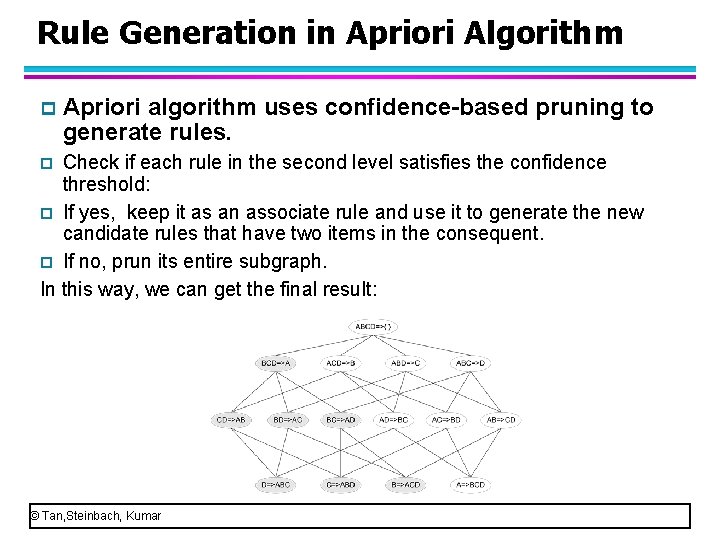

Rule Generation in Apriori Algorithm. The Apriori algorithm uses confidence-based pruning to generate rules. Check whether each rule in the second level satisfies the confidence threshold. If yes, keep it as an association rule and use it to generate the new candidate rules that have three items in the consequent. If no, prune its entire subgraph. In this way, we can get the final result:

Rule Generation for Apriori Algorithm. A candidate rule is generated by merging two rules that share the same prefix in the rule consequent. For example, join(CD=>AB, BD=>AC) would produce the candidate rule D=>ABC. Prune rule D=>ABC if its subset AD=>BC does not have high confidence.

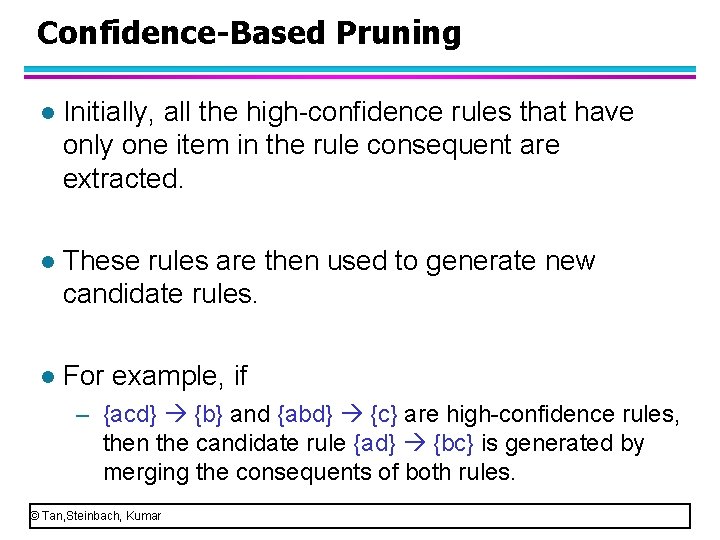

Confidence-Based Pruning. Initially, all the high-confidence rules that have only one item in the rule consequent are extracted. These rules are then used to generate new candidate rules. For example, if {acd} → {b} and {abd} → {c} are high-confidence rules, then the candidate rule {ad} → {bc} is generated by merging the consequents of both rules.

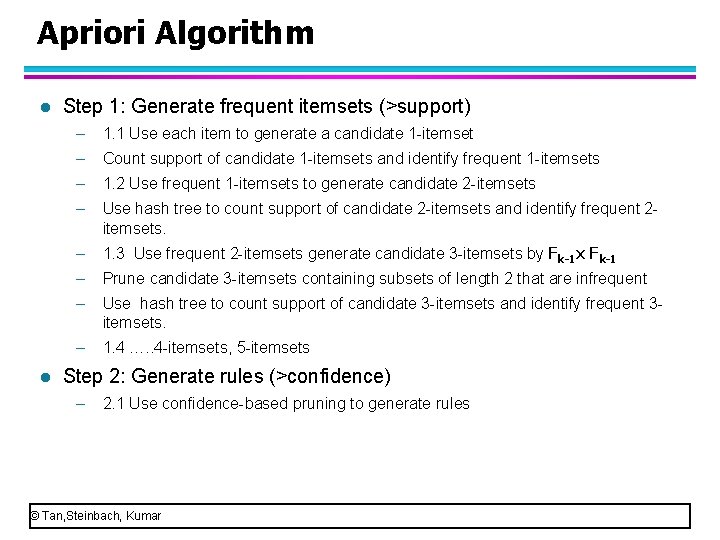
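The level-wise procedure can be sketched in Python for a single frequent itemset. This is a hedged sketch, not the textbook's exact pseudocode: the function name `generate_rules` and the `support_count` dictionary (mapping each non-empty subset of the itemset, as a `frozenset`, to its support count) are assumptions. For simplicity it merges any two surviving consequents that differ in one item and then requires every smaller consequent to have survived, rather than joining only consequents with a shared prefix; both approaches aim to skip rules whose confidence is already known to be too low.

```python
from itertools import combinations


def generate_rules(itemset, support_count, minconf):
    """Return (antecedent, consequent, confidence) for all high-confidence rules."""
    itemset = frozenset(itemset)
    rules = []
    consequents = [frozenset([i]) for i in itemset]  # level 1: one-item consequents
    m = 1                                            # current consequent size
    while consequents and m < len(itemset):
        kept = []
        for y in consequents:
            x = itemset - y
            conf = support_count[itemset] / support_count[x]
            if conf >= minconf:
                rules.append((x, y, conf))
                kept.append(y)
            # Otherwise the rule is dropped, and no larger consequent containing y
            # will be generated (confidence-based pruning).
        m += 1
        kept_set = set(kept)
        merged = {a | b for a, b in combinations(kept, 2) if len(a | b) == m}
        consequents = [c for c in merged
                       if all(frozenset(s) in kept_set for s in combinations(c, m - 1))]
    return rules


# Usage on a small five-transaction dataset with the frequent itemset {1, 2, 3}.
transactions = [{1, 2, 3, 5, 6}, {1, 2, 3, 7}, {1, 2, 9}, {1, 9}, {2, 8, 9}]
Y = (1, 2, 3)
support_count = {
    frozenset(s): sum(1 for t in transactions if set(s) <= t)
    for r in range(1, len(Y) + 1)
    for s in combinations(Y, r)
}
for x, y, conf in generate_rules(Y, support_count, minconf=0.7):
    print(sorted(x), "->", sorted(y), round(conf, 2))
```

In this run {1, 2} → {3} fails the threshold at the first level, so neither {1} → {2, 3} nor {2} → {1, 3} is ever evaluated.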

Apriori Algorithm. We have learned the Apriori algorithm. Let's review its two main steps. Step 1: Generate frequent itemsets (support ≥ minsup). Step 2: Generate rules (confidence ≥ minconf).

Apriori Algorithm
Step 1: Generate frequent itemsets (support ≥ minsup)
1.1 Use each item to generate a candidate 1-itemset. Count the support of the candidate 1-itemsets and identify the frequent 1-itemsets.
1.2 Use the frequent 1-itemsets to generate candidate 2-itemsets. Use a hash tree to count the support of the candidate 2-itemsets and identify the frequent 2-itemsets.
1.3 Use the frequent 2-itemsets to generate candidate 3-itemsets by Fk-1 x Fk-1. Prune the candidate 3-itemsets that contain infrequent subsets of length 2. Use a hash tree to count the support of the candidate 3-itemsets and identify the frequent 3-itemsets.
1.4 Continue in the same way for 4-itemsets, 5-itemsets, and so on.
Step 2: Generate rules (confidence ≥ minconf)
2.1 Use confidence-based pruning to generate rules.
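Step 1 of this summary can be sketched compactly in Python. The sketch makes two simplifying assumptions that should be read as such: supports are counted by scanning every transaction directly instead of using a hash tree, and the threshold is given as an absolute support count (`minsup_count`). The function name `apriori` is illustrative.

```python
from itertools import combinations


def apriori(transactions, minsup_count):
    """Return {frequent itemset (sorted tuple): support count}."""
    transactions = [frozenset(t) for t in transactions]

    def count(candidate):
        # Direct scan; a hash tree would avoid comparing every candidate
        # with every transaction.
        return sum(1 for t in transactions if set(candidate) <= t)

    items = sorted({i for t in transactions for i in t})
    frequent = {}
    level = [(i,) for i in items if count((i,)) >= minsup_count]  # frequent 1-itemsets
    while level:
        for c in level:
            frequent[c] = count(c)
        prev = set(level)
        candidates = []
        # F(k-1) x F(k-1): merge two frequent itemsets that share their first k-2 items.
        for a, b in combinations(sorted(level), 2):
            if a[:-1] == b[:-1]:
                c = a + (b[-1],)
                # Prune candidates that contain an infrequent (k-1)-subset.
                if all(s in prev for s in combinations(c, len(c) - 1)):
                    candidates.append(c)
        level = [c for c in candidates if count(c) >= minsup_count]
    return frequent


transactions = [{1, 2, 3, 5, 6}, {1, 2, 3, 7}, {1, 2, 9}, {1, 9}, {2, 8, 9}]
for itemset, n in sorted(apriori(transactions, minsup_count=2).items()):
    print(itemset, n)
```

The frequent itemsets returned here, together with their counts, are exactly what Step 2 needs to compute rule confidences without rescanning the data.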

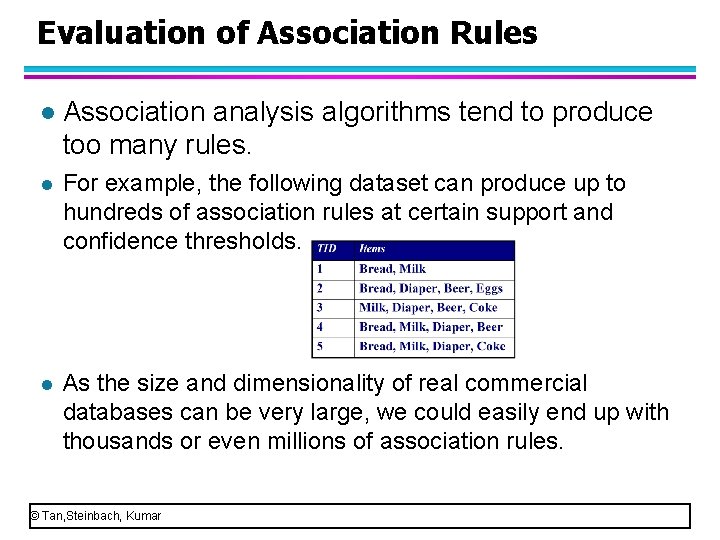

Evaluation of Association Rules. Association analysis algorithms tend to produce too many rules. For example, the following dataset can produce up to hundreds of association rules at certain support and confidence thresholds. As the size and dimensionality of real commercial databases can be very large, we could easily end up with thousands or even millions of association rules.

Evaluation of Association Rules. Criteria for evaluating association rules. The first set of criteria can be established through statistical arguments. Patterns that involve a set of mutually independent items or cover very few transactions are considered uninteresting because they may capture spurious relationships. Such rules can be eliminated by applying an objective interestingness measure such as support, confidence, or correlation. The second set of criteria can be established through subjective arguments. A pattern is considered subjectively uninteresting unless it reveals unexpected information or provides useful knowledge that can lead to profitable actions. For example, the rule {Butter} → {Bread} may not be interesting because the relationship represented by the rule may seem rather obvious. In contrast, {Diaper} → {Beer} is interesting because the relationship is quite unexpected and may suggest a new cross-selling opportunity.
