Assessment: Concepts
Global Standards for Education
Office of Overseas Programming & Training Support (OPATS)

What do you already know about assessment?
1. You have one class of 25 students and one class of 75 students. You have to test them on the same content. Will you use the same test? Why or why not?
2. An administrator at your school has just discovered the TOEIC test (a test of general, professionally oriented English). He wants to use a version of it to assess the progress students are making in your class. How do you feel about that? Do you have any concerns?
3. What do you think? In what ways should an entrance exam look different from an exit exam?
4. You have been doing your best to teach your biology class through many demonstrations, using locally available materials and emphasizing connections between the science and students’ daily lives. The high school leaving exam, however, is multiple choice and demands rote memorization of traditional biology principles and terminology. Your students complain that you are not preparing them to pass this test. How can you satisfy both yourself and your students?

Differences to Consider in Designing Assessments
• High or low stakes? Are the stakes high or low? Low-stakes assessments do not have to be so carefully designed.
• Class size? If you have to assess many students, for feasibility’s sake, you may have to sacrifice more detailed information on each student.
• Audience for results? If my audience is only my students, I will likely design a different assessment than I would for the needs of the Ministry of Education.
• Ratio of teaching time to testing time? If we spend a relatively large amount of time on assessment in relation to time spent teaching, improved assessment may not justify lost teaching time.

Different Times to Assess (& Why)
• Before? To discover student needs and what they already know and can do.
• During? To test student progress, to test student satisfaction, and to test whether the class is doing what we wanted it to do.
• After? To know, on the whole, if the curriculum worked and to decide what changes need to be made before the next offering.

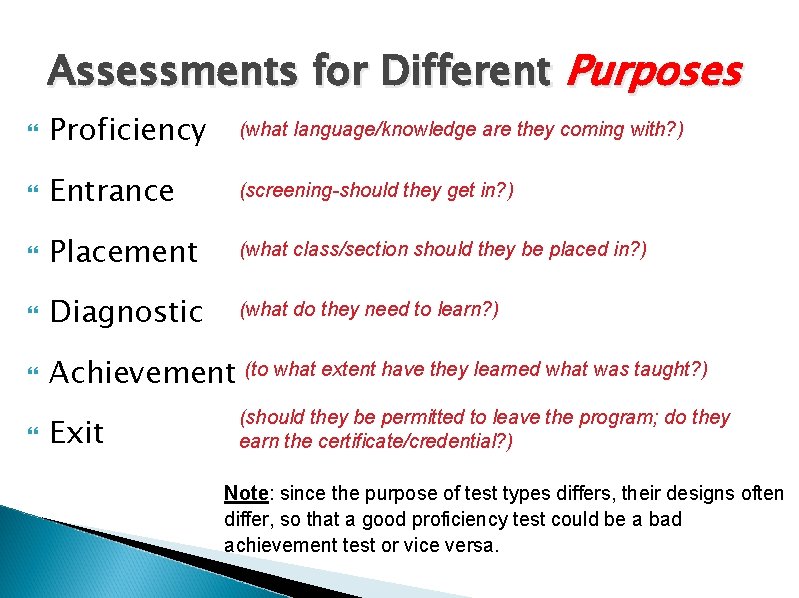

Assessments for Different Purposes
• Proficiency (what language/knowledge are they coming with?)
• Entrance (screening: should they get in?)
• Placement (what class/section should they be placed in?)
• Diagnostic (what do they need to learn?)
• Achievement (to what extent have they learned what was taught?)
• Exit (should they be permitted to leave the program; do they earn the certificate/credential?)
Note: since the purposes of test types differ, their designs often differ, so a good proficiency test could be a bad achievement test, or vice versa.

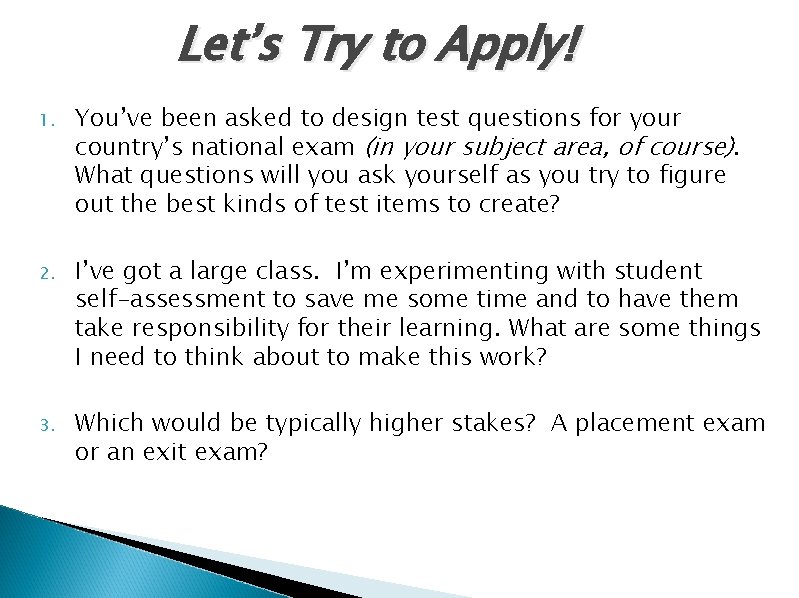

Let’s Try to Apply!
1. You’ve been asked to design test questions for your country’s national exam (in your subject area, of course). What questions will you ask yourself as you try to figure out the best kinds of test items to create?
2. I’ve got a large class. I’m experimenting with student self-assessment to save me some time and to have students take responsibility for their learning. What are some things I need to think about to make this work?
3. Which would typically be higher stakes: a placement exam or an exit exam?

Evaluating Test Design
Ways in which tests are good – or bad!

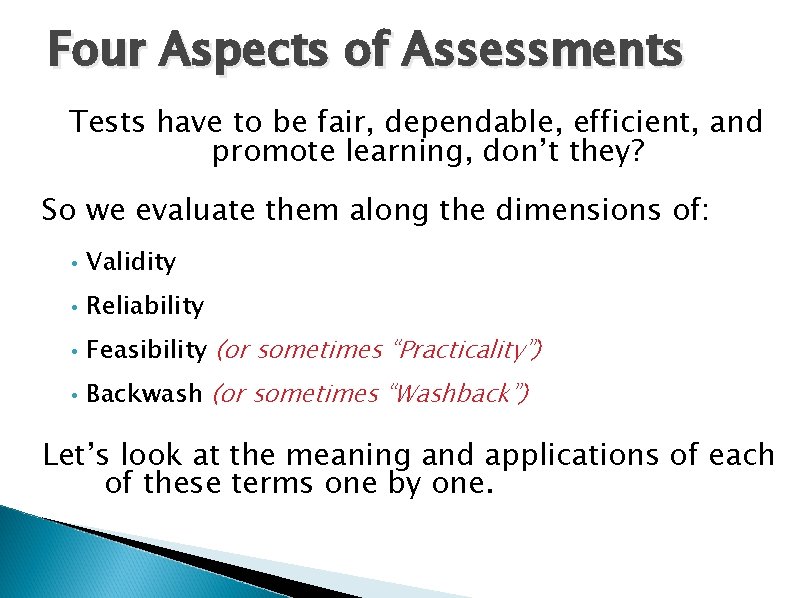

Four Aspects of Assessments
Tests have to be fair, dependable, efficient, and promote learning, don’t they? So we evaluate them along the dimensions of:
• Validity
• Reliability
• Feasibility (or sometimes “Practicality”)
• Backwash (or sometimes “Washback”)
Let’s look at the meaning and applications of each of these terms one by one.

VALIDITY
A test is valid if it tests what it claims to test. How do we know? Some examples:
Does the assessment require the subject to produce the skill the assessment claims to assess?
◦ Would it be good to assess essay-writing ability with a multiple choice test on grammar and stylistic knowledge?
◦ Would it be good to assess students’ understanding of a physics lab experiment by asking students multiple choice questions that deal generally with the topic of the experiment?

More on VALIDITY
Could correct answers be given by using skills or knowledge other than those the assessment claims to assess?
◦ A reading comprehension test item asks U.S. students, “When did Columbus discover America?” [asked of ESOL students enrolled in U.S. schools for several years]
◦ A listening assessment asks, “Who is the girl talking to?” after a short listening passage. There is a photo on the student answer sheet showing a girl talking with a nurse.

Would you mind recapping?
Can you think of an example of an invalid test?
What might be some reasons for you or a counterpart to use an invalid test (with or without realizing it)?

RELIABILITY: Are the results consistent?
Examples of unreliability:
• The same person takes the same test again one week later (without any further study or preparation) and the results are quite different.
• Two people who have very similar abilities take the same test but get very different results.
• Two or more trained raters using the same criteria come up with very different scores for the same speaking or writing sample.
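One way to put a number on the first example is a test-retest check: give the same test twice and correlate the two sets of scores. The sketch below is only an illustration. The function name `pearson_r` and the scores are made up for the example. A value near 1.0 suggests consistent results, while a value near 0 suggests the two sittings have little in common.

```python
from math import sqrt


def pearson_r(first, second):
    """Pearson correlation between two equal-length lists of scores."""
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    n = len(first)
    mean_a = sum(first) / n
    mean_b = sum(second) / n
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(first, second))
    var_a = sum((a - mean_a) ** 2 for a in first)
    var_b = sum((b - mean_b) ** 2 for b in second)
    if var_a == 0 or var_b == 0:
        raise ValueError("scores must vary for a correlation to be defined")
    return cov / sqrt(var_a * var_b)


# Hypothetical scores (out of 20) for the same five students, one week apart.
week_1 = [12, 15, 9, 18, 14]
week_2 = [13, 14, 10, 17, 15]
print(round(pearson_r(week_1, week_2), 2))
```

With a class-sized sample, a low correlation is a signal to look for ambiguous items, unclear instructions, or uneven testing conditions before trusting the scores.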

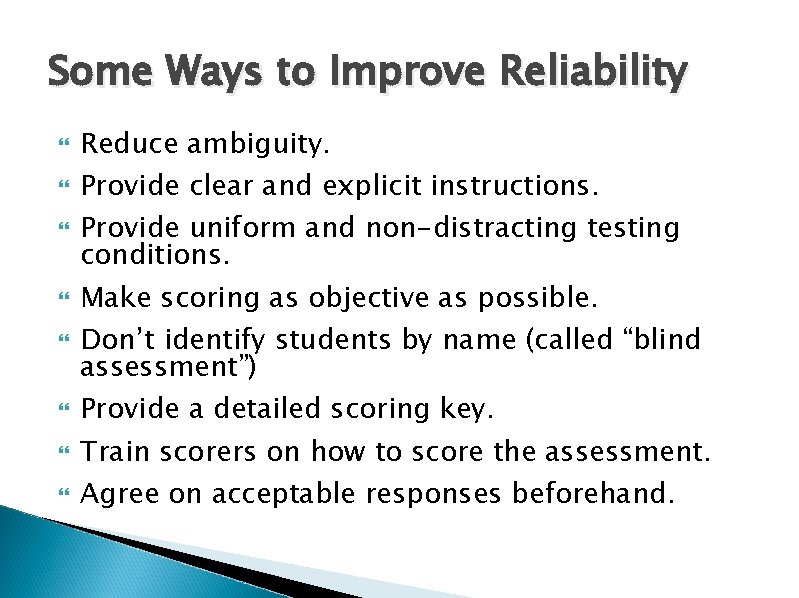

Some Ways to Improve Reliability
• Reduce ambiguity.
• Provide clear and explicit instructions.
• Provide uniform and non-distracting testing conditions.
• Make scoring as objective as possible.
• Don’t identify students by name (called “blind assessment”).
• Provide a detailed scoring key.
• Train scorers on how to score the assessment.
• Agree on acceptable responses beforehand.
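To check whether scorer training and an agreed scoring key are working, two raters can score the same samples independently and compare their ratings. The following is a minimal sketch, assuming ratings on a small set of bands and using Cohen's kappa, a standard agreement statistic chosen here for illustration rather than anything prescribed by this session. The function name `cohens_kappa` and the ratings are hypothetical. A kappa of 1.0 means perfect agreement; 0 means agreement no better than chance.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters on the same samples."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty lists of ratings")
    n = len(rater_a)
    # Proportion of samples on which the raters gave the same band.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from how often each rater used each band.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[band] * counts_b[band] for band in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one and the same band throughout
    return (observed - expected) / (1 - expected)


# Hypothetical bands given by two raters to the same eight writing samples.
rater_a = ["low", "mid", "mid", "high", "high", "mid", "low", "high"]
rater_b = ["low", "mid", "high", "high", "high", "mid", "mid", "high"]
print(round(cohens_kappa(rater_a, rater_b), 2))
```

If agreement is low, the raters can discuss the samples they disagreed on and tighten the scoring key before scoring the real assessment.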

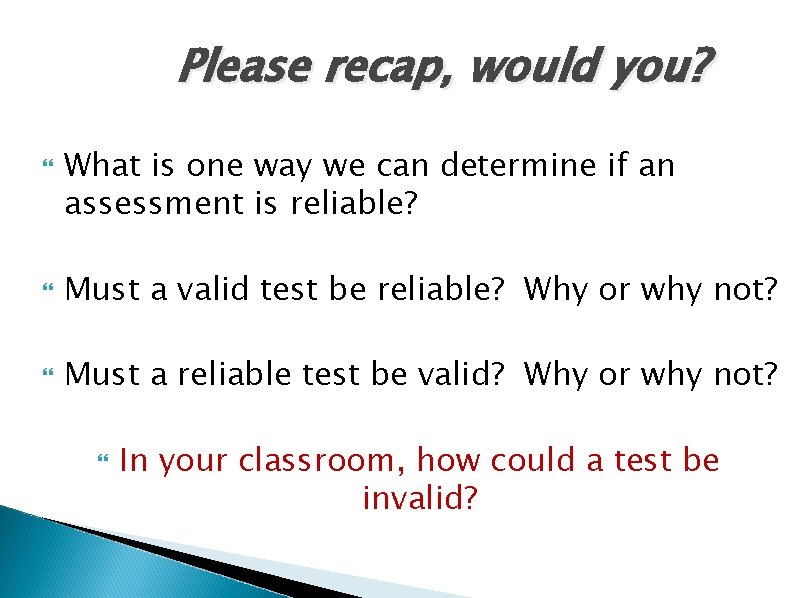

Please recap, would you?
What is one way we can determine if an assessment is reliable?
Must a valid test be reliable? Why or why not?
Must a reliable test be valid? Why or why not?
In your classroom, how could a test be invalid?

Many developing countries require high-stakes national exams at key points in students’ academic careers. What might be some common factors that could affect these exams’ reliability?

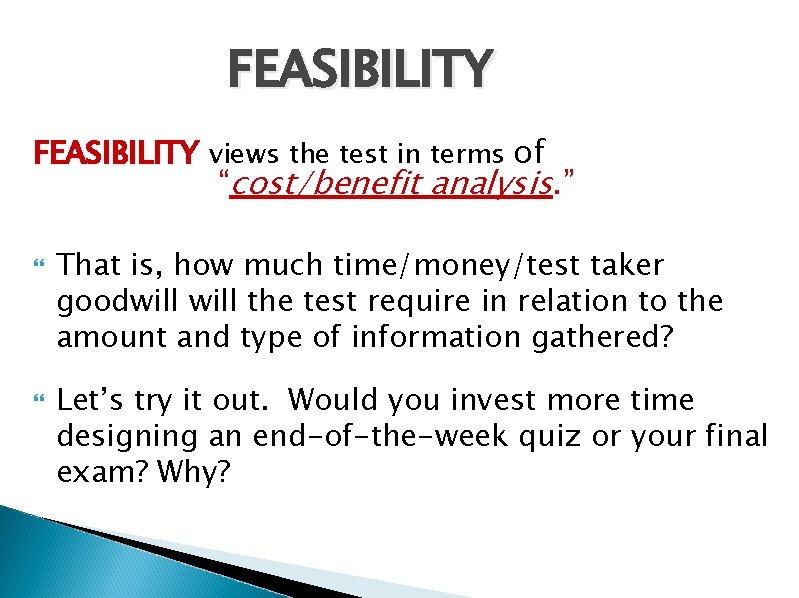

FEASIBILITY views the test in terms of “cost/benefit analysis.” That is, how much time, money, and test-taker goodwill does the test require in relation to the amount and type of information gathered?
Let’s try it out. Would you invest more time designing an end-of-the-week quiz or your final exam? Why?

Recap? Can you think of a situation or an assessment where you might have to stop and consider feasibility, especially in relation to the depth of information that you might need?

BACKWASH is the effect that testing has on learning. Consequently, backwash can be positive or negative.

How might negative backwash slip into your classroom or teaching?

Would you mind a quick recap? Often in this PST, we give you tools or examples, ask you to practice a skill, and then ask you to apply that skill (for example, by designing a test). What do you think the backwash will be like?

Now, let’s practice…

…and apply what you’ve learned!

- Slides: 22