ASSESSMENT OF SOCCER REFEREE PROFICIENCY IN TIME-SENSITIVE DECISION-MAKING

- Slides: 61

Nathan Jones, Andrew Cann, Hina Popal, Saud Almashhadi

Agenda
1. Context
2. Problem & Need Statement
3. Design Alternatives
4. Simulation
5. Output
6. Utility Analysis
7. Conclusions
8. Management

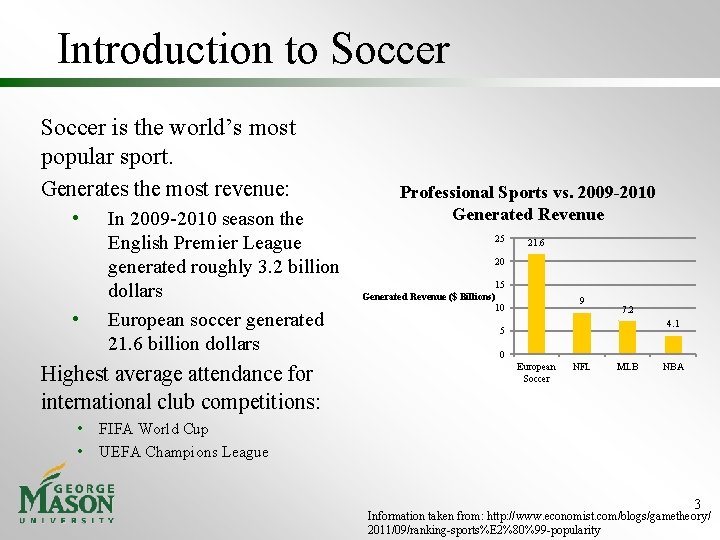

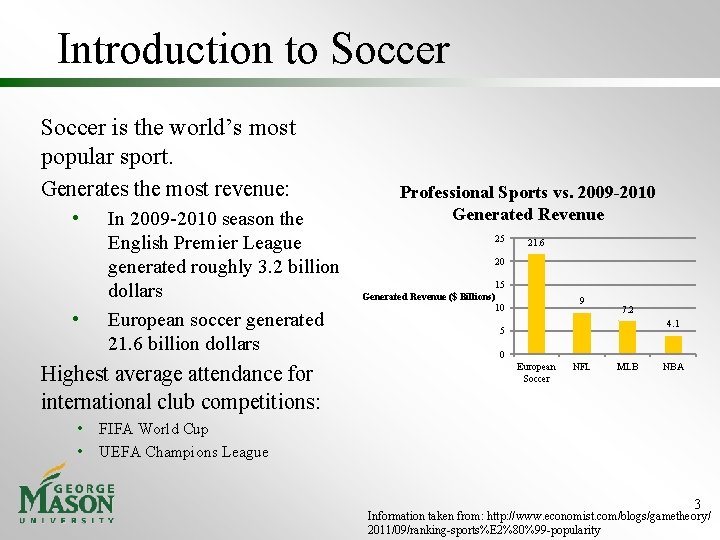

Introduction to Soccer
- Soccer is the world’s most popular sport.
- It generates the most revenue:
  - In the 2009–2010 season, the English Premier League generated roughly $3.2 billion.
  - European soccer generated $21.6 billion.
- Highest average attendance for international and club competitions:
  - FIFA World Cup
  - UEFA Champions League

[Chart: Professional Sports vs. 2009–2010 Generated Revenue ($ billions) for European Soccer, NFL, MLB, and NBA]

Information taken from: http://www.economist.com/blogs/gametheory/2011/09/ranking-sports%E2%80%99-popularity

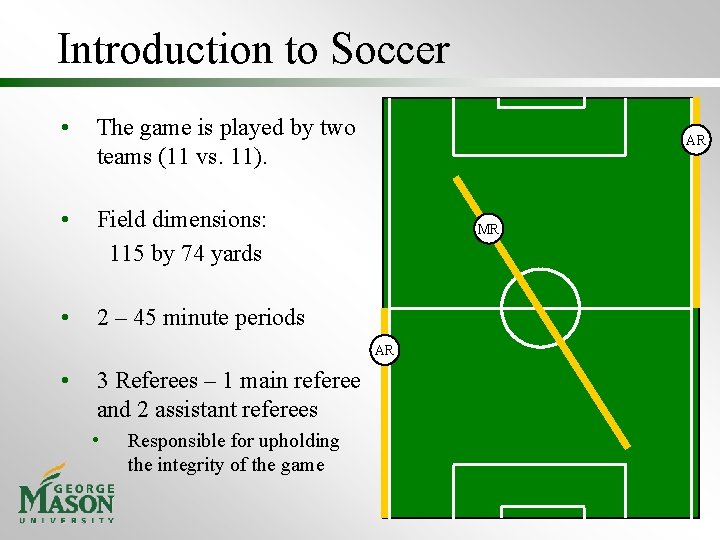

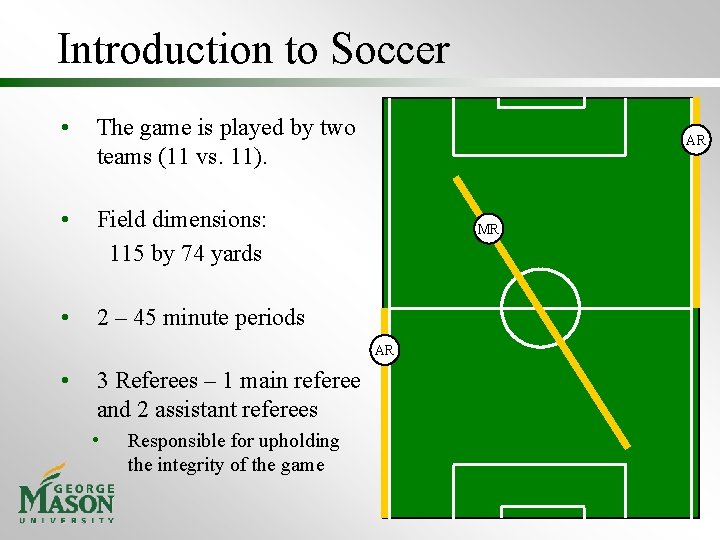

Introduction to Soccer
- The game is played by two teams (11 vs. 11).
- Field dimensions: 115 by 74 yards.
- Two 45-minute periods.
- Three referees: one main referee (MR) and two assistant referees (AR), responsible for upholding the integrity of the game.

Referee Responsibilities
- Uphold the integrity of the game:
  - Make accurate calls.
  - Make calls that don’t interrupt the flow of the game.
  - Be in proper position to assess, process, and identify the correct call.
- Current MLS referees make 86.1% correct calls (USSF).
- Referees are categorized as either junior referees (entry level) or senior referees (advanced level).

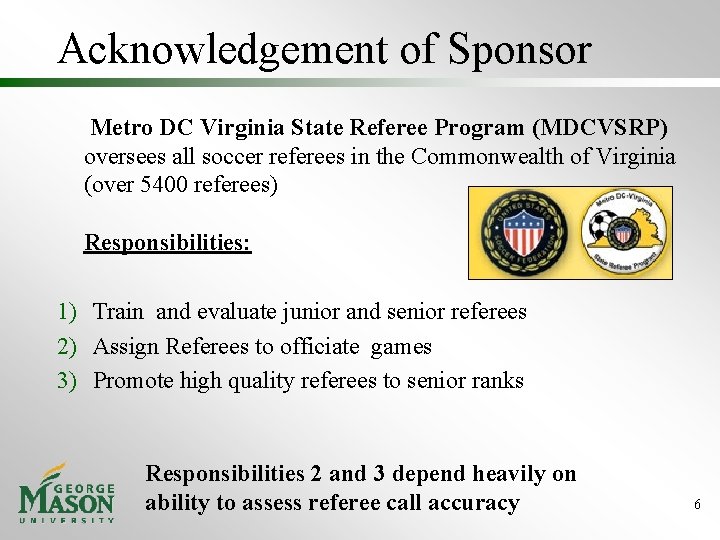

Acknowledgement of Sponsor
The Metro DC Virginia State Referee Program (MDCVSRP) oversees all soccer referees in the Commonwealth of Virginia (over 5,400 referees). Its responsibilities are to:
1. Train and evaluate junior and senior referees.
2. Assign referees to officiate games.
3. Promote high-quality referees to the senior ranks.

Responsibilities 2 and 3 depend heavily on the ability to assess referee call accuracy.

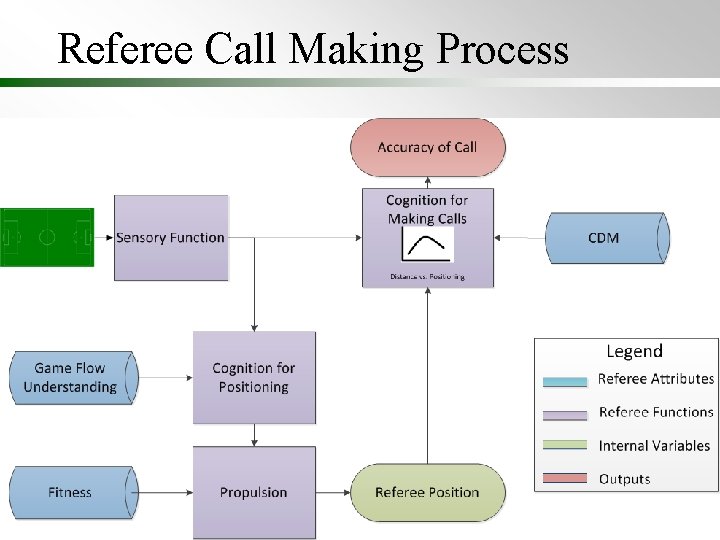

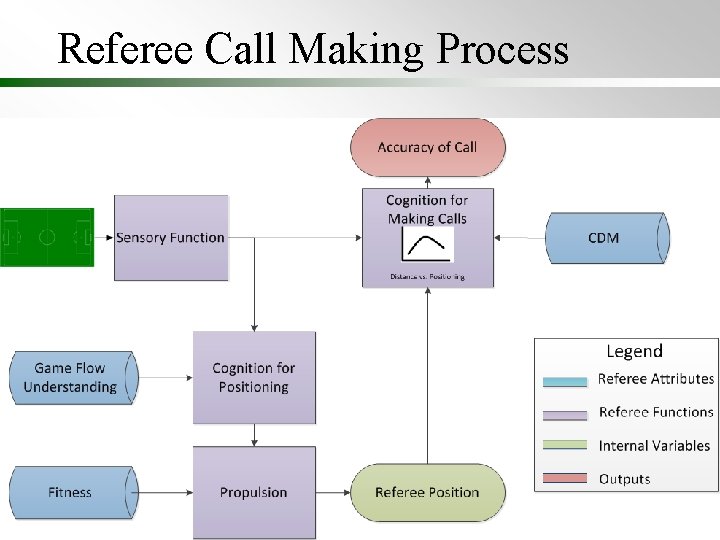

Referee Call Making Process

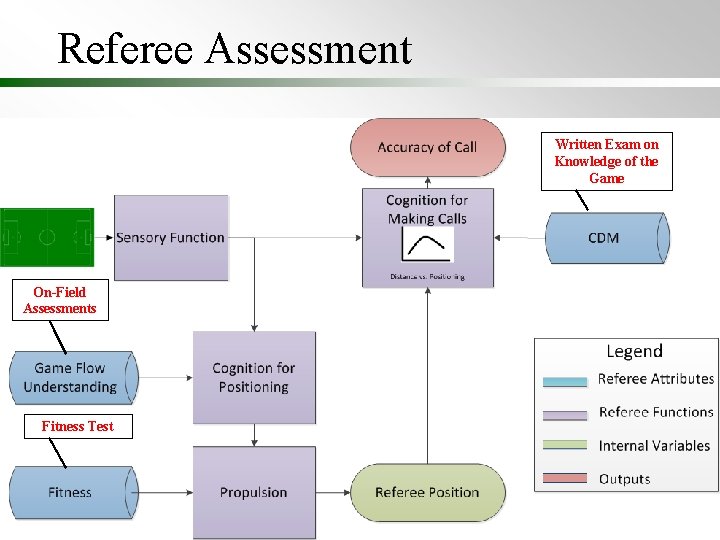

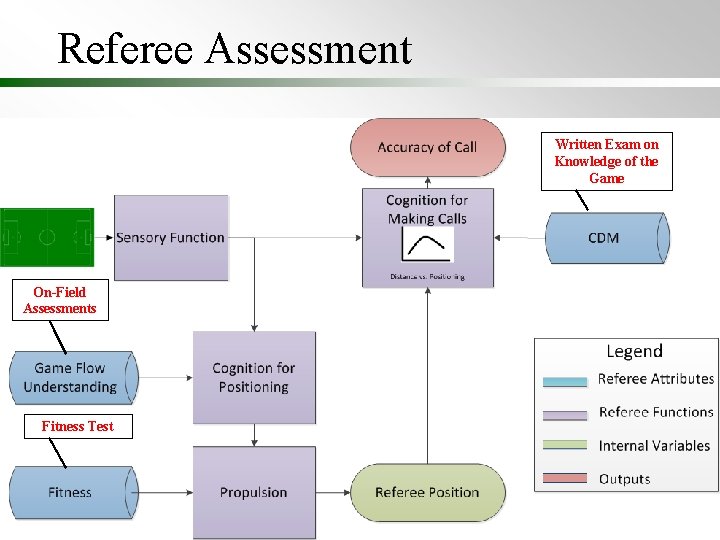

Referee Assessment
- Written exam on knowledge of the game
- On-field assessments
- Fitness test

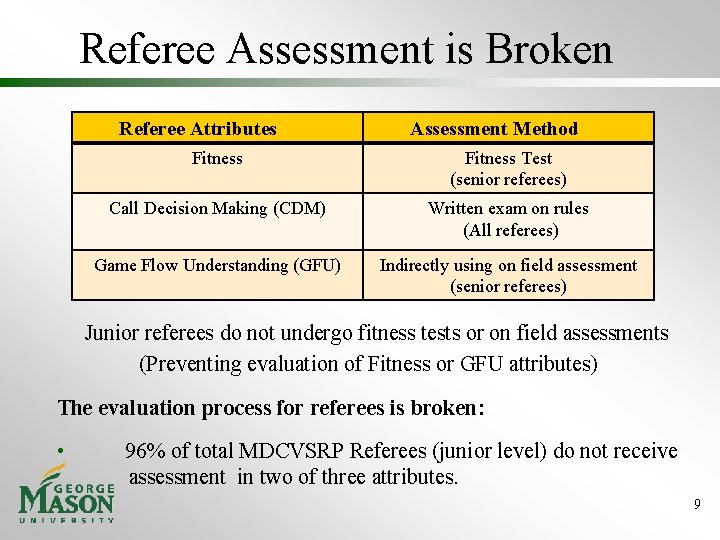

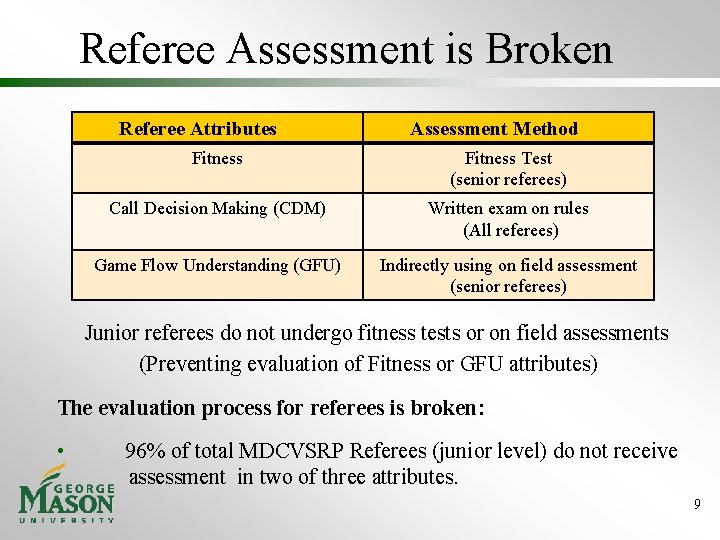

Referee Assessment Is Broken

| Referee attribute | Assessment method |
|---|---|
| Fitness | Fitness test (senior referees) |
| Call Decision Making (CDM) | Written exam on rules (all referees) |
| Game Flow Understanding (GFU) | Indirectly, using on-field assessment (senior referees) |

Junior referees do not undergo fitness tests or on-field assessments, which prevents evaluation of their fitness and GFU attributes.

The evaluation process for referees is broken:
- 96% of all MDCVSRP referees (junior level) receive no assessment in two of the three attributes.

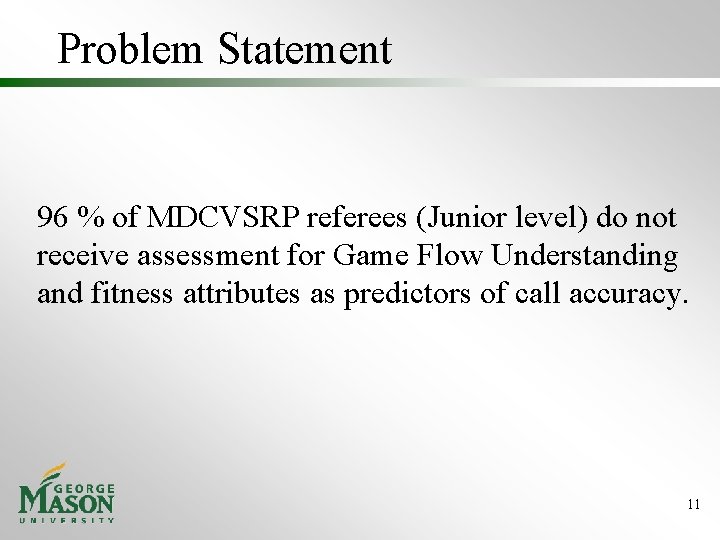

Problem Statement
96% of MDCVSRP referees (junior level) receive no assessment of the Game Flow Understanding and fitness attributes, which are predictors of call accuracy.

Need Statement
An assessment method is needed to evaluate referee accuracy in a cost-effective manner using fitness and/or Game Flow Understanding (GFU).

Scope: Our analysis focuses on determining the best system concept for assessing MDCVSRP junior referees. Specifics of design and implementation are considered future work.

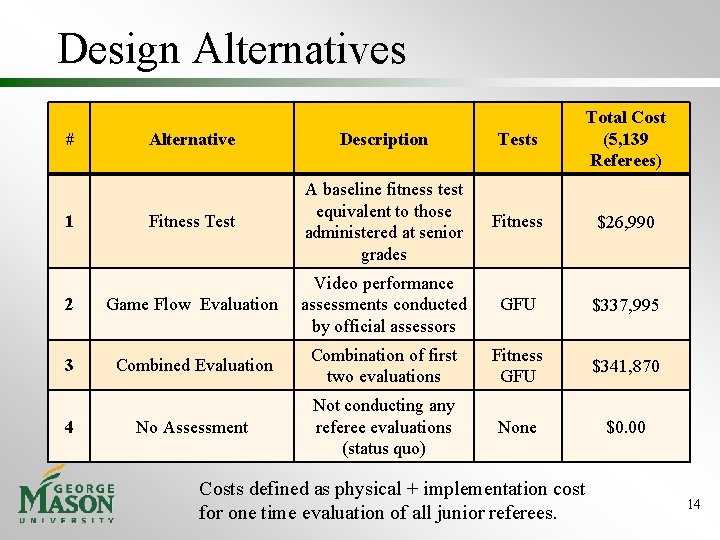

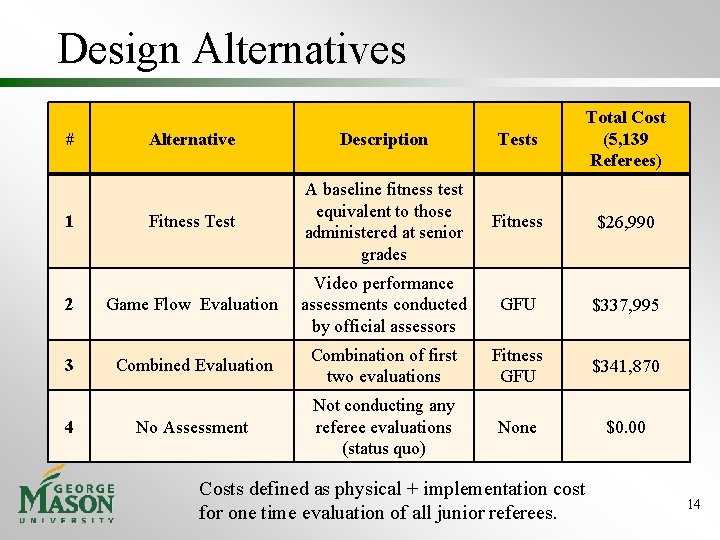

Design Alternatives

| # | Alternative | Description | Tests | Total cost (5,139 referees) |
|---|---|---|---|---|
| 1 | Fitness Test | A baseline fitness test equivalent to those administered at senior grades | Fitness | $26,990 |
| 2 | Game Flow Evaluation | Video performance assessments conducted by official assessors | GFU | $337,995 |
| 3 | Combined Evaluation | Combination of the first two evaluations | Fitness, GFU | $341,870 |
| 4 | No Assessment | No referee evaluations (status quo) | None | $0.00 |

Costs are defined as physical plus implementation cost for a one-time evaluation of all junior referees.

Evaluation of Alternatives
The utility of each alternative is defined as the expected call accuracy of the top 100 referees that the alternative identifies within the junior referee pool (5,000 referees). To determine utilities, a two-part analysis was conducted:
1. A function for call accuracy based on fitness and GFU levels was developed using a discrete soccer game simulator.
2. Using the function from part 1, the expected call accuracy of the top 100 referees selected by each alternative was computed through Monte Carlo analysis.

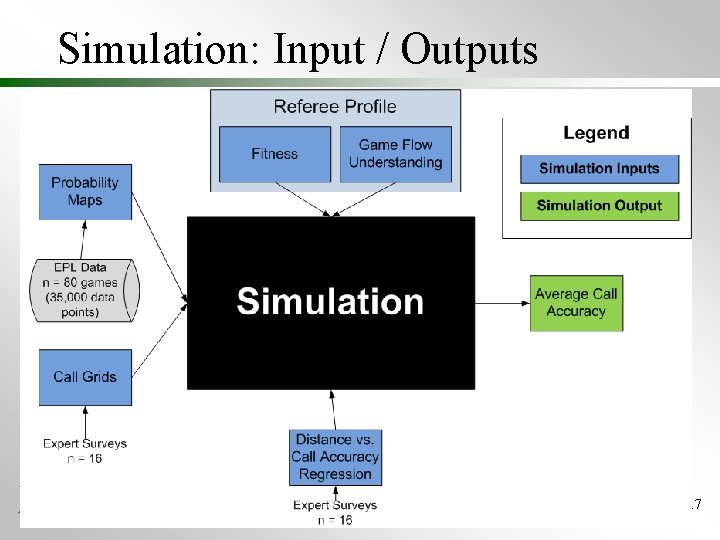

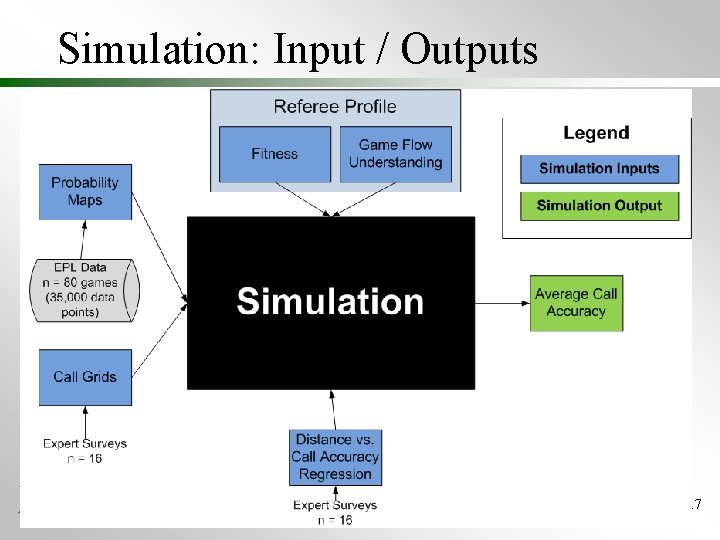

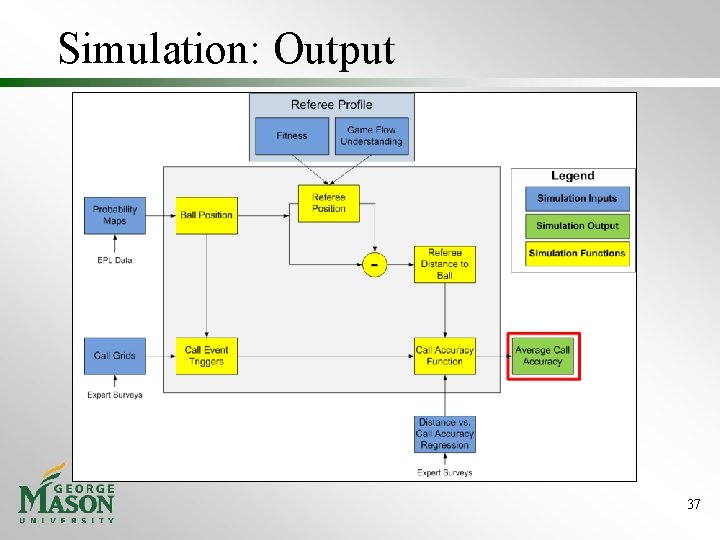

Simulation: Input / Outputs

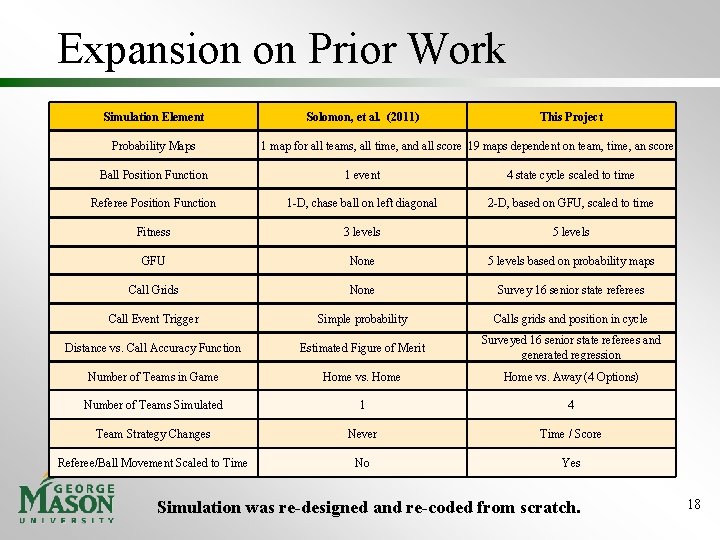

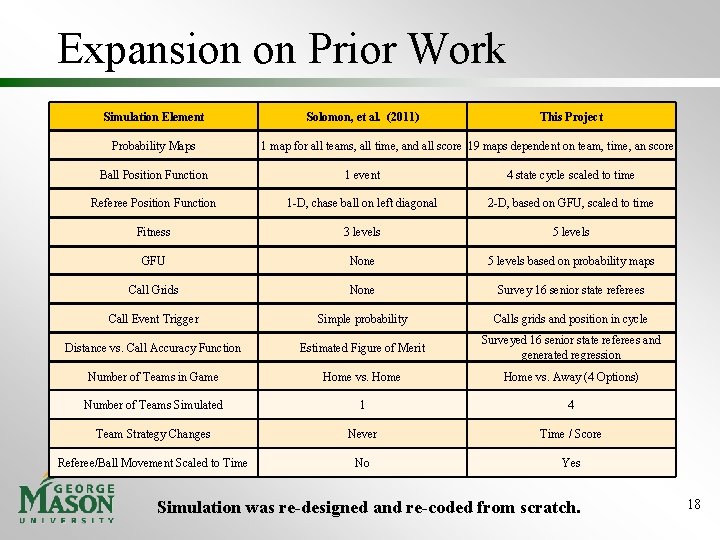

Expansion on Prior Work

| Simulation element | Solomon et al. (2011) | This project |
|---|---|---|
| Probability maps | 1 map for all teams, all times, and all scores | 19 maps dependent on team, time, and score |
| Ball position function | 1 event | 4-state cycle scaled to time |
| Referee position function | 1-D, chases ball on left diagonal | 2-D, based on GFU, scaled to time |
| Fitness | 3 levels | 5 levels |
| GFU | None | 5 levels based on probability maps |
| Call grids | None | Survey of 16 senior state referees |
| Call event trigger | Simple probability | Call grids and position in cycle |
| Distance vs. call accuracy function | Estimated figure of merit | Survey of 16 senior state referees, with generated regression |
| Number of teams in game | | Home vs. away (4 options) |
| Number of teams simulated | 1 | 4 |
| Team strategy changes | Never | By time / score |
| Referee/ball movement scaled to time | No | Yes |

The simulation was redesigned and recoded from scratch.

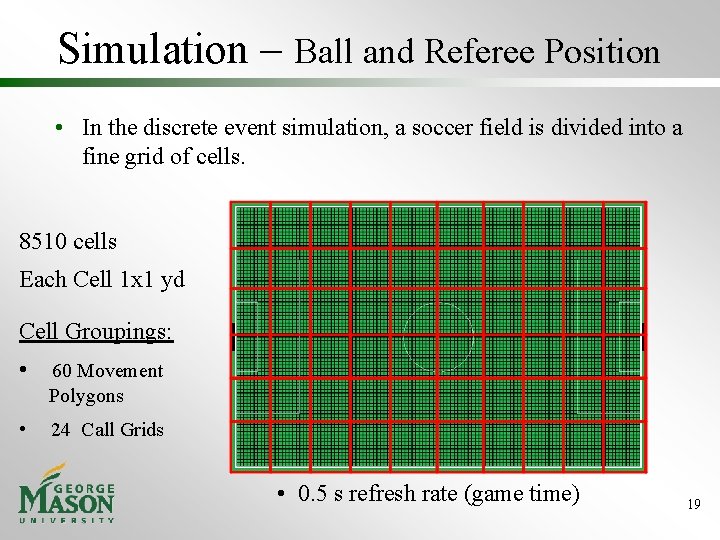

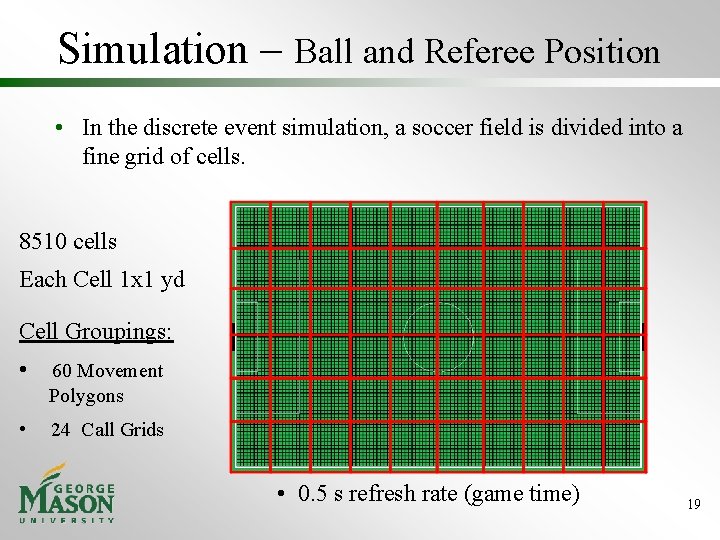

Simulation – Ball and Referee Position
- In the discrete event simulation, the soccer field is divided into a fine grid of 8,510 cells, each 1 × 1 yd.
- Cell groupings:
  - 60 movement polygons
  - 24 call grids
- Refresh rate: 0.5 s (game time)
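As a consistency check, the cell count matches the field dimensions given on the introduction slide (115 × 74 one-yard cells = 8,510 cells), and a 0.5 s refresh over two 45-minute periods gives 10,800 refreshes per game. The sketch assumes cells are indexed row by row from one corner; `cell_id` and `cell_xy` are hypothetical helpers, not the project's simulator code.

```python
# Hypothetical grid layout: integer cell coordinates (x, y), x along the
# 115-yd length and y along the 74-yd width, indexed row by row.
FIELD_LENGTH_YD = 115
FIELD_WIDTH_YD = 74
REFRESH_S = 0.5           # refresh rate in game time (from the slide)
GAME_MINUTES = 2 * 45     # two 45-minute periods

N_CELLS = FIELD_LENGTH_YD * FIELD_WIDTH_YD
REFRESHES_PER_GAME = int(GAME_MINUTES * 60 / REFRESH_S)


def cell_id(x: int, y: int) -> int:
    """Map a 1 x 1 yd cell's (x, y) coordinates to a single integer id."""
    if not (0 <= x < FIELD_LENGTH_YD and 0 <= y < FIELD_WIDTH_YD):
        raise ValueError("cell outside the field")
    return y * FIELD_LENGTH_YD + x


def cell_xy(cid: int) -> tuple:
    """Inverse of cell_id: recover (x, y) from an integer id."""
    return cid % FIELD_LENGTH_YD, cid // FIELD_LENGTH_YD


print(N_CELLS, REFRESHES_PER_GAME)  # 8510 10800
```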

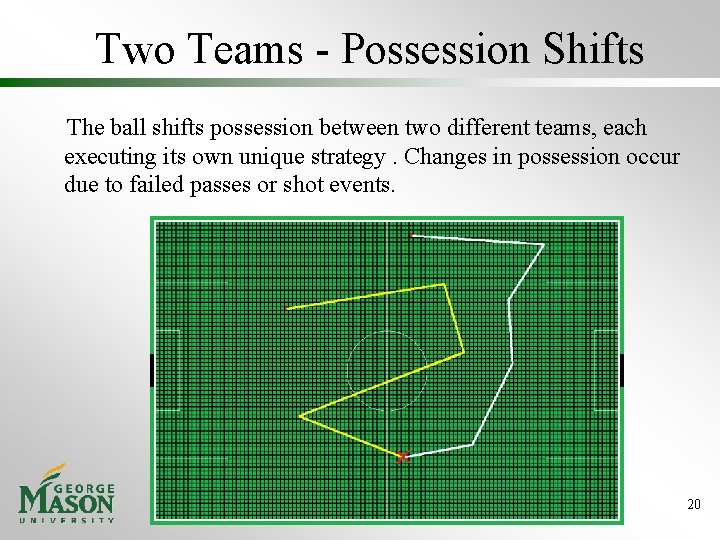

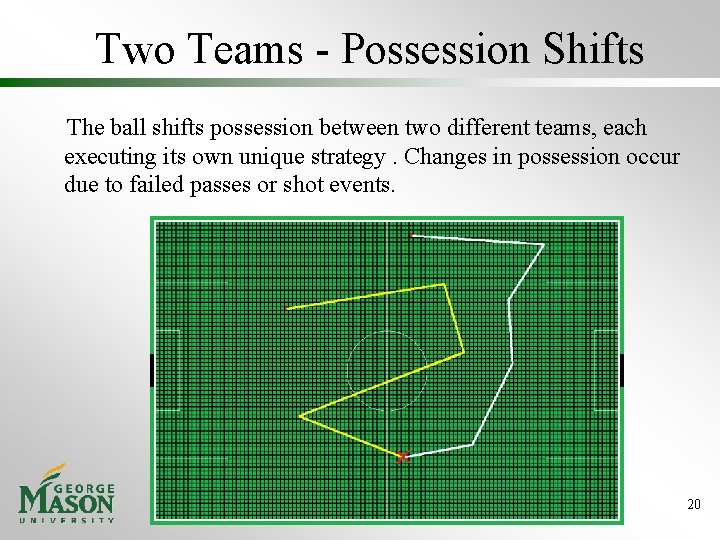

Two Teams – Possession Shifts
The ball shifts possession between two teams, each executing its own strategy. Possession changes occur due to failed passes or shot events.

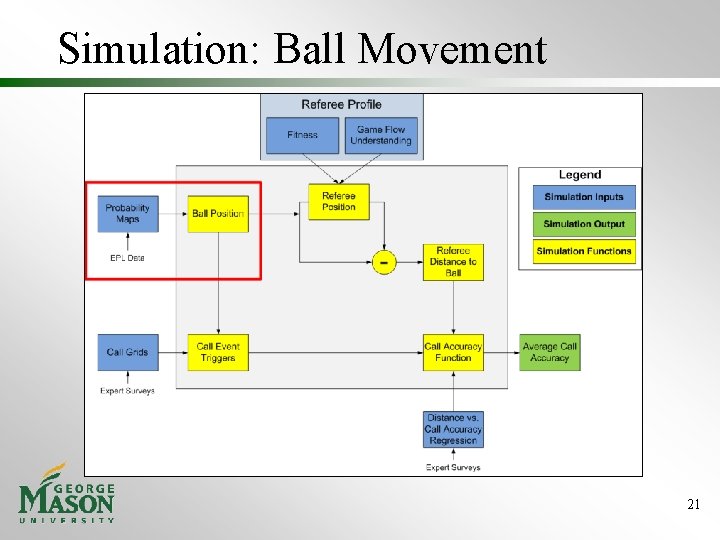

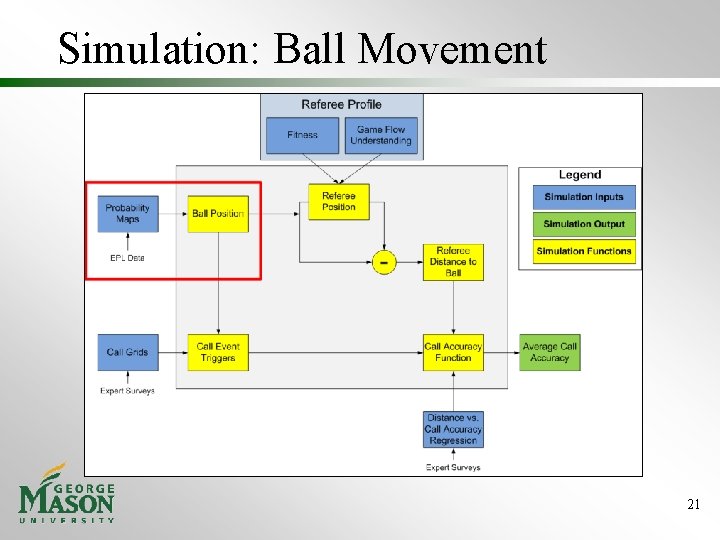

Simulation: Ball Movement

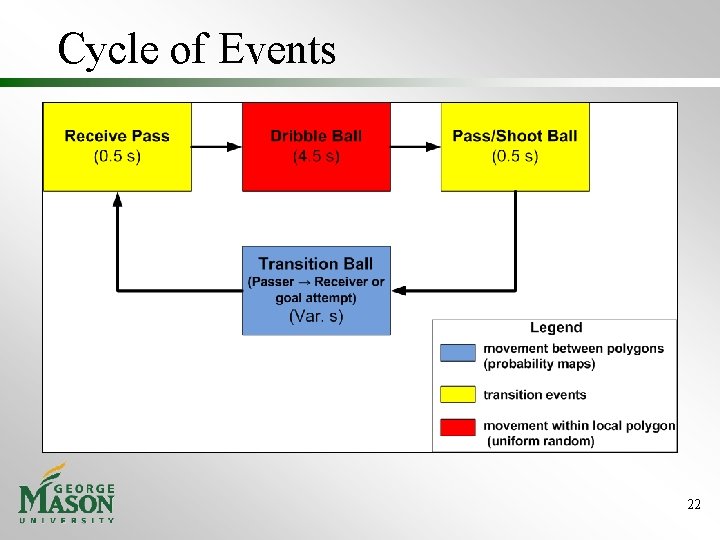

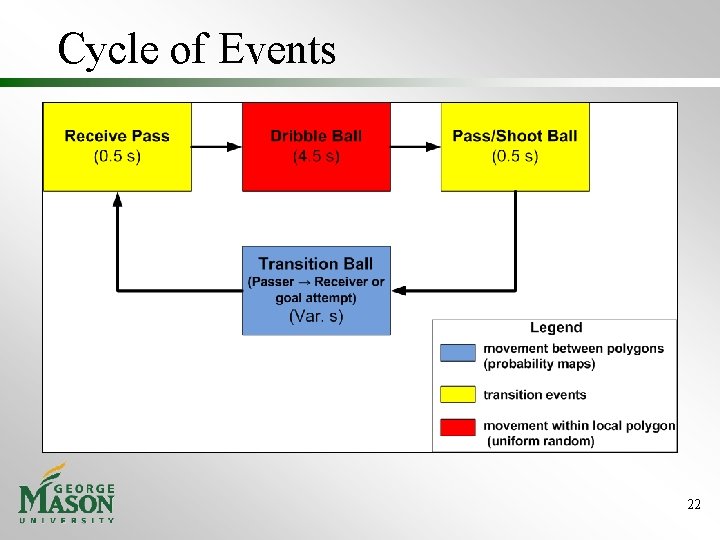

Cycle of Events

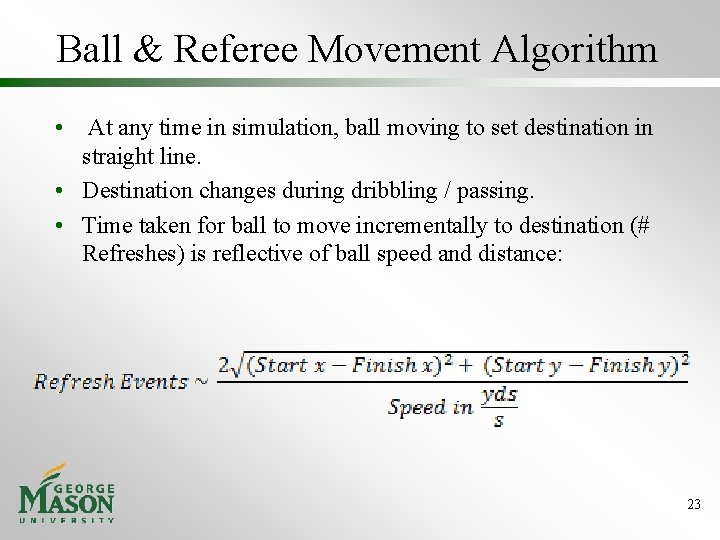

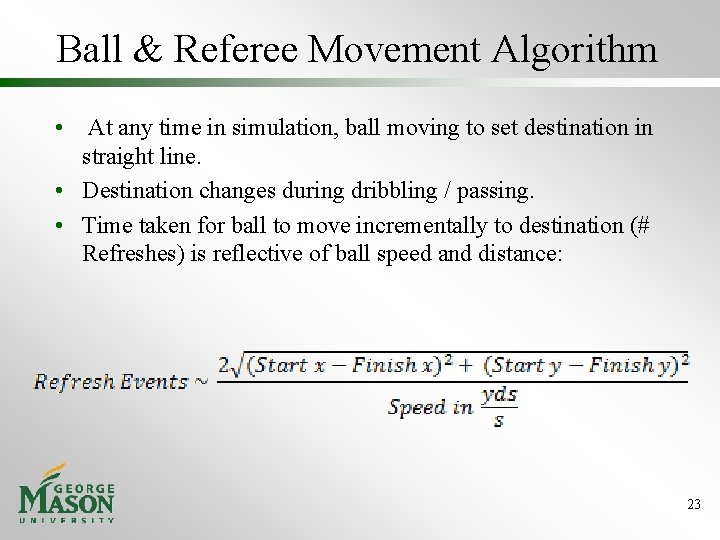

Ball & Referee Movement Algorithm
- At any time in the simulation, the ball is moving toward a set destination in a straight line.
- The destination changes during dribbling and passing.
- The time the ball takes to move incrementally to its destination (number of refreshes) reflects the ball's speed and the distance:
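The exact timing formula appears only as an image on the source slide and is not reproduced here. A minimal sketch, assuming the number of refreshes is the distance divided by the distance covered per 0.5 s refresh, rounded up, with the ball advancing in equal straight-line steps; the function names and the 15 yd/s pass speed are hypothetical.

```python
import math

REFRESH_S = 0.5  # simulation refresh interval (game time)


def refreshes_needed(distance_yd: float, speed_yd_s: float) -> int:
    """Assumed rule: enough 0.5 s refreshes to cover the distance at this speed."""
    return max(1, math.ceil(distance_yd / (speed_yd_s * REFRESH_S)))


def straight_line_path(start, dest, speed_yd_s):
    """Ball position after each refresh, moving in equal steps along a straight line."""
    n = refreshes_needed(math.dist(start, dest), speed_yd_s)
    return [
        (start[0] + (dest[0] - start[0]) * k / n,
         start[1] + (dest[1] - start[1]) * k / n)
        for k in range(1, n + 1)
    ]


# Hypothetical pass: 30 yd at 15 yd/s takes 4 refreshes (2 s of game time)
path = straight_line_path((10.0, 20.0), (40.0, 20.0), 15.0)
print(len(path), path[-1])  # 4 (40.0, 20.0)
```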

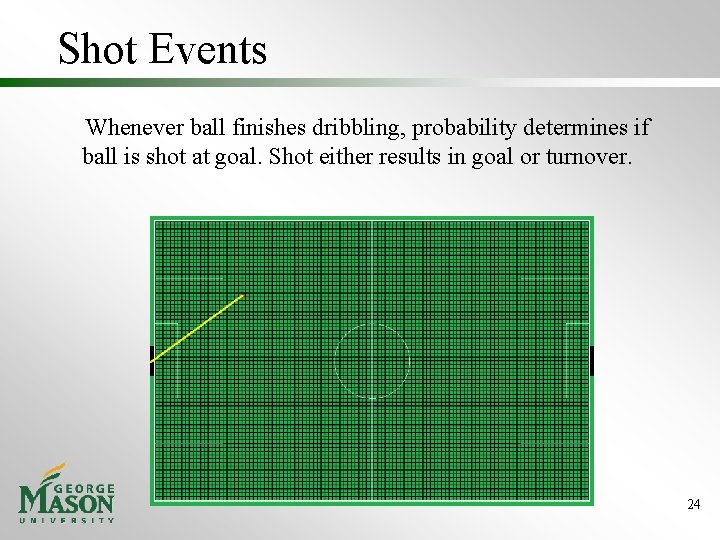

Shot Events
Whenever the ball finishes dribbling, a probability determines whether the ball is shot at goal. A shot results in either a goal or a turnover.
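A sketch of the shot decision and the resulting possession change, assuming per-location probabilities `p_shot` and `p_goal` would be read from the shot probability maps; the values used here are hypothetical. It also encodes the rule that possession changes after shot events.

```python
import random


def after_dribble(p_shot: float, p_goal: float, rng: random.Random) -> str:
    """Resolve the end of a dribble: 'pass' (no shot), 'goal', or 'turnover'.

    p_shot: probability the ball is shot at goal (from a shot probability map).
    p_goal: probability that a shot becomes a goal; otherwise it is a turnover.
    """
    if rng.random() >= p_shot:
        return "pass"
    return "goal" if rng.random() < p_goal else "turnover"


def next_possession(team: int, outcome: str) -> int:
    """Possession switches between team 0 and team 1 after any shot outcome."""
    return 1 - team if outcome in ("goal", "turnover") else team


rng = random.Random(1)
outcomes = [after_dribble(0.1, 0.3, rng) for _ in range(10_000)]  # hypothetical probabilities
```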

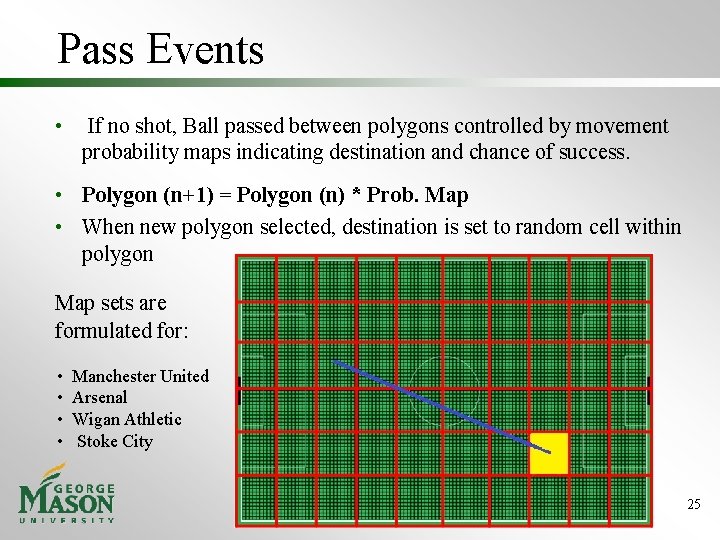

Pass Events
- If there is no shot, the ball is passed between polygons according to movement probability maps that indicate the destination and the chance of success.
- Polygon(n+1) = Polygon(n) × Prob. Map
- When a new polygon is selected, the destination is set to a random cell within that polygon.
- Map sets are formulated for:
  - Manchester United
  - Arsenal
  - Wigan Athletic
  - Stoke City
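The update Polygon(n+1) = Polygon(n) × Prob. Map reads as one step of a Markov chain: the current polygon selects a row of the map, the next polygon is sampled from that row, and a random cell in it becomes the destination. A minimal sketch, using a hypothetical 3-polygon map in place of the 60 movement polygons, plus a separate hypothetical matrix for pass completion.

```python
import random

# Hypothetical 3-polygon map (the simulator uses 60 polygons per map set).
# TRANSITION[i][j]: probability that a pass from polygon i targets polygon j.
TRANSITION = [
    [0.2, 0.5, 0.3],
    [0.1, 0.3, 0.6],
    [0.4, 0.4, 0.2],
]
# SUCCESS[i][j]: probability that a pass from polygon i to polygon j is completed.
SUCCESS = [
    [0.95, 0.85, 0.70],
    [0.90, 0.90, 0.75],
    [0.80, 0.85, 0.90],
]
POLYGON_CELLS = [[(0, 0), (0, 1)], [(1, 0), (1, 1)], [(2, 0), (2, 1)]]  # toy cells


def propagate(dist, matrix):
    """Polygon(n+1) = Polygon(n) * Prob. Map, as a row-vector/matrix product."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


def pass_event(current, rng):
    """Sample one pass: destination polygon, random destination cell, completion."""
    dest = rng.choices(range(len(TRANSITION)), weights=TRANSITION[current], k=1)[0]
    cell = rng.choice(POLYGON_CELLS[dest])
    completed = rng.random() < SUCCESS[current][dest]
    return dest, cell, completed  # an incomplete pass flips possession
```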

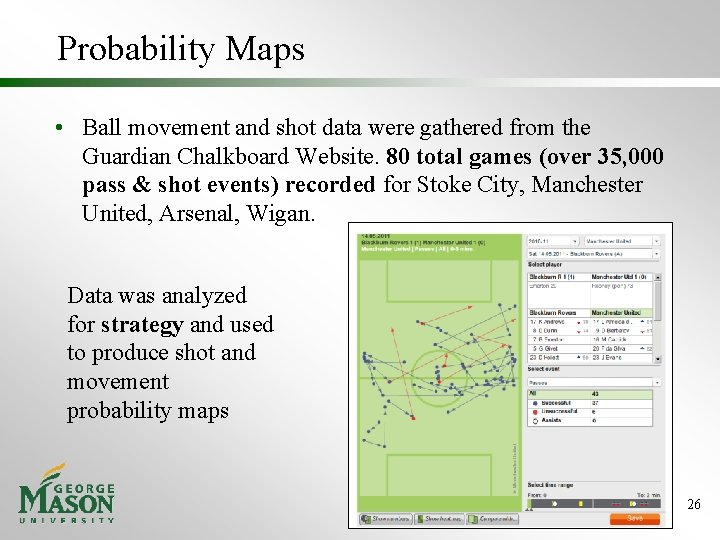

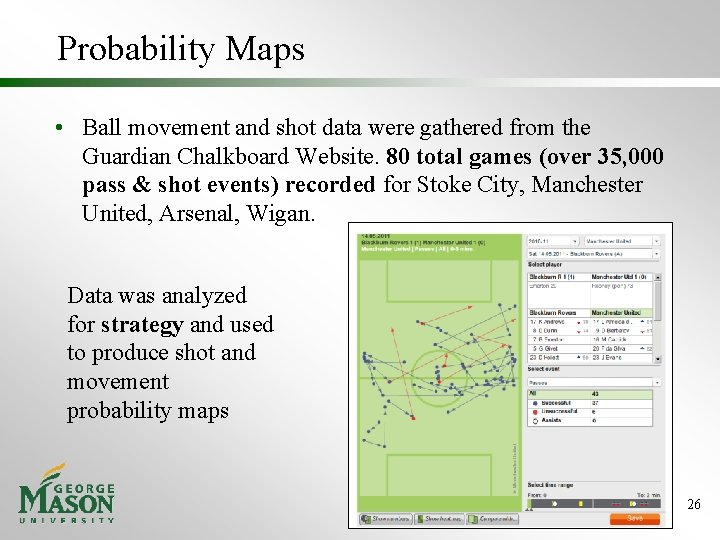

Probability Maps
- Ball movement and shot data were gathered from the Guardian Chalkboard website.
- 80 total games (over 35,000 pass and shot events) were recorded for Stoke City, Manchester United, Arsenal, and Wigan Athletic.
- The data were analyzed for strategy and used to produce shot and movement probability maps.

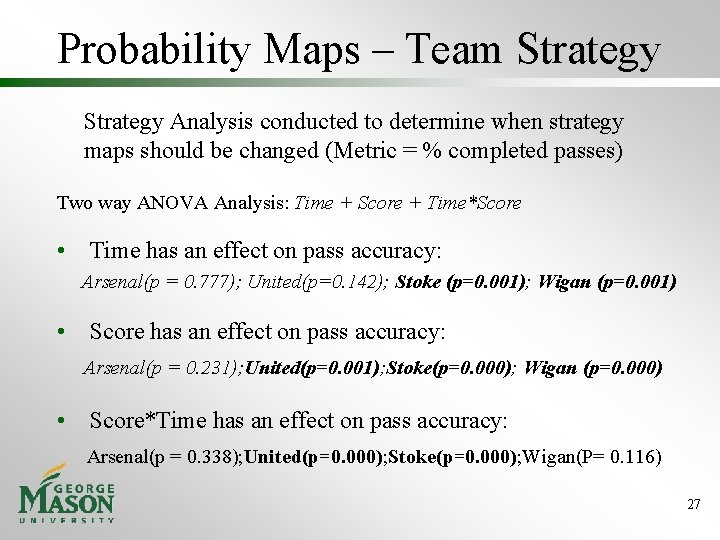

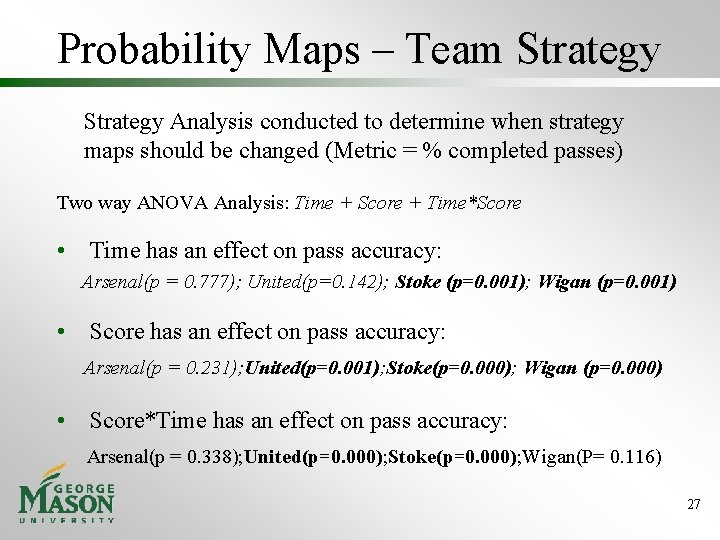

Probability Maps – Team Strategy
An analysis was conducted to determine when strategy maps should be changed (metric: % completed passes), using a two-way ANOVA with terms Time + Score + Time*Score. p-values for each term's effect on pass accuracy:

| Term | Arsenal | United | Stoke | Wigan |
|---|---|---|---|---|
| Time | 0.777 | 0.142 | 0.001 | 0.001 |
| Score | 0.231 | 0.001 | 0.000 | 0.000 |
| Time*Score | 0.338 | 0.000 | 0.000 | 0.116 |
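One way to read the table is to mark which terms are significant for each team. The slide does not state a significance level, so α = 0.05 is an assumption here; the p-values are the ones reported above.

```python
ALPHA = 0.05  # assumed significance level; the slide does not state one

# p-values reported on the slide (two-way ANOVA on % completed passes)
P_VALUES = {
    "Time":       {"Arsenal": 0.777, "United": 0.142, "Stoke": 0.001, "Wigan": 0.001},
    "Score":      {"Arsenal": 0.231, "United": 0.001, "Stoke": 0.000, "Wigan": 0.000},
    "Time*Score": {"Arsenal": 0.338, "United": 0.000, "Stoke": 0.000, "Wigan": 0.116},
}

# For each ANOVA term, the teams whose p-value falls below ALPHA
significant = {
    term: sorted(team for team, p in by_team.items() if p < ALPHA)
    for term, by_team in P_VALUES.items()
}
for term, teams in significant.items():
    print(f"{term}: {', '.join(teams) or 'none'}")
```

Under that assumption, Arsenal's pass accuracy shows no significant dependence on time, score, or their interaction, while Stoke, United, and Wigan each have at least one significant term.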

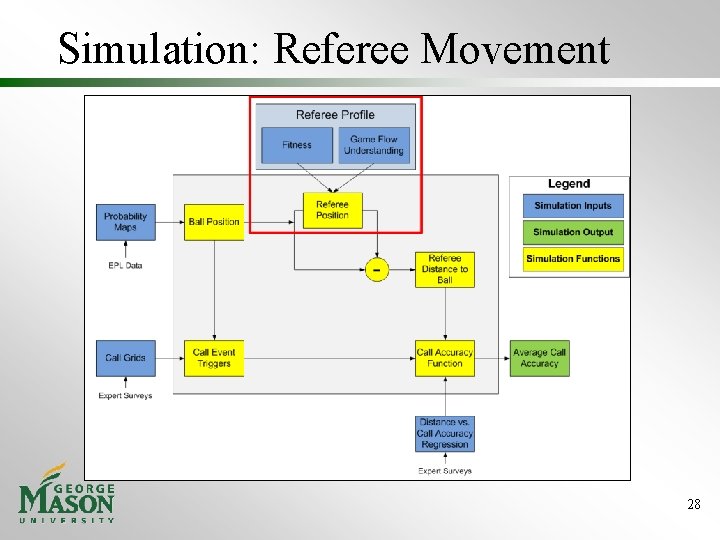

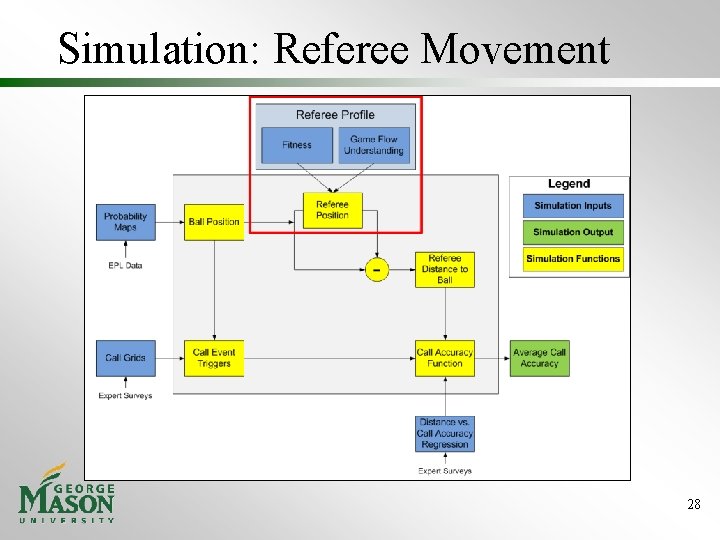

Simulation: Referee Movement

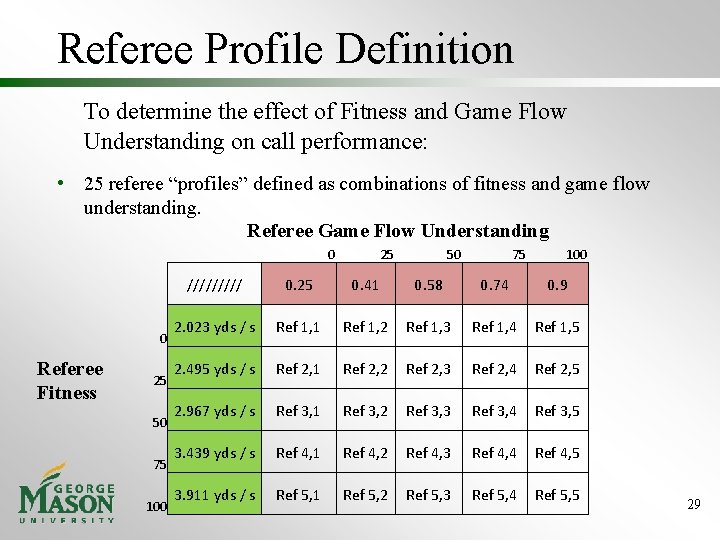

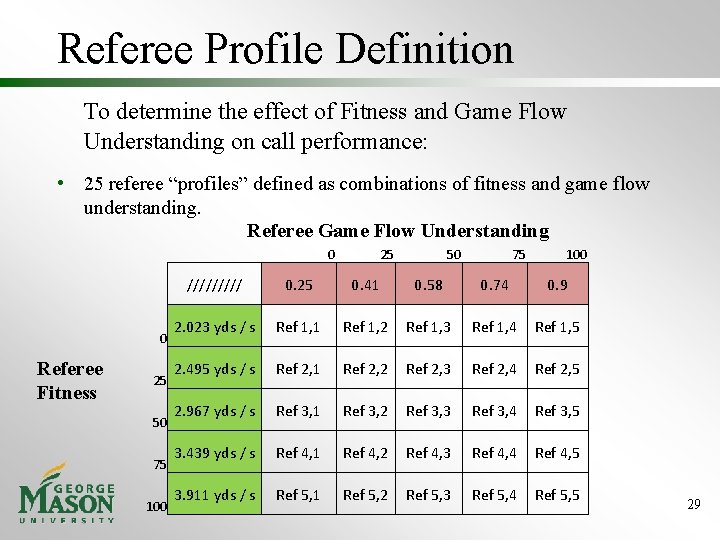

Referee Profile Definition
To determine the effect of fitness and Game Flow Understanding on call performance, 25 referee “profiles” were defined as combinations of fitness and GFU. Fitness and GFU levels correspond to scores of 0, 25, 50, 75, and 100; fitness is expressed as movement speed and GFU as a value from 0.25 to 0.9.

| Fitness (speed) \ GFU | 0.25 | 0.41 | 0.58 | 0.74 | 0.90 |
|---|---|---|---|---|---|
| 2.023 yd/s | Ref 1,1 | Ref 1,2 | Ref 1,3 | Ref 1,4 | Ref 1,5 |
| 2.495 yd/s | Ref 2,1 | Ref 2,2 | Ref 2,3 | Ref 2,4 | Ref 2,5 |
| 2.967 yd/s | Ref 3,1 | Ref 3,2 | Ref 3,3 | Ref 3,4 | Ref 3,5 |
| 3.439 yd/s | Ref 4,1 | Ref 4,2 | Ref 4,3 | Ref 4,4 | Ref 4,5 |
| 3.911 yd/s | Ref 5,1 | Ref 5,2 | Ref 5,3 | Ref 5,4 | Ref 5,5 |
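The 25 profiles form a 5 × 5 grid. This sketch stores each profile's movement speed and GFU value from the table (the dictionary layout is a hypothetical choice) and shows that the speeds are evenly spaced, 0.472 yd/s apart.

```python
# Values read from the profile table: speed per fitness row, GFU value per column.
FITNESS_SPEEDS_YD_S = [2.023, 2.495, 2.967, 3.439, 3.911]
GFU_VALUES = [0.25, 0.41, 0.58, 0.74, 0.90]

# Profile "Ref i, j": fitness row i, GFU column j (1-based, as on the slide).
PROFILES = {
    (i + 1, j + 1): {"speed_yd_s": speed, "gfu": gfu}
    for i, speed in enumerate(FITNESS_SPEEDS_YD_S)
    for j, gfu in enumerate(GFU_VALUES)
}

# Spacing between consecutive fitness speeds
steps = [round(b - a, 3) for a, b in zip(FITNESS_SPEEDS_YD_S, FITNESS_SPEEDS_YD_S[1:])]
print(len(PROFILES), steps)  # 25 [0.472, 0.472, 0.472, 0.472]
```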

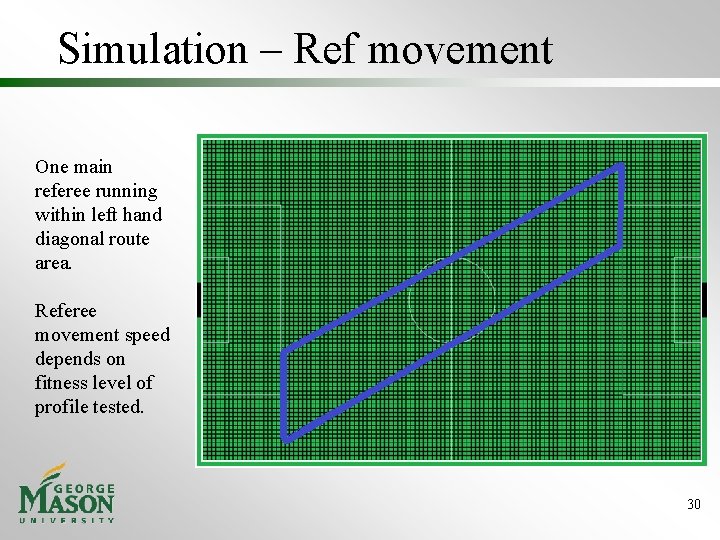

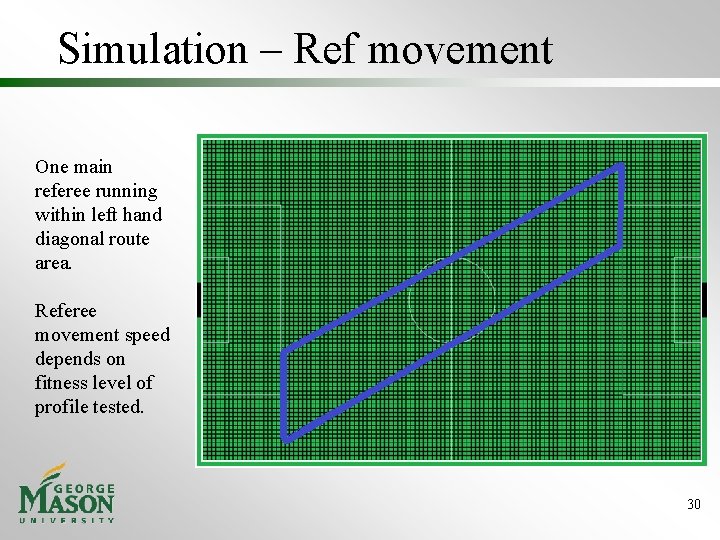

Simulation – Ref Movement
One main referee runs within the left-hand diagonal route area. Referee movement speed depends on the fitness level of the profile being tested.

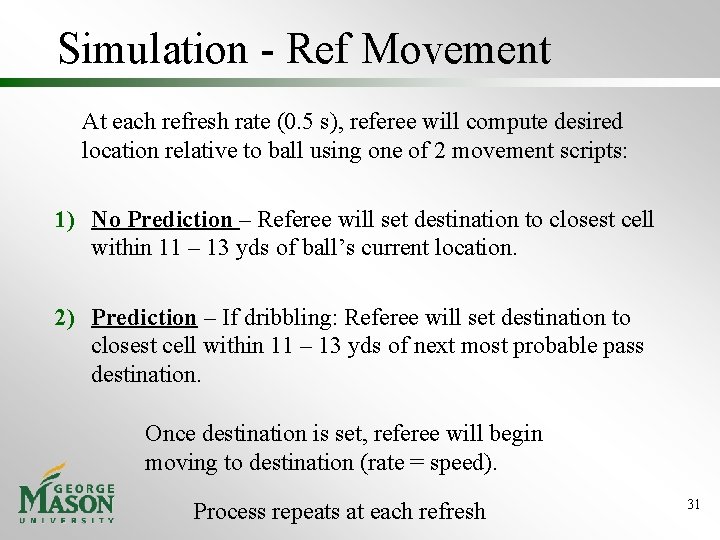

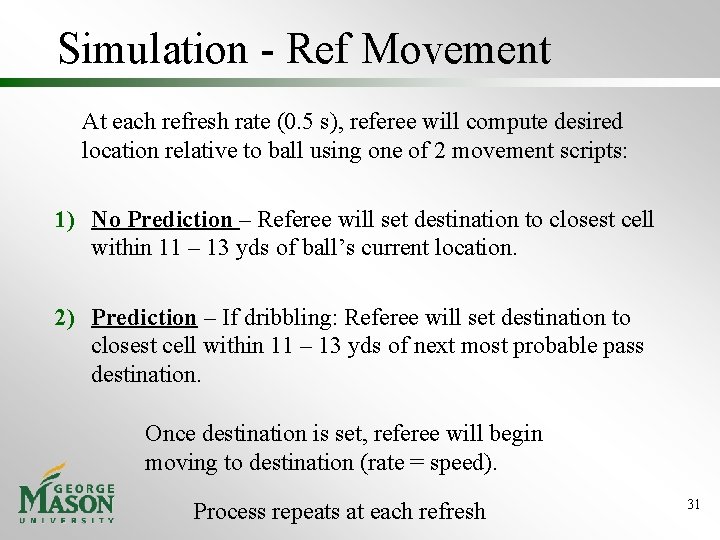

Simulation – Ref Movement
At each refresh (every 0.5 s), the referee computes a desired location relative to the ball using one of two movement scripts:
1. No prediction: the referee sets the destination to the closest cell within 11–13 yds of the ball's current location.
2. Prediction: if the ball is being dribbled, the referee sets the destination to the closest cell within 11–13 yds of the next most probable pass destination.

Once the destination is set, the referee begins moving toward it (rate = speed). The process repeats at each refresh.
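A sketch of the destination rule, under two assumptions not stated on the slide: “closest cell” means the cell nearest the referee's current position, and cells are addressed by integer yard coordinates on the 115 × 74 yd field. The `target` is the ball's current cell (script 1) or the most probable next pass destination (script 2); function names are hypothetical.

```python
import math

FIELD_LENGTH_YD, FIELD_WIDTH_YD = 115, 74
REFRESH_S = 0.5


def ring_destination(ref_pos, target, r_min=11.0, r_max=13.0):
    """Cell nearest the referee among cells r_min..r_max yd from the target."""
    tx, ty = target
    best, best_d = None, math.inf
    for x in range(max(0, math.floor(tx - r_max)),
                   min(FIELD_LENGTH_YD, math.ceil(tx + r_max) + 1)):
        for y in range(max(0, math.floor(ty - r_max)),
                       min(FIELD_WIDTH_YD, math.ceil(ty + r_max) + 1)):
            if r_min <= math.hypot(x - tx, y - ty) <= r_max:
                d = math.hypot(x - ref_pos[0], y - ref_pos[1])
                if d < best_d:
                    best, best_d = (x, y), d
    return best  # None only if no cell of the ring lies on the field


def step_towards(pos, dest, speed_yd_s):
    """Move up to speed * 0.5 s along the straight line toward dest."""
    dx, dy = dest[0] - pos[0], dest[1] - pos[1]
    dist = math.hypot(dx, dy)
    step = speed_yd_s * REFRESH_S
    if dist <= step:
        return (float(dest[0]), float(dest[1]))
    return (pos[0] + dx / dist * step, pos[1] + dy / dist * step)


# Referee 7 yd behind the ball: the nearest ring cell is 11 yd from the ball.
dest = ring_destination((50, 37), (57, 37))
print(dest, step_towards((50.0, 37.0), dest, 2.967))
```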

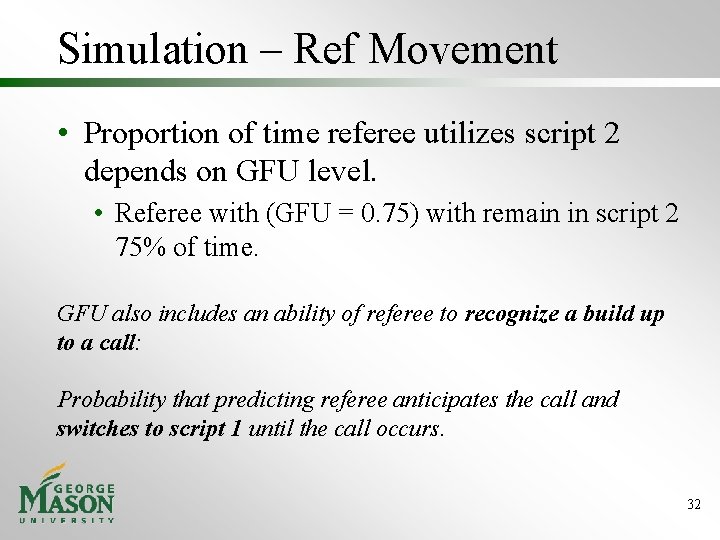

Simulation – Ref Movement
- The proportion of time the referee uses script 2 depends on GFU level.
- A referee with GFU = 0.75 remains in script 2 75% of the time.
- GFU also includes the referee's ability to recognize a build-up to a call: the probability that a predicting referee anticipates the call and switches to script 1 until the call occurs.
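A sketch of the script choice, assuming the script is redrawn at each refresh with probability equal to the referee's GFU value, so that the long-run share of time in script 2 matches GFU. `p_anticipate`, the chance of recognizing a build-up to a call, is a hypothetical parameter.

```python
import random


def choose_script(gfu: float, rng: random.Random) -> int:
    """Script 2 (prediction) with probability gfu, otherwise script 1 (no prediction)."""
    return 2 if rng.random() < gfu else 1


def script_during_buildup(script: int, p_anticipate: float, rng: random.Random) -> int:
    """A predicting referee who anticipates a call switches to script 1 until the call."""
    if script == 2 and rng.random() < p_anticipate:
        return 1
    return script


rng = random.Random(7)
share = sum(choose_script(0.75, rng) == 2 for _ in range(100_000)) / 100_000
print(round(share, 3))  # approximately 0.75
```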

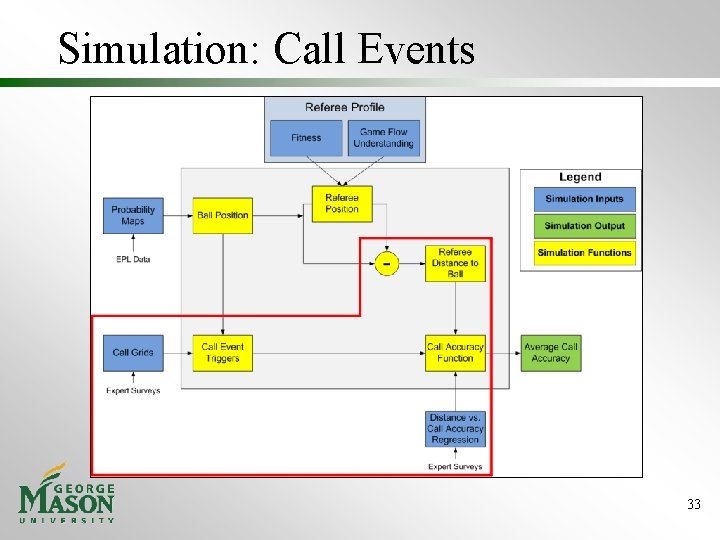

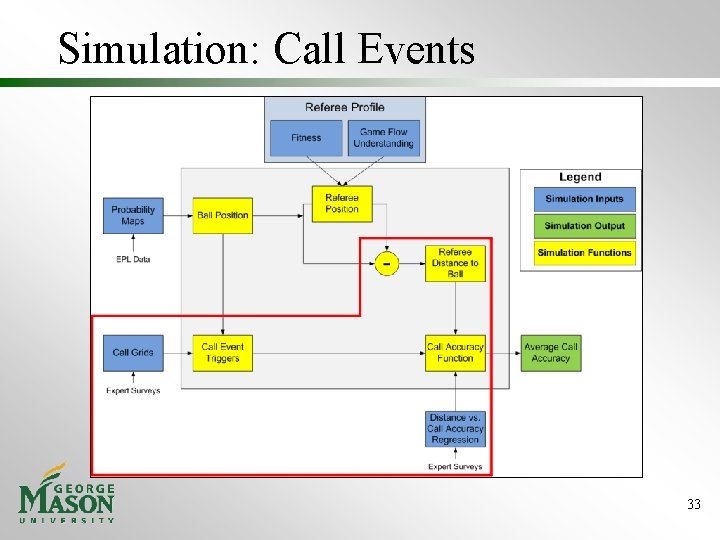

Simulation: Call Events

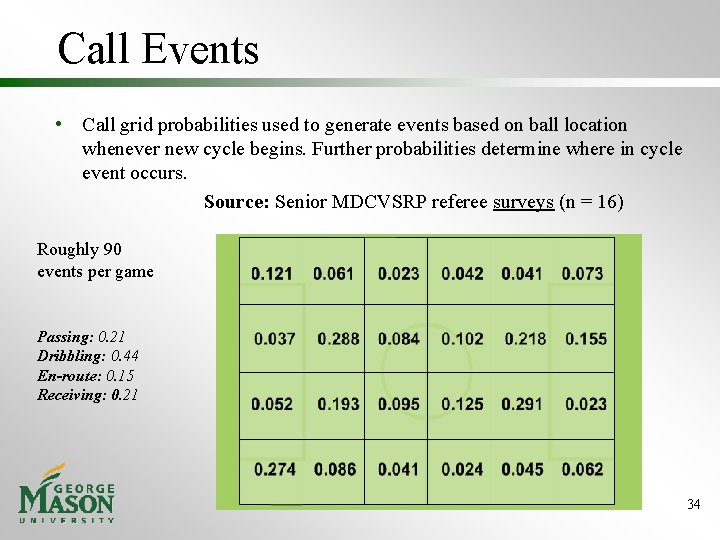

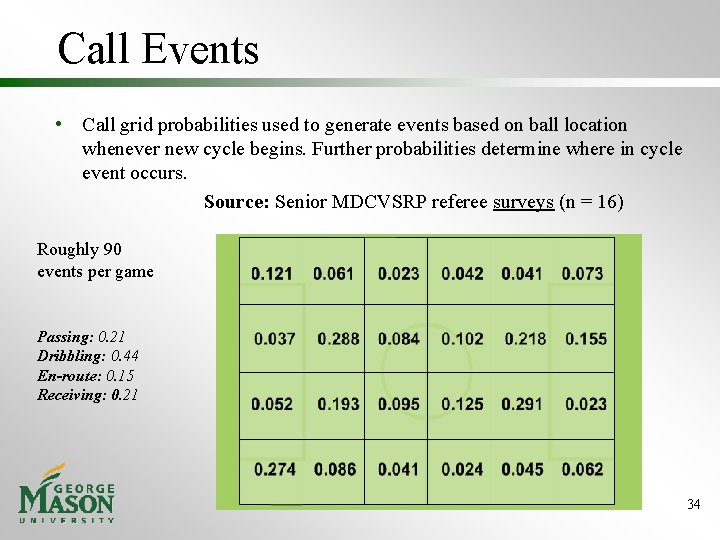

Call Events
- Call grid probabilities are used to generate events based on ball location whenever a new cycle begins.
- Further probabilities determine where in the cycle the event occurs: passing 0.21, dribbling 0.44, en-route 0.15, receiving 0.21.
- Roughly 90 events per game.

Source: Senior MDCVSRP referee surveys (n = 16)
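A sketch of event generation at the start of a cycle, assuming a per-call-grid trigger probability (`p_call_grid`, hypothetical) followed by a draw of the cycle phase using the survey shares above. The shares sum to 1.01 because of rounding; `random.choices` treats them as relative weights.

```python
import random

# Where in the cycle a call event occurs (survey-based shares from the slide).
PHASES = ["passing", "dribbling", "en-route", "receiving"]
PHASE_WEIGHTS = [0.21, 0.44, 0.15, 0.21]  # relative weights; sum is 1.01


def maybe_call_event(p_call_grid: float, rng: random.Random):
    """At the start of a cycle: trigger an event with the call grid's probability,
    then pick the phase of the cycle in which it occurs. Returns None if no event."""
    if rng.random() >= p_call_grid:
        return None
    return rng.choices(PHASES, weights=PHASE_WEIGHTS, k=1)[0]


rng = random.Random(11)
draws = [maybe_call_event(1.0, rng) for _ in range(100_000)]
share_dribbling = draws.count("dribbling") / len(draws)  # about 0.44 / 1.01
```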

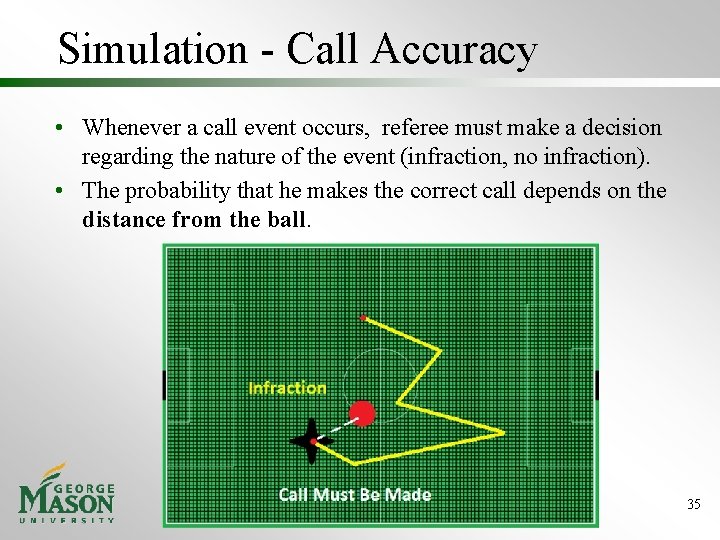

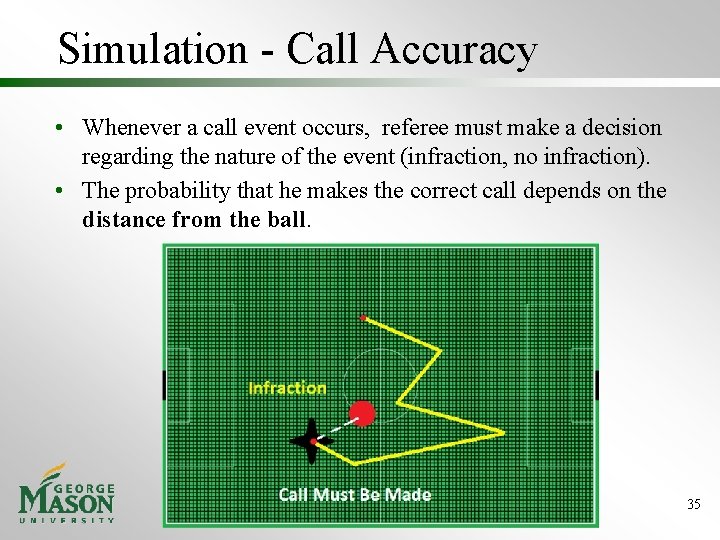

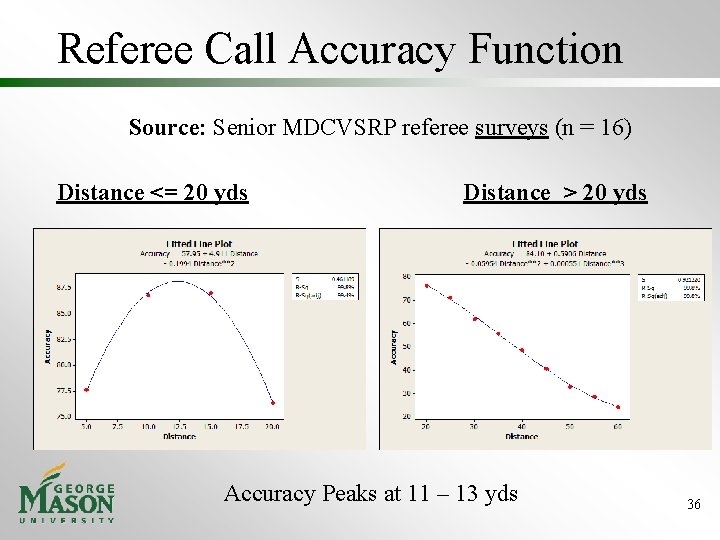

Simulation – Call Accuracy
- Whenever a call event occurs, the referee must decide on the nature of the event (infraction or no infraction).
- The probability of making the correct call depends on the referee's distance from the ball.

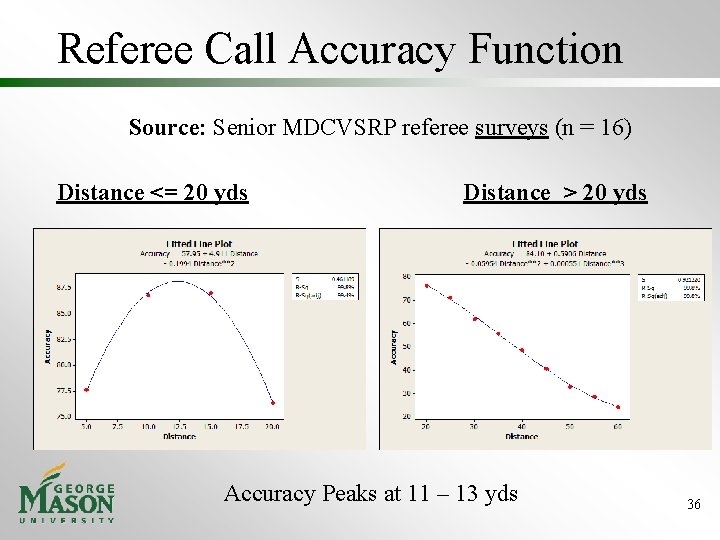

Referee Call Accuracy Function
- The function is shown separately for distances ≤ 20 yds and > 20 yds.
- Accuracy peaks at 11–13 yds.

Source: Senior MDCVSRP referee surveys (n = 16)
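The survey-based regression itself is shown only as a figure, so the curve in this sketch is a HYPOTHETICAL placeholder that only mimics the stated features (a peak at 11–13 yd and a separate segment above 20 yd). The call decision is a Bernoulli draw whose success probability is the accuracy at the referee's distance; the fitted function would have to replace `placeholder_accuracy` for real use.

```python
import random


def placeholder_accuracy(distance_yd: float) -> float:
    """HYPOTHETICAL curve, not the survey regression: 0.90 between 11 and 13 yd,
    minus 0.01 per yd outside that band up to 20 yd, then minus 0.005 per yd
    beyond 20 yd, floored at 0.5."""
    if distance_yd <= 20:
        return 0.90 - 0.01 * max(0.0, 11 - distance_yd, distance_yd - 13)
    return max(0.5, 0.83 - 0.005 * (distance_yd - 20))


def make_call(distance_yd, accuracy_fn, rng):
    """Return True if the referee's call (infraction / no infraction) is correct."""
    return rng.random() < accuracy_fn(distance_yd)
```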

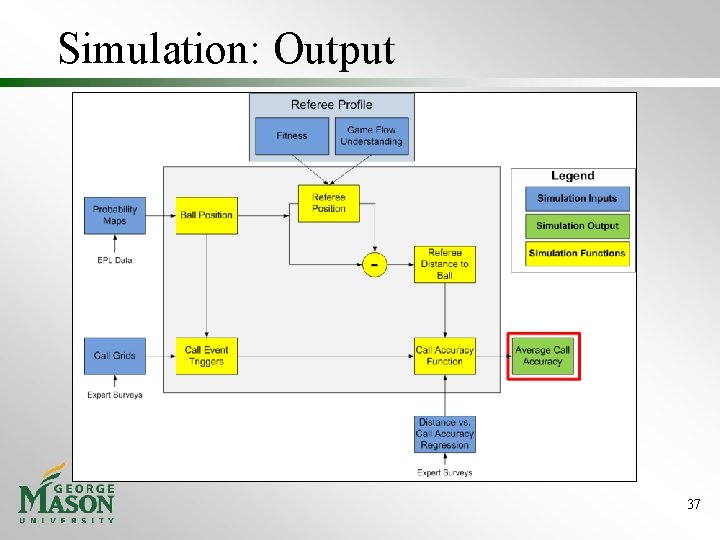

Simulation: Output

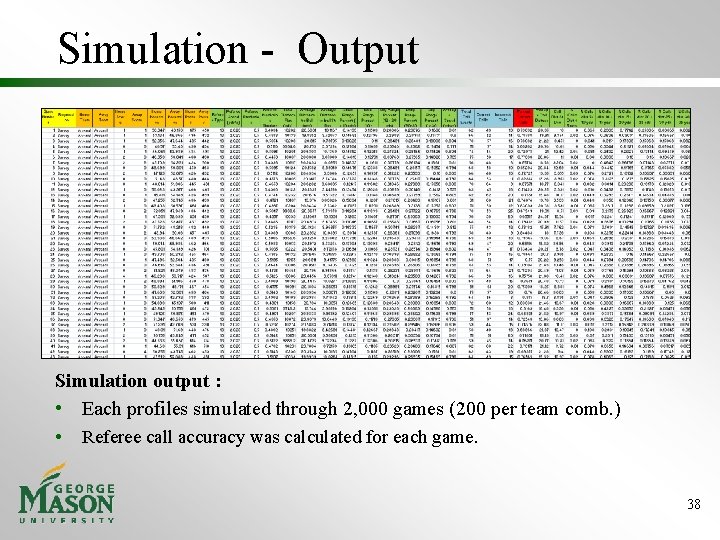

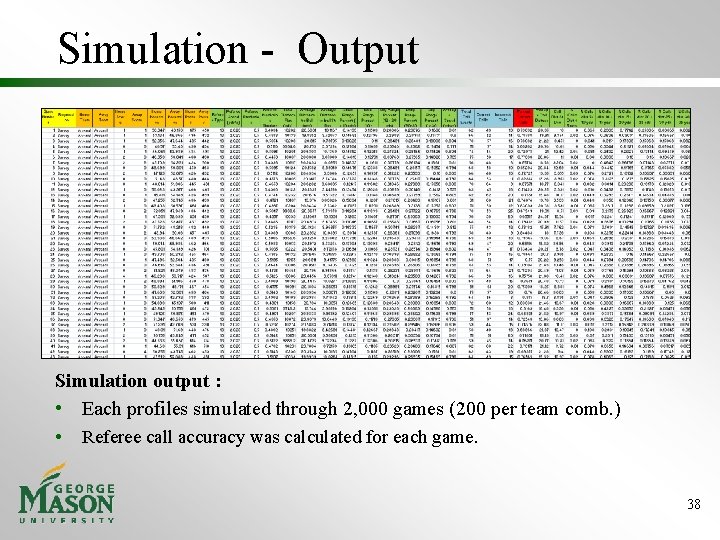

Simulation – Output
- Each profile was simulated through 2,000 games (200 per team combination).
- Referee call accuracy was calculated for each game.
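The counts imply 2,000 / 200 = 10 team combinations per profile. One reading consistent with that number, not confirmed by the slides, is every unordered pairing of the four modeled teams, including a team against itself (4 same-team pairings + 6 distinct pairings = 10).

```python
from itertools import combinations_with_replacement

TEAMS = ["Manchester United", "Arsenal", "Wigan Athletic", "Stoke City"]
GAMES_PER_COMBINATION = 200

# Assumption: unordered pairings, including a team facing its own map set.
combos = list(combinations_with_replacement(TEAMS, 2))
games_per_profile = len(combos) * GAMES_PER_COMBINATION
print(len(combos), games_per_profile)  # 10 2000
```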

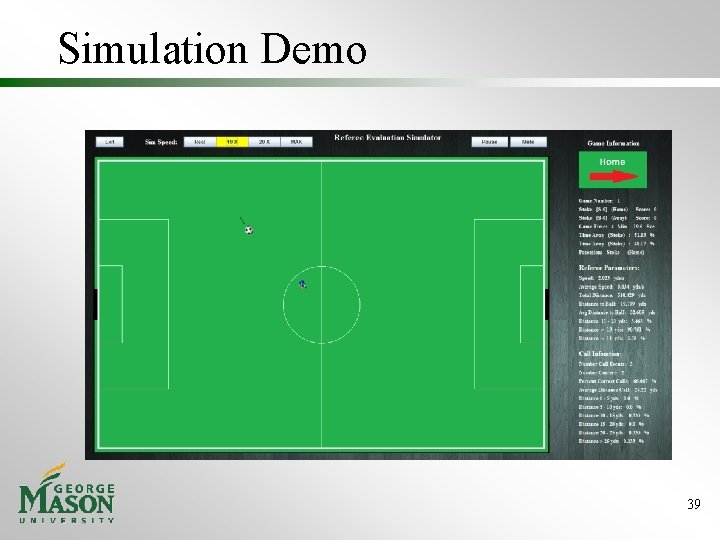

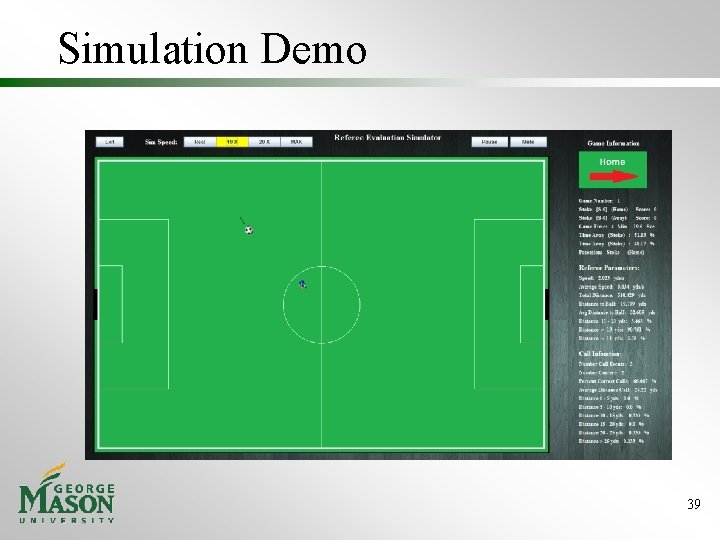

Simulation Demo

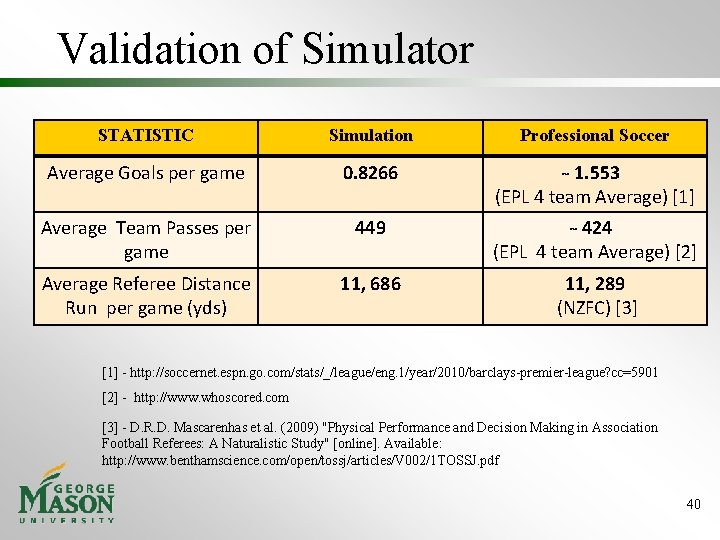

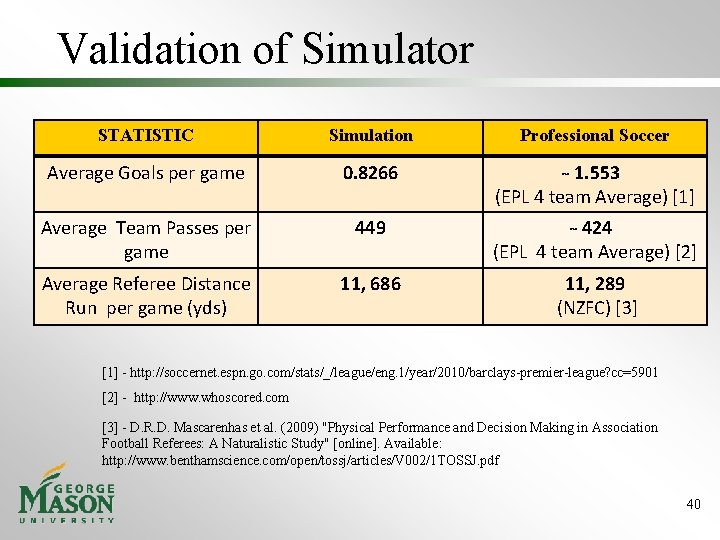

Validation of Simulator

| Statistic | Simulation | Professional soccer |
|---|---|---|
| Average goals per game | 0.8266 | 1.553 (EPL 4-team average) [1] |
| Average team passes per game | 449 | 424 (EPL 4-team average) [2] |
| Average referee distance run per game (yds) | 11,686 | 11,289 (NZFC) [3] |

[1] http://soccernet.espn.go.com/stats/_/league/eng.1/year/2010/barclays-premier-league?cc=5901
[2] http://www.whoscored.com
[3] D. R. D. Mascarenhas et al. (2009), "Physical Performance and Decision Making in Association Football Referees: A Naturalistic Study" [online]. Available: http://www.benthamscience.com/open/tossj/articles/V002/1TOSSJ.pdf

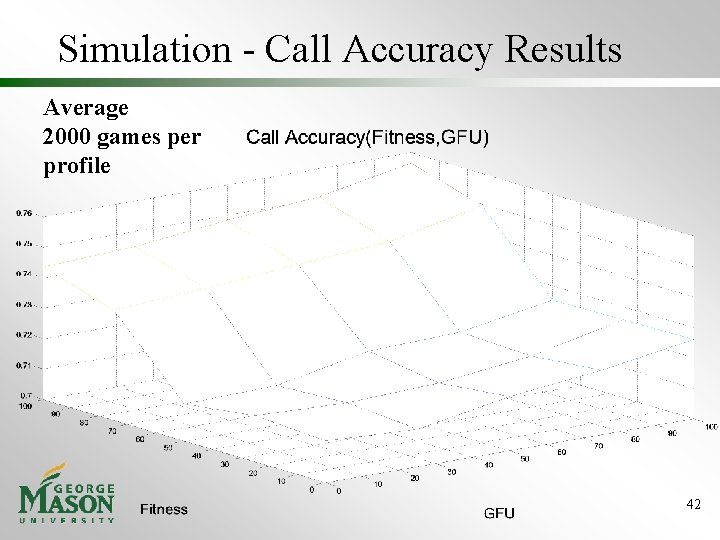

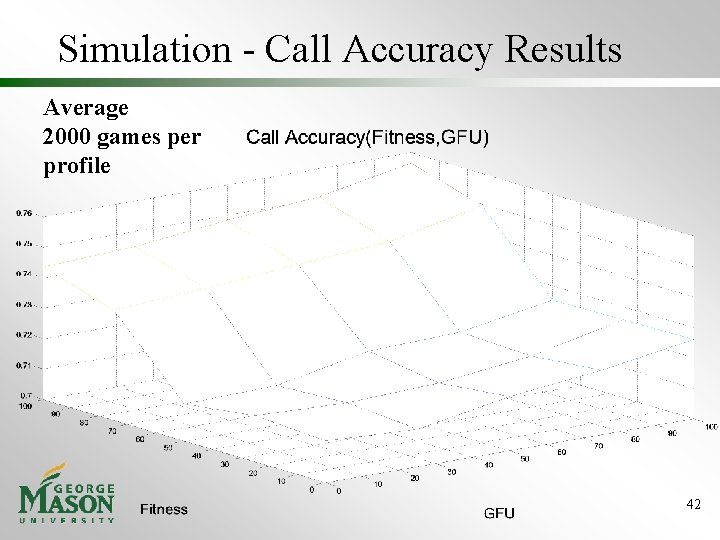

Simulation – Call Accuracy Results (average of 2,000 games per profile)

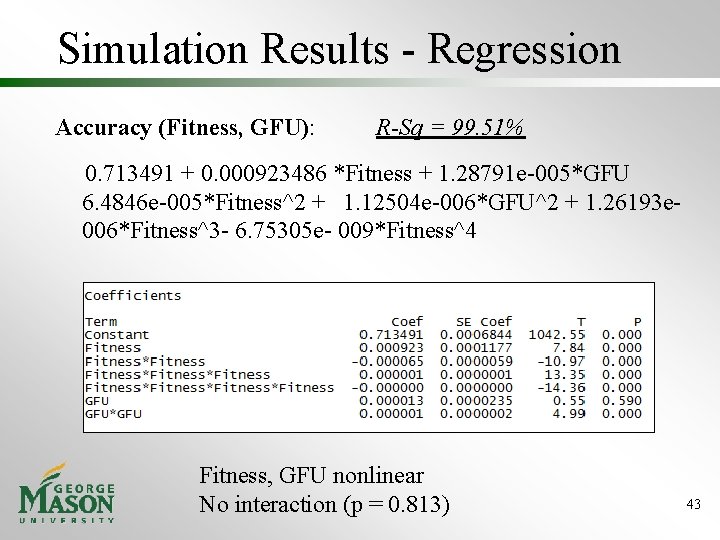

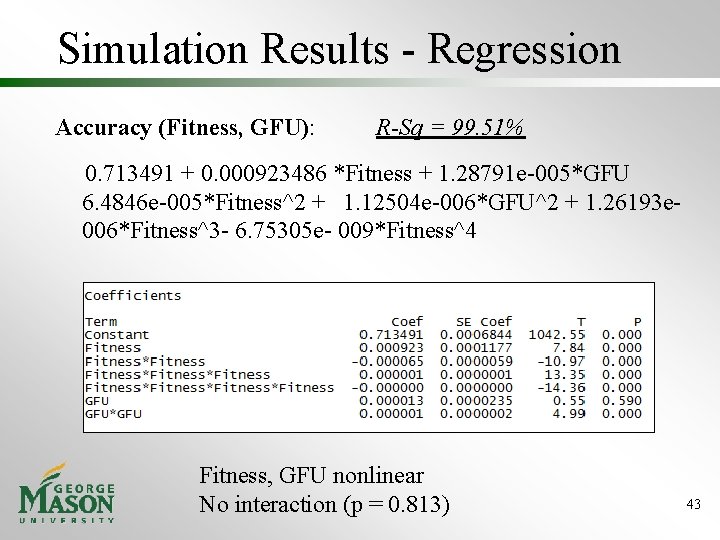

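Simulation Results – Regression
Call accuracy as a function of fitness F and GFU G (both on a 0–100 scale), R² = 99.51%:

$$
\mathrm{Accuracy}(F, G) = 0.713491 + 0.000923486\,F + 1.28791\times10^{-5}\,G - 6.4846\times10^{-5}\,F^{2} + 1.12504\times10^{-6}\,G^{2} + 1.26193\times10^{-6}\,F^{3} - 6.75305\times10^{-9}\,F^{4}
$$

- The effects of fitness and GFU are nonlinear.
- There is no interaction between them (p = 0.813).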
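The regression written as a function. Two signs are lost in the extracted slide text and are assumed here: the F² coefficient is negative (with a plus sign the prediction would exceed 1, about 1.04 at F = G = 50), and the exponent of the F³ coefficient is -6. With these signs, the expected accuracy over the Normal(50, 15) referee population used in the utility analysis works out to about 0.721, in line with the No Assessment result.

```python
def call_accuracy(fitness: float, gfu: float) -> float:
    """Call-accuracy regression from the slide (fitness and GFU on a 0-100 scale)."""
    f, g = fitness, gfu
    return (0.713491
            + 0.000923486 * f
            + 1.28791e-5 * g
            - 6.4846e-5 * f**2      # sign assumed negative (lost in extraction)
            + 1.12504e-6 * g**2
            + 1.26193e-6 * f**3     # exponent assumed to be -6
            - 6.75305e-9 * f**4)


print(round(call_accuracy(50, 50), 4))   # 0.7165
print(round(call_accuracy(100, 50), 4))  # 0.7475
```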

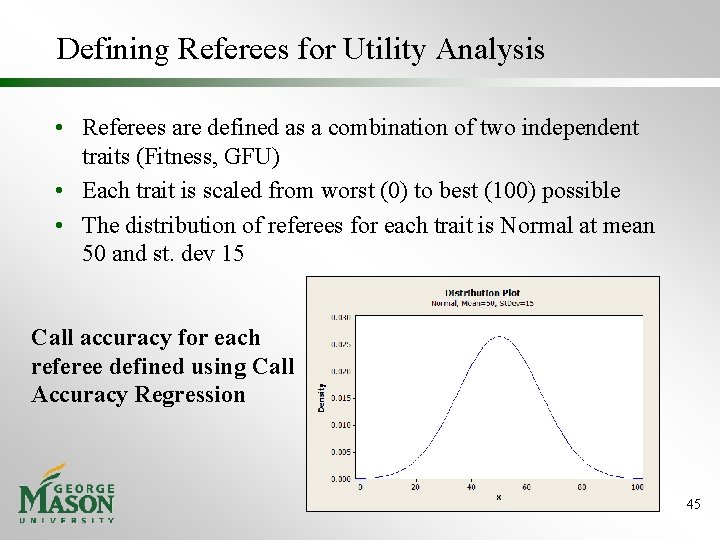

Defining Referees for Utility Analysis
- Referees are defined as a combination of two independent traits (fitness, GFU).
- Each trait is scaled from worst (0) to best (100) possible.
- Each trait is normally distributed across referees with mean 50 and standard deviation 15.
- Call accuracy for each referee is defined using the call accuracy regression.
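A sketch of the referee pool, assuming trait draws outside 0–100 are clipped to the scale (the slide does not say how such draws are handled). `Referee` and `make_pool` are hypothetical names; accuracy comes from the call-accuracy regression with the F² term taken as negative and the F³ exponent as -6.

```python
import random
from dataclasses import dataclass


def call_accuracy(f: float, g: float) -> float:
    """Call-accuracy regression (fitness f and GFU g on a 0-100 scale)."""
    return (0.713491 + 0.000923486 * f + 1.28791e-5 * g - 6.4846e-5 * f**2
            + 1.12504e-6 * g**2 + 1.26193e-6 * f**3 - 6.75305e-9 * f**4)


@dataclass
class Referee:
    fitness: float
    gfu: float
    accuracy: float


def make_pool(n: int, rng: random.Random, mean: float = 50.0, sd: float = 15.0) -> list:
    """Draw independent Normal(mean, sd) traits, clipped to the 0-100 scale (assumption)."""
    pool = []
    for _ in range(n):
        f = min(100.0, max(0.0, rng.gauss(mean, sd)))
        g = min(100.0, max(0.0, rng.gauss(mean, sd)))
        pool.append(Referee(f, g, call_accuracy(f, g)))
    return pool


pool = make_pool(5000, random.Random(2024))
```

The pool's mean accuracy comes out near 0.721, which is the utility reported for No Assessment.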

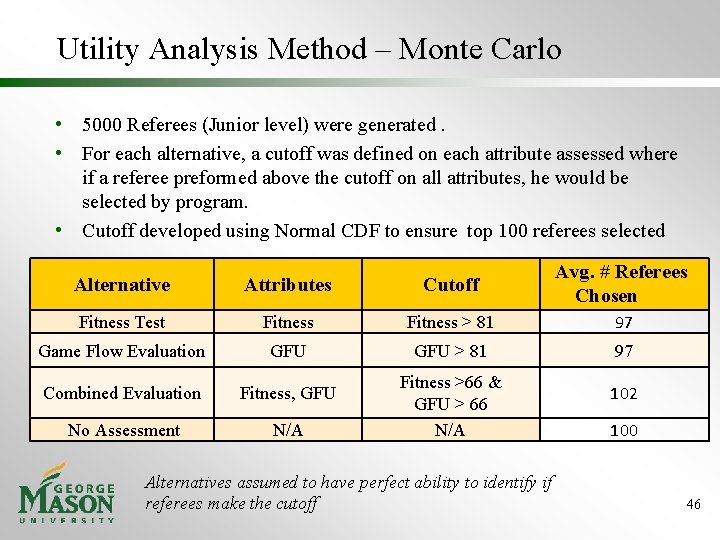

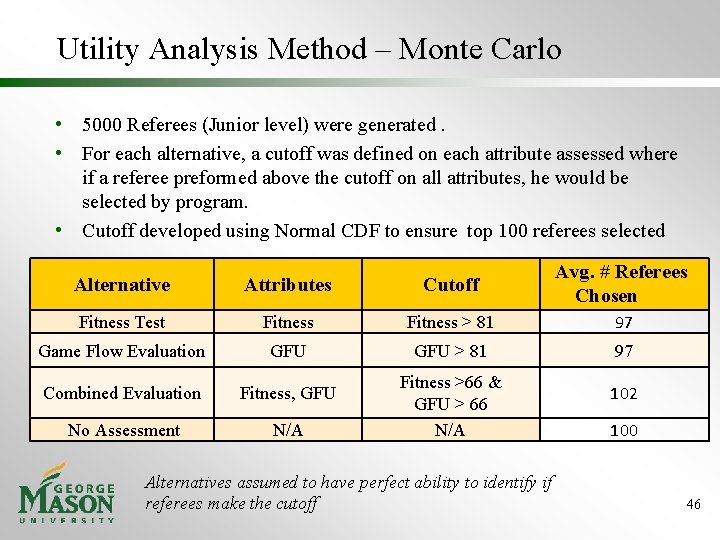

Utility Analysis Method – Monte Carlo
- 5,000 referees (junior level) were generated.
- For each alternative, a cutoff was defined on each assessed attribute; a referee who performed above the cutoff on all assessed attributes would be selected by the program.
- Cutoffs were developed using the Normal CDF so that approximately the top 100 referees are selected.

| Alternative | Attributes | Cutoff | Avg. # referees chosen |
|---|---|---|---|
| Fitness Test | Fitness | Fitness > 81 | 97 |
| Game Flow Evaluation | GFU | GFU > 81 | 97 |
| Combined Evaluation | Fitness, GFU | Fitness > 66 & GFU > 66 | 102 |
| No Assessment | N/A | N/A | 100 |

The alternatives are assumed to identify perfectly whether referees make the cutoff.
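The cutoffs and average counts can be reproduced from the Normal(50, 15) trait distribution: with one assessed trait, the cutoff c satisfies P(X > c) = 100/5000; with two independent traits that must both exceed c, P(X > c)² = 100/5000. A sketch using Python's `statistics.NormalDist`:

```python
from math import sqrt
from statistics import NormalDist

POOL_SIZE = 5000
TARGET = 100
trait = NormalDist(mu=50, sigma=15)

# One assessed trait: P(X > c) = 100 / 5000.
single_cutoff = trait.inv_cdf(1 - TARGET / POOL_SIZE)
# Two independent traits, both above c: P(X > c)^2 = 100 / 5000.
combined_cutoff = trait.inv_cdf(1 - sqrt(TARGET / POOL_SIZE))

# Expected counts once the cutoffs are rounded to whole points, as on the slide.
expected_single = POOL_SIZE * (1 - trait.cdf(81))
expected_combined = POOL_SIZE * (1 - trait.cdf(66)) ** 2

print(round(single_cutoff, 1), round(combined_cutoff, 1))  # 80.8 66.1
print(round(expected_single), round(expected_combined))    # 97 102
```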

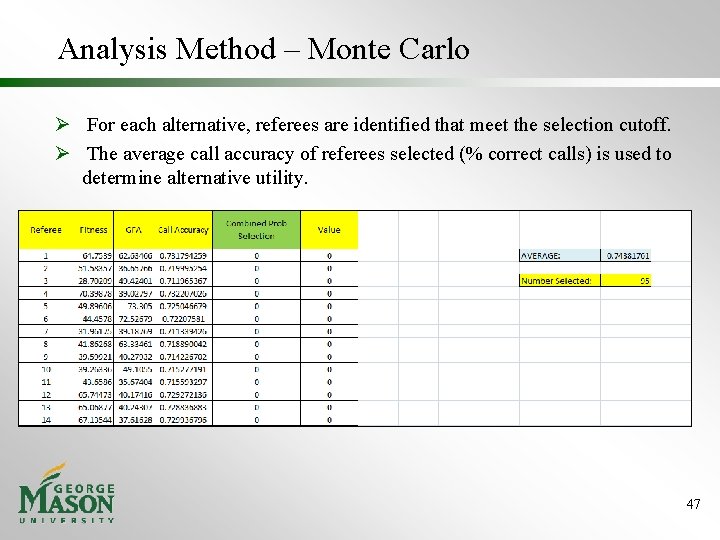

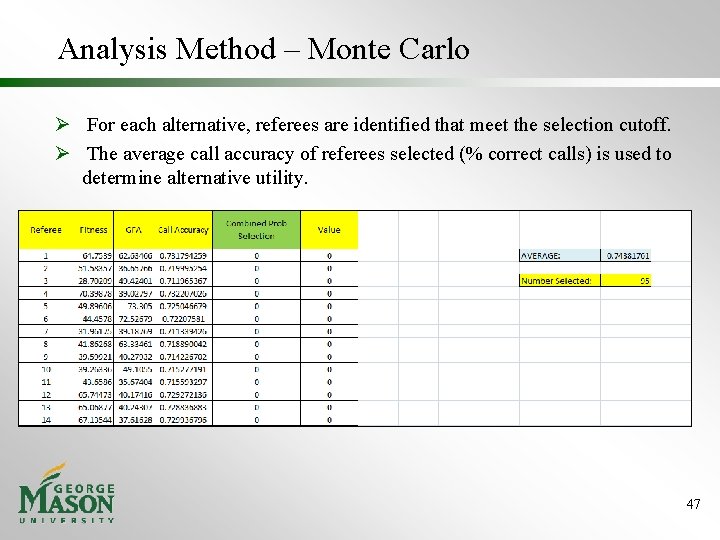

Analysis Method – Monte Carlo
- For each alternative, the referees who meet the selection cutoff are identified.
- The average call accuracy (% correct calls) of the selected referees is used to determine the alternative's utility.
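A compact sketch of the whole Monte Carlo loop: generate a pool, apply each alternative's cutoff, average the selected referees' accuracy, and repeat 30 times. Several details are assumptions rather than the project's stated method: No Assessment is scored by drawing 100 referees at random, traits are clipped to 0–100, and the 95% half-width uses a Student t quantile for 29 degrees of freedom (about 2.045). Names are hypothetical.

```python
import random
import statistics


def call_accuracy(f, g):
    """Call-accuracy regression (fitness f and GFU g on a 0-100 scale)."""
    return (0.713491 + 0.000923486 * f + 1.28791e-5 * g - 6.4846e-5 * f**2
            + 1.12504e-6 * g**2 + 1.26193e-6 * f**3 - 6.75305e-9 * f**4)


# Selection rules per alternative (cutoffs from the slides); None = no assessment.
RULES = {
    "Fitness Test": lambda f, g: f > 81,
    "Game Flow Evaluation": lambda f, g: g > 81,
    "Combined Evaluation": lambda f, g: f > 66 and g > 66,
    "No Assessment": None,
}
T_975_29 = 2.045  # Student t quantile for a 95% interval with n = 30


def one_trial(rng, n=5000):
    """Generate one pool and return the mean accuracy of each alternative's picks."""
    pool = []
    for _ in range(n):
        f = min(100.0, max(0.0, rng.gauss(50, 15)))  # clipping is an assumption
        g = min(100.0, max(0.0, rng.gauss(50, 15)))
        pool.append((f, g, call_accuracy(f, g)))
    out = {}
    for name, rule in RULES.items():
        if rule is None:
            picked = rng.sample(pool, 100)  # status quo: 100 referees at random
        else:
            picked = [r for r in pool if rule(r[0], r[1])]
        out[name] = statistics.fmean(r[2] for r in picked)
    return out


def monte_carlo(trials=30, seed=0):
    """Mean accuracy and 95% half-width per alternative over repeated trials."""
    rng = random.Random(seed)
    runs = [one_trial(rng) for _ in range(trials)]
    summary = {}
    for name in RULES:
        vals = [run[name] for run in runs]
        half = T_975_29 * statistics.stdev(vals) / len(vals) ** 0.5
        summary[name] = (statistics.fmean(vals), half)
    return summary


results = monte_carlo()
for name, (mean, half) in results.items():
    print(f"{name:22s} {mean:.5f} +/- {half:.5f}")
```

With the regression as written, the ordering of the four alternatives follows the utility results (Fitness Test > Combined Evaluation > Game Flow Evaluation > No Assessment); exact values depend on the seed and on the assumptions above.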

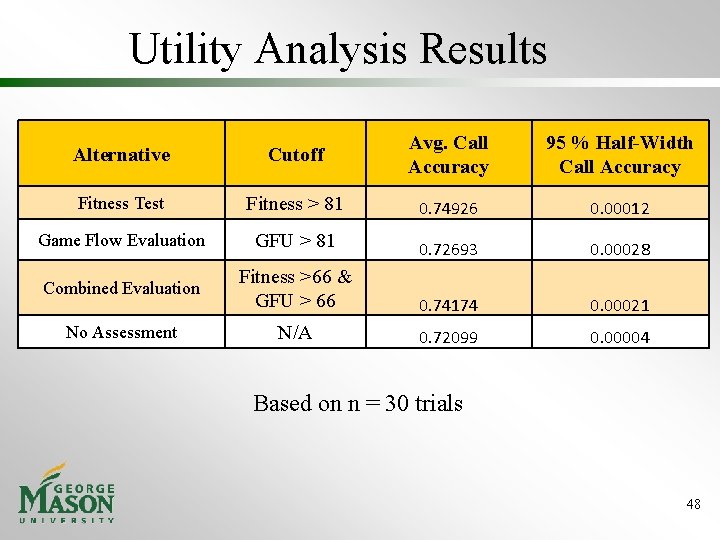

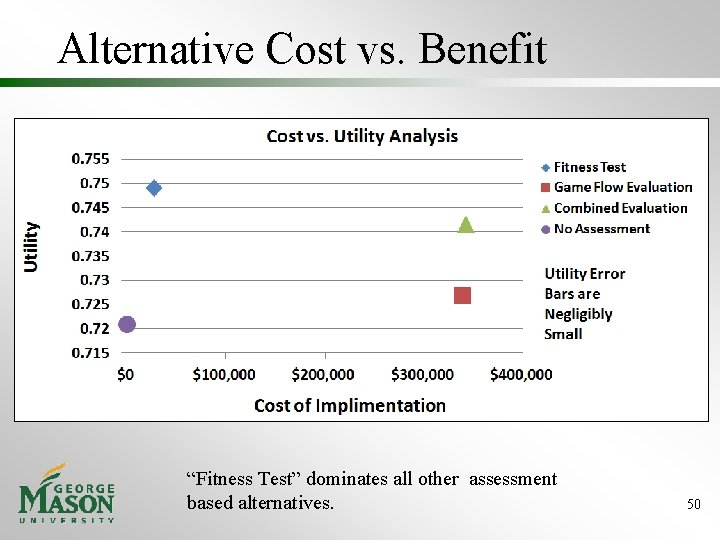

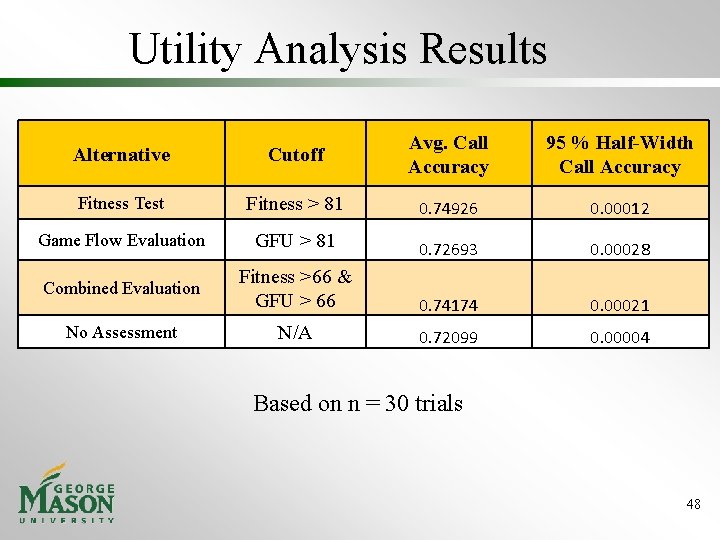

Utility Analysis Results

| Alternative | Cutoff | Avg. call accuracy | 95% half-width |
|---|---|---|---|
| Fitness Test | Fitness > 81 | 0.74926 | 0.00012 |
| Game Flow Evaluation | GFU > 81 | 0.72693 | 0.00028 |
| Combined Evaluation | Fitness > 66 & GFU > 66 | 0.74174 | 0.00021 |
| No Assessment | N/A | 0.72099 | 0.00004 |

Based on n = 30 trials.

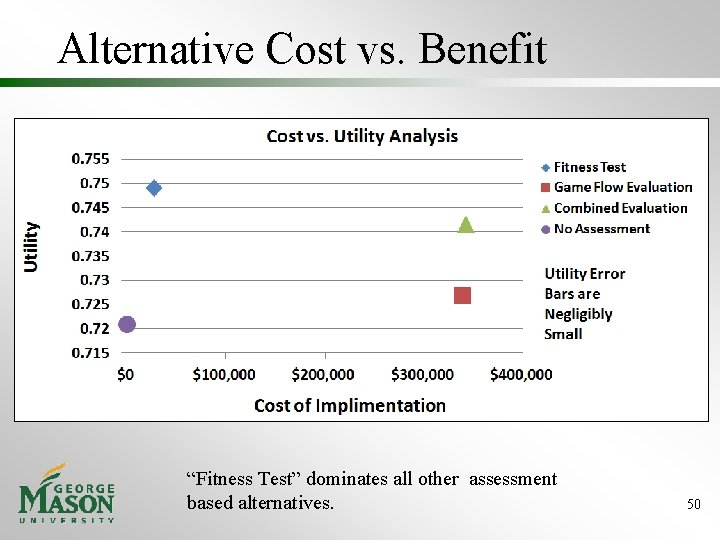

Alternative Cost vs. Benefit
“Fitness Test” dominates all other assessment-based alternatives: it has both the lowest cost and the highest utility among them.
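Dominance here means another alternative is at least as good on both criteria (lower or equal cost, higher or equal utility) and strictly better on at least one. A sketch of that check, using the costs and utilities from the design-alternatives and utility-results tables:

```python
# (total cost in USD, utility = avg. call accuracy of referees selected)
ALTERNATIVES = {
    "Fitness Test": (26_990, 0.74926),
    "Game Flow Evaluation": (337_995, 0.72693),
    "Combined Evaluation": (341_870, 0.74174),
    "No Assessment": (0, 0.72099),
}


def dominated(alts):
    """Names of alternatives beaten by another on both cost and utility."""
    out = set()
    for a, (cost_a, util_a) in alts.items():
        for b, (cost_b, util_b) in alts.items():
            if b == a:
                continue
            no_worse = cost_b <= cost_a and util_b >= util_a
            better = cost_b < cost_a or util_b > util_a
            if no_worse and better:
                out.add(a)
                break
    return out


print(sorted(dominated(ALTERNATIVES)))  # ['Combined Evaluation', 'Game Flow Evaluation']
```

No Assessment is not dominated because it is the cheapest option, which is why the recommendation compares the Fitness Test against it directly.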

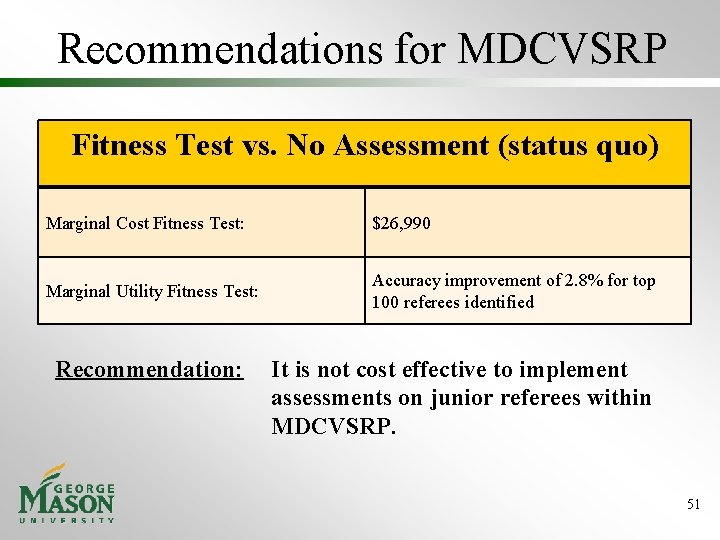

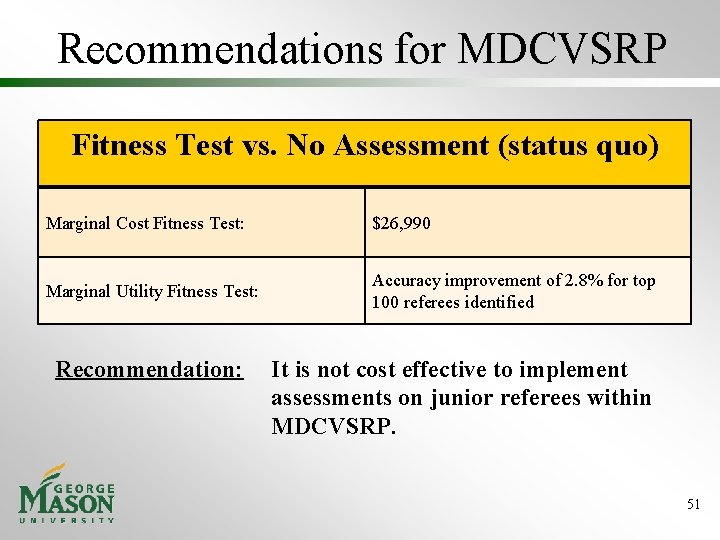

Recommendations for MDCVSRP
Fitness Test vs. No Assessment (status quo):
- Marginal cost of the Fitness Test: $26,990
- Marginal utility of the Fitness Test: an accuracy improvement of 2.8 percentage points (0.74926 vs. 0.72099) for the top 100 referees identified

Recommendation: It is not cost-effective to implement assessments on junior referees within MDCVSRP.

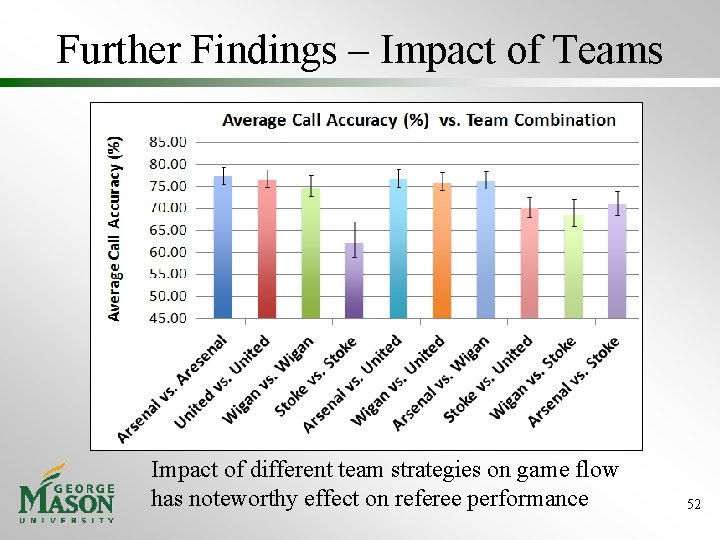

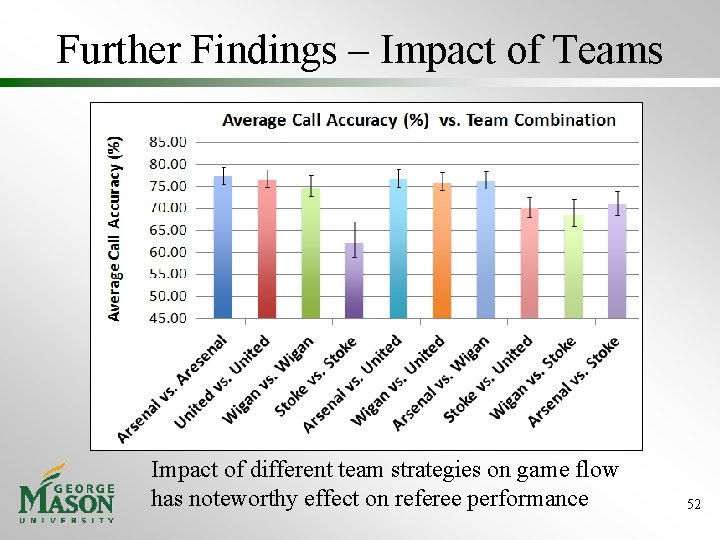

Further Findings – Impact of Teams
Differences in team strategy change the game flow, which has a noteworthy effect on referee performance.

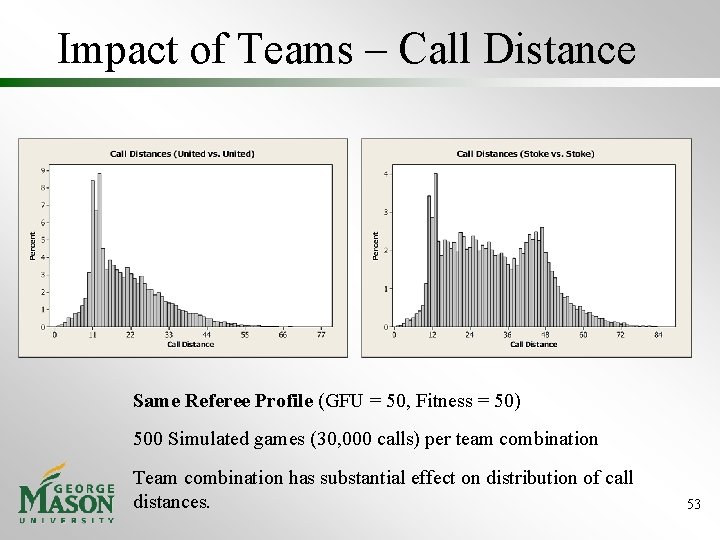

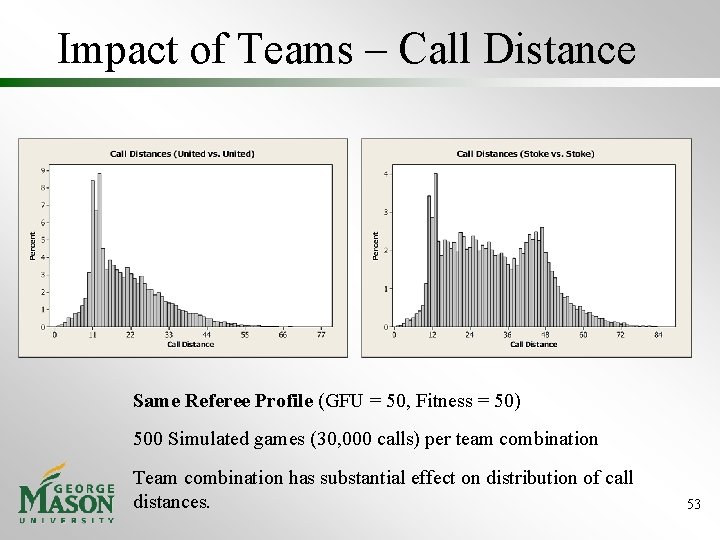

Impact of Teams – Call Distance
- Same referee profile (GFU = 50, Fitness = 50)
- 500 simulated games (30,000 calls) per team combination
- Team combination has a substantial effect on the distribution of call distances.

Additional Findings – Recommendation for USSF
- When comparing the quality of multiple referees based on in-game performance, match difficulty in terms of game flow and team combination must be taken into consideration.

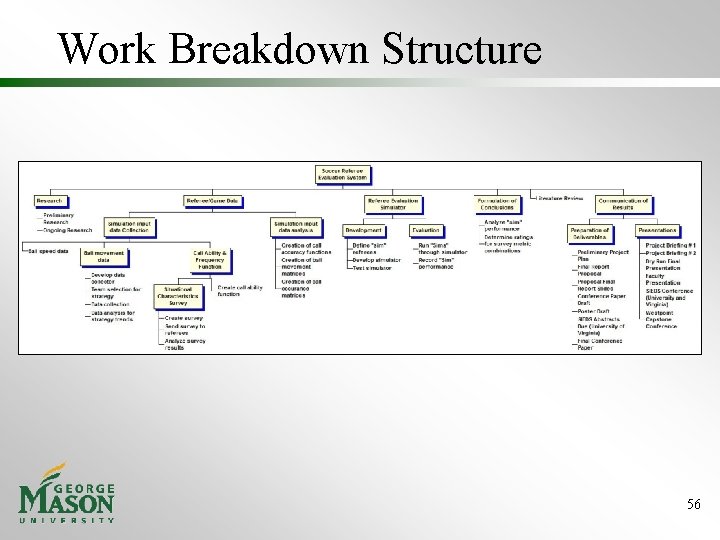

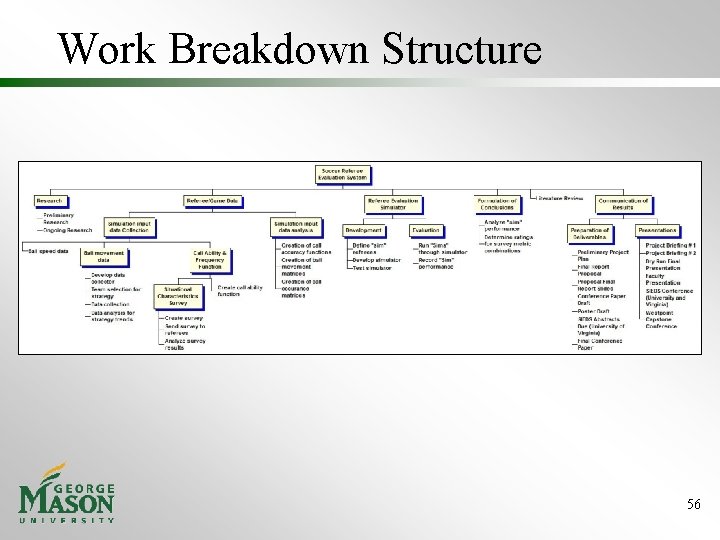

Work Breakdown Structure

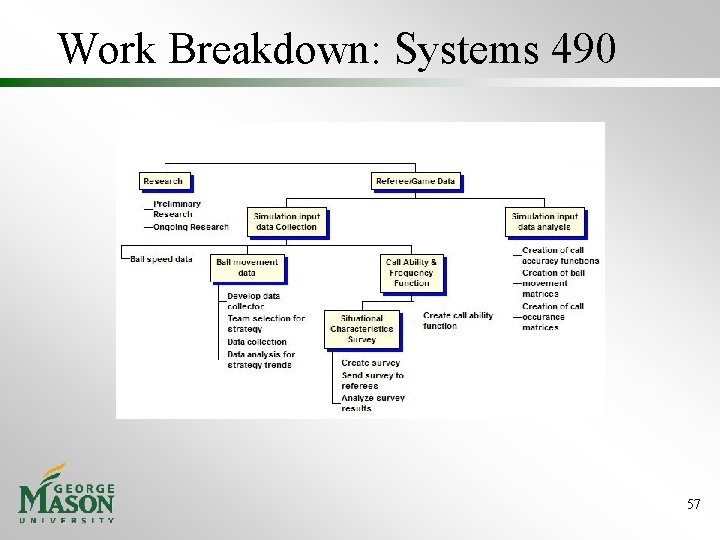

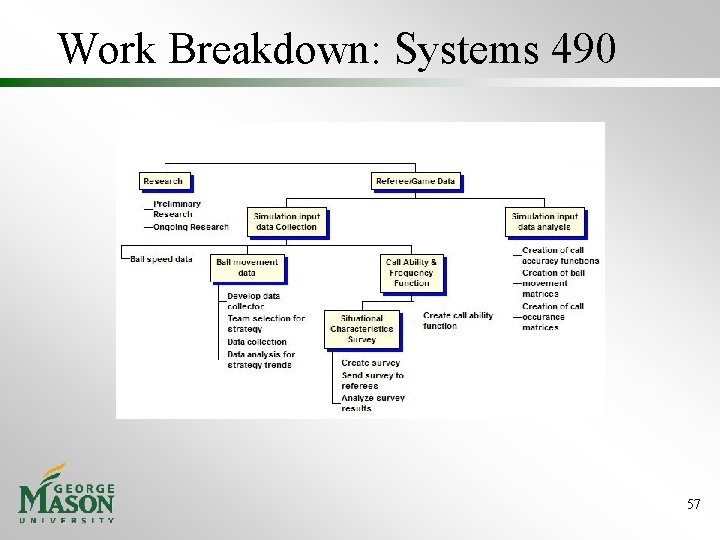

Work Breakdown: Systems 490

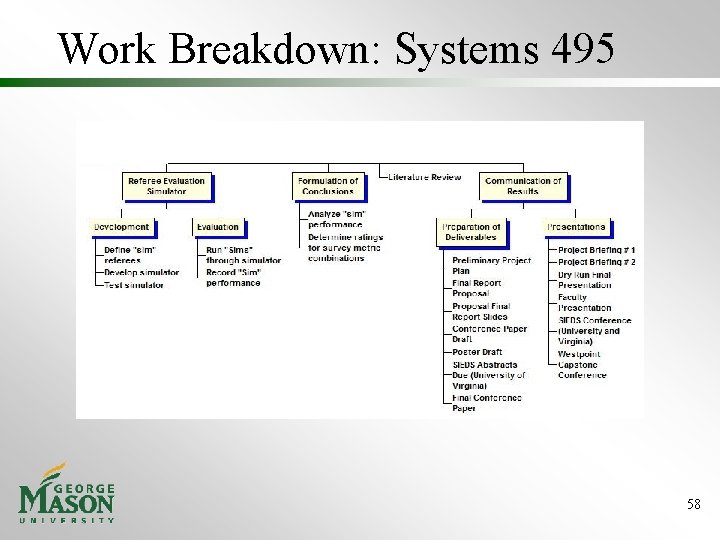

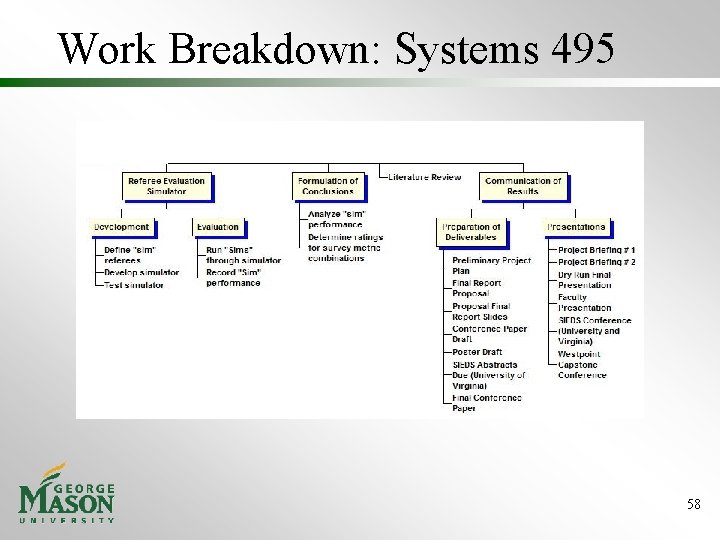

Work Breakdown: Systems 495

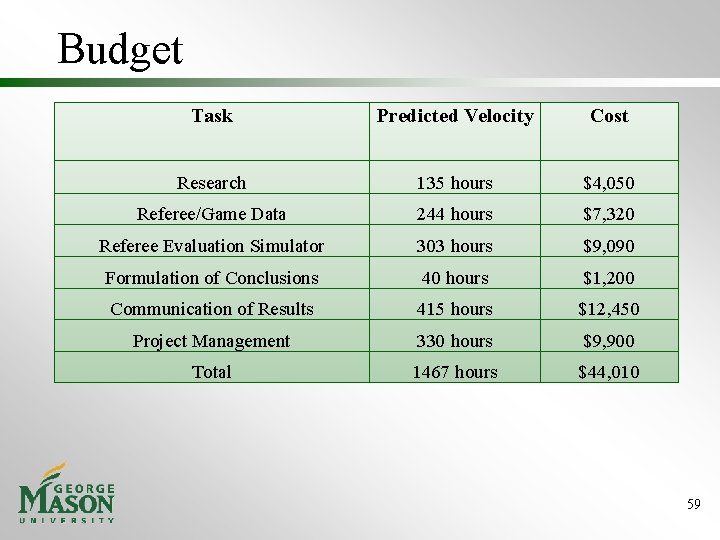

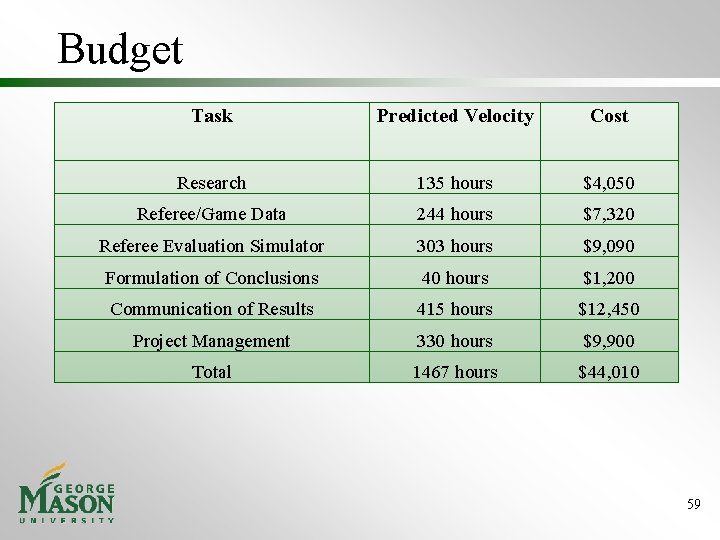

Budget

| Task | Predicted velocity (hours) | Cost |
|---|---|---|
| Research | 135 | $4,050 |
| Referee/Game Data | 244 | $7,320 |
| Referee Evaluation Simulator | 303 | $9,090 |
| Formulation of Conclusions | 40 | $1,200 |
| Communication of Results | 415 | $12,450 |
| Project Management | 330 | $9,900 |
| Total | 1,467 | $44,010 |
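Each cost in the budget equals hours × $30, a rate inferred from the figures (for example, $4,050 / 135 hours) rather than stated on the slide. A quick check of the totals:

```python
# Hours from the budget table; the $30/hour rate is inferred from cost / hours.
BUDGET_HOURS = {
    "Research": 135,
    "Referee/Game Data": 244,
    "Referee Evaluation Simulator": 303,
    "Formulation of Conclusions": 40,
    "Communication of Results": 415,
    "Project Management": 330,
}
RATE_USD_PER_HOUR = 30  # inferred, not stated

costs = {task: hours * RATE_USD_PER_HOUR for task, hours in BUDGET_HOURS.items()}
total_hours = sum(BUDGET_HOURS.values())
total_cost = sum(costs.values())
print(total_hours, total_cost)  # 1467 44010
```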

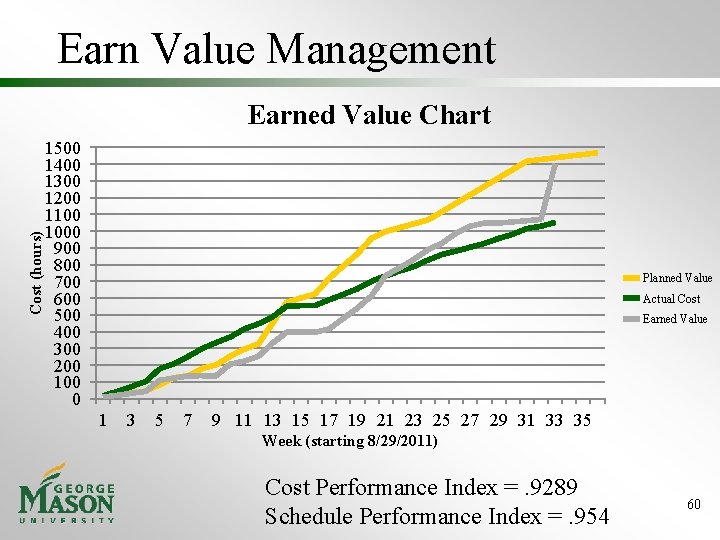

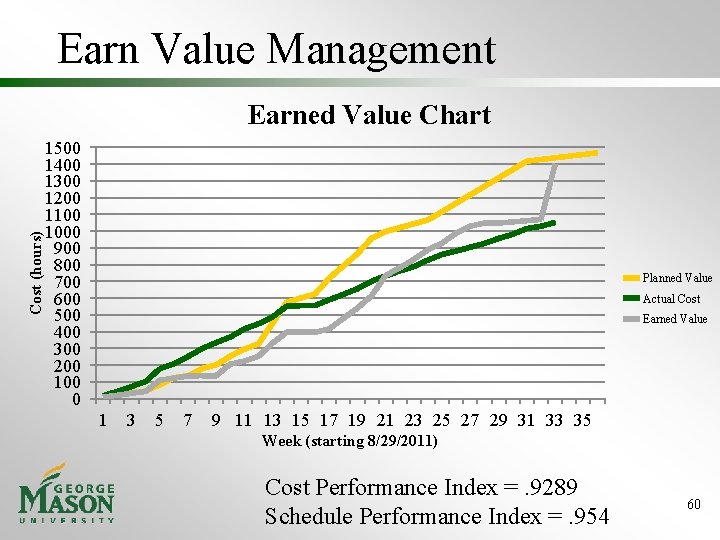

Earned Value Management
[Chart: Earned Value Chart; cost (hours) vs. week (starting 8/29/2011); series: Planned Value, Actual Cost, Earned Value]
- Cost Performance Index (CPI) = 0.9289
- Schedule Performance Index (SPI) = 0.954
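The indices follow the standard earned value definitions, CPI = EV / AC and SPI = EV / PV (here measured in hours). The underlying EV, AC, and PV values are not recoverable from the chart text, so the sketch only interprets the reported indices; the function names are hypothetical.

```python
def cost_performance_index(earned_value: float, actual_cost: float) -> float:
    """CPI = EV / AC; below 1 means more effort spent than value earned."""
    return earned_value / actual_cost


def schedule_performance_index(earned_value: float, planned_value: float) -> float:
    """SPI = EV / PV; below 1 means less work completed than planned to date."""
    return earned_value / planned_value


def status(cpi: float, spi: float) -> str:
    """Plain-language reading of the two indices."""
    cost = "over budget" if cpi < 1 else "on or under budget"
    sched = "behind schedule" if spi < 1 else "on or ahead of schedule"
    return f"{cost}, {sched}"


print(status(0.9289, 0.954))  # over budget, behind schedule
```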

Sponsor Testimony
“The analysis done by the students has been incredibly eye-opening. They have changed the way our management at MDCVSRP think about referee development and where to use our budget.”
Pat Delaney, MDCVSRP Chairman