Aspects of Submodular Maximization Subject to a Matroid Constraint
Moran Feldman

Based on:
• A Unified Continuous Greedy Algorithm for Submodular Maximization. Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz (FOCS 2011).
• Submodular Maximization with Cardinality Constraints. Niv Buchbinder, Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz (SODA 2014).
• Comparing Apples and Oranges: Query Tradeoff in Submodular Maximization. Niv Buchbinder, Moran Feldman and Roy Schwartz (SODA 2015).

Submodular Maximization Subject to a Matroid Constraint: What? Why?
• Generalizes classical problems
  – Max-SAT, Max-Cut, k-cover, GAP…
• Applications
  – Machine Learning
  – Image Processing
  – Algorithmic Game Theory

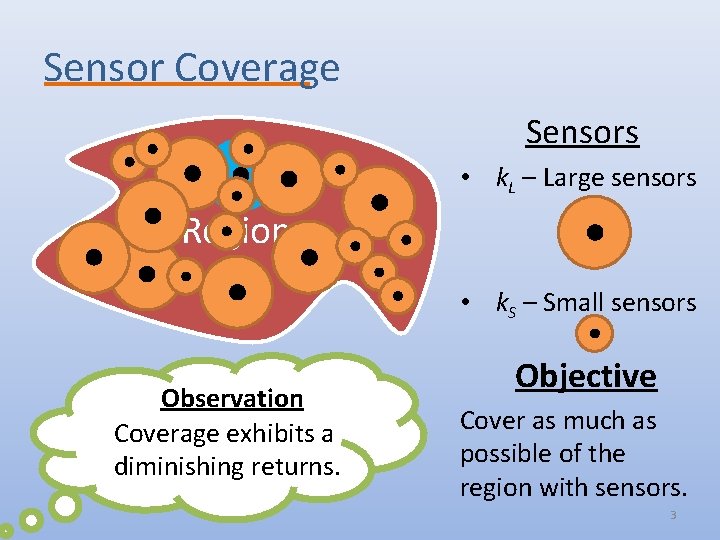

Sensor Coverage
Sensors:
• $k_L$ large sensors
• $k_S$ small sensors
Objective: Cover as much as possible of the region with sensors.
Observation: Coverage exhibits diminishing returns.

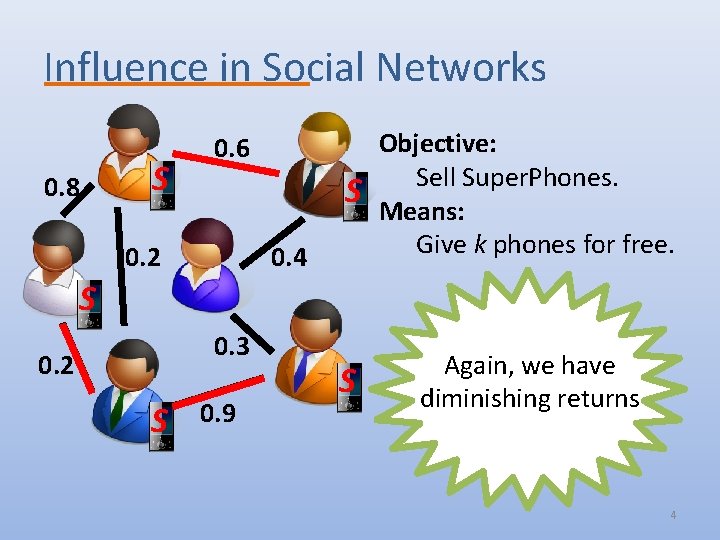

Influence in Social Networks
Objective: Sell SuperPhones.
Means: Give k phones for free.
Again, we have diminishing returns.
[Figure: a social network graph with numeric labels 0.8, 0.6, 0.2, 0.4, 0.3, 0.2 and 0.9; some nodes are marked S.]

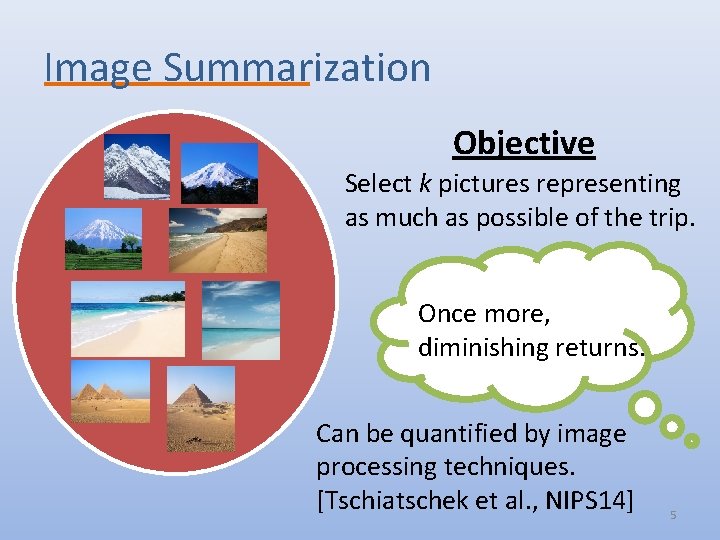

Image Summarization
Objective: Select k pictures representing as much as possible of the trip.
Once more, diminishing returns. They can be quantified by image processing techniques [Tschiatschek et al., NIPS 14].

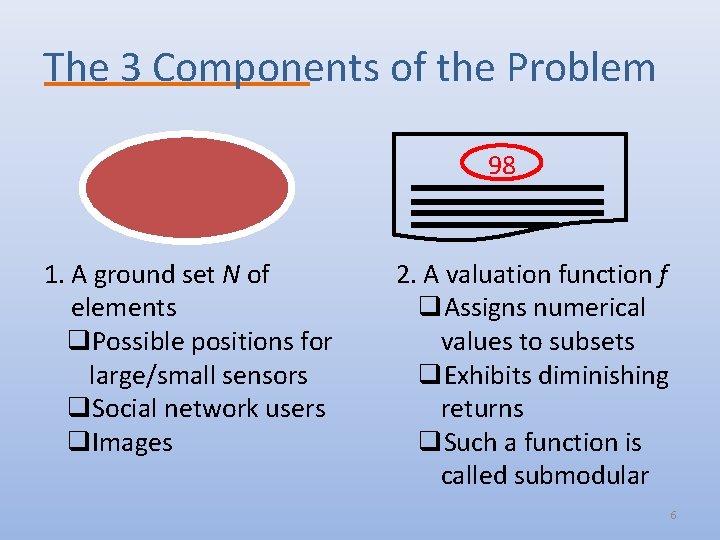

The 3 Components of the Problem
1. A ground set N of elements
   – Possible positions for large/small sensors
   – Social network users
   – Images
2. A valuation function f
   – Assigns numerical values to subsets
   – Exhibits diminishing returns
   – Such a function is called submodular

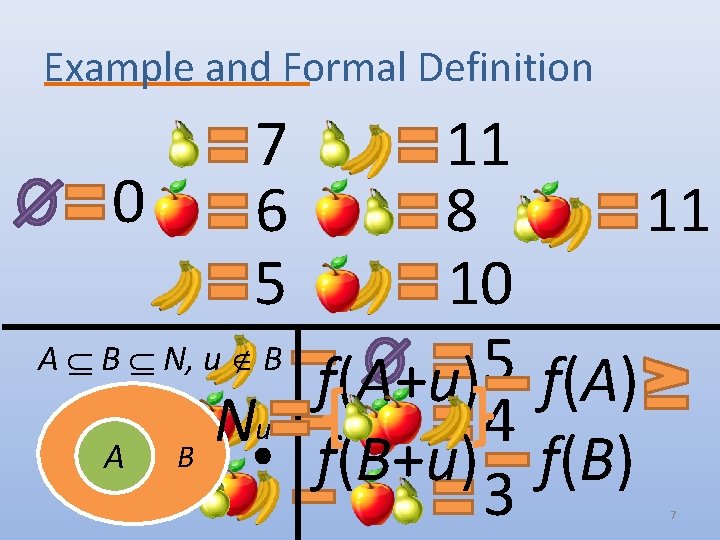

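Example and Formal Definition
[Figure: an example set function with values 7, 6, 5, 11, 0, 8, 11, 10 assigned to subsets.]
Formal definition: f is submodular if, for every $A \subseteq B \subseteq N$ and $u \in N \setminus B$,
$f(A + u) - f(A) \ge f(B + u) - f(B)$.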
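As a hedged illustration of the definition, the sketch below brute-forces the diminishing-returns inequality on a toy coverage function. The instance `COVER` and the helper names `f`, `subsets` and `is_submodular` are assumptions made for this example; they do not come from the talk.

```python
from itertools import combinations

# Hypothetical toy instance: each sensor position covers a set of cells.
COVER = {
    "a": {1, 2, 3},
    "b": {3, 4},
    "c": {4, 5, 6},
}


def f(S):
    """Coverage value: number of cells covered by the chosen positions."""
    covered = set()
    for u in S:
        covered |= COVER[u]
    return len(covered)


def subsets(items):
    """Yield every subset of `items` as a frozenset."""
    items = sorted(items)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def is_submodular(f, ground):
    """Check f(A+u) - f(A) >= f(B+u) - f(B) for all A ⊆ B ⊆ ground, u ∉ B."""
    for B in subsets(ground):
        for A in subsets(B):
            for u in ground - B:
                if f(A | {u}) - f(A) < f(B | {u}) - f(B):
                    return False
    return True


N = frozenset(COVER)
print(is_submodular(f, N))
```

The check enumerates all pairs of nested subsets, so it is only meant for tiny ground sets; it is a way to build intuition, not a practical test.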

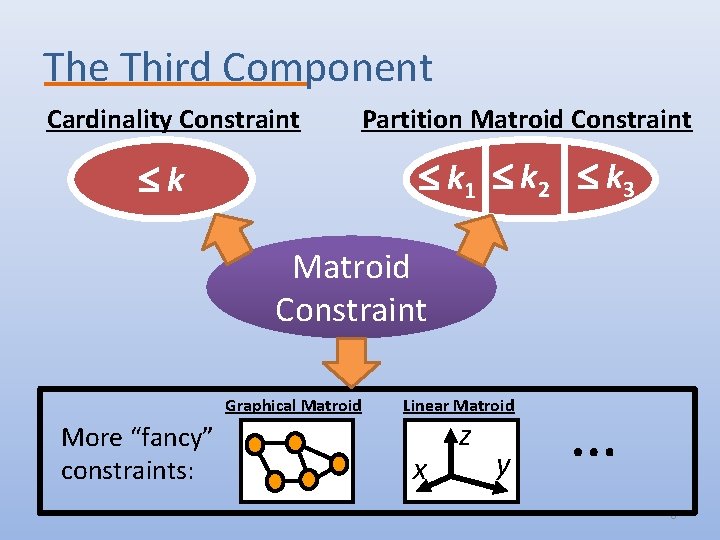

The Third Component: A Matroid Constraint
• Cardinality constraint: at most k elements.
• Partition matroid constraint: at most $k_1$, $k_2$, $k_3$ elements from the respective parts of the ground set.
• More “fancy” constraints: graphical matroid, linear matroid, …
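The two simplest constraints on this slide can be sketched as independence oracles, that is, functions answering whether a set is feasible. The function names, the `PART` mapping and the capacities below are hypothetical and chosen to mirror the large/small sensor example.

```python
def cardinality_matroid(k):
    """Feasible sets: at most k elements in total."""
    def independent(S):
        return len(S) <= k
    return independent


def partition_matroid(part_of, capacity):
    """Feasible sets: at most capacity[p] elements from every part p.

    part_of maps an element to its part label; capacity maps a part
    label to its limit.
    """
    def independent(S):
        counts = {}
        for u in S:
            p = part_of[u]
            counts[p] = counts.get(p, 0) + 1
            if counts[p] > capacity[p]:
                return False
        return True
    return independent


# Hypothetical sensor positions: two large-sensor spots, three small ones.
PART = {"L1": "large", "L2": "large", "s1": "small", "s2": "small", "s3": "small"}
sensors_ok = partition_matroid(PART, {"large": 1, "small": 2})

print(sensors_ok({"L1", "s1", "s2"}))  # one large, two small
print(sensors_ok({"L1", "L2"}))        # two large sensors exceed the limit
```

An algorithm for a general matroid constraint would only interact with the constraint through such an oracle, in the same way it only sees f through a value oracle.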

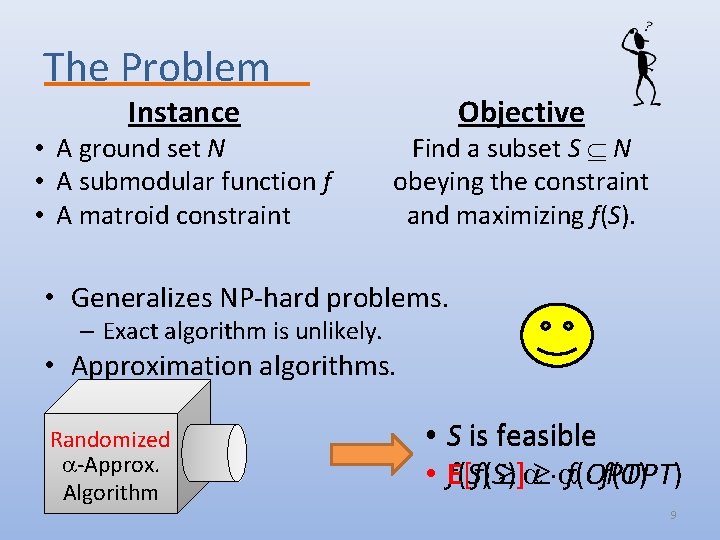

The Problem
Instance
• A ground set N
• A submodular function f
• A matroid constraint
Objective
Find a subset $S \subseteq N$ obeying the constraint and maximizing f(S).
• Generalizes NP-hard problems.
  – An exact algorithm is unlikely.
• Approximation algorithms.
α-Approximation Algorithm: outputs a set S such that
• S is feasible
• $f(S) \ge \alpha \cdot f(OPT)$
Randomized α-Approximation Algorithm: outputs a set S such that
• S is feasible
• $E[f(S)] \ge \alpha \cdot f(OPT)$

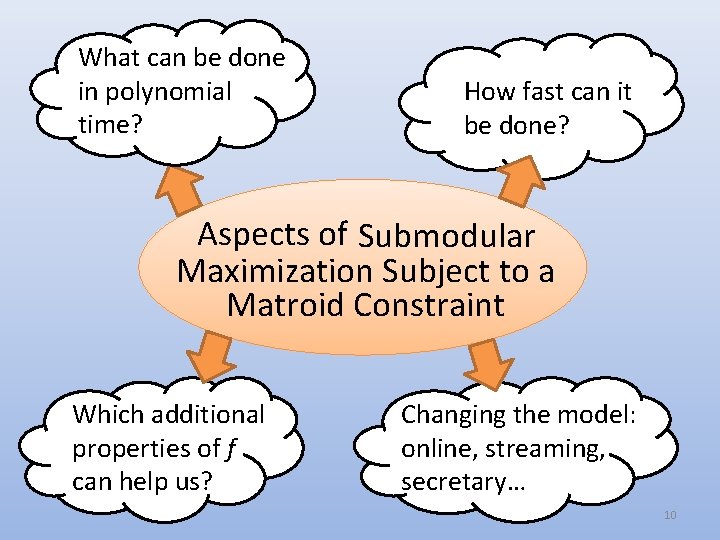

Aspects of Submodular Maximization Subject to a Matroid Constraint
• What can be done in polynomial time?
• How fast can it be done?
• Which additional properties of f can help us?
• Changing the model: online, streaming, secretary…

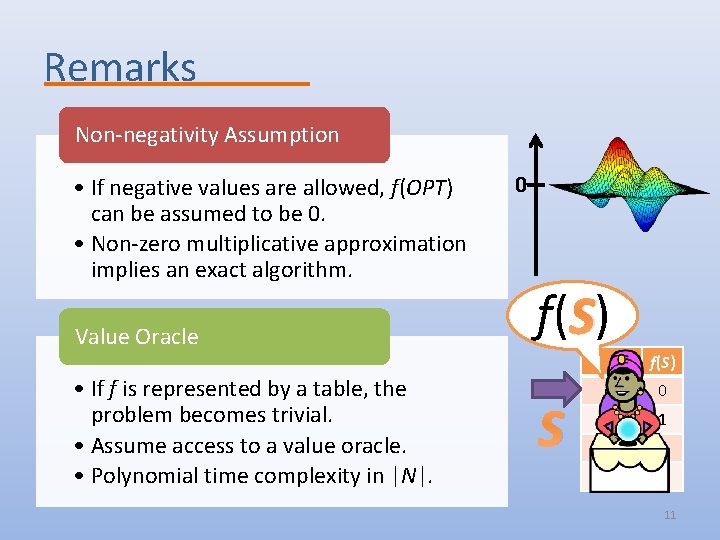

Remarks
Non-negativity Assumption: $f(S) \ge 0$ for every $S \subseteq N$.
• If negative values are allowed, f(OPT) can be assumed to be 0.
• Non-zero multiplicative approximation implies an exact algorithm.
Value Oracle
• If f is represented by a table, the problem becomes trivial.
• Assume access to a value oracle.
• Polynomial time complexity in |N|.

| S | f(S) |
|---|---|
| ∅ | 0 |
| {a} | 1 |
| {b} | 2 |
| {a, b} | 2 |

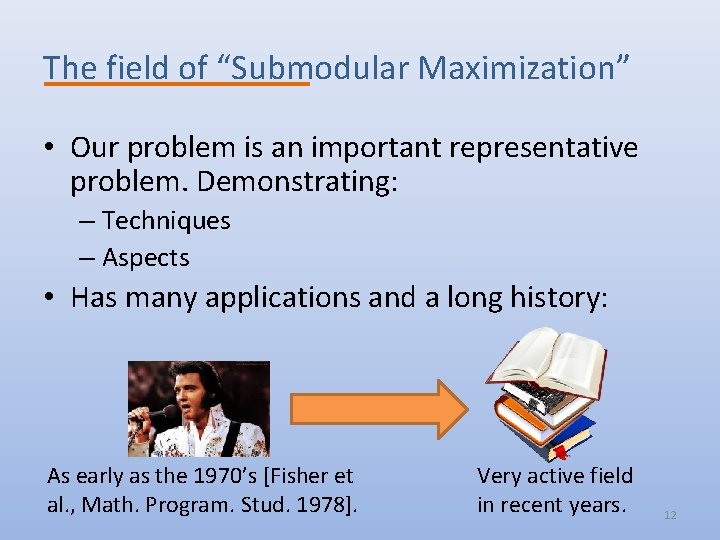

The field of “Submodular Maximization”
• Our problem is an important representative problem, demonstrating:
  – Techniques
  – Aspects
• Has many applications and a long history:
  – As early as the 1970s [Fisher et al., Math. Program. Stud. 1978].
  – Very active field in recent years.

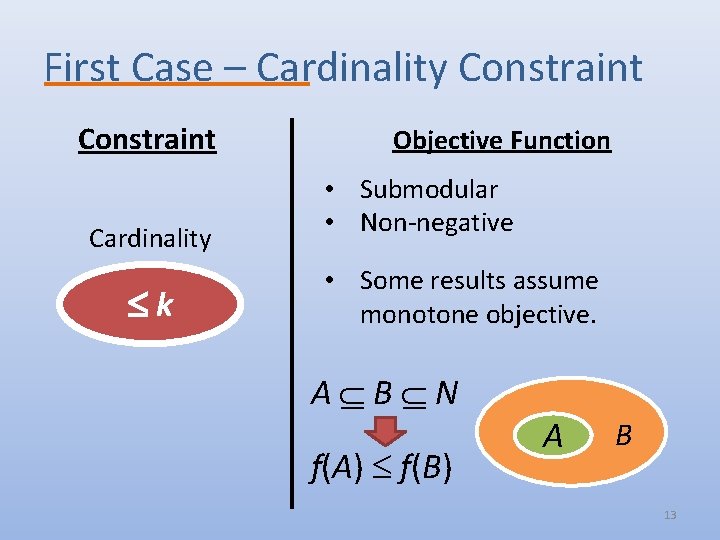

First Case – Cardinality Constraint
Constraint: cardinality at most k.
Objective Function
• Submodular
• Non-negative
• Some results assume a monotone objective: for every $A \subseteq B \subseteq N$, $f(A) \le f(B)$.

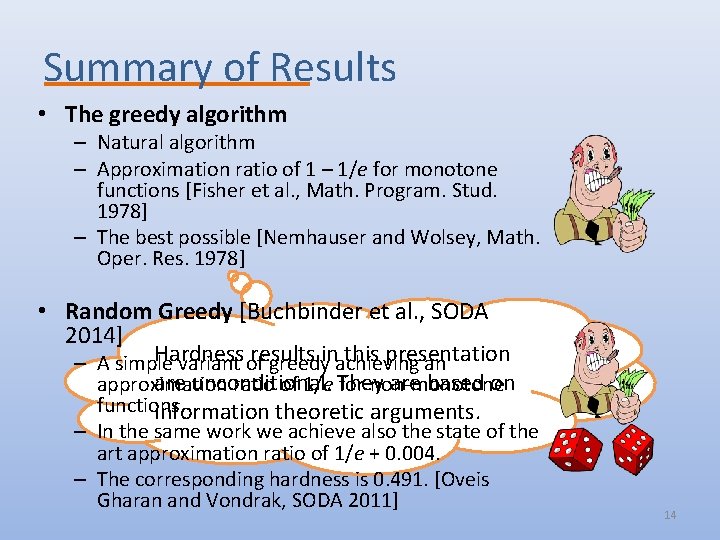

Summary of Results
• The greedy algorithm
  – Natural algorithm
  – Approximation ratio of 1 – 1/e for monotone functions [Fisher et al., Math. Program. Stud. 1978]
  – The best possible [Nemhauser and Wolsey, Math. Oper. Res. 1978]
• Random Greedy [Buchbinder et al., SODA 2014]
  – A simple variant of greedy achieving an approximation ratio of 1/e for non-monotone functions.
  – In the same work we also achieve the state-of-the-art approximation ratio of 1/e + 0.004.
  – The corresponding hardness is 0.491 [Oveis Gharan and Vondrak, SODA 2011].
Note: Hardness results in this presentation are unconditional. They are based on information theoretic arguments.

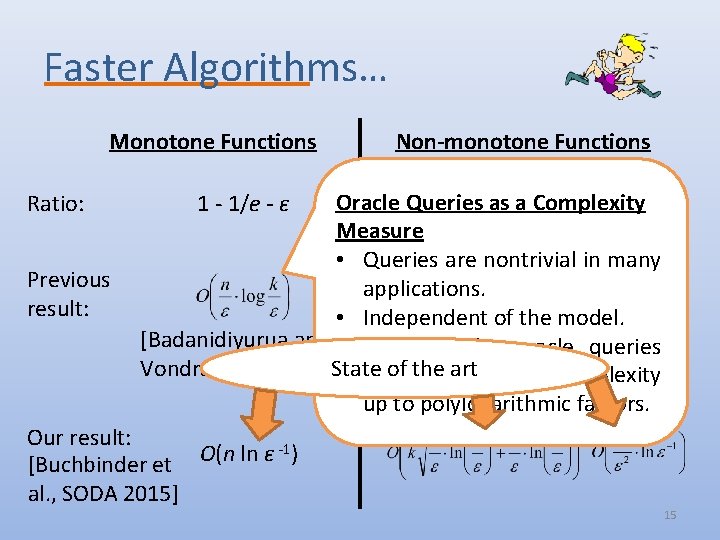

Faster Algorithms…
Monotone functions
• Ratio: 1 – 1/e – ε
• Previous result (state of the art): [Badanidiyuru and Vondrak, SODA 2014]
• Our result: $O(n \ln \varepsilon^{-1})$ oracle queries [Buchbinder et al., SODA 2015]
Non-monotone functions
• Ratio: 1/e – ε
• Previous result (state of the art): O(nk) oracle queries (Random Greedy)
Oracle Queries as a Complexity Measure
• Queries are nontrivial in many applications.
• Independent of the model.
• Typically, the oracle queries represent the time complexity up to polylogarithmic factors.

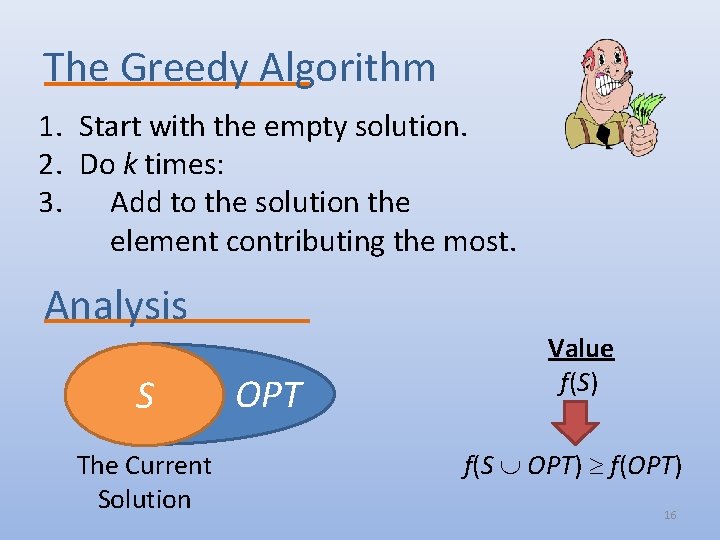

The Greedy Algorithm
1. Start with the empty solution.
2. Do k times:
3.    Add to the solution the element contributing the most.
Analysis
• S: the current solution.
• For a monotone f: $f(S \cup OPT) \ge f(OPT)$.
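The three steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions: `COVER` is a made-up coverage instance, `f` plays the role of the value oracle, and ties are broken by alphabetical order, a choice the slide does not specify.

```python
# Hypothetical coverage instance used only for illustration.
COVER = {
    "a": {1, 2, 3},
    "b": {3, 4},
    "c": {4, 5, 6},
    "d": {1, 6},
}


def f(S):
    """Value oracle: number of cells covered by the set S."""
    covered = set()
    for u in S:
        covered |= COVER[u]
    return len(covered)


def greedy(f, ground, k):
    """Add, k times, the element with the largest marginal contribution."""
    S = set()
    for _ in range(k):
        candidates = [u for u in sorted(ground) if u not in S]
        if not candidates:
            break
        base = f(S)
        best = max(candidates, key=lambda u: f(S | {u}) - base)
        S.add(best)
    return S


S = greedy(f, COVER, 2)
print(sorted(S), f(S))
```

Each iteration queries the oracle once per remaining element, which gives the O(nk) query count the faster algorithms later in the talk improve on.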

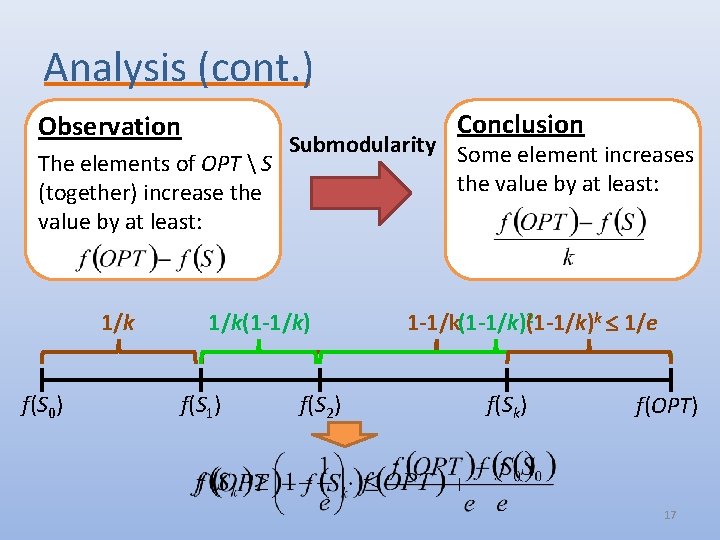

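Analysis (cont.)
Observation: The elements of OPT \ S (together) increase the value by at least $f(S \cup OPT) - f(S) \ge f(OPT) - f(S)$.
Conclusion (by submodularity): Some element increases the value by at least $\frac{1}{k}\,[f(OPT) - f(S)]$.
Let $S_i$ denote the solution after i iterations. Then:
• $f(S_0) \ge 0$
• $f(S_1) \ge \frac{1}{k}\, f(OPT)$
• $f(S_2) \ge \left[\frac{1}{k} + \frac{1}{k}\left(1 - \frac{1}{k}\right)\right] f(OPT)$
• …
• $f(S_k) \ge \left[1 - \left(1 - \frac{1}{k}\right)^k\right] f(OPT) \ge \left(1 - \frac{1}{e}\right) f(OPT)$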

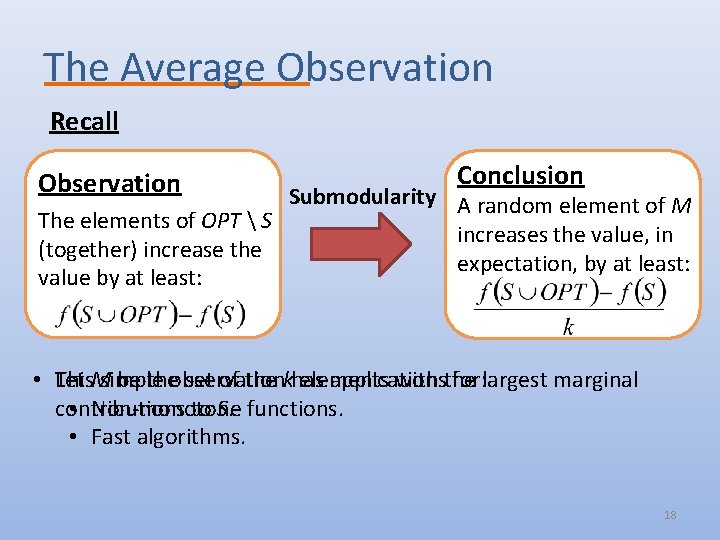

The Average Observation
Recall
• Observation: The elements of OPT \ S (together) increase the value by at least $f(OPT \cup S) - f(S)$.
• Conclusion (by submodularity): Some element increases the value by at least $\frac{1}{k}\,[f(OPT \cup S) - f(S)]$.
The Average Observation
• Let M be the set of the k elements with the largest marginal contributions to S.
• A random element of M increases the value, in expectation, by at least $\frac{1}{k}\,[f(OPT \cup S) - f(S)]$.
This simple observation has applications for:
• Non-monotone functions.
• Fast algorithms.

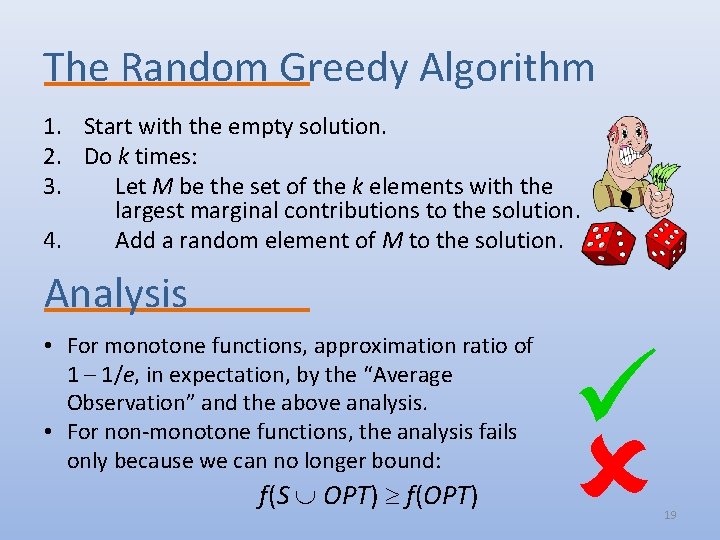

The Random Greedy Algorithm
1. Start with the empty solution.
2. Do k times:
3.    Let M be the set of the k elements with the largest marginal contributions to the solution.
4.    Add a random element of M to the solution.
Analysis
• For monotone functions, approximation ratio of 1 – 1/e, in expectation, by the “Average Observation” and the above analysis.
• For non-monotone functions, the analysis fails only because we can no longer bound: $f(S \cup OPT) \ge f(OPT)$.
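A minimal sketch of the four steps above follows. Two details are assumptions of this sketch rather than statements from the slide: the candidate list is padded with k zero-gain dummy elements (represented by `None`) so that M always has k members and a harmful element is never forced into the solution, and the toy instance `COVER` is invented for illustration.

```python
import random

# Hypothetical coverage instance used only for illustration.
COVER = {
    "a": {1, 2, 3},
    "b": {3, 4},
    "c": {4, 5, 6},
    "d": {1, 6},
}


def f(S):
    """Value oracle: number of cells covered by the set S."""
    covered = set()
    for u in S:
        covered |= COVER[u]
    return len(covered)


def random_greedy(f, ground, k, rng):
    """In each of k rounds, add a uniformly random element of the top-k set M."""
    S = set()
    for _ in range(k):
        base = f(S)
        scored = [(f(S | {u}) - base, u) for u in sorted(ground) if u not in S]
        # Assumption of this sketch: k dummies with zero marginal gain.
        scored += [(0, None)] * k
        scored.sort(key=lambda pair: pair[0], reverse=True)
        M = [u for _, u in scored[:k]]
        pick = rng.choice(M)
        if pick is not None:  # picking a dummy adds nothing
            S.add(pick)
    return S


S = random_greedy(f, COVER, 2, random.Random(0))
print(sorted(S), f(S))
```

Passing the random generator explicitly keeps runs reproducible; the guarantee on the slide is about the expected value over the algorithm's randomness, not about any single run.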

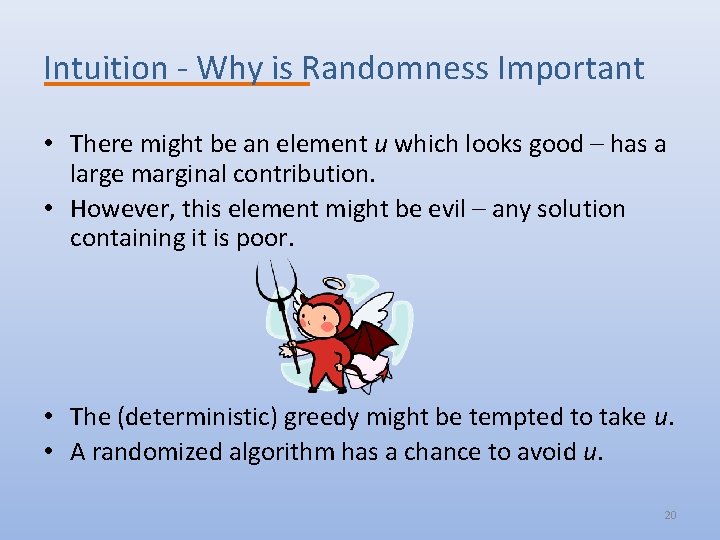

Intuition - Why is Randomness Important?
• There might be an element u which looks good: it has a large marginal contribution.
• However, this element might be evil: any solution containing it is poor.
• The (deterministic) greedy might be tempted to take u.
• A randomized algorithm has a chance to avoid u.

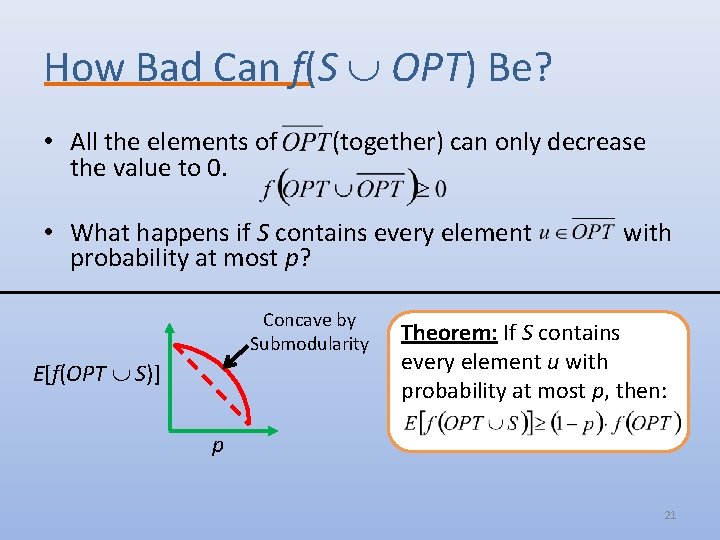

How Bad Can $f(S \cup OPT)$ Be?
• All the elements added to OPT (together) can only decrease the value to 0.
• What happens if S contains every element with probability at most p?
Theorem: If S contains every element u with probability at most p, then:
$E[f(OPT \cup S)] \ge (1 - p) \cdot f(OPT)$.
[Figure: $E[f(OPT \cup S)]$ plotted against p; the curve is concave by submodularity.]

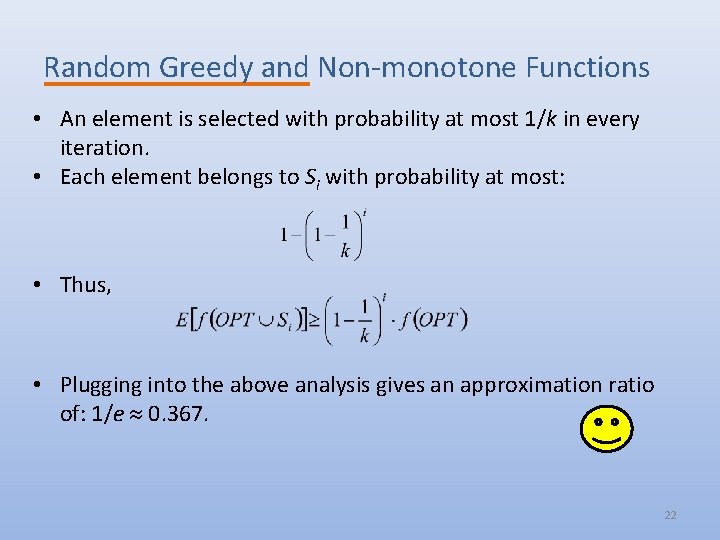

Random Greedy and Non-monotone Functions
• An element is selected with probability at most 1/k in every iteration.
• Each element belongs to $S_i$ with probability at most: $1 - \left(1 - \frac{1}{k}\right)^i$.
• Thus, $E[f(OPT \cup S_i)] \ge \left(1 - \frac{1}{k}\right)^i f(OPT)$.
• Plugging into the above analysis gives an approximation ratio of: 1/e ≈ 0.367.

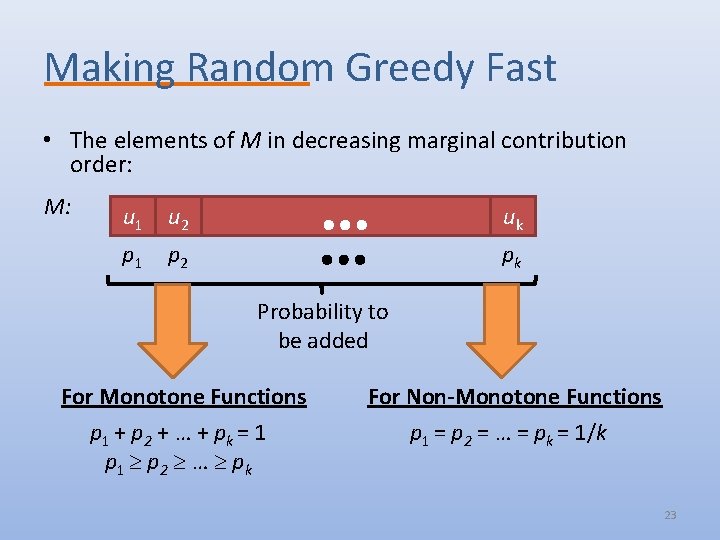

Making Random Greedy Fast
• The elements of M in decreasing marginal contribution order: $u_1, u_2, \ldots, u_k$.
• Probability to be added: $p_1, p_2, \ldots, p_k$, respectively.
What the analysis needs:
• For monotone functions: $p_1 + p_2 + \ldots + p_k = 1$ and $p_1 \ge p_2 \ge \ldots \ge p_k$.
• For non-monotone functions: $p_1 = p_2 = \ldots = p_k = 1/k$.

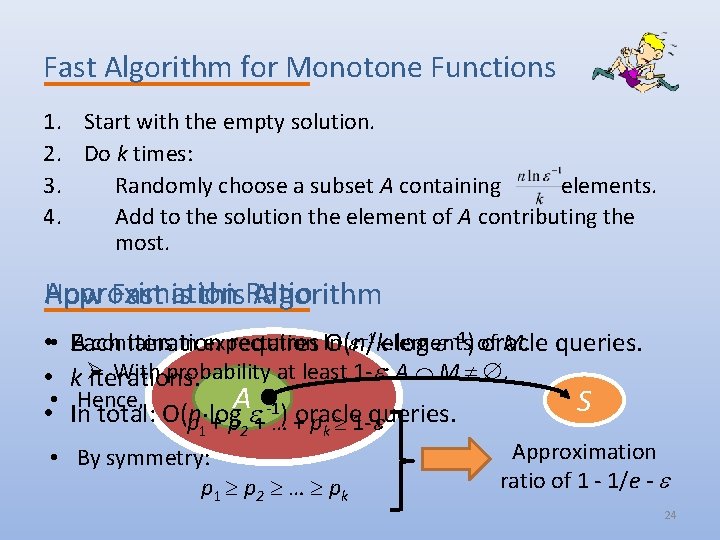

Fast Algorithm for Monotone Functions
1. Start with the empty solution.
2. Do k times:
3.    Randomly choose a subset A containing $\frac{n}{k} \ln \varepsilon^{-1}$ elements.
4.    Add to the solution the element of A contributing the most.
How Fast is this Algorithm?
• Each iteration requires $O\left(\frac{n}{k} \log \varepsilon^{-1}\right)$ oracle queries.
• k iterations.
• In total: $O(n \log \varepsilon^{-1})$ oracle queries.
Approximation Ratio
• A contains in expectation $\ln \varepsilon^{-1}$ elements of M.
• With probability at least 1 – ε: $A \cap M \neq \emptyset$. Hence, $p_1 + \ldots + p_k \ge 1 - \varepsilon$.
• By symmetry: $p_1 \ge p_2 \ge \ldots \ge p_k$.
• Approximation ratio of 1 – 1/e – ε.
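The sampling idea above can be sketched as follows. The sample size ⌈(n/k) ln(1/ε)⌉ is inferred from the per-iteration query count on the slide; drawing the sample from the elements not yet chosen, the `CountingOracle` wrapper and the weights instance are assumptions of this sketch.

```python
import math
import random


class CountingOracle:
    """Wraps a set function and counts how many times it is queried."""

    def __init__(self, func):
        self.func = func
        self.calls = 0

    def __call__(self, S):
        self.calls += 1
        return self.func(S)


def sample_greedy(f, ground, k, eps, rng):
    """Greedy that only inspects a random sample A in every iteration."""
    ground = sorted(ground)
    n = len(ground)
    sample_size = min(n, math.ceil((n / k) * math.log(1.0 / eps)))
    S = set()
    for _ in range(k):
        rest = [u for u in ground if u not in S]
        if not rest:
            break
        A = rng.sample(rest, min(sample_size, len(rest)))
        base = f(S)
        best = max(A, key=lambda u: f(S | {u}) - base)
        S.add(best)
    return S


# Hypothetical monotone (modular) instance: element i has weight i % 7 + 1.
oracle = CountingOracle(lambda S: sum(u % 7 + 1 for u in S))
S = sample_greedy(oracle, range(100), 5, 0.1, random.Random(0))
print(len(S), oracle.calls)
```

In this sketch every iteration costs one query for the current value plus one per sampled element, so the total is about k times the sample size, in line with the O(n log ε⁻¹) bound on the slide.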

Fast Algorithm for Non-monotone Functions
Why not use the same algorithm?
• If $|A \cap M| > 1$, then we always select the best element.
• Consequently, $p_1 \gg p_2 \gg \ldots \gg p_k$, although we need them to be (roughly) equal.
Desired Solution
• Select an element $u \in A \cap M$ uniformly at random.
• Unfortunately, determining $|A \cap M|$ requires us to look at all the elements, which is too costly.

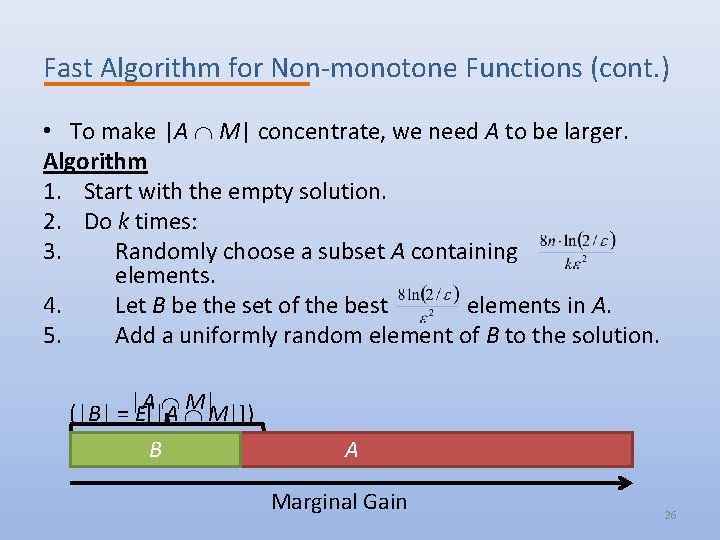

Fast Algorithm for Non-monotone Functions (cont.)
• To make $|A \cap M|$ concentrate, we need A to be larger.
Algorithm
1. Start with the empty solution.
2. Do k times:
3.    Randomly choose a subset A containing $O\left(\frac{n}{k}\, \varepsilon^{-2} \ln \varepsilon^{-1}\right)$ elements.
4.    Let B be the set of the best elements in A ($|B| = E[|A \cap M|]$).
5.    Add a uniformly random element of B to the solution.
[Figure: the sets A, B and M, with elements ordered by marginal gain.]

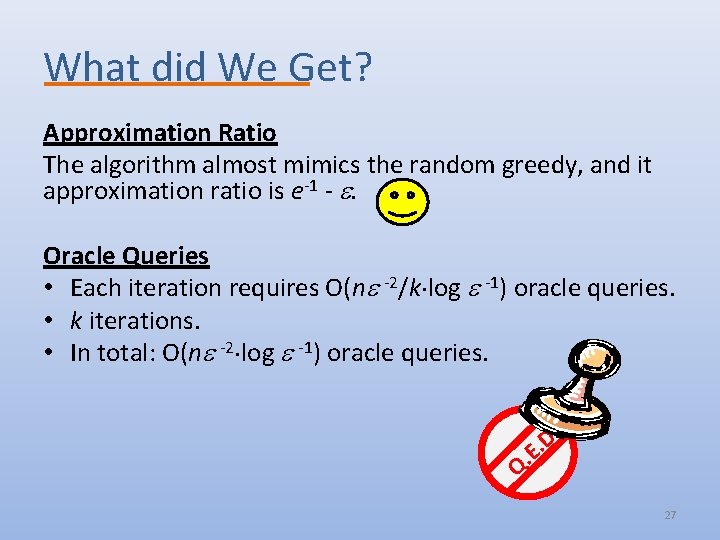

What did We Get?
Approximation Ratio
The algorithm almost mimics the random greedy, and its approximation ratio is $e^{-1} - \varepsilon$.
Oracle Queries
• Each iteration requires $O\left(\frac{n}{k}\, \varepsilon^{-2} \log \varepsilon^{-1}\right)$ oracle queries.
• k iterations.
• In total: $O(n\, \varepsilon^{-2} \log \varepsilon^{-1})$ oracle queries.
Q.E.D.

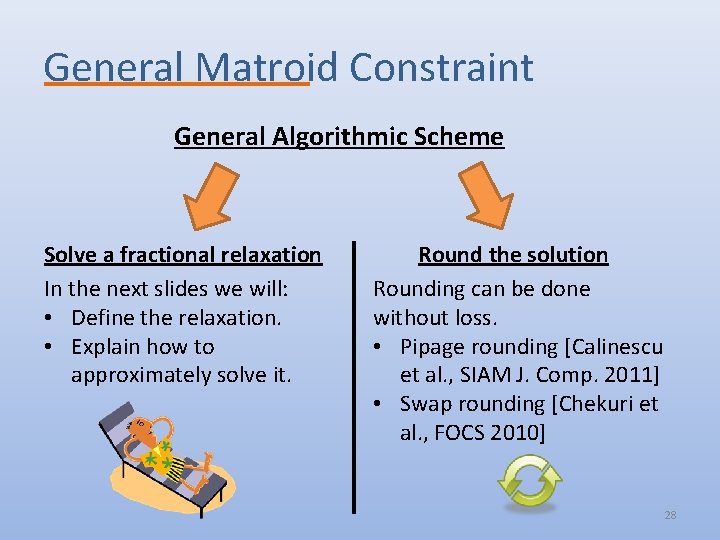

General Matroid Constraint
General Algorithmic Scheme
Solve a fractional relaxation. In the next slides we will:
• Define the relaxation.
• Explain how to approximately solve it.
Round the solution. Rounding can be done without loss.
• Pipage rounding [Calinescu et al., SIAM J. Comp. 2011]
• Swap rounding [Chekuri et al., FOCS 2010]

![Summary of Results](http://slidetodoc.com/presentation_image_h/34ecbbf1402b5a02775b30dfa883a48b/image-29.jpg)

Summary of Results
• Continuous Greedy [Calinescu et al., SIAM J. Comp. 2011]
  – A (1 – 1/e)-approximation for monotone functions.
  – Optimal even for cardinality constraints [Nemhauser and Wolsey, Math. Oper. Res. 1978].
• Measured Continuous Greedy [Feldman et al., FOCS 2011]
  – A 1/e-approximation for non-monotone functions.
  – State of the art; the previous result was a 0.325-approximation [Chekuri et al., STOC 2011].
  – Hardness: 0.478 [Oveis Gharan and Vondrak, SODA 2011].
  – Improved results for special cases when the function is monotone:
    • Submodular Max-SAT
    • Submodular Welfare

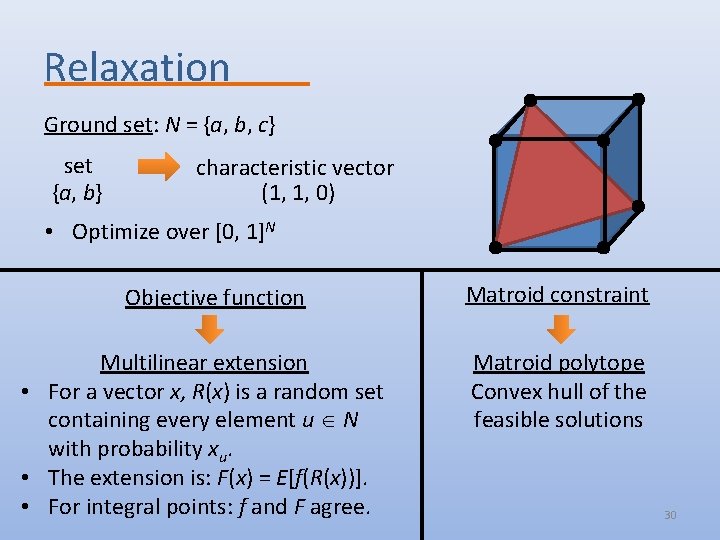

Relaxation
Ground set: N = {a, b, c}. The set {a, b} has the characteristic vector (1, 1, 0).
• Optimize over $[0, 1]^N$.
Objective function → Multilinear extension
• For a vector x, R(x) is a random set containing every element $u \in N$ independently with probability $x_u$.
• The extension is: F(x) = E[f(R(x))].
• For integral points: f and F agree.
Matroid constraint → Matroid polytope
• Convex hull of the feasible solutions.
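To make the definition F(x) = E[f(R(x))] concrete, the sketch below computes F exactly by enumerating all subsets (feasible only for tiny ground sets) and estimates it by sampling R(x). The coverage instance and the function names are assumptions made for illustration.

```python
import random
from itertools import product

# Hypothetical coverage instance over the ground set {a, b, c}.
COVER = {"a": {1, 2, 3}, "b": {3, 4}, "c": {4, 5, 6}}


def f(S):
    """Value oracle: number of cells covered by the set S."""
    covered = set()
    for u in S:
        covered |= COVER[u]
    return len(covered)


def multilinear_exact(f, x):
    """F(x) as a sum over all subsets, each weighted by its probability."""
    elems = sorted(x)
    total = 0.0
    for bits in product((0, 1), repeat=len(elems)):
        prob = 1.0
        chosen = set()
        for u, b in zip(elems, bits):
            prob *= x[u] if b else 1.0 - x[u]
            if b:
                chosen.add(u)
        total += prob * f(chosen)
    return total


def multilinear_sampled(f, x, samples, rng):
    """Monte Carlo estimate of F(x): average f over random sets R(x)."""
    elems = sorted(x)
    total = 0.0
    for _ in range(samples):
        R = {u for u in elems if rng.random() < x[u]}
        total += f(R)
    return total / samples


x = {"a": 0.5, "b": 0.5, "c": 0.5}
print(multilinear_exact(f, x))
print(multilinear_sampled(f, x, 5000, random.Random(0)))
```

At an integral point such as (1, 1, 0) the exact sum has a single non-zero term, which is why f and F agree there.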

Before Presenting the Algorithms…
• The algorithms we describe are continuous processes.
  – An implementation has to discretize the process by working in small steps.
• The multilinear extension F:
  – Cannot be evaluated exactly (in general).
  – Can be approximated arbitrarily well by sampling.

![The Continuous Greedy](http://slidetodoc.com/presentation_image_h/34ecbbf1402b5a02775b30dfa883a48b/image-32.jpg)

The Continuous Greedy
• For every time point $t \in [0, 1]$:
  – Consider the directions corresponding to the feasible sets.
  – Move in the (locally) best direction at a speed of 1.
• Feasibility: the output is a convex combination of feasible sets.
Approximation Ratio – OPT is a good direction
• Real direction: from y toward y + OPT.
• Imaginary direction: from y toward $y \vee OPT$ (the coordinate-wise maximum of y and the characteristic vector of OPT). Along it the value changes from F(y) to $F(y \vee OPT)$, and F is concave along this direction by submodularity.
• Monotonicity: “real” is better than “imaginary”.
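A discretized version of this process can be sketched for the special case of a cardinality constraint, where the best feasible direction is simply the k elements with the largest partial derivatives. Everything below is an illustrative assumption: the toy instance, the exact evaluation of F by enumeration (a real implementation would sample), the step count, and the omission of the rounding step.

```python
import math
from itertools import product

# Hypothetical coverage instance used only for illustration.
COVER = {"a": {1, 2, 3}, "b": {3, 4}, "c": {4, 5, 6}, "d": {1, 6}}


def f(S):
    """Value oracle: number of cells covered by the set S."""
    covered = set()
    for u in S:
        covered |= COVER[u]
    return len(covered)


def F(f, x):
    """Exact multilinear extension by enumeration (tiny ground sets only)."""
    elems = sorted(x)
    total = 0.0
    for bits in product((0, 1), repeat=len(elems)):
        prob = 1.0
        chosen = set()
        for u, b in zip(elems, bits):
            prob *= x[u] if b else 1.0 - x[u]
            if b:
                chosen.add(u)
        if prob > 0.0:
            total += prob * f(chosen)
    return total


def continuous_greedy(f, ground, k, steps):
    """Move y for `steps` small steps toward the locally best feasible set."""
    ground = sorted(ground)
    y = {u: 0.0 for u in ground}
    delta = 1.0 / steps
    for _ in range(steps):
        # Partial derivative of F with respect to y_u.
        w = {u: F(f, {**y, u: 1.0}) - F(f, {**y, u: 0.0}) for u in ground}
        # Under a cardinality constraint the best direction is the top-k set.
        best = sorted(ground, key=lambda u: w[u], reverse=True)[:k]
        for u in best:
            y[u] = min(1.0, y[u] + delta)
    return y


y = continuous_greedy(f, COVER, 2, 10)
print(y, F(f, y))
```

The returned y is a fractional point whose coordinates sum to at most k; turning it into a set would require one of the rounding procedures mentioned earlier, which this sketch does not implement.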

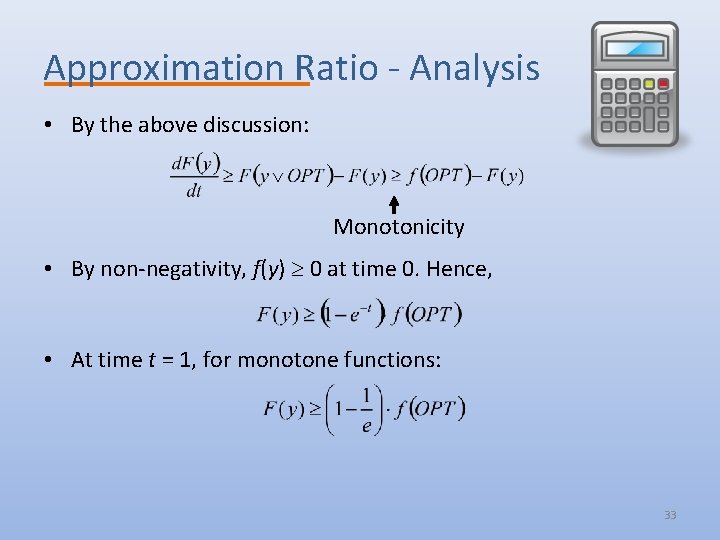

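Approximation Ratio - Analysis
• By the above discussion: $\frac{dF(y)}{dt} \ge F(y \vee OPT) - F(y) \ge f(OPT) - F(y)$, where the last inequality holds by monotonicity.
• By non-negativity, $F(y) \ge 0$ at time 0. Hence, $F(y(t)) \ge (1 - e^{-t}) \cdot f(OPT)$.
• At time t = 1, for monotone functions: $F(y(1)) \ge \left(1 - \frac{1}{e}\right) \cdot f(OPT)$.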

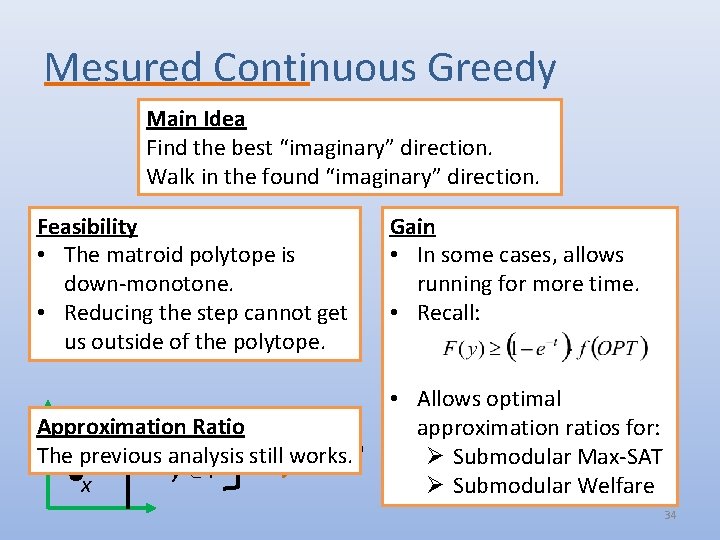

Measured Continuous Greedy
Main Idea
• Find the best “imaginary” direction.
• Walk in the found “imaginary” direction.
Feasibility
• The matroid polytope P is down-monotone: if $0 \le x \le y$ and $y \in P$, then $x \in P$.
• Reducing the step cannot get us outside of the polytope.
Approximation Ratio
• The previous analysis still works.
Gain
• In some cases, allows running for more time.
• Recall: [equation not recovered from the slide]
• Allows optimal approximation ratios for:
  – Submodular Max-SAT
  – Submodular Welfare

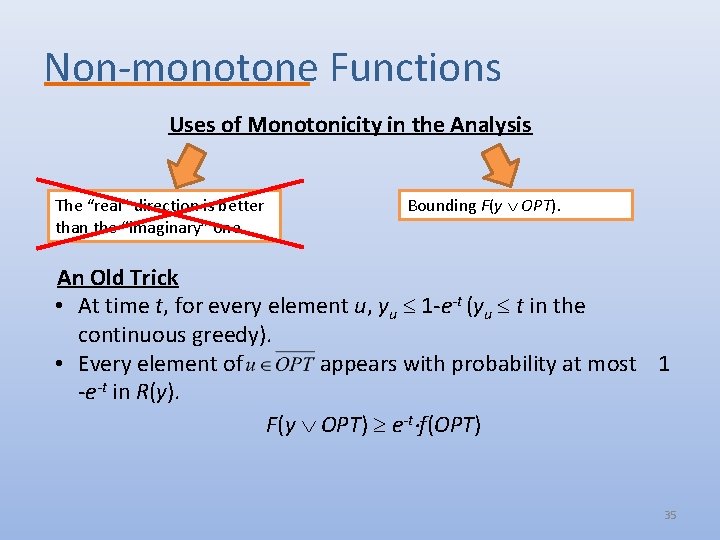

Non-monotone Functions
Uses of Monotonicity in the Analysis
• The “real” direction is better than the “imaginary” one.
• Bounding $F(y \vee OPT)$.
An Old Trick
• At time t, for every element u, $y_u \le 1 - e^{-t}$ ($y_u \le t$ in the continuous greedy).
• Every element appears with probability at most $1 - e^{-t}$ in R(y).
• Hence, $F(y \vee OPT) \ge e^{-t} \cdot f(OPT)$.

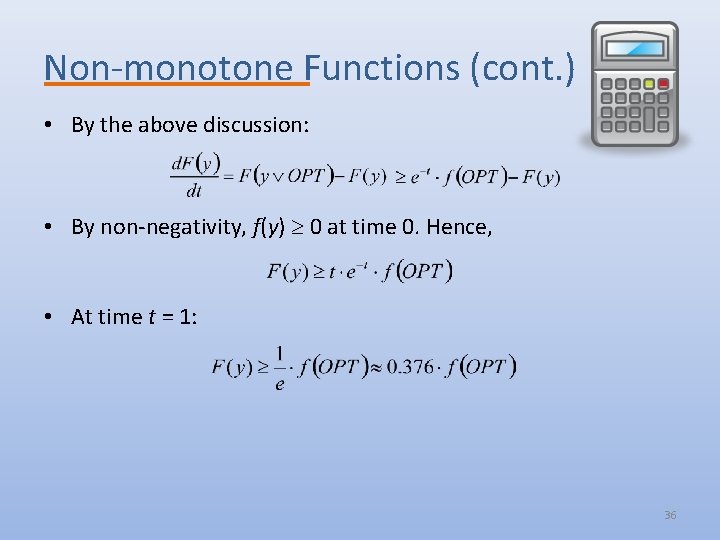

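Non-monotone Functions (cont.)
• By the above discussion: $\frac{dF(y)}{dt} \ge F(y \vee OPT) - F(y) \ge e^{-t} \cdot f(OPT) - F(y)$.
• By non-negativity, $F(y) \ge 0$ at time 0. Hence, $F(y(t)) \ge t e^{-t} \cdot f(OPT)$.
• At time t = 1: $F(y(1)) \ge \frac{1}{e} \cdot f(OPT)$.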

Future Work
Monotone Functions
• The basic question is answered (what can be done in polynomial time).
• Many open problems in other aspects:
  – Fast algorithms: almost linear time algorithms for more general constraints.
  – Online and streaming algorithms: getting tight bounds.
• Important for big data applications.

Future Work (cont.)
Non-monotone Functions
• Largely terra incognita (“Here be dragons”).
Optimal approximation ratio
• For cardinality constraint?
• For general matroids?
Properties that can help
• Symmetry?
• Others?
Other Aspects
• Fast algorithms, online, …

Other Main Fields of Interest
• Online and Secretary algorithms
  – State of the art result for the Matroid Secretary Problem [Feldman et al., SODA 2015].
• Algorithmic Game Theory
  – Mechanism Design
  – Analysis of game models inspired by combinatorial problems.

Additional Results on Submodular Maximization
• Nonmonotone Submodular Maximization via a Structural Continuous Greedy Algorithm. Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz (ICALP 2011).
• Improved Competitive Ratios for Submodular Secretary Problems. Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz (APPROX 2011).
• Improved Approximations for k-Exchange Systems. Moran Feldman, Joseph (Seffi) Naor, Roy Schwartz and Justin Ward (ESA 2011).
• A Tight Linear Time (1/2)-Approximation for Unconstrained Submodular Maximization. Niv Buchbinder, Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz (FOCS 2012).
• Online Submodular Maximization with Preemption. Niv Buchbinder, Moran Feldman and Roy Schwartz (SODA 2015).
