
Artificial Intelligence 3. Search in Problem Solving
Course V231, Department of Computing, Imperial College, London
Jeremy Gow

Problem Solving Agents
- Looking to satisfy some goal
  - Wants environment to be in particular state
- Have a number of possible actions
  - An action changes environment
- What sequence of actions reaches the goal?
  - Many possible sequences
  - Agent must search through sequences

Examples of Search Problems
- Chess
  - Each turn, search moves for win
- Route finding
  - Search routes for one that reaches destination
- Theorem proving (L6-9)
  - Search chains of reasoning for proof
- Machine learning (L10-14)
  - Search through concepts for one which achieves target categorisation

Search Terminology
- States: "places" the search can visit
- Search space: the set of possible states
- Search path
  - Sequence of states the agent actually visits
- Solution
  - A state which solves the given problem
    - Either known or has a checkable property
  - May be more than one solution
- Strategy
  - How to choose the next state in the path at any given state

Specifying a Search Problem
1. Initial state
   - Where the search starts
2. Operators
   - Function taking one state to another state
   - How the agent moves around search space
3. Goal test
   - How the agent knows if solution state found

Search strategies apply operators to chosen states

Example: Chess
- Initial state (right)
- Operators
  - Moving pieces
- Goal test
  - Checkmate
    - Can the king move without being taken?

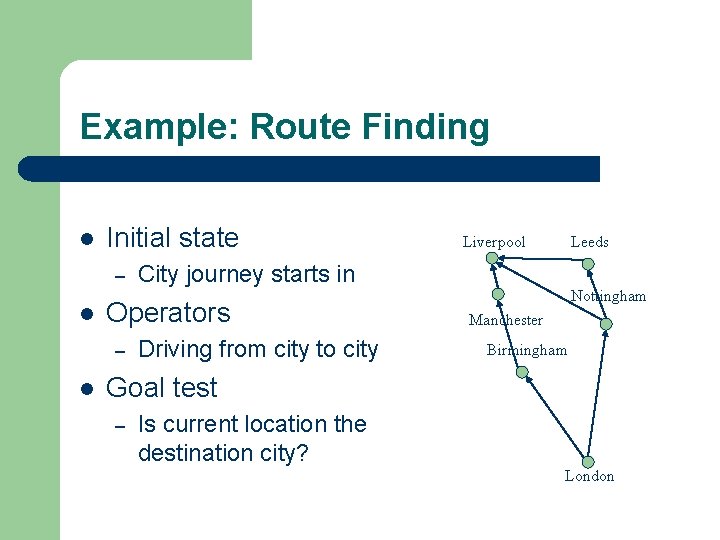

Example: Route Finding
- Initial state
  - City journey starts in
- Operators
  - Driving from city to city
- Goal test
  - Is current location the destination city?

[Figure: map showing Liverpool, Leeds, Nottingham, Manchester, Birmingham and London]

General Search Considerations 1. Artefact or Path?
- Interested in solution only, or path which got there?
- Route finding
  - Known destination, must find the route (path)
- Anagram puzzle
  - Doesn't matter how you find the word
  - Only the word itself (artefact) is important
- Machine learning
  - Usually only the concept (artefact) is important
- Theorem proving
  - The proof is a sequence (path) of reasoning steps

General Search Considerations 2. Completeness
- Task may require one, many or all solutions
  - E.g. how many different ways to get from A to B?
- Complete search space contains all solutions
  - Exhaustive search explores entire space (assuming finite)
- Complete search strategy will find solution if one exists
- Pruning rules out certain operators in certain states
  - Space still complete if no solutions pruned
  - Strategy still complete if not all solutions pruned

General Search Considerations 3. Soundness
- A sound search contains only correct solutions
- An unsound search contains incorrect solutions
  - Caused by unsound operators or goal check
- Dangers
  - Find solutions to problems with no solutions
    - Find a route to an unreachable destination
    - Prove a theorem which is actually false
    - (Not a problem if all your problems have solutions)
  - Produce incorrect solution to problem

General Search Considerations 4. Time & Space Tradeoffs
- Fast programs can be written
  - But they often use up too much memory
- Memory efficient programs can be written
  - But they are often slow
- Different search strategies have different memory/speed tradeoffs

General Search Considerations 5. Additional Information
- Given initial state, operators and goal test
  - Can you give the agent additional information?
- Uninformed search strategies
  - Have no additional information
- Informed search strategies
  - Use problem specific information
  - Heuristic measure (guess how far from goal)

Graph and Agenda Analogies
- Graph Analogy
  - States are nodes in graph, operators are edges
  - Expanding a node adds edges to new states
  - Strategy chooses which node to expand next
- Agenda Analogy
  - New states are put onto an agenda (a list)
  - Top of the agenda is explored next
    - Apply operators to generate new states
  - Strategy chooses where to put new states on agenda

Example Search Problem
- A genetics professor
  - Wants to name her new baby boy
  - Using only the letters D, N & A
- Search through possible strings (states)
  - D, DNNA, AND, DNAN, etc.
  - 3 operators: add D, N or A onto end of string
  - Initial state is an empty string
- Goal test
  - Look up state in a book of boys' names, e.g. DAN
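The three parts of this problem specification (initial state, operators, goal test) can be written down directly. The following is a minimal sketch, not code from the course: the names `successors`, `is_goal` and `BOOK_OF_NAMES` are illustrative, and the one-entry set is an assumed stand-in for the book of boys' names.

```python
LETTERS = "DNA"          # one operator per letter: append D, N or A
BOOK_OF_NAMES = {"DAN"}  # assumed stand-in for the book of boys' names

initial_state = ""       # the search starts from the empty string


def successors(state):
    """Apply every operator to a state, giving the states one step away."""
    return [state + letter for letter in LETTERS]


def is_goal(state):
    """Goal test: is this string listed in the book of names?"""
    return state in BOOK_OF_NAMES


print(successors(initial_state))  # ['D', 'N', 'A']
print(successors("NA"))           # ['NAD', 'NAN', 'NAA']
print(is_goal("DAN"))             # True
```

A search strategy then only has to decide which generated state to apply `successors` to next.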

Uninformed Search Strategies
- Breadth-first search
- Depth-first search
- Iterative deepening search
- Bidirectional search
- Uniform-cost search
- Also known as blind search

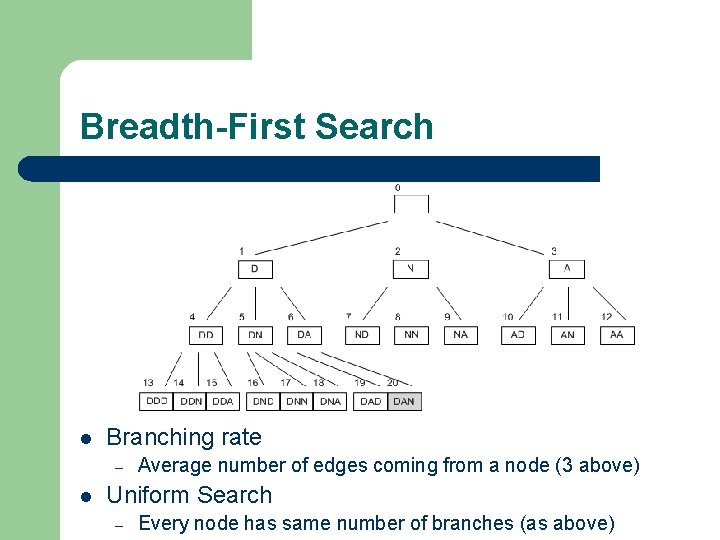

Breadth-First Search
- Every time a new state is reached
  - New states put on the bottom of the agenda
- When state "NA" is reached
  - New states "NAD", "NAN", "NAA" added to bottom
  - These get explored later (possibly much later)
- Graph analogy
  - Each node of depth d is fully expanded before any node of depth d+1 is looked at

Breadth-First Search
- Branching rate
  - Average number of edges coming from a node (3 above)
- Uniform Search
  - Every node has same number of branches (as above)
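The agenda description of breadth-first search can be sketched as a queue: states are taken from the top and their successors are added to the bottom. This is an illustrative sketch for the D/N/A naming problem; `BOOK_OF_NAMES` is an assumed stand-in for the book of names, and the function would not terminate if no name in the book were reachable, since the space of strings is infinite.

```python
from collections import deque

LETTERS = "DNA"
BOOK_OF_NAMES = {"DAN"}  # assumed stand-in for the book of boys' names


def breadth_first_search(initial_state=""):
    """Return (solution, states in the order they were taken off the agenda)."""
    agenda = deque([initial_state])
    visited_order = []
    while agenda:
        state = agenda.popleft()           # explore the top of the agenda
        visited_order.append(state)
        if state in BOOK_OF_NAMES:         # goal test
            return state, visited_order
        for letter in LETTERS:             # apply each operator
            agenda.append(state + letter)  # new states go on the bottom
    return None, visited_order


solution, order = breadth_first_search()
print(solution)    # DAN
print(order[:5])   # ['', 'D', 'N', 'A', 'DD']
print(len(order))  # 21
```

All 13 states of length 0 to 2 are examined before any string of length 3, which is the "depth d before depth d+1" behaviour described in the graph analogy.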

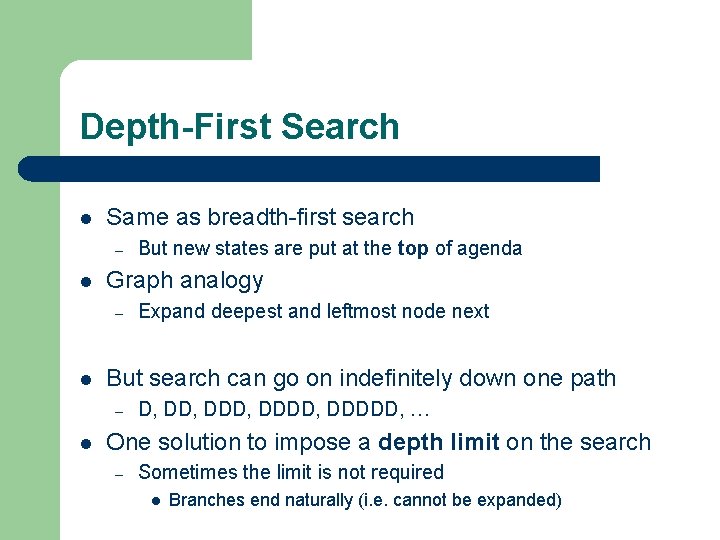

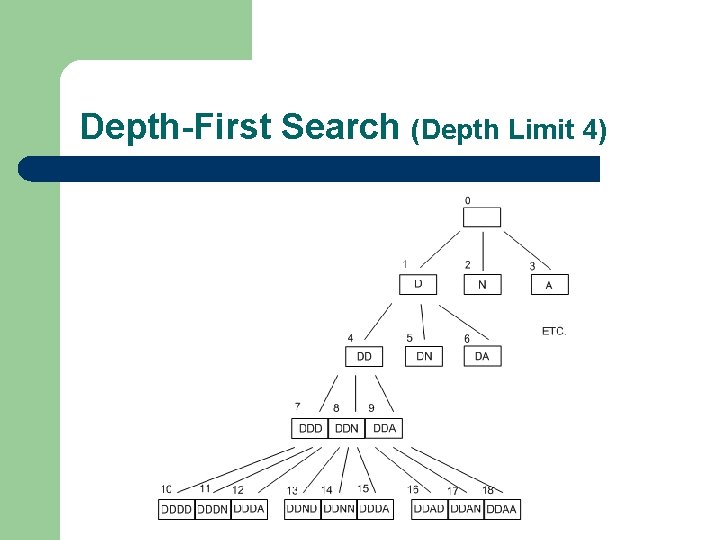

Depth-First Search
- Same as breadth-first search
  - But new states are put at the top of agenda
- Graph analogy
  - Expand deepest and leftmost node next
- But search can go on indefinitely down one path
  - D, DD, DDD, DDDD, …
- One solution is to impose a depth limit on the search
  - Sometimes the limit is not required
    - Branches end naturally (i.e. cannot be expanded)
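Depth-first search with a depth limit can be sketched by changing the agenda into a stack, so that new states go on the top. This is an illustrative sketch for the D/N/A naming problem, with `BOOK_OF_NAMES` as an assumed stand-in for the book of names; the depth of a state is simply the length of its string.

```python
LETTERS = "DNA"
BOOK_OF_NAMES = {"DAN"}  # assumed stand-in for the book of boys' names


def depth_first_search(depth_limit, initial_state=""):
    """Return (solution or None, states in the order they were explored)."""
    agenda = [initial_state]  # a list used as a stack: the end is the top
    visited_order = []
    while agenda:
        state = agenda.pop()               # explore the top of the agenda
        visited_order.append(state)
        if state in BOOK_OF_NAMES:         # goal test
            return state, visited_order
        if len(state) < depth_limit:       # do not expand below the limit
            # Push in reverse so the leftmost child (D) ends up on top.
            for letter in reversed(LETTERS):
                agenda.append(state + letter)
    return None, visited_order


solution, order = depth_first_search(depth_limit=4)
print(solution)    # DAN
print(order[:5])   # ['', 'D', 'DD', 'DDD', 'DDDD']
print(len(order))  # 34
print(depth_first_search(depth_limit=2)[0])  # None
```

With a limit of 2 the search fails even though a solution exists at depth 3, which is why a depth limit costs completeness.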

Depth-First Search (Depth Limit 4)

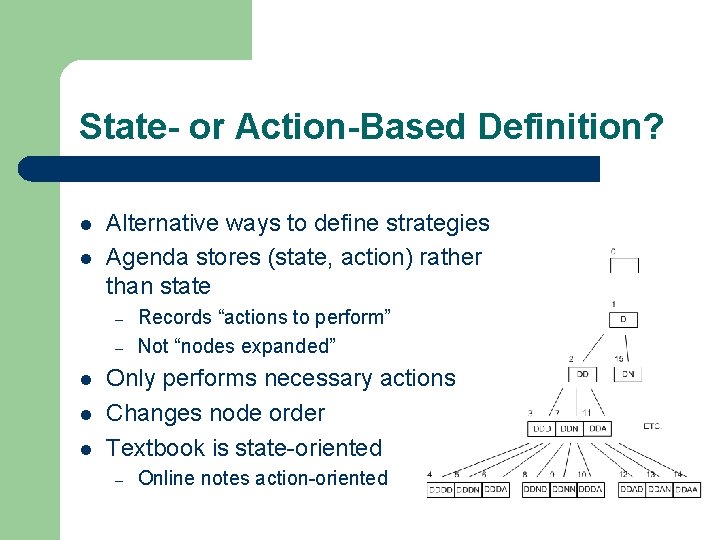

State- or Action-Based Definition?
- Alternative ways to define strategies
- Agenda stores (state, action) rather than state
  - Records "actions to perform"
  - Not "nodes expanded"
- Only performs necessary actions
- Changes node order
- Textbook is state-oriented
  - Online notes action-oriented

Depth- v. Breadth-First Search
- Suppose branching rate b
- Breadth-first
  - Complete (guaranteed to find solution)
  - Requires a lot of memory
    - At depth d needs to remember up to $b^{d-1}$ states
- Depth-first
  - Not complete because of indefinite paths or depth limit
  - But is memory efficient
    - Only needs to remember up to $b \times d$ states

Iterative Deepening Search
- Idea: do repeated depth first searches
  - Increasing the depth limit by one every time
  - DFS to depth 1, DFS to depth 2, etc.
  - Completely re-do the previous search each time
- Most DFS effort is in expanding last line of the tree
  - e.g. to depth five, branching rate of 10
    - DFS: 111,111 states, IDS: 123,456 states
    - Repetition of only 11%
- Combines best of BFS and DFS
  - Complete and memory efficient
  - But slower than either
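The state counts quoted for depth five and branching rate 10 can be checked with a few lines of arithmetic. This sketch assumes a uniform tree and assumes the iterations are counted from a depth limit of 0 (root only), which is the convention that reproduces the 123,456 figure; the function names are illustrative.

```python
def states_to_depth(b, d):
    """States in a uniform tree of branching rate b, from depth 0 to depth d."""
    return sum(b ** i for i in range(d + 1))


def ids_states(b, d):
    """States visited by iterative deepening: one full DFS per limit 0..d."""
    return sum(states_to_depth(b, limit) for limit in range(d + 1))


dfs_total = states_to_depth(10, 5)
ids_total = ids_states(10, 5)
overhead = 100 * (ids_total - dfs_total) / dfs_total

print(dfs_total)           # 111111
print(ids_total)           # 123456
print(round(overhead, 1))  # 11.1
```

The overhead stays small because the deepest level, which every strategy must pay for once, already holds 100,000 of the 111,111 states.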

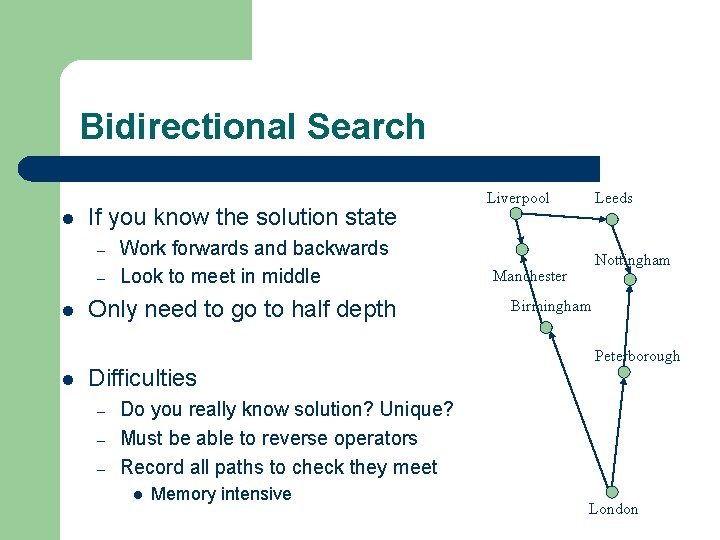

Bidirectional Search
- If you know the solution state
  - Work forwards and backwards
  - Look to meet in middle
- Only need to go to half depth
- Difficulties
  - Do you really know solution? Unique?
  - Must be able to reverse operators
  - Record all paths to check they meet
    - Memory intensive

[Figure: map showing Liverpool, Leeds, Nottingham, Manchester, Birmingham, Peterborough and London]

Action and Path Costs
- Action cost
  - Particular value associated with an action
- Examples
  - Distance in route planning
  - Power consumption in circuit board construction
- Path cost
  - Sum of all the action costs in the path
  - If action cost = 1 (always), then path cost = path length

Uniform-Cost Search
- Breadth-first search
  - Guaranteed to find the shortest path to a solution
  - Not necessarily the least costly path
- Uniform path cost search
  - Choose to expand node with the least path cost
- Guaranteed to find a solution with least cost
  - If we know that path cost increases with path length
- This method is optimal and complete
  - But can be very slow
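Uniform-cost search can be sketched with a priority queue as the agenda, ordered by path cost. The graph below is hypothetical (the states S, A, B, G and the action costs are made up for illustration) and is chosen so that the shortest path in steps is not the cheapest one.

```python
import heapq

# Hypothetical graph: state -> {neighbour: action cost}
GRAPH = {
    "S": {"A": 1, "G": 10},
    "A": {"B": 2},
    "B": {"G": 3},
    "G": {},
}


def uniform_cost_search(graph, start, goal):
    """Return (path cost, path) for a least-cost path, or None if none exists."""
    agenda = [(0, start, [start])]  # entries are (path cost, state, path)
    cheapest_seen = {}              # best path cost at which a state was expanded
    while agenda:
        cost, state, path = heapq.heappop(agenda)  # least path cost first
        if state == goal:
            return cost, path
        if state in cheapest_seen and cheapest_seen[state] <= cost:
            continue  # already expanded via a path that was at least as cheap
        cheapest_seen[state] = cost
        for neighbour, action_cost in graph[state].items():
            heapq.heappush(
                agenda, (cost + action_cost, neighbour, path + [neighbour])
            )
    return None


print(uniform_cost_search(GRAPH, "S", "G"))  # (6, ['S', 'A', 'B', 'G'])
```

Breadth-first search on the same graph would return the one-step path S to G at cost 10, which is the shortest path but not the least costly one.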

Informed Search Strategies
- Greedy search
- A* search
- IDA* search
- Hill climbing
- Simulated annealing
- Also known as heuristic search
  - Require heuristic function

Best-First Search
- Evaluation function f gives cost for each state
  - Choose state with smallest f(state) ('the best')
  - Agenda: f decides where new states are put
  - Graph: f decides which node to expand next
- Many different strategies depending on f
  - For uniform-cost search f = path cost
  - Informed search strategies define f based on heuristic function

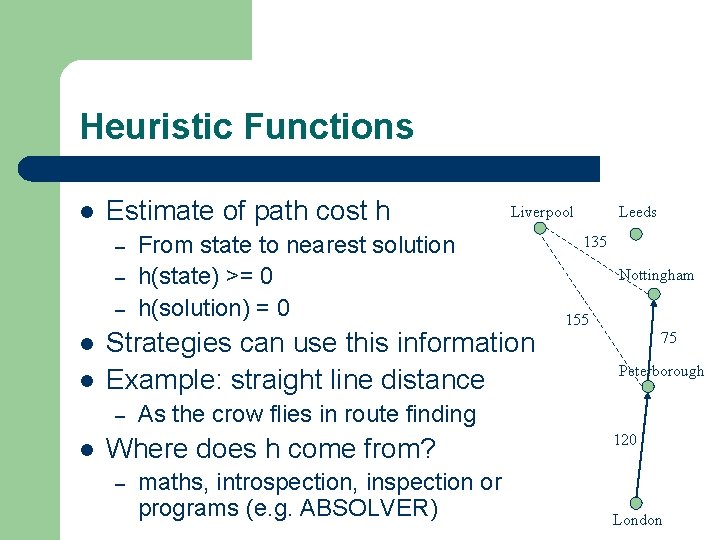

Heuristic Functions
- Estimate of path cost h
  - From state to nearest solution
  - h(state) >= 0
  - h(solution) = 0
- Strategies can use this information
- Example: straight line distance
  - As the crow flies in route finding
- Where does h come from?
  - Maths, introspection, inspection or programs (e.g. ABSOLVER)

[Figure: map showing Liverpool, Leeds, Nottingham, Peterborough and London, with distance labels 135, 155, 75 and 120]

Greedy Search
- Always take the biggest bite
- f(state) = h(state)
  - Choose smallest estimated cost to solution
- Ignores the path cost
- Blind alley effect: early estimates very misleading
  - One solution: delay the use of greedy search
- Not guaranteed to find optimal solution
  - Remember we are estimating the path cost to solution

A* Search
- Path cost is g and heuristic function is h
  - f(state) = g(state) + h(state)
  - Choose smallest overall path cost (known + estimate)
- Combines uniform-cost and greedy search
- Can prove that A* is complete and optimal
  - But only if h is admissible, i.e. never overestimates the true path cost from state to solution
  - See Russell and Norvig for proof

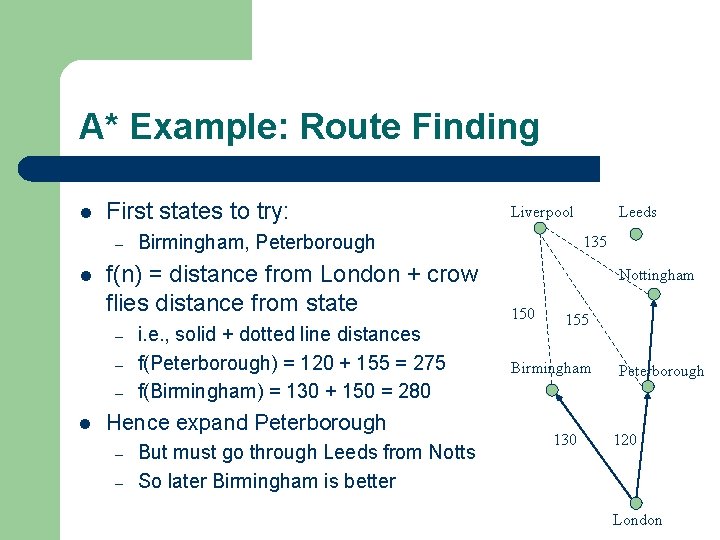

A* Example: Route Finding
- First states to try:
  - Birmingham, Peterborough
- f(n) = distance from London + crow-flies distance from state to destination
  - i.e., solid + dotted line distances
  - f(Peterborough) = 120 + 155 = 275
  - f(Birmingham) = 130 + 150 = 280
- Hence expand Peterborough
  - But must go through Leeds from Notts
  - So later Birmingham is better

[Figure: map showing Liverpool, Leeds, Nottingham, Birmingham, Peterborough and London, with distance labels 135, 150, 155, 130 and 120]
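The rule f = g + h can be sketched with a priority queue ordered by f. The graph and heuristic values below are hypothetical (they are not the distances from the route-finding map) and were chosen so that h never overestimates the true remaining cost. This is the simple tree-search form with no record of expanded states, so it assumes positive action costs and a reachable goal.

```python
import heapq

# Hypothetical graph: state -> {neighbour: action cost}
GRAPH = {
    "S": {"A": 2, "B": 1},
    "A": {"G": 2},
    "B": {"G": 5},
    "G": {},
}
# Hypothetical admissible heuristic: estimated cost from each state to G
H = {"S": 3, "A": 2, "B": 4, "G": 0}


def a_star(graph, h, start, goal):
    """Return (path cost, path, expansion order) using f = g + h."""
    agenda = [(h[start], 0, start, [start])]  # entries are (f, g, state, path)
    expansion_order = []
    while agenda:
        f, g, state, path = heapq.heappop(agenda)  # smallest f first
        expansion_order.append(state)
        if state == goal:
            return g, path, expansion_order
        for neighbour, action_cost in graph[state].items():
            new_g = g + action_cost
            heapq.heappush(
                agenda,
                (new_g + h[neighbour], new_g, neighbour, path + [neighbour]),
            )
    return None


cost, path, order = a_star(GRAPH, H, "S", "G")
print(cost)   # 4
print(path)   # ['S', 'A', 'G']
print(order)  # ['S', 'A', 'G']
```

After expanding S the agenda holds A with f = 2 + 2 = 4 and B with f = 1 + 4 = 5, so A is tried first even though B has the smaller path cost so far. Uniform-cost search would have expanded B before A.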

IDA* Search
- Problem with A* search
  - You have to record all the nodes
  - In case you have to back up from a dead-end
- A* searches often run out of memory, not time
- Use the same iterative deepening trick as IDS
  - But iterate over f(state) rather than depth
  - Define contours: f < 100, f < 200, f < 300 etc.
- Complete & optimal as A*, but less memory

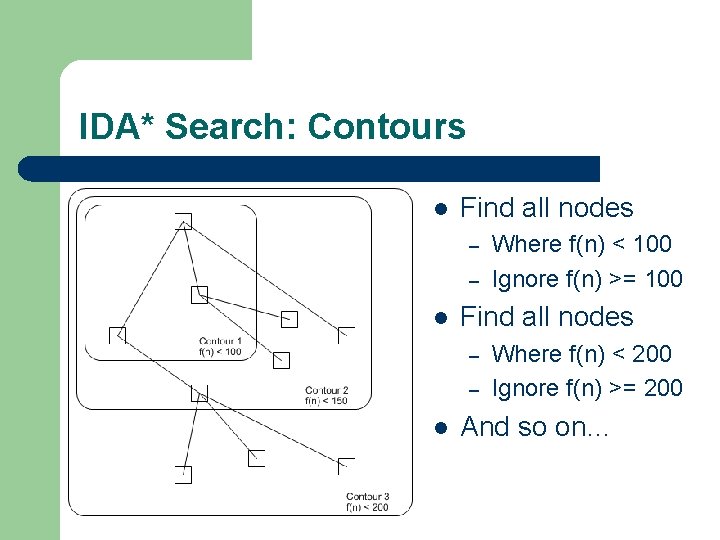

IDA* Search: Contours
- Find all nodes
  - Where f(n) < 100
  - Ignore f(n) >= 100
- Find all nodes
  - Where f(n) < 200
  - Ignore f(n) >= 200
- And so on…
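The contour idea can be sketched as a depth-first search that refuses to go past a limit on f, repeated with a larger limit each time. Note that this sketch uses the common variant in which the next limit is the smallest f value that exceeded the current one, rather than fixed steps of 100 as in the slide, and it admits nodes with f equal to the limit. The graph and heuristic are hypothetical.

```python
import math

# Hypothetical graph and admissible heuristic (not taken from the slides)
GRAPH = {
    "S": {"A": 2, "B": 1},
    "A": {"G": 2},
    "B": {"G": 5},
    "G": {},
}
H = {"S": 3, "A": 2, "B": 4, "G": 0}


def ida_star(graph, h, start, goal):
    """Return ((cost, path) or None, list of contour limits that were tried)."""

    def search(path, g, limit):
        # Depth-first search inside the contour f <= limit.
        state = path[-1]
        f = g + h[state]
        if f > limit:
            return f, None               # outside the contour: report its f
        if state == goal:
            return f, (g, list(path))
        smallest_excess = math.inf
        for neighbour, action_cost in graph[state].items():
            if neighbour in path:        # avoid cycles along the current path
                continue
            path.append(neighbour)
            excess, found = search(path, g + action_cost, limit)
            path.pop()
            if found is not None:
                return excess, found
            smallest_excess = min(smallest_excess, excess)
        return smallest_excess, None

    limit = h[start]
    contours = []
    while limit < math.inf:
        contours.append(limit)
        limit, found = search([start], 0, limit)  # next limit: smallest f beyond
        if found is not None:
            return found, contours
    return None, contours


result, contours = ida_star(GRAPH, H, "S", "G")
print(result)    # (4, ['S', 'A', 'G'])
print(contours)  # [3, 4]
```

Only the current path is stored at any time, which is where the memory saving over A* comes from.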


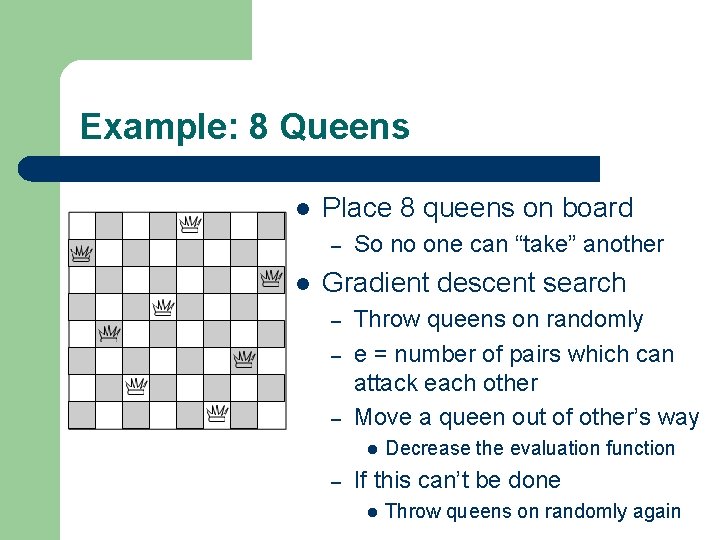

Example: 8 Queens
- Place 8 queens on board
  - So no one can "take" another
- Gradient descent search
  - Throw queens on randomly
  - e = number of pairs which can attack each other
  - Move a queen out of other's way
    - Decrease the evaluation function
  - If this can't be done
    - Throw queens on randomly again
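The gradient descent procedure for 8 queens can be sketched as follows. Two details are assumptions not stated in the slide: each queen is confined to its own column, and each step takes the single-queen move that lowers e the most. When no move lowers e, the queens are thrown on randomly again.

```python
import random

N = 8


def attacking_pairs(rows):
    """e(state): number of queen pairs that attack each other.

    rows[c] is the row of the queen in column c (one queen per column).
    """
    count = 0
    for a in range(N):
        for b in range(a + 1, N):
            same_row = rows[a] == rows[b]
            same_diagonal = abs(rows[a] - rows[b]) == b - a
            if same_row or same_diagonal:
                count += 1
    return count


def solve_queens(rng):
    """Gradient descent on e with random restarts; returns a state with e = 0."""
    while True:
        rows = [rng.randrange(N) for _ in range(N)]  # throw queens on randomly
        cost = attacking_pairs(rows)
        while cost > 0:
            best_cost, best_move = cost, None
            for col in range(N):                     # try moving each queen
                original = rows[col]
                for row in range(N):
                    if row == original:
                        continue
                    rows[col] = row
                    new_cost = attacking_pairs(rows)
                    if new_cost < best_cost:         # only moves that improve e
                        best_cost, best_move = new_cost, (col, row)
                rows[col] = original
            if best_move is None:
                break                                # stuck: random restart
            rows[best_move[0]] = best_move[1]
            cost = best_cost
        if cost == 0:
            return rows


solution = solve_queens(random.Random(0))
print(solution, attacking_pairs(solution))
```

Only the current board is ever stored, and because e strictly decreases on every move within a run, a run cannot revisit a board.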

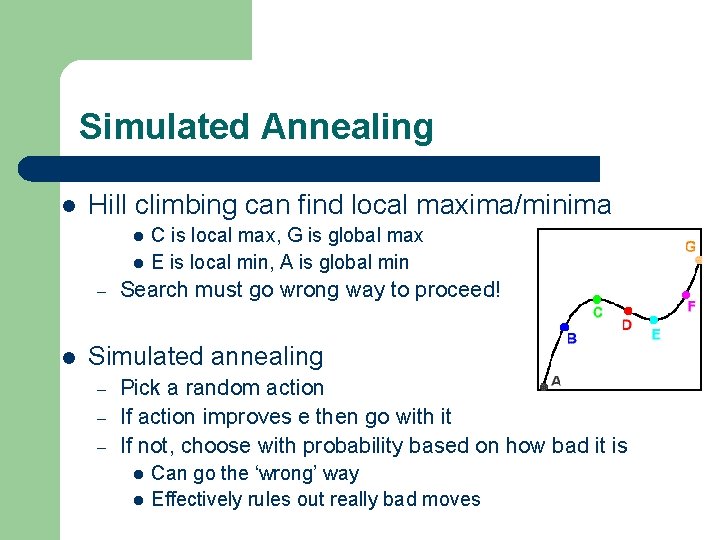

Simulated Annealing
- Hill climbing can find local maxima/minima
  - C is local max, G is global max
  - E is local min, A is global min
  - Search must go wrong way to proceed!
- Simulated annealing
  - Pick a random action
  - If action improves e then go with it
  - If not, choose with probability based on how bad it is
    - Can go the 'wrong' way
    - Effectively rules out really bad moves
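The acceptance rule can be sketched for a cost e that is being minimised. The slide only says the probability depends on how bad the move is; the exponential form `exp(-delta / temperature)` and the cooling schedule used here are the usual textbook choice and are assumptions, as are the one-dimensional `LANDSCAPE` values and all function names.

```python
import math
import random

# Hypothetical cost landscape: state i has cost LANDSCAPE[i]
LANDSCAPE = [4, 2, 5, 7, 3, 1, 6]


def cost(state):
    return LANDSCAPE[state]


def neighbours(state):
    """Actions: step one place left or right, staying on the landscape."""
    return [s for s in (state - 1, state + 1) if 0 <= s < len(LANDSCAPE)]


def accept_probability(delta, temperature):
    """Chance of taking a move that changes the cost by delta."""
    if delta <= 0:
        return 1.0                         # improving moves are always taken
    return math.exp(-delta / temperature)  # worse moves: less likely if worse


def simulated_annealing(start, rng, temperature=10.0, cooling=0.99, steps=2000):
    """Return the lowest-cost state seen during the walk."""
    state = best = start
    for _ in range(steps):
        candidate = rng.choice(neighbours(state))  # pick a random action
        delta = cost(candidate) - cost(state)
        if rng.random() < accept_probability(delta, temperature):
            state = candidate                      # may go the 'wrong' way
            if cost(state) < cost(best):
                best = state
        temperature *= cooling                     # accept fewer bad moves later
    return best


best = simulated_annealing(start=0, rng=random.Random(0))
print(best, cost(best))
```

A move that worsens e by 10 at temperature 1 is accepted with probability of about 0.00005, while one that worsens e by 1 is accepted about 37% of the time, which is the sense in which really bad moves are effectively ruled out.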

Comparing Heuristic Searches
- Effective branching rate
  - Idea: compare to a uniform search e.g. BFS
    - Where each node has same number of edges from it
- Expanded n nodes to find solution at depth d
  - What would the branching rate be if uniform?
    - Effective branching factor $b^*$
  - Use this formula to calculate it
    - $n = 1 + b^* + (b^*)^2 + (b^*)^3 + \dots + (b^*)^d$
- One heuristic function $h_1$ dominates another $h_2$
  - If $b^*$ is always smaller for $h_1$ than for $h_2$

Example: Effective Branching Rate
- Suppose a search has taken 52 steps
  - And found a solution at depth 5
- $52 = 1 + b^* + (b^*)^2 + \dots + (b^*)^5$
- So, using the mathematical equality from notes
  - We can calculate that $b^* = 1.91$
- If instead, the agent
  - Had a uniform breadth first search
  - It would branch 1.91 times from each node
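The value of b* has no simple closed form, but it can be found numerically. The sketch below uses bisection, which is one possible method and not necessarily the one in the course notes; it assumes n is larger than d + 1 so that a root exists between 1 and n.

```python
def nodes_in_uniform_tree(b, d):
    """1 + b + b^2 + ... + b^d for a (possibly fractional) branching rate b."""
    return sum(b ** i for i in range(d + 1))


def effective_branching_factor(n, d, tolerance=1e-9):
    """Solve n = 1 + b + ... + b^d for b by bisection on the interval [1, n]."""
    low, high = 1.0, float(n)
    while high - low > tolerance:
        mid = (low + high) / 2
        if nodes_in_uniform_tree(mid, d) < n:
            low = mid    # tree too small: the branching rate must be larger
        else:
            high = mid   # tree big enough: the branching rate is at most mid
    return (low + high) / 2


b_star = effective_branching_factor(52, 5)
print(round(b_star, 2))  # 1.91
```

Bisection works here because the node count grows steadily as b increases, so there is exactly one b* above 1 that gives n nodes.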

Search Strategies
- Uninformed
  - Breadth-first search
  - Depth-first search
  - Iterative deepening
  - Bidirectional search
  - Uniform-cost search
- Informed
  - Greedy search
  - A* search
  - IDA* search
  - Hill climbing
  - Simulated annealing
  - SMA* in textbook
