
ARIES, Concluded and Distributed Databases: R* Zachary G. Ives University of Pennsylvania CIS 650 – Implementing Data Management Systems October 2, 2008 Some content on 2-phase commit courtesy Ramakrishnan, Gehrke

Administrivia Next reading assignment: § Principles of Data Integration Chapter 3.3 § No review required for this § Review Mariposa & R* for Tuesday

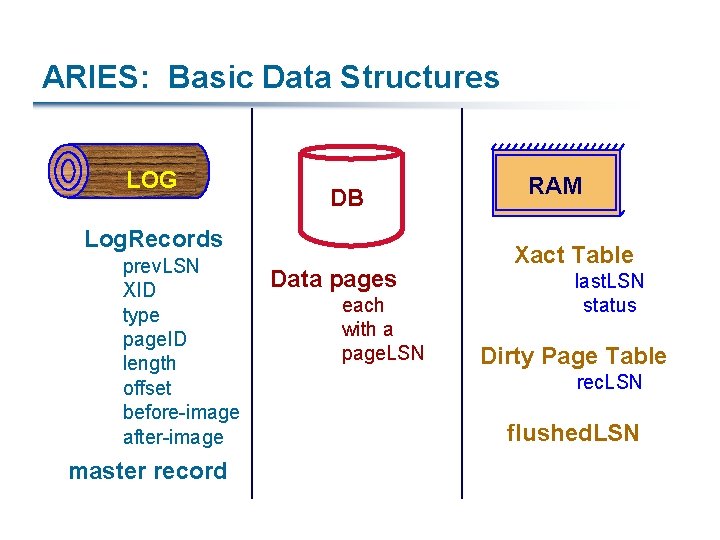

ARIES: Basic Data Structures
LOG: log records (fields: prevLSN, XID, type, pageID, length, offset, before-image, after-image)
DB: data pages, each with a pageLSN; master record
RAM: Xact Table (lastLSN, status); Dirty Page Table (recLSN); flushedLSN

Normal Execution of a Transaction Series of reads & writes, followed by commit or abort § We will assume that write is atomic on disk § In practice, additional details to deal with non-atomic writes § Strict 2PL § STEAL, NO-FORCE buffer management, with Write-Ahead Logging

Checkpointing Periodically, the DBMS creates a checkpoint § Minimizes recovery time in the event of a system crash § Write to log: begin_checkpoint record: when checkpoint began; end_checkpoint record: current Xact table and dirty page table § A “fuzzy checkpoint”: § Other Xacts continue to run, so these tables are accurate only as of the time of the begin_checkpoint record § No attempt to force dirty pages to disk; effectiveness of checkpoint limited by oldest unwritten change to a dirty page. (So it’s a good idea to periodically flush dirty pages to disk!) § Store LSN of checkpoint record in a safe place (master record)

Simple Transaction Abort, 1/2 For now, consider an explicit abort of a Xact § (No crash involved) We want to “play back” the log in reverse order, UNDOing updates § Get lastLSN of Xact from Xact table § Can follow chain of log records backward via the prevLSN field When do we quit? § Before starting UNDO, write an Abort log record For recovering from crash during UNDO!

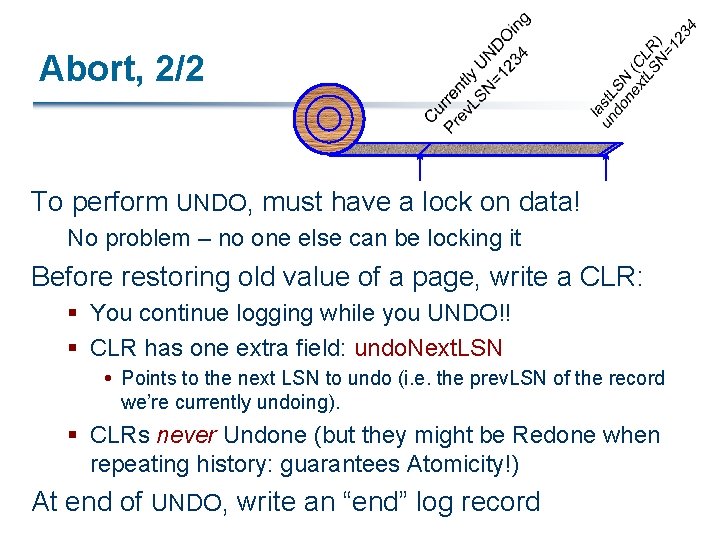

Abort, 2/2 To perform UNDO, must have a lock on data! No problem – no one else can be locking it Before restoring old value of a page, write a CLR: § You continue logging while you UNDO!! § CLR has one extra field: undoNextLSN Points to the next LSN to undo (i.e., the prevLSN of the record we’re currently undoing). § CLRs are never undone (but they might be redone when repeating history: guarantees Atomicity!) At end of UNDO, write an “end” log record
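The abort procedure on the two slides above can be sketched in a few lines of Python. This is a minimal illustration rather than the ARIES implementation: the `LogRecord` class, the `abort` function, the LSN step of 10, and the dictionary standing in for data pages are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogRecord:
    lsn: int
    xid: str
    kind: str                            # "update", "abort", "CLR", or "end"
    prev_lsn: Optional[int] = None       # previous record of the same Xact
    page: Optional[str] = None
    before: Optional[int] = None         # before-image (updates only)
    after: Optional[int] = None          # value a redo of this record applies
    undo_next_lsn: Optional[int] = None  # CLRs only


def abort(log, pages, xid, last_lsn):
    """Roll back one Xact by walking its prevLSN chain, logging a CLR per undo."""
    by_lsn = {r.lsn: r for r in log}
    next_lsn = max(by_lsn) + 10          # hypothetical LSN allocation scheme

    def append(kind, prev, **fields):
        nonlocal next_lsn
        rec = LogRecord(next_lsn, xid, kind, prev, **fields)
        log.append(rec)
        by_lsn[rec.lsn] = rec
        next_lsn += 10
        return rec.lsn

    last = append("abort", last_lsn)     # abort record goes in before any undo
    cur = last_lsn
    while cur is not None:               # quit when the chain runs out
        rec = by_lsn[cur]
        if rec.kind == "update":
            # Write the CLR first, then restore the before-image.
            last = append("CLR", last, page=rec.page, after=rec.before,
                          undo_next_lsn=rec.prev_lsn)
            pages[rec.page] = rec.before
        cur = rec.prev_lsn
    append("end", last)
    return log
```

Each CLR records in `undo_next_lsn` the prevLSN of the update it compensates, so a restart after a crash in the middle of the rollback can resume from that point instead of undoing the same update twice.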

Transaction Commit § Write commit record to log § All log records up to Xact’s lastLSN are flushed § Guarantees that flushedLSN ≥ lastLSN § Note that log flushes are sequential, synchronous writes to disk § Many log records per log page § Commit() returns § Write end record to log
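A minimal sketch of the commit sequence above, assuming a toy in-memory `Log` class (hypothetical; a list index doubles as the LSN, and `flush` only moves a marker instead of writing to disk). It shows the ordering constraint: the commit record is flushed before `commit` returns, while the end record is written afterwards and needs no forced flush.

```python
class Log:
    """Toy log: `records` is the log tail, `flushed_lsn` marks what is on disk."""

    def __init__(self):
        self.records = []
        self.flushed_lsn = -1

    def append(self, xid, kind):
        self.records.append((xid, kind))
        return len(self.records) - 1     # the list index doubles as the LSN

    def flush(self, up_to_lsn):
        # Stand-in for a sequential, synchronous write of log pages to disk.
        self.flushed_lsn = max(self.flushed_lsn, up_to_lsn)


def commit(log, xid):
    """Return only once every record up to the Xact's lastLSN is flushed."""
    last_lsn = log.append(xid, "commit")
    log.flush(last_lsn)
    assert log.flushed_lsn >= last_lsn   # the guarantee stated on the slide
    return last_lsn


def finish(log, xid):
    """Written after commit() has returned; no forced flush is needed."""
    return log.append(xid, "end")
```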

Crash Recovery: Big Picture § Start from a checkpoint (found via master record) § Three phases: 1. Figure out which Xacts committed since checkpoint, which failed (Analysis) 2. REDO all actions (repeat history) 3. UNDO effects of failed Xacts [Figure: log timeline marking the oldest log record of an Xact active at crash, the smallest recLSN in the dirty page table after Analysis, the last checkpoint, and the CRASH, with the extents of the Analysis (A), Redo (R), and Undo (U) scans]

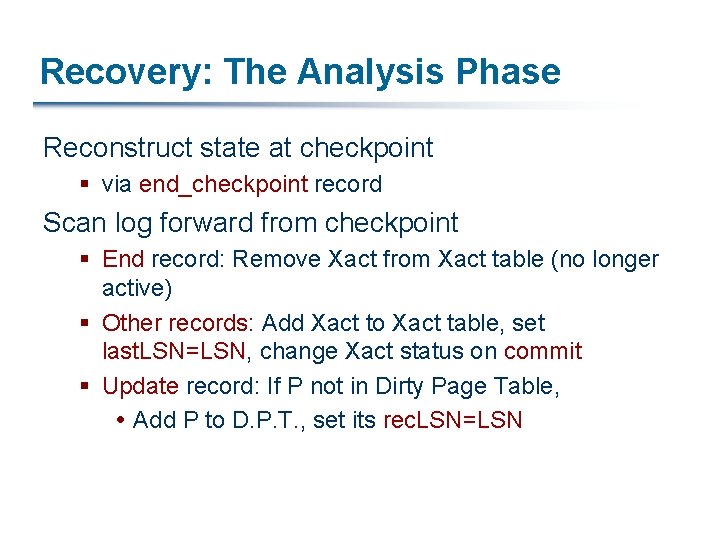

Recovery: The Analysis Phase Reconstruct state at checkpoint § via end_checkpoint record Scan log forward from checkpoint § End record: Remove Xact from Xact table (no longer active) § Other records: Add Xact to Xact table, set lastLSN=LSN, change Xact status on commit § Update record: If P not in Dirty Page Table, add P to D.P.T., set its recLSN=LSN
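The forward scan can be sketched as follows. The record layout (dicts with `lsn`, `xid`, `type`, `page` keys), the status strings, and the function name are assumptions for illustration. The sketch also treats CLRs like updates when filling the Dirty Page Table, on the grounds that both are redoable; the slide itself only mentions update records.

```python
def analysis(log, ckpt_xact_table, ckpt_dpt):
    """Rebuild the Xact table and Dirty Page Table from the checkpoint forward.

    `log` holds the records that follow the end_checkpoint record; the two
    checkpoint tables are copied, not modified.
    """
    xact_table = {xid: dict(entry) for xid, entry in ckpt_xact_table.items()}
    dpt = dict(ckpt_dpt)                 # page -> recLSN
    for rec in log:
        xid = rec["xid"]
        if rec["type"] == "end":
            xact_table.pop(xid, None)    # no longer active
            continue
        entry = xact_table.setdefault(xid, {"lastLSN": None, "status": "running"})
        entry["lastLSN"] = rec["lsn"]
        if rec["type"] == "commit":
            entry["status"] = "committed"
        elif rec["type"] == "abort":
            entry["status"] = "aborting"
        # Assumption: any redoable record (update or CLR) can dirty a page.
        if rec["type"] in ("update", "CLR") and rec["page"] not in dpt:
            dpt[rec["page"]] = rec["lsn"]
    return xact_table, dpt
```

Transactions left in the table without a "committed" status are the losers that the UNDO phase must roll back.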

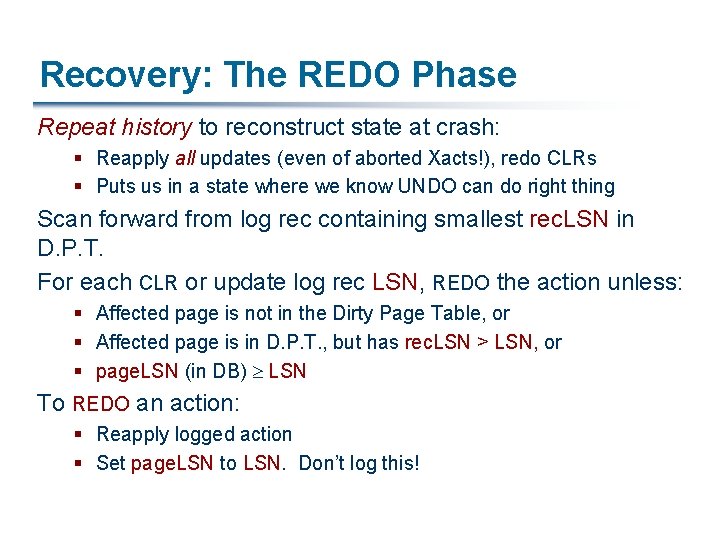

Recovery: The REDO Phase Repeat history to reconstruct state at crash: § Reapply all updates (even of aborted Xacts!), redo CLRs § Puts us in a state where we know UNDO can do right thing Scan forward from log rec containing smallest recLSN in D.P.T. For each CLR or update log rec LSN, REDO the action unless: § Affected page is not in the Dirty Page Table, or § Affected page is in D.P.T., but has recLSN > LSN, or § pageLSN (in DB) ≥ LSN To REDO an action: § Reapply logged action § Set pageLSN to LSN. Don’t log this!
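The three skip conditions translate directly into code. In this sketch the record and page layouts (dicts with `after`, `pageLSN`, `value` keys) and the function name are hypothetical. The first two tests use only the Dirty Page Table; the page is looked up (standing in for a disk read) only when they do not already rule the record out.

```python
def redo_phase(log, dpt, pages):
    """Repeat history; returns the LSNs that were actually reapplied.

    log:   list of dict records in LSN order
    dpt:   Dirty Page Table after Analysis, page -> recLSN
    pages: page -> {"pageLSN": int, "value": ...}, the on-disk state
    """
    if not dpt:
        return []
    start = min(dpt.values())            # smallest recLSN in the D.P.T.
    redone = []
    for rec in log:
        if rec["lsn"] < start or rec["type"] not in ("update", "CLR"):
            continue
        pid = rec["page"]
        if pid not in dpt or dpt[pid] > rec["lsn"]:
            continue                     # decided without touching the page
        page = pages[pid]                # stands in for fetching the page
        if page["pageLSN"] >= rec["lsn"]:
            continue                     # the change already reached disk
        page["value"] = rec["after"]     # reapply the logged action
        page["pageLSN"] = rec["lsn"]     # no log record is written for a redo
        redone.append(rec["lsn"])
    return redone
```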

Recovery: The UNDO Phase ToUndo = { l | l is the lastLSN of a “loser” Xact } Repeat: § Choose largest LSN among ToUndo § If this LSN is a CLR and undoNextLSN == NULL: write an End record for this Xact § If this LSN is a CLR and undoNextLSN != NULL: add undoNextLSN to ToUndo § Else this LSN is an update: undo the update, write a CLR, add prevLSN to ToUndo Until ToUndo is empty
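A sketch of the loop above, using a max-heap (negated LSNs in Python's `heapq`) for the ToUndo set. The dict-based record layout, the LSN step of 10, and the function name are assumptions. Two details go beyond the slide and are marked in comments: an End record is also written when an undone update has no prevLSN, and non-update records such as Abort are simply walked past.

```python
import heapq


def undo_phase(log, pages, losers):
    """Roll back all loser Xacts in one backward sweep.

    log:    list of dict records, appended to in place
    pages:  page -> current value
    losers: xid -> lastLSN of each Xact still active at the crash
    """
    by_lsn = {r["lsn"]: r for r in log}
    next_lsn = max(by_lsn) + 10              # hypothetical LSN allocation
    last = dict(losers)                      # xid -> lastLSN, kept current
    to_undo = [-lsn for lsn in losers.values()]
    heapq.heapify(to_undo)                   # max-heap via negated LSNs

    def append(rec):
        nonlocal next_lsn
        rec["lsn"], rec["prevLSN"] = next_lsn, last[rec["xid"]]
        last[rec["xid"]] = next_lsn
        log.append(rec)
        next_lsn += 10

    while to_undo:
        rec = by_lsn[-heapq.heappop(to_undo)]    # largest LSN in ToUndo
        if rec["type"] == "CLR":
            nxt = rec["undoNextLSN"]
        elif rec["type"] == "update":
            append({"xid": rec["xid"], "type": "CLR", "page": rec["page"],
                    "undoNextLSN": rec["prevLSN"]})
            pages[rec["page"]] = rec["before"]   # undo the update
            nxt = rec["prevLSN"]
        else:
            nxt = rec["prevLSN"]             # assumption: skip abort records
        if nxt is None:
            # Assumption: nothing left to undo for this Xact, so end it.
            append({"xid": rec["xid"], "type": "end"})
        else:
            heapq.heappush(to_undo, -nxt)
    return log
```

Processing the largest LSN first means the log is traversed strictly backward, interleaving the rollback of all losers in a single pass.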

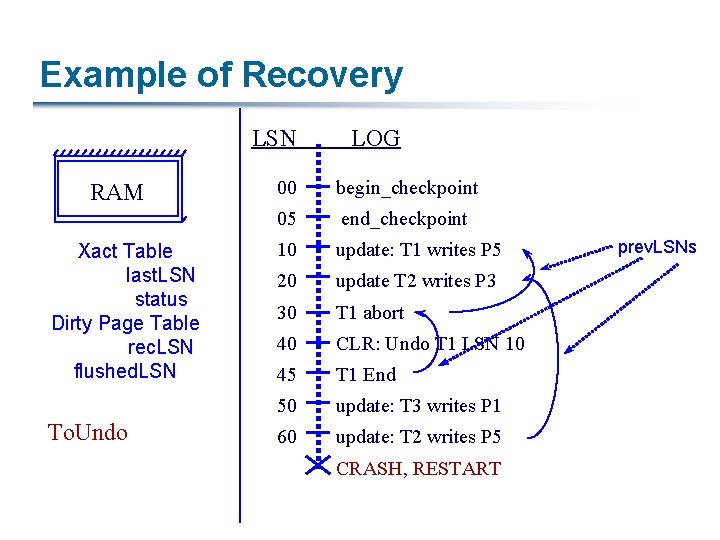

Example of Recovery
RAM: Xact Table (lastLSN, status); Dirty Page Table (recLSN); flushedLSN; ToUndo
LOG (LSN: record; records of the same Xact are chained via prevLSNs):
00: begin_checkpoint
05: end_checkpoint
10: update: T1 writes P5
20: update: T2 writes P3
30: T1 abort
40: CLR: Undo T1 LSN 10
45: T1 End
50: update: T3 writes P1
60: update: T2 writes P5
CRASH, RESTART

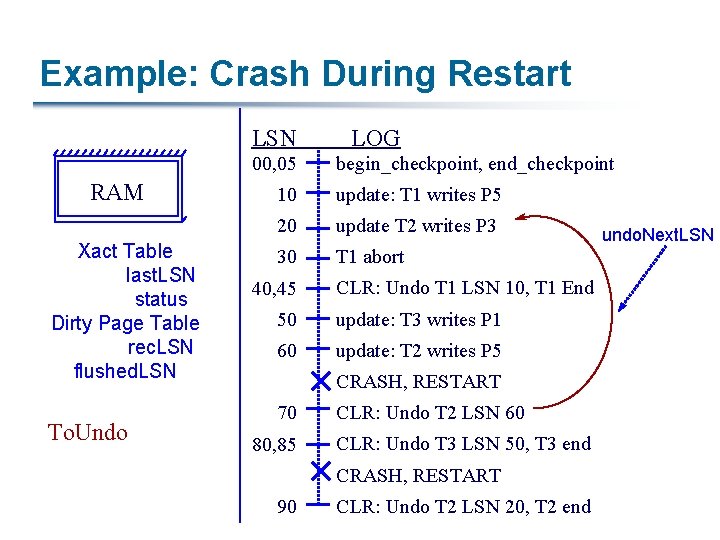

Example: Crash During Restart
RAM: Xact Table (lastLSN, status); Dirty Page Table (recLSN); flushedLSN; ToUndo
LOG (LSN: record; CLRs carry an undoNextLSN):
00, 05: begin_checkpoint, end_checkpoint
10: update: T1 writes P5
20: update: T2 writes P3
30: T1 abort
40, 45: CLR: Undo T1 LSN 10, T1 End
50: update: T3 writes P1
60: update: T2 writes P5
CRASH, RESTART
70: CLR: Undo T2 LSN 60
80, 85: CLR: Undo T3 LSN 50, T3 end
CRASH, RESTART
90: CLR: Undo T2 LSN 20, T2 end

Additional Crash Issues What happens if system crashes during Analysis? How do you limit the amount of work in REDO? § Flush asynchronously in the background § Watch “hot spots”! How do you limit the amount of work in UNDO? § Avoid long-running Xacts

Summary of Logging/Recovery § Recovery Manager guarantees Atomicity & Durability § Use WAL to allow STEAL/NO-FORCE w/o sacrificing correctness § LSNs identify log records; linked into backwards chains per transaction (via prevLSN) § pageLSN allows comparison of data page and log records

Summary, Continued § Checkpointing: A quick way to limit the amount of log to scan on recovery. § Recovery works in 3 phases: § Analysis: Forward from checkpoint § Redo: Forward from oldest recLSN § Undo: Backward from end to first LSN of oldest Xact alive at crash § Upon Undo, write CLRs § Redo “repeats history”: Simplifies the logic!

Distributed Databases § Goal: provide an abstraction of a single database, but with the data distributed on different sites § Pose a new set of challenges: § Source tables (or subsets of tables) may be located on different machines § There is a data transfer cost over the network § Different CPUs have different amounts of resources § Available resources change during optimization and at run time § Today: § R*: the first “real” distributed DBMS prototype (Distributed INGRES never actually ran); focus was a LAN, 10-12 sites § Mariposa: an attempt to distribute across the wide area

Issues in Distributed Databases § How do we place the data? § R*: this is done by humans § Mariposa: this is done via economic model § What new capabilities do we have? § R*: SHIP, 2-phase dependent join, bloomjoin, … § Mariposa: ship processing to another node § Challenges in optimization § R*: more complex cost model, more exec. options § Mariposa: bidding on computation and other resources

System-R* Optimization § Focus: distributed joins 1. Can ship a table and then join it (“ship whole”) 2. Can probe the inner table and return matches (“fetch matches”) § Their measurements favored #1. Why? § Why do they require: § Cardinality of outer < ½ # messages required to ship inner § Join cardinality < inner cardinality § How can the 2nd case be improved, in the spirit of block NLJ?

Parallelism in Joins § They assert it’s better to ship from outer relation to site of inner relation because of potential for parallelism § Where? § What changes if the inner relation is horizontally partitioned across multiple sites (i.e., relations are “striped”)? § They can also exploit the possibility of parallelism in sorting for a merge join. How?

Other Joins § Ship the inner relation and then index it § Two-phase semijoin § Very similar to “fetch matches” § Take S, T, sort them and remove duplicates § Ship to opposites, use to fetch tuples that match § Merge-join the matching tuples § Bloomjoin § Generate a Bloom filter from S § Send to site of T, find matches § Return to S, join
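The three bloomjoin steps can be sketched on a single machine, with the "sites" reduced to comments. Everything here is an illustrative assumption rather than R*'s implementation: the function names, the SHA-256-based hashing, the integer bitmask used as the filter, and the `(key, value)` row layout.

```python
import hashlib


def _bit_positions(key, m, k):
    """k bit positions in an m-bit filter, derived from salted SHA-256 digests."""
    for i in range(k):
        digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
        yield int.from_bytes(digest[:8], "big") % m


def bloomjoin(s_rows, t_rows, m=256, k=3):
    """Join rows of S and T, each a list of (key, value) pairs, on key."""
    # Site of S: generate a Bloom filter over S's join keys.
    filt = 0
    for key, _ in s_rows:
        for p in _bit_positions(key, m, k):
            filt |= 1 << p

    # Site of T: only the filter was shipped; keep tuples that pass it.
    candidates = [(key, val) for key, val in t_rows
                  if all((filt >> p) & 1 for p in _bit_positions(key, m, k))]

    # Back at S: the real join discards any false positives.
    s_index = {}
    for key, val in s_rows:
        s_index.setdefault(key, []).append(val)
    return [(key, sv, tv) for key, tv in candidates for sv in s_index.get(key, [])]
```

Only the m-bit filter and the surviving T tuples cross the network, which is the saving relative to shipping T whole. False positives cost extra shipped tuples but never produce wrong results, since the final join compares actual keys.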

Bloom Filters (Bloom 1970) § Use k hash functions and an m-bit vector § For each tuple, hash its key x with each function h_i and set bit h_i(x) in the bit vector § Probe the Bloom filter for x’ by testing whether all h_i(x’) are set § After n values have been inserted, a given bit is still 0 with probability $(1 - 1/m)^{kn}$, so the probability of a false positive is approximately $\left(1 - (1 - 1/m)^{kn}\right)^{k}$ [Figure: an m-bit vector in which each of h_1 through h_4 sets one bit to 1]
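A small Bloom filter along the lines of the slide, as a sketch. The class and method names are made up for the example, and deriving the k hash functions from salted SHA-256 digests is just one convenient choice. `false_positive_rate` evaluates the standard estimate $\left(1 - (1 - 1/m)^{kn}\right)^{k}$, where $(1 - 1/m)^{kn}$ is the chance that a particular bit is still 0 after n insertions.

```python
import hashlib


class BloomFilter:
    def __init__(self, m, k):
        self.m, self.k = m, k            # m bits, k hash functions
        self.bits = [False] * m

    def _positions(self, key):
        # Assumption: hash function i is SHA-256 salted with i.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add(self, key):
        for p in self._positions(key):
            self.bits[p] = True

    def might_contain(self, key):
        # False means definitely absent; True means present or a false positive.
        return all(self.bits[p] for p in self._positions(key))


def false_positive_rate(m, k, n):
    """Estimated false-positive probability after n insertions."""
    return (1 - (1 - 1 / m) ** (k * n)) ** k
```

For example, `false_positive_rate(1024, 4, 50)` comes out at roughly 0.001, so a 1024-bit filter holding 50 keys wrongly admits about one probe in a thousand.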

Joins – “High-Speed” Network [Figure; series labels: Semijoin, R* + Temp Indices, R* (Distributed), R* (Local), Bloomjoin]

R* Optimization Assessment § Distributed optimization is hard! § They ignore load-balance issues, and messaging overhead is probably ~10%, but still… § Shipping costs are difficult to assess, since they depend on precise cardinality results § Optimizing the plan locally, and using that as a model for distributed processing, doesn’t provide any optimality guarantees either, since it doesn’t account for parallelism

Updates in R* Require Two-Phase Commit (2PC) § Site at which a transaction originates is the coordinator; other sites at which it executes are subordinates § Two rounds of communication, initiated by coordinator: § Voting: coordinator sends prepare messages, waits for yes or no votes § Then, decision or termination: coordinator sends commit or rollback messages, waits for acks § Any site can decide to abort a transaction!

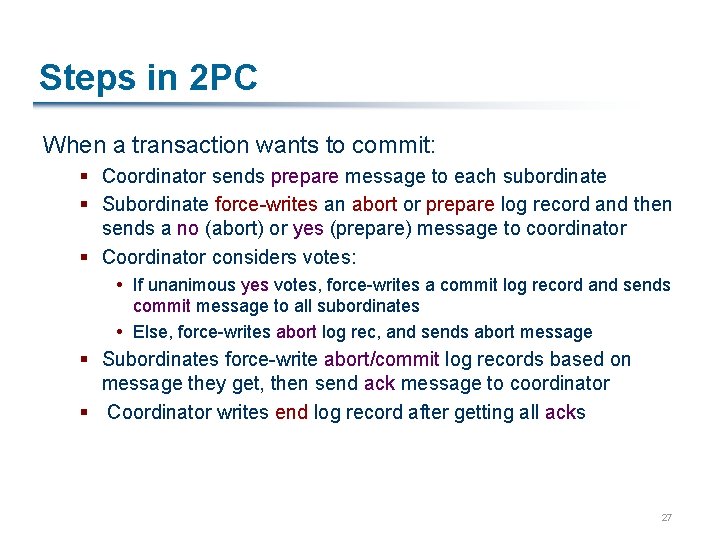

Steps in 2PC When a transaction wants to commit: § Coordinator sends prepare message to each subordinate § Subordinate force-writes an abort or prepare log record and then sends a no (abort) or yes (prepare) message to coordinator § Coordinator considers votes: if unanimous yes votes, force-writes a commit log record and sends commit message to all subordinates; else, force-writes abort log rec, and sends abort message § Subordinates force-write abort/commit log records based on message they get, then send ack message to coordinator § Coordinator writes end log record after getting all acks
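The message and logging order in these steps can be simulated in a single process. This is a sketch under simplifying assumptions: no failures or timeouts, method calls standing in for messages, and plain lists standing in for force-written logs. The `Subordinate` class and `two_phase_commit` function are names invented for the example.

```python
class Subordinate:
    def __init__(self, name, will_commit=True):
        self.name = name
        self.will_commit = will_commit
        self.log = []

    def on_prepare(self):
        # Force-write the vote to the local log before sending it.
        self.log.append("prepare" if self.will_commit else "abort")
        return "yes" if self.will_commit else "no"

    def on_decision(self, decision):
        # A "no" voter has already logged its abort; others log the outcome.
        if self.log[-1] != decision:
            self.log.append(decision)
        return "ack"


def two_phase_commit(coordinator_log, subordinates):
    # Round 1 (voting): send prepare, collect yes/no votes.
    votes = [s.on_prepare() for s in subordinates]
    decision = "commit" if all(v == "yes" for v in votes) else "abort"
    coordinator_log.append(decision)     # force-written before any send
    # Round 2 (decision): send the outcome, collect acks.
    acks = [s.on_decision(decision) for s in subordinates]
    if all(a == "ack" for a in acks):
        coordinator_log.append("end")    # the Xact can now be forgotten
    return decision
```

A single "no" vote is enough to turn the outcome into an abort, which is how any site can veto the transaction.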

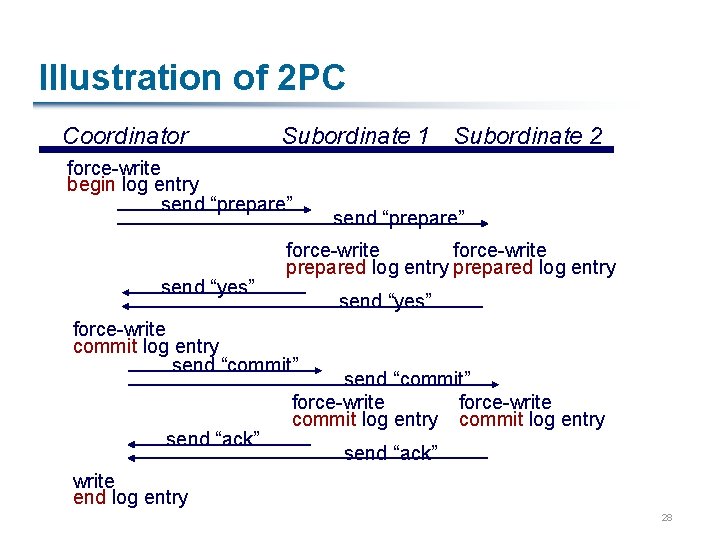

Illustration of 2PC
Coordinator: force-write begin log entry; send “prepare” to each subordinate; after the “yes” votes, force-write commit log entry; send “commit” to each subordinate; after the “ack”s, write end log entry
Subordinate 1, Subordinate 2 (each): on “prepare”, force-write prepared log entry; send “yes”; on “commit”, force-write commit log entry; send “ack”

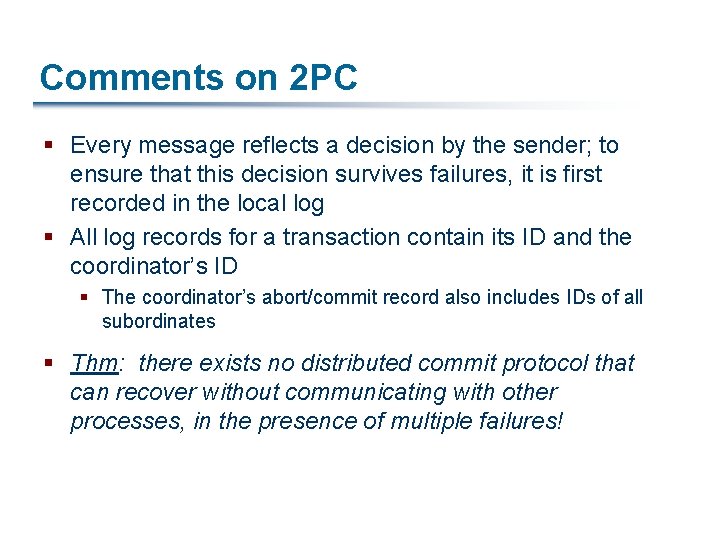

Comments on 2PC § Every message reflects a decision by the sender; to ensure that this decision survives failures, it is first recorded in the local log § All log records for a transaction contain its ID and the coordinator’s ID § The coordinator’s abort/commit record also includes IDs of all subordinates § Thm: there exists no distributed commit protocol that can recover without communicating with other processes, in the presence of multiple failures!

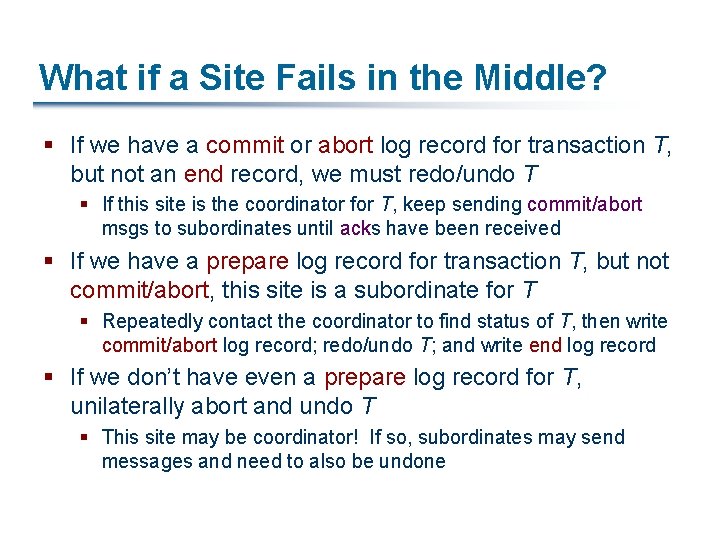

What if a Site Fails in the Middle? § If we have a commit or abort log record for transaction T, but not an end record, we must redo/undo T § If this site is the coordinator for T, keep sending commit/abort msgs to subordinates until acks have been received § If we have a prepare log record for transaction T, but not commit/abort, this site is a subordinate for T § Repeatedly contact the coordinator to find status of T, then write commit/abort log record; redo/undo T; and write end log record § If we don’t have even a prepare log record for T, unilaterally abort and undo T § This site may be coordinator! If so, subordinates may send messages and need to also be undone
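The restart rules above amount to a decision table keyed on which log records a site finds for T. The sketch below encodes that table; the function name and the returned strings are purely illustrative.

```python
def restart_action(record_types, is_coordinator):
    """What a restarting site does for transaction T.

    record_types: the kinds of log records found for T at this site,
                  drawn from {"prepare", "commit", "abort", "end"}.
    """
    kinds = set(record_types)
    if "end" in kinds:
        return "nothing to do"
    if "commit" in kinds or "abort" in kinds:
        outcome = "commit" if "commit" in kinds else "abort"
        action = "redo" if outcome == "commit" else "undo"
        if is_coordinator:
            return f"{action}; resend {outcome} until all acks arrive"
        return action
    if "prepare" in kinds:
        # Only a subordinate writes prepare records; it cannot decide alone.
        return "ask coordinator for outcome, then commit or abort"
    return "abort unilaterally and undo"
```

The only case in which the site cannot make progress by itself is the prepared-but-undecided subordinate, which must contact the coordinator.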

Blocking for the Coordinator § If coordinator for transaction T fails, subordinates who have voted yes cannot decide whether to commit or abort T until coordinator recovers § T is blocked § Even if all subordinates know each other (extra overhead in prepare msg) they are blocked unless one of them voted no

Link and Remote Site Failures § If a remote site does not respond during the commit protocol for transaction T, either because the site failed or the link failed: § If the current site is the coordinator for T, should abort T § If the current site is a subordinate, and has not yet voted yes, it should abort T § If the current site is a subordinate and has voted yes, it is blocked until the coordinator responds!

Observations on 2PC § Ack msgs used to let coordinator know when it’s done with a transaction; until it receives all acks, it must keep T in the transaction-pending table § If the coordinator fails after sending prepare msgs but before writing commit/abort log recs, when it comes back up it aborts the transaction

R* Wrap-Up § One of the first systems to address both distributed query processing and distributed updates § Focus was on local-area networks, small number of sites § Next system, Mariposa, focuses on environments more like the Internet…
