Are Multiple-Choice Items about to Go Extinct?
10/28/2010 (updated 11/19/2010) © David E. W. Mott, ROSworks

Are Multiple-Choice Items about to Go Extinct?
David E. W. Mott
Tests for Higher Standards
ROSworks LLC
Presented at the 2010 Virginia Association of Test Directors Conference, Koger Center, Richmond, VA, October 28, 2010

About Me (so you know where I’m coming from)

- Was at the VA DOE for 17+ years, in what is now called Assessment and Reporting.
  - Supervisor of Test Development (and psychometrics: test equating, etc.)
  - Administrator of the VSAP (SRA, ITBS, SAT-9)
- Got together with Stuart Flanagan to do SOL Math tests, then English, Science, and H/SS.
- Developed ROS so that teachers didn’t have to hand-score tests.
- Have been working with, on, for, and against the SOLs for 30+ years. Amazing!

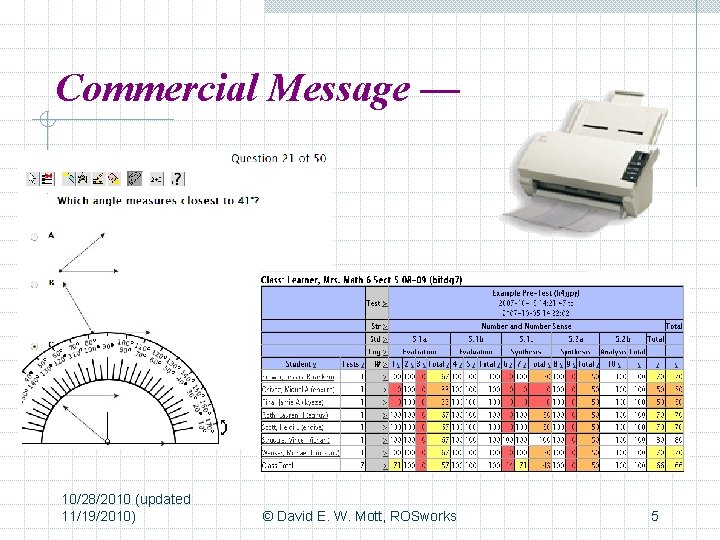

Commercial Message: Helping schools put the pieces of the assessment puzzle together.

Session Abstract

Are Multiple-Choice Items about to Go Extinct?

Multiple-choice items have had their day in the sun. It may be time to put them out to pasture in favor of a variety of more “authentic” assessments. Indeed, that is the message we hear in everything from official pronouncements by U.S. Secretary of Education Arne Duncan on down to comments on local education blogs. Mostly, the subtext is, “And good riddance!” However, to paraphrase Mark Twain, “The reports of the death of the multiple-choice item are greatly exaggerated.” We will cover the topic from bottom to top: the bad and the good, and the past, present, and future of our plucky protagonist.

Prolog: Always remember the WYTIWYG Principle

What You Test Is What You Get.

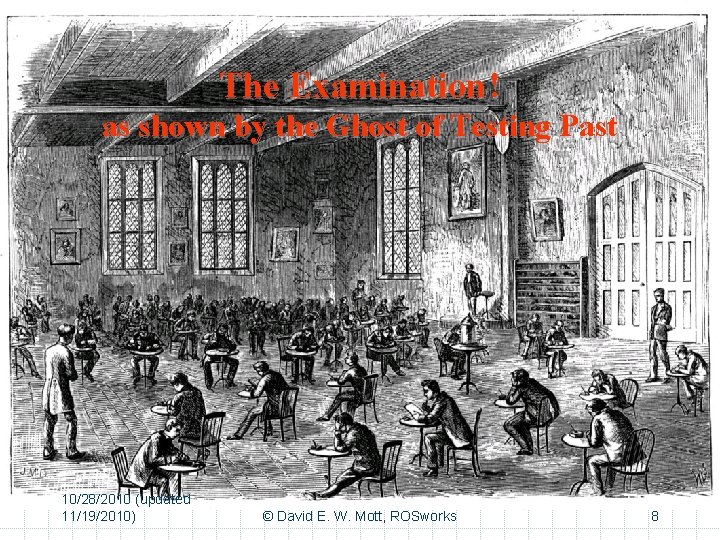

The Examination! (as shown by the Ghost of Testing Past)

A large room; examinees (seated apart), some looking desperate; invigilators; an hourglass; closed doors.

So what is going on nationally?

Why are Multiple-Choice items BAD – 1?

- They can test only low-level facts.
- Multiple-choice items are unlike real life.
- They can’t handle situations that have shades-of-grey answers.
- They are not useful for assessing critical or higher-order thinking or the ability to apply knowledge or to solve problems.
- Multiple-choice items cannot measure the ability to write.
- Students can work backwards to guess the correct answer.
- Students have a hard time with multiple-choice items.

Why are Multiple-Choice items BAD – 2?

- They cannot measure the process(es) by which the student came to the correct answer.
- They encourage the measurement of what is easy to measure in the multiple-choice format over what is not.
- Students tend to guess if they don’t know the correct answer.
- They perpetuate the myth that there is usually one correct answer.
- One question can give away the answer to another.
- The curriculum can be narrowed because only what is testable in a multiple-choice format gets taught.

Why are Multiple-Choice items BAD – 3?

- The State uses them in testing, so they must be bad.
- They do not measure instructionally sensitive material.
- Students often misinterpret multiple-choice items.
- Multiple-choice items allow only a single answer.
- Students respond impulsively to multiple-choice items.
- Multiple-choice items tend to trick students.
- It is difficult to write good multiple-choice items.
- Multiple-choice items are on tests that harm students and teachers.
- Big, powerful testing companies use multiple-choice items, so they must be bad.
- Some more issues:

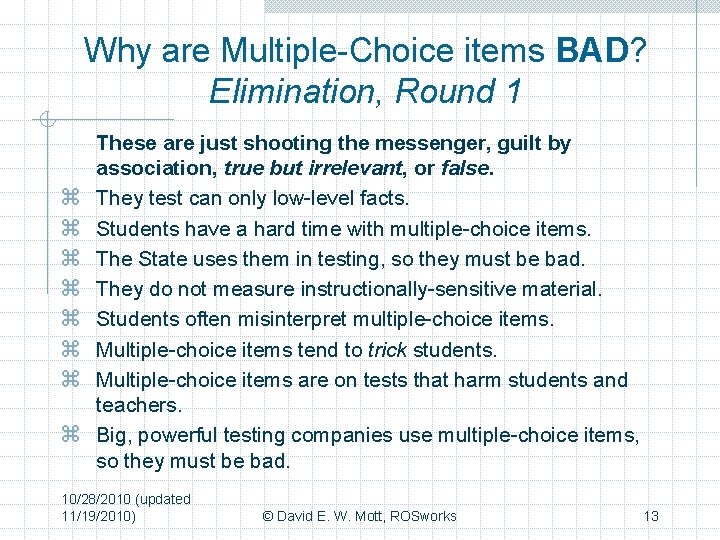

Why are Multiple-Choice items BAD? Elimination, Round 1

These are just shooting the messenger, guilt by association, true but irrelevant, or false:

- They can test only low-level facts.
- Students have a hard time with multiple-choice items.
- The State uses them in testing, so they must be bad.
- They do not measure instructionally sensitive material.
- Students often misinterpret multiple-choice items.
- Multiple-choice items tend to trick students.
- Multiple-choice items are on tests that harm students and teachers.
- Big, powerful testing companies use multiple-choice items, so they must be bad.

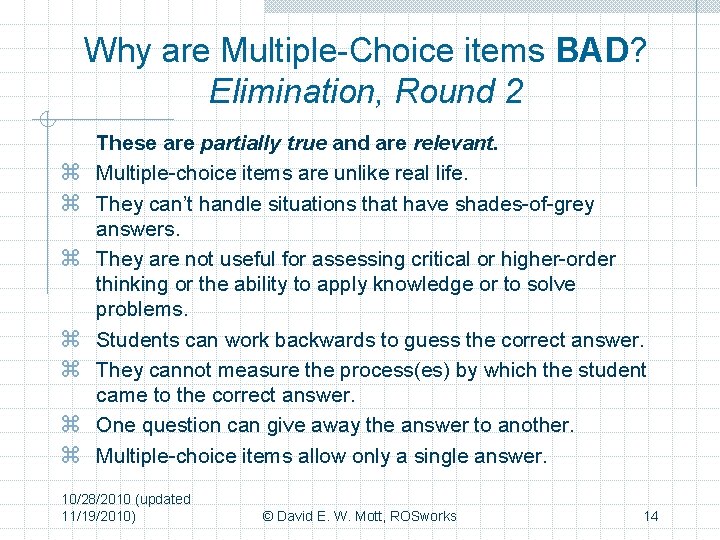

Why are Multiple-Choice items BAD? Elimination, Round 2

These are partially true and are relevant:

- Multiple-choice items are unlike real life.
- They can’t handle situations that have shades-of-grey answers.
- They are not useful for assessing critical or higher-order thinking or the ability to apply knowledge or to solve problems.
- Students can work backwards to guess the correct answer.
- They cannot measure the process(es) by which the student came to the correct answer.
- One question can give away the answer to another.
- Multiple-choice items allow only a single answer.

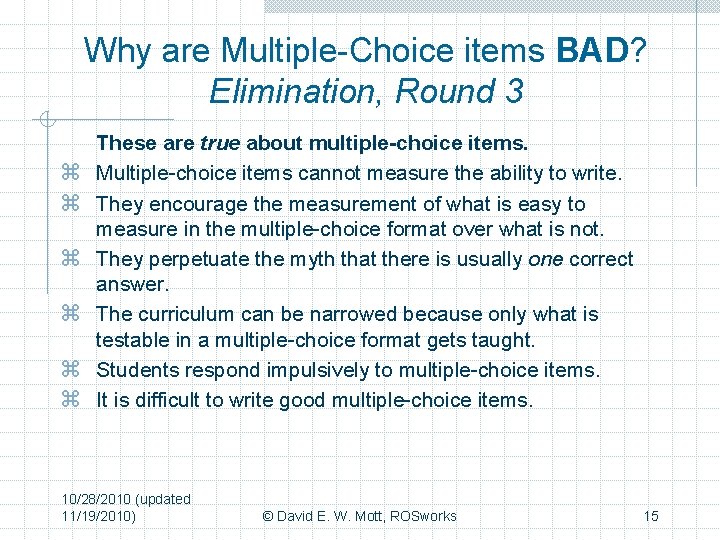

Why are Multiple-Choice items BAD? Elimination, Round 3

These are true about multiple-choice items:

- Multiple-choice items cannot measure the ability to write.
- They encourage the measurement of what is easy to measure in the multiple-choice format over what is not.
- They perpetuate the myth that there is usually one correct answer.
- The curriculum can be narrowed because only what is testable in a multiple-choice format gets taught.
- Students respond impulsively to multiple-choice items.
- It is difficult to write good multiple-choice items.

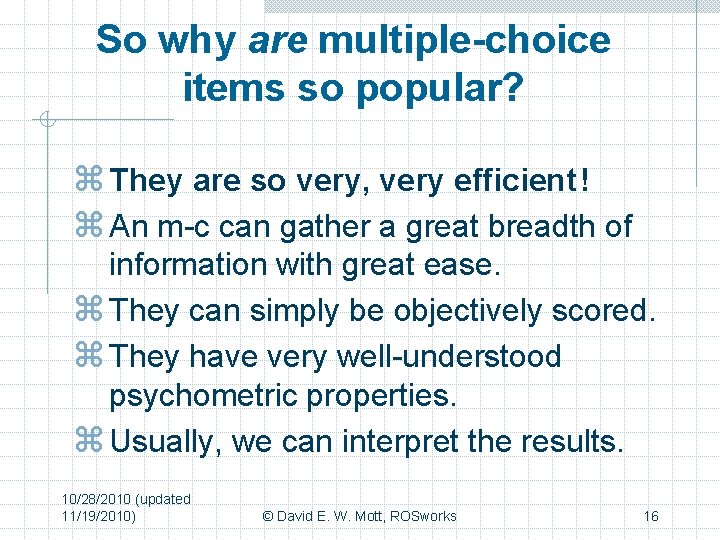

So why are multiple-choice items so popular?

- They are so very, very efficient!
- A multiple-choice test can gather a great breadth of information with great ease.
- They can be scored simply and objectively.
- They have very well-understood psychometric properties.
- Usually, we can interpret the results.

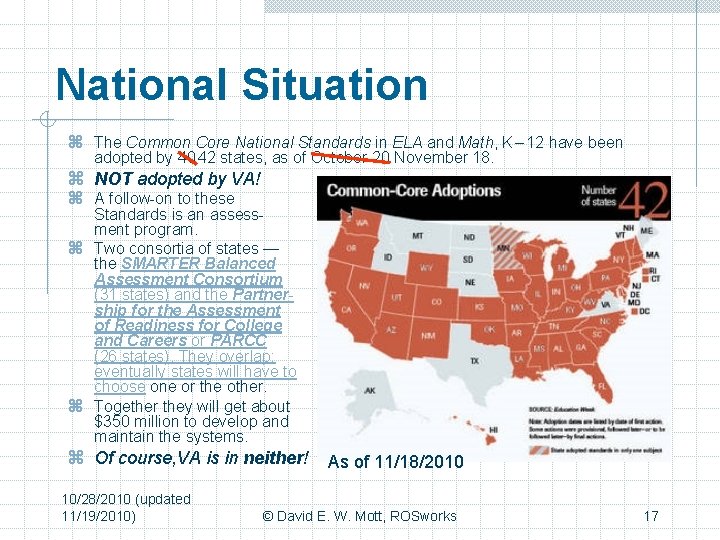

National Situation (as of 11/18/2010)

- The Common Core State Standards (CCSS) in ELA and Math, K–12, have been adopted by 42 states as of November 18 (up from 40 as of October 20).
- NOT adopted by VA!
- A follow-on to these Standards is an assessment program.
- Two consortia of states are developing it: the SMARTER Balanced Assessment Consortium (31 states) and the Partnership for the Assessment of Readiness for College and Careers, or PARCC (26 states). Their memberships overlap; eventually states will have to choose one or the other.
- Together they will get about $350 million to develop and maintain the systems.
- Of course, VA is in neither!

National Situation – continued

- The SMARTER Balanced Assessment Consortium (SMARTER) [Fiscal agent: State of Washington]
  - A high-quality, balanced multi-state assessment system
  - Options for customizable system components while also ensuring comparability of high-stakes summative test results across States
  - A variety of item types and performance events to measure the full range of the CCSS and to ensure accurate assessment of all students, including students with disabilities, English learners, and both low- and high-performing students

SMARTER – Details 1

- Summative Tests: Common CCSS-based, computer-adaptive summative assessments that make use of technology-enhanced item types and teacher-developed and teacher-scored performance events
- Interim/Benchmark Assessments: Computer-adaptive, reflecting learning progressions or content clusters
- Classroom Formative Assessments: Research-supported, instructionally sensitive tools, processes, and practices developed by State educators to improve teaching and increase learning

SMARTER – Details 2

- Teacher support: Focused, ongoing support to teachers through professional development opportunities and exemplary instructional materials linked to the CCSS
- An online reporting and tracking system: To provide information about student progress toward college- and career-readiness and about strengths and limitations in what students know and can do at each grade level
- Cross-State communications network: To inform stakeholders about SMARTER activities and ensure a common focus on college- and career-readiness for all

SMARTER – Summary

- High-tech computer-adaptive testing with innovative, complex item types
- High-tech collaboration for assessment development, scoring, and results sharing
- High-tech classroom support systems
- High-tech shared databases to store, crunch, and report assessment results, including longitudinal information

National Situation – continued 2

- The Partnership for the Assessment of Readiness for College and Careers (PARCC) [Fiscal agent: State of Florida]
  - A comprehensively designed assessment system
  - Strategic use of selected-response items, constructed-response items, technology-enhanced items, and performance events with an emphasis on problem solving, analysis, synthesis, and critical thinking (higher-order thinking skills, or HOTS)
  - Providing accurate assessment of all students, including students with disabilities, English learners, and low- and high-performing students

PARCC – Details 1

- Summative Tests: The end-of-year assessments (after 9/10 of instruction) in ELA and Math will be computer-enhanced and will incorporate computer-scorable items to assess higher-order skills.
- Interim/Benchmark Assessments: In ELA and Math (grades 3–8 and end-of-course), students will take focused assessments after 1/4 and 1/2 of instructional time, then an extended and engaging performance-based task at the 3/4 point. In ELA/literacy, students will identify or read relevant research materials, compose written essays, publicly present the results, and answer questions or debate, so teachers can assess speaking and listening skills.
- Results: All back in time for inclusion on report cards.

National Situation Summary

- The SMARTER Balanced Assessment Consortium (SBAC) supports the development and implementation of learning and assessment systems to radically reshape the education enterprise in participating States in order to improve student outcomes.
- Goal: Fully implement the summative assessments in grades 3–8 and high school by the 2014–15 school year.

Humor

Time flies like an arrow; fruit flies like a banana.

So, why worry!

Virginia has so far declined to adopt the Common Standards and is not participating in either of the two assessment consortia. Thus, Virginia educators should not be affected by the Common Standards or the assessments.

Alfred E. Neuman

So, why worry!

Not true! Virginia will have to appear to be at least as innovative and forward-looking as the participating states.

So, why worry!

Please, some discussion.

A look at the almost-present

- The Virginia Grade Level Alternative (VGLA) has been in use in Virginia for several years as an alternate SOL assessment for students who cannot take the regular SOL tests even with accommodations.
- The VGLA has been a low-tech assessment.
- This is about to change:

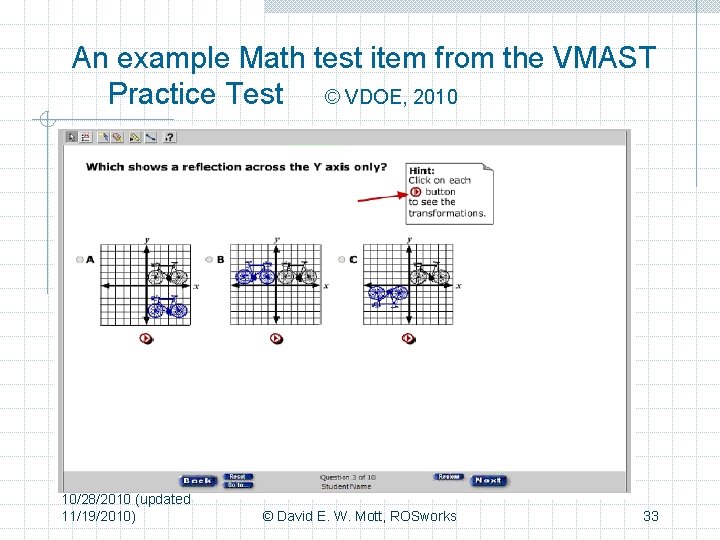

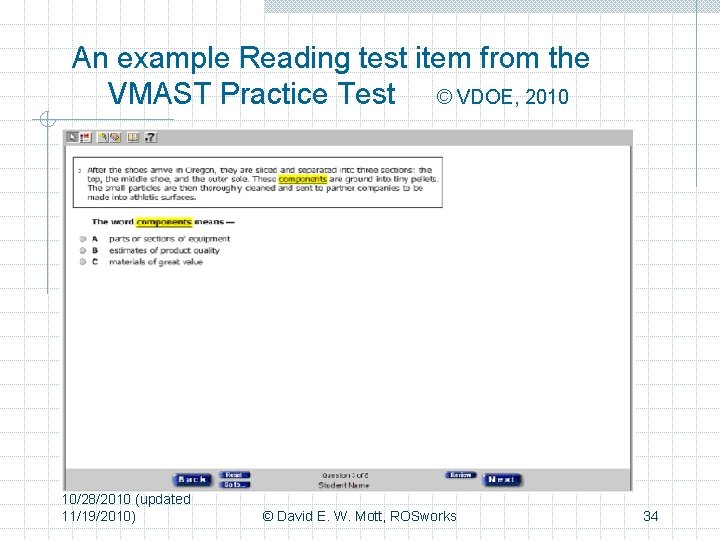

The VMAST program

- The Virginia Modified Achievement Standards Test in math for grades 3–8 and Algebra I will be introduced during 2011–2012. Reading assessments will be introduced the following year.
- Although these tests are designed for special populations, we can look at them to see how some future enhanced tests may look.

An example math test item from the VMAST Practice Test (© VDOE, 2010)

An example reading test item from the VMAST Practice Test (© VDOE, 2010)

At a recent testing conference

- A paper was presented about an artificial-world science assessment.
- An avatar representing a student is placed in an artificial world containing a lake with an overgrowth of algae.
- The avatar has access to various scientific instruments (pH and dissolved-oxygen meters, etc.).
- The task is to discover why the algae are growing by performing the necessary experiments.

Two examples of what can go wrong with technology

- The first involves the batteries in four-function calculators.
- The second involves the Wyoming online testing program, with Pearson as the testing company.

So what does this mean for YOU?

- More collaboration for you, as the central staffs have neither the expertise nor the personnel to do it all.
- More money needed for technology.
- Ubiquitous computers.
- Many disruptions because of technology breakdowns, many of which you will be expected to “fix”.

Final Discussion

Questions, comments, requests for copies, etc.

David E. W. Mott, Ph.D.
Tests for Higher Standards / ROSworks, LLC
866-724-9722, 804-282-3111
dem@rosworks.com
or go to the blog: www.thoughtsonassessment.com
