Apriori Algorithm: A Candidate Generation and Test Approach

- Slides: 12

Apriori Algorithm

Apriori: A Candidate Generation & Test Approach

- Apriori pruning principle: if any itemset is infrequent, none of its supersets should be generated or tested (Agrawal & Srikant @ VLDB '94; Mannila et al. @ KDD '94).
- Method:
  - Initially, scan the DB once to get the frequent 1-itemsets.
  - Generate length-(k+1) candidate itemsets from the length-k frequent itemsets.
  - Test the candidates against the DB.
  - Terminate when no frequent or candidate set can be generated.

Steps In Apriori

The Apriori algorithm is a sequence of steps for finding all frequent itemsets in a given database. It applies the join and prune steps iteratively until no further frequent itemsets can be found. A minimum support threshold is given in the problem or chosen by the user.

#1) In the first iteration, each item is taken as a candidate 1-itemset, and the algorithm counts the occurrences of each item.

#2) Let the minimum support be min_sup (e.g. 2). The 1-itemsets whose counts satisfy min_sup are determined: only candidates with a count greater than or equal to min_sup are carried into the next iteration, and the others are pruned.

#3) Next, the frequent 2-itemsets are discovered. In the join step, the candidate 2-itemsets are generated by joining the set of frequent 1-itemsets with itself, that is, by forming every pair of frequent items.

#4) The candidate 2-itemsets are counted and pruned using the min_sup threshold, so that only 2-itemsets meeting min_sup remain.

#5) The next iteration forms 3-itemsets using the join and prune steps. It relies on the antimonotone property: every subset of a frequent itemset must itself be frequent. A candidate 3-itemset is therefore kept only if all of its 2-itemset subsets are frequent; otherwise it is pruned without being counted. The surviving candidates are then counted against the database and compared with min_sup.

#6) The following step forms 4-itemsets by joining the frequent 3-itemsets with themselves and pruning any candidate that has a 3-itemset subset not meeting min_sup. The algorithm stops when no further frequent itemsets can be generated.
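The first four steps can be sketched in a few lines of Python. This is only an illustration: the transactions, the item names and the value of `min_sup` are made up for the sketch and do not come from the slides.

```python
from collections import Counter
from itertools import combinations

# Toy transactions, invented for this illustration.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"milk", "butter", "bread"},
    {"jam"},
]
min_sup = 2  # assumed absolute support threshold

# Step 1: every item is a candidate 1-itemset; count its occurrences.
item_counts = Counter(item for t in transactions for item in t)

# Step 2: keep candidates whose count is at least min_sup, prune the rest.
frequent_1 = {item: n for item, n in item_counts.items() if n >= min_sup}
pruned_items = sorted(set(item_counts) - set(frequent_1))

# Step 3 (join): pair up the frequent items to form candidate 2-itemsets.
candidates_2 = list(combinations(sorted(frequent_1), 2))

# Step 4 (count and prune): keep the pairs that meet min_sup.
pair_counts = {
    pair: sum(1 for t in transactions if set(pair) <= t)
    for pair in candidates_2
}
frequent_2 = {pair: n for pair, n in pair_counts.items() if n >= min_sup}

print(frequent_1)
print(pruned_items)
print(frequent_2)
```

Here "jam" is dropped after the first pass, so no pair containing it is ever generated or counted.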

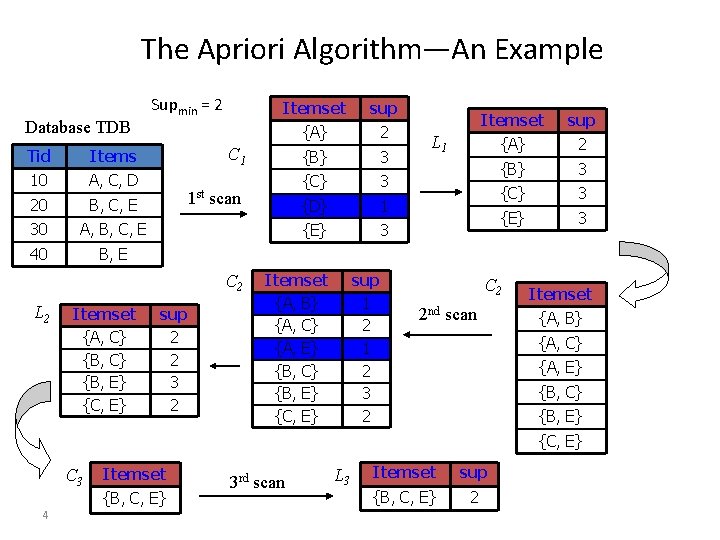

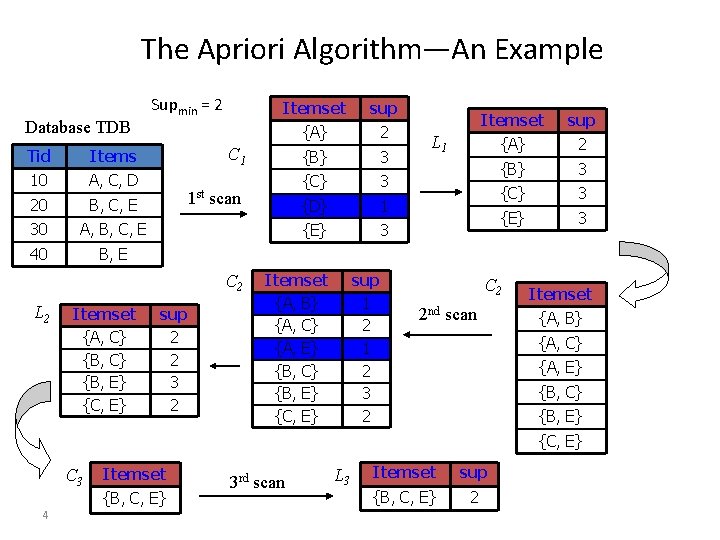

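The Apriori Algorithm: An Example

min_sup = 2

Database TDB:

| Tid | Items |
|---|---|
| 10 | A, C, D |
| 20 | B, C, E |
| 30 | A, B, C, E |
| 40 | B, E |

1st scan, C1:

| Itemset | sup |
|---|---|
| {A} | 2 |
| {B} | 3 |
| {C} | 3 |
| {D} | 1 |
| {E} | 3 |

L1:

| Itemset | sup |
|---|---|
| {A} | 2 |
| {B} | 3 |
| {C} | 3 |
| {E} | 3 |

C2 (generated from L1): {A, B}, {A, C}, {A, E}, {B, C}, {B, E}, {C, E}

2nd scan, C2 with counts:

| Itemset | sup |
|---|---|
| {A, B} | 1 |
| {A, C} | 2 |
| {A, E} | 1 |
| {B, C} | 2 |
| {B, E} | 3 |
| {C, E} | 2 |

L2:

| Itemset | sup |
|---|---|
| {A, C} | 2 |
| {B, C} | 2 |
| {B, E} | 3 |
| {C, E} | 2 |

C3 (generated from L2): {B, C, E}

3rd scan, L3:

| Itemset | sup |
|---|---|
| {B, C, E} | 2 |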

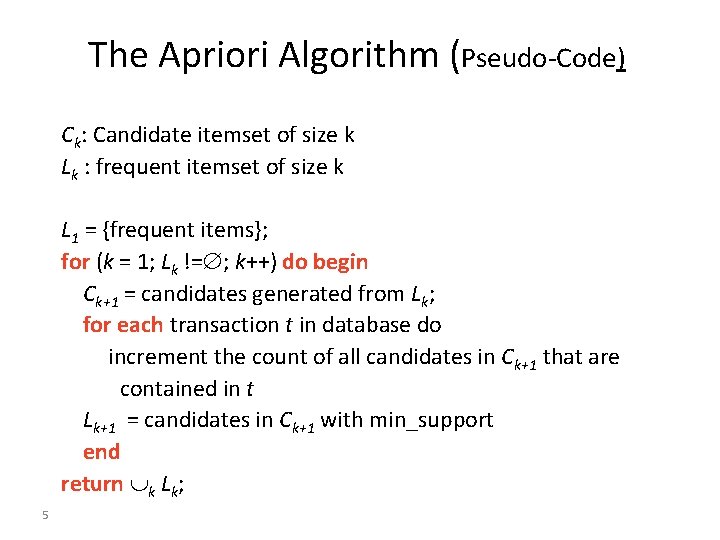

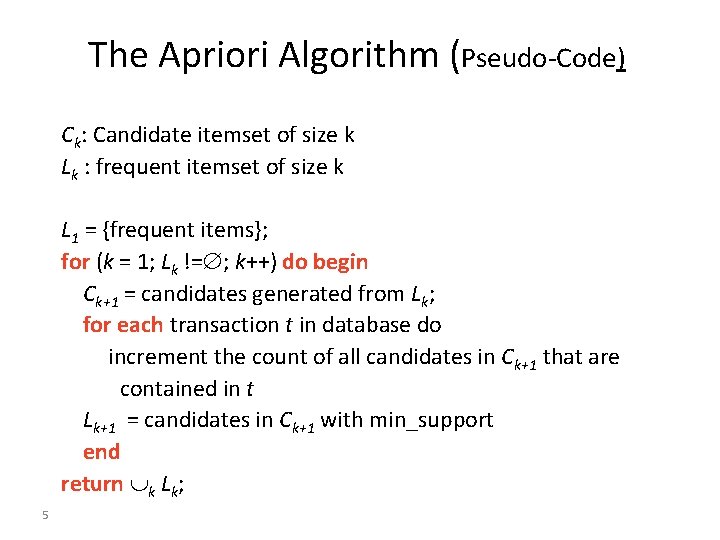

The Apriori Algorithm (Pseudo-Code)

Ck: candidate itemsets of size k

Lk: frequent itemsets of size k

    L1 = {frequent items};
    for (k = 1; Lk != ∅; k++) do begin
        Ck+1 = candidates generated from Lk;
        for each transaction t in database do
            increment the count of all candidates in Ck+1 that are contained in t
        Lk+1 = candidates in Ck+1 with min_support
    end
    return ∪k Lk;
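A minimal runnable sketch of this loop in Python follows. The function name `apriori` and the representation of itemsets as `frozenset` objects are choices made for this sketch, not something the slides prescribe. Candidates are formed here by taking unions of two frequent k-itemsets, a simple variant of the join step, and counting is a plain scan rather than a hash tree.

```python
from itertools import combinations

def apriori(transactions, min_sup):
    """Return {itemset: support count} for every frequent itemset."""
    transactions = [frozenset(t) for t in transactions]

    # L1: count single items and keep those meeting min_sup.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    current = {s: n for s, n in counts.items() if n >= min_sup}

    frequent = {}
    k = 1
    while current:  # loop until Lk is empty
        frequent.update(current)
        # C(k+1): unions of two frequent k-itemsets that have size k+1
        # and whose k-subsets are all frequent (Apriori pruning).
        candidates = set()
        for a, b in combinations(current, 2):
            union = a | b
            if len(union) == k + 1 and all(
                frozenset(s) in current for s in combinations(union, k)
            ):
                candidates.add(union)
        # Scan the database, counting each candidate contained in t.
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        # L(k+1): candidates meeting min_sup.
        current = {c: n for c, n in counts.items() if n >= min_sup}
        k += 1
    return frequent

# The TDB database from the example slide, with min_sup = 2.
tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
result = apriori(tdb, min_sup=2)
for itemset, sup in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), sup)
```

On the TDB example this yields four frequent 1-itemsets, four frequent 2-itemsets and the single frequent 3-itemset {B, C, E} with support 2.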

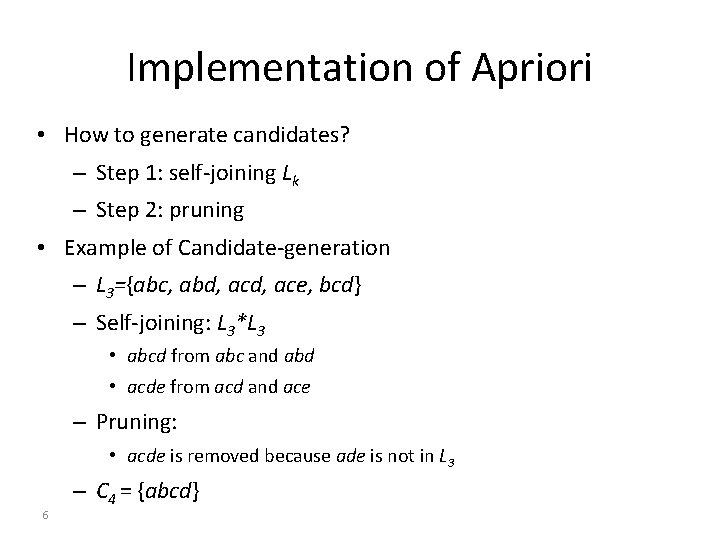

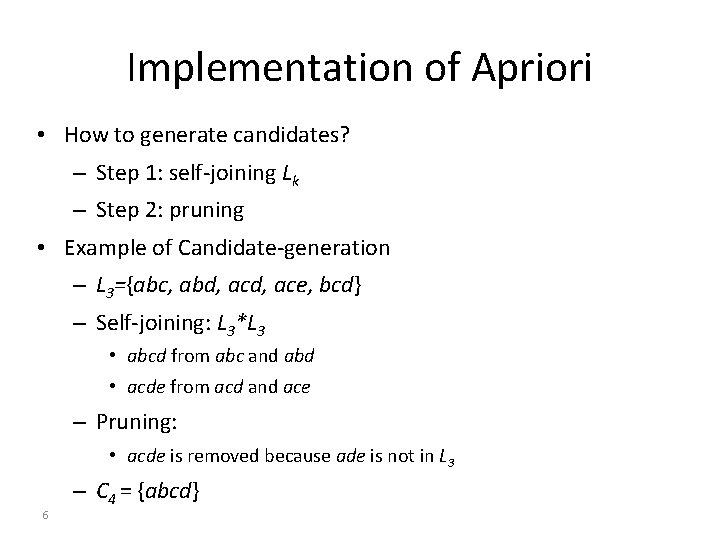

Implementation of Apriori

- How to generate candidates?
  - Step 1: self-joining Lk
  - Step 2: pruning
- Example of candidate generation
  - L3 = {abc, abd, acd, ace, bcd}
  - Self-joining: L3 * L3
    - abcd from abc and abd
    - acde from acd and ace
  - Pruning:
    - acde is removed because ade is not in L3
  - C4 = {abcd}
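The join and prune steps can be sketched as follows, taking L3 = {abc, abd, acd, ace, bcd} as input. The function name `generate_candidates` and the use of sorted tuples for itemsets are assumptions of this sketch.

```python
from itertools import combinations

def generate_candidates(frequent_k):
    """Build C(k+1) from L(k); each itemset is a sorted tuple of items."""
    frequent = set(frequent_k)
    ordered = sorted(frequent)
    k = len(ordered[0]) if ordered else 0

    # Step 1, self-join: merge two itemsets that share their first k-1 items.
    joined = []
    for i, p in enumerate(ordered):
        for q in ordered[i + 1:]:
            if p[:-1] == q[:-1]:
                joined.append(p + (q[-1],))

    # Step 2, prune: drop any candidate that has an infrequent k-subset.
    kept = [c for c in joined
            if all(s in frequent for s in combinations(c, k))]
    return joined, kept

L3 = [tuple(s) for s in ("abc", "abd", "acd", "ace", "bcd")]
joined, C4 = generate_candidates(L3)
print(["".join(c) for c in joined])  # candidates after the self-join
print(["".join(c) for c in C4])      # candidates after pruning
```

The self-join produces abcd and acde. Pruning removes acde because its subsets ade and cde are not in L3, leaving C4 = {abcd}.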

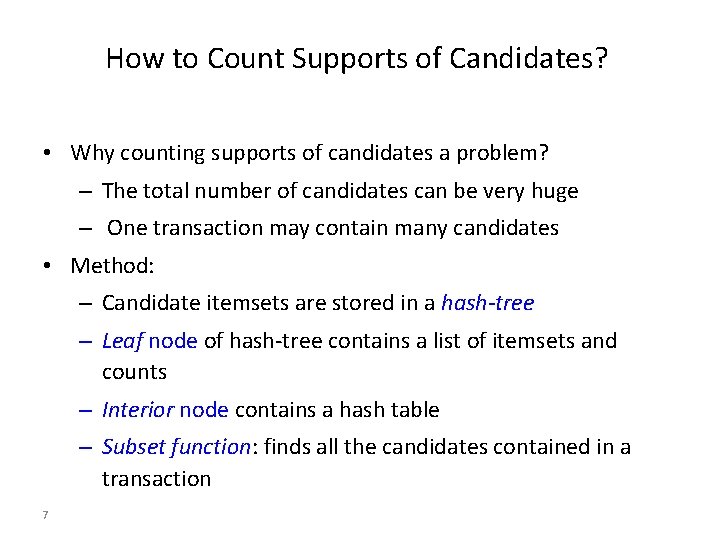

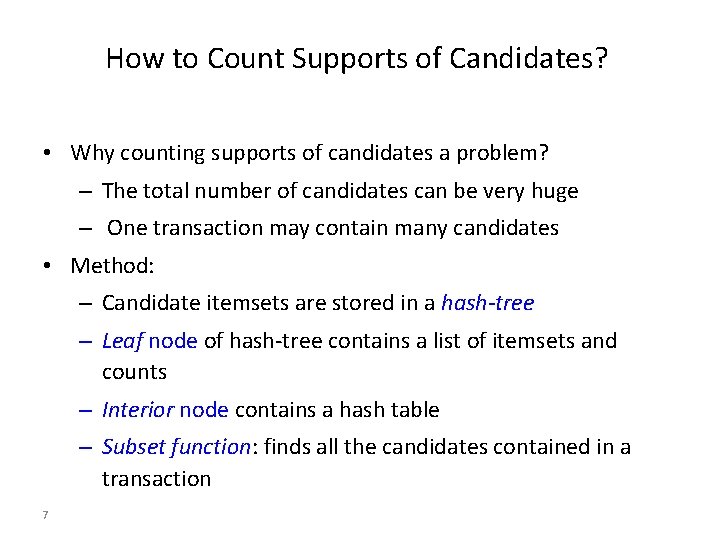

How to Count Supports of Candidates?

- Why is counting supports of candidates a problem?
  - The total number of candidates can be very large
  - One transaction may contain many candidates
- Method:
  - Candidate itemsets are stored in a hash-tree
  - A leaf node of the hash-tree contains a list of itemsets and counts
  - An interior node contains a hash table
  - Subset function: finds all the candidates contained in a transaction

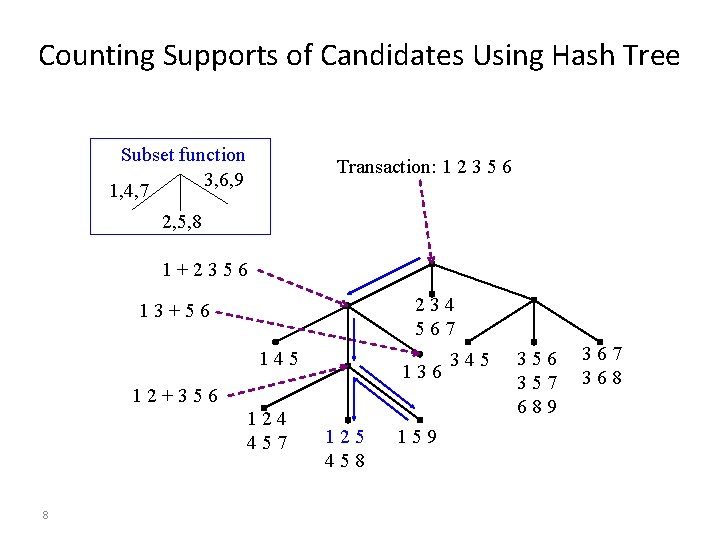

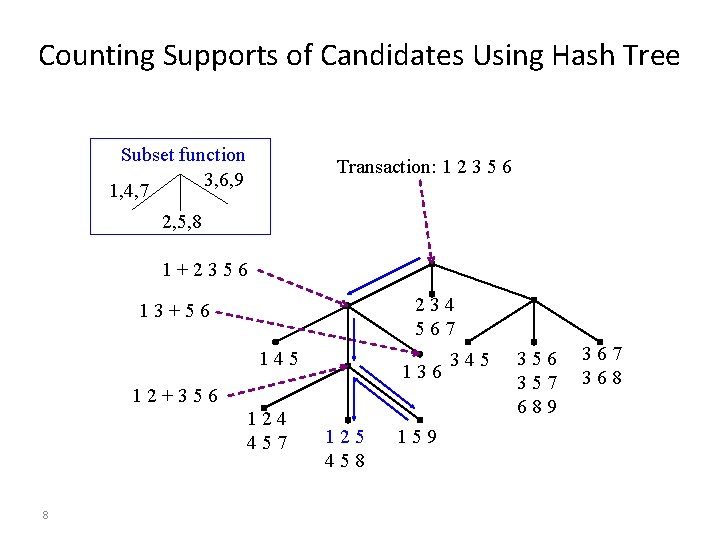

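Counting Supports of Candidates Using Hash Tree

[Figure: hash tree of candidate 3-itemsets. The hash function at each interior node has three branches, for items {1, 4, 7}, {2, 5, 8} and {3, 6, 9}. The subset function is applied to the transaction 1 2 3 5 6, with the splits 1 + 2356, 12 + 356 and 13 + 56 shown along the way. Candidates stored in the leaves: 124, 125, 136, 145, 159, 234, 345, 356, 357, 367, 368, 457, 458, 567, 689.]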
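The sketch below illustrates what the subset function computes, using the fifteen candidate 3-itemsets and the transaction 1 2 3 5 6 from this slide. Note that it does not build a hash tree: as a simpler stand-in, it stores the candidates in a flat Python dictionary (itself a hash table) and looks up every 3-subset of the transaction. The function name `count_supports` is hypothetical.

```python
from itertools import combinations

def count_supports(candidates, transactions, k):
    """Count how many transactions contain each candidate k-itemset."""
    # Flat hash table keyed by the sorted item tuple (not a hash tree).
    counts = {tuple(sorted(c)): 0 for c in candidates}
    for t in transactions:
        # Subset function: enumerate the k-subsets of t and look each one up.
        for subset in combinations(sorted(t), k):
            if subset in counts:
                counts[subset] += 1
    return counts

candidates = [
    (1, 2, 4), (1, 2, 5), (1, 3, 6), (1, 4, 5), (1, 5, 9),
    (2, 3, 4), (3, 4, 5), (3, 5, 6), (3, 5, 7), (3, 6, 7),
    (3, 6, 8), (4, 5, 7), (4, 5, 8), (5, 6, 7), (6, 8, 9),
]
transaction = {1, 2, 3, 5, 6}

counts = count_supports(candidates, [transaction], k=3)
matched = sorted(c for c, n in counts.items() if n > 0)
print(matched)
```

Three candidates are contained in the transaction: 125, 136 and 356. A hash tree reaches the same answer while visiting only the leaves the transaction can hash to, instead of enumerating every subset.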

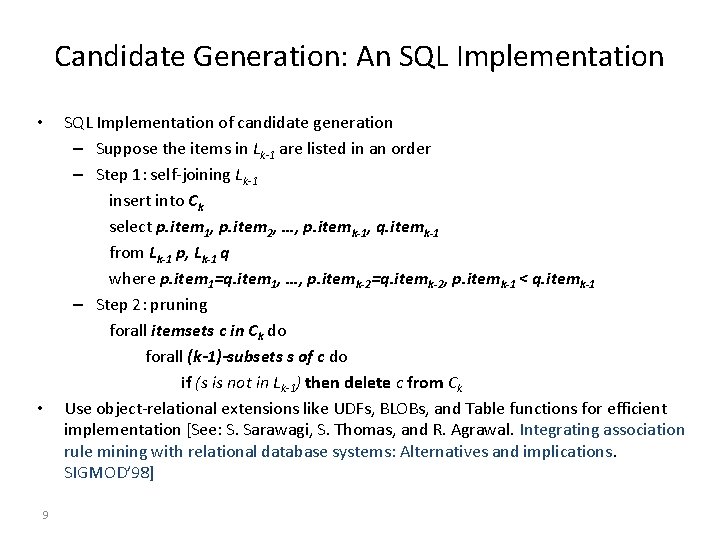

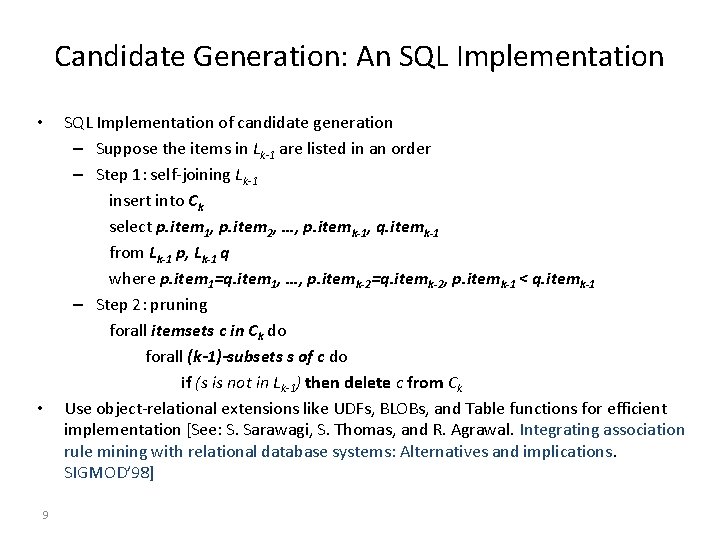

Candidate Generation: An SQL Implementation

- SQL implementation of candidate generation
  - Suppose the items in Lk-1 are listed in an order
  - Step 1: self-joining Lk-1

        insert into Ck
        select p.item1, p.item2, …, p.itemk-1, q.itemk-1
        from Lk-1 p, Lk-1 q
        where p.item1 = q.item1 and … and p.itemk-2 = q.itemk-2 and p.itemk-1 < q.itemk-1

  - Step 2: pruning

        forall itemsets c in Ck do
            forall (k-1)-subsets s of c do
                if (s is not in Lk-1) then delete c from Ck

- Use object-relational extensions like UDFs, BLOBs, and table functions for efficient implementation [see: S. Sarawagi, S. Thomas, and R. Agrawal. Integrating association rule mining with relational database systems: Alternatives and implications. SIGMOD '98]
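For a concrete instance of the self-join, the sketch below runs the k = 3 case in an in-memory SQLite database through Python's `sqlite3` module. The table names `L2` and `C3` and their column layout are assumptions made for this sketch, and the rows are the frequent 2-itemsets from the TDB example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE L2 (item1 TEXT, item2 TEXT)")
conn.execute("CREATE TABLE C3 (item1 TEXT, item2 TEXT, item3 TEXT)")
conn.executemany(
    "INSERT INTO L2 VALUES (?, ?)",
    [("A", "C"), ("B", "C"), ("B", "E"), ("C", "E")],
)

# Step 1, self-join: pair rows that agree on item1 and differ in item2.
conn.execute("""
    INSERT INTO C3
    SELECT p.item1, p.item2, q.item2
    FROM L2 p, L2 q
    WHERE p.item1 = q.item1 AND p.item2 < q.item2
""")

# Step 2, prune: (item1, item2) and (item1, item3) are in L2 by construction,
# so only the remaining 2-subset (item2, item3) needs to be checked.
conn.execute("""
    DELETE FROM C3
    WHERE NOT EXISTS (
        SELECT 1 FROM L2 s
        WHERE s.item1 = C3.item2 AND s.item2 = C3.item3
    )
""")

c3 = conn.execute(
    "SELECT item1, item2, item3 FROM C3 ORDER BY item1, item2, item3"
).fetchall()
print(c3)
conn.close()
```

Only (B, C) and (B, E) share a first item, so the join yields the single candidate (B, C, E), which survives pruning because (C, E) is in L2.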

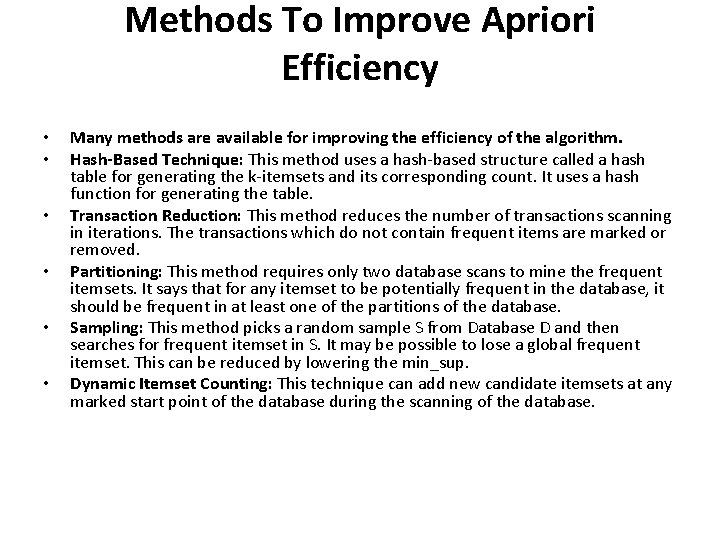

Methods To Improve Apriori Efficiency

Many methods are available for improving the efficiency of the algorithm.

- Hash-Based Technique: This method uses a hash table to generate the k-itemsets and their corresponding counts. A hash function maps itemsets to the buckets of the table.
- Transaction Reduction: This method reduces the number of transactions scanned in later iterations. A transaction that does not contain any frequent k-itemset cannot contain any frequent (k+1)-itemset, so it is marked or removed.
- Partitioning: This method requires only two database scans to mine the frequent itemsets. It relies on the fact that any itemset that is potentially frequent in the database must be frequent in at least one of the partitions of the database.
- Sampling: This method picks a random sample S from database D and then searches for frequent itemsets in S. Some globally frequent itemsets may be missed; this risk can be reduced by lowering min_sup.
- Dynamic Itemset Counting: This technique can add new candidate itemsets at any marked start point of the database during a scan.
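As one illustration, transaction reduction can be sketched as a filter applied between iterations. The function name `reduce_transactions` and the dictionary layout (transaction id mapped to a set of items) are assumptions of this sketch, and the data is the TDB database from the example slide.

```python
def reduce_transactions(transactions, frequent_k):
    """Keep only the transactions that contain at least one frequent k-itemset."""
    frequent_k = [frozenset(s) for s in frequent_k]
    return {
        tid: items
        for tid, items in transactions.items()
        if any(s <= items for s in frequent_k)
    }

tdb = {
    10: {"A", "C", "D"},
    20: {"B", "C", "E"},
    30: {"A", "B", "C", "E"},
    40: {"B", "E"},
}
L2 = [{"A", "C"}, {"B", "C"}, {"B", "E"}, {"C", "E"}]
L3 = [{"B", "C", "E"}]

after_L2 = reduce_transactions(tdb, L2)       # every transaction holds a frequent pair
after_L3 = reduce_transactions(after_L2, L3)  # only 20 and 30 contain {B, C, E}
print(sorted(after_L2))
print(sorted(after_L3))
```

After the third pass only transactions 20 and 30 would need to be scanned in any further iteration.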

Applications Of Apriori Algorithm

Some fields where Apriori is used:

- Education: extracting association rules from data on admitted students, based on their characteristics and specialties.
- Medicine: for example, analysis of patient databases.
- Forestry: analysis of the probability and intensity of forest fires using forest fire data.
- Industry: Apriori is used by many companies, such as Amazon in its recommender system and Google for its auto-complete feature.
