Approximating the Cut-Norm

Hubert Chan

• “Approximating the Cut-Norm via Grothendieck’s Inequality”, Noga Alon and Assaf Naor, STOC ’04

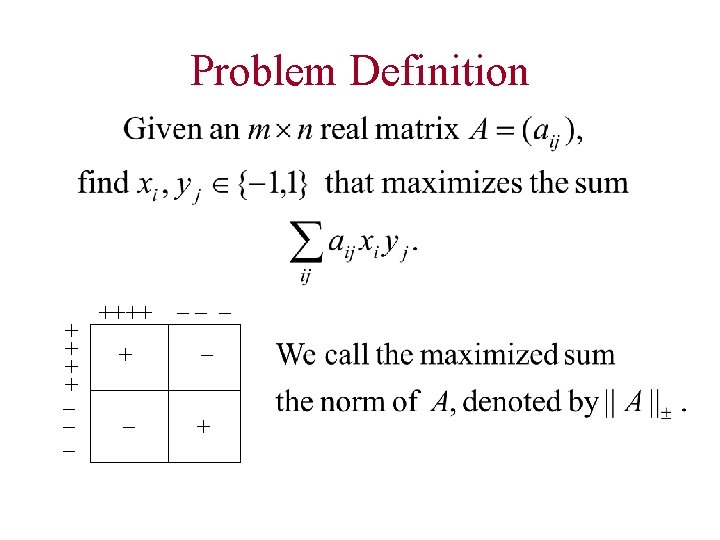

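Problem Definition: Given a real $m \times n$ matrix $A = (a_{ij})$, assign a sign $+1$ or $-1$ to every row and every column so as to maximize $\sum_{i,j} a_{ij} x_i y_j$. The optimum is $\|A\|_{\infty\to 1} = \max_{x_i, y_j \in \{-1, 1\}} \sum_{i,j} a_{ij} x_i y_j$ (figure: rows and columns of $A$ labeled with $+$/$-$ signs). This quantity is within a factor of 4 of the cut-norm $\|A\|_C = \max_{I, J} \left|\sum_{i \in I, j \in J} a_{ij}\right|$, where $I$ ranges over sets of rows and $J$ over sets of columns: $\|A\|_C \le \|A\|_{\infty\to 1} \le 4\|A\|_C$.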

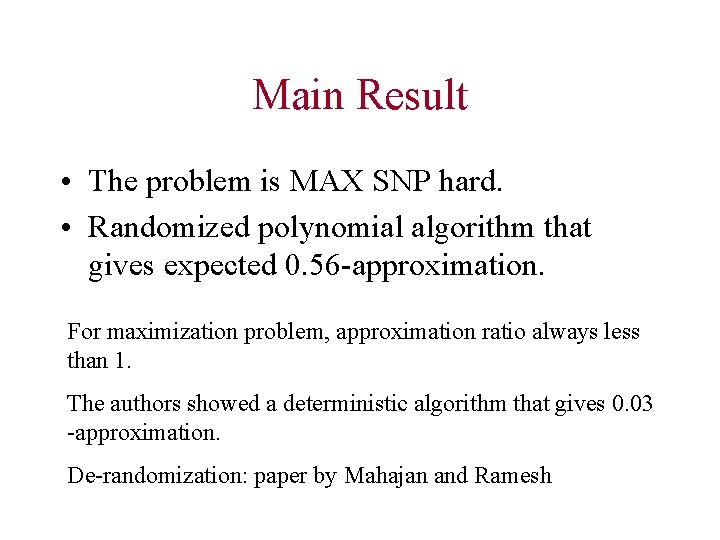
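To make the definition concrete, the brute-force sketch below computes both quantities for a tiny matrix. The helper names `infty_to_one_norm` and `cut_norm` are hypothetical. The enumeration is exponential in the number of columns, so it is only meant for checking small examples and is not an algorithm. It relies on one observation: for fixed column signs $y$, the best row sign is $x_i = \mathrm{sign}(\sum_j a_{ij} y_j)$.

```python
from itertools import product


def infty_to_one_norm(A):
    """max over x_i, y_j in {-1, 1} of sum_ij a_ij * x_i * y_j (brute force).

    For fixed column signs y, the best row sign is x_i = sign(row_i . y),
    so row i contributes |row_i . y| and only y needs to be enumerated.
    """
    n = len(A[0])
    return max(
        sum(abs(sum(a * yj for a, yj in zip(row, y))) for row in A)
        for y in product((-1, 1), repeat=n)
    )


def cut_norm(A):
    """max over row sets I and column sets J of |sum_{i in I, j in J} a_ij|."""
    n = len(A[0])
    best = 0  # choosing an empty I or J gives 0
    for J in product((False, True), repeat=n):
        # Sum of each row restricted to the columns in J.
        row_sums = [sum(a for a, in_J in zip(row, J) if in_J) for row in A]
        # For fixed J, the best I takes all positive rows or all negative rows.
        best = max(best,
                   sum(s for s in row_sums if s > 0),
                   -sum(s for s in row_sums if s < 0))
    return best


A = [[1, -2, 3],
     [-4, 5, -6]]
print(infty_to_one_norm(A), cut_norm(A))
```

For this matrix the sketch gives 21 and 10, consistent with $\|A\|_C \le \|A\|_{\infty\to 1} \le 4\|A\|_C$.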

Main Result • The problem is MAX SNP hard. • A randomized polynomial-time algorithm achieves an expected 0.56-approximation (for a maximization problem, the approximation ratio is always less than 1). • The authors also give a deterministic 0.03-approximation algorithm. • De-randomization: see the paper by Mahajan and Ramesh.

Road Map • Motivation • Hardness Result • General Approach • Outline of Algorithm • Conclusion

Motivation • Inspired by the MAX-CUT problem, Frieze and Kannan proposed a decomposition scheme for solving problems on dense graphs. • Estimating the cut-norm of a matrix is a key step in the decomposition scheme.

Comparison with Previous Results • Previous algorithms compute the norm only up to an additive error. • This is useful only for matrices whose norm is large. • The new algorithm approximates the norm of every real matrix within a constant factor of 0.56, in expectation.

Road Map • Motivation • Hardness Result • General Approach • Outline of Algorithm • Conclusion

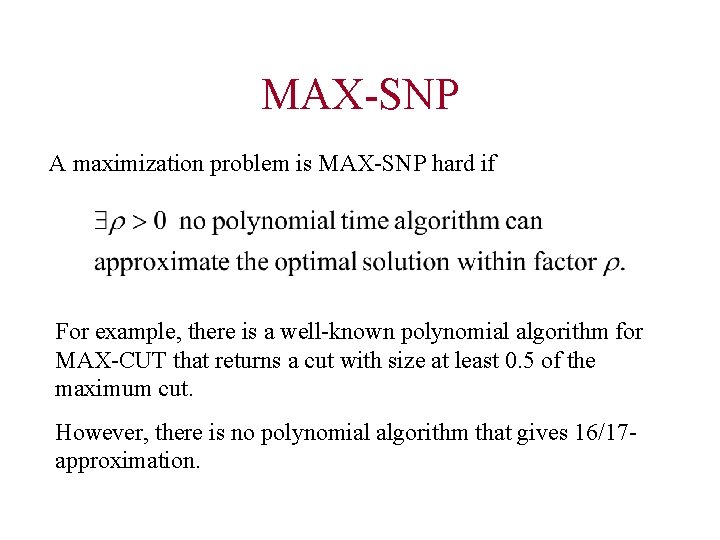

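MAX-SNP: A maximization problem is MAX-SNP hard if every problem in MAX-SNP has an approximation-preserving (L-)reduction to it. In particular, there is then a constant $\rho < 1$ such that no polynomial-time algorithm achieves a $\rho$-approximation unless P = NP. For example, there is a well-known polynomial-time algorithm for MAX-CUT that returns a cut with at least 0.5 times as many edges as the maximum cut. However, unless P = NP, no polynomial-time algorithm achieves an approximation ratio better than 16/17.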

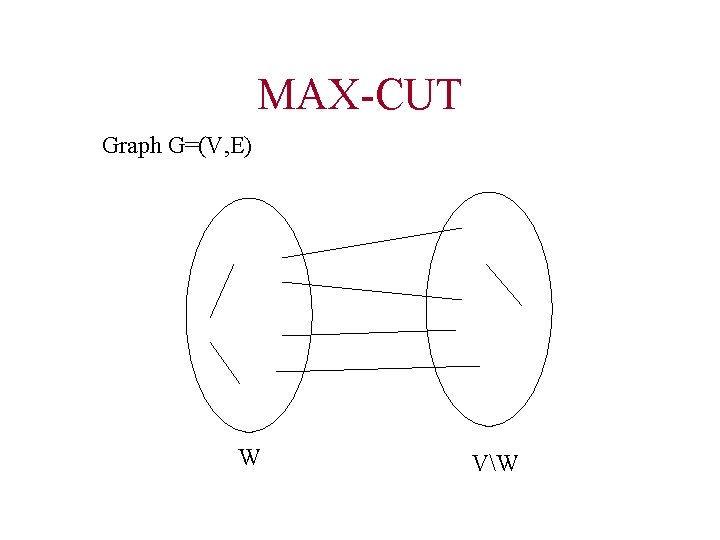
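The slide does not say which 0.5-approximation it has in mind. One well-known choice is single-vertex local search, sketched here under that assumption: move a vertex to the other side whenever doing so increases the cut. At a local optimum, every vertex has at least half of its incident edges cut, so the cut contains at least $|E|/2 \ge 0.5 \cdot$ MAX-CUT edges. The function name and graph encoding (vertices $0, \dots, n-1$, edges as pairs, simple graph without self-loops) are illustrative assumptions.

```python
def local_search_max_cut(n, edges):
    """Flip single vertices while that enlarges the cut (simple graphs only).

    At a local optimum every vertex has at least half of its incident edges
    crossing the cut, so the cut has >= |E|/2 >= 0.5 * MAX-CUT edges.
    """
    side = [0] * n  # side[v] in {0, 1}; start with every vertex on side 0
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    improved = True
    while improved:  # each flip increases the cut by >= 1, so <= |E| flips
        improved = False
        for v in range(n):
            uncut = sum(1 for w in adj[v] if side[w] == side[v])
            if uncut > len(adj[v]) - uncut:  # flipping v gains uncut - cut > 0
                side[v] ^= 1
                improved = True
    cut = sum(1 for u, v in edges if side[u] != side[v])
    return cut, side


five_cycle = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0)]
print(local_search_max_cut(5, five_cycle))
```

Starting from a random assignment instead of all zeros would work equally well. The $|E|/2$ guarantee only uses the local-optimality condition.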

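MAX-CUT: Given a graph $G = (V, E)$, find a set $W \subseteq V$ that maximizes the number of edges between $W$ and $V \setminus W$.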

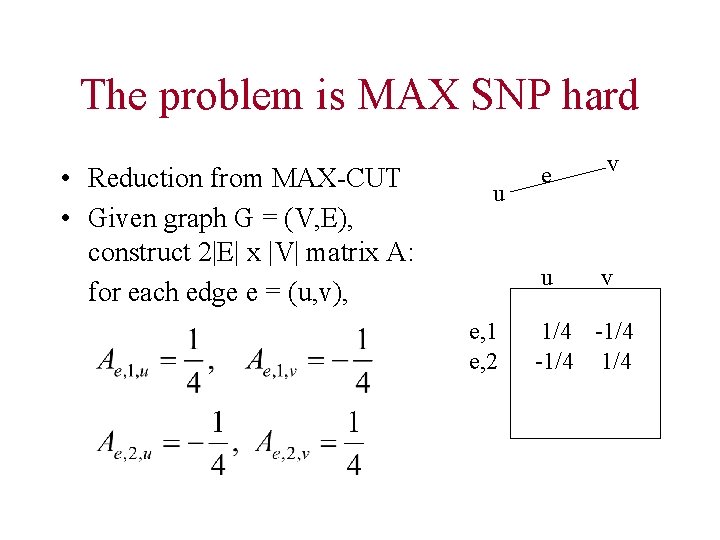

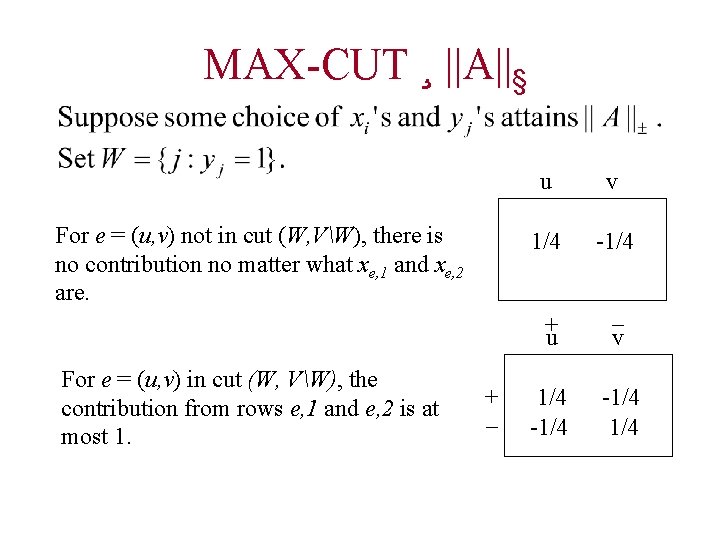

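The problem is MAX SNP hard • Reduction from MAX-CUT • Given a graph $G = (V, E)$, construct a $2|E| \times |V|$ matrix $A$: for each edge $e = (u, v)$, add two rows $(e,1)$ and $(e,2)$ with $a_{(e,1),u} = 1/4$, $a_{(e,1),v} = -1/4$, $a_{(e,2),u} = -1/4$, $a_{(e,2),v} = 1/4$; all other entries are 0. Rows $(e,1)$ and $(e,2)$ then contribute $\frac{1}{4}(x_{e,1} - x_{e,2})(y_u - y_v)$ to $\sum_{i,j} a_{ij} x_i y_j$.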

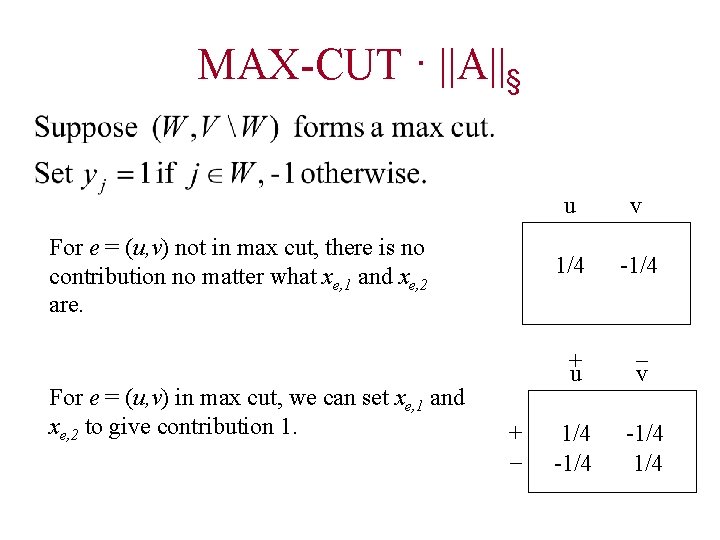

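MAX-CUT $\le \|A\|_{\infty\to 1}$: Take a maximum cut $(W, V \setminus W)$ and set $y_v = 1$ for $v \in W$ and $y_v = -1$ otherwise. For an edge $e = (u, v)$ not in the max cut, $y_u = y_v$, so rows $(e,1)$ and $(e,2)$ contribute nothing, no matter what $x_{e,1}$ and $x_{e,2}$ are. For an edge $e = (u, v)$ in the max cut, we can set $x_{e,1}$ and $x_{e,2}$ so that the contribution is 1.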

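MAX-CUT $\ge \|A\|_{\infty\to 1}$: Take signs $x, y$ attaining $\|A\|_{\infty\to 1}$ and let $W = \{v : y_v = 1\}$. For an edge $e = (u, v)$ not in the cut $(W, V \setminus W)$, there is no contribution, no matter what $x_{e,1}$ and $x_{e,2}$ are. For an edge $e = (u, v)$ in the cut, the contribution from rows $(e,1)$ and $(e,2)$ is at most 1. Hence $\|A\|_{\infty\to 1}$ is at most the size of this cut, which is at most MAX-CUT.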
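Here is a small sanity check of the reduction. It assumes that rows $(e,1)$ and $(e,2)$ carry $\pm 1/4$ in columns $u$ and $v$ with opposite signs. The sketch builds the matrix for a graph and compares $\|A\|_{\infty\to 1}$ with MAX-CUT, computing both by brute force. Function names are hypothetical, and both searches are exponential, so only tiny graphs are practical.

```python
from itertools import product


def reduction_matrix(n, edges):
    """2|E| x |V| matrix with rows (e,1) and (e,2) for each edge e = (u, v)."""
    A = []
    for u, v in edges:
        row1 = [0.0] * n
        row1[u], row1[v] = 0.25, -0.25  # row (e,1): +1/4 at u, -1/4 at v
        row2 = [-a for a in row1]       # row (e,2): the negation of row (e,1)
        A.extend([row1, row2])
    return A


def infty_to_one_norm(A):
    # Best row signs are x_i = sign(row_i . y), so enumerate column signs y only.
    n = len(A[0])
    return max(sum(abs(sum(a * yj for a, yj in zip(row, y))) for row in A)
               for y in product((-1, 1), repeat=n))


def max_cut(n, edges):
    return max(sum(1 for u, v in edges if side[u] != side[v])
               for side in product((0, 1), repeat=n))


triangle = [(0, 1), (1, 2), (0, 2)]
print(max_cut(3, triangle), infty_to_one_norm(reduction_matrix(3, triangle)))
```

All entries are multiples of 1/4, so the floating-point sums here are exact and the two numbers can be compared directly.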

Road Map • Motivation • Hardness Result • General Approach • Outline of Algorithm • Conclusion

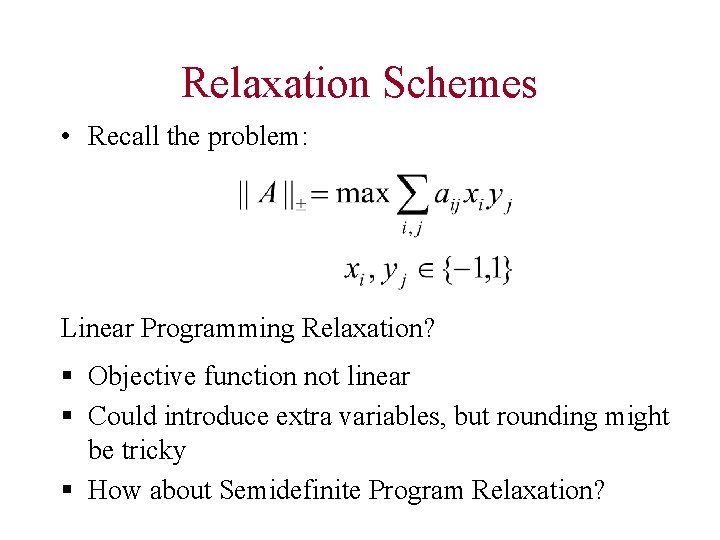

Relaxation Schemes • Recall the problem: maximize $\sum_{i,j} a_{ij} x_i y_j$ over $x_i, y_j \in \{-1, 1\}$. • Linear programming relaxation? - The objective function is not linear. - We could introduce extra variables, but rounding might be tricky. • How about a semidefinite programming relaxation?

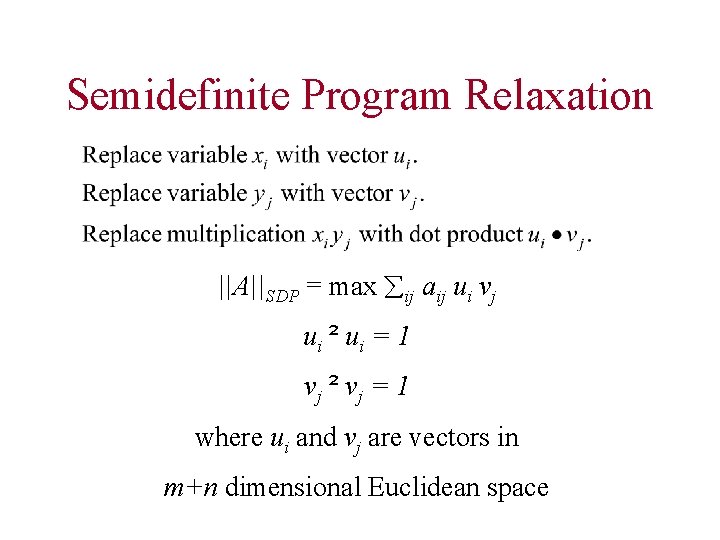

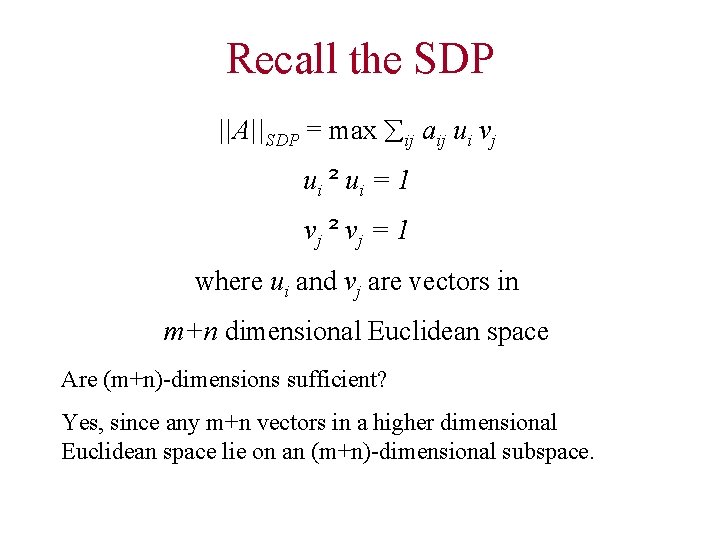

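Semidefinite Program Relaxation: $\|A\|_{SDP} = \max \sum_{i,j} a_{ij}\, u_i \cdot v_j$ subject to $u_i \cdot u_i = 1$ and $v_j \cdot v_j = 1$ for all $i, j$, where $u_i$ and $v_j$ are vectors in $(m+n)$-dimensional Euclidean space. Taking $u_i = x_i e_1$ and $v_j = y_j e_1$ recovers the original problem, so $\|A\|_{SDP} \ge \|A\|_{\infty\to 1}$.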

Remarks about the SDP • Are $m+n$ dimensions sufficient? Yes: any $m+n$ vectors in a higher-dimensional Euclidean space lie in an $(m+n)$-dimensional subspace. • Fact: there is an algorithm that, given $\epsilon > 0$, returns vectors $u_i$ and $v_j$ attaining value at least $\|A\|_{SDP} - \epsilon$ in time polynomial in the input length and $\log(1/\epsilon)$.
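One standard way to see that this is a semidefinite program is to work with the Gram matrix $X$ of the vectors $u_1, \dots, u_m, v_1, \dots, v_n$, so that $X_{i,\,m+j} = u_i \cdot v_j$. This reformulation is added here and is not taken from the slides.

```latex
\|A\|_{SDP} = \max_{X} \; \langle B, X \rangle
\quad \text{subject to} \quad
X \succeq 0, \qquad X_{kk} = 1 \;\; (k = 1, \dots, m+n),
\qquad
B = \frac{1}{2}\begin{pmatrix} 0 & A \\ A^{\mathsf{T}} & 0 \end{pmatrix},
\qquad
\langle B, X \rangle = \sum_{k,l} B_{kl} X_{kl} = \sum_{i,j} a_{ij}\, u_i \cdot v_j .
```

Any positive semidefinite $(m+n) \times (m+n)$ matrix is the Gram matrix of $m+n$ vectors in $\mathbb{R}^{m+n}$ (for example, via its eigendecomposition). This gives another view of why $m+n$ dimensions suffice.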

Are we done? No: we need to convert the vectors back to integers in $\{-1, 1\}$. General strategy: 1. Obtain optimal vectors $u_i$ and $v_j$ for the SDP. 2. Use a randomized procedure to construct integer solutions $x_i, y_j \in \{-1, 1\}$ from the vectors. 3. Prove a good expected bound: find a constant $\rho > 0$ such that $E\left[\sum_{i,j} a_{ij} x_i y_j\right] \ge \rho \|A\|_{SDP} \ge \rho \|A\|_{\infty\to 1}$.

Road Map • Motivation • Hardness Result • General Approach • Outline of Rounding Algorithm • Conclusion

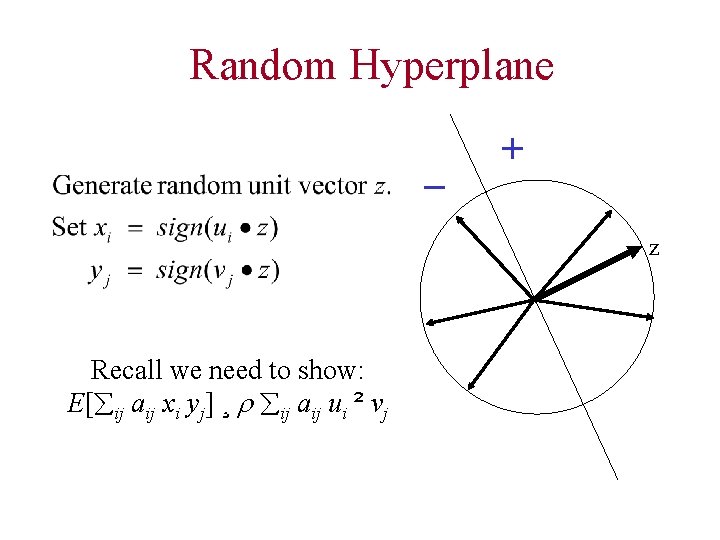

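Random Hyperplane (figure: a random vector $z$ and its hyperplane, with a $+$ side and a $-$ side). Recall that we need to show $E\left[\sum_{i,j} a_{ij} x_i y_j\right] \ge \rho \sum_{i,j} a_{ij}\, u_i \cdot v_j$.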

![Analyzing E[xy]: unit vectors u and v with cos θ = u · v](http://slidetodoc.com/presentation_image_h2/3db2dcad2d1c64ef2100dbaf3af37e04/image-21.jpg)

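Analyzing E[xy]: Let $u$ and $v$ be unit vectors with $\cos\theta = u \cdot v$. A random unit vector $z$ determines a hyperplane, and $\Pr[u \text{ and } v \text{ are separated}] = \theta/\pi$. Set $x = \mathrm{sign}(u \cdot z)$ and $y = \mathrm{sign}(v \cdot z)$. Then $E[xy] = (1 - \theta/\pi) - \theta/\pi = 1 - 2\theta/\pi = \frac{2}{\pi}\left(\frac{\pi}{2} - \theta\right) = \frac{2}{\pi}\arcsin(u \cdot v)$.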
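The formula can be checked numerically. The sketch below uses plain Python and hypothetical helper names. It draws $z$ uniformly from the unit sphere by normalizing a Gaussian vector, then rounds two fixed unit vectors at angle $\theta = 1$ radian. Finally, it compares the empirical mean of $xy$ with $\frac{2}{\pi}\arcsin(u \cdot v) = 1 - 2\theta/\pi$.

```python
import math
import random


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def sign(t):
    return 1 if t >= 0 else -1


def random_unit_vector(d, rng):
    # A normalized standard Gaussian vector is uniform on the unit sphere.
    g = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(dot(g, g))
    return [c / norm for c in g]


def estimate_exy(u, v, trials=100_000, seed=0):
    """Monte Carlo estimate of E[xy] for x = sign(u.z), y = sign(v.z)."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        z = random_unit_vector(len(u), rng)
        total += sign(dot(u, z)) * sign(dot(v, z))
    return total / trials


theta = 1.0  # angle between u and v, in radians
u = [1.0, 0.0, 0.0]
v = [math.cos(theta), math.sin(theta), 0.0]
print(estimate_exy(u, v), 2 / math.pi * math.asin(dot(u, v)))
```

The two printed numbers should agree to about two decimal places, since the Monte Carlo standard error with 100,000 trials is roughly 0.003.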

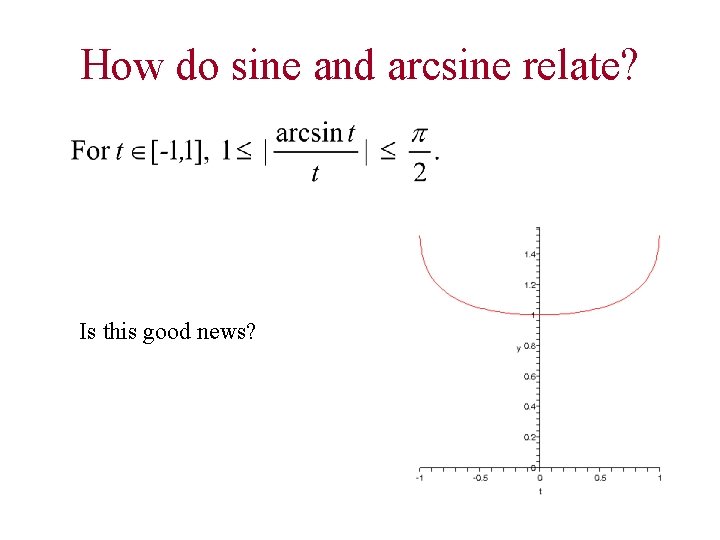

How do sine and arcsine relate? Is this good news?

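Performance Guarantee? • We have a term-by-term constant-factor approximation: $\frac{2}{\pi}\arcsin t$ has the same sign as $t$, and $\left|\frac{2}{\pi}\arcsin t\right| \ge \frac{2}{\pi}|t|$. • Bad news: the terms $a_{ij}\, u_i \cdot v_j$ have different signs and can cancel, so a term-by-term bound says nothing about the sum. • Hence, we need a global approximation argument.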

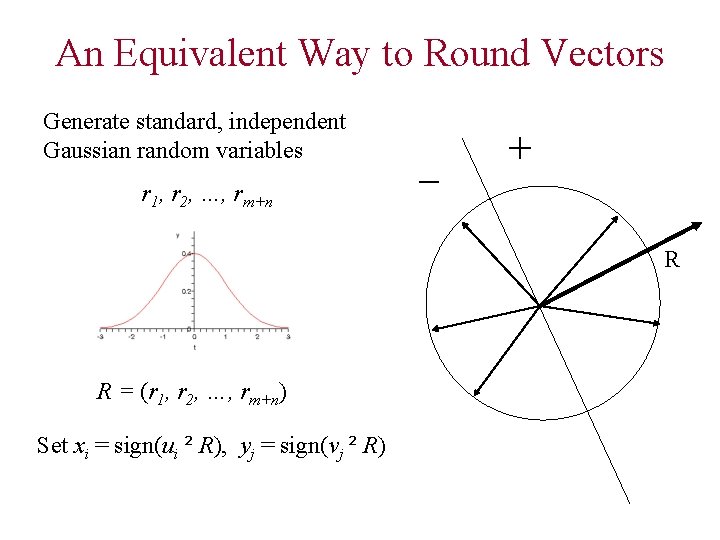

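An Equivalent Way to Round Vectors: Generate independent standard Gaussian random variables $r_1, r_2, \dots, r_{m+n}$ and let $R = (r_1, r_2, \dots, r_{m+n})$ (figure: the hyperplane orthogonal to $R$, with a $+$ side and a $-$ side). Set $x_i = \mathrm{sign}(u_i \cdot R)$ and $y_j = \mathrm{sign}(v_j \cdot R)$. This is equivalent to the random hyperplane: $R/\|R\|$ is uniformly distributed on the unit sphere, and the signs depend only on the direction of $R$.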
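Below is a minimal sketch of this rounding step in plain Python. It assumes the SDP vectors $u_i, v_j$ have already been computed by some solver (no solver is shown). The names `gaussian_round` and `objective` are hypothetical. The usage example embeds an integer solution as $u_i = x_i e_1$ and $v_j = y_j e_1$. Rounding then recovers it up to a global sign flip, which does not change the objective.

```python
import random


def _sign(t):
    return 1 if t >= 0 else -1


def gaussian_round(us, vs, seed=None):
    """x_i = sign(u_i . R), y_j = sign(v_j . R) for one Gaussian vector R."""
    rng = random.Random(seed)
    R = [rng.gauss(0.0, 1.0) for _ in range(len(us[0]))]  # i.i.d. N(0, 1)
    xs = [_sign(sum(a * r for a, r in zip(u, R))) for u in us]
    ys = [_sign(sum(b * r for b, r in zip(v, R))) for v in vs]
    return xs, ys


def objective(A, xs, ys):
    """sum_ij a_ij * x_i * y_j"""
    return sum(A[i][j] * xs[i] * ys[j]
               for i in range(len(xs)) for j in range(len(ys)))


A = [[1, -2, 3],
     [-4, 5, -6]]
x, y = [1, -1], [1, -1, 1]
us = [[float(xi), 0.0] for xi in x]  # u_i = x_i * e_1
vs = [[float(yj), 0.0] for yj in y]  # v_j = y_j * e_1
xs, ys = gaussian_round(us, vs, seed=42)
print(objective(A, xs, ys))
```

In a real run, `us` and `vs` would be the (approximately) optimal SDP vectors. Repeating the rounding with fresh seeds and keeping the best value is a common practical choice.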

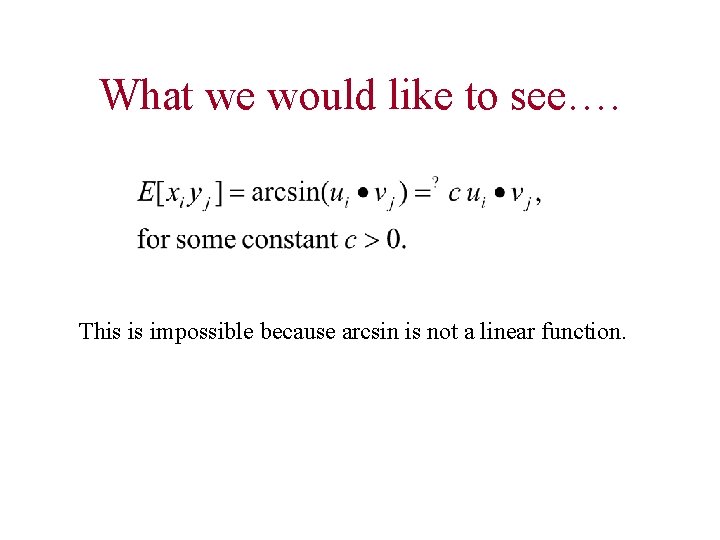

What we would like to see…. This is impossible because arcsin is not a linear function.

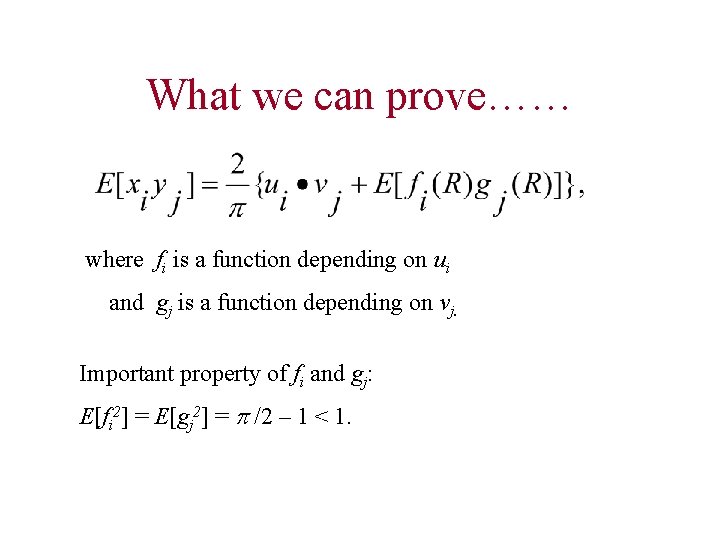

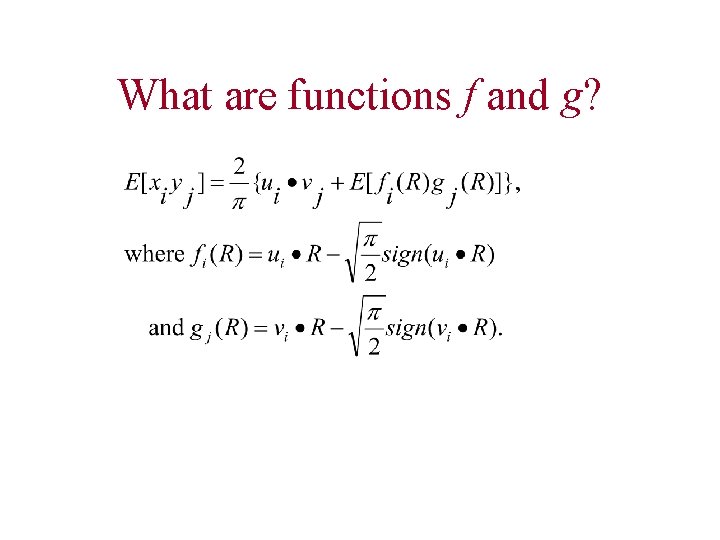

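What we can prove: $\frac{\pi}{2} E[x_i y_j] = u_i \cdot v_j + E[f_i g_j]$, where $f_i$ is a function depending on $u_i$ (and $R$) and $g_j$ is a function depending on $v_j$ (and $R$). Important property of $f_i$ and $g_j$: $E[f_i^2] = E[g_j^2] = \pi/2 - 1 < 1$.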
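The slides' exact definitions of $f_i$ and $g_j$ are in the figures. The sketch below assumes the choice $f_i = u_i \cdot R - \sqrt{\pi/2}\,\mathrm{sign}(u_i \cdot R)$ and $g_j = v_j \cdot R - \sqrt{\pi/2}\,\mathrm{sign}(v_j \cdot R)$. This choice does satisfy $\frac{\pi}{2}E[x_i y_j] = u_i \cdot v_j + E[f_i g_j]$ and $E[f_i^2] = \pi/2 - 1$, using $E[(u \cdot R)\,\mathrm{sign}(v \cdot R)] = \sqrt{2/\pi}\; u \cdot v$ for unit $u, v$. The Monte Carlo sketch checks both facts numerically. The function name is hypothetical.

```python
import math
import random


def check_identity(u, v, trials=100_000, seed=1):
    """Estimate E[xy], E[fg], E[f^2] for the assumed f and g (unit u and v)."""
    rng = random.Random(seed)
    c = math.sqrt(math.pi / 2)
    s_xy = s_fg = s_ff = 0.0
    for _ in range(trials):
        R = [rng.gauss(0.0, 1.0) for _ in range(len(u))]
        a = sum(p * r for p, r in zip(u, R))  # u . R, standard normal
        b = sum(q * r for q, r in zip(v, R))  # v . R, standard normal
        x = 1 if a >= 0 else -1
        y = 1 if b >= 0 else -1
        f, g = a - c * x, b - c * y           # assumed f and g
        s_xy += x * y
        s_fg += f * g
        s_ff += f * f
    return s_xy / trials, s_fg / trials, s_ff / trials


theta = 1.0
u = [1.0, 0.0]
v = [math.cos(theta), math.sin(theta)]
exy, efg, eff = check_identity(u, v)
# Both printed differences should be close to 0.
print(math.pi / 2 * exy - (math.cos(theta) + efg), eff - (math.pi / 2 - 1))
```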

![Inner Product and E[f g]](http://slidetodoc.com/presentation_image_h2/3db2dcad2d1c64ef2100dbaf3af37e04/image-27.jpg)

Inner Product and E[f g]

Recall the SDP: $\|A\|_{SDP} = \max \sum_{i,j} a_{ij}\, u_i \cdot v_j$ subject to $u_i \cdot u_i = 1$ and $v_j \cdot v_j = 1$, where $u_i$ and $v_j$ are vectors in $(m+n)$-dimensional Euclidean space. Are $m+n$ dimensions sufficient? Yes: any $m+n$ vectors in a higher-dimensional Euclidean space lie in an $(m+n)$-dimensional subspace.

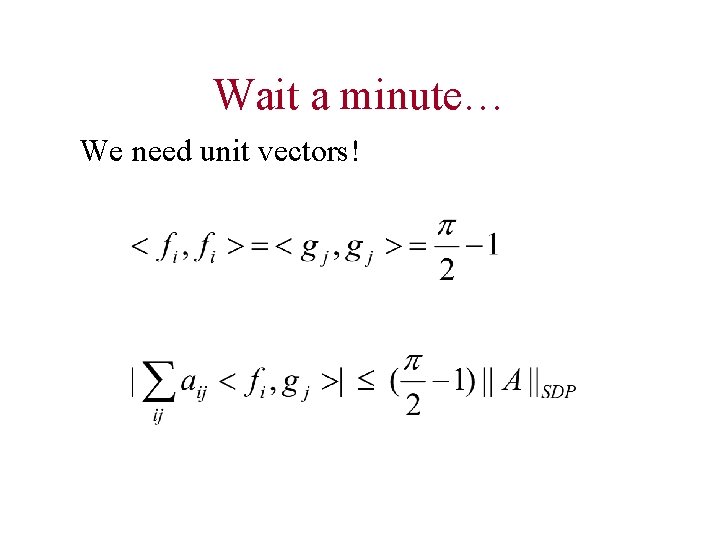

Wait a minute… We need unit vectors!

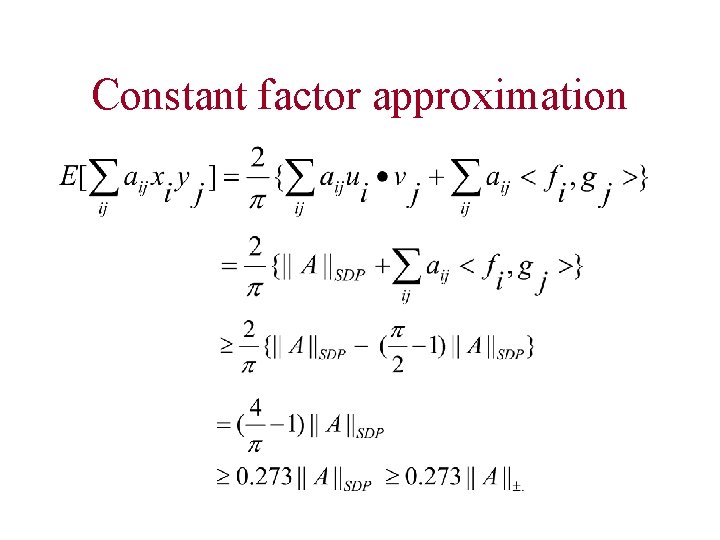

Constant factor approximation

What are functions f and g?

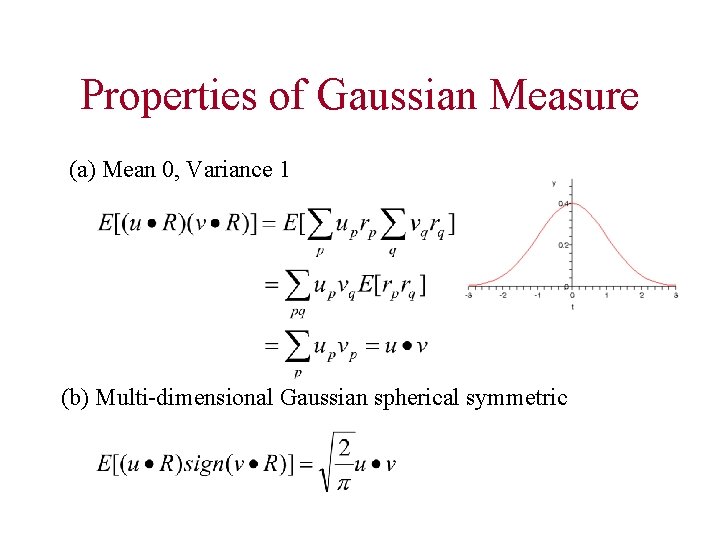

Properties of the Gaussian Measure (a) Each coordinate has mean 0 and variance 1. (b) The multi-dimensional Gaussian is spherically symmetric.

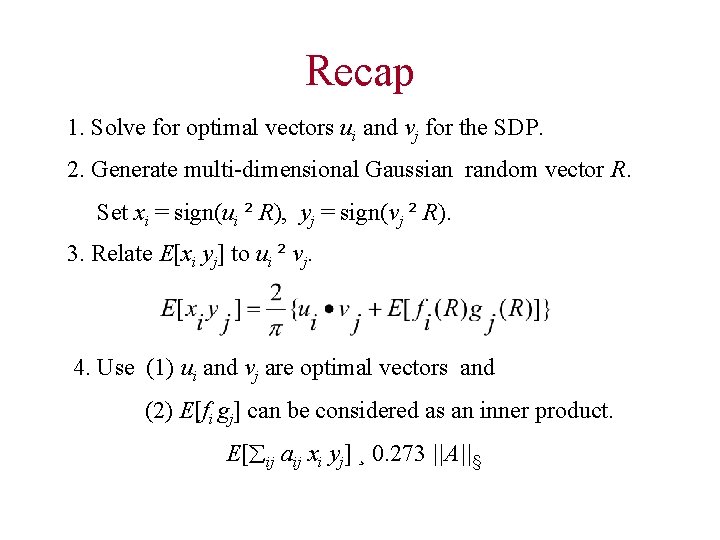

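Recap 1. Solve for optimal vectors $u_i$ and $v_j$ for the SDP. 2. Generate a multi-dimensional Gaussian random vector $R$, and set $x_i = \mathrm{sign}(u_i \cdot R)$ and $y_j = \mathrm{sign}(v_j \cdot R)$. 3. Relate $E[x_i y_j]$ to $u_i \cdot v_j$. 4. Use the facts that (1) $u_i$ and $v_j$ are optimal vectors and (2) $E[f_i g_j]$ can be viewed as an inner product. Conclusion: $E\left[\sum_{i,j} a_{ij} x_i y_j\right] \ge (4/\pi - 1)\|A\|_{\infty\to 1} \approx 0.273\,\|A\|_{\infty\to 1}$.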
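One way to spell out step 4 assumes the identity $\frac{\pi}{2}E[x_i y_j] = u_i \cdot v_j + E[f_i g_j]$ with $E[f_i^2] = E[g_j^2] = \pi/2 - 1$; the derivation multiplies it by $a_{ij}$ and sums. Consider $f_i/\sqrt{\pi/2 - 1}$ and $g_j/\sqrt{\pi/2 - 1}$: these are unit vectors for the inner product $E[fg]$, and they span a space of dimension at most $m+n$. So they form a feasible SDP solution, and the error term is at least $-(\pi/2 - 1)\|A\|_{SDP}$ (negating every $f_i$ gives the matching upper bound).

```latex
\frac{\pi}{2}\, E\Big[\sum_{i,j} a_{ij} x_i y_j\Big]
  = \underbrace{\sum_{i,j} a_{ij}\, u_i \cdot v_j}_{=\ \|A\|_{SDP}}
  + \underbrace{\sum_{i,j} a_{ij}\, E[f_i g_j]}_{\ge\ -(\pi/2 - 1)\,\|A\|_{SDP}}
  \;\ge\; \Big(2 - \frac{\pi}{2}\Big)\|A\|_{SDP},
\qquad\text{so}\qquad
E\Big[\sum_{i,j} a_{ij} x_i y_j\Big]
  \ge \Big(\frac{4}{\pi} - 1\Big)\|A\|_{SDP}
  \ge \Big(\frac{4}{\pi} - 1\Big)\|A\|_{\infty\to 1}
  \approx 0.273\, \|A\|_{\infty\to 1}.
```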

What we would like to see…. This is impossible because arcsin is not a linear function.

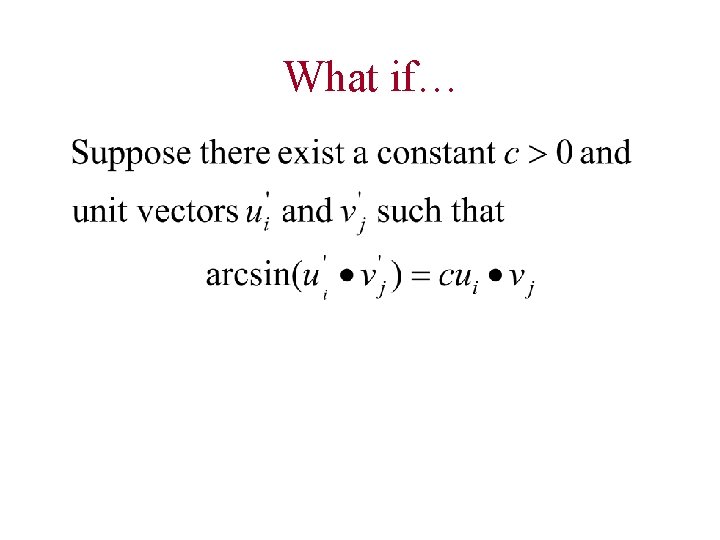

What if…

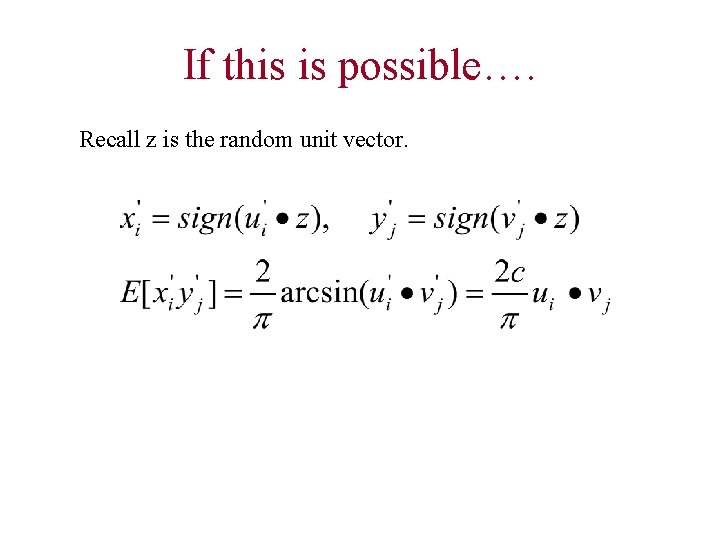

If this is possible…. Recall z is the random unit vector.

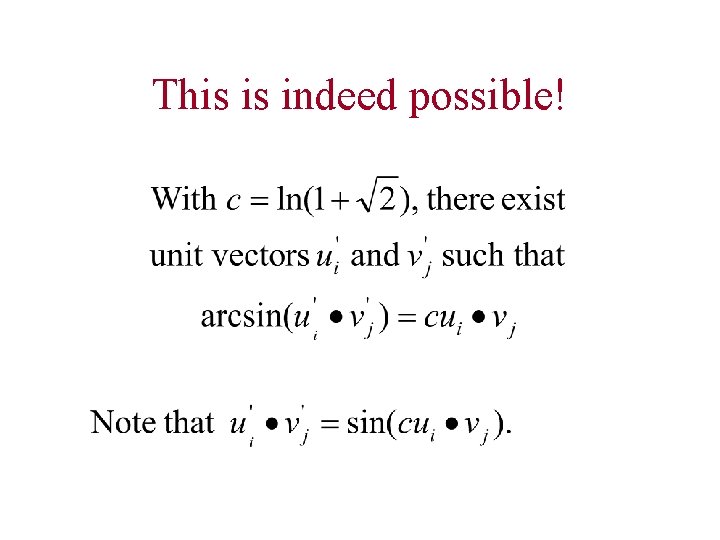

This is indeed possible!

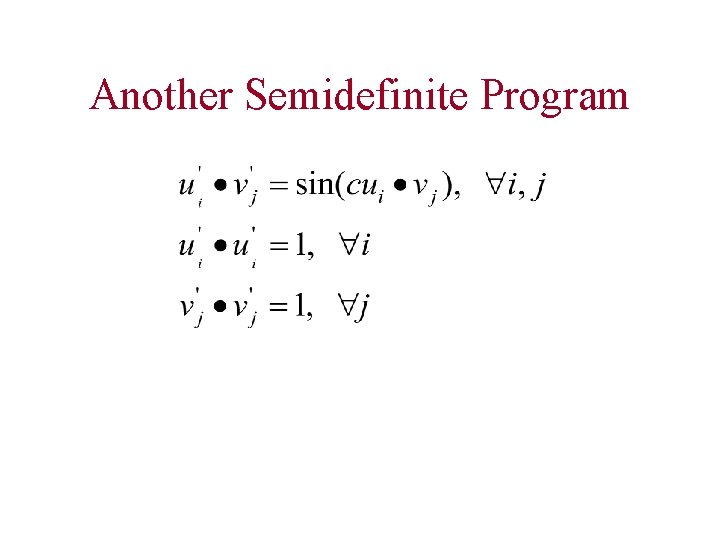

Another Semidefinite Program

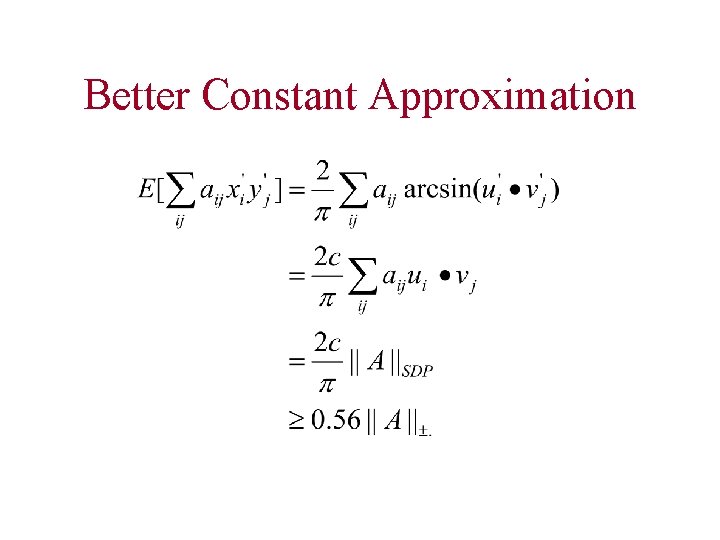

Better Constant Approximation
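The figures for these slides are not reproduced here. This sketch assumes they follow Krivine's approach to Grothendieck's inequality. Under that assumption, the second SDP produces unit vectors $u'_i, v'_j$ with $u'_i \cdot v'_j = \sin(c\, u_i \cdot v_j)$ for $c = \ln(1 + \sqrt{2})$, the constant with $\sinh c = 1$. Rounding $u', v'$ with a random hyperplane then gives $E[x_i y_j] = \frac{2}{\pi}\arcsin(\sin(c\, u_i \cdot v_j)) = \frac{2c}{\pi}\, u_i \cdot v_j$, since $|c\, u_i \cdot v_j| \le c < \pi/2$. This is a linear relation of the kind that was impossible for the plain hyperplane rounding, with ratio $2\ln(1+\sqrt{2})/\pi \approx 0.56$. The short check below only verifies these constants and the linearity numerically; the helper name is hypothetical.

```python
import math

# Krivine's constant, assumed here: c = ln(1 + sqrt(2)) = arcsinh(1).
C = math.log(1 + math.sqrt(2))


def expected_product(t, c=C):
    """E[x_i y_j] after hyperplane rounding of vectors with u'.v' = sin(c * t),
    where t = u_i . v_j lies in [-1, 1]."""
    return 2 / math.pi * math.asin(math.sin(c * t))


ratio = 2 * C / math.pi
print(round(ratio, 4))  # 0.5611
for t in (-1.0, -0.3, 0.0, 0.5, 1.0):
    # Linear in t because |c * t| <= c < pi / 2, where arcsin(sin(s)) = s.
    print(t, expected_product(t), ratio * t)
```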

Road Map • Motivation • Hardness Result • General Approach • Outline of Algorithm • Conclusion

Main Ideas • Semidefinite Program Relaxation - a powerful tool for optimization problems • Randomized Rounding Scheme - random hyperplane - multi-dimensional Gaussian • Apply similar techniques directly to approximate MAX-CUT
