Approaching a New Language in Machine Translation

- Slides: 28

Approaching a New Language in Machine Translation

Anna Sågvall Hein, Per Weijnitz

SALTMIL, LREC 2006, Sågvall Hein & Weijnitz

A Swedish example

Experiences of:

- rule-based translation by means of translation software developed from scratch
- statistical translation by means of publicly available software

Developing a robust transfer-based system for Swedish

- collecting a small sv-en translation corpus from the automotive domain (Scania)
- building a prototype of a core translation engine, Multra
- extending the translation corpus to 50k words for each language and scaling up the dictionaries for the extended corpus
- building a translation system, Mats, for hosting Multra and processing real-world documents
- making the system robust, transparent and traceable
- building an extended, more flexible version of Mats, Convertus

Features of the Multra engine

- transfer-based and modular
- analysis by chart parsing
- transfer based on unification
- generation based on unification and concatenation
- non-deterministic processing
- preference machinery
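
The slides do not describe Multra's unification formalism. As a rough, hypothetical illustration of what "transfer based on unification" involves, the sketch below models feature structures as nested Python dicts and unifies them recursively. It ignores reentrancy (shared substructures) and variables, and the feature names (`cat`, `lemma`, `agr`, `num`, `def`) are invented for the example.

```python
from typing import Any, Optional


def unify(a: Any, b: Any) -> Optional[Any]:
    """Unify two feature structures (nested dicts with atomic string values).

    Returns the merged structure, or None if the two structures clash.
    Simplified: no reentrancy and no variables.
    """
    if isinstance(a, dict) and isinstance(b, dict):
        result = dict(a)  # shallow copy, so `a` itself is never modified
        for key, b_val in b.items():
            if key in result:
                merged = unify(result[key], b_val)
                if merged is None:
                    return None  # clash somewhere below this feature
                result[key] = merged
            else:
                result[key] = b_val  # feature present only in `b`
        return result
    # Atomic values (or an atom against a dict) unify only if they are equal.
    return a if a == b else None


# Hypothetical analysis of Swedish "bilen" ('the car') checked against two rule conditions.
noun = {"cat": "n", "lemma": "bil", "agr": {"num": "sg", "def": "yes"}}
print(unify(noun, {"cat": "n", "agr": {"num": "sg"}}))  # succeeds, returns merged structure
print(unify(noun, {"agr": {"num": "pl"}}))              # None: number clash
```

A real transfer component would also map source lemmas and features to target ones; here unification only checks and merges constraints.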

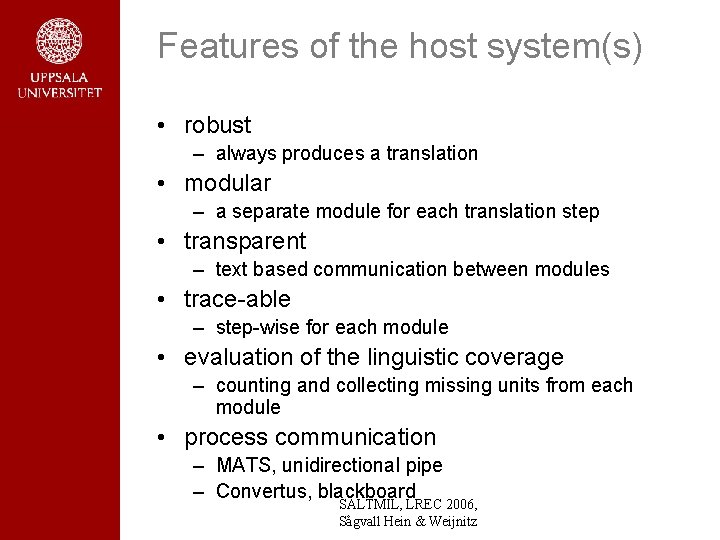

Features of the host system(s)

- robust: always produces a translation
- modular: a separate module for each translation step
- transparent: text-based communication between modules
- traceable: step-wise tracing for each module
- evaluation of the linguistic coverage: counting and collecting missing units from each module
- process communication
  - Mats: unidirectional pipe
  - Convertus: blackboard
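
A unidirectional pipe with per-step tracing and collection of missing units can be sketched as follows. This is a toy under stated assumptions, not the Mats code: the two modules, the four-entry Swedish-English dictionary `SV_EN` and all function names are hypothetical. Each module passes plain text to the next, mirroring the text-based communication listed above.

```python
from collections import Counter
from typing import Callable

# Hypothetical toy dictionary standing in for the real lexical resources.
SV_EN = {"byt": "replace", "filtret": "the filter", "varje": "every", "år": "year"}

missing: Counter = Counter()  # units not covered, collected for coverage evaluation


def tokenize(text: str) -> str:
    """Lower-case and normalise whitespace; the output is still plain text."""
    return " ".join(text.lower().split())


def lookup(text: str) -> str:
    """Word-by-word dictionary lookup; unknown words are passed through."""
    out = []
    for tok in text.split():
        if tok in SV_EN:
            out.append(SV_EN[tok])
        else:
            missing[tok] += 1  # record the gap for coverage evaluation
            out.append(tok)    # but still produce output (robustness)
    return " ".join(out)


def run_pipe(text: str, modules: list[tuple[str, Callable[[str], str]]]):
    """Send text through the modules in order, keeping a trace of every step."""
    trace = []
    for name, module in modules:
        text = module(text)
        trace.append((name, text))
    return text, trace


result, trace = run_pipe("Byt filtret varje halvår", [("tokenize", tokenize), ("lookup", lookup)])
for step, output in trace:
    print(f"{step:>8}: {output}")
print("missing:", dict(missing))
```

In a blackboard arrangement such as the one listed for Convertus, modules would instead read from and write to a shared data structure rather than each receiving only its predecessor's output.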

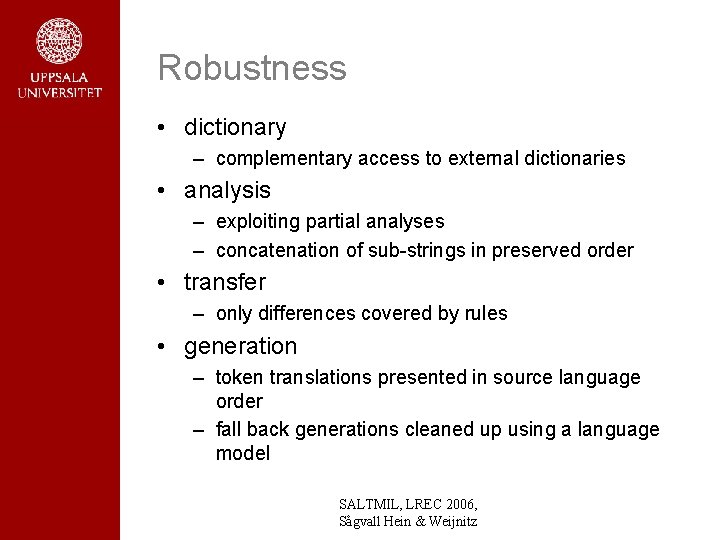

Robustness

- dictionary: complementary access to external dictionaries
- analysis
  - exploiting partial analyses
  - concatenation of substrings in preserved order
- transfer: only differences are covered by rules
- generation
  - token translations presented in source-language order
  - fallback generations cleaned up using a language model
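
One way to picture the generation fallback: each source token is translated in source-language order, and where the dictionary offers several translations, a target language model picks the combination it scores highest. The sketch below assumes a bigram model and Viterbi search (the slides do not say which model order or search is used). The candidate lists in `CANDIDATES` and the probabilities in `BIGRAM_LOGP` are invented, and each dictionary entry, even a multiword one, is treated as a single unit.

```python
import math

# Hypothetical dictionary candidates for two Swedish tokens.
CANDIDATES = {
    "kontrollera": ["check", "control"],
    "oljenivån": ["the oil level"],
}

# Hypothetical bigram log-probabilities; "<s>" marks the sentence start.
BIGRAM_LOGP = {
    ("<s>", "check"): math.log(0.2),
    ("<s>", "control"): math.log(0.05),
    ("check", "the oil level"): math.log(0.3),
    ("control", "the oil level"): math.log(0.01),
}
UNSEEN = math.log(1e-4)  # flat penalty for bigrams the model has not seen


def bigram_score(prev: str, word: str) -> float:
    return BIGRAM_LOGP.get((prev, word), UNSEEN)


def fallback_translate(tokens: list[str]) -> list[str]:
    """Translate token by token in source order, letting the LM choose among
    candidate translations (Viterbi search over a bigram model)."""
    # best maps the last chosen word to (log score, output so far).
    best = {"<s>": (0.0, [])}
    for tok in tokens:
        options = CANDIDATES.get(tok, [tok])  # unknown tokens are copied through
        best = {
            cand: max(
                (score + bigram_score(prev, cand), seq + [cand])
                for prev, (score, seq) in best.items()
            )
            for cand in options
        }
    return max(best.values())[1]


print(" ".join(fallback_translate(["kontrollera", "oljenivån"])))  # check the oil level
```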

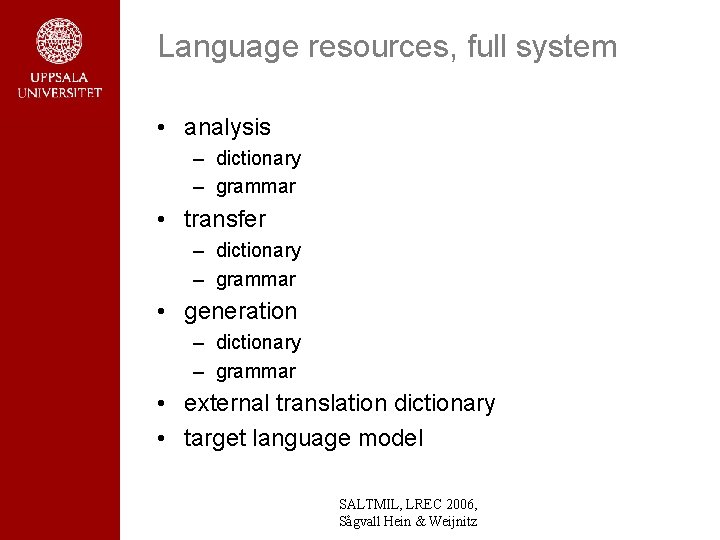

Language resources, full system

- analysis: dictionary, grammar
- transfer: dictionary, grammar
- generation: dictionary, grammar
- external translation dictionary
- target language model

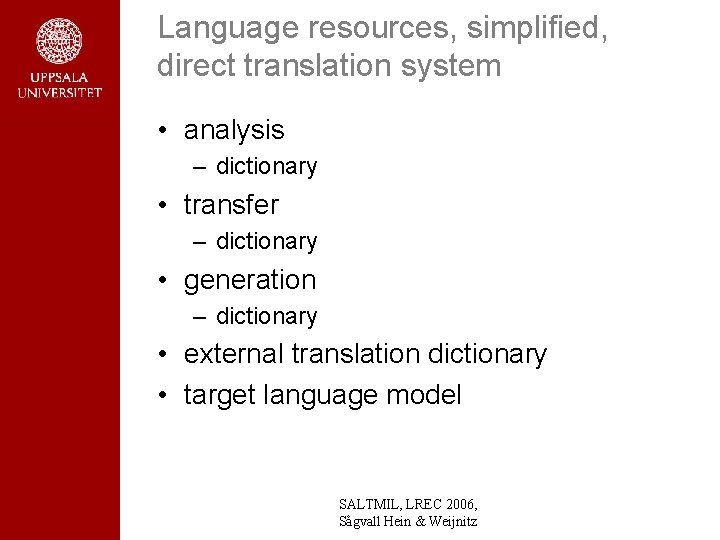

Language resources, simplified direct translation system

- analysis: dictionary
- transfer: dictionary
- generation: dictionary
- external translation dictionary
- target language model

Achievements

- BLEU scores of about 0.4–0.5 for training materials:
  - automotive service literature
  - EU agricultural texts
  - security police communication
  - academic curricula
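
Since BLEU is the yardstick throughout, a compact corpus-level BLEU may help in reading the figures. This is a sketch of the standard definition (clipped n-gram precisions for n = 1 to 4, geometric mean with uniform weights, brevity penalty), assuming one reference per segment and whitespace tokenisation. It is not the scoring tool behind the reported results, and different implementations can give slightly different scores.

```python
import math
from collections import Counter


def ngram_counts(tokens: list[str], n: int) -> Counter:
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses: list[str], references: list[str], max_n: int = 4) -> float:
    """Corpus-level BLEU with one reference per segment and uniform weights."""
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngram_counts(h, n), ngram_counts(r, n)
            # Clipping: a hypothesis n-gram is credited at most as often as it occurs in the reference.
            matches[n - 1] += sum(min(c, r_counts[g]) for g, c in h_counts.items())
            totals[n - 1] += sum(h_counts.values())
    if min(matches) == 0:
        return 0.0  # a zero precision makes the geometric mean zero
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: only output shorter than the references is penalised.
    brevity = 1.0 if hyp_len >= ref_len else math.exp(1 - ref_len / hyp_len)
    return brevity * math.exp(log_precision)


print(corpus_bleu(["check the oil level now"], ["check the oil level today"]))
```

In this example the four precisions are 4/5, 3/4, 2/3 and 1/2, so the score is the fourth root of 0.2, roughly 0.669.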

Current project

- translation of the curricula of Uppsala University from Swedish to English

Current development

- initial studies of automatic extraction of grammar rules from text and treebanks, for parsing and generation, inspired by:
  - Megyesi, B. (2002). Data-Driven Syntactic Analysis – Methods and Applications for Swedish. Ph.D. thesis, Department of Speech, Music and Hearing, KTH, Stockholm, Sweden.
  - Nivre, J., Hall, J. and Nilsson, J. (2006). MaltParser: A Data-Driven Parser-Generator for Dependency Parsing. In Proceedings of LREC.

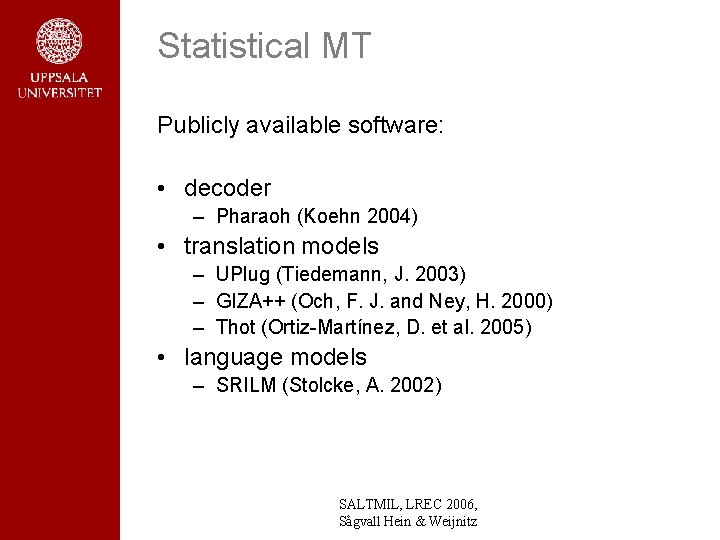

Statistical MT

Publicly available software:

- decoder
  - Pharaoh (Koehn 2004)
- translation models
  - UPlug (Tiedemann 2003)
  - GIZA++ (Och and Ney 2000)
  - Thot (Ortiz-Martínez et al. 2005)
- language models
  - SRILM (Stolcke 2002)

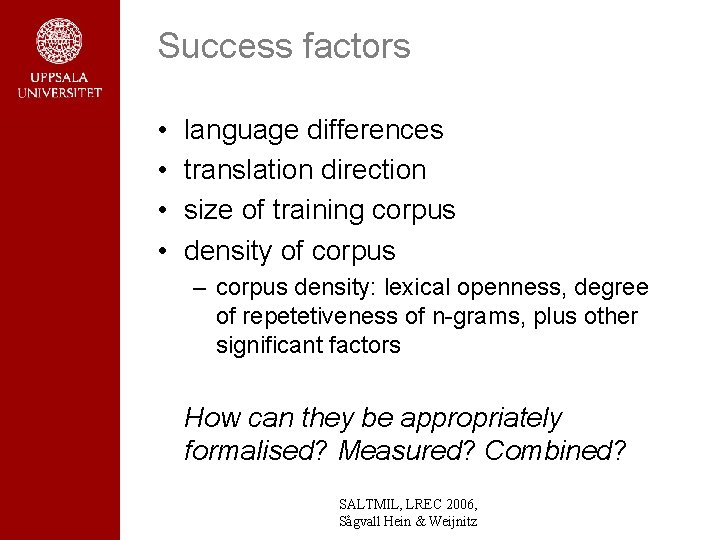

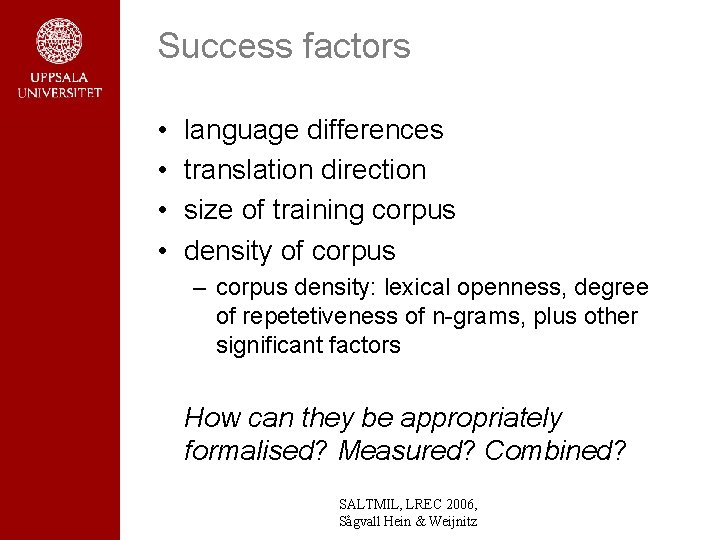

Success factors

- language differences
- translation direction
- size of training corpus
- density of corpus
  - corpus density: lexical openness, degree of repetitiveness of n-grams, plus other significant factors

How can these factors be appropriately formalised? Measured? Combined?

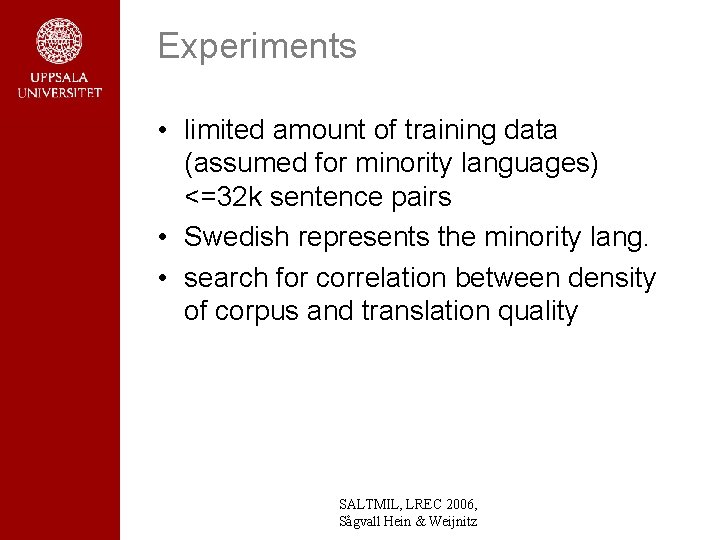

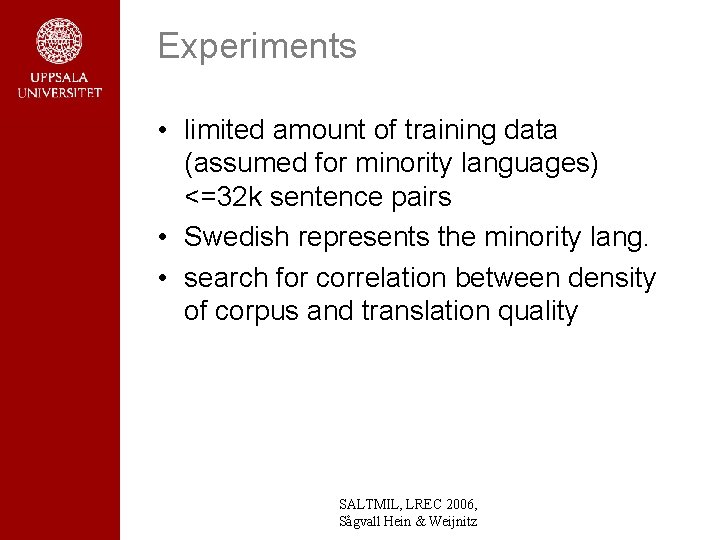

Experiments

- limited amount of training data (as assumed for minority languages): up to 32k sentence pairs
- Swedish represents the minority language
- search for a correlation between corpus density and translation quality
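
The detailed views below use training sizes that double from 1k to 32k sentence pairs. A common way to build such sets is to shuffle the corpus once and take nested prefixes, so that each smaller set is contained in every larger one. The slides do not say how the subsets were drawn; the function `nested_subsets`, the fixed seed and the nesting itself are assumptions made for this sketch.

```python
import random


def nested_subsets(pairs, sizes=(1000, 2000, 4000, 8000, 16000, 32000), seed=0):
    """Shuffle once and return {size: first `size` pairs}, so that every
    smaller training set is contained in every larger one."""
    if max(sizes) > len(pairs):
        raise ValueError("corpus is smaller than the largest requested size")
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)  # fixed seed for reproducibility
    return {n: shuffled[:n] for n in sizes}


# Hypothetical parallel corpus of (Swedish, English) sentence pairs.
corpus = [(f"sv sentence {i}", f"en sentence {i}") for i in range(40000)]
subsets = nested_subsets(corpus)
print({n: len(s) for n, s in subsets.items()})
```

Nesting keeps the comparison across sizes focused on the amount of data rather than on which sentences happen to be drawn.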

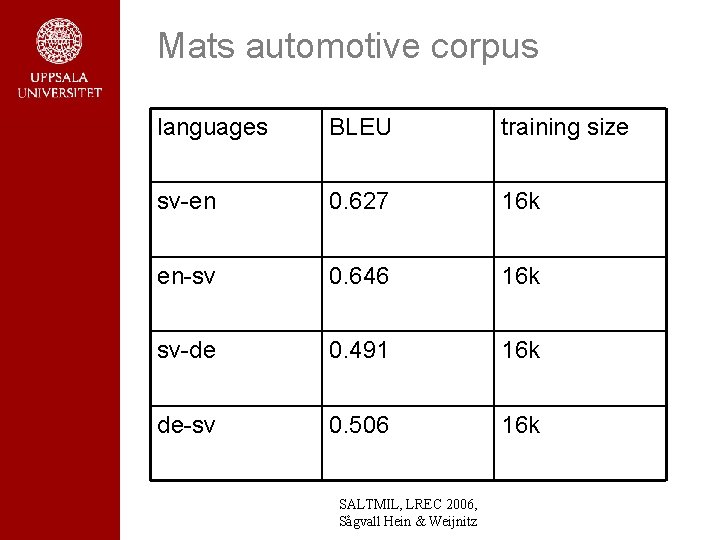

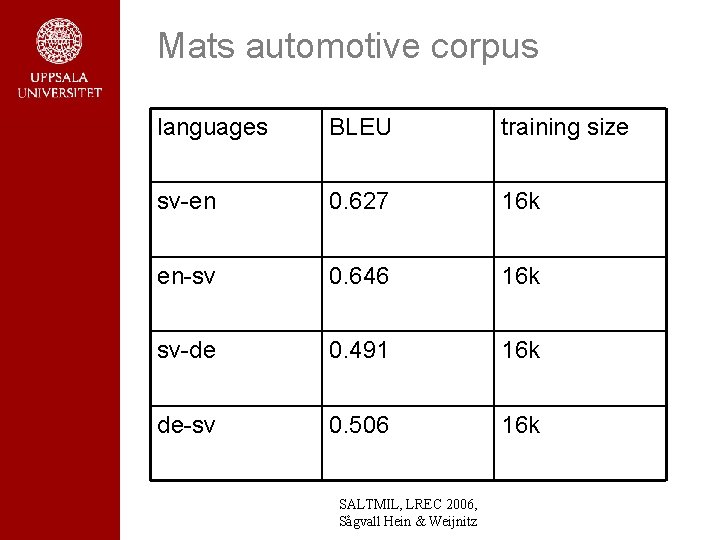

Mats automotive corpus

| Language pair | BLEU | Training size |
|---|---|---|
| sv-en | 0.627 | 16k |
| en-sv | 0.646 | 16k |
| sv-de | 0.491 | 16k |
| de-sv | 0.506 | 16k |

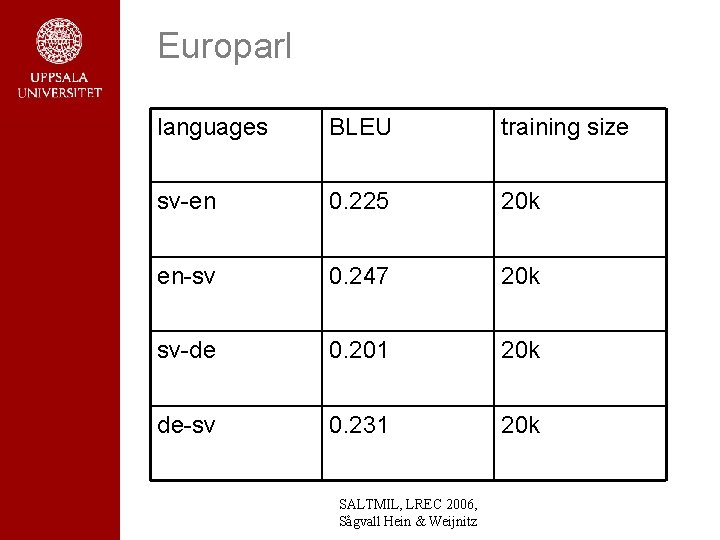

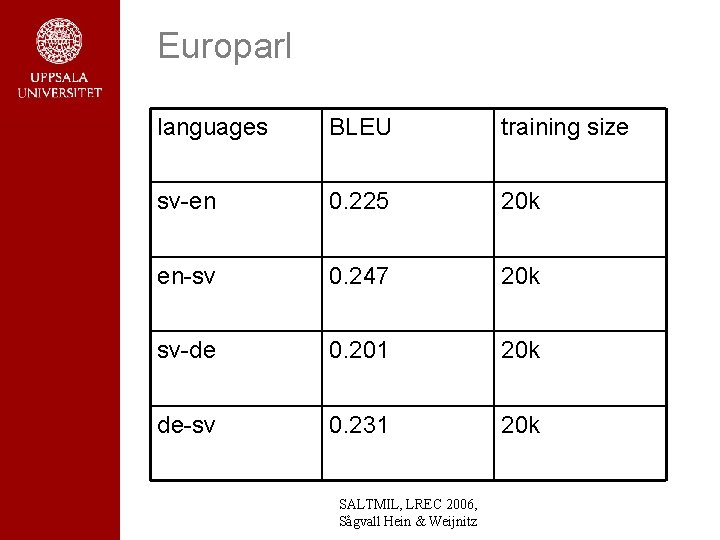

Europarl

| Language pair | BLEU | Training size |
|---|---|---|
| sv-en | 0.225 | 20k |
| en-sv | 0.247 | 20k |
| sv-de | 0.201 | 20k |
| de-sv | 0.231 | 20k |

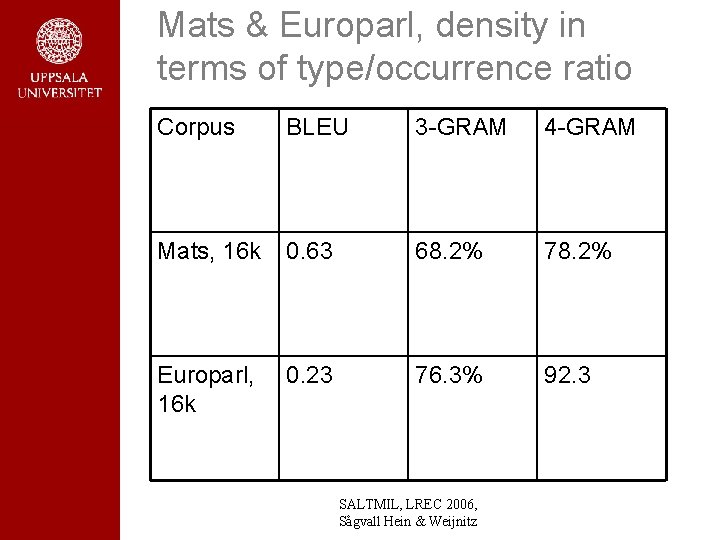

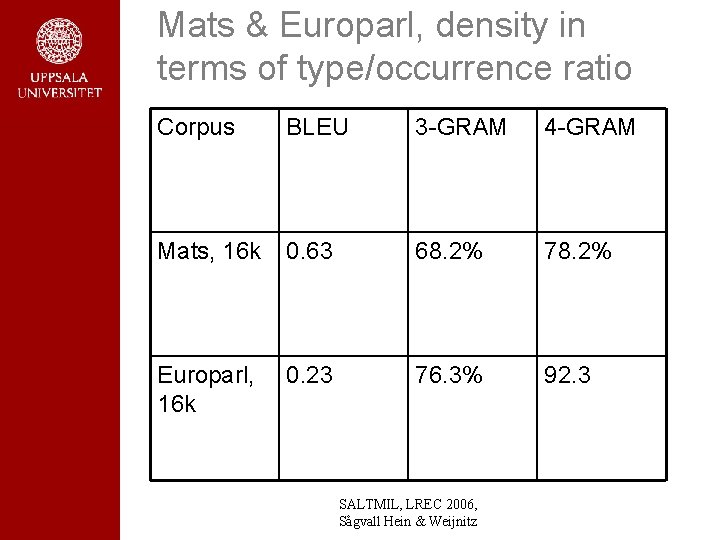

Mats & Europarl, density in terms of type/occurrence ratio

| Corpus | BLEU | 3-gram | 4-gram |
|---|---|---|---|
| Mats, 16k | 0.63 | 68.2% | 78.2% |
| Europarl, 16k | 0.23 | 76.3% | 92.3% |
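
The type/occurrence ratio can be read as the number of distinct n-grams divided by the total number of n-gram occurrences, so lower percentages mean more repetition: the denser Mats corpus (68.2% and 78.2%) goes with the higher BLEU. A minimal sketch, assuming whitespace tokenisation, n-grams counted within sentences only, and the source side of the corpus as input; the function name and example sentences are hypothetical.

```python
from collections import Counter


def type_occurrence_ratio(sentences: list[str], n: int) -> float:
    """Distinct n-gram types as a percentage of all n-gram occurrences.

    N-grams are collected within each sentence only. A lower value means the
    same n-grams recur more often, i.e. the corpus is denser.
    """
    counts: Counter = Counter()
    for sentence in sentences:
        tokens = sentence.split()
        counts.update(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    total = sum(counts.values())
    return 100.0 * len(counts) / total if total else 0.0


# Hypothetical Swedish source side of a tiny training corpus.
source = ["byt filtret varje år", "byt filtret vid behov", "kontrollera filtret varje år"]
for n in (3, 4):
    print(f"{n}-gram: {type_occurrence_ratio(source, n):.1f}%")
```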

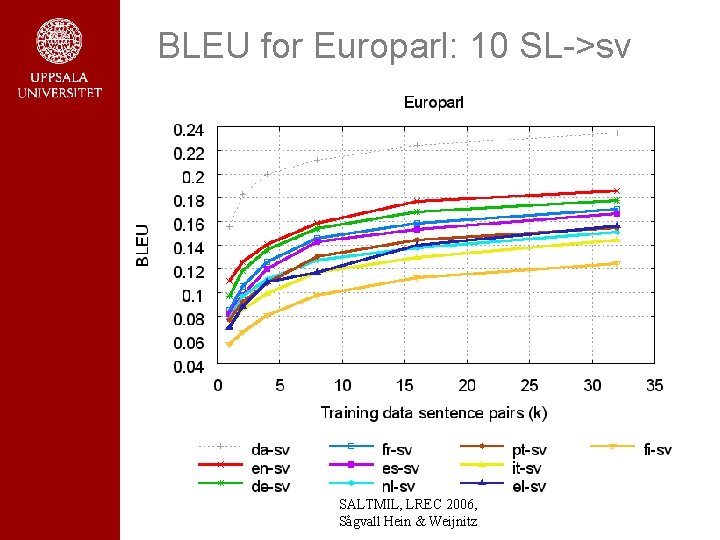

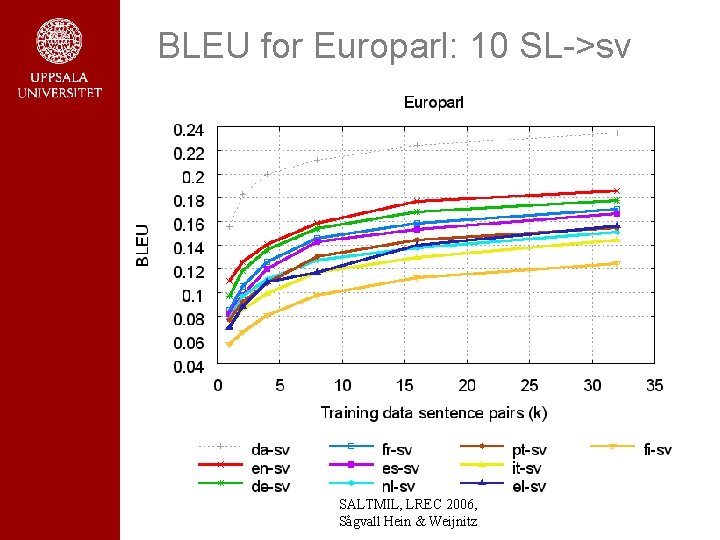

BLEU for Europarl: 10 source languages into Swedish (SL->sv)

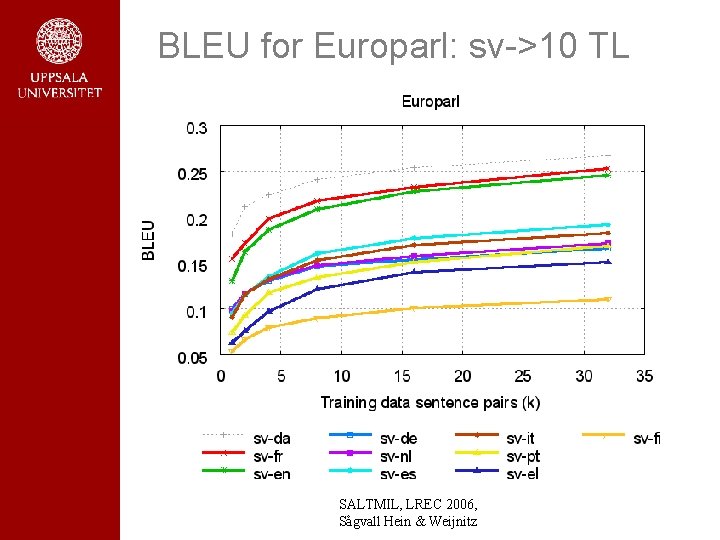

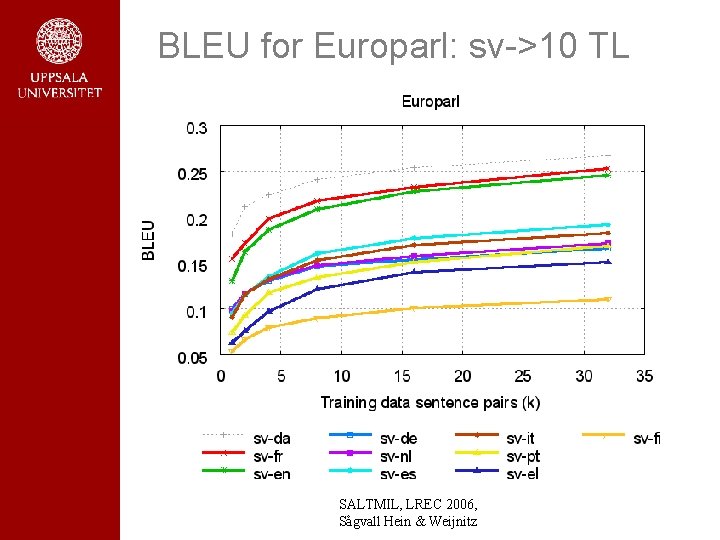

BLEU for Europarl: Swedish into 10 target languages (sv->TL)

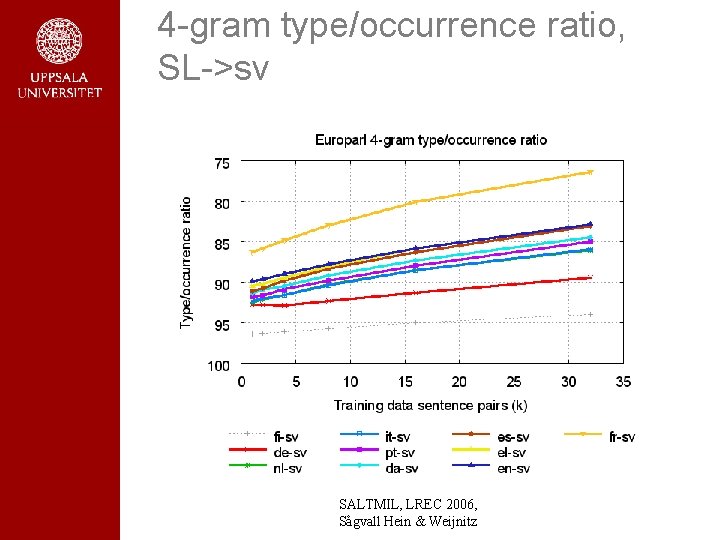

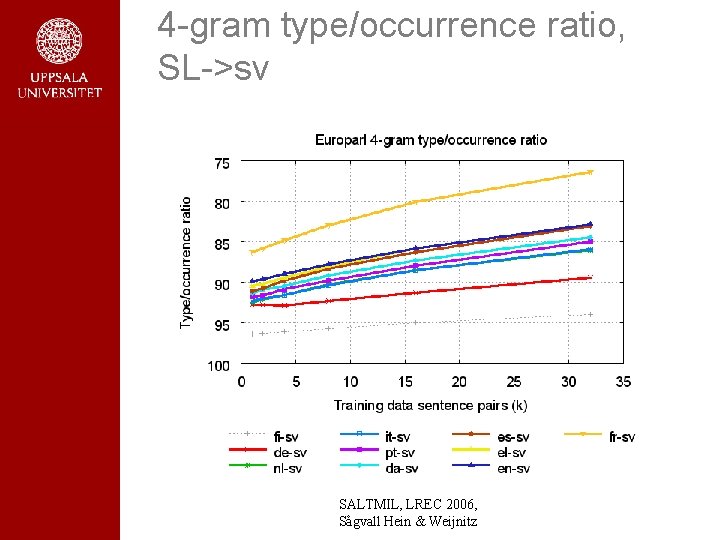

4-gram type/occurrence ratio, source languages into Swedish (SL->sv)

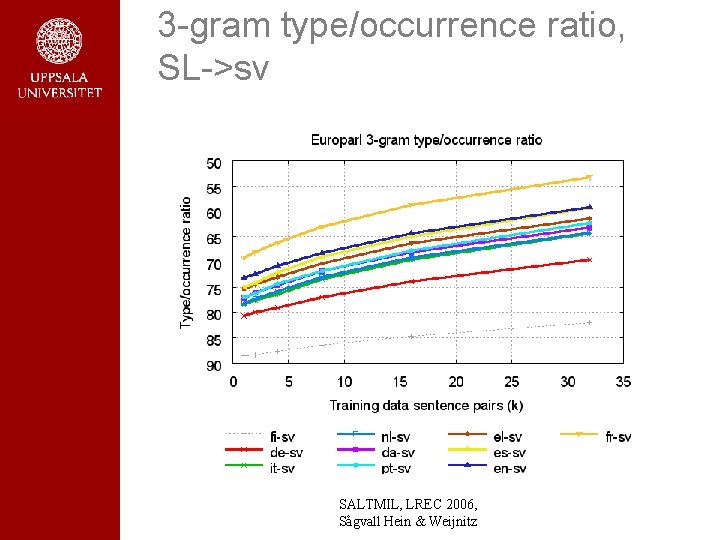

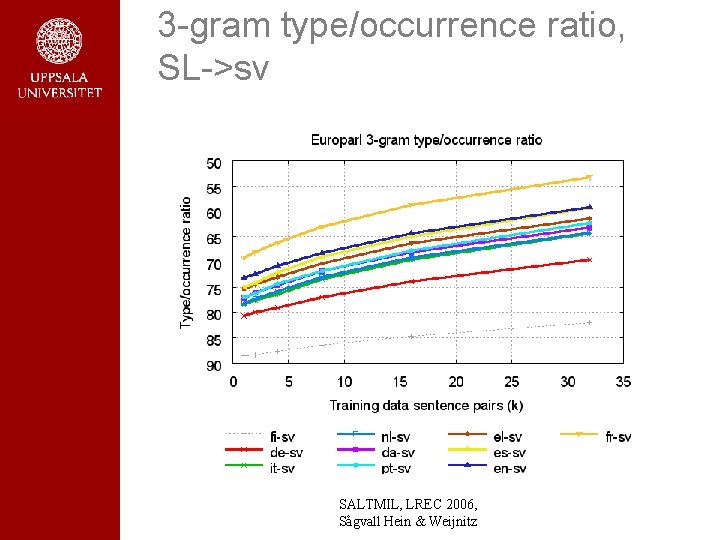

3-gram type/occurrence ratio, source languages into Swedish (SL->sv)

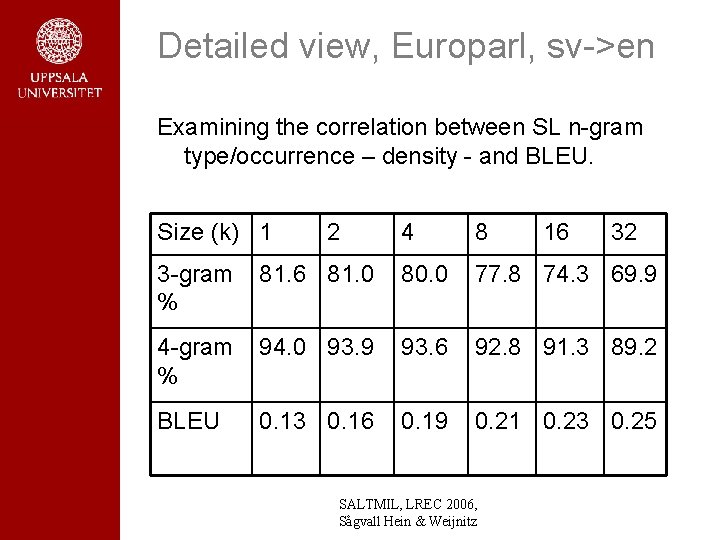

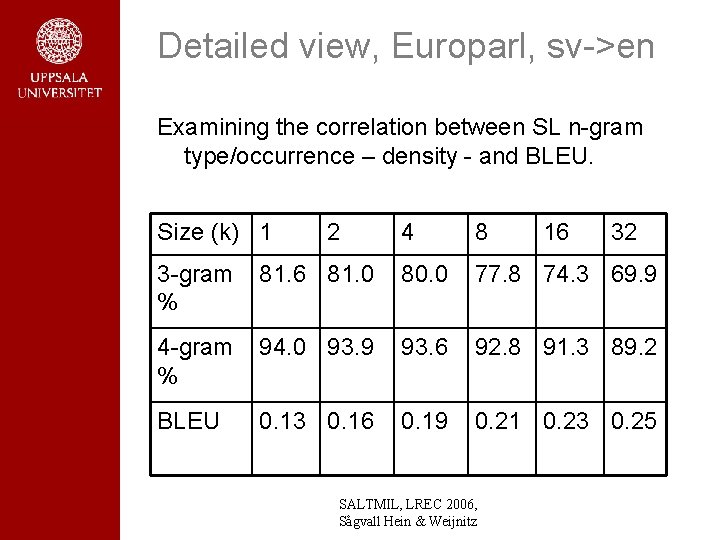

Detailed view, Europarl, sv->en

Examining the correlation between the SL n-gram type/occurrence ratio (density) and BLEU.

| Size (k) | 1 | 2 | 4 | 8 | 16 | 32 |
|---|---|---|---|---|---|---|
| 3-gram % | 81.6 | 81.0 | 80.0 | 77.8 | 74.3 | 69.9 |
| 4-gram % | 94.0 | 93.9 | 93.6 | 92.8 | 91.3 | 89.2 |
| BLEU | 0.13 | 0.16 | 0.19 | 0.21 | 0.23 | 0.25 |
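
To put a number on the correlation in the sv->en table, Pearson's r can be computed directly from its rows. The choice of Pearson's coefficient is an assumption here; the slides do not name a correlation measure. The values are copied from the table.

```python
import math

# Values from the sv->en detailed view (training sizes 1k to 32k).
TRIGRAM_PCT = [81.6, 81.0, 80.0, 77.8, 74.3, 69.9]
FOURGRAM_PCT = [94.0, 93.9, 93.6, 92.8, 91.3, 89.2]
BLEU = [0.13, 0.16, 0.19, 0.21, 0.23, 0.25]


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equally long series."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


print(f"3-gram vs BLEU: r = {pearson(TRIGRAM_PCT, BLEU):.2f}")
print(f"4-gram vs BLEU: r = {pearson(FOURGRAM_PCT, BLEU):.2f}")
```

Both coefficients come out strongly negative (around -0.9): lower type/occurrence ratios, i.e. denser source data, go with higher BLEU. With only six points, and with density and BLEU both changing as the training set grows, this alone does not separate the effect of density from that of size.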

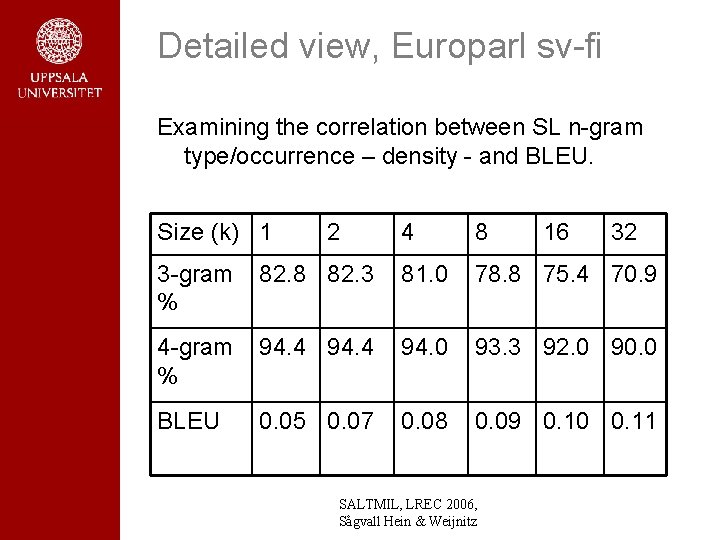

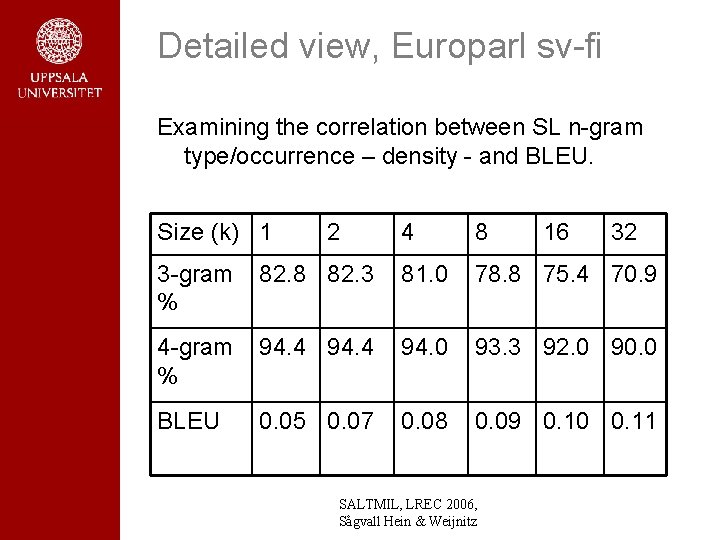

Detailed view, Europarl, sv-fi

Examining the correlation between the SL n-gram type/occurrence ratio (density) and BLEU.

| Size (k) | 1 | 2 | 4 | 8 | 16 | 32 |
|---|---|---|---|---|---|---|
| 3-gram % | 82.8 | 82.3 | 81.0 | 78.8 | 75.4 | 70.9 |
| BLEU | 0.05 | 0.07 | 0.08 | 0.09 | 0.10 | 0.11 |

4-gram %: 94.4, 94.0, 93.3, 92.0, 90.0 (only five values are given for the six sizes)

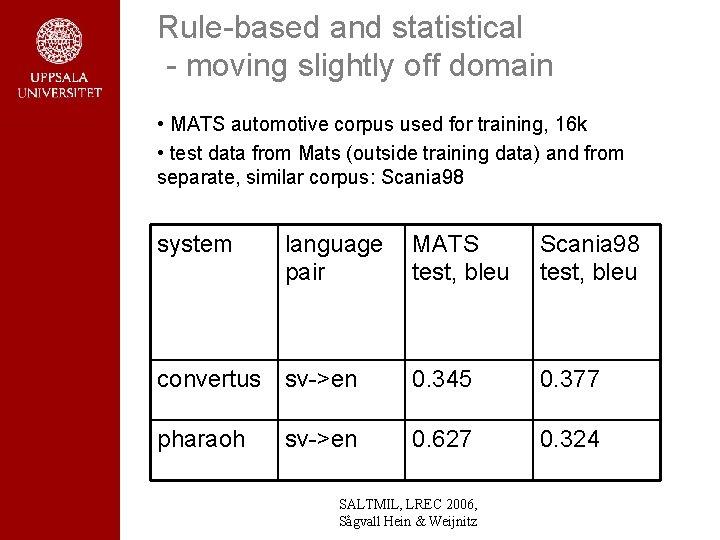

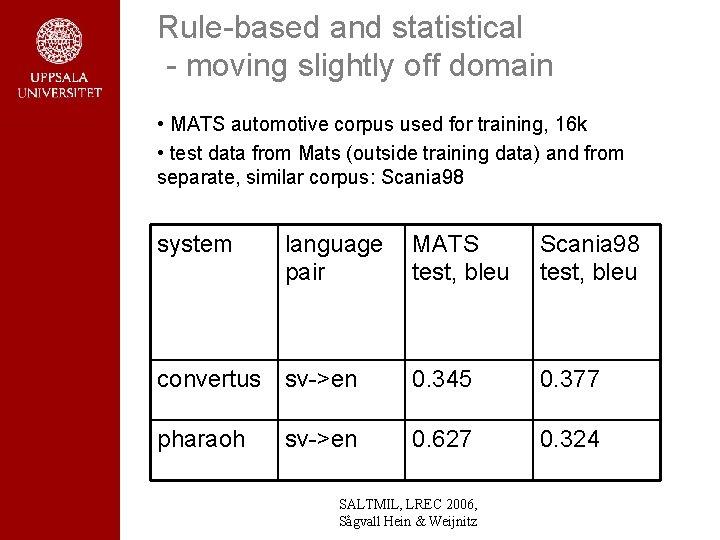

Rule-based and statistical: moving slightly off domain

- Mats automotive corpus used for training, 16k
- test data from Mats (outside the training data) and from a separate, similar corpus: Scania 98

| System | Language pair | Mats test, BLEU | Scania 98 test, BLEU |
|---|---|---|---|
| Convertus | sv->en | 0.345 | 0.377 |
| Pharaoh | sv->en | 0.627 | 0.324 |

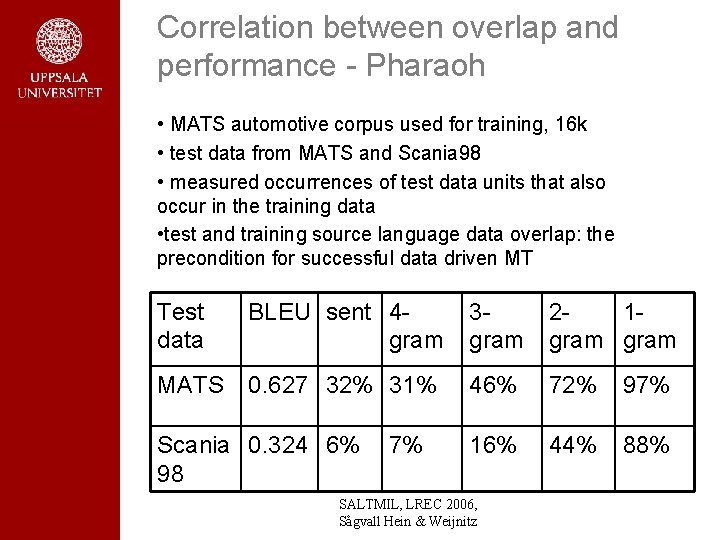

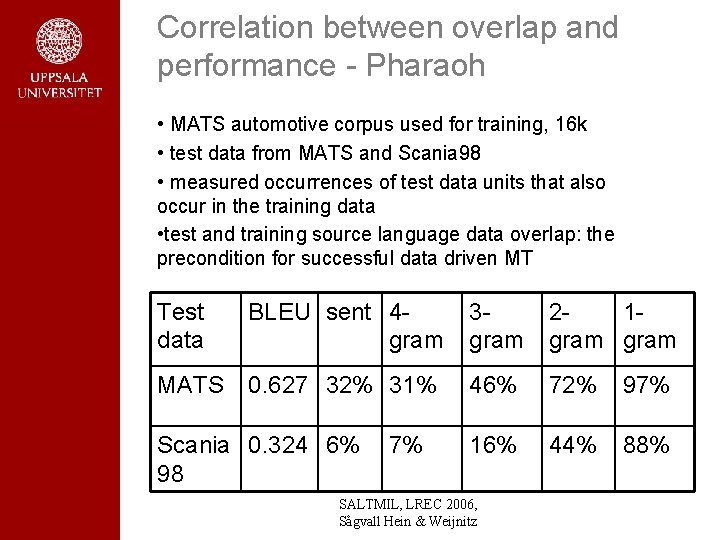

Correlation between overlap and performance: Pharaoh

- Mats automotive corpus used for training, 16k
- test data from Mats and Scania 98
- measured occurrences of test data units that also occur in the training data
- overlap between test and training source-language data is the precondition for successful data-driven MT

| Test data | BLEU | Sentence | 4-gram | 3-gram | 2-gram | 1-gram |
|---|---|---|---|---|---|---|
| Mats | 0.627 | 32% | 31% | 46% | 72% | 97% |
| Scania 98 | 0.324 | 6% | 7% | 16% | 44% | 88% |
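
The slides say only that occurrences of test-data units were looked up in the training data. One plausible way to operationalise this, assumed here, is the share of test sentences found verbatim in the training source side, plus, for each n, the share of test n-gram occurrences whose n-gram also occurs in training. The function names and the toy sentences are hypothetical.

```python
def ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def overlap_profile(train_src: list[str], test_src: list[str], max_n: int = 4) -> dict:
    """Percentage of test-data units that also occur in the training data.

    'sent' counts whole test sentences found verbatim in training; for n-grams,
    every test occurrence is checked against the set of training n-gram types.
    """
    train_sents = set(train_src)
    profile = {"sent": 100.0 * sum(s in train_sents for s in test_src) / len(test_src)}
    for n in range(max_n, 0, -1):
        train_grams = {g for s in train_src for g in ngrams(s.split(), n)}
        test_grams = [g for s in test_src for g in ngrams(s.split(), n)]
        hits = sum(g in train_grams for g in test_grams)
        profile[f"{n}-gram"] = 100.0 * hits / len(test_grams) if test_grams else 0.0
    return profile


# Hypothetical Swedish source sentences.
train = ["byt filtret varje år", "kontrollera oljenivån"]
test = ["byt filtret varje år", "byt oljan varje år"]
print(overlap_profile(train, test))
```

As in the table, overlap typically rises from whole sentences to single words, since shorter units are more likely to recur.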

Summary

- development of Convertus, a robust transfer-based system equipped with language resources for sv-en translation in several domains
- BLEU measures of SMT using publicly available software (Pharaoh) and Europarl
  - 10 languages, two translation directions, and training intervals of 5k sentence pairs up to 32k
  - data on the density of Europarl in terms of overlaps
- comparing RBMT and SMT using Convertus and Pharaoh
- searching for a formal way of quantifying how well a corpus will work for SMT, starting with the density of the source language

Concluding remarks

- building a rule-based system from scratch is a major undertaking; customizing existing software is better
- SMT systems can be built fairly easily using publicly available software, though with restrictions on commercial use
- factors influencing quality in SMT
  - size of training corpus
  - density of the source side of the training corpus
  - language differences and translation direction
- other important factors (future work)
  - quality of training corpus, alignment quality, …

Concluding remarks (cont.)

- SMT versus RBMT
  - SMT seems more sensitive to density than RBMT
  - error analysis and correction can be linguistically controlled in RBMT, as opposed to SMT