
Applied Business Statistics, 7th ed., by Ken Black. Chapter 12: Analysis of Categorical Data. Copyright 2011 John Wiley & Sons, Inc.

Learning Objectives

- Explain the purpose of regression analysis and the meaning of independent versus dependent variables.
- Compute the equation of a simple regression line from a sample of data, and interpret the slope and intercept of the equation.
- Estimate values of Y to forecast outcomes using the regression model.
- Understand residual analysis in testing the assumptions and in examining the fit underlying the regression line.
- Compute a standard error of the estimate and interpret its meaning.
- Compute a coefficient of determination and interpret it.
- Test hypotheses about the slope of the regression model and interpret the results.

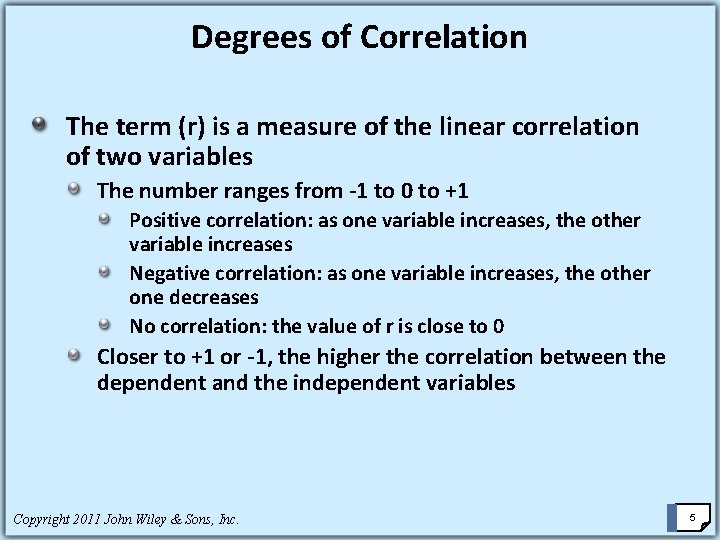

Correlation is a measure of the degree of relatedness of variables. The coefficient of correlation (r) is applicable only if both variables being analyzed have at least an interval level of data.

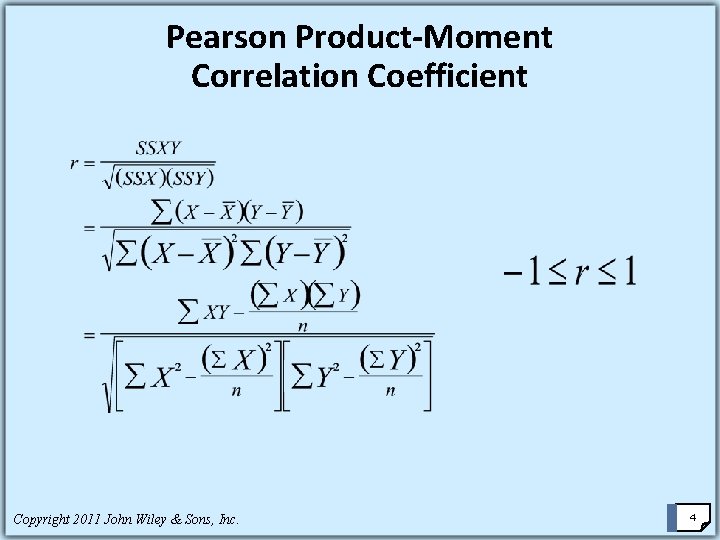

Pearson Product-Moment Correlation Coefficient

Degrees of Correlation

- The term r is a measure of the linear correlation of two variables.
- Its value ranges from -1 through 0 to +1.
- Positive correlation: as one variable increases, the other variable increases.
- Negative correlation: as one variable increases, the other variable decreases.
- No correlation: the value of r is close to 0.
- The closer r is to +1 or -1, the stronger the linear correlation between the dependent and the independent variables.

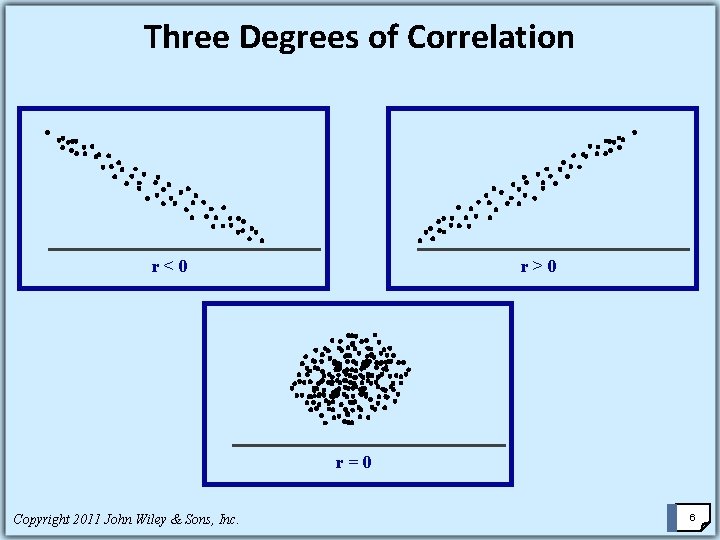

Three Degrees of Correlation: r < 0, r > 0, r = 0

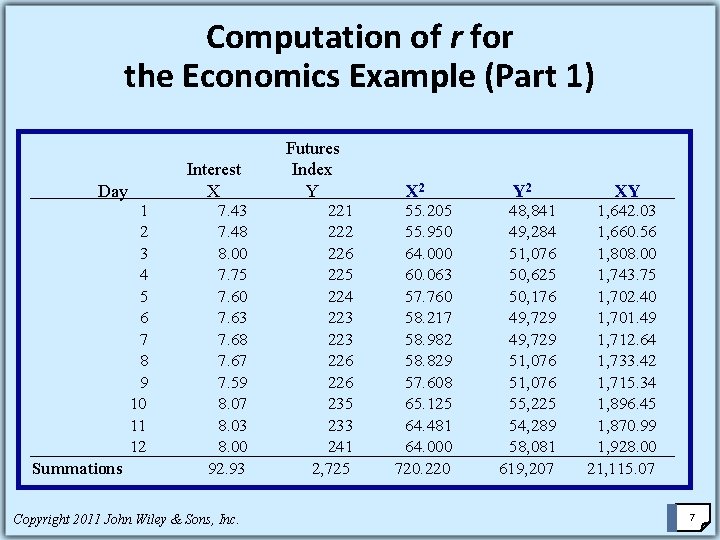

Computation of r for the Economics Example (Part 1)

| Day | Interest X | Futures Index Y | X² | Y² | XY |
|---|---|---|---|---|---|
| 1 | 7.43 | 221 | 55.205 | 48,841 | 1,642.03 |
| 2 | 7.48 | 222 | 55.950 | 49,284 | 1,660.56 |
| 3 | 8.00 | 226 | 64.000 | 51,076 | 1,808.00 |
| 4 | 7.75 | 225 | 60.063 | 50,625 | 1,743.75 |
| 5 | 7.60 | 224 | 57.760 | 50,176 | 1,702.40 |
| 6 | 7.63 | 223 | 58.217 | 49,729 | 1,701.49 |
| 7 | 7.68 | 223 | 58.982 | 49,729 | 1,712.64 |
| 8 | 7.67 | 226 | 58.829 | 51,076 | 1,733.42 |
| 9 | 7.59 | 226 | 57.608 | 51,076 | 1,715.34 |
| 10 | 8.07 | 235 | 65.125 | 55,225 | 1,896.45 |
| 11 | 8.03 | 233 | 64.481 | 54,289 | 1,870.99 |
| 12 | 8.00 | 241 | 64.000 | 58,081 | 1,928.00 |
| Summations | 92.93 | 2,725 | 720.220 | 619,207 | 21,115.07 |

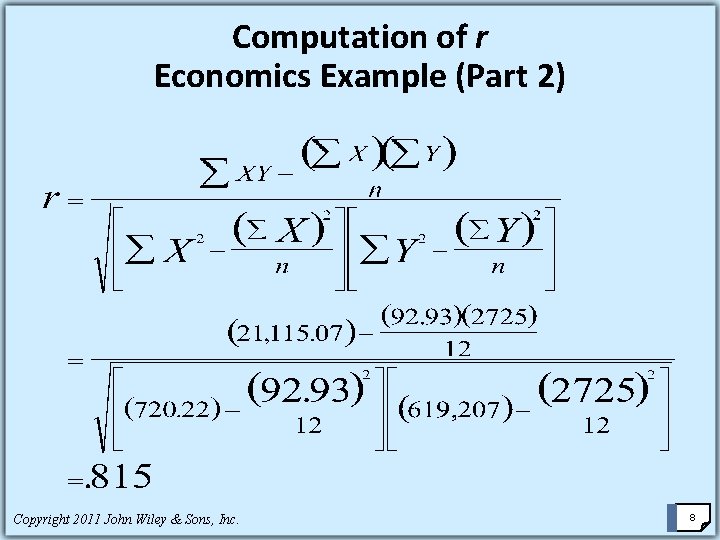

Computation of r for the Economics Example (Part 2)

Computation of r for the Economics Example (Part 2): Is r = 0.815 high or low? What can we conclude about the variables of interest?
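The computation of r for the economics example can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the function name `pearson_r` is hypothetical, and the formula used is the standard sums-of-squares form of the Pearson product-moment coefficient applied to the Part 1 data.

```python
from math import sqrt

# Interest rate (X) and futures index (Y) for the 12 days in Part 1.
x = [7.43, 7.48, 8.00, 7.75, 7.60, 7.63, 7.68, 7.67, 7.59, 8.07, 8.03, 8.00]
y = [221, 222, 226, 225, 224, 223, 223, 226, 226, 235, 233, 241]


def pearson_r(x, y):
    """Pearson r = SSxy / sqrt(SSxx * SSyy), using the column summations."""
    n = len(x)
    ss_xy = sum(a * b for a, b in zip(x, y)) - sum(x) * sum(y) / n
    ss_xx = sum(a * a for a in x) - sum(x) ** 2 / n
    ss_yy = sum(b * b for b in y) - sum(y) ** 2 / n
    return ss_xy / sqrt(ss_xx * ss_yy)


r = pearson_r(x, y)
print(f"r = {r:.3f}")
```

The result agrees with the r = 0.815 quoted on the slide, which indicates a fairly strong positive linear relationship between the interest rate and the futures index.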

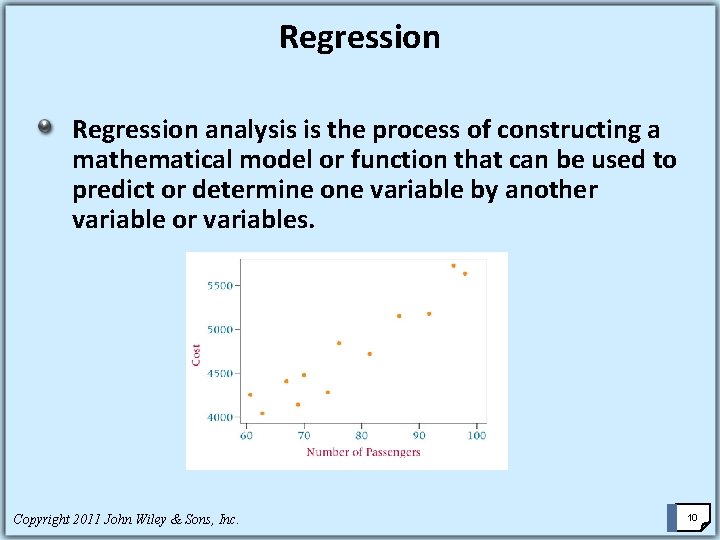

Regression analysis is the process of constructing a mathematical model or function that can be used to predict or determine one variable by another variable or variables.

Simple Regression Analysis

- Bivariate (two-variable) linear regression is the most elementary regression model.
- Dependent variable: the variable to be predicted, usually called Y.
- Independent variable: the predictor or explanatory variable, usually called X.
- Usually the first step in this analysis is to construct a scatter plot of the data.
- Nonlinear relationships and regression models with more than one independent variable can be explored by using multiple regression models.

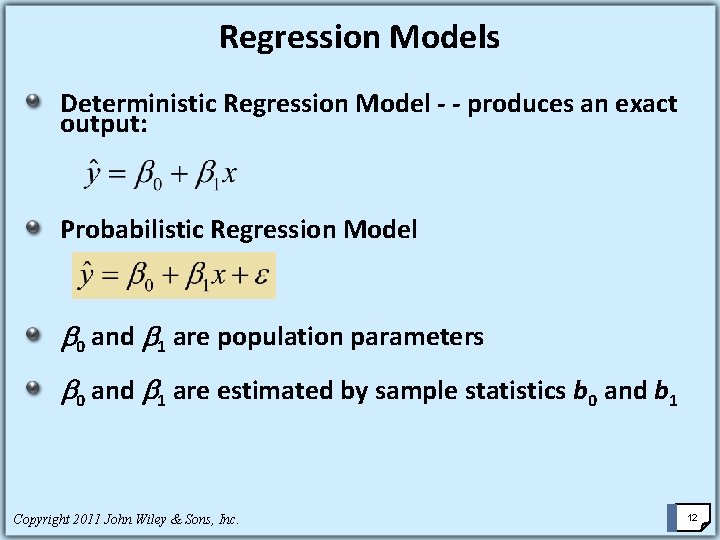

Regression Models

- Deterministic regression model, which produces an exact output: $y = \beta_0 + \beta_1 x$
- Probabilistic regression model, which adds an error term: $y = \beta_0 + \beta_1 x + \epsilon$
- $\beta_0$ and $\beta_1$ are population parameters.
- $\beta_0$ and $\beta_1$ are estimated by the sample statistics $b_0$ and $b_1$.

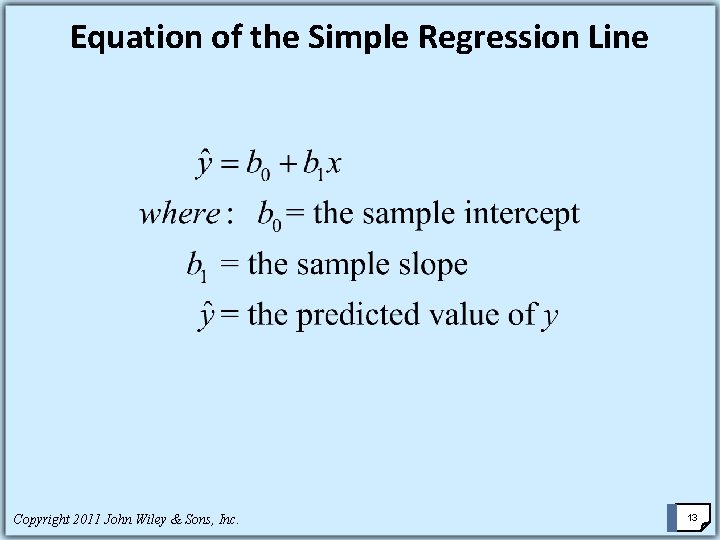

Equation of the Simple Regression Line

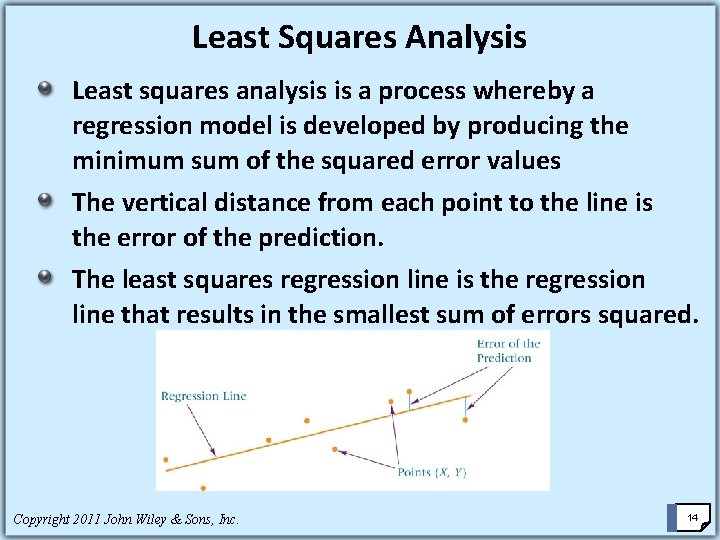

Least Squares Analysis

- Least squares analysis is a process whereby a regression model is developed by producing the minimum sum of the squared error values.
- The vertical distance from each point to the line is the error of the prediction.
- The least squares regression line is the regression line that results in the smallest sum of errors squared.

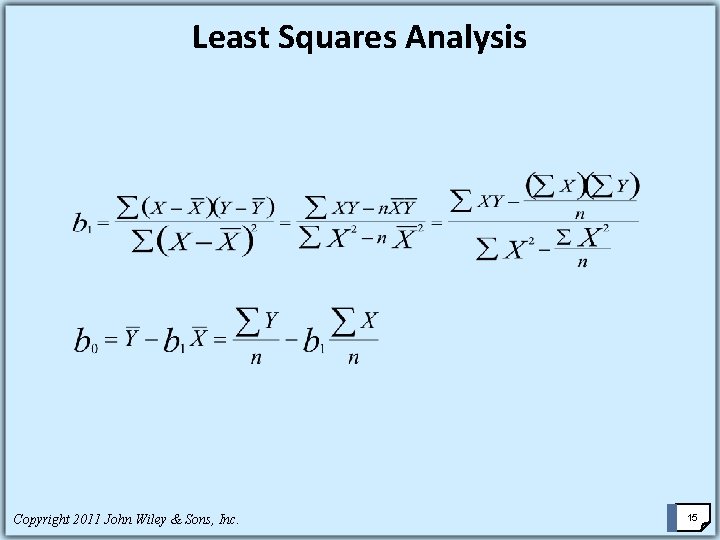

Least Squares Analysis

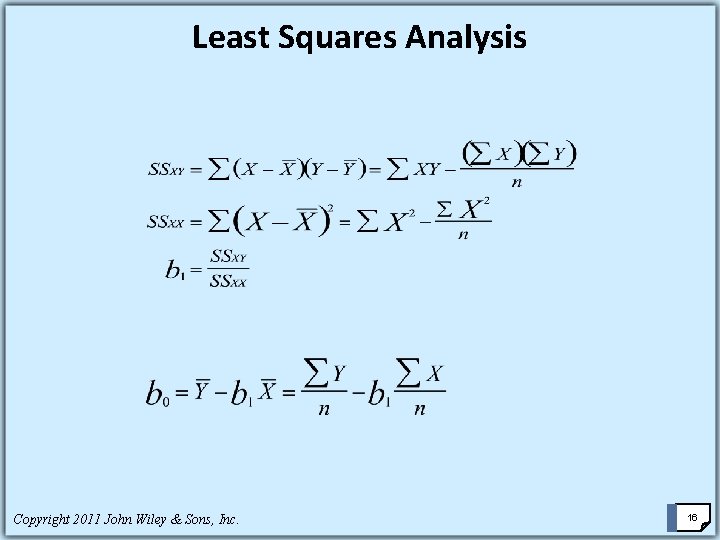

Least Squares Analysis

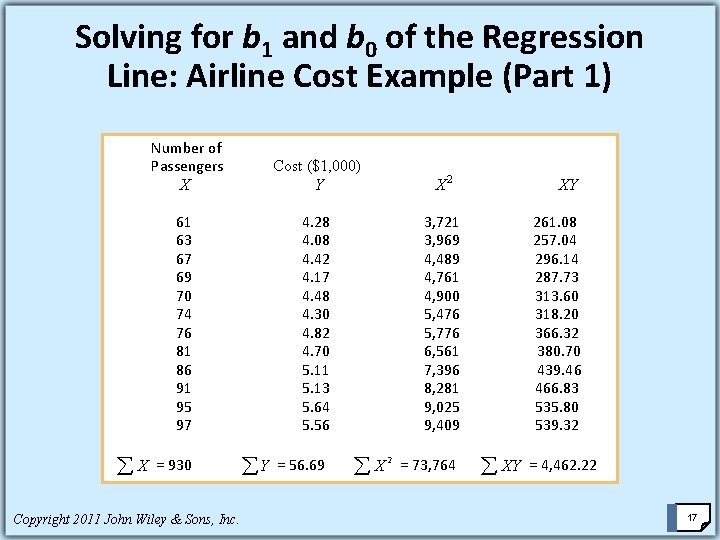

Solving for b1 and b0 of the Regression Line: Airline Cost Example (Part 1)

| Number of Passengers X | Cost ($1,000) Y | X² | XY |
|---|---|---|---|
| 61 | 4.28 | 3,721 | 261.08 |
| 63 | 4.08 | 3,969 | 257.04 |
| 67 | 4.42 | 4,489 | 296.14 |
| 69 | 4.17 | 4,761 | 287.73 |
| 70 | 4.48 | 4,900 | 313.60 |
| 74 | 4.30 | 5,476 | 318.20 |
| 76 | 4.82 | 5,776 | 366.32 |
| 81 | 4.70 | 6,561 | 380.70 |
| 86 | 5.11 | 7,396 | 439.46 |
| 91 | 5.13 | 8,281 | 466.83 |
| 95 | 5.64 | 9,025 | 535.80 |
| 97 | 5.56 | 9,409 | 539.32 |
| ΣX = 930 | ΣY = 56.69 | ΣX² = 73,764 | ΣXY = 4,462.22 |

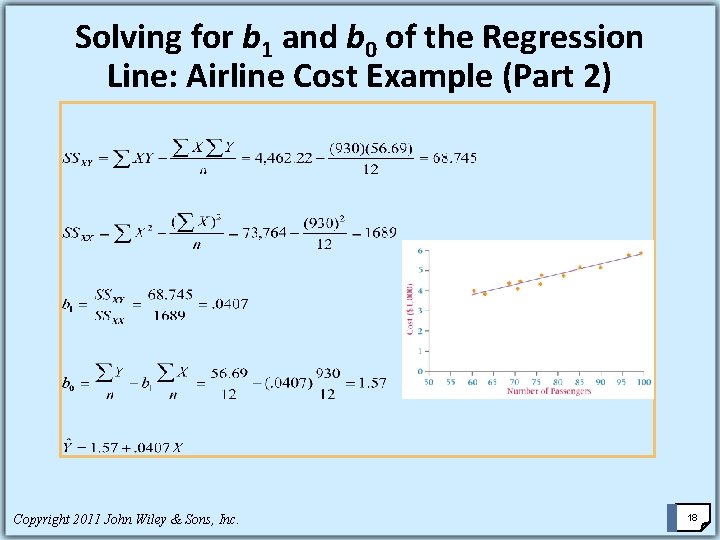

Solving for b1 and b0 of the Regression Line: Airline Cost Example (Part 2)
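The slope and intercept for the airline cost data can be reproduced with the standard least squares formulas b1 = SSxy / SSxx and b0 = ȳ - b1·x̄. The sketch below is illustrative only; the function name `least_squares` is an assumption and does not come from the slides.

```python
# Airline cost data from Part 1: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]


def least_squares(x, y):
    """Return (b0, b1) for the line y_hat = b0 + b1 * x."""
    n = len(x)
    ss_xy = sum(a * b for a, b in zip(x, y)) - sum(x) * sum(y) / n
    ss_xx = sum(a * a for a in x) - sum(x) ** 2 / n
    b1 = ss_xy / ss_xx                  # slope
    b0 = sum(y) / n - b1 * sum(x) / n   # intercept
    return b0, b1


b0, b1 = least_squares(x, y)
print(f"y_hat = {b0:.4f} + {b1:.4f} x")
```

The coefficients match the Excel summary output (intercept 1.5698, slope 0.0407): each additional passenger adds roughly $40.70 to the cost of the flight.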

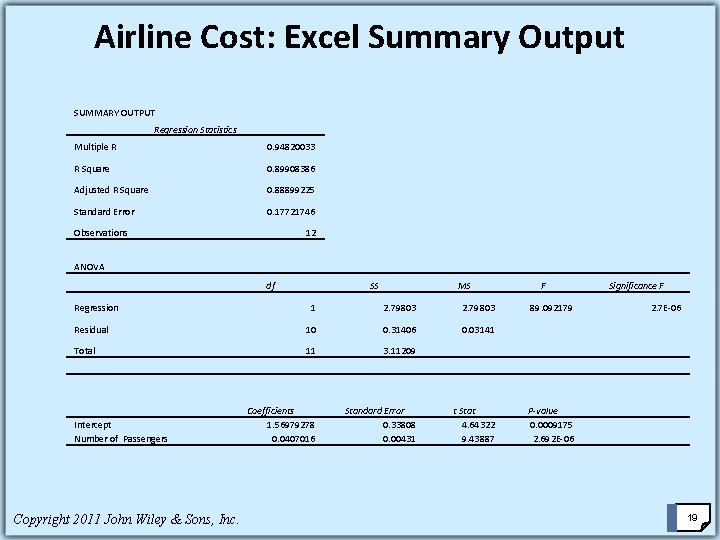

Airline Cost: Excel Summary Output

SUMMARY OUTPUT

| Regression Statistics | |
|---|---|
| Multiple R | 0.94820033 |
| R Square | 0.89908386 |
| Adjusted R Square | 0.88899225 |
| Standard Error | 0.17721746 |
| Observations | 12 |

ANOVA

| | df | SS | MS | F | Significance F |
|---|---|---|---|---|---|
| Regression | 1 | 2.79803 | 2.79803 | 89.092179 | 2.7E-06 |
| Residual | 10 | 0.31406 | 0.03141 | | |
| Total | 11 | 3.11209 | | | |

| | Coefficients | Standard Error | t Stat | P-value |
|---|---|---|---|---|
| Intercept | 1.56979278 | 0.33808 | 4.64322 | 0.0009175 |
| Number of Passengers | 0.0407016 | 0.00431 | 9.43887 | 2.692E-06 |

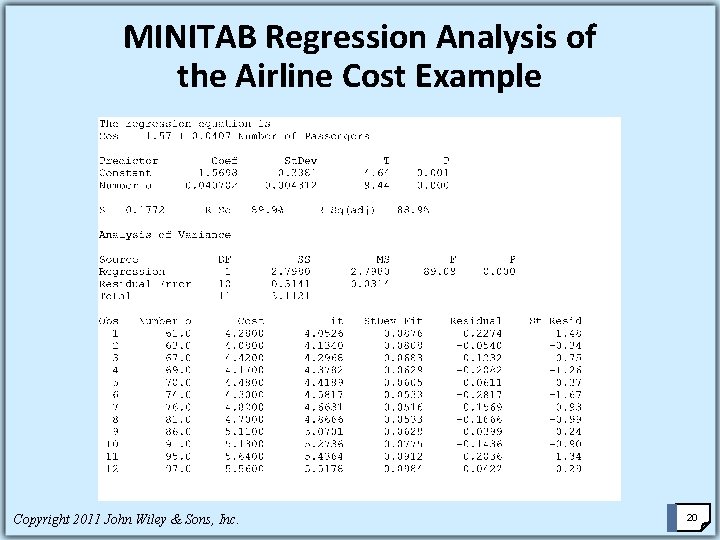

MINITAB Regression Analysis of the Airline Cost Example

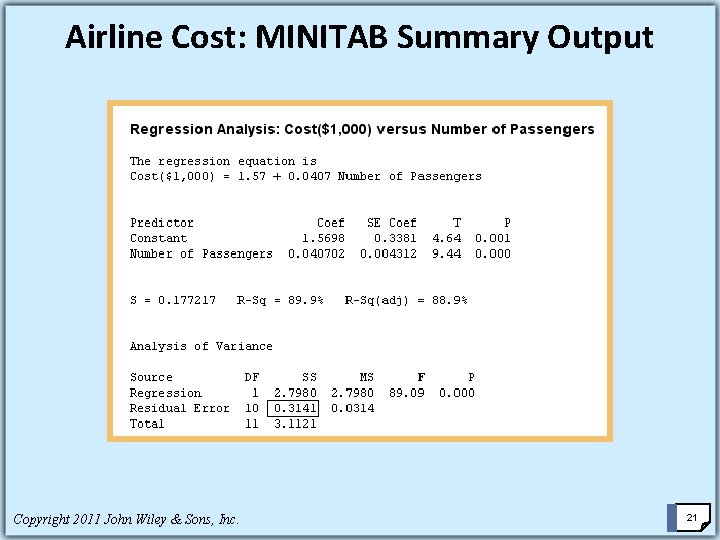

Airline Cost: MINITAB Summary Output

Residual Analysis

A residual is the difference between the actual y value and the predicted value ŷ. It reflects the error of the regression line at a given point.

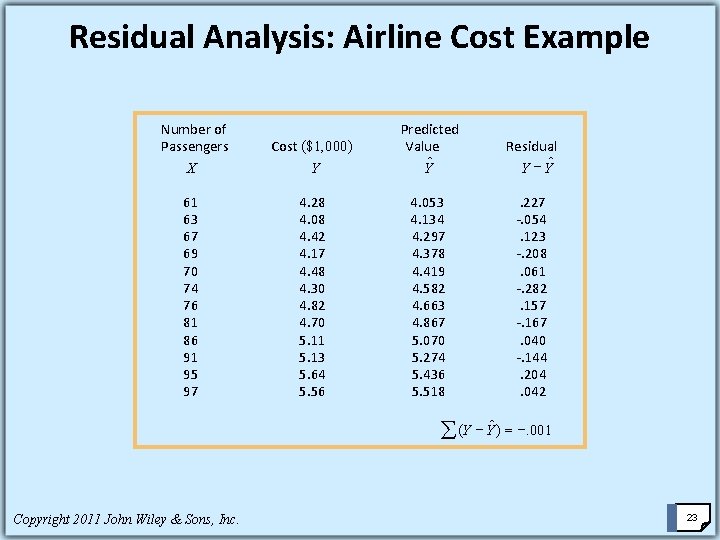

Residual Analysis: Airline Cost Example

| Number of Passengers X | Cost ($1,000) Y | Predicted Value Ŷ | Residual Y - Ŷ |
|---|---|---|---|
| 61 | 4.28 | 4.053 | .227 |
| 63 | 4.08 | 4.134 | -.054 |
| 67 | 4.42 | 4.297 | .123 |
| 69 | 4.17 | 4.378 | -.208 |
| 70 | 4.48 | 4.419 | .061 |
| 74 | 4.30 | 4.582 | -.282 |
| 76 | 4.82 | 4.663 | .157 |
| 81 | 4.70 | 4.867 | -.167 |
| 86 | 5.11 | 5.070 | .040 |
| 91 | 5.13 | 5.274 | -.144 |
| 95 | 5.64 | 5.436 | .204 |
| 97 | 5.56 | 5.518 | .042 |
| | | | Σ(Y - Ŷ) = -.001 |
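The residual column can be recomputed as follows. This sketch assumes the rounded regression equation ŷ = 1.57 + 0.0407x, which is what the predicted values in the table imply (for example, 1.57 + 0.0407 × 61 ≈ 4.053); the constant names `B0` and `B1` are illustrative.

```python
# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

# Assumption: rounded coefficients, as implied by the slide's predicted values.
B0, B1 = 1.57, 0.0407

predicted = [B0 + B1 * xi for xi in x]
residuals = [yi - yh for yi, yh in zip(y, predicted)]

for xi, yi, yh, e in zip(x, y, predicted, residuals):
    print(f"{xi:3d}  {yi:.2f}  {yh:.3f}  {e:+.3f}")
print(f"sum of residuals = {sum(residuals):+.3f}")
```

With unrounded least squares coefficients the residuals sum to zero; the -.001 total in the table is a by-product of rounding the slope and intercept.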

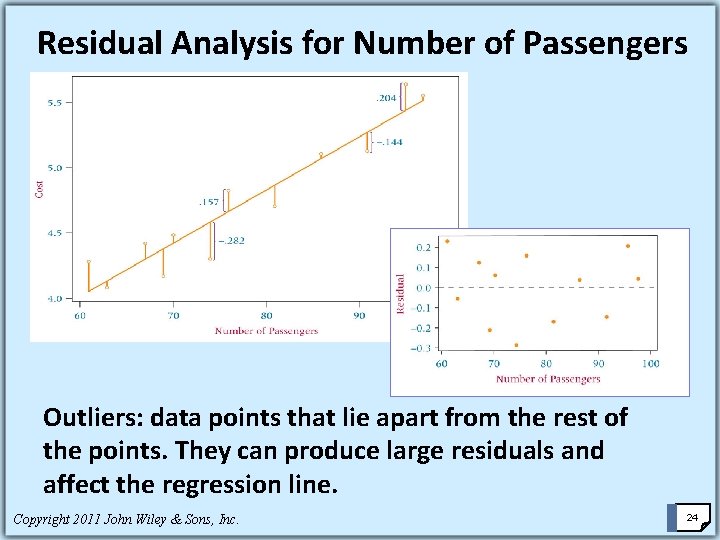

Residual Analysis for Number of Passengers

Outliers are data points that lie apart from the rest of the points. They can produce large residuals and affect the regression line.

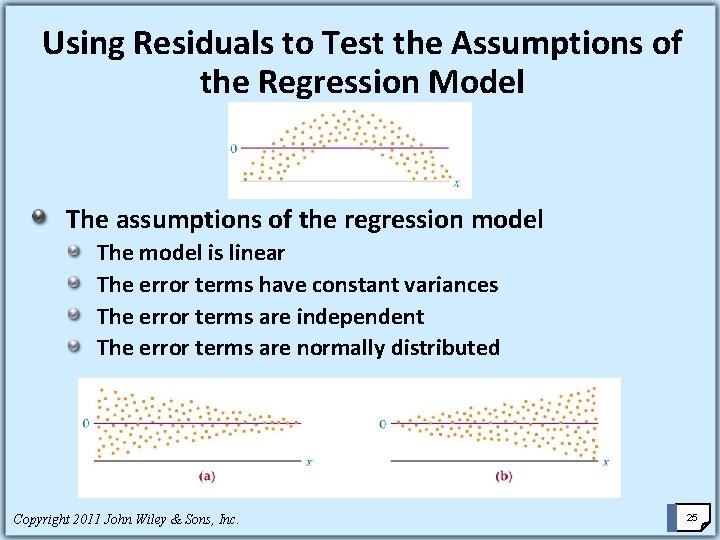

Using Residuals to Test the Assumptions of the Regression Model

The assumptions of the regression model:

- The model is linear.
- The error terms have constant variances.
- The error terms are independent.
- The error terms are normally distributed.

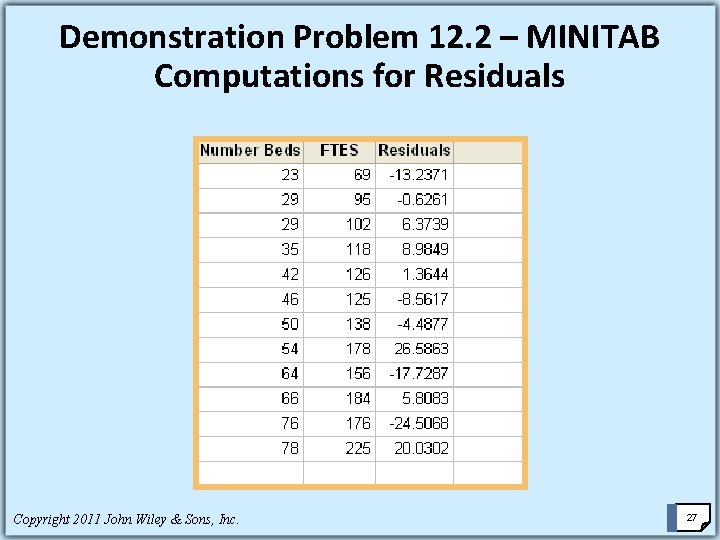

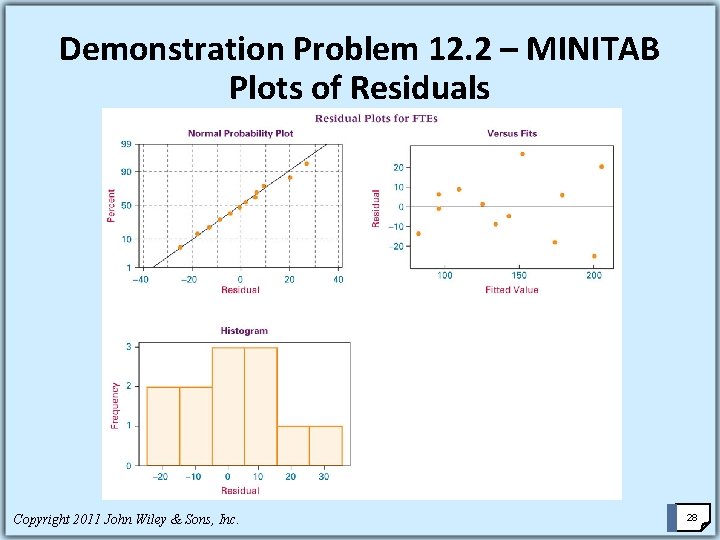

Demonstration Problem 12.2

Compute the residuals for Demonstration Problem 12.1, in which a regression model was developed to predict the number of full-time equivalent workers (FTEs) by the number of beds in a hospital. Analyze the residuals by using MINITAB graphic diagnostics.

Demonstration Problem 12.2: MINITAB Computations for Residuals

Demonstration Problem 12.2: MINITAB Plots of Residuals

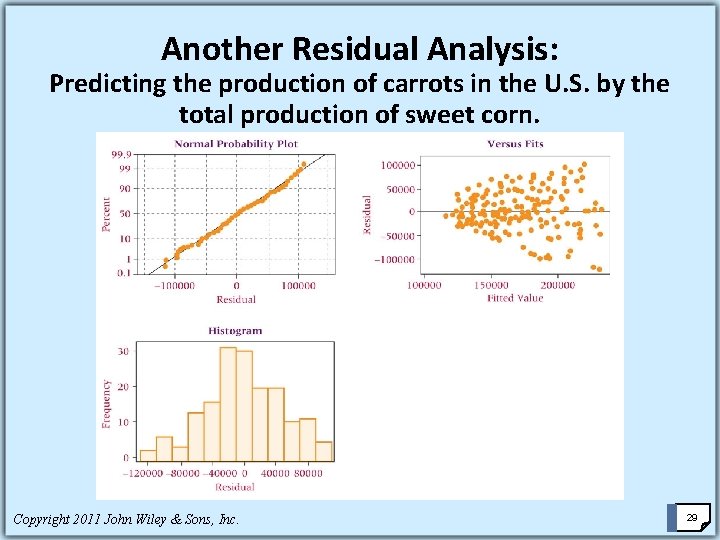

Another Residual Analysis: Predicting the production of carrots in the U.S. by the total production of sweet corn.

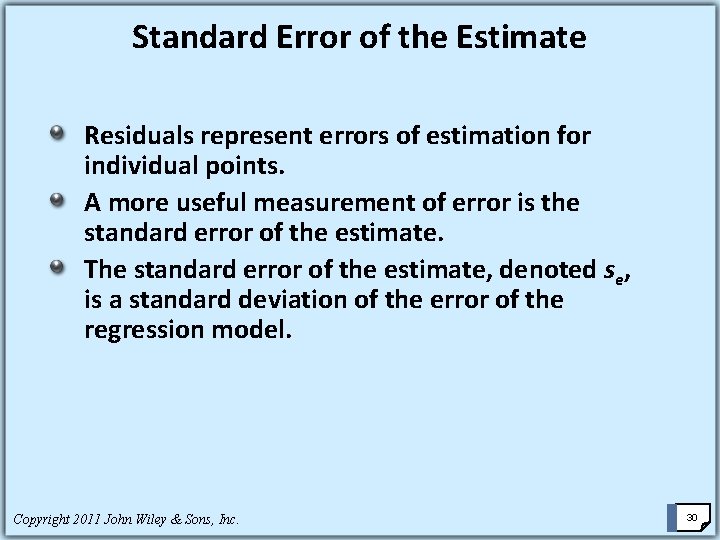

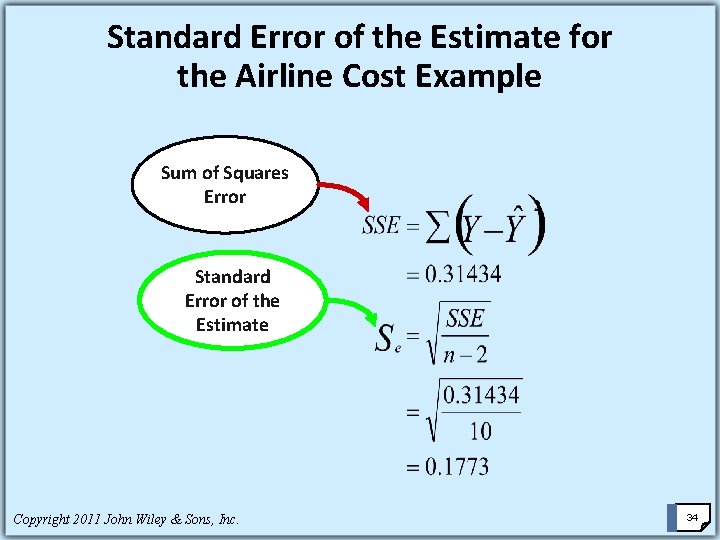

Standard Error of the Estimate

- Residuals represent errors of estimation for individual points.
- A more useful measurement of error is the standard error of the estimate.
- The standard error of the estimate, denoted $s_e$, is a standard deviation of the error of the regression model.

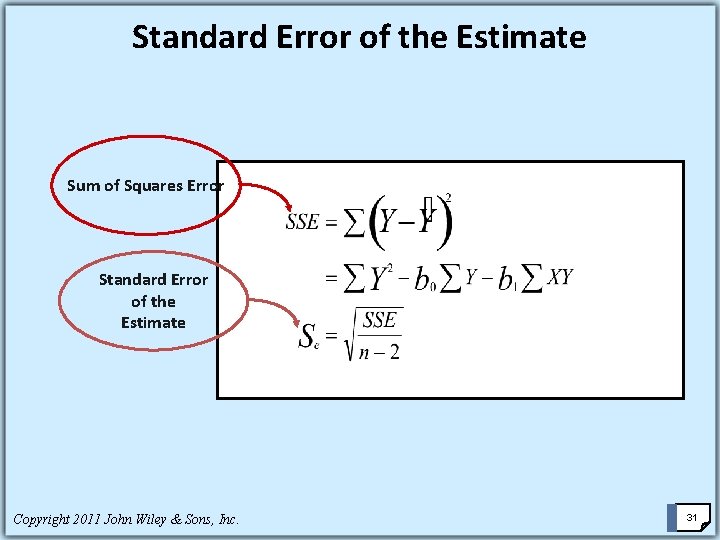

Standard Error of the Estimate

- Sum of squares error: $SSE = \sum (Y - \hat{Y})^2$
- Standard error of the estimate: $s_e = \sqrt{\dfrac{SSE}{n - 2}}$

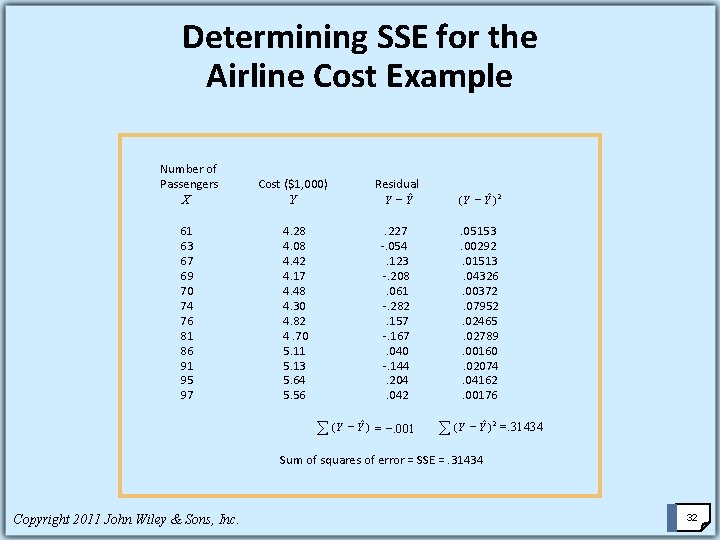

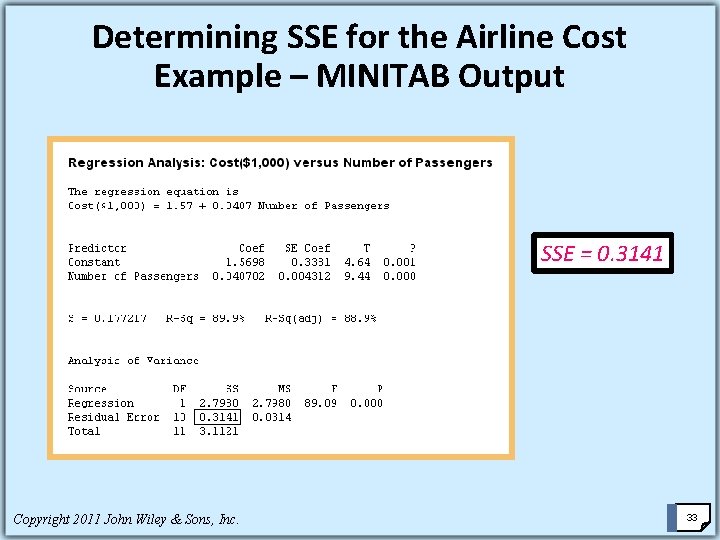

Determining SSE for the Airline Cost Example

| Number of Passengers X | Cost ($1,000) Y | Residual Y - Ŷ | (Y - Ŷ)² |
|---|---|---|---|
| 61 | 4.28 | .227 | .05153 |
| 63 | 4.08 | -.054 | .00292 |
| 67 | 4.42 | .123 | .01513 |
| 69 | 4.17 | -.208 | .04326 |
| 70 | 4.48 | .061 | .00372 |
| 74 | 4.30 | -.282 | .07952 |
| 76 | 4.82 | .157 | .02465 |
| 81 | 4.70 | -.167 | .02789 |
| 86 | 5.11 | .040 | .00160 |
| 91 | 5.13 | -.144 | .02074 |
| 95 | 5.64 | .204 | .04162 |
| 97 | 5.56 | .042 | .00176 |
| | | Σ(Y - Ŷ) = -.001 | Σ(Y - Ŷ)² = .31434 |

Sum of squares of error = SSE = .31434

Determining SSE for the Airline Cost Example: MINITAB Output, SSE = 0.3141

Standard Error of the Estimate for the Airline Cost Example

- Sum of squares error: $SSE = \sum (Y - \hat{Y})^2 = .31434$
- Standard error of the estimate: $s_e = \sqrt{\dfrac{SSE}{n - 2}} = \sqrt{\dfrac{.31434}{10}} = .1773$

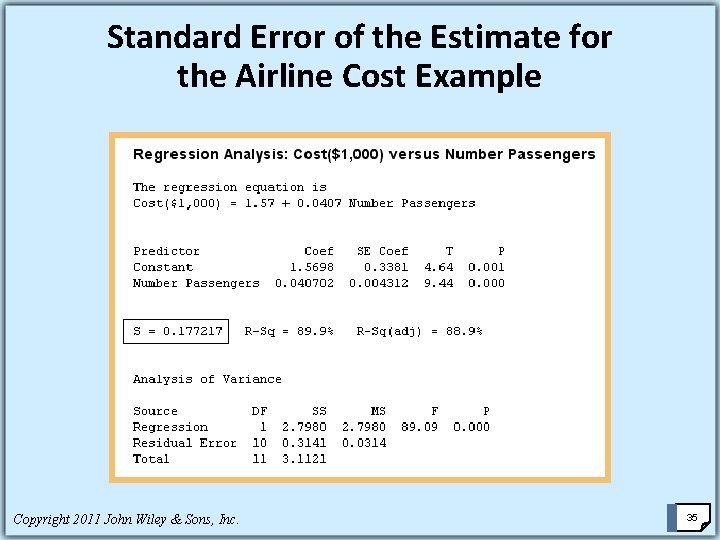

Standard Error of the Estimate for the Airline Cost Example
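SSE and the standard error of the estimate can be checked with a short script. This is a sketch that assumes unrounded least squares coefficients, so it reproduces the software values (SSE ≈ 0.3141, standard error ≈ 0.1772) rather than the hand-computed .31434 that comes from rounded residuals. Variable names are illustrative.

```python
from math import sqrt

# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar

# SSE: sum of squared residuals. s_e: sqrt(SSE / (n - 2)).
sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
s_e = sqrt(sse / (n - 2))

print(f"SSE = {sse:.4f}, s_e = {s_e:.4f}")
```

The divisor is n - 2 because two parameters, the slope and the intercept, are estimated from the sample.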

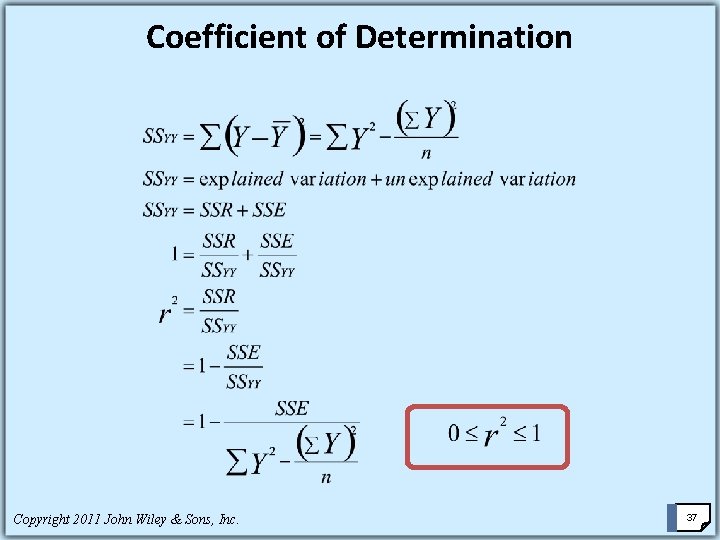

Coefficient of Determination

- The coefficient of determination is the proportion of variability of the dependent variable (y) accounted for or explained by the independent variable (x).
- The coefficient of determination ranges from 0 to 1.
- An $r^2$ of zero means that the predictor accounts for none of the variability of the dependent variable and that there is no regression prediction of y by x.
- An $r^2$ of 1 means perfect prediction of y by x and that 100% of the variability of y is accounted for by x.

Coefficient of Determination

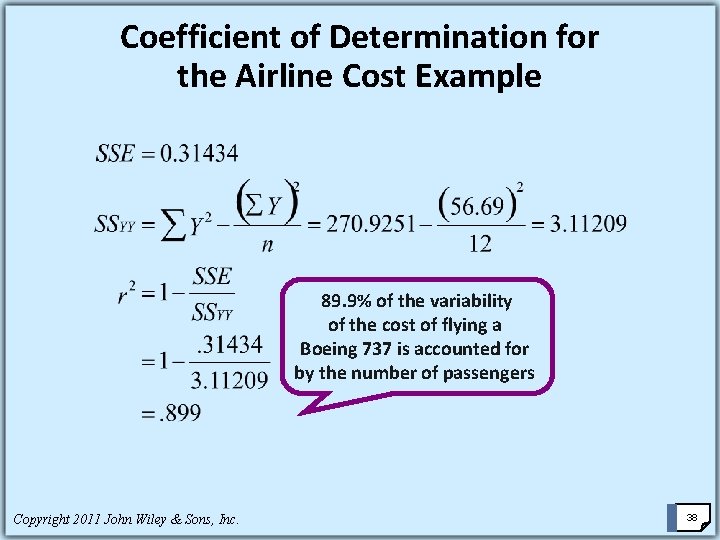

Coefficient of Determination for the Airline Cost Example

89.9% of the variability of the cost of flying a Boeing 737 is accounted for by the number of passengers.
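The 89.9% figure can be reproduced as r² = 1 - SSE / SSyy, where SSyy is the total variation of y about its mean. The sketch below assumes unrounded least squares coefficients; the variable names are illustrative.

```python
# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
ss_yy = sum((yi - y_bar) ** 2 for yi in y)   # total sum of squares

b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar
sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))

r_squared = 1 - sse / ss_yy
print(f"r^2 = {r_squared:.3f}")
```

The total sum of squares matches the ANOVA table (3.11209), and the resulting r² matches the "R Square" entry in the Excel summary output.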

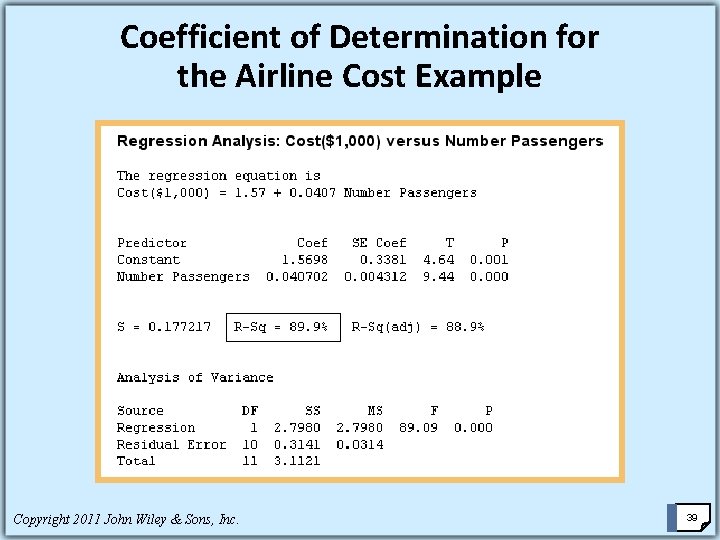

Coefficient of Determination for the Airline Cost Example

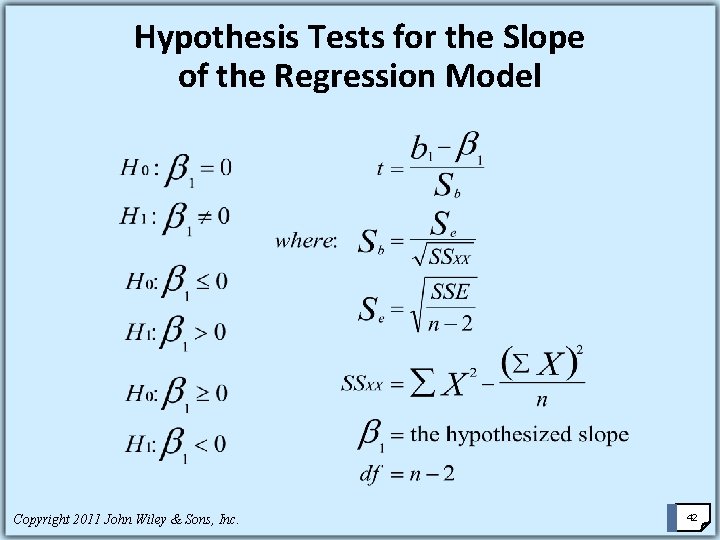

Hypothesis Tests for the Slope of the Regression Model

- A hypothesis test can be conducted on the sample slope of the regression model to determine whether the population slope is significantly different from zero.
- Without regression, the mean $\bar{y}$ would serve as the prediction of y for every x. Using this non-regression model (the $\bar{y}$ model) as a worst case, the researcher can analyze the regression line to determine whether it adds a significant amount of predictability of y beyond what the $\bar{y}$ model provides.

Hypothesis Tests for the Slope of the Regression Model

- As the slope of the regression line diverges from zero, the regression model adds predictability that the $\bar{y}$ line does not generate.
- Testing the slope of the regression line to determine whether the slope is different from zero is therefore important.
- If the slope is not different from zero, the regression line does nothing more than the average line of y ($\bar{y}$) does in predicting y.

Hypothesis Tests for the Slope of the Regression Model

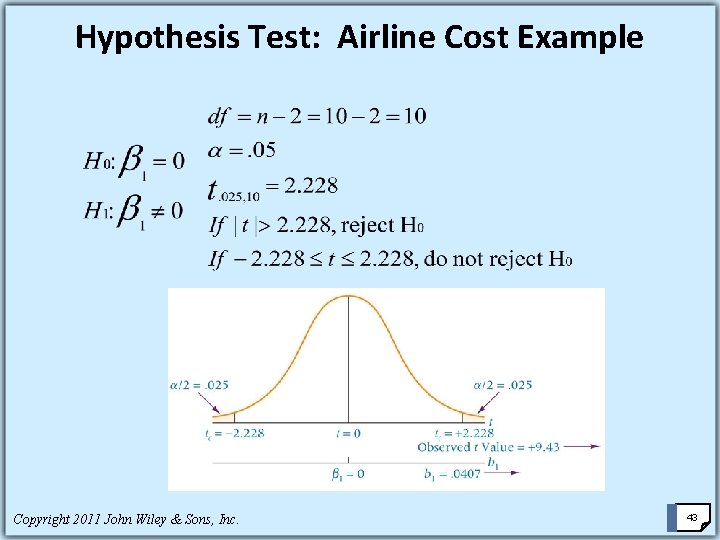

Hypothesis Test: Airline Cost Example

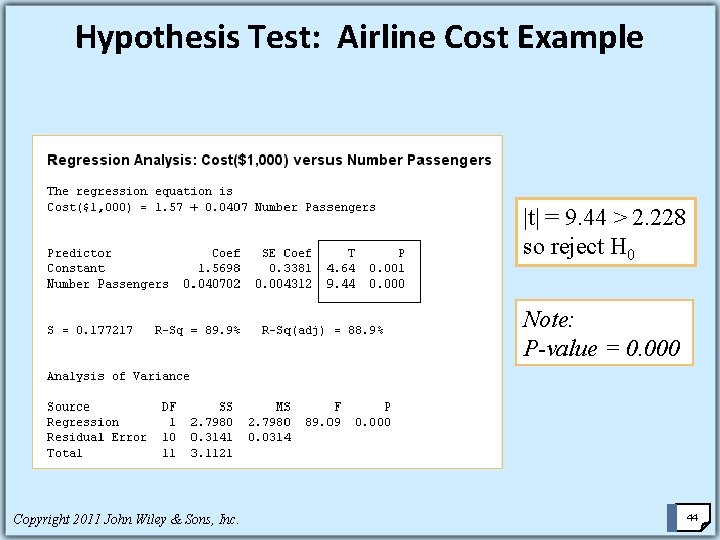

Hypothesis Test: Airline Cost Example

|t| = 9.44 > 2.228, so reject H0. Note: P-value = 0.000.
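The t statistic for the slope can be sketched as t = (b1 - 0) / s_b, with s_b = s_e / sqrt(SSxx). The code below is an illustration under these standard formulas; the critical value 2.228 is taken from the slide (two-tailed test, 10 degrees of freedom) rather than computed, and the variable names are assumptions.

```python
from math import sqrt

# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar

sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
s_e = sqrt(sse / (n - 2))        # standard error of the estimate
s_b = s_e / sqrt(ss_xx)          # standard error of the slope

t_stat = (b1 - 0) / s_b          # H0: population slope = 0
T_CRITICAL = 2.228               # value quoted on the slide (df = n - 2 = 10)
reject_h0 = abs(t_stat) > T_CRITICAL

print(f"t = {t_stat:.2f}, reject H0: {reject_h0}")
```

Both s_b and t agree with the "Number of Passengers" row of the Excel output (standard error 0.00431, t Stat 9.43887).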

Hypothesis Test: Airline Cost Example

- The t value calculated from the sample slope falls in the rejection region, and the p-value is .0000027 (2.692E-06 in the Excel output).
- The null hypothesis that the population slope is zero is rejected.
- This linear regression model adds significantly more predictive information than the $\bar{y}$ model (no regression).

Testing the Overall Model

- It is common in regression analysis to compute an F test to determine the overall significance of the model.
- In multiple regression, this test determines whether at least one of the regression coefficients (from multiple predictors) is different from zero.
- Simple regression provides only one predictor and only one regression coefficient to test.
- Because the regression coefficient is the slope of the regression line, the F test for overall significance is testing the same thing as the t test in simple regression.

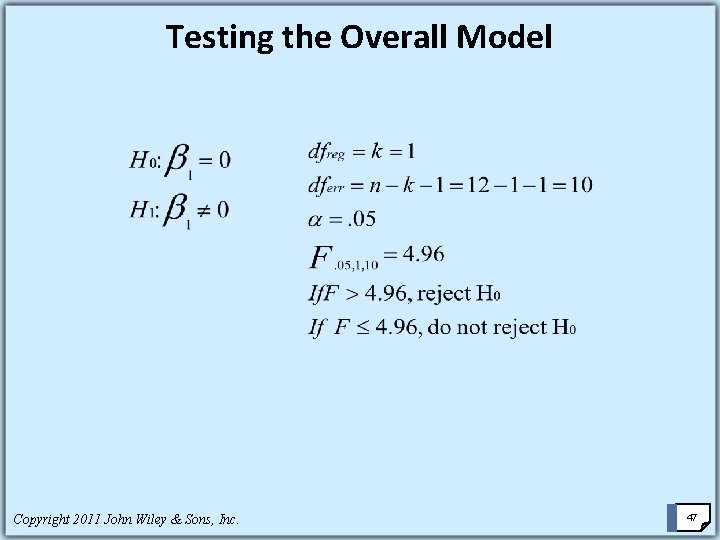

Testing the Overall Model

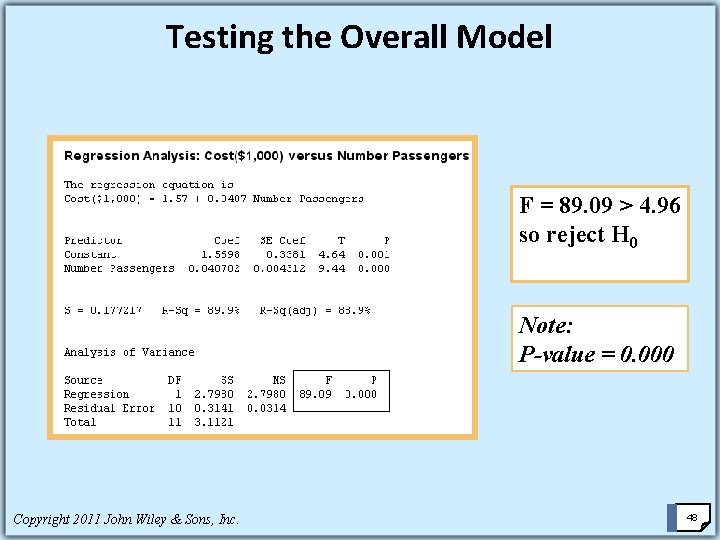

Testing the Overall Model

F = 89.09 > 4.96, so reject H0. Note: P-value = 0.000.

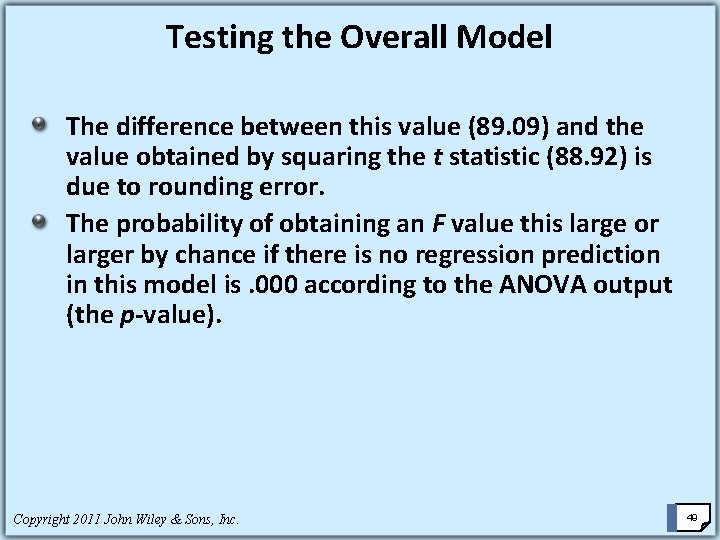

Testing the Overall Model

- The difference between this value (89.09) and the value obtained by squaring the t statistic (88.92) is due to rounding error.
- The probability of obtaining an F value this large or larger by chance if there is no regression prediction in this model is .000 according to the ANOVA output (the p-value).
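The relationship between the F and t statistics can be verified numerically. The sketch below assumes the usual ANOVA decomposition for simple regression (SSR = SSyy - SSE, one regression degree of freedom, n - 2 error degrees of freedom); variable names are illustrative.

```python
from math import sqrt

# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
ss_yy = sum((yi - y_bar) ** 2 for yi in y)
b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar

sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
ssr = ss_yy - sse                 # regression sum of squares

ms_reg = ssr / 1                  # one predictor, so df = 1
ms_err = sse / (n - 2)            # df = n - 2 = 10
f_stat = ms_reg / ms_err

t_stat = b1 / (sqrt(ms_err) / sqrt(ss_xx))
print(f"F = {f_stat:.2f}, t^2 = {t_stat ** 2:.2f}")
```

Carried at full precision, F and t² coincide; the 88.92 on the slide arises only from squaring a rounded t value.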

Estimation

- One of the main uses of regression analysis is as a prediction tool.
- If the regression function is a good model, the researcher can use the regression equation to determine values of the dependent variable from various values of the independent variable.
- In simple regression analysis, a point estimate prediction of y can be made by substituting the associated value of x into the regression equation and solving for y.

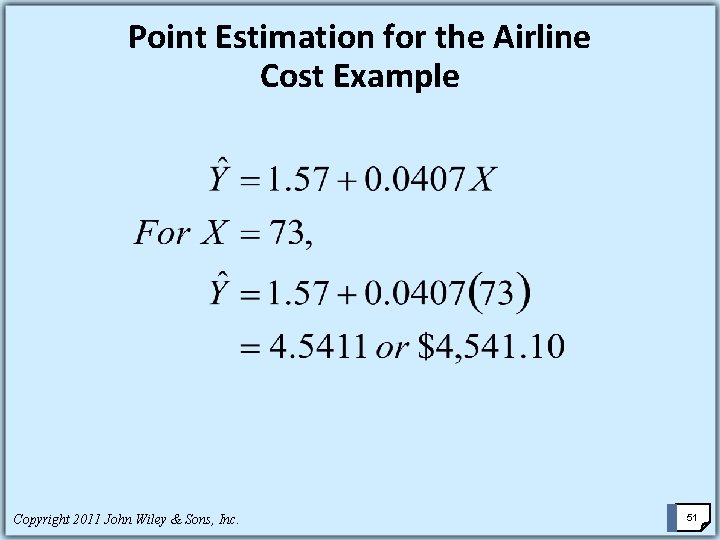

Point Estimation for the Airline Cost Example
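A point estimate is obtained by substituting x into the regression equation. The sketch below assumes x = 73 passengers, one of the x values listed in the confidence interval table, and the rounded equation ŷ = 1.57 + 0.0407x implied by the predicted values in the residual table; the function name `predict_cost` is hypothetical.

```python
# Assumption: rounded coefficients of the airline cost regression line.
B0, B1 = 1.57, 0.0407


def predict_cost(passengers):
    """Point estimate of flight cost in $1,000 for a given passenger count."""
    return B0 + B1 * passengers


estimate = predict_cost(73)
print(f"Estimated cost for 73 passengers: {estimate:.4f} ($1,000)")
```

For 73 passengers the estimate is 4.5411, that is, about $4,541, which is the center of the interval listed for X = 73 in the confidence interval table.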

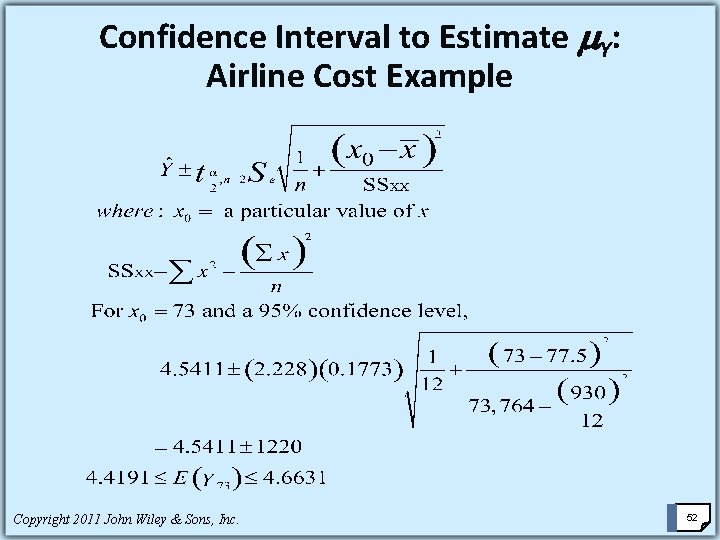

Confidence Interval to Estimate Y: Airline Cost Example

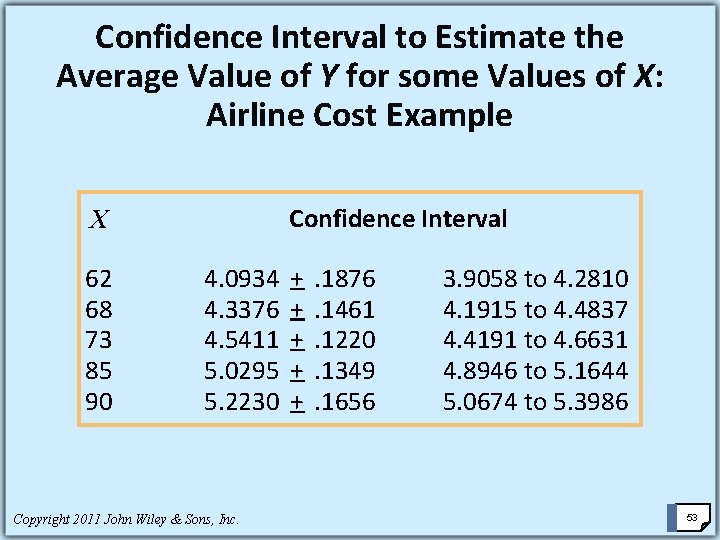

Confidence Interval to Estimate the Average Value of Y for Some Values of X: Airline Cost Example

| X | Ŷ ± margin | Confidence Interval |
|---|---|---|
| 62 | 4.0934 ± .1876 | 3.9058 to 4.2810 |
| 68 | 4.3376 ± .1461 | 4.1915 to 4.4837 |
| 73 | 4.5411 ± .1220 | 4.4191 to 4.6631 |
| 85 | 5.0295 ± .1349 | 4.8946 to 5.1644 |
| 90 | 5.2330 ± .1656 | 5.0674 to 5.3986 |
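The intervals in this table can be approximated with the standard formula ŷ ± t · s_e · sqrt(1/n + (x0 - x̄)² / SSxx). The sketch below is an illustration under that formula: it uses t = 2.228 (the critical value quoted in the slope test, 10 degrees of freedom) and unrounded coefficients, so results may differ from the table in the fourth decimal place. The function name `mean_ci` is hypothetical.

```python
from math import sqrt

# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar
s_e = sqrt(sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y)) / (n - 2))

T_CRITICAL = 2.228  # assumption: 95% confidence, df = 10, as on the slides


def mean_ci(x0):
    """Confidence interval for the average value of y at x = x0."""
    y_hat = b0 + b1 * x0
    margin = T_CRITICAL * s_e * sqrt(1 / n + (x0 - x_bar) ** 2 / ss_xx)
    return y_hat - margin, y_hat + margin


for x0 in (62, 68, 73, 85, 90):
    low, high = mean_ci(x0)
    print(f"x = {x0}: {low:.4f} to {high:.4f}")
```

The margin is smallest near the mean of x (77.5 passengers) and widens as x0 moves away from it, which is why the interval at 73 is narrower than the one at 62.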

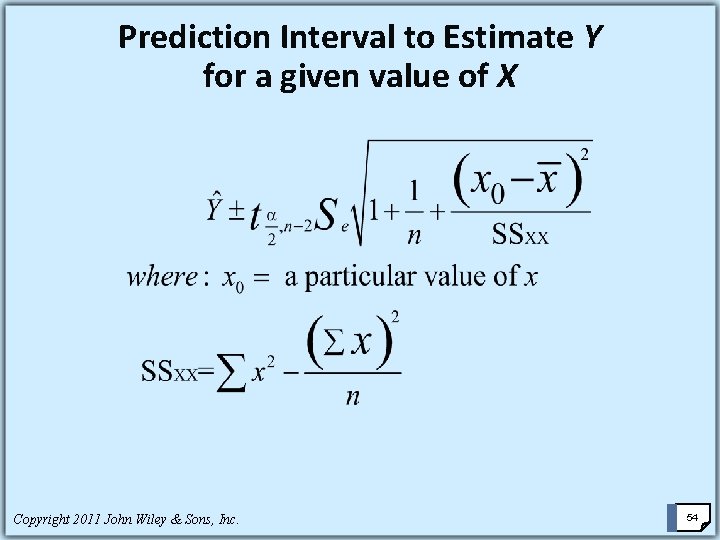

Prediction Interval to Estimate Y for a Given Value of X
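A prediction interval for a single value of y uses the same ingredients as the confidence interval for the mean, with an extra 1 under the square root: ŷ ± t · s_e · sqrt(1 + 1/n + (x0 - x̄)² / SSxx). The sketch below applies this standard formula to the airline cost data at x0 = 73; the choice of x0, the value t = 2.228, and the function name `prediction_interval` are assumptions made for illustration.

```python
from math import sqrt

# Airline cost data: passengers (X) and cost in $1,000 (Y).
x = [61, 63, 67, 69, 70, 74, 76, 81, 86, 91, 95, 97]
y = [4.28, 4.08, 4.42, 4.17, 4.48, 4.30, 4.82, 4.70, 5.11, 5.13, 5.64, 5.56]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
ss_xx = sum((xi - x_bar) ** 2 for xi in x)
ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
b1 = ss_xy / ss_xx
b0 = y_bar - b1 * x_bar
s_e = sqrt(sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y)) / (n - 2))

T_CRITICAL = 2.228  # assumption: 95% level, df = 10


def prediction_interval(x0):
    """Interval for a single new observation of y at x = x0."""
    y_hat = b0 + b1 * x0
    margin = T_CRITICAL * s_e * sqrt(1 + 1 / n + (x0 - x_bar) ** 2 / ss_xx)
    return y_hat - margin, y_hat + margin


low, high = prediction_interval(73)
pred_margin = (high - low) / 2
print(f"x = 73: {low:.4f} to {high:.4f} (margin {pred_margin:.4f})")
```

The prediction interval is considerably wider than the confidence interval for the mean at the same x0, because a single flight varies around the average cost in addition to the uncertainty in the fitted line.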

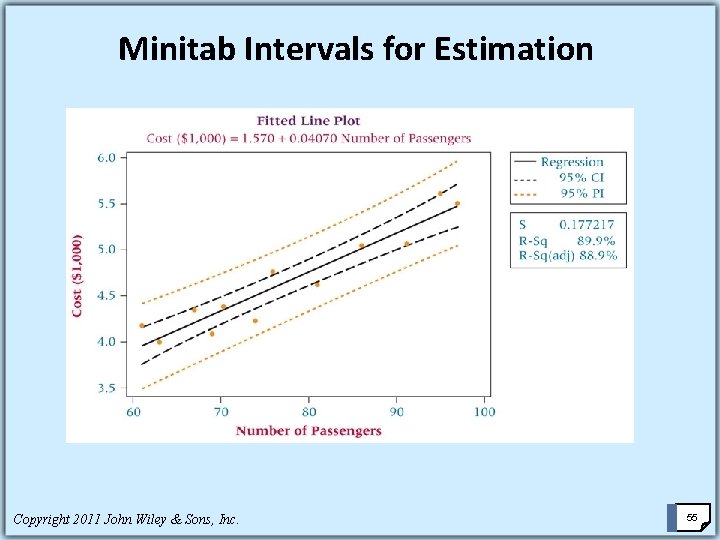

Minitab Intervals for Estimation

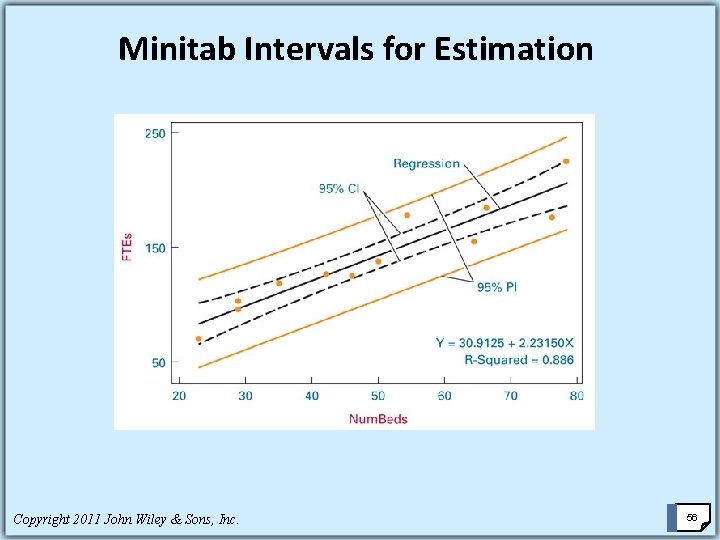

Minitab Intervals for Estimation

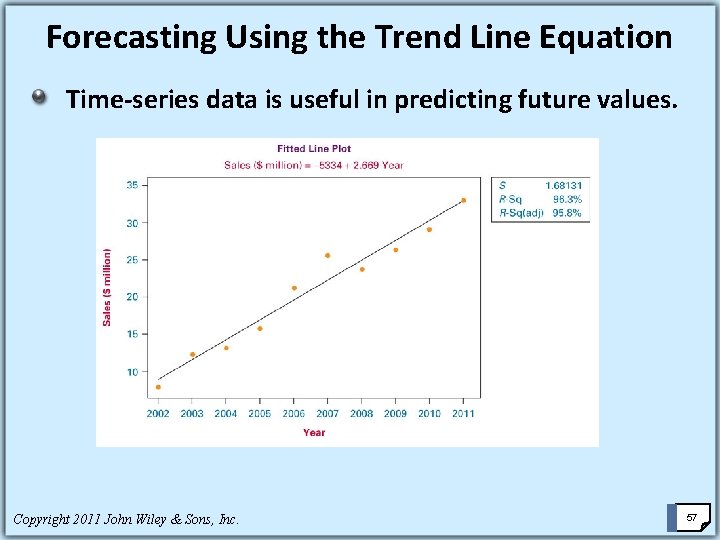

Forecasting Using the Trend Line Equation

Time-series data are useful in predicting future values.

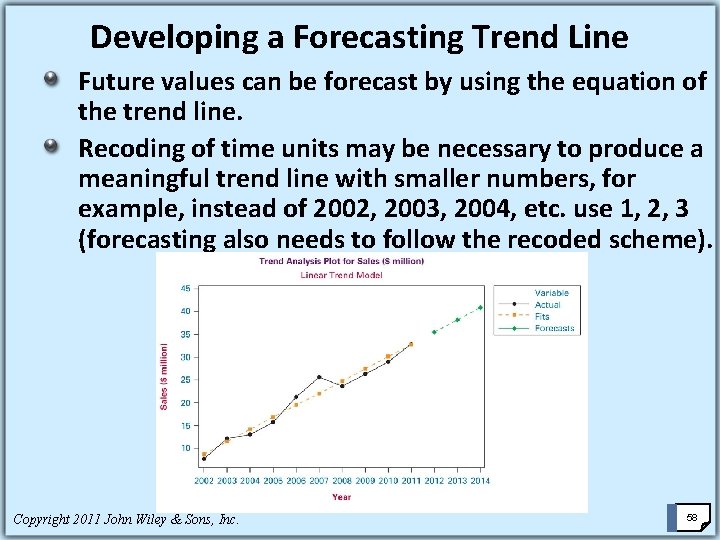

Developing a Forecasting Trend Line

- Future values can be forecast by using the equation of the trend line.
- Recoding the time units may be necessary to produce a meaningful trend line with smaller numbers. For example, instead of 2002, 2003, 2004, and so on, use 1, 2, 3. Forecasts must then follow the same recoded scheme.
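Recoding can be illustrated with a small sketch. The yearly values below are hypothetical and chosen only for illustration (the slide's own data are not reproduced here); the function name `fit_line` and the base-year constant are likewise assumptions.

```python
# Hypothetical time series, for illustration only.
years = [2002, 2003, 2004, 2005, 2006]
values = [10.0, 12.0, 13.5, 15.0, 17.5]

BASE_YEAR = 2001                          # recode 2002 -> 1, 2003 -> 2, ...
periods = [yr - BASE_YEAR for yr in years]


def fit_line(x, y):
    """Least squares fit; returns (b0, b1) for y_hat = b0 + b1 * x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    ss_xx = sum((xi - x_bar) ** 2 for xi in x)
    ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = ss_xy / ss_xx
    return y_bar - b1 * x_bar, b1


b0, b1 = fit_line(periods, values)

# The forecast for 2007 must use the recoded period, 2007 - 2001 = 6.
forecast_2007 = b0 + b1 * (2007 - BASE_YEAR)
print(f"trend: y_hat = {b0:.1f} + {b1:.1f} t, forecast for 2007 = {forecast_2007:.1f}")
```

Recoding changes only the intercept, not the slope, so the forecast is the same as one made with the original years; the recoded intercept is simply easier to read.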

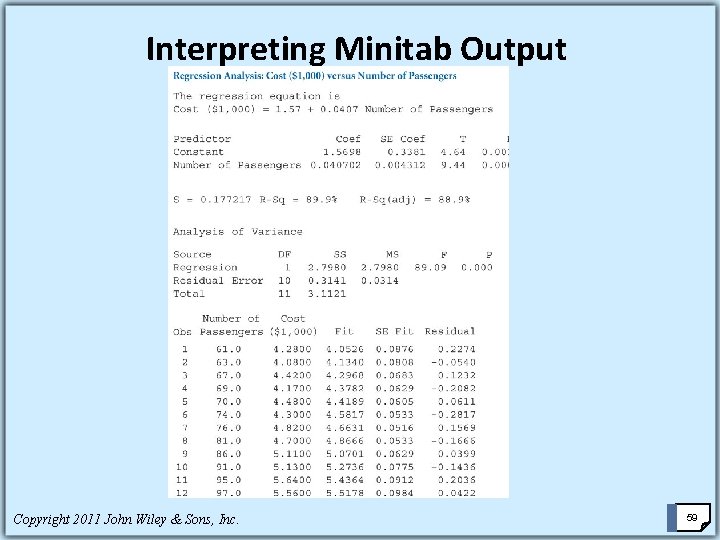

Interpreting Minitab Output

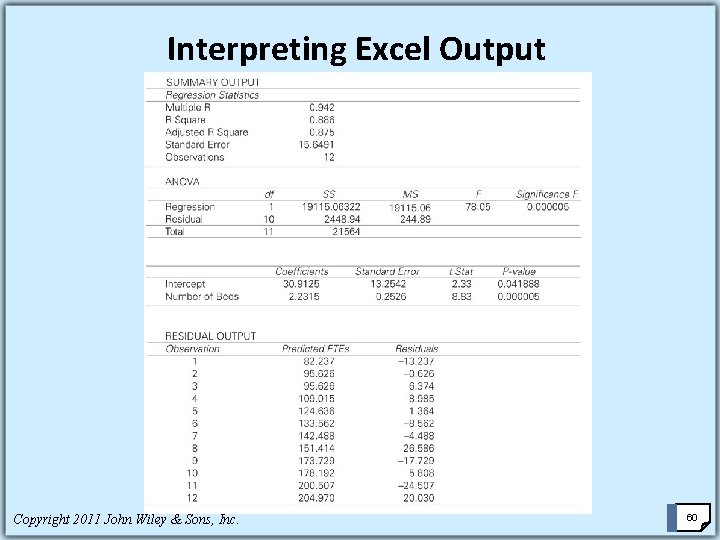

Interpreting Excel Output
