Applied Business Forecasting and Planning: Multiple Regression Analysis

Introduction
- In simple linear regression we studied the relationship between one explanatory variable and one response variable.
- Now we look at situations where several explanatory variables work together to explain the response.

Introduction
- Following our principles of data analysis, we look first at each variable separately, then at relationships among the variables.
- We look at the distribution of each variable to be used in multiple regression to determine whether there are any unusual patterns that may be important in building our regression analysis.

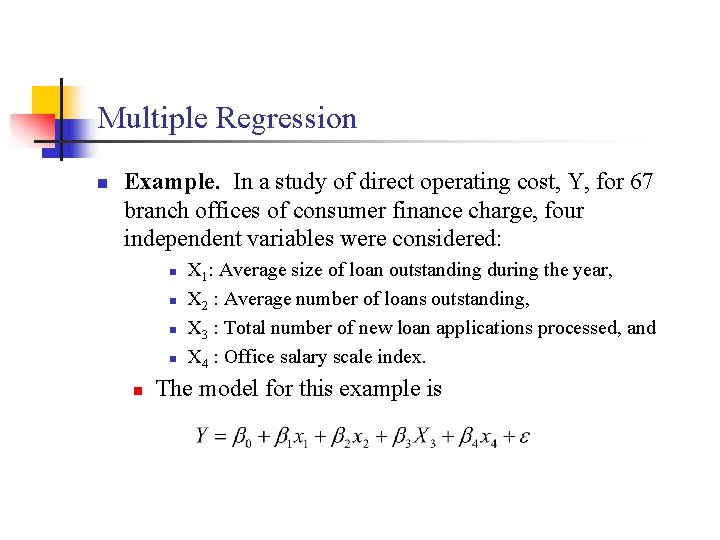

Multiple Regression
- Example: In a study of direct operating cost, $Y$, for 67 branch offices of a consumer finance chain, four independent variables were considered:
  - $X_1$: average size of loan outstanding during the year,
  - $X_2$: average number of loans outstanding,
  - $X_3$: total number of new loan applications processed, and
  - $X_4$: office salary scale index.
- The model for this example is

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \beta_3 X_3 + \beta_4 X_4 + \varepsilon$$

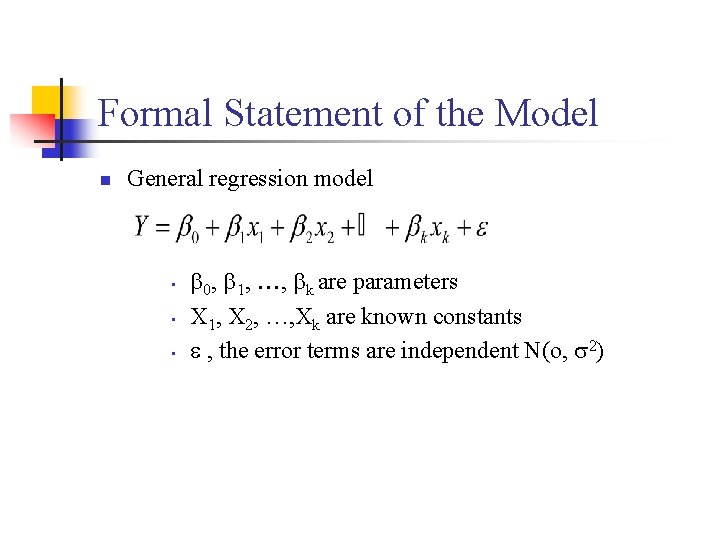

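Formal Statement of the Model
- General regression model:

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k + \varepsilon$$

  - $\beta_0, \beta_1, \dots, \beta_k$ are parameters.
  - $X_1, X_2, \dots, X_k$ are known constants.
  - $\varepsilon$, the error terms, are independent $N(0, \sigma^2)$.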

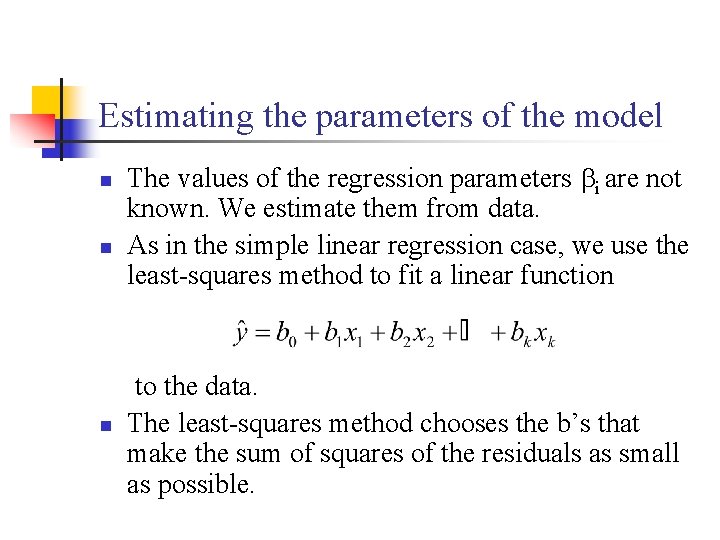

Estimating the parameters of the model
- The values of the regression parameters $\beta_i$ are not known. We estimate them from data.
- As in the simple linear regression case, we use the least-squares method to fit a linear function to the data.
- The least-squares method chooses the $b$'s that make the sum of squares of the residuals as small as possible.

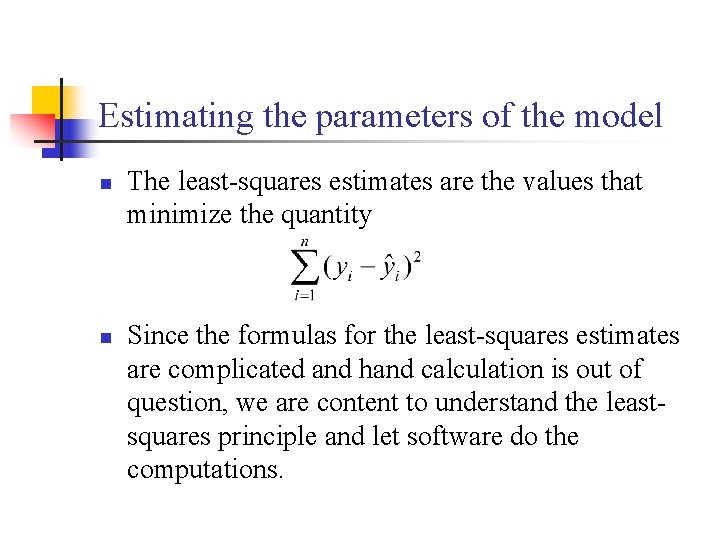

Estimating the parameters of the model
- The least-squares estimates are the values that minimize the quantity

$$\sum_{i=1}^{n} \left(y_i - b_0 - b_1 x_{i1} - b_2 x_{i2} - \dots - b_k x_{ik}\right)^2$$

  where $i$ indexes the $n$ observations.
- Since the formulas for the least-squares estimates are complicated and hand calculation is out of the question, we are content to understand the least-squares principle and let software do the computations.
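
As a minimal sketch of "letting software do the computations", the following uses NumPy's `numpy.linalg.lstsq` to obtain the least-squares estimates. The helper name `fit_least_squares` and the small data set are made up for illustration; they are not taken from the slides.

```python
import numpy as np

def fit_least_squares(X, y):
    """Return b = (b0, b1, ..., bk) minimizing the sum of squared residuals.

    X: (n, k) array of explanatory variables, without the constant column.
    y: (n,) array of responses.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    # Prepend a column of ones so that b[0] plays the role of the intercept b0.
    design = np.column_stack([np.ones(len(y)), X])
    b, _, _, _ = np.linalg.lstsq(design, y, rcond=None)
    return b

# Hypothetical data generated exactly from y = 2 + 3*x1 - 1*x2 (no noise),
# so the least-squares fit should recover these three coefficients.
X_demo = np.array([[1.0, 2.0], [2.0, 1.0], [3.0, 5.0], [4.0, 3.0], [5.0, 8.0]])
y_demo = 2.0 + 3.0 * X_demo[:, 0] - 1.0 * X_demo[:, 1]
b_demo = fit_least_squares(X_demo, y_demo)
```

With real data the residuals are not zero, and the returned vector is the set of $b$'s that makes their sum of squares as small as possible.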

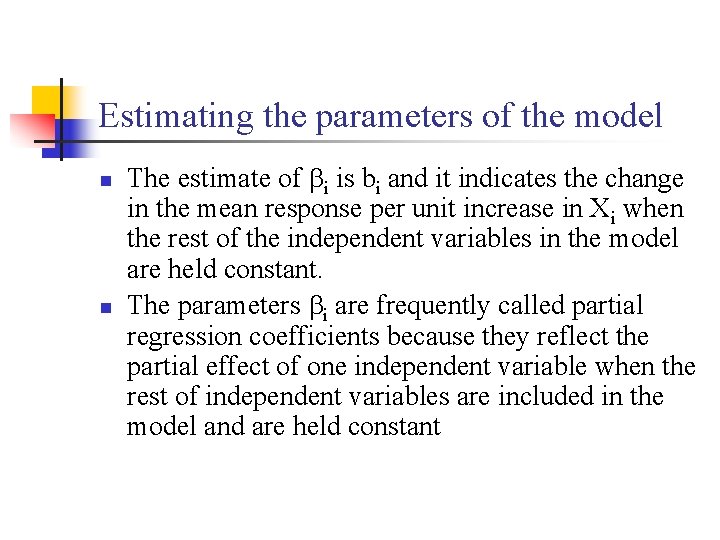

Estimating the parameters of the model
- The estimate of $\beta_i$ is $b_i$, and it indicates the change in the mean response per unit increase in $X_i$ when the rest of the independent variables in the model are held constant.
- The parameters $\beta_i$ are frequently called partial regression coefficients because they reflect the partial effect of one independent variable when the rest of the independent variables are included in the model and are held constant.

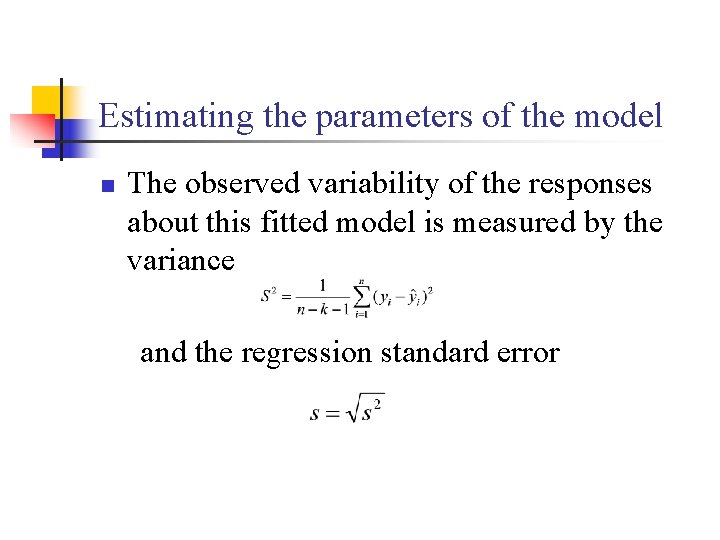

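Estimating the parameters of the model
- The observed variability of the responses about this fitted model is measured by the variance

$$s^2 = \frac{\sum_{i=1}^{n} e_i^2}{n - k - 1} = MSE$$

  and the regression standard error $s = \sqrt{s^2}$, where $e_i$ is the residual for observation $i$.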

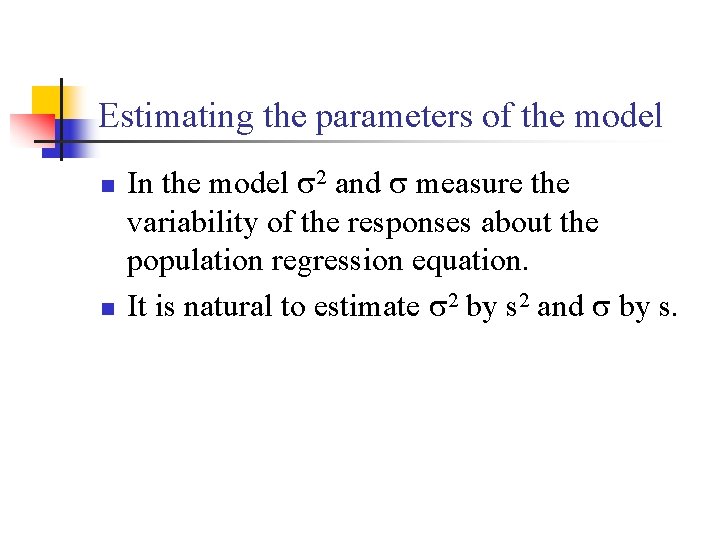

Estimating the parameters of the model
- In the model, $\sigma^2$ and $\sigma$ measure the variability of the responses about the population regression equation.
- It is natural to estimate $\sigma^2$ by $s^2$ and $\sigma$ by $s$.

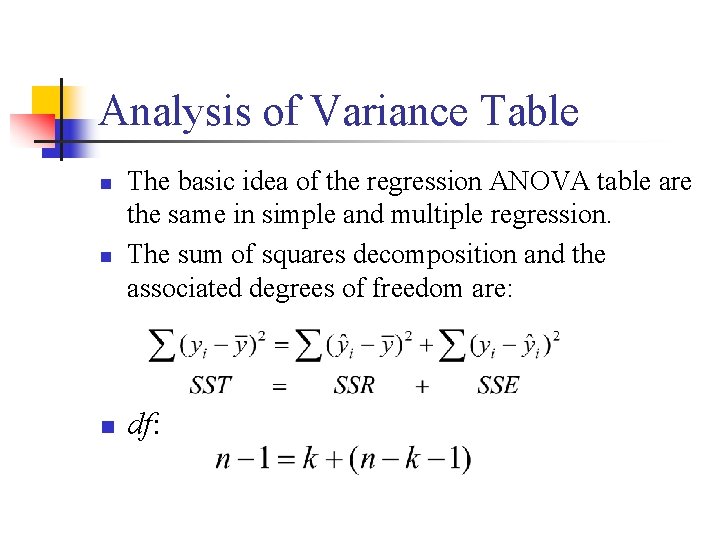

Analysis of Variance Table
- The basic ideas of the regression ANOVA table are the same in simple and multiple regression.
- The sum of squares decomposition and the associated degrees of freedom are:

$$SST = SSR + SSE$$

$$\text{df:} \quad n - 1 = k + (n - k - 1)$$

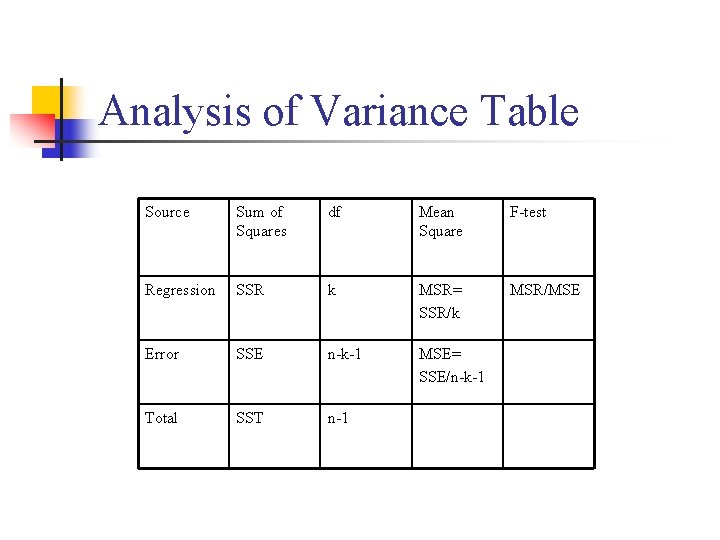

Analysis of Variance Table

| Source | Sum of Squares | df | Mean Square | F-test |
|---|---|---|---|---|
| Regression | SSR | k | MSR = SSR/k | MSR/MSE |
| Error | SSE | n-k-1 | MSE = SSE/(n-k-1) | |
| Total | SST | n-1 | | |
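
The entries of the ANOVA table can be computed directly from a fitted model. The sketch below assumes NumPy is available; the function name `regression_anova` and the six observations are hypothetical and only illustrate the bookkeeping.

```python
import numpy as np

def regression_anova(X, y):
    """Return the ANOVA quantities for a least-squares fit with an intercept."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, k = X.shape
    design = np.column_stack([np.ones(n), X])
    b, _, _, _ = np.linalg.lstsq(design, y, rcond=None)
    fitted = design @ b
    sse = float(np.sum((y - fitted) ** 2))         # error sum of squares
    ssr = float(np.sum((fitted - y.mean()) ** 2))  # regression sum of squares
    sst = float(np.sum((y - y.mean()) ** 2))       # total sum of squares
    msr = ssr / k
    mse = sse / (n - k - 1)
    return {
        "SSR": ssr, "SSE": sse, "SST": sst,
        "df_regression": k, "df_error": n - k - 1, "df_total": n - 1,
        "MSR": msr, "MSE": mse, "F": msr / mse,
    }

# Made-up data: 6 observations, k = 2 explanatory variables.
X_demo = [[1, 2], [2, 1], [3, 5], [4, 3], [5, 8], [6, 4]]
y_demo = [4.1, 6.8, 6.2, 11.1, 8.9, 16.0]
anova_demo = regression_anova(X_demo, y_demo)
```

The square root of `anova_demo["MSE"]` is the regression standard error $s$.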

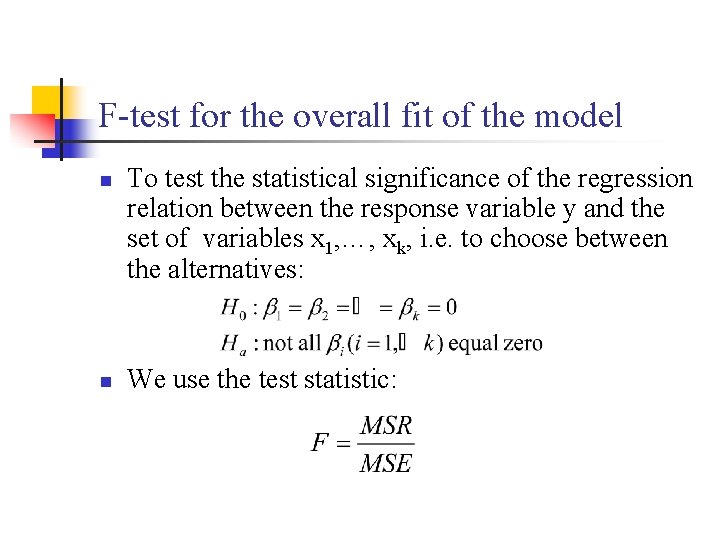

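F-test for the overall fit of the model
- To test the statistical significance of the regression relation between the response variable $y$ and the set of variables $x_1, \dots, x_k$, i.e., to choose between the alternatives

$$H_0: \beta_1 = \beta_2 = \dots = \beta_k = 0 \qquad H_a: \text{not all } \beta_i \ (i = 1, \dots, k) \text{ equal zero}$$

- we use the test statistic

$$F = \frac{MSR}{MSE}$$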

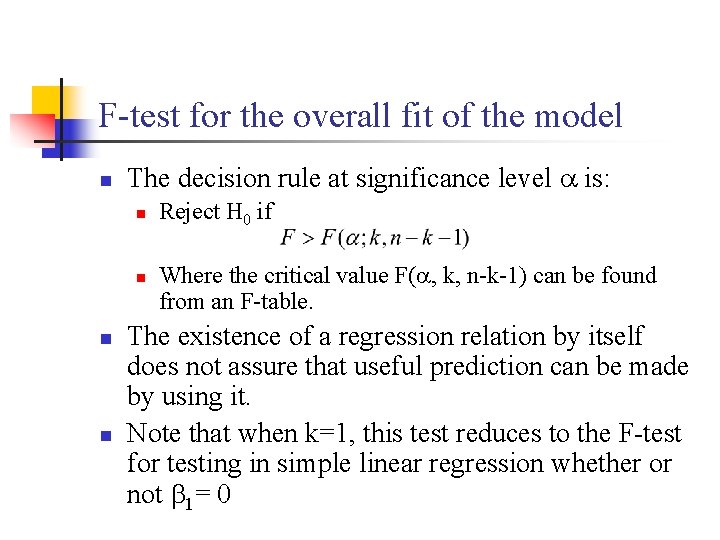

F-test for the overall fit of the model
- The decision rule at significance level $\alpha$ is: reject $H_0$ if $F > F(\alpha; k, n-k-1)$, where the critical value $F(\alpha; k, n-k-1)$ can be found from an F-table.
- The existence of a regression relation by itself does not assure that useful predictions can be made by using it.
- Note that when $k = 1$, this test reduces to the F-test for testing in simple linear regression whether or not $\beta_1 = 0$.

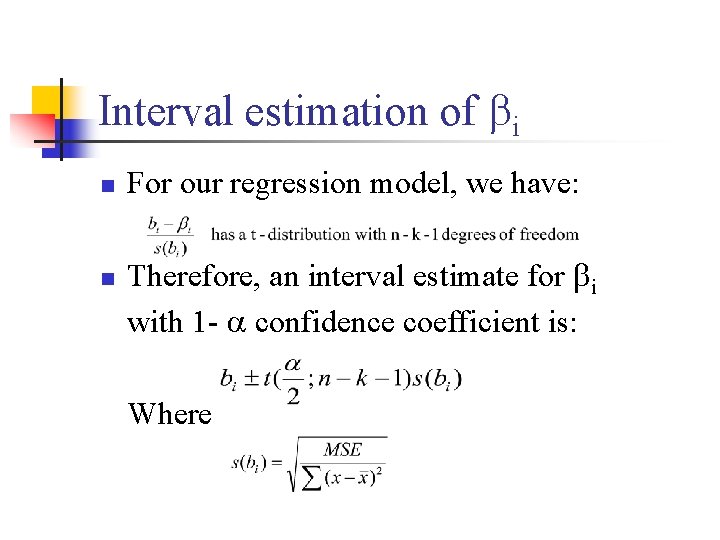

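Interval estimation of $\beta_i$
- For our regression model, we have:

$$\frac{b_i - \beta_i}{s(b_i)} \sim t(n - k - 1)$$

- Therefore, an interval estimate for $\beta_i$ with $1 - \alpha$ confidence coefficient is:

$$b_i \pm t(\alpha/2;\, n-k-1)\, s(b_i)$$

  where $s(b_i)$ is the estimated standard deviation of $b_i$.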

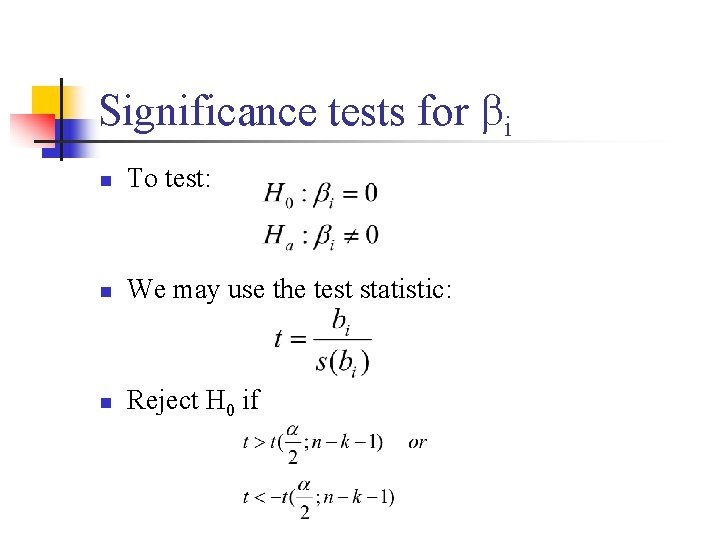

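Significance tests for $\beta_i$
- To test: $H_0: \beta_i = 0$ versus $H_a: \beta_i \neq 0$
- We may use the test statistic: $t = b_i / s(b_i)$
- Reject $H_0$ if $|t| > t(\alpha/2;\, n-k-1)$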
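
The interval estimate and the t statistic for each coefficient can be sketched as follows. This assumes the standard least-squares result that the estimated variances of the $b_i$ are the diagonal of $MSE \cdot (X'X)^{-1}$; the function name, the data, and the choice to pass the t-table value in as `t_crit` are illustrative assumptions.

```python
import numpy as np

def coefficient_inference(X, y, t_crit):
    """Return (b, se, t_stats, lower, upper) for a fit with an intercept.

    t_crit is the critical value t(alpha/2; n-k-1), looked up by the user
    in a t-table; it is not computed here.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, k = X.shape
    design = np.column_stack([np.ones(n), X])
    xtx_inv = np.linalg.inv(design.T @ design)
    b = xtx_inv @ design.T @ y
    residuals = y - design @ b
    mse = float(residuals @ residuals) / (n - k - 1)
    se = np.sqrt(mse * np.diag(xtx_inv))   # s(b_i) for each coefficient
    t_stats = b / se                       # test statistic for H0: beta_i = 0
    lower = b - t_crit * se
    upper = b + t_crit * se
    return b, se, t_stats, lower, upper

# Made-up data with n = 6 and k = 2, so n - k - 1 = 3 degrees of freedom.
# 3.182 is the t-table value for alpha = .05 (two-sided) with 3 df.
X_demo = [[1, 2], [2, 1], [3, 5], [4, 3], [5, 8], [6, 4]]
y_demo = [4.1, 6.8, 6.2, 11.1, 8.9, 16.0]
b, se, t_stats, lower, upper = coefficient_inference(X_demo, y_demo, t_crit=3.182)
significant = np.abs(t_stats) > 3.182   # True where H0: beta_i = 0 is rejected
```

A coefficient is judged significant exactly when its interval excludes zero, which is the same decision as comparing $|t|$ with the critical value.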

Multiple Regression Model Building
- Often we have many explanatory variables, and our goal is to use these to explain the variation in the response variable.
- A model using just a few of the variables often predicts about as well as the model using all the explanatory variables.

Multiple Regression Model Building
- We may find that the reciprocal of a variable is a better choice than the variable itself, or that including the square of an explanatory variable improves prediction.
- We may find that the effect of one explanatory variable depends upon the value of another explanatory variable. We account for this situation by including interaction terms.

Multiple Regression Model Building
- The simplest way to construct an interaction term is to multiply the two explanatory variables together.
- How can we find a good model?
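
The derived terms mentioned in these slides (an interaction, a square, a reciprocal) are simply extra columns computed from the original variables. A small sketch, with a hypothetical helper name and made-up values:

```python
def add_derived_terms(rows):
    """For each (x1, x2) pair return [x1, x2, x1*x2, x1**2, 1/x2].

    The interaction term is the product x1*x2; the square and the
    reciprocal are examples of transformed explanatory variables.
    x2 must be nonzero for the reciprocal to exist.
    """
    derived = []
    for x1, x2 in rows:
        derived.append([x1, x2, x1 * x2, x1 ** 2, 1.0 / x2])
    return derived

# Made-up observations of two explanatory variables.
derived_demo = add_derived_terms([(2.0, 4.0), (3.0, 0.5)])
# derived_demo == [[2.0, 4.0, 8.0, 4.0, 0.25], [3.0, 0.5, 1.5, 9.0, 2.0]]
```

The model remains linear in its parameters, so the same least-squares machinery applies to the enlarged set of columns.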

Selecting the Best Regression Equation
- After a lengthy list of potentially useful independent variables has been compiled, some of the independent variables can be screened out. An independent variable
  - may not be fundamental to the problem,
  - may be subject to large measurement error, or
  - may effectively duplicate another independent variable in the list.

Selecting the Best Regression Equation
- Once the investigator has tentatively decided upon the functional forms of the regression relations (linear, quadratic, etc.), the next step is to obtain a subset of the explanatory variables ($x$) that "best" explain the variability in the response variable $y$.

Selecting the Best Regression Equation
- An automatic search procedure that sequentially develops the subset of explanatory variables to be included in the regression model is called a stepwise procedure.
- It was developed to economize on computational effort.
- It ends with the identification of a single regression model as "best".

Example: Sales Forecasting
- Sales forecasting
  - Multiple regression is a popular technique for predicting product sales with the help of other variables that are likely to have a bearing on sales.
- Example
  - The growth of cable television has created vast new potential in the home entertainment business. The following table gives the values of several variables measured in a random sample of 20 local television stations that offer their programming to cable subscribers. A TV industry analyst wants to build a statistical model for predicting the number of subscribers that a cable station can expect.

Example: Sales Forecasting
- $Y$ = Number of cable subscribers (SUBSCRIB)
- $X_1$ = Advertising rate that the station charges local advertisers for one minute of prime time space (ADRATE)
- $X_2$ = Kilowatt power of the station's non-cable signal (KILOWATT)
- $X_3$ = Number of families living in the station's area of dominant influence (ADI), a geographical division of radio and TV audiences (APIPOP)
- $X_4$ = Number of competing stations in the ADI (COMPETE)

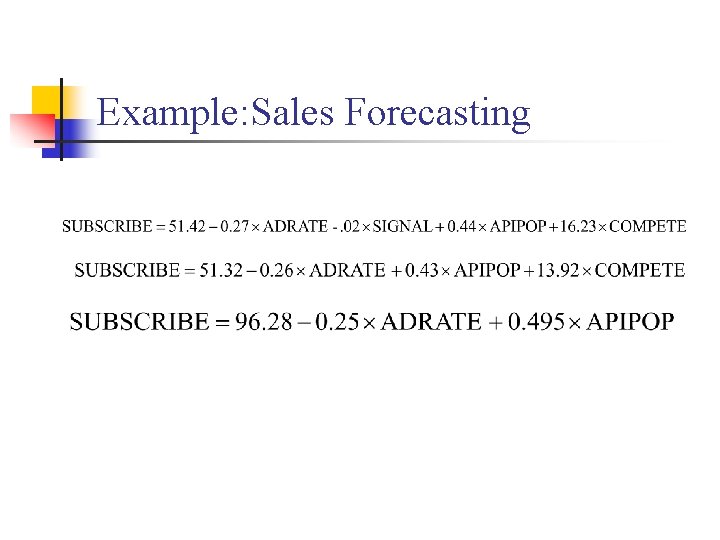

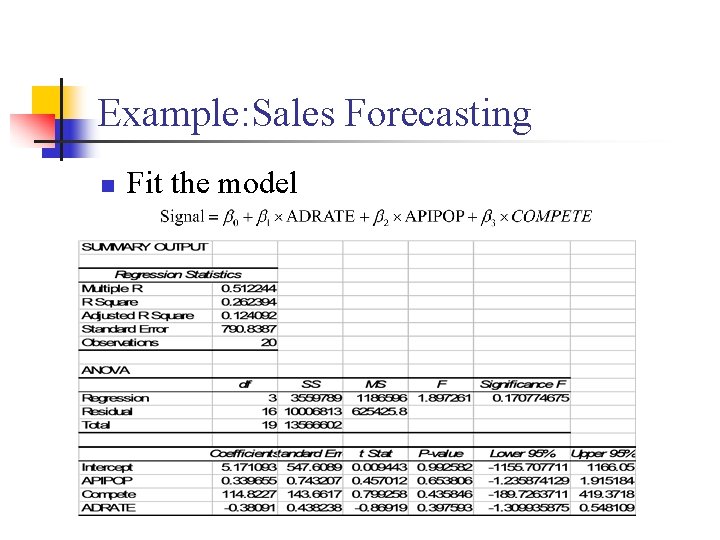

Example: Sales Forecasting
- The sample data are fitted by a multiple regression model using Excel.
- The marginal t-test provides a way of choosing the variables for inclusion in the equation.
- The fitted model is

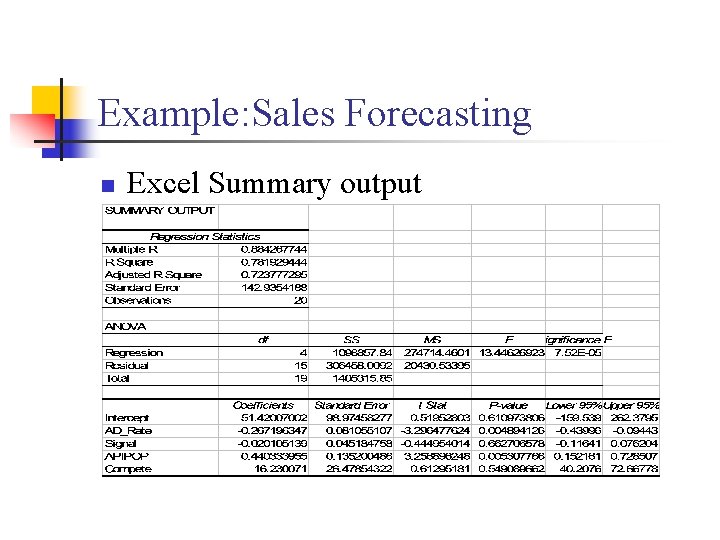

Example: Sales Forecasting
- Excel summary output

Example: Sales Forecasting
- Do we need all four variables in the model?
- Based on the partial t-test, the variables signal and compete are the least significant variables in our model.
- Let's drop the least significant variables one at a time.

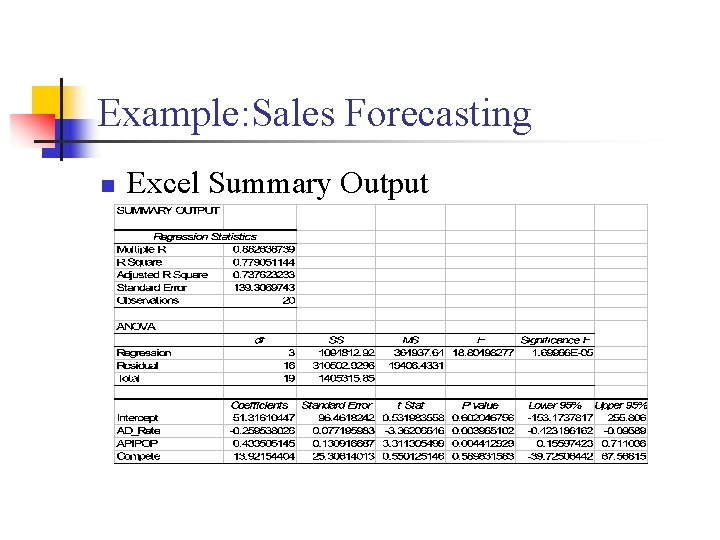

Example: Sales Forecasting
- Excel summary output

Example: Sales Forecasting
- The variable compete is the next variable to drop.

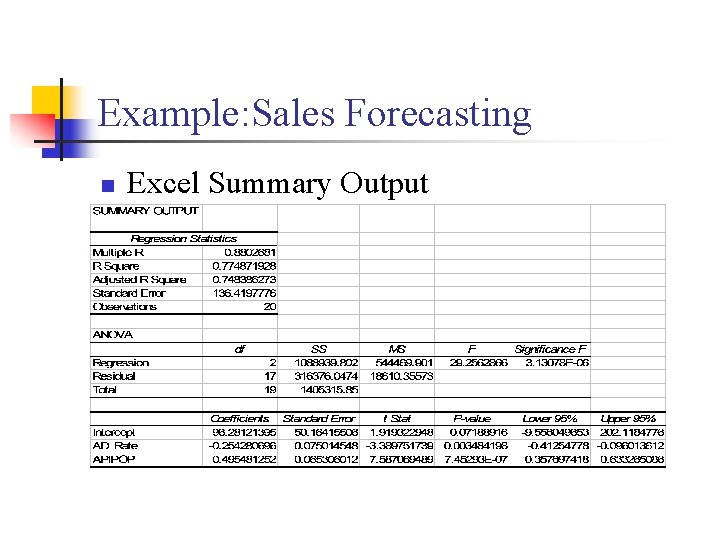

Example: Sales Forecasting
- Excel summary output

Example: Sales Forecasting
- All the variables in the model are statistically significant; therefore our final model is:

Interpreting the Final Model
- What is the interpretation of the estimated parameters?
- Is the association positive or negative? Does this make sense intuitively, based on what the data represent?
- What other variables could be confounders?
- Are there other analyses that you might consider doing? Are new questions raised?

Multicollinearity
- In multiple regression analysis, one is often concerned with the nature and significance of the relations between the explanatory variables and the response variable.
- Questions that are frequently asked are:
  - What is the relative importance of the effects of the different independent variables?
  - What is the magnitude of the effect of a given independent variable on the dependent variable?

Multicollinearity
  - Can any independent variable be dropped from the model because it has little or no effect on the dependent variable?
  - Should any independent variables not yet included in the model be considered for possible inclusion?
- Simple answers can be given to these questions if
  - the independent variables in the model are uncorrelated among themselves, and
  - they are uncorrelated with any other independent variables that are related to the dependent variable but omitted from the model.

Multicollinearity
- When the independent variables are correlated among themselves, multicollinearity or collinearity among them is said to exist.
- In many non-experimental situations in business, economics, and the social and biological sciences, the independent variables tend to be correlated among themselves.
- For example, in a regression of family food expenditures on the variables family income, family savings, and the age of the head of household, the explanatory variables will be correlated among themselves.

Multicollinearity
- Further, the explanatory variables will also be correlated with other socioeconomic variables not included in the model that do affect family food expenditures, such as family size.

Multicollinearity
- Some key problems that typically arise when the explanatory variables being considered for the regression model are highly correlated among themselves are:
  1. Adding or deleting an explanatory variable changes the regression coefficients.
  2. The estimated standard deviations of the regression coefficients become large when the explanatory variables in the regression model are highly correlated with each other.
  3. The estimated regression coefficients individually may not be statistically significant even though a definite statistical relation exists between the response variable and the set of explanatory variables.

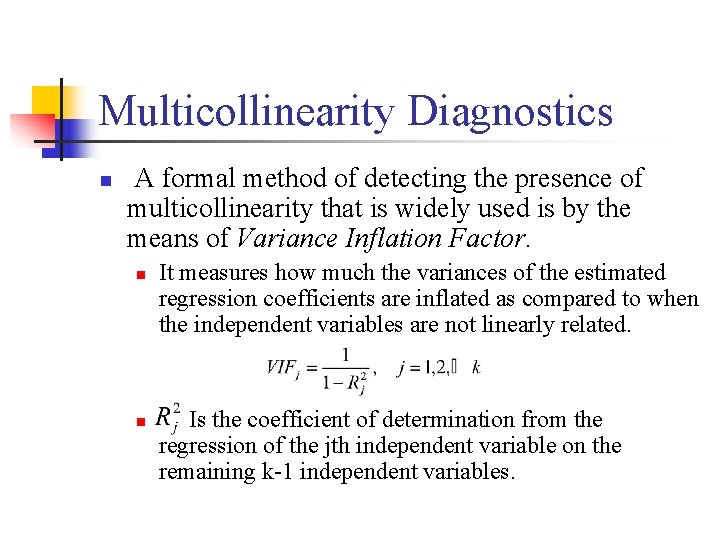

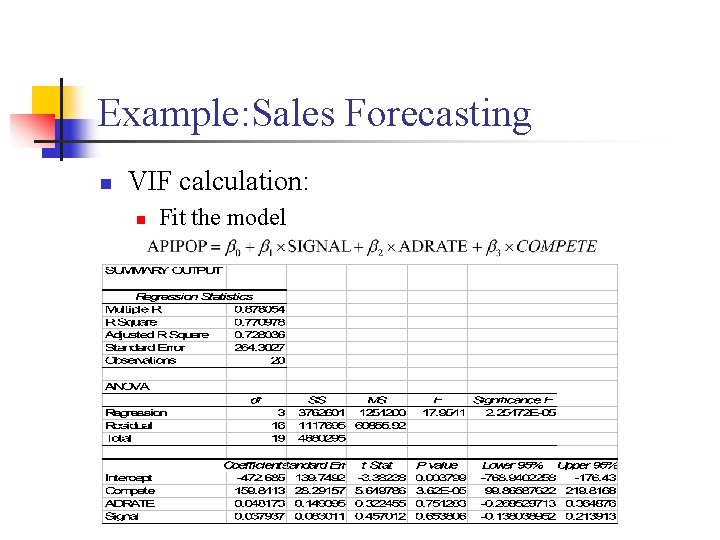

Multicollinearity Diagnostics
- A widely used formal method of detecting the presence of multicollinearity is the variance inflation factor (VIF):

$$VIF_j = \frac{1}{1 - R_j^2}$$

  - It measures how much the variances of the estimated regression coefficients are inflated as compared to when the independent variables are not linearly related.
  - $R_j^2$ is the coefficient of determination from the regression of the $j$th independent variable on the remaining $k-1$ independent variables.
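
The definition of the VIF translates directly into code: regress each explanatory variable on the others, take $R_j^2$, and compute $1/(1 - R_j^2)$. The sketch below assumes NumPy; the function name and both small data sets are made up for illustration.

```python
import numpy as np

def variance_inflation_factors(X):
    """Return [VIF_1, ..., VIF_k] for the columns of X (shape (n, k)).

    VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing column j
    on the remaining k - 1 columns plus an intercept. If a column is an
    exact linear combination of the others, R_j^2 = 1 and the division fails.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    vifs = []
    for j in range(k):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        design = np.column_stack([np.ones(n), others])
        b, _, _, _ = np.linalg.lstsq(design, target, rcond=None)
        residuals = target - design @ b
        sse = float(residuals @ residuals)
        sst = float(np.sum((target - target.mean()) ** 2))
        r_squared = 1.0 - sse / sst
        vifs.append(1.0 / (1.0 - r_squared))
    return vifs

# Two made-up designs: uncorrelated columns, and nearly collinear columns.
X_uncorrelated = [[1, 1], [-1, 1], [1, -1], [-1, -1]]
X_collinear = [[1, 1.1], [2, 1.9], [3, 3.2], [4, 3.9], [5, 5.1]]
vif_uncorrelated = variance_inflation_factors(X_uncorrelated)
vif_collinear = variance_inflation_factors(X_collinear)
```

In the first design the factors are essentially 1; in the second they are far above the rule-of-thumb threshold of 10.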

Multicollinearity Diagnostics
- A VIF near 1 suggests that multicollinearity is not a problem for the independent variables.
  - Its estimated coefficient and associated t value will not change much as the other independent variables are added to or deleted from the regression equation.
- A VIF much greater than 1 indicates the presence of multicollinearity. A maximum VIF value in excess of 10 is often taken as an indication that the multicollinearity may be unduly influencing the least-squares estimates.
  - The estimated coefficient attached to the variable is unstable, and its associated t statistic may change considerably as the other independent variables are added or deleted.

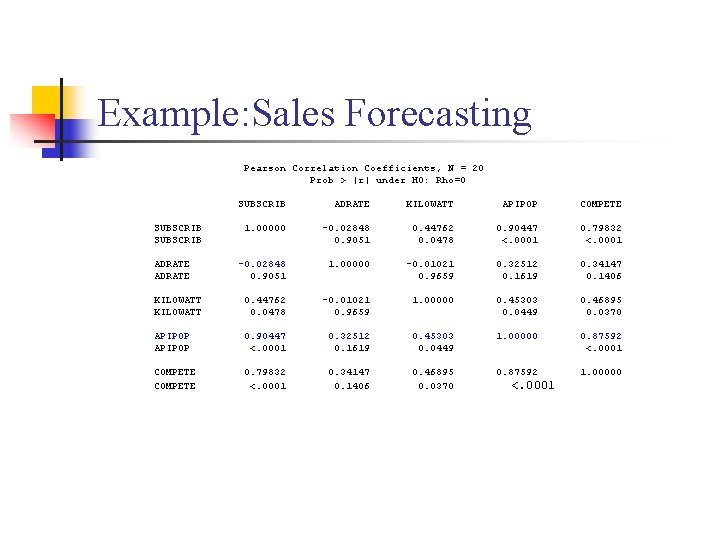

Multicollinearity Diagnostics
- The simple correlation coefficients between all pairs of explanatory variables (i.e., $X_1, X_2, \dots, X_k$) are helpful in selecting appropriate explanatory variables for a regression model and are also critical for examining multicollinearity.
- While it is true that a correlation very close to +1 or -1 does suggest multicollinearity, it is not valid (unless there are only two explanatory variables) to infer that multicollinearity does not exist when there are no high correlations between any pair of explanatory variables.

Example: Sales Forecasting

Pearson correlation coefficients, N = 20; p-values (Prob > |r| under H0: Rho = 0) are shown in parentheses.

| | SUBSCRIB | ADRATE | KILOWATT | APIPOP | COMPETE |
|---|---|---|---|---|---|
| SUBSCRIB | 1.00000 | -0.02848 (0.9051) | 0.44762 (0.0478) | 0.90447 (<.0001) | 0.79832 (<.0001) |
| ADRATE | -0.02848 (0.9051) | 1.00000 | -0.01021 (0.9659) | 0.32512 (0.1619) | 0.34147 (0.1406) |
| KILOWATT | 0.44762 (0.0478) | -0.01021 (0.9659) | 1.00000 | 0.45303 (0.0449) | 0.46895 (0.0370) |
| APIPOP | 0.90447 (<.0001) | 0.32512 (0.1619) | 0.45303 (0.0449) | 1.00000 | 0.87592 (<.0001) |
| COMPETE | 0.79832 (<.0001) | 0.34147 (0.1406) | 0.46895 (0.0370) | 0.87592 (<.0001) | 1.00000 |

Example: Sales Forecasting

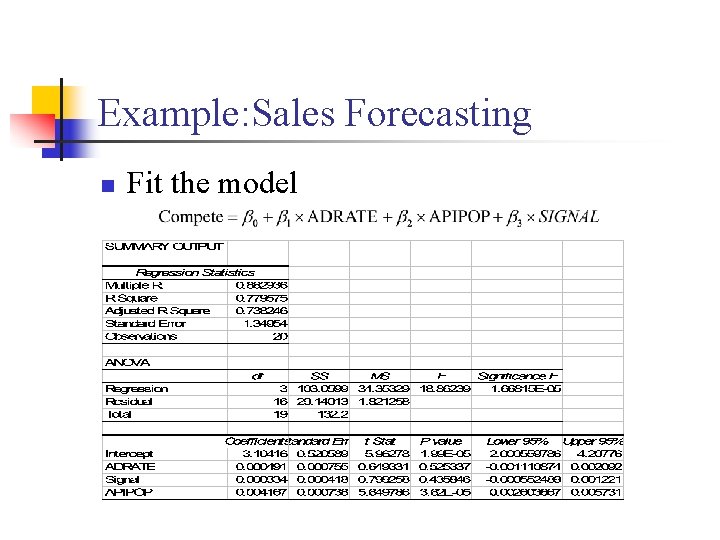

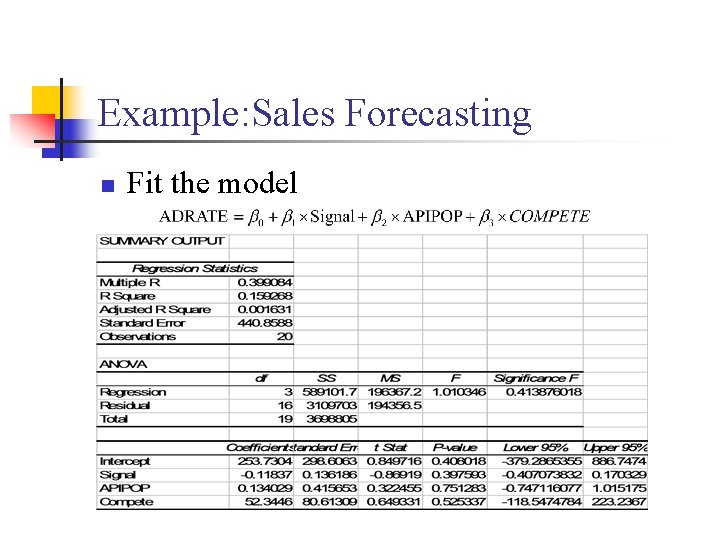

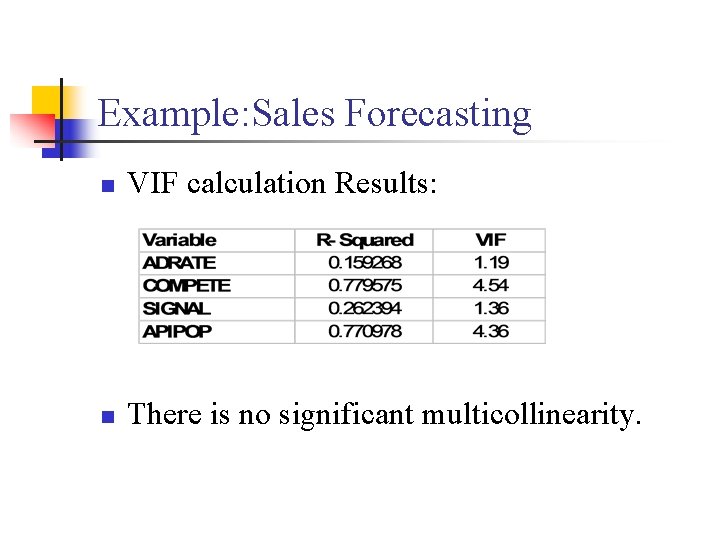

Example: Sales Forecasting
- VIF calculation:
  - Fit the model

Example: Sales Forecasting
- Fit the model

Example: Sales Forecasting
- Fit the model

Example: Sales Forecasting
- Fit the model

Example: Sales Forecasting
- VIF calculation results:
  - There is no significant multicollinearity.

Qualitative Independent Variables
- Many variables of interest in business, economics, and the social and biological sciences are not quantitative but qualitative.
- Examples of qualitative variables are gender (male, female), purchase status (purchase, no purchase), and type of firm.
- Qualitative variables can also be used in multiple regression.

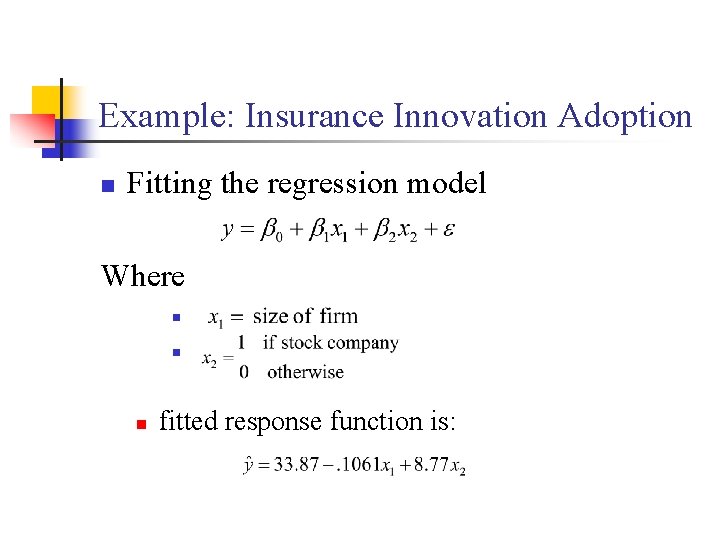

Qualitative Independent Variables
- An economist wished to relate the speed with which a particular insurance innovation is adopted ($y$) to the size of the insurance firm ($x_1$) and the type of firm. The dependent variable is measured by the number of months elapsed between the time the first firm adopted the innovation and the time the given firm adopted the innovation. The first independent variable, size of the firm, is quantitative and is measured by the amount of total assets of the firm. The second independent variable, type of firm, is qualitative and is composed of two classes: stock companies and mutual companies.

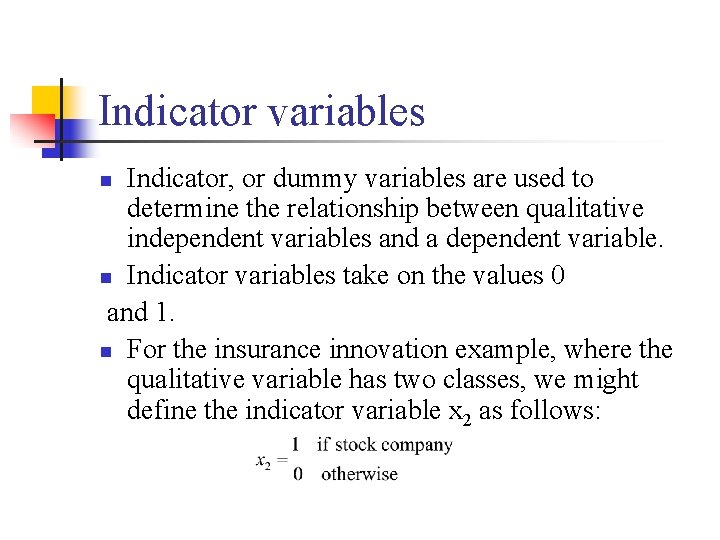

Indicator variables
- Indicator, or dummy, variables are used to determine the relationship between qualitative independent variables and a dependent variable.
- Indicator variables take on the values 0 and 1.
- For the insurance innovation example, where the qualitative variable has two classes, we might define the indicator variable $x_2$ as follows:

$$x_2 = \begin{cases} 1 & \text{if stock company} \\ 0 & \text{otherwise} \end{cases}$$

Indicator variables
- A qualitative variable with $c$ classes will be represented by $c - 1$ indicator variables.
- A regression function with an indicator variable with two levels ($c = 2$) will yield two estimated lines.
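
The $c - 1$ rule can be sketched as a small coding function: one class is chosen as the baseline and gets all zeros, and each remaining class gets its own 0/1 column. The function name and the sample labels below are illustrative assumptions.

```python
def indicator_columns(values, baseline):
    """Code a qualitative variable with c classes as c - 1 indicator columns.

    Returns (levels, rows): `levels` lists the non-baseline classes in
    sorted order, and each row holds one 0/1 entry per level.
    """
    levels = sorted(set(values) - {baseline})
    rows = [[1 if value == level else 0 for level in levels] for value in values]
    return levels, rows

# Two classes (c = 2): a single indicator, 1 for stock and 0 for mutual.
firm_types = ["stock", "mutual", "mutual", "stock"]
levels_two, rows_two = indicator_columns(firm_types, baseline="mutual")
# levels_two == ["stock"]; rows_two == [[1], [0], [0], [1]]

# Three hypothetical classes (c = 3): two indicators, baseline "a" is all zeros.
levels_three, rows_three = indicator_columns(["a", "b", "c"], baseline="a")
# levels_three == ["b", "c"]; rows_three == [[0, 0], [1, 0], [0, 1]]
```

Using all $c$ indicators together with an intercept would make the columns linearly dependent, which is why one class is left out.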

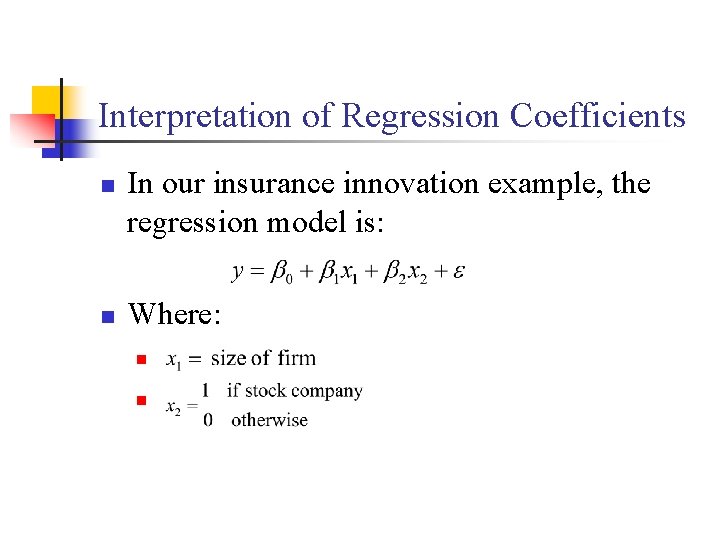

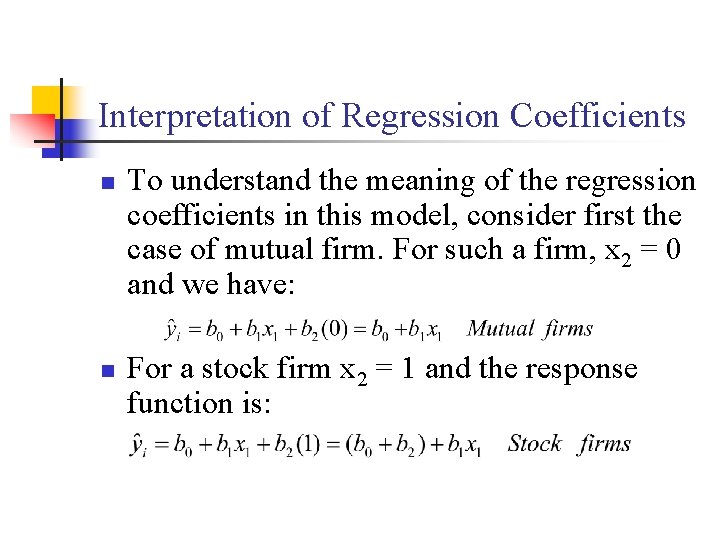

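Interpretation of Regression Coefficients
- In our insurance innovation example, the regression model is:

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \varepsilon$$

- where:
  - $x_1$ = size of firm
  - $x_2$ = 1 if stock company, 0 if mutual company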

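Interpretation of Regression Coefficients
- To understand the meaning of the regression coefficients in this model, consider first the case of a mutual firm. For such a firm, $x_2 = 0$ and we have:

$$E(y) = \beta_0 + \beta_1 x_1 + \beta_2 (0) = \beta_0 + \beta_1 x_1$$

- For a stock firm, $x_2 = 1$ and the response function is:

$$E(y) = \beta_0 + \beta_1 x_1 + \beta_2 (1) = (\beta_0 + \beta_2) + \beta_1 x_1$$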

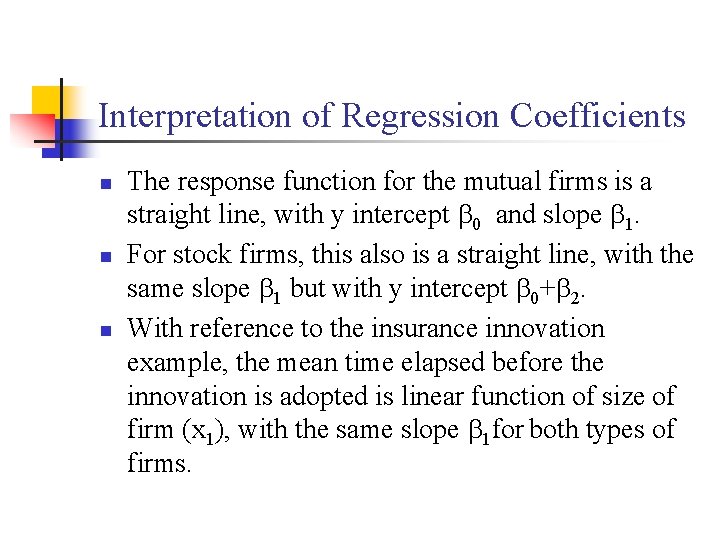

Interpretation of Regression Coefficients
- The response function for the mutual firms is a straight line, with $y$ intercept $\beta_0$ and slope $\beta_1$.
- For stock firms, this also is a straight line, with the same slope $\beta_1$ but with $y$ intercept $\beta_0 + \beta_2$.
- With reference to the insurance innovation example, the mean time elapsed before the innovation is adopted is a linear function of size of firm ($x_1$), with the same slope $\beta_1$ for both types of firms.

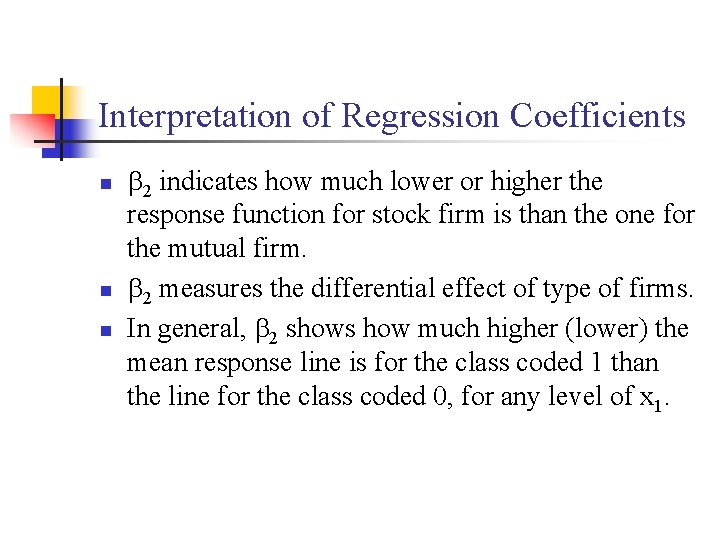

Interpretation of Regression Coefficients
- $\beta_2$ indicates how much lower or higher the response function for stock firms is than the one for mutual firms.
- $\beta_2$ measures the differential effect of type of firm.
- In general, $\beta_2$ shows how much higher (lower) the mean response line is for the class coded 1 than the line for the class coded 0, for any level of $x_1$.

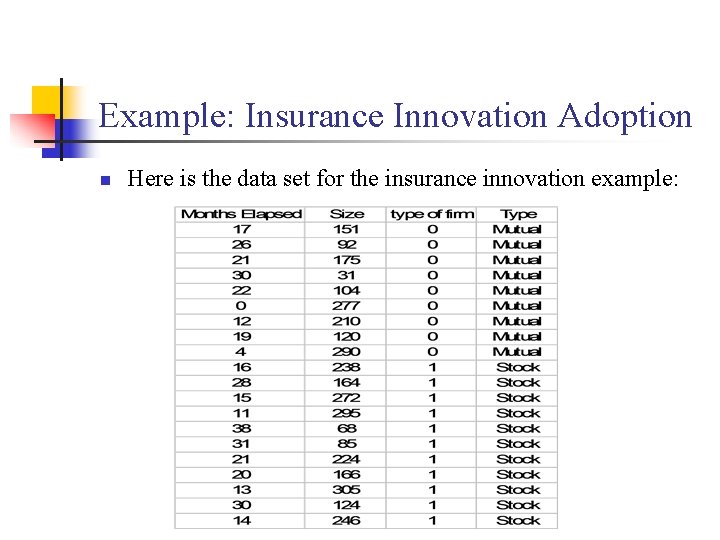

Example: Insurance Innovation Adoption
- Here is the data set for the insurance innovation example:

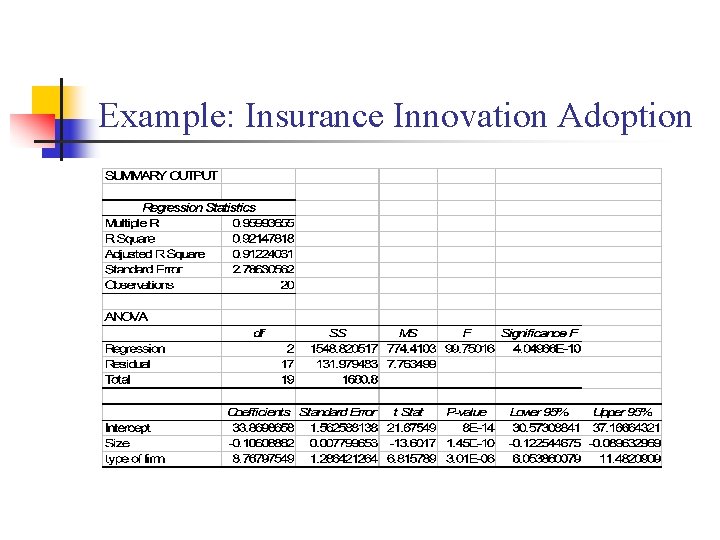

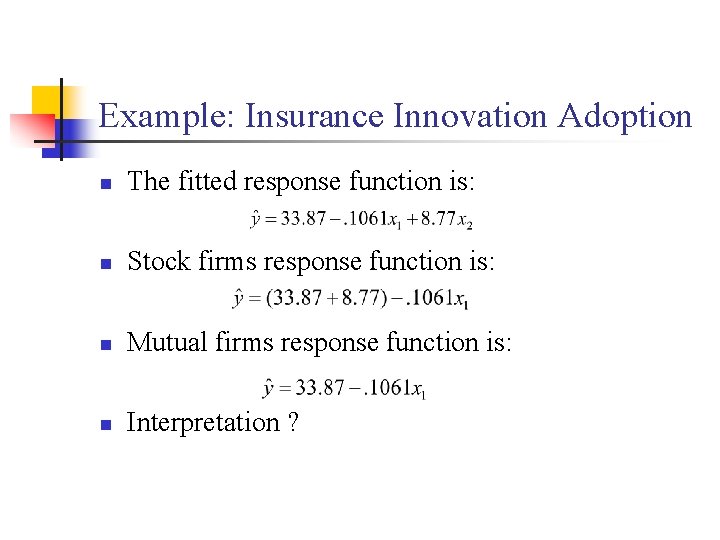

Example: Insurance Innovation Adoption
- Fitting the regression model, the fitted response function is:

Example: Insurance Innovation Adoption

Example: Insurance Innovation Adoption
- The fitted response function is:
- The stock firms' response function is:
- The mutual firms' response function is:
- Interpretation?

Accounting for Seasonality in a Multiple Regression Model
- Seasonal patterns are not easily accounted for by the typical causal variables that we use in regression analysis.
- An indicator variable can be used effectively to account for seasonality in our time series data.
- The number of seasonal indicator variables to use depends on the data.
- If we have $p$ periods in our data series, we cannot use more than $p - 1$ seasonal indicator variables.

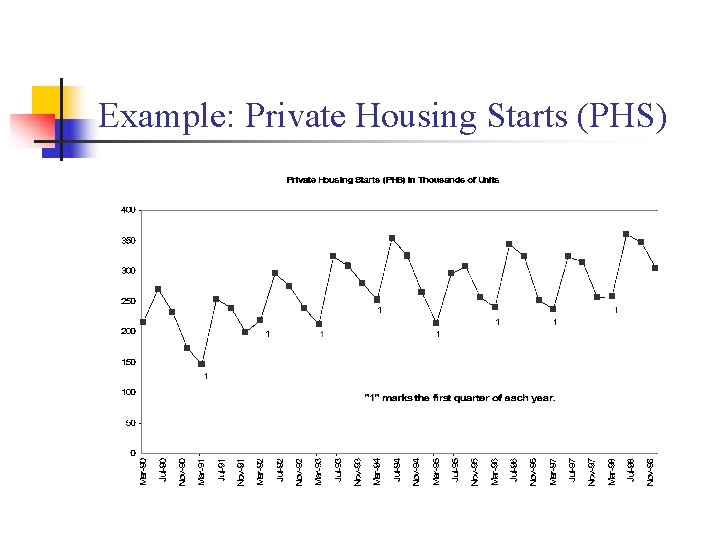

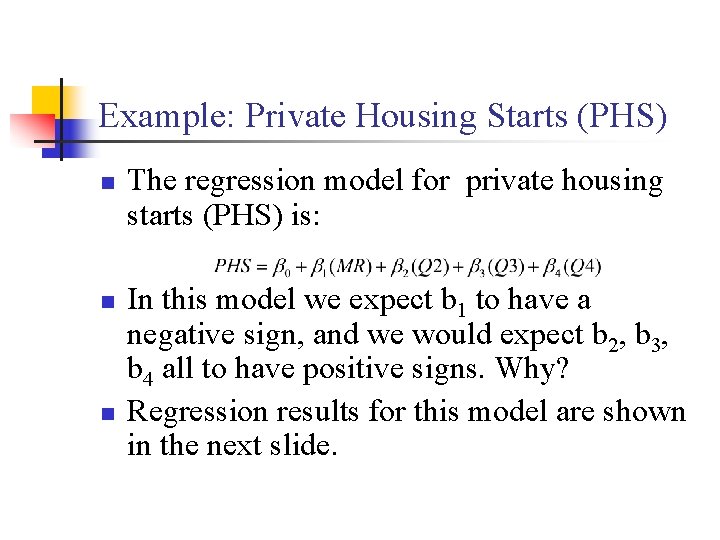

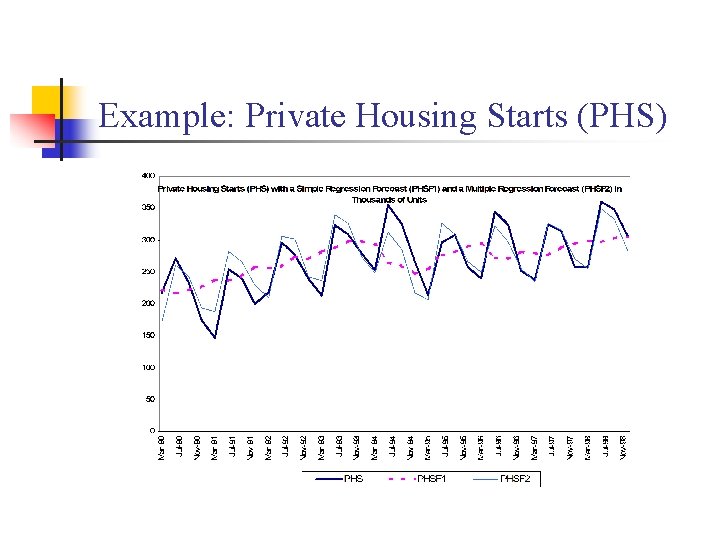

Example: Private Housing Starts (PHS)
- Housing starts in the United States are measured in thousands of units. These data are plotted for 1990Q1 through 1999Q4.
- There are typically few housing starts during the first quarter of the year (January, February, March); there is usually a big increase in the second quarter (April, May, June), followed by some decline in the third quarter (July, August, September), and a further decline in the fourth quarter (October, November, December).

Example: Private Housing Starts (PHS)

Example: Private Housing Starts (PHS)
- To account for and measure this seasonality in a regression model, we will use three dummy variables: Q2 for the second quarter, Q3 for the third quarter, and Q4 for the fourth quarter. These will be coded as follows:
  - Q2 = 1 for all second quarters and zero otherwise.
  - Q3 = 1 for all third quarters and zero otherwise.
  - Q4 = 1 for all fourth quarters and zero otherwise.

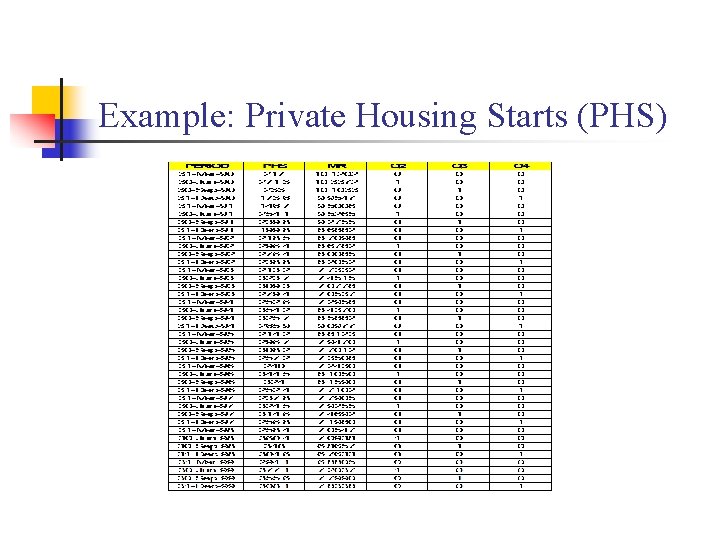

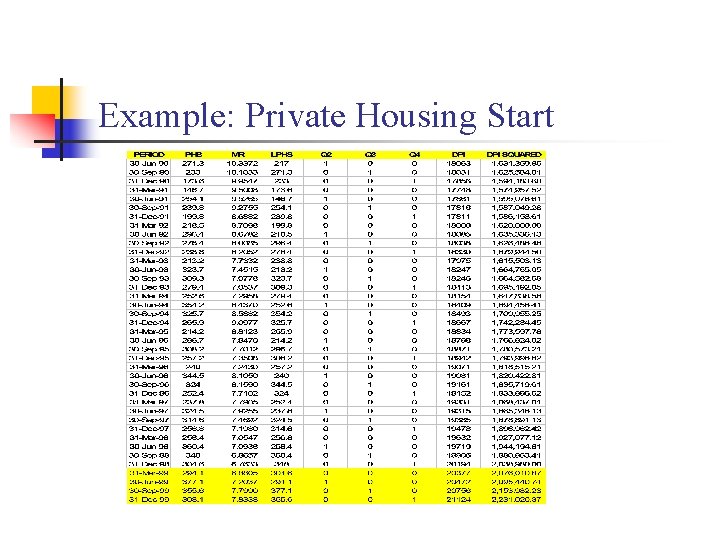

Example: Private Housing Starts (PHS)
- Data for private housing starts (PHS), the mortgage rate (MR), and these seasonal indicator variables are shown in the following slide. Examine the data carefully to verify your understanding of the coding for Q2, Q3, and Q4.
- Since we have assigned dummy variables for the second, third, and fourth quarters, the first quarter is the base quarter for our regression model. Note that any quarter could be used as the base, with indicator variables to adjust for differences in other quarters.
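
The quarterly coding described above, with the first quarter as the base, can be sketched in a few lines. The function name and the tuple layout are illustrative choices; only the coding rule itself comes from the slides.

```python
def quarter_dummies(quarter):
    """Return (Q2, Q3, Q4) for a quarter number 1..4; quarter 1 is the base."""
    if quarter not in (1, 2, 3, 4):
        raise ValueError("quarter must be 1, 2, 3, or 4")
    return (int(quarter == 2), int(quarter == 3), int(quarter == 4))

# One row per quarter from 1990Q1 through 1999Q4: (year, quarter, Q2, Q3, Q4).
coded_quarters = [
    (year, quarter) + quarter_dummies(quarter)
    for year in range(1990, 2000)
    for quarter in (1, 2, 3, 4)
]
# coded_quarters[0]  == (1990, 1, 0, 0, 0)   base quarter: all indicators zero
# coded_quarters[-1] == (1999, 4, 0, 0, 1)
```

Choosing a different base quarter only changes which indicator is omitted; the coefficients are then measured relative to that quarter instead.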

Example: Private Housing Starts (PHS)

Example: Private Housing Starts (PHS)
- The regression model for private housing starts (PHS) is:

$$PHS = b_0 + b_1(MR) + b_2(Q2) + b_3(Q3) + b_4(Q4)$$

- In this model we expect $b_1$ to have a negative sign, and we would expect $b_2$, $b_3$, and $b_4$ all to have positive signs. Why?
- Regression results for this model are shown in the next slide.

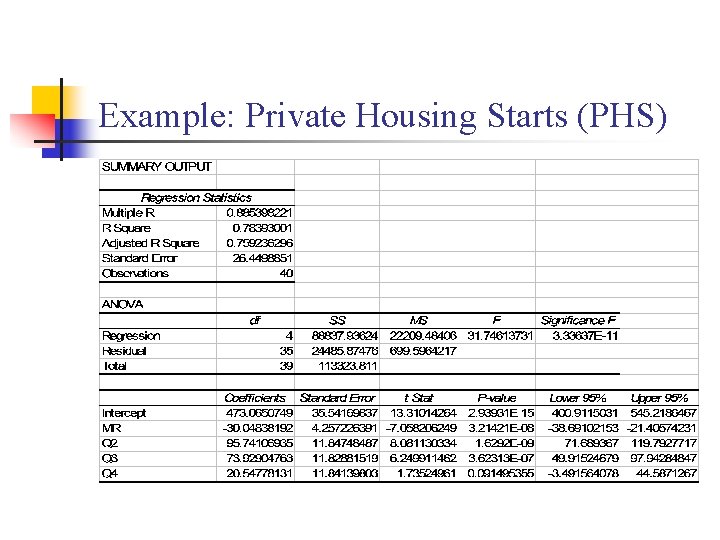

Example: Private Housing Starts (PHS)

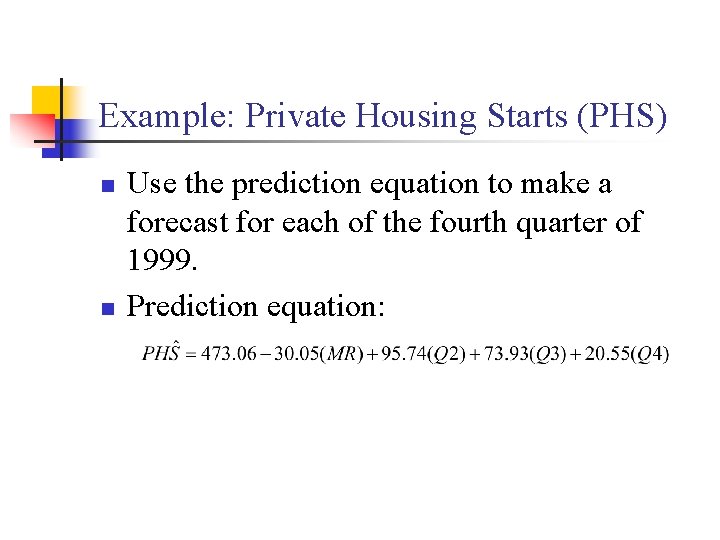

Example: Private Housing Starts (PHS)
- Use the prediction equation to make a forecast for each of the four quarters of 1999.
- Prediction equation:

Example: Private Housing Starts (PHS)

Regression Diagnostics and Residual Analysis
- It is important to check the adequacy of the model before it becomes part of the decision-making process.
- Residual plots can be used to check the model assumptions.
- It is important to study outlying observations to decide whether they should be retained or eliminated and, if retained, whether their influence should be reduced in the fitting process or the regression function should be revised.

Time Series Data and the Problem of Serial Correlation
- In the regression models we assume that the errors $\varepsilon_i$ are independent.
- In business and economics, many regression applications involve time series data.
- For such data, the assumption of uncorrelated or independent error terms is often not appropriate.

Problems of Serial Correlation
- If the error terms in the regression model are autocorrelated, the use of ordinary least squares procedures has a number of important consequences:
  - MSE underestimates the variance of the error terms.
  - The confidence intervals and tests using the t and F distributions are no longer strictly applicable.
  - The standard errors of the regression coefficients underestimate the variability of the estimated regression coefficients.
  - Spurious regression can result.

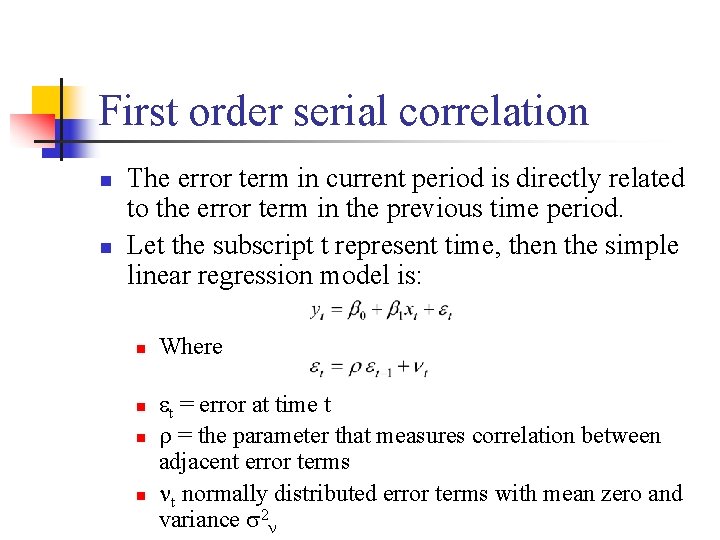

First-order serial correlation
- The error term in the current period is directly related to the error term in the previous time period.
- Let the subscript $t$ represent time; then the simple linear regression model is:

$$y_t = \beta_0 + \beta_1 x_t + \varepsilon_t, \qquad \varepsilon_t = \rho\,\varepsilon_{t-1} + u_t$$

- where
  - $\varepsilon_t$ = error at time $t$
  - $\rho$ = the parameter that measures correlation between adjacent error terms
  - $u_t$ = normally distributed error terms with mean zero and variance $\sigma^2$
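
To get a feel for first-order serial correlation, one can simulate error terms in which each value equals $\rho$ times the previous error plus an independent normal disturbance. This is only an illustrative sketch using the standard library; the function name, the starting value of zero, and the seed are assumptions made here.

```python
import random

def simulate_ar1_errors(n, rho, sigma=1.0, seed=0):
    """Simulate n error terms: error_t = rho * error_(t-1) + disturbance_t.

    Each disturbance is drawn independently from N(0, sigma^2). The error
    before the first period is taken to be zero (an assumption made here).
    """
    rng = random.Random(seed)
    errors = []
    previous = 0.0
    for _ in range(n):
        disturbance = rng.gauss(0.0, sigma)
        current = rho * previous + disturbance
        errors.append(current)
        previous = current
    return errors

# With rho = 0.8 neighbouring errors tend to share the same sign, producing
# long runs above or below zero; with rho = 0 the errors are independent.
errors_correlated = simulate_ar1_errors(5000, rho=0.8)
errors_independent = simulate_ar1_errors(5000, rho=0.0)
```

Plotting the first series against time shows the extended runs of same-signed errors that are characteristic of positive serial correlation.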

Example
- The effect of positive serial correlation in a simple linear regression model:
  - Misleading forecasts of future $y$ values.
  - The standard error of the estimate, $S_{y.x}$, will underestimate the variability of the $y$'s about the true regression line.
  - Strong autocorrelation can make two unrelated variables appear to be related.

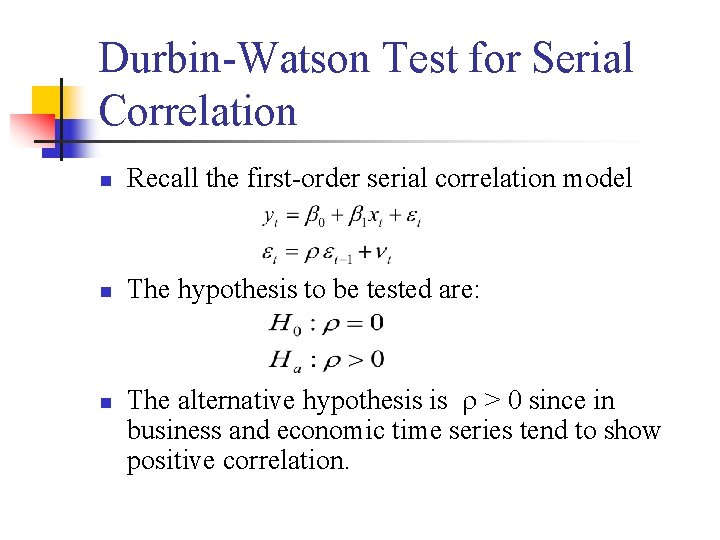

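Durbin-Watson Test for Serial Correlation
- Recall the first-order serial correlation model: $\varepsilon_t = \rho\,\varepsilon_{t-1} + u_t$, where $u_t$ is the independent disturbance term.
- The hypotheses to be tested are: $H_0: \rho = 0$ versus $H_a: \rho > 0$
- The alternative hypothesis is $\rho > 0$ since business and economic time series tend to show positive correlation.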

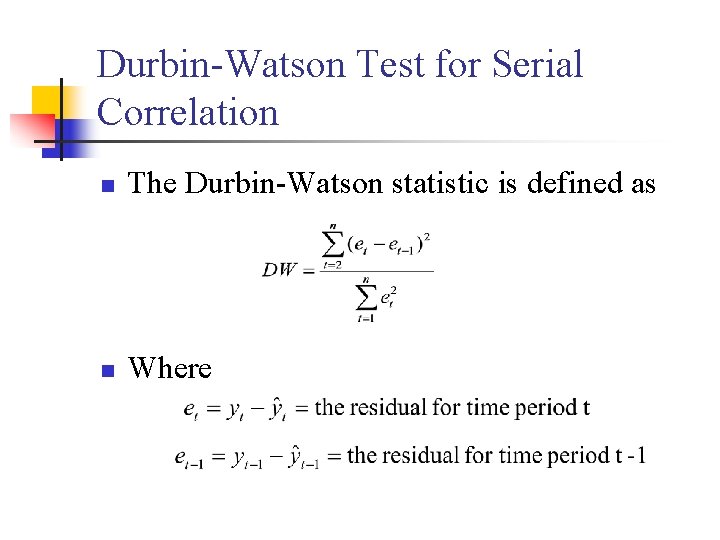

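Durbin-Watson Test for Serial Correlation
- The Durbin-Watson statistic is defined as

$$DW = \frac{\sum_{t=2}^{n} (e_t - e_{t-1})^2}{\sum_{t=1}^{n} e_t^2}$$

- where $e_t = y_t - \hat{y}_t$ is the residual for time period $t$.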

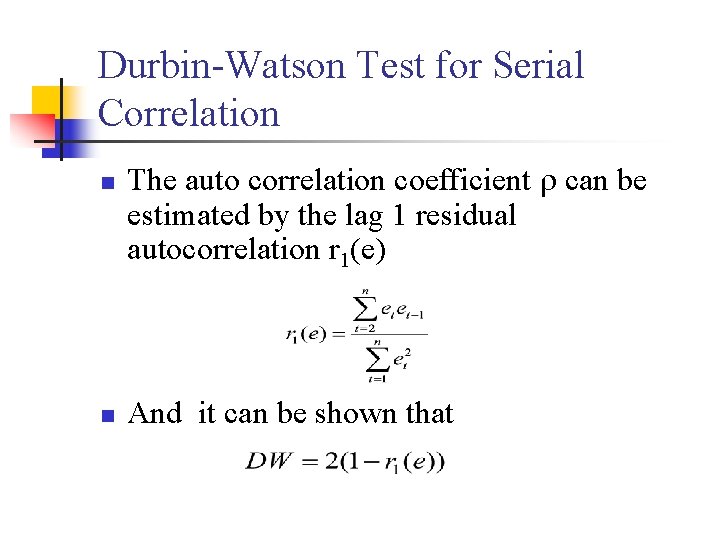

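Durbin-Watson Test for Serial Correlation
- The autocorrelation coefficient $\rho$ can be estimated by the lag 1 residual autocorrelation $r_1(e)$:

$$r_1(e) = \frac{\sum_{t=2}^{n} e_t e_{t-1}}{\sum_{t=1}^{n} e_t^2}$$

- And it can be shown that

$$DW \approx 2\,(1 - r_1(e))$$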

Durbin-Watson Test for Serial Correlation
- Since $-1 < r_1(e) < 1$, then $0 < DW < 4$.
- If $r_1(e) = 0$, then $DW = 2$ (there is no correlation).
- If $r_1(e) > 0$, then $DW < 2$ (positive correlation).
- If $r_1(e) < 0$, then $DW > 2$ (negative correlation).
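
Both the Durbin-Watson statistic and the lag 1 residual autocorrelation are short sums over the residuals, so they are easy to compute by hand or in code. The sketch below uses plain Python; the function names and the two tiny residual series are made up for illustration. Expanding the numerator of DW shows that $DW = 2(1 - r_1(e)) - (e_1^2 + e_n^2)/\sum e_t^2$, which is why the relation to $r_1(e)$ is only approximate in short series.

```python
def durbin_watson(residuals):
    """Sum of squared successive differences divided by sum of squares."""
    numerator = sum(
        (residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals))
    )
    denominator = sum(e * e for e in residuals)
    return numerator / denominator

def lag1_autocorrelation(residuals):
    """Lag 1 residual autocorrelation r1(e)."""
    numerator = sum(residuals[t] * residuals[t - 1] for t in range(1, len(residuals)))
    denominator = sum(e * e for e in residuals)
    return numerator / denominator

# Made-up residuals with a long run of each sign (positive correlation).
runs = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]
dw_runs = durbin_watson(runs)           # 4/6, well below 2
r1_runs = lag1_autocorrelation(runs)    # 0.5

# Made-up residuals that alternate in sign (negative correlation).
alternating = [1.0, -1.0, 1.0, -1.0]
dw_alternating = durbin_watson(alternating)          # 3.0, above 2
r1_alternating = lag1_autocorrelation(alternating)   # -0.75
```

In both cases the direction agrees with the rules listed above: positive $r_1(e)$ goes with DW below 2, negative $r_1(e)$ with DW above 2.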

Durbin-Watson Test for Serial Correlation
- Decision rule:
  - If DW > U, do not reject $H_0$.
  - If DW < L, reject $H_0$.
  - If L ≤ DW ≤ U, the test is inconclusive.
- The critical upper (U) and lower (L) bounds can be found in the Durbin-Watson table of your textbook.
- To use this table you need to know:
  - the significance level ($\alpha$),
  - the number of independent variables in the model ($k$), and
  - the sample size ($n$).

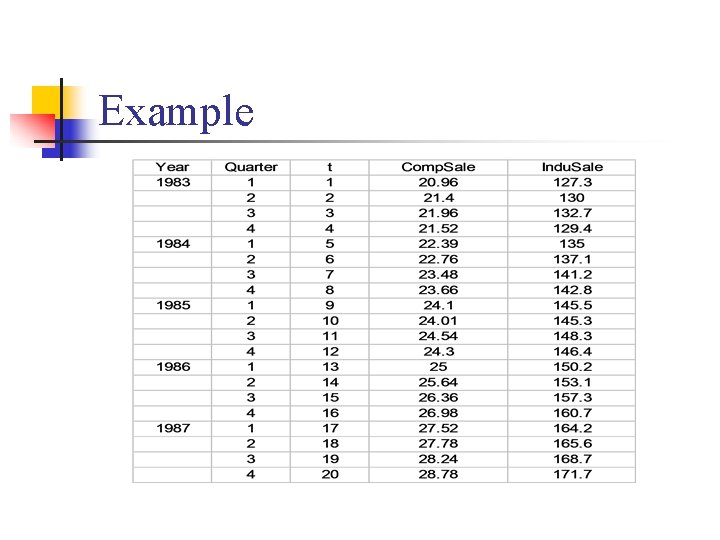

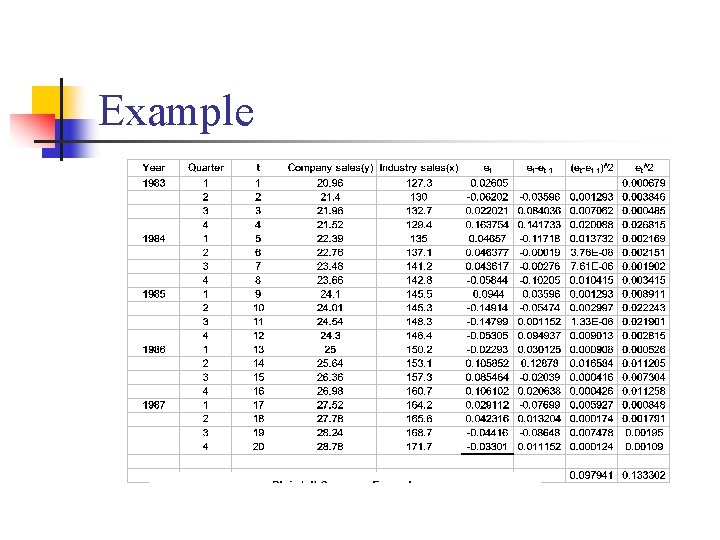

Example
- The Blaisdell Company wished to predict its sales by using industry sales as a predictor variable. The following table gives seasonally adjusted quarterly data on company sales and industry sales for the period 1983-1987.

Example

Example

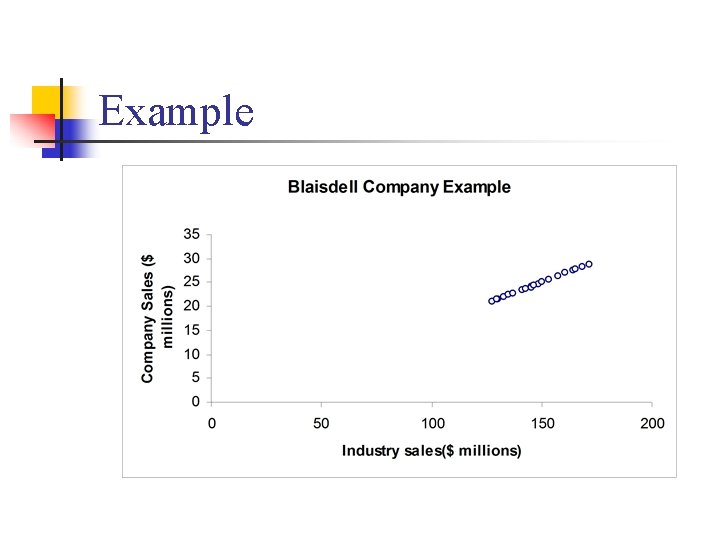

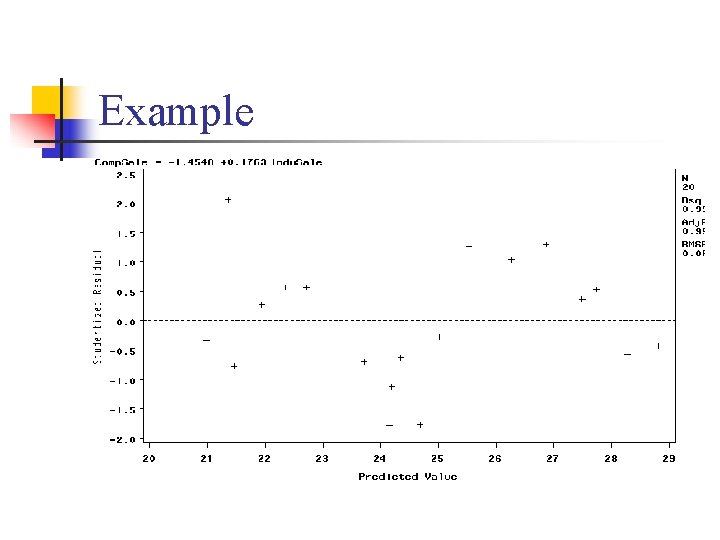

Example
- The scatter plot suggests that a linear regression model is appropriate.
- The least-squares method was used to fit a regression line to the data.
- The residuals were plotted against the fitted values.
- The plot shows that the residuals are consistently above or below the fitted value for extended periods.

Example

Example
- To confirm this graphic diagnosis we will use the Durbin-Watson test for: $H_0: \rho = 0$ versus $H_a: \rho > 0$
- The test statistic is: $DW = .735$

Example

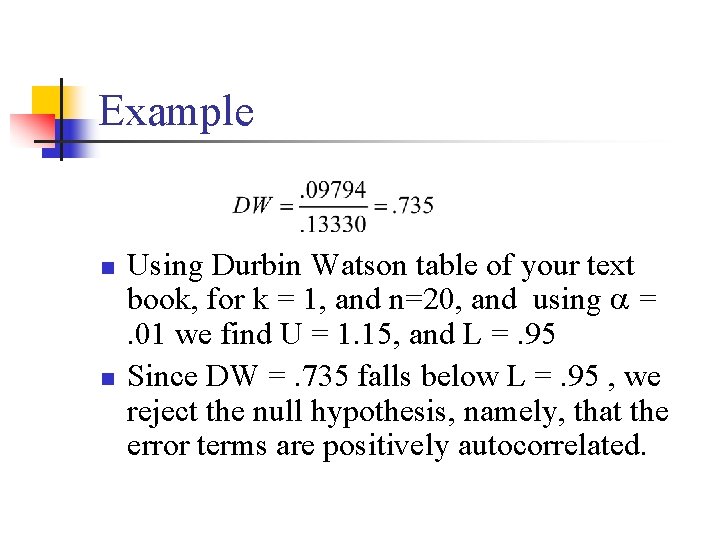

Example
- Using the Durbin-Watson table of your textbook, for $k = 1$, $n = 20$, and $\alpha = .01$, we find U = 1.15 and L = .95.
- Since DW = .735 falls below L = .95, we reject the null hypothesis and conclude that the error terms are positively autocorrelated.
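
The table lookup and comparison in this example follow the Durbin-Watson decision rule for the one-sided test of positive serial correlation, which can be written as a small function. The function name and return strings are illustrative; the bounds L and U still have to be read from a Durbin-Watson table.

```python
def durbin_watson_decision(dw, lower, upper):
    """Apply the decision rule for H0: rho = 0 versus Ha: rho > 0.

    lower and upper are the table bounds L and U for the chosen
    significance level, number of independent variables k, and sample size n.
    """
    if dw < lower:
        return "reject H0"
    if dw > upper:
        return "do not reject H0"
    return "inconclusive"

# Values quoted in the slides for the Blaisdell example (k = 1, n = 20, alpha = .01).
blaisdell_decision = durbin_watson_decision(0.735, lower=0.95, upper=1.15)
# blaisdell_decision == "reject H0"
```

A DW value between the two bounds falls in the inconclusive region, where the table alone does not settle the question.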

Remedial Measures for Serial Correlation
- Addition of one or more independent variables to the regression model.
  - One major cause of autocorrelated error terms is the omission from the model of one or more key variables that have time-ordered effects on the dependent variable.
- Use transformed variables.
  - The regression model is specified in terms of changes rather than levels.
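
Specifying a model "in terms of changes rather than levels" means regressing period-to-period differences of the response on differences of the explanatory variables. A minimal sketch of the transformation, with a hypothetical function name and made-up series:

```python
def first_differences(series):
    """Return the period-to-period changes series[t] - series[t-1]."""
    return [series[t] - series[t - 1] for t in range(1, len(series))]

# Made-up levels for a response y and a predictor x over four periods.
y_levels = [10, 12, 15, 14]
x_levels = [100, 103, 109, 108]
y_changes = first_differences(y_levels)   # [2, 3, -1]
x_changes = first_differences(x_levels)   # [3, 6, -1]
```

The differenced series has one fewer observation than the original, and the regression is then fitted to `y_changes` and `x_changes` instead of the levels.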

Extensions of the Multiple Regression Model
- In some situations, nonlinear terms may be needed as independent variables in a regression analysis.
  - Business or economic logic may suggest that nonlinearity is expected.
  - A graphic display of the data may be helpful in determining whether nonlinearity is present.
  - One common economic cause for nonlinearity is diminishing returns.
    - For example, the effect of advertising on sales may diminish as increased advertising is used.

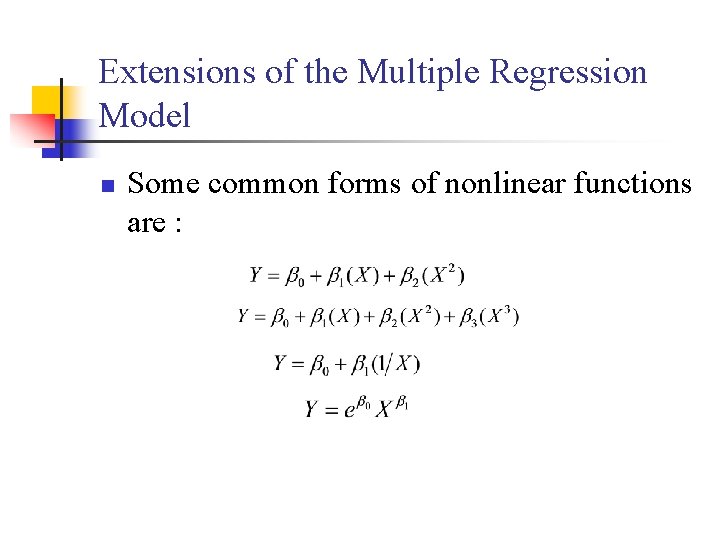

Extensions of the Multiple Regression Model
- Some common forms of nonlinear functions are:

Extensions of the Multiple Regression Model
- To illustrate the use and interpretation of a nonlinear term, we return to the problem of developing a forecasting model for private housing starts (PHS).
- So far we have looked at the following model:

$$PHS = b_0 + b_1(MR) + b_2(Q2) + b_3(Q3) + b_4(Q4)$$

- where MR is the mortgage rate and Q2, Q3, and Q4 are indicator variables for quarters 2, 3, and 4.

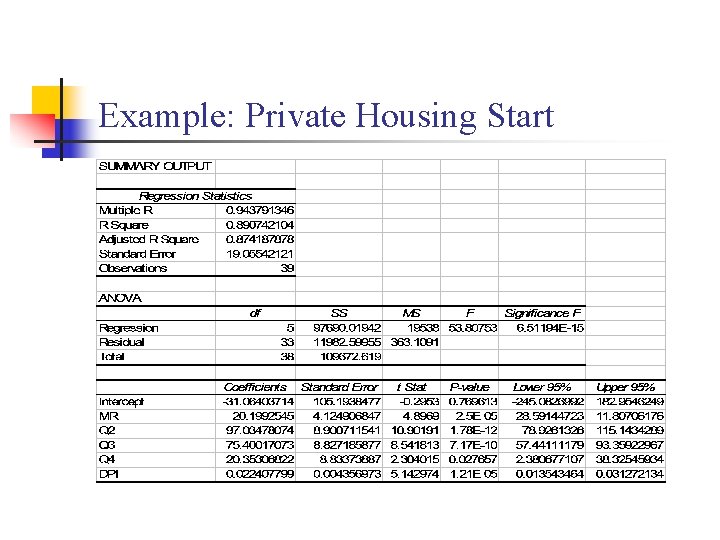

Example: Private Housing Start
- First we add real disposable personal income per capita (DPI) as an independent variable. Our new model for this data set is:

$$PHS = b_0 + b_1(MR) + b_2(Q2) + b_3(Q3) + b_4(Q4) + b_5(DPI)$$

- Regression results for this model are shown in the next slide.

Example: Private Housing Start

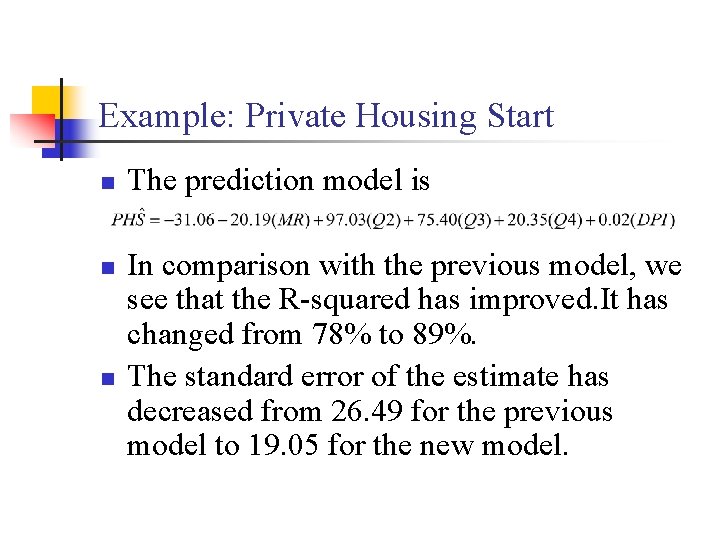

Example: Private Housing Start
- The prediction model is
- In comparison with the previous model, we see that the R-squared has improved. It has changed from 78% to 89%.
- The standard error of the estimate has decreased from 26.49 for the previous model to 19.05 for the new model.

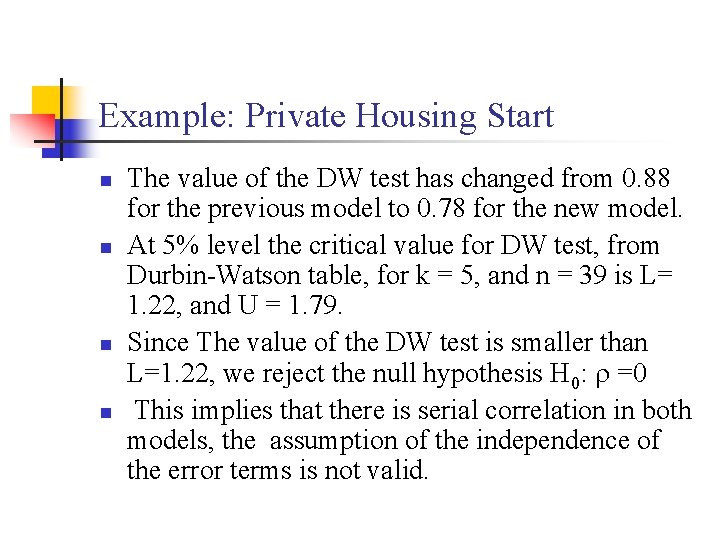

Example: Private Housing Start
- The value of the DW test has changed from 0.88 for the previous model to 0.78 for the new model.
- At the 5% level, the critical values for the DW test from the Durbin-Watson table, for $k = 5$ and $n = 39$, are L = 1.22 and U = 1.79. Since the value of the DW test is smaller than L = 1.22, we reject the null hypothesis $H_0: \rho = 0$. This implies that there is serial correlation in both models; the assumption of the independence of the error terms is not valid.

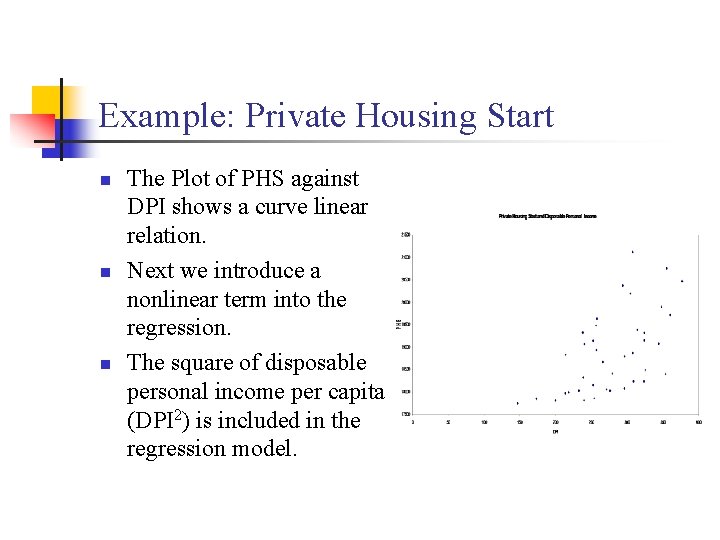

Example: Private Housing Start
- The plot of PHS against DPI shows a curvilinear relation.
- Next we introduce a nonlinear term into the regression.
- The square of disposable personal income per capita (DPI²) is included in the regression model.

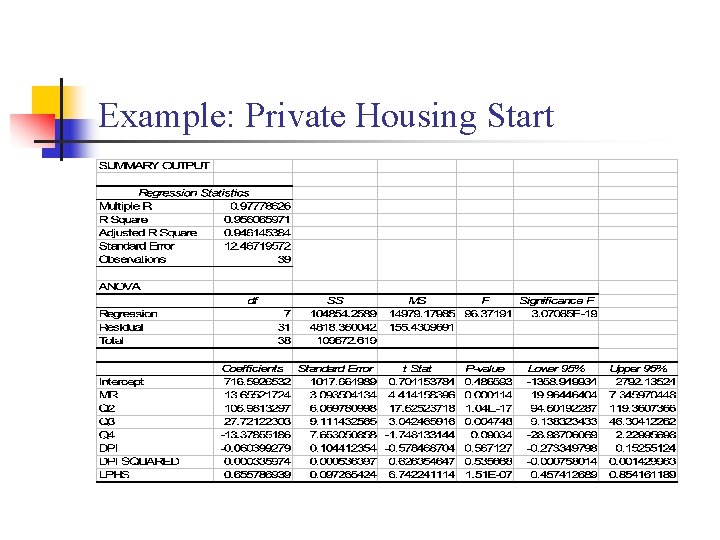

Example: Private Housing Start
- We also add the dependent variable, lagged one quarter, as an independent variable in order to help reduce serial correlation.
- The third model that we fit to our data set is:

$$PHS_t = b_0 + b_1(MR_t) + b_2(Q2) + b_3(Q3) + b_4(Q4) + b_5(DPI_t) + b_6(DPI_t^2) + b_7(PHS_{t-1})$$

- Regression results for this model are shown in the next slide.
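
Preparing the data for a model with a squared term and a lagged dependent variable amounts to adding two derived columns. The sketch below is illustrative only: the function name, the column labels `DPI_SQ` and `LPHS`, and all numbers are made up and are not the slide data.

```python
def build_model_rows(phs, mr, dpi, quarters):
    """Build one row per quarter with DPI squared and PHS lagged one quarter.

    The first observation is dropped because it has no previous-quarter PHS.
    """
    if not (len(phs) == len(mr) == len(dpi) == len(quarters)):
        raise ValueError("all series must have the same length")
    rows = []
    for t in range(1, len(phs)):
        quarter = quarters[t]
        rows.append({
            "PHS": phs[t],
            "MR": mr[t],
            "Q2": int(quarter == 2),
            "Q3": int(quarter == 3),
            "Q4": int(quarter == 4),
            "DPI": dpi[t],
            "DPI_SQ": dpi[t] ** 2,   # nonlinear term
            "LPHS": phs[t - 1],      # dependent variable lagged one quarter
        })
    return rows

# Hypothetical values for five consecutive quarters (not the slide data).
phs_demo = [200.0, 300.0, 280.0, 250.0, 210.0]
mr_demo = [10.0, 10.5, 10.2, 10.0, 9.8]
dpi_demo = [18.0, 18.1, 18.3, 18.4, 18.6]
quarters_demo = [1, 2, 3, 4, 1]
rows_demo = build_model_rows(phs_demo, mr_demo, dpi_demo, quarters_demo)
```

Because the lag uses up the first observation, the fitted model has one fewer data point than the original series.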

Example: Private Housing Start

Example: Private Housing Start
- The inclusion of DPI² and lagged PHS has increased the R-squared to 96%.
- The standard error of the estimate has decreased to 12.45.
- The value of the DW test has increased to 2.32, which is greater than U = 1.79 and rules out positive serial correlation.
- The third model worked best for this data set.
- The following slide gives the data set.

Example: Private Housing Start
