Appendix A: Probability Theory

Many processes inevitably involve uncertainties, which may result from error, noise, imprecision, incompleteness, distortion, etc. Probability theory provides a mathematical framework for dealing with uncertainties.

A.1 Probabilistic Models -- A probabilistic model is a mathematical description of a process under an uncertain situation (i.e., a random process).

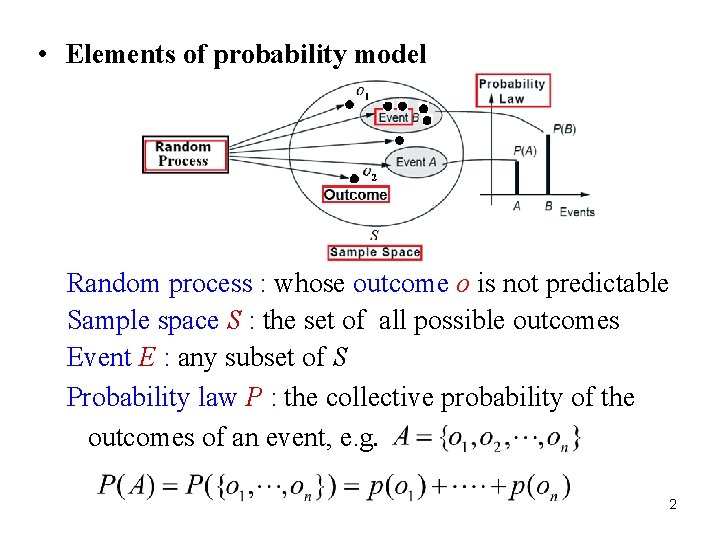

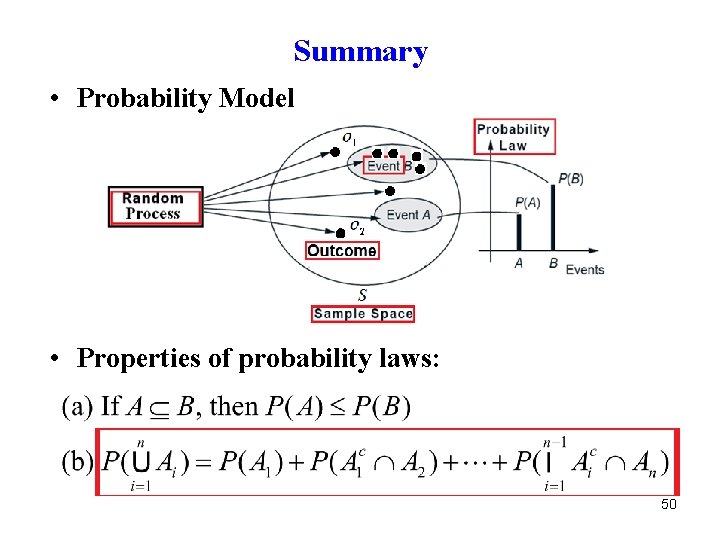

• Elements of a probability model
Random process: a process whose outcome o is not predictable
Sample space S: the set of all possible outcomes
Event E: any subset of S
Probability law P: assigns to an event the collective probability of its outcomes, e.g., for a discrete sample space, P(E) = Σ_{o ∈ E} P(o)

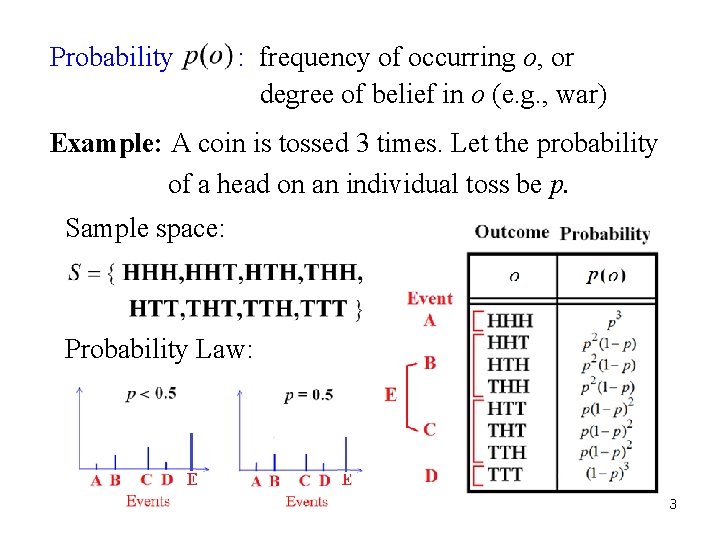

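Probability: the frequency with which o occurs, or the degree of belief in o (e.g., war).

Example: A coin is tossed 3 times. Let the probability of a head on an individual toss be p.
Sample space: S = {HHH, HHT, HTH, HTT, THH, THT, TTH, TTT}
Probability law: assuming independent tosses, an outcome o with k heads has P(o) = p^k (1 - p)^(3 - k), e.g., P(HHT) = p^2 (1 - p)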

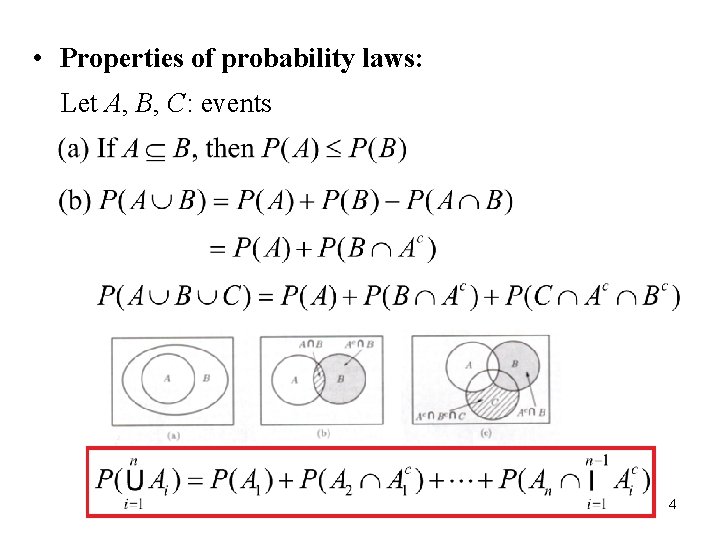
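The coin example can be checked by enumeration. The following is a minimal sketch, assuming independent tosses; the function name `coin_toss_law` is hypothetical and chosen only for illustration.

```python
from itertools import product

def coin_toss_law(p, n=3):
    """Map each outcome of n tosses (e.g. 'HHT') to its probability.

    Assumes independent tosses with head probability p.
    """
    law = {}
    for outcome in product("HT", repeat=n):
        k = outcome.count("H")  # number of heads in this outcome
        law["".join(outcome)] = p**k * (1 - p) ** (n - k)
    return law

law = coin_toss_law(p=0.5)
print(sorted(law))        # the outcomes that make up the sample space
print(sum(law.values()))  # a probability law must sum to 1 over S
```

Summing the values over any subset of outcomes gives the probability of the corresponding event.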

• Properties of probability laws: Let A, B, C be events.

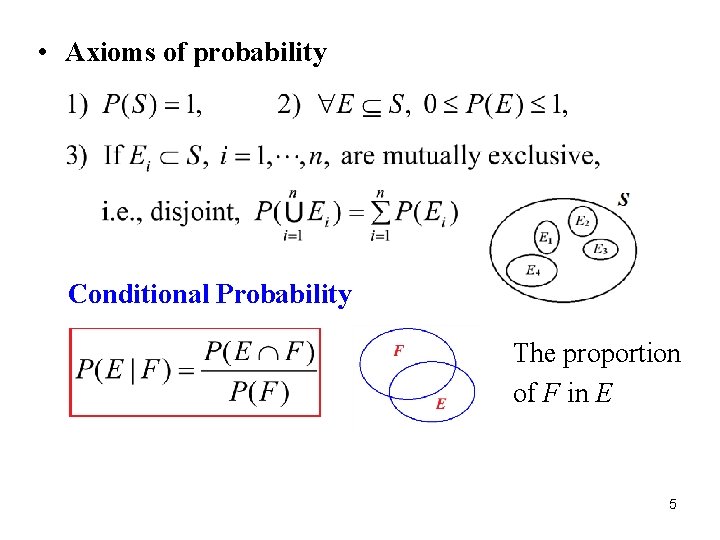

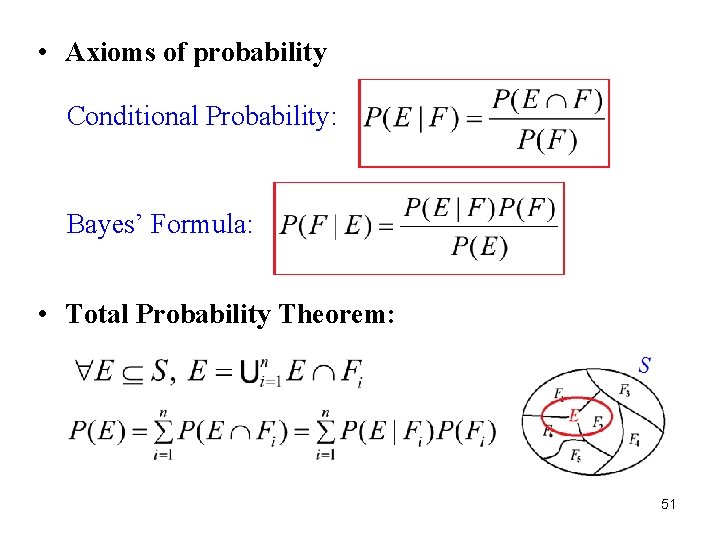

• Axioms of probability

Conditional Probability
P(F | E) is the proportion of F in E: P(F | E) = P(E ∩ F) / P(E), for P(E) > 0

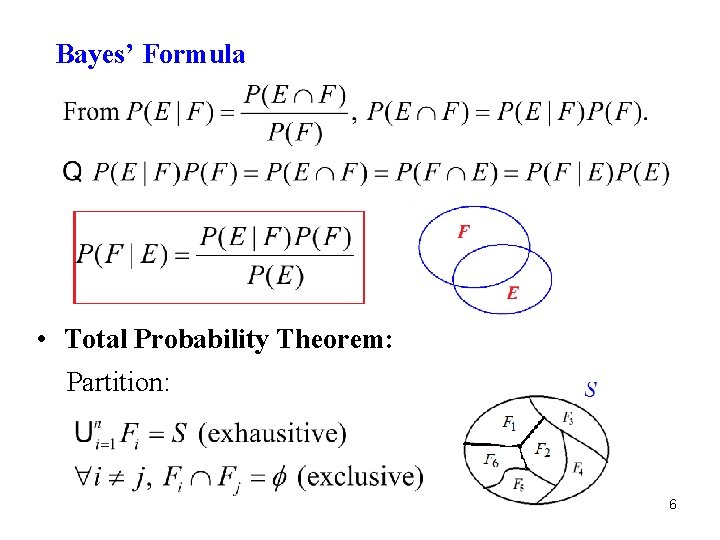

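Bayes’ Formula: P(A | B) = P(B | A) P(A) / P(B)
• Total Probability Theorem: if A_1, ..., A_n form a partition of S, then P(B) = Σ_i P(B | A_i) P(A_i)
Partition: A_i ∩ A_j = ∅ for i ≠ j, and A_1 ∪ ... ∪ A_n = S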

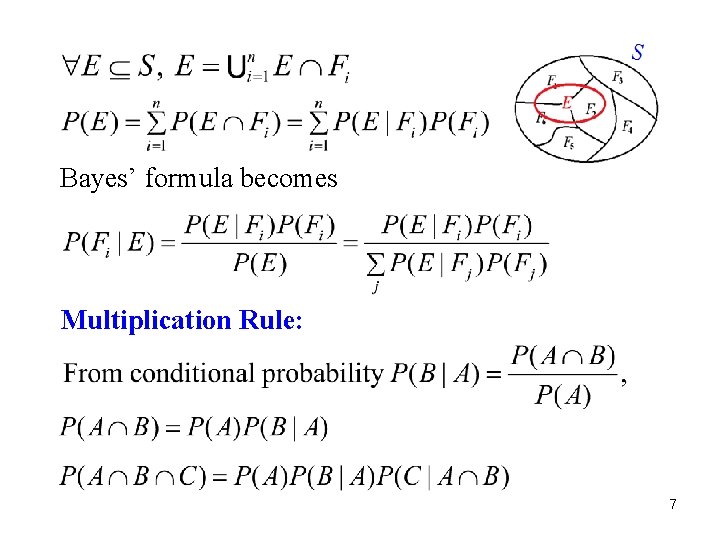

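For a partition A_1, ..., A_n of S, Bayes’ formula becomes P(A_i | B) = P(B | A_i) P(A_i) / Σ_j P(B | A_j) P(A_j)

Multiplication Rule: P(A_1 ∩ A_2 ∩ ... ∩ A_n) = P(A_1) P(A_2 | A_1) ... P(A_n | A_1 ∩ ... ∩ A_{n-1})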

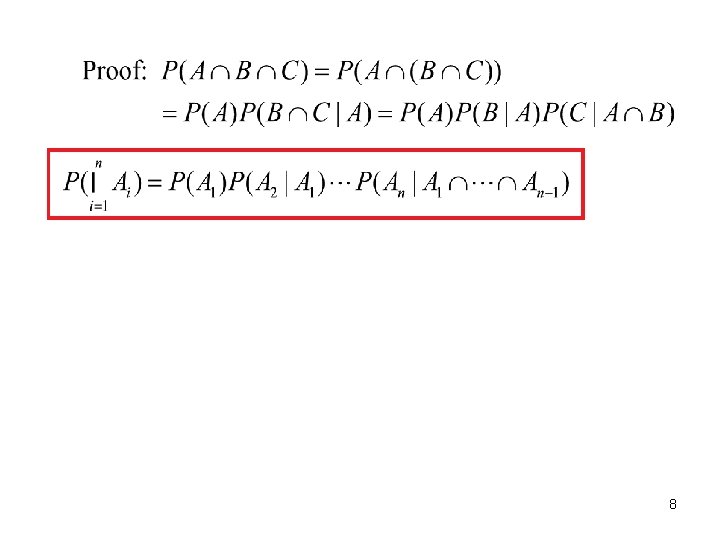
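As an illustration of the total probability theorem and Bayes’ formula over a partition, here is a small sketch. The three-machine scenario and all numbers are hypothetical, not taken from the slides.

```python
from fractions import Fraction

# Hypothetical partition: every item is made by exactly one of A1, A2, A3.
prior = {"A1": Fraction(1, 2), "A2": Fraction(3, 10), "A3": Fraction(1, 5)}
# Hypothetical likelihoods P(B | A_i), where B = "item is defective".
likelihood = {"A1": Fraction(1, 100), "A2": Fraction(2, 100), "A3": Fraction(5, 100)}

def total_probability(prior, likelihood):
    """P(B) = sum_i P(B | A_i) P(A_i)."""
    return sum(prior[a] * likelihood[a] for a in prior)

def posterior(prior, likelihood):
    """P(A_i | B) for every A_i, by Bayes' formula."""
    evidence = total_probability(prior, likelihood)
    return {a: prior[a] * likelihood[a] / evidence for a in prior}

p_b = total_probability(prior, likelihood)
post = posterior(prior, likelihood)
print(p_b)   # 21/1000
print(post)  # posteriors 5/21, 2/7, 10/21
```

Exact fractions are used so the posteriors can be checked by hand; they sum to 1 because the A_i partition the sample space.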


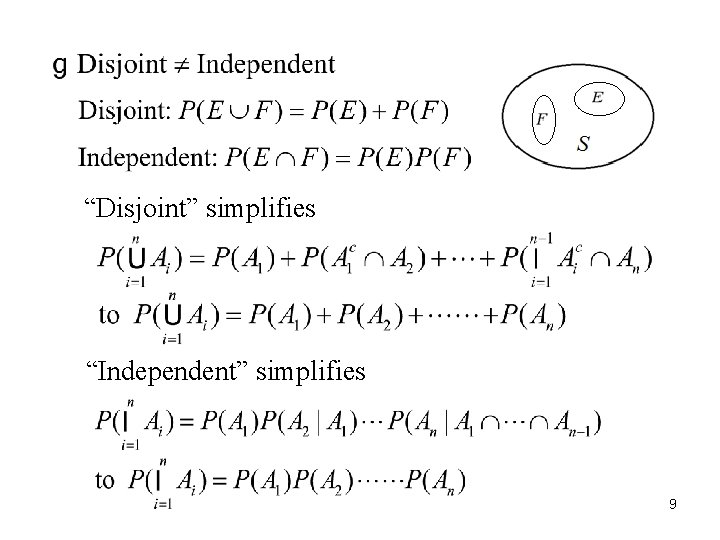

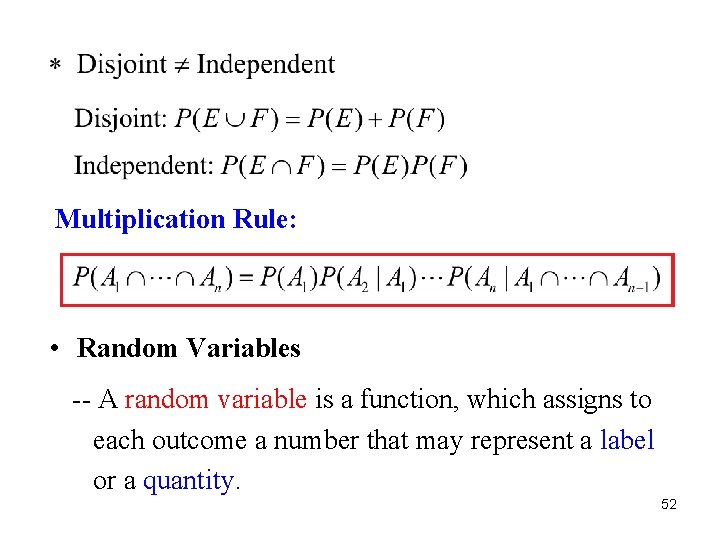

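“Disjoint” simplifies the union: if A ∩ B = ∅, then P(A ∪ B) = P(A) + P(B)
“Independent” simplifies the intersection: if A and B are independent, then P(A ∩ B) = P(A) P(B)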

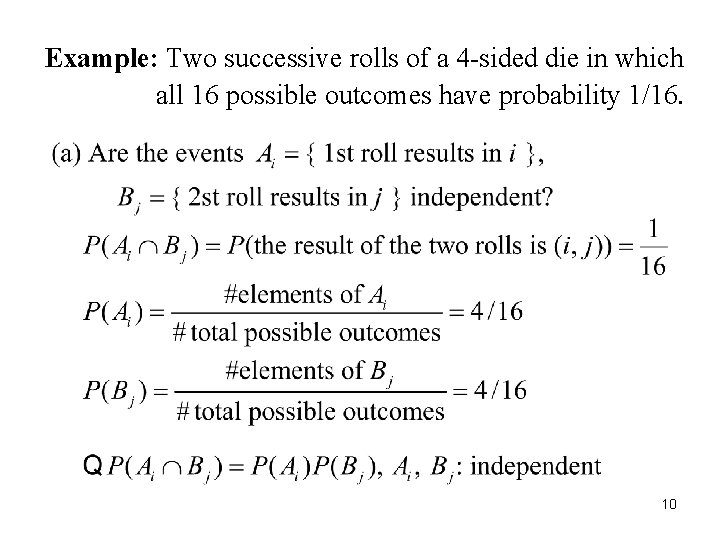

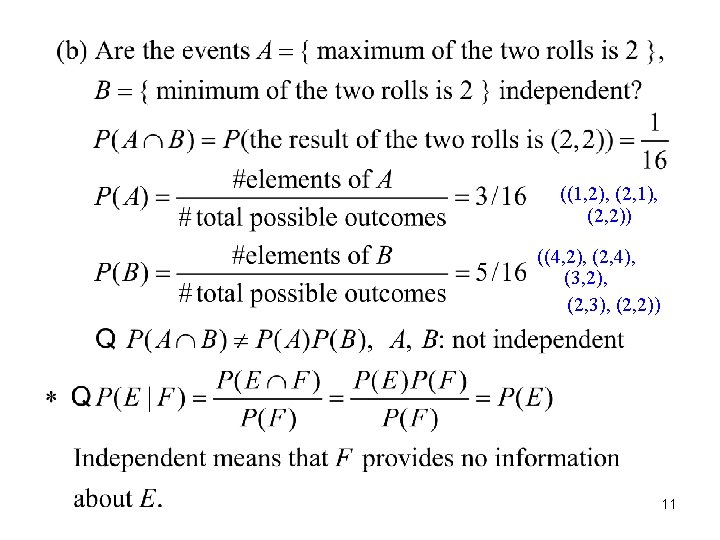

Example: Two successive rolls of a 4-sided die in which all 16 possible outcomes have probability 1/16.

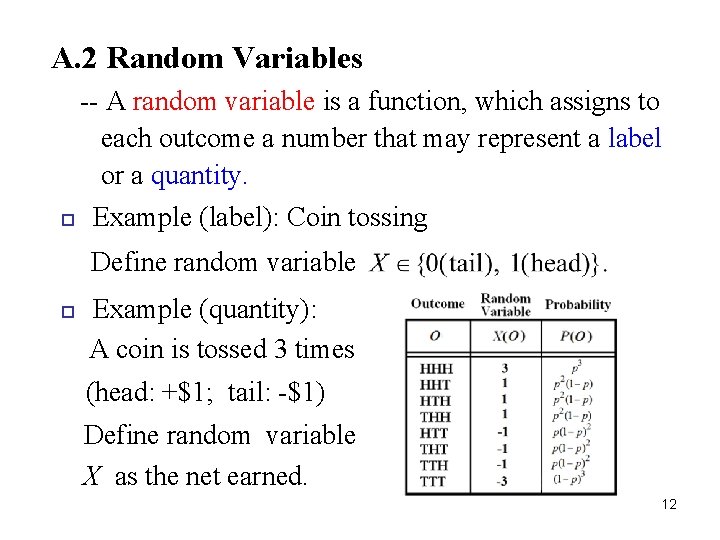
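Independence in the two-roll example can be tested by enumerating the 16 outcomes. The events A, B, C, D below are hypothetical choices for illustration; the events used on the slide itself are not recoverable from the text.

```python
from fractions import Fraction
from itertools import product

outcomes = list(product(range(1, 5), repeat=2))  # 16 equally likely pairs

def prob(event):
    """Probability of an event given as a predicate on an outcome."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def independent(e1, e2):
    """True when P(e1 and e2) == P(e1) P(e2)."""
    return prob(lambda o: e1(o) and e2(o)) == prob(e1) * prob(e2)

A = lambda o: o[0] == 1         # first roll is 1
B = lambda o: o[0] + o[1] == 5  # the two rolls sum to 5
C = lambda o: max(o) == 2       # maximum of the rolls is 2
D = lambda o: min(o) == 2       # minimum of the rolls is 2

print(independent(A, B))  # True:  1/16 == 1/4 * 1/4
print(independent(C, D))  # False: 1/16 != 3/16 * 5/16
```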

A.2 Random Variables -- A random variable is a function that assigns to each outcome a number, which may represent a label or a quantity.

Example (label): Coin tossing. Define random variable X, e.g., X(head) = 1 and X(tail) = 0.
Example (quantity): A coin is tossed 3 times (head: +$1; tail: -$1). Define random variable X as the net amount earned.

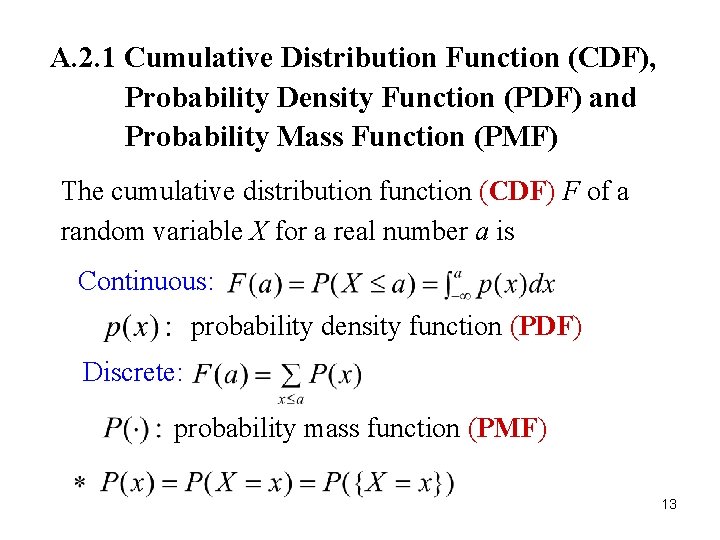

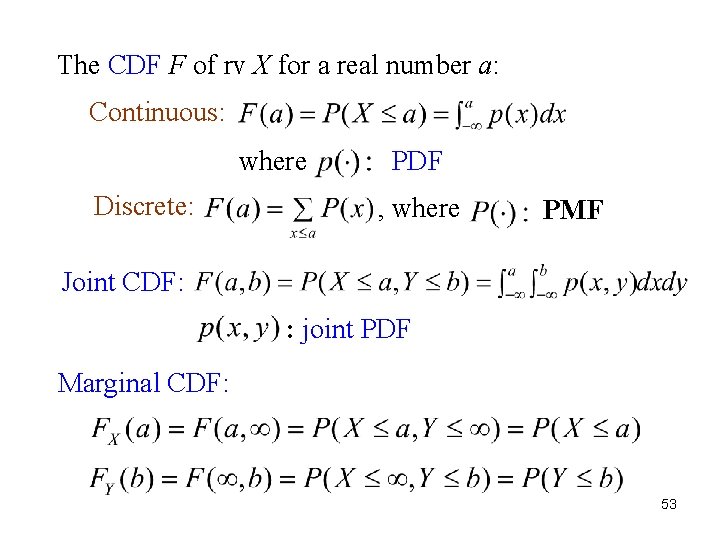

A.2.1 Cumulative Distribution Function (CDF), Probability Density Function (PDF) and Probability Mass Function (PMF)

The cumulative distribution function (CDF) F of a random variable X for a real number a is F(a) = P(X ≤ a).
Continuous: F(a) = ∫_{-∞}^{a} p(x) dx, where p is the probability density function (PDF)
Discrete: F(a) = Σ_{x ≤ a} P(x), where P is the probability mass function (PMF)

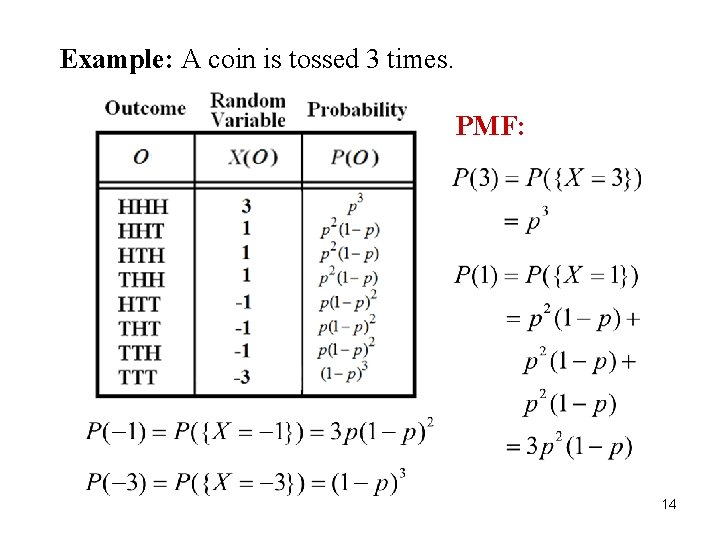

Example: A coin is tossed 3 times.
PMF:

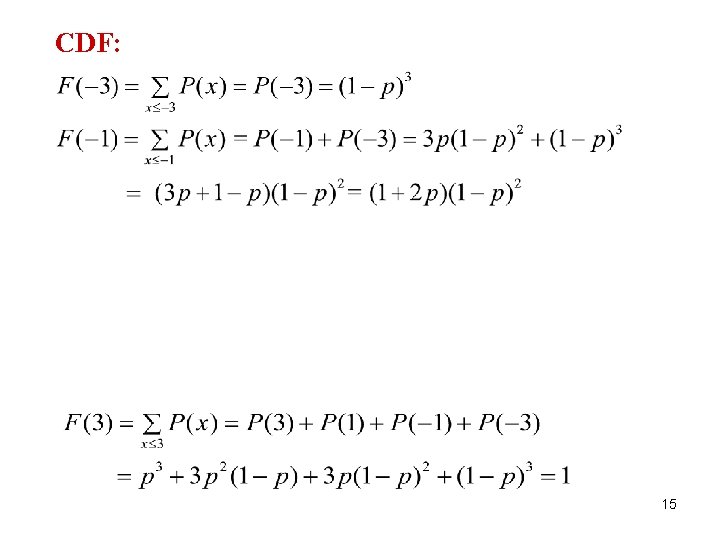

CDF:

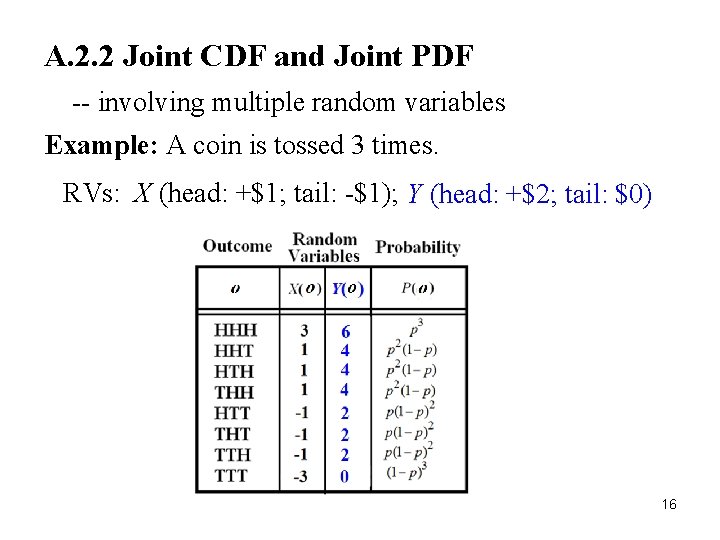
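The PMF and CDF of the net amount earned in three tosses (head: +$1; tail: -$1) can be tabulated directly. This sketch assumes a fair coin by default; the helper names are hypothetical.

```python
from fractions import Fraction
from itertools import product

def pmf_net_earned(p=Fraction(1, 2), n=3):
    """PMF of X = (#heads) - (#tails) over n independent tosses."""
    pmf = {}
    for tosses in product("HT", repeat=n):
        heads = tosses.count("H")
        x = heads - (n - heads)  # +$1 per head, -$1 per tail
        prob = p**heads * (1 - p) ** (n - heads)
        pmf[x] = pmf.get(x, 0) + prob
    return pmf

def cdf(pmf, a):
    """F(a) = P(X <= a) for a discrete random variable."""
    return sum(prob for x, prob in pmf.items() if x <= a)

pmf = pmf_net_earned()
print(pmf)          # X takes the values -3, -1, 1, 3
print(cdf(pmf, 0))  # 1/2
```

The CDF is a step function: it jumps by P(x) at each value x that X can take and is flat in between.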

A.2.2 Joint CDF and Joint PDF -- involving multiple random variables

Example: A coin is tossed 3 times.
RVs: X (head: +$1; tail: -$1); Y (head: +$2; tail: $0)

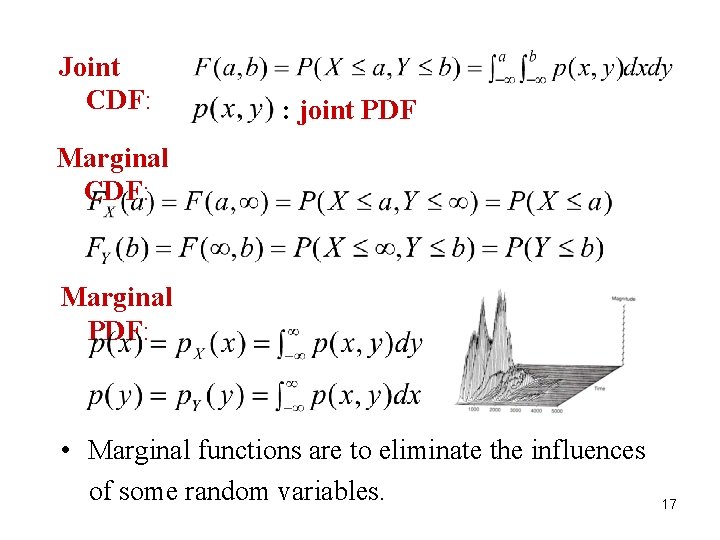

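Joint CDF: F(a, b) = P(X ≤ a, Y ≤ b) = ∫_{-∞}^{a} ∫_{-∞}^{b} p(x, y) dy dx, where p(x, y) is the joint PDF
Marginal CDF: F_X(a) = P(X ≤ a) = F(a, ∞)
Marginal PDF: p_X(x) = ∫ p(x, y) dy
• Marginal functions eliminate the influence of some of the random variables.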

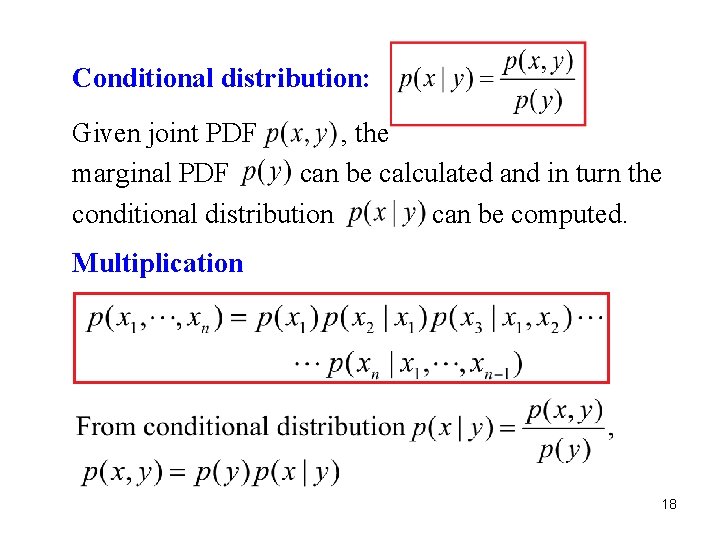

Conditional distribution: p(x | y) = p(x, y) / p_Y(y). Given the joint PDF p(x, y), the marginal PDF p_Y(y) can be calculated, and in turn the conditional distribution can be computed.
Multiplication rule: p(x, y) = p(x | y) p_Y(y)

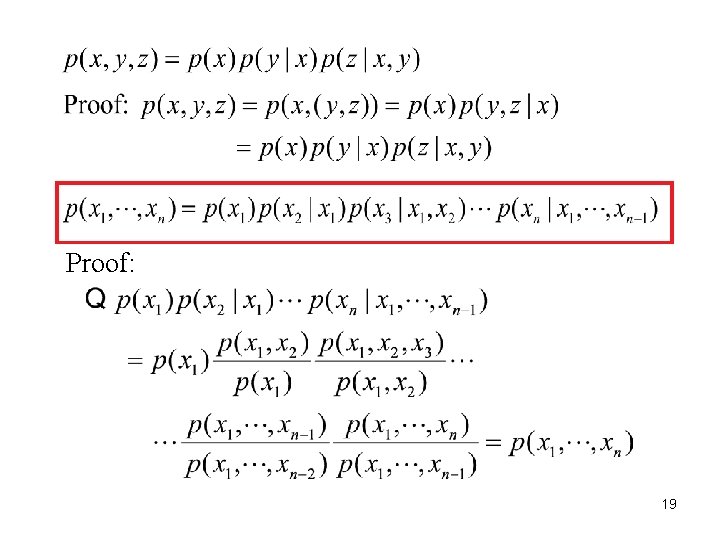
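The chain from joint to marginal to conditional distribution can be sketched for a discrete case. The pair (X, Y) below is a hypothetical choice (heads in the first two tosses and heads in the last two tosses of a fair coin), picked so that the two variables are dependent without being deterministic functions of one another.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# Joint PMF of the hypothetical pair; 1 = head, 0 = tail, fair coin assumed.
joint = defaultdict(Fraction)
for t in product((0, 1), repeat=3):
    x, y = t[0] + t[1], t[1] + t[2]
    joint[(x, y)] += Fraction(1, 8)

def marginal(joint, axis):
    """Sum the joint PMF over the other variable (axis 0 -> X, 1 -> Y)."""
    out = defaultdict(Fraction)
    for pair, pr in joint.items():
        out[pair[axis]] += pr
    return dict(out)

def conditional_x_given_y(joint, y):
    """P(X = x | Y = y) = P(x, y) / P_Y(y)."""
    p_y = marginal(joint, 1)[y]
    return {x: pr / p_y for (x, yy), pr in joint.items() if yy == y}

p_x = marginal(joint, 0)
p_y = marginal(joint, 1)
print(p_x)
print(conditional_x_given_y(joint, 1))
```

Multiplying each conditional value by the marginal P_Y(y) recovers the joint PMF, which is the multiplication rule read in reverse.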

Proof:

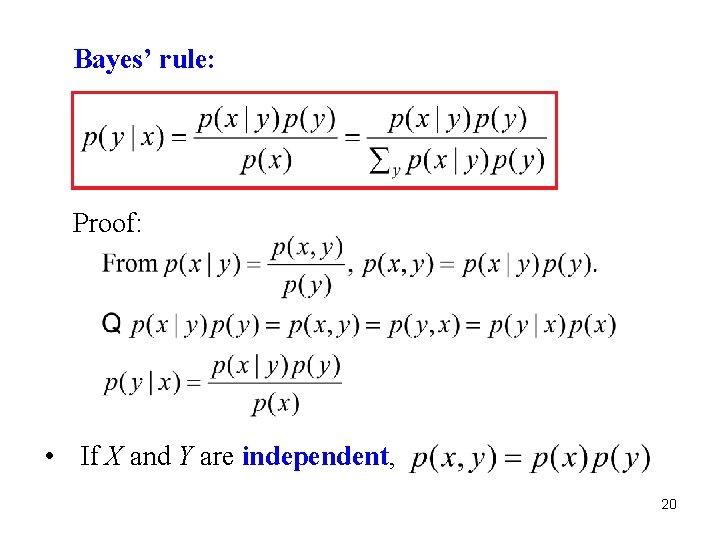

Bayes’ rule: p(y | x) = p(x | y) p_Y(y) / p_X(x)
Proof:
• If X and Y are independent, p(x, y) = p_X(x) p_Y(y)

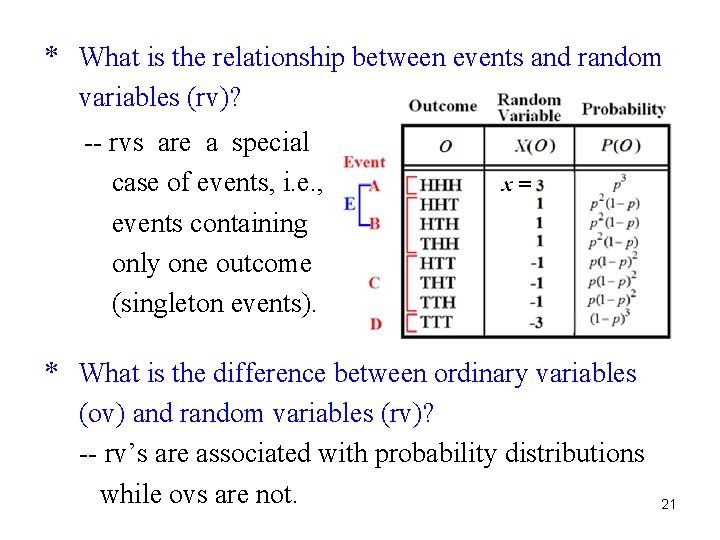

* What is the relationship between events and random variables (rvs)? -- rvs are a special case of events, i.e., events containing only one outcome (singleton events).
* What is the difference between ordinary variables (ovs) and random variables (rvs)? -- rvs are associated with probability distributions while ovs are not.

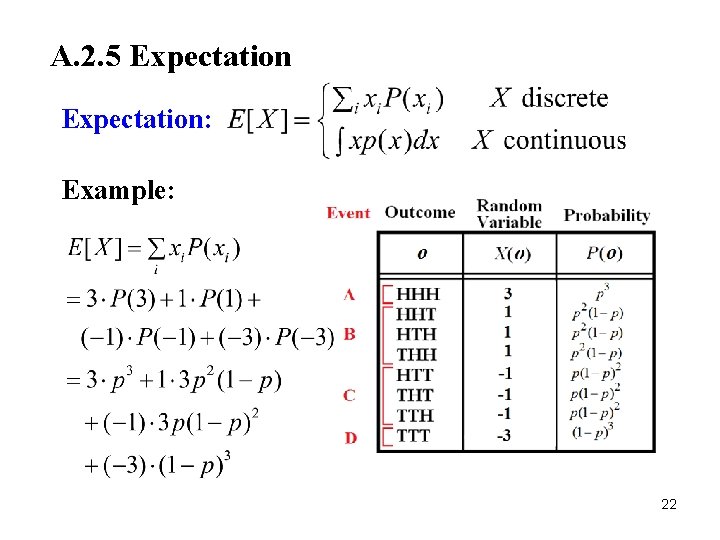

A.2.5 Expectation: E[X] = Σ_x x P(x) (discrete), or E[X] = ∫ x p(x) dx (continuous)
Example:

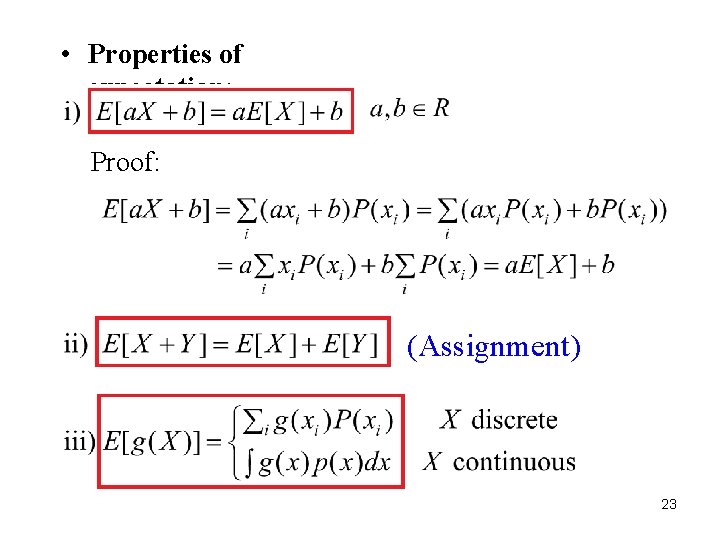

• Properties of expectation:
Proof: (Assignment)

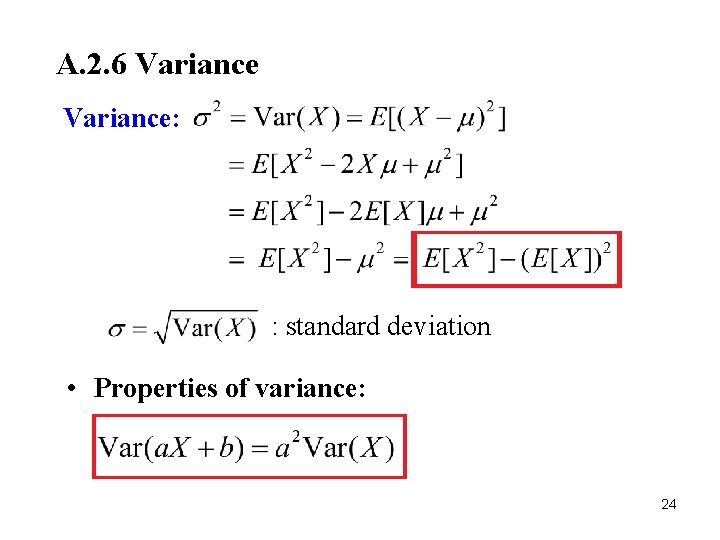
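Expectation and its linearity can be checked on a small PMF. The sketch below uses the net amount earned in three tosses of a fair coin (the fair coin is an assumption) and a hypothetical helper `expectation`.

```python
from fractions import Fraction

# PMF of the net amount earned in three fair tosses (+$1 head, -$1 tail).
pmf = {-3: Fraction(1, 8), -1: Fraction(3, 8), 1: Fraction(3, 8), 3: Fraction(1, 8)}

def expectation(pmf, g=lambda x: x):
    """E[g(X)] = sum_x g(x) P(x) for a discrete random variable."""
    return sum(g(x) * p for x, p in pmf.items())

mean = expectation(pmf)                          # E[X]
second = expectation(pmf, lambda x: x * x)       # E[X^2]
shifted = expectation(pmf, lambda x: 2 * x + 5)  # E[2X + 5]

print(mean, second, shifted)  # 0 3 5
```

Here E[2X + 5] = 2 E[X] + 5, an instance of linearity, and E[X^2] - E[X]^2 = 3 is the variance of X.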

A.2.6 Variance: Var(X) = E[(X - E[X])^2] = σ^2, where σ is the standard deviation
• Properties of variance:

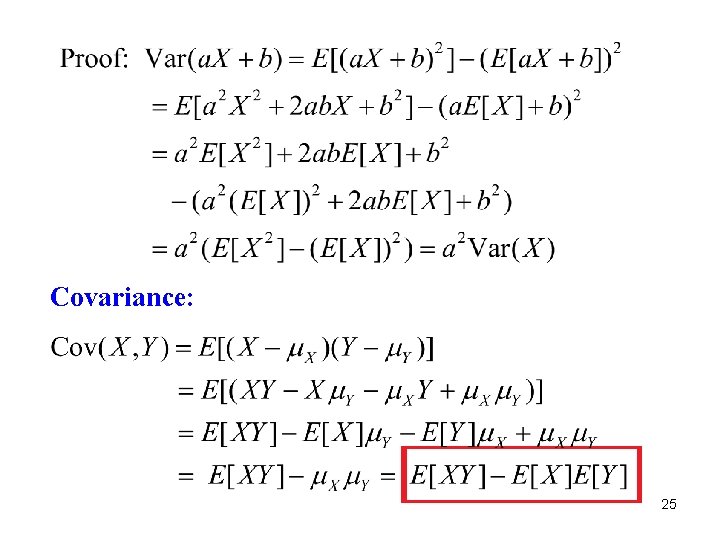

Covariance: Cov(X, Y) = E[(X - E[X])(Y - E[Y])]

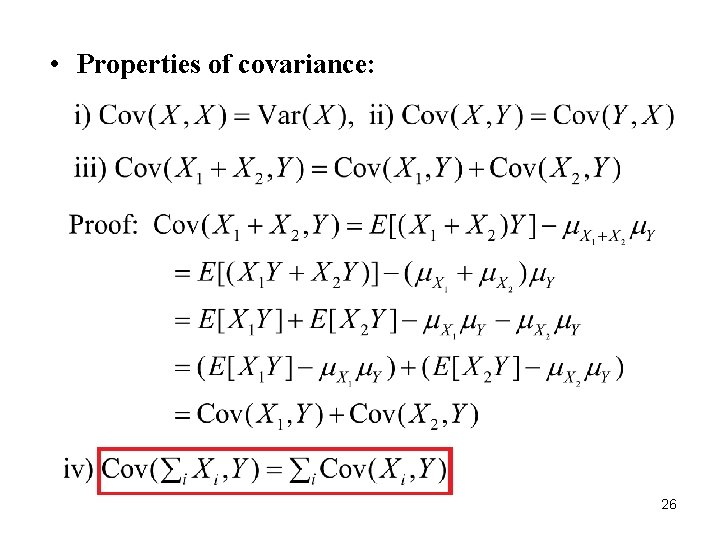

• Properties of covariance:

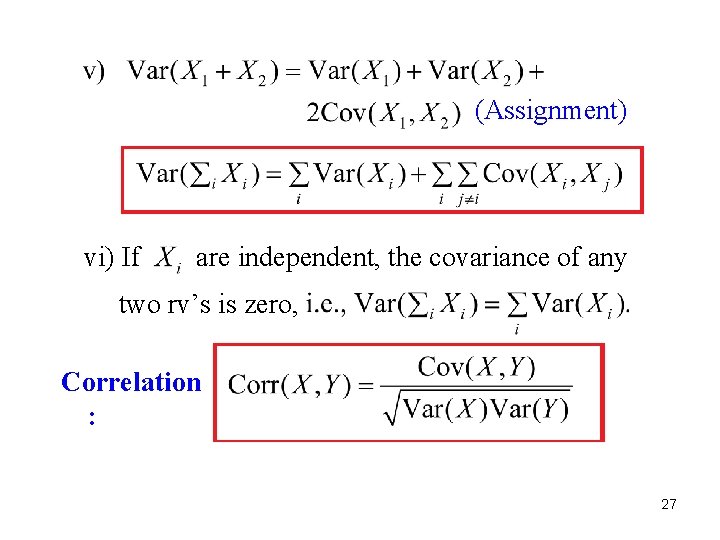

(Assignment)
vi) If X_1, ..., X_n are independent, the covariance of any two rvs is zero, i.e., Cov(X_i, X_j) = 0 for i ≠ j
Correlation: Corr(X, Y) = Cov(X, Y) / (σ_X σ_Y)

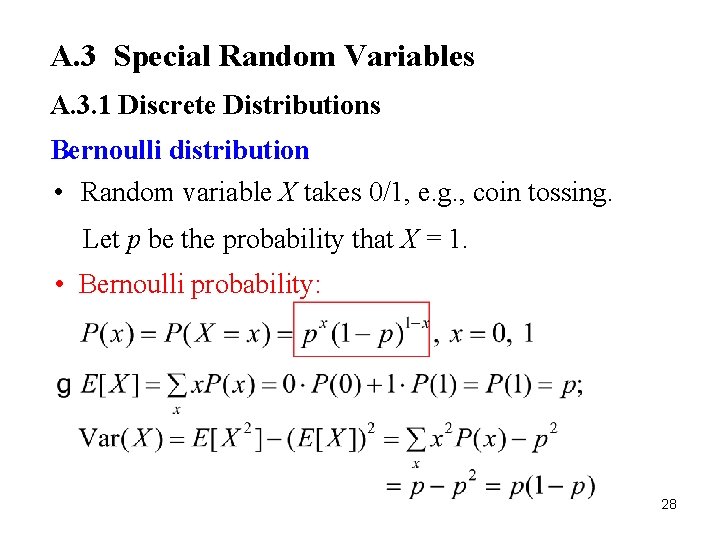

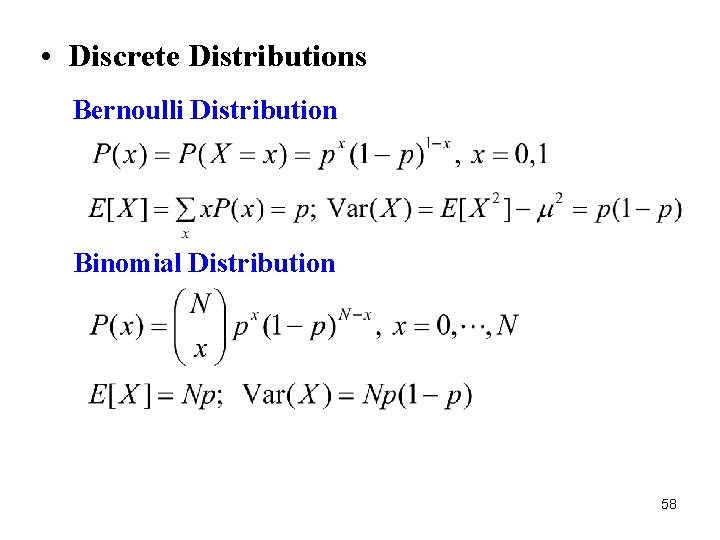
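Covariance and correlation can be computed by enumeration for the three-toss experiment. This sketch assumes a fair coin and uses the rvs X (head: +$1; tail: -$1) and Y (head: +$2; tail: $0); the helper names `E`, `cov`, and `corr` are hypothetical.

```python
from fractions import Fraction
from itertools import product
from math import sqrt

outcomes = list(product((0, 1), repeat=3))  # 1 = head, 0 = tail
prob = Fraction(1, len(outcomes))           # fair coin assumed

def E(f):
    """Expectation of a function of the outcome."""
    return sum(f(o) * prob for o in outcomes)

def cov(f, g):
    """Cov(f, g) = E[fg] - E[f] E[g]."""
    return E(lambda o: f(o) * g(o)) - E(f) * E(g)

def corr(f, g):
    """Correlation: covariance divided by both standard deviations."""
    return float(cov(f, g)) / sqrt(float(cov(f, f)) * float(cov(g, g)))

X = lambda o: sum(1 if t else -1 for t in o)  # +$1 per head, -$1 per tail
Y = lambda o: 2 * sum(o)                      # +$2 per head, $0 per tail
first = lambda o: o[0]                        # indicator of head on toss 1
second = lambda o: o[1]                       # indicator of head on toss 2

print(cov(X, Y), corr(X, Y))  # 3 1.0
print(cov(first, second))     # 0
```

X and Y are both linear in the number of heads, so their correlation is 1, while the indicators of two different tosses are independent and have covariance 0.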

A.3 Special Random Variables
A.3.1 Discrete Distributions

Bernoulli distribution
• Random variable X takes 0/1, e.g., coin tossing. Let p be the probability that X = 1.
• Bernoulli probability: P(X = x) = p^x (1 - p)^(1 - x), x ∈ {0, 1}

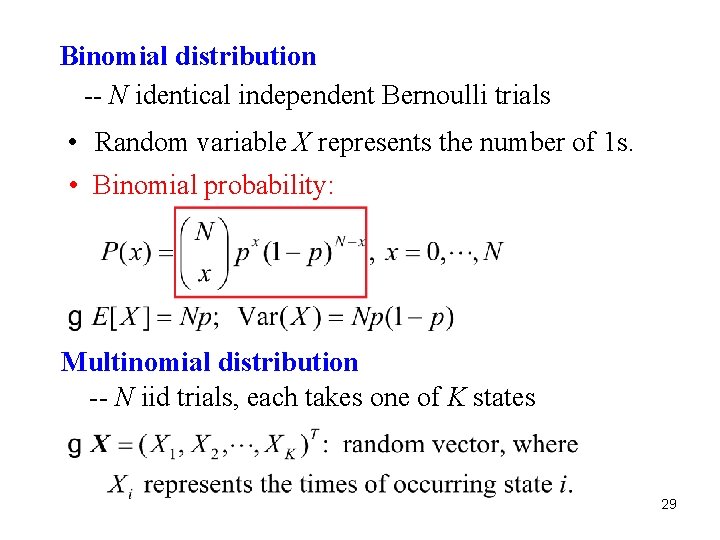

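Binomial distribution -- N identical independent Bernoulli trials
• Random variable X represents the number of 1s.
• Binomial probability: P(X = k) = C(N, k) p^k (1 - p)^(N - k), k = 0, 1, ..., N, where p is the probability of a 1 in each trial

Multinomial distribution -- N iid trials, each of which takes one of K states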

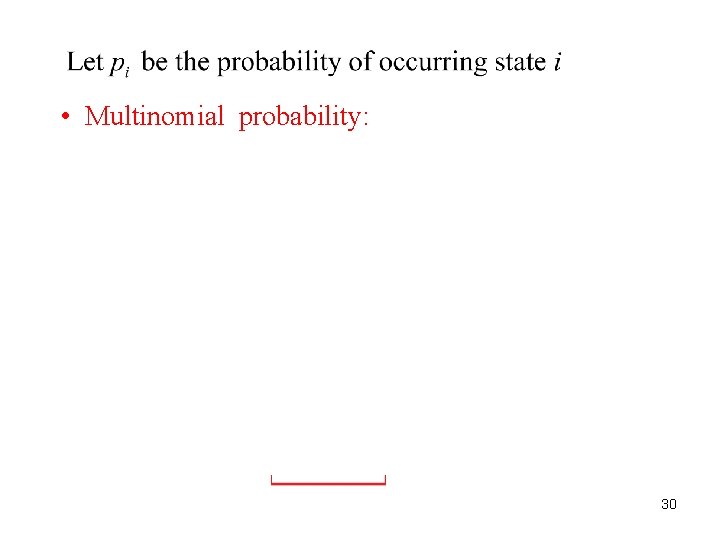

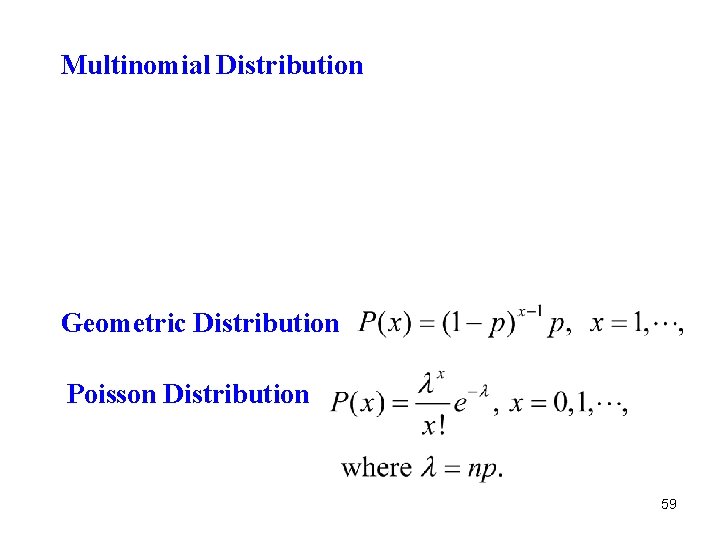

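• Multinomial probability: P(N_1, ..., N_K) = N! / (N_1! ... N_K!) p_1^(N_1) ... p_K^(N_K), where N_i is the number of trials in state i, p_i is the probability of state i, and N_1 + ... + N_K = N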

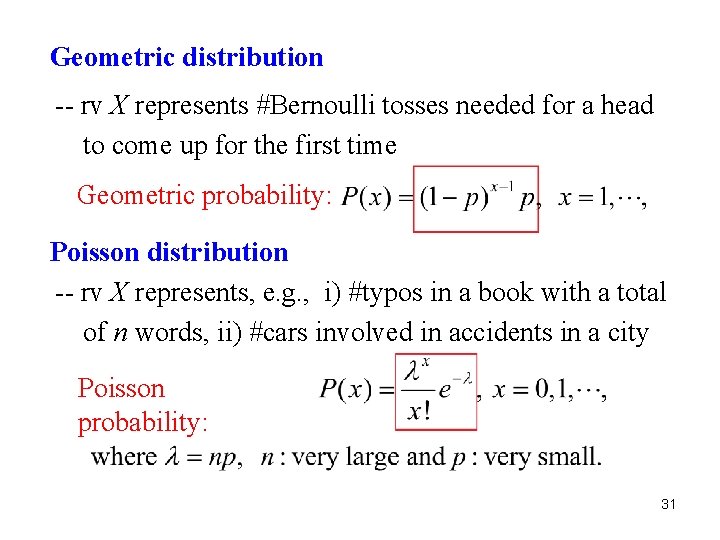

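Geometric distribution -- rv X represents the number of Bernoulli tosses needed for a head to come up for the first time
Geometric probability: P(X = k) = (1 - p)^(k - 1) p, k = 1, 2, ..., where p is the probability of a head

Poisson distribution -- rv X represents, e.g., i) the number of typos in a book with a total of n words, ii) the number of cars involved in accidents in a city
Poisson probability: P(X = k) = e^(-λ) λ^k / k!, k = 0, 1, 2, ..., where λ is the expected count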

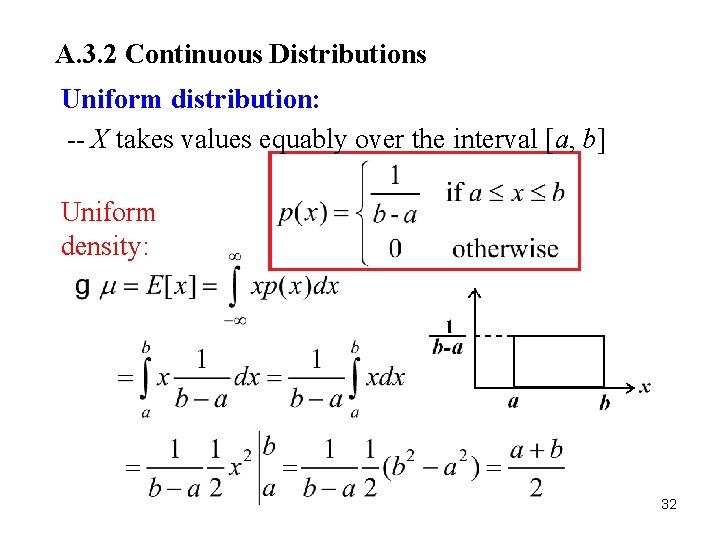
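The discrete PMFs of this subsection are short enough to write out and sanity-check. This is a sketch using the standard textbook forms; the function names and the parameter name `lam` are assumptions.

```python
from math import comb, exp, factorial

def bernoulli_pmf(x, p):
    """P(X = x) for x in {0, 1}."""
    return p**x * (1 - p) ** (1 - x)

def binomial_pmf(k, n, p):
    """Probability of k ones in n independent Bernoulli(p) trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def geometric_pmf(k, p):
    """Probability that the first head occurs on toss k (k = 1, 2, ...)."""
    return (1 - p) ** (k - 1) * p

def poisson_pmf(k, lam):
    """Probability of k events when the expected count is lam."""
    return exp(-lam) * lam**k / factorial(k)

# Each PMF should sum to 1 (the infinite sums are truncated).
print(sum(binomial_pmf(k, 10, 0.3) for k in range(11)))
print(sum(geometric_pmf(k, 0.3) for k in range(1, 200)))
print(sum(poisson_pmf(k, 2.5) for k in range(60)))
```

With n = 1 the binomial PMF reduces to the Bernoulli PMF, which matches the description of the binomial as a count over identical independent Bernoulli trials.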

A.3.2 Continuous Distributions

Uniform distribution: -- X takes values with equal density over the interval [a, b]
Uniform density: p(x) = 1 / (b - a) for a ≤ x ≤ b, and 0 otherwise

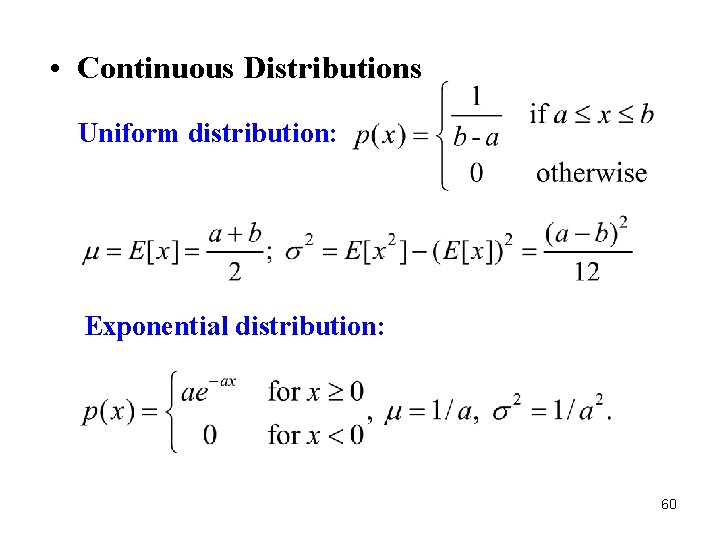


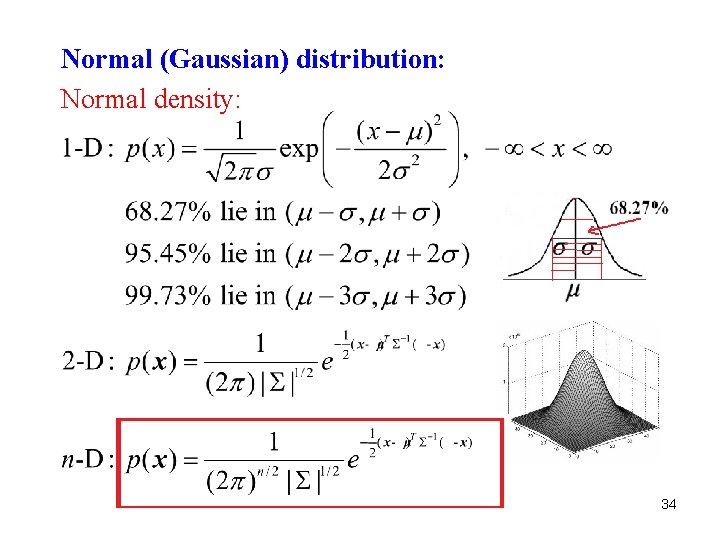

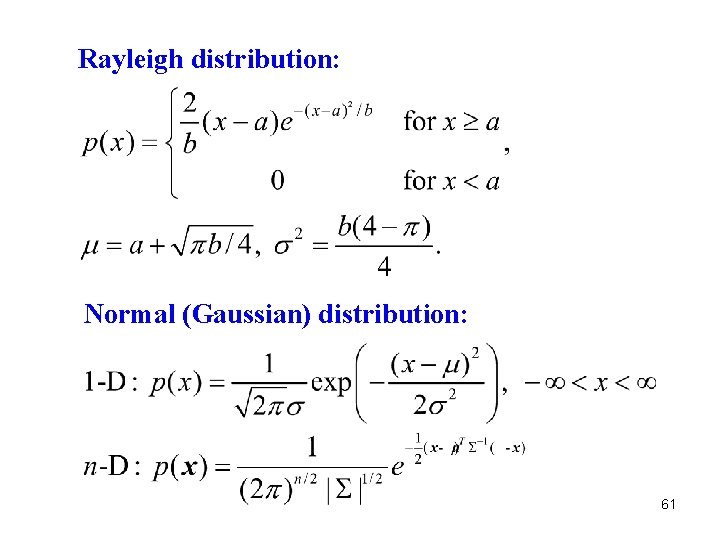

Normal (Gaussian) distribution:
Normal density: p(x) = 1 / (sqrt(2π) σ) exp(-(x - μ)^2 / (2σ^2)), with mean μ and standard deviation σ

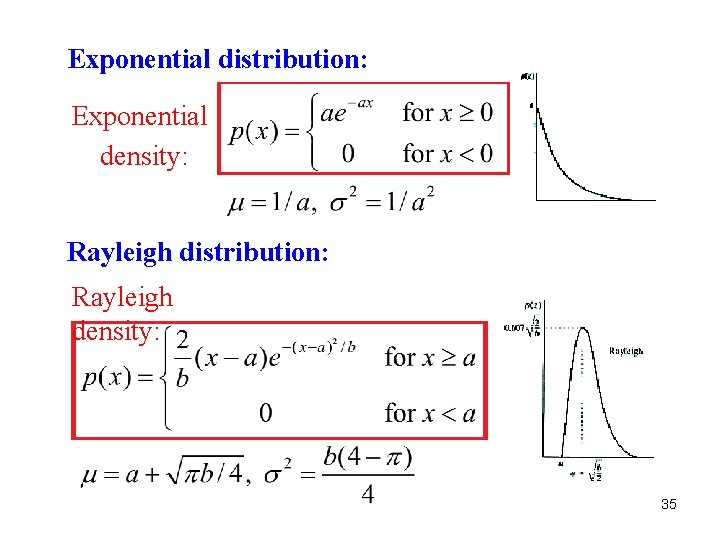

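Exponential distribution:
Exponential density: p(x) = λ e^(-λx) for x ≥ 0, and 0 otherwise

Rayleigh distribution:
Rayleigh density: p(x) = (x / σ^2) exp(-x^2 / (2σ^2)) for x ≥ 0, and 0 otherwise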

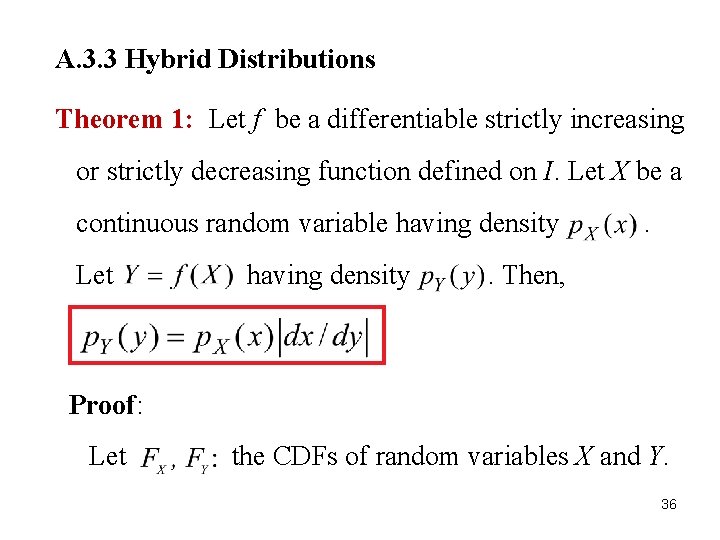

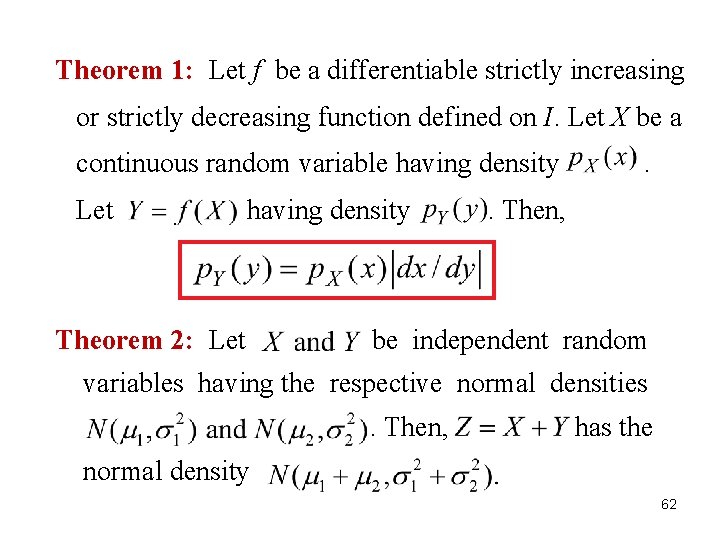
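A quick numerical check that each continuous density integrates to 1 is sketched below. The parameterizations (rate `lam` for the exponential, scale `sigma` for the Rayleigh) are the common textbook ones and are assumptions here, as is the simple midpoint-rule integrator.

```python
from math import exp, pi, sqrt

def uniform_pdf(x, a, b):
    return 1.0 / (b - a) if a <= x <= b else 0.0

def normal_pdf(x, mu, sigma):
    return exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sqrt(2 * pi) * sigma)

def exponential_pdf(x, lam):
    return lam * exp(-lam * x) if x >= 0 else 0.0

def rayleigh_pdf(x, sigma):
    return (x / sigma**2) * exp(-(x**2) / (2 * sigma**2)) if x >= 0 else 0.0

def integrate(f, lo, hi, steps=20000):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    h = (hi - lo) / steps
    return h * sum(f(lo + (i + 0.5) * h) for i in range(steps))

print(integrate(lambda x: uniform_pdf(x, 2, 5), 0, 10))
print(integrate(lambda x: normal_pdf(x, 0, 1), -10, 10))
print(integrate(lambda x: exponential_pdf(x, 2), 0, 20))
print(integrate(lambda x: rayleigh_pdf(x, 1), 0, 10))
```

The integration ranges are truncated where the tails are negligible, so each printed value should be very close to 1.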

A.3.3 Hybrid Distributions

Theorem 1: Let f be a differentiable strictly increasing or strictly decreasing function defined on an interval I. Let X be a continuous random variable having density p_X. Let Y = f(X) having density p_Y. Then, p_Y(y) = p_X(f^(-1)(y)) |d f^(-1)(y) / dy|.
Proof: Let F_X and F_Y be the CDFs of random variables X and Y.

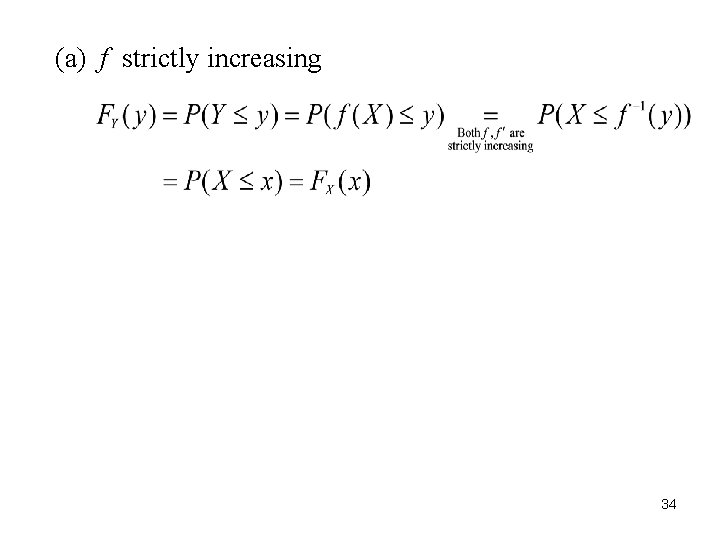

(a) f strictly increasing

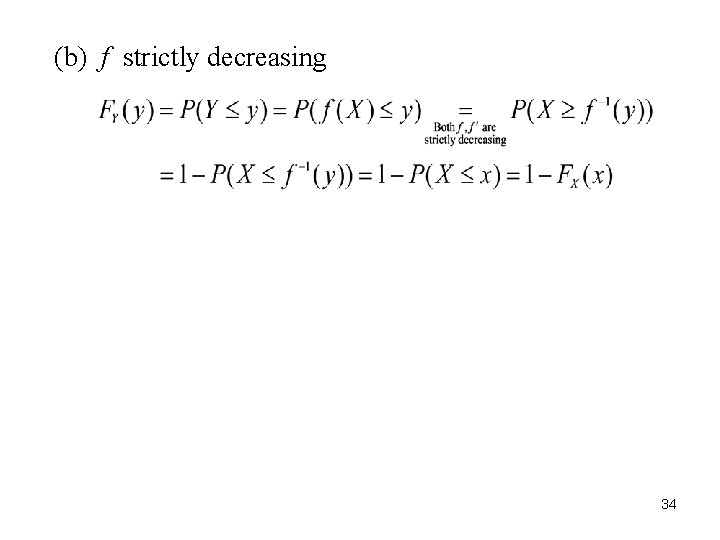

(b) f strictly decreasing

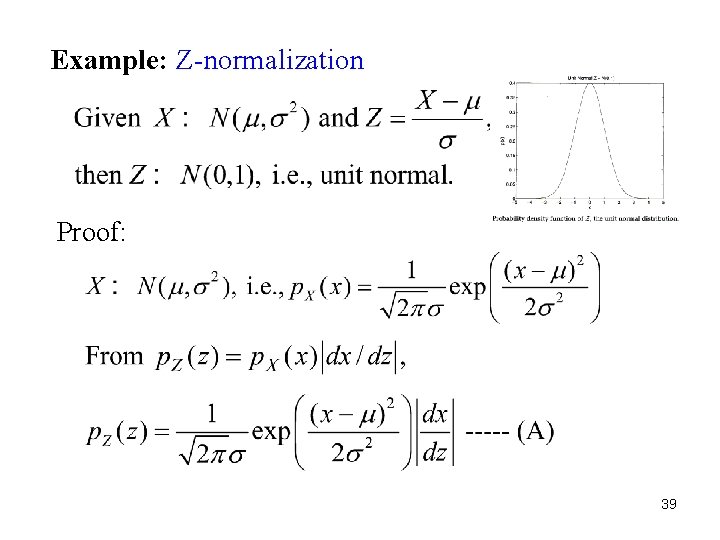

Example: Z-normalization
Proof:

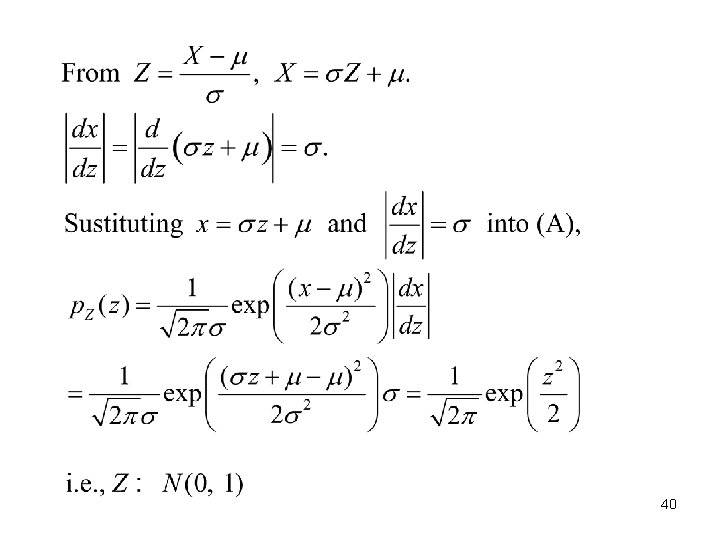
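Z-normalization is a direct application of the change-of-variables result for a strictly monotone f. The sketch below assumes X is normal with hypothetical parameters μ = 5 and σ = 2, takes Z = f(X) = (X - μ) / σ, and checks numerically that the transformed density equals the standard normal density.

```python
from math import exp, pi, sqrt

def normal_pdf(x, mu=0.0, sigma=1.0):
    return exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sqrt(2 * pi) * sigma)

def transformed_pdf(p_x, f_inv, f_inv_prime):
    """Density of Y = f(X) for strictly monotone f:
    p_Y(y) = p_X(f^-1(y)) * |d f^-1(y) / dy|."""
    return lambda y: p_x(f_inv(y)) * abs(f_inv_prime(y))

mu, sigma = 5.0, 2.0  # hypothetical parameters of X

# Z = (X - mu) / sigma, so f^-1(z) = sigma * z + mu with derivative sigma.
p_z = transformed_pdf(
    lambda x: normal_pdf(x, mu, sigma),
    f_inv=lambda z: sigma * z + mu,
    f_inv_prime=lambda z: sigma,
)

for z in (-2.0, -0.5, 0.0, 1.3):
    print(z, p_z(z), normal_pdf(z))  # the two densities should agree
```

The factor |d f^(-1)(z) / dz| = σ cancels the 1/σ in the density of X, leaving the standard normal density.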


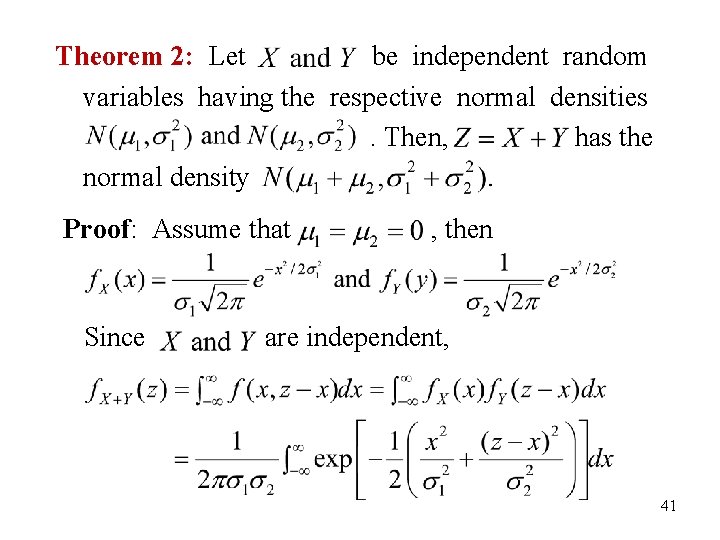

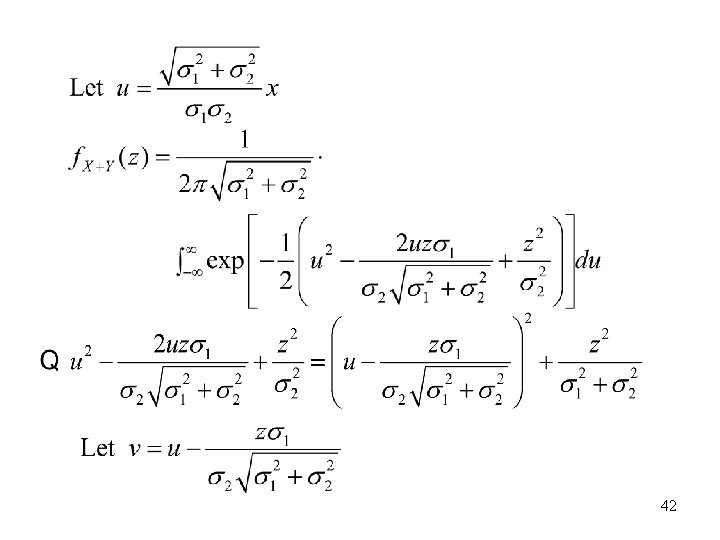

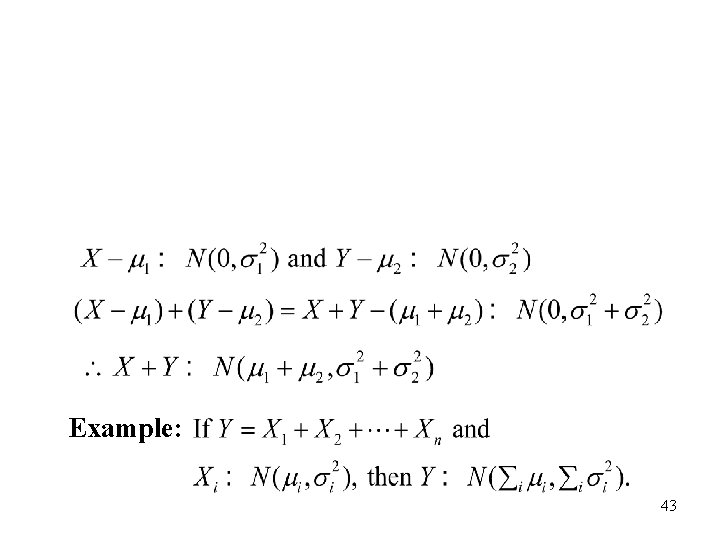

Theorem 2: Let X and Y be independent random variables having the respective normal densities N(μ_1, σ_1^2) and N(μ_2, σ_2^2). Then, X + Y has the normal density N(μ_1 + μ_2, σ_1^2 + σ_2^2).
Proof: Assume that ... Since ..., then ... are independent, ...

Example:

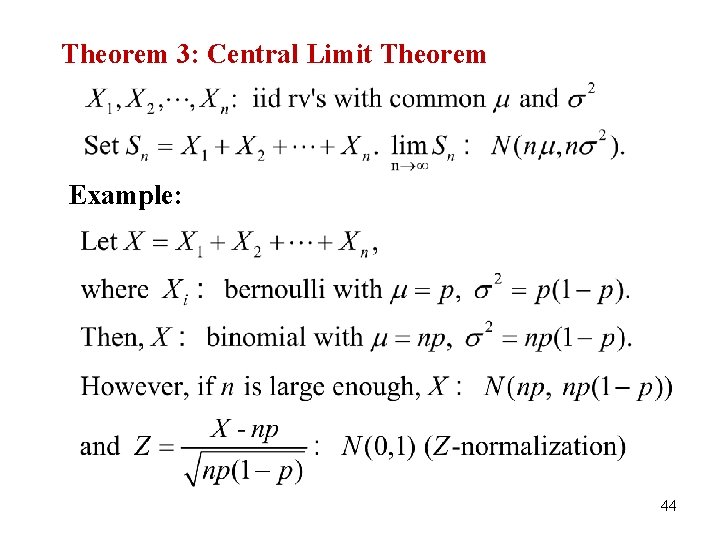

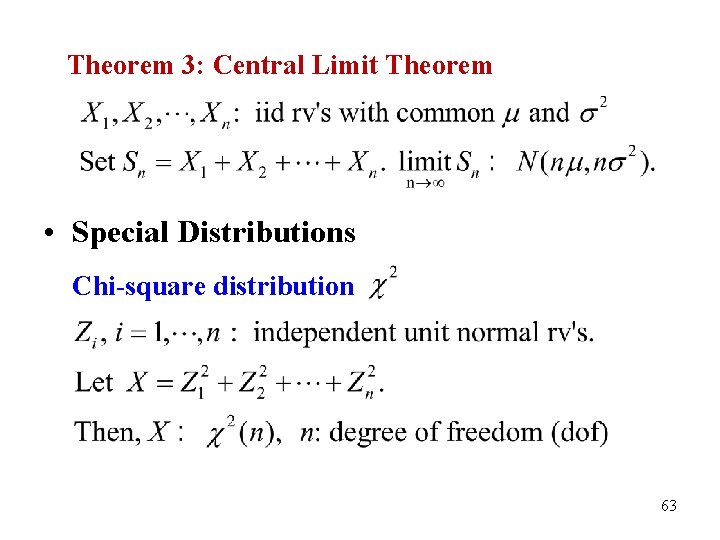

Theorem 3: Central Limit Theorem
Example:

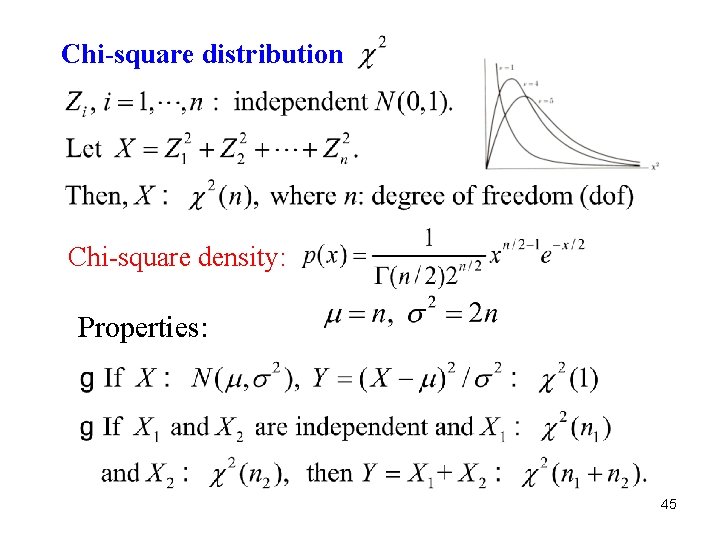

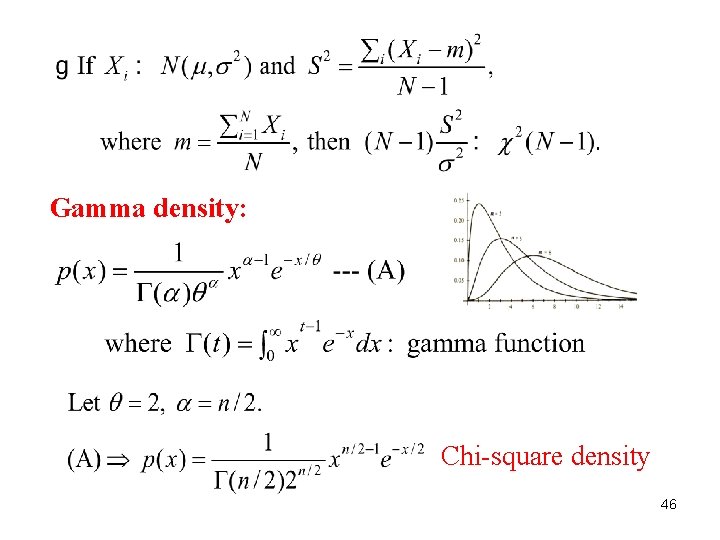
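The central limit theorem can be illustrated by simulation. This is a rough sketch, not the example from the slides: it standardizes sample means of Uniform(0, 1) draws and checks that about 95% fall within ±1.96, as a standard normal would predict. The sample size, trial count, and seed are arbitrary choices.

```python
import random
from math import sqrt

def standardized_sample_means(n=30, trials=5000, seed=0):
    """Standardized means of n Uniform(0, 1) draws, repeated `trials` times."""
    rng = random.Random(seed)
    mu, sigma = 0.5, sqrt(1 / 12)  # mean and std of Uniform(0, 1)
    z = []
    for _ in range(trials):
        mean = sum(rng.random() for _ in range(n)) / n
        z.append((mean - mu) / (sigma / sqrt(n)))
    return z

z = standardized_sample_means()
inside = sum(1 for v in z if abs(v) <= 1.96) / len(z)
print(f"fraction within +/-1.96: {inside:.3f}")  # expected to be near 0.95
```

Swapping the uniform draws for another distribution with finite variance (and adjusting `mu` and `sigma` to match) should give a similar fraction for moderately large n.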

Chi-square distribution
Chi-square density:
Properties:

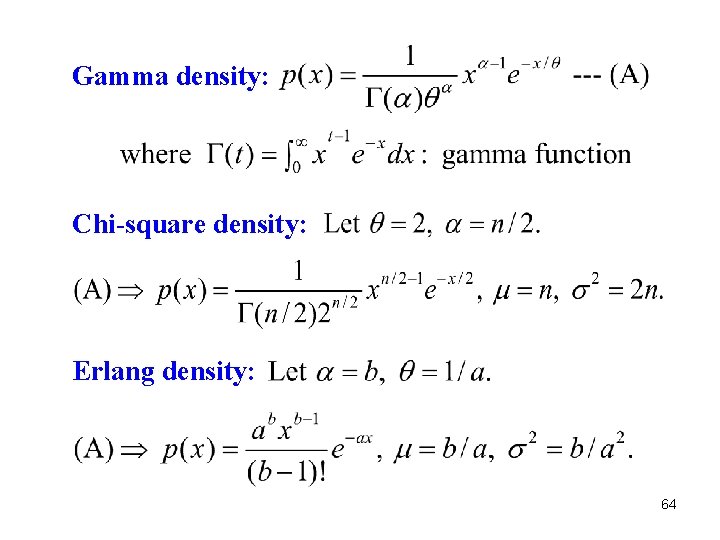

Gamma density:
Chi-square density:

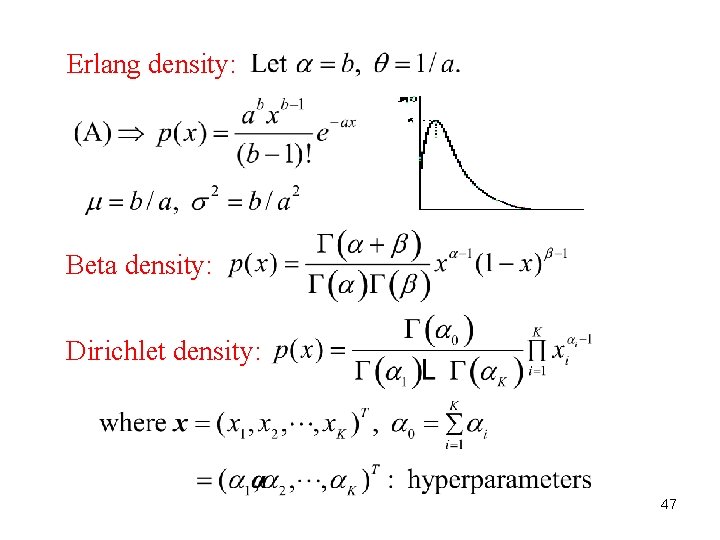

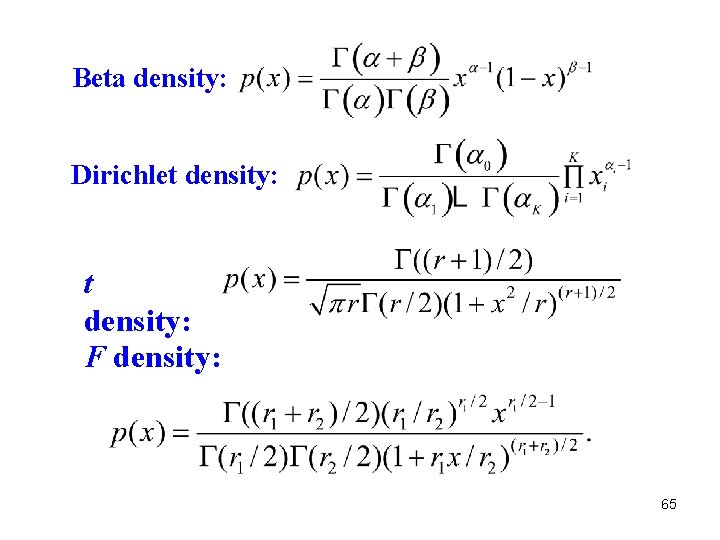

Erlang density:
Beta density:
Dirichlet density:

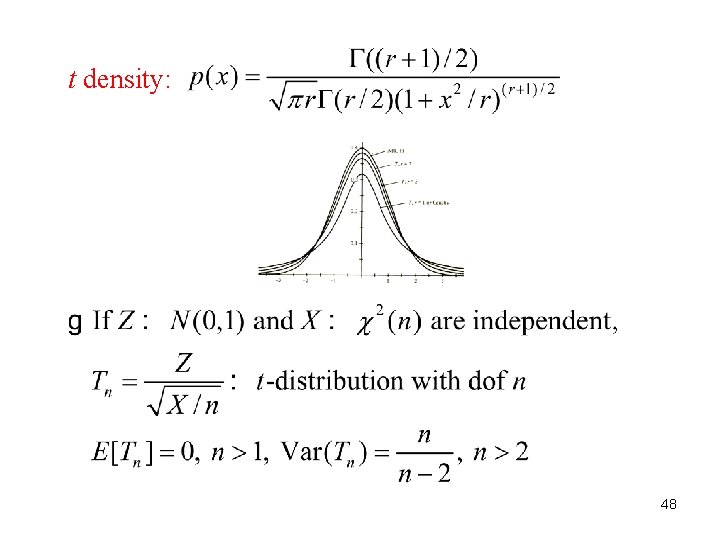

t density:

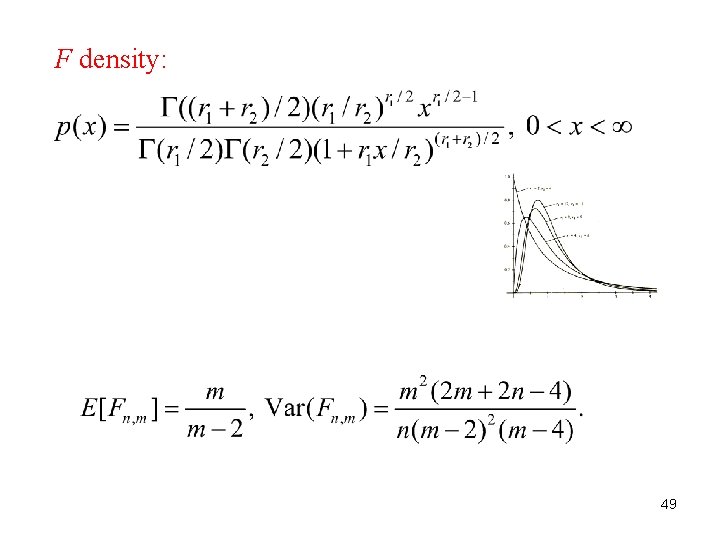

F density:

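Summary

• Probability Model
• Properties of probability laws
• Axioms of probability
Conditional Probability: P(F | E) = P(E ∩ F) / P(E)
Bayes’ Formula: P(A | B) = P(B | A) P(A) / P(B)
• Total Probability Theorem: P(B) = Σ_i P(B | A_i) P(A_i) for a partition A_1, ..., A_n of S
Multiplication Rule: P(A_1 ∩ ... ∩ A_n) = P(A_1) P(A_2 | A_1) ... P(A_n | A_1 ∩ ... ∩ A_{n-1})

• Random Variables -- A random variable is a function that assigns to each outcome a number, which may represent a label or a quantity.
The CDF F of rv X for a real number a: F(a) = P(X ≤ a)
Continuous: F(a) = ∫_{-∞}^{a} p(x) dx, where p is the PDF
Discrete: F(a) = Σ_{x ≤ a} P(x), where P is the PMF
Joint CDF: F(a, b) = P(X ≤ a, Y ≤ b), where p(x, y) is the joint PDF
Marginal CDF: F_X(a) = F(a, ∞)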

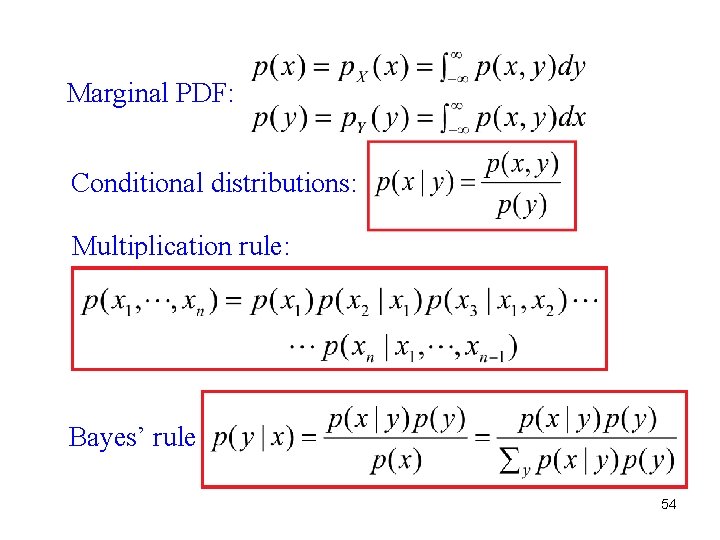

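Marginal PDF: p_X(x) = ∫ p(x, y) dy
Conditional distributions: p(x | y) = p(x, y) / p_Y(y)
Multiplication rule: p(x, y) = p(x | y) p_Y(y)
Bayes’ rule: p(y | x) = p(x | y) p_Y(y) / p_X(x)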

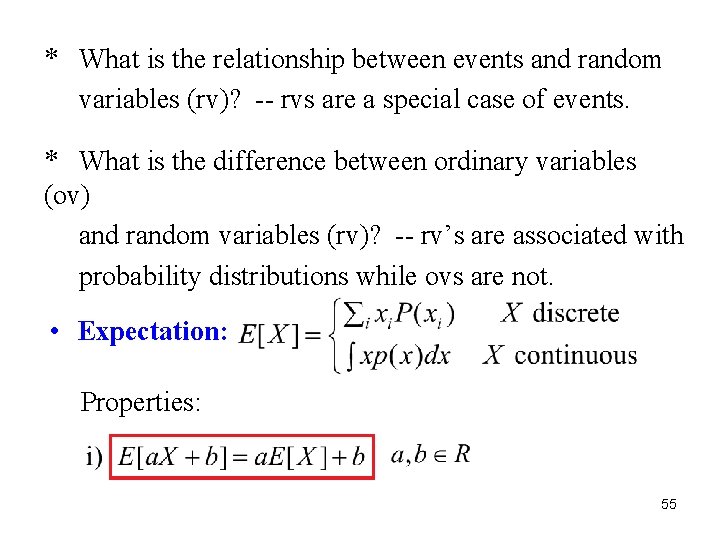

* What is the relationship between events and random variables (rvs)? -- rvs are a special case of events.
* What is the difference between ordinary variables (ovs) and random variables (rvs)? -- rvs are associated with probability distributions while ovs are not.

• Expectation:
Properties:

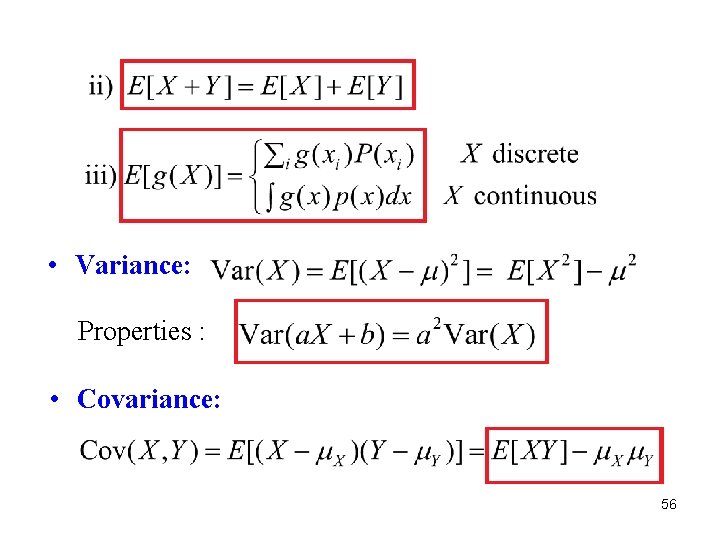

• Variance:
Properties:
• Covariance:

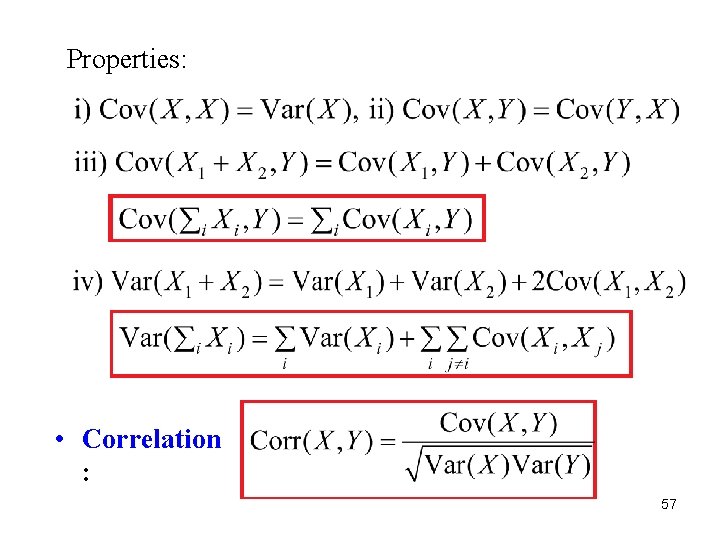

Properties:
• Correlation: Corr(X, Y) = Cov(X, Y) / (σ_X σ_Y)

• Discrete Distributions
Bernoulli Distribution
Binomial Distribution
Multinomial Distribution
Geometric Distribution
Poisson Distribution

• Continuous Distributions
Uniform distribution
Exponential distribution
Rayleigh distribution
Normal (Gaussian) distribution

Theorem 1: Let f be a differentiable strictly increasing or strictly decreasing function defined on an interval I. Let X be a continuous random variable having density p_X. Let Y = f(X) having density p_Y. Then, p_Y(y) = p_X(f^(-1)(y)) |d f^(-1)(y) / dy|.
Theorem 2: Let X and Y be independent random variables having the respective normal densities N(μ_1, σ_1^2) and N(μ_2, σ_2^2). Then, X + Y has the normal density N(μ_1 + μ_2, σ_1^2 + σ_2^2).
Theorem 3: Central Limit Theorem

• Special Distributions
Chi-square distribution
Gamma density
Chi-square density
Erlang density
Beta density
Dirichlet density
F density
