Apache Architecture

How do we measure performance? • Benchmarks – Requests per Second – Bandwidth – Latency – Concurrency (Scalability)
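The four metrics above can all be derived from one benchmark run. The following is a minimal sketch, assuming the run recorded a latency per request, the total wall-clock time, and the total bytes sent; the function name `summarize` and its parameters are illustrative, not part of any benchmarking tool. Average concurrency is estimated with Little's law (throughput times mean latency).

```python
import statistics


def summarize(latencies_s, wall_clock_s, bytes_sent):
    """Summarize one benchmark run (all inputs are hypothetical samples).

    latencies_s  -- per-request latencies, in seconds
    wall_clock_s -- duration of the whole run, in seconds
    bytes_sent   -- total response bytes transferred
    """
    requests_per_second = len(latencies_s) / wall_clock_s
    mean_latency = statistics.mean(latencies_s)
    return {
        "requests_per_second": requests_per_second,
        "bandwidth_bytes_per_second": bytes_sent / wall_clock_s,
        "mean_latency_s": mean_latency,
        "max_latency_s": max(latencies_s),
        # Little's law: average requests in flight = throughput * latency.
        "mean_concurrency": requests_per_second * mean_latency,
    }
```

For example, four requests finishing within a two-second run give two requests per second, whatever their individual latencies were.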

Building a scalable web server • handling an HTTP request – map the URL to a resource – check whether client has permission to access the resource – choose a handler and generate a response – transmit the response to the client – log the request • must handle many clients simultaneously • must do this as fast as possible
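The five request-handling steps can be sketched as a single function. This is an illustration only, not how Apache implements them: the in-memory `DOCUMENTS` table, the `PROTECTED` set, the `user` argument, and `ACCESS_LOG` are all hypothetical stand-ins for the filesystem, access control, and log file.

```python
import posixpath

# Hypothetical stand-ins for the document root, access rules, and log file.
DOCUMENTS = {
    "/index.html": b"<h1>It works</h1>",
    "/private.html": b"<h1>Members only</h1>",
}
PROTECTED = {"/private.html"}
REASONS = {200: b"OK", 403: b"Forbidden", 404: b"Not Found"}
ACCESS_LOG = []


def handle_request(url, user=None):
    # 1. Map the URL to a resource.
    path = posixpath.normpath(url)
    if path == "/":
        path = "/index.html"
    # 2. Check whether the client may access the resource.
    if path in PROTECTED and user is None:
        status, body = 403, b"forbidden\n"
    # 3. Choose a handler and generate a response.
    elif path in DOCUMENTS:
        status, body = 200, DOCUMENTS[path]
    else:
        status, body = 404, b"not found\n"
    # 4. Transmit the response (here it is simply returned to the caller).
    response = b"HTTP/1.1 %d %s\r\nContent-Length: %d\r\n\r\n%s" % (
        status, REASONS[status], len(body), body)
    # 5. Log the request.
    ACCESS_LOG.append((url, status, len(body)))
    return response
```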

Resource Pools • one bottleneck to server performance is the operating system – system calls to allocate memory, access a file, or create a child process take significant amounts of time – as with many scaling problems in computer systems, caching is one solution • resource pool: application-level data structure to allocate and cache resources – allocate and free memory in the application instead of using a system call – cache files, URL mappings, recent responses – limits critical functions to a small, well-tested part of code
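A resource pool can be sketched as a small class that hands out pre-allocated buffers and takes them back for reuse. The `BufferPool` name and its methods are hypothetical; the sketch only illustrates the pattern of allocating once up front and recycling afterwards, and it makes no claim about Apache's own pool implementation.

```python
class BufferPool:
    """Application-level pool of reusable, fixed-size buffers."""

    def __init__(self, buffer_size, count):
        self._buffer_size = buffer_size
        # Allocate everything up front, outside the request path.
        self._free = [bytearray(buffer_size) for _ in range(count)]
        self.allocations = count  # total buffers ever created

    def acquire(self):
        if self._free:
            return self._free.pop()      # fast path: reuse a cached buffer
        self.allocations += 1            # slow path: pool exhausted
        return bytearray(self._buffer_size)

    def release(self, buf):
        # Return the buffer to the pool instead of freeing it.
        self._free.append(buf)
```

As long as requests release what they acquire, the `allocations` counter stays at its initial value, which is the point of the pool.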

Multi-Processor Architectures • a critical factor in web server performance is how each new connection is handled – common optimization strategy: identify the most commonly executed code and make this run as fast as possible – common case: accept a client and return several static objects – make this run fast: pre-allocate a process or thread, cache commonly-used files and the HTTP message for the response

Connections • must multiplex handling many connections simultaneously – select(), poll(): event-driven, single-threaded – fork(): create a new process for a connection – pthread_create(): create a new thread for a connection • synchronization among processes/threads – shared memory: semaphores – message passing

Select • select(int nfds, fd_set *readfds, fd_set *writefds, fd_set *exceptfds, struct timeval *timeout); • Allows a process to block until data is available on any one of a set of file descriptors. • One web server process can service hundreds of socket connections

Event Driven Architecture • one process handles all events • must multiplex handling of many clients and their messages – use select() or poll() to multiplex socket I/O events – provide a list of sockets waiting for I/O events – sleeps until an event occurs on one or more sockets – can provide a timeout to limit waiting time • must use non-blocking system calls • some evidence that it can be more efficient than process or thread architectures
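The event-driven loop described above can be sketched with Python's `select.select()`, which wraps the same system call. This is a minimal sketch under simplifying assumptions: the connections are already accepted, the "service" just echoes data in upper case, and replies are small enough that writes never block. The function name `serve_until_closed` is illustrative.

```python
import select
import socket


def serve_until_closed(connections, timeout=1.0):
    """Uppercase-echo server: one thread multiplexes every connection."""
    for conn in connections:
        conn.setblocking(False)          # never block inside a handler
    watched = list(connections)
    while watched:
        # Sleep until at least one socket is readable or the timeout expires.
        readable, _, _ = select.select(watched, [], [], timeout)
        if not readable:
            break                        # timed out with nothing to do
        for sock in readable:
            data = sock.recv(4096)
            if data:
                # Small replies only; a fuller server would also wait for
                # writability instead of assuming the send buffer has room.
                sock.sendall(data.upper())
            else:                        # empty read: the peer closed
                watched.remove(sock)
                sock.close()
    for sock in watched:                 # close anything left after a timeout
        sock.close()
```

Passing the server ends of a few `socket.socketpair()` pairs is enough to try it without opening a network port.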

Process Driven Architecture • devote a separate process/thread to each event – master process listens for connections – master creates a separate process/thread for each new connection • performance considerations – creating a new process involves significant overhead – threads are less expensive, but still involve overhead • may create too many processes/threads on a busy server
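A thread-per-connection master can be sketched as follows. The sketch assumes an echo service and takes an iterable of already-accepted sockets in place of a real `accept()` loop; `handle_connection` and `serve_each_in_new_thread` are hypothetical names. Note that nothing here limits how many threads get created, which is the weakness the last bullet points out.

```python
import socket
import threading


def handle_connection(conn):
    """Echo everything back until the client closes the connection."""
    with conn:
        while True:
            data = conn.recv(4096)
            if not data:
                break
            conn.sendall(data)


def serve_each_in_new_thread(accepted):
    """Master loop: one brand-new thread per connection, with no upper bound."""
    workers = []
    for conn in accepted:        # stands in for a loop around listener.accept()
        worker = threading.Thread(target=handle_connection, args=(conn,))
        worker.start()           # creation cost is paid on every connection
        workers.append(worker)
    return workers
```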

Process/Thread Pool Architecture • master thread – creates a pool of threads – listens for incoming connections – places connections on a shared queue • processes/threads – take connections from shared queue – handle one I/O event for the connection – return connection to the queue – live for a certain number of events (prevents long-lived memory leaks) • need memory synchronization
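The pool pattern can be sketched with a shared `queue.Queue`. This is a simplified model, not a server: each "connection" is a hypothetical `(name, events)` pair whose pending I/O events are plain list items, and the per-worker lifetime limit is left out to keep the sketch short. The queue and the lock provide the synchronization the last bullet calls for.

```python
import queue
import threading

STOP = None  # sentinel telling a worker to exit


def run_pool(pending, n_workers=3):
    """Handle every event of every connection with a fixed pool of threads.

    pending -- dict mapping a connection name to its list of events
    """
    connections = queue.Queue()          # the shared queue
    handled = []
    lock = threading.Lock()              # protects `handled`

    def worker():
        while True:
            conn = connections.get()
            if conn is STOP:
                connections.task_done()
                return
            name, events = conn
            event = events.pop(0)        # handle exactly one I/O event
            with lock:
                handled.append((name, event))
            if events:
                connections.put(conn)    # more to do: back on the queue
            connections.task_done()

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    for t in threads:
        t.start()
    for name, events in pending.items():  # the master enqueues connections
        if events:
            connections.put((name, list(events)))
    connections.join()                   # wait until every event is handled
    for _ in threads:
        connections.put(STOP)
    for t in threads:
        t.join()
    return handled
```

Because a connection sits on the queue at most once at a time, its events are handled in order even though different workers may process them.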

Hybrid Architectures • each process can handle multiple requests – each process is an event-driven server – must coordinate switching among events/requests • each process controls several threads – threads can share resources easily – requires some synchronization primitives • event driven server that handles fast tasks but spawns helper processes for time-consuming requests

What makes a good Web Server? • Correctness • Reliability • Scalability • Stability • Speed

Correctness • Does it conform to the HTTP specification? • Does it work with every browser? • Does it handle erroneous input gracefully?

Reliability • Can you sleep at night? • Are you being paged during dinner? • Is it an appliance?

Scalability • Does it handle nominal load? • Have you been Slashdotted? – And did you survive? • What is your peak load?

Speed (Latency) • Does it feel fast? • Do pages snap in quickly? • Do users often reload pages?

Apache the General Purpose Webserver • Apache developers strive for correctness first, and speed second.

Apache HTTP Server Architecture Overview

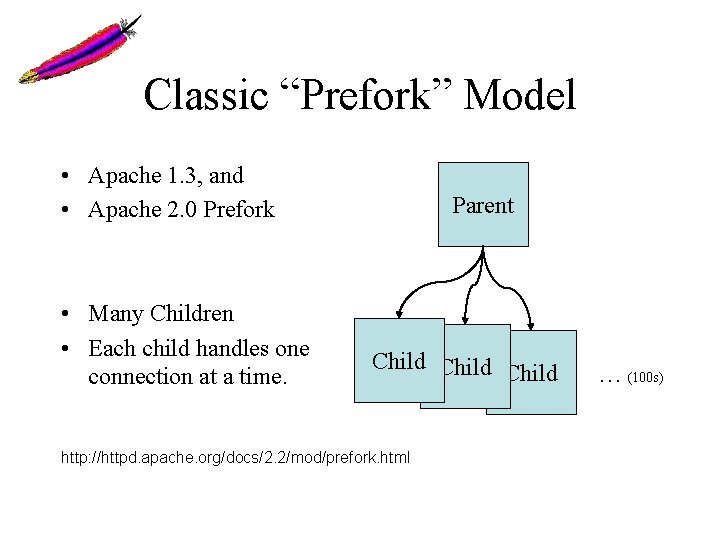

Classic “Prefork” Model • Apache 1.3 and Apache 2.0 Prefork • Many children • Each child handles one connection at a time. • Reference: http://httpd.apache.org/docs/2.2/mod/prefork.html • Diagram labels: Parent; Child … (100s)

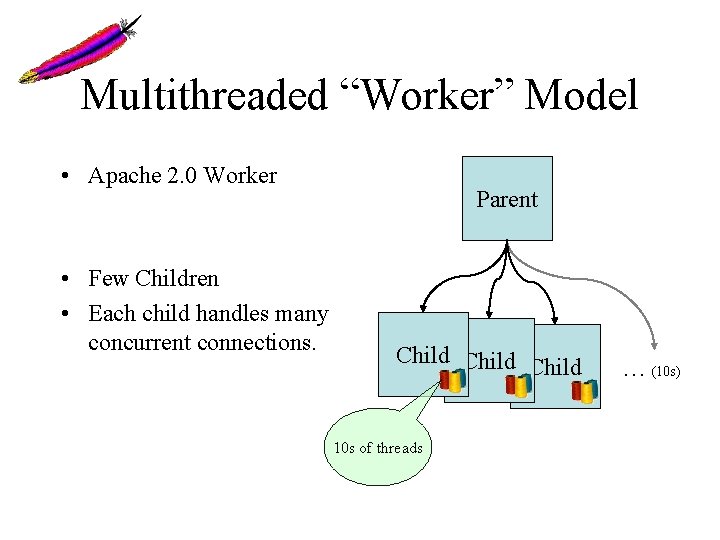

Multithreaded “Worker” Model • Apache 2.0 Worker • Few children • Each child handles many concurrent connections. • Diagram labels: Parent; Child; 10s of threads … (10s)

Dynamic Content: Modules • Extensive API • Pluggable Interface • Dynamic or Static Linkage

In-process Modules • Run from inside the httpd process – CGI (mod_cgi) – mod_perl – mod_php – mod_python – mod_tcl

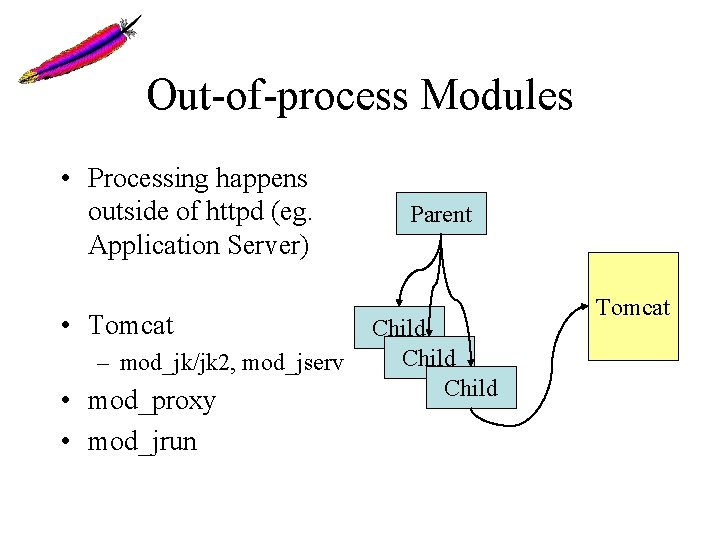

Out-of-process Modules • Processing happens outside of httpd (e.g. an application server) • Tomcat – mod_jk/jk2, mod_jserv • mod_proxy • mod_jrun • Diagram labels: Parent; Child; Tomcat

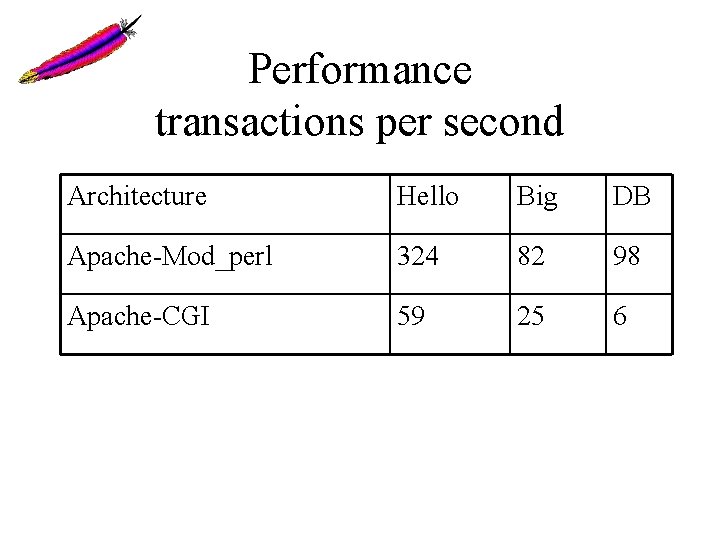

Performance (transactions per second)

| Architecture | Hello | Big | DB |
|---|---|---|---|
| Apache-Mod_perl | 324 | 82 | 98 |
| Apache-CGI | 59 | 25 | 6 |
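To make the gap between the two architectures concrete, the ratio of the figures above can be computed directly. The snippet below only restates the slide's numbers; the variable names are illustrative, and the workload labels (Hello, Big, DB) are taken as given without assuming what each test does.

```python
# Transactions per second, copied from the slide.
results = {
    "Apache-Mod_perl": {"Hello": 324, "Big": 82, "DB": 98},
    "Apache-CGI": {"Hello": 59, "Big": 25, "DB": 6},
}

# How many times more transactions mod_perl completes than CGI, per workload.
speedup = {
    workload: results["Apache-Mod_perl"][workload] / results["Apache-CGI"][workload]
    for workload in ("Hello", "Big", "DB")
}

for workload, factor in speedup.items():
    print(f"{workload}: {factor:.2f}x")
```

On these numbers the in-process module is roughly 3 to 16 times faster than CGI, with the largest gap on the DB workload.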

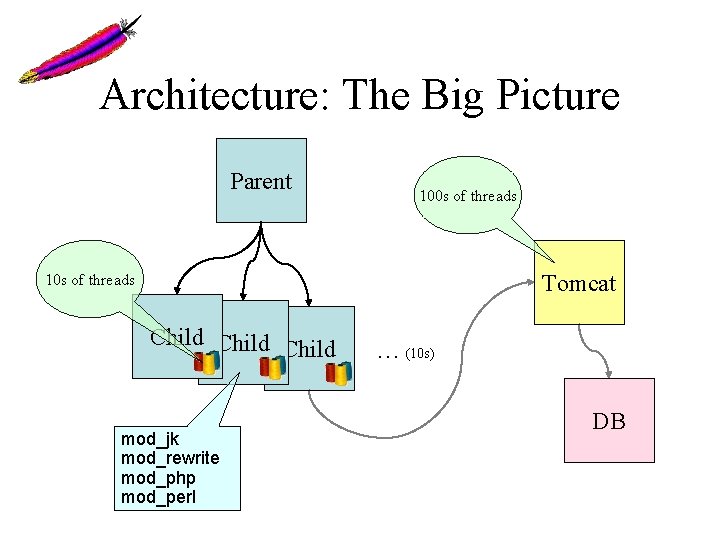

Architecture: The Big Picture • Diagram labels: Parent; 100s of threads; Tomcat; 10s of threads; Child; mod_jk; mod_rewrite; mod_php; mod_perl; … (10s); DB

“MPM” • Multi-Processing Module • An MPM defines how the server will receive and manage incoming requests. • Allows OS-specific optimizations. • Allows vastly different server models (e.g. threaded vs. multiprocess).

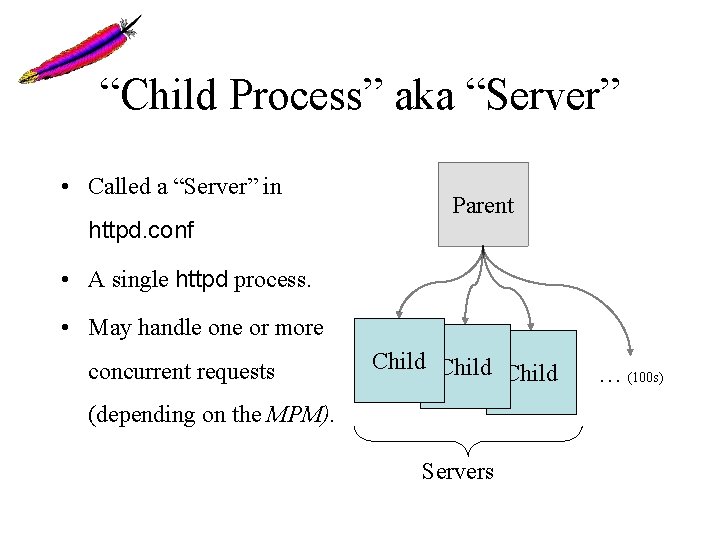

“Child Process” aka “Server” • Called a “Server” in httpd.conf • A single httpd process. • May handle one or more concurrent requests (depending on the MPM). • Diagram labels: Parent; Child; Servers … (100s)

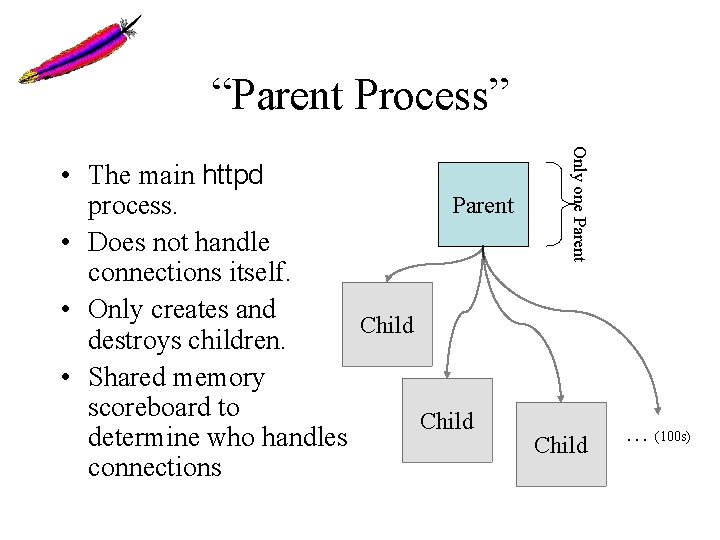

“Parent Process” • Only one Parent • The main httpd Parent process. • Does not handle connections itself. • Only creates and destroys children. • Shared memory scoreboard to determine who handles connections • Diagram labels: Parent; Child; Child; Child … (100s)

“Thread” • In multi-threaded MPMs (e.g. Worker). • Each thread handles a single connection. • Allows Children to handle many connections at once.

Prefork MPM • Apache 1.3 and Apache 2.0 Prefork • Each child handles one connection at a time • Many children • High memory requirements • “You’ll run out of memory before CPU”

Prefork Directives (Apache 2.0) • StartServers • MinSpareServers • MaxClients • MaxRequestsPerChild
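Because each prefork child handles one connection and memory runs out before CPU, a common rule of thumb is to size MaxClients from available RAM. The following is a back-of-the-envelope sketch, not an official formula: the function name is hypothetical, and the example figures (2048 MB of RAM, 512 MB reserved for the OS and other services, 12 MB per child) are made-up assumptions that should be replaced with measurements from the actual server.

```python
def max_clients_for_memory(total_ram_mb, reserved_mb, per_child_mb):
    """Largest number of prefork children that fits in RAM without swapping."""
    usable_mb = total_ram_mb - reserved_mb
    if usable_mb <= 0 or per_child_mb <= 0:
        raise ValueError("need positive usable memory and per-child size")
    return usable_mb // per_child_mb


# Hypothetical machine: (2048 - 512) MB / 12 MB per child.
print(max_clients_for_memory(2048, 512, 12))
```

With these assumed figures the estimate comes to 128 children; heavier modules raise the per-child size and lower the result accordingly.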

Worker MPM • Apache 2.0 and later • Multithreaded within each child • Dramatically reduced memory footprint • Only a few children (fewer than prefork)

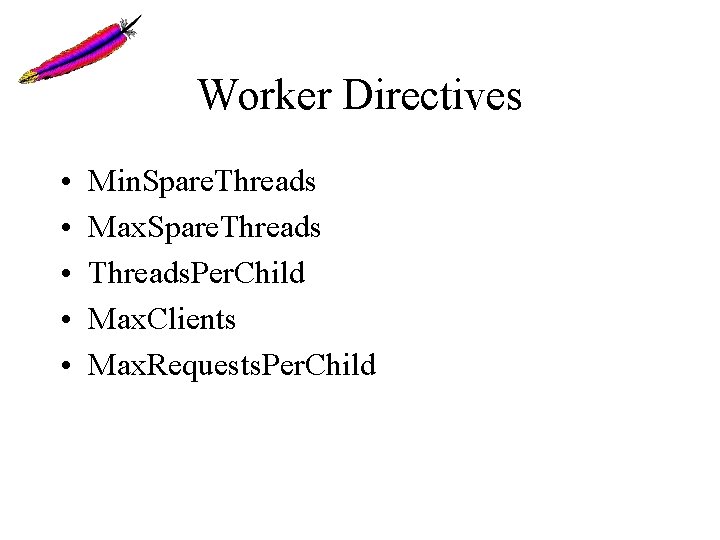

Worker Directives • MinSpareThreads • MaxSpareThreads • ThreadsPerChild • MaxClients • MaxRequestsPerChild

Apache 1.3 and 2.0 Performance Characteristics • Multi-process, Multi-threaded, or Both?

Prefork • High memory usage • Highly tolerant of faulty modules • Highly tolerant of crashing children • Fast • Well-suited for 1- and 2-CPU systems • Tried-and-tested model from Apache 1.3 • “You’ll run out of memory before CPU.”

Worker • Low to moderate memory usage • Moderately tolerant of faulty modules • Faulty threads can affect all threads in child • Highly scalable • Well-suited for multiple processors • Requires a mature threading library (Solaris, AIX, Linux 2.6 and others work well) • Memory is no longer the bottleneck.

Important Performance Considerations • sendfile() support • DNS considerations

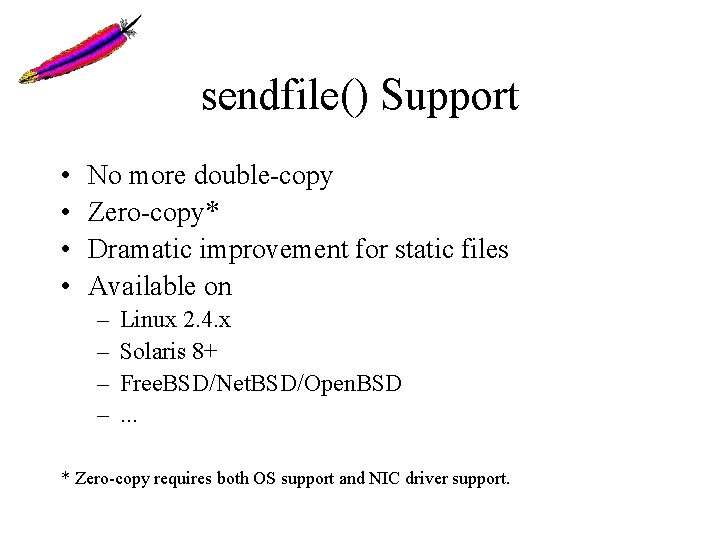

sendfile() Support • No more double-copy • Zero-copy* • Dramatic improvement for static files • Available on – Linux 2.4.x – Solaris 8+ – FreeBSD/NetBSD/OpenBSD – ... * Zero-copy requires both OS support and NIC driver support.
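The difference between the double-copy path and sendfile() can be sketched in Python, whose `socket.sendfile()` method uses the operating system's sendfile() where it is available and otherwise falls back to ordinary sends. The helper names and the `demo` function below are hypothetical, and the sketch shows only the two code paths, not a performance measurement.

```python
import os
import socket
import tempfile


def send_with_copy(sock, path, chunk=65536):
    """read()/send() loop: every block passes through a user-space buffer."""
    sent = 0
    with open(path, "rb") as f:
        while True:
            block = f.read(chunk)        # copy 1: kernel -> user space
            if not block:
                break
            sock.sendall(block)          # copy 2: user space -> kernel
            sent += len(block)
    return sent


def send_with_sendfile(sock, path):
    """Let the kernel move file data to the socket where the OS supports it."""
    with open(path, "rb") as f:
        return sock.sendfile(f)          # returns the number of bytes sent


def demo(payload=b"static file body\n" * 64):
    """Send a small temporary file both ways over a local socket pair."""
    with tempfile.NamedTemporaryFile(delete=False) as f:
        f.write(payload)
        path = f.name
    results = []
    try:
        for send in (send_with_copy, send_with_sendfile):
            a, b = socket.socketpair()
            with a, b:
                sent = send(a, path)
                a.shutdown(socket.SHUT_WR)
                received = b""
                while True:
                    chunk = b.recv(65536)
                    if not chunk:
                        break
                    received += chunk
                results.append((sent, received == payload))
    finally:
        os.remove(path)
    return results
```

Both paths deliver identical bytes; the saving from sendfile() is in the copies and system calls avoided per block, which is why it matters most for static files.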

aio • Process does not block on – Socket – Disk read or write – semaphores

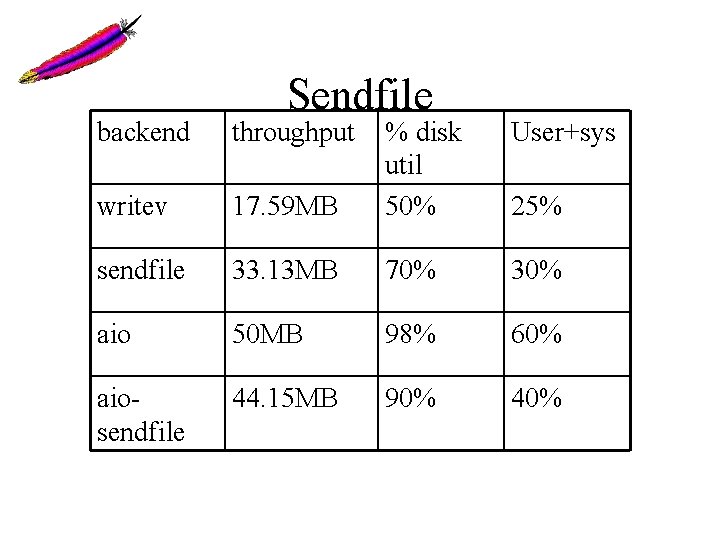

Sendfile backend throughput User+sys 17.59 MB % disk util 50% writev sendfile 33.13 MB 70% 30% aio 50 MB 98% 60% aiosendfile 44.15 MB 90% 40% 25%

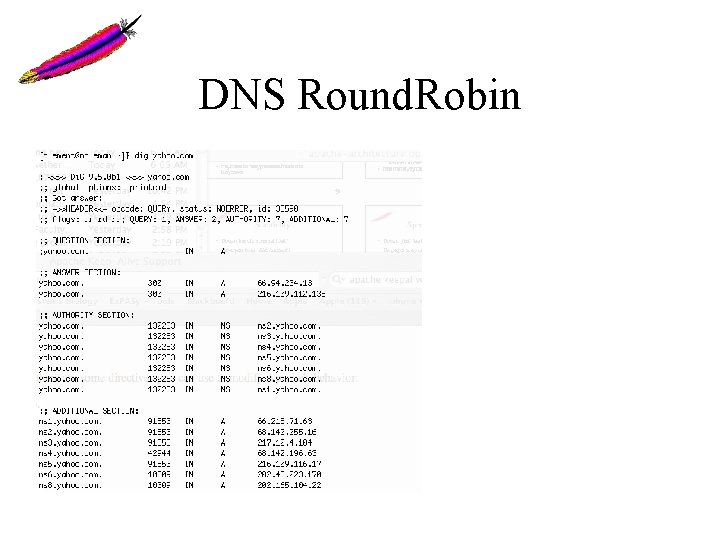

DNS Round Robin
