
Anomaly detection for ECAL DQM

Nabarun Dev (1), Colin Jessop (1), Nancy Marinelli (1), Maurizio Pierini (2)

(1) University of Notre Dame, (2) CERN

DQM-ML meeting, 08/11/2017

Outline

- Introduction
- Dataset
- Models
- Preliminary results

N. Dev, University of Notre Dame DQM-ML Meeting, 08/11/17

Introduction

- The DQM (data quality monitoring) system is an important tool for ensuring high-quality data-taking for analysis purposes. It is used both online and offline.
- In real time, data quality is currently assessed by looking at a dashboard of histograms, which are compared to a reference set of histograms according to a set of instructions.
- By spotting anomalies in these images (plots/histograms) it is possible to identify problems that appear in the detector, flag poor-quality data and/or take steps towards fixing these issues.

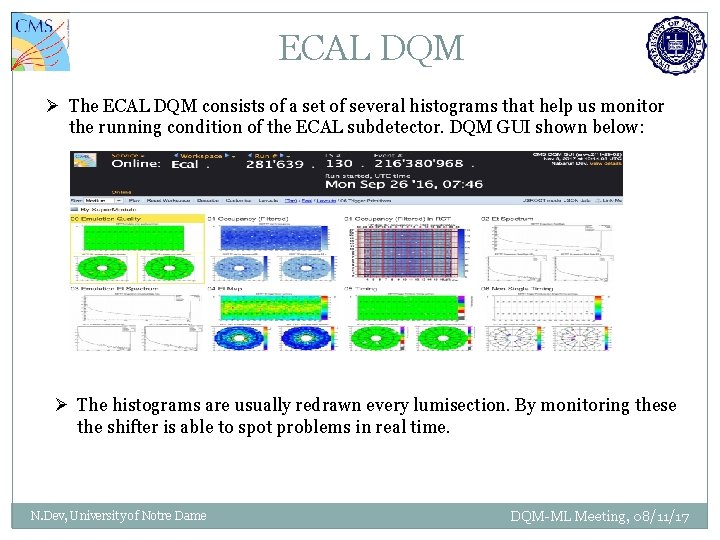

ECAL DQM

- The ECAL DQM consists of a set of histograms that help monitor the running condition of the ECAL subdetector (see the DQM GUI screenshot).
- The histograms are usually redrawn every lumisection. By monitoring them, the shifter is able to spot problems in real time.

Avenues of improvement

- Although the DQM system has performed well over the years, there are areas that could be improved.
- The number of plots to monitor can be overwhelmingly large, which can delay the spotting of a problem or cause a transient problem to be overlooked.
- A lot of manpower is needed to constantly monitor these systems during data-taking.
- Monitoring decisions can vary from shifter to shifter.

Machine learning techniques can help reduce these issues. The aim of this project is to study the feasibility of automating the ECAL DQM system. This falls under the umbrella of the ML-for-DQM effort for the whole CMS detector.

DISCLAIMER: WORK IN PROGRESS

The following is very much a work in progress, and all models and strategies discussed are preliminary. Kindly chime in with suggestions.

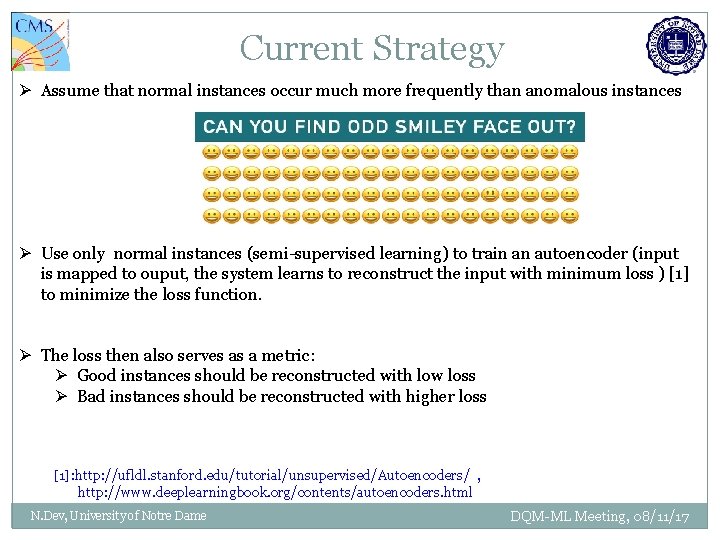

Current Strategy

- Assume that normal instances occur much more frequently than anomalous instances.
- Use only normal instances (semi-supervised learning) to train an autoencoder [1]: the input is mapped to the output, and the network learns to reconstruct the input by minimizing a loss function.
- The loss then also serves as a metric:
  - Good instances should be reconstructed with low loss.
  - Bad instances should be reconstructed with higher loss.

[1]: http://ufldl.stanford.edu/tutorial/unsupervised/Autoencoders/, http://www.deeplearningbook.org/contents/autoencoders.html
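The idea of using reconstruction loss as an anomaly score can be illustrated with a toy sketch. This is not the model from these slides: it swaps in a linear autoencoder (PCA computed with NumPy) and mean squared error, and all data here are synthetic and hypothetical. It only shows that a reconstructor fitted on normal samples alone tends to give larger loss on samples unlike the training set.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy data: "normal" samples lie close to a 3-dimensional
# subspace of a 20-dimensional space; "anomalous" samples do not.
n_features, n_latent = 20, 3
basis = rng.normal(size=(n_latent, n_features))

def make_normal(n):
    latent = rng.normal(size=(n, n_latent))
    noise = 0.05 * rng.normal(size=(n, n_features))
    return latent @ basis + noise

normal_train = make_normal(500)
normal_test = make_normal(100)
anomalous = 2.0 * rng.normal(size=(100, n_features))

# "Train" on normal instances only: keep the top principal directions.
# PCA acts as a linear autoencoder with tied encoder/decoder weights.
mean = normal_train.mean(axis=0)
_, _, vt = np.linalg.svd(normal_train - mean, full_matrices=False)
components = vt[:n_latent]

def reconstruction_loss(x):
    """Per-sample mean squared error after encode -> decode."""
    code = (x - mean) @ components.T      # encode
    recon = code @ components + mean      # decode
    return ((x - recon) ** 2).mean(axis=1)

loss_normal = reconstruction_loss(normal_test)
loss_anomalous = reconstruction_loss(anomalous)
print("mean loss, normal:   ", loss_normal.mean())
print("mean loss, anomalous:", loss_anomalous.mean())
```

In this toy setting the two loss distributions are well separated; whether the same holds for real DQM images is exactly what remains to be tested.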

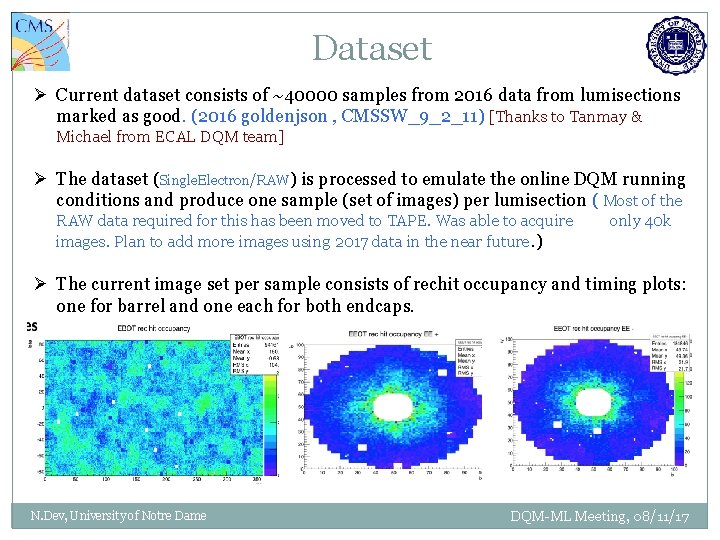

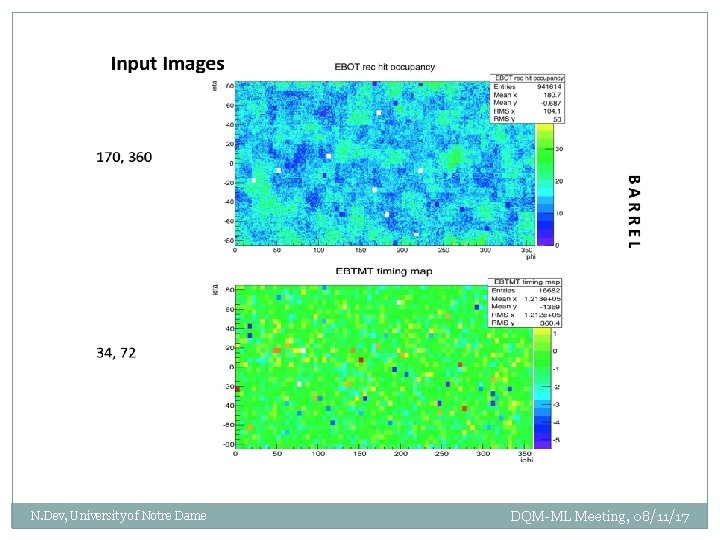

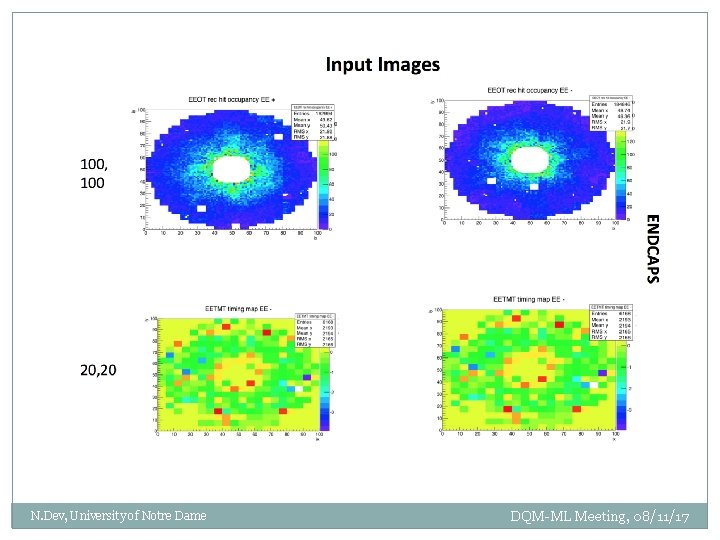

Dataset

- The current dataset consists of ~40000 samples from 2016 data, from lumisections marked as good (2016 golden JSON, CMSSW_9_2_11). [Thanks to Tanmay & Michael from the ECAL DQM team]
- The dataset (SingleElectron/RAW) is processed to emulate the online DQM running conditions and produce one sample (set of images) per lumisection. (Most of the RAW data required for this has been moved to tape, so only ~40k images could be acquired so far. The plan is to add more images using 2017 data in the near future.)
- The current image set per sample consists of rechit occupancy and timing plots: one for the barrel and one for each endcap.

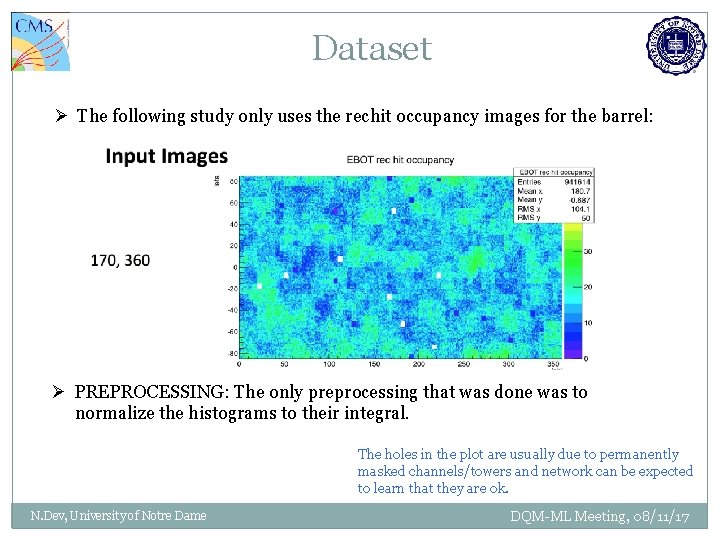

Dataset

- The following study uses only the rechit occupancy images for the barrel.
- Preprocessing: the only preprocessing done was to normalize the histograms to their integral. The holes in the plot are usually due to permanently masked channels/towers, and the network can be expected to learn that they are OK.
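A minimal sketch of the normalization step, assuming each occupancy histogram is available as a 2D NumPy array (the function name and the toy array are hypothetical, not taken from the actual processing code):

```python
import numpy as np

def normalize_to_integral(hist):
    """Scale a 2D histogram so that its bin contents sum to 1.

    An empty histogram is returned unchanged (as floats) to avoid
    dividing by zero.
    """
    hist = np.asarray(hist, dtype=float)
    total = hist.sum()
    return hist / total if total > 0 else hist

# Toy 3x4 "occupancy map"; the zero bin mimics a masked channel.
occupancy = np.array([[4, 2, 0, 2],
                      [1, 3, 2, 2],
                      [2, 2, 2, 2]])
normalized = normalize_to_integral(occupancy)
print(normalized.sum())
```

Masked (zero) bins stay at zero after normalization, so they remain visible to the network as holes.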

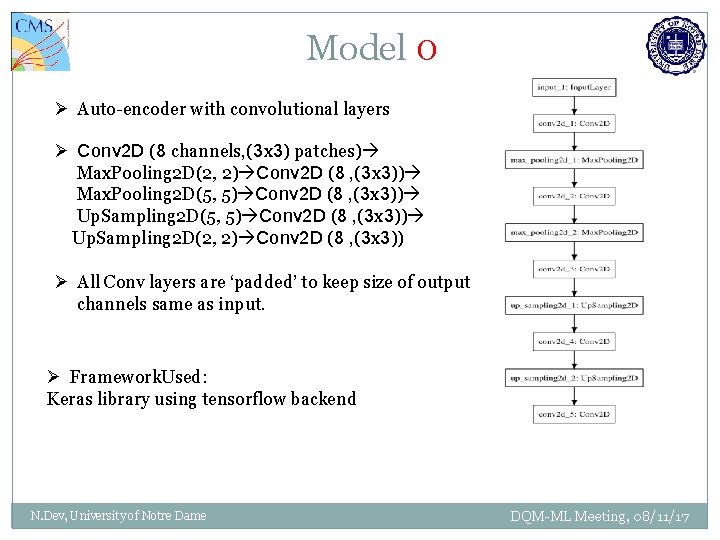

Model 0

- Autoencoder with convolutional layers:
  - Conv2D (8 channels, (3 x 3) patches)
  - MaxPooling2D (2, 2)
  - Conv2D (8, (3 x 3))
  - MaxPooling2D (5, 5)
  - Conv2D (8, (3 x 3))
  - UpSampling2D (2, 2)
  - Conv2D (8, (3 x 3))
- All Conv layers are padded to keep the size of the output channels the same as the input.
- Framework used: Keras library with the TensorFlow backend.
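To see how the listed layers change the spatial size of an image, the following plain-Python sketch traces shapes through them. It does not build the network. The input size (360, 170) is a hypothetical choice that divides evenly by the pooling factors; it is not stated in the slides. Only the layers named above are traced; any further layers are not guessed.

```python
def trace_shapes(input_hw, layers):
    """Return the (height, width) before the first and after each layer.

    'conv' with padding keeps the size, 'pool' divides it by the
    factor (rounding down) and 'up' multiplies it by the factor.
    """
    h, w = input_hw
    shapes = [(h, w)]
    for kind, factor in layers:
        if kind == "pool":
            h, w = h // factor, w // factor
        elif kind == "up":
            h, w = h * factor, w * factor
        elif kind != "conv":
            raise ValueError(f"unknown layer kind: {kind}")
        shapes.append((h, w))
    return shapes

# Layers in the order listed above; the factor is unused for 'conv'.
MODEL_0 = [("conv", 1), ("pool", 2), ("conv", 1), ("pool", 5),
           ("conv", 1), ("up", 2), ("conv", 1)]

# Hypothetical input size (an assumption, see the text above).
shapes = trace_shapes((360, 170), MODEL_0)
for (kind, _), shape in zip(MODEL_0, shapes[1:]):
    print(f"{kind:5s} -> {shape}")
```

With this assumed input, the two pooling steps reduce the image to 36 x 17 at the bottleneck, and the listed upsampling step brings it back to 72 x 34.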

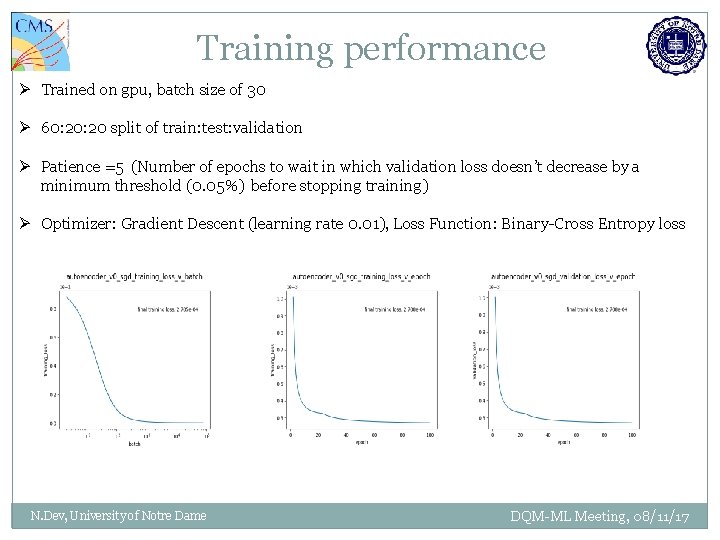

Training performance

- Trained on GPU with a batch size of 30.
- 60:20:20 split of train:test:validation.
- Patience = 5 (the number of epochs to wait, during which the validation loss does not decrease by a minimum threshold of 0.05%, before stopping training).
- Optimizer: gradient descent (learning rate 0.01). Loss function: binary cross-entropy.
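The patience rule can be sketched in plain Python as follows. This is only an illustration of the stopping logic, not the training code. It assumes the 0.05% threshold is relative to the best validation loss so far; in Keras, the `min_delta` argument of the `EarlyStopping` callback is an absolute change instead. The loss values are made up.

```python
def early_stopping_epoch(val_losses, patience=5, min_rel_delta=0.0005):
    """Return the index of the epoch at which training would stop.

    An epoch counts as an improvement only if the validation loss is
    below the best value so far by more than min_rel_delta (relative).
    Training stops after `patience` consecutive epochs without
    improvement; if that never happens, the last index is returned.
    """
    best = float("inf")
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best * (1.0 - min_rel_delta):
            best = loss
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return len(val_losses) - 1

# Made-up validation losses that plateau after the fourth epoch.
history = [0.70, 0.60, 0.55, 0.5400, 0.5399,
           0.5399, 0.5398, 0.5398, 0.5398, 0.5397]
stop = early_stopping_epoch(history, patience=5)
print("training stops at epoch index", stop)
```

A larger patience lets training continue through long plateaus, at the cost of more epochs.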

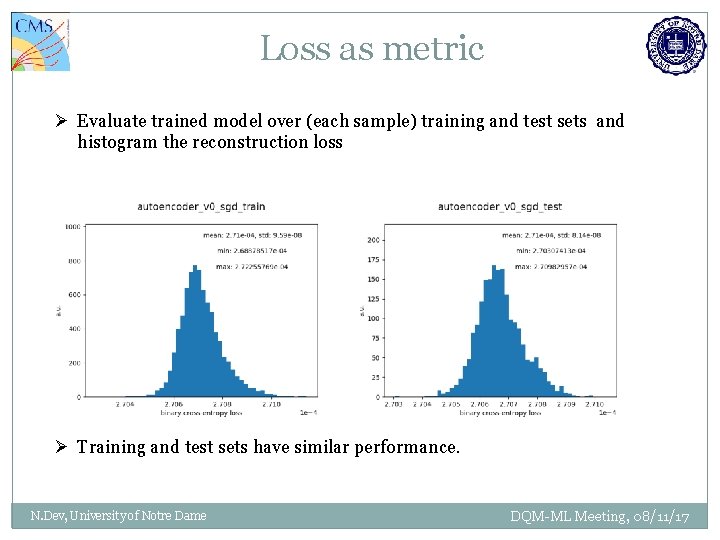

Loss as metric

- Evaluate the trained model on each sample of the training and test sets and histogram the reconstruction loss.
- Training and test sets have similar performance.
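A sketch of how a per-sample loss histogram could be produced with NumPy, assuming the inputs and reconstructions are arrays with values in [0, 1]. The random arrays below are stand-ins for real images and model outputs, and the function name is hypothetical.

```python
import numpy as np

def per_sample_bce(x, x_hat, eps=1e-7):
    """Binary cross-entropy between inputs and reconstructions,
    averaged over all bins of each sample (one value per sample)."""
    x = np.asarray(x, dtype=float)
    x_hat = np.clip(np.asarray(x_hat, dtype=float), eps, 1.0 - eps)
    bce = -(x * np.log(x_hat) + (1.0 - x) * np.log(1.0 - x_hat))
    return bce.reshape(len(x), -1).mean(axis=1)

rng = np.random.default_rng(1)
# Stand-in data: 200 "images" of 8x8 bins with values in [0, 1], and
# imperfect "reconstructions" made by adding small noise.
images = rng.uniform(0.0, 1.0, size=(200, 8, 8))
recons = np.clip(images + rng.normal(scale=0.02, size=images.shape), 0.0, 1.0)

losses = per_sample_bce(images, recons)
counts, edges = np.histogram(losses, bins=20)
print(counts)
```

Running the same function on the training and test sets and overlaying the two histograms gives the kind of comparison described above.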

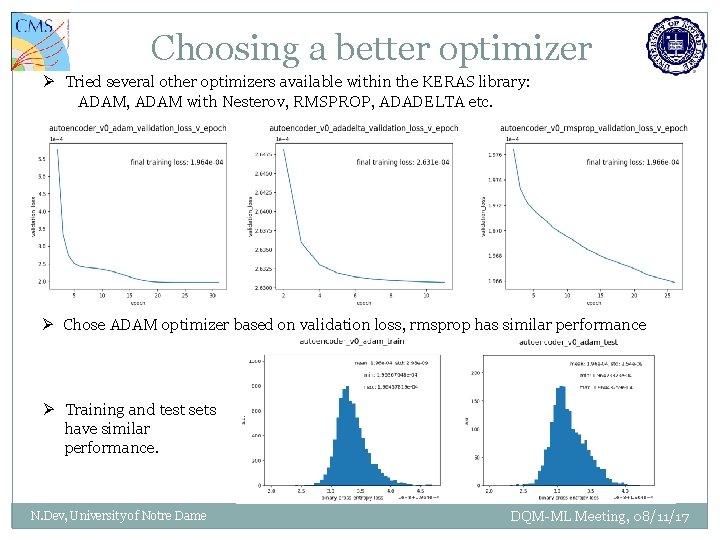

Choosing a better optimizer

- Tried several other optimizers available in the Keras library: Adam, Adam with Nesterov momentum, RMSprop, Adadelta, etc.
- Chose the Adam optimizer based on validation loss; RMSprop has similar performance.
- Training and test sets have similar performance.

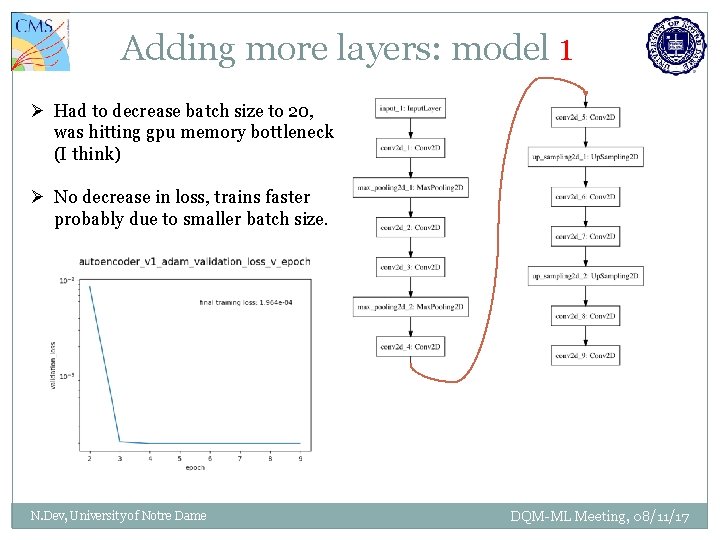

Adding more layers: model 1

- Had to decrease the batch size to 20; it appeared to be hitting a GPU memory bottleneck.
- No decrease in loss. It trains faster, probably due to the smaller batch size.

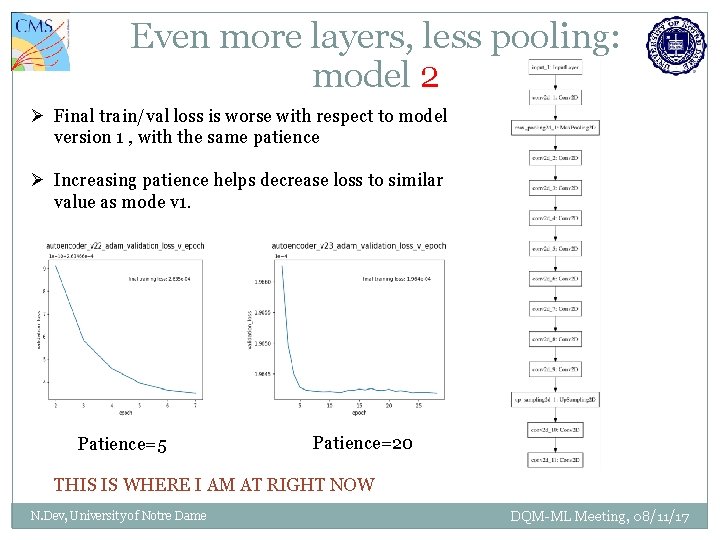

Even more layers, less pooling: model 2

- With the same patience, the final train/validation loss is worse than for model 1.
- Increasing the patience helps decrease the loss to a value similar to that of model 1. (Plots compare patience = 5 and patience = 20.)

This is the current status of the work.

Next Steps

- Gather some anomalous examples and evaluate the model on them.
- Compare their loss spectrum to that of normal examples.
- Increase the training set size.
- Try more sophisticated networks: bigger autoencoders with sparsity constraints, etc.
- Use other images besides occupancy (e.g. timing) as input.
- Try other (supervised) learning techniques (e.g. SVMs) and compare performance.

Backup


- Slides: 19