Animation by Example
Michael Gleicher and the UW Graphics Group
University of Wisconsin-Madison
www.cs.wisc.edu/~gleicher
www.cs.wisc.edu/graphics

Animation by Example: The Summary
- We can create new animations in response to situations by adapting existing animations and piecing them together as needed.
- To do this we will:
  - Extend our work on adapting motions
  - Present ways of putting motions together
  - Adapt example-based synthesis to be appropriate for interactive applications

Virtual Experiences
- For training, entertainment, design, …
- Experience a scenario
  - Not just a space
- People are a part of it
  - Just one part, but an interesting one
  - Emergency rescue training and rehearsal
  - Crowds populating a space

Virtual Experience Applications
- Training: Emergency Rescue, Crowd Control
- Design: Evaluating populated spaces
- Entertainment / Commerce: Games, Shopping Malls, Chat

All need people!

The Demands of Virtual Experiences
- Fast
- Responsive
- Streams of Motion
- Realistic
- Attractive
- Detailed
- Directable
- Autonomous

The problems
- What do characters do?
  - How do they choose their actions?
- How do they do it?
  - How do we generate their movements?
- What do they look like?
  - How do we draw them?

Cut to the chase… An Example
- How do you make a character sneak around?
- Start with some captured motion of a person sneaking around
- Synthesize a new motion of a character “sneaking” somewhere else

What did you just see?
- Small amount of example motion
  - Examples of what I want: actions, quality
- Character did something different
  - New path
- Character did it the same way
  - Preserves “style” and “quality”

How to make a Character “Sneak”?
- What is sneaking? Hard to define mathematically
- Abstract qualities matter
  - Style, mood, realism, …
- Details matter
  - Feet not sliding on the floor
  - Subtle gestures

Computer Animation 101: How do we get motion?
- Create it manually (keyframing)
  - Common method used for film
  - VERY talent and labor intensive
- Synthesize it by procedural methods
  - Physical simulation, or ad-hoc methods
  - Can’t get exactly what you want
- Capture it from a performer
  - Motion Capture
  - Animation from Observation

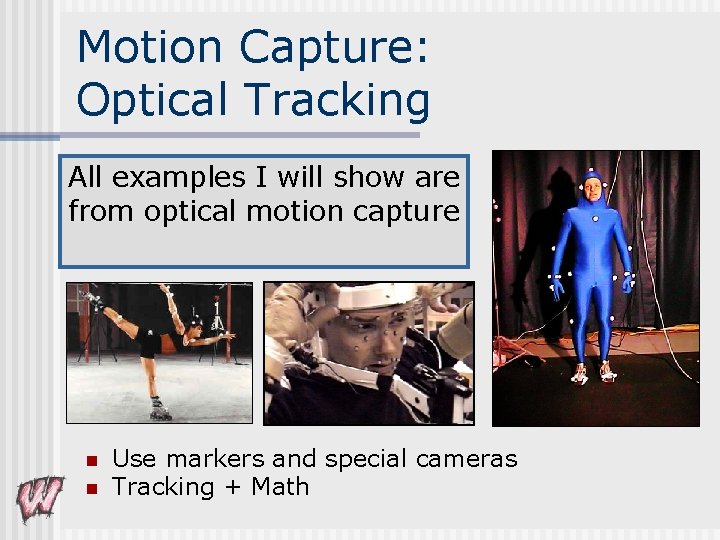

Motion Capture: Optical Tracking
- All examples I will show are from optical motion capture
  - Use markers and special cameras
  - Tracking + Math

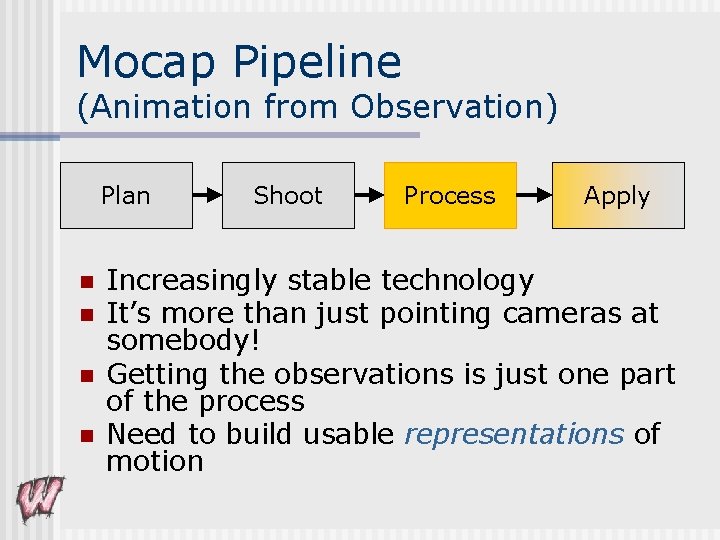

Mocap Pipeline (Animation from Observation)
- Plan, Shoot, Process, Apply
- Increasingly stable technology
- It’s more than just pointing cameras at somebody!
  - Getting the observations is just one part of the process
  - Need to build usable representations of motion

Why Edit Motion?
- What you get is not what you want!
- You get observations of the performance
  - A specific performer (a real human)
  - Doing whatever they did
  - With the noise and “realism” of real sensors
- You want animation
  - A character
  - Doing something
  - And maybe doing something else…

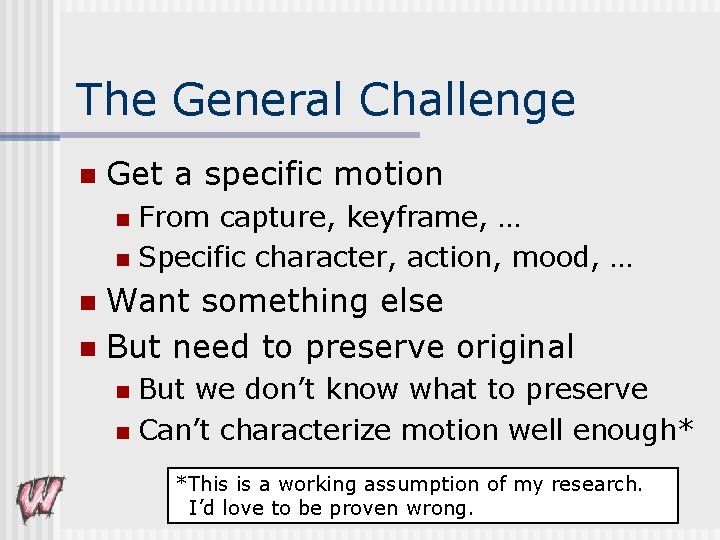

The General Challenge
- Get a specific motion
  - From capture, keyframe, …
  - Specific character, action, mood, …
- Want something else
  - But need to preserve the original
- But we don’t know what to preserve
  - Can’t characterize motion well enough*

*This is a working assumption of my research. I’d love to be proven wrong.

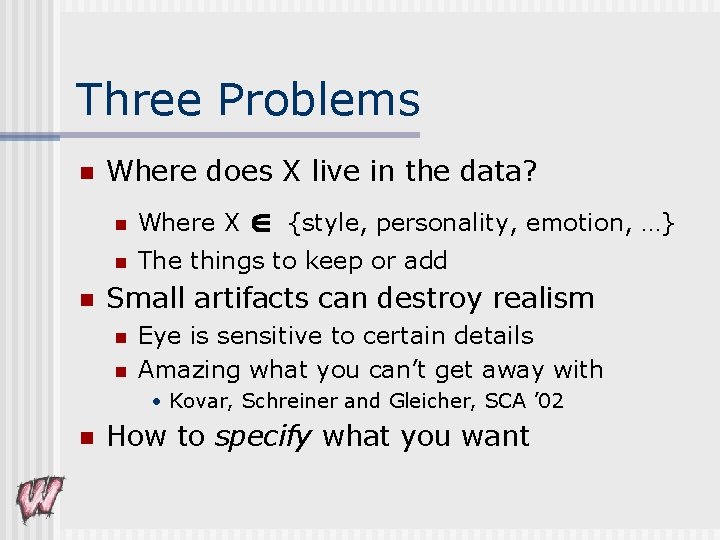

Three Problems
- Where does X live in the data?
  - Where X ∈ {style, personality, emotion, …}
  - The things to keep or add
- Small artifacts can destroy realism
  - The eye is sensitive to certain details
  - Amazing what you can’t get away with (Kovar, Schreiner and Gleicher, SCA ’02)
- How to specify what you want

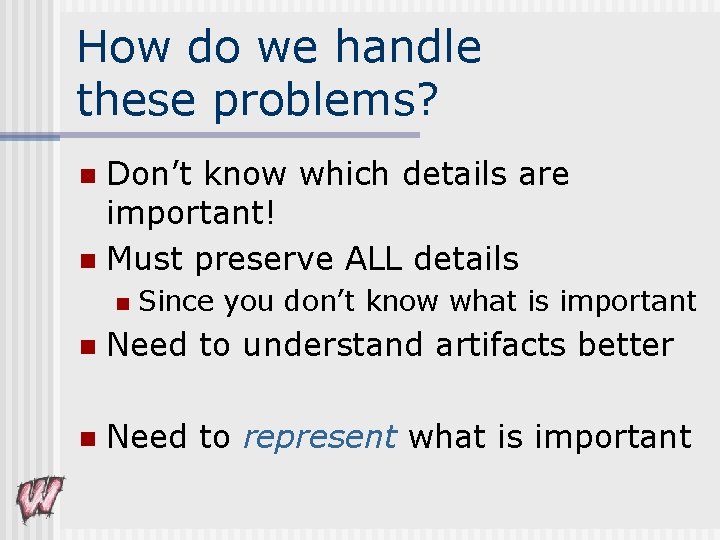

How do we handle these problems?
- Don’t know which details are important!
  - Must preserve ALL details, since you don’t know what is important
- Need to understand artifacts better
- Need to represent what is important

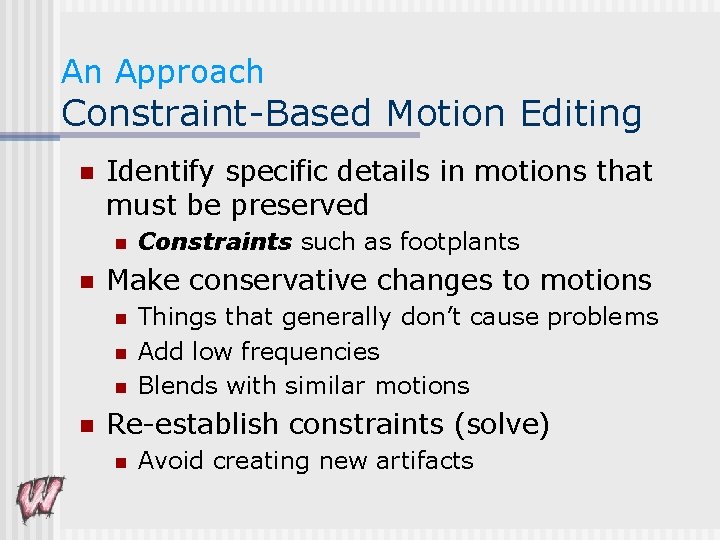

An Approach: Constraint-Based Motion Editing
- Identify specific details in motions that must be preserved
  - Constraints such as footplants
- Make conservative changes to motions
  - Things that generally don’t cause problems
  - Add low frequencies
  - Blends with similar motions
- Re-establish constraints (solve)
  - Avoid creating new artifacts
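
The first step, identifying footplants, can be sketched as a simple threshold test. This is a minimal illustration, not the detector used in the cited work: it assumes per-frame world positions of one foot (y up, meters), and the function name `detect_footplants` and its thresholds (`max_height`, `max_speed`, `min_frames`) are hypothetical values chosen for the example. A frame counts as planted when the foot is both near the floor and nearly stationary; runs of planted frames become the constraints a solver later re-establishes.

```python
import math

def detect_footplants(foot_positions, fps=30.0, max_height=0.05, max_speed=0.2,
                      min_frames=3):
    """Return inclusive (start, end) frame intervals where the foot is planted.

    foot_positions: list of (x, y, z) tuples in meters, y is up.
    A frame is "planted" if the foot is low (y <= max_height) and slow
    (speed <= max_speed m/s). Runs shorter than min_frames are discarded.
    All thresholds are illustrative assumptions.
    """
    n = len(foot_positions)
    planted = []
    for i in range(n):
        # Central difference where possible, one-sided at the clip ends.
        lo, hi = max(i - 1, 0), min(i + 1, n - 1)
        span = (hi - lo) / fps
        speed = math.dist(foot_positions[lo], foot_positions[hi]) / span if span > 0 else 0.0
        planted.append(foot_positions[i][1] <= max_height and speed <= max_speed)

    intervals = []
    start = None
    for i, p in enumerate(planted + [False]):  # sentinel closes a trailing run
        if p and start is None:
            start = i
        elif not p and start is not None:
            if i - start >= min_frames:
                intervals.append((start, i - 1))
            start = None
    return intervals
```

Frames at the edge of a plant, where the foot is already lifting, fail the speed test, so the detected intervals are slightly conservative.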

Band-limited adaptation
- High frequencies are important
  - The eye is sensitive to them
  - They always signify important events
- Avoid high-frequency changes
  - Preserve existing high frequencies
  - Avoid adding new ones
- Band-limit the changes
  - Not the resulting motions
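
To make “band-limit the changes, not the resulting motions” concrete, here is a minimal sketch on a single scalar channel (for example, one joint angle), assuming the edit is specified as “move frame `center` by `offset`”. The change is spread over a raised-cosine window of `radius` frames, so the displacement itself contains only low frequencies, while any high-frequency detail already in the channel is carried through unchanged because we add to it rather than replace it. The window shape and the names `bump` and `band_limited_edit` are illustrative choices, not the specific filters used in the cited papers.

```python
import math

def bump(frame, center, radius):
    """Smooth raised-cosine weight: 1 at center, falling to 0 at |frame - center| >= radius."""
    dist = abs(frame - center)
    if dist >= radius:
        return 0.0
    return 0.5 * (1.0 + math.cos(math.pi * dist / radius))

def band_limited_edit(signal, center, offset, radius):
    """Shift signal[center] by offset, spreading the change smoothly over +/- radius frames.

    Only the *change* is smooth: the original signal (with all its detail) is
    kept and a low-frequency displacement is added on top of it.
    """
    return [x + offset * bump(i, center, radius) for i, x in enumerate(signal)]
```

A wider `radius` gives a smoother, lower-frequency change at the cost of affecting more of the clip.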

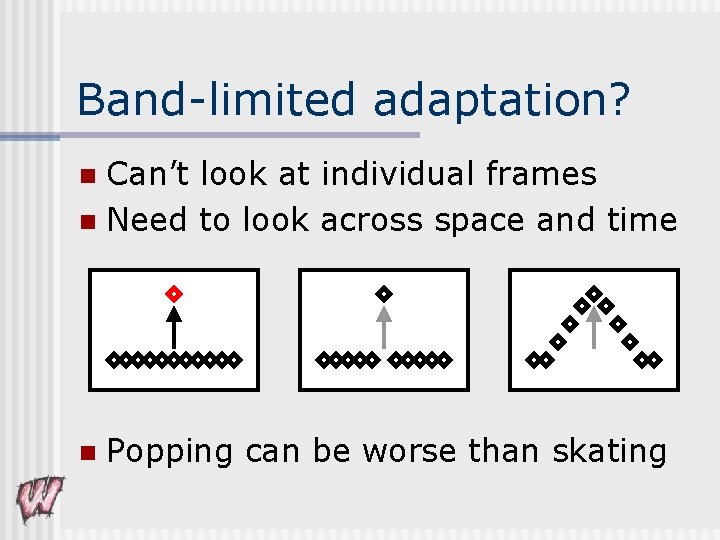

Band-limited adaptation?
- Can’t look at individual frames
  - Need to look across space and time
- Popping can be worse than skating

Constraint Solutions for Editing
- Spacetime (single large non-linear optimization)
- Hierarchical Splines
- Importance-Based
- IK + Filter
- IK + Blending

References: Gleicher ’97; Gleicher ’98; Popovic and Witkin ’99; Lee and Shin ’99; Gleicher ’00; Shin, Lee, Gleicher and Shin ’01; Kovar, Schreiner and Gleicher ’02.

How to use this
- Editing methods just need to get close
  - Avoid nasty artifacts (high frequencies)
- Footskate cleanup fixes many important details
- Makes editing methods easier to devise and implement

Motion Editing
- Interactive methods
  - Many are easy to implement
- Cleanup solvers
  - Footskate
  - Physics (Shin et al. 2003)
- Alter clips
  - Retain the length

Getting Beyond Clips
- Want to generate a wider range
- Want more control
- Applications need streams of motion
  - Dynamically generated
- Applications need “long clips”

Idea: Put Clips Together
- New motions from pieces of old ones!
- Good news:
  - Keeps the qualities of the original (with care)
  - Can create long and novel “streams” (keep putting clips together)
- Challenges:
  - How to connect clips?
  - How to decide what clips to connect?

Connecting Clips: Transition Generation
- Transitions between motions can be hard
- Simple methods work sometimes
  - Blends between aligned motions
  - Clean up footskate artifacts
- Just need to know when “sometimes” is
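
A blend between aligned motions can be sketched as a cross-fade over a short window. This minimal version assumes both clips have already been rigidly aligned and that each frame is a flat list of floats (root position plus joint angles); the name `blend_transition` is hypothetical, the smoothstep weight curve is one reasonable choice, and per-channel linear interpolation is a simplification (rotations would normally be blended with quaternion slerp).

```python
def blend_transition(clip_a, clip_b, k):
    """Join clip_a into clip_b, blending clip_a's last k frames with clip_b's first k.

    Frames are lists of floats; the clips are assumed to be aligned already.
    """
    if k < 1 or k > len(clip_a) or k > len(clip_b):
        raise ValueError("blend window must fit inside both clips")
    head = clip_a[:len(clip_a) - k]
    tail = clip_b[k:]
    window = []
    for p in range(k):
        t = (p + 1) / (k + 1)              # strictly between 0 and 1
        w = 3 * t * t - 2 * t * t * t      # smoothstep: weight given to clip_b
        fa = clip_a[len(clip_a) - k + p]
        fb = clip_b[p]
        window.append([(1 - w) * x + w * y for x, y in zip(fa, fb)])
    return head + window + tail
```

The blended frames can still slide the feet, which is why the slide pairs blending with footskate cleanup.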

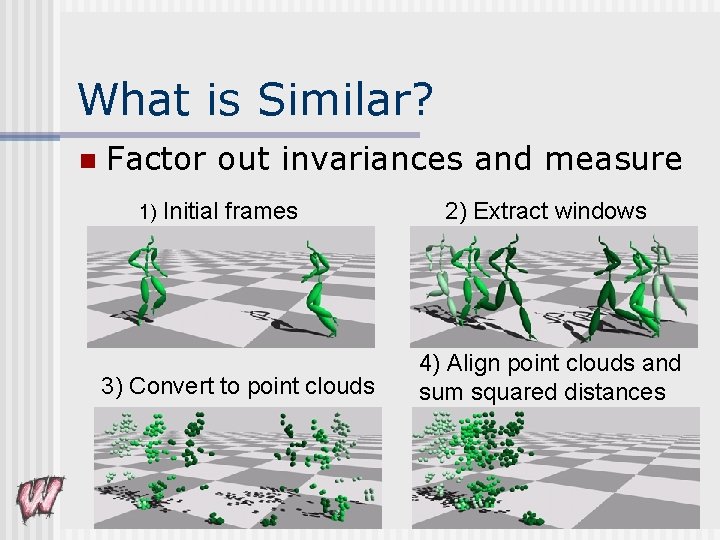

What is Similar?
- Factor out invariances and measure:
  1) Initial frames
  2) Extract windows
  3) Convert to point clouds
  4) Align point clouds and sum squared distances
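
Step 4 can be sketched as follows, assuming steps 1 to 3 have already produced two point clouds with corresponding points (for example, joint positions over a window of frames). The alignment is restricted to a rotation about the vertical axis plus a translation in the floor plane, the invariances this slide factors out. Here it is solved as a weighted 2D Procrustes problem; the function name `aligned_distance` and the optional per-point `weights` are illustrative assumptions.

```python
import math

def aligned_distance(cloud_a, cloud_b, weights=None):
    """Weighted sum of squared distances after optimally moving cloud_b onto cloud_a.

    Only rotation about the vertical (y) axis and translation in the floor
    (x, z) plane are allowed. Points are (x, y, z) tuples, y up, and
    cloud_a[i] corresponds to cloud_b[i]. Returns (distance, theta, (tx, tz)).
    """
    n = len(cloud_a)
    if n == 0 or n != len(cloud_b):
        raise ValueError("clouds must be non-empty and the same size")
    w = weights or [1.0] * n
    total = sum(w)
    # Weighted floor-plane centroids of target (a) and source (b).
    ax = sum(wi * p[0] for wi, p in zip(w, cloud_a)) / total
    az = sum(wi * p[2] for wi, p in zip(w, cloud_a)) / total
    bx = sum(wi * p[0] for wi, p in zip(w, cloud_b)) / total
    bz = sum(wi * p[2] for wi, p in zip(w, cloud_b)) / total
    # Dot and cross terms of the centered 2D points give the optimal angle.
    c_sum = s_sum = 0.0
    for wi, p, q in zip(w, cloud_b, cloud_a):
        px, pz = p[0] - bx, p[2] - bz       # source point (to be moved)
        qx, qz = q[0] - ax, q[2] - az       # target point
        c_sum += wi * (px * qx + pz * qz)
        s_sum += wi * (px * qz - pz * qx)
    theta = math.atan2(s_sum, c_sum)
    c, s = math.cos(theta), math.sin(theta)
    # Translation carries the rotated source centroid onto the target centroid.
    tx = ax - (c * bx - s * bz)
    tz = az - (s * bx + c * bz)
    dist = 0.0
    for wi, p, q in zip(w, cloud_b, cloud_a):
        x = c * p[0] - s * p[2] + tx
        z = s * p[0] + c * p[2] + tz
        dist += wi * ((q[0] - x) ** 2 + (q[1] - p[1]) ** 2 + (q[2] - z) ** 2)
    return dist, theta, (tx, tz)
```

Vertical offsets are deliberately not factored out: height differences, such as a crouch versus a stand, still count as real differences between poses.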

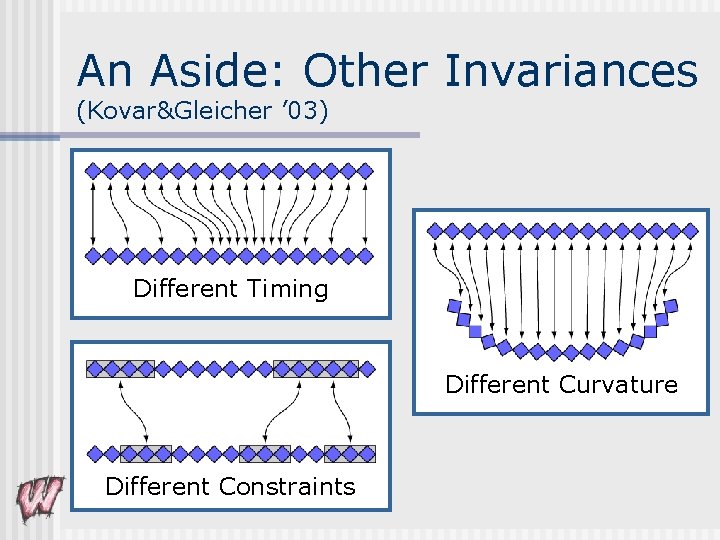

An Aside: Other Invariances (Kovar & Gleicher ’03)
- Different Timing
- Different Curvature
- Different Constraints

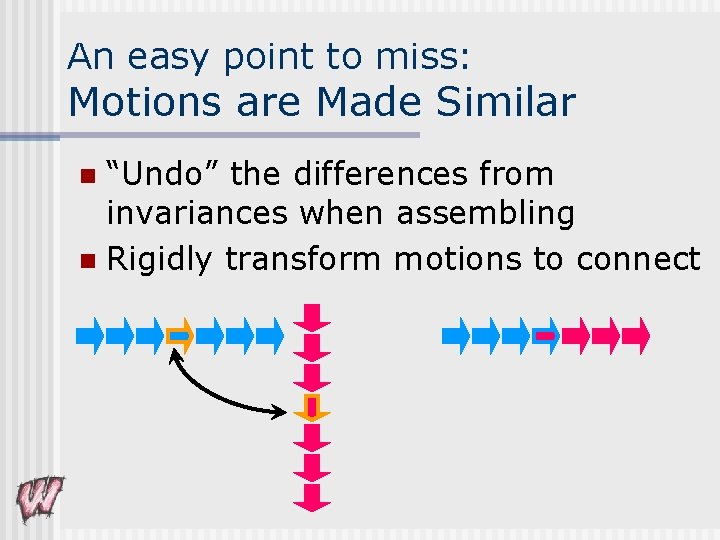

An easy point to miss: Motions are Made Similar
- “Undo” the differences from invariances when assembling
  - Rigidly transform motions to connect
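
Rigidly transforming a clip so it connects can be sketched on the root alone. This minimal version assumes each frame stores only the root’s floor-plane position and heading `(x, z, heading)`, with heading measured in the same rotational sense as the rotation applied to positions; joint angles relative to the root are unaffected by this transform and are omitted. The name `connect` is hypothetical.

```python
import math

def connect(clip_a, clip_b):
    """Append clip_b to clip_a after moving clip_b rigidly in the floor plane.

    Frames are (x, z, heading) for the character root, heading in radians about
    the vertical axis. clip_b is rotated about its first root position and then
    translated so its first frame coincides with clip_a's last frame; that
    duplicated frame is dropped.
    """
    ax, az, ah = clip_a[-1]
    bx, bz, bh = clip_b[0]
    d = ah - bh                      # heading change applied to all of clip_b
    c, s = math.cos(d), math.sin(d)
    moved = []
    for x, z, h in clip_b:
        rx, rz = x - bx, z - bz      # position relative to clip_b's start
        moved.append((ax + c * rx - s * rz, az + s * rx + c * rz, h + d))
    return clip_a + moved[1:]
```

Because only this transform is computed when clips are assembled, the motion data itself never has to be re-edited.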

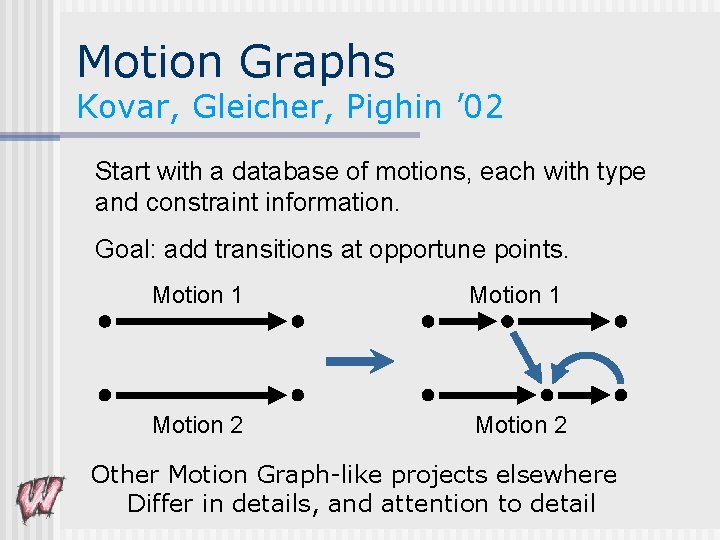

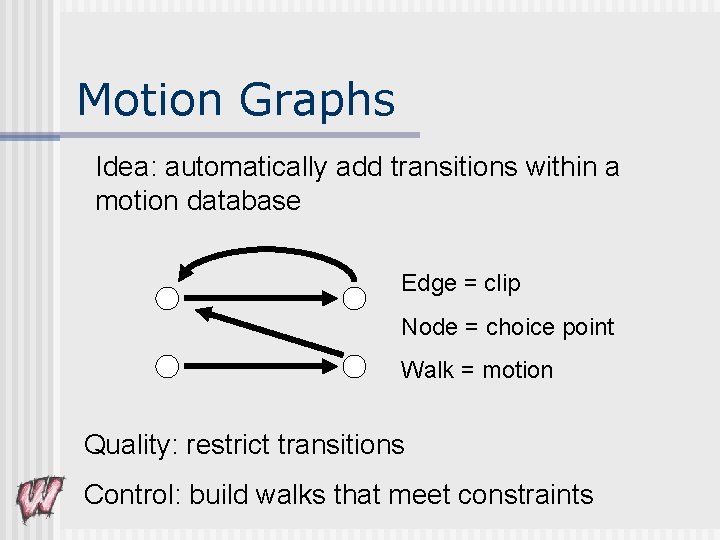

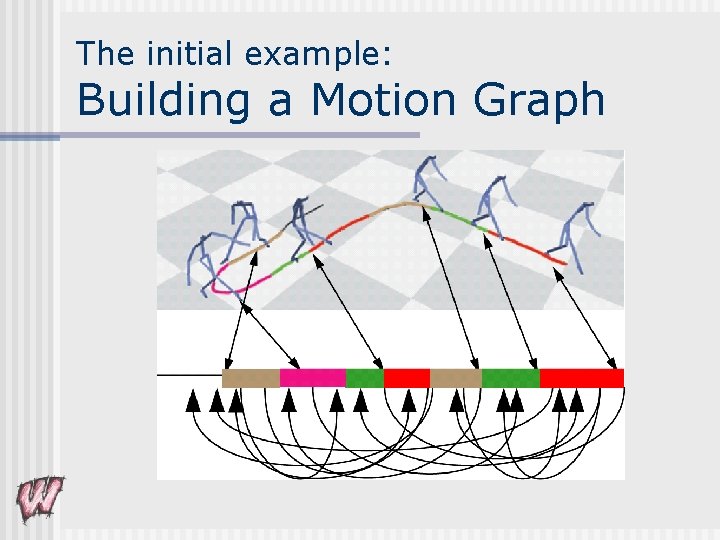

Motion Graphs (Kovar, Gleicher, Pighin ’02)
- Start with a database of motions, each with type and constraint information.
- Goal: add transitions at opportune points.
- Other Motion Graph-like projects elsewhere
  - Differ in details, and attention to detail

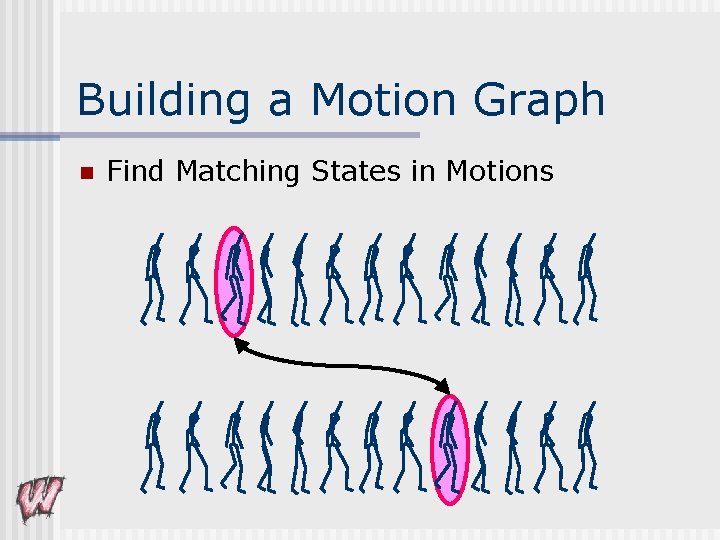

Building a Motion Graph
- Find Matching States in Motions
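
One way to find matching states, following the Motion Graphs approach of taking local minima of the frame-to-frame distance that fall below a threshold, is sketched here. It assumes the distance matrix has already been computed with an alignment-invariant measure such as the point-cloud distance; the function name and the 3×3 neighborhood test are illustrative choices.

```python
def find_transition_candidates(dist, threshold):
    """Return (i, j) pairs where dist[i][j] is a local minimum below threshold.

    dist[i][j] is the distance between frame i of one motion and frame j of
    another. A cell counts as a local minimum if no neighbor in its 3x3
    neighborhood is strictly smaller.
    """
    rows, cols = len(dist), len(dist[0])
    picks = []
    for i in range(rows):
        for j in range(cols):
            d = dist[i][j]
            if d >= threshold:
                continue
            neighbors = (
                dist[a][b]
                for a in range(max(i - 1, 0), min(i + 2, rows))
                for b in range(max(j - 1, 0), min(j + 2, cols))
                if (a, b) != (i, j)
            )
            if all(d <= other for other in neighbors):
                picks.append((i, j))
    return picks
```

Keeping only local minima avoids adding many nearly identical transitions around each good match.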

Motion Graphs
- Idea: automatically add transitions within a motion database
- Edge = clip
- Node = choice point
- Walk = motion
- Quality: restrict transitions
- Control: build walks that meet constraints

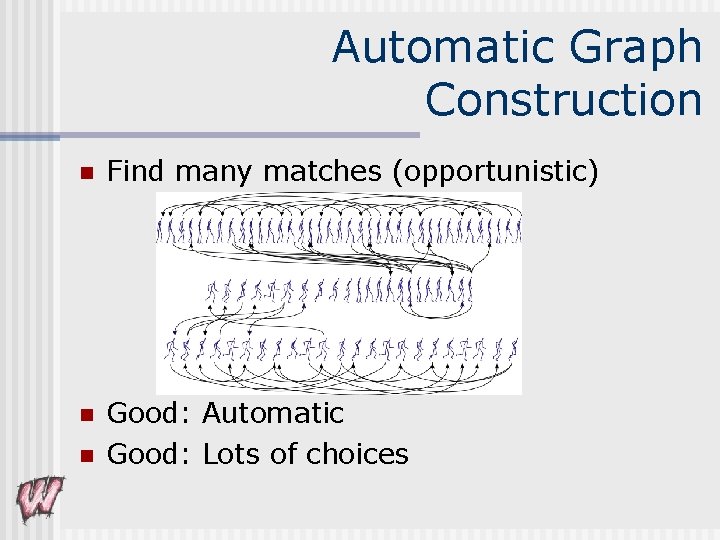

Automatic Graph Construction
- Find many matches (opportunistic)
- Good: Automatic
- Good: Lots of choices

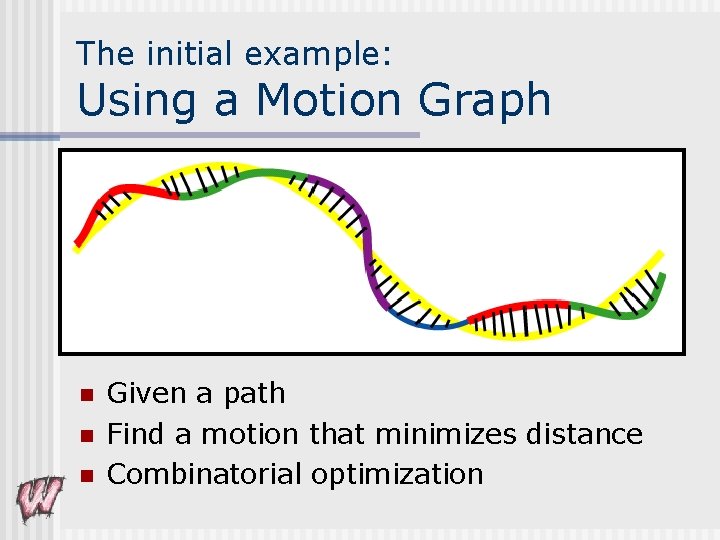

Using a motion graph
- Any walk on the graph is a valid motion
- Generate walks to meet goals
  - Random walks (screen savers)
  - Search to meet constraints
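
Since edges are clips and nodes are choice points, a random walk (the “screen saver” use) is just repeated random edge choice. A minimal sketch, assuming a hypothetical graph representation that maps each node to a list of `(clip_name, next_node)` edges:

```python
import random

def random_walk(graph, start, steps, seed=None):
    """Generate a motion as a sequence of clip names by taking random outgoing edges.

    graph maps node -> list of (clip_name, next_node). Stops early if it
    reaches a node with no outgoing edges.
    """
    rng = random.Random(seed)
    node, clips = start, []
    for _ in range(steps):
        edges = graph.get(node, [])
        if not edges:
            break
        clip, node = rng.choice(edges)
        clips.append(clip)
    return clips
```

For example, with `graph = {"stand": [("walk_start", "walking"), ("idle", "stand")], "walking": [("walk_cycle", "walking"), ("walk_stop", "stand")]}`, every walk returned is a legal sequence of clips that can be played back to back.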

The initial example: Building a Motion Graph

The initial example: Using a Motion Graph
- Given a path
- Find a motion that minimizes distance to the path
- Combinatorial optimization
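
The path-following search can be illustrated with a toy branch-and-bound. This sketch is hypothetical, not the search used in the original system: each edge carries the root displacement `(dx, dz)` its clip produces (heading changes are ignored for brevity), the walk takes exactly one clip per waypoint, and the cost is the sum of squared distances to the waypoints. Because that cost only grows as the walk gets longer, any partial walk already worse than the best complete one can be pruned.

```python
import math

def follow_path(graph, start, waypoints):
    """Return (clips, cost) for the walk whose root positions best match waypoints.

    graph maps node -> list of (clip_name, next_node, (dx, dz)).
    After clip k the root should be near waypoints[k]; the root starts at (0, 0).
    Returns (None, inf) if no walk of the required length exists.
    """
    best = {"cost": math.inf, "clips": None}

    def search(node, pos, depth, cost, clips):
        if cost >= best["cost"]:
            return                      # bound: cannot beat the best walk found so far
        if depth == len(waypoints):
            best["cost"], best["clips"] = cost, list(clips)
            return
        wx, wz = waypoints[depth]
        for clip, nxt, (dx, dz) in graph.get(node, []):
            x, z = pos[0] + dx, pos[1] + dz
            clips.append(clip)
            search(nxt, (x, z), depth + 1, cost + (x - wx) ** 2 + (z - wz) ** 2, clips)
            clips.pop()

    search(start, (0.0, 0.0), 0, 0.0, [])
    return best["clips"], best["cost"]
```

The search is still exponential in the path length in the worst case, which is one reason this style of synthesis runs offline.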

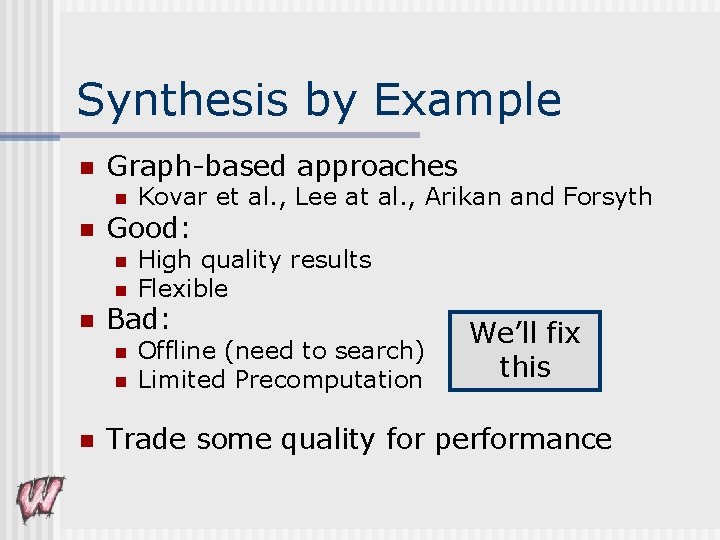

Synthesis by Example
- Graph-based approaches
  - Kovar et al., Lee et al., Arikan and Forsyth
- Good:
  - High-quality results
  - Flexible
- Bad:
  - Offline (need to search)
  - Limited precomputation
- We’ll fix this
  - Trade some quality for performance

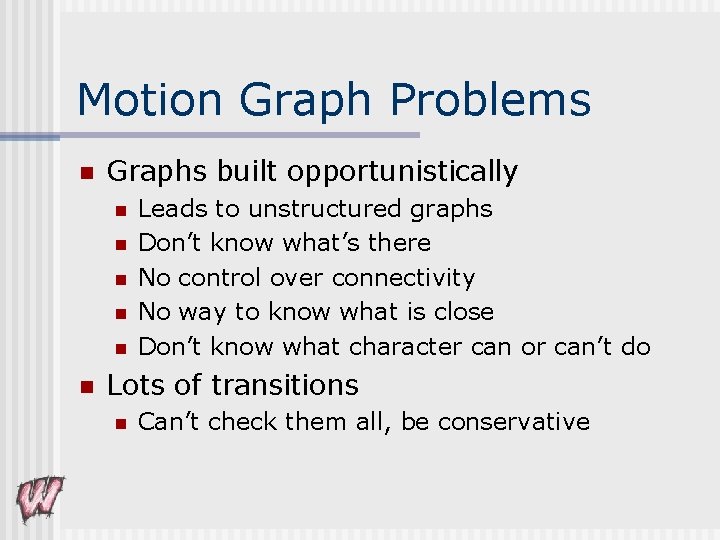

Motion Graph Problems
- Graphs built opportunistically
  - Leads to unstructured graphs
  - Don’t know what’s there
  - No control over connectivity
- No way to know what is close
  - Don’t know what the character can or can’t do
- Lots of transitions
  - Can’t check them all, so be conservative

Why is this OK?
- Search the graphs for motions
  - Look ahead to make sure we don’t get stuck
- Clean up motions as they are generated
- Plan “around” missing transitions
- Optimization gets as close as possible
- Not OK for interactive apps!
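
One simple form of “make sure we don’t get stuck” is to precompute which choice points can only lead to dead ends and remove them. This is a minimal sketch with a hypothetical helper name; it is a simpler stand-in for the graph pruning used in practice. It assumes the graph maps each node to a set of successor nodes and repeatedly deletes nodes with no remaining successors.

```python
def prune_dead_ends(graph):
    """Remove nodes from which every walk eventually gets stuck.

    graph maps node -> set of successor nodes. After pruning, any walk that
    stays inside the returned graph can always be extended by another clip.
    """
    g = {node: set(succ) for node, succ in graph.items()}
    for succ in list(g.values()):
        for s in succ:
            g.setdefault(s, set())      # nodes that only appear as targets
    # Predecessor lists let us update the nodes that point at a removed node.
    preds = {node: set() for node in g}
    for node, succ in g.items():
        for s in succ:
            preds[s].add(node)
    stack = [node for node, succ in g.items() if not succ]
    removed = set()
    while stack:
        node = stack.pop()
        if node in removed:
            continue
        removed.add(node)
        for p in preds[node]:
            g[p].discard(node)
            if not g[p] and p not in removed:
                stack.append(p)
    return {node: succ for node, succ in g.items() if node not in removed}
```

With this precomputed, a runtime walker never needs to look ahead just to avoid dead ends, only to reach its goal.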

Snap-Together Motion: Three basic ideas
- Find “Hub Nodes”
- Pre-process motions so they “snap” together
- Build small, contrived graphs

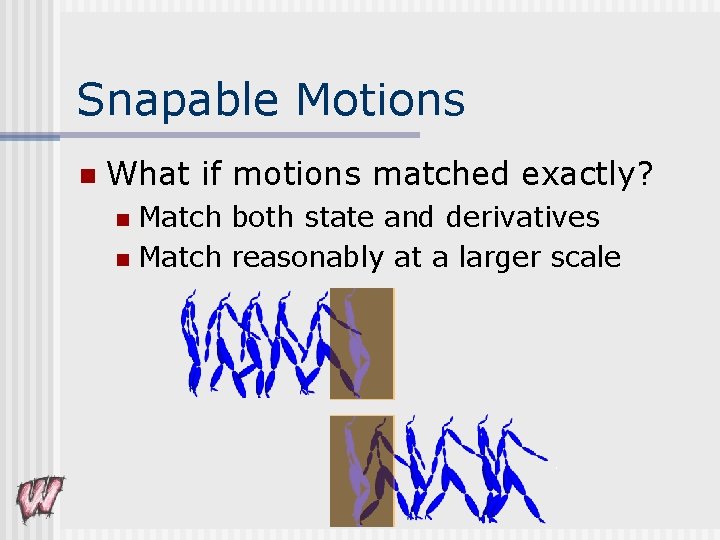

Snappable Motions
- What if motions matched exactly?
  - Match both state and derivatives
- Match reasonably at a larger scale

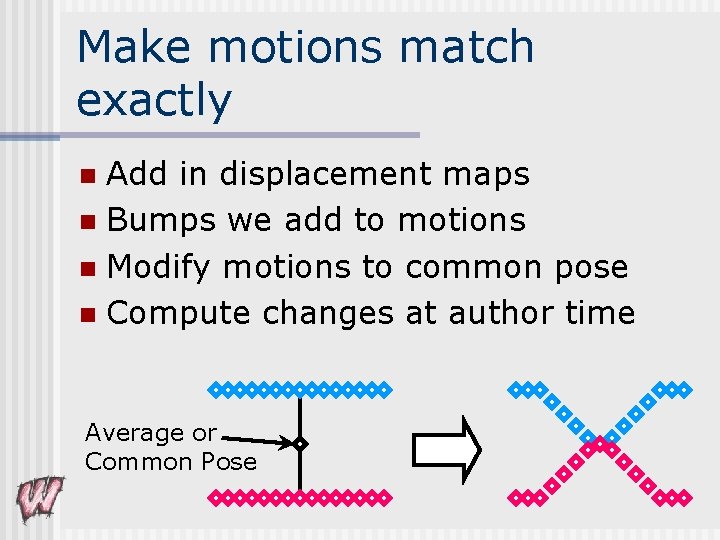

Make motions match exactly
- Add in displacement maps
  - Bumps we add to motions
- Modify motions to a common pose
  - Average or common pose
- Compute changes at authoring time

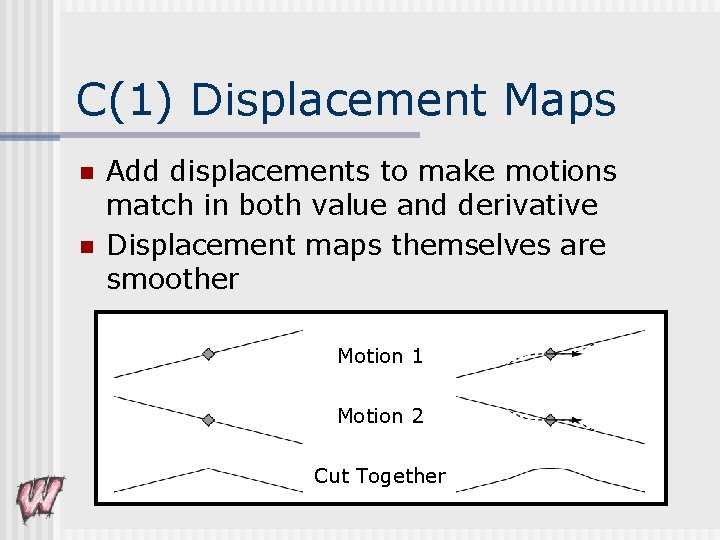

C¹ Displacement Maps
- Add displacements to make motions match in both value and derivative
- Displacement maps themselves are smoother
(Figure panels: Motion 1, Motion 2, Cut Together)
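
A displacement that matches both value and derivative can be sketched for a single channel with a cubic Hermite curve. This is an illustrative construction, not necessarily the one used in Snap-Together Motion: it assumes the target value and velocity at the cut (for example, those of the common pose) are given. The name `c1_displacement` and its parameters are hypothetical. The displacement starts at zero with zero slope, so it joins the untouched frames smoothly, and ends at exactly the offset and slope change needed.

```python
def c1_displacement(values, target_value, target_velocity, window, fps=30.0):
    """Displace the last `window` frames of a channel so it ends at target_value
    with slope target_velocity (units per second).
    """
    n = len(values)
    if not 1 <= window < n:
        raise ValueError("window must be at least 1 and shorter than the clip")
    dt = 1.0 / fps
    end_vel = (values[-1] - values[-2]) / dt   # current end slope (backward difference)
    dp = target_value - values[-1]             # value mismatch to remove
    dv = target_velocity - end_vel             # slope mismatch to remove
    span = window * dt                         # duration of the displaced region
    out = list(values)
    for k in range(window + 1):
        s = k / window                   # 0 at the window start, 1 at the last frame
        h01 = -2 * s**3 + 3 * s**2       # Hermite basis: end value
        h11 = s**3 - s**2                # Hermite basis: end tangent
        out[n - 1 - window + k] += h01 * dp + h11 * dv * span
    return out
```

The continuous displacement matches the target slope exactly; the discrete end slope approaches it as the window grows.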

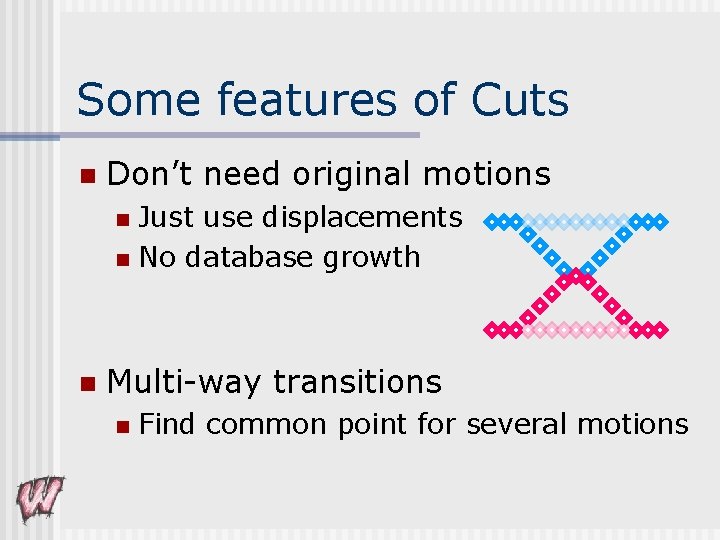

Some features of Cuts
- Don’t need the original motions
  - Just use displacements
  - No database growth
- Multi-way transitions
  - Find a common point for several motions

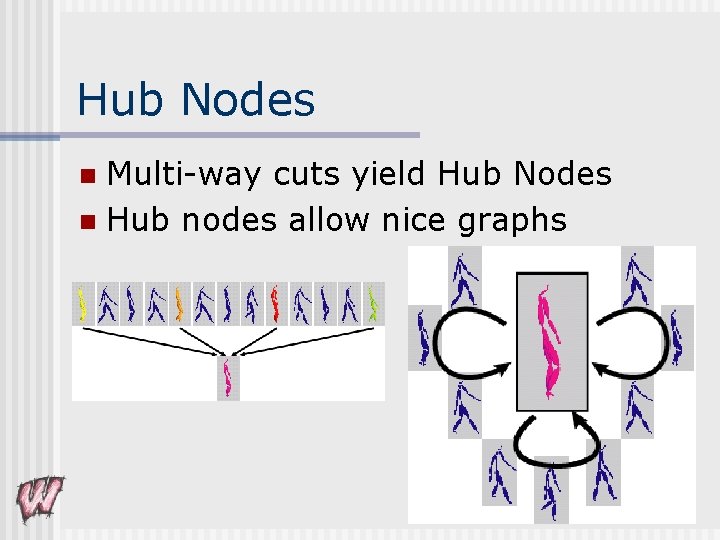

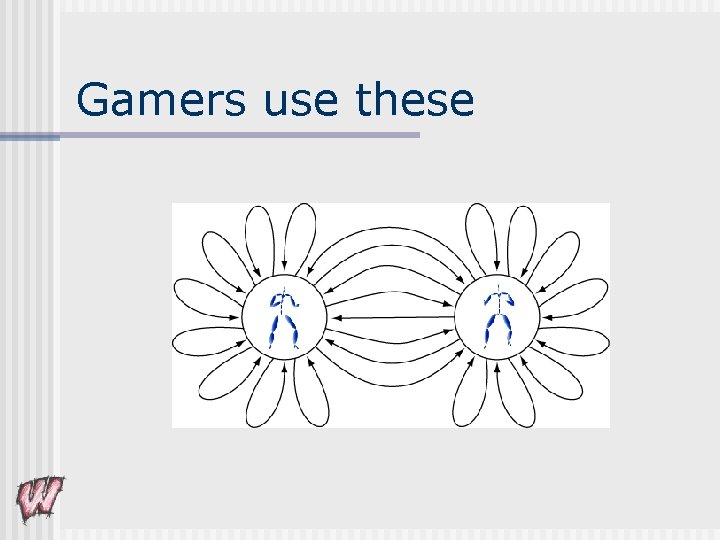

Hub Nodes
- Multi-way cuts yield Hub Nodes
- Hub nodes allow nice graphs

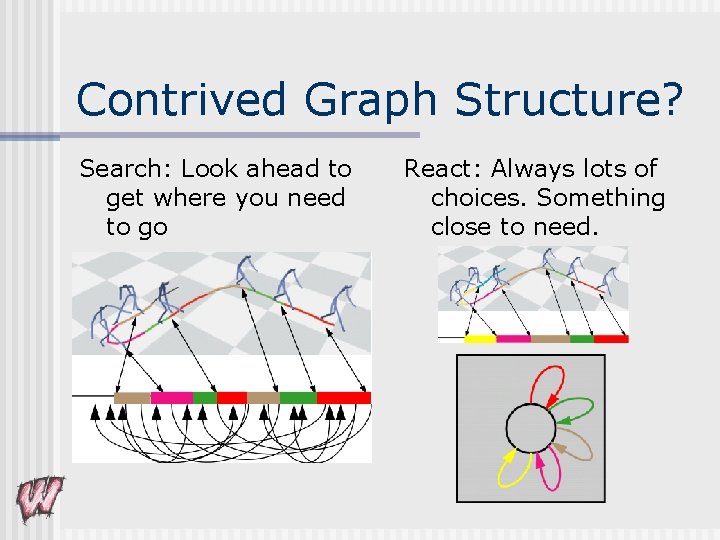

Contrived Graph Structure?
- Search: Look ahead to get where you need to go
- React: Always lots of choices. Something close to need.

Gamers use these

Semi-Automatic Graph Construction
- Pick a set of match frames
  - User selects
  - System picks the “best” one
- Modify motions to build the hub node
- Check graph and transitions

Common poses for Multi-way Hubs
- All motions displace to one pose
  - Pose must be consistent with all motions
- Average “common” pose
  - Average of poses
  - Constraints solved on frame
    - Must solve constraints on all motions
    - Inconsistencies are possible
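
The “average of poses” step can be sketched as a per-channel mean, together with the offset each motion’s match frame needs in order to land on it. This is an illustrative assumption, not the system’s actual code: poses are equal-length lists of floats, plain averaging only suits channels that interpolate linearly (angle wrap-around and quaternions need more care), and constraints still have to be re-solved on the resulting frame as the slide notes. The name `common_pose` is hypothetical.

```python
def common_pose(poses):
    """Average match-frame poses channel by channel.

    poses: list of equal-length lists of floats, one match-frame pose per motion.
    Returns (avg, offsets) where pose[i] + offsets[i] == avg channel by channel.
    """
    m = len(poses)
    avg = [sum(channel) / m for channel in zip(*poses)]
    offsets = [[a - v for a, v in zip(avg, pose)] for pose in poses]
    return avg, offsets
```

Each offset is the end value a motion’s displacement map must reach, which ties the hub’s common pose to the displacement-map construction.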

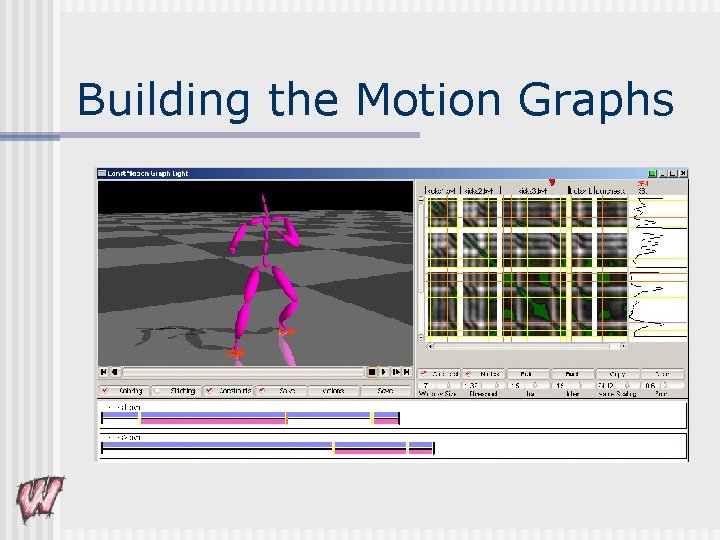

Building the Motion Graphs

Why should we care?
- Precompute everything but rigid transforms = FAST!
- Nodes with high connectivity = RESPONSIVE / CONTROLLABLE
- Add in user interaction
  - Build convenient graph structures
  - Verify transitions (less conservative)

Some results
- Corpus of Karate Motions
  - Pick two best poses
  - Map motions to game controller
  - 12 minutes, including picking buttons
- Walk + Karate
  - 20 minutes, including picking buttons

Nothing for Free
- Less Variety
- Less “Exactness”
- Lower Quality Transitions
  - Displacements not as good as blends
  - “Big” Transitions: big changes to get multi-way
- Need user intervention
  - Pick Match Frames
  - Verify Transitions
- In practice, heuristics work REALLY well, but there are no guarantees

Are we done yet?
- May not have the exact motions you need
  - Make them using blending techniques
- Discrete nature (choices)
  - Parametric “fat” arcs
- Better matching of dissimilar motions
  - New and better invariances
  - Motion-specific invariances
- How do characters decide what to do?

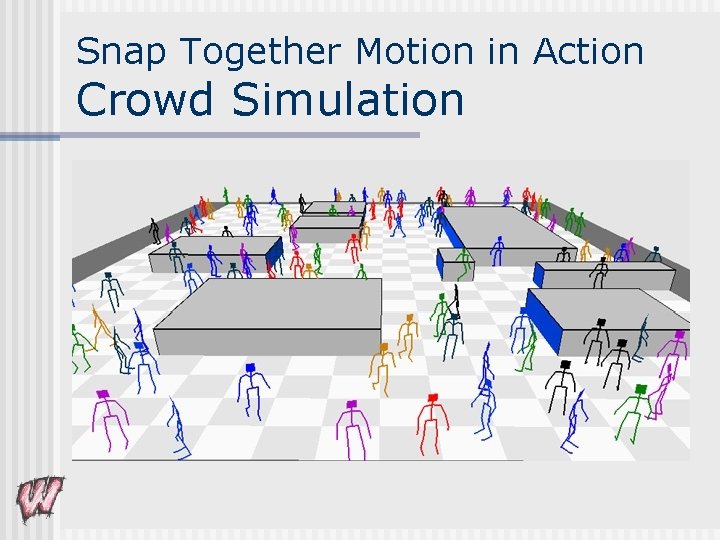

Snap-Together Motion in Action: Crowd Simulation

Snap-Together Motion
- Animation by Example for interactive applications!
  - User-controlled graph structures
  - Multi-way cut transitions
  - Pre-computed transitions

Still more to do
- Interactive Systems
  - Online generation (no search)
  - Low-cost runtimes
  - Self-awareness (what can you do?)
- Better synthesis
  - Parameterized motions
  - Better goal specifications
  - Multiple interacting characters
  - Crowds
- Characters: the bigger picture

The Vision… Visual Media for Everyone!
- Visual Media
  - Pictures / Models
  - Animations / Interactive Environments
- Everyone
  - Not just artists
  - Easier for artists too

The Computer Science
- Tools for the creation and delivery of visual media
- What representations to use
  - Build from “real-world” data
  - Use for synthesis
- Some current projects…

Current Projects
- Animation (Virtual Experiences)
  - Motion Synthesis
  - Character Geometry
  - Crowds and Behavior authoring
- Vascular Visualization
- Virtual Videography

Virtual Videography
- Want to record classroom lectures
  - And more (start with something easy)
- Needs to be done well
  - Static-camera video is unwatchable
  - Good video holds interest and guides attention
- Can’t afford a professional crew
  - Good videography is hard
  - Intrusive
- Get a computer to simulate the crew?

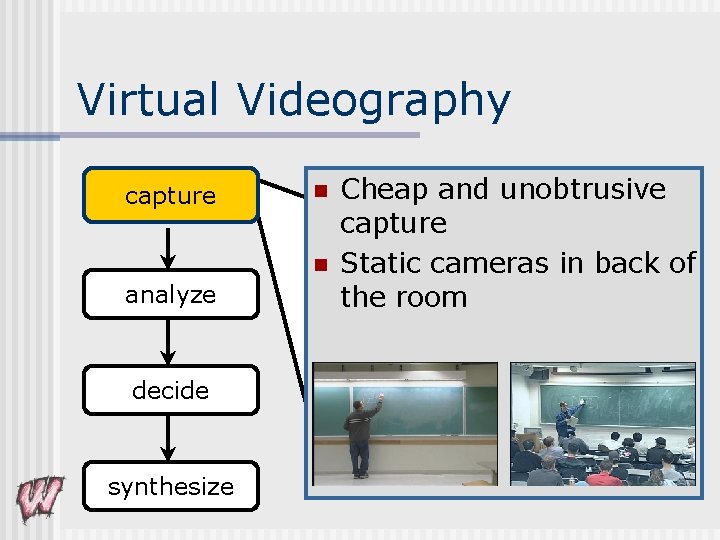

Virtual Videography: Capture
Pipeline: capture → analyze → decide → synthesize
- Cheap and unobtrusive capture
  - Static cameras in the back of the room

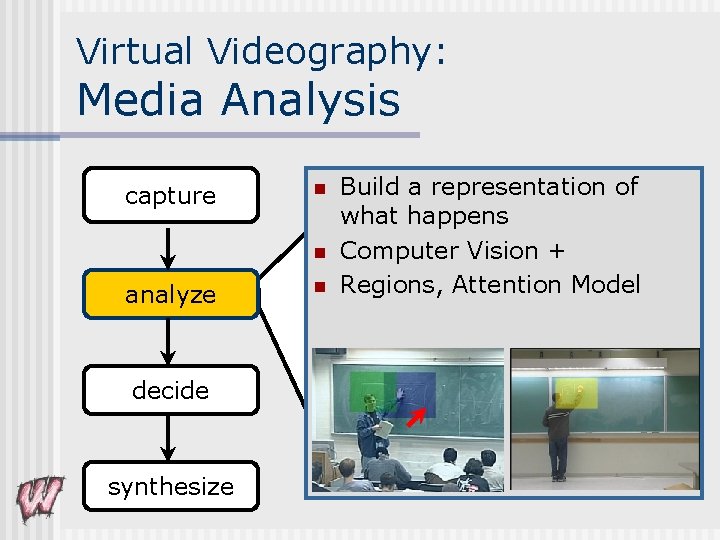

Virtual Videography: Media Analysis
- Build a representation of what happens
  - Computer Vision + Regions, Attention Model

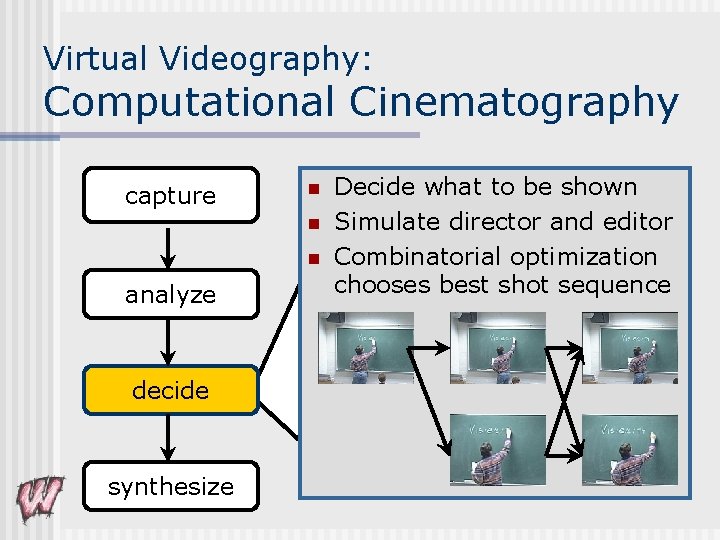

Virtual Videography: Computational Cinematography
- Decide what should be shown
  - Simulate director and editor
- Combinatorial optimization chooses the best shot sequence
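
The slides do not say which optimization Virtual Videography uses. As a hypothetical illustration of posing “choose the best shot sequence” as a combinatorial optimization, suppose each candidate shot has a per-time-step score (for example, from the attention model) and every cut costs a fixed penalty. Dynamic programming (Viterbi) then finds the optimal sequence exactly in O(T·S²) time. The names `best_shot_sequence`, `scores`, and `switch_cost` are assumptions made for this sketch.

```python
def best_shot_sequence(scores, switch_cost):
    """Pick one shot per time step, maximizing total score minus switch_cost per cut.

    scores[t][s] is how good shot s is at time step t (higher is better).
    Returns (sequence_of_shot_indices, total_value).
    """
    T, S = len(scores), len(scores[0])
    best = list(scores[0])          # best value of any sequence ending in shot s
    back = []                       # back[t-1][s]: best predecessor of shot s at time t
    for t in range(1, T):
        new_best, choices = [], []
        for s in range(S):
            # Staying on the same shot is free; cutting to it costs switch_cost.
            cand = [best[p] - (switch_cost if p != s else 0.0) for p in range(S)]
            prev = max(range(S), key=cand.__getitem__)
            new_best.append(cand[prev] + scores[t][s])
            choices.append(prev)
        best = new_best
        back.append(choices)
    # Trace back from the best final shot.
    s = max(range(S), key=best.__getitem__)
    total = best[s]
    seq = [s]
    for choices in reversed(back):
        s = choices[s]
        seq.append(s)
    return list(reversed(seq)), total
```

A larger `switch_cost` yields fewer, longer shots; a real system would also model rules such as minimum shot length.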

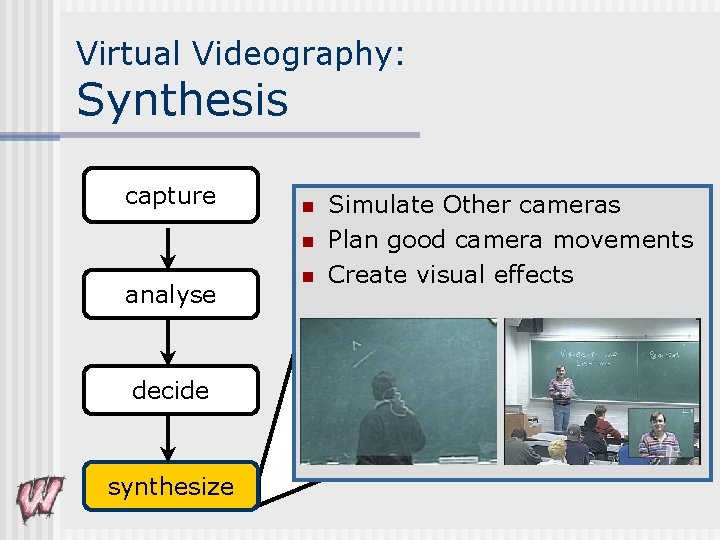

Virtual Videography: Synthesis
- Simulate other cameras
  - Plan good camera movements
- Create visual effects

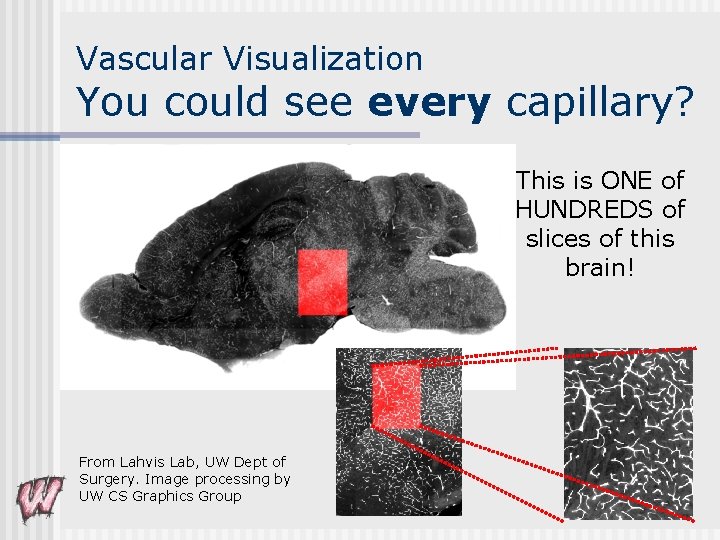

Vascular Visualization (with Garet Lahvis, UW Dept. of Surgery)
- How does the vascular system develop?
  - In the brain?
- How do we help a biologist study this?
  - How to work with visual complexity?
  - Not an artist
  - Not a filmmaker
  - Not even a computer scientist

Vascular Visualization
- You could see every capillary?
- This is ONE of HUNDREDS of slices of this brain!

From Lahvis Lab, UW Dept. of Surgery. Image processing by UW CS Graphics Group.

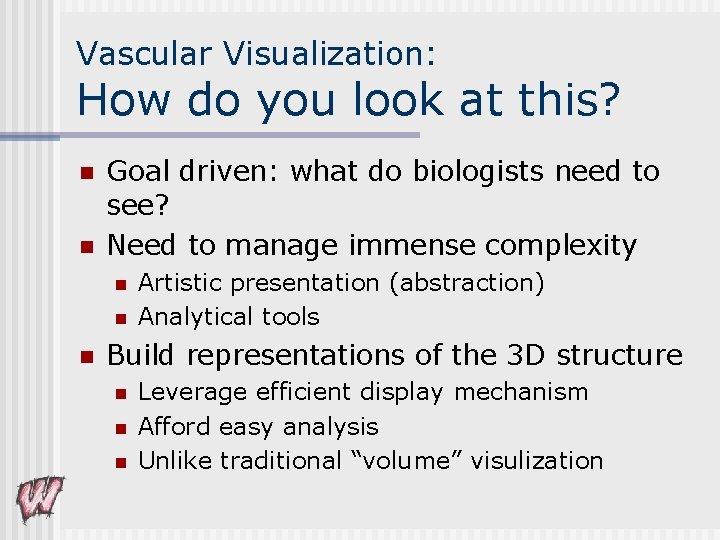

Vascular Visualization: How do you look at this?
- Goal-driven: what do biologists need to see?
- Need to manage immense complexity
  - Artistic presentation (abstraction)
  - Analytical tools
- Build representations of the 3D structure
  - Leverage efficient display mechanisms
  - Afford easy analysis
  - Unlike traditional “volume” visualization

Thanks!
- To the UW graphics gang.
- Animation research at UW is sponsored by the National Science Foundation, Microsoft, and the Wisconsin University and Industrial Relations program.
- House of Moves, IBM, Alias/Wavefront, Discreet, Pixar and Intel have given us stuff.
- House of Moves, Ohio State ACCAD, and Demian Gordon for data.

And to all our friends in the business who have given us data and inspiration.
