
Android Sensor Programming
Lecture 13
Wenbing Zhao
Department of Electrical Engineering and Computer Science
Cleveland State University
w.zhao1@csuohio.edu
11/25/2020 Android Sensor Programming 1

Mobile Vision
- Image processing with bitmap
  - https://xjaphx.wordpress.com/learning/tutorials/
- OpenCV4Android SDK:
  - https://docs.opencv.org/2.4/doc/tutorials/introduction/android_binary_package/O4A_SDK.html
- JavaCV:
  - https://github.com/bytedeco/javacv
- Google Mobile Vision
  - https://developers.google.com/vision/
- Google ML (machine learning) Kit
  - https://developers.google.com/ml-kit/

Google Mobile Vision API
- Face tracking
- Barcode reading
- Text recognition

Google Mobile Vision Face Tracking
- https://developers.google.com/vision/android/getting-started
- Face detection is the process of automatically locating human faces in visual media (digital images or video)
- A detected face is reported at a position with an associated size and orientation
- Once a face is detected, it can be searched for landmarks (points of interest within a face) such as the eyes and nose
- Classification determines whether a certain facial characteristic is present. For example, a face can be classified by whether its eyes are open or closed, or by whether it is smiling

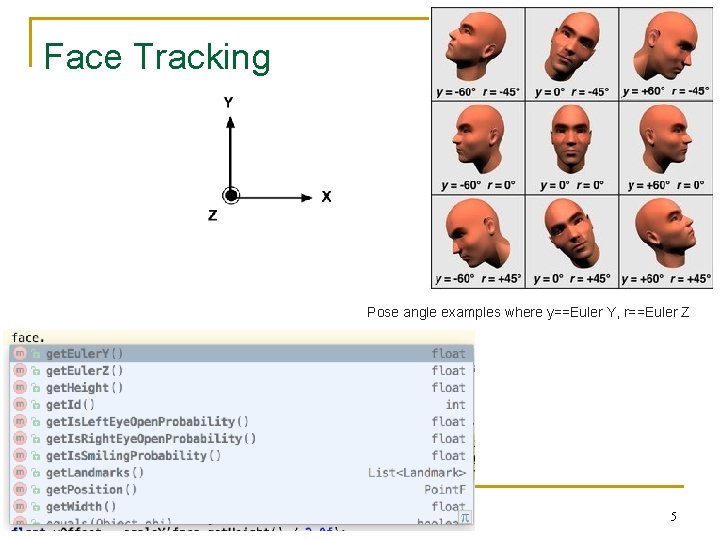

Face Tracking
Pose angle examples, where y == Euler Y and r == Euler Z

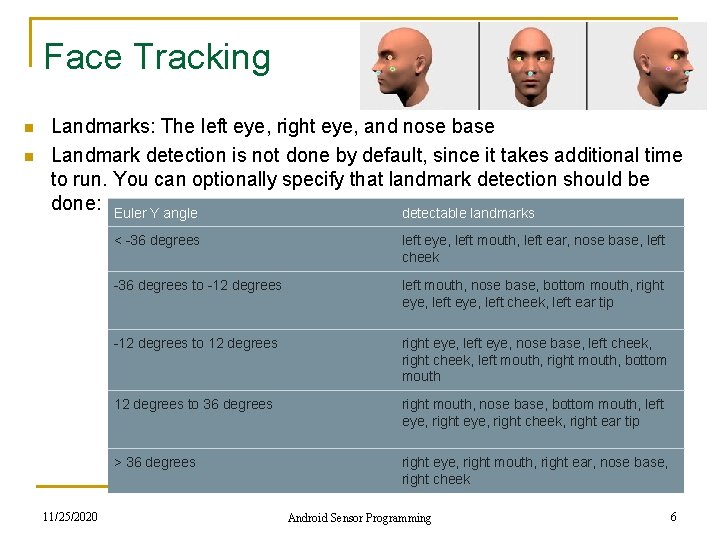

Face Tracking
- Landmarks: points of interest within a face, such as the left eye, right eye, and nose base
- Landmark detection is not done by default, since it takes additional time to run. You can optionally specify that landmark detection should be done
- The landmarks that can be detected depend on the Euler Y angle of the face:

| Euler Y angle | Detectable landmarks |
| --- | --- |
| < -36 degrees | left eye, left mouth, left ear, nose base, left cheek |
| -36 degrees to -12 degrees | left mouth, nose base, bottom mouth, right eye, left cheek, left ear tip |
| -12 degrees to 12 degrees | right eye, left eye, nose base, left cheek, right cheek, left mouth, right mouth, bottom mouth |
| 12 degrees to 36 degrees | right mouth, nose base, bottom mouth, left eye, right cheek, right ear tip |
| > 36 degrees | right eye, right mouth, right ear, nose base, right cheek |
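The angle-to-landmark table can be encoded as a small lookup, which is handy when deciding which landmarks are worth drawing for a given pose. The following is a minimal sketch in plain Java with no Android dependencies. The class name `LandmarkVisibility` and the method `detectableLandmarks` are hypothetical names chosen for illustration, and the assignment of the exact boundary angles (-36, -12, 12, 36) to a row is an assumption, since the table does not say which row they belong to.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical helper (not part of the Mobile Vision API): maps a face's
// Euler Y angle in degrees to the landmarks the table lists for that angle.
class LandmarkVisibility {

    static List<String> detectableLandmarks(float eulerY) {
        // Assumption: boundary angles fall into the more frontal row.
        if (eulerY < -36f) {
            return Arrays.asList("left eye", "left mouth", "left ear",
                    "nose base", "left cheek");
        } else if (eulerY < -12f) {
            return Arrays.asList("left mouth", "nose base", "bottom mouth",
                    "right eye", "left cheek", "left ear tip");
        } else if (eulerY <= 12f) {
            return Arrays.asList("right eye", "left eye", "nose base",
                    "left cheek", "right cheek", "left mouth",
                    "right mouth", "bottom mouth");
        } else if (eulerY <= 36f) {
            return Arrays.asList("right mouth", "nose base", "bottom mouth",
                    "left eye", "right cheek", "right ear tip");
        } else {
            return Arrays.asList("right eye", "right mouth", "right ear",
                    "nose base", "right cheek");
        }
    }

    public static void main(String[] args) {
        // A frontal face exposes the largest landmark set.
        System.out.println(detectableLandmarks(0f));
        // A strongly turned face exposes only one side.
        System.out.println(detectableLandmarks(-40f));
    }
}
```

In the app, the angle would come from the detected face's Euler Y value; here it is passed in directly so the lookup can be tried without a device.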

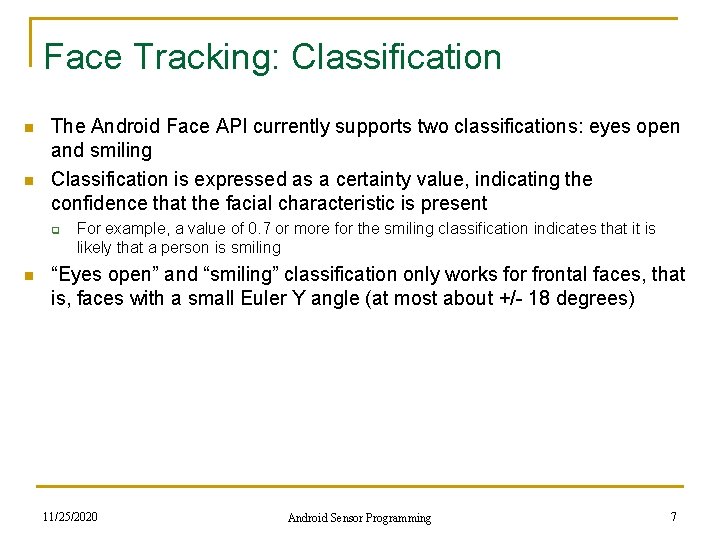

Face Tracking: Classification
- The Android Face API currently supports two classifications: eyes open and smiling
- Classification is expressed as a certainty value, indicating the confidence that the facial characteristic is present
  - For example, a value of 0.7 or more for the smiling classification indicates that it is likely that a person is smiling
- The "eyes open" and "smiling" classifications only work for frontal faces, that is, faces with a small Euler Y angle (at most about +/- 18 degrees)
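To make the two rules on this slide concrete (the 0.7 threshold and the frontal-face limit of about 18 degrees), here is a minimal sketch in plain Java. The class `SmileClassifier`, its `Result` enum, and the `classify` method are hypothetical names for illustration only. Treating any negative probability as "not computed" is an assumption modeled on the Face API's convention of reporting an uncomputed classification as a negative value.

```java
// Hypothetical helper (not part of the Mobile Vision API): interprets a
// smiling probability together with the face's Euler Y angle.
class SmileClassifier {
    static final float SMILING_THRESHOLD = 0.7f;    // threshold quoted on the slide
    static final float MAX_FRONTAL_EULER_Y = 18f;   // approximate limit quoted on the slide

    enum Result { SMILING, NOT_SMILING, UNKNOWN }

    static Result classify(float smilingProbability, float eulerY) {
        // Assumption: a negative probability means the value was not computed.
        if (smilingProbability < 0f) {
            return Result.UNKNOWN;
        }
        // Classification is only meaningful for roughly frontal faces.
        if (Math.abs(eulerY) > MAX_FRONTAL_EULER_Y) {
            return Result.UNKNOWN;
        }
        return smilingProbability >= SMILING_THRESHOLD
                ? Result.SMILING
                : Result.NOT_SMILING;
    }

    public static void main(String[] args) {
        System.out.println(classify(0.9f, 5f));   // frontal, high probability
        System.out.println(classify(0.9f, 30f));  // turned too far to trust
    }
}
```

Returning a third `UNKNOWN` state, rather than forcing a yes/no answer, keeps the caller from reporting a smile for a face that is turned too far for the classifier to be reliable.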

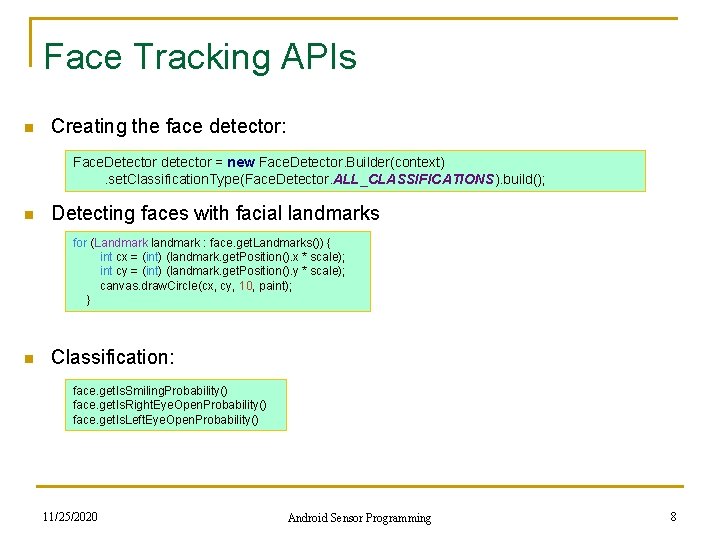

Face Tracking APIs
- Creating the face detector:

    FaceDetector detector = new FaceDetector.Builder(context)
            .setClassificationType(FaceDetector.ALL_CLASSIFICATIONS)
            .build();

- Detecting faces with facial landmarks:

    for (Landmark landmark : face.getLandmarks()) {
        int cx = (int) (landmark.getPosition().x * scale);
        int cy = (int) (landmark.getPosition().y * scale);
        canvas.drawCircle(cx, cy, 10, paint);
    }

- Classification:

    face.getIsSmilingProbability()
    face.getIsRightEyeOpenProbability()
    face.getIsLeftEyeOpenProbability()

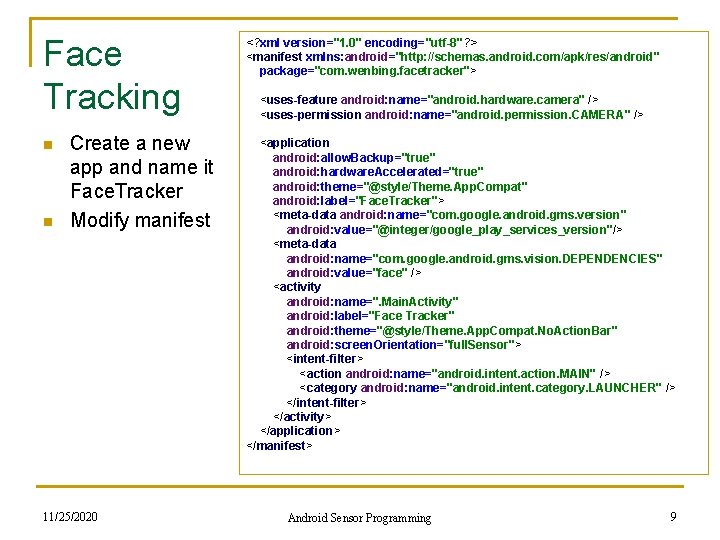

Face Tracking
- Create a new app and name it FaceTracker
- Modify the manifest:

    <?xml version="1.0" encoding="utf-8"?>
    <manifest xmlns:android="http://schemas.android.com/apk/res/android"
        package="com.wenbing.facetracker">

        <uses-feature android:name="android.hardware.camera" />
        <uses-permission android:name="android.permission.CAMERA" />

        <application
            android:allowBackup="true"
            android:hardwareAccelerated="true"
            android:theme="@style/Theme.AppCompat"
            android:label="FaceTracker">

            <meta-data
                android:name="com.google.android.gms.version"
                android:value="@integer/google_play_services_version"/>
            <meta-data
                android:name="com.google.android.gms.vision.DEPENDENCIES"
                android:value="face" />

            <activity
                android:name=".MainActivity"
                android:label="Face Tracker"
                android:theme="@style/Theme.AppCompat.NoActionBar"
                android:screenOrientation="fullSensor">
                <intent-filter>
                    <action android:name="android.intent.action.MAIN" />
                    <category android:name="android.intent.category.LAUNCHER" />
                </intent-filter>
            </activity>
        </application>
    </manifest>

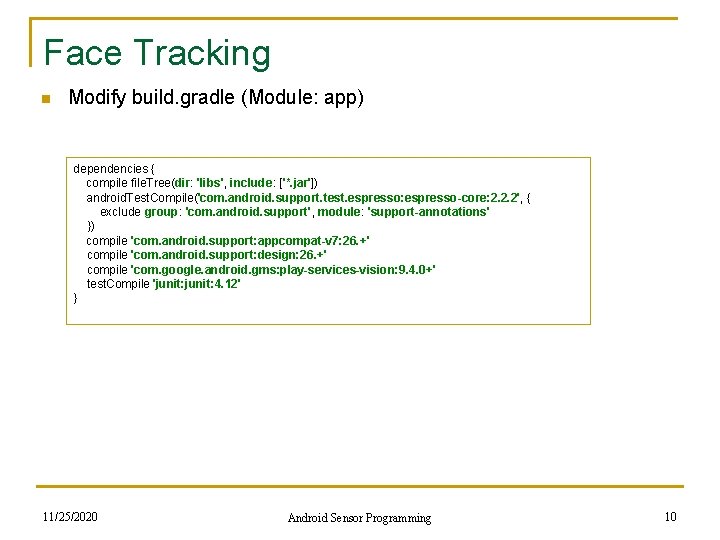

Face Tracking
- Modify build.gradle (Module: app):

    dependencies {
        compile fileTree(dir: 'libs', include: ['*.jar'])
        androidTestCompile('com.android.support.test.espresso:espresso-core:2.2.2', {
            exclude group: 'com.android.support', module: 'support-annotations'
        })
        compile 'com.android.support:appcompat-v7:26.+'
        compile 'com.android.support:design:26.+'
        compile 'com.google.android.gms:play-services-vision:9.4.0+'
        testCompile 'junit:junit:4.12'
    }

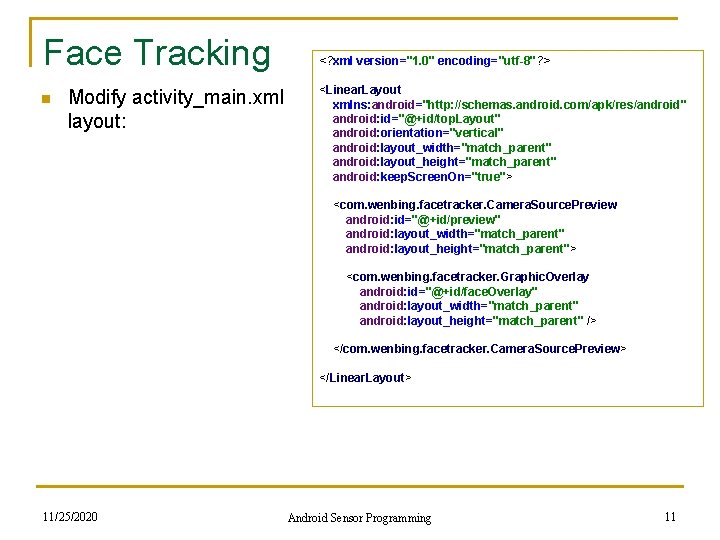

Face Tracking
- Modify the activity_main.xml layout:

    <?xml version="1.0" encoding="utf-8"?>
    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        android:id="@+id/topLayout"
        android:orientation="vertical"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:keepScreenOn="true">

        <com.wenbing.facetracker.CameraSourcePreview
            android:id="@+id/preview"
            android:layout_width="match_parent"
            android:layout_height="match_parent">

            <com.wenbing.facetracker.GraphicOverlay
                android:id="@+id/faceOverlay"
                android:layout_width="match_parent"
                android:layout_height="match_parent" />

        </com.wenbing.facetracker.CameraSourcePreview>
    </LinearLayout>

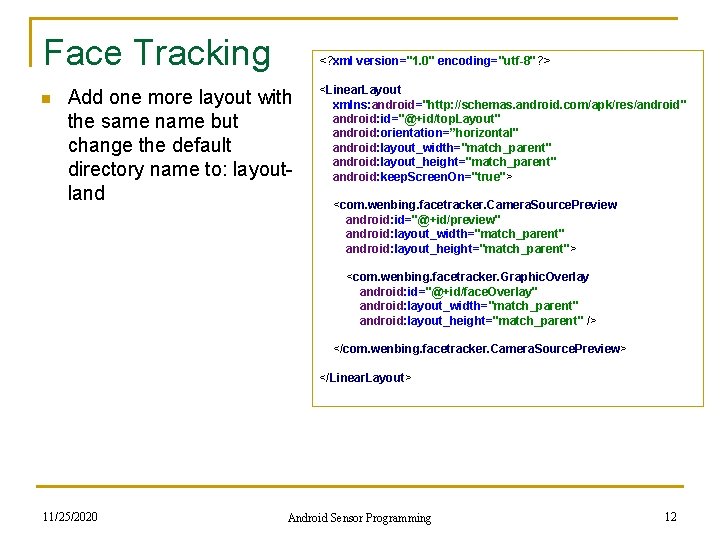

Face Tracking
- Add one more layout with the same name, but change the default directory name to layout-land:

    <?xml version="1.0" encoding="utf-8"?>
    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        android:id="@+id/topLayout"
        android:orientation="horizontal"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:keepScreenOn="true">

        <com.wenbing.facetracker.CameraSourcePreview
            android:id="@+id/preview"
            android:layout_width="match_parent"
            android:layout_height="match_parent">

            <com.wenbing.facetracker.GraphicOverlay
                android:id="@+id/faceOverlay"
                android:layout_width="match_parent"
                android:layout_height="match_parent" />

        </com.wenbing.facetracker.CameraSourcePreview>
    </LinearLayout>

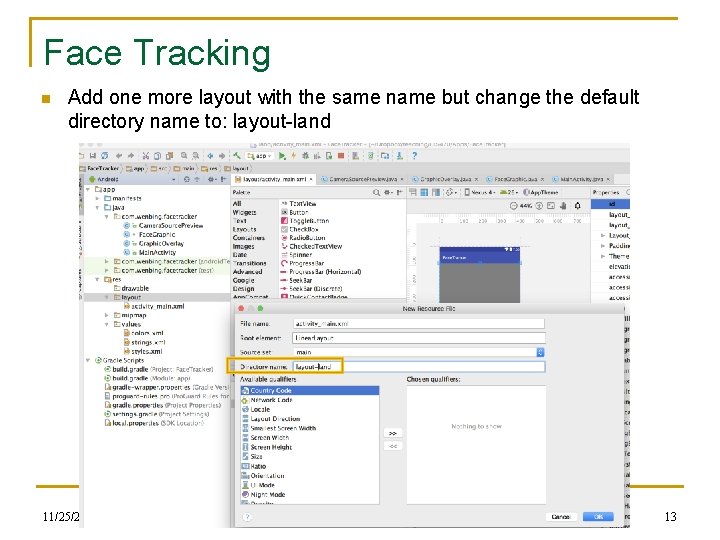

Face Tracking
- Add one more layout with the same name, but change the default directory name to layout-land

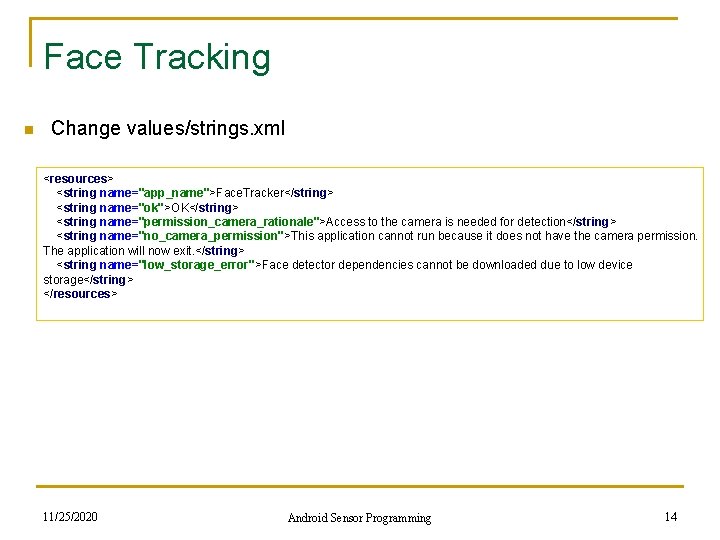

Face Tracking
- Change values/strings.xml:

    <resources>
        <string name="app_name">FaceTracker</string>
        <string name="ok">OK</string>
        <string name="permission_camera_rationale">Access to the camera is needed for detection</string>
        <string name="no_camera_permission">This application cannot run because it does not have the camera permission. The application will now exit.</string>
        <string name="low_storage_error">Face detector dependencies cannot be downloaded due to low device storage</string>
    </resources>

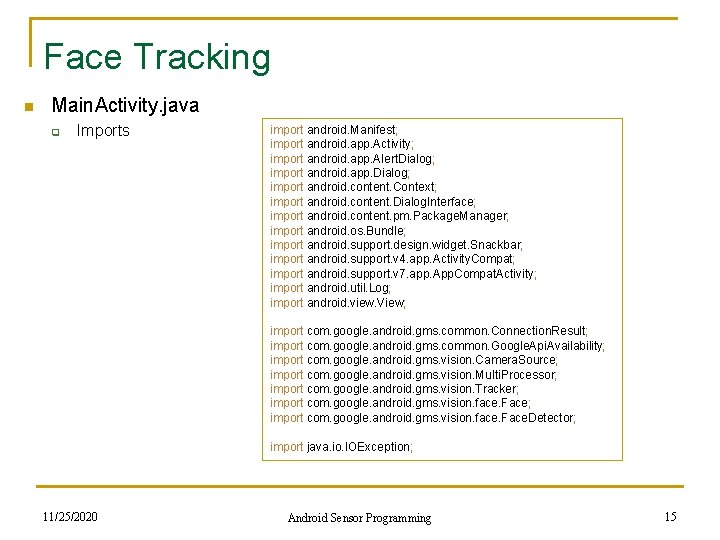

Face Tracking
- MainActivity.java
  - Imports

    import android.Manifest;
    import android.app.Activity;
    import android.app.AlertDialog;
    import android.app.Dialog;
    import android.content.Context;
    import android.content.DialogInterface;
    import android.content.pm.PackageManager;
    import android.os.Bundle;
    import android.support.design.widget.Snackbar;
    import android.support.v4.app.ActivityCompat;
    import android.support.v7.app.AppCompatActivity;
    import android.util.Log;
    import android.view.View;

    import com.google.android.gms.common.ConnectionResult;
    import com.google.android.gms.common.GoogleApiAvailability;
    import com.google.android.gms.vision.CameraSource;
    import com.google.android.gms.vision.MultiProcessor;
    import com.google.android.gms.vision.Tracker;
    // Face is referenced by the tracker classes defined later in this activity.
    import com.google.android.gms.vision.face.Face;
    import com.google.android.gms.vision.face.FaceDetector;

    import java.io.IOException;

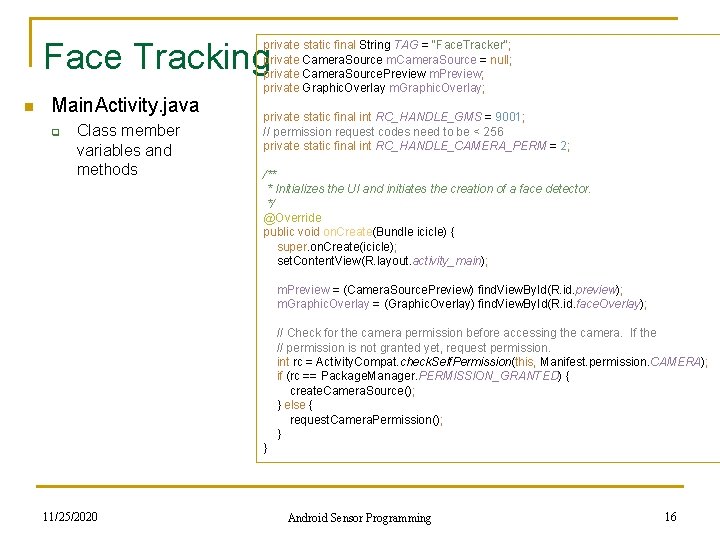

Face Tracking
- MainActivity.java
  - Class member variables and methods

    private static final String TAG = "FaceTracker";
    private CameraSource mCameraSource = null;
    private CameraSourcePreview mPreview;
    private GraphicOverlay mGraphicOverlay;
    private static final int RC_HANDLE_GMS = 9001;
    // permission request codes need to be < 256
    private static final int RC_HANDLE_CAMERA_PERM = 2;

    /**
     * Initializes the UI and initiates the creation of a face detector.
     */
    @Override
    public void onCreate(Bundle icicle) {
        super.onCreate(icicle);
        setContentView(R.layout.activity_main);
        mPreview = (CameraSourcePreview) findViewById(R.id.preview);
        mGraphicOverlay = (GraphicOverlay) findViewById(R.id.faceOverlay);

        // Check for the camera permission before accessing the camera. If the
        // permission is not granted yet, request permission.
        int rc = ActivityCompat.checkSelfPermission(this, Manifest.permission.CAMERA);
        if (rc == PackageManager.PERMISSION_GRANTED) {
            createCameraSource();
        } else {
            requestCameraPermission();
        }
    }

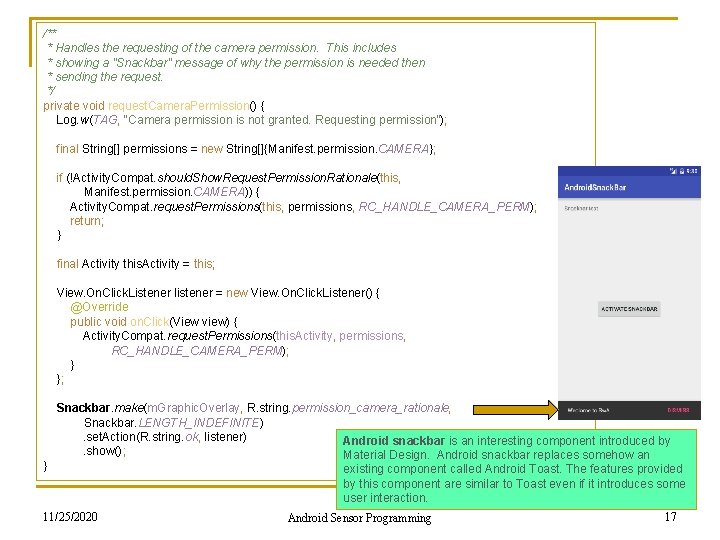

    /**
     * Handles the requesting of the camera permission. This includes
     * showing a "Snackbar" message of why the permission is needed then
     * sending the request.
     */
    private void requestCameraPermission() {
        Log.w(TAG, "Camera permission is not granted. Requesting permission");
        final String[] permissions = new String[]{Manifest.permission.CAMERA};

        if (!ActivityCompat.shouldShowRequestPermissionRationale(this,
                Manifest.permission.CAMERA)) {
            ActivityCompat.requestPermissions(this, permissions, RC_HANDLE_CAMERA_PERM);
            return;
        }

        final Activity thisActivity = this;
        View.OnClickListener listener = new View.OnClickListener() {
            @Override
            public void onClick(View view) {
                ActivityCompat.requestPermissions(thisActivity, permissions,
                        RC_HANDLE_CAMERA_PERM);
            }
        };

        Snackbar.make(mGraphicOverlay, R.string.permission_camera_rationale,
                Snackbar.LENGTH_INDEFINITE)
                .setAction(R.string.ok, listener)
                .show();
    }

Note: Snackbar is a component introduced by Material Design. It partly replaces the existing Toast component: it offers similar features but adds some user interaction.

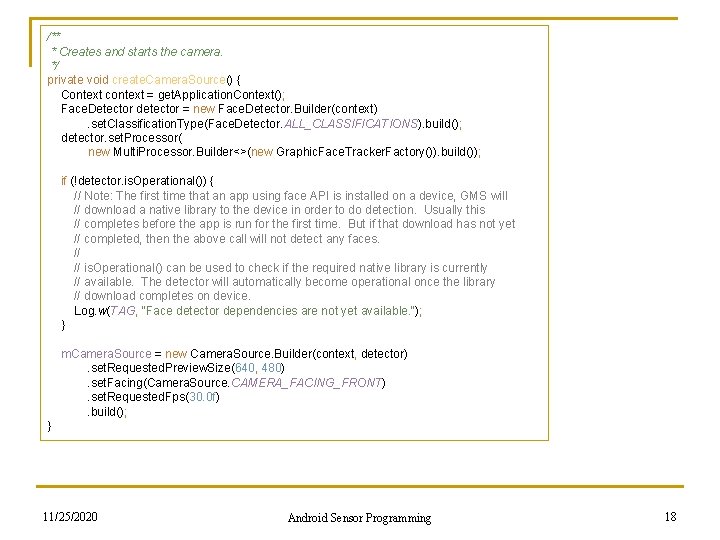

    /**
     * Creates and starts the camera.
     */
    private void createCameraSource() {
        Context context = getApplicationContext();
        FaceDetector detector = new FaceDetector.Builder(context)
                .setClassificationType(FaceDetector.ALL_CLASSIFICATIONS)
                .build();

        detector.setProcessor(
                new MultiProcessor.Builder<>(new GraphicFaceTrackerFactory()).build());

        if (!detector.isOperational()) {
            // Note: The first time that an app using face API is installed on a device, GMS will
            // download a native library to the device in order to do detection. Usually this
            // completes before the app is run for the first time. But if that download has not yet
            // completed, then the above call will not detect any faces.
            // isOperational() can be used to check if the required native library is currently
            // available. The detector will automatically become operational once the library
            // download completes on device.
            Log.w(TAG, "Face detector dependencies are not yet available.");
        }

        mCameraSource = new CameraSource.Builder(context, detector)
                .setRequestedPreviewSize(640, 480)
                .setFacing(CameraSource.CAMERA_FACING_FRONT)
                .setRequestedFps(30.0f)
                .build();
    }

![onRequestPermissionsResult slide](http://slidetodoc.com/presentation_image_h/a004590258fb89235a5824e10a36d0e5/image-19.jpg)

    @Override
    public void onRequestPermissionsResult(int requestCode, String[] permissions,
            int[] grantResults) {
        if (requestCode != RC_HANDLE_CAMERA_PERM) {
            Log.d(TAG, "Got unexpected permission result: " + requestCode);
            super.onRequestPermissionsResult(requestCode, permissions, grantResults);
            return;
        }

        if (grantResults.length != 0 && grantResults[0] == PackageManager.PERMISSION_GRANTED) {
            Log.d(TAG, "Camera permission granted - initialize the camera source");
            // we have permission, so create the camera source
            createCameraSource();
            return;
        }

        Log.e(TAG, "Permission not granted: results len = " + grantResults.length
                + " Result code = " + (grantResults.length > 0 ? grantResults[0] : "(empty)"));

        DialogInterface.OnClickListener listener = new DialogInterface.OnClickListener() {
            public void onClick(DialogInterface dialog, int id) {
                finish();
            }
        };

        AlertDialog.Builder builder = new AlertDialog.Builder(this);
        builder.setTitle("Face Tracker sample")
                .setMessage(R.string.no_camera_permission)
                .setPositiveButton(R.string.ok, listener)
                .show();
    }

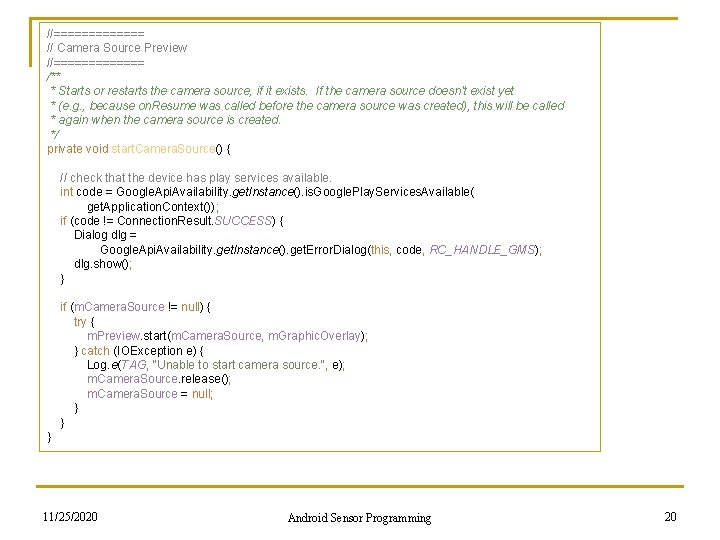

    //=======
    // Camera Source Preview
    //=======

    /**
     * Starts or restarts the camera source, if it exists. If the camera source doesn't exist yet
     * (e.g., because onResume was called before the camera source was created), this will be called
     * again when the camera source is created.
     */
    private void startCameraSource() {
        // check that the device has play services available.
        int code = GoogleApiAvailability.getInstance().isGooglePlayServicesAvailable(
                getApplicationContext());
        if (code != ConnectionResult.SUCCESS) {
            Dialog dlg = GoogleApiAvailability.getInstance().getErrorDialog(this, code, RC_HANDLE_GMS);
            dlg.show();
        }

        if (mCameraSource != null) {
            try {
                mPreview.start(mCameraSource, mGraphicOverlay);
            } catch (IOException e) {
                Log.e(TAG, "Unable to start camera source.", e);
                mCameraSource.release();
                mCameraSource = null;
            }
        }
    }

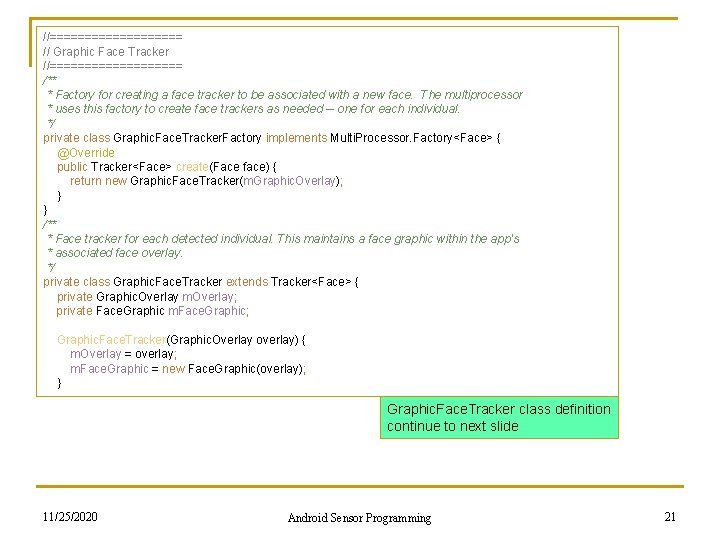

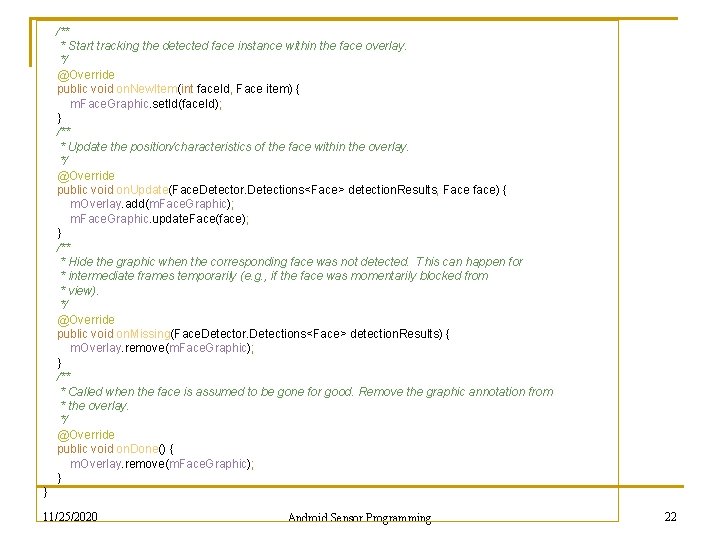

    //==========
    // Graphic Face Tracker
    //==========

    /**
     * Factory for creating a face tracker to be associated with a new face. The multiprocessor
     * uses this factory to create face trackers as needed -- one for each individual.
     */
    private class GraphicFaceTrackerFactory implements MultiProcessor.Factory<Face> {
        @Override
        public Tracker<Face> create(Face face) {
            return new GraphicFaceTracker(mGraphicOverlay);
        }
    }

    /**
     * Face tracker for each detected individual. This maintains a face graphic within the app's
     * associated face overlay.
     */
    private class GraphicFaceTracker extends Tracker<Face> {
        private GraphicOverlay mOverlay;
        private FaceGraphic mFaceGraphic;

        GraphicFaceTracker(GraphicOverlay overlay) {
            mOverlay = overlay;
            mFaceGraphic = new FaceGraphic(overlay);
        }

        // The GraphicFaceTracker class definition continues on the next slide.

        /**
         * Start tracking the detected face instance within the face overlay.
         */
        @Override
        public void onNewItem(int faceId, Face item) {
            mFaceGraphic.setId(faceId);
        }

        /**
         * Update the position/characteristics of the face within the overlay.
         */
        @Override
        public void onUpdate(FaceDetector.Detections<Face> detectionResults, Face face) {
            mOverlay.add(mFaceGraphic);
            mFaceGraphic.updateFace(face);
        }

        /**
         * Hide the graphic when the corresponding face was not detected. This can happen for
         * intermediate frames temporarily (e.g., if the face was momentarily blocked from
         * view).
         */
        @Override
        public void onMissing(FaceDetector.Detections<Face> detectionResults) {
            mOverlay.remove(mFaceGraphic);
        }

        /**
         * Called when the face is assumed to be gone for good. Remove the graphic annotation from
         * the overlay.
         */
        @Override
        public void onDone() {
            mOverlay.remove(mFaceGraphic);
        }
    }

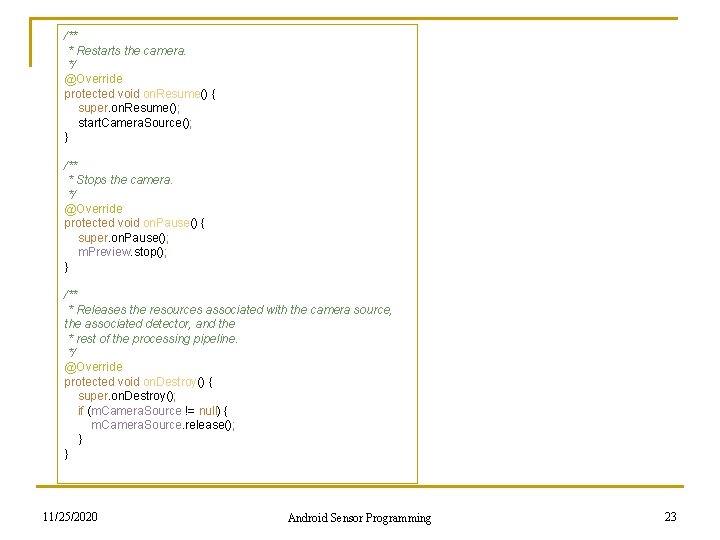

    /**
     * Restarts the camera.
     */
    @Override
    protected void onResume() {
        super.onResume();
        startCameraSource();
    }

    /**
     * Stops the camera.
     */
    @Override
    protected void onPause() {
        super.onPause();
        mPreview.stop();
    }

    /**
     * Releases the resources associated with the camera source, the associated detector, and the
     * rest of the processing pipeline.
     */
    @Override
    protected void onDestroy() {
        super.onDestroy();
        if (mCameraSource != null) {
            mCameraSource.release();
        }
    }

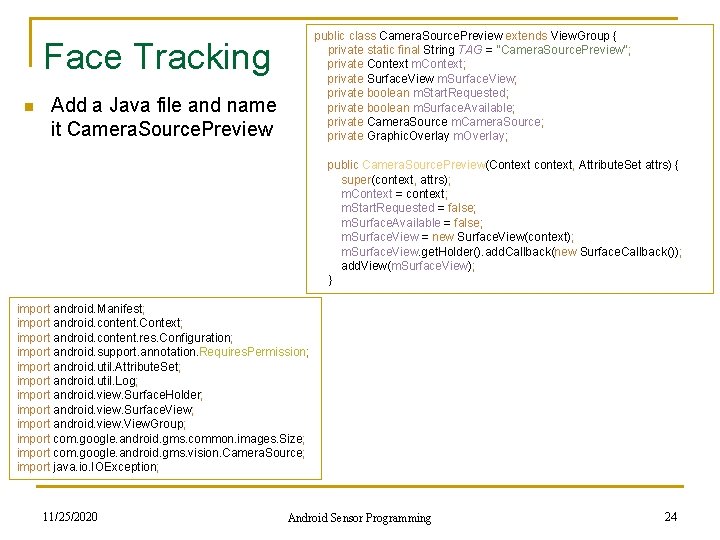

Face Tracking
- Add a Java file and name it CameraSourcePreview

    import android.Manifest;
    import android.content.Context;
    import android.content.res.Configuration;
    import android.support.annotation.RequiresPermission;
    import android.util.AttributeSet;
    import android.util.Log;
    import android.view.SurfaceHolder;
    import android.view.SurfaceView;
    import android.view.ViewGroup;

    import com.google.android.gms.common.images.Size;
    import com.google.android.gms.vision.CameraSource;

    import java.io.IOException;

    public class CameraSourcePreview extends ViewGroup {
        private static final String TAG = "CameraSourcePreview";

        private Context mContext;
        private SurfaceView mSurfaceView;
        private boolean mStartRequested;
        private boolean mSurfaceAvailable;
        private CameraSource mCameraSource;
        private GraphicOverlay mOverlay;

        public CameraSourcePreview(Context context, AttributeSet attrs) {
            super(context, attrs);
            mContext = context;
            mStartRequested = false;
            mSurfaceAvailable = false;

            mSurfaceView = new SurfaceView(context);
            mSurfaceView.getHolder().addCallback(new SurfaceCallback());
            addView(mSurfaceView);
        }

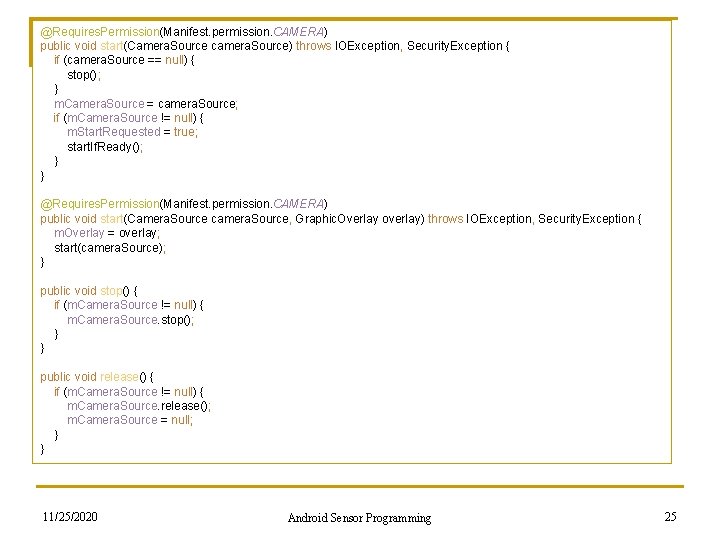

        @RequiresPermission(Manifest.permission.CAMERA)
        public void start(CameraSource cameraSource) throws IOException, SecurityException {
            if (cameraSource == null) {
                stop();
            }

            mCameraSource = cameraSource;

            if (mCameraSource != null) {
                mStartRequested = true;
                startIfReady();
            }
        }

        @RequiresPermission(Manifest.permission.CAMERA)
        public void start(CameraSource cameraSource, GraphicOverlay overlay)
                throws IOException, SecurityException {
            mOverlay = overlay;
            start(cameraSource);
        }

        public void stop() {
            if (mCameraSource != null) {
                mCameraSource.stop();
            }
        }

        public void release() {
            if (mCameraSource != null) {
                mCameraSource.release();
                mCameraSource = null;
            }
        }

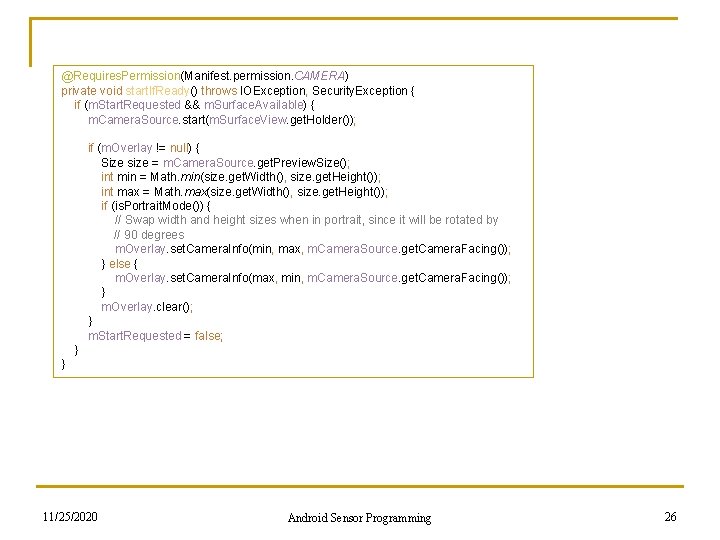

        @RequiresPermission(Manifest.permission.CAMERA)
        private void startIfReady() throws IOException, SecurityException {
            if (mStartRequested && mSurfaceAvailable) {
                mCameraSource.start(mSurfaceView.getHolder());
                if (mOverlay != null) {
                    Size size = mCameraSource.getPreviewSize();
                    int min = Math.min(size.getWidth(), size.getHeight());
                    int max = Math.max(size.getWidth(), size.getHeight());
                    if (isPortraitMode()) {
                        // Swap width and height sizes when in portrait, since it will be rotated by
                        // 90 degrees
                        mOverlay.setCameraInfo(min, max, mCameraSource.getCameraFacing());
                    } else {
                        mOverlay.setCameraInfo(max, min, mCameraSource.getCameraFacing());
                    }
                    mOverlay.clear();
                }
                mStartRequested = false;
            }
        }

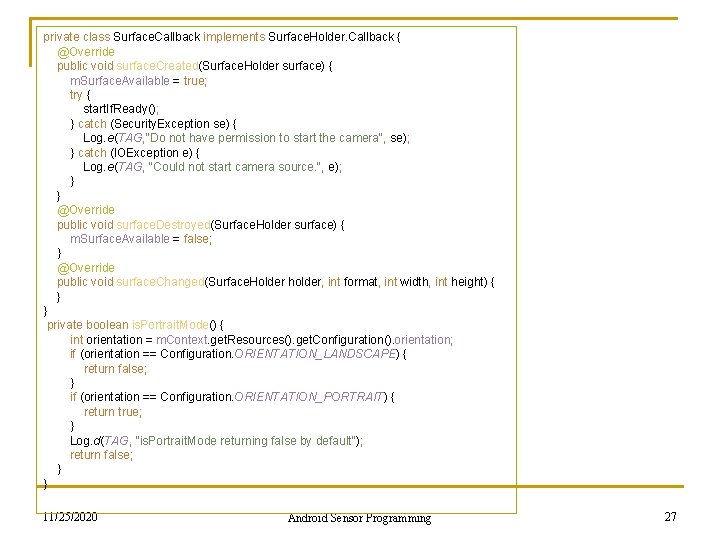

        private class SurfaceCallback implements SurfaceHolder.Callback {
            @Override
            public void surfaceCreated(SurfaceHolder surface) {
                mSurfaceAvailable = true;
                try {
                    startIfReady();
                } catch (SecurityException se) {
                    Log.e(TAG, "Do not have permission to start the camera", se);
                } catch (IOException e) {
                    Log.e(TAG, "Could not start camera source.", e);
                }
            }

            @Override
            public void surfaceDestroyed(SurfaceHolder surface) {
                mSurfaceAvailable = false;
            }

            @Override
            public void surfaceChanged(SurfaceHolder holder, int format, int width, int height) {
            }
        }

        private boolean isPortraitMode() {
            int orientation = mContext.getResources().getConfiguration().orientation;
            if (orientation == Configuration.ORIENTATION_LANDSCAPE) {
                return false;
            }
            if (orientation == Configuration.ORIENTATION_PORTRAIT) {
                return true;
            }

            Log.d(TAG, "isPortraitMode returning false by default");
            return false;
        }
    }

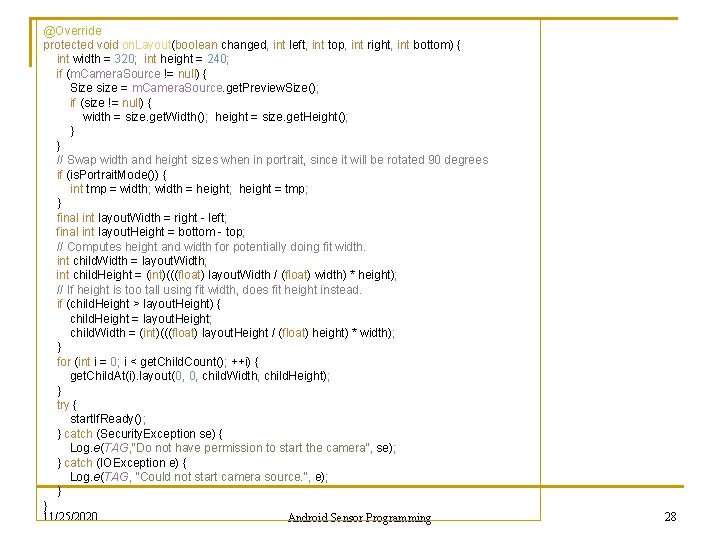

        // onLayout() is also a member of the CameraSourcePreview class.
        @Override
        protected void onLayout(boolean changed, int left, int top, int right, int bottom) {
            int width = 320;
            int height = 240;
            if (mCameraSource != null) {
                Size size = mCameraSource.getPreviewSize();
                if (size != null) {
                    width = size.getWidth();
                    height = size.getHeight();
                }
            }

            // Swap width and height sizes when in portrait, since it will be rotated 90 degrees
            if (isPortraitMode()) {
                int tmp = width;
                width = height;
                height = tmp;
            }

            final int layoutWidth = right - left;
            final int layoutHeight = bottom - top;

            // Computes height and width for potentially doing fit width.
            int childWidth = layoutWidth;
            int childHeight = (int) (((float) layoutWidth / (float) width) * height);

            // If height is too tall using fit width, does fit height instead.
            if (childHeight > layoutHeight) {
                childHeight = layoutHeight;
                childWidth = (int) (((float) layoutHeight / (float) height) * width);
            }

            for (int i = 0; i < getChildCount(); ++i) {
                getChildAt(i).layout(0, 0, childWidth, childHeight);
            }

            try {
                startIfReady();
            } catch (SecurityException se) {
                Log.e(TAG, "Do not have permission to start the camera", se);
            } catch (IOException e) {
                Log.e(TAG, "Could not start camera source.", e);
            }
        }
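The sizing arithmetic in onLayout() is easier to check in isolation. The following is a minimal sketch that lifts the same fit-width and fit-height logic into a plain Java function with no Android dependencies. The class name `PreviewFit` and the method `fit` are hypothetical names for illustration, and the sizes used in `main` are example values rather than values from the slides.

```java
// Hypothetical helper: reproduces the child-size computation from onLayout()
// as a pure function so it can be tried without a device.
class PreviewFit {

    // Returns {childWidth, childHeight} for a preview shown inside a layout.
    static int[] fit(int previewWidth, int previewHeight,
                     int layoutWidth, int layoutHeight, boolean portrait) {
        int width = previewWidth;
        int height = previewHeight;

        // In portrait the preview is rotated by 90 degrees, so swap the sides.
        if (portrait) {
            int tmp = width;
            width = height;
            height = tmp;
        }

        // First try to fill the layout width and keep the aspect ratio.
        int childWidth = layoutWidth;
        int childHeight = (int) (((float) layoutWidth / (float) width) * height);

        // If that is too tall, fill the layout height instead.
        if (childHeight > layoutHeight) {
            childHeight = layoutHeight;
            childWidth = (int) (((float) layoutHeight / (float) height) * width);
        }
        return new int[]{childWidth, childHeight};
    }

    public static void main(String[] args) {
        int[] portrait = fit(640, 480, 1080, 1920, true);
        int[] landscape = fit(640, 480, 1920, 1080, false);
        System.out.println(portrait[0] + " x " + portrait[1]);
        System.out.println(landscape[0] + " x " + landscape[1]);
    }
}
```

With a 640 x 480 preview, a 1080 x 1920 portrait layout takes the fit-width branch, while a 1920 x 1080 landscape layout falls through to the fit-height branch because the fit-width height would exceed the layout.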

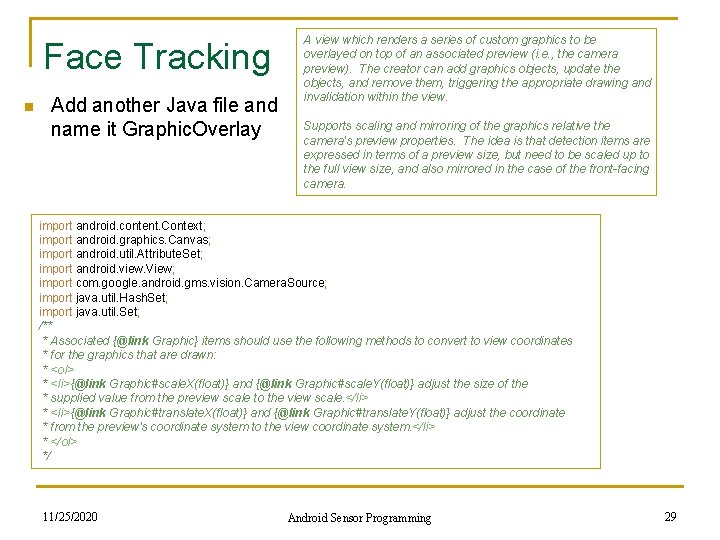

Face Tracking
- Add another Java file and name it GraphicOverlay

A view which renders a series of custom graphics to be overlaid on top of an associated preview (i.e., the camera preview). The creator can add graphics objects, update the objects, and remove them, triggering the appropriate drawing and invalidation within the view.

Supports scaling and mirroring of the graphics relative to the camera's preview properties. The idea is that detection items are expressed in terms of a preview size, but need to be scaled up to the full view size, and also mirrored in the case of the front-facing camera.

    import android.content.Context;
    import android.graphics.Canvas;
    import android.util.AttributeSet;
    import android.view.View;

    import com.google.android.gms.vision.CameraSource;

    import java.util.HashSet;
    import java.util.Set;

    /**
     * Associated {@link Graphic} items should use the following methods to convert to view coordinates
     * for the graphics that are drawn:
     * <ol>
     * <li>{@link Graphic#scaleX(float)} and {@link Graphic#scaleY(float)} adjust the size of the
     * supplied value from the preview scale to the view scale.</li>
     * <li>{@link Graphic#translateX(float)} and {@link Graphic#translateY(float)} adjust the coordinate
     * from the preview's coordinate system to the view coordinate system.</li>
     * </ol>
     */

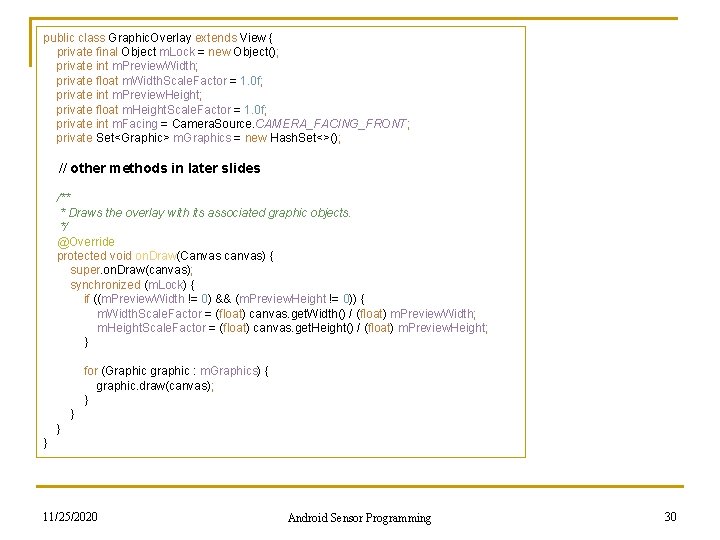

    public class GraphicOverlay extends View {
        private final Object mLock = new Object();
        private int mPreviewWidth;
        private float mWidthScaleFactor = 1.0f;
        private int mPreviewHeight;
        private float mHeightScaleFactor = 1.0f;
        private int mFacing = CameraSource.CAMERA_FACING_FRONT;
        private Set<Graphic> mGraphics = new HashSet<>();

        // other methods in later slides

        /**
         * Draws the overlay with its associated graphic objects.
         */
        @Override
        protected void onDraw(Canvas canvas) {
            super.onDraw(canvas);

            synchronized (mLock) {
                if ((mPreviewWidth != 0) && (mPreviewHeight != 0)) {
                    mWidthScaleFactor = (float) canvas.getWidth() / (float) mPreviewWidth;
                    mHeightScaleFactor = (float) canvas.getHeight() / (float) mPreviewHeight;
                }

                for (Graphic graphic : mGraphics) {
                    graphic.draw(canvas);
                }
            }
        }

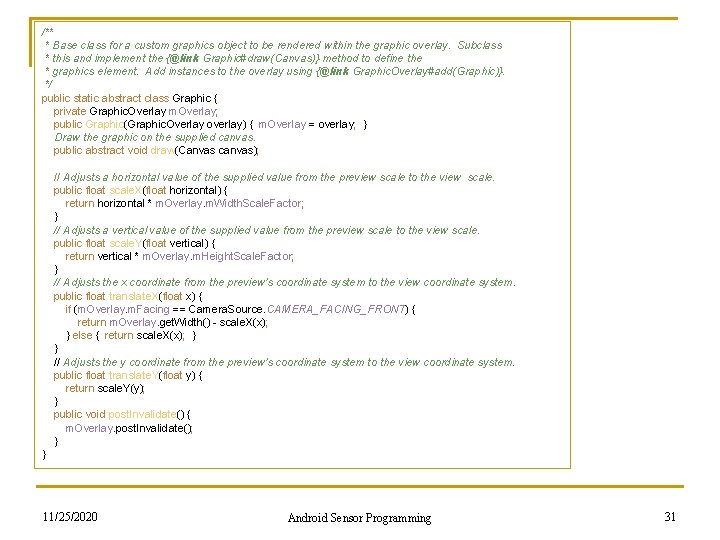

        /**
         * Base class for a custom graphics object to be rendered within the graphic overlay. Subclass
         * this and implement the {@link Graphic#draw(Canvas)} method to define the
         * graphics element. Add instances to the overlay using {@link GraphicOverlay#add(Graphic)}.
         */
        public static abstract class Graphic {
            private GraphicOverlay mOverlay;

            public Graphic(GraphicOverlay overlay) {
                mOverlay = overlay;
            }

            // Draws the graphic on the supplied canvas.
            public abstract void draw(Canvas canvas);

            // Adjusts a horizontal value of the supplied value from the preview scale to the view scale.
            public float scaleX(float horizontal) {
                return horizontal * mOverlay.mWidthScaleFactor;
            }

            // Adjusts a vertical value of the supplied value from the preview scale to the view scale.
            public float scaleY(float vertical) {
                return vertical * mOverlay.mHeightScaleFactor;
            }

            // Adjusts the x coordinate from the preview's coordinate system to the view coordinate system.
            public float translateX(float x) {
                if (mOverlay.mFacing == CameraSource.CAMERA_FACING_FRONT) {
                    return mOverlay.getWidth() - scaleX(x);
                } else {
                    return scaleX(x);
                }
            }

            // Adjusts the y coordinate from the preview's coordinate system to the view coordinate system.
            public float translateY(float y) {
                return scaleY(y);
            }

            public void postInvalidate() {
                mOverlay.postInvalidate();
            }
        }

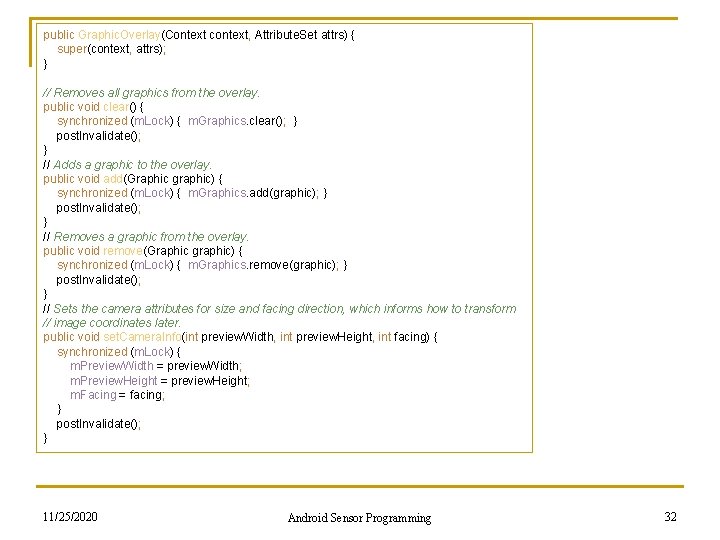

        public GraphicOverlay(Context context, AttributeSet attrs) {
            super(context, attrs);
        }

        // Removes all graphics from the overlay.
        public void clear() {
            synchronized (mLock) {
                mGraphics.clear();
            }
            postInvalidate();
        }

        // Adds a graphic to the overlay.
        public void add(Graphic graphic) {
            synchronized (mLock) {
                mGraphics.add(graphic);
            }
            postInvalidate();
        }

        // Removes a graphic from the overlay.
        public void remove(Graphic graphic) {
            synchronized (mLock) {
                mGraphics.remove(graphic);
            }
            postInvalidate();
        }

        // Sets the camera attributes for size and facing direction, which informs how to transform
        // image coordinates later.
        public void setCameraInfo(int previewWidth, int previewHeight, int facing) {
            synchronized (mLock) {
                mPreviewWidth = previewWidth;
                mPreviewHeight = previewHeight;
                mFacing = facing;
            }
            postInvalidate();
        }
    }   // end of the GraphicOverlay class
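The scaling and mirroring that GraphicOverlay performs can be illustrated with plain numbers. The following is a minimal sketch in plain Java with no Android dependencies; the class `OverlayTransform` and its method signatures are hypothetical names for illustration, and the preview and view widths in `main` are example values.

```java
// Hypothetical helper: the preview-to-view coordinate mapping used by the
// overlay, written as pure functions.
class OverlayTransform {

    // Scale factor from preview pixels to view pixels along one axis.
    static float scaleFactor(int viewSize, int previewSize) {
        return (float) viewSize / (float) previewSize;
    }

    // Maps a preview x coordinate to a view x coordinate. For the front-facing
    // camera the image is mirrored, so x is measured from the right edge.
    static float translateX(float x, float widthScale, float viewWidth, boolean frontFacing) {
        float scaled = x * widthScale;
        return frontFacing ? viewWidth - scaled : scaled;
    }

    public static void main(String[] args) {
        float scale = scaleFactor(960, 480);                      // view is twice as wide
        System.out.println(translateX(100f, scale, 960f, false)); // back camera
        System.out.println(translateX(100f, scale, 960f, true));  // front camera, mirrored
    }
}
```

A point 100 pixels from the left of a 480-pixel-wide preview lands 200 pixels from the left of a 960-pixel-wide view for the back camera, and 200 pixels from the right (x = 760) for the front camera.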

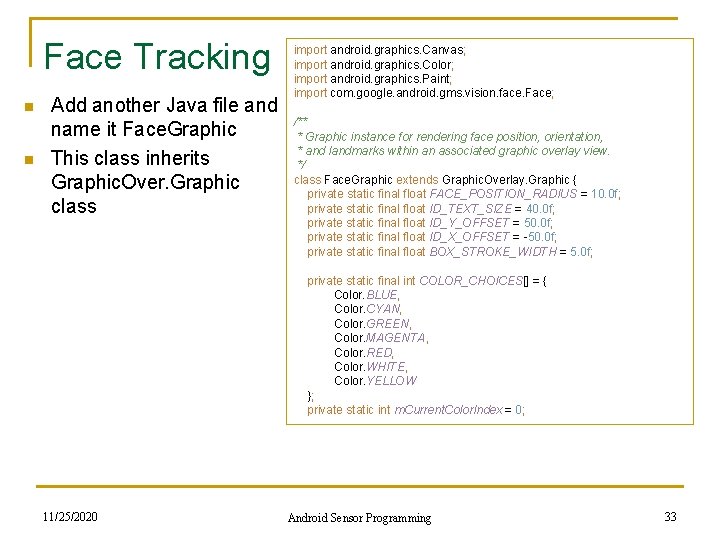

Face Tracking
- Add another Java file and name it FaceGraphic
- This class extends the GraphicOverlay.Graphic class

    import android.graphics.Canvas;
    import android.graphics.Color;
    import android.graphics.Paint;

    import com.google.android.gms.vision.face.Face;

    /**
     * Graphic instance for rendering face position, orientation,
     * and landmarks within an associated graphic overlay view.
     */
    class FaceGraphic extends GraphicOverlay.Graphic {
        private static final float FACE_POSITION_RADIUS = 10.0f;
        private static final float ID_TEXT_SIZE = 40.0f;
        private static final float ID_Y_OFFSET = 50.0f;
        private static final float ID_X_OFFSET = -50.0f;
        private static final float BOX_STROKE_WIDTH = 5.0f;

        private static final int COLOR_CHOICES[] = {
            Color.BLUE, Color.CYAN, Color.GREEN, Color.MAGENTA,
            Color.RED, Color.WHITE, Color.YELLOW
        };
        private static int mCurrentColorIndex = 0;

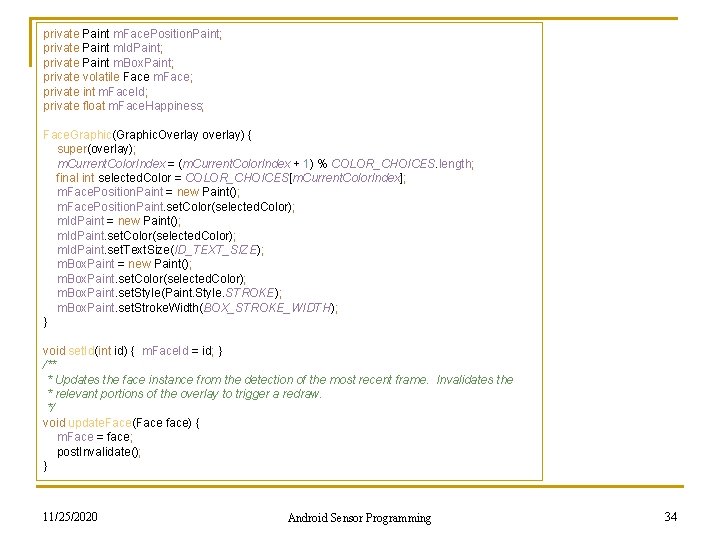

        private Paint mFacePositionPaint;
        private Paint mIdPaint;
        private Paint mBoxPaint;

        private volatile Face mFace;
        private int mFaceId;
        private float mFaceHappiness;

        FaceGraphic(GraphicOverlay overlay) {
            super(overlay);

            mCurrentColorIndex = (mCurrentColorIndex + 1) % COLOR_CHOICES.length;
            final int selectedColor = COLOR_CHOICES[mCurrentColorIndex];

            mFacePositionPaint = new Paint();
            mFacePositionPaint.setColor(selectedColor);

            mIdPaint = new Paint();
            mIdPaint.setColor(selectedColor);
            mIdPaint.setTextSize(ID_TEXT_SIZE);

            mBoxPaint = new Paint();
            mBoxPaint.setColor(selectedColor);
            mBoxPaint.setStyle(Paint.Style.STROKE);
            mBoxPaint.setStrokeWidth(BOX_STROKE_WIDTH);
        }

        void setId(int id) {
            mFaceId = id;
        }

        /**
         * Updates the face instance from the detection of the most recent frame. Invalidates the
         * relevant portions of the overlay to trigger a redraw.
         */
        void updateFace(Face face) {
            mFace = face;
            postInvalidate();
        }

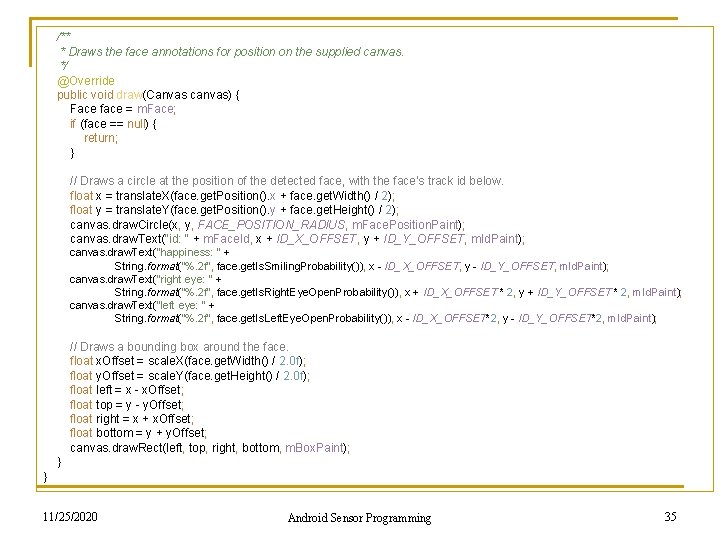

        /**
         * Draws the face annotations for position on the supplied canvas.
         */
        @Override
        public void draw(Canvas canvas) {
            Face face = mFace;
            if (face == null) {
                return;
            }

            // Draws a circle at the position of the detected face, with the face's track id below.
            float x = translateX(face.getPosition().x + face.getWidth() / 2);
            float y = translateY(face.getPosition().y + face.getHeight() / 2);
            canvas.drawCircle(x, y, FACE_POSITION_RADIUS, mFacePositionPaint);
            canvas.drawText("id: " + mFaceId, x + ID_X_OFFSET, y + ID_Y_OFFSET, mIdPaint);
            canvas.drawText("happiness: " + String.format("%.2f", face.getIsSmilingProbability()),
                    x - ID_X_OFFSET, y - ID_Y_OFFSET, mIdPaint);
            canvas.drawText("right eye: " + String.format("%.2f", face.getIsRightEyeOpenProbability()),
                    x + ID_X_OFFSET * 2, y + ID_Y_OFFSET * 2, mIdPaint);
            canvas.drawText("left eye: " + String.format("%.2f", face.getIsLeftEyeOpenProbability()),
                    x - ID_X_OFFSET * 2, y - ID_Y_OFFSET * 2, mIdPaint);

            // Draws a bounding box around the face.
            float xOffset = scaleX(face.getWidth() / 2.0f);
            float yOffset = scaleY(face.getHeight() / 2.0f);
            float left = x - xOffset;
            float top = y - yOffset;
            float right = x + xOffset;
            float bottom = y + yOffset;
            canvas.drawRect(left, top, right, bottom, mBoxPaint);
        }
    }

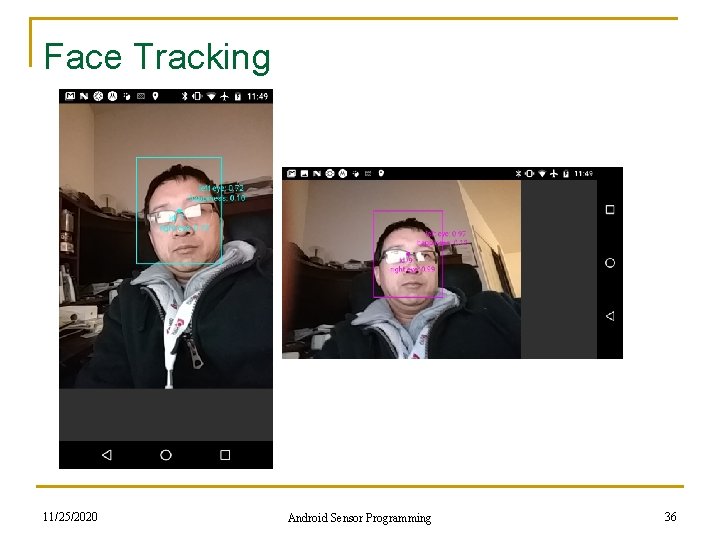

Face Tracking

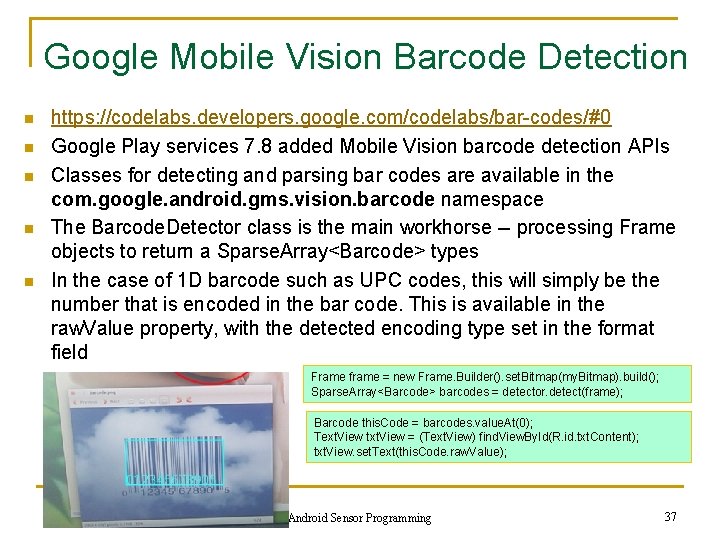

Google Mobile Vision Barcode Detection
- https://codelabs.developers.google.com/codelabs/bar-codes/#0
- Google Play services 7.8 added the Mobile Vision barcode detection APIs
- Classes for detecting and parsing barcodes are available in the com.google.android.gms.vision.barcode namespace
- The BarcodeDetector class is the main workhorse: it processes Frame objects and returns a SparseArray<Barcode>
- For a 1D barcode such as a UPC code, the result is simply the number encoded in the barcode. It is available in the rawValue field, with the detected encoding type set in the format field

    Frame frame = new Frame.Builder().setBitmap(myBitmap).build();
    SparseArray<Barcode> barcodes = detector.detect(frame);
    Barcode thisCode = barcodes.valueAt(0);
    TextView txtView = (TextView) findViewById(R.id.txtContent);
    txtView.setText(thisCode.rawValue);

Google Mobile Vision Barcode Detection

- For 2D barcodes that contain structured data, such as QR codes, the valueFormat field is set to the detected value type, and the corresponding data field is set
- For example, if the URL type is detected, the constant URL will be loaded into valueFormat, and the url field (a Barcode.UrlBookmark) will contain the URL value
- Beyond URLs, there are many other data types that a QR code can carry
- Barcode detection works in all orientations, and code parsing is done locally on the device

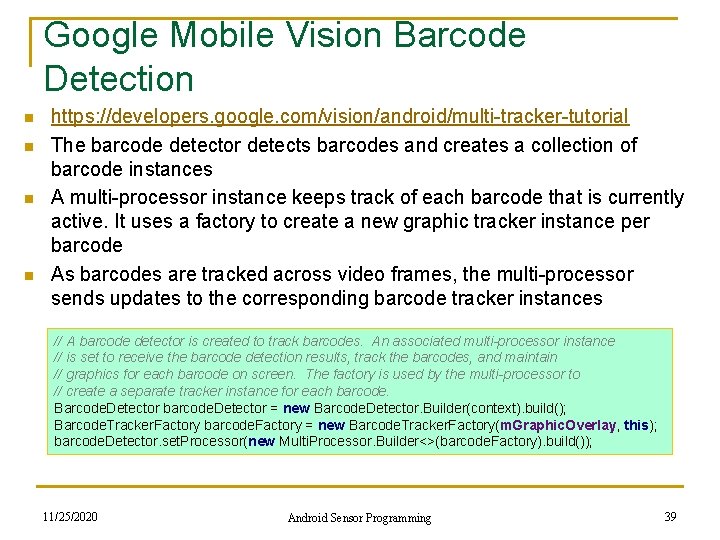

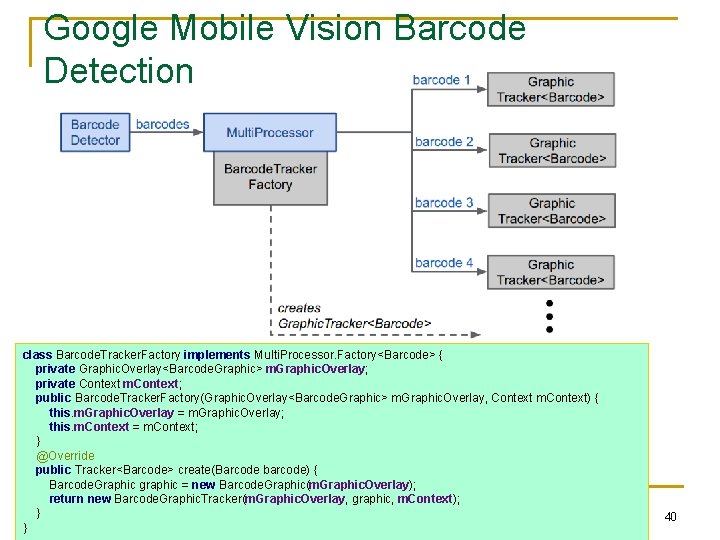

Google Mobile Vision Barcode Detection

- https://developers.google.com/vision/android/multi-tracker-tutorial
- The barcode detector detects barcodes and creates a collection of barcode instances
- A multi-processor instance keeps track of each barcode that is currently active. It uses a factory to create a new graphic tracker instance per barcode

As barcodes are tracked across video frames, the multi-processor sends updates to the corresponding barcode tracker instances:

    // A barcode detector is created to track barcodes. An associated multi-processor instance
    // is set to receive the barcode detection results, track the barcodes, and maintain
    // graphics for each barcode on screen. The factory is used by the multi-processor to
    // create a separate tracker instance for each barcode.
    BarcodeDetector barcodeDetector = new BarcodeDetector.Builder(context).build();
    BarcodeTrackerFactory barcodeFactory = new BarcodeTrackerFactory(mGraphicOverlay, this);
    barcodeDetector.setProcessor(new MultiProcessor.Builder<>(barcodeFactory).build());

Google Mobile Vision Barcode Detection

    class BarcodeTrackerFactory implements MultiProcessor.Factory<Barcode> {
        private GraphicOverlay<BarcodeGraphic> mGraphicOverlay;
        private Context mContext;

        public BarcodeTrackerFactory(GraphicOverlay<BarcodeGraphic> mGraphicOverlay,
                                     Context mContext) {
            this.mGraphicOverlay = mGraphicOverlay;
            this.mContext = mContext;
        }

        @Override
        public Tracker<Barcode> create(Barcode barcode) {
            BarcodeGraphic graphic = new BarcodeGraphic(mGraphicOverlay);
            return new BarcodeGraphicTracker(mGraphicOverlay, graphic, mContext);
        }
    }
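To make the division of labor between the multi-processor, the factory, and the per-barcode trackers more concrete, here is a simplified, framework-free model of the pattern. It is only a sketch under stated assumptions: the names `ItemTracker`, `TrackerFactory`, and `SimpleMultiProcessor` are hypothetical, the model is not the Google Play services implementation, and it finishes a tracker as soon as its item is absent from one frame, whereas the real API first reports missing frames before giving up.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;

/** Simplified, hypothetical model of the factory/tracker pattern. */
final class TrackerPatternSketch {

    /** Callbacks for one tracked item, loosely modeled on a tracker. */
    interface ItemTracker<T> {
        void onNewItem(int id, T item);
        void onUpdate(T item);
        void onDone();
    }

    /** Creates one tracker per newly seen item, loosely modeled on the factory. */
    interface TrackerFactory<T> {
        ItemTracker<T> create(T item);
    }

    /** Routes per-frame detections (keyed by item id) to per-item trackers. */
    static final class SimpleMultiProcessor<T> {
        private final TrackerFactory<T> factory;
        private final Map<Integer, ItemTracker<T>> active = new HashMap<>();

        SimpleMultiProcessor(TrackerFactory<T> factory) {
            this.factory = factory;
        }

        void receiveFrame(Map<Integer, T> detections) {
            // New ids get a fresh tracker; every detected id gets an update.
            for (Map.Entry<Integer, T> entry : detections.entrySet()) {
                ItemTracker<T> tracker = active.get(entry.getKey());
                if (tracker == null) {
                    tracker = factory.create(entry.getValue());
                    active.put(entry.getKey(), tracker);
                    tracker.onNewItem(entry.getKey(), entry.getValue());
                }
                tracker.onUpdate(entry.getValue());
            }
            // Ids absent from this frame are finished (simplification, see lead-in).
            for (Integer id : new HashSet<>(active.keySet())) {
                if (!detections.containsKey(id)) {
                    active.remove(id).onDone();
                }
            }
        }
    }

    /** Feeds two frames through the processor and returns the callback log. */
    static List<String> demo() {
        final List<String> log = new ArrayList<>();
        SimpleMultiProcessor<String> processor =
                new SimpleMultiProcessor<String>(item -> new ItemTracker<String>() {
                    public void onNewItem(int id, String value) { log.add("new " + id + " " + value); }
                    public void onUpdate(String value) { log.add("update " + value); }
                    public void onDone() { log.add("done " + item); }
                });
        Map<Integer, String> firstFrame = new HashMap<>();
        firstFrame.put(1, "012345678905");
        processor.receiveFrame(firstFrame);       // barcode appears
        processor.receiveFrame(new HashMap<>());  // barcode is gone
        return log;
    }

    public static void main(String[] args) {
        demo().forEach(System.out::println);
    }
}
```

The point of the factory is that the processor never needs to know what a tracker does with its item; in the app, each tracker owns one on-screen graphic.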

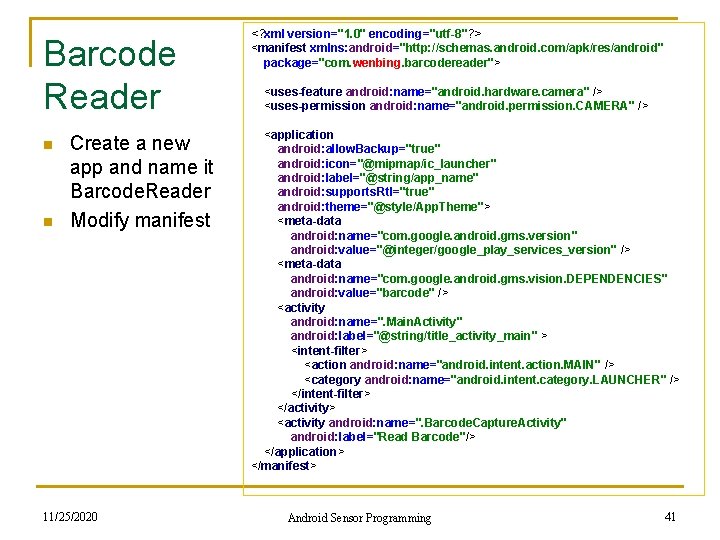

Barcode Reader

- Create a new app and name it BarcodeReader

Modify the manifest:

    <?xml version="1.0" encoding="utf-8"?>
    <manifest xmlns:android="http://schemas.android.com/apk/res/android"
        package="com.wenbing.barcodereader">

        <uses-feature android:name="android.hardware.camera" />
        <uses-permission android:name="android.permission.CAMERA" />

        <application
            android:allowBackup="true"
            android:icon="@mipmap/ic_launcher"
            android:label="@string/app_name"
            android:supportsRtl="true"
            android:theme="@style/AppTheme">
            <meta-data
                android:name="com.google.android.gms.version"
                android:value="@integer/google_play_services_version" />
            <meta-data
                android:name="com.google.android.gms.vision.DEPENDENCIES"
                android:value="barcode" />
            <activity
                android:name=".MainActivity"
                android:label="@string/title_activity_main">
                <intent-filter>
                    <action android:name="android.intent.action.MAIN" />
                    <category android:name="android.intent.category.LAUNCHER" />
                </intent-filter>
            </activity>
            <activity
                android:name=".BarcodeCaptureActivity"
                android:label="Read Barcode" />
        </application>
    </manifest>

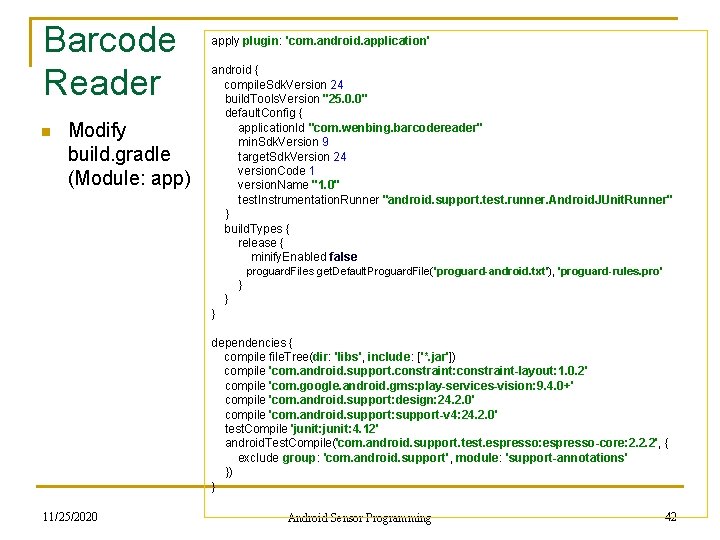

Barcode Reader

Modify build.gradle (Module: app):

    apply plugin: 'com.android.application'

    android {
        compileSdkVersion 24
        buildToolsVersion "25.0.0"
        defaultConfig {
            applicationId "com.wenbing.barcodereader"
            minSdkVersion 9
            targetSdkVersion 24
            versionCode 1
            versionName "1.0"
            testInstrumentationRunner "android.support.test.runner.AndroidJUnitRunner"
        }
        buildTypes {
            release {
                minifyEnabled false
                proguardFiles getDefaultProguardFile('proguard-android.txt'), 'proguard-rules.pro'
            }
        }
    }

    dependencies {
        compile fileTree(dir: 'libs', include: ['*.jar'])
        compile 'com.android.support.constraint:constraint-layout:1.0.2'
        compile 'com.google.android.gms:play-services-vision:9.4.0+'
        compile 'com.android.support:design:24.2.0'
        compile 'com.android.support:support-v4:24.2.0'
        testCompile 'junit:junit:4.12'
        androidTestCompile('com.android.support.test.espresso:espresso-core:2.2.2', {
            exclude group: 'com.android.support', module: 'support-annotations'
        })
    }

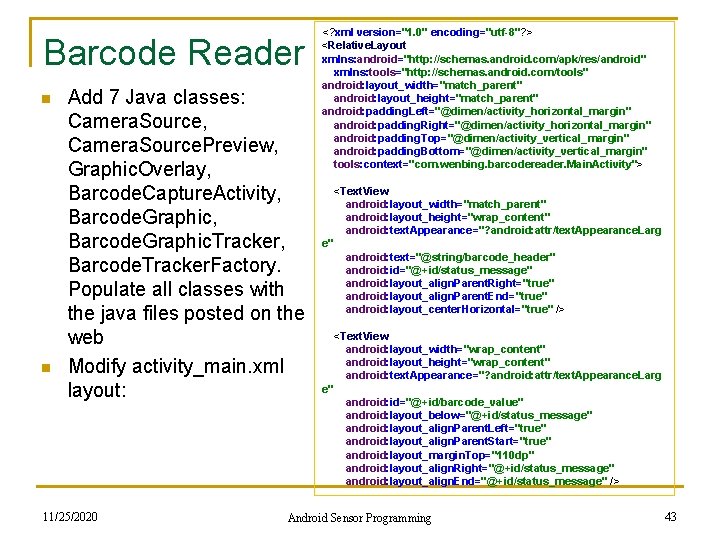

Barcode Reader

- Add 7 Java classes: CameraSource, CameraSourcePreview, GraphicOverlay, BarcodeCaptureActivity, BarcodeGraphic, BarcodeGraphicTracker, BarcodeTrackerFactory. Populate all classes with the Java files posted on the web

Modify the activity_main.xml layout:

    <?xml version="1.0" encoding="utf-8"?>
    <RelativeLayout xmlns:android="http://schemas.android.com/apk/res/android"
        xmlns:tools="http://schemas.android.com/tools"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:paddingLeft="@dimen/activity_horizontal_margin"
        android:paddingRight="@dimen/activity_horizontal_margin"
        android:paddingTop="@dimen/activity_vertical_margin"
        android:paddingBottom="@dimen/activity_vertical_margin"
        tools:context="com.wenbing.barcodereader.MainActivity">

        <TextView
            android:layout_width="match_parent"
            android:layout_height="wrap_content"
            android:textAppearance="?android:attr/textAppearanceLarge"
            android:text="@string/barcode_header"
            android:id="@+id/status_message"
            android:layout_alignParentRight="true"
            android:layout_alignParentEnd="true"
            android:layout_centerHorizontal="true" />

        <TextView
            android:layout_width="wrap_content"
            android:layout_height="wrap_content"
            android:textAppearance="?android:attr/textAppearanceLarge"
            android:id="@+id/barcode_value"
            android:layout_below="@+id/status_message"
            android:layout_alignParentLeft="true"
            android:layout_alignParentStart="true"
            android:layout_marginTop="110dp"
            android:layout_alignRight="@+id/status_message"
            android:layout_alignEnd="@+id/status_message" />

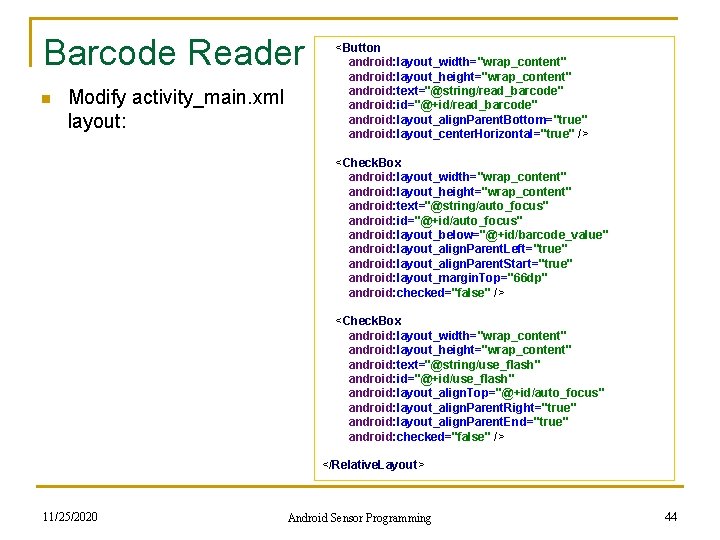

Barcode Reader

Modify the activity_main.xml layout (continued):

        <Button
            android:layout_width="wrap_content"
            android:layout_height="wrap_content"
            android:text="@string/read_barcode"
            android:id="@+id/read_barcode"
            android:layout_alignParentBottom="true"
            android:layout_centerHorizontal="true" />

        <CheckBox
            android:layout_width="wrap_content"
            android:layout_height="wrap_content"
            android:text="@string/auto_focus"
            android:id="@+id/auto_focus"
            android:layout_below="@+id/barcode_value"
            android:layout_alignParentLeft="true"
            android:layout_alignParentStart="true"
            android:layout_marginTop="66dp"
            android:checked="false" />

        <CheckBox
            android:layout_width="wrap_content"
            android:layout_height="wrap_content"
            android:text="@string/use_flash"
            android:id="@+id/use_flash"
            android:layout_alignTop="@+id/auto_focus"
            android:layout_alignParentRight="true"
            android:layout_alignParentEnd="true"
            android:checked="false" />
    </RelativeLayout>

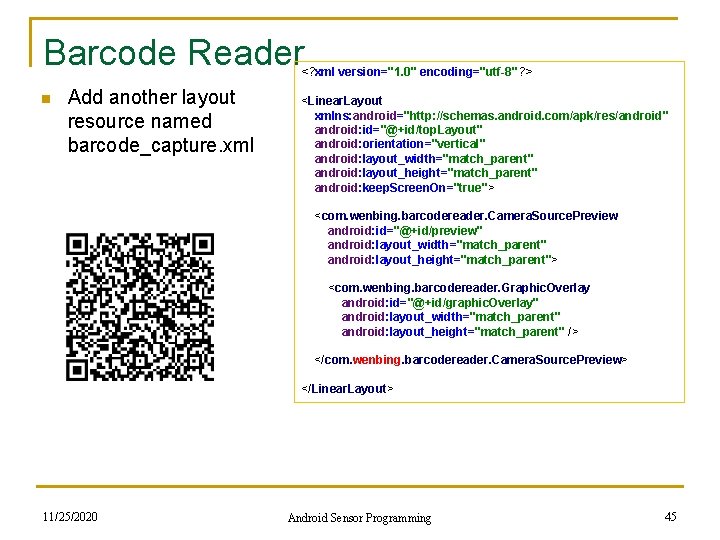

Barcode Reader

Add another layout resource named barcode_capture.xml:

    <?xml version="1.0" encoding="utf-8"?>
    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        android:id="@+id/topLayout"
        android:orientation="vertical"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:keepScreenOn="true">

        <com.wenbing.barcodereader.CameraSourcePreview
            android:id="@+id/preview"
            android:layout_width="match_parent"
            android:layout_height="match_parent">

            <com.wenbing.barcodereader.GraphicOverlay
                android:id="@+id/graphicOverlay"
                android:layout_width="match_parent"
                android:layout_height="match_parent" />
        </com.wenbing.barcodereader.CameraSourcePreview>
    </LinearLayout>

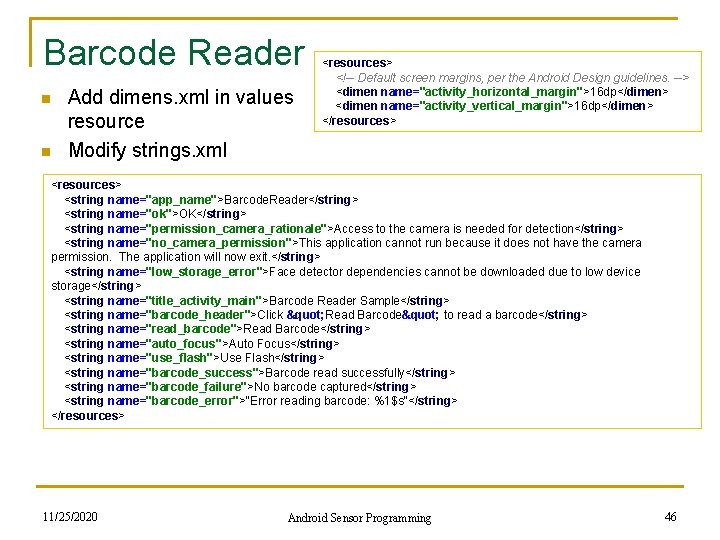

Barcode Reader

Add dimens.xml in the values resource folder:

    <resources>
        <!-- Default screen margins, per the Android Design guidelines. -->
        <dimen name="activity_horizontal_margin">16dp</dimen>
        <dimen name="activity_vertical_margin">16dp</dimen>
    </resources>

Modify strings.xml:

    <resources>
        <string name="app_name">BarcodeReader</string>
        <string name="ok">OK</string>
        <string name="permission_camera_rationale">Access to the camera is needed for detection</string>
        <string name="no_camera_permission">This application cannot run because it does not have the camera permission. The application will now exit.</string>
        <string name="low_storage_error">Face detector dependencies cannot be downloaded due to low device storage</string>
        <string name="title_activity_main">Barcode Reader Sample</string>
        <string name="barcode_header">Click "Read Barcode" to read a barcode</string>
        <string name="read_barcode">Read Barcode</string>
        <string name="auto_focus">Auto Focus</string>
        <string name="use_flash">Use Flash</string>
        <string name="barcode_success">Barcode read successfully</string>
        <string name="barcode_failure">No barcode captured</string>
        <string name="barcode_error">"Error reading barcode: %1$s"</string>
    </resources>

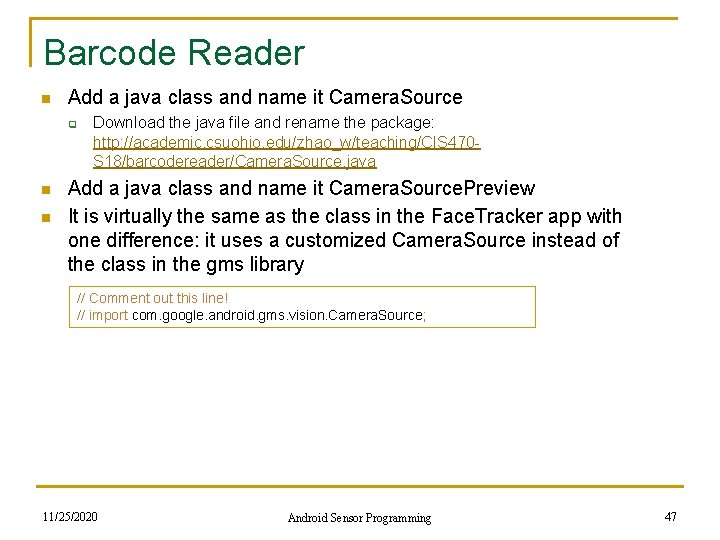

Barcode Reader

- Add a Java class and name it CameraSource
  - Download the Java file and rename the package: http://academic.csuohio.edu/zhao_w/teaching/CIS470S18/barcodereader/CameraSource.java
- Add a Java class and name it CameraSourcePreview

CameraSourcePreview is virtually the same as the class in the FaceTracker app with one difference: it uses the customized CameraSource instead of the class in the gms library:

    // Comment out this line!
    // import com.google.android.gms.vision.CameraSource;

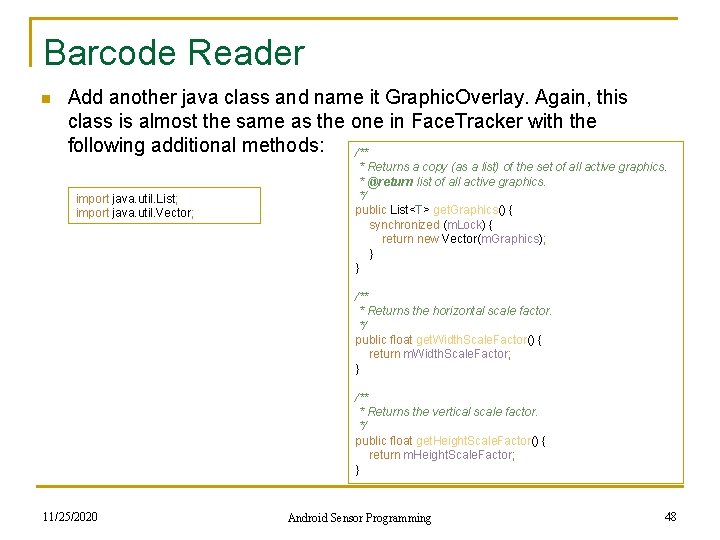

Barcode Reader

Add another Java class and name it GraphicOverlay. Again, this class is almost the same as the one in FaceTracker, with the following additional imports and methods:

    import java.util.List;
    import java.util.Vector;

    /**
     * Returns a copy (as a list) of the set of all active graphics.
     * @return list of all active graphics.
     */
    public List<T> getGraphics() {
        synchronized (mLock) {
            return new Vector<>(mGraphics);
        }
    }

    /**
     * Returns the horizontal scale factor.
     */
    public float getWidthScaleFactor() {
        return mWidthScaleFactor;
    }

    /**
     * Returns the vertical scale factor.
     */
    public float getHeightScaleFactor() {
        return mHeightScaleFactor;
    }
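The graphics accessor returns a copy made while holding the lock, so a caller can iterate the result while the camera thread keeps adding and removing graphics. The sketch below shows that copy-under-lock idea in isolation. `GraphicRegistry` is a hypothetical class written for this illustration, and it uses an ArrayList for the snapshot where the slide code uses a Vector.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical container showing the copy-under-lock idea. */
final class GraphicRegistry<T> {
    private final Object lock = new Object();
    private final Set<T> graphics = new HashSet<>();

    void add(T graphic) {
        synchronized (lock) {
            graphics.add(graphic);
        }
    }

    void remove(T graphic) {
        synchronized (lock) {
            graphics.remove(graphic);
        }
    }

    /** Returns an independent snapshot; later add/remove calls do not affect it. */
    List<T> snapshot() {
        synchronized (lock) {
            return new ArrayList<>(graphics);
        }
    }

    public static void main(String[] args) {
        GraphicRegistry<String> registry = new GraphicRegistry<>();
        registry.add("a");
        registry.add("b");
        // Safe: the loop iterates the copy while the registry itself is modified.
        for (String graphic : registry.snapshot()) {
            registry.remove(graphic);
        }
        System.out.println(registry.snapshot().size());
    }
}
```

Iterating the live set while another thread modifies it could throw a ConcurrentModificationException; the snapshot trades a small allocation for that safety.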

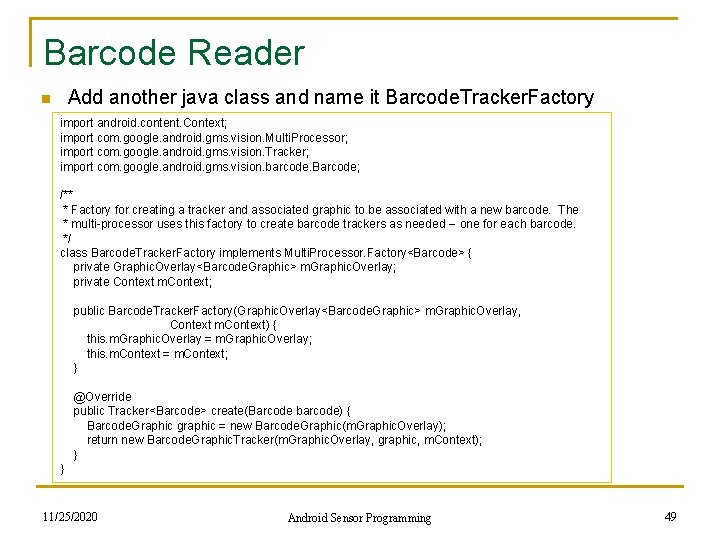

Barcode Reader

Add another Java class and name it BarcodeTrackerFactory:

    import android.content.Context;

    import com.google.android.gms.vision.MultiProcessor;
    import com.google.android.gms.vision.Tracker;
    import com.google.android.gms.vision.barcode.Barcode;

    /**
     * Factory for creating a tracker and associated graphic to be associated with a new barcode. The
     * multi-processor uses this factory to create barcode trackers as needed -- one for each barcode.
     */
    class BarcodeTrackerFactory implements MultiProcessor.Factory<Barcode> {
        private GraphicOverlay<BarcodeGraphic> mGraphicOverlay;
        private Context mContext;

        public BarcodeTrackerFactory(GraphicOverlay<BarcodeGraphic> mGraphicOverlay,
                                     Context mContext) {
            this.mGraphicOverlay = mGraphicOverlay;
            this.mContext = mContext;
        }

        @Override
        public Tracker<Barcode> create(Barcode barcode) {
            BarcodeGraphic graphic = new BarcodeGraphic(mGraphicOverlay);
            return new BarcodeGraphicTracker(mGraphicOverlay, graphic, mContext);
        }
    }

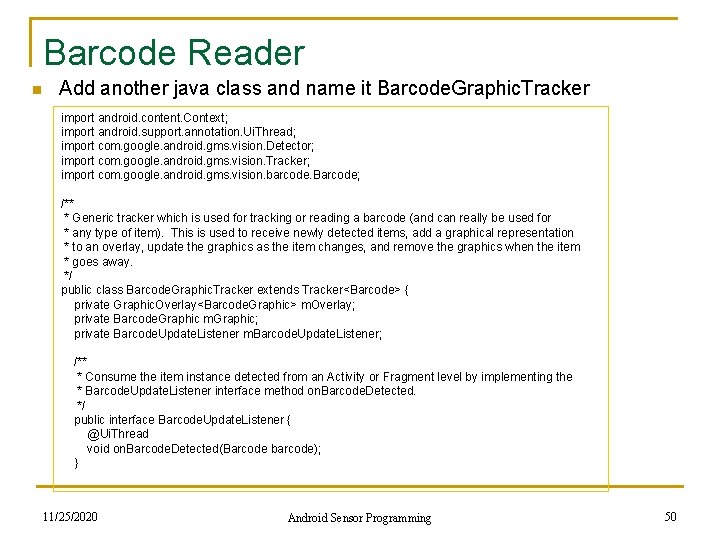

Barcode Reader

Add another Java class and name it BarcodeGraphicTracker:

    import android.content.Context;
    import android.support.annotation.UiThread;

    import com.google.android.gms.vision.Detector;
    import com.google.android.gms.vision.Tracker;
    import com.google.android.gms.vision.barcode.Barcode;

    /**
     * Generic tracker which is used for tracking or reading a barcode (and can really be used for
     * any type of item). This is used to receive newly detected items, add a graphical representation
     * to an overlay, update the graphics as the item changes, and remove the graphics when the item
     * goes away.
     */
    public class BarcodeGraphicTracker extends Tracker<Barcode> {
        private GraphicOverlay<BarcodeGraphic> mOverlay;
        private BarcodeGraphic mGraphic;
        private BarcodeUpdateListener mBarcodeUpdateListener;

        /**
         * Consume the item instance detected from an Activity or Fragment level by implementing the
         * BarcodeUpdateListener interface method onBarcodeDetected.
         */
        public interface BarcodeUpdateListener {
            @UiThread
            void onBarcodeDetected(Barcode barcode);
        }

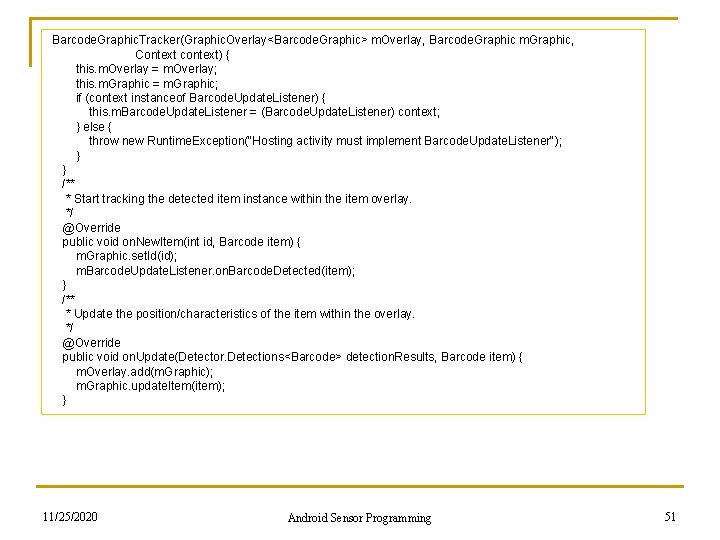

        BarcodeGraphicTracker(GraphicOverlay<BarcodeGraphic> mOverlay, BarcodeGraphic mGraphic,
                              Context context) {
            this.mOverlay = mOverlay;
            this.mGraphic = mGraphic;
            if (context instanceof BarcodeUpdateListener) {
                this.mBarcodeUpdateListener = (BarcodeUpdateListener) context;
            } else {
                throw new RuntimeException("Hosting activity must implement BarcodeUpdateListener");
            }
        }

        /**
         * Start tracking the detected item instance within the item overlay.
         */
        @Override
        public void onNewItem(int id, Barcode item) {
            mGraphic.setId(id);
            mBarcodeUpdateListener.onBarcodeDetected(item);
        }

        /**
         * Update the position/characteristics of the item within the overlay.
         */
        @Override
        public void onUpdate(Detector.Detections<Barcode> detectionResults, Barcode item) {
            mOverlay.add(mGraphic);
            mGraphic.updateItem(item);
        }

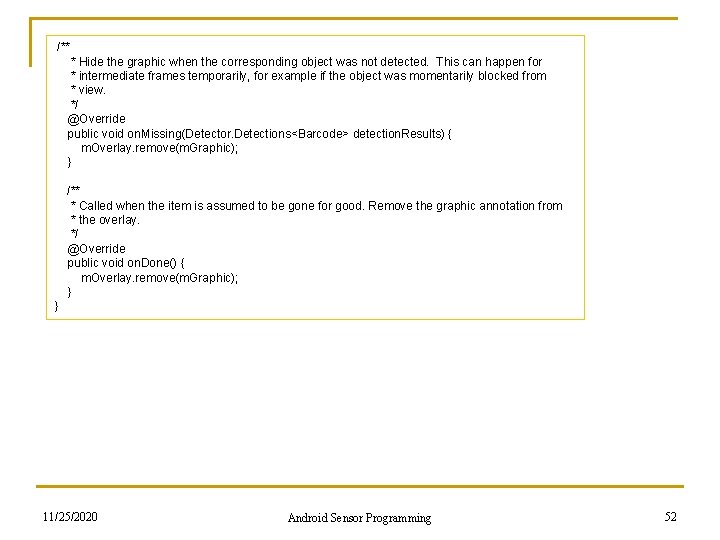

        /**
         * Hide the graphic when the corresponding object was not detected. This can happen for
         * intermediate frames temporarily, for example if the object was momentarily blocked from
         * view.
         */
        @Override
        public void onMissing(Detector.Detections<Barcode> detectionResults) {
            mOverlay.remove(mGraphic);
        }

        /**
         * Called when the item is assumed to be gone for good. Remove the graphic annotation from
         * the overlay.
         */
        @Override
        public void onDone() {
            mOverlay.remove(mGraphic);
        }
    }

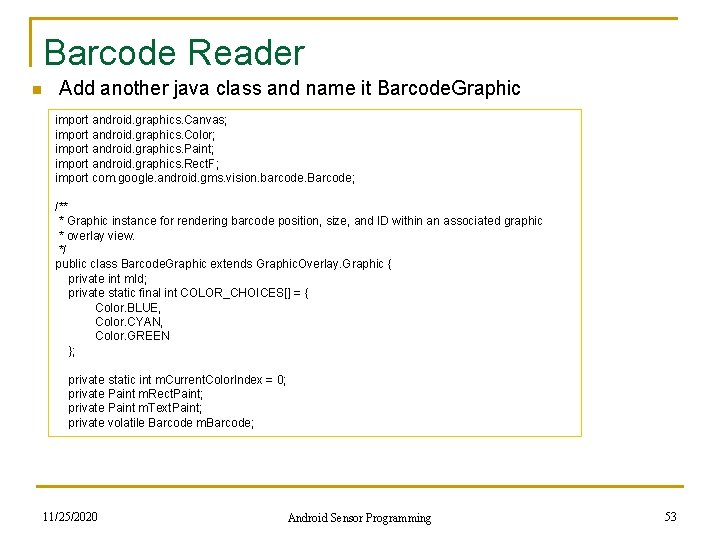

Barcode Reader

Add another Java class and name it BarcodeGraphic:

    import android.graphics.Canvas;
    import android.graphics.Color;
    import android.graphics.Paint;
    import android.graphics.RectF;

    import com.google.android.gms.vision.barcode.Barcode;

    /**
     * Graphic instance for rendering barcode position, size, and ID within an associated graphic
     * overlay view.
     */
    public class BarcodeGraphic extends GraphicOverlay.Graphic {
        private int mId;
        private static final int COLOR_CHOICES[] = {
                Color.BLUE, Color.CYAN, Color.GREEN
        };
        private static int mCurrentColorIndex = 0;
        private Paint mRectPaint;
        private Paint mTextPaint;
        private volatile Barcode mBarcode;

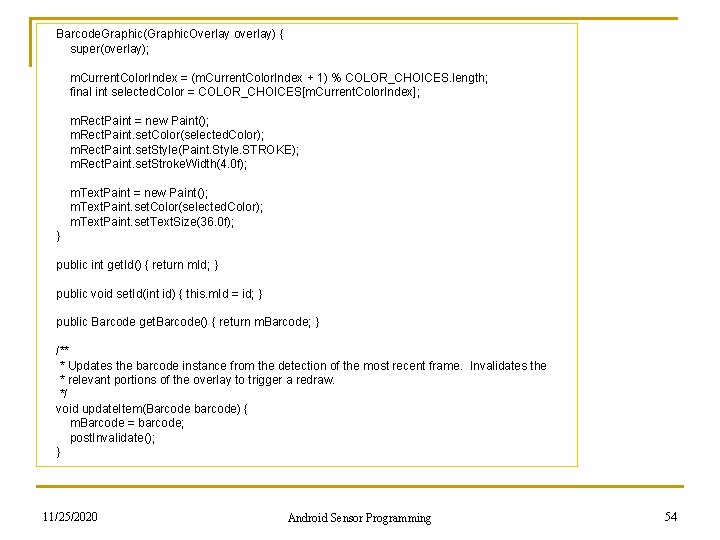

        BarcodeGraphic(GraphicOverlay overlay) {
            super(overlay);
            mCurrentColorIndex = (mCurrentColorIndex + 1) % COLOR_CHOICES.length;
            final int selectedColor = COLOR_CHOICES[mCurrentColorIndex];
            mRectPaint = new Paint();
            mRectPaint.setColor(selectedColor);
            mRectPaint.setStyle(Paint.Style.STROKE);
            mRectPaint.setStrokeWidth(4.0f);
            mTextPaint = new Paint();
            mTextPaint.setColor(selectedColor);
            mTextPaint.setTextSize(36.0f);
        }

        public int getId() {
            return mId;
        }

        public void setId(int id) {
            this.mId = id;
        }

        public Barcode getBarcode() {
            return mBarcode;
        }

        /**
         * Updates the barcode instance from the detection of the most recent frame. Invalidates the
         * relevant portions of the overlay to trigger a redraw.
         */
        void updateItem(Barcode barcode) {
            mBarcode = barcode;
            postInvalidate();
        }

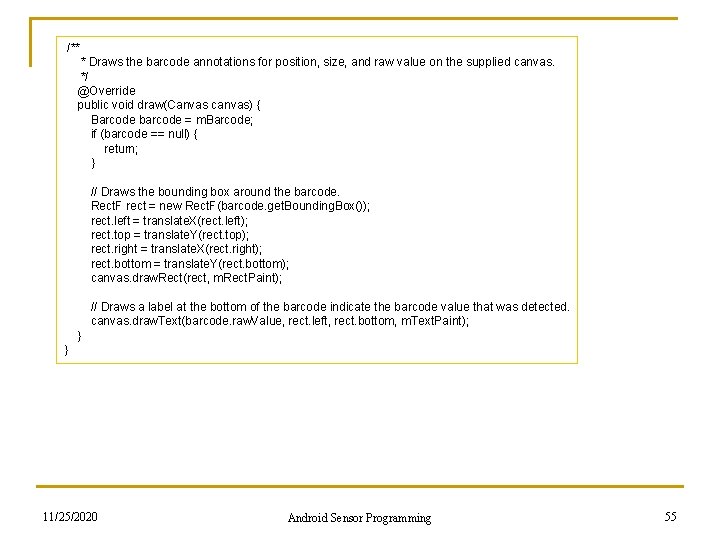

        /**
         * Draws the barcode annotations for position, size, and raw value on the supplied canvas.
         */
        @Override
        public void draw(Canvas canvas) {
            Barcode barcode = mBarcode;
            if (barcode == null) {
                return;
            }

            // Draws the bounding box around the barcode.
            RectF rect = new RectF(barcode.getBoundingBox());
            rect.left = translateX(rect.left);
            rect.top = translateY(rect.top);
            rect.right = translateX(rect.right);
            rect.bottom = translateY(rect.bottom);
            canvas.drawRect(rect, mRectPaint);

            // Draws a label at the bottom of the barcode to indicate the barcode value that was detected.
            canvas.drawText(barcode.rawValue, rect.left, rect.bottom, mTextPaint);
        }
    }

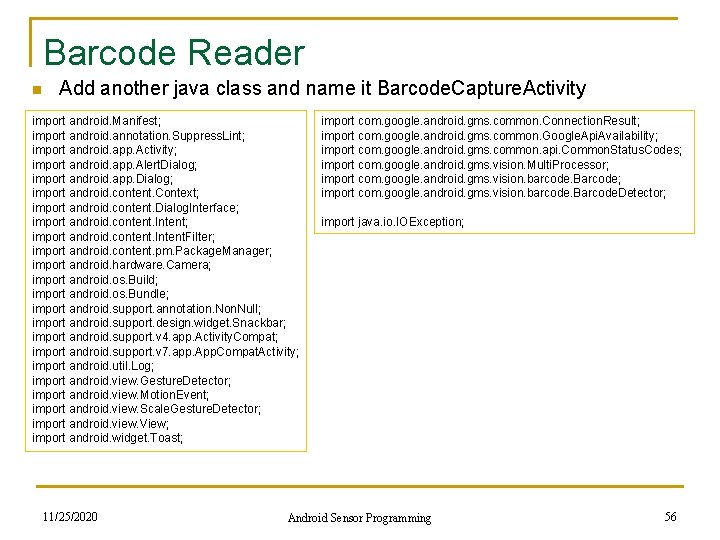

Barcode Reader

Add another Java class and name it BarcodeCaptureActivity:

    import android.Manifest;
    import android.annotation.SuppressLint;
    import android.app.Activity;
    import android.app.AlertDialog;
    import android.app.Dialog;
    import android.content.Context;
    import android.content.DialogInterface;
    import android.content.Intent;
    import android.content.IntentFilter;
    import android.content.pm.PackageManager;
    import android.hardware.Camera;
    import android.os.Build;
    import android.os.Bundle;
    import android.support.annotation.NonNull;
    import android.support.design.widget.Snackbar;
    import android.support.v4.app.ActivityCompat;
    import android.support.v7.app.AppCompatActivity;
    import android.util.Log;
    import android.view.GestureDetector;
    import android.view.MotionEvent;
    import android.view.ScaleGestureDetector;
    import android.view.View;
    import android.widget.Toast;

    import com.google.android.gms.common.ConnectionResult;
    import com.google.android.gms.common.GoogleApiAvailability;
    import com.google.android.gms.common.api.CommonStatusCodes;
    import com.google.android.gms.vision.MultiProcessor;
    import com.google.android.gms.vision.barcode.Barcode;
    import com.google.android.gms.vision.barcode.BarcodeDetector;

    import java.io.IOException;

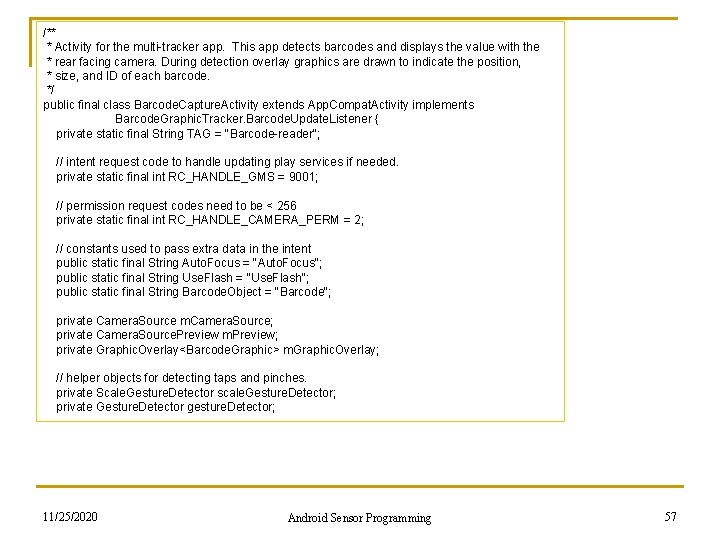

    /**
     * Activity for the multi-tracker app. This app detects barcodes and displays the value with the
     * rear facing camera. During detection overlay graphics are drawn to indicate the position,
     * size, and ID of each barcode.
     */
    public final class BarcodeCaptureActivity extends AppCompatActivity
            implements BarcodeGraphicTracker.BarcodeUpdateListener {
        private static final String TAG = "Barcode-reader";

        // intent request code to handle updating play services if needed.
        private static final int RC_HANDLE_GMS = 9001;

        // permission request codes need to be < 256
        private static final int RC_HANDLE_CAMERA_PERM = 2;

        // constants used to pass extra data in the intent
        public static final String AutoFocus = "AutoFocus";
        public static final String UseFlash = "UseFlash";
        public static final String BarcodeObject = "Barcode";

        private CameraSource mCameraSource;
        private CameraSourcePreview mPreview;
        private GraphicOverlay<BarcodeGraphic> mGraphicOverlay;

        // helper objects for detecting taps and pinches.
        private ScaleGestureDetector scaleGestureDetector;
        private GestureDetector gestureDetector;

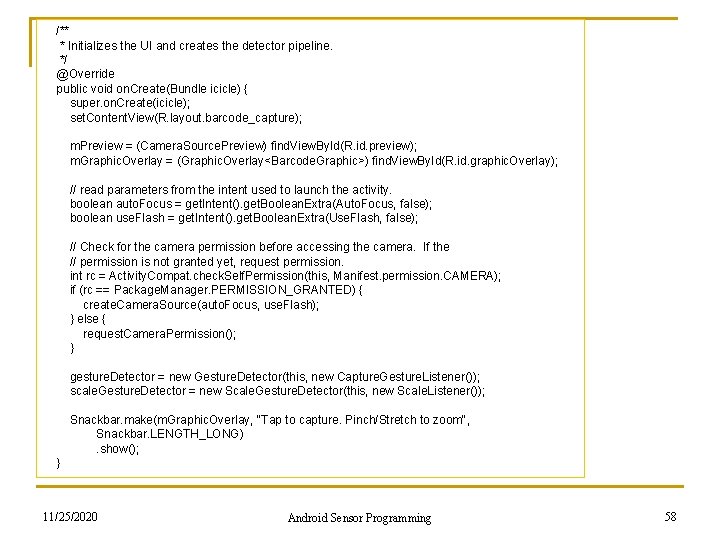

        /**
         * Initializes the UI and creates the detector pipeline.
         */
        @Override
        public void onCreate(Bundle icicle) {
            super.onCreate(icicle);
            setContentView(R.layout.barcode_capture);

            mPreview = (CameraSourcePreview) findViewById(R.id.preview);
            mGraphicOverlay = (GraphicOverlay<BarcodeGraphic>) findViewById(R.id.graphicOverlay);

            // read parameters from the intent used to launch the activity.
            boolean autoFocus = getIntent().getBooleanExtra(AutoFocus, false);
            boolean useFlash = getIntent().getBooleanExtra(UseFlash, false);

            // Check for the camera permission before accessing the camera. If the
            // permission is not granted yet, request permission.
            int rc = ActivityCompat.checkSelfPermission(this, Manifest.permission.CAMERA);
            if (rc == PackageManager.PERMISSION_GRANTED) {
                createCameraSource(autoFocus, useFlash);
            } else {
                requestCameraPermission();
            }

            gestureDetector = new GestureDetector(this, new CaptureGestureListener());
            scaleGestureDetector = new ScaleGestureDetector(this, new ScaleListener());

            Snackbar.make(mGraphicOverlay, "Tap to capture. Pinch/Stretch to zoom",
                    Snackbar.LENGTH_LONG)
                    .show();
        }

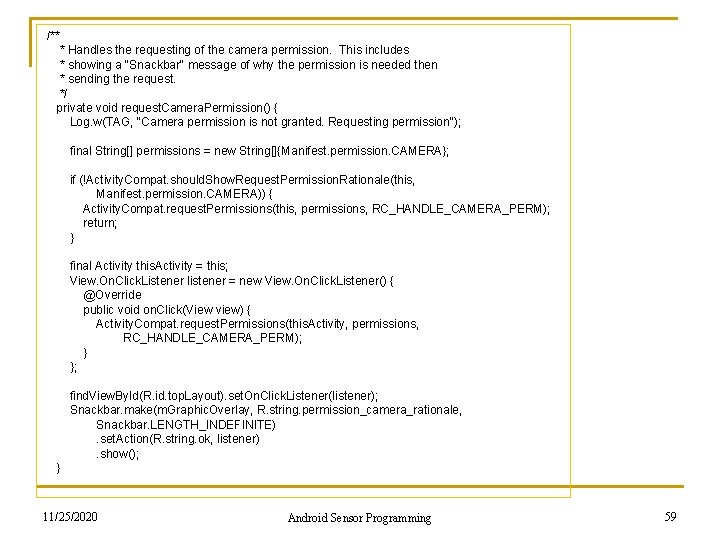

        /**
         * Handles the requesting of the camera permission. This includes
         * showing a "Snackbar" message of why the permission is needed then
         * sending the request.
         */
        private void requestCameraPermission() {
            Log.w(TAG, "Camera permission is not granted. Requesting permission");

            final String[] permissions = new String[]{Manifest.permission.CAMERA};

            if (!ActivityCompat.shouldShowRequestPermissionRationale(this,
                    Manifest.permission.CAMERA)) {
                ActivityCompat.requestPermissions(this, permissions, RC_HANDLE_CAMERA_PERM);
                return;
            }

            final Activity thisActivity = this;

            View.OnClickListener listener = new View.OnClickListener() {
                @Override
                public void onClick(View view) {
                    ActivityCompat.requestPermissions(thisActivity, permissions,
                            RC_HANDLE_CAMERA_PERM);
                }
            };

            findViewById(R.id.topLayout).setOnClickListener(listener);
            Snackbar.make(mGraphicOverlay, R.string.permission_camera_rationale,
                    Snackbar.LENGTH_INDEFINITE)
                    .setAction(R.string.ok, listener)
                    .show();
        }

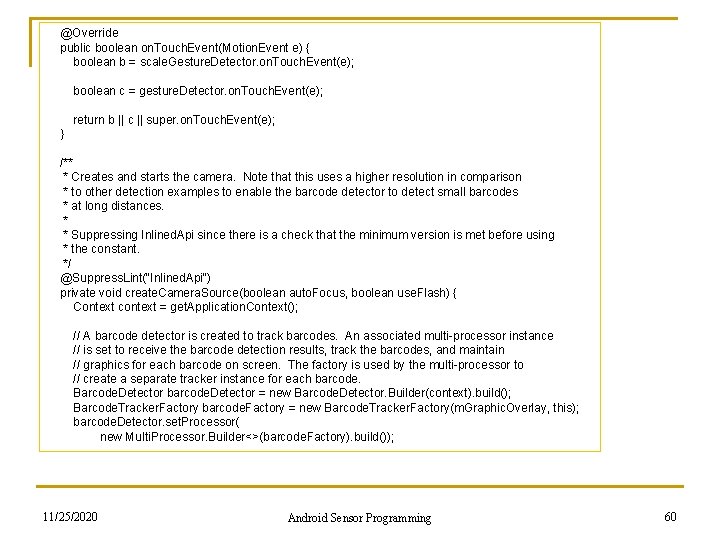

        @Override
        public boolean onTouchEvent(MotionEvent e) {
            boolean b = scaleGestureDetector.onTouchEvent(e);
            boolean c = gestureDetector.onTouchEvent(e);
            return b || c || super.onTouchEvent(e);
        }

        /**
         * Creates and starts the camera. Note that this uses a higher resolution in comparison
         * to other detection examples to enable the barcode detector to detect small barcodes
         * at long distances.
         *
         * Suppressing InlinedApi since there is a check that the minimum version is met before using
         * the constant.
         */
        @SuppressLint("InlinedApi")
        private void createCameraSource(boolean autoFocus, boolean useFlash) {
            Context context = getApplicationContext();

            // A barcode detector is created to track barcodes. An associated multi-processor instance
            // is set to receive the barcode detection results, track the barcodes, and maintain
            // graphics for each barcode on screen. The factory is used by the multi-processor to
            // create a separate tracker instance for each barcode.
            BarcodeDetector barcodeDetector = new BarcodeDetector.Builder(context).build();
            BarcodeTrackerFactory barcodeFactory = new BarcodeTrackerFactory(mGraphicOverlay, this);
            barcodeDetector.setProcessor(
                    new MultiProcessor.Builder<>(barcodeFactory).build());

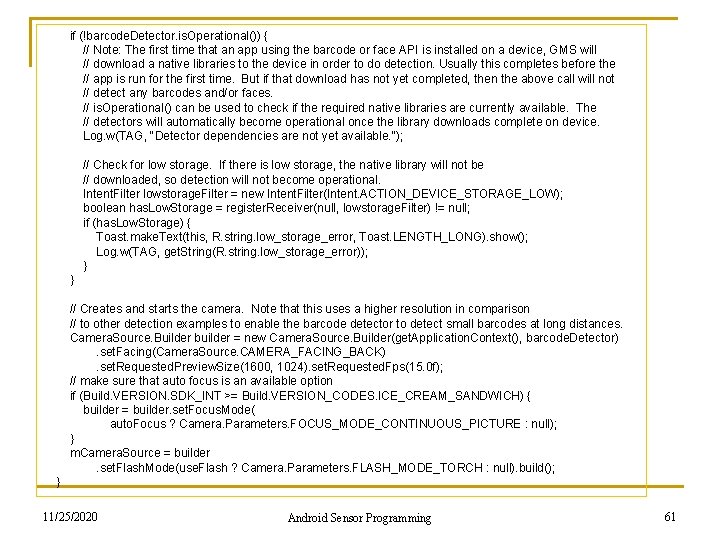

            if (!barcodeDetector.isOperational()) {
                // Note: The first time that an app using the barcode or face API is installed on a
                // device, GMS will download native libraries to the device in order to do detection.
                // Usually this completes before the app is run for the first time. But if that
                // download has not yet completed, then the above call will not detect any barcodes
                // and/or faces.
                //
                // isOperational() can be used to check if the required native libraries are currently
                // available. The detectors will automatically become operational once the library
                // downloads complete on device.
                Log.w(TAG, "Detector dependencies are not yet available.");

                // Check for low storage. If there is low storage, the native library will not be
                // downloaded, so detection will not become operational.
                IntentFilter lowstorageFilter = new IntentFilter(Intent.ACTION_DEVICE_STORAGE_LOW);
                boolean hasLowStorage = registerReceiver(null, lowstorageFilter) != null;

                if (hasLowStorage) {
                    Toast.makeText(this, R.string.low_storage_error, Toast.LENGTH_LONG).show();
                    Log.w(TAG, getString(R.string.low_storage_error));
                }
            }

            // Creates and starts the camera. Note that this uses a higher resolution in comparison
            // to other detection examples to enable the barcode detector to detect small barcodes
            // at long distances.
            CameraSource.Builder builder = new CameraSource.Builder(getApplicationContext(), barcodeDetector)
                    .setFacing(CameraSource.CAMERA_FACING_BACK)
                    .setRequestedPreviewSize(1600, 1024)
                    .setRequestedFps(15.0f);

            // make sure that auto focus is an available option
            if (Build.VERSION.SDK_INT >= Build.VERSION_CODES.ICE_CREAM_SANDWICH) {
                builder = builder.setFocusMode(
                        autoFocus ? Camera.Parameters.FOCUS_MODE_CONTINUOUS_PICTURE : null);
            }

            mCameraSource = builder
                    .setFlashMode(useFlash ? Camera.Parameters.FLASH_MODE_TORCH : null)
                    .build();
        }

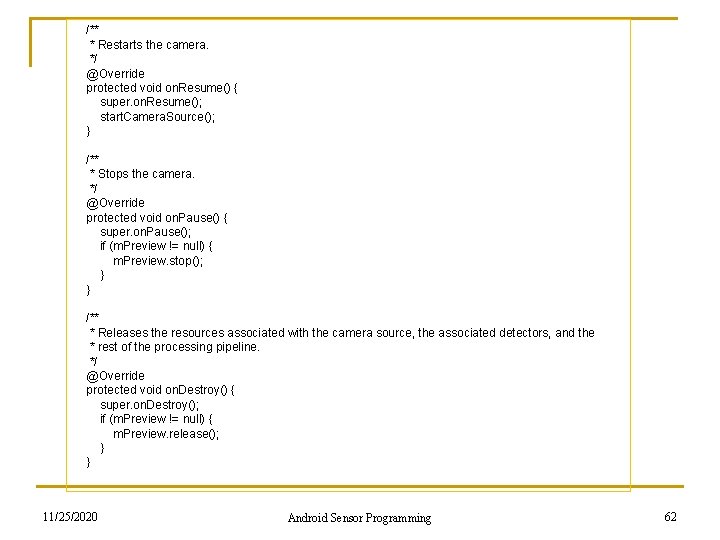

        /**
         * Restarts the camera.
         */
        @Override
        protected void onResume() {
            super.onResume();
            startCameraSource();
        }

        /**
         * Stops the camera.
         */
        @Override
        protected void onPause() {
            super.onPause();
            if (mPreview != null) {
                mPreview.stop();
            }
        }

        /**
         * Releases the resources associated with the camera source, the associated detectors, and the
         * rest of the processing pipeline.
         */
        @Override
        protected void onDestroy() {
            super.onDestroy();
            if (mPreview != null) {
                mPreview.release();
            }
        }

![image 63](http://slidetodoc.com/presentation_image_h/a004590258fb89235a5824e10a36d0e5/image-63.jpg)

        @Override
        public void onRequestPermissionsResult(int requestCode,
                                               @NonNull String[] permissions,
                                               @NonNull int[] grantResults) {
            if (requestCode != RC_HANDLE_CAMERA_PERM) {
                Log.d(TAG, "Got unexpected permission result: " + requestCode);
                super.onRequestPermissionsResult(requestCode, permissions, grantResults);
                return;
            }

            if (grantResults.length != 0 && grantResults[0] == PackageManager.PERMISSION_GRANTED) {
                Log.d(TAG, "Camera permission granted - initialize the camera source");
                // we have permission, so create the camerasource
                boolean autoFocus = getIntent().getBooleanExtra(AutoFocus, false);
                boolean useFlash = getIntent().getBooleanExtra(UseFlash, false);
                createCameraSource(autoFocus, useFlash);
                return;
            }

            Log.e(TAG, "Permission not granted: results len = " + grantResults.length +
                    " Result code = " + (grantResults.length > 0 ? grantResults[0] : "(empty)"));

            DialogInterface.OnClickListener listener = new DialogInterface.OnClickListener() {
                public void onClick(DialogInterface dialog, int id) {
                    finish();
                }
            };

            AlertDialog.Builder builder = new AlertDialog.Builder(this);
            builder.setTitle("Multitracker sample")
                    .setMessage(R.string.no_camera_permission)
                    .setPositiveButton(R.string.ok, listener)
                    .show();
        }

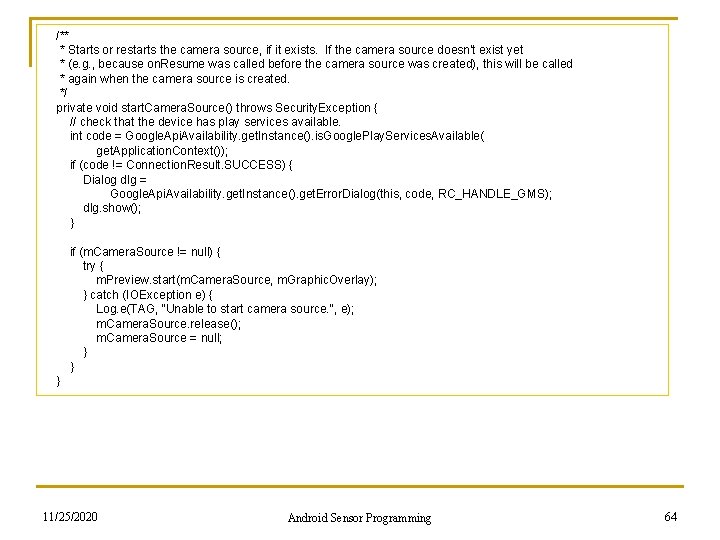

        /**
         * Starts or restarts the camera source, if it exists. If the camera source doesn't exist yet
         * (e.g., because onResume was called before the camera source was created), this will be called
         * again when the camera source is created.
         */
        private void startCameraSource() throws SecurityException {
            // check that the device has play services available.
            int code = GoogleApiAvailability.getInstance().isGooglePlayServicesAvailable(
                    getApplicationContext());
            if (code != ConnectionResult.SUCCESS) {
                Dialog dlg =
                        GoogleApiAvailability.getInstance().getErrorDialog(this, code, RC_HANDLE_GMS);
                dlg.show();
            }

            if (mCameraSource != null) {
                try {
                    mPreview.start(mCameraSource, mGraphicOverlay);
                } catch (IOException e) {
                    Log.e(TAG, "Unable to start camera source.", e);
                    mCameraSource.release();
                    mCameraSource = null;
                }
            }
        }

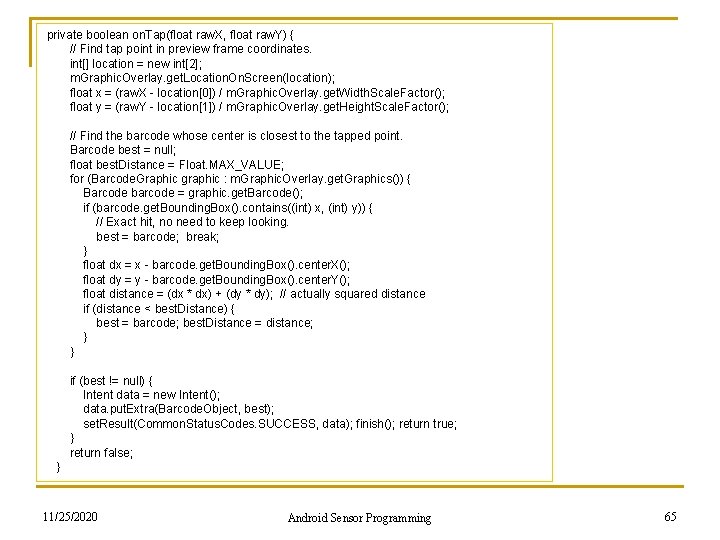

        private boolean onTap(float rawX, float rawY) {
            // Find tap point in preview frame coordinates.
            int[] location = new int[2];
            mGraphicOverlay.getLocationOnScreen(location);
            float x = (rawX - location[0]) / mGraphicOverlay.getWidthScaleFactor();
            float y = (rawY - location[1]) / mGraphicOverlay.getHeightScaleFactor();

            // Find the barcode whose center is closest to the tapped point.
            Barcode best = null;
            float bestDistance = Float.MAX_VALUE;
            for (BarcodeGraphic graphic : mGraphicOverlay.getGraphics()) {
                Barcode barcode = graphic.getBarcode();
                if (barcode.getBoundingBox().contains((int) x, (int) y)) {
                    // Exact hit, no need to keep looking.
                    best = barcode;
                    break;
                }
                float dx = x - barcode.getBoundingBox().centerX();
                float dy = y - barcode.getBoundingBox().centerY();
                float distance = (dx * dx) + (dy * dy);  // actually squared distance
                if (distance < bestDistance) {
                    best = barcode;
                    bestDistance = distance;
                }
            }

            if (best != null) {
                Intent data = new Intent();
                data.putExtra(BarcodeObject, best);
                setResult(CommonStatusCodes.SUCCESS, data);
                finish();
                return true;
            }
            return false;
        }

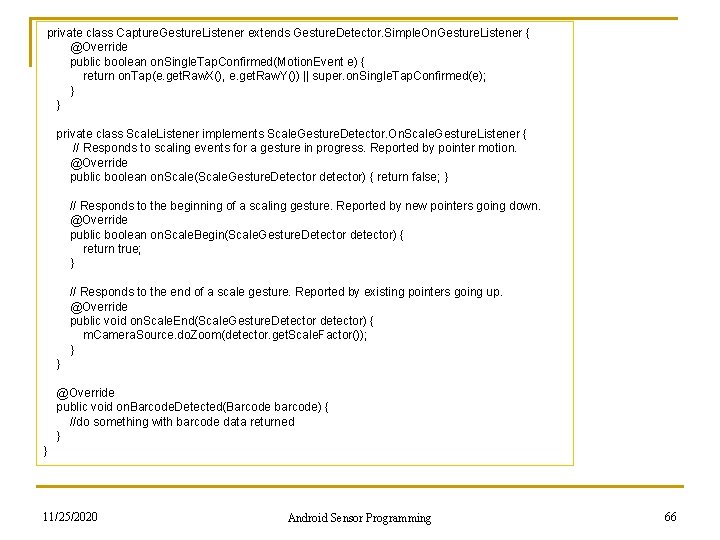

        private class CaptureGestureListener extends GestureDetector.SimpleOnGestureListener {
            @Override
            public boolean onSingleTapConfirmed(MotionEvent e) {
                return onTap(e.getRawX(), e.getRawY()) || super.onSingleTapConfirmed(e);
            }
        }

        private class ScaleListener implements ScaleGestureDetector.OnScaleGestureListener {
            // Responds to scaling events for a gesture in progress. Reported by pointer motion.
            @Override
            public boolean onScale(ScaleGestureDetector detector) {
                return false;
            }

            // Responds to the beginning of a scaling gesture. Reported by new pointers going down.
            @Override
            public boolean onScaleBegin(ScaleGestureDetector detector) {
                return true;
            }

            // Responds to the end of a scale gesture. Reported by existing pointers going up.
            @Override
            public void onScaleEnd(ScaleGestureDetector detector) {
                mCameraSource.doZoom(detector.getScaleFactor());
            }
        }

        @Override
        public void onBarcodeDetected(Barcode barcode) {
            // do something with barcode data returned
        }
    }
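The tap handler in this activity selects a barcode in two steps: a bounding box that contains the tap wins immediately, otherwise the box whose center has the smallest squared distance to the tap is chosen. The selection rule can be tried outside Android with the sketch below. `Box` is a hypothetical stand-in for a barcode with its bounding rectangle, and its centers are computed as floats, which may differ slightly from the integer centers of an Android Rect.

```java
import java.util.Arrays;
import java.util.List;

/** Framework-free sketch of the tap hit test. */
final class TapHitTest {

    /** Hypothetical stand-in for a barcode value plus its bounding rectangle. */
    static final class Box {
        final String value;
        final int left, top, right, bottom;

        Box(String value, int left, int top, int right, int bottom) {
            this.value = value;
            this.left = left;
            this.top = top;
            this.right = right;
            this.bottom = bottom;
        }

        boolean contains(int x, int y) {
            return x >= left && x < right && y >= top && y < bottom;
        }

        float centerX() { return (left + right) / 2f; }
        float centerY() { return (top + bottom) / 2f; }
    }

    /** Returns the box containing (x, y), else the box with the nearest center, else null. */
    static Box pick(List<Box> boxes, float x, float y) {
        Box best = null;
        float bestDistance = Float.MAX_VALUE;
        for (Box box : boxes) {
            if (box.contains((int) x, (int) y)) {
                return box; // exact hit, no need to keep looking
            }
            float dx = x - box.centerX();
            float dy = y - box.centerY();
            float distance = dx * dx + dy * dy; // squared distance is enough for comparison
            if (distance < bestDistance) {
                best = box;
                bestDistance = distance;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        List<Box> boxes = Arrays.asList(
                new Box("A", 0, 0, 10, 10),
                new Box("B", 100, 100, 120, 120));
        System.out.println(pick(boxes, 5f, 5f).value);
        System.out.println(pick(boxes, 90f, 90f).value);
    }
}
```

Comparing squared distances avoids a square root per candidate and gives the same ordering, which is why the slide code labels its distance variable as "actually squared distance".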

Homework #24

- Assume that the barcode you scanned is a 2D barcode that contains a URL. Add another activity or fragment to display the URL content (i.e., the webpage). Add a button to launch the activity or fragment
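Before handing a scanned value to a web view, it may help to confirm that the text really is a web address. The helper below is one possible check, not part of the assignment: the class name `ScannedUrl` and the rule it applies (absolute http or https URI with a host) are assumptions chosen for illustration. It relies only on java.net.URI.

```java
import java.net.URI;
import java.net.URISyntaxException;

/** Hypothetical helper that decides whether scanned text looks like a web URL. */
final class ScannedUrl {

    /** Returns true if the text parses as an absolute http or https URI with a host. */
    static boolean isWebUrl(String rawValue) {
        if (rawValue == null) {
            return false;
        }
        try {
            URI uri = new URI(rawValue.trim());
            String scheme = uri.getScheme();
            return scheme != null
                    && (scheme.equalsIgnoreCase("http") || scheme.equalsIgnoreCase("https"))
                    && uri.getHost() != null;
        } catch (URISyntaxException e) {
            return false; // not parseable as a URI at all
        }
    }

    public static void main(String[] args) {
        System.out.println(isWebUrl("https://www.csuohio.edu/"));
        System.out.println(isWebUrl("012345678905"));
    }
}
```

A 1D product code such as a UPC number fails the check because it has no scheme, so the button that launches the web page could be enabled only when the check passes.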
