An Introduction to Support Vector Machines

In part from material by Jinwei Gu

Support Vector Machine

• A classifier derived from statistical learning theory by Vapnik et al. in 1992.
• SVM became famous when, using images as input, it gave accuracy comparable to neural networks in a handwriting recognition task.
• Currently, SVM is widely used in object detection and recognition, content-based image retrieval, text recognition, biometrics, speech recognition, etc.
• Still one of the best non-deep methods: in many tasks it gives comparable performance.

Support Vector Machine

A Support Vector Machine (SVM) is a discriminative classifier formally defined by a separating hyperplane. In other words, given labeled training data (supervised learning, for both classification and regression), the algorithm outputs an optimal hyperplane that categorizes new examples. In two-dimensional space this hyperplane is a line dividing the plane into two parts, with each class lying on one side.

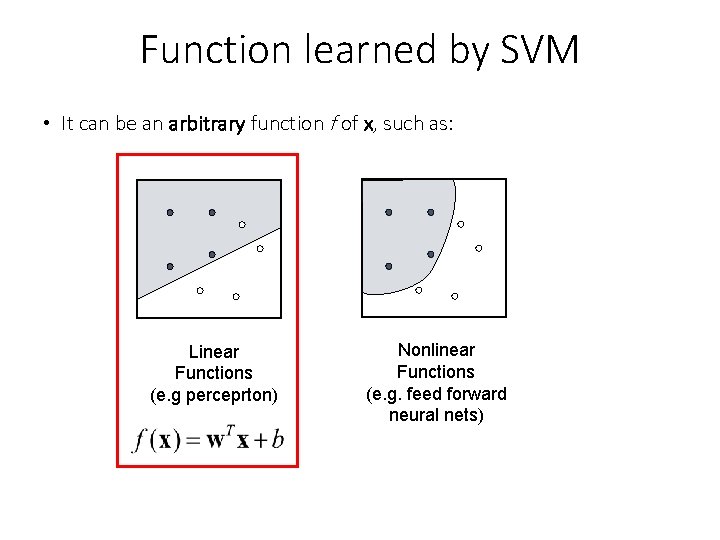

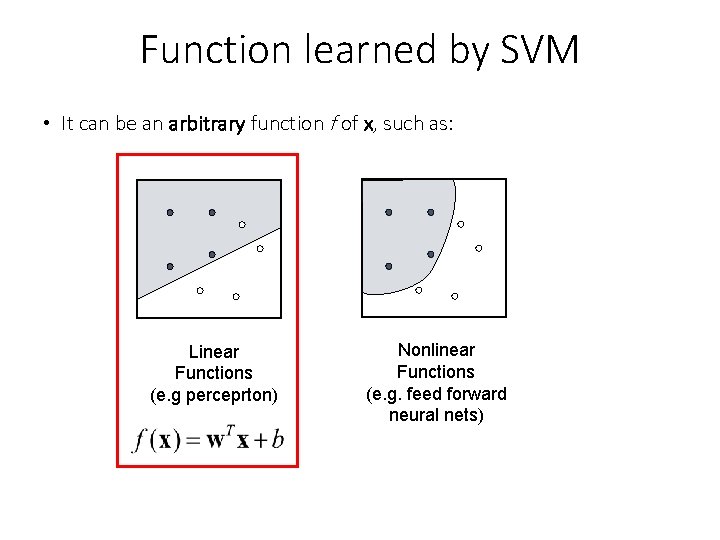

Function learned by SVM

• It can be an arbitrary function f of x, such as:
  • Linear functions (e.g. perceptron)
  • Nonlinear functions (e.g. feed-forward neural nets)

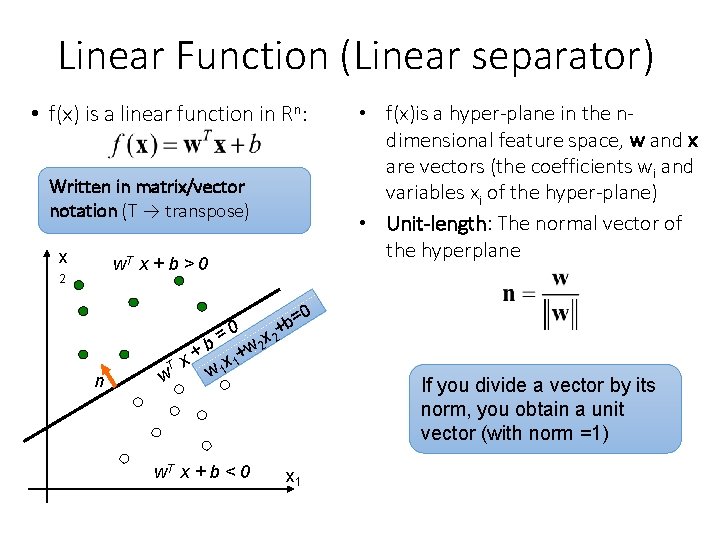

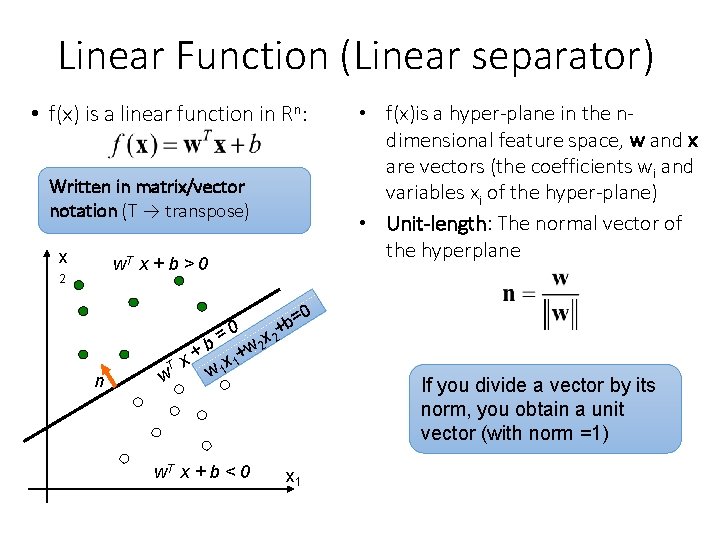

Linear Function (Linear separator)

• f(x) is a linear function in $\mathbb{R}^n$. Written in matrix/vector notation (T → transpose): $f(x) = w^T x + b$
• $f(x) = 0$ is a hyperplane in the n-dimensional feature space; w and x are vectors (the coefficients $w_i$ and the variables $x_i$ of the hyperplane).
• w is the normal vector of the hyperplane; its unit-length version is $n = w / \|w\|$. If you divide a vector by its norm, you obtain a unit vector (with norm = 1).

(Figure: axes $x_1$, $x_2$; the hyperplane $w^T x + b = 0$ with normal n separates the region $w^T x + b > 0$ from the region $w^T x + b < 0$.)

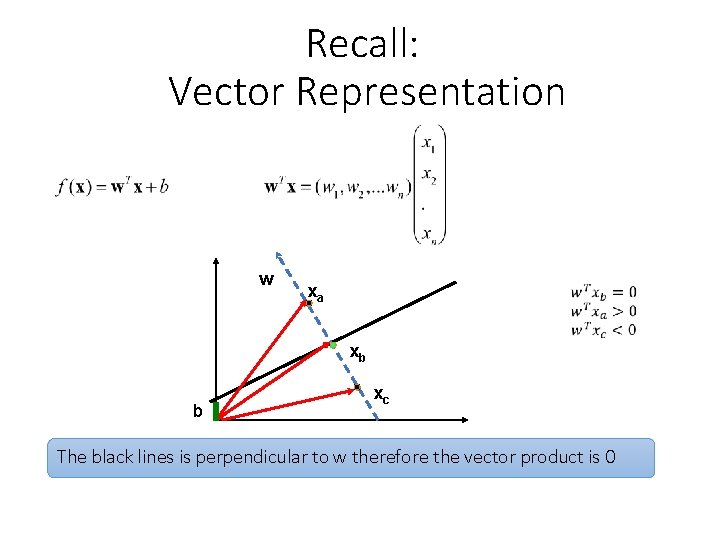

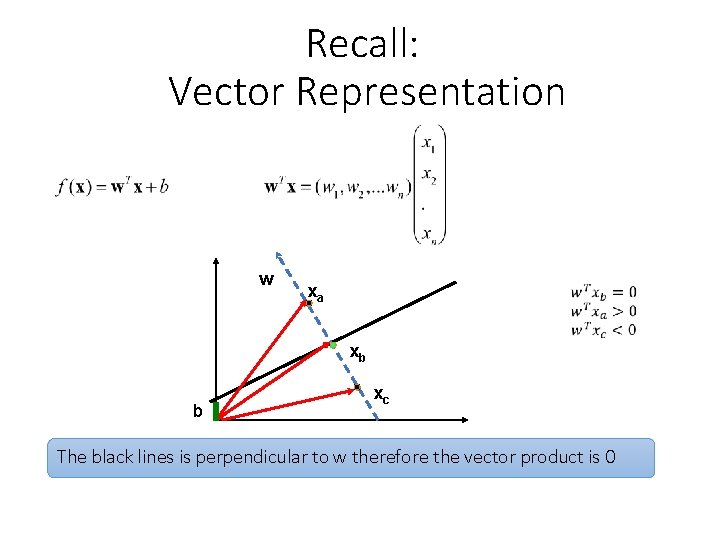

Recall: Vector Representation

The black line is perpendicular to w, therefore the dot product between w and any vector lying along that line is 0.

(Figure labels: w, $x_a$, $x_b$, $x_c$, b.)

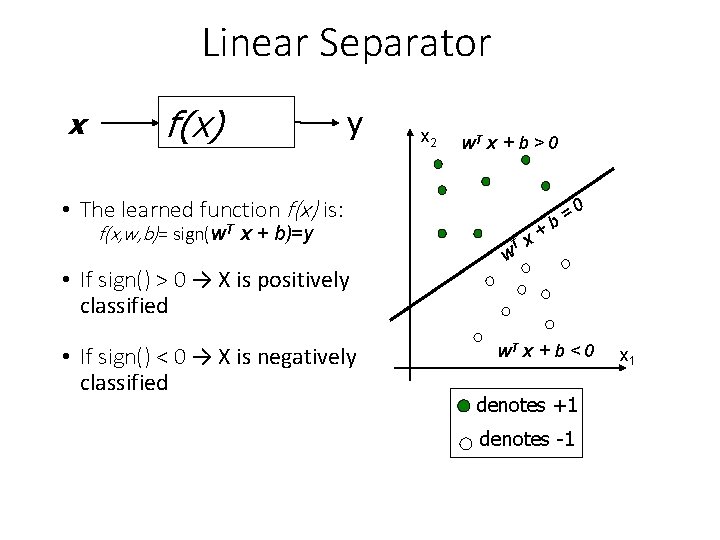

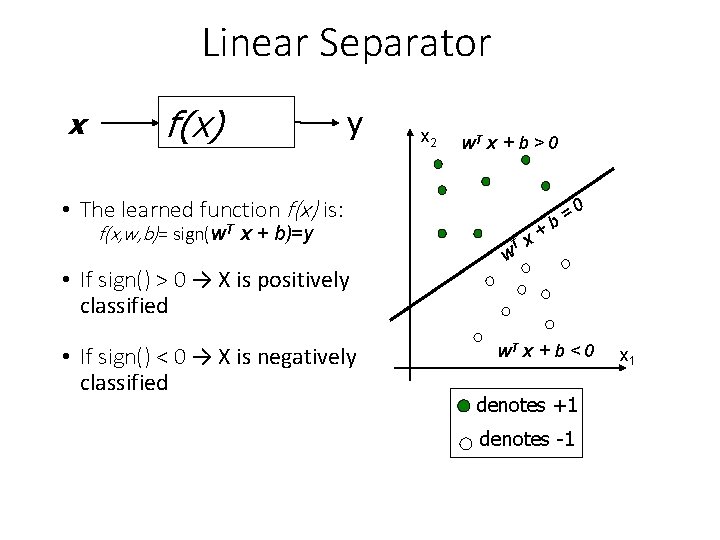

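Linear Separator

• The learned function f(x) is: $f(x, w, b) = \mathrm{sign}(w^T x + b) = y$
• If $w^T x + b > 0$ → x is classified as positive (y = +1)
• If $w^T x + b < 0$ → x is classified as negative (y = −1)

(Figure: input x, function f(x), output y; axes $x_1$, $x_2$; the line $w^T x + b = 0$ separates the region $w^T x + b > 0$ from the region $w^T x + b < 0$; markers denote classes +1 and −1.)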

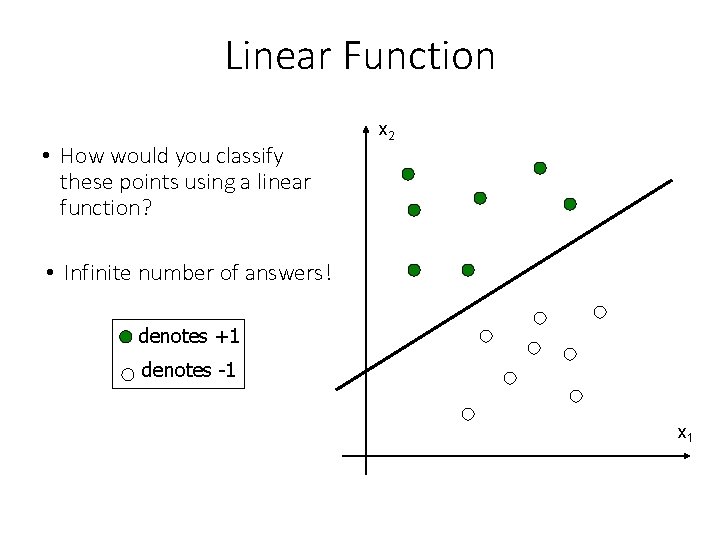

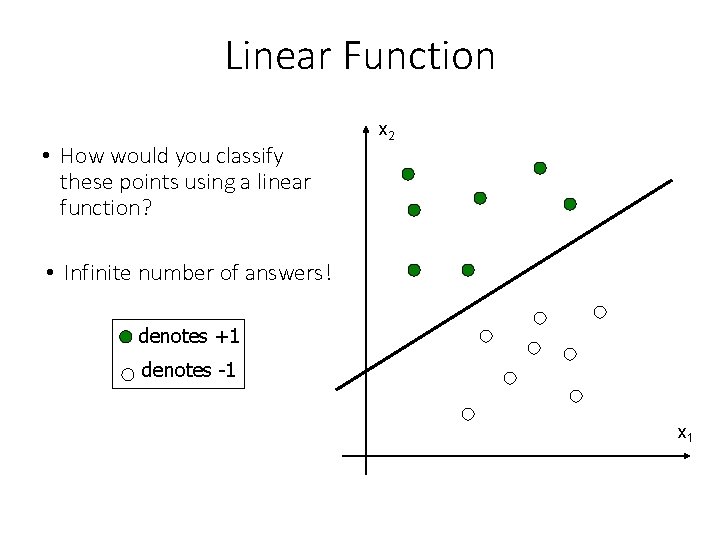
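
The decision rule sign(w^T x + b) can be sketched in a few lines of Python. The weight vector, bias and test points below are illustrative values chosen for this sketch, not values taken from the slides, and the helper names `dot` and `classify` are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def classify(x, w, b):
    """Return +1 if w.x + b > 0, otherwise -1.

    Points exactly on the hyperplane (score == 0) are assigned to -1 here;
    that tie-breaking choice is an assumption of this sketch.
    """
    score = dot(w, x) + b
    return 1 if score > 0 else -1


# Illustrative separator: the vertical line x1 = 2.
w = [1.0, 0.0]
b = -2.0

print(classify([3.0, 1.0], w, b))  # 1
print(classify([1.0, 0.0], w, b))  # -1
```

Only the sign of the score is used for the class; the magnitude of the score grows with the distance of the point from the hyperplane.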

Linear Function

• How would you classify these points using a linear function?
• Infinite number of answers!

(Figure: axes $x_1$, $x_2$; markers denote classes +1 and −1.)

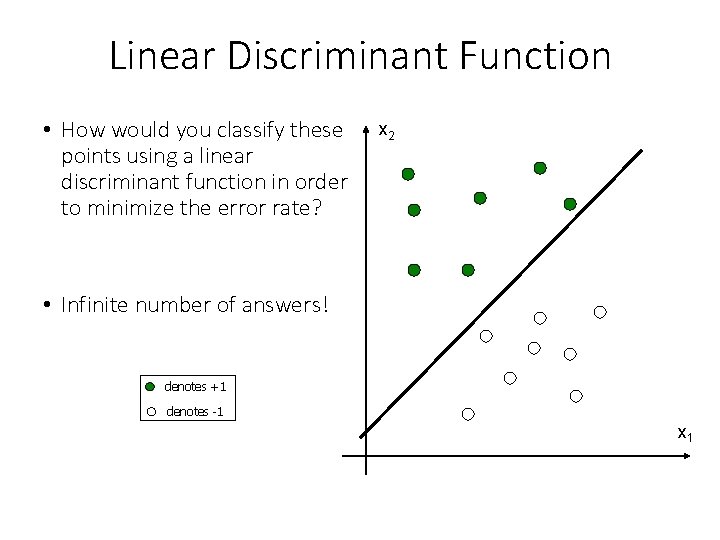

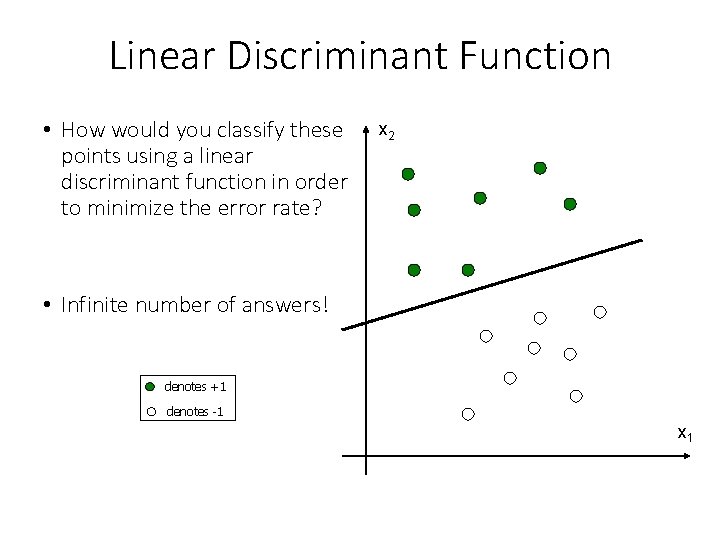

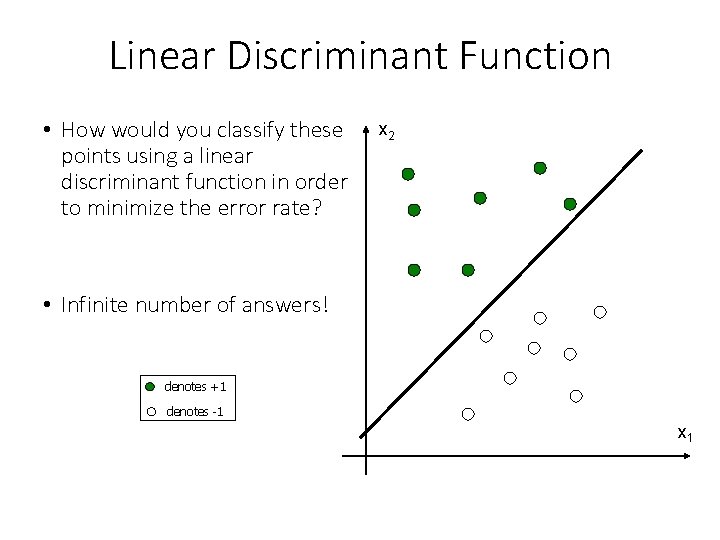

Linear Discriminant Function

• How would you classify these points using a linear discriminant function in order to minimize the error rate?
• Infinite number of answers!

(Figure: axes $x_1$, $x_2$; markers denote classes +1 and −1.)

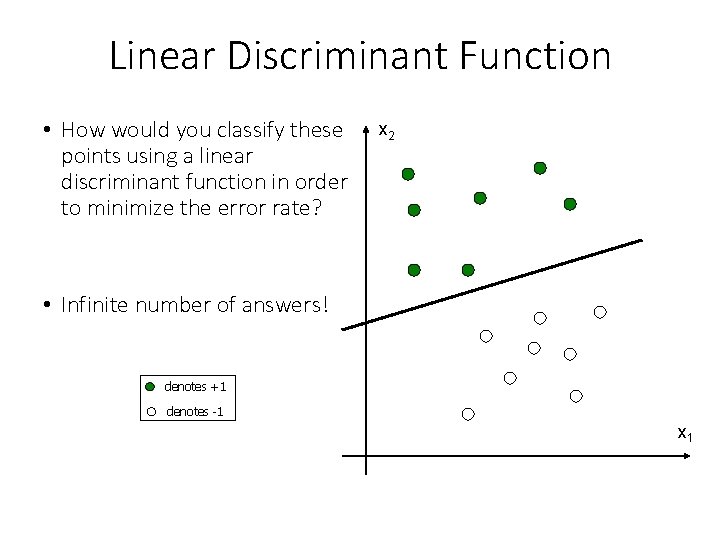


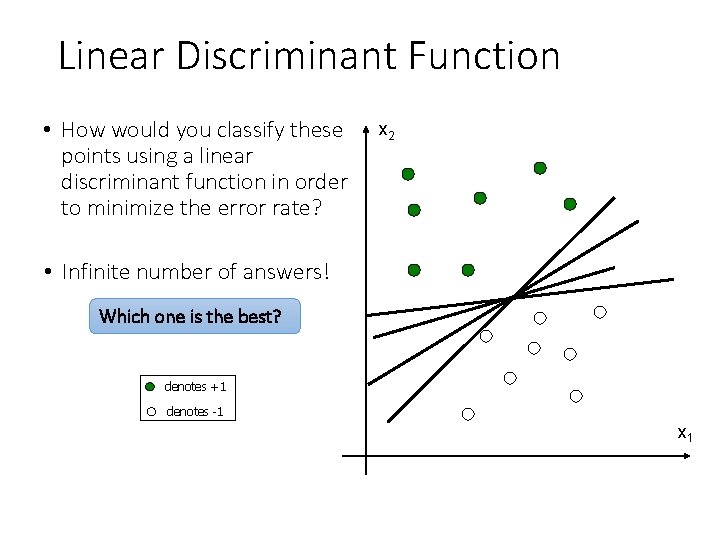

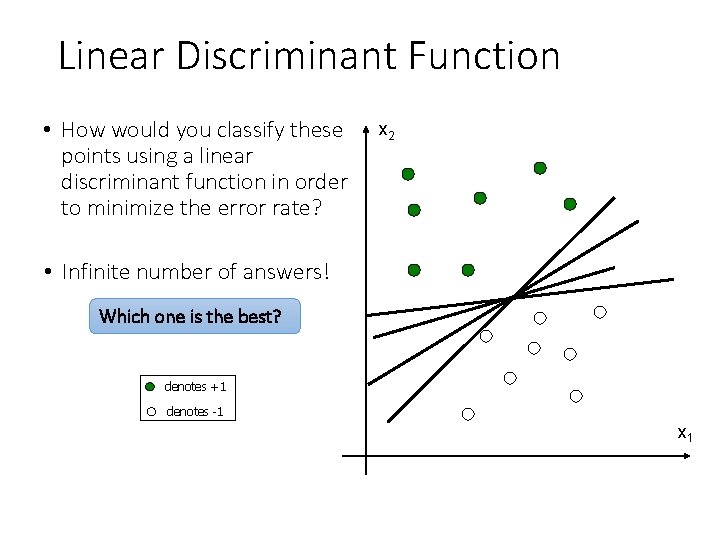

Linear Discriminant Function

• Among the infinite number of linear discriminant functions that minimize the error rate on these points, which one is the best?

(Figure: axes $x_1$, $x_2$; markers denote classes +1 and −1.)

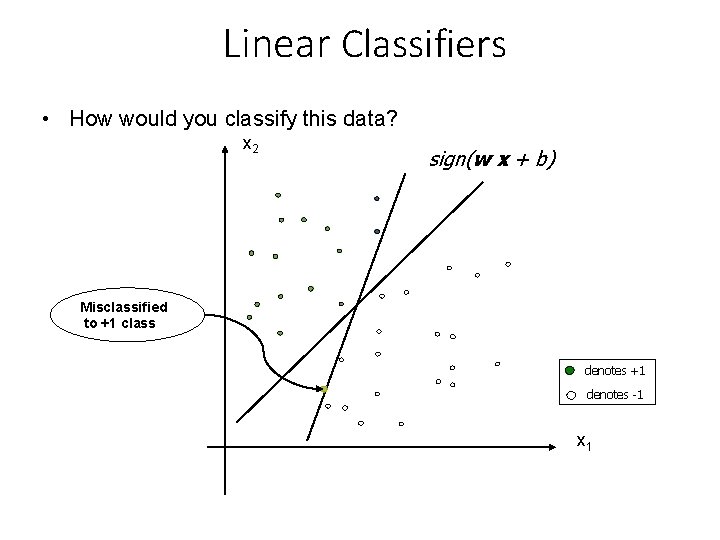

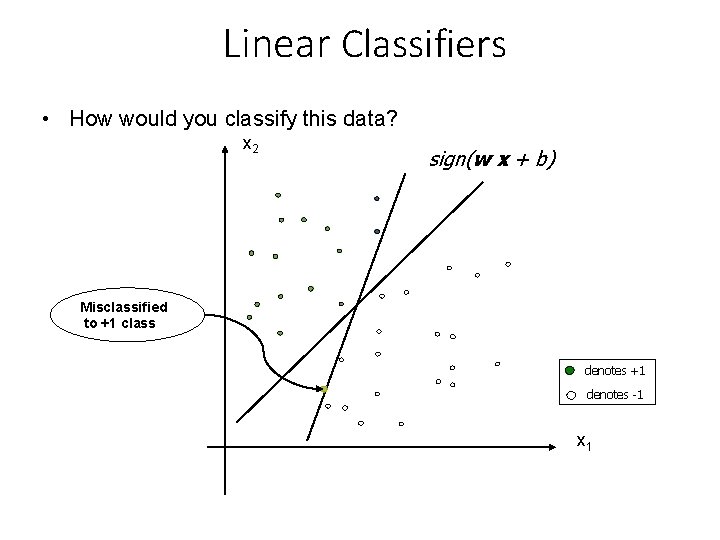

Linear Classifiers

• How would you classify this data?

(Figure: a candidate boundary $\mathrm{sign}(w^T x + b)$ under which one point is misclassified to the +1 class; axes $x_1$, $x_2$; markers denote classes +1 and −1.)

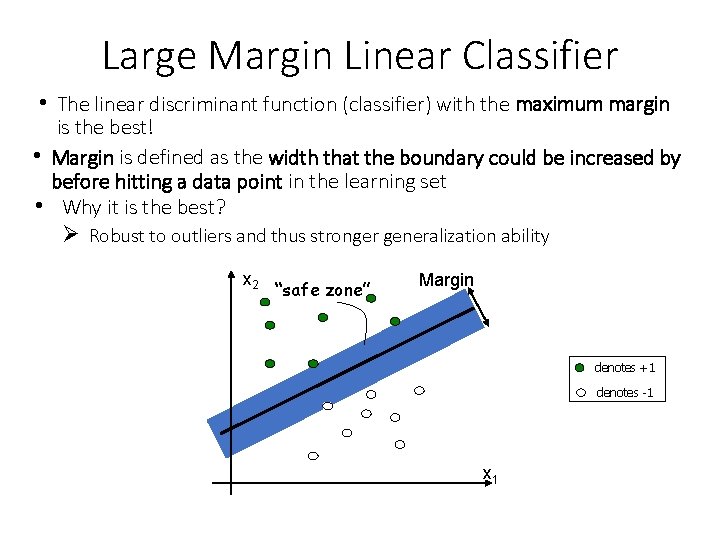

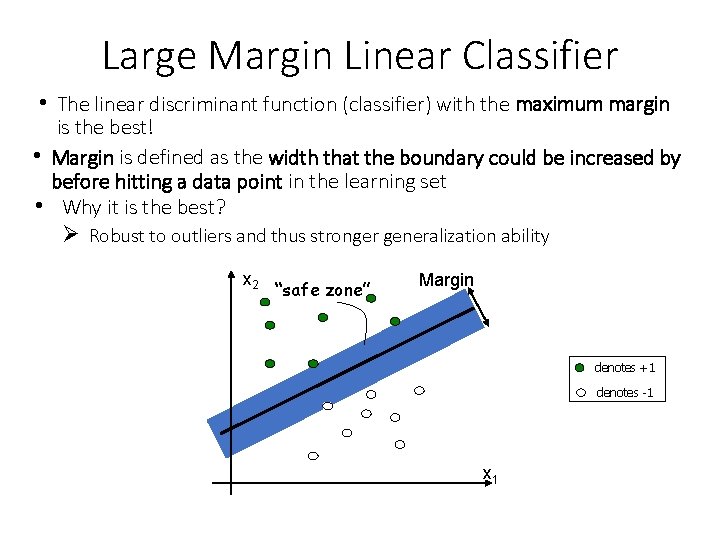

Large Margin Linear Classifier

• The linear discriminant function (classifier) with the maximum margin is the best!
• The margin is defined as the width by which the boundary could be increased before hitting a data point in the learning set.
• Why is it the best? It is robust to outliers and thus has stronger generalization ability.

(Figure: the margin drawn as a "safe zone" around the boundary; axes $x_1$, $x_2$; markers denote classes +1 and −1.)

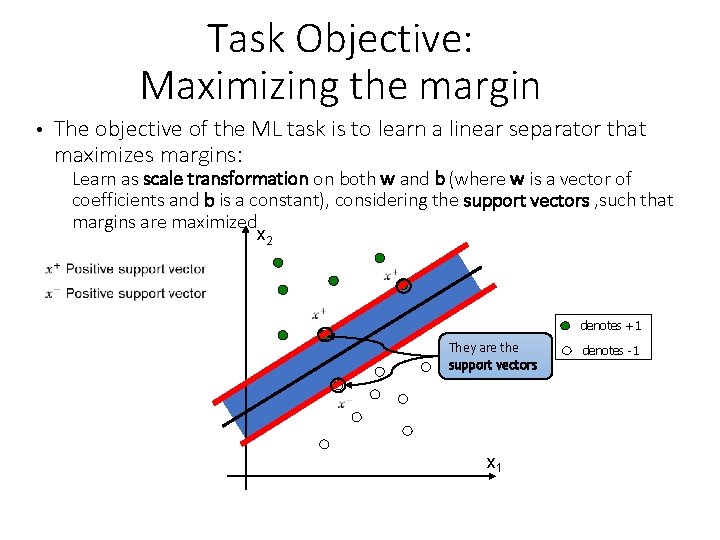

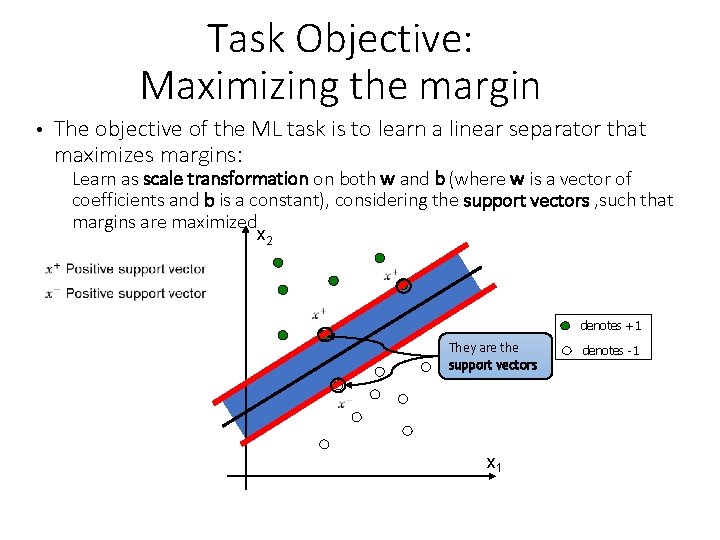

Task Objective: Maximizing the margin

• The objective of the ML task is to learn a linear separator that maximizes the margin: learn a scale transformation of both w and b (where w is a vector of coefficients and b is a constant), considering the support vectors, such that the margin is maximized.

(Figure: the points lying on the margin boundaries are the support vectors; axes $x_1$, $x_2$; markers denote classes +1 and −1.)

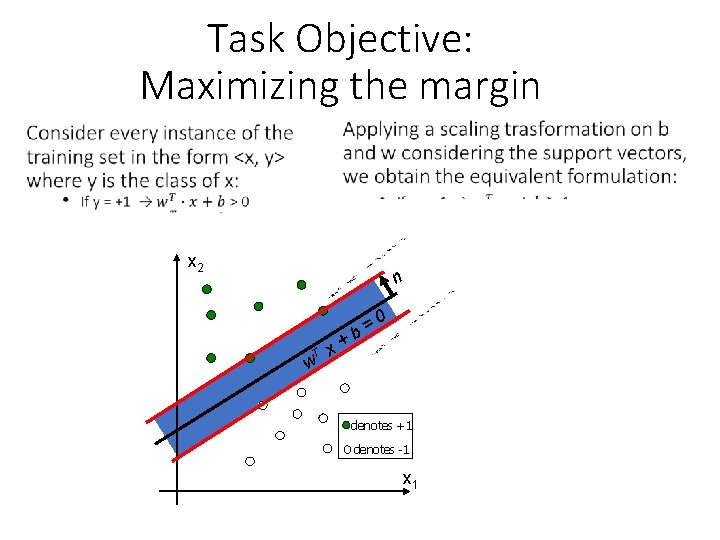

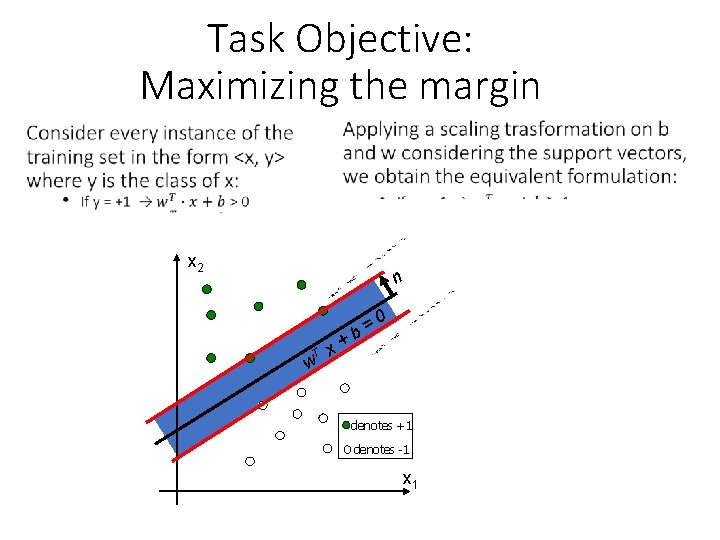

Task Objective: Maximizing the margin

(Figure: the hyperplane $w^T x + b = 0$ with unit normal n; axes $x_1$, $x_2$; markers denote classes +1 and −1.)

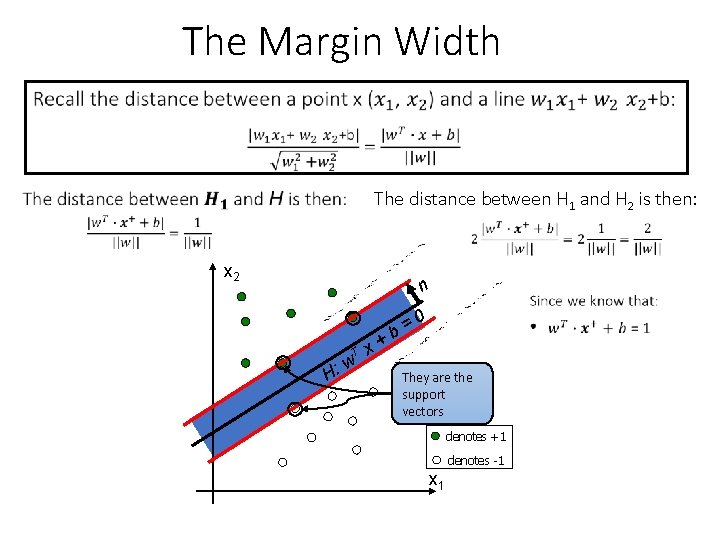

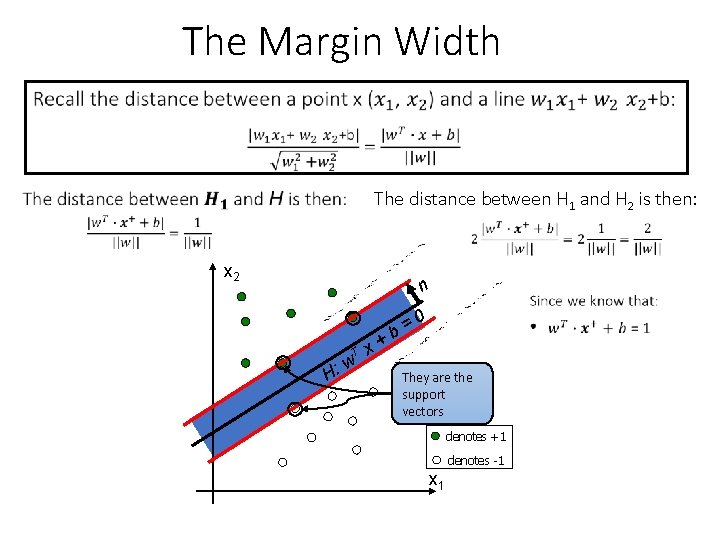

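The Margin Width

The distance between $H_1$ and $H_2$ (the two margin boundaries that pass through the support vectors) is then $2 / \|w\|$.

(Figure: the hyperplane H: $w^T x + b = 0$ with unit normal n; the support vectors lie on the margin boundaries; axes $x_1$, $x_2$; markers denote classes +1 and −1.)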

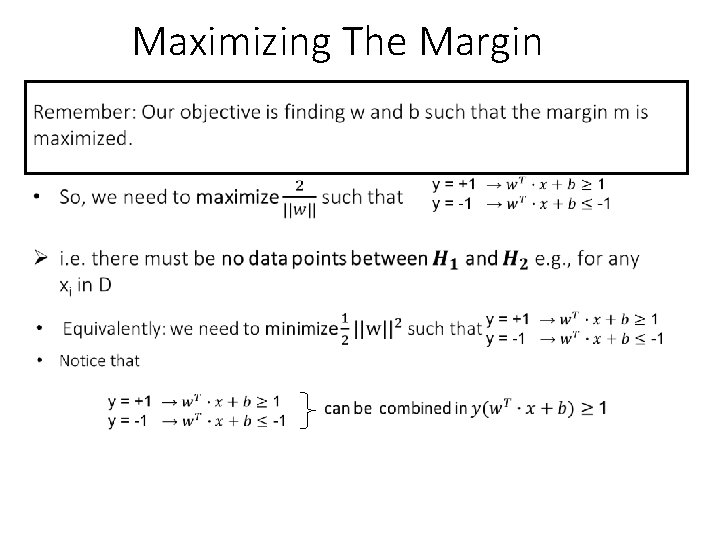

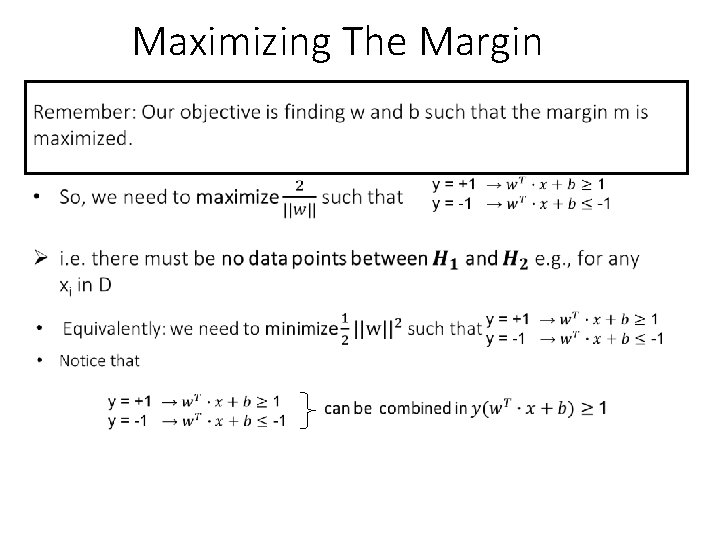
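
The margin width can be derived in a few lines. The sketch below assumes the usual scaling in which the two margin hyperplanes are $H_1: w^T x + b = +1$ and $H_2: w^T x + b = -1$, which matches the constraint $y_i (w^T x_i + b) \ge 1$ used in the hard-margin formulation later in these slides. The names $x^+$ and $x^-$ (one point on each hyperplane) are introduced only for this illustration, and $n = w / \|w\|$ is the unit normal.

```latex
\begin{aligned}
w^T x^+ + b &= +1, \qquad w^T x^- + b = -1 \\
\Rightarrow\; w^T (x^+ - x^-) &= 2 \\
\text{margin} &= n^T (x^+ - x^-) = \frac{w^T (x^+ - x^-)}{\|w\|} = \frac{2}{\|w\|}
\end{aligned}
```

Maximizing $2 / \|w\|$ is therefore equivalent to minimizing $\frac{1}{2} w^T w$.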

Maximizing The Margin

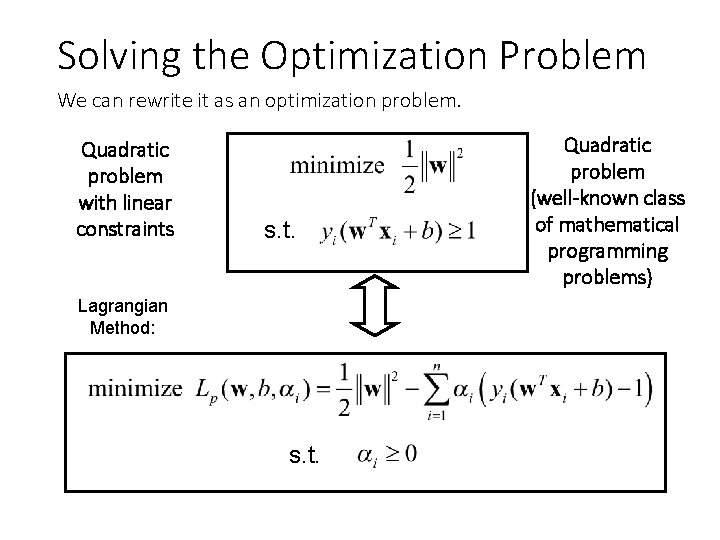

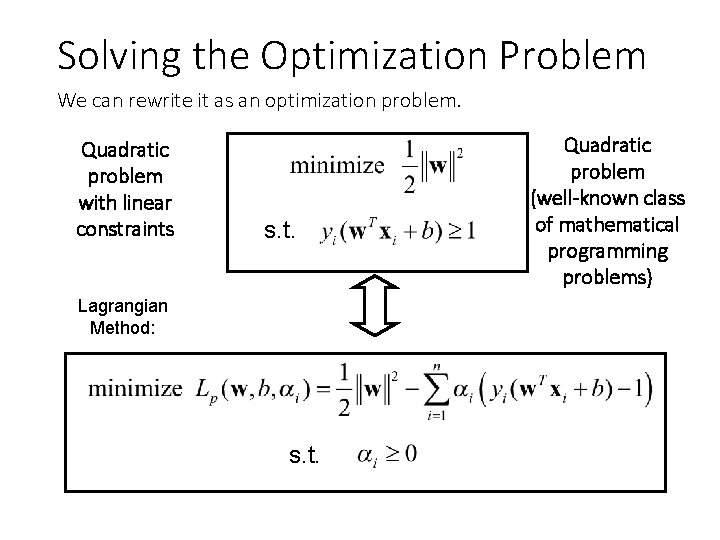

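Solving the Optimization Problem

We can rewrite it as an optimization problem: a quadratic problem with linear constraints (a well-known class of mathematical programming problems).

minimize $\frac{1}{2} w^T w$ s.t. $y_i (w^T x_i + b) \ge 1$ for all i

Lagrangian method:

minimize $L(w, b, \alpha) = \frac{1}{2} w^T w - \sum_i \alpha_i \left[ y_i (w^T x_i + b) - 1 \right]$ s.t. $\alpha_i \ge 0$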

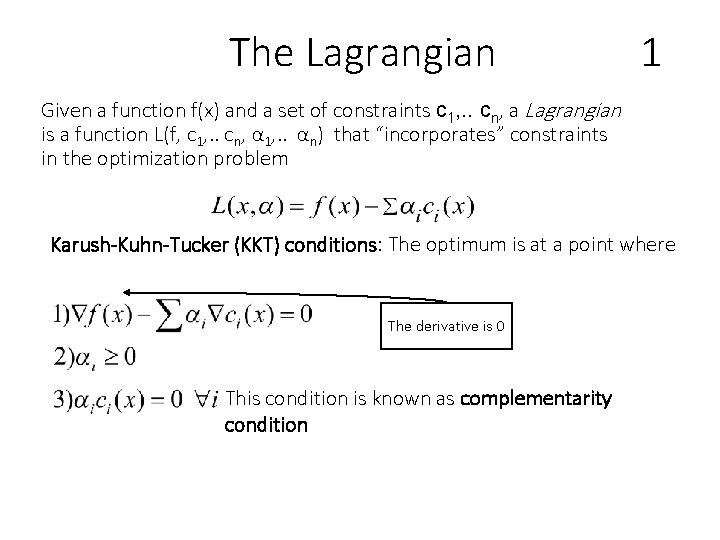

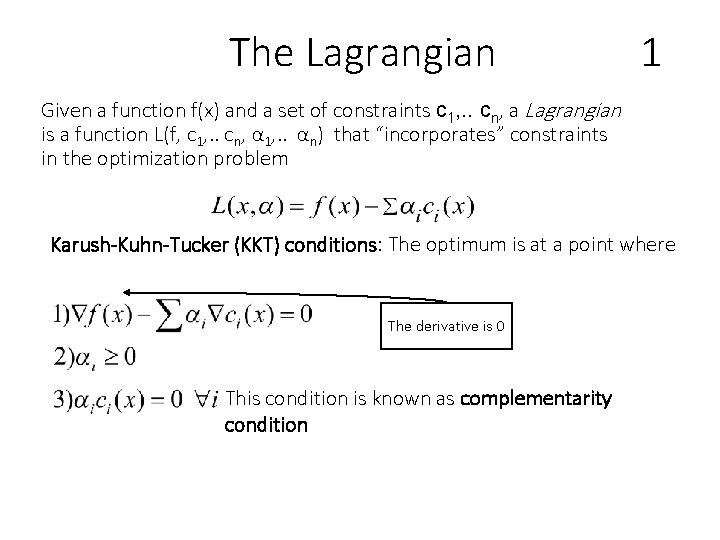

The Lagrangian

Given a function f(x) and a set of constraints $c_1, \dots, c_n$, a Lagrangian is a function $L(f, c_1, \dots, c_n, \alpha_1, \dots, \alpha_n)$ that "incorporates" the constraints in the optimization problem.

Karush-Kuhn-Tucker (KKT) conditions: with the constraints written as $c_i(x) \ge 0$, the optimum is at a point where the derivative of L is 0, $\alpha_i \ge 0$, and $\alpha_i c_i(x) = 0$ for every i. This last condition is known as the complementarity condition.

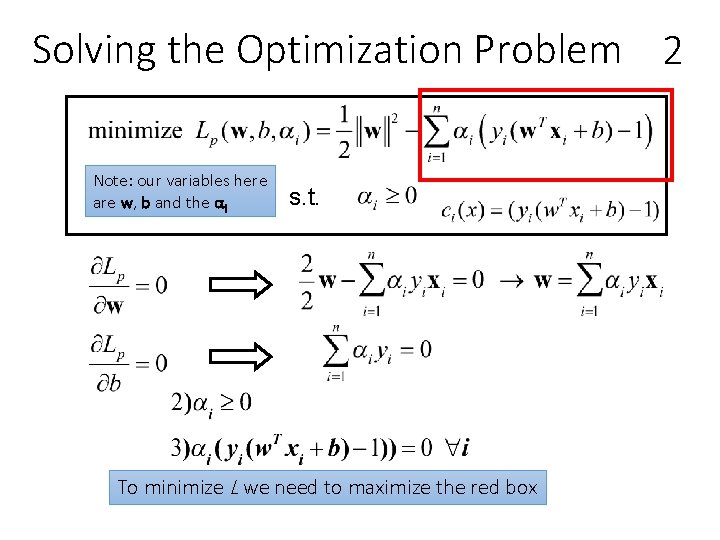

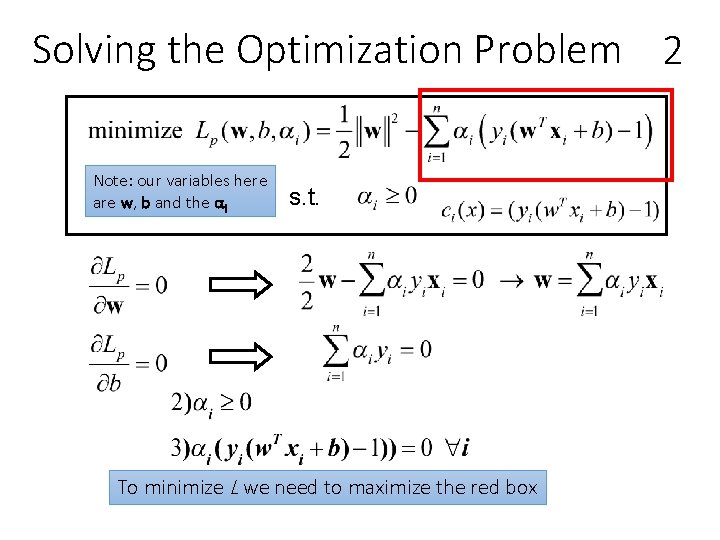

Solving the Optimization Problem

Note: our variables here are w, b and the $\alpha_i$. To minimize L we need to maximize the term highlighted by the red box in the figure.

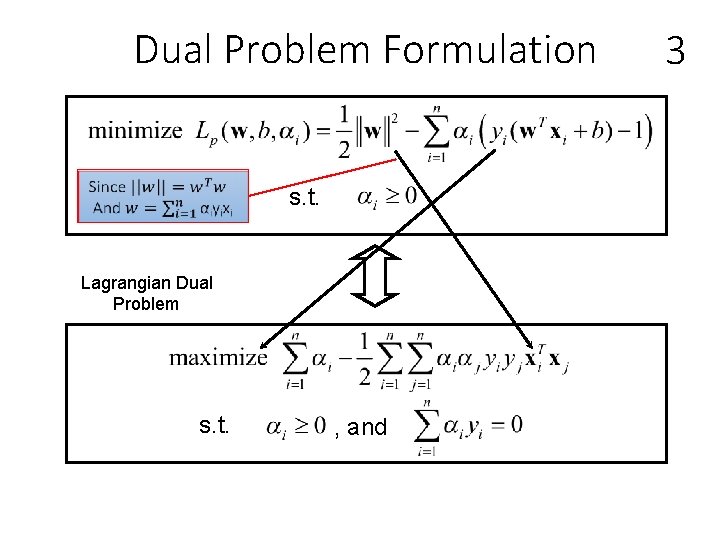

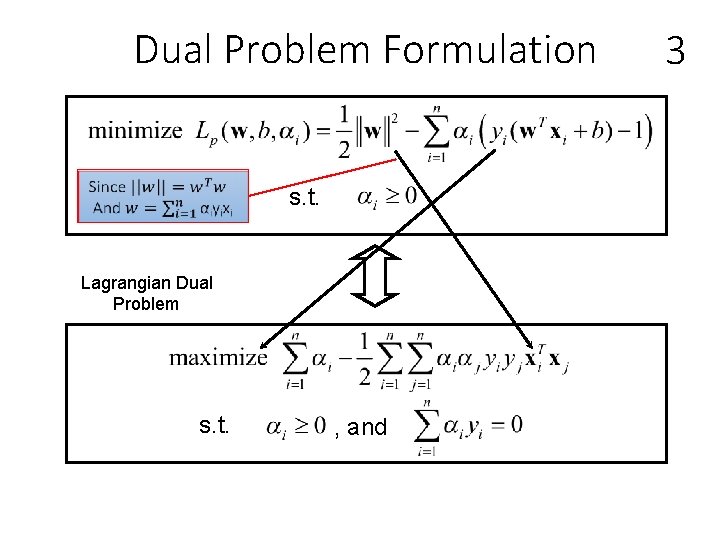

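Dual Problem Formulation

Lagrangian: minimize $L(w, b, \alpha) = \frac{1}{2} w^T w - \sum_i \alpha_i \left[ y_i (w^T x_i + b) - 1 \right]$ s.t. $\alpha_i \ge 0$

Lagrangian Dual Problem: maximize $\sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$ s.t. $\alpha_i \ge 0$, and $\sum_i \alpha_i y_i = 0$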

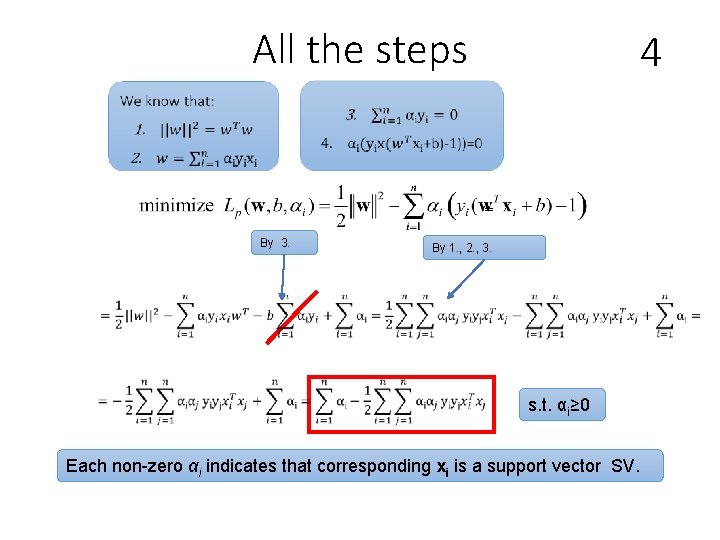

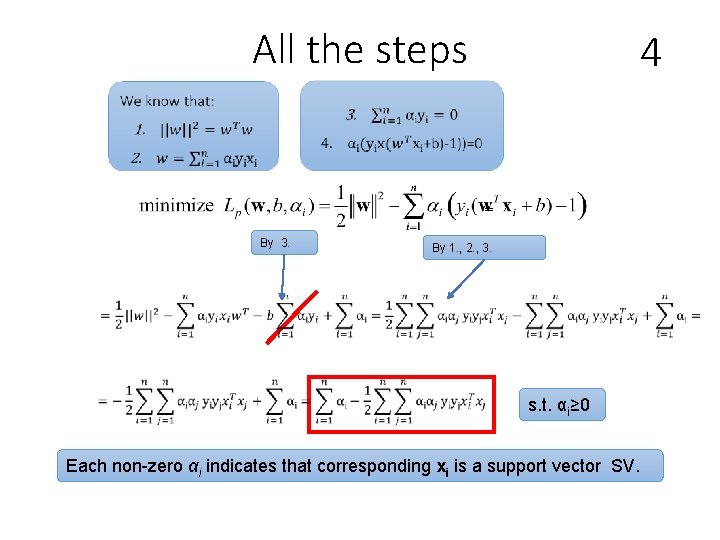

All the steps

(Figure: the full derivation, combining the results of steps 1, 2 and 3, subject to $\alpha_i \ge 0$.)

Each non-zero $\alpha_i$ indicates that the corresponding $x_i$ is a support vector (SV).

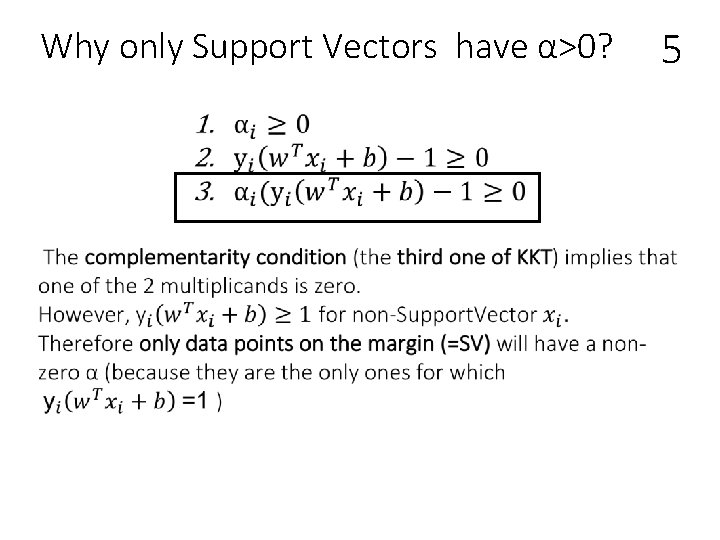

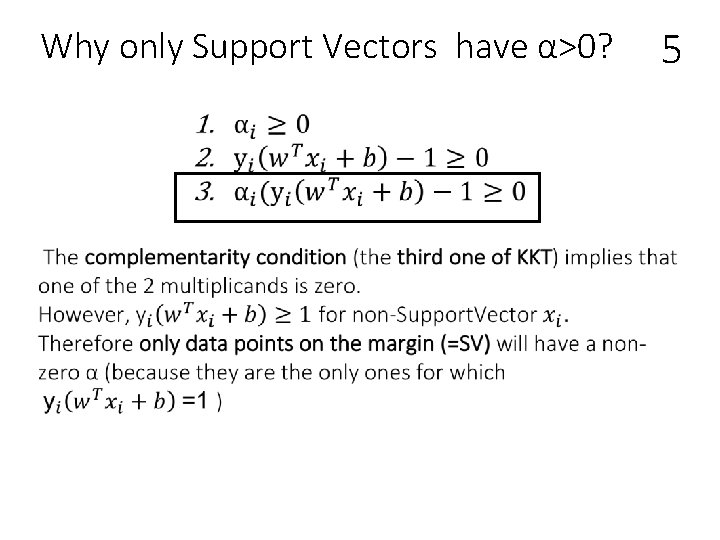
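
The passage from the Lagrangian to the dual objective can be written out as follows. This is a sketch that assumes the standard hard-margin Lagrangian $L(w, b, \alpha) = \frac{1}{2} w^T w - \sum_i \alpha_i [y_i (w^T x_i + b) - 1]$ with $\alpha_i \ge 0$; the slide's own equations are in the figures, so the exact intermediate steps there may be arranged differently.

```latex
\begin{aligned}
\frac{\partial L}{\partial w} = 0 &\;\Rightarrow\; w = \sum_i \alpha_i y_i x_i \\
\frac{\partial L}{\partial b} = 0 &\;\Rightarrow\; \sum_i \alpha_i y_i = 0 \\
L &= \tfrac{1}{2} w^T w - \sum_i \alpha_i y_i\, w^T x_i - b \sum_i \alpha_i y_i + \sum_i \alpha_i \\
  &= \tfrac{1}{2} w^T w - w^T w - 0 + \sum_i \alpha_i \\
  &= \sum_i \alpha_i - \tfrac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j\, x_i^T x_j
\end{aligned}
```

The second-to-last line uses $\sum_i \alpha_i y_i\, w^T x_i = w^T \sum_i \alpha_i y_i x_i = w^T w$, and the last line expands $w^T w$ with $w = \sum_i \alpha_i y_i x_i$.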

Why do only support vectors have α > 0?

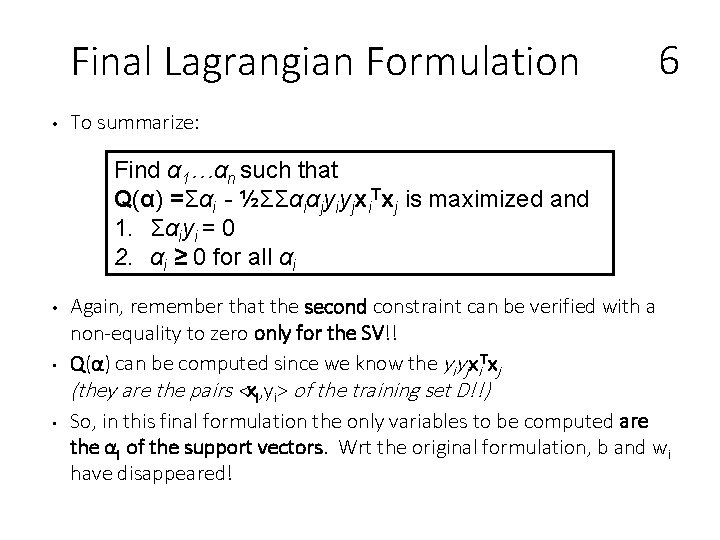

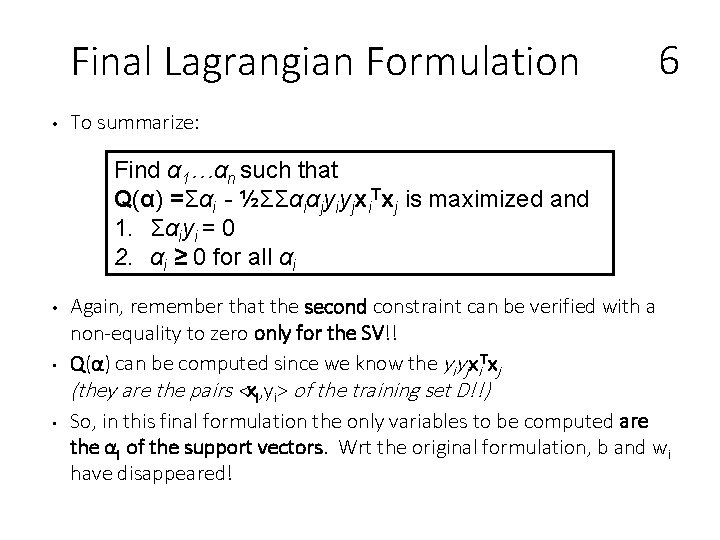
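
One way to see this is through the complementarity condition of the KKT conditions, applied to the hard-margin constraints $y_i (w^T x_i + b) - 1 \ge 0$. The following is a short sketch of that argument, not necessarily the presentation used in the figure.

```latex
\alpha_i \left[ y_i (w^T x_i + b) - 1 \right] = 0 \quad \text{for all } i
\qquad\Longrightarrow\qquad
\begin{cases}
y_i (w^T x_i + b) > 1 & \Rightarrow\; \alpha_i = 0 \\
\alpha_i > 0 & \Rightarrow\; y_i (w^T x_i + b) = 1
\end{cases}
```

A point strictly outside the margin makes the bracket positive, so its multiplier must vanish; a positive multiplier is only possible for points lying exactly on a margin boundary, i.e. the support vectors.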

Final Lagrangian Formulation

To summarize: find $\alpha_1, \dots, \alpha_n$ such that $Q(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$ is maximized and
1. $\sum_i \alpha_i y_i = 0$
2. $\alpha_i \ge 0$ for all $\alpha_i$

• Again, remember that the second constraint can hold as a strict inequality ($\alpha_i > 0$) only for the SVs!
• $Q(\alpha)$ can be computed since we know the $y_i y_j x_i^T x_j$ (they come from the pairs $\langle x_i, y_i \rangle$ of the training set D).
• So, in this final formulation the only variables to be computed are the $\alpha_i$ of the support vectors. With respect to the original formulation, b and the $w_i$ have disappeared!

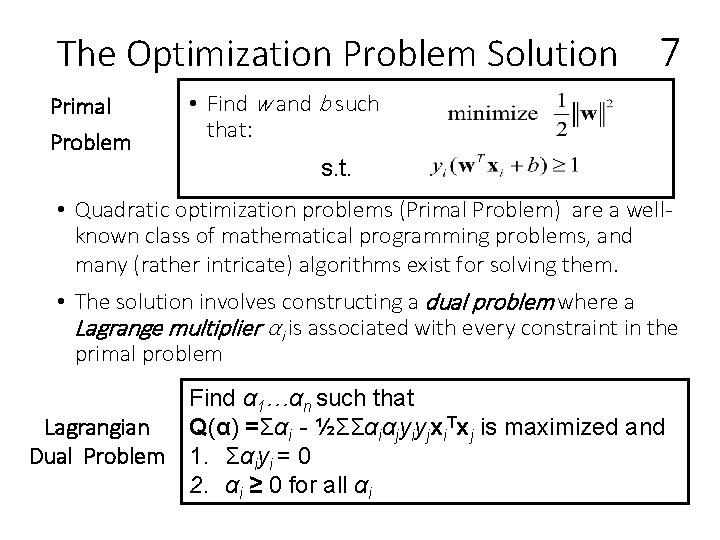

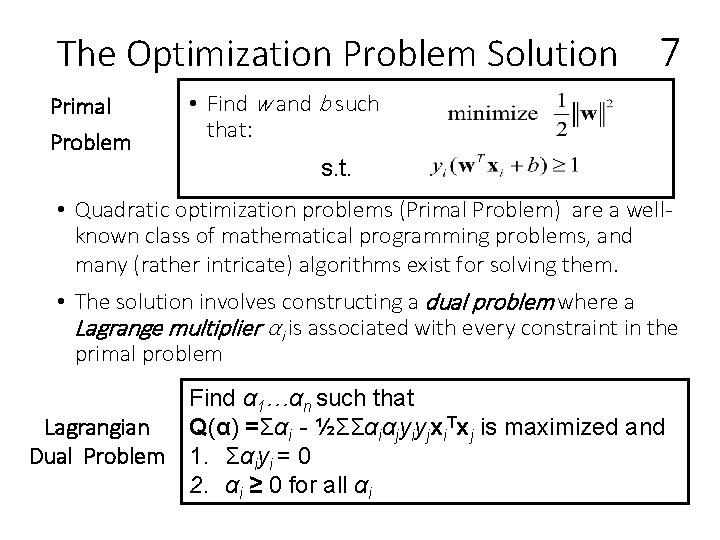

The Optimization Problem Solution

• Primal Problem: find w and b such that $\Phi(w) = \frac{1}{2} w^T w$ is minimized s.t. $y_i (w^T x_i + b) \ge 1$ for all $\{(x_i, y_i)\}$
• Quadratic optimization problems (such as the primal problem) are a well-known class of mathematical programming problems, and many (rather intricate) algorithms exist for solving them.
• The solution involves constructing a dual problem where a Lagrange multiplier $\alpha_i$ is associated with every constraint in the primal problem.
• Dual Problem: find $\alpha_1, \dots, \alpha_n$ such that the Lagrangian $Q(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$ is maximized and
  1. $\sum_i \alpha_i y_i = 0$
  2. $\alpha_i \ge 0$ for all $\alpha_i$

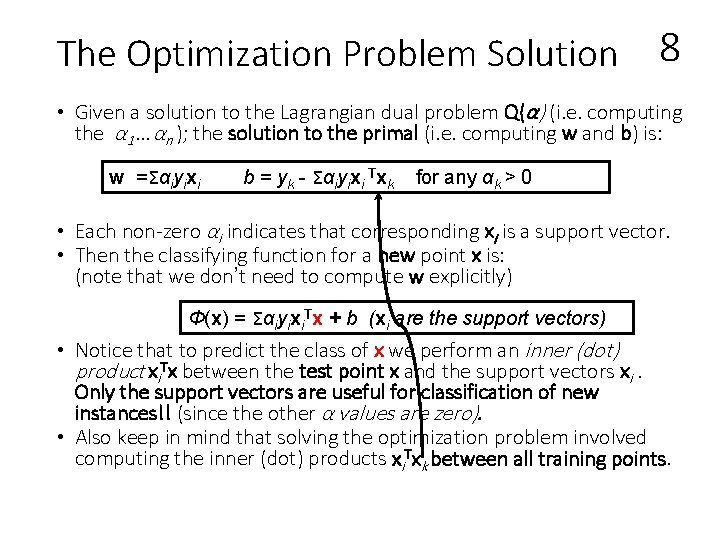

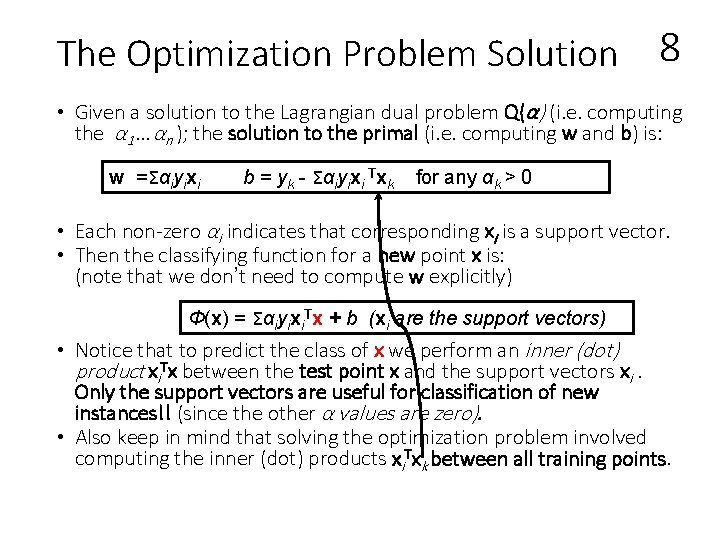

The Optimization Problem Solution

• Given a solution to the Lagrangian dual problem $Q(\alpha)$ (i.e. computing the $\alpha_1, \dots, \alpha_n$), the solution to the primal (i.e. computing w and b) is: $w = \sum_i \alpha_i y_i x_i$ and $b = y_k - \sum_i \alpha_i y_i x_i^T x_k$ for any $\alpha_k > 0$
• Each non-zero $\alpha_i$ indicates that the corresponding $x_i$ is a support vector.
• Then the classifying function for a new point x is (note that we don't need to compute w explicitly): $\Phi(x) = \sum_i \alpha_i y_i x_i^T x + b$ (the $x_i$ are the support vectors)
• Notice that to predict the class of x we perform an inner (dot) product $x_i^T x$ between the test point x and the support vectors $x_i$. Only the support vectors are useful for classifying new instances (since the other α values are zero).
• Also keep in mind that solving the optimization problem involved computing the inner (dot) products $x_i^T x_k$ between all training points.

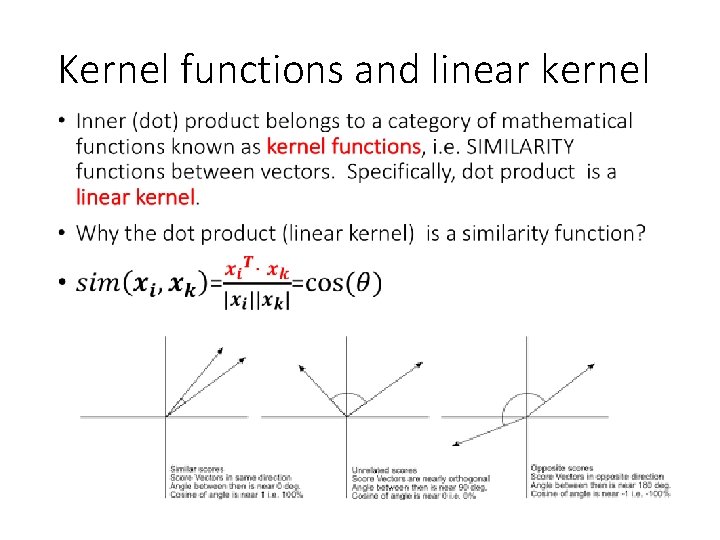
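
The recovery of w and b from the multipliers, and the classifying function built from the support vectors, can be sketched as below. The three points, their labels and the α values are illustrative assumptions made for this sketch (they are not read from the figures), and the function names are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def recover_primal(alphas, ys, xs):
    """Compute w = sum_i alpha_i y_i x_i and b = y_k - sum_i alpha_i y_i x_i.x_k."""
    dim = len(xs[0])
    w = [sum(a * y * x[d] for a, y, x in zip(alphas, ys, xs)) for d in range(dim)]
    # Any index k with alpha_k > 0 (a support vector) can be used for b.
    k = next(i for i, a in enumerate(alphas) if a > 0)
    b = ys[k] - sum(a * y * dot(x, xs[k]) for a, y, x in zip(alphas, ys, xs))
    return w, b


def decision(x, alphas, ys, xs, b):
    """Evaluate sum_i alpha_i y_i x_i.x + b using only points with alpha_i > 0."""
    return sum(a * y * dot(xi, x) for a, y, xi in zip(alphas, ys, xs) if a > 0) + b


# Illustrative data (assumed): one negative and two positive support vectors.
xs = [[1.0, 0.0], [3.0, 1.0], [3.0, -1.0]]
ys = [-1, 1, 1]
alphas = [0.5, 0.25, 0.25]

w, b = recover_primal(alphas, ys, xs)
print(w, b)                                      # [1.0, 0.0] -2.0
print(decision([4.0, 0.0], alphas, ys, xs, b))   # 2.0
```

The last line gives the same value as w.x + b computed from the explicit w, which is the point of the slide: the dot products with the support vectors are enough.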

Kernel functions and linear kernel

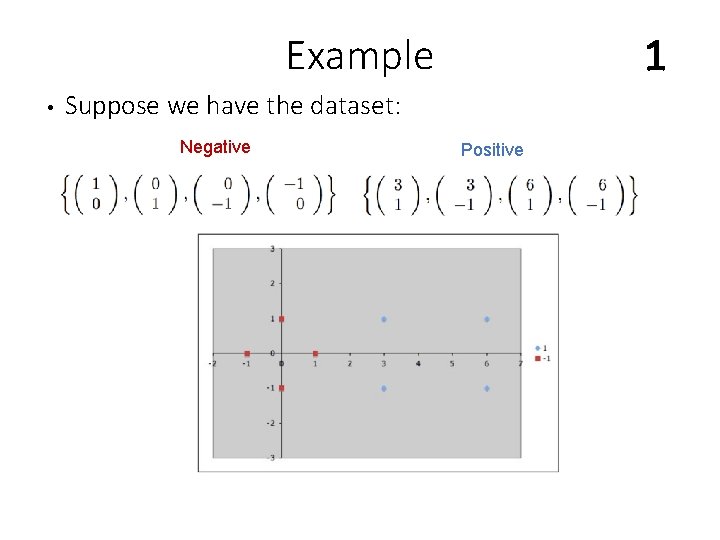

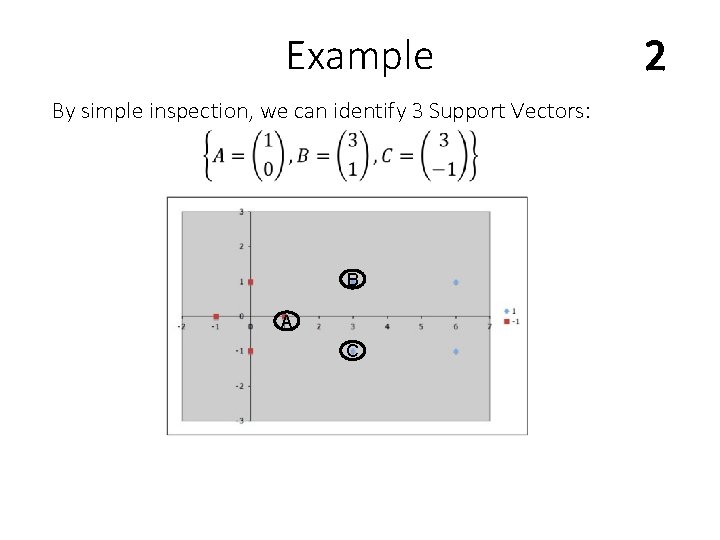

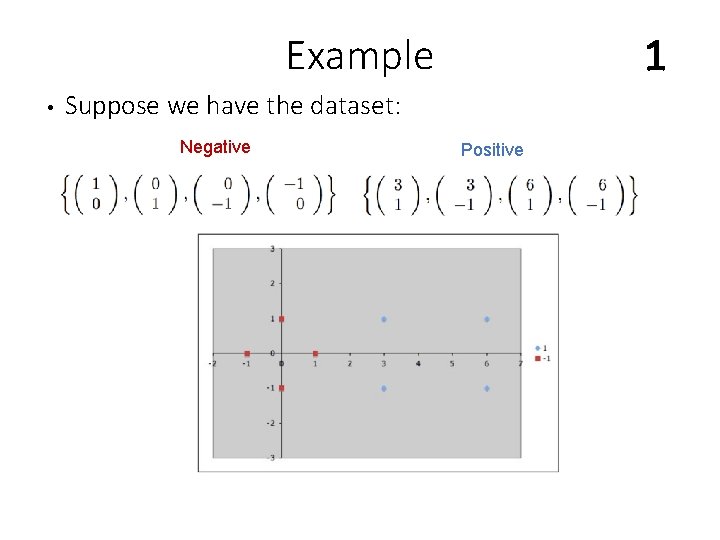

Example

Suppose we have the dataset shown in the figure, with a negative class and a positive class.

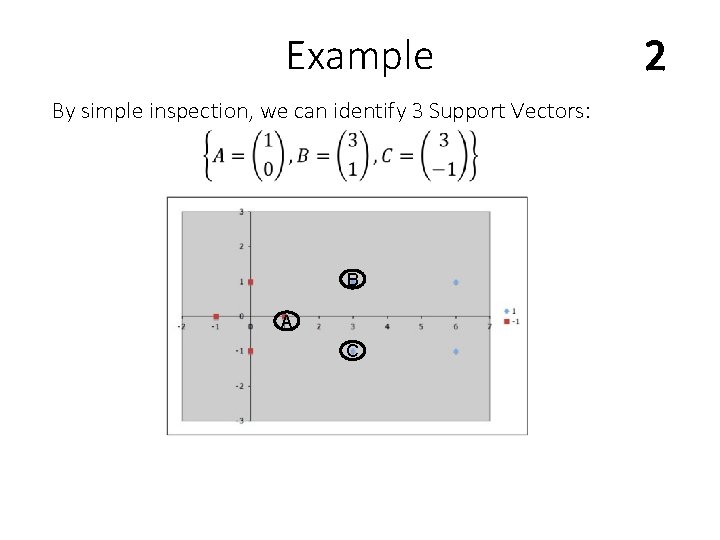

Example

By simple inspection, we can identify 3 support vectors: A, B and C.

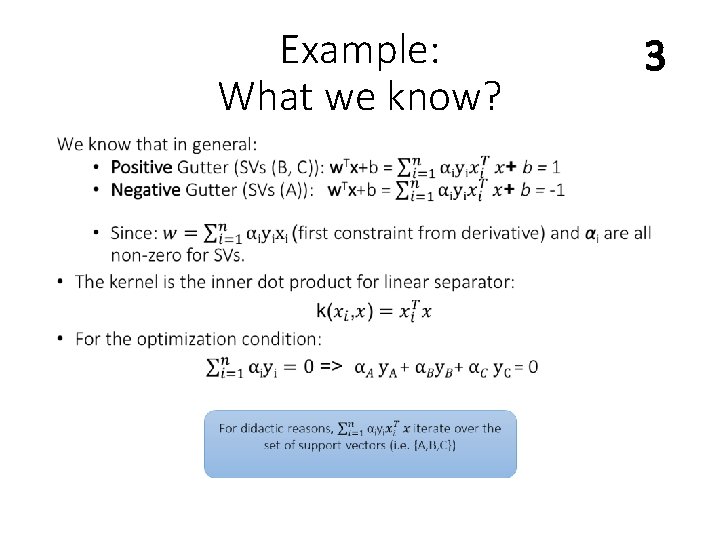

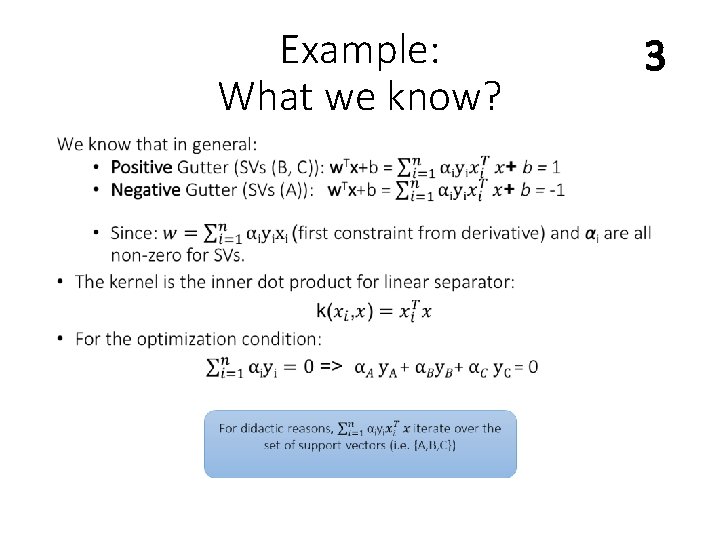

Example: What do we know?

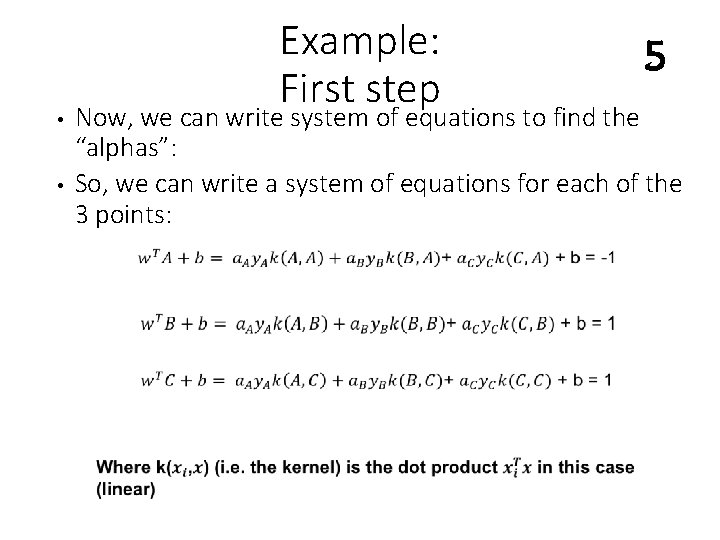

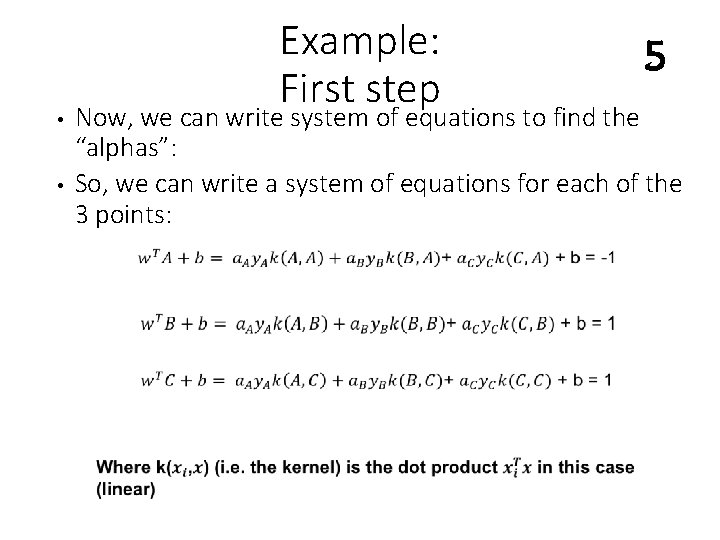

Example: First step

Now we can write a system of equations to find the "alphas", with one equation for each of the 3 points:

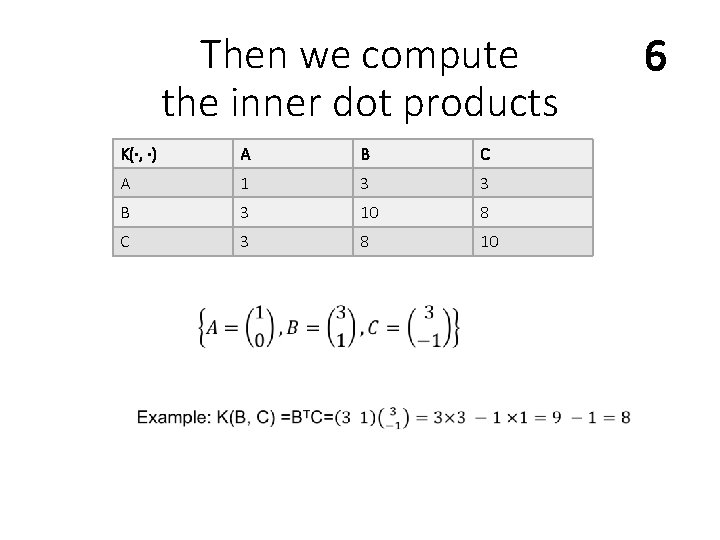

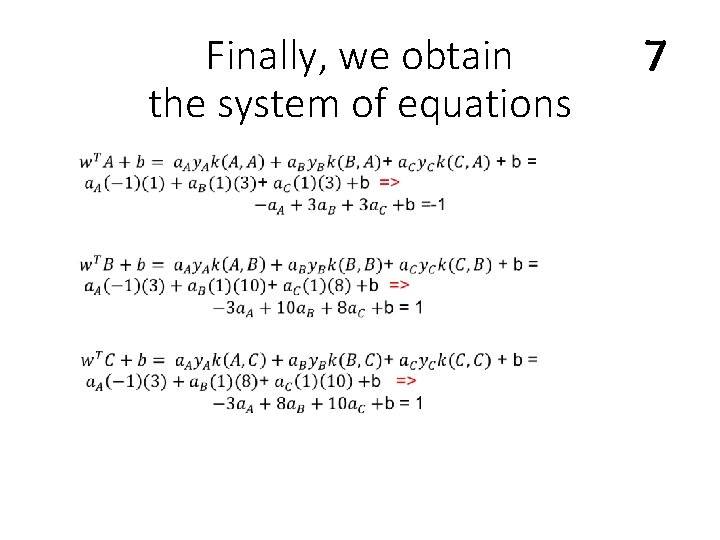

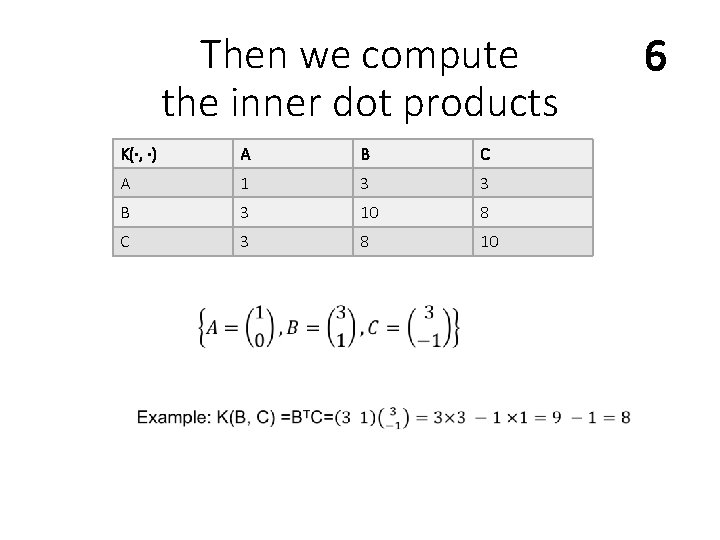

Then we compute the inner (dot) products K(·, ·):

|   | A | B | C |
|---|---|---|---|
| A | 1 | 3 | 3 |
| B | 3 | 10 | 8 |
| C | 3 | 8 | 10 |

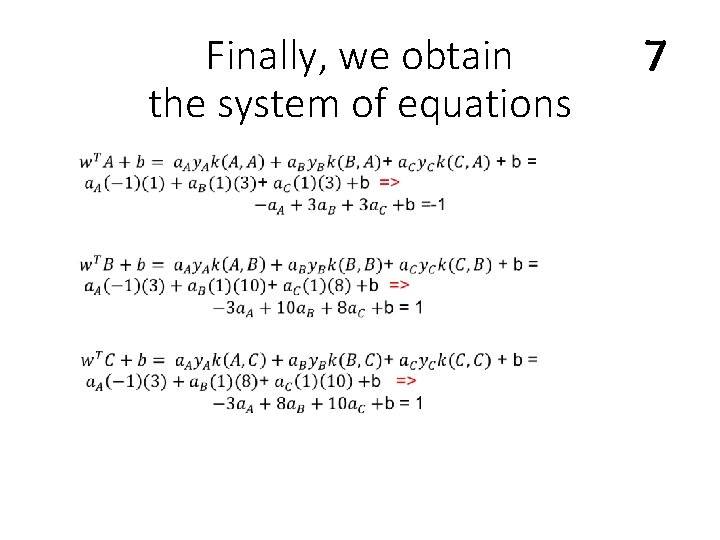

Finally, we obtain the system of equations:

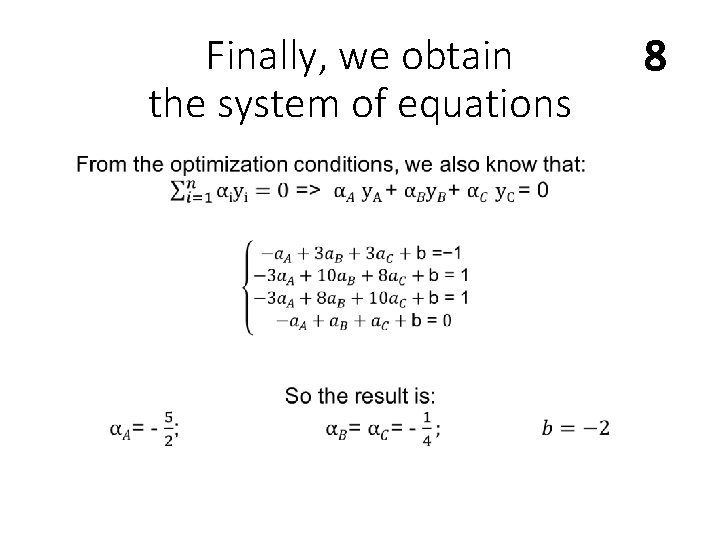

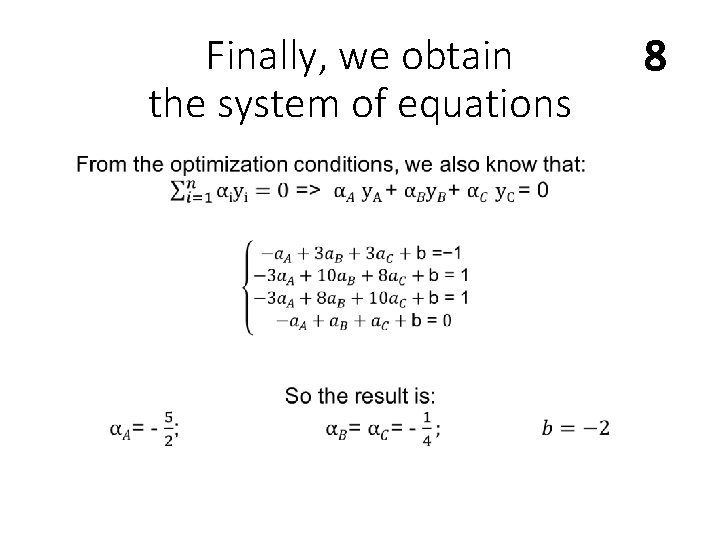


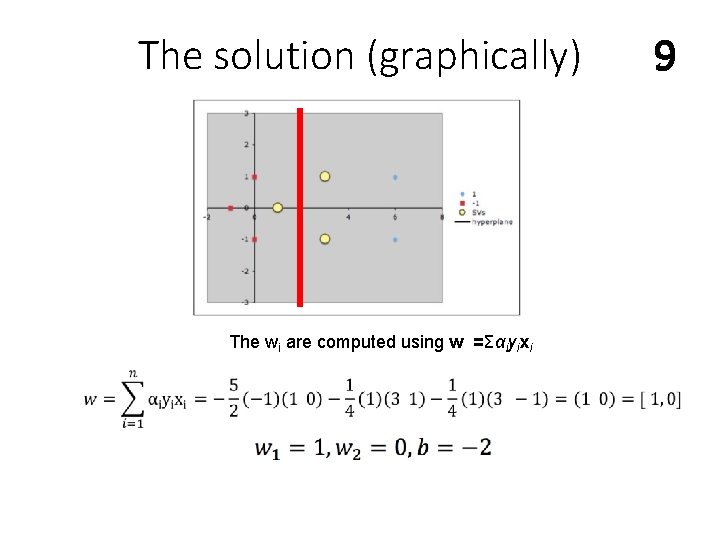

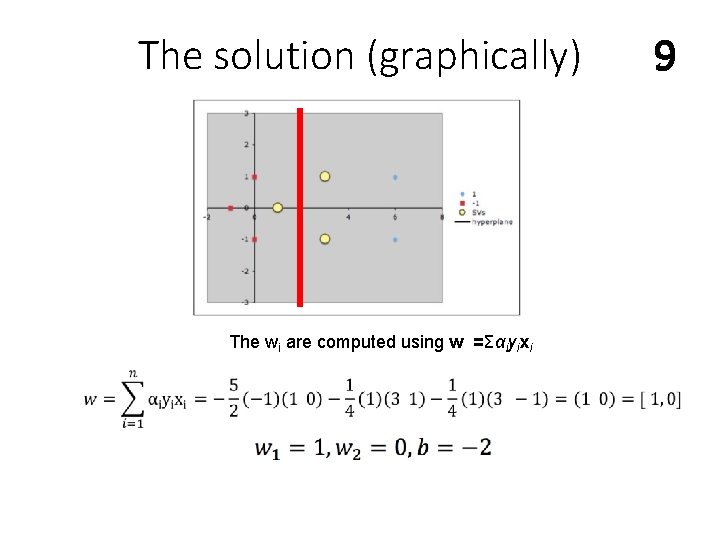
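
A system of this kind can be solved mechanically. The sketch below uses the dot products K(·, ·) listed for A, B and C, and makes two assumptions that are not stated in the text: that A belongs to the negative class while B and C belong to the positive class, and that the equations have the form $\sum_j \alpha_j y_j K(x_j, x_i) + b = y_i$ for each support vector together with $\sum_j \alpha_j y_j = 0$. The solver `solve_linear_system` is a small hypothetical helper written for this illustration.

```python
from fractions import Fraction


def solve_linear_system(A, rhs):
    """Solve A z = rhs by Gauss-Jordan elimination with exact rationals."""
    n = len(A)
    M = [[Fraction(v) for v in row] + [Fraction(r)] for row, r in zip(A, rhs)]
    for col in range(n):
        # Find a row with a non-zero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[pivot] = M[pivot], M[col]
        M[col] = [v / M[col][col] for v in M[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [vr - factor * vc for vr, vc in zip(M[r], M[col])]
    return [row[n] for row in M]


# Dot products between the support vectors A, B, C (from the table in the slides).
K = [[1, 3, 3],
     [3, 10, 8],
     [3, 8, 10]]
y = [-1, 1, 1]  # assumed labels: A negative, B and C positive

# Unknowns: alpha_A, alpha_B, alpha_C, b.
A = [[y[j] * K[i][j] for j in range(3)] + [1] for i in range(3)]
A.append(y + [0])        # sum_j alpha_j y_j = 0
rhs = y + [0]

alpha_A, alpha_B, alpha_C, b = solve_linear_system(A, rhs)
print(alpha_A, alpha_B, alpha_C, b)  # 1/2 1/4 1/4 -2
```

Under these assumptions all three multipliers are positive, which is consistent with A, B and C all being support vectors.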

The solution (graphically)

The $w_i$ are computed using $w = \sum_i \alpha_i y_i x_i$.

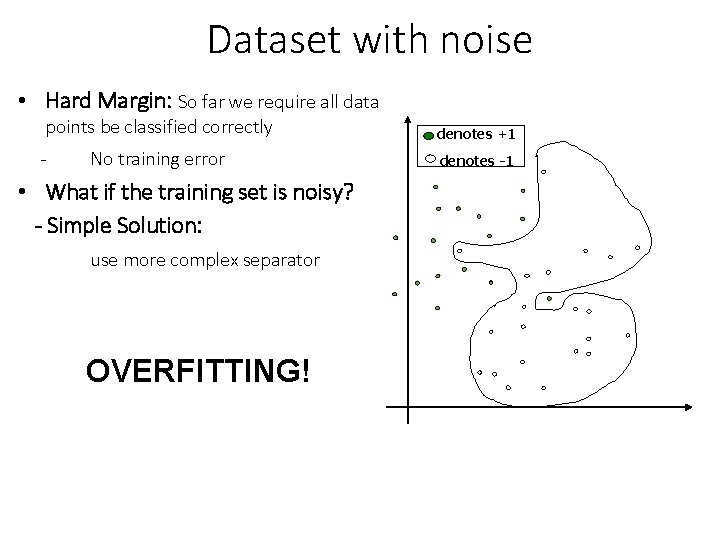

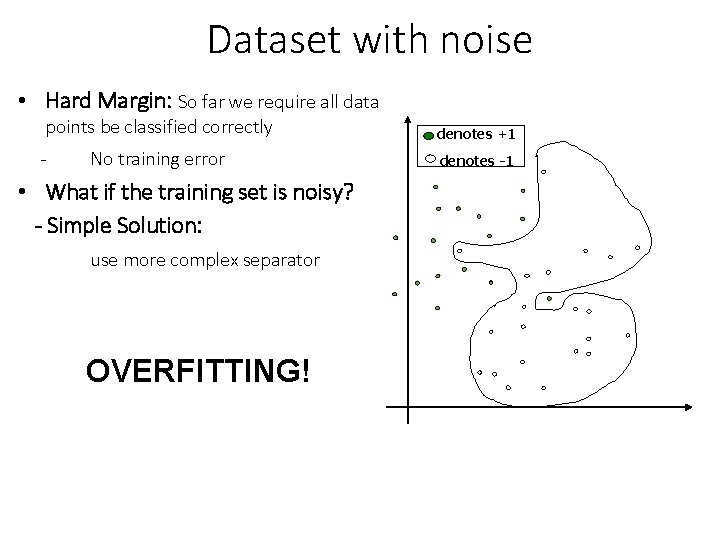

Dataset with noise

• Hard Margin: so far we have required that all data points be classified correctly (no training error).
• What if the training set is noisy? A simple solution is to use a more complex separator, but this leads to OVERFITTING!

(Figure: markers denote classes +1 and −1.)

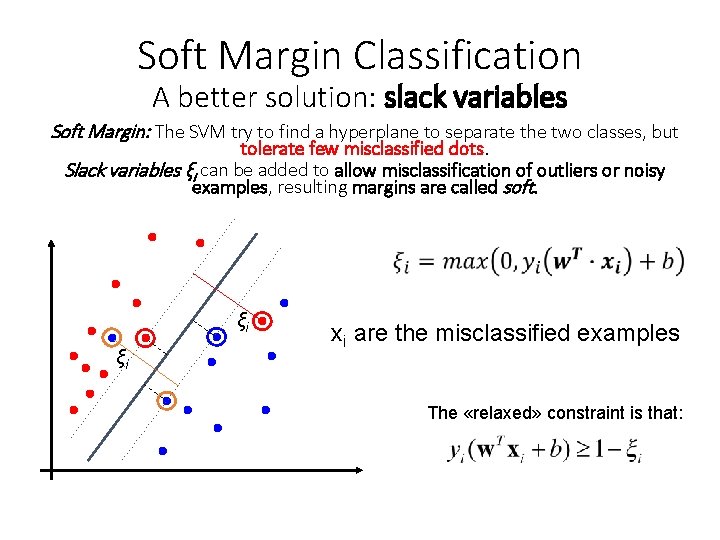

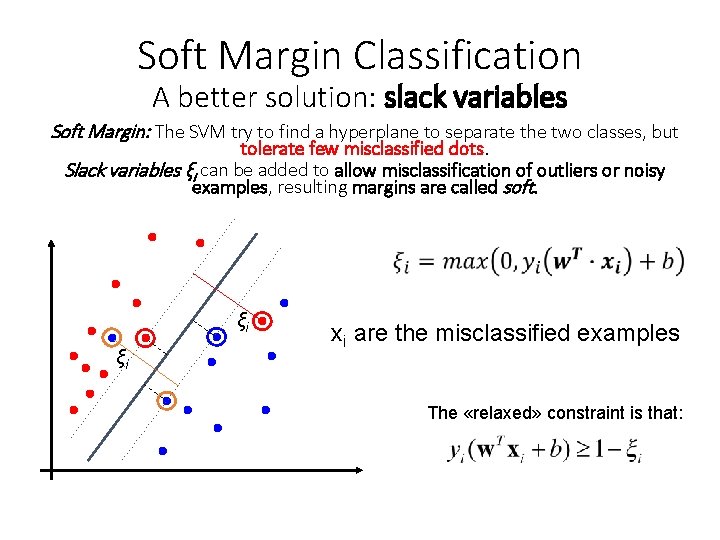

Soft Margin Classification

A better solution: slack variables.

Soft Margin: the SVM tries to find a hyperplane that separates the two classes but tolerates a few misclassified points. Slack variables $\xi_i$ can be added to allow misclassification of outliers or noisy examples; the resulting margins are called soft.

The «relaxed» constraint is that: $y_i (w^T x_i + b) \ge 1 - \xi_i$, with $\xi_i \ge 0$

(Figure: the examples $x_i$ marked with $\xi_i$ are the misclassified examples.)

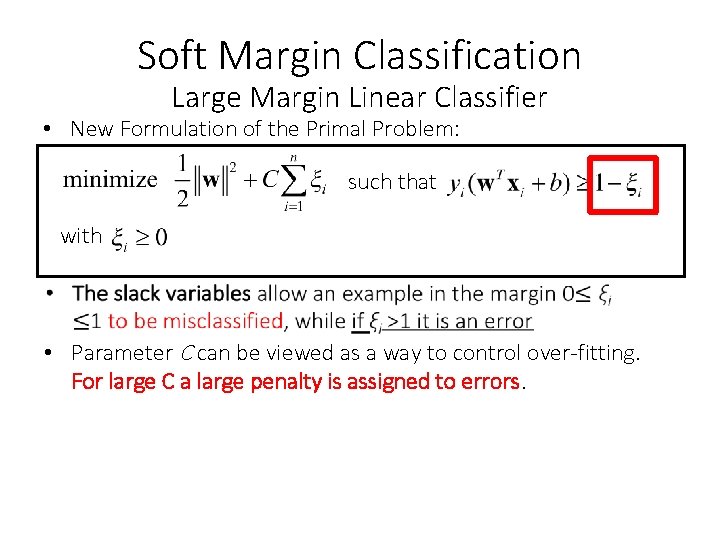

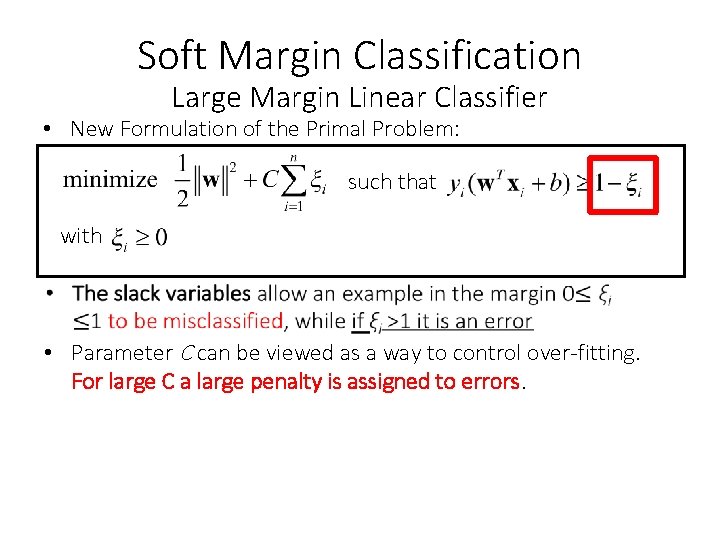

Soft Margin Classification: Large Margin Linear Classifier

• New formulation of the primal problem: minimize $\frac{1}{2} w^T w + C \sum_i \xi_i$ such that $y_i (w^T x_i + b) \ge 1 - \xi_i$ with $\xi_i \ge 0$
• Parameter C can be viewed as a way to control over-fitting. For large C, a large penalty is assigned to errors.

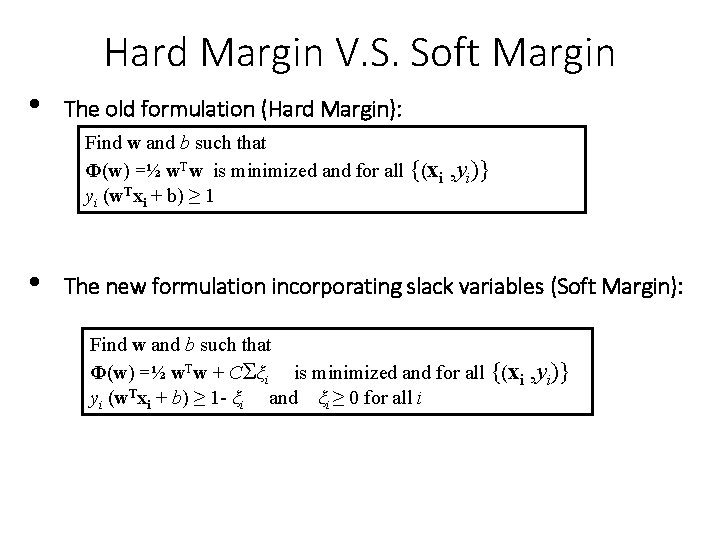

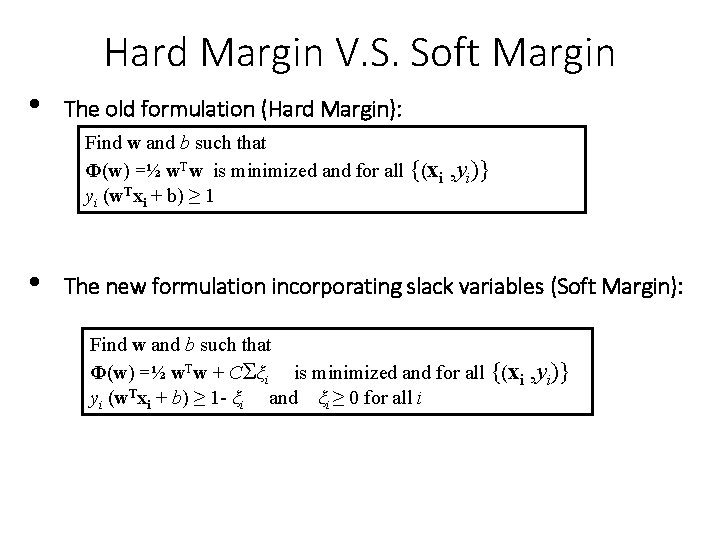

Hard Margin vs. Soft Margin

• The old formulation (Hard Margin): find w and b such that $\Phi(w) = \frac{1}{2} w^T w$ is minimized and, for all $\{(x_i, y_i)\}$, $y_i (w^T x_i + b) \ge 1$
• The new formulation incorporating slack variables (Soft Margin): find w and b such that $\Phi(w) = \frac{1}{2} w^T w + C \sum_i \xi_i$ is minimized and, for all $\{(x_i, y_i)\}$, $y_i (w^T x_i + b) \ge 1 - \xi_i$ and $\xi_i \ge 0$ for all i

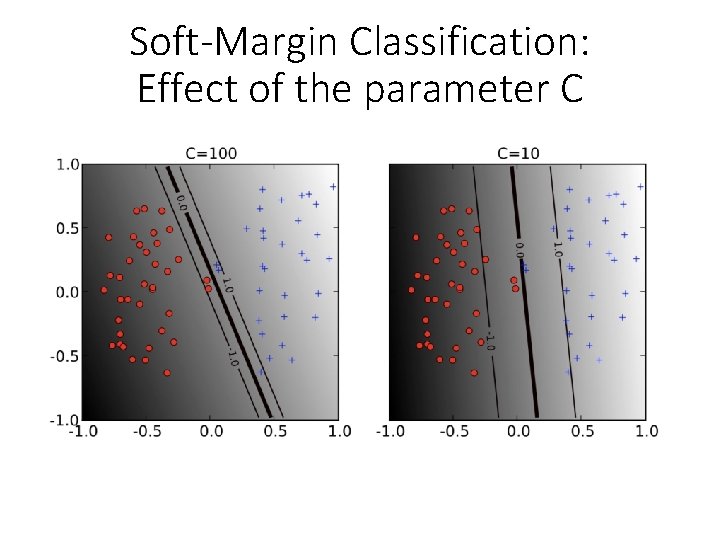

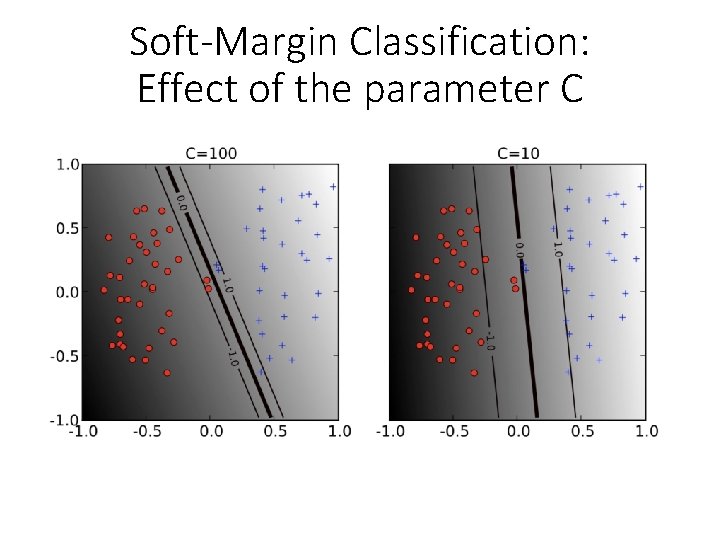
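
To make the role of the slack variables and of C concrete, the sketch below evaluates the soft-margin objective for a fixed, hand-picked separator. For given w and b, the smallest slack that satisfies the relaxed constraint is $\xi_i = \max(0, 1 - y_i (w^T x_i + b))$. The data, the separator and the function name are illustrative assumptions; no optimization is performed here.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def soft_margin_objective(w, b, xs, ys, C):
    """Return (0.5 * w.w + C * sum(xi), slacks) for a fixed separator (w, b)."""
    # Smallest slack satisfying y_i (w.x_i + b) >= 1 - xi_i and xi_i >= 0.
    slacks = [max(0.0, 1.0 - y * (dot(w, x) + b)) for x, y in zip(xs, ys)]
    return 0.5 * dot(w, w) + C * sum(slacks), slacks


# Illustrative data (assumed): the last point is a noisy negative example
# that falls on the positive side of the separator x1 = 2.
xs = [[1.0, 0.0], [3.0, 1.0], [3.0, -1.0], [2.5, 0.0]]
ys = [-1, 1, 1, -1]
w, b = [1.0, 0.0], -2.0

small_c_value, slacks = soft_margin_objective(w, b, xs, ys, C=1.0)
large_c_value, _ = soft_margin_objective(w, b, xs, ys, C=10.0)
print(slacks)          # [0.0, 0.0, 0.0, 1.5]
print(small_c_value)   # 2.0
print(large_c_value)   # 15.5
```

With a larger C the same misclassified point costs much more, so the optimizer is pushed towards separators that fit the training data more tightly.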

Soft-Margin Classification: Effect of the parameter C

Summary: Linear SVM

• The classifier is a separating hyperplane.
• The most "important" training points are the support vectors; they define the hyperplane.
• Quadratic optimization algorithms can identify which training points $x_i$ are support vectors, i.e. those with non-zero Lagrangian multipliers $\alpha_i$.
• Both in the dual formulation of the problem and in the solution, the training points appear only inside dot products.
• Slack variables make it possible to tolerate data that are only quasi-linearly separable.

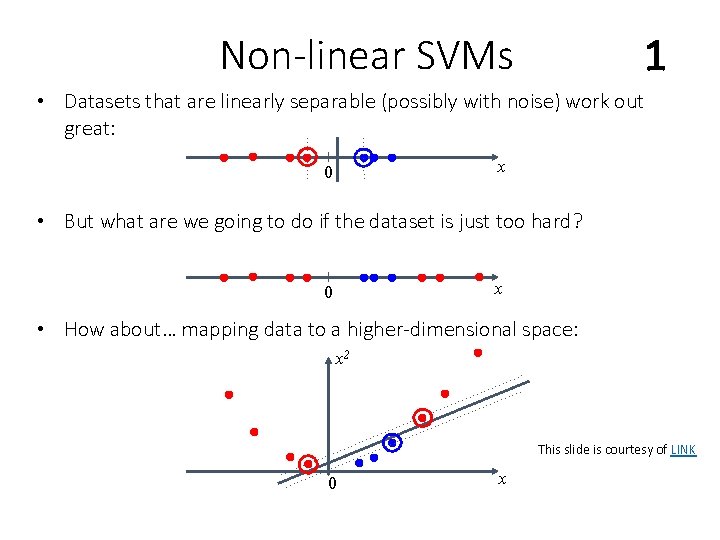

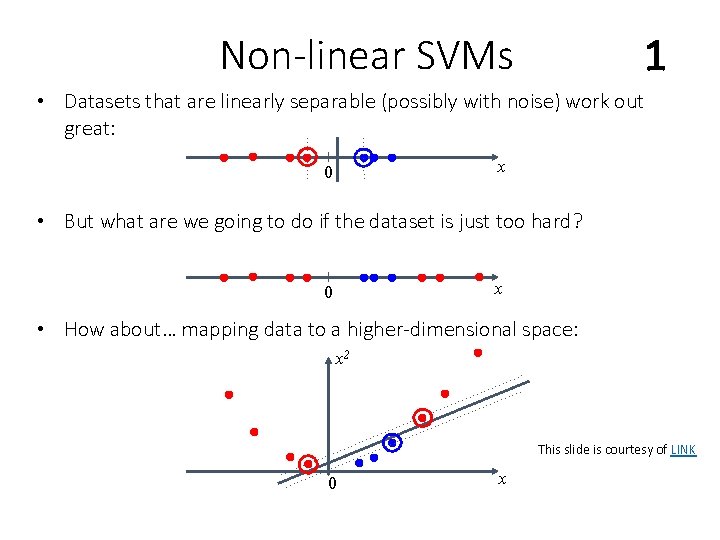

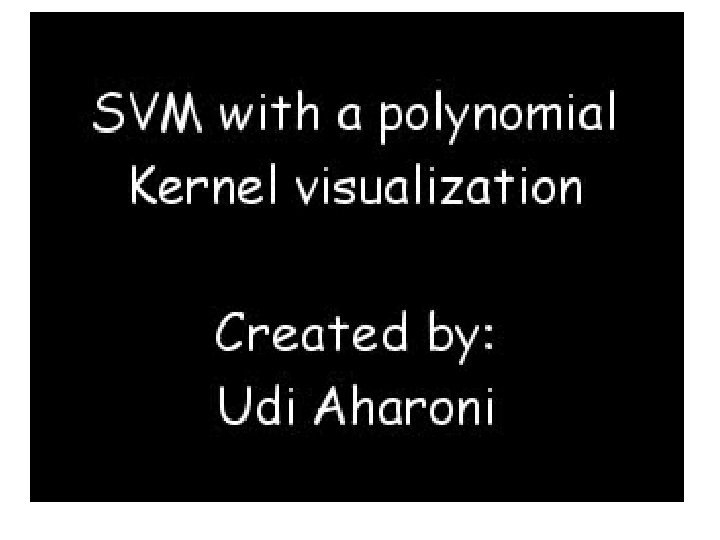

Non-linear SVMs

• Datasets that are linearly separable (possibly with noise) work out great.
• But what are we going to do if the dataset is just too hard?
• How about mapping the data to a higher-dimensional space?

(Figures: points on a one-dimensional x axis around 0; in the last panel the same points are plotted with $x^2$ on the vertical axis.)

This slide is courtesy of LINK

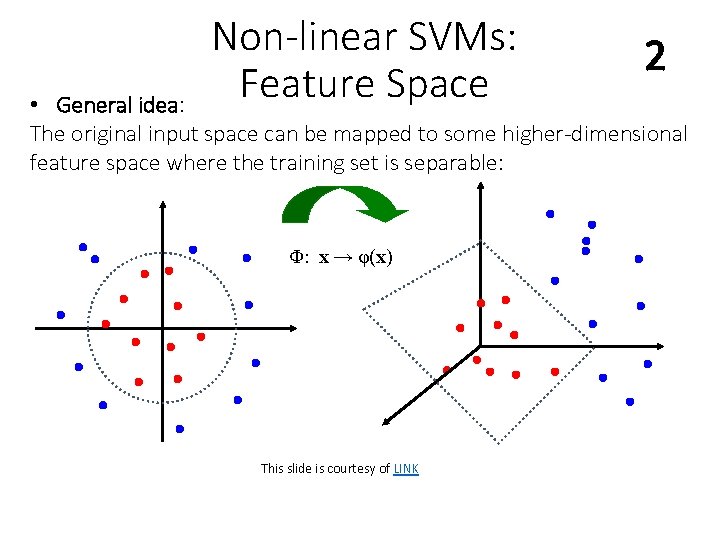

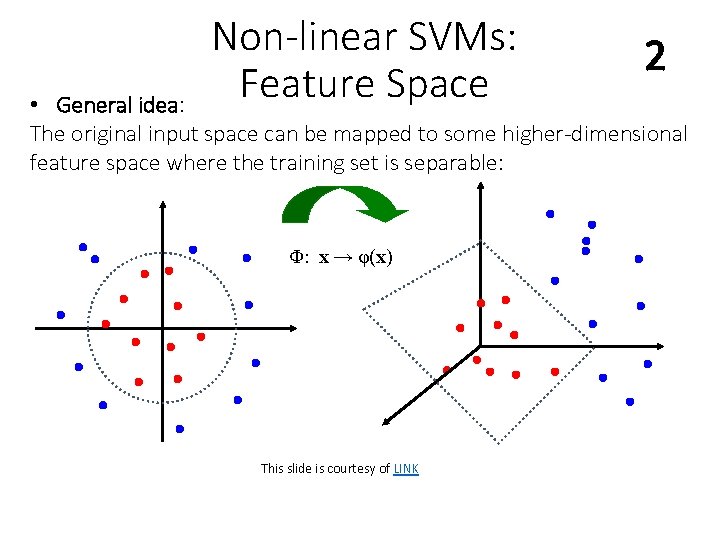
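
The idea of mapping to a higher-dimensional space can be checked on a toy example. The sketch below uses made-up one-dimensional data (an assumption, not the data in the figures), shows that no single threshold on x separates the classes, and then shows that after the mapping x → (x, x²) a linear separator does. The function names are hypothetical.

```python
def phi(x):
    """Map a 1-D point to the 2-D feature space (x, x^2)."""
    return (x, x * x)


def threshold_separable(xs, ys):
    """Check whether some 1-D linear classifier sign(s * (x - t)) fits all labels."""
    pts = sorted(xs)
    cuts = [pts[0] - 1] + [(a + b) / 2 for a, b in zip(pts, pts[1:])] + [pts[-1] + 1]
    for t in cuts:
        for s in (1, -1):
            if all((1 if s * (x - t) > 0 else -1) == y for x, y in zip(xs, ys)):
                return True
    return False


# Toy data (assumed): the positive class lies on both sides of the negative class.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [1, 1, -1, -1, -1, 1, 1]

print(threshold_separable(xs, ys))  # False

# In feature space the horizontal line x^2 = 2.5 separates the classes.
w, b = (0.0, 1.0), -2.5
predictions = [1 if w[0] * f[0] + w[1] * f[1] + b > 0 else -1 for f in map(phi, xs)]
print(predictions == ys)  # True
```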

Non-linear SVMs: Feature Space

• General idea: the original input space can be mapped to some higher-dimensional feature space where the training set is separable: Φ: x → φ(x)

This slide is courtesy of LINK

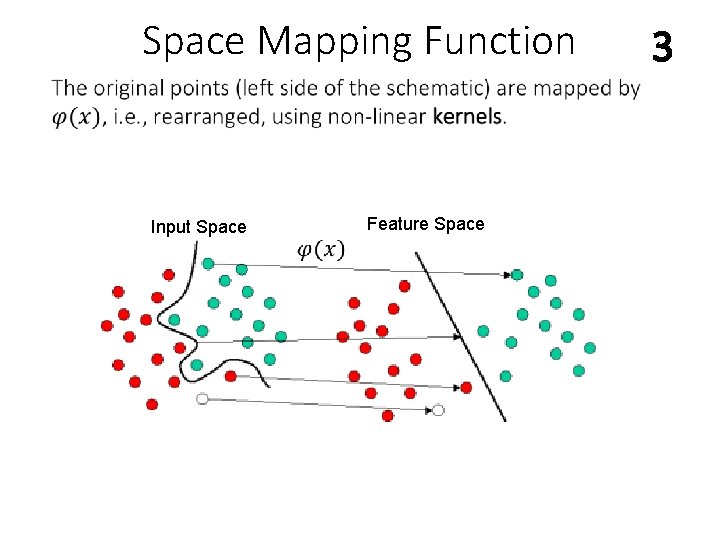

Space Mapping Function

(Figure: the mapping function takes points from the input space to the feature space.)

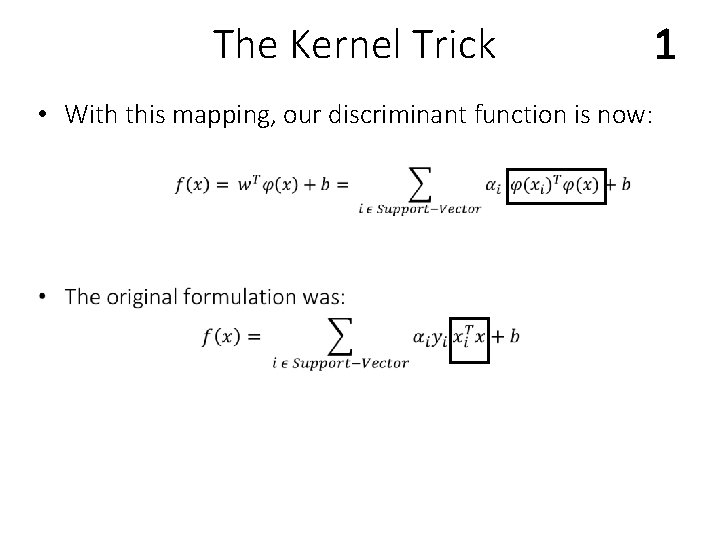

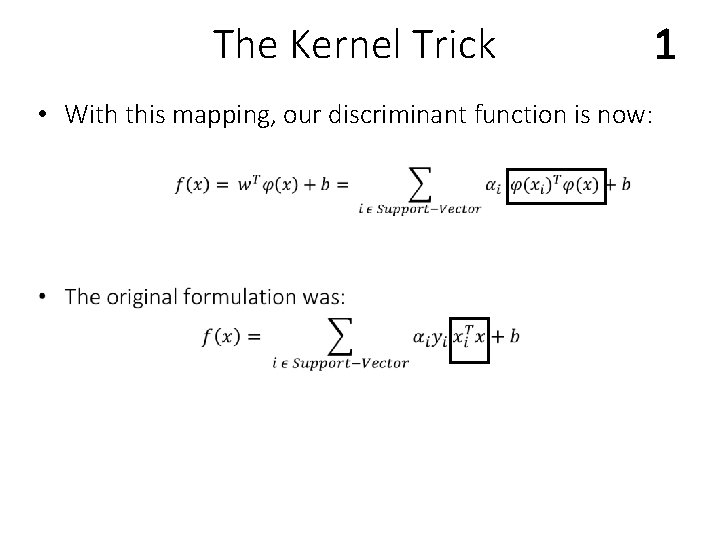

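The Kernel Trick

• With this mapping, our discriminant function is obtained by replacing every point x with its image φ(x): $\sum_{i \in SV} \alpha_i y_i \varphi(x_i)^T \varphi(x) + b$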

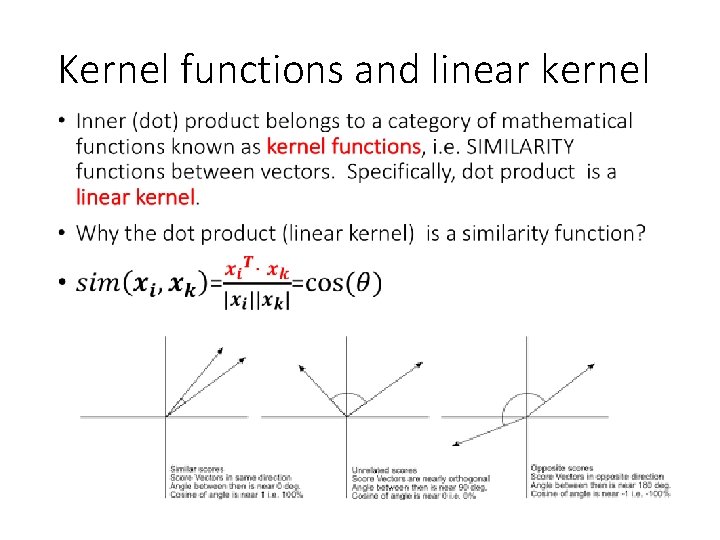

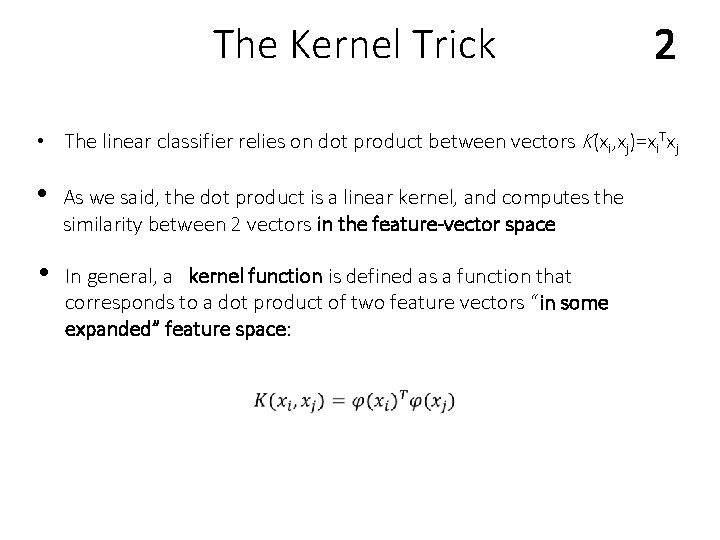

The Kernel Trick

• The linear classifier relies on the dot product between vectors: $K(x_i, x_j) = x_i^T x_j$
• As we said, the dot product is a linear kernel, and computes the similarity between 2 vectors in the feature-vector space.
• In general, a kernel function is defined as a function that corresponds to a dot product of two feature vectors in some expanded feature space: $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$

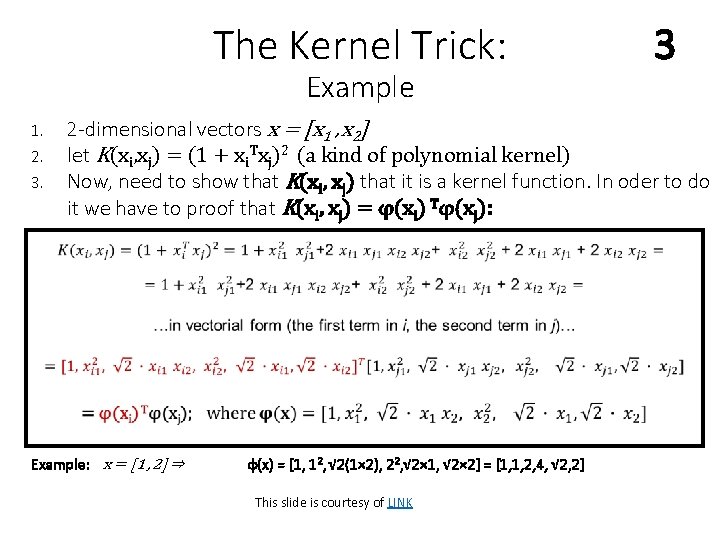

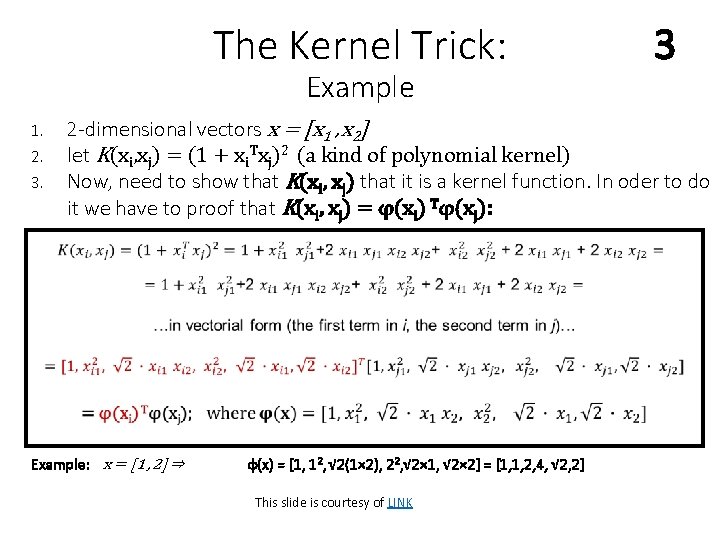

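The Kernel Trick: Example

1. Take 2-dimensional vectors $x = [x_1, x_2]$.
2. Let $K(x_i, x_j) = (1 + x_i^T x_j)^2$ (a kind of polynomial kernel).
3. We now need to show that $K(x_i, x_j)$ is a kernel function. In order to do so, we have to prove that $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$ for some mapping φ, here $\varphi(x) = [1, x_1^2, \sqrt{2} x_1 x_2, x_2^2, \sqrt{2} x_1, \sqrt{2} x_2]$.

Example: $x = [1, 2] \Rightarrow \varphi(x) = [1, 1^2, \sqrt{2}(1 \times 2), 2^2, \sqrt{2} \times 1, \sqrt{2} \times 2] = [1, 1, 2\sqrt{2}, 4, \sqrt{2}, 2\sqrt{2}]$

This slide is courtesy of LINK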

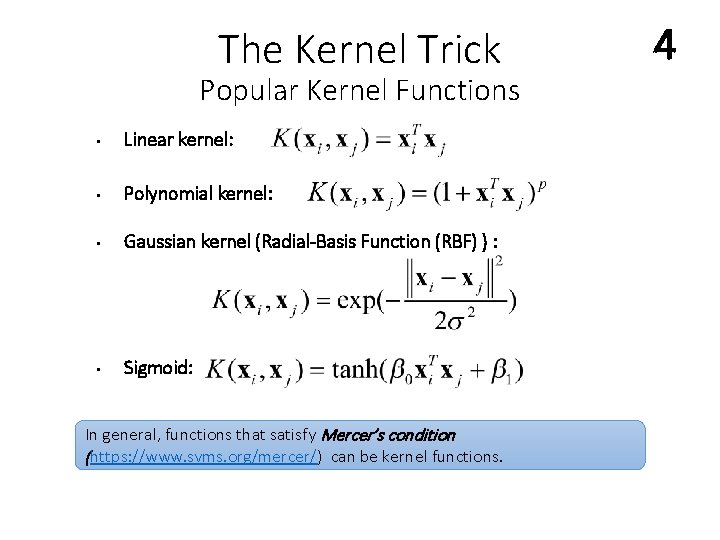

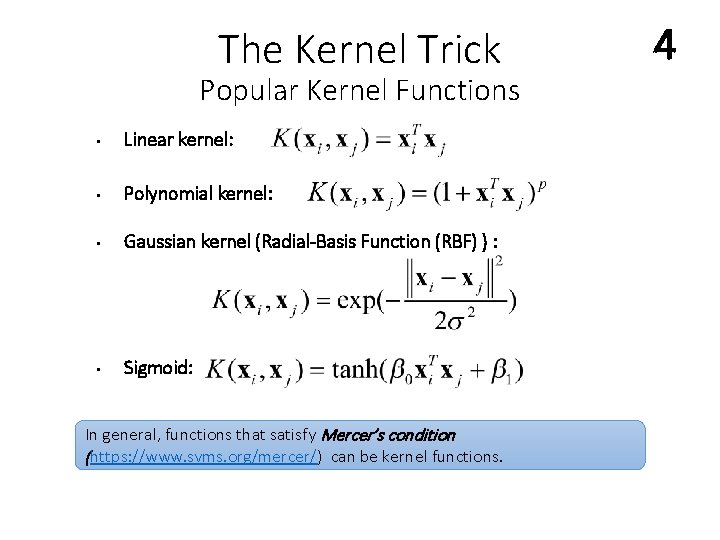
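
The identity $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$ for this polynomial kernel can also be checked numerically. The sketch below uses the mapping suggested by the worked example, φ(x) = [1, x1², √2·x1·x2, x2², √2·x1, √2·x2]; the second test vector is an arbitrary choice made for this illustration.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def poly_kernel(u, v):
    """K(u, v) = (1 + u.v)^2, computed in the original 2-D space."""
    return (1.0 + dot(u, v)) ** 2


def phi(x):
    """Explicit 6-D feature map whose dot product reproduces poly_kernel."""
    x1, x2 = x
    r2 = math.sqrt(2.0)
    return [1.0, x1 * x1, r2 * x1 * x2, x2 * x2, r2 * x1, r2 * x2]


u = [1.0, 2.0]    # the vector used in the slide's example
v = [3.0, -1.0]   # arbitrary second vector (assumed for illustration)

kernel_value = poly_kernel(u, v)          # (1 + 3 - 2)^2 = 4
feature_value = dot(phi(u), phi(v))       # same quantity via the 6-D map
print(math.isclose(kernel_value, feature_value))  # True
```

The kernel needs one 2-dimensional dot product, while the explicit route needs two 6-dimensional feature vectors; the saving grows quickly with the input dimension and the polynomial degree.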

The Kernel Trick: Popular Kernel Functions

• Linear kernel
• Polynomial kernel
• Gaussian kernel (Radial-Basis Function, RBF)
• Sigmoid

(The formula for each kernel is given in the figure.)

In general, functions that satisfy Mercer's condition (https://www.svms.org/mercer/) can be kernel functions.

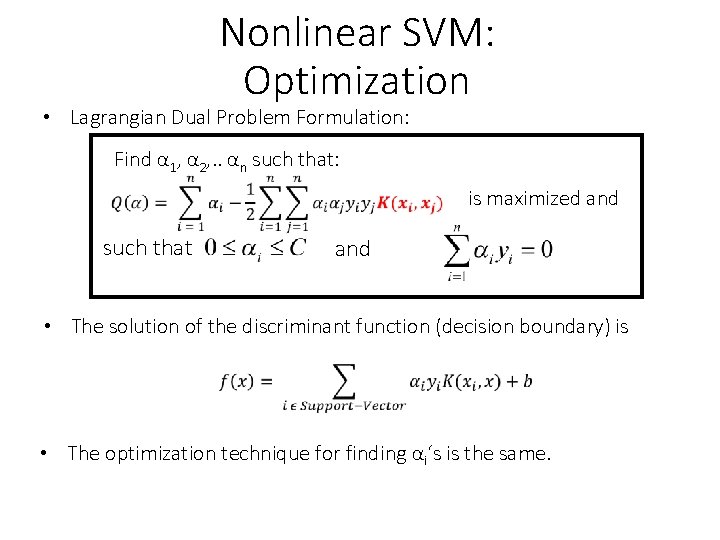

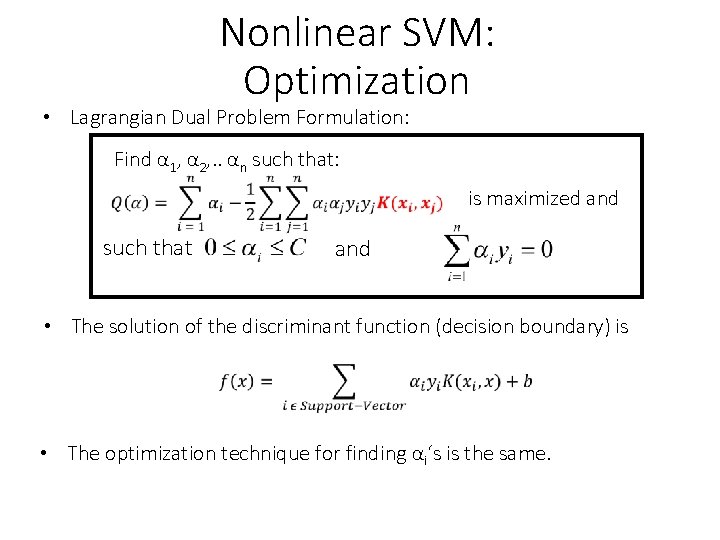
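
For reference, the four kernels named above can be sketched as plain Python functions. The parameterizations below are common textbook forms and are an assumption of this sketch; the formulas on the slide are in the figure and may use different parameter names or conventions (for example a different scaling of σ).

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def linear_kernel(u, v):
    """K(u, v) = u.v"""
    return dot(u, v)


def polynomial_kernel(u, v, degree=2, c=1.0):
    """K(u, v) = (c + u.v)^degree"""
    return (c + dot(u, v)) ** degree


def gaussian_kernel(u, v, sigma=1.0):
    """K(u, v) = exp(-||u - v||^2 / (2 sigma^2))"""
    squared_distance = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-squared_distance / (2.0 * sigma ** 2))


def sigmoid_kernel(u, v, beta0=1.0, beta1=0.0):
    """K(u, v) = tanh(beta0 * u.v + beta1)"""
    return math.tanh(beta0 * dot(u, v) + beta1)


u, v = [1.0, 2.0], [3.0, -1.0]
print(linear_kernel(u, v))      # 1.0
print(polynomial_kernel(u, v))  # 4.0
print(gaussian_kernel(u, u))    # 1.0
```

Note that the sigmoid form satisfies Mercer's condition only for some parameter values, so it is not a valid kernel for every choice of `beta0` and `beta1`.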

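Nonlinear SVM: Optimization

• Lagrangian dual problem formulation: find $\alpha_1, \alpha_2, \dots, \alpha_n$ such that $Q(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j K(x_i, x_j)$ is maximized and such that $\sum_i \alpha_i y_i = 0$ and $\alpha_i \ge 0$ for all i
• The solution of the discriminant function (decision boundary) is $\sum_{i \in SV} \alpha_i y_i K(x_i, x) + b$
• The optimization technique for finding the $\alpha_i$'s is the same.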
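
The kernelized discriminant function differs from the linear one only in that every dot product is replaced by a kernel evaluation. The sketch below makes that substitution explicit by passing the kernel as a parameter. The support vectors, multipliers and bias are illustrative assumptions: they solve a linear toy problem and are reused with the Gaussian kernel only to show the mechanics, not as an optimal non-linear solution.

```python
import math


def dot(u, v):
    """Inner product, i.e. the linear kernel."""
    return sum(ui * vi for ui, vi in zip(u, v))


def gaussian_kernel(u, v, sigma=1.0):
    """K(u, v) = exp(-||u - v||^2 / (2 sigma^2)), a common RBF form (assumed)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-squared_distance / (2.0 * sigma ** 2))


def kernel_decision(x, support_vectors, alphas, ys, b, kernel):
    """Evaluate sum_i alpha_i y_i K(x_i, x) + b over the support vectors."""
    return sum(a * y * kernel(sv, x)
               for sv, a, y in zip(support_vectors, alphas, ys)) + b


# Illustrative values (assumed), not the output of an actual solver run.
support_vectors = [[1.0, 0.0], [3.0, 1.0], [3.0, -1.0]]
alphas = [0.5, 0.25, 0.25]
ys = [-1, 1, 1]
b = -2.0

x_new = [4.0, 0.0]
linear_score = kernel_decision(x_new, support_vectors, alphas, ys, b, dot)
rbf_score = kernel_decision(x_new, support_vectors, alphas, ys, b, gaussian_kernel)
print(linear_score)  # 2.0
```

The predicted class is the sign of the score. In a real setting the multipliers and the bias would be re-estimated for each kernel by solving the dual problem with that kernel.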

Nonlinear SVM: Summary

• SVM locates a separating hyperplane in the feature space and classifies points in that space.
• It does not need to represent the space explicitly; rather, it simply defines a kernel function.
• The kernel function (a dot product in an "augmented" space) plays the role of the dot product in the feature space.

Support Vector Machine: Algorithm

1. Choose a kernel function
2. Choose a value for C
3. Solve the quadratic programming problem (many software packages available)
4. Construct the discriminant function from the support vectors

Summary

1. Maximum Margin Classifier
  • Better generalization ability and less over-fitting
2. The Kernel Trick
  • Map data points to a higher-dimensional space in order to make them linearly separable.
  • Since only the dot product is used, we do not need to represent the mapping explicitly.

Issues

• Choice of kernel function:
  • A Gaussian or polynomial kernel is the default.
  • If they are ineffective, more elaborate kernels are needed.
  • Domain experts can give assistance in formulating the appropriate similarity measures.
• Choice of kernel parameters:
  • e.g. σ in the Gaussian kernel
  • One heuristic sets σ to the distance between the closest points with different classifications.
  • In the absence of reliable criteria, applications rely on a validation set or cross-validation to set such parameters.
• Optimization criterion (hard margin vs. soft margin):
  • A lengthy series of experiments in which various parameters are tested

This slide is courtesy of LINK

Issues

• Maximum margin classifiers are known to be sensitive to the scaling transformation applied to the features. Therefore it is essential to normalize the data (see slides on data transformation).
• Maximum margin classifiers are also sensitive to unbalanced data.
• Hyper-parameter tuning (C, kernel): read
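
As one possible way to normalize the features, the sketch below applies z-score standardization (zero mean, unit standard deviation per feature). This particular method is an assumption of the sketch; the slides on data transformation referred to above may recommend a different scheme. The toy rows and the function name are illustrative.

```python
import statistics


def standardize(rows):
    """Rescale every column to zero mean and unit (population) standard deviation."""
    columns = list(zip(*rows))
    means = [statistics.mean(c) for c in columns]
    stds = [statistics.pstdev(c) for c in columns]
    return [
        # A constant column (std == 0) is mapped to 0.0 to avoid dividing by zero.
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, stds)]
        for row in rows
    ]


# Toy data (assumed): the second feature is 1000 times larger than the first.
rows = [[1.0, 1000.0], [2.0, 2000.0], [3.0, 3000.0]]
scaled = standardize(rows)
print(scaled[1])  # [0.0, 0.0]
```

After scaling, both features contribute on the same footing to the dot products and distances that the SVM relies on. In practice the means and standard deviations are estimated on the training set and then reused unchanged on validation and test data.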

Properties of SVM

• Flexibility in choosing a similarity function
• Ability to deal with large data sets: only support vectors are used to specify the separating hyperplane
• Ability to handle large feature spaces: the complexity of the algorithm does not depend on the dimensionality of the feature space
• Overfitting can be controlled by the soft margin approach
• Nice math property: a simple convex optimization problem which is guaranteed to converge to a single global solution

Weakness of SVM

• Sensitive to noise: a relatively small number of mislabeled examples can dramatically decrease the performance.
• It only considers two classes. How can we do multi-class classification with SVM? Answer:
  1) With output arity m, learn m SVMs:
    • SVM 1 learns "Output == 1" vs "Output != 1"
    • SVM 2 learns "Output == 2" vs "Output != 2"
    • ...
    • SVM m learns "Output == m" vs "Output != m"
  2) To predict the output for a new input, predict with each SVM and find out which one puts the prediction the furthest into the positive region.
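
The prediction step of this one-vs-rest scheme can be sketched as follows. The three linear decision functions below stand in for trained SVMs; their weights are made-up values for illustration, and the helper names are hypothetical.

```python
def make_linear_decision(w, b):
    """Build a decision function x -> w.x + b (a stand-in for a trained SVM)."""
    def decision(x):
        return sum(wi * xi for wi, xi in zip(w, x)) + b
    return decision


def predict_one_vs_rest(x, decision_functions):
    """Return the 1-based class whose SVM gives the largest decision value."""
    scores = [f(x) for f in decision_functions]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best + 1, scores


# Three hypothetical "Output == k vs Output != k" classifiers (m = 3).
svms = [
    make_linear_decision([1.0, 0.0], 0.0),    # SVM 1
    make_linear_decision([0.0, 1.0], 0.0),    # SVM 2
    make_linear_decision([-1.0, -1.0], 0.0),  # SVM 3
]

label, scores = predict_one_vs_rest([2.0, 0.5], svms)
print(label, scores)  # 1 [2.0, 0.5, -2.5]

label_b, _ = predict_one_vs_rest([-1.0, -2.0], svms)
print(label_b)  # 3
```

Comparing raw decision values across separately trained SVMs assumes that their scores are on comparable scales, which is a simplification of this sketch.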

Additional Resources

■ Recommended lectures: LINK, LINK
■ Additional resource: LINK
■ LibSVM, best implementation for SVM: LINK
■ Supplementary material: LINK, LINK, LINK
■ Interpretation of SVM learning: LINK, LINK
■ SVM sheet: LINK