An Introduction to Princeton’s New Computing Resources: IBM Blue Gene, SGI Altix, and Dell Beowulf Cluster
PICASso Mini-Course
October 18, 2006
Curt Hillegas

Introduction
• SGI Altix - Hecate
• IBM Blue Gene/L – Orangena
• Dell Beowulf Cluster – Della
• Storage
• Other resources

TIGRESS High Performance Computing Center
Terascale Infrastructure for Groundbreaking Research in Engineering and Science

Partnerships
• Princeton Institute for Computational Science and Engineering (PICSciE)
• Office of Information Technology (OIT)
• School of Engineering and Applied Science (SEAS)
• Lewis-Sigler Institute for Integrative Genomics
• Astrophysical Sciences
• Princeton Plasma Physics Laboratory (PPPL)

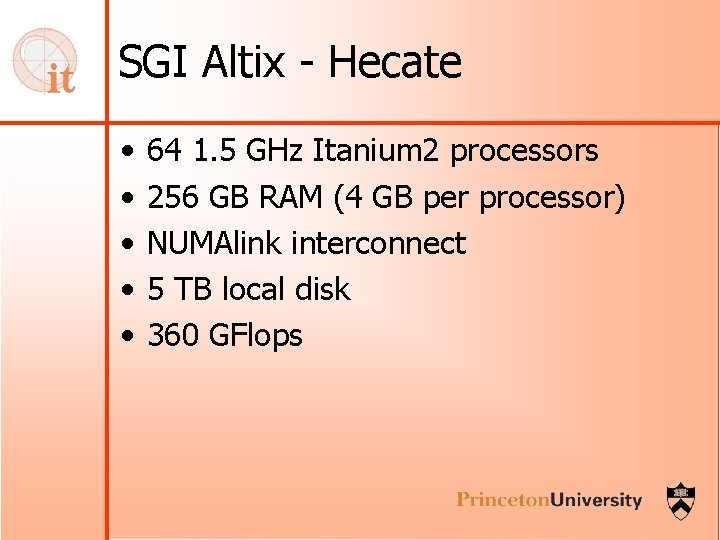

SGI Altix - Hecate
• 64 1.5 GHz Itanium 2 processors
• 256 GB RAM (4 GB per processor)
• NUMAlink interconnect
• 5 TB local disk
• 360 GFlops

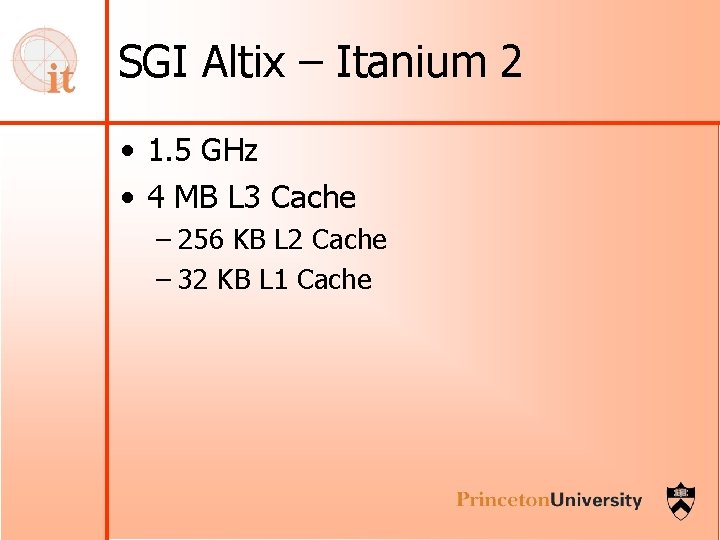

SGI Altix – Itanium 2
• 1.5 GHz
• 4 MB L3 cache
  – 256 KB L2 cache
  – 32 KB L1 cache

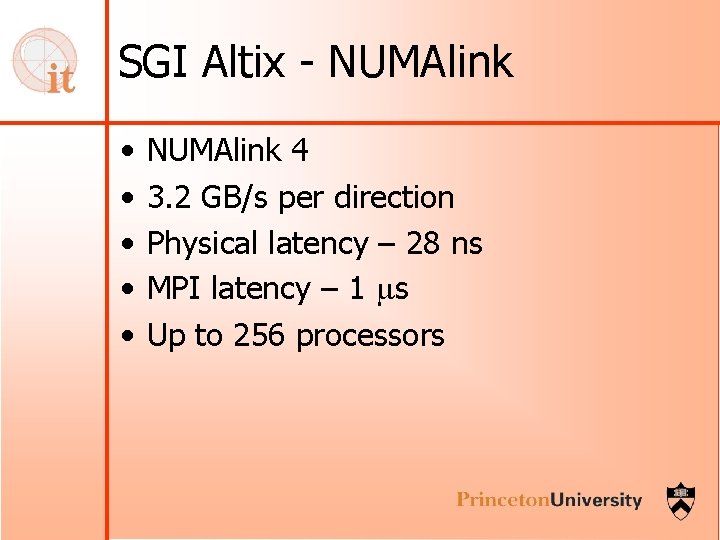

SGI Altix - NUMAlink
• NUMAlink 4
• 3.2 GB/s per direction
• Physical latency: 28 ns
• MPI latency: 1 µs
• Up to 256 processors

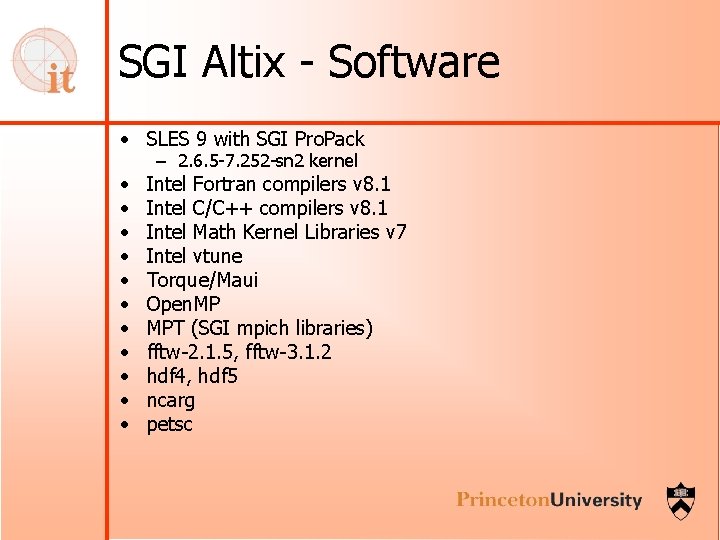

SGI Altix - Software
• SLES 9 with SGI ProPack
  – 2.6.5-7.252-sn2 kernel
• Intel Fortran compilers v8.1
• Intel C/C++ compilers v8.1
• Intel Math Kernel Libraries v7
• Intel VTune
• Torque/Maui
• OpenMP
• MPT (SGI mpich libraries)
• fftw-2.1.5, fftw-3.1.2
• hdf4, hdf5
• ncarg
• petsc

IBM Blue Gene/L - Orangena
• 2048 700 MHz PowerPC 440 processors
• 1024 nodes
• 512 MB RAM per node (256 MB per processor)
• 5 interconnects, including a 3D torus
• 8 TB local disk
• 4.713 TFlops

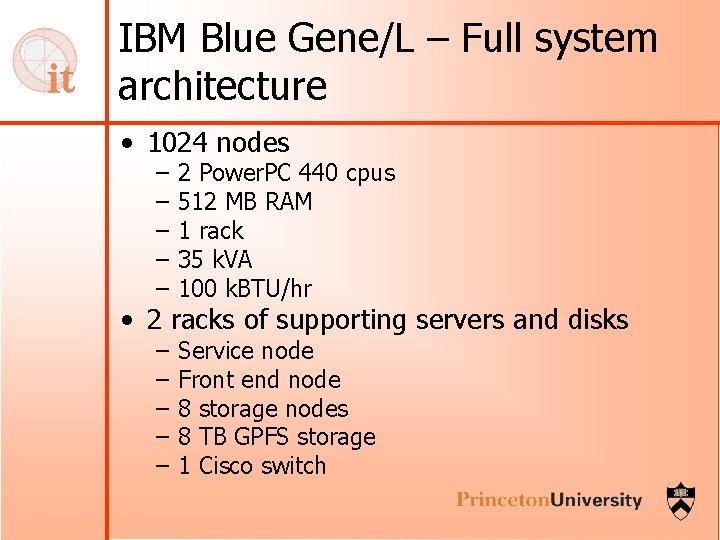

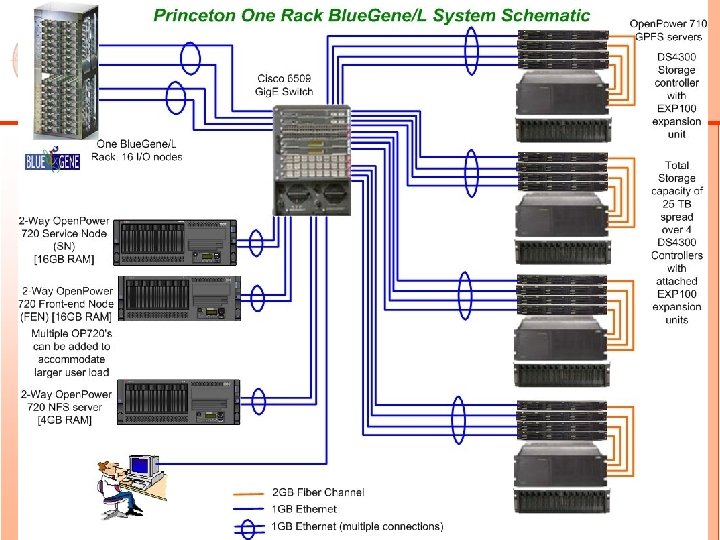

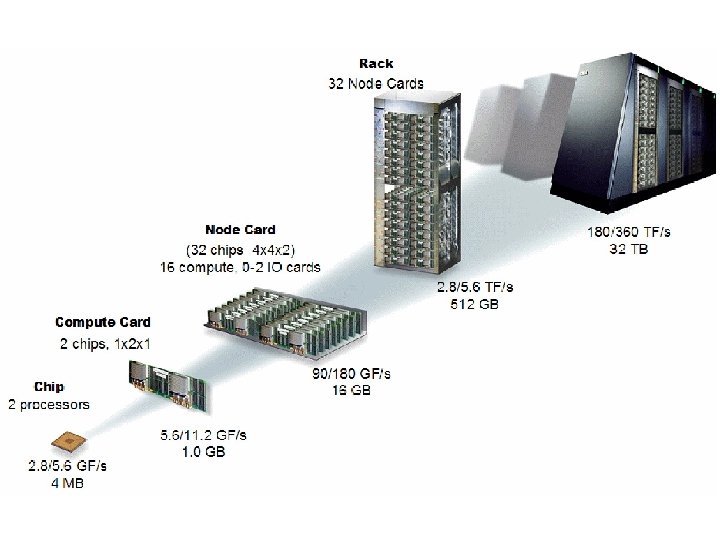

IBM Blue Gene/L – Full system architecture
• 1024 nodes
  – 2 PowerPC 440 cpus
  – 512 MB RAM
  – 1 rack
  – 35 kVA
  – 100 kBTU/hr
• 2 racks of supporting servers and disks
  – Service node
  – Front end node
  – 8 storage nodes
  – 8 TB GPFS storage
  – 1 Cisco switch

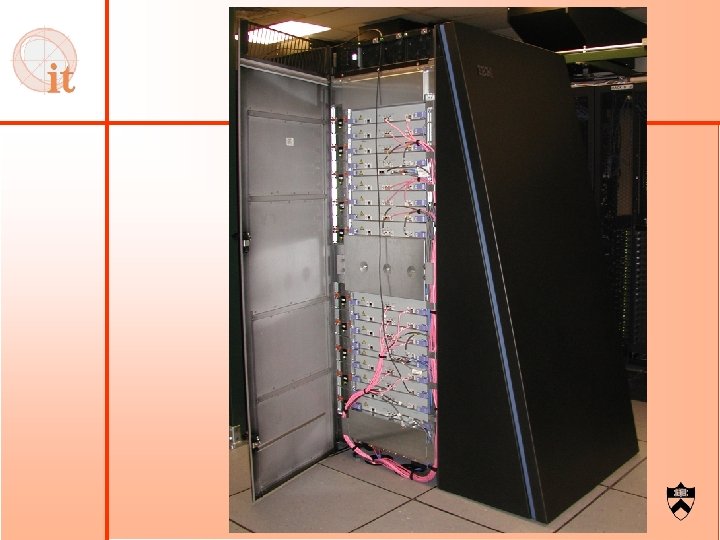

IBM Blue Gene/L

IBM Blue Gene/L - networks
• 3D torus network
• Collective (tree) network
• Barrier network
• Functional network
• Service network
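
To illustrate what a 3D torus means for communication, the sketch below lists the six nearest neighbors of a node, with wrap-around at the edges. The 8 x 8 x 16 shape is an assumption chosen so that the product is 1024 nodes; the slides do not state the torus dimensions, and the function name is illustrative.

```python
def torus_neighbors(coord, dims):
    """Return the six nearest neighbors of coord in a 3D torus of shape dims."""
    x, y, z = coord
    nx, ny, nz = dims
    # The modulo wraps each coordinate, so edge nodes link to the opposite face.
    return [
        ((x + 1) % nx, y, z), ((x - 1) % nx, y, z),
        (x, (y + 1) % ny, z), (x, (y - 1) % ny, z),
        (x, y, (z + 1) % nz), (x, y, (z - 1) % nz),
    ]

# Assumed shape: 8 * 8 * 16 = 1024 nodes (not stated on the slides).
DIMS = (8, 8, 16)

if __name__ == "__main__":
    for neighbor in torus_neighbors((0, 0, 0), DIMS):
        print(neighbor)
```

Every node has exactly six neighbors regardless of its position, which is why nearest-neighbor exchanges map well onto this kind of network.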

IBM Blue Gene/L - Software
• LoadLeveler (coming soon)
• mpich
• XL Fortran Advanced Edition V9.1
  – mpxlf, mpf90, mpf95
• XL C/C++ Advanced Edition V7.0
  – mpcc, mpxlc, mpCC
• fftw-2.1.5 and fftw-3.0.1
• hdf5-1.6.2
• netcdf-3.6.0
• BLAS, LAPACK, ScaLAPACK

IBM Blue Gene/L – More…
• http://orangena.Princeton.EDU
• http://orangena-sn.Princeton.EDU

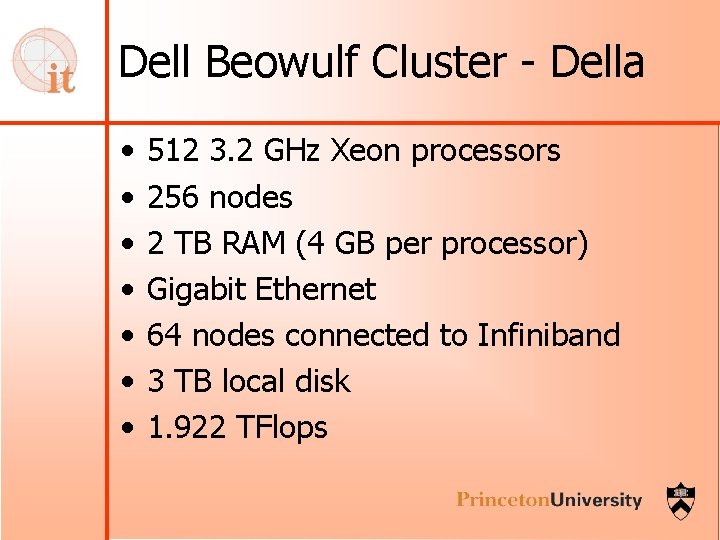

Dell Beowulf Cluster - Della
• 512 3.2 GHz Xeon processors
• 256 nodes
• 2 TB RAM (4 GB per processor)
• Gigabit Ethernet
• 64 nodes connected to Infiniband
• 3 TB local disk
• 1.922 TFlops
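
For a rough side-by-side view of the three systems, the sketch below divides each quoted Flops figure by the processor count from the same slide. It is plain arithmetic on the slide numbers; the slides do not say whether these figures are peak or measured rates, so the result should be read only as a per-processor share of the quoted total. All names in the code are illustrative.

```python
# (processors, GFlops) as quoted on the Hecate, Orangena, and Della slides.
SYSTEMS = {
    "Hecate (SGI Altix)": (64, 360.0),
    "Orangena (IBM Blue Gene/L)": (2048, 4713.0),
    "Della (Dell Beowulf)": (512, 1922.0),
}

def gflops_per_processor(processors, total_gflops):
    """Share of the quoted system GFlops attributable to one processor."""
    return total_gflops / processors

per_processor = {
    name: gflops_per_processor(n, g) for name, (n, g) in SYSTEMS.items()
}

if __name__ == "__main__":
    for name, value in per_processor.items():
        print(f"{name}: {value:.2f} GFlops per processor")
```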

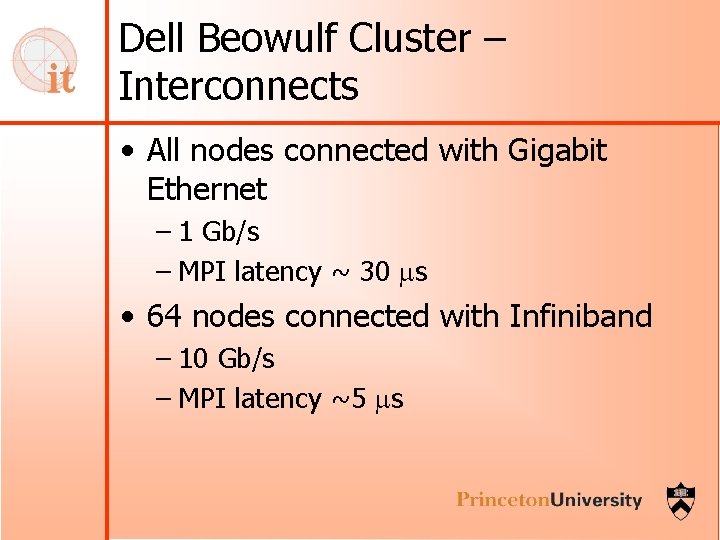

Dell Beowulf Cluster – Interconnects
• All nodes connected with Gigabit Ethernet
  – 1 Gb/s
  – MPI latency ~30 µs
• 64 nodes connected with Infiniband
  – 10 Gb/s
  – MPI latency ~5 µs
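
A rough way to compare the two interconnects is the usual latency-plus-bandwidth model, time = latency + size / bandwidth. The sketch below applies it to the figures on this slide. It assumes the MPI latencies are in microseconds and that the quoted link rates are fully achievable, which real MPI traffic will not quite reach; the function and variable names are illustrative.

```python
def transfer_time_us(message_bytes, latency_us, bandwidth_gbit_s):
    """Estimated one-way message time in microseconds (latency + size / bandwidth)."""
    bits = message_bytes * 8
    # 1 Gb/s moves 1000 bits per microsecond.
    return latency_us + bits / (bandwidth_gbit_s * 1000.0)

# (MPI latency in microseconds, link rate in Gb/s); latency unit is an assumption.
LINKS = {
    "Gigabit Ethernet": (30.0, 1.0),
    "Infiniband": (5.0, 10.0),
}

if __name__ == "__main__":
    for size in (8, 1024, 1024 * 1024):
        for name, (latency, rate) in LINKS.items():
            t = transfer_time_us(size, latency, rate)
            print(f"{size:>8} B over {name}: {t:.1f} us")
```

In this model small messages are dominated by latency and large messages by bandwidth, so codes that exchange many small messages stand to gain the most from the Infiniband nodes.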

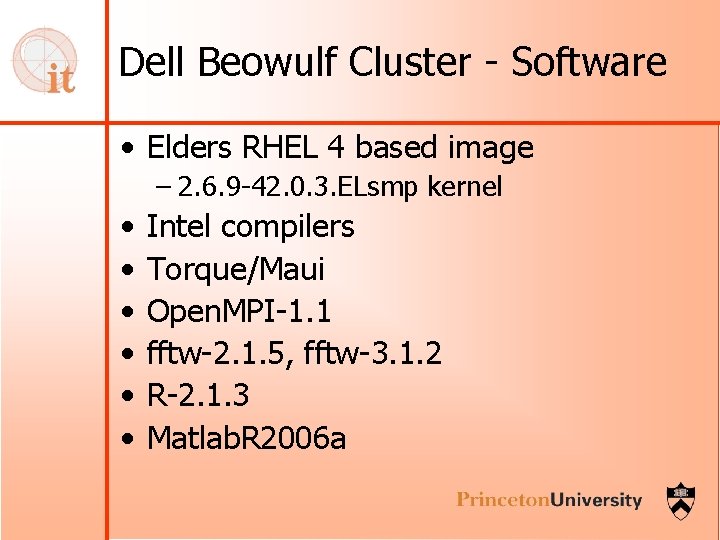

Dell Beowulf Cluster - Software
• Elders RHEL 4 based image
  – 2.6.9-42.0.3.ELsmp kernel
• Intel compilers
• Torque/Maui
• OpenMPI-1.1
• fftw-2.1.5, fftw-3.1.2
• R-2.1.3
• Matlab R2006a

Dell Beowulf Cluster – More…
• https://della.Princeton.EDU/ganglia

Storage
• 38 TB delivered
• GPFS filesystem
• At least 200 MB/s
• Installation at the end of this month
• Fees to recover half the cost

Getting Access
• 1-3 page proposal
• Scientific background and merit
• Resource requirements
  – # concurrent cpus
  – Total cpu hours
  – Memory per process/total memory
  – Disk space
• A few references
• curt@Princeton.EDU
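
The resource requirements in a proposal follow from a few multiplications. The sketch below shows one way to work them out; the job sizes in the example are made-up placeholders, not recommended values, and the function names are illustrative.

```python
def total_cpu_hours(concurrent_cpus, wall_hours_per_run, runs):
    """Total cpu hours = cpus in use at once * wall-clock hours per run * number of runs."""
    return concurrent_cpus * wall_hours_per_run * runs

def total_memory_gb(processes, memory_per_process_gb):
    """Total memory = number of processes * memory needed by each process."""
    return processes * memory_per_process_gb

if __name__ == "__main__":
    # Hypothetical project: 20 runs of 12 hours each on 64 cpus, 2 GB per process.
    print("Concurrent cpus:", 64)
    print("Total cpu hours:", total_cpu_hours(64, 12, 20))
    print("Memory per process (GB):", 2)
    print("Total memory (GB):", total_memory_gb(64, 2))
```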

Other resources
• adrOIT
• Condor
• Programming help

Questions
