AN EXPERIMENT IN SECURITY DECISION MAKING

Adrian Baldwin, Yolanta Beres, Marco Casassa Mont, Simon Shiu (all HP Labs)
Geoff Duggan, Hilary Johnson (University of Bath)
Chris Middup (Open University)

© Copyright 2010 Hewlett-Packard Development Company, L.P.

CONTEXT
– TSB-funded trust economics project:
  • We developed an approach (using economic and mathematical modelling) to help enterprises make “better” security decisions
  • A series of case studies provided good feedback and anecdotal evidence that we were on a good path
– Challenge: can we do better than that?
– This paper:
  • An in-depth study of a small group of security professionals (one stakeholder type), on how our approach to security decision making affects them

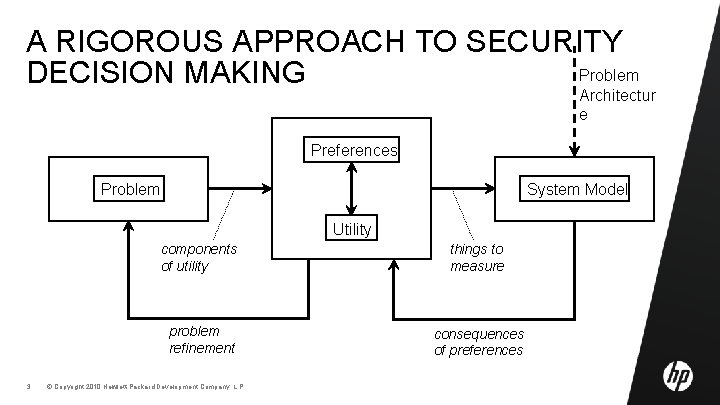

A RIGOROUS APPROACH TO SECURITY DECISION MAKING
[Diagram with elements Problem, Architecture, Preferences, System Model and Utility, and connecting labels “components of utility”, “things to measure”, “problem refinement” and “consequences of preferences”.]
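The slide names utility, its components and the stakeholder's preferences, but gives no formula. As a minimal sketch, assuming an additive (weighted-sum) utility in which each component of utility is measured by a proxy already normalised to [0, 1] (higher is better), options could be scored as below. The additive form and the names `additive_utility` and `best_option` are hypothetical illustrations, not the model used in the study.

```python
from typing import Dict

# Hypothetical sketch: an additive (weighted-sum) utility is an assumption,
# not necessarily the form used in the study.
def additive_utility(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of per-outcome scores, each normalised to [0, 1] (higher is better)."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"no score for outcome(s): {sorted(missing)}")
    # Normalise the weights so they sum to 1 before combining.
    return sum(weights[o] / total * scores[o] for o in weights)


def best_option(option_scores: Dict[str, Dict[str, float]],
                weights: Dict[str, float]) -> str:
    """Return the option with the highest additive utility under the given weights."""
    return max(option_scores, key=lambda opt: additive_utility(option_scores[opt], weights))
```

Because the weights encode one stakeholder's preferences, two stakeholders looking at the same model outputs can rank the options differently, which is why the answer to the problem is expected to be subjective and contextual.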

SDM HYPOTHESES
Our methods will positively influence:
– the conclusions or decisions made,
– the thought process followed,
– the justifications given, and
– the confidence the stakeholder has in the final conclusions or decisions made.

SDM EXPERIMENT SCOPE
– Measure the effect on security professionals/experts (i.e. not our effect on other stakeholders, nor on groups/organisations)
– Qualitative, in-depth study of the decision-making process (of twelve professionals)
– Economic framing and system modelling bundled as a “single” intervention
– Controlled experiment, i.e. two groups: one received our methods as an intervention, one was left as a control

THE SDM PROBLEM
– We chose a problem on the security of client infrastructure
– Why: we had several similar case studies, which meant we knew:
  • it was a representative, current and challenging business security problem
  • we had decent/realistic empirical data relating to the problem
  • there are interesting “trade-offs”, meaning the answer is subjective and contextual and likely to differ between stakeholders
– We had 4 decision options that represented different trade-offs
– We had to iterate a number of times before we had sufficient supporting material and a problem that we could control and that was rich enough!

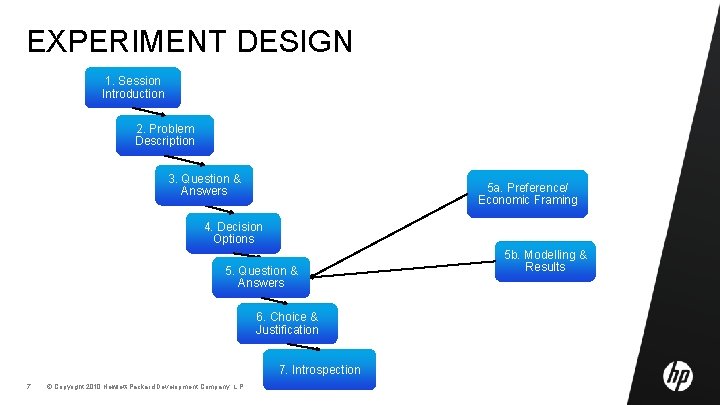

EXPERIMENT DESIGN
1. Session Introduction
2. Problem Description
3. Question & Answers
4. Decision Options
5. Question & Answers (control group), or for the intervention group:
   5a. Preference/Economic Framing
   5b. Modelling & Results
6. Choice & Justification
7. Introspection

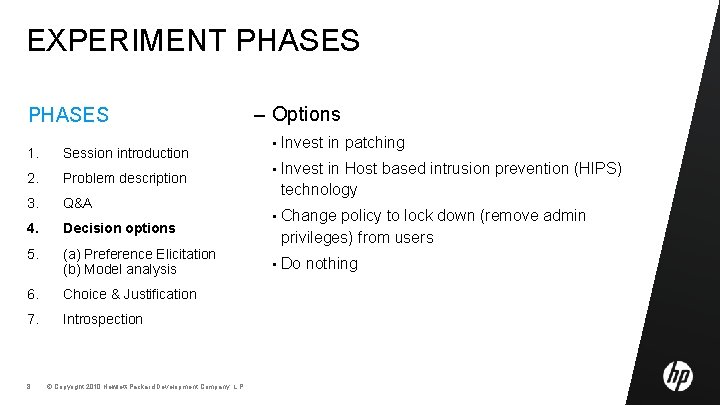

EXPERIMENT PHASES
1. Session introduction
2. Problem description
3. Q&A
4. Decision options
5. (a) Preference Elicitation (b) Model analysis
6. Choice & Justification
7. Introspection
– Options:
  • Invest in patching
  • Invest in host-based intrusion prevention (HIPS) technology
  • Change policy to lock down users (remove their admin privileges)
  • Do nothing

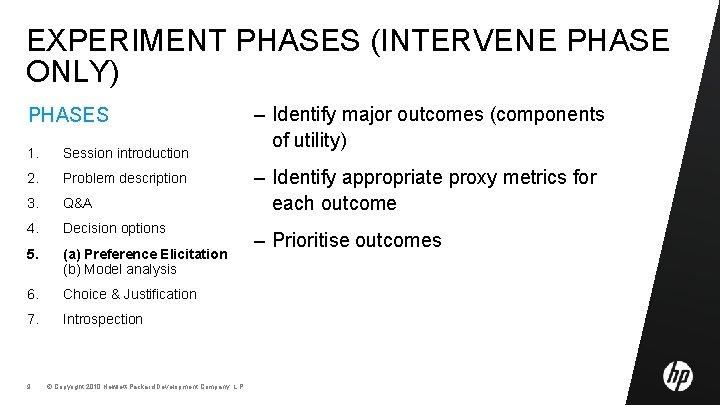

EXPERIMENT PHASES: 5(a) PREFERENCE ELICITATION (INTERVENTION GROUP ONLY)
– Identify major outcomes (components of utility)
– Identify appropriate proxy metrics for each outcome
– Prioritise outcomes

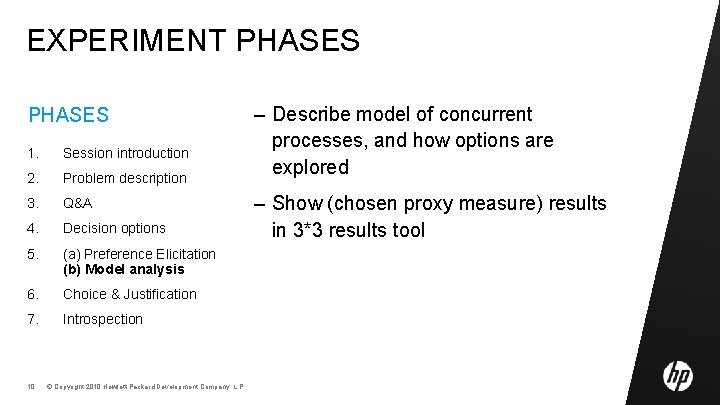
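The slides say participants prioritised their outcomes, but not how a ranking turns into weights. One common technique, swapped in here purely for illustration, is rank-order centroid (ROC) weighting: with n ranked outcomes, the outcome at rank i gets weight (1/n) · Σ_{k=i}^{n} 1/k. The function name below is hypothetical, and ROC is not confirmed as the study's method.

```python
from fractions import Fraction
from typing import Dict, List

# Rank-order centroid weights: an illustrative technique, not confirmed as the study's method.
def rank_order_centroid(prioritised: List[str]) -> Dict[str, Fraction]:
    """Map outcomes listed from most to least important to ROC weights summing to 1."""
    n = len(prioritised)
    if n == 0:
        raise ValueError("need at least one outcome")
    if len(set(prioritised)) != n:
        raise ValueError("outcomes must be distinct")
    # Rank i (1-based) receives (1/n) * (1/i + 1/(i+1) + ... + 1/n).
    return {
        outcome: sum(Fraction(1, k) for k in range(i, n + 1)) / n
        for i, outcome in enumerate(prioritised, start=1)
    }
```

For a hypothetical ordering `["security risk", "productivity", "cost"]` this yields weights 11/18, 5/18 and 1/9. Exact fractions are used so the weights sum to exactly 1 and can be fed into any weighted-sum utility.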

EXPERIMENT PHASES: 5(b) MODEL ANALYSIS
– Describe the model of concurrent processes, and how options are explored
– Show results (for the chosen proxy measures) in the 3×3 results tool
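The slides describe a 3×3 results tool that maps options to proxy measures but do not show its layout. A minimal sketch, assuming options as rows and the participant's chosen proxy measures as columns; the function name `results_grid` and the text rendering are hypothetical, not the tool used in the sessions.

```python
from typing import Dict, List

# Hypothetical sketch of an option-by-proxy-measure results grid; the real tool's
# layout and measures are not described in the slides.
def results_grid(results: Dict[str, Dict[str, float]], measures: List[str]) -> str:
    """Render options (rows) against proxy measures (columns) as an aligned text table."""
    rows = [["option"] + measures]
    for option, values in results.items():
        rows.append([option] + [f"{values[m]:.2f}" for m in measures])
    # Width of each column is the widest cell in that column.
    widths = [max(len(row[c]) for row in rows) for c in range(len(rows[0]))]
    return "\n".join(
        "  ".join(cell.ljust(w) for cell, w in zip(row, widths)).rstrip()
        for row in rows
    )
```

Laying every option against every measure side by side is what allows the simultaneous comparison of multiple outcomes and choices that the conclusions encourage.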

DATA ANALYSIS
– 173 questions before intervention (from all twelve participants)
– 152 justifications (from all twelve participants)
– 6 ordered prioritised outcomes
– 12 chosen decision options (one per participant)
– 48 Likert scores on confidence (four from each participant)

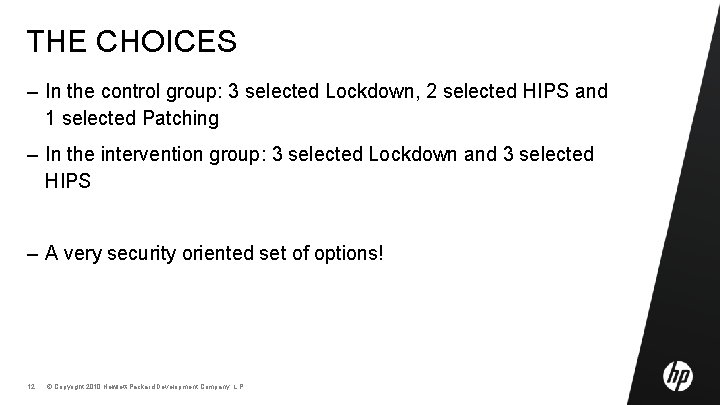

THE CHOICES
– In the control group: 3 selected Lockdown, 2 selected HIPS and 1 selected Patching
– In the intervention group: 3 selected Lockdown and 3 selected HIPS
– A very security-oriented set of choices!

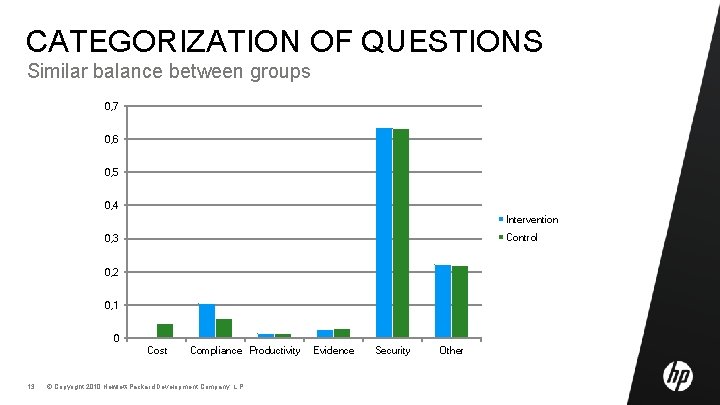

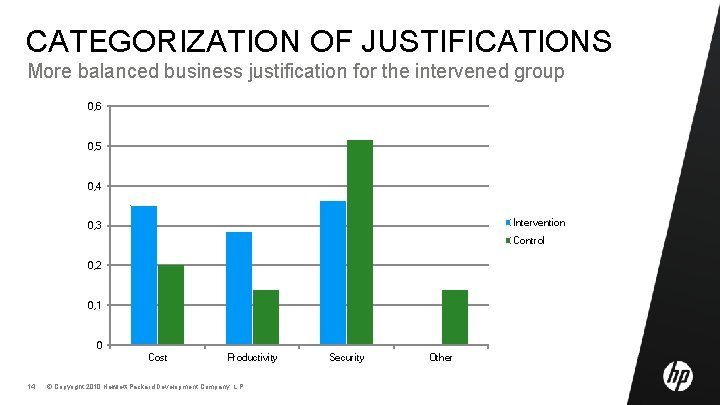
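The reported choices can be tabulated directly. This sketch only restates the slide's counts as per-group shares and performs no significance test; listing all four options makes explicit that nobody in either group chose “Do nothing”. The names `OPTIONS`, `CHOICES` and `choice_shares` are illustrative.

```python
from collections import Counter
from typing import Dict, List

OPTIONS = ["Patching", "HIPS", "Lockdown", "Do nothing"]

# Choices exactly as reported on the slide (six participants per group).
CHOICES: Dict[str, List[str]] = {
    "control": ["Lockdown"] * 3 + ["HIPS"] * 2 + ["Patching"],
    "intervention": ["Lockdown"] * 3 + ["HIPS"] * 3,
}

def choice_shares(choices: Dict[str, List[str]]) -> Dict[str, Dict[str, float]]:
    """Fraction of each group choosing each option, including options nobody chose."""
    shares = {}
    for group, picks in choices.items():
        counts = Counter(picks)
        shares[group] = {opt: counts[opt] / len(picks) for opt in OPTIONS}
    return shares
```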

CATEGORIZATION OF QUESTIONS
Similar balance between groups
[Bar chart: questions per category (Cost, Compliance, Productivity, Evidence, Security, Other) for the Intervention and Control groups; vertical axis from 0 to 0.7.]

CATEGORIZATION OF JUSTIFICATIONS
More balanced business justification for the intervened group
[Bar chart: justifications per category (Cost, Productivity, Security, Other) for the Intervention and Control groups; vertical axis from 0 to 0.6.]
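The vertical axes of the two charts run from 0 to 0.7 and from 0 to 0.6, which suggests they show proportions within each group rather than raw counts. A minimal sketch of that calculation, assuming each question or justification ends up with one agreed category; the function name and the (group, category) input format are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

def category_proportions(coded: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """Given (group, category) pairs, return each group's share of items per category."""
    per_group: Dict[str, Counter] = {}
    for group, category in coded:
        per_group.setdefault(group, Counter())[category] += 1
    # Divide by each group's own total so groups with different item counts compare fairly.
    return {
        group: {cat: n / sum(counts.values()) for cat, n in counts.items()}
        for group, counts in per_group.items()
    }
```

Normalising per group matters here because the two groups need not ask the same number of questions or give the same number of justifications.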

WHAT DO THE DATA SAY IN RELATION TO OUR ORIGINAL HYPOTHESES?
SDM HYPOTHESES
Our methods will positively influence:
• the conclusions or decisions made,
• the thought process followed,
• the justifications given, and
• the confidence the stakeholder has in the final conclusions or decisions made.
SDM RESULTS
– Not sufficient evidence that we influenced the conclusions or decisions made
– There is evidence that we influenced the justifications given
  • Which in turn suggests we affected their thought processes
– There was a slight (but not significant) increase in confidence in the decisions made

SOME FURTHER ANALYSIS: POTENTIAL THEORETICAL EXPLANATIONS
NB on study style: smaller qualitative studies are often fertile ground for early theoretical development
– The priority given to security in questions (and in the control group’s justifications) suggests the presence of confirmation bias
– The intervened group’s broader justifications suggest our methods managed to counter some of this bias
– The intervened group did not value the economic framing
  • “I’d made those trade-offs already” is at odds with this result, which suggests cognitive dissonance

CONCLUSIONS & NEXT STEPS
– Encouragement that economic framing improves analysis
  • We assume that a study of group decision support would make these results stronger
– Encouragement to use tools that support simultaneous comparison of multiple outcomes and choices
– More cognitive science work should be done to complement security economics
– Future analysis
  • Study the ‘question’ data to see the methods/structure followed by security professionals (compared with ISO 27k, hunting for low-hanging fruit, …)
– Future studies
  • To test the suggested theories
  • To explore the effect on multi-stakeholder decision making

QUESTIONS


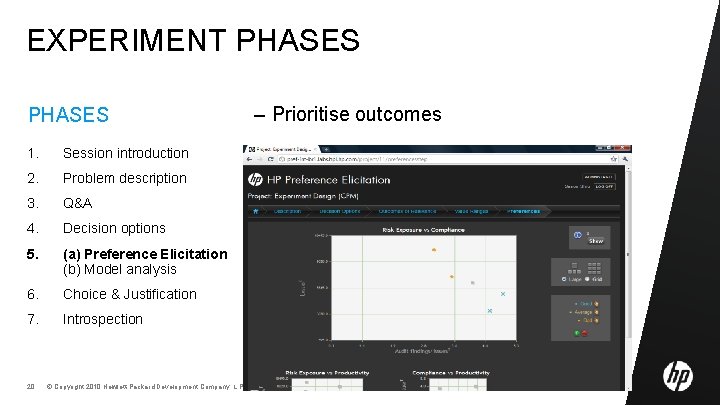

EXPERIMENT PHASES: 5(a) PREFERENCE ELICITATION
– Prioritise outcomes

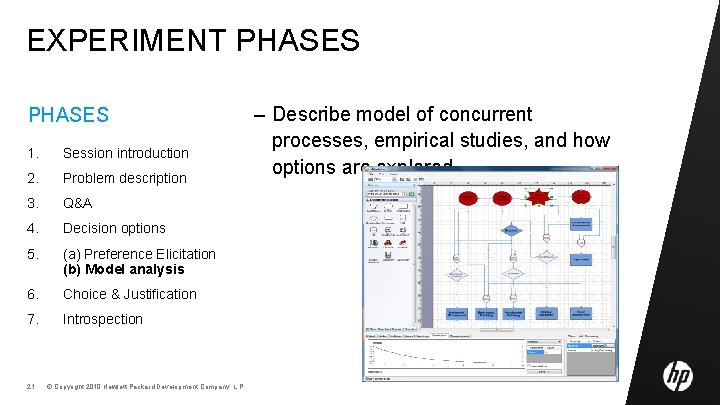

EXPERIMENT PHASES: 5(b) MODEL ANALYSIS
– Describe the model of concurrent processes, the empirical studies, and how options are explored

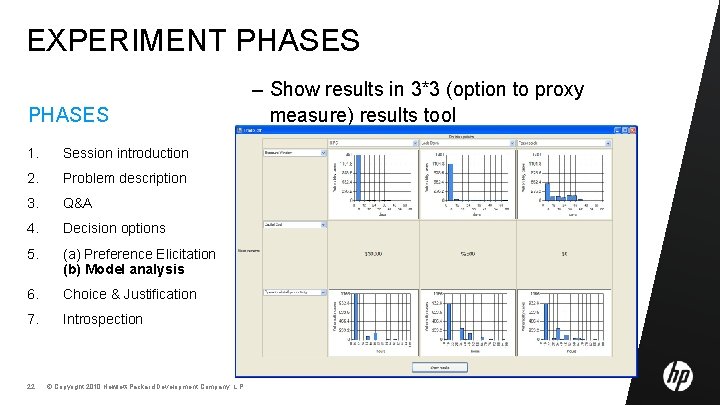

EXPERIMENT PHASES: 5(b) MODEL ANALYSIS
– Show results in the 3×3 (option to proxy measure) results tool

EXPERIMENT PHASES: 3. Q&A
– 10 minutes to ask any questions they deem relevant
– Scripted answers (e.g. on history, culture, processes, architecture, business, regulations etc.)
– Answers to “new” questions were added to the script for future sessions
– After 10 minutes we provided “essential” information that had not been asked about
– This allowed us to collect data on what questions were asked and in what order
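The Q&A protocol (scripted answers, new answers added for later sessions, unasked essentials supplied at the end, and a record of question order) can be sketched as a small helper. This is a hypothetical illustration: the class name, its methods, and the assumption that the interviewer maps each question to a topic key are all ours, not part of the study materials.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class ScriptedQA:
    """Hypothetical helper: scripted answers keyed by topic, plus an ordered question log."""
    script: Dict[str, str]
    asked: List[Tuple[str, str]] = field(default_factory=list)  # (topic, question) in order
    unscripted: List[str] = field(default_factory=list)         # questions with no scripted answer

    def ask(self, topic: str, question: str) -> Optional[str]:
        """Log the question and return the scripted answer, or None if there is none."""
        self.asked.append((topic, question))
        answer = self.script.get(topic)
        if answer is None:
            self.unscripted.append(question)
        return answer

    def add_answer(self, topic: str, answer: str) -> None:
        """Add an answer for a "new" topic so later sessions receive the same answer."""
        self.script.setdefault(topic, answer)

    def essential_not_asked(self, essential: List[str]) -> List[str]:
        """Essential topics nobody asked about, to be provided after the 10 minutes."""
        asked_topics = {topic for topic, _ in self.asked}
        return [t for t in essential if t not in asked_topics]
```

Keeping the log as an ordered list, rather than a set, preserves the sequence of questions, which is the data the protocol was designed to capture.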

EXPERIMENT PHASES: 6. CHOICE & JUSTIFICATION
– Choose preferred option
– For each option:
  • Pros: reasons why the option would be good
  • Cons: reasons why the option would be bad
  • Confidence in the option on a 1 to 7 Likert scale
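Each participant rated their confidence in each of the four options on a 1 to 7 scale (48 scores in total). As a sketch of how such ratings might be summarised per option (the function name and the grouping are assumptions), the median is reported alongside the mean because Likert responses are ordinal; this is an illustrative choice, not the paper's stated analysis.

```python
from statistics import mean, median
from typing import Dict, List

def summarise_confidence(ratings: Dict[str, List[int]]) -> Dict[str, Dict[str, float]]:
    """Per-option summary of 1-7 Likert confidence ratings (count, median, mean)."""
    summary: Dict[str, Dict[str, float]] = {}
    for option, scores in ratings.items():
        if not scores:
            raise ValueError(f"{option}: no ratings")
        if any(not 1 <= s <= 7 for s in scores):
            raise ValueError(f"{option}: ratings must be between 1 and 7")
        summary[option] = {"n": len(scores), "median": median(scores), "mean": mean(scores)}
    return summary
```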

EXPERIMENT PHASES: 7. INTROSPECTION
– For the intervened group:
  • What difference the interventions and tools made
– What information they used to reach their conclusion
– Any strategies they used when asking questions

EXPERIMENT PHASES: 1. SESSION INTRODUCTION
– 3 roles: interviewer, expert and observer
– Interviewer explained and gathered:
  • Structure of session
  • Incentives for trying hard
  • Experience of participant

EXPERIMENT PHASES: 2. PROBLEM DESCRIPTION
– Verbally scripted, web-based and written material introducing participants to the security role they are asked to play and to the CISO’s client infrastructure security problem
– Whether/how to deal with the rising risk from malware on client infrastructure

DATA ANALYSIS
– All questions and justifications were transcribed and put in ‘random’ order
– 3 experts categorised these, resolving differences through discussion
  • Relation to ISO 27000
  • Relation to main business outcomes (compliance, productivity, cost, security risk)
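The coding procedure (transcribed items shuffled into ‘random’ order, categorised by three experts, differences resolved through discussion) can be sketched as below. This assumes a seeded shuffle, so that the order can be reproduced, and treats any item without unanimous agreement as one to discuss; both function names are hypothetical.

```python
import random
from typing import Dict, List, Sequence, Tuple

def blind_order(items: Sequence[str], seed: int) -> List[str]:
    """Return a reproducible shuffled copy so coders cannot infer group or session order."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled

def consolidate(codes: Dict[str, Tuple[str, str, str]]) -> Tuple[Dict[str, str], List[str]]:
    """codes maps item -> categories from the three coders.

    Unanimous items are accepted; any disagreement is flagged for discussion."""
    agreed: Dict[str, str] = {}
    to_discuss: List[str] = []
    for item, labels in codes.items():
        if len(set(labels)) == 1:
            agreed[item] = labels[0]
        else:
            to_discuss.append(item)
    return agreed, to_discuss
```

Using a private `random.Random` instance rather than the module-level generator keeps the shuffle independent of any other randomness in the analysis.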
