An Evaluation Methodology Applied to the Damaging Downburst Prediction and Detection Algorithm

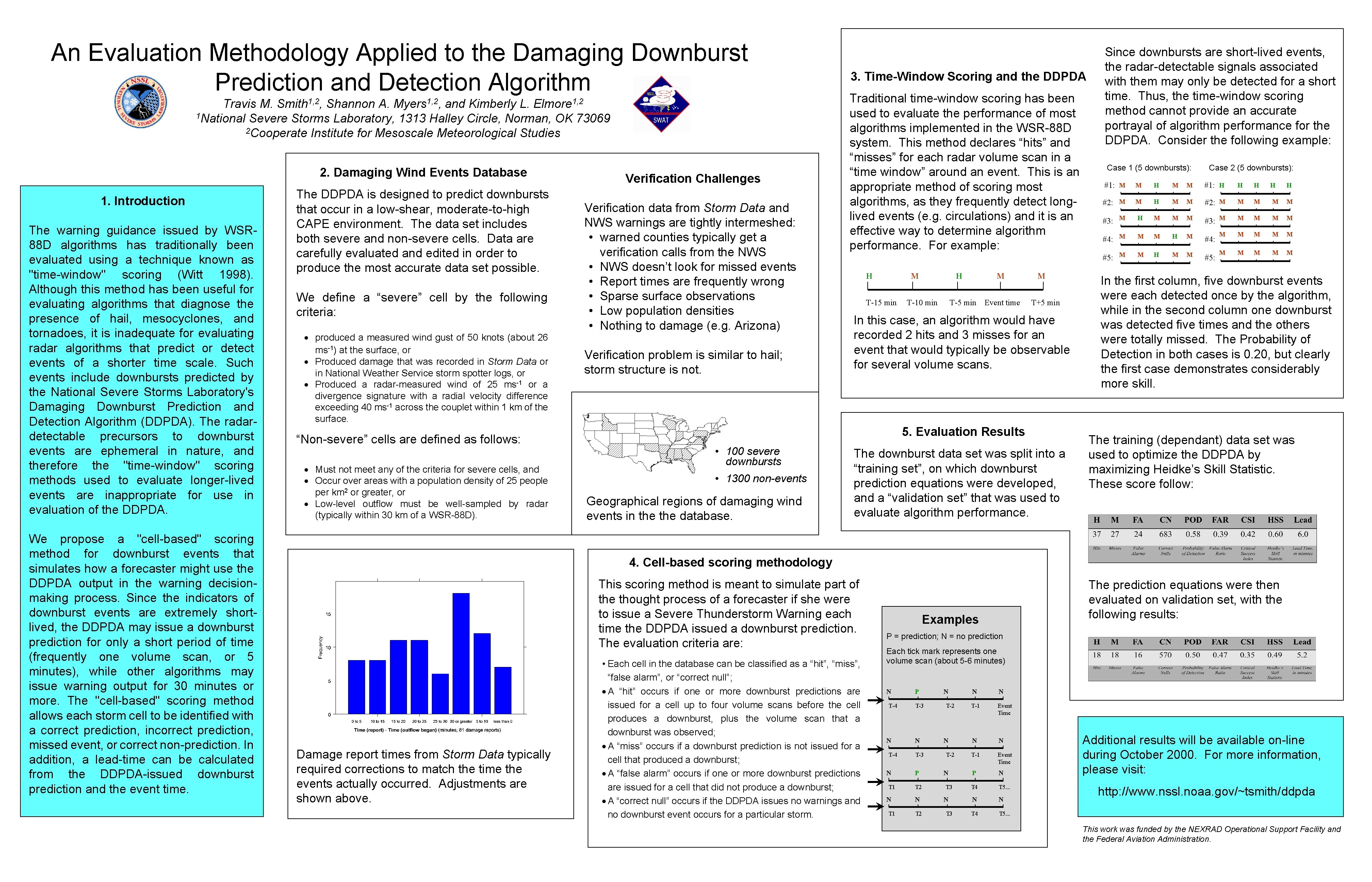

An Evaluation Methodology Applied to the Damaging Downburst Prediction and Detection Algorithm

Travis M. Smith 1,2, Shannon A. Myers 1,2, and Kimberly L. Elmore 1,2
1 National Severe Storms Laboratory, 1313 Halley Circle, Norman, OK 73069
2 Cooperative Institute for Mesoscale Meteorological Studies

1. Introduction

The warning guidance issued by WSR-88D algorithms has traditionally been evaluated using a technique known as "time-window" scoring (Witt 1998). Although this method has been useful for evaluating algorithms that diagnose the presence of hail, mesocyclones, and tornadoes, it is inadequate for evaluating radar algorithms that predict or detect events of a shorter time scale. Such events include downbursts predicted by the National Severe Storms Laboratory's Damaging Downburst Prediction and Detection Algorithm (DDPDA). The radar-detectable precursors to downburst events are ephemeral in nature, and therefore the "time-window" scoring methods used to evaluate longer-lived events are inappropriate for evaluating the DDPDA.

We propose a "cell-based" scoring method for downburst events that simulates how a forecaster might use the DDPDA output in the warning decision-making process. Since the indicators of downburst events are extremely short-lived, the DDPDA may issue a downburst prediction for only a short period of time (frequently one volume scan, or 5 minutes), while other algorithms may issue warning output for 30 minutes or more. The "cell-based" scoring method allows each storm cell to be identified with a correct prediction, incorrect prediction, missed event, or correct non-prediction. In addition, a lead time can be calculated from the time of the DDPDA-issued downburst prediction and the event time.

2. Damaging Wind Events Database

The DDPDA is designed to predict downbursts that occur in a low-shear, moderate-to-high CAPE environment. The data set includes both severe and non-severe cells.
Data are carefully evaluated and edited in order to produce the most accurate data set possible. We define a “severe” cell by the following criteria:

• Produced a measured wind gust of 50 knots (about 26 m s⁻¹) at the surface, or
• Produced damage that was recorded in Storm Data or in National Weather Service storm spotter logs, or
• Produced a radar-measured wind of 25 m s⁻¹ or a divergence signature with a radial velocity difference exceeding 40 m s⁻¹ across the couplet within 1 km of the surface.

“Non-severe” cells are defined as follows:

• Must not meet any of the criteria for severe cells, and
• Occur over areas with a population density of 25 people per km² or greater, or
• Low-level outflow must be well-sampled by radar (typically within 30 km of a WSR-88D).

[Figure: Geographical regions of damaging wind events in the database.]

[Figure: Damage report times from Storm Data typically required corrections to match the time the events actually occurred; the figure shows the adjustments.]

Verification Challenges

Verification data from Storm Data and NWS warnings are tightly intermeshed:

• Warned counties typically get a verification call from the NWS
• The NWS doesn’t look for missed events
• Report times are frequently wrong
• Sparse surface observations
• Low population densities
• Nothing to damage (e.g., Arizona)

The verification problem is similar to that for hail; the storm structure is not.

3. Time-Window Scoring and the DDPDA

Traditional time-window scoring has been used to evaluate the performance of most algorithms implemented in the WSR-88D system. This method declares “hits” and “misses” for each radar volume scan in a “time window” around an event. This is an appropriate way to score most algorithms, which frequently detect long-lived events (e.g., circulations), and it is an effective way to determine their performance. For example:

[Figure: timeline of five volume scans (T-15 min, T-10 min, T-5 min, Event time, T+5 min), each marked H (hit) or M (miss).]

In this case, an algorithm would have recorded 2 hits and 3 misses for an event that would typically be observable for several volume scans.

Since downbursts are short-lived events, the radar-detectable signals associated with them may only be detected for a short time. Thus, the time-window scoring method cannot provide an accurate portrayal of algorithm performance for the DDPDA. Consider the following example:

Case 1 (5 downbursts):
#1: M M H M M
#2: M M H M M
#3: M H M M M
#4: M M M H M
#5: M M H M M

Case 2 (5 downbursts):
#1: H H H H H
#2: M M M M M
#3: M M M M M
#4: M M M M M
#5: M M M M M

In Case 1, five downburst events were each detected once by the algorithm, while in Case 2 one downburst was detected five times and the others were missed entirely. The Probability of Detection in both cases is 0.20 (5 hits in 25 volume scans), but the first case clearly demonstrates considerably more skill.

4. Cell-based scoring methodology

This scoring method is meant to simulate part of the thought process of a forecaster if she were to issue a Severe Thunderstorm Warning each time the DDPDA issued a downburst prediction. The evaluation criteria are:

• Each cell in the database can be classified as a “hit”, “miss”, “false alarm”, or “correct null”;
• A “hit” occurs if one or more downburst predictions are issued for a cell up to four volume scans before the cell produces a downburst, or on the volume scan in which the downburst was observed;
• A “miss” occurs if a downburst prediction is not issued for a cell that produced a downburst;
• A “false alarm” occurs if one or more downburst predictions are issued for a cell that did not produce a downburst;
• A “correct null” occurs if the DDPDA issues no downburst predictions and no downburst event occurs for a particular storm.

Examples

P = prediction; N = no prediction. Each tick mark represents one volume scan (about 5-6 minutes).

[Figure: example timelines of P and N labels per volume scan, one indexed relative to the event time (T-4, T-3, T-2, T-1, Event Time) and one indexed by scan number (T1, T2, T3, T4, T5, ...).]

5. Evaluation Results

• 100 severe downbursts
• 1300 non-events

The downburst data set was split into a “training set”, on which downburst prediction equations were developed, and a “validation set” that was used to evaluate algorithm performance.

The training (dependent) data set was used to optimize the DDPDA by maximizing Heidke’s Skill Statistic. These scores follow:

[Table: training set scores]

The prediction equations were then evaluated on the validation set, with the following results:

[Table: validation set results]

Additional results will be available on-line during October 2000. For more information, please visit: http://www.nssl.noaa.gov/~tsmith/ddpda

This work was funded by the NEXRAD Operational Support Facility and the Federal Aviation Administration.
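The Case 1 and Case 2 comparison from the time-window discussion can be checked with a few lines of Python. This is a minimal sketch, not the authors' scoring code: the function names are hypothetical, the Case 2 rows are filled in from the text's description (one downburst detected on all five scans, the other four never detected), and `event_based_pod` is a simplification that counts an event as detected if any scan in its window is a hit, without applying the four-scan look-back rule.

```python
# Each string is one downburst event; each character is one volume scan
# in the time window around it (H = hit, M = miss).
CASE_1 = ["MMHMM", "MMHMM", "MHMMM", "MMMHM", "MMHMM"]
# Assumption: filled in from the text's description of Case 2.
CASE_2 = ["HHHHH", "MMMMM", "MMMMM", "MMMMM", "MMMMM"]


def time_window_pod(events):
    """POD when every volume scan in every window is scored separately."""
    scans = "".join(events)
    return scans.count("H") / len(scans)


def event_based_pod(events):
    """POD when each event counts once, detected if any scan is a hit."""
    detected = sum(1 for scans in events if "H" in scans)
    return detected / len(events)


for name, case in (("Case 1", CASE_1), ("Case 2", CASE_2)):
    print(name, time_window_pod(case), event_based_pod(case))
```

Scoring per scan cannot separate the two cases, while scoring per event does, which is the motivation given for the cell-based method.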
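The cell-based criteria can also be sketched in Python. The sketch below rests on several assumptions that the poster does not state: a cell is represented as a list of per-scan booleans plus the index of the event scan; a prediction issued more than four scans before the event does not earn a hit; lead time is measured in volume scans from the earliest qualifying prediction; and the skill score uses the standard 2x2 contingency-table form of the Heidke skill score. All function and variable names are hypothetical.

```python
from collections import Counter
from typing import List, Optional, Tuple

LOOKBACK_SCANS = 4  # predictions up to four volume scans before the event count


def score_cell(predictions: List[bool],
               event_scan: Optional[int]) -> Tuple[str, Optional[int]]:
    """Classify one cell and return (category, lead time in volume scans).

    predictions[i] is True if a downburst prediction was issued on scan i.
    event_scan is the index of the scan on which the downburst was observed,
    or None if the cell produced no downburst.
    """
    if event_scan is None:
        # No event: any prediction at all makes the cell a false alarm.
        return ("false alarm" if any(predictions) else "correct null", None)

    # Scans that can earn a hit: up to four before the event, plus the event scan.
    first = max(0, event_scan - LOOKBACK_SCANS)
    for scan in range(first, event_scan + 1):
        if predictions[scan]:
            # Assumption: lead time is taken from the earliest qualifying prediction.
            return ("hit", event_scan - scan)
    return ("miss", None)


def heidke_skill_score(hits: int, false_alarms: int,
                       misses: int, correct_nulls: int) -> float:
    """Standard 2x2 Heidke skill score; returns 0.0 for a degenerate table."""
    a, b, c, d = hits, false_alarms, misses, correct_nulls
    denom = (a + c) * (c + d) + (a + b) * (b + d)
    return 2.0 * (a * d - b * c) / denom if denom else 0.0


# Four illustrative cells (made-up data, one per category).
cells = [
    ([False, True, False, False, False], 4),      # predicted 3 scans early
    ([False, False, False, False, False], 2),     # event, never predicted
    ([False, True, False], None),                 # predicted, no event
    ([False, False, False], None),                # quiet cell, no prediction
]
tally = Counter(score_cell(preds, event)[0] for preds, event in cells)
hss = heidke_skill_score(tally["hit"], tally["false alarm"],
                         tally["miss"], tally["correct null"])
print(dict(tally), hss)
```

Multiplying the returned lead time by the volume scan interval (about 5-6 minutes) gives an approximate lead time in minutes.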
