AMPI: Adaptive MPI
Gengbin Zheng
Parallel Programming Laboratory, University of Illinois at Urbana-Champaign

Outline
• Motivation
• AMPI: Overview
• Benefits of AMPI
• Converting MPI Codes to AMPI
• Handling Global/Static Variables
• Running AMPI Programs
• AMPI Status
• AMPI References, Conclusion

AMPI: Motivation
Challenges
• New-generation parallel applications are:
  • Dynamically varying: load shifts during execution
  • Adaptively refined
  • Composed of multi-physics modules
• Typical MPI implementations:
  • Not naturally suited to dynamic applications
  • Available processor set may not match the algorithm
Alternative: Adaptive MPI (AMPI)
• MPI & Charm++ virtualization: VPs ("Virtual Processors")


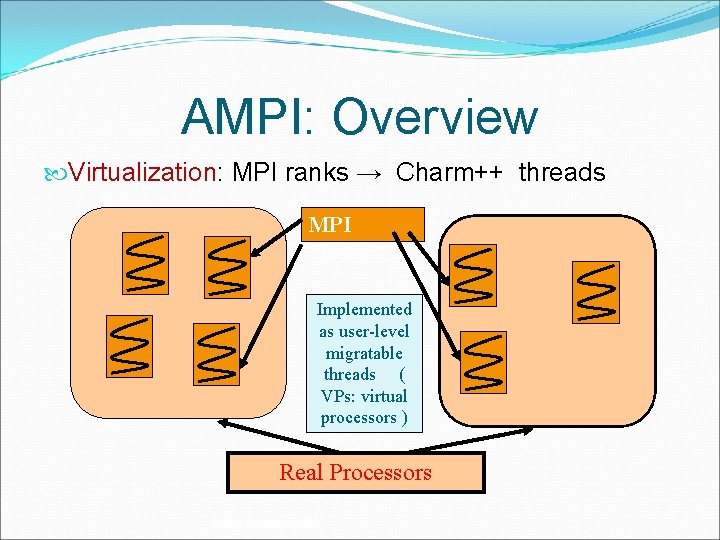

AMPI: Overview
Virtualization: MPI ranks → Charm++ threads
• MPI "tasks" are implemented as user-level migratable threads (VPs: virtual processors)
• The VPs are mapped onto the real processors

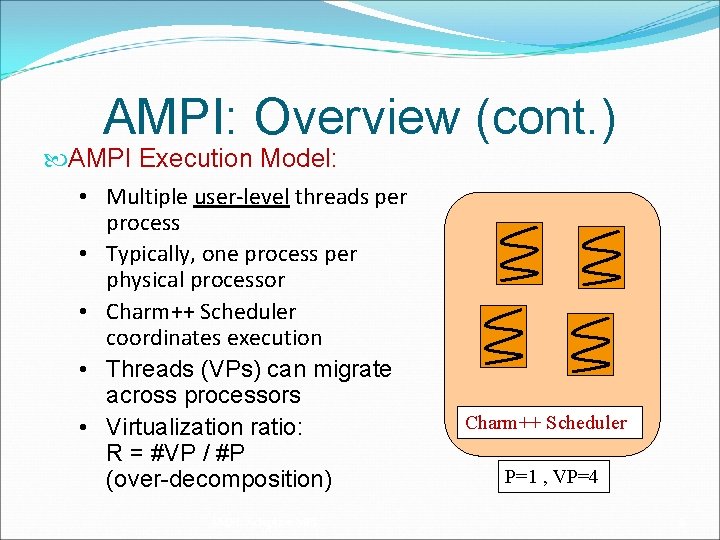

AMPI: Overview (cont.)
AMPI Execution Model:
• Multiple user-level threads per process
• Typically, one process per physical processor
• Charm++ Scheduler coordinates execution
• Threads (VPs) can migrate across processors
• Virtualization ratio: R = #VP / #P (over-decomposition)
Figure: Charm++ Scheduler with P=1, VP=4

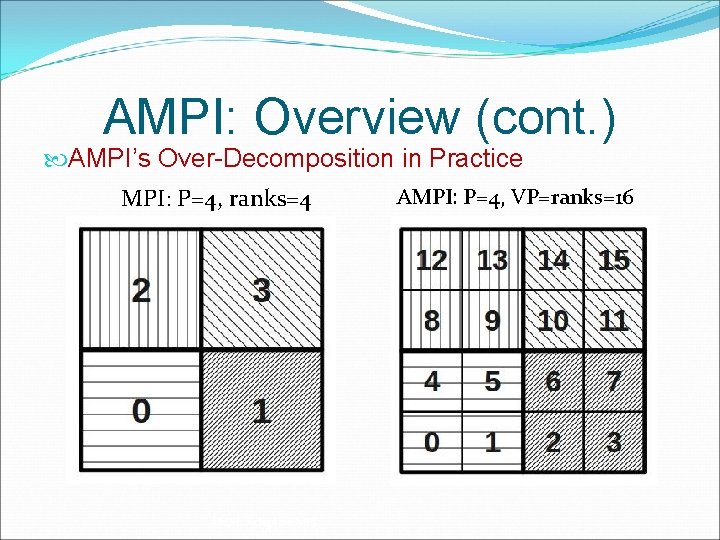

AMPI: Overview (cont.)
AMPI's Over-Decomposition in Practice
• MPI: P=4, ranks=4
• AMPI: P=4, VP=ranks=16


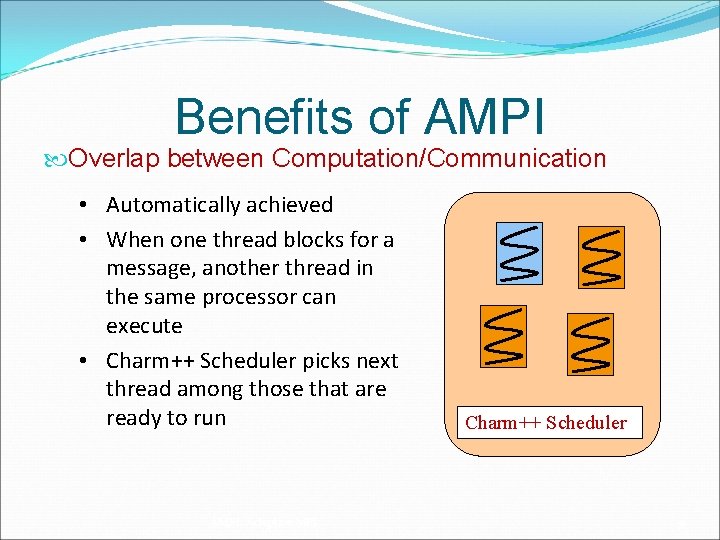

Benefits of AMPI
Overlap between Computation/Communication
• Automatically achieved
• When one thread blocks for a message, another thread on the same processor can execute
• Charm++ Scheduler picks the next thread among those that are ready to run

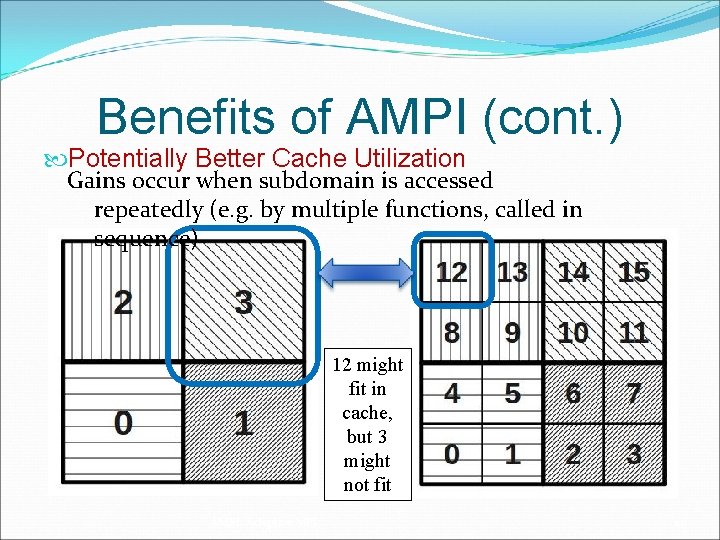

Benefits of AMPI (cont.)
Potentially Better Cache Utilization
• Gains occur when a subdomain is accessed repeatedly (e.g., by multiple functions called in sequence)
• In the figure, 1 and 2 might fit in cache, but 3 might not

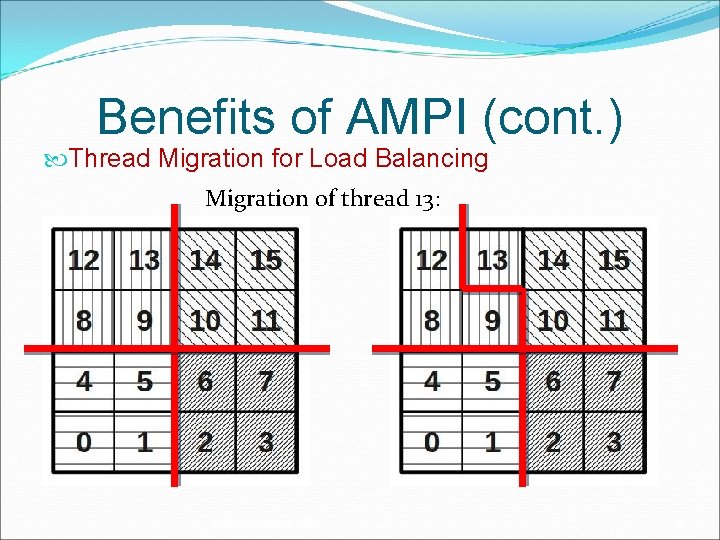

Benefits of AMPI (cont.)
Thread Migration for Load Balancing
• Migration of thread 13:

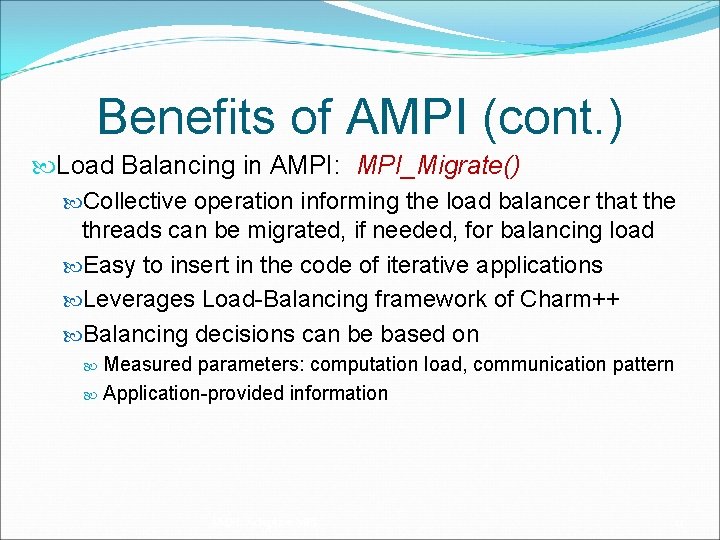

Benefits of AMPI (cont.)
Load Balancing in AMPI: MPI_Migrate()
• Collective operation informing the load balancer that the threads can be migrated, if needed, to balance load
• Easy to insert in the code of iterative applications
• Leverages the load-balancing framework of Charm++
• Balancing decisions can be based on:
  • Measured parameters: computation load, communication pattern
  • Application-provided information

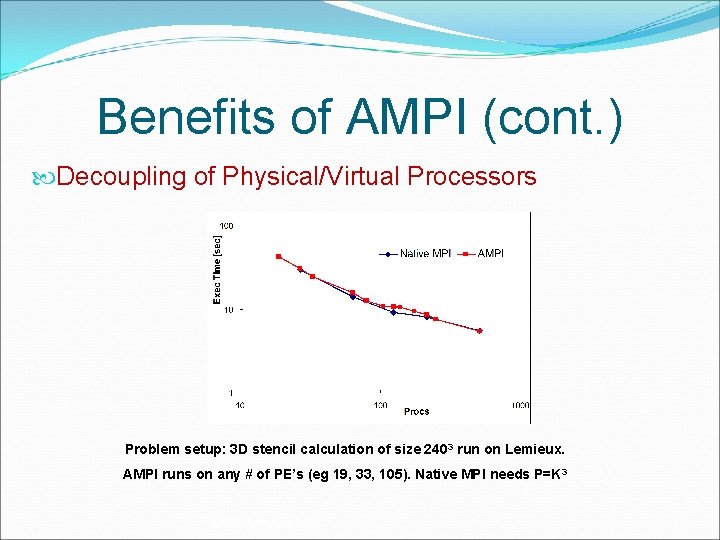

Benefits of AMPI (cont.)
Decoupling of Physical/Virtual Processors
• Problem setup: 3D stencil calculation of size 240^3 run on Lemieux
• AMPI runs on any number of PEs (e.g., 19, 33, 105)
• Native MPI needs P = K^3

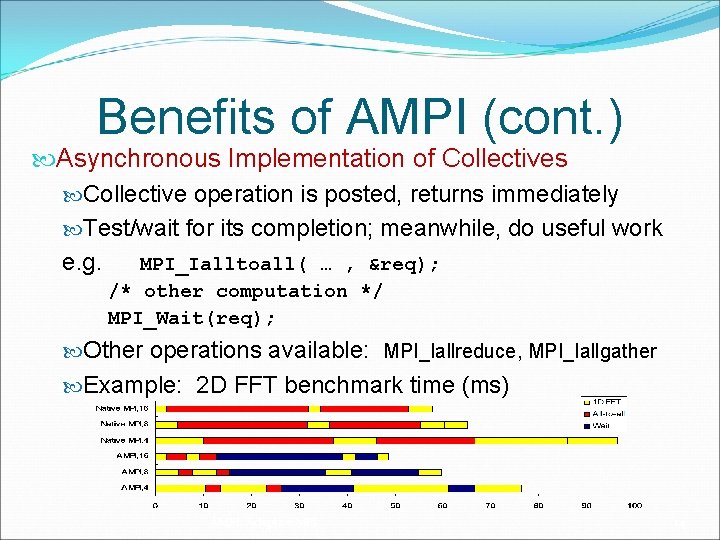

Benefits of AMPI (cont.)
Asynchronous Implementation of Collectives
• Collective operation is posted and returns immediately
• Test/wait for its completion; meanwhile, do useful work, e.g.:
    MPI_Ialltoall( … , &req);
    /* other computation */
    MPI_Wait(&req, &status);
• Other operations available: MPI_Iallreduce, MPI_Iallgather
• Example: 2D FFT benchmark (figure: time in ms)

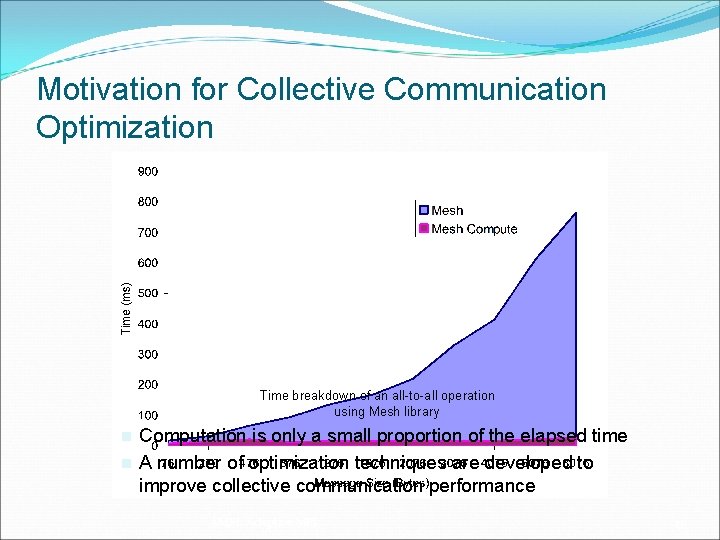

Motivation for Collective Communication Optimization
• Time breakdown of an all-to-all operation using the Mesh library
• Computation is only a small proportion of the elapsed time
• A number of optimization techniques have been developed to improve collective communication performance

Benefits of AMPI (cont.)
Fault Tolerance via Checkpoint/Restart
• State of application checkpointed to disk or memory
• Capable of restarting on a different number of physical processors!
• Synchronous checkpoint, collective call:
  • On-disk: MPI_Checkpoint(DIRNAME)
  • In-memory: MPI_MemCheckpoint(void)
• Restart:
  • On-disk: charmrun +p4 prog +restart DIRNAME
  • In-memory: automatic restart upon failure detection


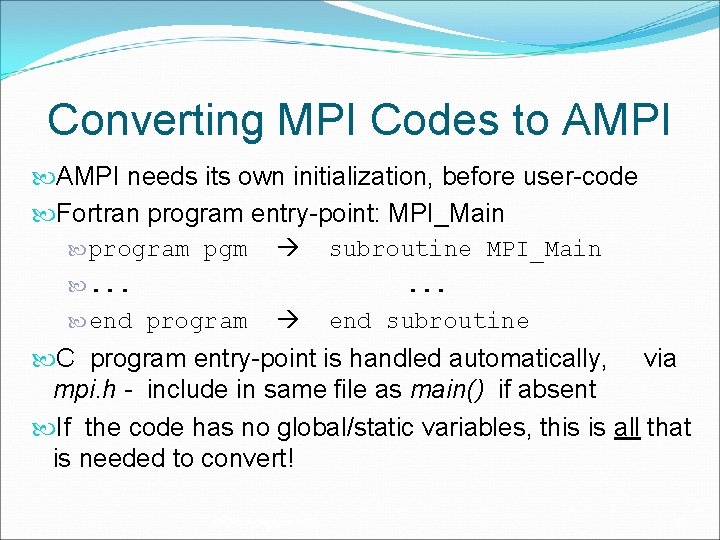

Converting MPI Codes to AMPI
• AMPI needs its own initialization, before user code
• Fortran program entry point: MPI_Main
    program pgm      →    subroutine MPI_Main
      ...                   ...
    end program           end subroutine
• C program entry point is handled automatically via mpi.h (include it in the same file as main() if absent)
• If the code has no global/static variables, this is all that is needed to convert!


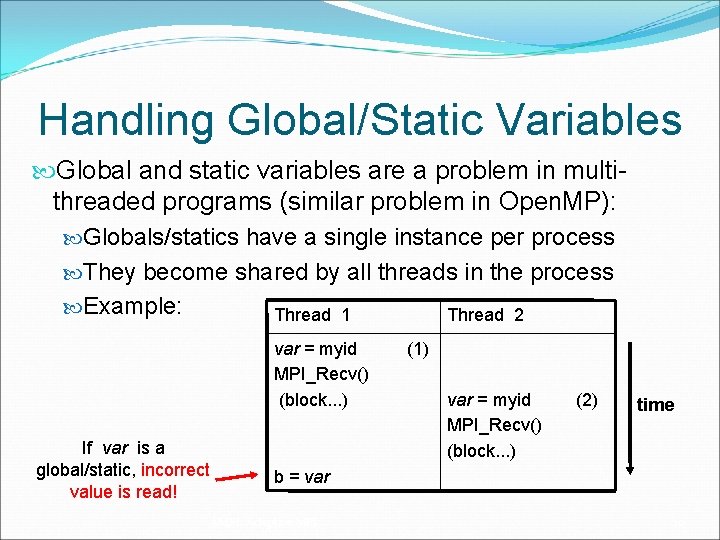

Handling Global/Static Variables
• Global and static variables are a problem in multithreaded programs (similar problem in OpenMP):
  • Globals/statics have a single instance per process
  • They become shared by all threads in the process
• Example (time flows downward):
    Thread 1               Thread 2
    var = myid      (1)
    MPI_Recv()
    (block...)
                           var = myid      (2)
                           MPI_Recv()
                           (block...)
    b = var
• If var is a global/static, an incorrect value is read!
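The interleaving on this slide can be replayed in plain C without MPI or real threads. The sketch below is only an illustration under stated assumptions: the two "threads" are simulated by ordering the assignments by hand, and the function names are hypothetical, not part of AMPI.

```c
/* A single process-wide global, shared by every user-level thread. */
static int var;

/* Hypothetical helper: replays the slide's interleaving for ranks 0 and 1
 * running as two threads in one process. Returns what the resuming thread
 * reads in "b = var". */
int read_with_shared_global(void)
{
    var = 0;     /* (1) thread of rank 0: var = myid, then blocks in MPI_Recv */
    var = 1;     /* (2) thread of rank 1: var = myid, then blocks in MPI_Recv */
    return var;  /* thread of rank 0 resumes: b = var */
}

/* Same interleaving, but each rank owns a private copy of the variable. */
int read_with_private_copy(int reader_rank)
{
    int private_var[2];
    private_var[0] = 0;  /* rank 0 writes its own copy */
    private_var[1] = 1;  /* rank 1 writes its own copy */
    return private_var[reader_rank];
}
```

With the shared global, rank 0 reads rank 1's value; with per-rank copies it reads its own, which is the goal of the privatization schemes that follow.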

Handling Global/Static Variables (cont.)
• General solution: privatize variables in each thread
• Approaches:
  a) Swap global variables
  b) Source-to-source transformation via Photran
  c) Use TLS scheme (in development)
• The specific approach to use must be decided on a case-by-case basis

Handling Global/Static Variables (cont.)
First Approach: Swap global variables
• Leverages ELF, the Executable and Linking Format (e.g., Linux)
• ELF maintains a Global Offset Table (GOT) for globals
• Switch GOT contents at thread context switch
• Implemented in AMPI via build flag -swapglobals
+ No source code changes needed
+ Works with any language (C, C++, Fortran, etc.)
- Does not handle static variables
- Context-switch overhead grows with the number of variables
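As a rough mental model of GOT swapping, the sketch below uses a small pointer table in place of the real GOT. It is a toy under stated assumptions, not AMPI's implementation: the table, the two-thread limit, and all function names are hypothetical.

```c
#include <stddef.h>

#define NUM_GLOBALS 2
#define NUM_THREADS 2

/* Toy stand-in for the GOT: one slot per global, pointing at its storage. */
static int *got[NUM_GLOBALS];

/* Private storage for every global, one set per thread. */
static int thread_globals[NUM_THREADS][NUM_GLOBALS];

/* Called at each context switch: repoint every slot at the incoming
 * thread's copy. The loop shows why the cost grows with the number of
 * globals, while no data is copied. */
void switch_to_thread(int tid)
{
    for (size_t i = 0; i < NUM_GLOBALS; i++)
        got[i] = &thread_globals[tid][i];
}

/* Application code reaches "global 0" indirectly through the table. */
void set_global0(int value) { *got[0] = value; }
int get_global0(void) { return *got[0]; }
```

Each thread sees its own value of the global after a switch, without any change to the code that reads or writes it.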

Handling Global/Static Variables (cont.)
Second Approach: Source-to-source transform
• Move globals/statics to an object, then pass it around
• Automatic solution for Fortran codes: Photran
• Similar idea can be applied to C/C++ codes
+ Totally portable across systems/compilers
+ May improve locality and cache utilization
+ No extra overhead at context switch
- Requires new implementation for each language

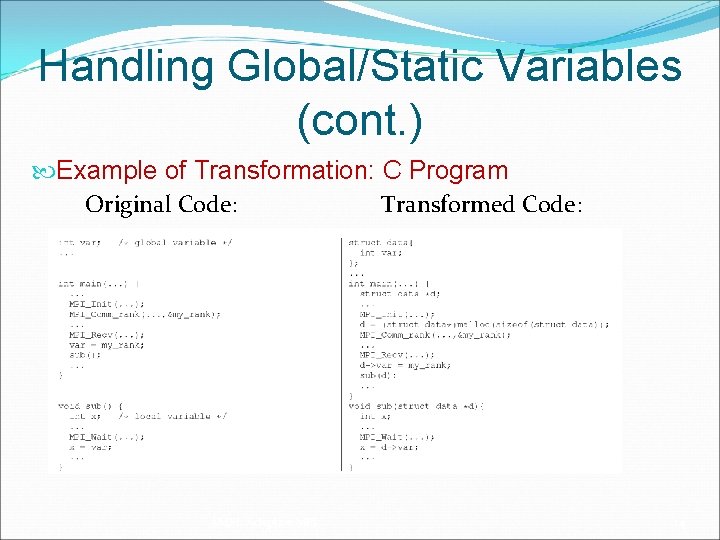

Handling Global/Static Variables (cont.)
Example of Transformation: C Program
Original Code / Transformed Code:
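The slide's own before/after listing is not reproduced in the text, so the following is a hypothetical illustration of the same idea: a former global is moved into an object that is passed to every function that uses it. The names `iteration_count`, `app_globals`, and the `step_*` functions are invented for this sketch.

```c
/* Original style: state lives in a global, shared by all threads in a process. */
static int iteration_count;

void step_original(void) { iteration_count++; }

/* Transformed style: former globals are gathered into one object... */
typedef struct {
    int iteration_count;
} app_globals;

/* ...and every function that used them receives the object explicitly. */
void step_transformed(app_globals *g) { g->iteration_count++; }

int count_transformed(const app_globals *g) { return g->iteration_count; }
```

Because each thread allocates its own `app_globals`, two threads in the same process no longer interfere, and the object is ordinary heap or stack data that can migrate with the thread.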

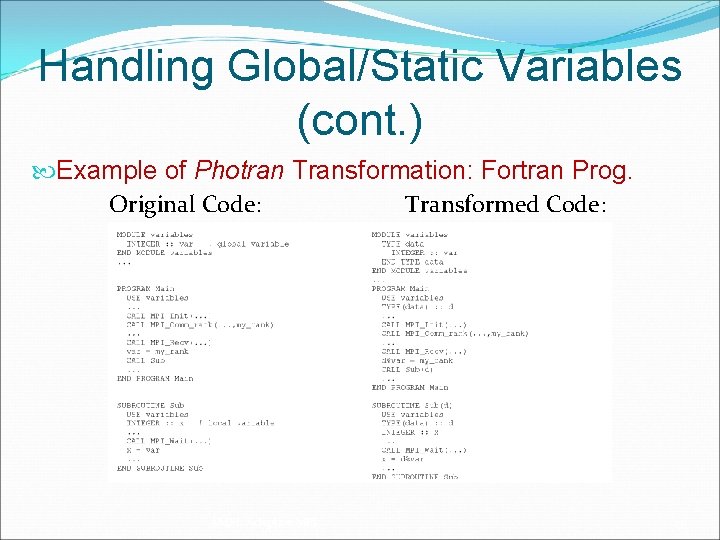

Handling Global/Static Variables (cont.)
Example of Photran Transformation: Fortran Program
Original Code / Transformed Code:

Handling Global/Static Variables (cont.)
Photran Transformation Tool
• Eclipse-based IDE, implemented in Java
• Incorporates automatic refactorings for Fortran codes
• Operates on "pure" Fortran 90 programs
• Code transformation infrastructure:
  • Constructs rewriteable ASTs
  • ASTs are augmented with binding information
Source: Stas Negara & Ralph Johnson, http://www.eclipse.org/photran/

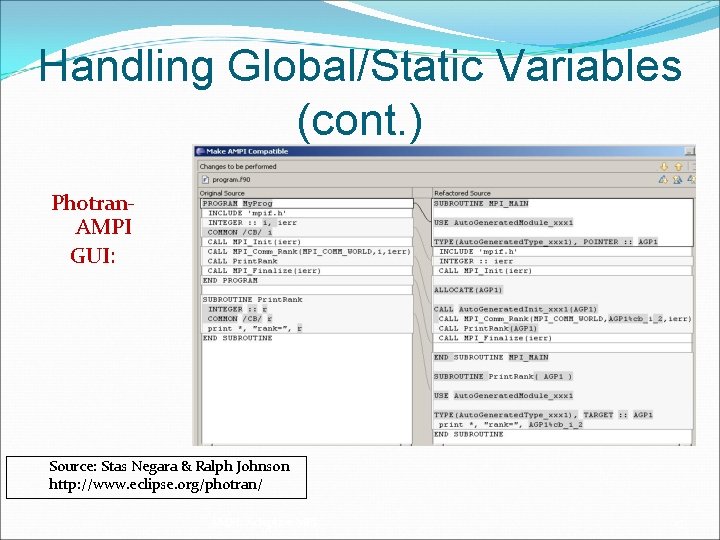

Handling Global/Static Variables (cont.)
Photran-AMPI GUI:
Source: Stas Negara & Ralph Johnson, http://www.eclipse.org/photran/

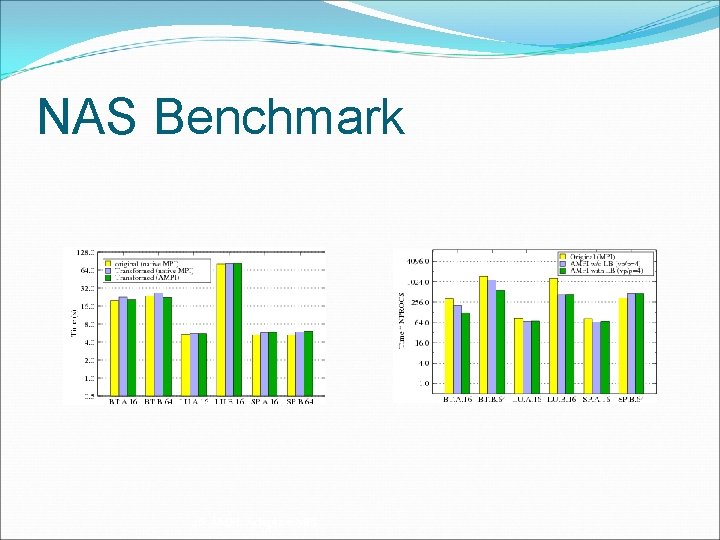

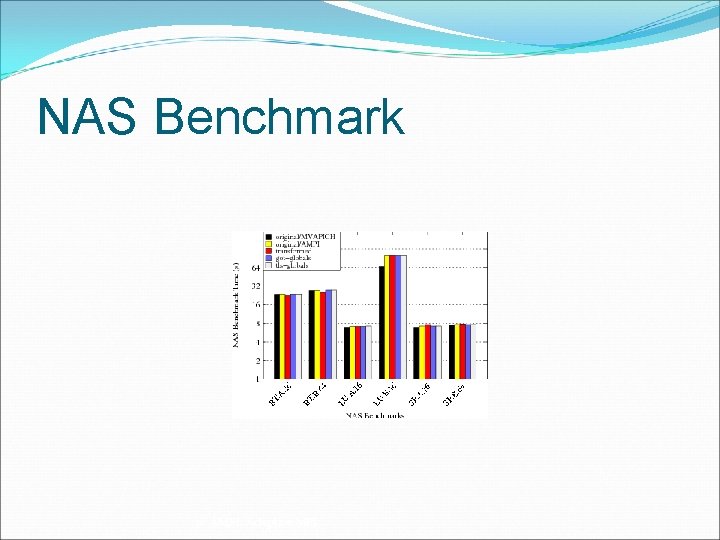

NAS Benchmark

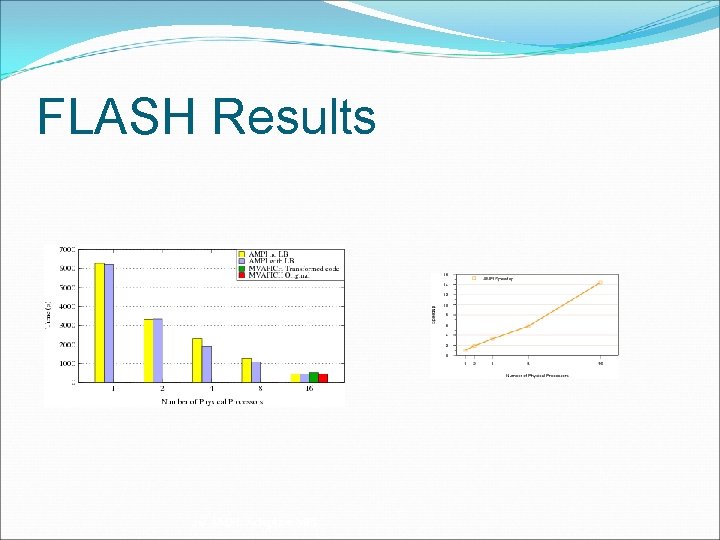

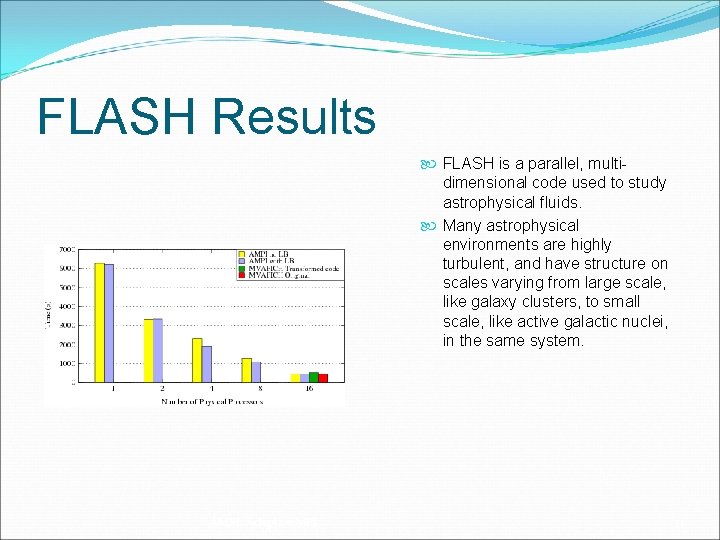

FLASH Results

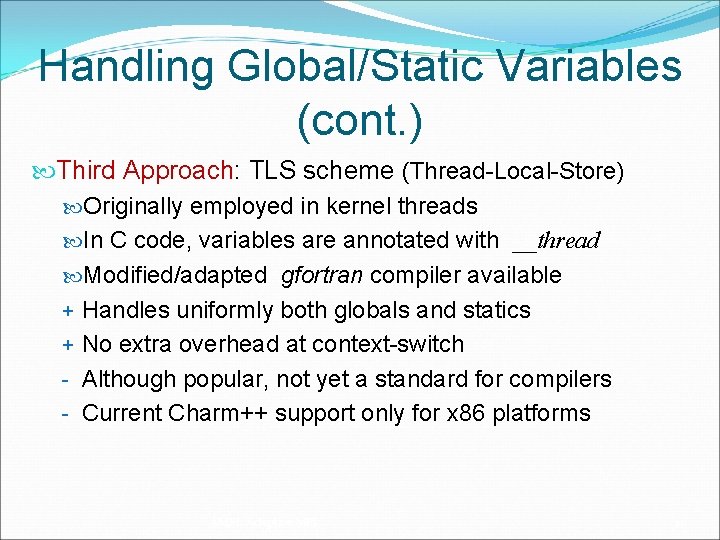

Handling Global/Static Variables (cont.)
Third Approach: TLS scheme (Thread-Local Storage)
• Originally employed in kernel threads
• In C code, variables are annotated with __thread
• Modified/adapted gfortran compiler available
+ Handles both globals and statics uniformly
+ No extra overhead at context switch
- Although popular, not yet a standard for compilers
- Current Charm++ support only for x86 platforms

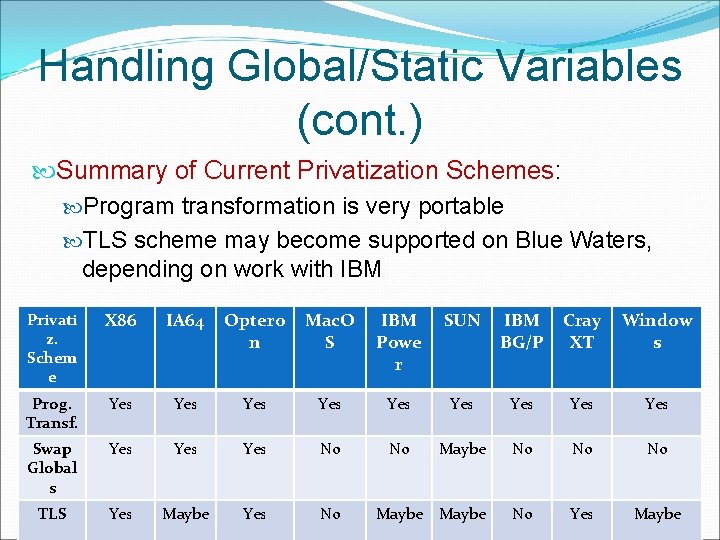

Handling Global/Static Variables (cont.)
Summary of Current Privatization Schemes:
• Program transformation is very portable
• TLS scheme may become supported on Blue Waters, depending on work with IBM
Platforms (table columns): X86, IA64, Opteron, MacOS, IBM Power, SUN, IBM BG/P, Cray XT, Windows
• Prog. Transf.: Yes, Yes, Yes
• Swap Globals: Yes, Yes, No, No, Maybe, No, No, No
• TLS: Yes, Maybe, No, Yes, Maybe, Maybe, Yes, No


FLASH Results
FLASH is a parallel, multidimensional code used to study astrophysical fluids. Many astrophysical environments are highly turbulent and have structure on scales ranging from large (e.g., galaxy clusters) to small (e.g., active galactic nuclei) within the same system.


Object Migration

Object Migration
How do we move work between processors?
• Application-specific methods
  • E.g., move rows of sparse matrix, elements of FEM computation
  • Often very difficult for the application
• Application-independent methods
  • E.g., move entire virtual processor
  • Application's problem decomposition doesn't change

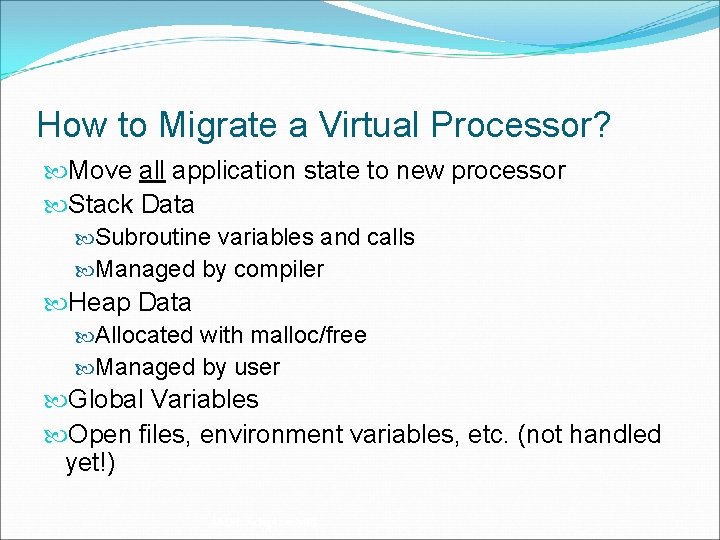

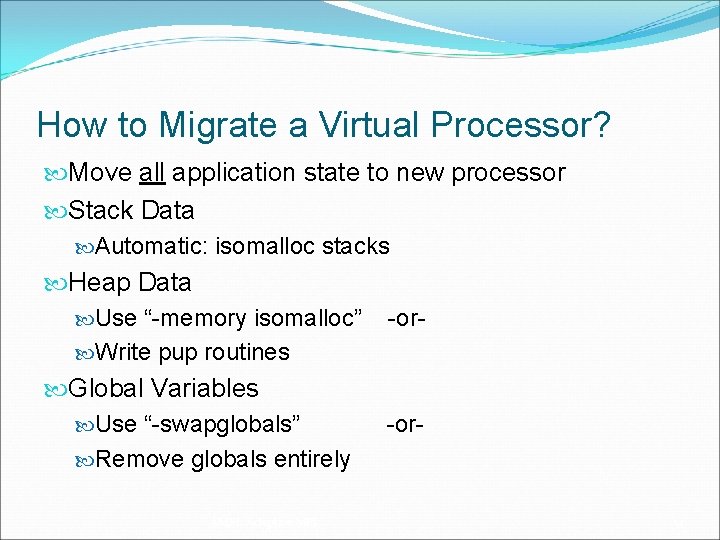

How to Migrate a Virtual Processor?
Move all application state to new processor
• Stack Data
  • Subroutine variables and calls
  • Managed by compiler
• Heap Data
  • Allocated with malloc/free
  • Managed by user
• Global Variables
• Open files, environment variables, etc. (not handled yet!)

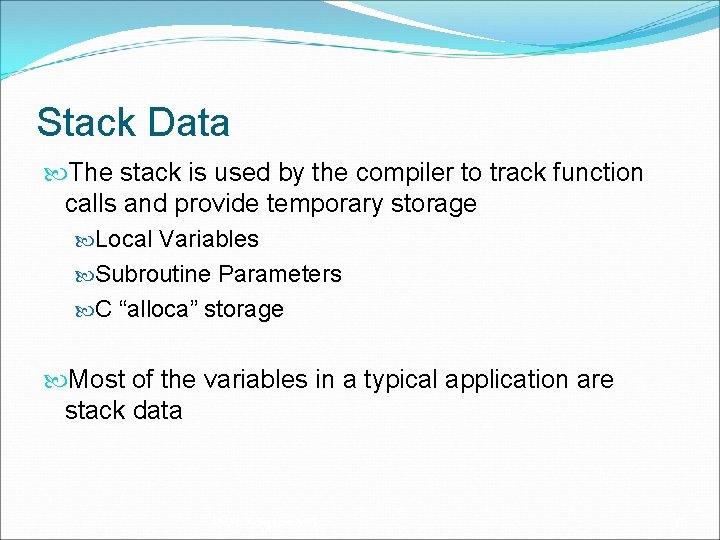

Stack Data
• The stack is used by the compiler to track function calls and provide temporary storage
  • Local variables
  • Subroutine parameters
  • C "alloca" storage
• Most of the variables in a typical application are stack data

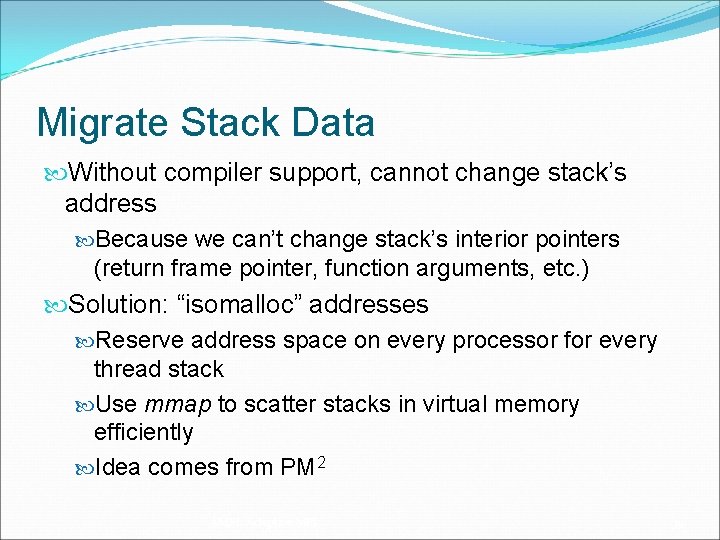

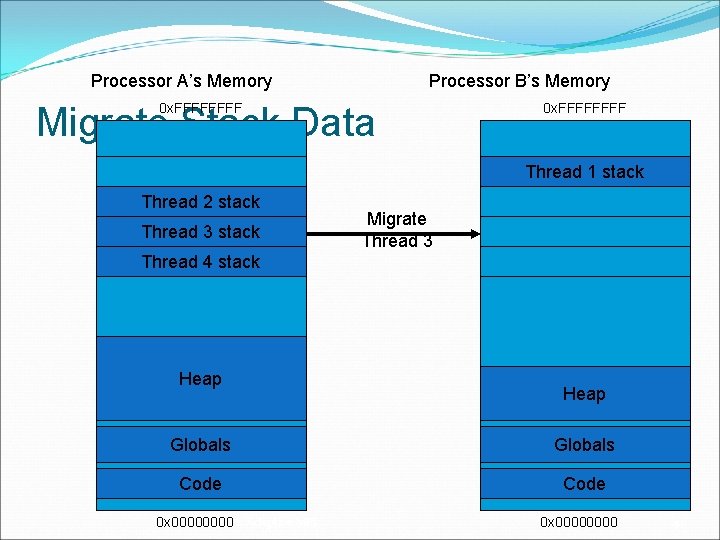

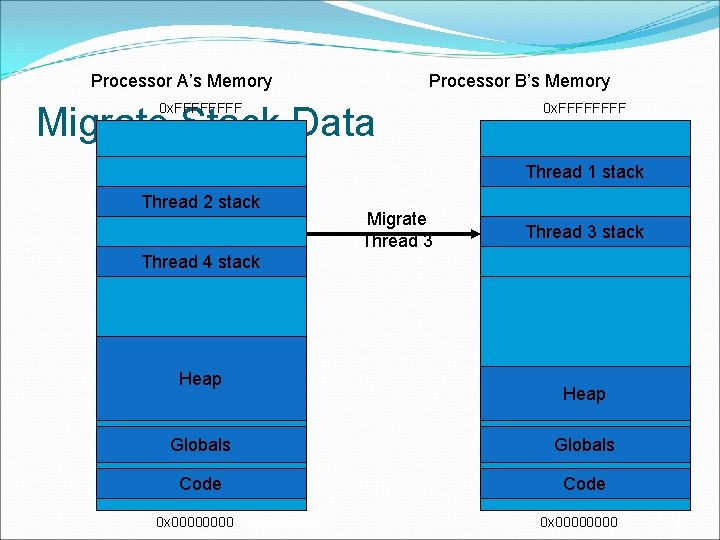

Migrate Stack Data
• Without compiler support, cannot change stack's address
  • Because we can't change stack's interior pointers (return frame pointer, function arguments, etc.)
• Solution: "isomalloc" addresses
  • Reserve address space on every processor for every thread stack
  • Use mmap to scatter stacks in virtual memory efficiently
  • Idea comes from PM2
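The address-reservation idea can be sketched as plain arithmetic: every thread gets a slot whose address depends only on its global thread ID, never on the processor it currently runs on. The constants and function names below are illustrative assumptions, not the values or API used by Charm++, and the sketch omits the actual mmap call.

```c
#include <stdint.h>

/* Assumed, illustrative constants: start of the reserved region and the
 * amount of address space given to each thread's stack slot. */
#define ISO_REGION_BASE 0x100000000000ULL
#define ISO_SLOT_BYTES  (1ULL << 20)   /* 1 MB per thread */

/* Base address of a thread's stack slot. The processor number is not an
 * input, so the slot is identical on every processor. */
uint64_t iso_stack_base(uint64_t global_thread_id)
{
    return ISO_REGION_BASE + global_thread_id * ISO_SLOT_BYTES;
}

/* Inverse mapping: which thread owns a given address in the region. */
uint64_t iso_owner(uint64_t address)
{
    return (address - ISO_REGION_BASE) / ISO_SLOT_BYTES;
}
```

Since the slot is the same everywhere, a migrated stack can be copied to the same virtual address on the destination, so interior pointers remain valid without being rewritten.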

Migrate Stack Data
Figure: Processor A's memory and Processor B's memory, each spanning 0x00000000 to 0xFFFFFFFF and holding code, globals, heap, and thread stacks (threads 1 to 4); thread 3's stack is migrated between the two processors.

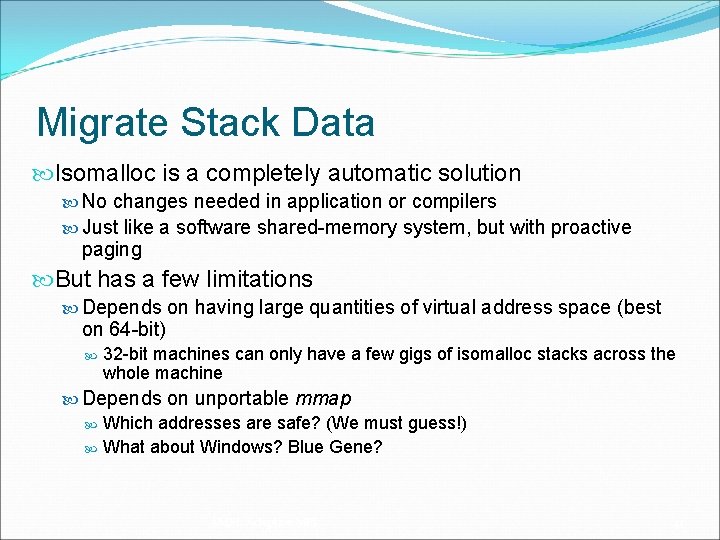

Migrate Stack Data
• Isomalloc is a completely automatic solution
  • No changes needed in application or compilers
  • Just like a software shared-memory system, but with proactive paging
• But it has a few limitations
  • Depends on having large quantities of virtual address space (best on 64-bit)
    • 32-bit machines can only have a few gigabytes of isomalloc stacks across the whole machine
  • Depends on unportable mmap
    • Which addresses are safe? (We must guess!)
    • What about Windows? Blue Gene?

Heap Data
• Heap data is any dynamically allocated data
  • C "malloc" and "free"
  • C++ "new" and "delete"
  • F90 "ALLOCATE" and "DEALLOCATE"
• Arrays and linked data structures are almost always heap data

Migrate Heap Data
• Automatic solution: isomalloc all heap data just like stacks!
  • "-memory isomalloc" link option
  • Overrides malloc/free
  • No new application code needed
  • Same limitations as isomalloc
• Manual solution: application moves its heap data
  • Need to be able to size message buffer, pack data into message, and unpack on other side
  • "pup" abstraction does all three

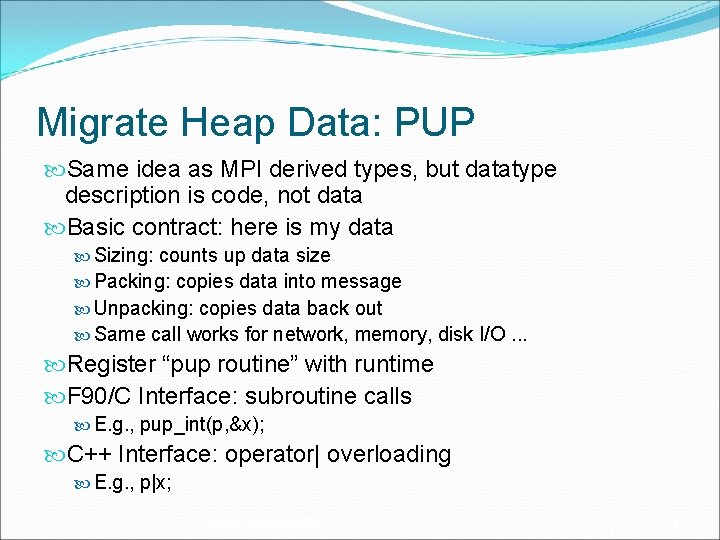

Migrate Heap Data: PUP
• Same idea as MPI derived types, but datatype description is code, not data
• Basic contract: here is my data
  • Sizing: counts up data size
  • Packing: copies data into message
  • Unpacking: copies data back out
  • Same call works for network, memory, disk I/O...
• Register "pup routine" with runtime
• F90/C interface: subroutine calls
  • E.g., pup_int(p, &x);
• C++ interface: operator| overloading
  • E.g., p|x;
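To make the "one routine, three passes" contract concrete, here is a toy pupper in plain C. It is a sketch under stated assumptions and deliberately does not use the Charm++ PUP API: all `toy_*` names are hypothetical, and only the sizing, packing, and unpacking modes are modeled.

```c
#include <stddef.h>
#include <string.h>

typedef enum { TOY_SIZING, TOY_PACKING, TOY_UNPACKING } toy_mode;

typedef struct {
    toy_mode mode;
    char *buf;    /* message buffer (unused while sizing) */
    size_t pos;   /* bytes counted or consumed so far */
} toy_pupper;

/* Core primitive: count, copy in, or copy out n bytes depending on mode. */
void toy_pup_bytes(toy_pupper *p, void *data, size_t n)
{
    if (p->mode == TOY_PACKING)
        memcpy(p->buf + p->pos, data, n);
    else if (p->mode == TOY_UNPACKING)
        memcpy(data, p->buf + p->pos, n);
    p->pos += n;
}

void toy_pup_int(toy_pupper *p, int *x) { toy_pup_bytes(p, x, sizeof *x); }

typedef struct { int nn, ne; } toy_header;

/* One description of the data serves all three passes. */
void toy_pup_header(toy_pupper *p, toy_header *h)
{
    toy_pup_int(p, &h->nn);
    toy_pup_int(p, &h->ne);
}
```

A caller would run `toy_pup_header` once in sizing mode to learn the buffer size, once in packing mode on the source, and once in unpacking mode on the destination, which is why the datatype description can be written a single time.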

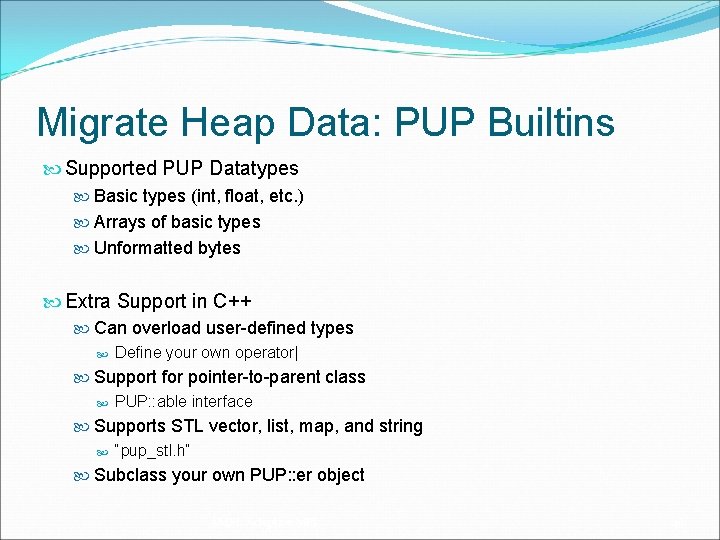

Migrate Heap Data: PUP Builtins
• Supported PUP datatypes
  • Basic types (int, float, etc.)
  • Arrays of basic types
  • Unformatted bytes
• Extra support in C++
  • Can overload user-defined types
    • Define your own operator|
  • Support for pointer-to-parent class
    • PUP::able interface
  • Supports STL vector, list, map, and string
    • "pup_stl.h"
  • Subclass your own PUP::er object

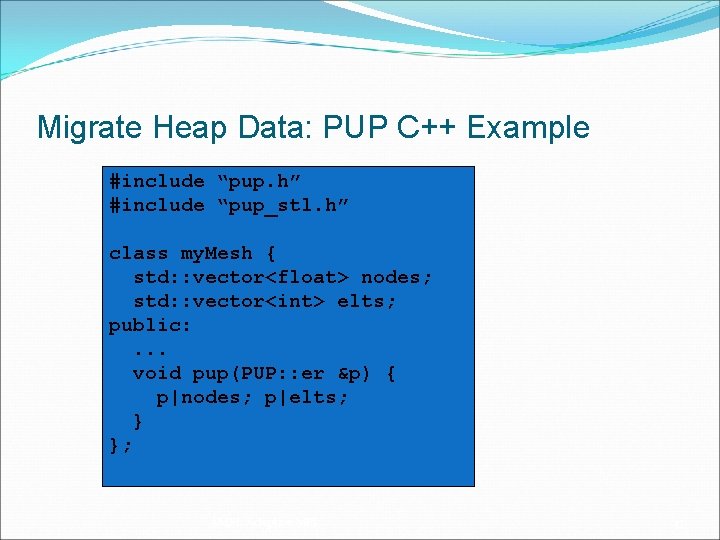

Migrate Heap Data: PUP C++ Example

    #include "pup.h"
    #include "pup_stl.h"

    class myMesh {
      std::vector<float> nodes;
      std::vector<int> elts;
    public:
      ...
      void pup(PUP::er &p) {
        p|nodes;
        p|elts;
      }
    };

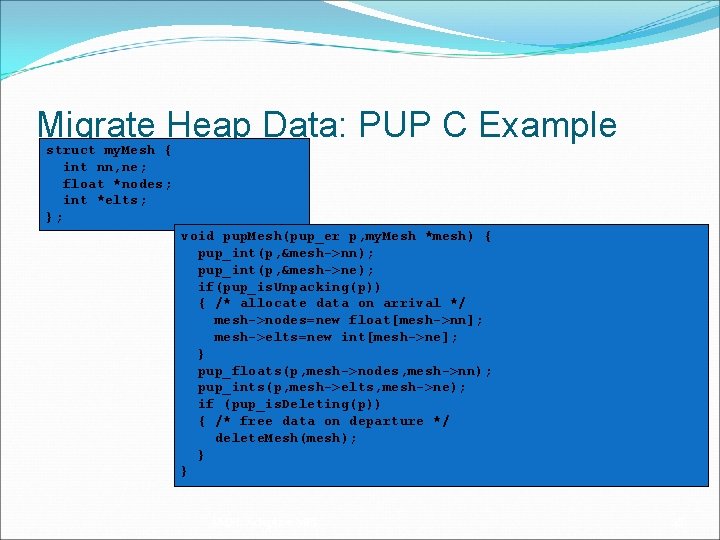

Migrate Heap Data: PUP C Example

    #include <stdlib.h>

    typedef struct myMesh {
      int nn, ne;
      float *nodes;
      int *elts;
    } myMesh;

    void pupMesh(pup_er p, myMesh *mesh) {
      pup_int(p, &mesh->nn);
      pup_int(p, &mesh->ne);
      if (pup_isUnpacking(p)) { /* allocate data on arrival */
        mesh->nodes = (float *)malloc(mesh->nn * sizeof(float));
        mesh->elts = (int *)malloc(mesh->ne * sizeof(int));
      }
      pup_floats(p, mesh->nodes, mesh->nn);
      pup_ints(p, mesh->elts, mesh->ne);
      if (pup_isDeleting(p)) { /* free data on departure */
        deleteMesh(mesh);
      }
    }

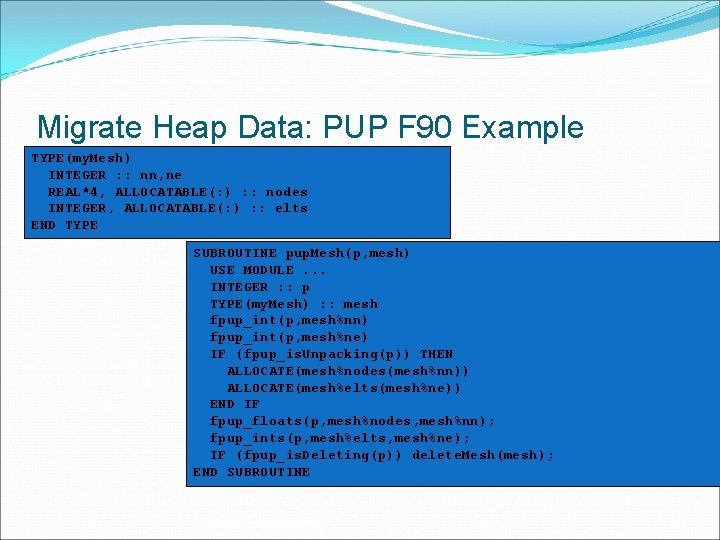

Migrate Heap Data: PUP F90 Example

    TYPE myMesh
      INTEGER :: nn, ne
      REAL*4, ALLOCATABLE, DIMENSION(:) :: nodes
      INTEGER, ALLOCATABLE, DIMENSION(:) :: elts
    END TYPE

    SUBROUTINE pupMesh(p, mesh)
      USE MODULE ...
      INTEGER :: p
      TYPE(myMesh) :: mesh
      CALL fpup_int(p, mesh%nn)
      CALL fpup_int(p, mesh%ne)
      IF (fpup_isUnpacking(p)) THEN
        ALLOCATE(mesh%nodes(mesh%nn))
        ALLOCATE(mesh%elts(mesh%ne))
      END IF
      CALL fpup_floats(p, mesh%nodes, mesh%nn)
      CALL fpup_ints(p, mesh%elts, mesh%ne)
      IF (fpup_isDeleting(p)) CALL deleteMesh(mesh)
    END SUBROUTINE

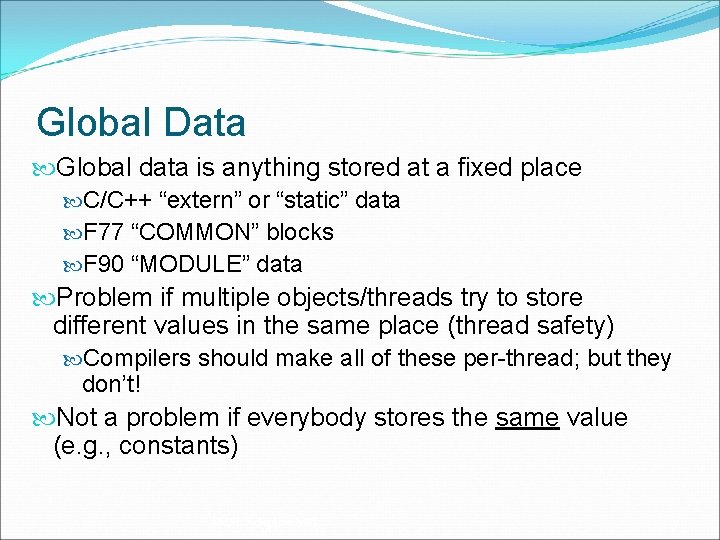

Global Data
• Global data is anything stored at a fixed place
  • C/C++ "extern" or "static" data
  • F77 "COMMON" blocks
  • F90 "MODULE" data
• Problem if multiple objects/threads try to store different values in the same place (thread safety)
  • Compilers should make all of these per-thread, but they don't!
• Not a problem if everybody stores the same value (e.g., constants)

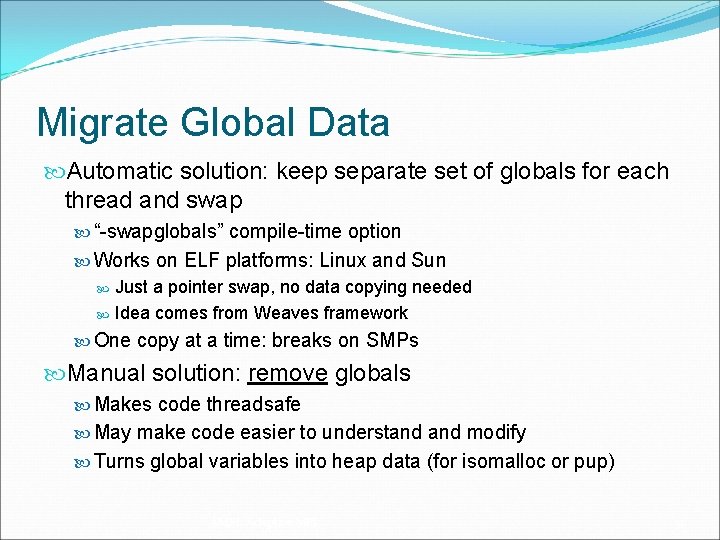

Migrate Global Data
• Automatic solution: keep a separate set of globals for each thread and swap
  • "-swapglobals" compile-time option
  • Works on ELF platforms: Linux and Sun
  • Just a pointer swap, no data copying needed
  • Idea comes from the Weaves framework
  • One copy at a time: breaks on SMPs
• Manual solution: remove globals
  • Makes code thread-safe
  • May make code easier to understand and modify
  • Turns global variables into heap data (for isomalloc or pup)

How to Migrate a Virtual Processor?
Move all application state to new processor
• Stack Data
  • Automatic: isomalloc stacks
• Heap Data
  • Use "-memory isomalloc", or
  • Write pup routines
• Global Variables
  • Use "-swapglobals", or
  • Remove globals entirely

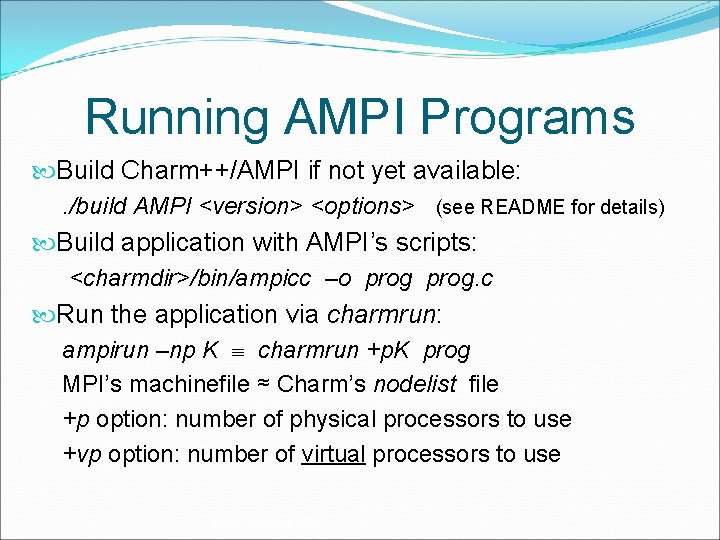

Running AMPI Programs
• Build Charm++/AMPI if not yet available:
    ./build AMPI <version> <options>    (see README for details)
• Build application with AMPI's scripts:
    <charmdir>/bin/ampicc -o prog prog.c
• Run the application via charmrun:
    ampirun -np K
    charmrun +pK prog
  • MPI's machinefile ≈ Charm's nodelist file
  • +p option: number of physical processors to use
  • +vp option: number of virtual processors to use

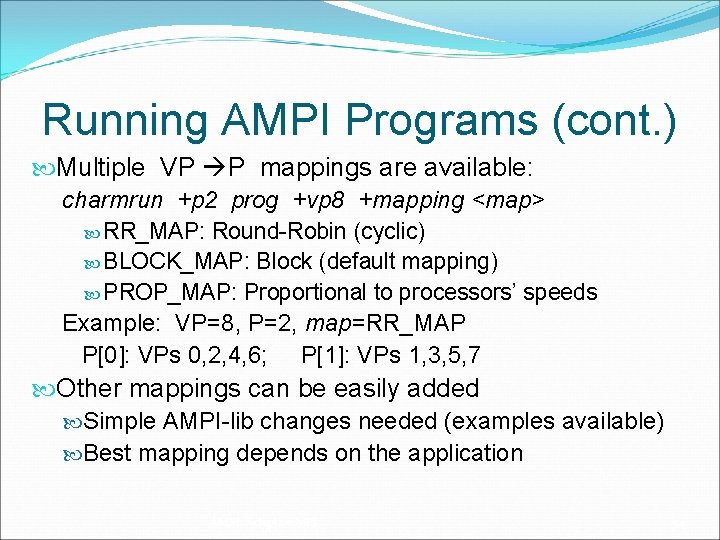

Running AMPI Programs (cont.)
• Multiple VP-to-P mappings are available:
    charmrun +p2 prog +vp8 +mapping <map>
  • RR_MAP: Round-Robin (cyclic)
  • BLOCK_MAP: Block (default mapping)
  • PROP_MAP: Proportional to processors' speeds
• Example: VP=8, P=2, map=RR_MAP
  • P[0]: VPs 0, 2, 4, 6; P[1]: VPs 1, 3, 5, 7
• Other mappings can be easily added
  • Simple AMPI-lib changes needed (examples available)
• Best mapping depends on the application
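The round-robin and block mappings can be written as two small index functions. This is a sketch of the arithmetic only, assuming for the block case that the number of VPs is a multiple of the number of processors; the function names are hypothetical and this is not the AMPI library code.

```c
/* Round-robin (cyclic): consecutive VPs go to consecutive processors. */
int rr_map(int vp, int num_p)
{
    return vp % num_p;
}

/* Block: contiguous ranges of VPs share a processor.
 * Assumes num_vp is a multiple of num_p to keep the sketch short. */
int block_map(int vp, int num_vp, int num_p)
{
    int vps_per_p = num_vp / num_p;
    return vp / vps_per_p;
}
```

For VP=8 and P=2, `rr_map` places VPs 0, 2, 4, 6 on P[0] and VPs 1, 3, 5, 7 on P[1], matching the slide's example, while `block_map` places VPs 0 to 3 on P[0] and VPs 4 to 7 on P[1].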

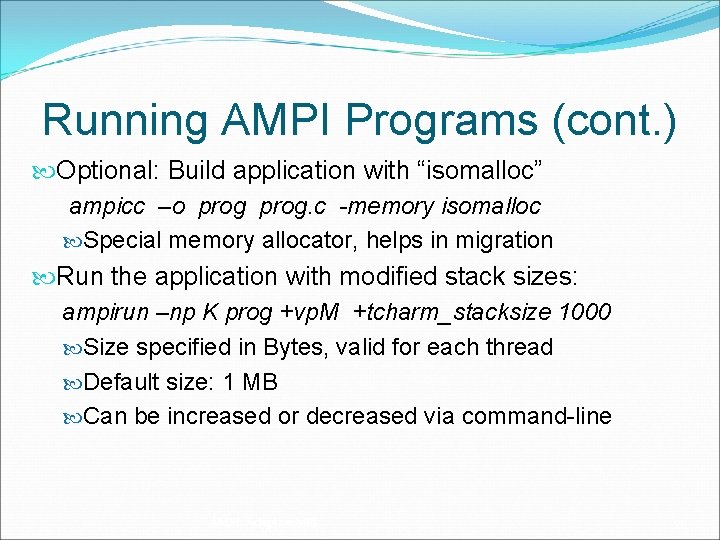

Running AMPI Programs (cont.)
• Optional: build application with "isomalloc"
    ampicc -o prog prog.c -memory isomalloc
  • Special memory allocator, helps in migration
• Run the application with modified stack sizes:
    ampirun -np K prog +vpM +tcharm_stacksize 1000
  • Size specified in bytes, valid for each thread
  • Default size: 1 MB
  • Can be increased or decreased via the command line

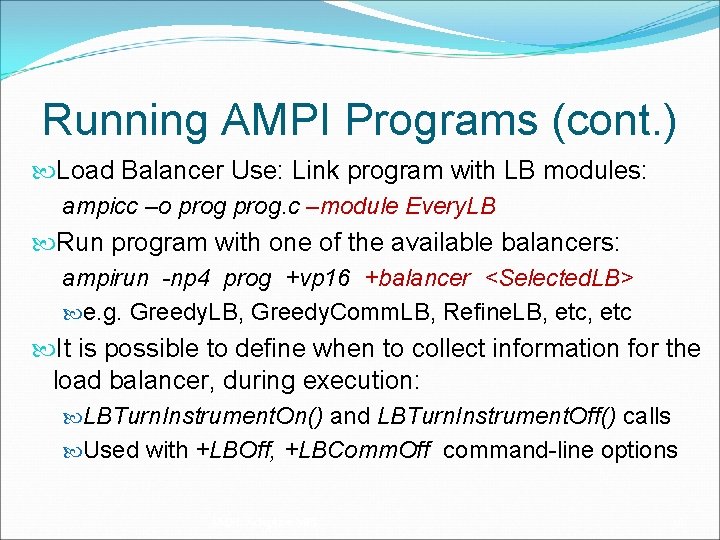

Running AMPI Programs (cont.)
Load Balancer Use:
• Link program with LB modules:
    ampicc -o prog prog.c -module EveryLB
• Run program with one of the available balancers:
    ampirun -np 4 prog +vp16 +balancer <SelectedLB>
  • E.g., GreedyLB, GreedyCommLB, RefineLB, etc.
• It is possible to define when to collect information for the load balancer during execution:
  • LBTurnInstrumentOn() and LBTurnInstrumentOff() calls
  • Used with +LBOff, +LBCommOff command-line options

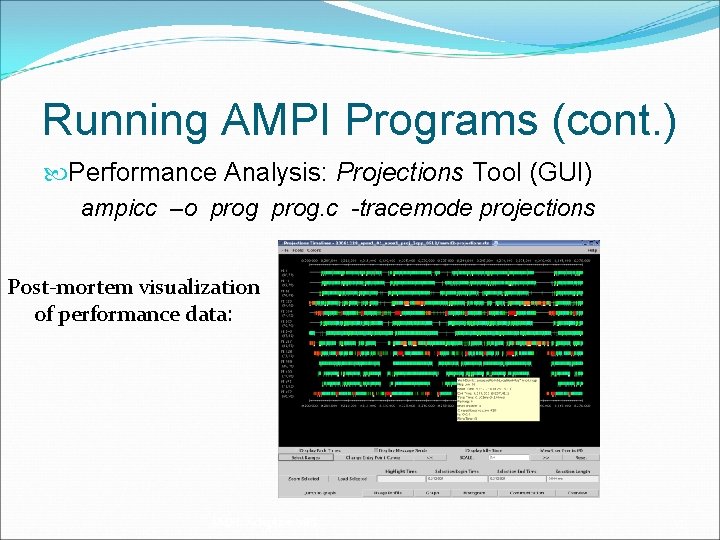

Running AMPI Programs (cont.)
Performance Analysis: Projections Tool (GUI)
    ampicc -o prog prog.c -tracemode projections
• Post-mortem visualization of performance data


AMPI Status
• Compliance with the MPI-1.1 standard
  • Missing: error handling, profiling interface
• Partial MPI-2 support:
  • Some new functions implemented when needed
  • ROMIO integrated for parallel I/O
  • Major missing features: dynamic process management, language bindings
• Most missing features are documented
• Tested periodically via the MPICH-1 test suite

AMPI Applications
• Rocstar: rocket simulation
• Fractography 3D
• FLASH
• BRAM


AMPI References
• Charm++ site for manuals: http://charm.cs.uiuc.edu/manuals/
• Papers on AMPI: http://charm.cs.uiuc.edu/research/ampi/index.shtml#Papers
• AMPI source code (part of Charm++ distribution): http://charm.cs.uiuc.edu/download/
• AMPI's current funding support (indirect):
  • NSF/NCSA Blue Waters (Charm++, BigSim)
  • DoE Colony2 project (Load Balancing, Fault Tolerance)

Conclusion
• AMPI makes exciting features from Charm++ available for many MPI applications!
• VPs in AMPI are used in BigSim to emulate processors of future machines (see next talk)
• We support AMPI through our regular mailing list: charm@cs.illinois.edu
• Feedback on AMPI is always welcome
Thank You! Questions?
