
AlphaGo versions

- AlphaGo Fan: beat Fan Hui, 2015/10/5~9 (Mastering the game of Go with deep neural networks and tree search, published 2016/1/27)
- AlphaGo Lee: beat Lee Sedol, 2016/3/9~15
- AlphaGo Master: 2016/12/29~2017/1/4
- AlphaGo Zero (Mastering the Game of Go without Human Knowledge): 2017/10/19
- AlphaZero (Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm): 2017/12/5

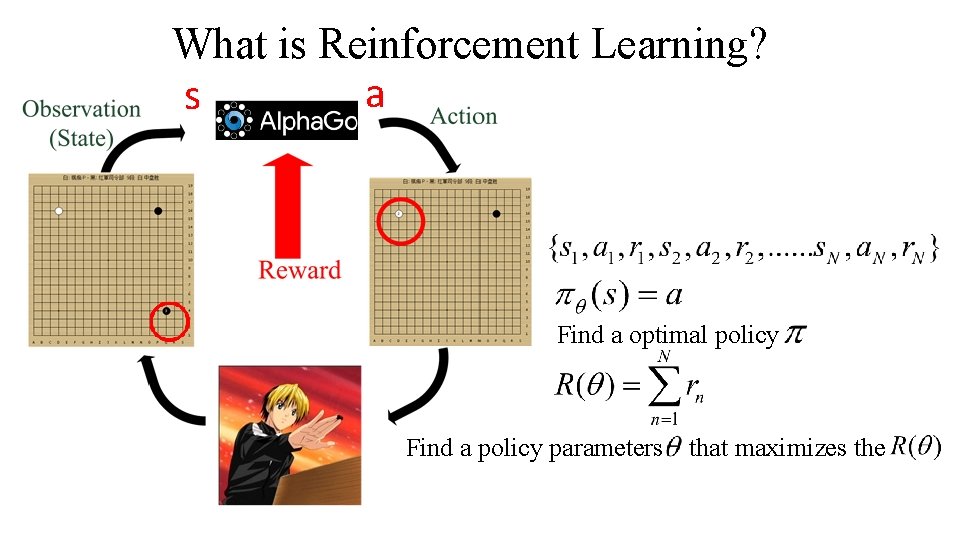

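What is Reinforcement Learning? An agent observes a state s and chooses an action a. The goal is to find an optimal policy, that is, to find the policy parameters that maximize the expected cumulative reward.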
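One standard way to write this objective is shown here. The notation is an assumption for illustration and is not taken from the slide: $\pi_{\theta}$ is a policy with parameters $\theta$, $\tau$ is a trajectory of states and actions, $r_t$ is the reward at step $t$, and $\gamma$ is a discount factor.

$$
\theta^{*} = \arg\max_{\theta} J(\theta), \qquad J(\theta) = \mathbb{E}_{\tau \sim \pi_{\theta}}\left[\sum_{t=0}^{T} \gamma^{t} r_{t}\right]
$$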

Mastering the game of Go with deep neural networks and tree search

David Silver 1*, Aja Huang 1*, Chris J. Maddison 1, Arthur Guez 1, Laurent Sifre 1, George van den Driessche 1, Julian Schrittwieser 1, Ioannis Antonoglou 1, Veda Panneershelvam 1, Marc Lanctot 1, Sander Dieleman 1, Dominik Grewe 1, John Nham 2, Nal Kalchbrenner 1, Ilya Sutskever 2, Timothy Lillicrap 1, Madeleine Leach 1, Koray Kavukcuoglu 1, Thore Graepel 1 & Demis Hassabis 1

Nature 2016/1/27

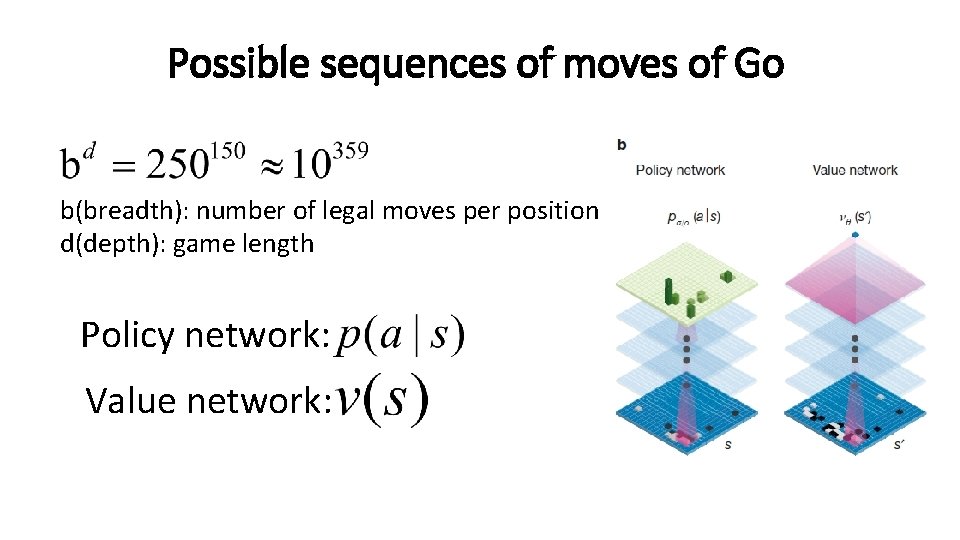

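Possible sequences of moves in Go: approximately b^d

- b (breadth): number of legal moves per position
- d (depth): game length
- Policy network: reduces the effective breadth of the search
- Value network: reduces the effective depth of the search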

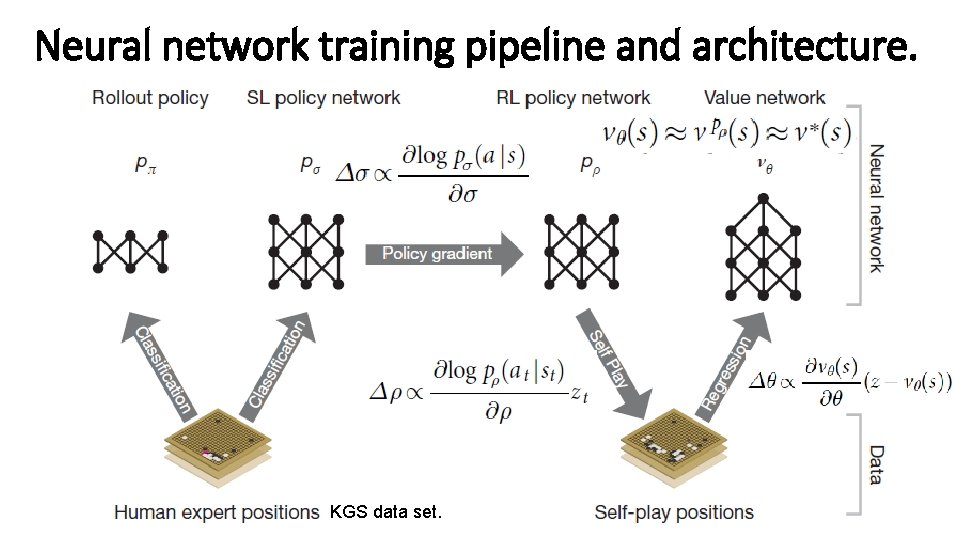
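To get a feel for why exhaustive search is infeasible, the sketch below computes the order of magnitude of b^d. The figures used (b ≈ 35, d ≈ 80 for chess; b ≈ 250, d ≈ 150 for Go) are the rough values quoted in the AlphaGo paper; the function name `log10_sequences` is made up for this illustration.

```python
import math


def log10_sequences(breadth: int, depth: int) -> float:
    """Return log10(breadth ** depth), i.e. the exponent of 10 for b^d."""
    # Work in log space so the result stays a small float
    # instead of a number with hundreds of digits.
    return depth * math.log10(breadth)


# Rough breadth/depth figures quoted in the AlphaGo paper.
chess_exponent = log10_sequences(35, 80)
go_exponent = log10_sequences(250, 150)

print(f"chess: roughly 10^{chess_exponent:.0f} move sequences")
print(f"Go:    roughly 10^{go_exponent:.0f} move sequences")
```

The gap of more than two hundred orders of magnitude is why the search has to be narrowed (policy network) and truncated (value network) rather than enumerated.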

Neural network training pipeline and architecture. KGS data set.

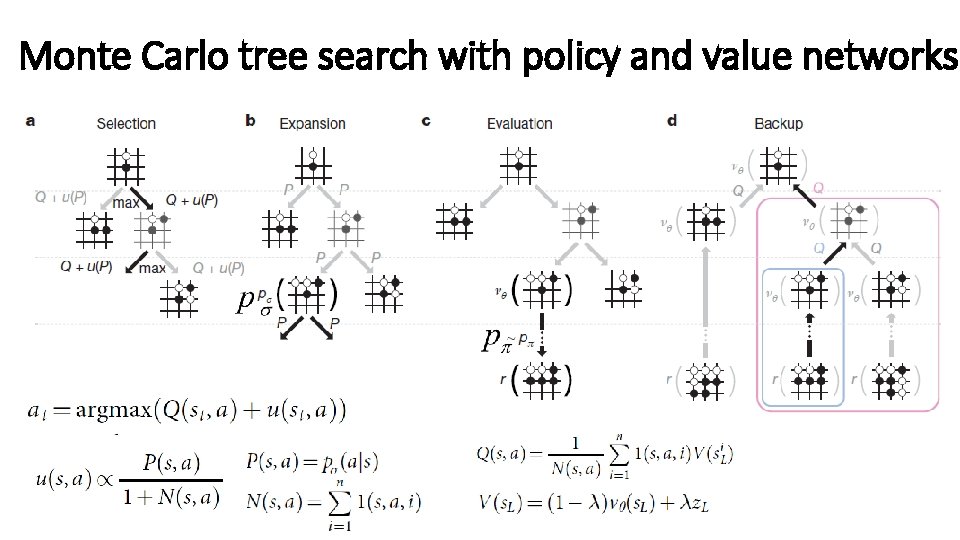

Monte Carlo tree search with policy and value networks

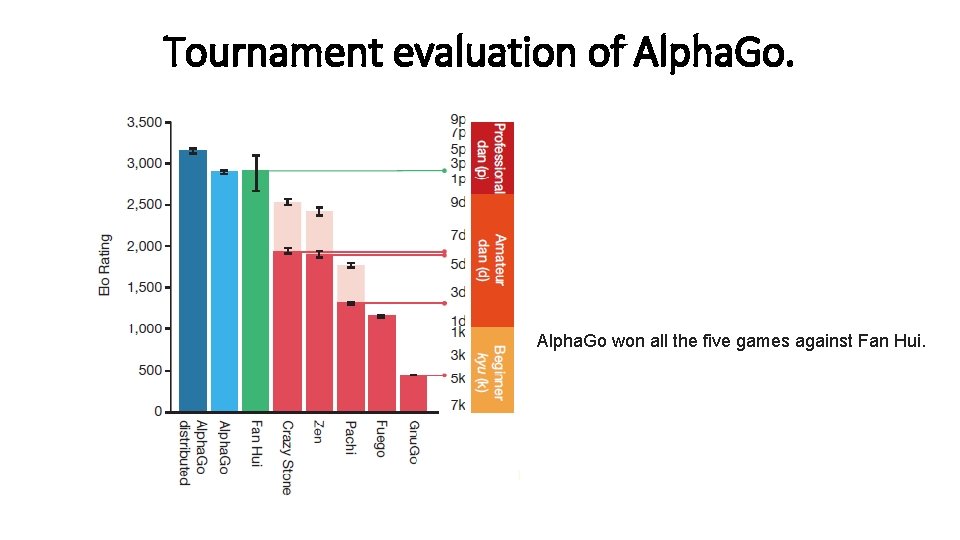
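In the paper, a leaf position is evaluated by mixing the value network's estimate with the outcome of a fast rollout, V = (1 − λ)·v + λ·z, and each traversed edge keeps a running mean of these evaluations. The sketch below illustrates only that evaluation and backup step. The names `Edge`, `leaf_value`, and `backup` are hypothetical, and the sign flip between the two players' perspectives is deliberately left out to keep the example short.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Edge:
    """Statistics stored on one (state, action) edge of the search tree."""
    prior: float               # P(s, a), from the policy network
    visits: int = 0            # N(s, a)
    total_value: float = 0.0   # sum of leaf evaluations backed up through this edge

    @property
    def q(self) -> float:
        """Mean action value Q(s, a); 0.0 for an unvisited edge."""
        return self.total_value / self.visits if self.visits else 0.0


def leaf_value(value_net_estimate: float, rollout_outcome: float, lam: float = 0.5) -> float:
    """Mix the value-network estimate with the rollout outcome using weight lam."""
    return (1.0 - lam) * value_net_estimate + lam * rollout_outcome


def backup(path: List[Edge], value: float) -> None:
    """Update visit counts and value sums along the traversed path.

    Simplification: a real two-player search would account for whose turn it is.
    """
    for edge in path:
        edge.visits += 1
        edge.total_value += value


# Example: one simulation through a single edge.
edge = Edge(prior=0.4)
backup([edge], leaf_value(value_net_estimate=0.2, rollout_outcome=1.0))
print(edge.visits, round(edge.q, 3))
```

Setting `lam=0` would rely on the value network alone, and `lam=1` on rollouts alone; the default of 0.5 weights them equally.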

Tournament evaluation of AlphaGo

AlphaGo won all five games against Fan Hui.

Mastering the Game of Go without Human Knowledge

David Silver*, Julian Schrittwieser*, Karen Simonyan*, Ioannis Antonoglou, Aja Huang, Arthur Guez, Thomas Hubert, Lucas Baker, Matthew Lai, Adrian Bolton, Yutian Chen, Timothy Lillicrap, Fan Hui, Laurent Sifre, George van den Driessche, Thore Graepel, Demis Hassabis

Nature 2017/10/19

Compared with previous AlphaGo work:

1. It is trained solely by self-play reinforcement learning, starting from random play, without any supervision or use of human data.
2. It uses a single neural network, rather than separate policy and value networks.

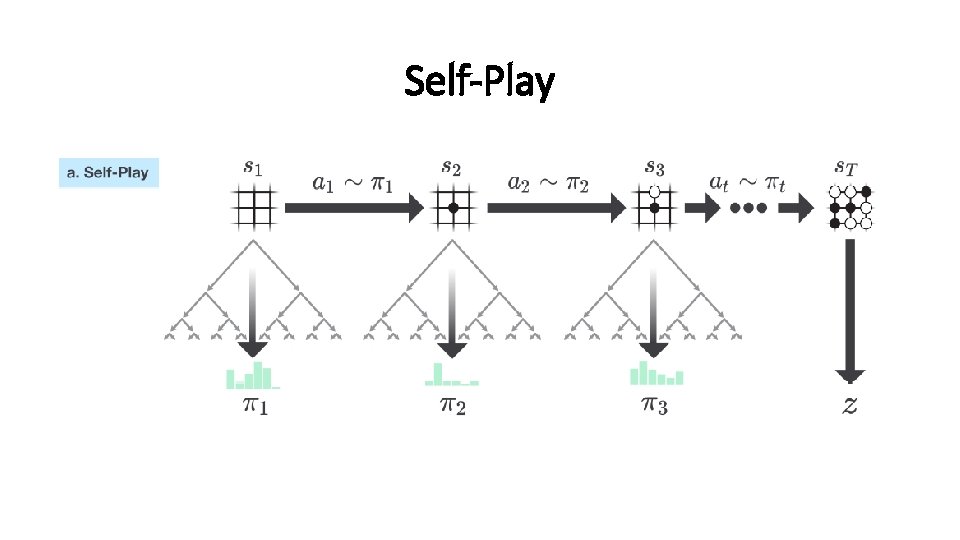

Self-Play

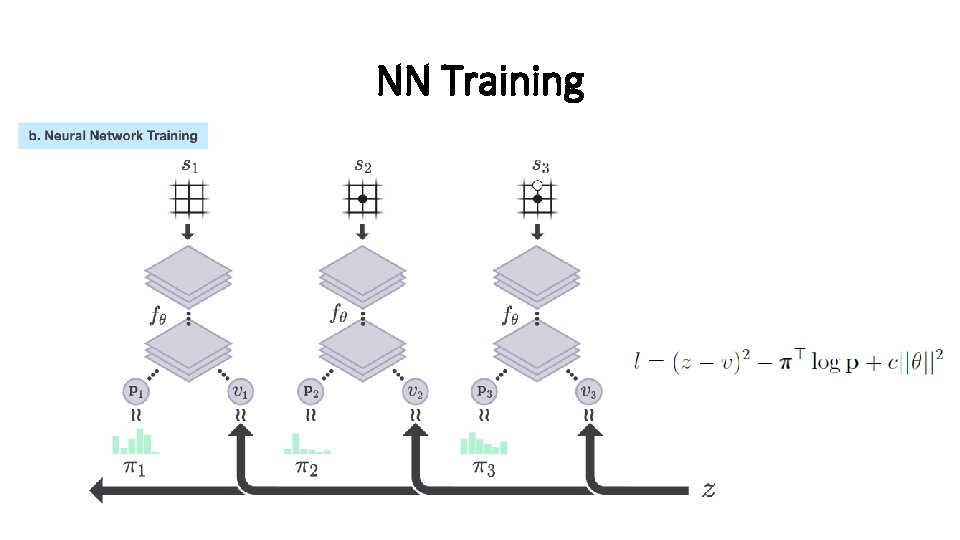
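During self-play, each move is drawn from a search policy that is proportional to the exponentiated visit counts at the root, π(a) ∝ N(a)^(1/τ), where τ is a temperature. The sketch below shows that conversion and the sampling step. The function names and the dictionary representation of visit counts are assumptions for illustration, and at least one move is assumed to have been visited.

```python
import random
from typing import Dict, Hashable


def search_policy(visit_counts: Dict[Hashable, int], temperature: float = 1.0) -> Dict[Hashable, float]:
    """Turn root visit counts N(a) into probabilities proportional to N(a)^(1/temperature)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    weights = {move: count ** (1.0 / temperature) for move, count in visit_counts.items()}
    total = sum(weights.values())  # assumes at least one visit overall
    return {move: weight / total for move, weight in weights.items()}


def sample_move(policy: Dict[Hashable, float], rng: random.Random) -> Hashable:
    """Sample one move according to the search policy."""
    moves = list(policy)
    return rng.choices(moves, weights=[policy[m] for m in moves], k=1)[0]


# Example with made-up visit counts for three candidate moves.
counts = {"D4": 30, "Q16": 10, "K10": 0}
pi = search_policy(counts, temperature=1.0)
move = sample_move(pi, random.Random(0))
print(pi, move)
```

A lower temperature sharpens the distribution toward the most-visited move, which trades exploration for playing strength.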

NN Training

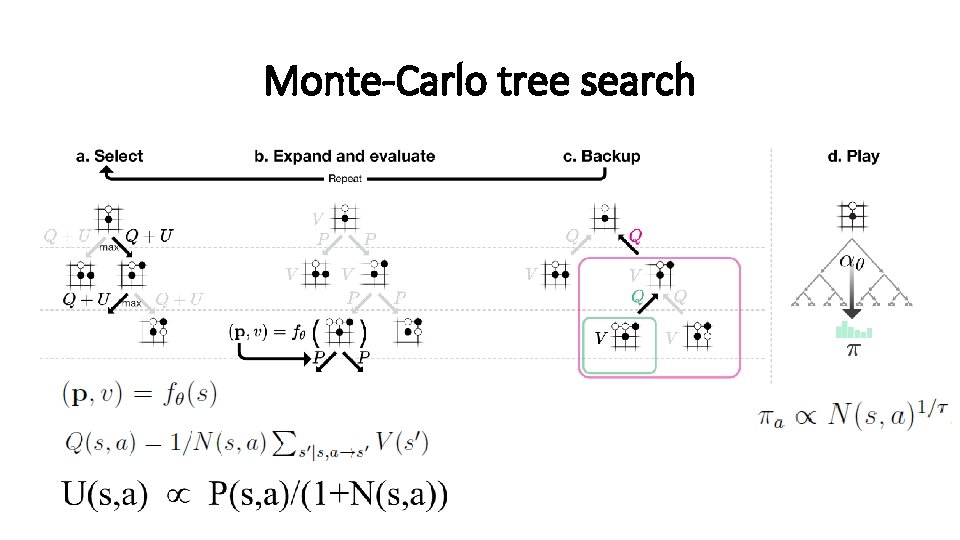
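The paper trains the single network (p, v) to match the search policy π and the game outcome z with the loss l = (z − v)² − πᵀ log p + c‖θ‖². The following is a minimal plain-Python sketch of that loss for a single position; a real implementation would use a tensor library and batches. The function name and argument layout are assumptions for illustration.

```python
import math
from typing import Sequence


def alphago_zero_loss(
    z: float,                 # game outcome from the current player's view (-1 or +1)
    v: float,                 # value head prediction
    pi: Sequence[float],      # search policy (training target)
    p: Sequence[float],       # policy head output (probabilities)
    params: Sequence[float],  # flattened network weights, for L2 regularisation
    c: float = 1e-4,          # regularisation strength (value reported in the paper)
) -> float:
    """Squared value error + policy cross-entropy + L2 weight penalty."""
    value_loss = (z - v) ** 2
    # Skip terms where the target is zero so log(0) is never evaluated for them.
    policy_loss = -sum(t * math.log(q) for t, q in zip(pi, p) if t > 0)
    l2_penalty = c * sum(w * w for w in params)
    return value_loss + policy_loss + l2_penalty


# Example with made-up numbers.
loss = alphago_zero_loss(z=1.0, v=0.0, pi=[0.5, 0.5], p=[0.5, 0.5], params=[1.0, 1.0], c=0.1)
print(round(loss, 4))
```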

Monte-Carlo tree search

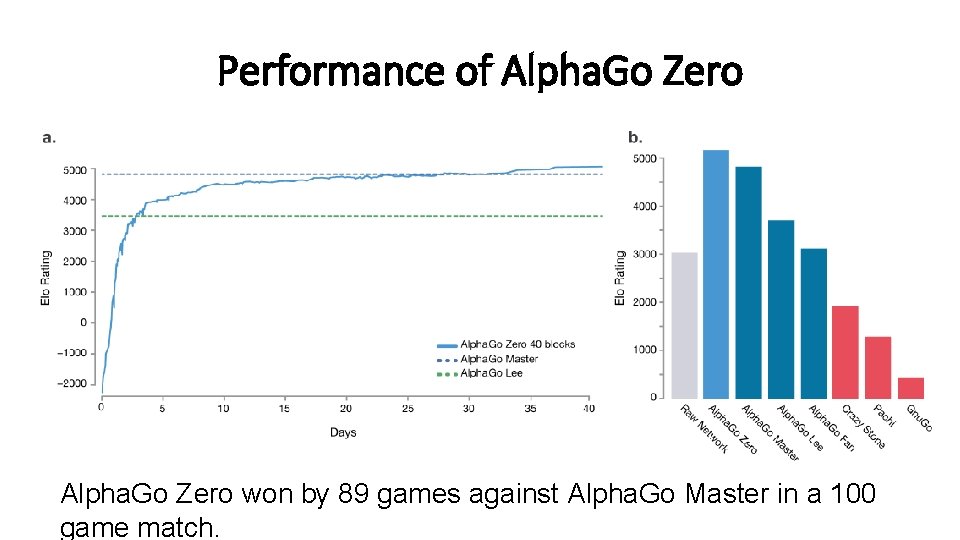
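In each simulation, the search descends the tree by picking the action that maximizes Q(s, a) + U(s, a), where U(s, a) = c_puct · P(s, a) · √(Σ_b N(s, b)) / (1 + N(s, a)). The sketch below implements just this selection rule. The tuple layout `(prior, visits, q)` and the default `c_puct=1.0` are arbitrary choices for illustration, not values taken from the slides.

```python
import math
from typing import Dict, Hashable, Tuple

# Per-action statistics at one node: (prior P, visit count N, mean value Q).
Stats = Tuple[float, int, float]


def select_action(stats: Dict[Hashable, Stats], c_puct: float = 1.0) -> Hashable:
    """Return the action maximising Q + U under the PUCT rule."""
    total_visits = sum(visits for _, visits, _ in stats.values())

    def score(item: Tuple[Hashable, Stats]) -> float:
        _, (prior, visits, q) = item
        # The exploration bonus favours high-prior, rarely visited actions.
        u = c_puct * prior * math.sqrt(total_visits) / (1 + visits)
        return q + u

    return max(stats.items(), key=score)[0]


# Example: "b" has never been visited, so its exploration bonus dominates.
node = {"a": (0.6, 10, 0.1), "b": (0.4, 0, 0.0)}
print(select_action(node))
print(select_action(node, c_puct=0.0))
```

With `c_puct=0.0` the rule degenerates to greedy selection on Q, which shows how the constant controls the balance between exploitation and exploration.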

Performance of AlphaGo Zero

AlphaGo Zero won 89 games against AlphaGo Master in a 100-game match.

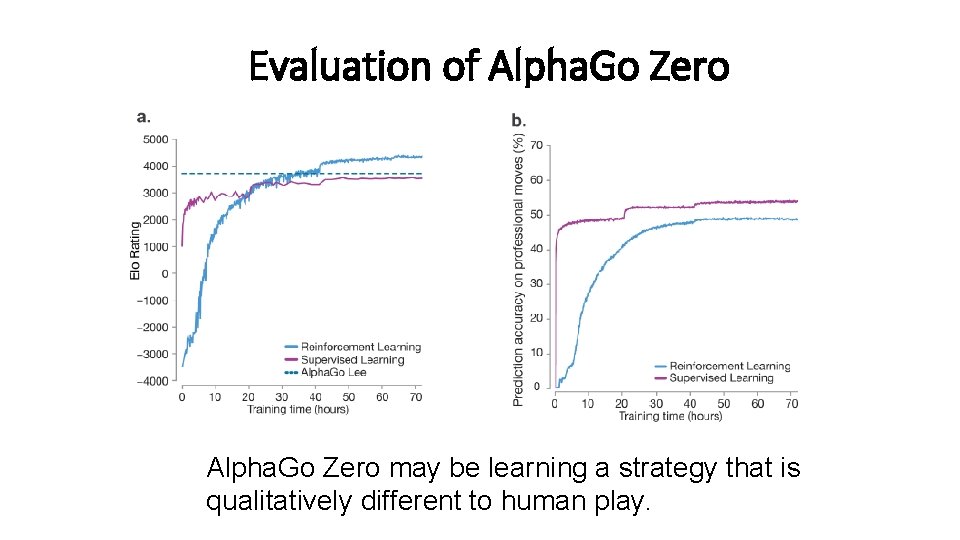

Evaluation of AlphaGo Zero

AlphaGo Zero may be learning a strategy that is qualitatively different from human play.

Conclusion

Humankind has accumulated Go knowledge from millions of games played over thousands of years. In the space of a few days, AlphaGo Zero was able to rediscover much of this Go knowledge, as well as novel strategies that provide new insights into the oldest of games.

Thank you
