ALGORITHMS

1) What is backtracking? A) For some problems the solution is expressible as an n-tuple (x1, x2, ..., xn) where the xi are chosen from some finite set Si. The brute force method would generate all of these n-tuples, evaluate each one with the criterion function P, and save those which yield the optimum. Backtracking is a technique that is superior to the brute force method. Its basic idea is to build up the solution vector one component at a time and to use modified criterion functions Pi(x1, x2, ..., xi), called bounding functions, to test whether the vector being formed has any chance of success. The major advantage of this method is that if it is realized that the partial vector (x1, x2, ..., xi) can in no way lead to an optimal solution, then the m_{i+1} × ... × m_n possible test vectors that extend it can be ignored entirely (m_j being the size of Sj).

Problems solved by backtracking require the solution to satisfy constraints, which are divided into two categories: 1) explicit and 2) implicit. Explicit constraints are rules that restrict each xi to take on values only from a given set. Implicit constraints are rules that determine which of the tuples in the solution space of an instance I satisfy the criterion function. Thus they describe the way in which the xi must relate to each other.
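As an illustration of the idea above, here is a minimal sketch that applies backtracking to the n-queens problem. The choice of problem and the names `solve_n_queens`, `feasible` and `extend` are assumptions made for this example, not part of the original slides. The explicit constraint is that each x[i] is a column number in 0..n-1; the implicit constraint is that no two queens share a column or a diagonal.

```python
def solve_n_queens(n):
    """Return every solution as a tuple x, where x[i] is the column of the queen in row i."""
    solutions = []
    x = []  # partial solution vector (x1, ..., xi), built one component at a time

    def feasible(col):
        # Bounding function: reject a column that clashes with any queen already placed.
        row = len(x)
        for r, c in enumerate(x):
            if c == col or abs(c - col) == row - r:
                return False
        return True

    def extend():
        if len(x) == n:              # a complete n-tuple that passed every test
            solutions.append(tuple(x))
            return
        for col in range(n):         # explicit constraint: x_i is one of 0..n-1
            if feasible(col):        # implicit constraint checked on the partial vector
                x.append(col)
                extend()
                x.pop()              # backtrack and try the next value

    extend()
    return solutions


if __name__ == "__main__":
    print(len(solve_n_queens(8)))
```

Whenever `feasible` rejects a column, every tuple that would extend that partial vector is skipped without being generated, which is the saving over brute force.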

2) Which of the sorting algorithms use the divide and conquer strategy? A) Given a function to compute on n inputs, the divide and conquer strategy suggests splitting the inputs into k distinct subsets, 1 < k ≤ n, yielding k subproblems. These subproblems must be solved, and then a method must be found to combine the sub-solutions into a solution of the whole. If the subproblems are still relatively large, the divide and conquer strategy can be reapplied. Merge sort and quicksort are the sorting algorithms that use this strategy; binary search is a searching algorithm that uses it.

3) What is the branch and bound strategy? A) A branch and bound method searches a state space tree using any search mechanism in which all the children of the E-node are generated before another node becomes the E-node. Three common strategies are FIFO, LIFO and LC (least cost). A cost function ĉ(·) such that ĉ(x) ≤ c(x) is used to provide lower bounds on the solutions obtainable from any node x. If U is an upper bound on the cost of a minimum-cost solution, then all live nodes x with ĉ(x) > U may be killed, as all answer nodes reachable from x have cost c(x) ≥ ĉ(x) > U. In case an answer node with cost U has already been reached, all live nodes with ĉ(x) ≥ U may be killed.
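The divide and conquer description in question 2 can be sketched with merge sort, taking k = 2 subsets at each level. This is a minimal illustrative version (the function name `merge_sort` and the choice to return a new list are assumptions), not a tuned implementation.

```python
def merge_sort(items):
    """Sort a list by splitting it in two, sorting each half, and merging the results."""
    if len(items) <= 1:                 # small enough to solve directly
        return list(items)

    mid = len(items) // 2
    left = merge_sort(items[:mid])      # divide: solve the first subproblem
    right = merge_sort(items[mid:])     # divide: solve the second subproblem

    # Combine: merge the two sorted halves into one sorted list.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:         # <= keeps equal keys in order (stable)
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


if __name__ == "__main__":
    print(merge_sort([5, 2, 9, 1, 5, 6]))
```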

4) What is dynamic programming? A) Dynamic programming is an algorithm design method that can be used when the solution to a problem can be viewed as the result of a sequence of decisions. We could enumerate all decision sequences and then pick out the best, but this is usually far too expensive. In dynamic programming, an optimal sequence of decisions is obtained by making explicit appeal to the principle of optimality. The principle of optimality states that an optimal sequence of decisions has the property that, whatever the initial state and decision are, the remaining decisions must constitute an optimal decision sequence with regard to the state resulting from the first decision.
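To make the principle of optimality concrete, here is a small sketch for the 0/1 knapsack problem. The problem choice and the name `knapsack` are assumptions for illustration, and weights are assumed to be non-negative integers. After deciding whether to take an item, the remaining decisions must be optimal for the capacity that is left, so the table only needs the best value for each remaining capacity.

```python
def knapsack(weights, values, capacity):
    """Return the maximum total value of items that fit in the given capacity."""
    # best[c] = best value achievable with capacity c using the items seen so far
    best = [0] * (capacity + 1)
    for w, v in zip(weights, values):
        # Go downwards so that each item is used at most once.
        for c in range(capacity, w - 1, -1):
            # Either skip the item, or take it and solve the remaining
            # capacity c - w optimally (principle of optimality).
            best[c] = max(best[c], best[c - w] + v)
    return best[capacity]


if __name__ == "__main__":
    print(knapsack([1, 3, 4, 5], [1, 4, 5, 7], 7))
```

The table has capacity + 1 entries and each item updates it once, so the work is proportional to (number of items) × capacity instead of the 2^n subsets a full enumeration would examine.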

5) What is the greedy method? A) Most of these problems have n inputs and require us to obtain a subset that satisfies some constraints. Any subset that satisfies these constraints is called a feasible solution. We need to obtain a feasible solution that either maximizes or minimizes a given objective function. A feasible solution that does this is called an optimal solution. The greedy method suggests that one can devise an algorithm that works in stages, considering one input at a time. At each stage a decision is made regarding whether a particular input is in an optimal solution (using some selection procedure). If the inclusion of the next input into the partially constructed optimal solution would result in an infeasible solution, then this input is not added to the partial solution; otherwise it is added. This version of the greedy method is called the subset paradigm. For problems that do not call for the selection of an optimal subset, the greedy method makes decisions by considering the inputs in some order. Each decision is made using an optimization criterion that can be computed using the decisions already made. This version of the greedy technique is called the ordering paradigm.
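The subset paradigm can be sketched with the activity selection problem. This example, the name `select_activities` and the (start, finish) input format are assumptions chosen for illustration. The selection procedure considers activities in order of finish time, and an activity is added only if it keeps the partial solution feasible, meaning it does not overlap the activities already chosen.

```python
def select_activities(intervals):
    """Greedily pick a largest set of non-overlapping (start, finish) intervals."""
    chosen = []
    last_finish = float("-inf")
    # Selection procedure: consider the inputs in order of earliest finish time.
    for start, finish in sorted(intervals, key=lambda iv: iv[1]):
        if start >= last_finish:      # adding it keeps the solution feasible
            chosen.append((start, finish))
            last_finish = finish
        # otherwise the input is discarded and never reconsidered
    return chosen


if __name__ == "__main__":
    jobs = [(1, 4), (3, 5), (0, 6), (5, 7), (3, 9), (5, 9),
            (6, 10), (8, 11), (8, 12), (2, 14), (12, 16)]
    print(select_activities(jobs))
```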

6) What is time complexity? A) Time complexity of algorithms
• When you need to write a program that will be reused many times, other issues may arise:
  – how much time does it take to run?
  – how much storage space do its variables use?
  – how much network traffic does it generate?
• For large problems the running time is what really determines whether a program should be used
• To measure the running time of a program, we select different sets of inputs that it should be tested on (for benchmarking). Such inputs may correspond to:
  – the easiest case of the problem that needs to be solved (best case)
  – the hardest case of the problem that needs to be solved (worst case)
  – a case that falls between these two extremes (average case)
• In many cases, the running time of a program depends on the particular input, not just the size of the input:
  – the running time of a factorial program depends on the particular number whose factorial is being sought, because this determines the total number of multiplications that need to be performed
  – the running time of a search program may depend on whether the value being sought occurs in the collection of items to be searched
• To assess the running time, we have to accept the idea that certain programming operations take a fixed amount of time (independent of the input size):
  – arithmetic operations (+, -, *, etc.)
  – logical operations (and, or, not)
  – comparison operations (==, <, >, etc.)
  – array/vector indexing
  – simple assignments (n = 2, etc.)
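The factorial remark above can be checked by counting the basic operations directly. The sketch below uses a hypothetical helper, `factorial_with_count`, and treats every multiplication as one fixed-cost step, which is the simplifying assumption made in the list above.

```python
def factorial_with_count(n):
    """Return (n!, number of multiplications performed) for a non-negative integer n."""
    result = 1
    multiplications = 0
    for k in range(2, n + 1):
        result *= k               # one arithmetic operation, counted as one step
        multiplications += 1
    return result, multiplications


if __name__ == "__main__":
    for n in (1, 5, 10):
        print(n, factorial_with_count(n))
```

The count is n - 1 for n ≥ 1, so the running time grows linearly with the value of the number whose factorial is sought.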

7) What are the different notations used to measure time complexity? A) The related asymptotic notations are O, o, Ω, ω, Θ and Õ. Big O is the most commonly used asymptotic notation for comparing functions, although in many cases big O may be replaced with Θ (theta) for asymptotically tighter bounds. Informally, for functions f and g: f = O(g) means f grows no faster than g (upper bound); f = o(g) means f grows strictly slower than g; f = Ω(g) means f grows at least as fast as g (lower bound); f = ω(g) means f grows strictly faster than g; f = Θ(g) means f is both O(g) and Ω(g) (tight bound); and Õ(g) is big O with logarithmic factors ignored.

8) How is the efficiency of an algorithm measured? A) Algorithmic efficiency. Efficiency is generally described by two properties: speed (the time it takes for an operation to complete) and space (the memory or nonvolatile storage used up by the construct). Optimization is the process of making code as efficient as possible, sometimes focusing on space at the cost of speed, or vice versa.

The speed of an algorithm is measured in various ways. The most common method uses time complexity to determine the big O of an algorithm. Often it is possible to make an algorithm faster at the expense of space. This is the case whenever you cache the result of an expensive calculation rather than recalculating it on demand. The space of an algorithm is actually two separate but related things. The first part is the space taken up on disk (or equivalent, depending on the hardware and language) by the compiled executable of the algorithm. The second part is the amount of temporary memory used while the algorithm runs.

9) What is space complexity? A) Space complexity. The better the time complexity of an algorithm is, the faster the algorithm will carry out its work in practice. Apart from time complexity, its space complexity is also important: this is essentially the number of memory cells which an algorithm needs. A good algorithm keeps this number as small as possible, too. There is often a time-space tradeoff involved in a problem, that is, it cannot be solved with both little computing time and low memory consumption. One then has to make a compromise and exchange computing time for memory consumption or vice versa, depending on which algorithm one chooses and how one parameterizes it.
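The caching tradeoff described above can be sketched with the Fibonacci numbers. The example is an assumption chosen for illustration; it uses the standard library's `functools.lru_cache` to store results, trading extra memory for far fewer recursive calls.

```python
from functools import lru_cache


def fib_plain(n, counter):
    """Recompute everything on demand; counter[0] records the number of calls."""
    counter[0] += 1
    if n < 2:
        return n
    return fib_plain(n - 1, counter) + fib_plain(n - 2, counter)


@lru_cache(maxsize=None)
def fib_cached(n):
    """Same recurrence, but each result is stored after its first computation."""
    if n < 2:
        return n
    return fib_cached(n - 1) + fib_cached(n - 2)


if __name__ == "__main__":
    calls = [0]
    print(fib_plain(20, calls), "computed with", calls[0], "calls")
    print(fib_cached(20), "computed with", fib_cached.cache_info().misses, "cache misses")
```

The plain version uses only the recursion stack but makes an exponential number of calls, while the cached version keeps one stored entry per distinct argument and computes each value once.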

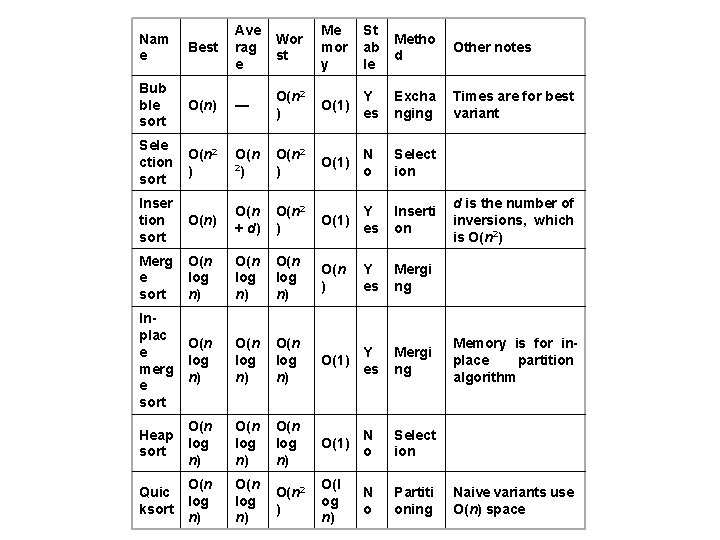

10) What are the best, average and worst case complexities of the selection, bubble, insertion, quick, heap and merge sorting methods? A) Classification: for typical sorting algorithms, good behavior is O(n log n) and bad behavior is Ω(n²). Ideal behavior for a sort is O(n). Sort algorithms which only use an abstract key comparison operation always need at least Ω(n log n) comparisons on average.

List of sorting algorithms: in this table, n is the number of records to be sorted and k is the average length of the keys. The columns "Best", "Average" and "Worst" give the time complexity in each case; estimates that do not use k assume k to be constant. "Memory" denotes the amount of auxiliary storage needed beyond that used by the list itself.

| Name | Best | Average | Worst | Memory | Stable | Method | Other notes |
|---|---|---|---|---|---|---|---|
| Bubble sort | O(n) | O(n²) | O(n²) | O(1) | Yes | Exchanging | Times are for best variant |
| Selection sort | O(n²) | O(n²) | O(n²) | O(1) | No | Selection | |
| Insertion sort | O(n) | O(n + d) | O(n²) | O(1) | Yes | Insertion | d is the number of inversions, which is O(n²) |
| Merge sort | O(n log n) | O(n log n) | O(n log n) | O(n) | Yes | Merging | |
| In-place merge sort | O(n log n) | O(n log n) | O(n log n) | O(1) | Yes | Merging | |
| Heap sort | O(n log n) | O(n log n) | O(n log n) | O(1) | No | Selection | |
| Quicksort | O(n log n) | O(n log n) | O(n²) | O(log n) | No | Partitioning | Memory is for in-place partition algorithm; naive variants use O(n) space |
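The O(n + d) entry for insertion sort can be checked by counting element shifts, since each shift removes exactly one inversion. The following is a minimal sketch with an assumed helper name, `insertion_sort`, that returns the sorted copy together with the shift count d.

```python
def insertion_sort(items):
    """Return (sorted copy, number of shifts); the shift count equals the number of inversions."""
    a = list(items)
    shifts = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        # Move larger elements one position right to make room for key.
        while j >= 0 and a[j] > key:
            a[j + 1] = a[j]
            j -= 1
            shifts += 1
        a[j + 1] = key
    return a, shifts


if __name__ == "__main__":
    print(insertion_sort([1, 2, 3, 4]))   # already sorted: best case, no shifts
    print(insertion_sort([4, 3, 2, 1]))   # reversed: worst case, n(n-1)/2 shifts
```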

11) What are in-place and non-in-place algorithms? A) An in-place algorithm transforms its input using only a small, constant amount of extra storage. An algorithm which is not in-place is sometimes called not-in-place or out-of-place. An algorithm is sometimes informally called in-place as long as it overwrites its input with its output. In reality this is not sufficient (as the case of quicksort demonstrates, since it overwrites its input yet needs extra stack space), nor is it necessary; the output space may be constant, or may not even be counted, for example if the output is to a stream. On the other hand, sometimes it may be more practical to count the output space in determining whether an algorithm is in-place, for instance when a reversal routine writes its result to a newly allocated array; this makes it difficult to strictly define in-place algorithms.

12) What is the order of an algorithm which performs matrix multiplication? A) The conventional algorithm for multiplying two n × n matrices is O(n³). Using Strassen's matrix multiplication, the order is O(n^2.807).
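To illustrate the in-place distinction from question 11, here are two ways to reverse a list. The function names are assumptions for this sketch. The first allocates a second array of n elements, while the second swaps elements inside the input and needs only two index variables.

```python
def reverse_out_of_place(a):
    """Return a reversed copy; uses O(n) extra space for the new list."""
    n = len(a)
    b = [None] * n
    for i in range(n):
        b[n - 1 - i] = a[i]
    return b


def reverse_in_place(a):
    """Reverse the list itself; uses O(1) extra space."""
    i, j = 0, len(a) - 1
    while i < j:
        a[i], a[j] = a[j], a[i]   # swap the two ends and move inwards
        i += 1
        j -= 1
    return a


if __name__ == "__main__":
    data = [1, 2, 3, 4, 5]
    print(reverse_out_of_place(data), data)   # the original is untouched
    print(reverse_in_place(data), data)       # the original is overwritten
```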

13) What are the best, average and worst case complexities of an algorithm? A) Time complexity in the best case measures the least amount of time that the algorithm needs to solve an instance of the problem. Time complexity in the worst case measures the largest amount of time that the algorithm needs to solve an instance of the problem. Time complexity in the average case measures the average amount of time that the algorithm needs to solve an instance of the problem.

14) What are the best, average and worst case time complexities of binary search and linear search? A) Complexity of binary search for successful searches: best case Θ(1), average case Θ(log n), worst case Θ(log n). Complexity of binary search for unsuccessful searches: best case Θ(log n), average case Θ(log n), worst case Θ(log n). Complexity of linear search for successful searches: best case Θ(1), average case Θ(n), worst case Θ(n). Complexity of linear search for unsuccessful searches: Θ(n) in the best, average and worst cases, since every element must be examined.
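The search complexities in question 14 can be observed by counting how many elements each method examines. This is a minimal sketch; the function names and the decision to return a pair (index, number of elements examined) are assumptions made for illustration.

```python
def linear_search(items, target):
    """Scan left to right; return (index or -1, number of elements examined)."""
    examined = 0
    for index, value in enumerate(items):
        examined += 1
        if value == target:
            return index, examined
    return -1, examined


def binary_search(sorted_items, target):
    """Halve the search range each step; return (index or -1, number of elements examined)."""
    lo, hi = 0, len(sorted_items) - 1
    examined = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        examined += 1
        if sorted_items[mid] == target:
            return mid, examined
        if sorted_items[mid] < target:
            lo = mid + 1          # discard the left half
        else:
            hi = mid - 1          # discard the right half
    return -1, examined


if __name__ == "__main__":
    data = list(range(15))
    for target in (7, 14, 100):
        print(target, linear_search(data, target), binary_search(data, target))
```

With 15 sorted elements, linear search examines up to 15 of them, while binary search never examines more than 4.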

15) Which sorting algorithm has very high space complexity? A) Merge sort has high space complexity, since it needs O(n) auxiliary storage.

16) What is the maximum number of comparisons required to search for a key in a binary search tree? A) For binary search on n sorted elements (equivalently, a balanced binary search tree), if n is in the range [2^(k-1), 2^k) then a successful search makes at most k element comparisons, and an unsuccessful search makes either k-1 or k element comparisons. The worst-case complexity is therefore Θ(log n) for both successful and unsuccessful searches.

17) What is a pass? A) A pass is one complete execution of a loop over the n elements, that is, one full traversal of the data.

18) What is the best, average and worst case time complexity of the push operation of a stack? A) It is O(1).

19) What is the best, average and worst case time complexity of the pop operation of a stack? A) It is O(1).

20) What is the best, average and worst case time complexity of the insert and delete operations of a queue? A) With a linked list or circular array implementation, both insert and delete are O(1). An operation becomes O(n) only in a simple array implementation that shifts the remaining elements on each deletion, or in an ordered queue that must find the insertion position.
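For questions 18 to 20, the following sketch uses Python's built-in list as a stack and `collections.deque` as a queue. The helper names `stack_demo` and `queue_demo` are assumptions for illustration. `list.append` and `list.pop` run in O(1) amortized time, and `deque.append` and `deque.popleft` run in O(1) time.

```python
from collections import deque


def stack_demo():
    """Push 1, 2, 3 and pop them all; a stack returns items in LIFO order."""
    stack = []
    for x in (1, 2, 3):
        stack.append(x)                            # push at the top
    return [stack.pop() for _ in range(3)]         # pop from the top


def queue_demo():
    """Insert 1, 2, 3 and delete them all; a queue returns items in FIFO order."""
    queue = deque()
    for x in (1, 2, 3):
        queue.append(x)                            # insert at the rear
    return [queue.popleft() for _ in range(3)]     # delete from the front


if __name__ == "__main__":
    print(stack_demo())
    print(queue_demo())
```

By contrast, deleting from the front of a plain list with `list.pop(0)` shifts every remaining element and therefore costs O(n).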
