Algorithms for Query Processing and Optimization

- Slides: 117


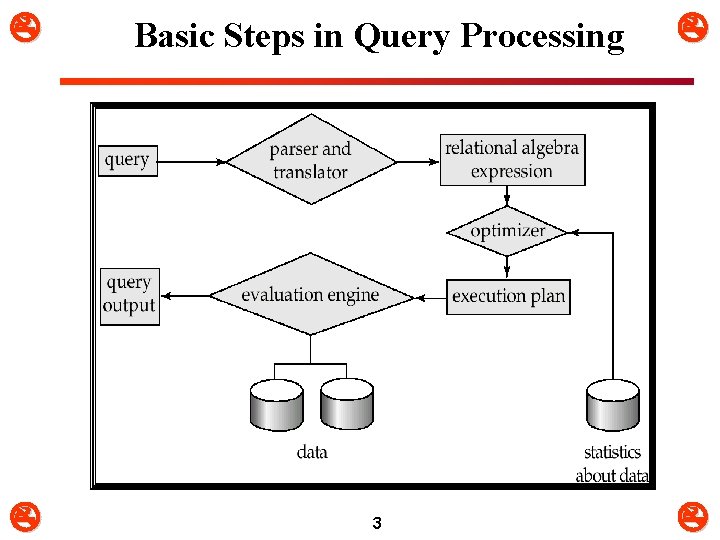

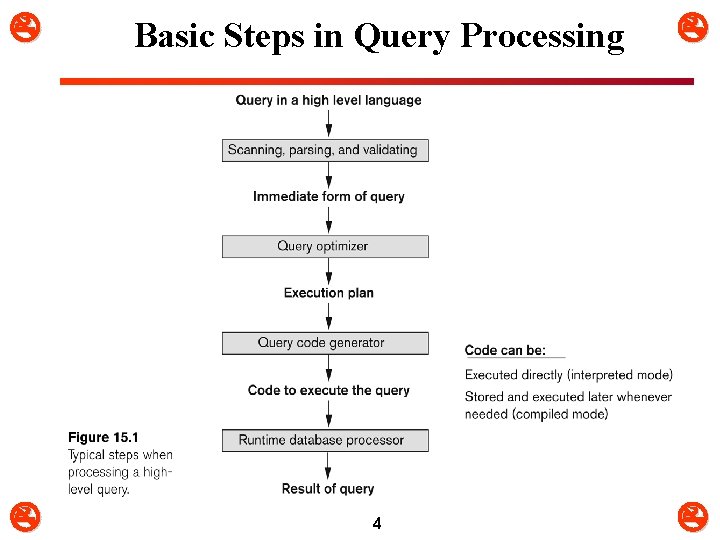

0. Basic Steps in Query Processing

1. Parsing and translation:
   - Translate the query into its internal form, which is then translated into relational algebra.
   - The parser checks syntax and verifies relations.
2. Query optimization:
   - The process of choosing a suitable execution strategy for processing a query.
3. Evaluation:
   - The query evaluation engine takes a query execution plan, executes that plan, and returns the answers to the query.

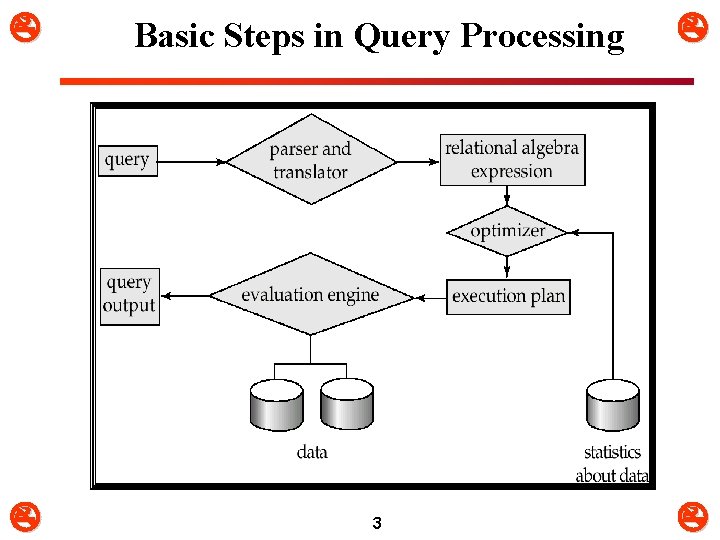

Basic Steps in Query Processing

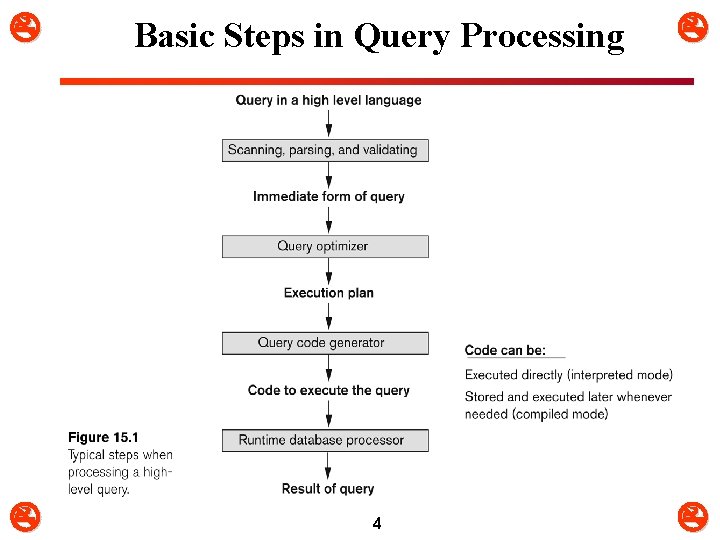

Basic Steps in Query Processing

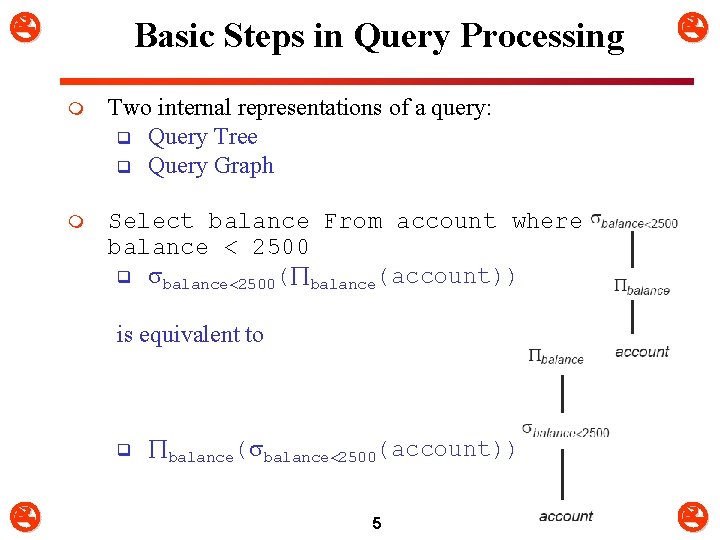

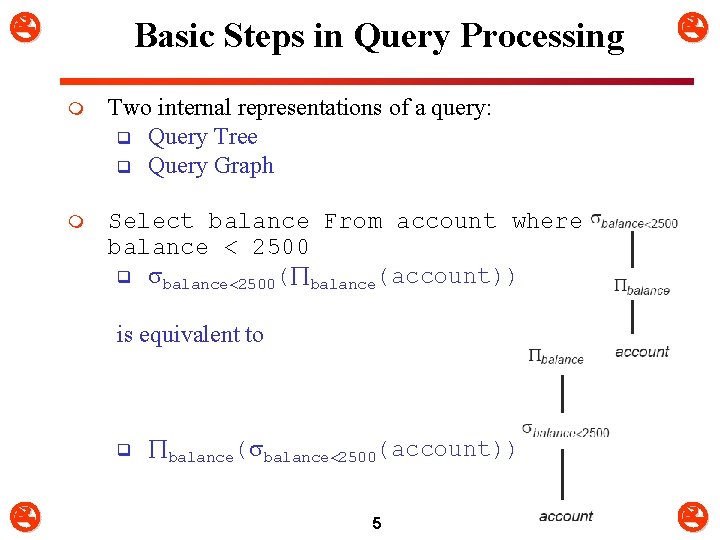

Basic Steps in Query Processing

- Two internal representations of a query:
  - Query tree
  - Query graph
- Example: SELECT balance FROM account WHERE balance < 2500
  - $\sigma_{\text{balance}<2500}(\pi_{\text{balance}}(\text{account}))$ is equivalent to
  - $\pi_{\text{balance}}(\sigma_{\text{balance}<2500}(\text{account}))$

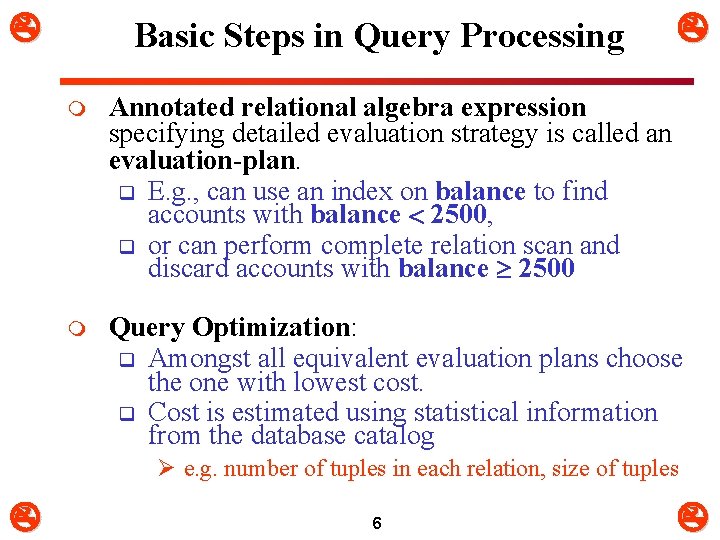

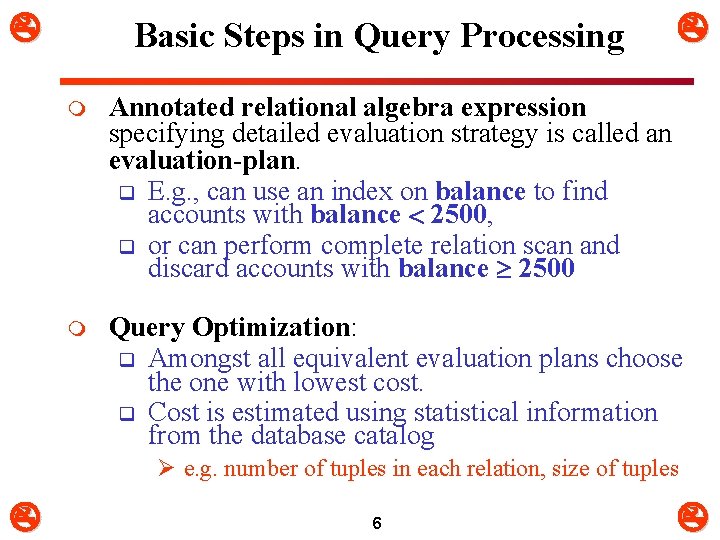

Basic Steps in Query Processing

- A relational algebra expression annotated with a detailed evaluation strategy is called an evaluation plan.
  - E.g., the engine can use an index on balance to find accounts with balance < 2500,
  - or it can perform a complete relation scan and discard accounts with balance ≥ 2500.
- Query optimization:
  - Among all equivalent evaluation plans, choose the one with the lowest cost.
  - Cost is estimated using statistical information from the database catalog, e.g. the number of tuples in each relation and the size of tuples.

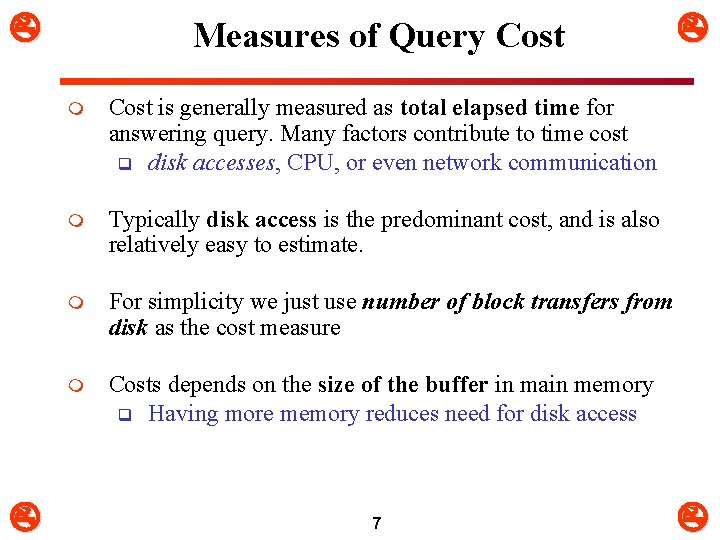

Measures of Query Cost

- Cost is generally measured as the total elapsed time for answering a query. Many factors contribute to time cost:
  - disk accesses, CPU, or even network communication
- Typically disk access is the predominant cost, and it is also relatively easy to estimate.
- For simplicity we use only the number of block transfers from disk as the cost measure.
- Cost depends on the size of the buffer in main memory:
  - Having more memory reduces the need for disk access.

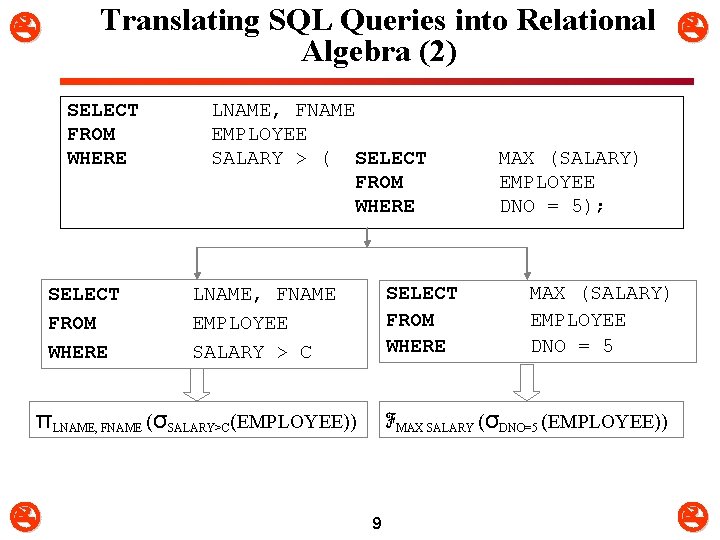

1. Translating SQL Queries into Relational Algebra (1)

- Query block:
  - The basic unit that can be translated into the algebraic operators and optimized.
- A query block contains a single SELECT-FROM-WHERE expression, as well as GROUP BY and HAVING clauses if these are part of the block.
- Nested queries within a query are identified as separate query blocks.
- Aggregate operators in SQL must be included in the extended algebra.

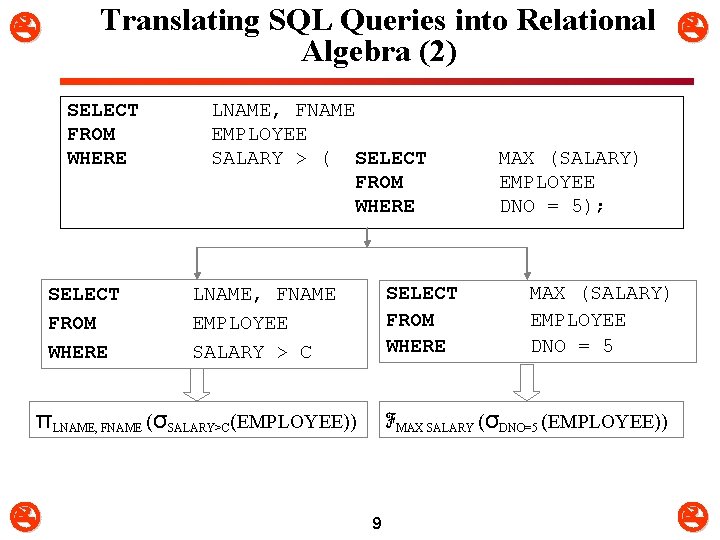

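Translating SQL Queries into Relational Algebra (2)

- Query: SELECT LNAME, FNAME FROM EMPLOYEE WHERE SALARY > (SELECT MAX (SALARY) FROM EMPLOYEE WHERE DNO = 5);
- Outer block: SELECT LNAME, FNAME FROM EMPLOYEE WHERE SALARY > C
  - $\pi_{\text{LNAME, FNAME}}(\sigma_{\text{SALARY}>C}(\text{EMPLOYEE}))$
- Inner block: SELECT MAX (SALARY) FROM EMPLOYEE WHERE DNO = 5
  - $\mathcal{F}_{\text{MAX SALARY}}(\sigma_{\text{DNO}=5}(\text{EMPLOYEE}))$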

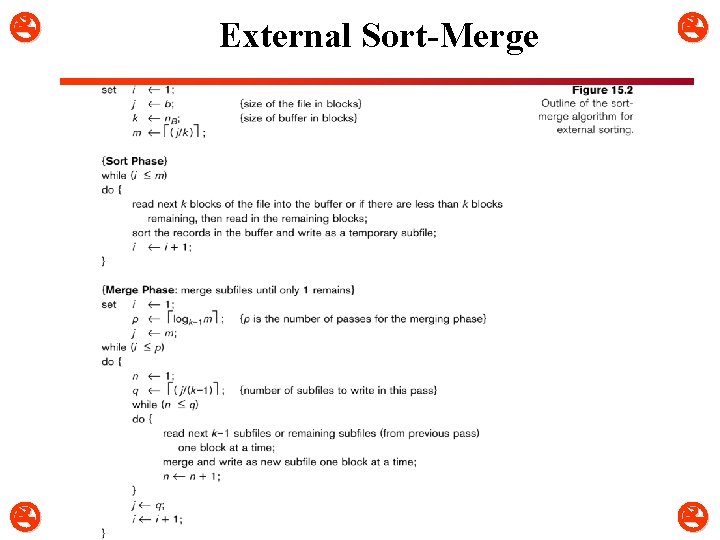

2. Algorithms for External Sorting (1)

- Sorting is needed in:
  - ORDER BY, join, union, intersection, DISTINCT, …
- For relations that fit in memory, techniques like quicksort can be used.
- External sorting:
  - Refers to sorting algorithms that are suitable for large files of records stored on disk that do not fit entirely in main memory, such as most database files.
- For relations that don't fit in memory, external sort-merge is a good choice.

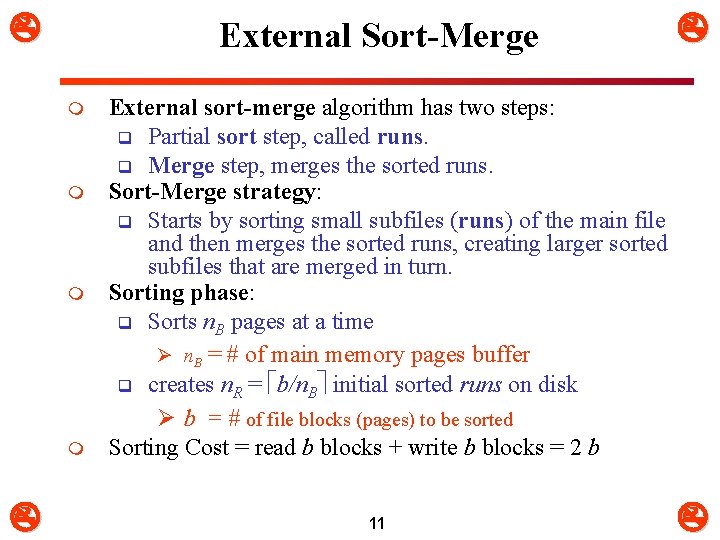

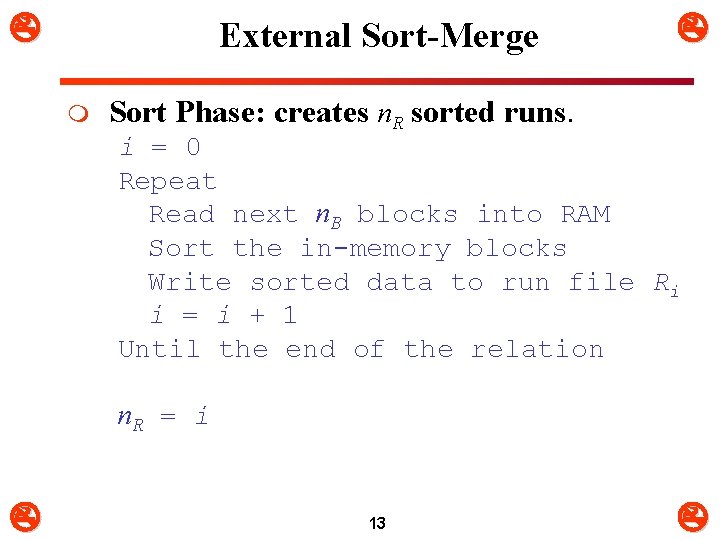

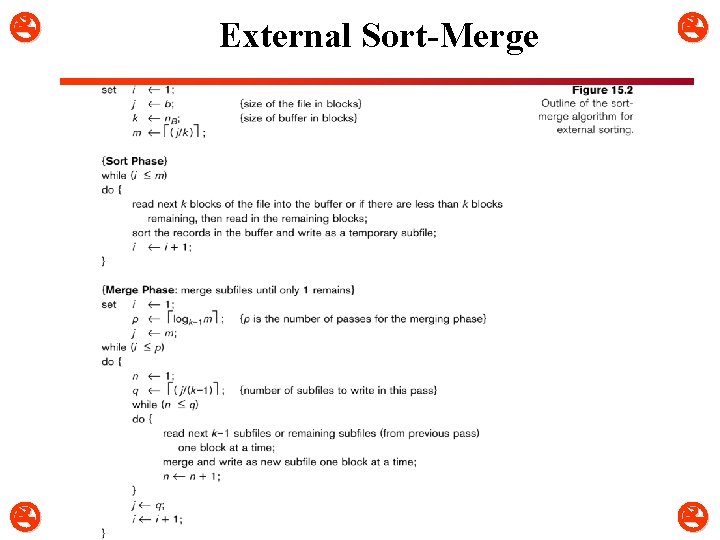

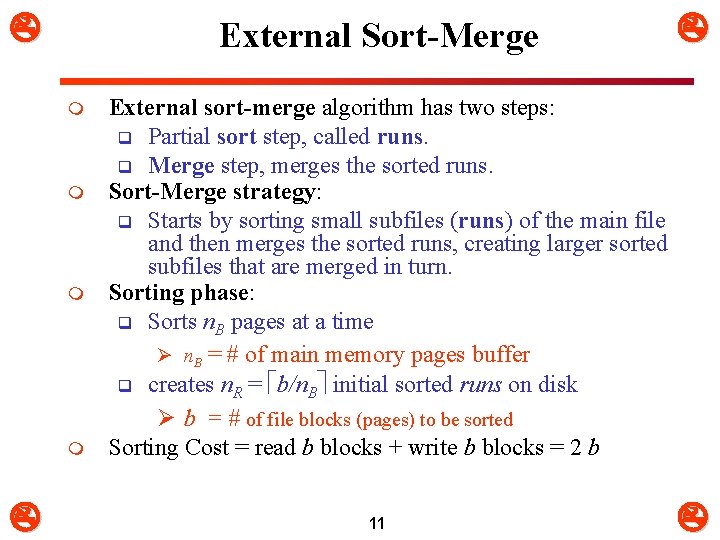

External Sort-Merge

- The external sort-merge algorithm has two steps:
  - Sort step: produces sorted subfiles, called runs.
  - Merge step: merges the sorted runs.
- Sort-merge strategy:
  - Starts by sorting small subfiles (runs) of the main file and then merges the sorted runs, creating larger sorted subfiles that are merged in turn.
- Sorting phase:
  - Sorts $n_B$ pages at a time, where $n_B$ = number of main memory buffer pages.
  - Creates $n_R = \lceil b/n_B \rceil$ initial sorted runs on disk, where $b$ = number of file blocks (pages) to be sorted.
- Sorting cost = read $b$ blocks + write $b$ blocks = $2b$

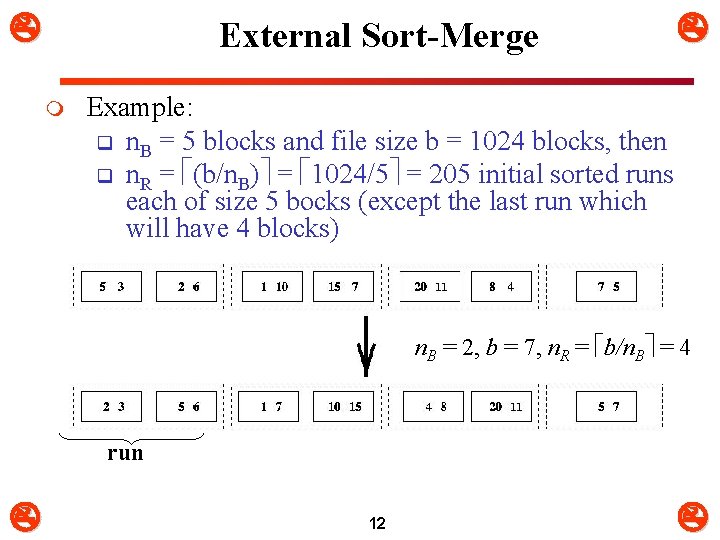

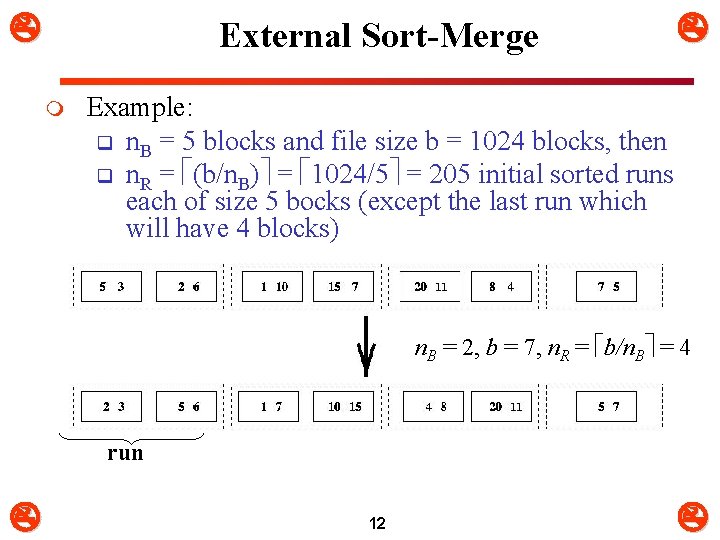

External Sort-Merge

- Example:
  - If $n_B = 5$ blocks and the file size is $b = 1024$ blocks, then
  - $n_R = \lceil b/n_B \rceil = \lceil 1024/5 \rceil = 205$ initial sorted runs, each of size 5 blocks (except the last run, which will have 4 blocks).
- Figure: $n_B = 2$, $b = 7$, $n_R = \lceil b/n_B \rceil = 4$ runs

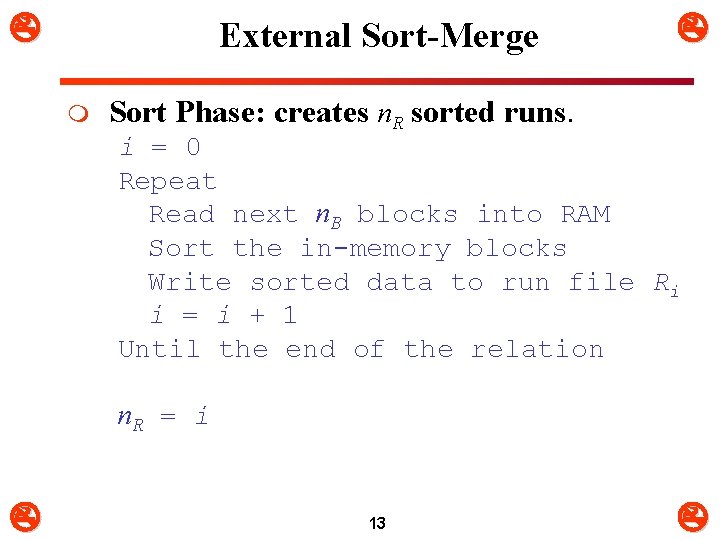

External Sort-Merge

- Sort phase: creates $n_R$ sorted runs.

      i = 0
      Repeat
          Read next n_B blocks into RAM
          Sort the in-memory blocks
          Write sorted data to run file R_i
          i = i + 1
      Until the end of the relation
      n_R = i

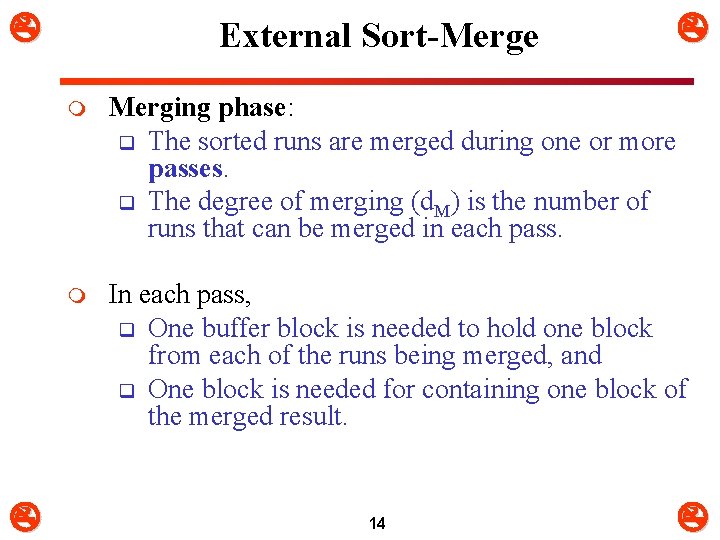

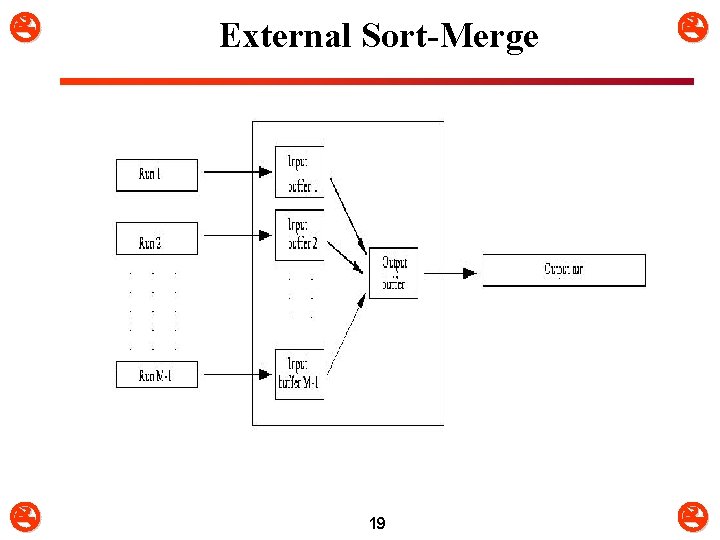

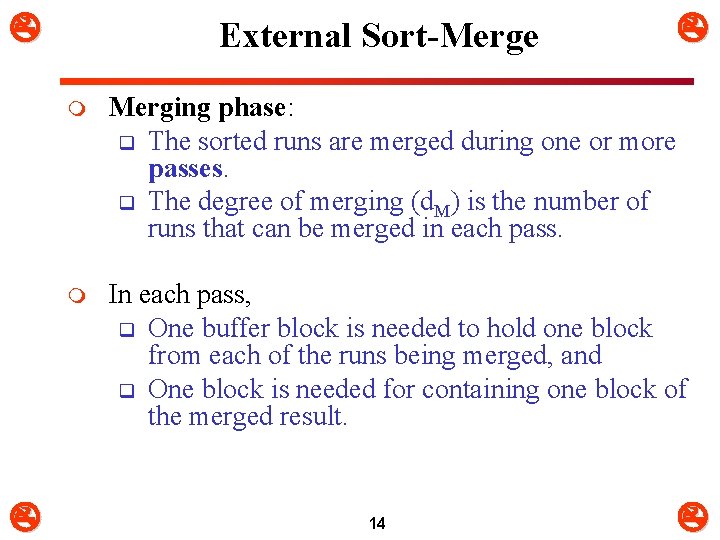

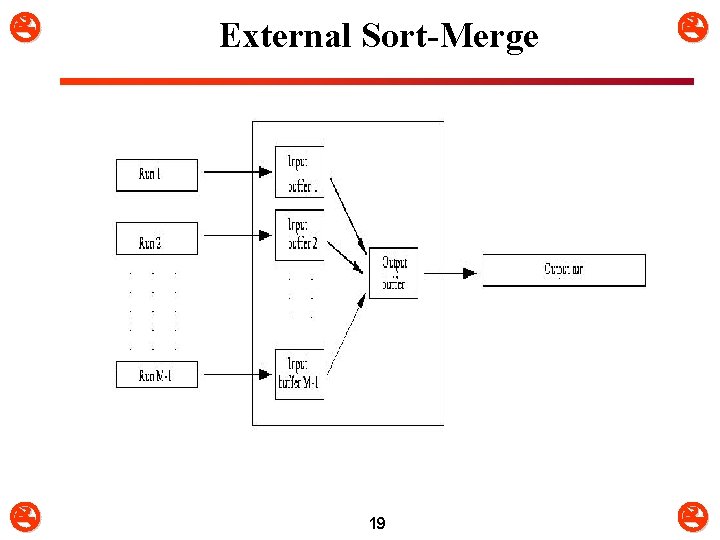

External Sort-Merge

- Merging phase:
  - The sorted runs are merged during one or more passes.
  - The degree of merging ($d_M$) is the number of runs that can be merged in each pass.
- In each pass,
  - one buffer block is needed to hold one block from each of the runs being merged, and
  - one block is needed to hold one block of the merged result.

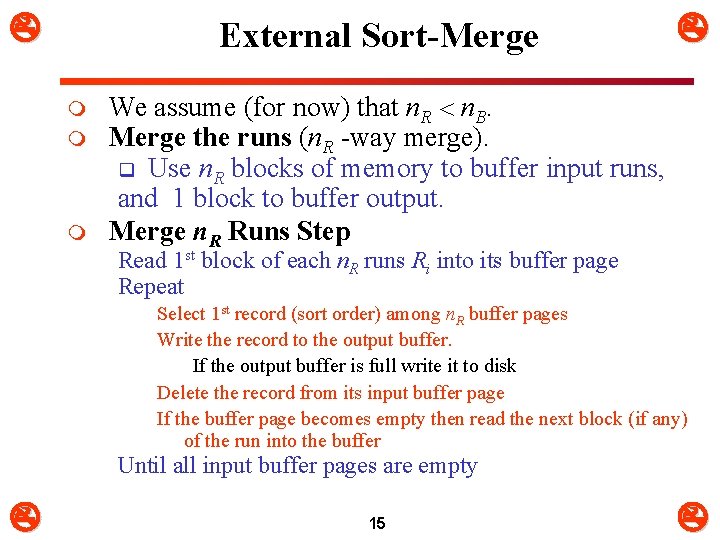

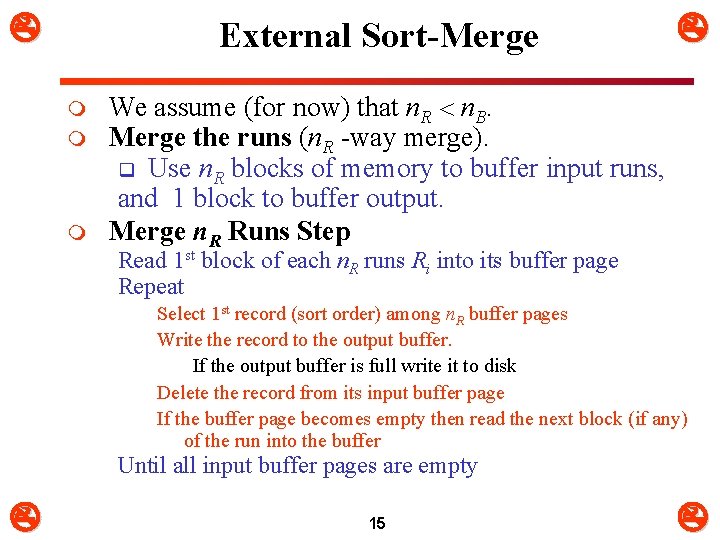

External Sort-Merge

- We assume (for now) that $n_R < n_B$.
- Merge the runs ($n_R$-way merge).
  - Use $n_R$ blocks of memory to buffer input runs, and 1 block to buffer output.
- Merge $n_R$ runs step:

      Read 1st block of each of the n_R runs R_i into its buffer page
      Repeat
          Select 1st record (in sort order) among the n_R buffer pages
          Write the record to the output buffer
          If the output buffer is full, write it to disk
          Delete the record from its input buffer page
          If the buffer page becomes empty, then
              read the next block (if any) of the run into the buffer
      Until all input buffer pages are empty
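The sort and merge steps can be sketched in a few lines of Python. This is only an in-memory illustration under simplifying assumptions: each record stands for one block, runs are Python lists rather than disk files, and the names `make_runs` and `merge_runs` are hypothetical.

```python
import heapq

def make_runs(records, run_size):
    """Sort phase: cut the input into chunks of run_size records and sort each chunk."""
    return [sorted(records[i:i + run_size])
            for i in range(0, len(records), run_size)]

def merge_runs(runs):
    """Merge phase: repeatedly output the smallest head record among all runs."""
    # Each heap entry is (record, run index, position inside that run).
    heap = [(run[0], idx, 0) for idx, run in enumerate(runs) if run]
    heapq.heapify(heap)
    out = []
    while heap:
        value, idx, pos = heapq.heappop(heap)
        out.append(value)
        # "Read the next block of the run" once its current record is consumed.
        if pos + 1 < len(runs[idx]):
            heapq.heappush(heap, (runs[idx][pos + 1], idx, pos + 1))
    return out

data = [9, 3, 7, 1, 8, 2, 6]
runs = make_runs(data, 2)   # 7 "blocks" with room for 2 at a time gives 4 runs
result = merge_runs(runs)
```

The heap plays the role of "select the first record among the buffer pages"; a real implementation would hold one block per run in memory and refill it from disk.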

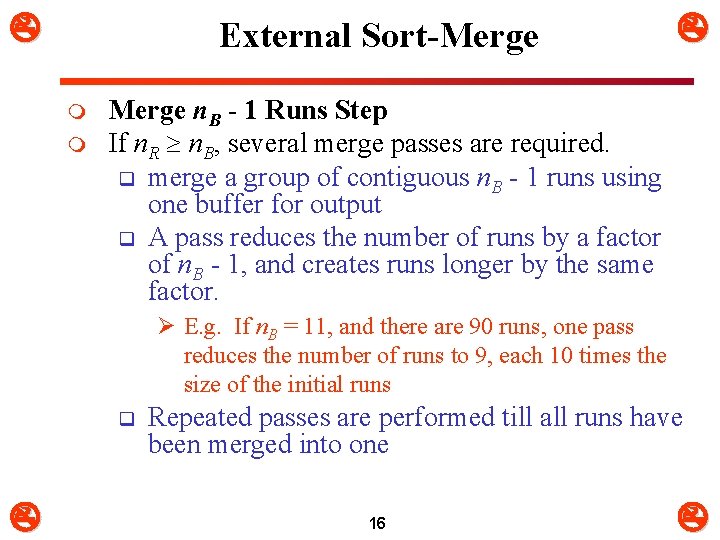

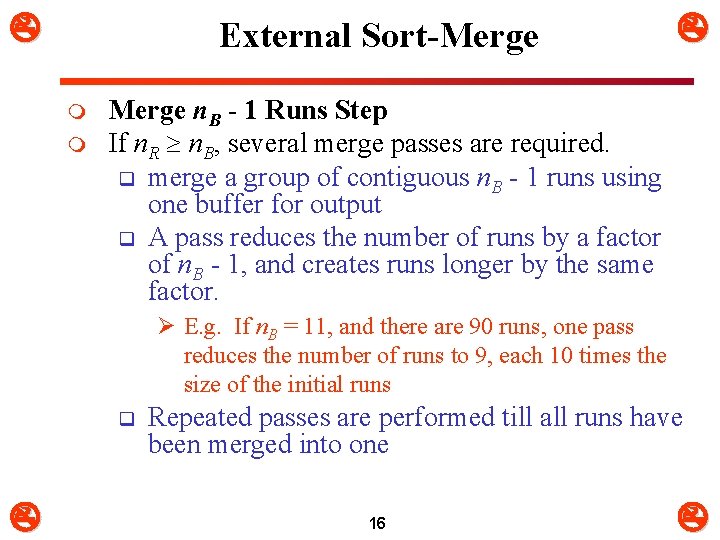

External Sort-Merge

- Merge $n_B - 1$ runs step
- If $n_R \ge n_B$, several merge passes are required.
  - Merge a group of $n_B - 1$ contiguous runs, using one buffer for output.
  - A pass reduces the number of runs by a factor of $n_B - 1$, and creates runs longer by the same factor.
    - E.g., if $n_B = 11$ and there are 90 runs, one pass reduces the number of runs to 9, each 10 times the size of the initial runs.
  - Repeated passes are performed until all runs have been merged into one.

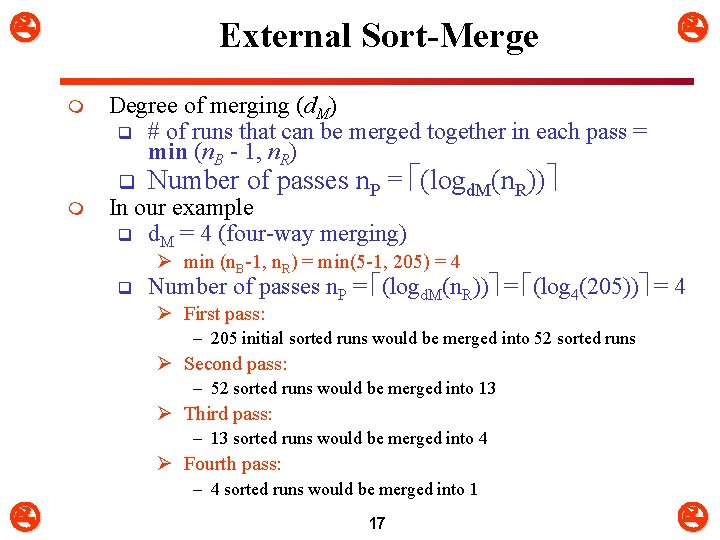

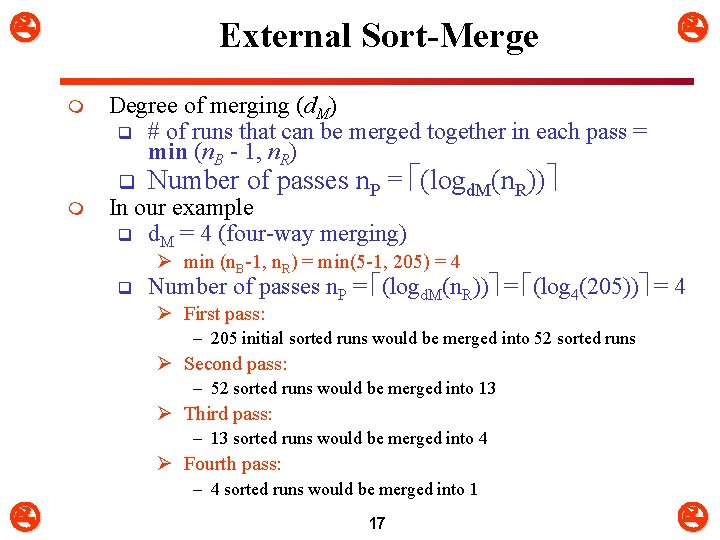

External Sort-Merge

- Degree of merging ($d_M$):
  - Number of runs that can be merged together in each pass: $d_M = \min(n_B - 1, n_R)$
  - Number of passes: $n_P = \lceil \log_{d_M}(n_R) \rceil$
- In our example:
  - $d_M = \min(n_B - 1, n_R) = \min(5 - 1, 205) = 4$ (four-way merging)
  - Number of passes: $n_P = \lceil \log_{d_M}(n_R) \rceil = \lceil \log_4(205) \rceil = 4$
    - First pass: 205 initial sorted runs are merged into 52 sorted runs
    - Second pass: 52 sorted runs are merged into 13
    - Third pass: 13 sorted runs are merged into 4
    - Fourth pass: 4 sorted runs are merged into 1

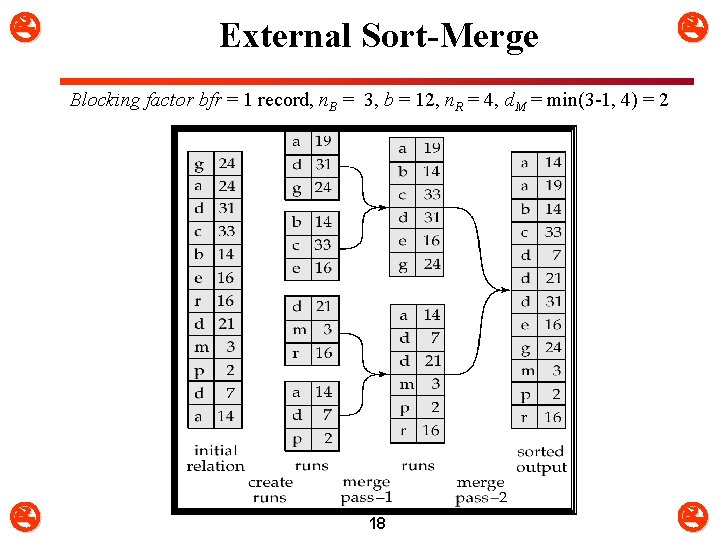

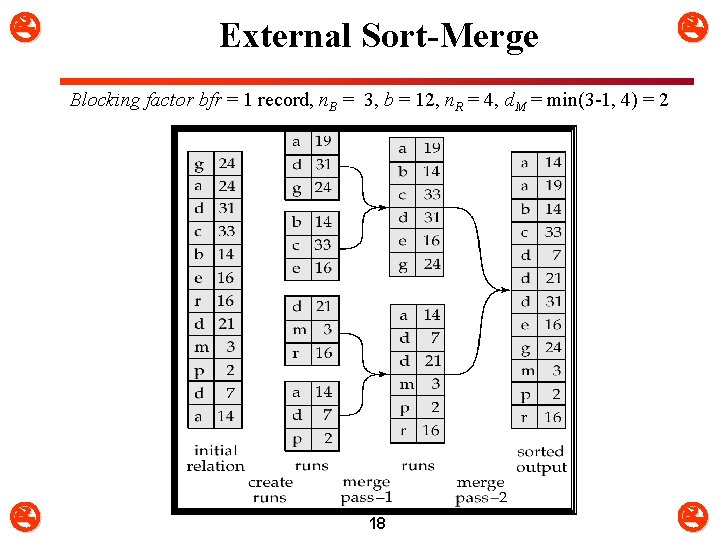

External Sort-Merge

- Figure: blocking factor $bfr = 1$ record, $n_B = 3$, $b = 12$, $n_R = 4$, $d_M = \min(3 - 1, 4) = 2$

External Sort-Merge

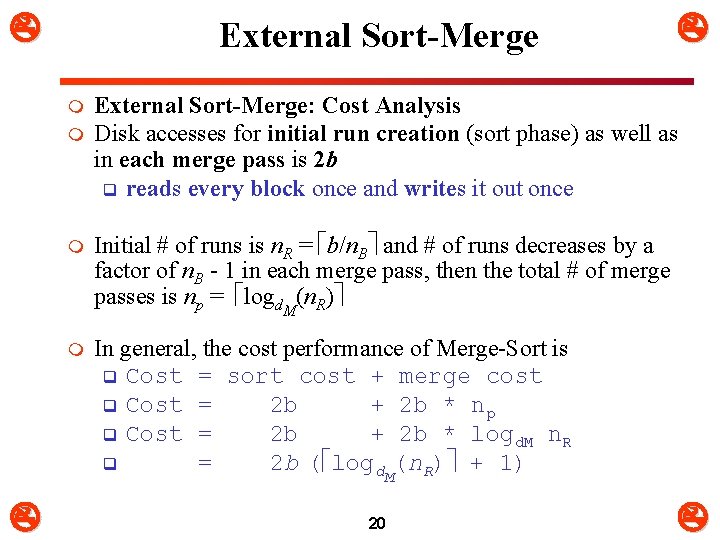

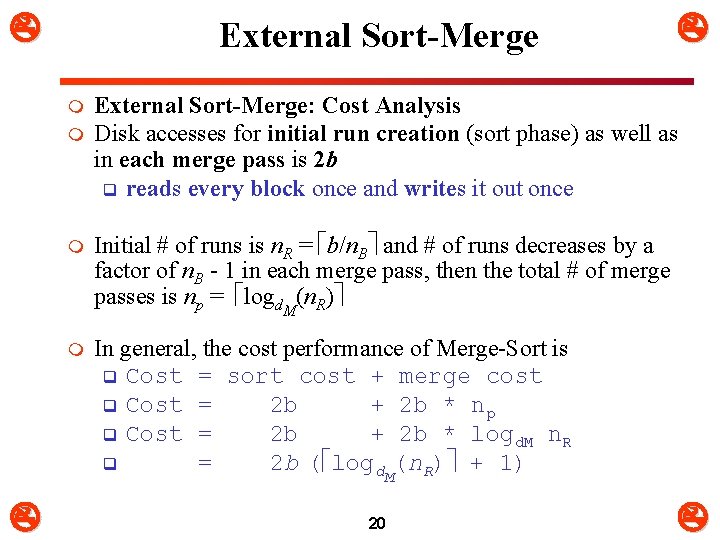

External Sort-Merge: Cost Analysis

- The number of disk accesses for initial run creation (sort phase), as well as for each merge pass, is $2b$:
  - every block is read once and written out once.
- The initial number of runs is $n_R = \lceil b/n_B \rceil$, and the number of runs decreases by a factor of $n_B - 1$ in each merge pass, so the total number of merge passes is $n_P = \lceil \log_{d_M}(n_R) \rceil$.
- In general, the cost of sort-merge is
  - Cost = sort cost + merge cost
  - Cost $= 2b + 2b \cdot n_P$
  - Cost $= 2b + 2b \cdot \lceil \log_{d_M}(n_R) \rceil$
  - $= 2b\,(\lceil \log_{d_M}(n_R) \rceil + 1)$
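As a quick check of the cost formula, the following sketch computes $n_R$, $d_M$, $n_P$ and the total cost for given $b$ and $n_B$. The function name `external_sort_cost` is hypothetical, and the number of passes is counted by repeated ceiling division (equivalent to $\lceil \log_{d_M}(n_R) \rceil$) to avoid floating-point logarithms.

```python
import math

def external_sort_cost(b, n_b):
    """Return (n_R, d_M, n_P, cost in block accesses) for external sort-merge."""
    if n_b < 3:
        raise ValueError("merging needs at least 2 input buffers and 1 output buffer")
    n_r = math.ceil(b / n_b)      # initial sorted runs
    d_m = min(n_b - 1, n_r)       # degree of merging
    n_p, runs = 0, n_r
    while runs > 1:               # each pass merges groups of d_m runs
        runs = math.ceil(runs / d_m)
        n_p += 1
    cost = 2 * b + 2 * b * n_p    # sort phase + merge passes
    return n_r, d_m, n_p, cost

example = external_sort_cost(1024, 5)   # (205, 4, 4, 10240)
figure = external_sort_cost(12, 3)      # (4, 2, 2, 72)
```

For the running example ($b = 1024$, $n_B = 5$) this gives $2 \cdot 1024 \cdot (4 + 1) = 10240$ block accesses.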

External Sort-Merge

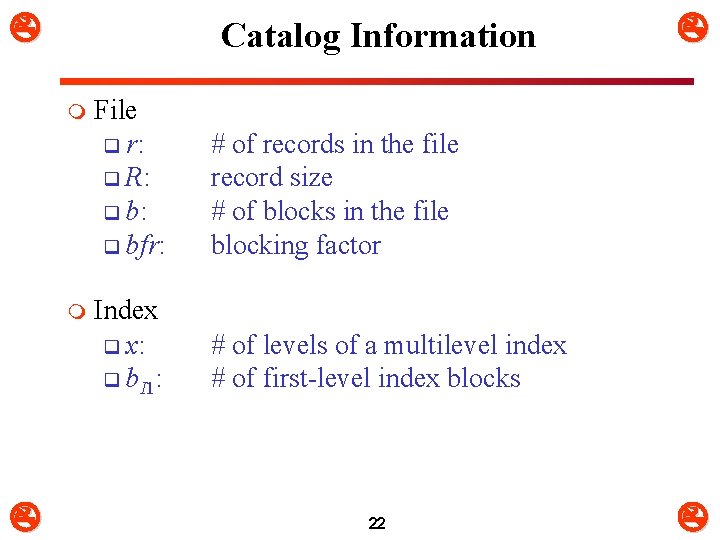

Catalog Information

- File
  - $r$: number of records in the file
  - $R$: record size
  - $b$: number of blocks in the file
  - $bfr$: blocking factor
- Index
  - $x$: number of levels of a multilevel index
  - $b_{I1}$: number of first-level index blocks

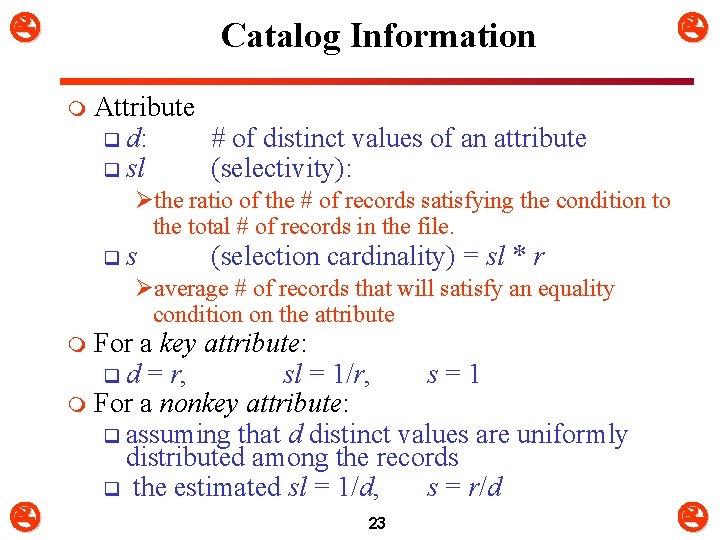

Catalog Information

- Attribute
  - $d$: number of distinct values of an attribute
  - $sl$ (selectivity): the ratio of the number of records satisfying the condition to the total number of records in the file
  - $s$ (selection cardinality) $= sl \cdot r$: the average number of records that will satisfy an equality condition on the attribute
- For a key attribute:
  - $d = r$, $sl = 1/r$, $s = 1$
- For a nonkey attribute:
  - assuming that the $d$ distinct values are uniformly distributed among the records,
  - the estimated $sl = 1/d$, $s = r/d$
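The two estimates can be written as a small helper. This is a sketch: the function name `selection_estimate` and the sample numbers ($r = 10000$, $d = 125$) are made up for illustration.

```python
def selection_estimate(r, d, is_key=False):
    """Return (selectivity sl, selection cardinality s) for an equality condition."""
    if is_key:
        return 1 / r, 1           # a key value matches exactly one record
    # Nonkey attribute: assume the d distinct values are uniformly distributed.
    return 1 / d, r / d

sl, s = selection_estimate(10000, 125)                 # sl = 1/125, s = 80 records
key_sl, key_s = selection_estimate(10000, 10000, is_key=True)
```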

File Scans

- Types of scans
  - File scan: search algorithms that locate and retrieve records that fulfill a selection condition.
  - Index scan: search algorithms that use an index; the selection condition must be on the search key of the index.
- Cost estimate C = number of disk blocks scanned

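3. Algorithms for SELECT Operations

- Implementing the SELECT operation
- Examples:
  - (OP1): $\sigma_{\text{SSN}='123456789'}(\text{EMP})$
  - (OP2): $\sigma_{\text{DNUMBER}>5}(\text{DEPT})$
  - (OP3): $\sigma_{\text{DNO}=5}(\text{EMP})$
  - (OP4): $\sigma_{\text{DNO}=5 \text{ AND SALARY}>30000 \text{ AND SEX}=F}(\text{EMP})$
  - (OP5): $\sigma_{\text{ESSN}=123456789 \text{ AND PNO}=10}(\text{WORKS\_ON})$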

Algorithms for Selection Operation

Search methods for simple selection:

- S1 (linear search)
  - Retrieve every record in the file, and test whether its attribute values satisfy the selection condition.
  - If the selection is on a nonkey attribute, $C = b$.
  - If the selection is an equality on a key attribute:
    - if the record is found, the average cost is $C = b/2$; otherwise $C = b$.

Algorithms for Selection Operation

- S2 (binary search)
  - Applicable if the selection is an equality comparison on the attribute on which the file is ordered.
  - Assume that the blocks of a relation are stored contiguously.
  - If the selection is on a nonkey attribute:
    - $C = \log_2 b + \lceil s/bfr \rceil - 1$
    - $\log_2 b$: cost of locating the first tuple
    - $\lceil s/bfr \rceil - 1$: number of additional blocks containing records that satisfy the selection condition
  - If the selection is an equality on a key attribute:
    - $C = \log_2 b$, since $s = 1$ in this case
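The S1 and S2 estimates translate directly into code. The sketch below uses hypothetical function names and sample values ($b = 1024$, $s = 80$, $bfr = 5$) chosen only so that the arithmetic is easy to follow.

```python
import math

def cost_linear_search(b, key_equality=False, found=True):
    """S1: scan the file; on a key equality that succeeds, half the file on average."""
    if key_equality and found:
        return b / 2
    return b

def cost_binary_search(b, s, bfr):
    """S2: locate the first matching block, then read the remaining matching blocks."""
    return math.log2(b) + math.ceil(s / bfr) - 1

nonkey_linear = cost_linear_search(1024)                   # 1024
key_linear = cost_linear_search(1024, key_equality=True)   # 512.0
nonkey_binary = cost_binary_search(1024, s=80, bfr=5)      # 10 + 16 - 1 = 25.0
key_binary = cost_binary_search(1024, s=1, bfr=5)          # 10.0
```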

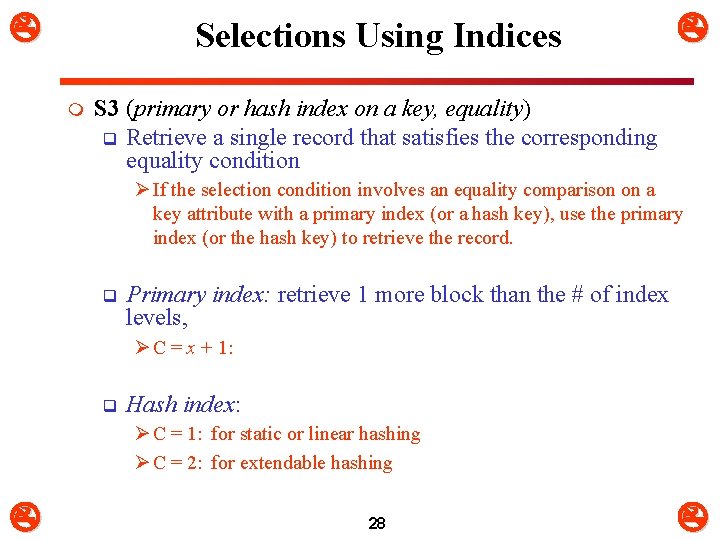

Selections Using Indices

- S3 (primary or hash index on a key, equality)
  - Retrieve a single record that satisfies the corresponding equality condition.
    - If the selection condition involves an equality comparison on a key attribute with a primary index (or a hash key), use the primary index (or the hash key) to retrieve the record.
  - Primary index: retrieve one more block than the number of index levels,
    - $C = x + 1$
  - Hash index:
    - $C = 1$ for static or linear hashing
    - $C = 2$ for extendible hashing

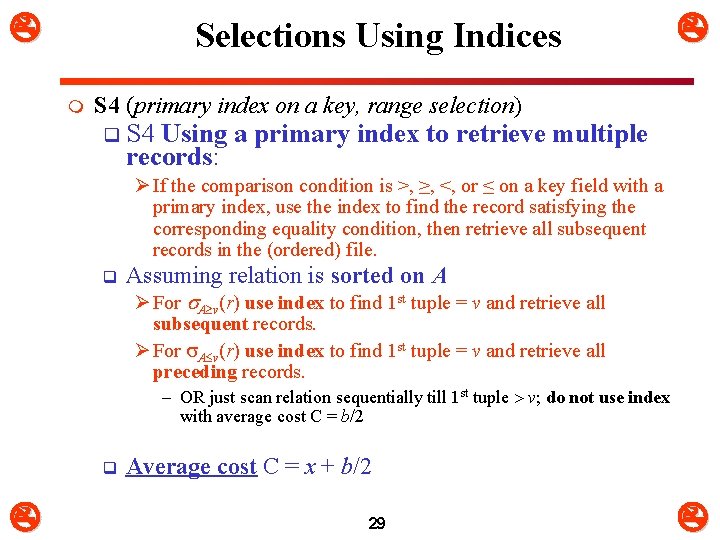

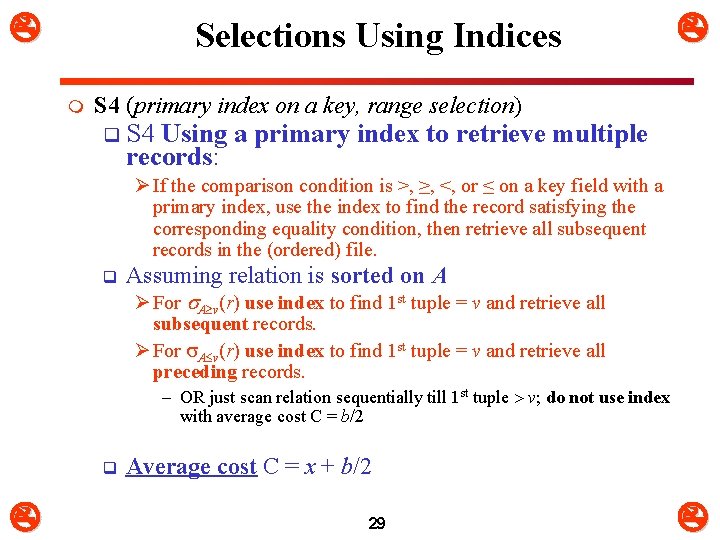

Selections Using Indices

- S4 (primary index on a key, range selection)
  - Use a primary index to retrieve multiple records.
    - If the comparison condition is >, ≥, <, or ≤ on a key field with a primary index, use the index to find the record satisfying the corresponding equality condition, then retrieve all subsequent records in the (ordered) file.
  - Assuming the relation is sorted on A:
    - For $\sigma_{A \ge v}(r)$, use the index to find the first tuple with A = v and retrieve all subsequent records.
    - For $\sigma_{A \le v}(r)$, use the index to find the first tuple with A = v and retrieve all preceding records,
      - OR just scan the relation sequentially until the first tuple with A > v, without using the index, at an average cost of $C = b/2$.
  - Average cost $C = x + b/2$

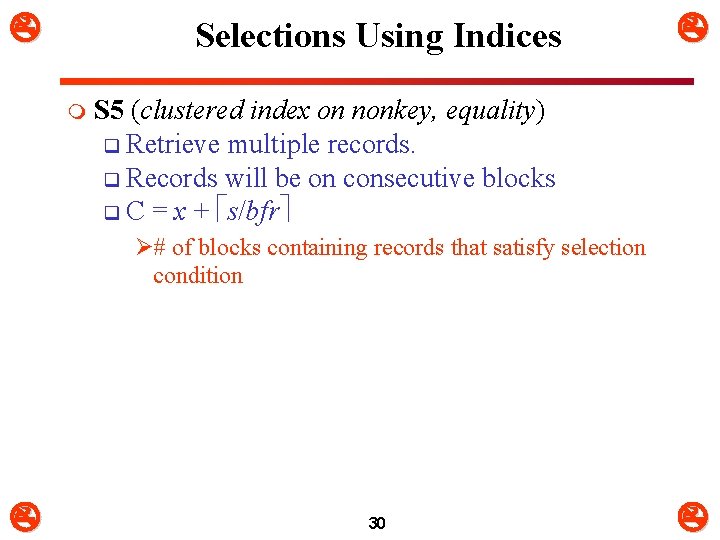

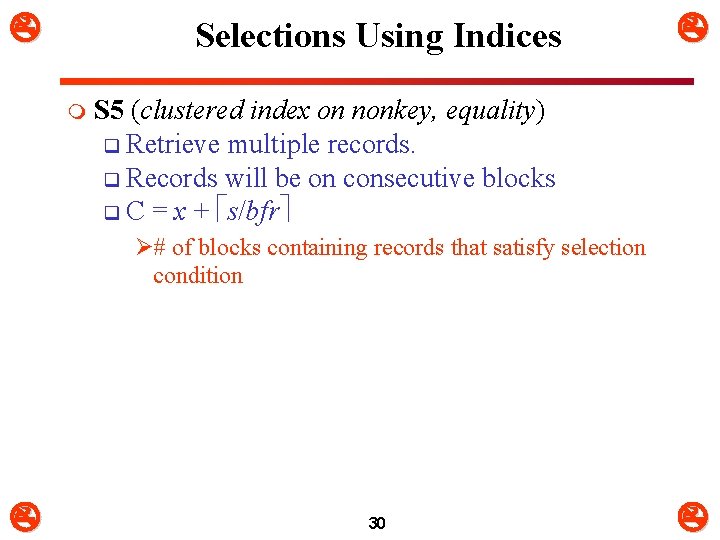

Selections Using Indices

- S5 (clustering index on a nonkey, equality)
  - Retrieve multiple records.
  - Records will be on consecutive blocks.
  - $C = x + \lceil s/bfr \rceil$
    - $\lceil s/bfr \rceil$: number of blocks containing records that satisfy the selection condition

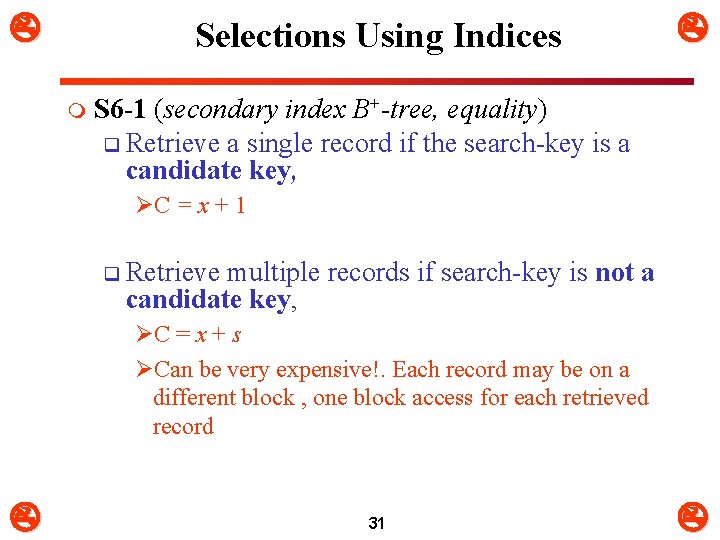

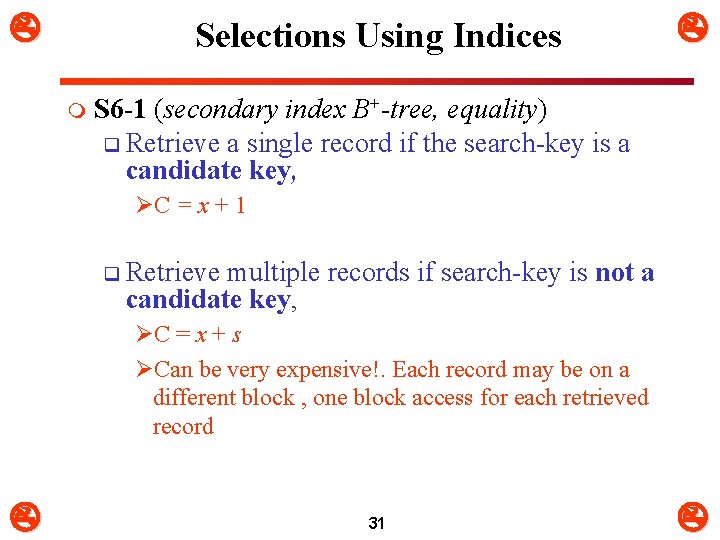

Selections Using Indices

- S6-1 (secondary index, B+-tree, equality)
  - Retrieve a single record if the search key is a candidate key:
    - $C = x + 1$
  - Retrieve multiple records if the search key is not a candidate key:
    - $C = x + s$
    - Can be very expensive! Each record may be on a different block, requiring one block access for each retrieved record.

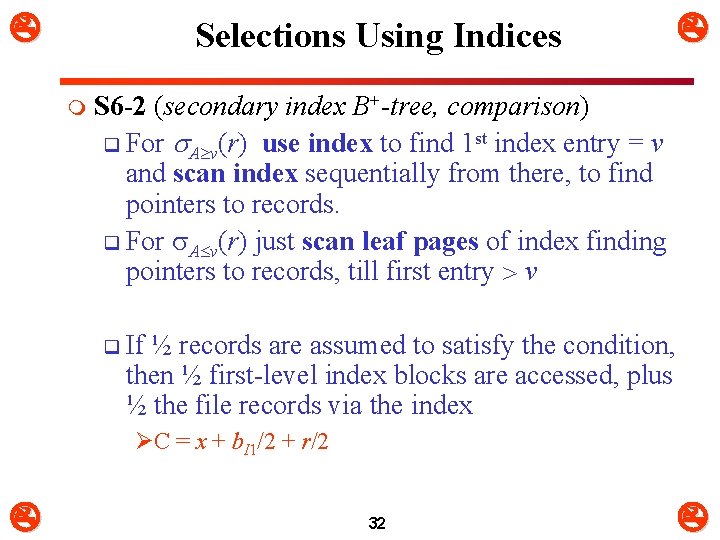

Selections Using Indices

- S6-2 (secondary index, B+-tree, comparison)
  - For $\sigma_{A \ge v}(r)$, use the index to find the first index entry ≥ v and scan the index sequentially from there to find pointers to records.
  - For $\sigma_{A \le v}(r)$, just scan the leaf pages of the index, finding pointers to records, until the first entry > v.
  - If half the records are assumed to satisfy the condition, then half the first-level index blocks are accessed, plus half the file records via the index:
    - $C = x + b_{I1}/2 + r/2$
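The index-based estimates S3 through S6 can be collected into small helper functions for side-by-side comparison. The function names and the sample catalog values ($x = 3$, $b = 2000$, $r = 10000$, $b_{I1} = 50$, $s = 80$, $bfr = 5$) are hypothetical.

```python
import math

def cost_s3_primary_key_eq(x):
    return x + 1                      # index levels + one data block

def cost_s4_primary_range(x, b):
    return x + b / 2                  # on average half the file is retrieved

def cost_s5_clustering_eq(x, s, bfr):
    return x + math.ceil(s / bfr)     # matching records sit on consecutive blocks

def cost_s6_secondary_eq(x, s=1):
    return x + s                      # worst case: one block access per record

def cost_s6_secondary_range(x, b_i1, r):
    return x + b_i1 / 2 + r / 2       # half the leaf index blocks + half the records

x, b, r, b_i1, s, bfr = 3, 2000, 10000, 50, 80, 5
costs = {
    "S3": cost_s3_primary_key_eq(x),             # 4
    "S4": cost_s4_primary_range(x, b),           # 1003.0
    "S5": cost_s5_clustering_eq(x, s, bfr),      # 19
    "S6 eq": cost_s6_secondary_eq(x, s),         # 83
    "S6 range": cost_s6_secondary_range(x, b_i1, r),  # 5028.0
}
```

With these made-up numbers the secondary-index range scan costs more than a linear scan of the 2000-block file, which illustrates why an optimizer compares estimates rather than always preferring an index.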

Complex Selections: $\sigma_{\theta_1 \wedge \theta_2 \wedge \dots \wedge \theta_n}(r)$

- S7 (conjunctive selection using one index)
  - Select the condition $\theta_i$ and the algorithm among S1 through S6 that result in the least cost for $\sigma_{\theta_i}(r)$.
  - Test the other conditions on each tuple after fetching it into the memory buffer.
  - The cost is that of the algorithm chosen.

Complex Selections: $\sigma_{\theta_1 \wedge \theta_2 \wedge \dots \wedge \theta_n}(r)$

- S8 (conjunctive selection using a composite index)
  - If two or more attributes are involved in equality conditions in the conjunctive condition and a composite index (or hash structure) exists on the combined field, we can use the index directly.
  - Use the appropriate composite index, if available, with one of the algorithms S3 (primary index), S5, or S6 (B+-tree, equality).

Complex Selections: $\sigma_{\theta_1 \wedge \theta_2 \wedge \dots \wedge \theta_n}(r)$

- S9 (conjunctive selection by intersection of record pointers)
  - Requires indices with record pointers.
  - Use the corresponding index for each condition, take the intersection of all the obtained sets of record pointers, then fetch the records from the file.
  - If some conditions do not have appropriate indices, apply those tests in memory.
  - The cost is the sum of the costs of the individual index scans plus the cost of retrieving the records from disk.
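A minimal sketch of S9, assuming each index is a plain dictionary from attribute value to a list of record ids and the file is a dictionary from record id to record. The names `conjunctive_select`, `indexes` and `emp_file`, and the sample data, are invented for illustration.

```python
def conjunctive_select(indexes, conditions, fetch):
    """Intersect the record-pointer sets of all indexed conditions, then fetch."""
    pointer_sets = [set(indexes[attr].get(value, ()))
                    for attr, value in conditions]
    rids = set.intersection(*pointer_sets)   # pointers satisfying every condition
    return [fetch(rid) for rid in sorted(rids)]

emp_file = {
    0: {"name": "Ann", "DNO": 5, "SEX": "M"},
    1: {"name": "Bob", "DNO": 4, "SEX": "F"},
    2: {"name": "Cat", "DNO": 5, "SEX": "F"},
    3: {"name": "Dee", "DNO": 5, "SEX": "F"},
}
indexes = {
    "DNO": {5: [0, 2, 3], 4: [1]},
    "SEX": {"F": [1, 2, 3], "M": [0]},
}
matches = conjunctive_select(indexes, [("DNO", 5), ("SEX", "F")], emp_file.__getitem__)
```

Only the records whose pointers survive the intersection (ids 2 and 3 here) are fetched from the file, which is the point of the method.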

Complex Selections: $\sigma_{\theta_1 \vee \theta_2 \vee \dots \vee \theta_n}(r)$

- S10 (disjunctive selection by union of identifiers)
  - Applicable if all conditions have available indices.
    - Otherwise use a linear scan.
  - Use the corresponding index for each condition, and take the union of all the obtained sets of record pointers. Then fetch the records from the file.
- READ
  - “Examples of Cost Functions for Select”, pages 569-570.

Join Algorithms

- Join (EQUIJOIN, NATURAL JOIN)
  - Two-way join: a join on two files, e.g. $R \bowtie_{A=B} S$
  - Multi-way join: a join involving more than two files, e.g. $R \bowtie_{A=B} S \bowtie_{C=D} T$
- Examples
  - (OP6): $\text{EMP} \bowtie_{\text{DNO}=\text{DNUMBER}} \text{DEPT}$
  - (OP7): $\text{DEPT} \bowtie_{\text{MGRSSN}=\text{SSN}} \text{EMP}$
- Factors affecting JOIN performance
  - Available buffer space
  - Join selection factor
  - Choice of inner vs. outer relation

Join Algorithms

- Join selectivity ($js$): $0 \le js \le 1$
  - The ratio of the number of tuples of the resulting join file to the number of tuples of the Cartesian product file:
  - $js = |R \bowtie_{A=B} S| \,/\, |R \times S| = |R \bowtie_{A=B} S| \,/\, (r_R \cdot r_S)$
- If A is a key of R, then
  - $|R \bowtie_{A=B} S| \le r_S$, so $js \le 1/r_R$
- If B is a key of S, then
  - $|R \bowtie_{A=B} S| \le r_R$, so $js \le 1/r_S$

Join Algorithms

- Having an estimate of $js$,
  - the estimated number of tuples of the resulting join file is $|R \bowtie_{A=B} S| = js \cdot r_R \cdot r_S$
- $bfr_{RS}$ is the blocking factor of the resulting join file.
- The cost of writing the resulting join file to disk ($b_{RS}$) is
  - $b_{RS} = (js \cdot r_R \cdot r_S) / bfr_{RS}$
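The size estimate can be tried out with exact fractions. In this sketch the function name `join_result_size` is hypothetical, the blocking factor $bfr_{RS} = 4$ is a made-up value, and the block count is rounded up to whole blocks, which the formula above leaves implicit.

```python
import math
from fractions import Fraction

def join_result_size(js, r_r, r_s, bfr_rs):
    """Return (estimated result tuples, estimated result blocks b_RS)."""
    tuples = js * r_r * r_s
    blocks = math.ceil(tuples / bfr_rs)   # assumption: partial blocks count as full
    return tuples, blocks

# If the join attribute is a key of a 10000-record relation, js <= 1/10000;
# the bound is used here as the estimate.
tuples, blocks = join_result_size(Fraction(1, 10000), 10000, 5000, bfr_rs=4)
```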

Join Algorithms

- J1: Nested-loop join (brute force):
  - For each record t in R (outer loop), retrieve every record s from S (inner loop) and test whether the two records satisfy the join condition t[A] = s[B].
- J2: Single-loop join (using an index):
  - If an index (or hash key) exists for one of the two join attributes (say, B of S), retrieve each record t in R, one at a time, and then use the index to retrieve directly all matching records s from S that satisfy s[B] = t[A].

Join Algorithms

- J3: Sort-merge join:
  - If the records of R and S are physically sorted (ordered) by the values of the join attributes A and B, respectively, we can implement the join in the most efficient way possible.
  - Both files are scanned in order of the join attributes, matching the records that have the same values for A and B.
  - In this method, the records of each file are scanned only once for matching with the other file, unless both A and B are nonkey attributes, in which case the method needs to be modified slightly.

Join Algorithms

- J4: Hash-join:
  - The records of files R and S are both hashed to the same hash file, using the same hashing function on the join attributes A of R and B of S as hash keys.
  - A single pass through the file with fewer records (say, R) hashes its records to the hash file buckets.
  - A single pass through the other file (S) then hashes each of its records to the appropriate bucket, where the record is combined with all matching records from R.

Nested-Loop Join (J1)

- To compute the theta join $R \bowtie_\theta S$:

      for each tuple t_R in R do
          for each tuple t_S in S do
              if (t_R, t_S) satisfy θ, add t_R · t_S to the result

- R is called the outer relation and S the inner relation of the join.
- Requires no indices and can be used with any kind of join condition.
- Expensive, since it examines every pair of tuples in the two relations.

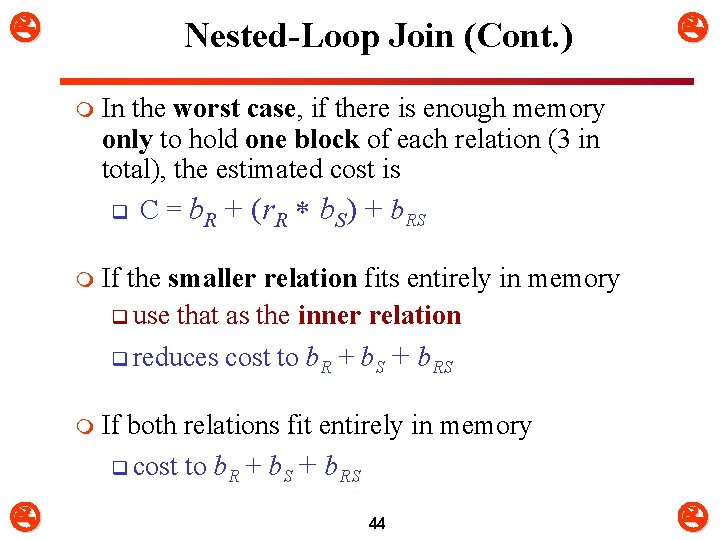

Nested-Loop Join (Cont.)

- In the worst case, if there is enough memory to hold only one block of each relation (3 in total, including the result), the estimated cost is
  - $C = b_R + (r_R \times b_S) + b_{RS}$
- If the smaller relation fits entirely in memory,
  - use it as the inner relation;
  - this reduces the cost to $b_R + b_S + b_{RS}$.
- If both relations fit entirely in memory,
  - the cost is $b_R + b_S + b_{RS}$.

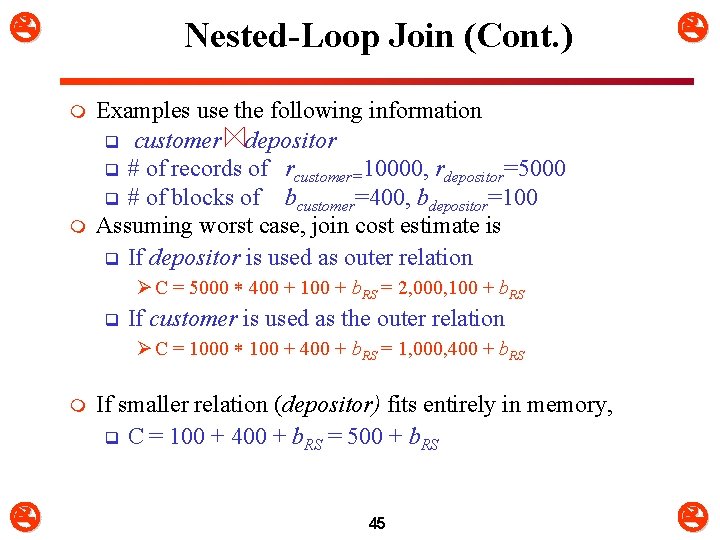

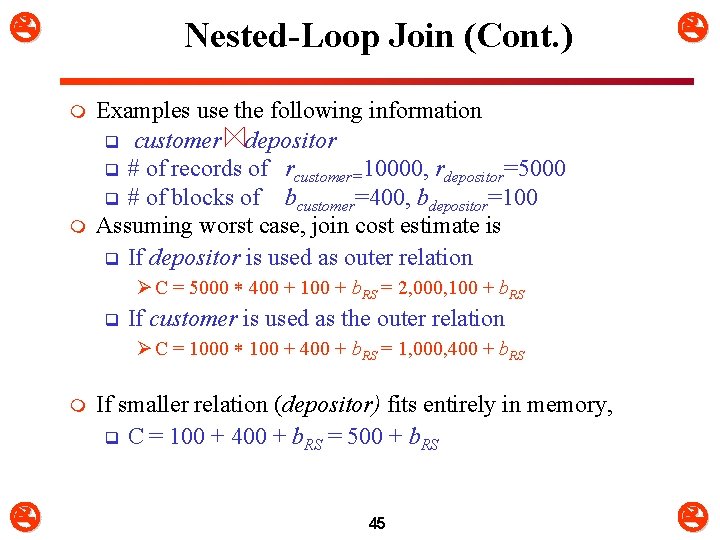

Nested-Loop Join (Cont.)

- The examples use the following information:
  - $\text{customer} \bowtie \text{depositor}$
  - Number of records: $r_{customer} = 10000$, $r_{depositor} = 5000$
  - Number of blocks: $b_{customer} = 400$, $b_{depositor} = 100$
- Assuming the worst case, the join cost estimate is:
  - If depositor is used as the outer relation,
    - $C = 5000 \times 400 + 100 + b_{RS} = 2{,}000{,}100 + b_{RS}$
  - If customer is used as the outer relation,
    - $C = 10000 \times 100 + 400 + b_{RS} = 1{,}000{,}400 + b_{RS}$
- If the smaller relation (depositor) fits entirely in memory,
  - $C = 100 + 400 + b_{RS} = 500 + b_{RS}$
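The worst-case figures for this example can be reproduced with a one-line cost function. The name `nested_loop_cost` is hypothetical, and the result excludes the $b_{RS}$ term for writing the output, which is the same for every plan.

```python
def nested_loop_cost(b_outer, r_outer, b_inner):
    """Worst case: read the outer once, scan the whole inner once per outer tuple."""
    return b_outer + r_outer * b_inner

# Catalog values from the example: customer has 10000 records in 400 blocks,
# depositor has 5000 records in 100 blocks.
depositor_outer = nested_loop_cost(b_outer=100, r_outer=5000, b_inner=400)
customer_outer = nested_loop_cost(b_outer=400, r_outer=10000, b_inner=100)
```

Here `depositor_outer` evaluates to 2,000,100 and `customer_outer` to 1,000,400, so for this pair of relations the larger relation is the cheaper outer choice in the worst case.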

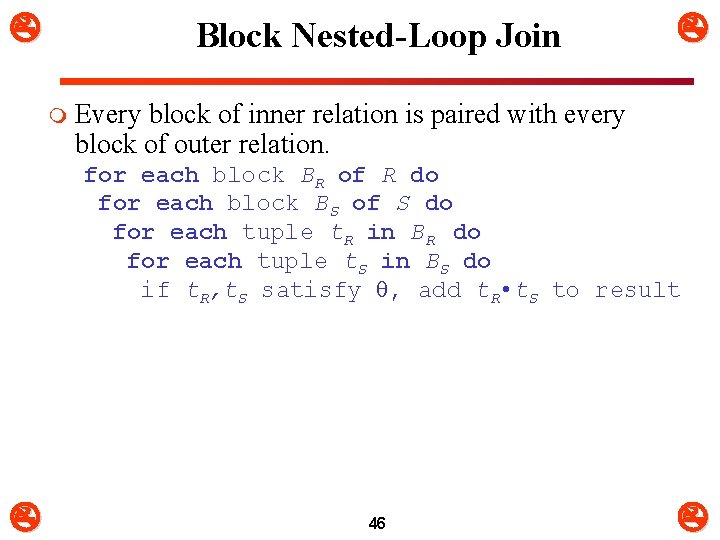

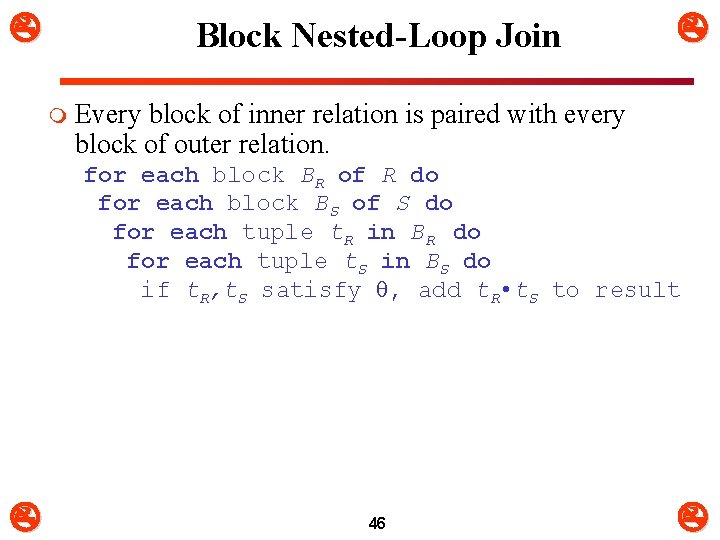

Block Nested-Loop Join

- Every block of the inner relation is paired with every block of the outer relation.

      for each block B_R of R do
          for each block B_S of S do
              for each tuple t_R in B_R do
                  for each tuple t_S in B_S do
                      if (t_R, t_S) satisfy θ, add t_R · t_S to the result

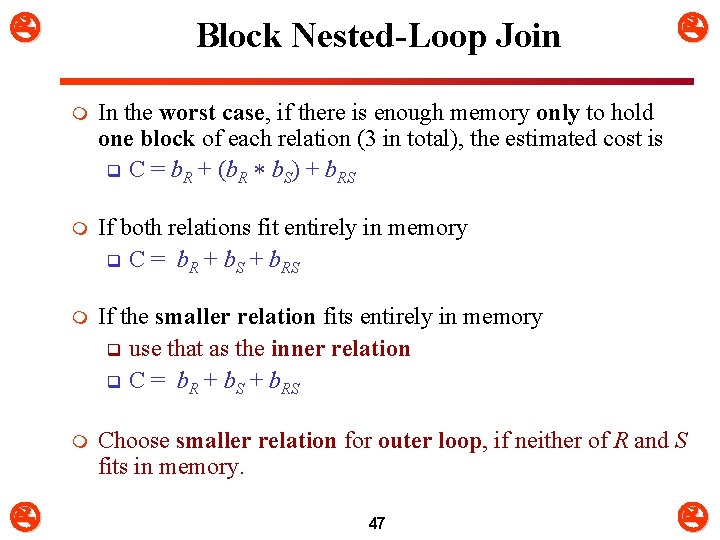

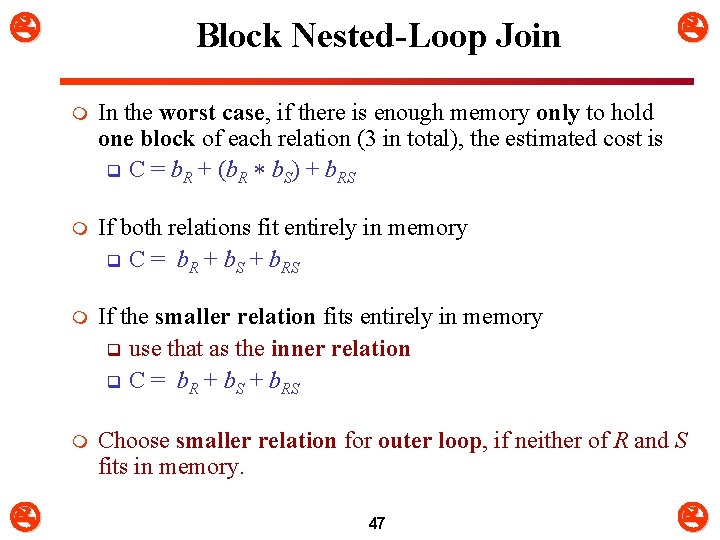

Block Nested-Loop Join

- In the worst case, if there is enough memory to hold only one block of each relation (3 in total, including the result), the estimated cost is
  - $C = b_R + (b_R \times b_S) + b_{RS}$
- If both relations fit entirely in memory,
  - $C = b_R + b_S + b_{RS}$
- If the smaller relation fits entirely in memory,
  - use it as the inner relation;
  - $C = b_R + b_S + b_{RS}$
- If neither R nor S fits in memory, choose the smaller relation for the outer loop.

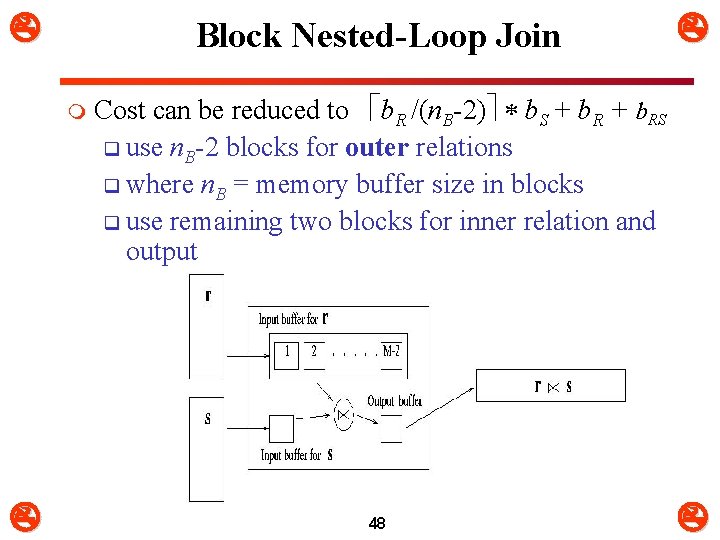

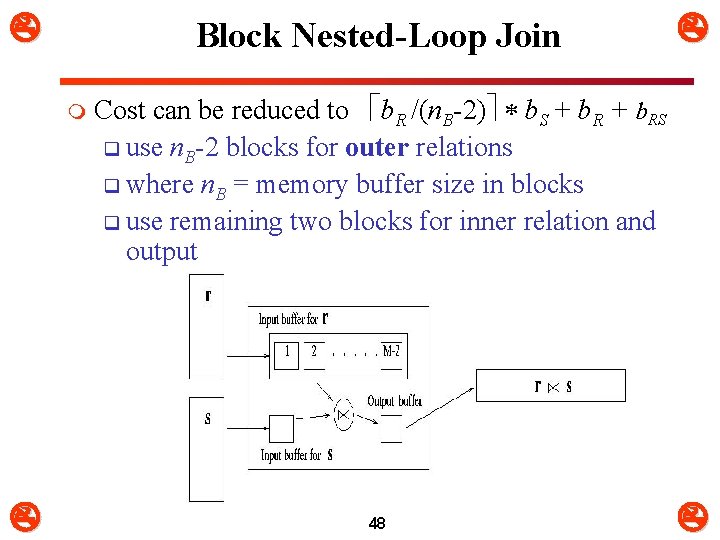

Block Nested-Loop Join

- The cost can be reduced to $b_R + \lceil b_R/(n_B - 2) \rceil \times b_S + b_{RS}$
  - where $n_B$ = memory buffer size in blocks;
  - use $n_B - 2$ blocks for the outer relation;
  - use the remaining two blocks for the inner relation and the output.
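The effect of extra buffer space on block nested-loop join can be sketched as follows. The function name is hypothetical, the $b_{RS}$ term is left out, and the estimate includes the $b_R$ block reads of the outer relation so that $n_B = 3$ reproduces the worst-case formula $b_R + b_R \times b_S$; the buffer size of 22 blocks is a made-up value.

```python
import math

def block_nested_loop_cost(b_outer, b_inner, n_b=3):
    """Read the outer once; scan the inner once per chunk of (n_b - 2) outer blocks."""
    chunks = math.ceil(b_outer / (n_b - 2))
    return b_outer + chunks * b_inner

worst_case = block_nested_loop_cost(100, 400)           # 100 + 100 * 400
with_22_buffers = block_nested_loop_cost(100, 400, 22)  # 100 + 5 * 400
```

With depositor (100 blocks) as the outer relation the estimate drops from 40,100 to 2,100 block accesses once 20 buffers are available for the outer chunk.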

Block Nested-Loop Join

- The cost can be reduced further:
  - If the equi-join or natural join attribute forms a key on the inner relation, stop the inner loop on the first match.
  - Scan the inner loop forward and backward alternately, to make use of the blocks remaining in the buffer.
  - Use an index on the inner relation if available.

Indexed Nested-Loop Join (J2)

- Index lookups can replace file scans if
  - an index is available on the inner relation's join attribute.
    - An index can be constructed just to compute a join.
- For each tuple $t_R$ in the outer relation R,
  - use the index to retrieve the tuples in S that satisfy the join condition with tuple $t_R$.
- If indices are available on the join attributes of both R and S,
  - use the relation with fewer tuples as the outer relation.

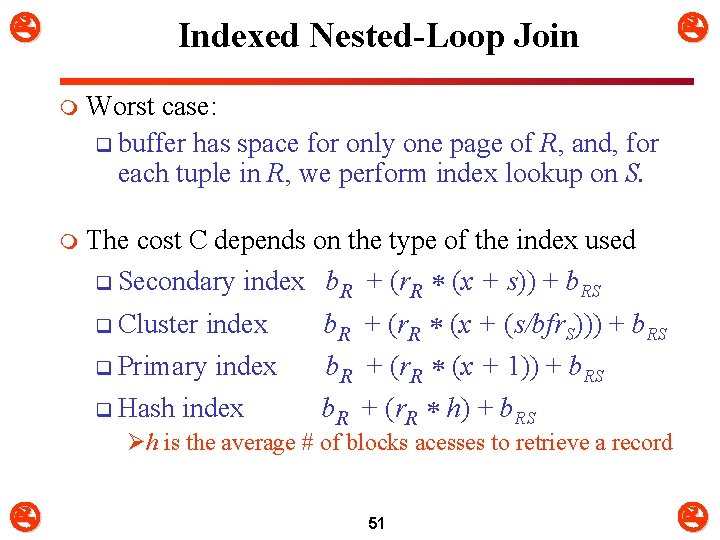

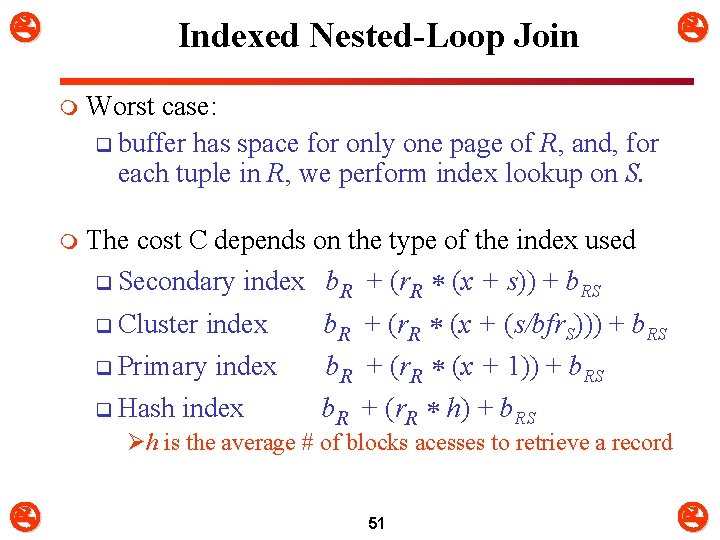

Indexed Nested-Loop Join

- Worst case:
  - the buffer has space for only one page of R, and, for each tuple in R, we perform an index lookup on S.
- The cost C depends on the type of index used:
  - Secondary index: $b_R + (r_R \times (x + s)) + b_{RS}$
  - Clustering index: $b_R + (r_R \times (x + s/bfr_S)) + b_{RS}$
  - Primary index: $b_R + (r_R \times (x + 1)) + b_{RS}$
  - Hash index: $b_R + (r_R \times h) + b_{RS}$
    - $h$ is the average number of block accesses needed to retrieve a record

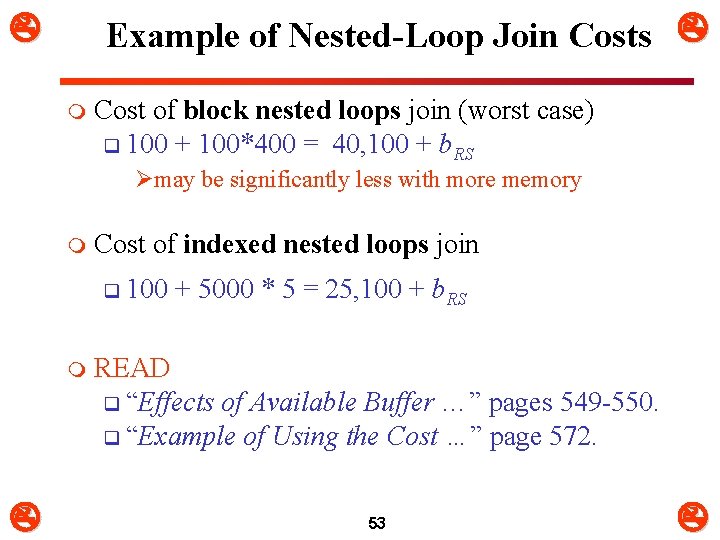

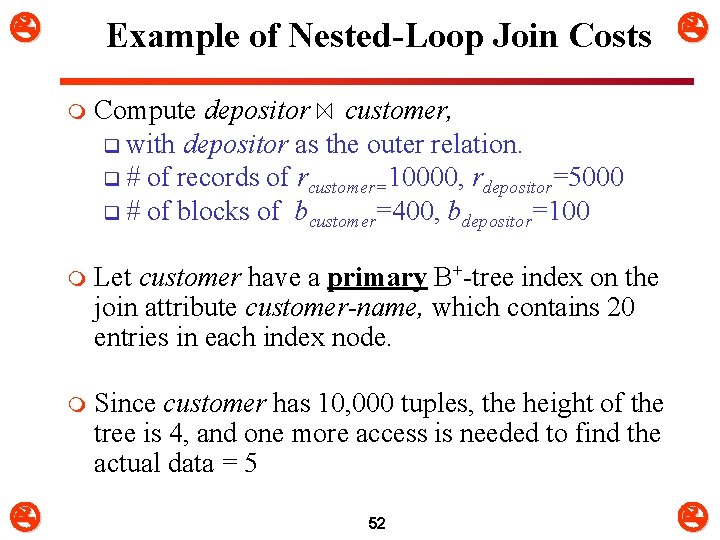

Example of Nested-Loop Join Costs

- Compute $\text{depositor} \bowtie \text{customer}$,
  - with depositor as the outer relation.
  - Number of records: $r_{customer} = 10000$, $r_{depositor} = 5000$
  - Number of blocks: $b_{customer} = 400$, $b_{depositor} = 100$
- Let customer have a primary B+-tree index on the join attribute customer-name, which contains 20 entries in each index node.
- Since customer has 10,000 tuples, the height of the tree is 4, and one more access is needed to find the actual data, so each lookup costs 4 + 1 = 5 block accesses.

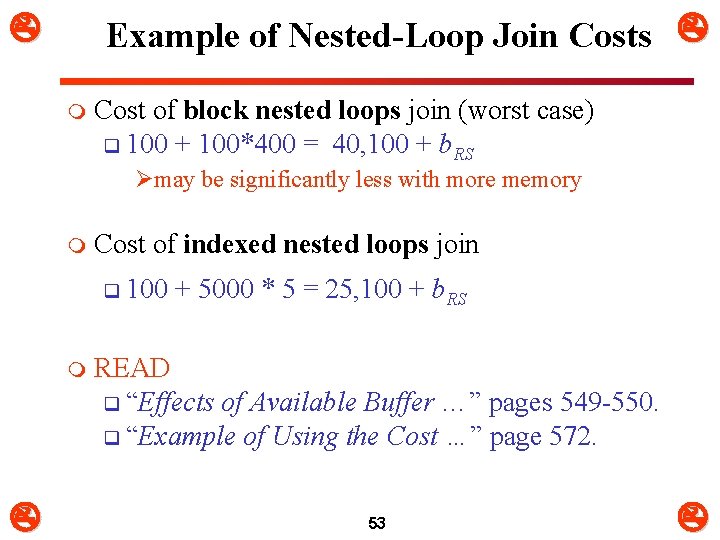

Example of Nested-Loop Join Costs

- Cost of block nested-loop join (worst case):
  - $100 + 100 \times 400 + b_{RS} = 40{,}100 + b_{RS}$
    - may be significantly less with more memory
- Cost of indexed nested-loop join:
  - $100 + 5000 \times 5 + b_{RS} = 25{,}100 + b_{RS}$
- READ
  - “Effects of Available Buffer …”, pages 549-550.
  - “Example of Using the Cost …”, page 572.
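The indexed nested-loop figure can be re-derived in code. This sketch uses hypothetical helper names and assumes the tree height is the smallest number of levels whose capacity (20 entries per node) covers all 10,000 tuples; $b_{RS}$ is again omitted.

```python
def btree_height(n_entries, fanout):
    """Smallest number of levels h such that fanout**h >= n_entries."""
    height, capacity = 1, fanout
    while capacity < n_entries:
        capacity *= fanout
        height += 1
    return height

def indexed_nested_loop_cost(b_outer, r_outer, lookup_cost):
    """Read the outer once, then one index lookup per outer tuple."""
    return b_outer + r_outer * lookup_cost

height = btree_height(10000, 20)       # 20, 400, 8000, 160000 -> 4 levels
lookup = height + 1                    # one more access for the data block
indexed_cost = indexed_nested_loop_cost(100, 5000, lookup)
```

The result, 25,100 block accesses, is below the 40,100 of the worst-case block nested-loop join for the same relations.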

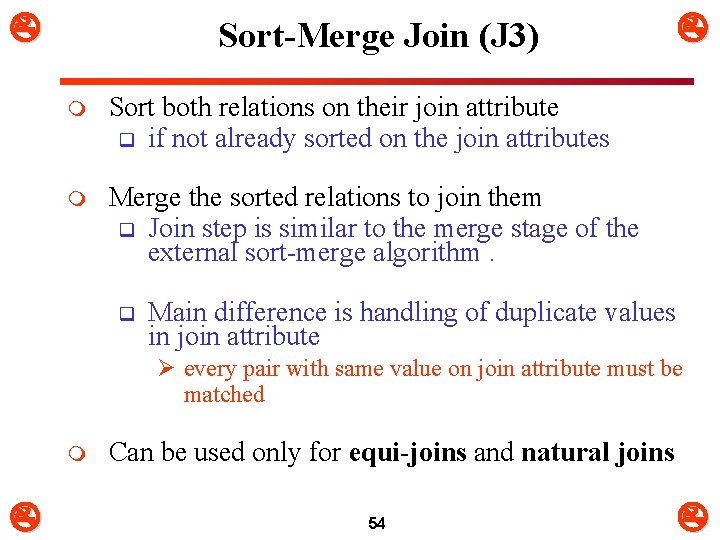

Sort-Merge Join (J3)

- Sort both relations on their join attributes,
  - if they are not already sorted on the join attributes.
- Merge the sorted relations to join them.
  - The join step is similar to the merge stage of the external sort-merge algorithm.
  - The main difference is the handling of duplicate values in the join attribute:
    - every pair with the same value on the join attribute must be matched.
- Can be used only for equi-joins and natural joins.

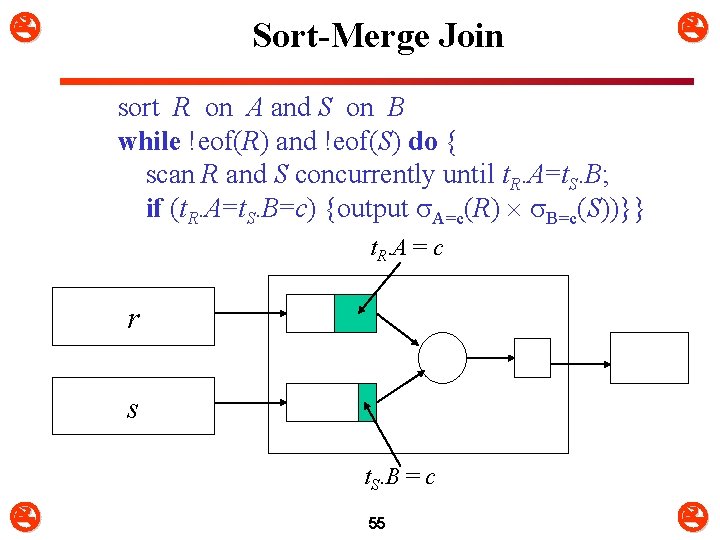

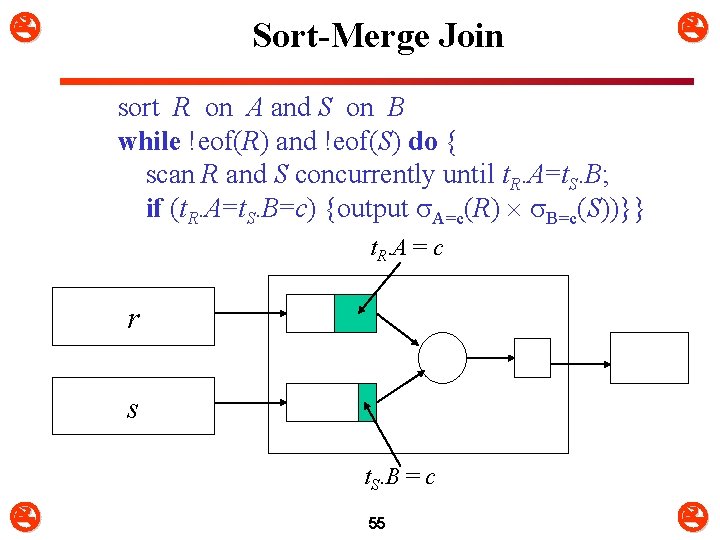

Sort-Merge Join

    sort R on A and S on B
    while !eof(R) and !eof(S) do {
        scan R and S concurrently until t_R.A = t_S.B;
        if (t_R.A = t_S.B = c) { output σ_{A=c}(R) × σ_{B=c}(S) }
    }
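A runnable version of this loop, as a sketch: relations are modelled as in-memory lists of dictionaries, `a` and `b` name the join attributes, and the function name `sort_merge_join` and the sample data are invented for illustration.

```python
def sort_merge_join(R, S, a, b):
    """Equi-join R and S on R.a = S.b by sorting both inputs and merging them."""
    R = sorted(R, key=lambda t: t[a])
    S = sorted(S, key=lambda t: t[b])
    i = j = 0
    out = []
    while i < len(R) and j < len(S):
        if R[i][a] < S[j][b]:
            i += 1
        elif R[i][a] > S[j][b]:
            j += 1
        else:
            c = R[i][a]
            # Find the group of tuples with join value c on each side.
            i_end = i
            while i_end < len(R) and R[i_end][a] == c:
                i_end += 1
            j_end = j
            while j_end < len(S) and S[j_end][b] == c:
                j_end += 1
            # Output the cross product of the two groups.
            for t_r in R[i:i_end]:
                for t_s in S[j:j_end]:
                    out.append({**t_r, **t_s})
            i, j = i_end, j_end
    return out

emp = [{"name": "A", "dno": 5}, {"name": "B", "dno": 4}, {"name": "C", "dno": 5}]
dept = [{"dnumber": 5, "dname": "Research"}, {"dnumber": 1, "dname": "HQ"}]
joined = sort_merge_join(emp, dept, "dno", "dnumber")
```

The inner group handling is what copes with duplicate join values: both employees of department 5 are paired with the single matching department tuple.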

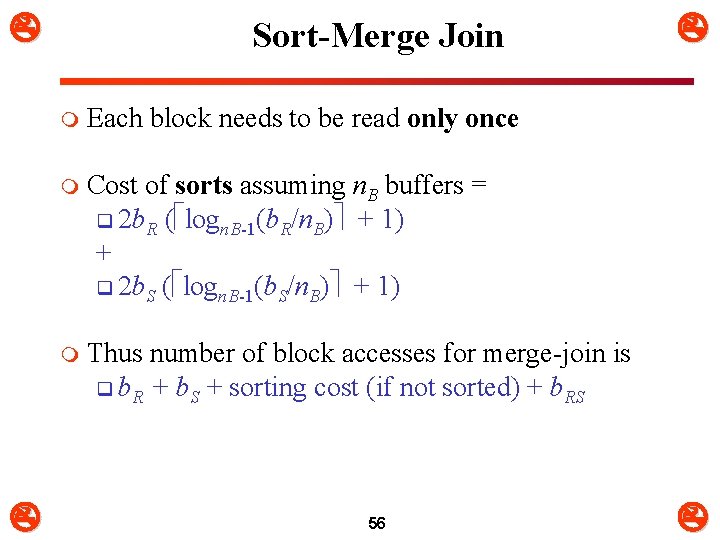

Sort-Merge Join

- Each block needs to be read only once.
- Cost of the sorts, assuming $n_B$ buffers:
  - $2 b_R (\lceil \log_{n_B - 1}(b_R/n_B) \rceil + 1) + 2 b_S (\lceil \log_{n_B - 1}(b_S/n_B) \rceil + 1)$
- Thus the number of block accesses for merge-join is
  - $b_R + b_S$ + sorting cost (if not sorted) + $b_{RS}$

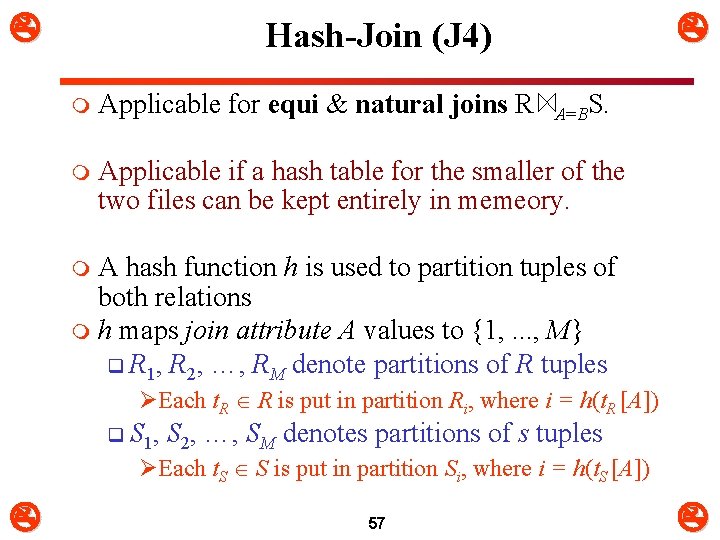

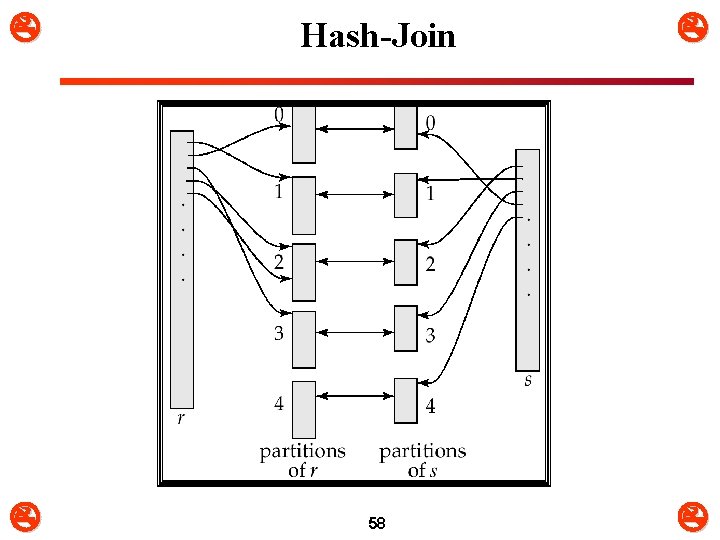

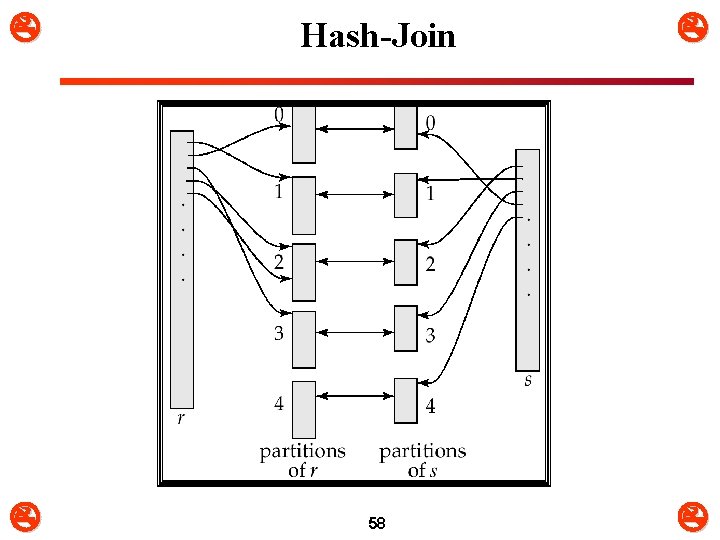

Hash-Join (J4)

- Applicable for equi-joins and natural joins $R \bowtie_{A=B} S$.
- Applicable if a hash table for the smaller of the two files can be kept entirely in memory.
- A hash function h is used to partition the tuples of both relations.
- h maps join attribute values to {1, ..., M}.
  - $R_1, R_2, \dots, R_M$ denote partitions of the R tuples.
    - Each $t_R \in R$ is put in partition $R_i$, where $i = h(t_R[A])$.
  - $S_1, S_2, \dots, S_M$ denote partitions of the S tuples.
    - Each $t_S \in S$ is put in partition $S_i$, where $i = h(t_S[B])$.

Hash-Join

Hash-Join

- R tuples in $R_i$ need only be compared with S tuples in $S_i$.
- They need not be compared with S tuples in any other partition, since:
  - an R tuple and an S tuple that satisfy the join condition will have the same value for the join attributes;
  - if that value is hashed to some value i, the R tuple has to be in $R_i$ and the S tuple in $S_i$.

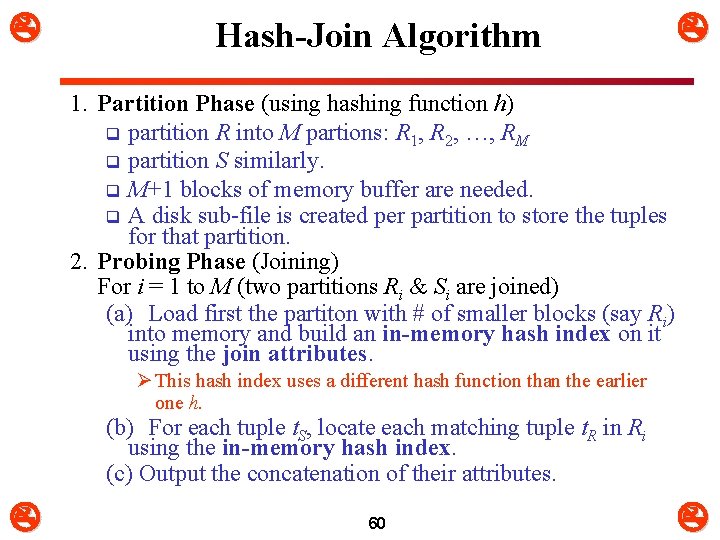

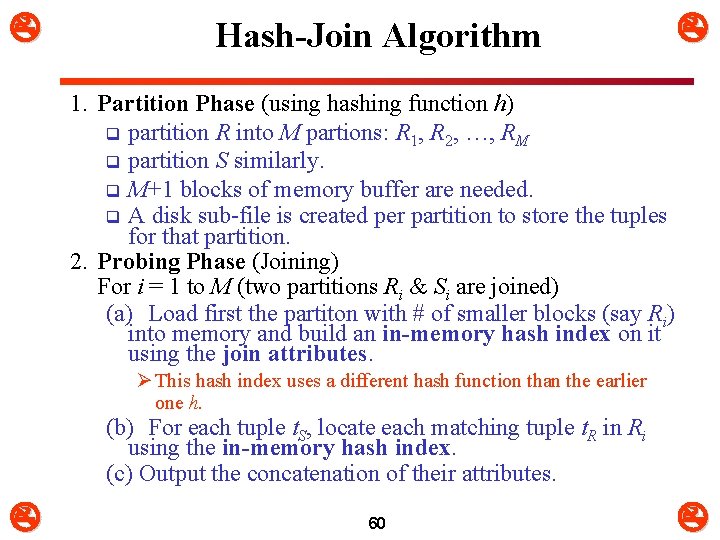

Hash-Join Algorithm

1. Partition phase (using hashing function h)
   - Partition R into M partitions: $R_1, R_2, \dots, R_M$.
   - Partition S similarly.
   - M + 1 blocks of memory buffer are needed.
   - A disk sub-file is created per partition to store the tuples for that partition.
2. Probing phase (joining)
   - For i = 1 to M (the two partitions $R_i$ and $S_i$ are joined):
     - (a) First load the partition with the smaller number of blocks (say $R_i$) into memory and build an in-memory hash index on it using the join attributes.
       - This hash index uses a different hash function than the earlier one, h.
     - (b) For each tuple $t_S$ in $S_i$, locate each matching tuple $t_R$ in $R_i$ using the in-memory hash index.
     - (c) Output the concatenation of their attributes.
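The two phases can be sketched in memory as follows. This is an illustration under stated assumptions: partitions are Python lists instead of disk sub-files, Python's built-in `hash` stands in for the partitioning function h, a `dict` stands in for the in-memory hash index, and R is assumed to be the smaller (build) relation. The function names and sample data are hypothetical.

```python
from collections import defaultdict

def partition(tuples, attr, m):
    """Partition phase: place each tuple in bucket h(value) with h = hash mod m."""
    parts = [[] for _ in range(m)]
    for t in tuples:
        parts[hash(t[attr]) % m].append(t)
    return parts

def hash_join(R, S, a, b, m=4):
    """Equi-join R and S on R.a = S.b, joining partition R_i only with S_i."""
    out = []
    for r_i, s_i in zip(partition(R, a, m), partition(S, b, m)):
        index = defaultdict(list)          # build: in-memory hash index on R_i
        for t_r in r_i:
            index[t_r[a]].append(t_r)
        for t_s in s_i:                    # probe with each tuple of S_i
            for t_r in index.get(t_s[b], ()):
                out.append({**t_r, **t_s})
    return out

customer = [{"cname": "Ann", "city": "X"}, {"cname": "Bob", "city": "Y"}]
depositor = [{"dname": "Ann", "acct": 1}, {"dname": "Ann", "acct": 2},
             {"dname": "Cy", "acct": 3}]
joined = hash_join(customer, depositor, "cname", "dname")
```

Because equal join values always hash to the same partition number, no match is lost by comparing $R_i$ only with $S_i$.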

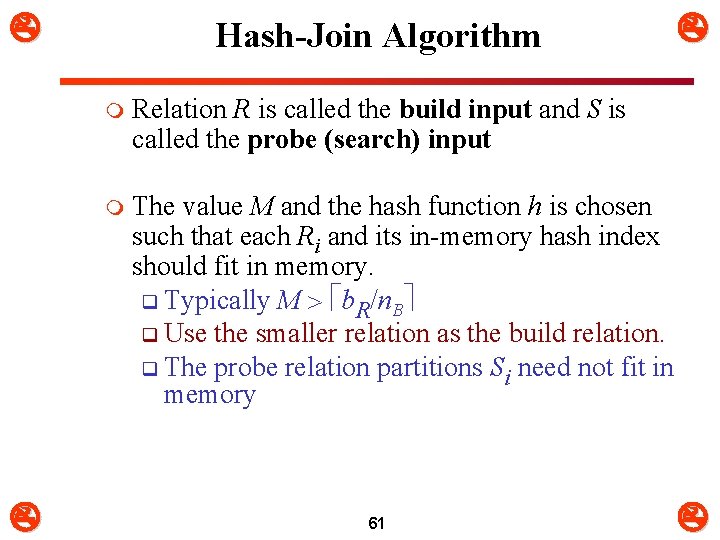

Hash-Join Algorithm

- Relation R is called the build input and S is called the probe (search) input.
- The value M and the hash function h are chosen such that each $R_i$ and its in-memory hash index fit in memory.
  - Typically $M \ge b_R/n_B$.
  - Use the smaller relation as the build relation.
  - The probe relation partitions $S_i$ need not fit in memory.

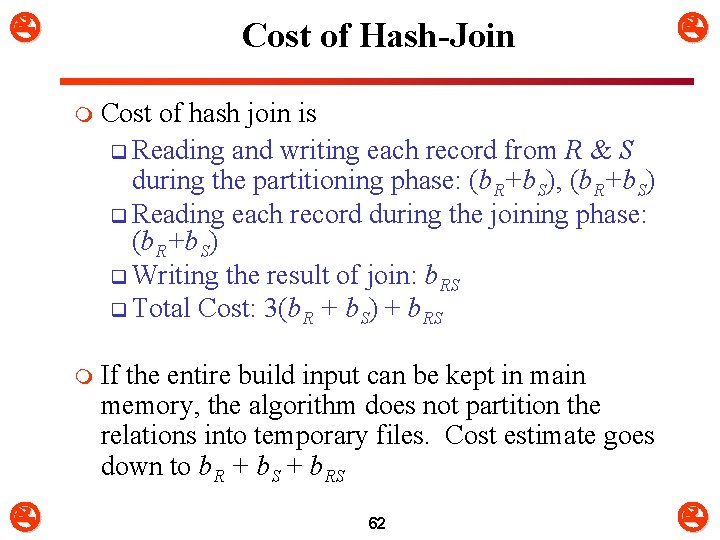

Cost of Hash-Join

- The cost of hash join is:
  - Reading and writing each record from R and S during the partitioning phase: $(b_R + b_S) + (b_R + b_S)$
  - Reading each record during the joining phase: $(b_R + b_S)$
  - Writing the result of the join: $b_{RS}$
  - Total cost: $3(b_R + b_S) + b_{RS}$
- If the entire build input can be kept in main memory, the algorithm does not partition the relations into temporary files. The cost estimate goes down to $b_R + b_S + b_{RS}$.

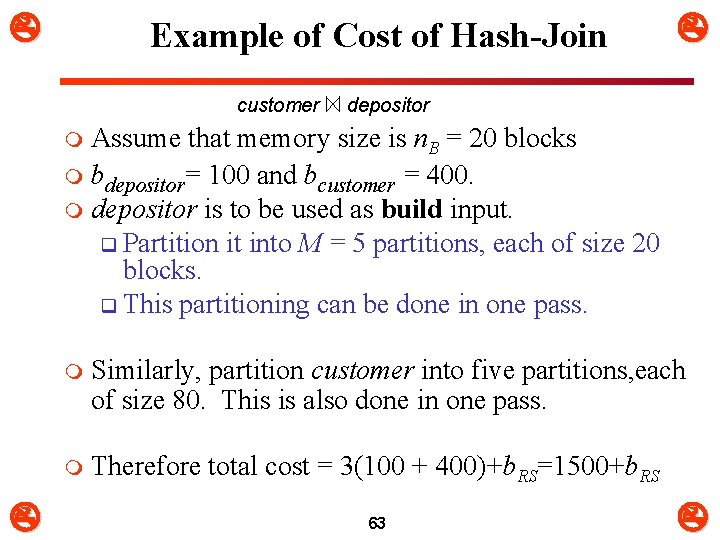

Example of Cost of Hash-Join

- $\text{customer} \bowtie \text{depositor}$
- Assume that the memory size is $n_B = 20$ blocks.
- $b_{depositor} = 100$ and $b_{customer} = 400$.
- depositor is to be used as the build input.
  - Partition it into M = 5 partitions, each of size 20 blocks.
  - This partitioning can be done in one pass.
- Similarly, partition customer into five partitions, each of size 80. This is also done in one pass.
- Therefore the total cost is $3(100 + 400) + b_{RS} = 1500 + b_{RS}$.

Partition Hash Join

- Partitioning phase:
  - Each file (R and S) is first partitioned into M partitions using a partitioning hash function on the join attributes:
    - $R_1, R_2, R_3, \dots, R_M$ and $S_1, S_2, S_3, \dots, S_M$
  - Minimum number of in-memory buffers needed for the partitioning phase: M + 1.
  - A disk sub-file is created per partition to store the tuples for that partition.
- Joining or probing phase:
  - Involves M iterations, one per partitioned file.
  - Iteration i involves joining partitions $R_i$ and $S_i$.

Partition Hash Join

- Partitioned hash join procedure:
  - Assume $R_i$ is smaller than $S_i$.
  1. Copy the records from $R_i$ into memory buffers.
  2. Read all blocks from $S_i$, one at a time; each record from $S_i$ is used to probe for matching record(s) from partition $R_i$.
  3. Write each matching record from $R_i$, after joining it to the record from $S_i$, into the result file.

Partition Hash Join

- Cost analysis of partition hash join:
  1. Reading and writing each record from R and S during the partitioning phase: $(b_R + b_S) + (b_R + b_S)$
  2. Reading each record during the joining phase: $(b_R + b_S)$
  3. Writing the result of the join: $b_{RES}$
- Total cost:
  - $3(b_R + b_S) + b_{RES}$

Hybrid Hash–Join

- Hybrid hash join:
  - Same as partitioned hash join, except that the joining phase of one of the partitions is included in the partitioning phase.
- Partitioning phase:
  - Allocate buffers for the smaller relation: one block for each of M − 1 partitions, and the remaining blocks to partition 1.
  - Repeat for the larger relation in the pass through S.
- Joining phase:
  - M − 1 iterations are needed for the partitions $R_2, R_3, R_4, \dots, R_M$ and $S_2, S_3, S_4, \dots, S_M$.
  - $R_1$ and $S_1$ are joined during the partitioning of S, and the results of joining $R_1$ and $S_1$ have already been written to disk by the end of the partitioning phase.

Hybrid Hash–Join

- E.g., with a memory size of 25 blocks, depositor can be partitioned into five partitions, each of size 20 blocks.
- Division of memory:
  - The first partition of R ($R_1$) can be kept in memory. (It occupies 20 blocks of memory.)
  - 1 block is used for input, and 1 block each for buffering the other 4 partitions.
- customer is similarly partitioned into five partitions, each of size 80; the first partition is used right away for probing, instead of being written out and read back.

Hybrid Hash–Join

- Cost of hybrid hash join:
  - $3(100 - 20 + 400 - 80) + 20 + 80 + b_{RS} = 1300 + b_{RS}$
  - instead of $1500 + b_{RS}$ with plain hash-join
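The two cost estimates can be compared in code. The function names are hypothetical, the $b_{RS}$ term is omitted from both, and `b_r1` and `b_s1` denote the sizes of the first partitions, which are read once and never written out.

```python
def hash_join_cost(b_r, b_s):
    """Plain partitioned hash join: read + write while partitioning, read while joining."""
    return 3 * (b_r + b_s)

def hybrid_hash_join_cost(b_r, b_s, b_r1, b_s1):
    """Hybrid: the first partitions (b_r1, b_s1 blocks) are only read once."""
    return 3 * ((b_r - b_r1) + (b_s - b_s1)) + b_r1 + b_s1

plain = hash_join_cost(100, 400)                    # 1500
hybrid = hybrid_hash_join_cost(100, 400, 20, 80)    # 3 * 400 + 100 = 1300
```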

Complex Joins

- Join with a conjunctive condition: $R \bowtie_{\theta_1 \wedge \theta_2 \wedge \dots \wedge \theta_n} S$
  - Either use nested-loop/block nested-loop joins, or
  - compute the result of one of the simpler joins $R \bowtie_{\theta_i} S$;
  - the final result comprises those tuples in the intermediate result that satisfy the remaining conditions
    - $\theta_1 \wedge \dots \wedge \theta_{i-1} \wedge \theta_{i+1} \wedge \dots \wedge \theta_n$

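Complex Joins

- Join with a disjunctive condition: $R \bowtie_{\theta_1 \vee \theta_2 \vee \dots \vee \theta_n} S$
  - Compute as the union of the records in the individual joins $R \bowtie_{\theta_i} S$:
    - $(R \bowtie_{\theta_1} S) \cup (R \bowtie_{\theta_2} S) \cup \dots \cup (R \bowtie_{\theta_n} S)$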

Duplicate Elimination

- Duplicate elimination can be implemented via hashing or sorting.
  - On sorting, duplicates will be adjacent to each other, and all but one copy in each set of duplicates can be deleted.
  - Optimization: duplicates can be deleted during run generation as well as at intermediate merge steps in external sort-merge.
  - The cost is the same as the cost of sorting.
  - Hashing is similar: duplicates will fall into the same bucket.

PROJECT

- Algorithm for PROJECT operations (Figure 15.3b): $\pi_{\text{<attribute list>}}(R)$
  1. If <attribute list> includes a key of relation R, extract all tuples from R with only the values for the attributes in <attribute list>.
  2. If <attribute list> does NOT include a key of relation R, duplicate tuples must be removed from the result.
- Methods to remove duplicate tuples:
  1. Sorting
  2. Hashing

Cartesian Product

- The CARTESIAN PRODUCT of relations R and S includes all possible combinations of records from R and S. The attributes of the result include all attributes of R and S.
- Cost analysis of CARTESIAN PRODUCT:
  - If R has n records and j attributes and S has m records and k attributes, the result relation will have n*m records and j+k attributes.
- The CARTESIAN PRODUCT operation is very expensive and should be avoided if possible.

Set Operations

- $R \cup S$ (see Figure 15.3c):
  1. Sort the two relations on the same attributes.
  2. Scan and merge both sorted files concurrently; whenever the same tuple exists in both relations, only one copy is kept in the merged result.
- $R \cap S$ (see Figure 15.3d):
  1. Sort the two relations on the same attributes.
  2. Scan and merge both sorted files concurrently; keep in the merged result only those tuples that appear in both relations.
- $R - S$ (see Figure 15.3e):
  - Keep in the merged result only those tuples that appear in relation R but not in relation S.

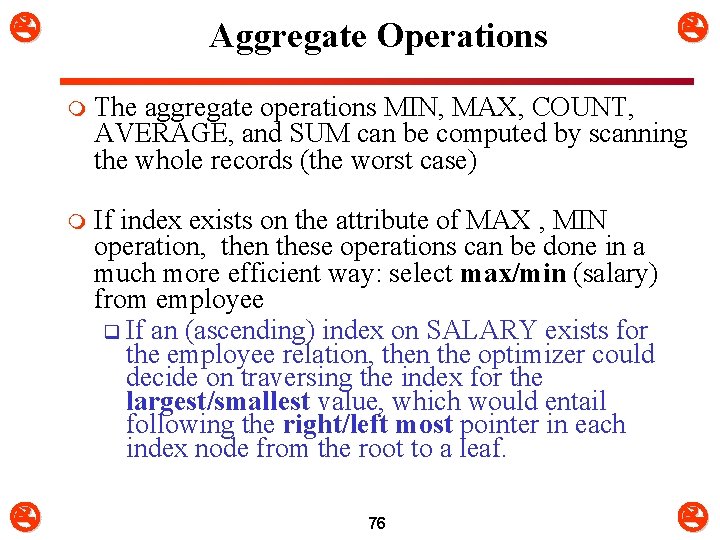

Aggregate Operations

- The aggregate operations MIN, MAX, COUNT, AVERAGE, and SUM can be computed by scanning all the records (the worst case).
- If an index exists on the attribute of a MAX or MIN operation, the operation can be done much more efficiently:
  - SELECT MAX (SALARY) FROM EMPLOYEE (or MIN)
  - If an (ascending) index on SALARY exists for the EMPLOYEE relation, the optimizer can decide to traverse the index for the largest/smallest value, which entails following the rightmost/leftmost pointer in each index node from the root to a leaf.

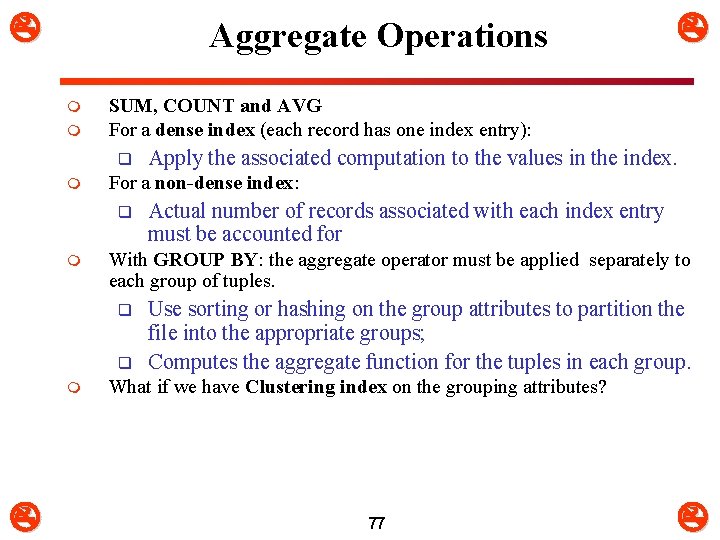

Aggregate Operations

- SUM, COUNT and AVG
  - For a dense index (each record has one index entry):
    - Apply the associated computation to the values in the index.
  - For a non-dense index:
    - The actual number of records associated with each index entry must be accounted for.
- With GROUP BY: the aggregate operator must be applied separately to each group of tuples.
  - Use sorting or hashing on the grouping attributes to partition the file into the appropriate groups;
  - compute the aggregate function for the tuples in each group.
- What if we have a clustering index on the grouping attributes?

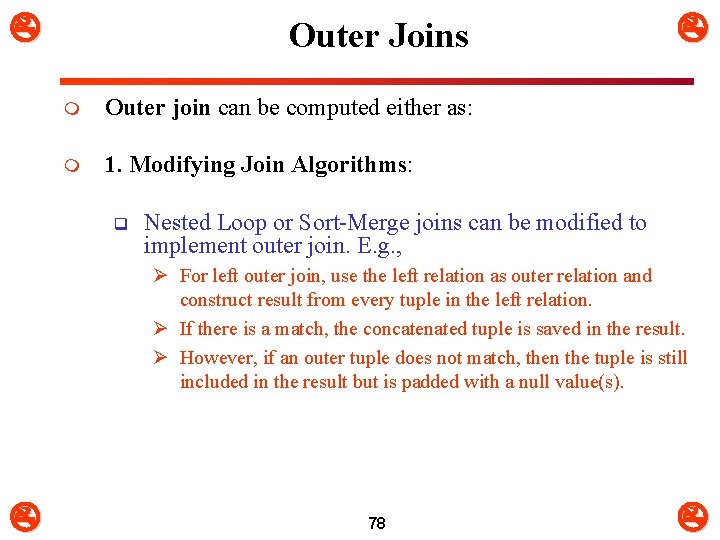

Outer Joins

- An outer join can be computed in either of two ways.
- 1. Modifying join algorithms:
  - Nested-loop or sort-merge joins can be modified to implement the outer join. E.g.,
    - For a left outer join, use the left relation as the outer relation and construct the result from every tuple in the left relation.
    - If there is a match, the concatenated tuple is saved in the result.
    - However, if an outer tuple does not match, the tuple is still included in the result but is padded with null value(s).
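The modified nested-loop join can be sketched as below. Relations are in-memory lists of dictionaries, `None` plays the role of null, and the function name, the `right_attrs` parameter and the sample data are assumptions made for the illustration.

```python
def left_outer_join(left, right, cond, right_attrs):
    """Nested-loop left outer join: unmatched left tuples are padded with None."""
    out = []
    for t_l in left:                      # left relation is the outer loop
        matched = False
        for t_r in right:
            if cond(t_l, t_r):
                out.append({**t_l, **t_r})
                matched = True
        if not matched:
            out.append({**t_l, **{attr: None for attr in right_attrs}})
    return out

employee = [{"FNAME": "John", "DNO": 5}, {"FNAME": "Mary", "DNO": 9}]
department = [{"DNUMBER": 5, "DNAME": "Research"}]
result = left_outer_join(employee, department,
                         lambda e, d: e["DNO"] == d["DNUMBER"],
                         right_attrs=["DNUMBER", "DNAME"])
```

Mary has no matching department, so her tuple appears once with `DNUMBER` and `DNAME` set to `None`.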

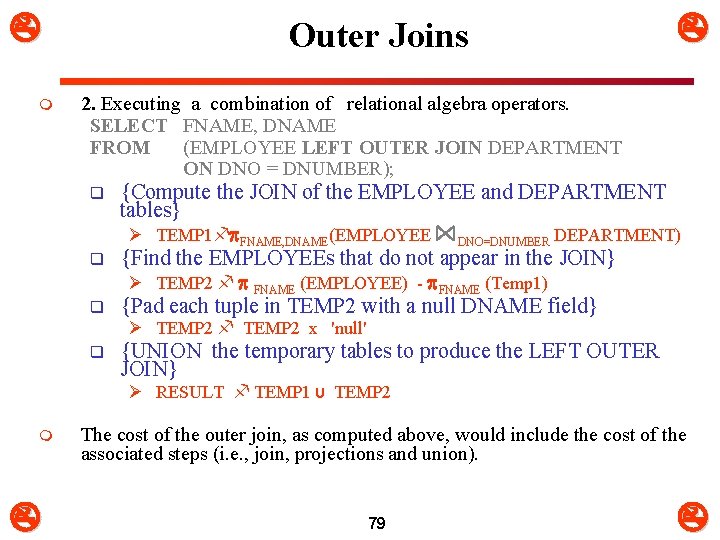

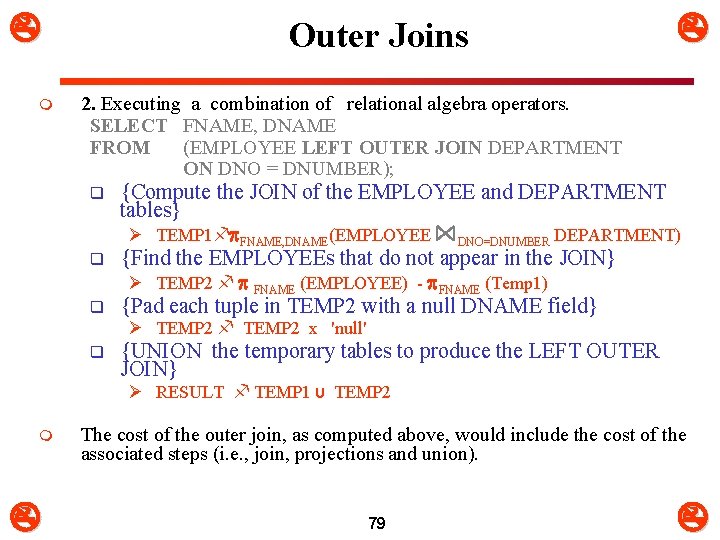

Outer Joins

- 2. Executing a combination of relational algebra operators:
  - SELECT FNAME, DNAME FROM (EMPLOYEE LEFT OUTER JOIN DEPARTMENT ON DNO = DNUMBER);
  - {Compute the JOIN of the EMPLOYEE and DEPARTMENT tables}
    - $\text{TEMP1} \leftarrow \pi_{\text{FNAME, DNAME}}(\text{EMPLOYEE} \bowtie_{\text{DNO}=\text{DNUMBER}} \text{DEPARTMENT})$
  - {Find the EMPLOYEEs that do not appear in the JOIN}
    - $\text{TEMP2} \leftarrow \pi_{\text{FNAME}}(\text{EMPLOYEE}) - \pi_{\text{FNAME}}(\text{TEMP1})$
  - {Pad each tuple in TEMP2 with a null DNAME field}
    - $\text{TEMP2} \leftarrow \text{TEMP2} \times \text{'null'}$
  - {UNION the temporary tables to produce the LEFT OUTER JOIN}
    - $\text{RESULT} \leftarrow \text{TEMP1} \cup \text{TEMP2}$
- The cost of the outer join, as computed above, would include the cost of the associated steps (i.e., join, projections and union).

6. Combining Operations using Pipelining

- Motivation
  - A query is mapped into a sequence of operations.
  - Each execution of an operation produces a temporary result (materialization).
  - Generating and saving temporary files on disk is time consuming.
- Alternative:
  - Avoid constructing temporary results as much as possible.
  - Pipeline the data through multiple operations:
    - pass the result of a previous operator to the next without waiting for the previous operation to complete.
  - Also known as stream-based processing.

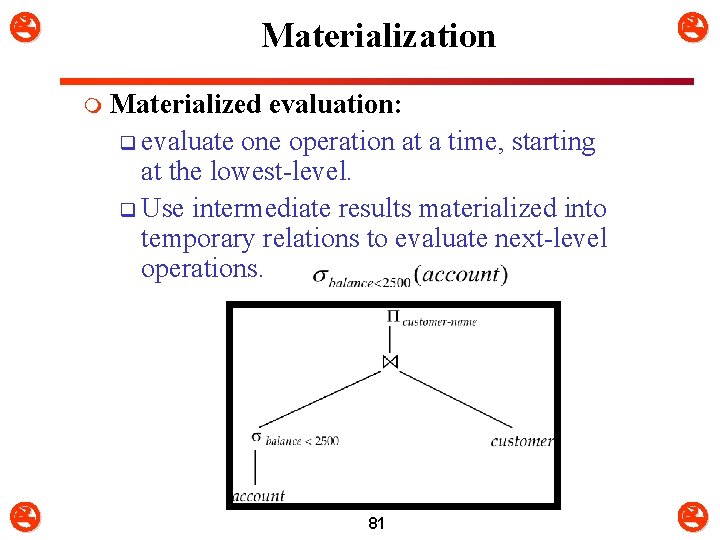

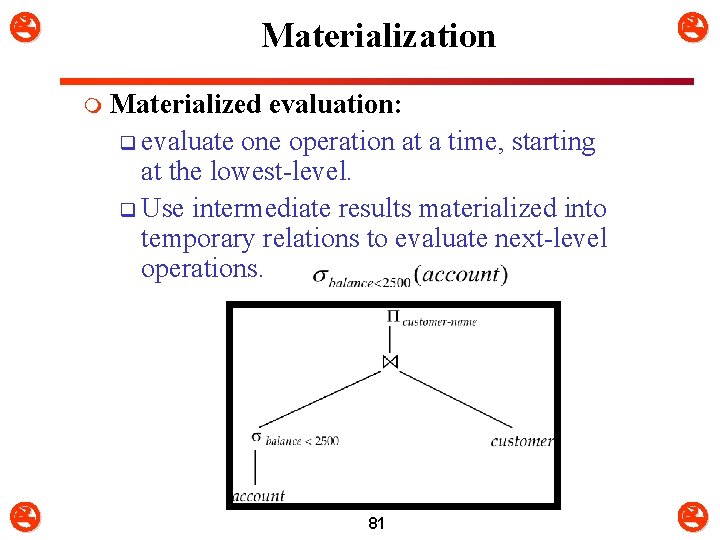

Materialization

- Materialized evaluation:
  - Evaluate one operation at a time, starting at the lowest level.
  - Use intermediate results materialized into temporary relations to evaluate next-level operations.

Pipelining

- Pipelined evaluation:
  - Evaluate several operations simultaneously, passing the results of one operation on to the next.
  - E.g., in the previous expression tree, don't store the result of the lower-level operation; instead, pass tuples directly to the join.
  - Similarly, don't store the result of the join; pass tuples directly to the projection.
- Much cheaper than materialization: no need to store a temporary relation to disk.
- Pipelining may not always be possible, e.g., for sort or hash-join.
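Pipelined evaluation can be sketched with Python generators, where each operator pulls one tuple at a time from its input and no intermediate relation is ever stored. The operator names `scan`, `select` and `project` and the account rows are assumptions for this illustration.

```python
def scan(relation):
    """Leaf operator: produce the tuples of a stored relation one by one."""
    for t in relation:
        yield t


def select(tuples, predicate):
    """Pass on only the tuples that satisfy the predicate."""
    for t in tuples:
        if predicate(t):
            yield t


def project(tuples, attrs):
    """Keep only the listed attributes of each incoming tuple."""
    for t in tuples:
        yield {a: t[a] for a in attrs}


# Hypothetical sample data.
account = [
    {"account_no": "A-101", "balance": 500},
    {"account_no": "A-215", "balance": 3000},
    {"account_no": "A-102", "balance": 2400},
]

# Nothing is computed until the pipeline is consumed; each tuple flows
# through select and project before the next tuple is read.
pipeline = project(select(scan(account), lambda t: t["balance"] < 2500), ["balance"])
balances = list(pipeline)
```

A sort operator would not fit this pattern, because it must see all of its input before it can emit its first output tuple.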

7. Using Heuristics in Query Optimization (1)

- Process for heuristic optimization:
  1. The parser of a high-level query generates an initial internal representation.
  2. Heuristic rules are applied to optimize the internal representation.
  3. A query execution plan is generated to execute groups of operations based on the access paths available on the files involved in the query.
- The main heuristic is to apply first the operations that reduce the size of intermediate results.
  - E.g., apply SELECT and PROJECT operations before applying the JOIN or other binary operations.

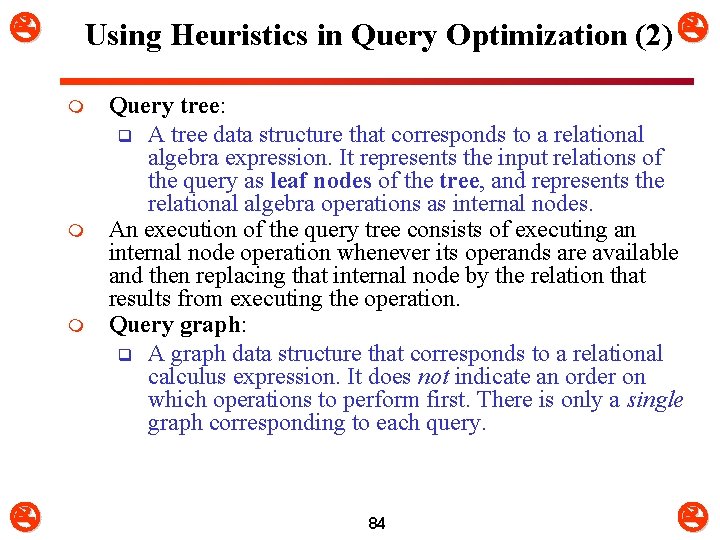

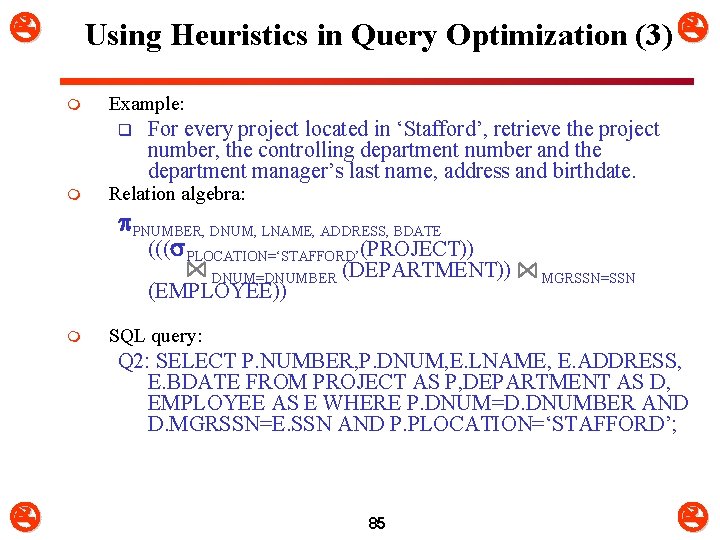

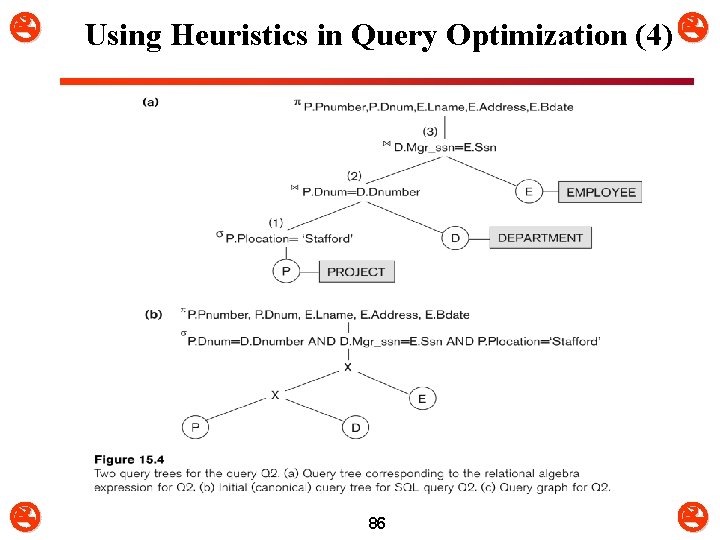

Using Heuristics in Query Optimization (2)

- Query tree:
  - A tree data structure that corresponds to a relational algebra expression. It represents the input relations of the query as leaf nodes of the tree, and the relational algebra operations as internal nodes.
  - An execution of the query tree consists of executing an internal node operation whenever its operands are available and then replacing that internal node by the relation that results from executing the operation.
- Query graph:
  - A graph data structure that corresponds to a relational calculus expression. It does not indicate the order in which to perform operations. There is only a single graph corresponding to each query.
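A query tree and its bottom-up execution can be sketched as below. The `Node` class, the operator names and the sample tuples are assumptions for illustration; real systems use far richer plan nodes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Node:
    op: str                  # "relation", "select", "project" or "join"
    children: List["Node"]   # operand subtrees (empty for a leaf)
    arg: object = None       # stored rows, predicate, or attribute list


def execute(node):
    """Execute the operands first, then replace the node by its result."""
    inputs = [execute(child) for child in node.children]
    if node.op == "relation":
        return node.arg
    if node.op == "select":
        return [t for t in inputs[0] if node.arg(t)]
    if node.op == "project":
        return [{a: t[a] for a in node.arg} for t in inputs[0]]
    if node.op == "join":
        return [{**l, **r} for l in inputs[0] for r in inputs[1] if node.arg(l, r)]
    raise ValueError(f"unknown operator: {node.op}")


# Hypothetical sample data.
project_rows = [
    {"PNUMBER": 10, "PLOCATION": "Stafford", "DNUM": 4},
    {"PNUMBER": 1, "PLOCATION": "Bellaire", "DNUM": 5},
]
department_rows = [
    {"DNUMBER": 4, "MGRSSN": "987654321"},
    {"DNUMBER": 5, "MGRSSN": "333445555"},
]

tree = Node("project", [
    Node("join", [
        Node("select", [Node("relation", [], project_rows)],
             lambda t: t["PLOCATION"] == "Stafford"),
        Node("relation", [], department_rows),
    ], lambda p, d: p["DNUM"] == d["DNUMBER"]),
], ["PNUMBER", "DNUM", "MGRSSN"])

result = execute(tree)
```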

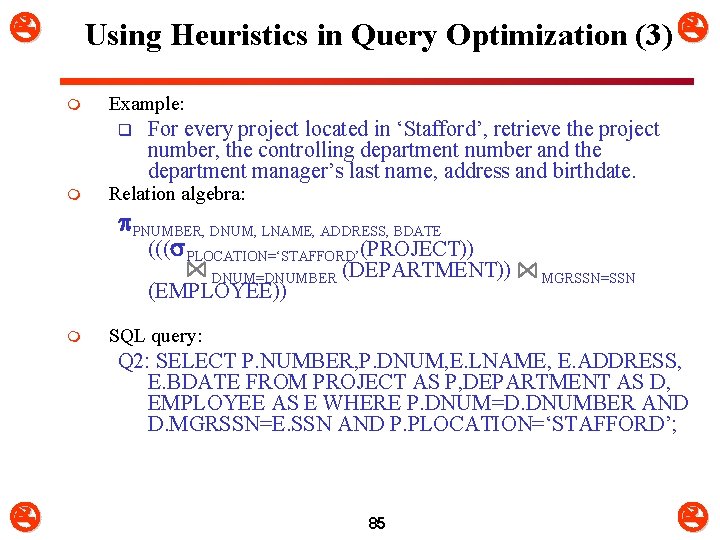

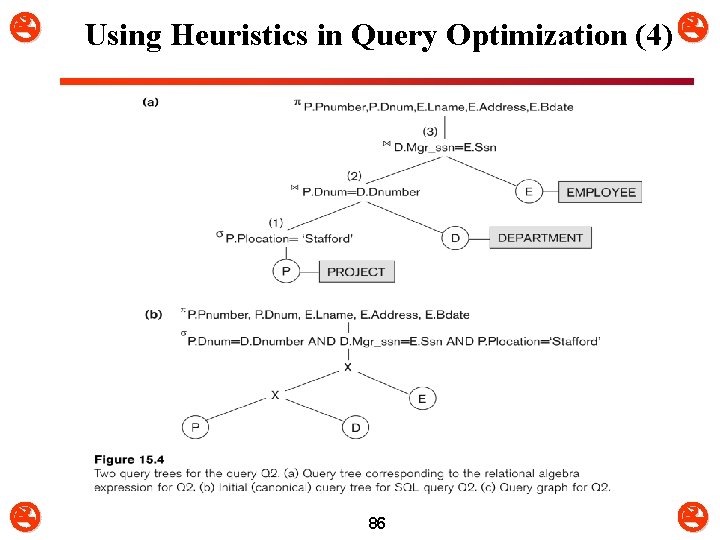

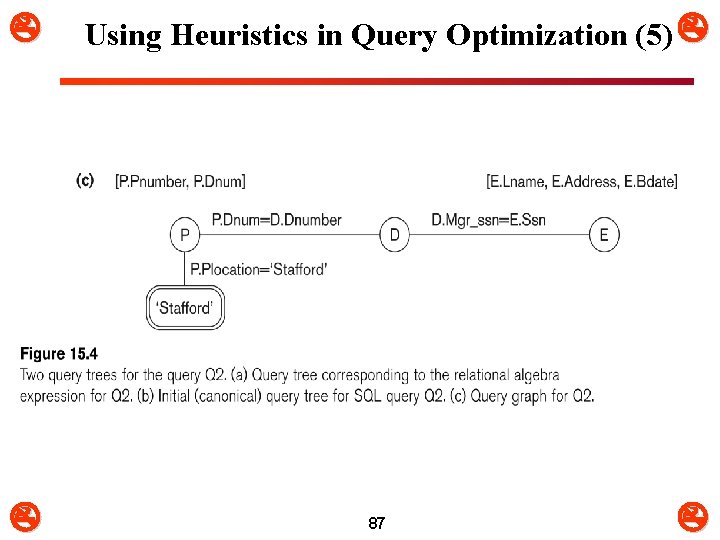

Using Heuristics in Query Optimization (3)

- Example: For every project located in 'Stafford', retrieve the project number, the controlling department number, and the department manager's last name, address and birthdate.
- Relational algebra:
  $\pi_{\text{PNUMBER}, \text{DNUM}, \text{LNAME}, \text{ADDRESS}, \text{BDATE}}(((\sigma_{\text{PLOCATION}=\text{'STAFFORD'}}(\text{PROJECT})) \bowtie_{\text{DNUM}=\text{DNUMBER}} (\text{DEPARTMENT})) \bowtie_{\text{MGRSSN}=\text{SSN}} (\text{EMPLOYEE}))$
- SQL query:
  Q2: SELECT P.PNUMBER, P.DNUM, E.LNAME, E.ADDRESS, E.BDATE FROM PROJECT AS P, DEPARTMENT AS D, EMPLOYEE AS E WHERE P.DNUM=D.DNUMBER AND D.MGRSSN=E.SSN AND P.PLOCATION='STAFFORD';

Using Heuristics in Query Optimization (4)

Using Heuristics in Query Optimization (5)

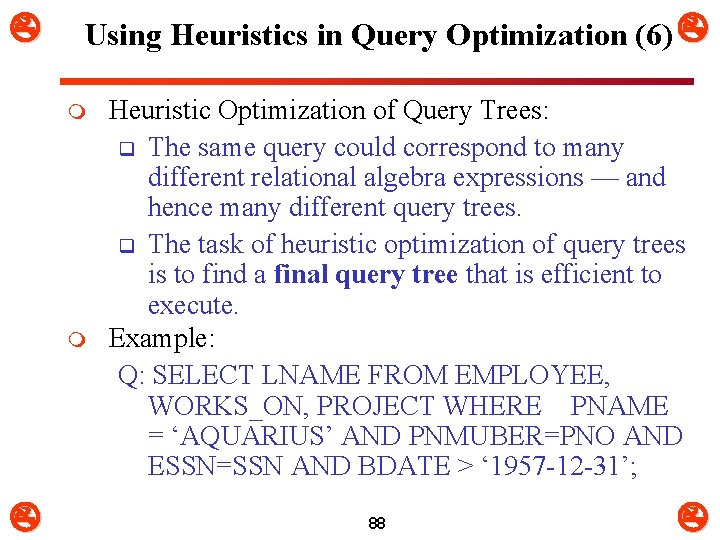

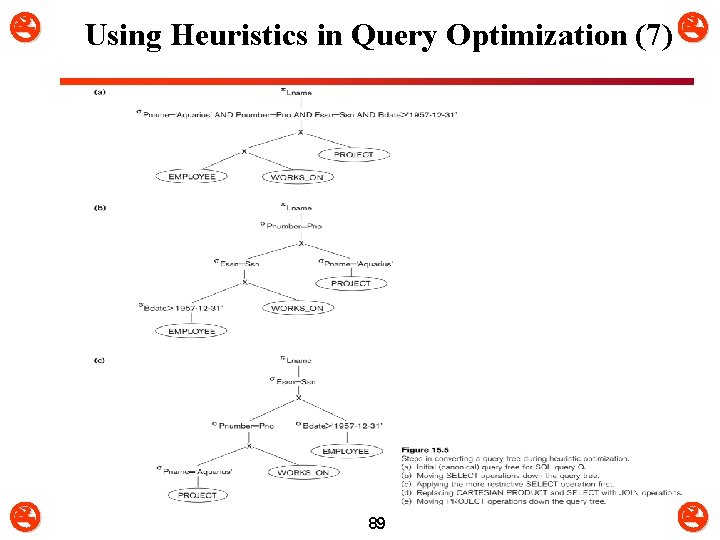

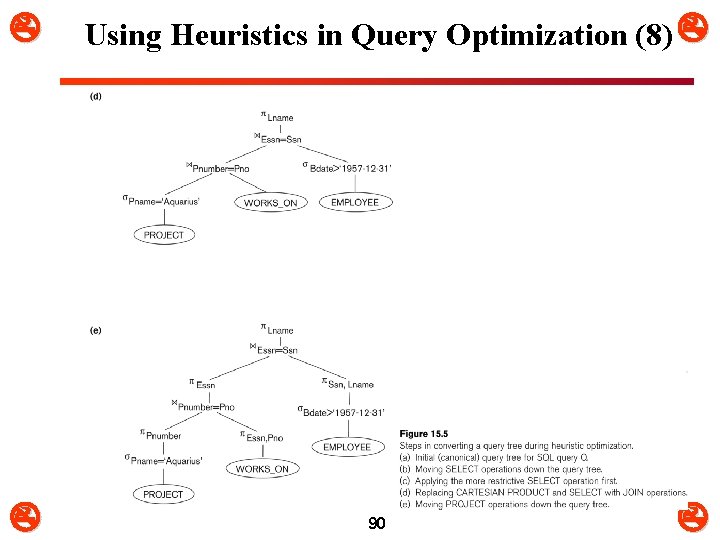

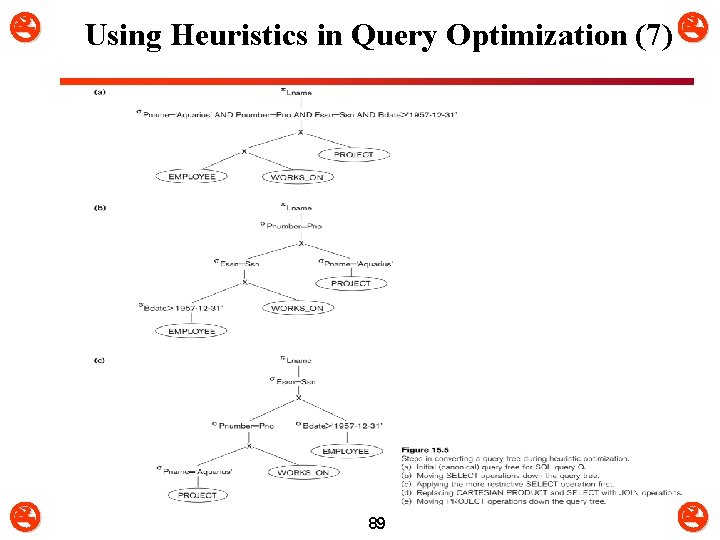

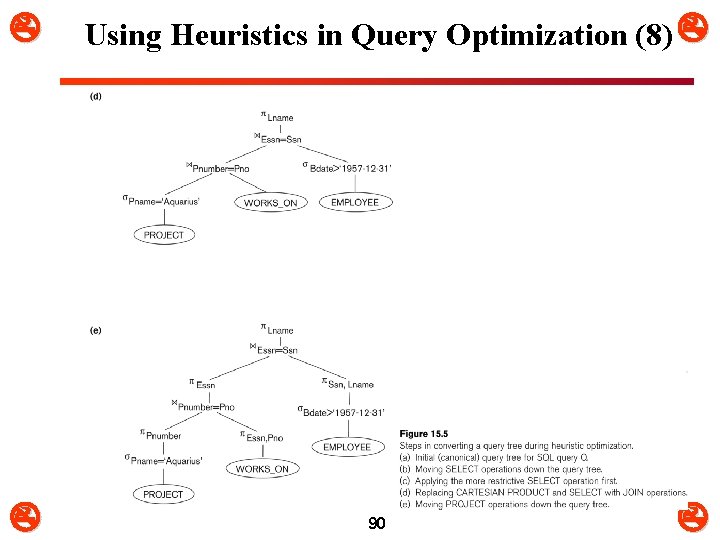

Using Heuristics in Query Optimization (6)

- Heuristic optimization of query trees:
  - The same query could correspond to many different relational algebra expressions, and hence many different query trees.
  - The task of heuristic optimization of query trees is to find a final query tree that is efficient to execute.
- Example:
  Q: SELECT LNAME FROM EMPLOYEE, WORKS_ON, PROJECT WHERE PNAME = 'AQUARIUS' AND PNUMBER=PNO AND ESSN=SSN AND BDATE > '1957-12-31';

Using Heuristics in Query Optimization (7)

Using Heuristics in Query Optimization (8)

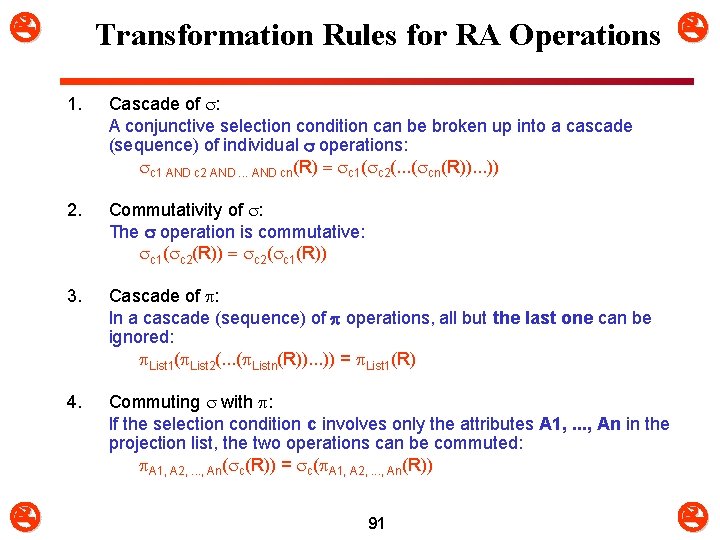

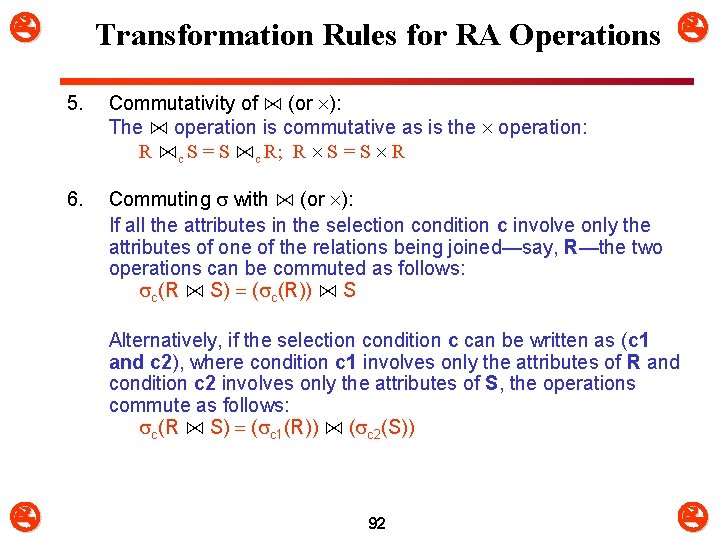

Transformation Rules for RA Operations

1. Cascade of $\sigma$: A conjunctive selection condition can be broken up into a cascade (sequence) of individual $\sigma$ operations:
   $\sigma_{c_1 \text{ AND } c_2 \text{ AND } \ldots \text{ AND } c_n}(R) \equiv \sigma_{c_1}(\sigma_{c_2}(\ldots(\sigma_{c_n}(R))\ldots))$
2. Commutativity of $\sigma$: The $\sigma$ operation is commutative:
   $\sigma_{c_1}(\sigma_{c_2}(R)) \equiv \sigma_{c_2}(\sigma_{c_1}(R))$
3. Cascade of $\pi$: In a cascade (sequence) of $\pi$ operations, all but the last one can be ignored:
   $\pi_{List_1}(\pi_{List_2}(\ldots(\pi_{List_n}(R))\ldots)) \equiv \pi_{List_1}(R)$
4. Commuting $\sigma$ with $\pi$: If the selection condition $c$ involves only the attributes $A_1, \ldots, A_n$ in the projection list, the two operations can be commuted:
   $\pi_{A_1, A_2, \ldots, A_n}(\sigma_c(R)) \equiv \sigma_c(\pi_{A_1, A_2, \ldots, A_n}(R))$

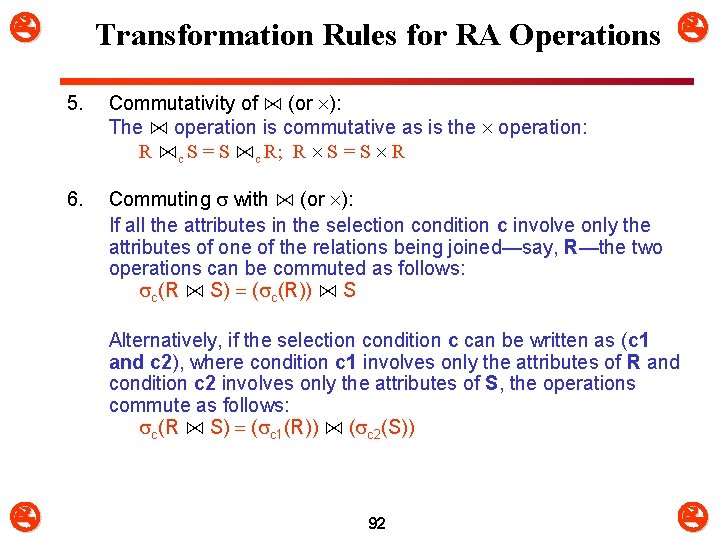

Transformation Rules for RA Operations

5. Commutativity of $\bowtie$ (or $\times$): The $\bowtie$ operation is commutative, as is the $\times$ operation:
   $R \bowtie_c S \equiv S \bowtie_c R$; $R \times S \equiv S \times R$
6. Commuting $\sigma$ with $\bowtie$ (or $\times$): If all the attributes in the selection condition $c$ involve only the attributes of one of the relations being joined, say $R$, the two operations can be commuted as follows:
   $\sigma_c(R \bowtie S) \equiv (\sigma_c(R)) \bowtie S$
   Alternatively, if the selection condition $c$ can be written as ($c_1$ AND $c_2$), where condition $c_1$ involves only the attributes of $R$ and condition $c_2$ involves only the attributes of $S$, the operations commute as follows:
   $\sigma_c(R \bowtie S) \equiv (\sigma_{c_1}(R)) \bowtie (\sigma_{c_2}(S))$
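Rule 6 can be checked on a small example. The sketch below evaluates a selection after the join and the same selection pushed below the join, and compares the results; the relations `R` and `S`, their attribute names and the helper functions are assumptions made for this illustration.

```python
def join(r, s, cond):
    """Theta join of two lists of tuples (dictionaries)."""
    return [{**a, **b} for a in r for b in s if cond(a, b)]


def select(r, cond):
    """Selection: keep the tuples that satisfy cond."""
    return [t for t in r if cond(t)]


# Hypothetical relations: R(A, X) and S(B, Y).
R = [{"A": i, "X": i % 3} for i in range(6)]
S = [{"B": i, "Y": i * 10} for i in range(6)]


def join_cond(a, b):
    return a["A"] == b["B"]


def c(t):
    # The selection condition involves only attributes of R.
    return t["X"] == 0


late = select(join(R, S, join_cond), c)    # sigma_c(R join S)
early = join(select(R, c), S, join_cond)   # (sigma_c(R)) join S

# Tuples of R that take part in the join in each plan.
r_tuples_late = len(R)
r_tuples_early = len(select(R, c))
```

Both plans return the same tuples, but the second one feeds only the selected part of `R` into the join, which is why the heuristic moves selections down the tree.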

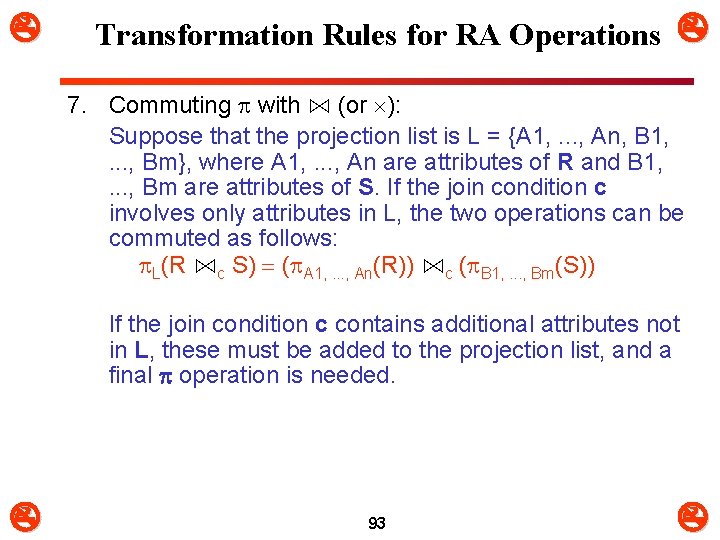

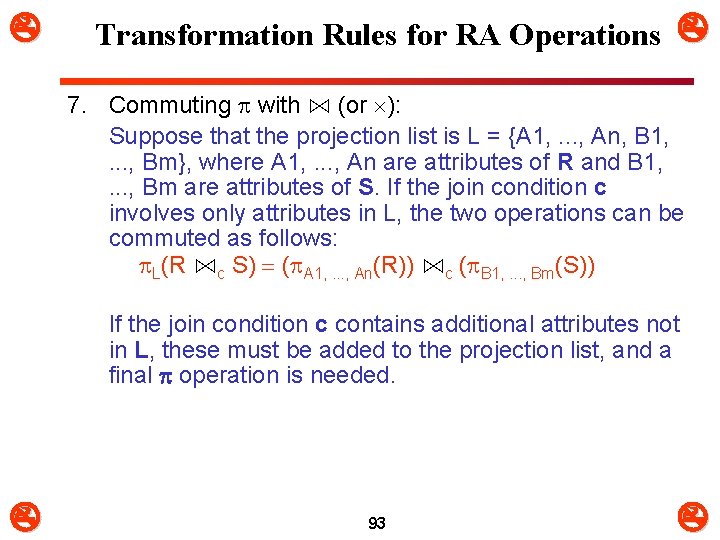

Transformation Rules for RA Operations

7. Commuting $\pi$ with $\bowtie$ (or $\times$): Suppose that the projection list is $L = \{A_1, \ldots, A_n, B_1, \ldots, B_m\}$, where $A_1, \ldots, A_n$ are attributes of $R$ and $B_1, \ldots, B_m$ are attributes of $S$. If the join condition $c$ involves only attributes in $L$, the two operations can be commuted as follows:
   $\pi_L(R \bowtie_c S) \equiv (\pi_{A_1, \ldots, A_n}(R)) \bowtie_c (\pi_{B_1, \ldots, B_m}(S))$
   If the join condition $c$ contains additional attributes not in $L$, these must be added to the projection list, and a final $\pi$ operation is needed.

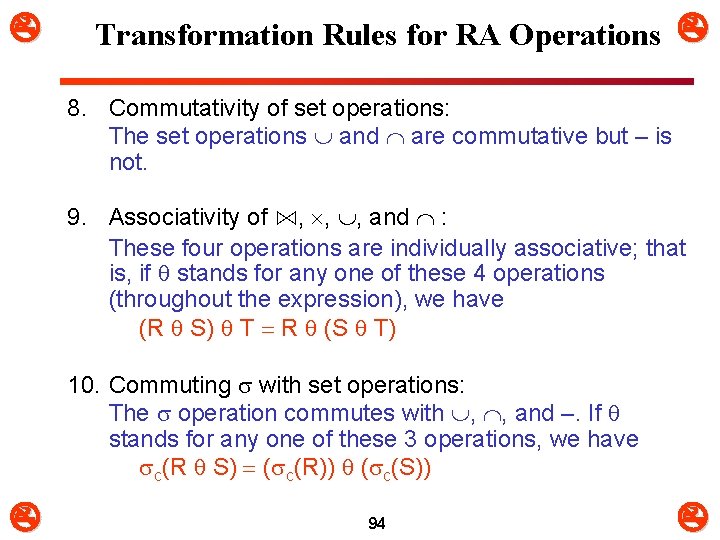

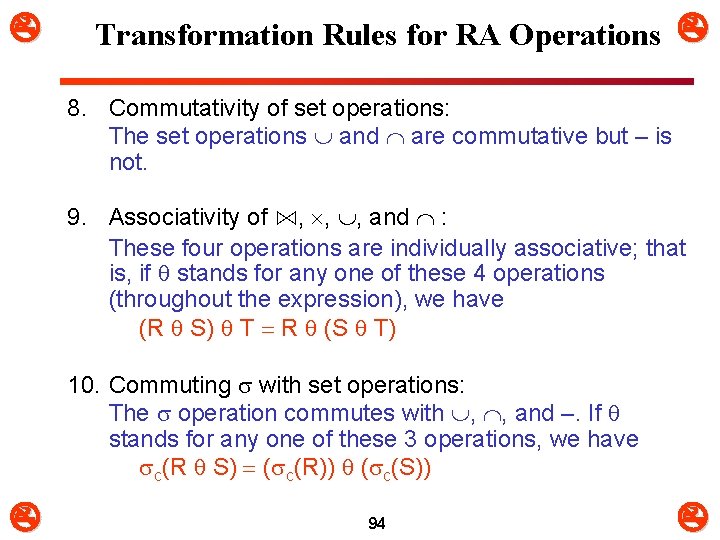

Transformation Rules for RA Operations

8. Commutativity of set operations: The set operations $\cup$ and $\cap$ are commutative, but $-$ is not.
9. Associativity of $\bowtie$, $\times$, $\cup$, and $\cap$: These four operations are individually associative; that is, if $\theta$ stands for any one of these four operations (throughout the expression), we have
   $(R \;\theta\; S) \;\theta\; T \equiv R \;\theta\; (S \;\theta\; T)$
10. Commuting $\sigma$ with set operations: The $\sigma$ operation commutes with $\cup$, $\cap$, and $-$. If $\theta$ stands for any one of these three operations, we have
    $\sigma_c(R \;\theta\; S) \equiv (\sigma_c(R)) \;\theta\; (\sigma_c(S))$

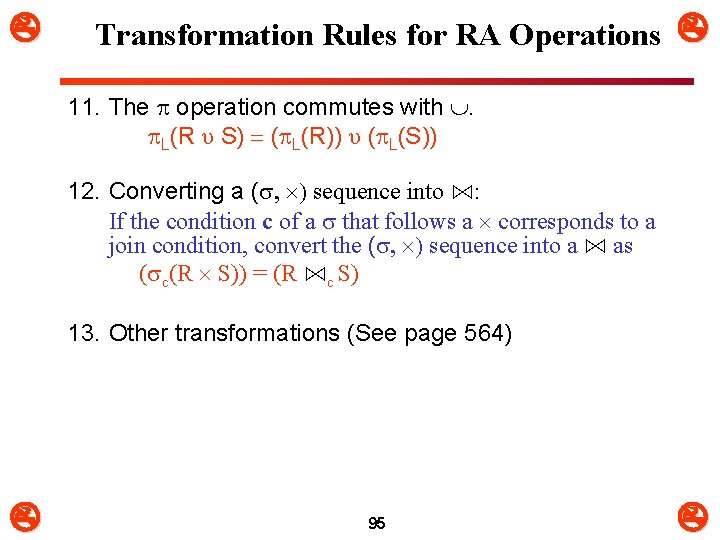

Transformation Rules for RA Operations

11. The $\pi$ operation commutes with $\cup$:
    $\pi_L(R \cup S) \equiv (\pi_L(R)) \cup (\pi_L(S))$
12. Converting a ($\sigma$, $\times$) sequence into $\bowtie$: If the condition $c$ of a $\sigma$ that follows a $\times$ corresponds to a join condition, convert the ($\sigma$, $\times$) sequence into a $\bowtie$:
    $\sigma_c(R \times S) \equiv R \bowtie_c S$
13. Other transformations (see page 564).

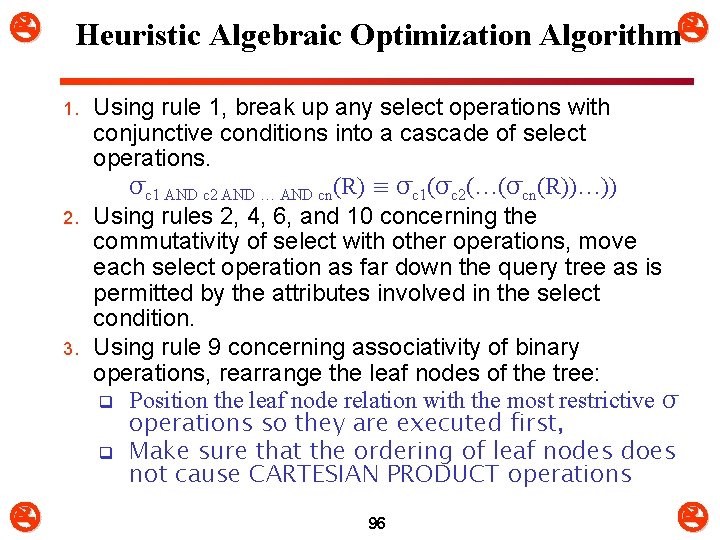

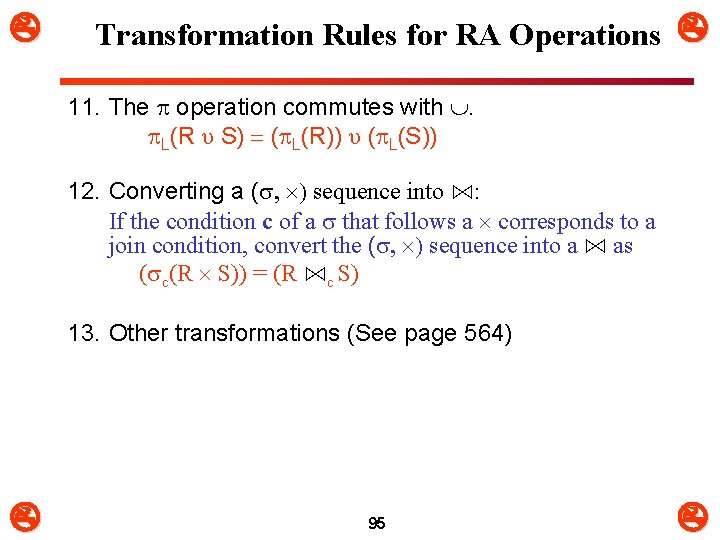

Heuristic Algebraic Optimization Algorithm

1. Using rule 1, break up any select operations with conjunctive conditions into a cascade of select operations:
   $\sigma_{c_1 \text{ AND } c_2 \text{ AND } \ldots \text{ AND } c_n}(R) \equiv \sigma_{c_1}(\sigma_{c_2}(\ldots(\sigma_{c_n}(R))\ldots))$
2. Using rules 2, 4, 6, and 10 concerning the commutativity of select with other operations, move each select operation as far down the query tree as is permitted by the attributes involved in the select condition.
3. Using rule 9 concerning associativity of binary operations, rearrange the leaf nodes of the tree:
   - Position the leaf node relations with the most restrictive $\sigma$ operations so they are executed first.
   - Make sure that the ordering of leaf nodes does not cause CARTESIAN PRODUCT operations.

Heuristic Algebraic Optimization Algorithm

4. Using rule 12, combine a Cartesian product operation ($\times$) with a subsequent select operation ($\sigma$) in the tree into a join operation ($\bowtie$).
5. Using rules 3, 4, 7, and 11 concerning the cascading of project and the commuting of project with other operations, break down and move lists of projection attributes down the tree as far as possible by creating new project operations as needed.
6. Identify subtrees that represent groups of operations that can be executed by a single algorithm.

Summary of Heuristics for Algebraic Optimization

- The main heuristic is to apply first the operations that reduce the size of intermediate results.
- Perform select operations as early as possible to reduce the number of tuples, and perform project operations as early as possible to reduce the number of attributes. (This is done by moving select and project operations as far down the tree as possible.)
- The select and join operations that are most restrictive should be executed before other similar operations. (This is done by reordering the leaf nodes of the tree among themselves and adjusting the rest of the tree appropriately.)

Query Execution Plans

- An execution plan for a relational algebra query consists of a combination of the relational algebra query tree and information about the access methods to be used for each relation, as well as the methods to be used in computing the relational operators stored in the tree.
- Materialized evaluation: the result of an operation is stored as a temporary relation.
- Pipelined evaluation: as the result of an operator is produced, it is forwarded to the next operator in sequence.

8. Using Selectivity and Cost Estimates in Query Optimization (1)

- Cost-based query optimization:
  - Estimate and compare the costs of executing a query using different execution strategies, and choose the strategy with the lowest cost estimate.
  - (Compare to heuristic query optimization.)
- Issues:
  - Cost function
  - Number of execution strategies to be considered

Using Selectivity and Cost Estimates in Query Optimization (2)

- Cost components for query execution:
  1. Access cost to secondary storage
  2. Storage cost
  3. Computation cost
  4. Memory usage cost
  5. Communication cost
- Note: Different database systems may focus on different cost components.

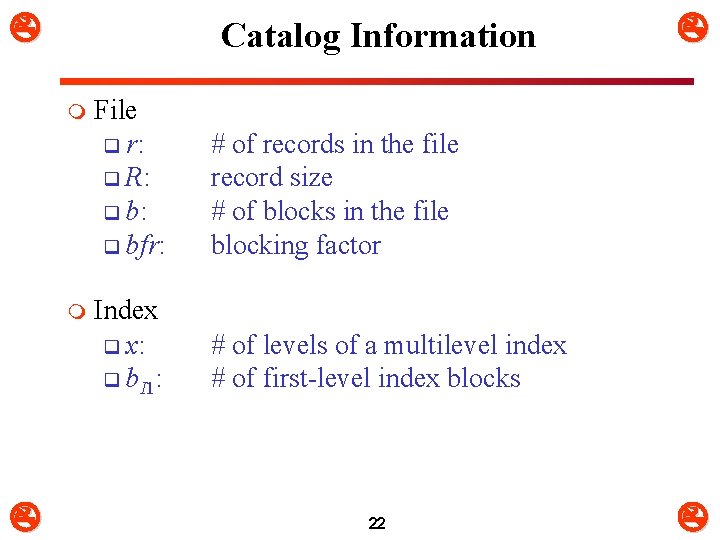

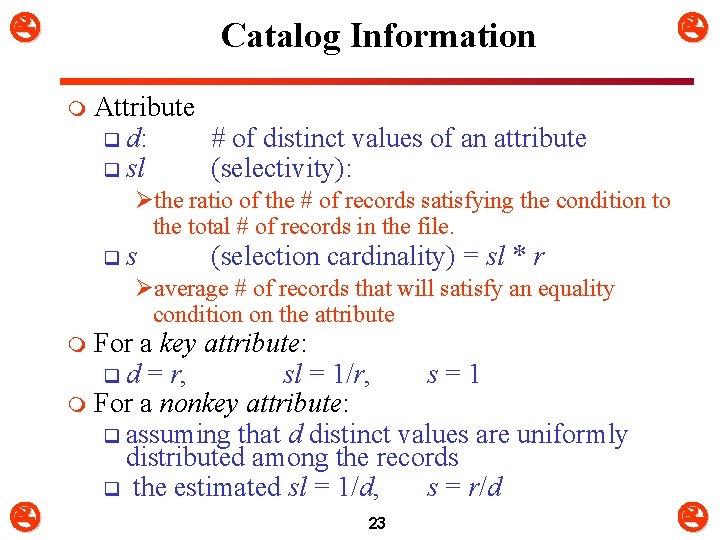

Using Selectivity and Cost Estimates in Query Optimization (3)

- Catalog information used in cost functions
  - Information about the size of a file:
    - number of records (tuples) ($r$), record size ($R$), number of blocks ($b$)
    - blocking factor ($bfr$)
  - Information about indexes and indexing attributes of a file:
    - number of levels ($x$) of each multilevel index
    - number of first-level index blocks ($b_{I1}$)
    - number of distinct values ($d$) of an attribute
    - selectivity ($sl$) of an attribute
    - selection cardinality ($s$) of an attribute ($s = sl \cdot r$)

Using Selectivity and Cost Estimates in Query Optimization (4)

- Examples of cost functions for SELECT
- S1. Linear search (brute force) approach:
  - $C_{S1a} = b$
  - For an equality condition on a key, $C_{S1a} = b/2$ if the record is found; otherwise $C_{S1a} = b$.
- S2. Binary search:
  - $C_{S2} = \log_2 b + (s/bfr) - 1$
  - For an equality condition on a unique (key) attribute, $C_{S2} = \log_2 b$.
- S3. Using a primary index (S3a) or hash key (S3b) to retrieve a single record:
  - $C_{S3a} = x + 1$
  - $C_{S3b} = 1$ for static or linear hashing
  - $C_{S3b} = 2$ for extendible hashing

Using Selectivity and Cost Estimates in Query Optimization (5)

- Examples of cost functions for SELECT (contd.)
- S4. Using an ordering index to retrieve multiple records:
  - For a comparison condition on a key field with an ordering index, $C_{S4} = x + (b/2)$.
- S5. Using a clustering index to retrieve multiple records:
  - $C_{S5} = x + \lceil s/bfr \rceil$
- S6. Using a secondary (B+-tree) index:
  - For an equality comparison, $C_{S6a} = x + s$.
  - For a comparison condition such as >, <, >=, or <=, $C_{S6b} = x + (b_{I1}/2) + (r/2)$.
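The SELECT cost functions can be written down directly as small functions and compared for a given file. The sketch below does this for several of the methods; the function names and all catalog values (file size, index levels, selection cardinalities) are illustrative assumptions, not figures from the slides.

```python
import math


def cost_linear_search(b):
    """S1: scan every block of the file."""
    return b


def cost_primary_index(x):
    """S3a: one block per index level plus one data block."""
    return x + 1


def cost_ordering_index_range(x, b):
    """S4: assume about half of the file satisfies the comparison."""
    return x + b / 2


def cost_clustering_index(x, s, bfr):
    """S5: the s matching records are stored contiguously."""
    return x + math.ceil(s / bfr)


def cost_secondary_equality(x, s):
    """S6, equality: each matching record may sit in a different block."""
    return x + s


def cost_secondary_range(x, b_i1, r):
    """S6, range: half of the first-level index blocks and half of the records."""
    return x + b_i1 / 2 + r / 2


# Assumed catalog values for a hypothetical EMPLOYEE file.
r, b, bfr = 10000, 2000, 5

candidates = {
    "linear search": cost_linear_search(b),
    "clustering index (x=3, s=20)": cost_clustering_index(3, 20, bfr),
    "secondary index, equality (x=2, s=80)": cost_secondary_equality(2, 80),
    "secondary index, range (x=2, bI1=4)": cost_secondary_range(2, 4, r),
}
cheapest = min(candidates, key=candidates.get)
```

With these assumed numbers a range condition through the secondary index is estimated to cost more than a full scan, which shows why the optimizer has to compare estimates rather than always prefer an index.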

Using Selectivity and Cost Estimates in Query Optimization (6)

- Examples of cost functions for SELECT (contd.)
- S7. Conjunctive selection:
  - Use either S1 or one of the methods S2 to S6.
  - In the latter case, use one condition to retrieve the records and then check in the memory buffer whether each retrieved record satisfies the remaining conditions in the conjunction.
- S8. Conjunctive selection using a composite index:
  - Same as S3a, S5 or S6a, depending on the type of index.
- Examples of using the cost functions.

Using Selectivity and Cost Estimates in Query Optimization (7)

- Examples of cost functions for JOIN
  - Join selectivity ($js$):
    $js = |R \bowtie_C S| \,/\, |R \times S| = |R \bowtie_C S| \,/\, (|R| \cdot |S|)$
    - If condition $C$ does not exist, $js = 1$.
    - If no tuples from the relations satisfy condition $C$, $js = 0$.
    - Usually, $0 \le js \le 1$.
- Size of the result file after the join operation:
  $|R \bowtie_C S| = js \cdot |R| \cdot |S|$
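As a small worked example, the join selectivity can be measured on toy relations and then used to estimate the result size. The function names and the sample data are assumptions for this sketch; a real optimizer estimates $js$ from catalog statistics instead of evaluating the join.

```python
def join_selectivity(r, s, cond):
    """js = |R join_C S| / (|R| * |S|), measured by brute force."""
    matches = sum(1 for a in r for b in s if cond(a, b))
    return matches / (len(r) * len(s))


def estimated_join_size(js, r_count, s_count):
    """|R join_C S| = js * |R| * |S|."""
    return js * r_count * s_count


# Hypothetical data: 8 employees spread evenly over 4 departments.
departments = [{"DNUMBER": d} for d in range(1, 5)]
employees = [{"SSN": i, "DNO": i % 4 + 1} for i in range(8)]

js = join_selectivity(employees, departments, lambda e, d: e["DNO"] == d["DNUMBER"])
size = estimated_join_size(js, len(employees), len(departments))
```

Here every employee matches exactly one of the four departments, so $js = 1/4$ and the estimated result size equals the number of employees.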

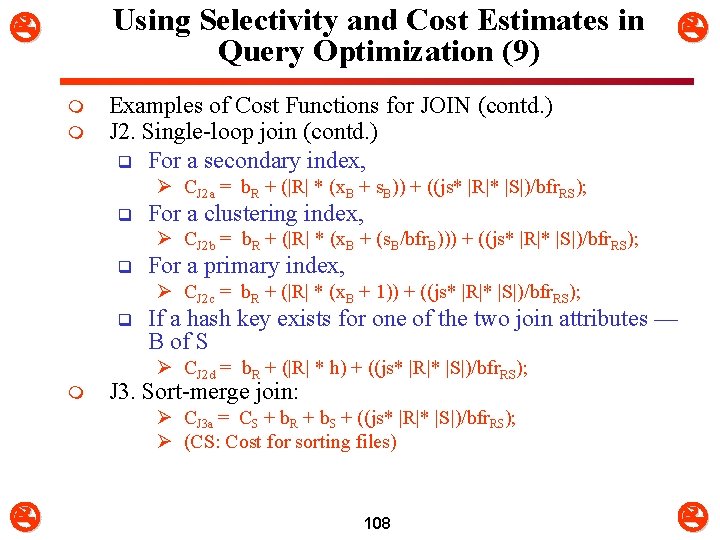

Using Selectivity and Cost Estimates in Query Optimization (8)

- Examples of cost functions for JOIN (contd.)
- J1. Nested-loop join:
  - $C_{J1} = b_R + (b_R \cdot b_S) + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
  - (Use $R$ for the outer loop.)
- J2. Single-loop join (using an access structure to retrieve the matching record(s)):
  - If an index exists for the join attribute $B$ of $S$ with index levels $x_B$, we can retrieve each record $s$ in $R$ and then use the index to retrieve all the matching records $t$ from $S$ that satisfy $t[B] = s[A]$.
  - The cost depends on the type of index.

Using Selectivity and Cost Estimates in Query Optimization (9)

- Examples of cost functions for JOIN (contd.)
- J2. Single-loop join (contd.):
  - For a secondary index:
    $C_{J2a} = b_R + (|R| \cdot (x_B + s_B)) + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
  - For a clustering index:
    $C_{J2b} = b_R + (|R| \cdot (x_B + (s_B/bfr_B))) + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
  - For a primary index:
    $C_{J2c} = b_R + (|R| \cdot (x_B + 1)) + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
  - If a hash key exists for one of the two join attributes, say $B$ of $S$:
    $C_{J2d} = b_R + (|R| \cdot h) + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
- J3. Sort-merge join:
  - $C_{J3a} = C_S + b_R + b_S + ((js \cdot |R| \cdot |S|)/bfr_{RS})$
  - ($C_S$: cost for sorting the files)
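The join cost formulas share the final term for writing the result file, so they can be sketched as a few functions built on one helper. The function names and every number below (file sizes, join selectivity, index levels, sorting cost) are assumptions chosen for illustration.

```python
def result_blocks(js, r, s, bfr_rs):
    """Blocks needed to write the join result: (js * |R| * |S|) / bfr_RS."""
    return js * r * s / bfr_rs


def cost_nested_loop(b_r, b_s, js, r, s, bfr_rs):
    """J1 with R as the outer relation."""
    return b_r + b_r * b_s + result_blocks(js, r, s, bfr_rs)


def cost_single_loop_secondary(b_r, x_b, s_b, js, r, s, bfr_rs):
    """J2a: probe a secondary index on S once for each record of R."""
    return b_r + r * (x_b + s_b) + result_blocks(js, r, s, bfr_rs)


def cost_single_loop_primary(b_r, x_b, js, r, s, bfr_rs):
    """J2c: probe a primary index on S once for each record of R."""
    return b_r + r * (x_b + 1) + result_blocks(js, r, s, bfr_rs)


def cost_sort_merge(c_sort, b_r, b_s, js, r, s, bfr_rs):
    """J3: sorting cost plus one pass over each file."""
    return c_sort + b_r + b_s + result_blocks(js, r, s, bfr_rs)


# Assumed statistics: EMPLOYEE (10000 records, 2000 blocks) joined with
# DEPARTMENT (125 records, 13 blocks), js = 1/125, bfr of the result = 4.
r_e, b_e = 10000, 2000
r_d, b_d = 125, 13
js, bfr_ed = 1 / 125, 4

employee_outer = cost_nested_loop(b_e, b_d, js, r_e, r_d, bfr_ed)
department_outer = cost_nested_loop(b_d, b_e, js, r_d, r_e, bfr_ed)
primary_index_probe = cost_single_loop_primary(b_e, 1, js, r_e, r_d, bfr_ed)
```

Under these assumptions, using the smaller relation as the outer loop gives the lower nested-loop estimate, because the dominant term is driven by the number of outer blocks read before the inner file is rescanned.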

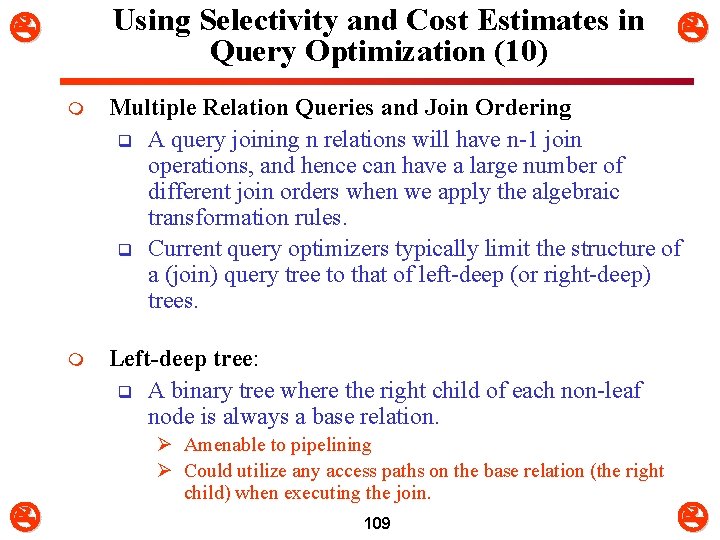

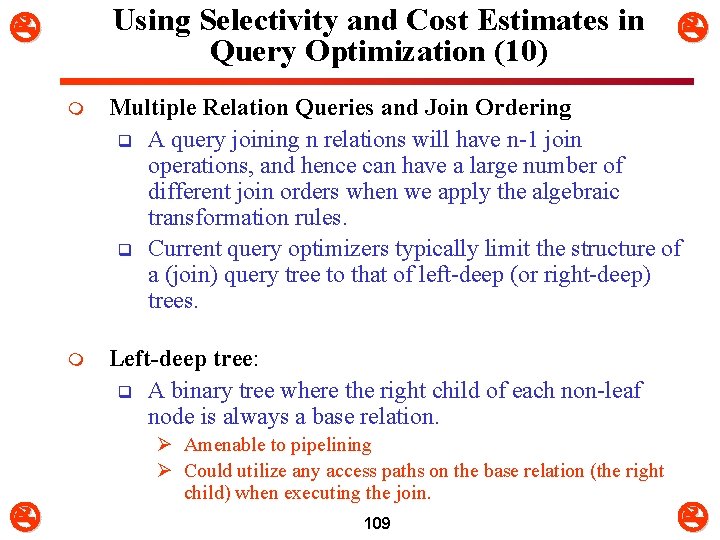

Using Selectivity and Cost Estimates in Query Optimization (10)

- Multiple relation queries and join ordering:
  - A query joining n relations will have n-1 join operations, and hence can have a large number of different join orders when we apply the algebraic transformation rules.
  - Current query optimizers typically limit the structure of a (join) query tree to that of left-deep (or right-deep) trees.
- Left-deep tree:
  - A binary tree where the right child of each non-leaf node is always a base relation.
    - Amenable to pipelining.
    - Could utilize any access paths on the base relation (the right child) when executing the join.
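Even when only left-deep trees are considered, the number of join orders grows quickly, since each ordering of the n relations gives one left-deep tree. The sketch below enumerates them for a small case; the function name and the relation names `R`, `S`, `T` are assumptions for illustration.

```python
from functools import reduce
from itertools import permutations


def left_deep_plans(relations):
    """Return one left-deep join expression per ordering of the relations.
    In every expression the right operand of each join is a base relation."""
    plans = []
    for order in permutations(relations):
        plans.append(reduce(lambda left, right: f"({left} JOIN {right})", order))
    return plans


plans = left_deep_plans(["R", "S", "T"])
first_plan = plans[0]
count_for_four = len(left_deep_plans(["R", "S", "T", "U"]))
```

Three relations already give 3! = 6 left-deep orders and four give 4! = 24, which is why optimizers prune the search instead of costing every plan.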

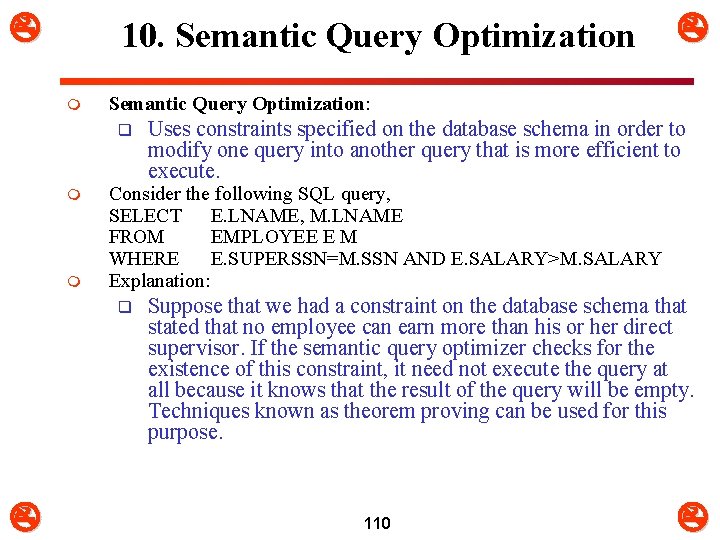

10. Semantic Query Optimization

- Semantic query optimization:
  - Uses constraints specified on the database schema in order to modify one query into another query that is more efficient to execute.
- Consider the following SQL query:
  SELECT E.LNAME, M.LNAME FROM EMPLOYEE AS E, EMPLOYEE AS M WHERE E.SUPERSSN=M.SSN AND E.SALARY>M.SALARY
- Explanation:
  - Suppose that we had a constraint on the database schema stating that no employee can earn more than his or her direct supervisor. If the semantic query optimizer checks for the existence of this constraint, it need not execute the query at all, because it knows that the result of the query will be empty. Techniques known as theorem proving can be used for this purpose.

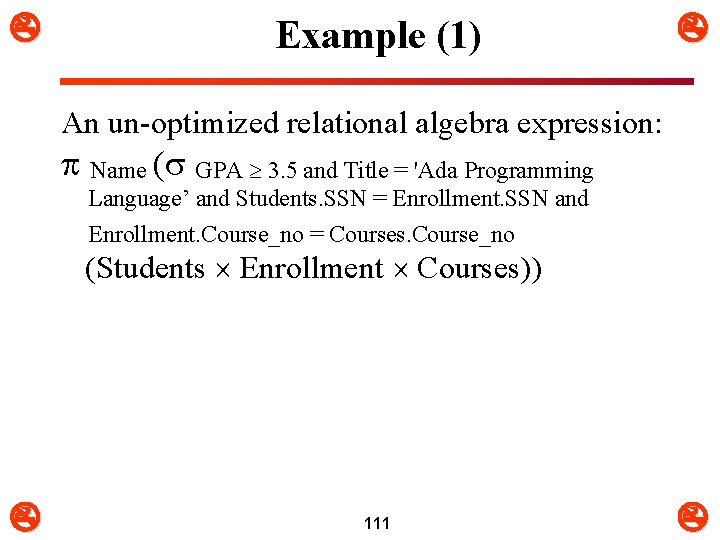

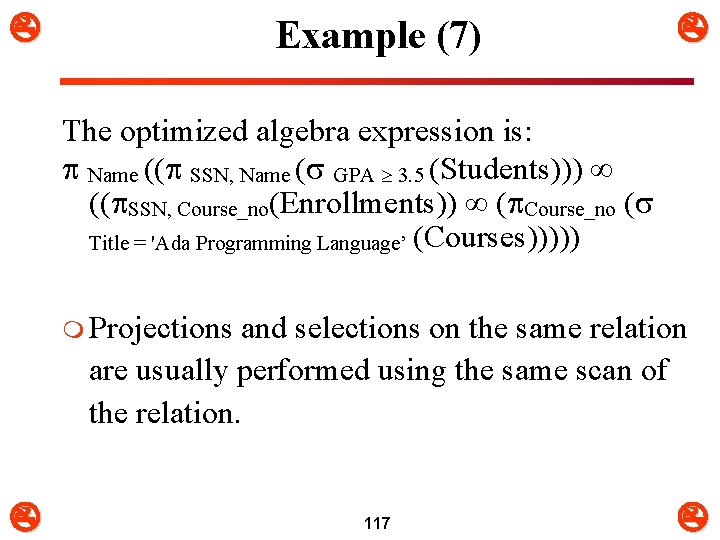

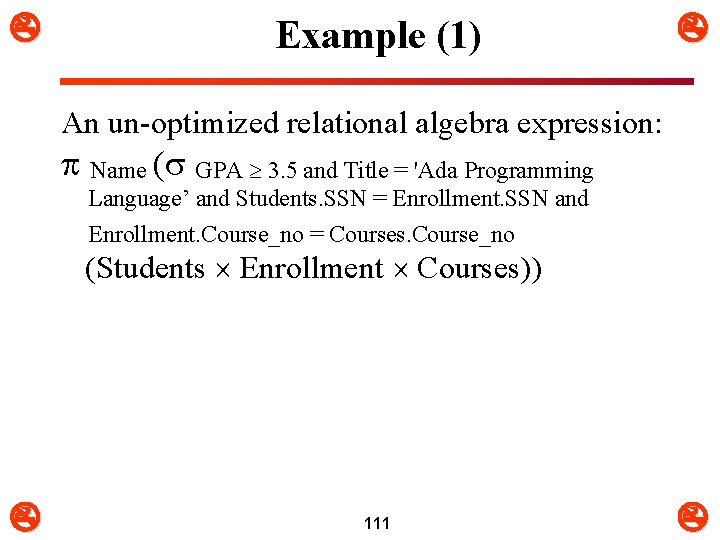

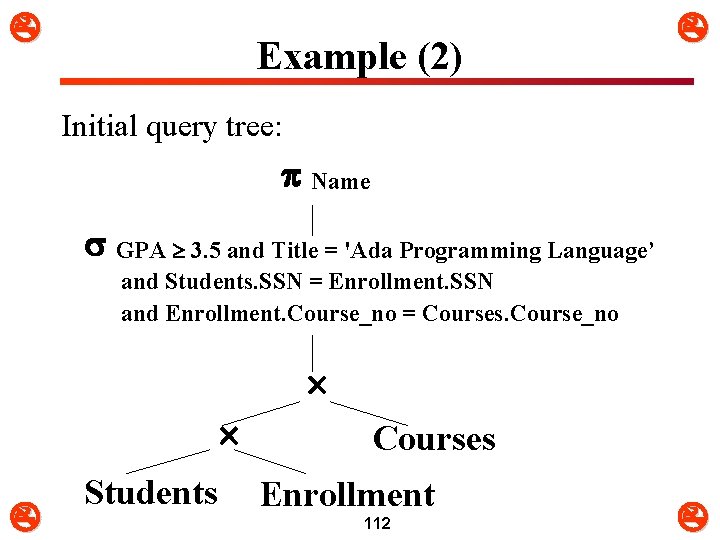

Example (1)

An un-optimized relational algebra expression:

$\pi_{\text{Name}}(\sigma_{\text{GPA } 3.5 \text{ and Title = 'Ada Programming Language' and Students.SSN = Enrollment.SSN and Enrollment.Course\_no = Courses.Course\_no}}(\text{Students} \times \text{Enrollment} \times \text{Courses}))$

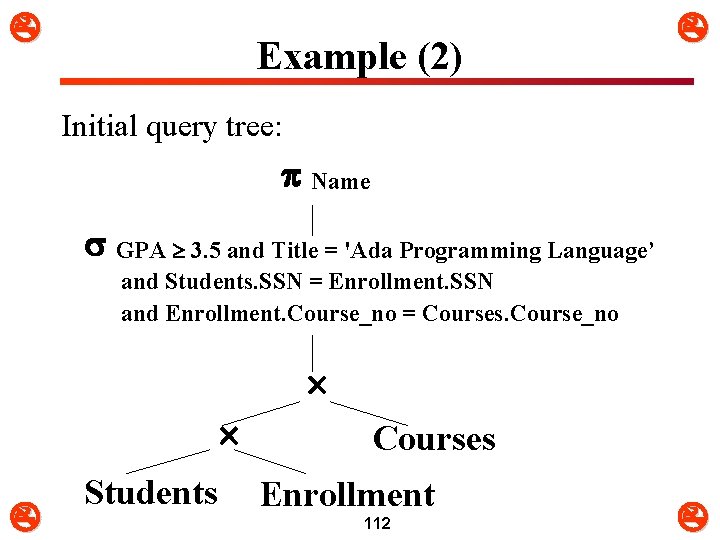

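Example (2)

Initial query tree (node labels from the root down):

- $\pi_{\text{Name}}$
- $\sigma$ with the condition: GPA 3.5 and Title = 'Ada Programming Language' and Students.SSN = Enrollment.SSN and Enrollment.Course_no = Courses.Course_no
- Cartesian products over the leaf relations Students, Enrollment and Courses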

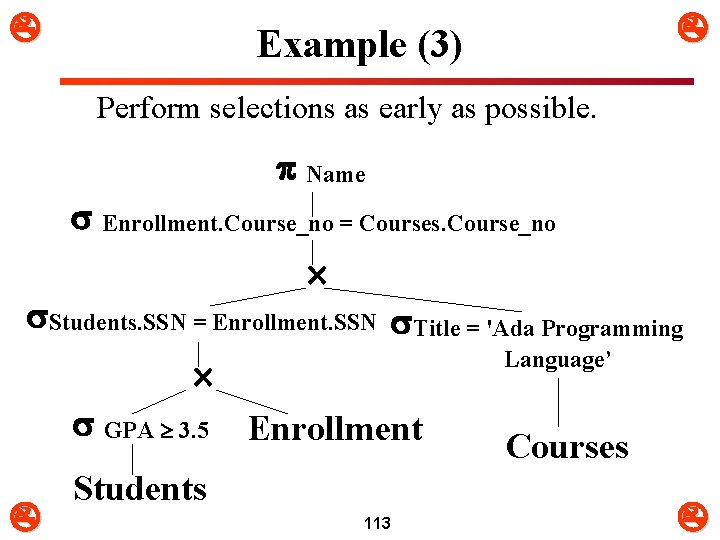

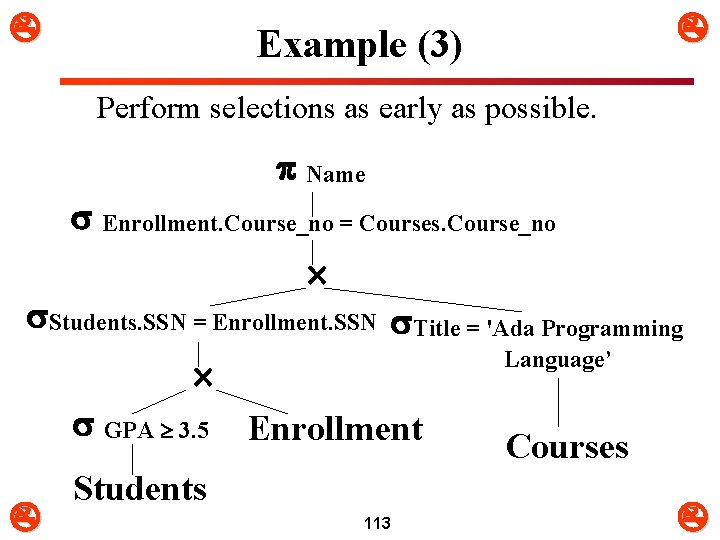

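Example (3)

Perform selections as early as possible. Node labels from the root down:

- $\pi_{\text{Name}}$
- $\sigma_{\text{Enrollment.Course\_no = Courses.Course\_no}}$
- $\sigma_{\text{Students.SSN = Enrollment.SSN}}$
- $\sigma_{\text{Title = 'Ada Programming Language'}}$ applied to Courses
- $\sigma_{\text{GPA } 3.5}$ applied to Students
- Enrollment (leaf)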

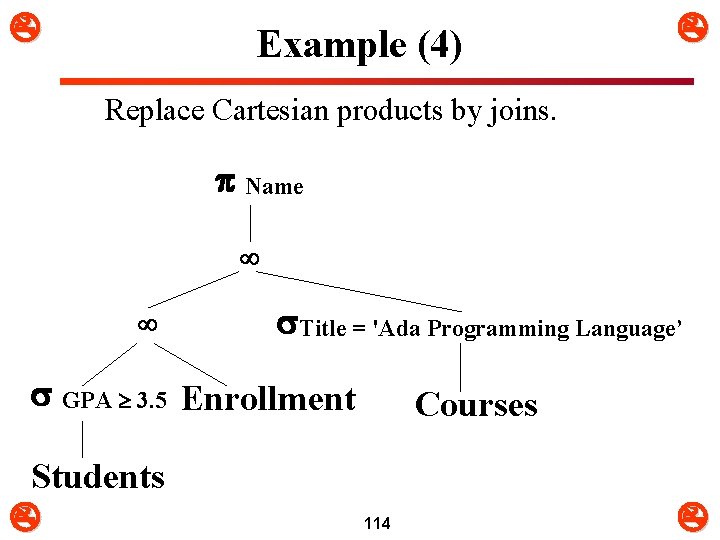

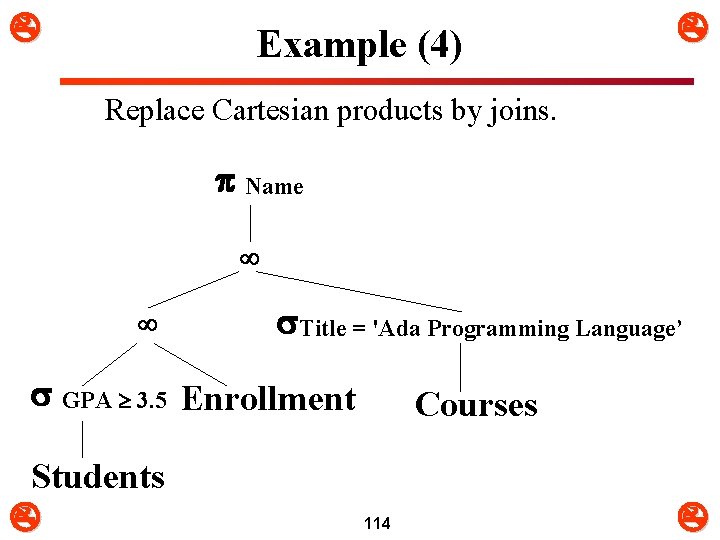

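Example (4)

Replace Cartesian products by joins. Node labels from the root down:

- $\pi_{\text{Name}}$
- $\sigma_{\text{Title = 'Ada Programming Language'}}$ applied to Courses
- $\sigma_{\text{GPA } 3.5}$ applied to Students
- Enrollment (leaf)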

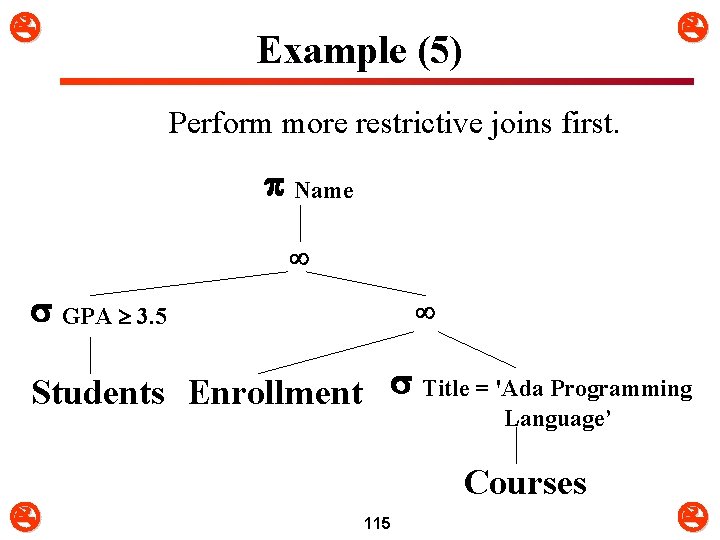

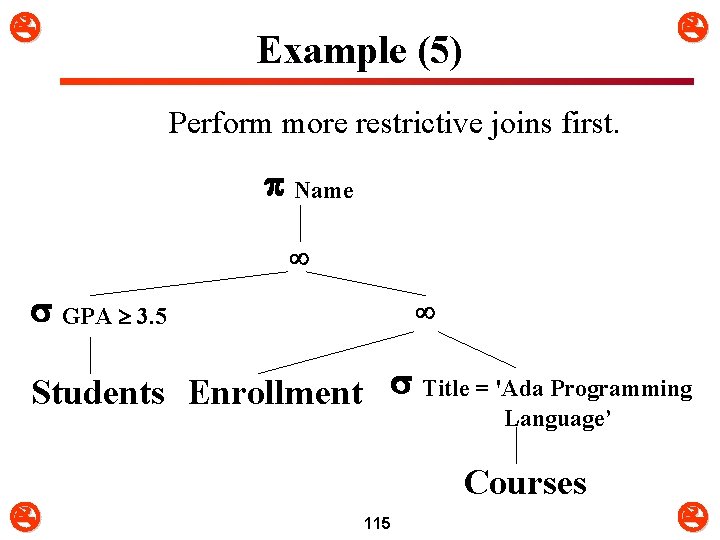

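Example (5)

Perform more restrictive joins first. Node labels from the root down:

- $\pi_{\text{Name}}$
- $\sigma_{\text{GPA } 3.5}$ applied to Students
- Enrollment (leaf)
- $\sigma_{\text{Title = 'Ada Programming Language'}}$ applied to Courses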

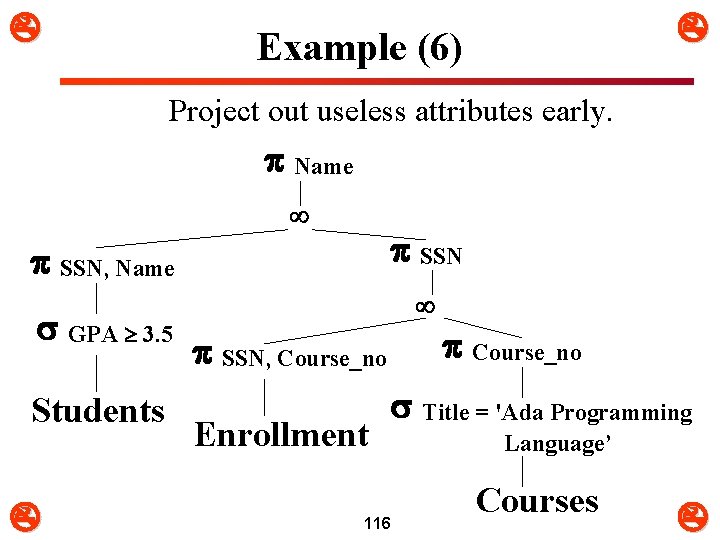

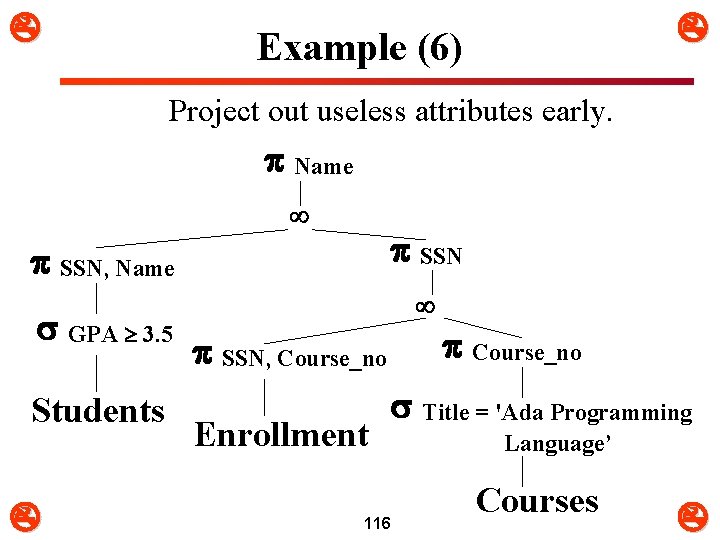

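Example (6)

Project out useless attributes early. Node labels from the root down:

- $\pi_{\text{Name}}$
- $\pi_{\text{SSN, Name}}$ over $\sigma_{\text{GPA } 3.5}$ applied to Students
- $\pi_{\text{SSN, Course\_no}}$ applied to Enrollment
- $\pi_{\text{Course\_no}}$ over $\sigma_{\text{Title = 'Ada Programming Language'}}$ applied to Courses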

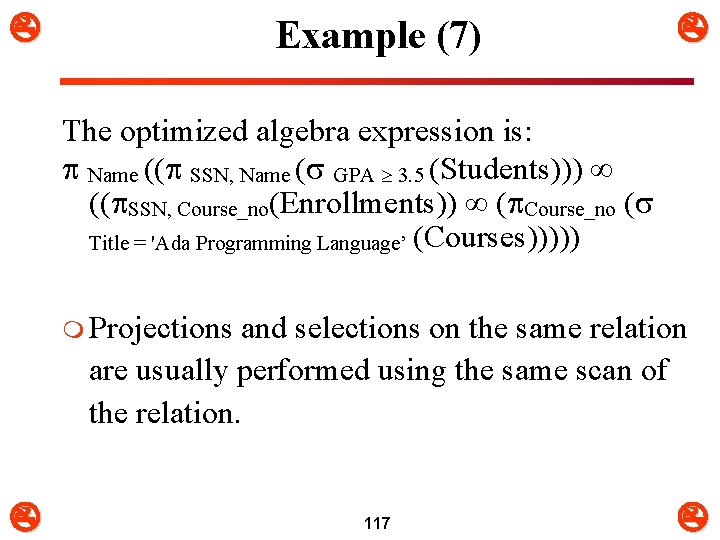

Example (7)

The optimized algebra expression is:

$\pi_{\text{Name}}((\pi_{\text{SSN, Name}}(\sigma_{\text{GPA } 3.5}(\text{Students}))) \bowtie ((\pi_{\text{SSN, Course\_no}}(\text{Enrollment})) \bowtie (\pi_{\text{Course\_no}}(\sigma_{\text{Title = 'Ada Programming Language'}}(\text{Courses})))))$

- Projections and selections on the same relation are usually performed using the same scan of the relation.
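The equivalence of the un-optimized and optimized expressions can be checked on toy data. The sketch below is only an illustration: the sample tuples are invented, and the comparison on GPA is assumed to be `>=` because the operator is not legible in the slide text.

```python
from itertools import product

TITLE = "Ada Programming Language"

# Hypothetical sample relations.
students = [
    {"SSN": 1, "Name": "Ann", "GPA": 3.8},
    {"SSN": 2, "Name": "Bob", "GPA": 3.1},
    {"SSN": 3, "Name": "Cho", "GPA": 3.6},
]
enrollment = [
    {"SSN": 1, "Course_no": "CS1"},
    {"SSN": 2, "Course_no": "CS1"},
    {"SSN": 3, "Course_no": "CS2"},
]
courses = [
    {"Course_no": "CS1", "Title": TITLE},
    {"Course_no": "CS2", "Title": "Databases"},
]


def unoptimized():
    """One big selection over the full Cartesian product."""
    names = set()
    for s, e, c in product(students, enrollment, courses):
        if (s["GPA"] >= 3.5            # assumed operator
                and c["Title"] == TITLE
                and s["SSN"] == e["SSN"]
                and e["Course_no"] == c["Course_no"]):
            names.add(s["Name"])
    return names


def optimized():
    """Select and project first, then join the reduced relations."""
    good_students = [(s["SSN"], s["Name"]) for s in students if s["GPA"] >= 3.5]
    ada_courses = {c["Course_no"] for c in courses if c["Title"] == TITLE}
    enrolled = {e["SSN"] for e in enrollment if e["Course_no"] in ada_courses}
    return {name for ssn, name in good_students if ssn in enrolled}


product_size = len(students) * len(enrollment) * len(courses)
same_answer = unoptimized() == optimized()
```

The first version inspects every combination of the three relations, while the second works only on the reduced inputs; both return the same set of names.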