Algorithm Implementation in FPGAs Demonstrated Through Neural Network

- Slides: 62

Algorithm Implementation in FPGAs Demonstrated Through Neural Network Inversion on the SRC-6 e MSECE Thesis Presentation Paul D. Reynolds

Algorithm Hardware Implementation • Algorithms – One Output for each Input – Purely Combinational • Problems – Too Large to be Directly Implemented – Timing Issues • Solution – Clocked Design – Repeated Use of Hardware

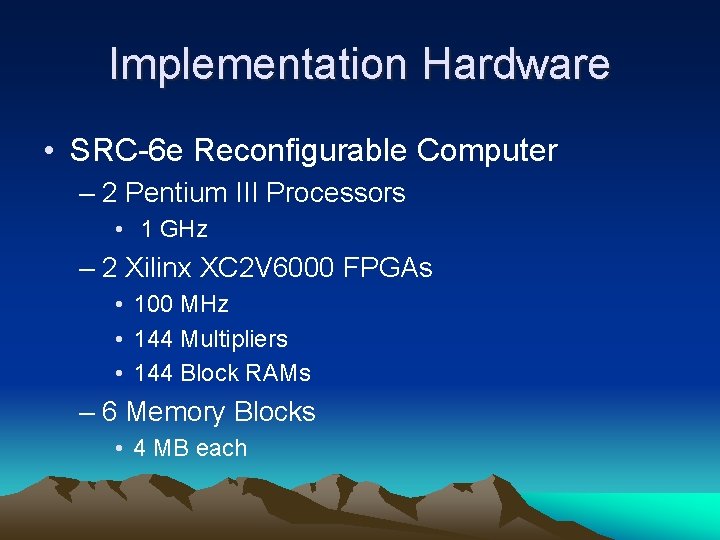

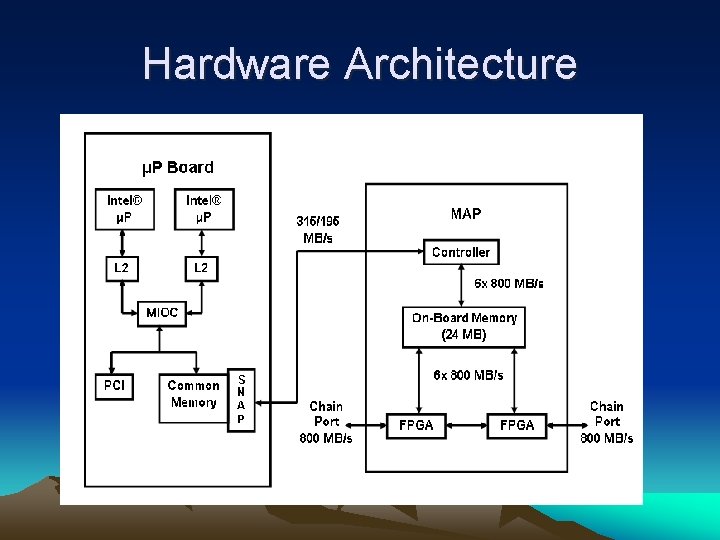

Implementation Hardware • SRC-6 e Reconfigurable Computer – 2 Pentium III Processors • 1 GHz – 2 Xilinx XC 2 V 6000 FPGAs • 100 MHz • 144 Multipliers • 144 Block RAMs – 6 Memory Blocks • 4 MB each

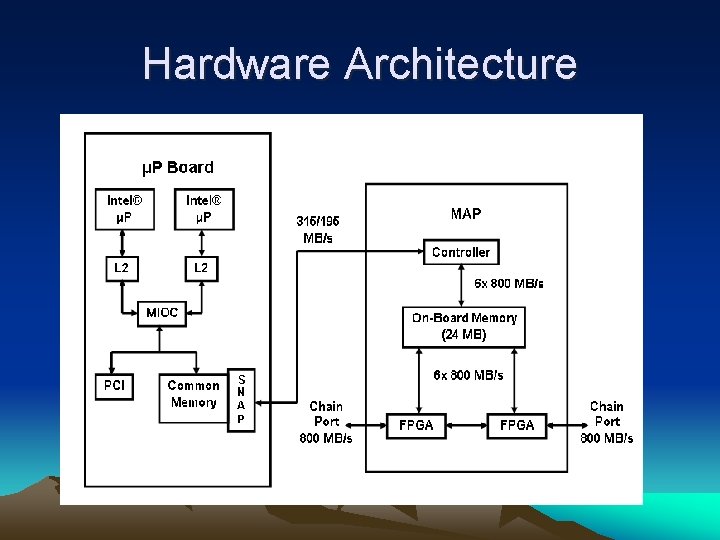

Hardware Architecture

SRC-6 e Development Environment • main. c –C – Executes on Pentium Processors – Command Line Interface – Hardware accessed as a Function

SRC-6 e Development Environment • hardware. mc – Modified C or FORTRAN – Executes in Hardware – Controls Memory Transfer – One for each FPGA used – Can be for entire code or with hardware description functions

Hardware Description. VHDL and VERILOG • Reasons for Use – To avoid c-compiler idiosyncrasies • Latency added to certain loops • 16 bit multiplies converted to 32 bit multiplies – More control • Fixed point multiplication with truncation • Pipelines and parallel execution simpler – IP Cores Useable • More efficient implementation

Neural Network and Inversion Example

Problem Background • To determine the optimal sonar setup to maximize the ensonification of a grid of water. • Influences to ensonification: – Environmental Conditions – Temperature, Wind Speed – Bathymetry – Bottom Type, Shape of Bottom – Sonar System – Total of 27 different factors accounted for

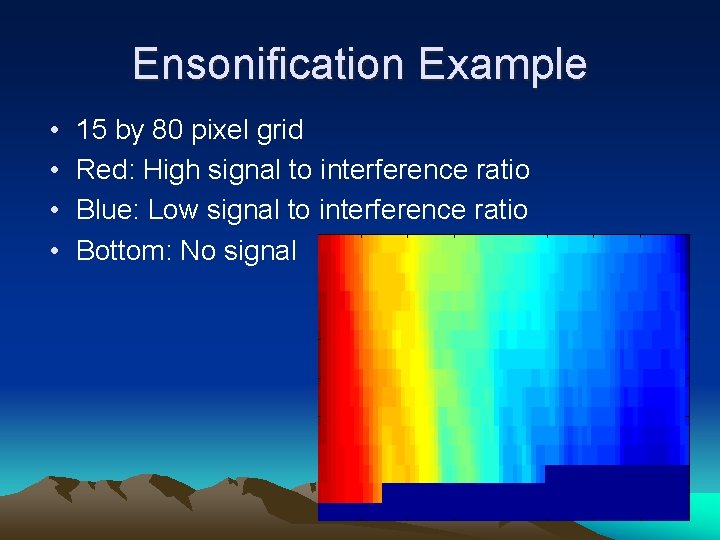

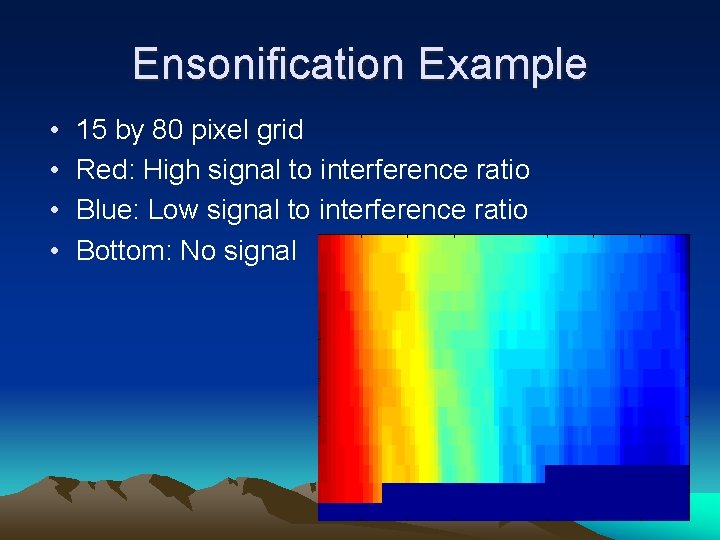

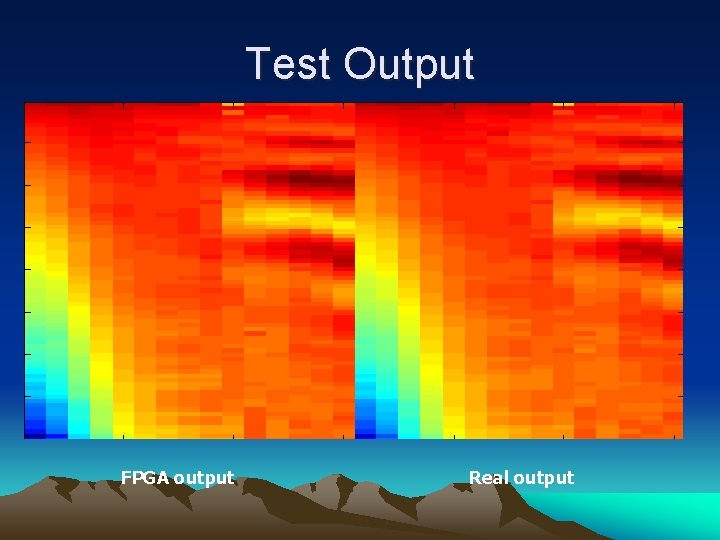

Ensonification Example • • 15 by 80 pixel grid Red: High signal to interference ratio Blue: Low signal to interference ratio Bottom: No signal

Original Solution • Take current conditions • Match to previous optimum sonar setups with similar conditions • Run acoustic model using current conditions and previous optimum setups • Use sonar setup with highest signal to interference ratio

New Problem • Problem: – One acoustic model run took tens of seconds • Solution – Train a Neural Network on the acoustic model (APL & University of Washington)

Neural Network Overview • Inspired by the human ability to recognize patterns. • Mathematical structure able to mimic a pattern • Trained using known data – Show the network several examples and identify each example – The network learns the pattern – Show the network a new case and let the network identify it.

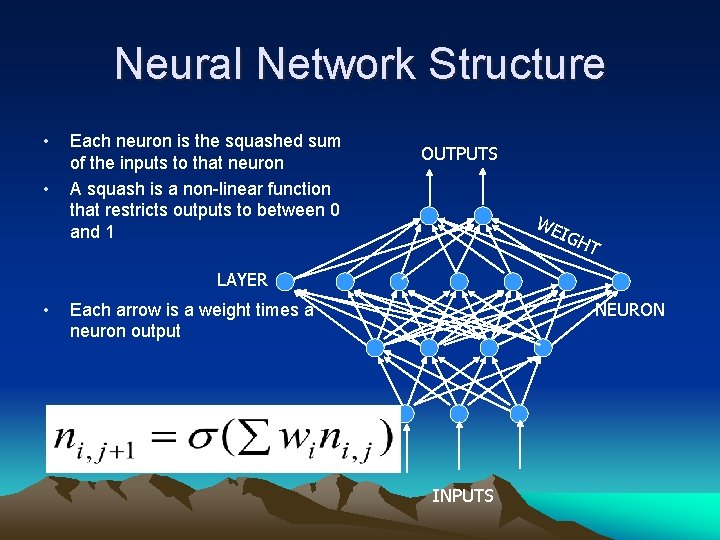

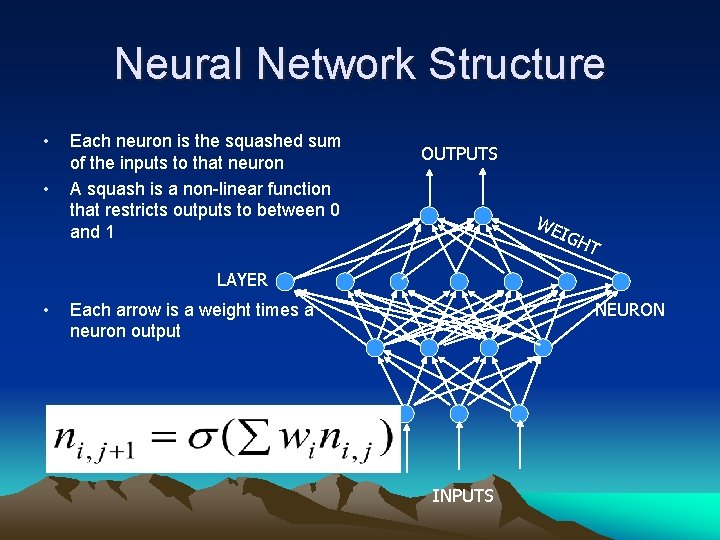

Neural Network Structure • • Each neuron is the squashed sum of the inputs to that neuron A squash is a non-linear function that restricts outputs to between 0 and 1 OUTPUTS WE IGH T LAYER • NEURON Each arrow is a weight times a neuron output INPUTS

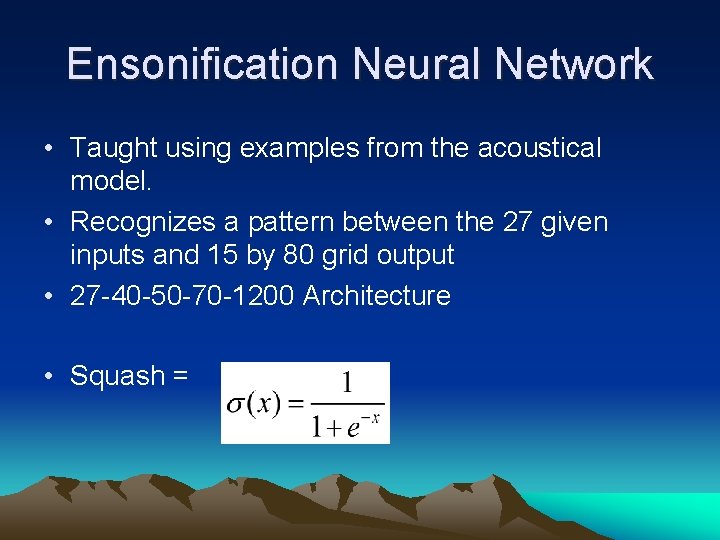

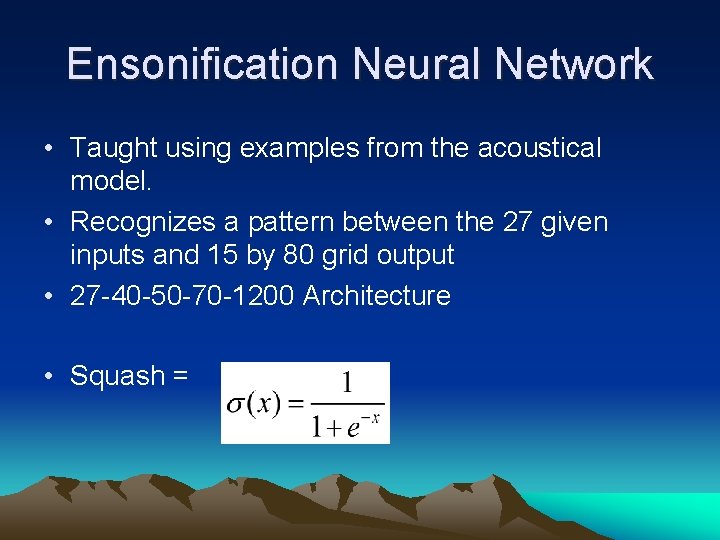

Ensonification Neural Network • Taught using examples from the acoustical model. • Recognizes a pattern between the 27 given inputs and 15 by 80 grid output • 27 -40 -50 -70 -1200 Architecture • Squash =

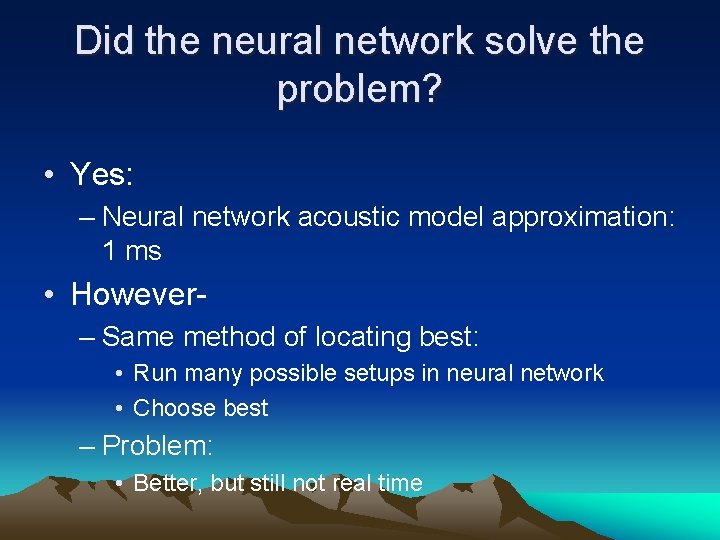

Did the neural network solve the problem? • Yes: – Neural network acoustic model approximation: 1 ms • However– Same method of locating best: • Run many possible setups in neural network • Choose best – Problem: • Better, but still not real time

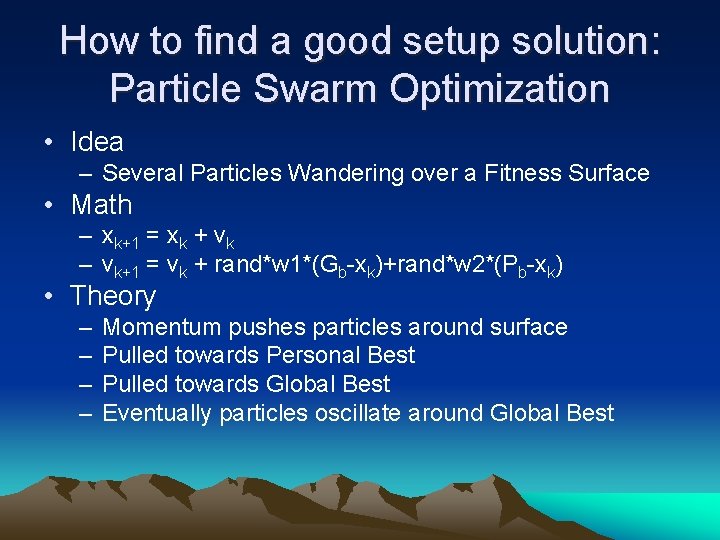

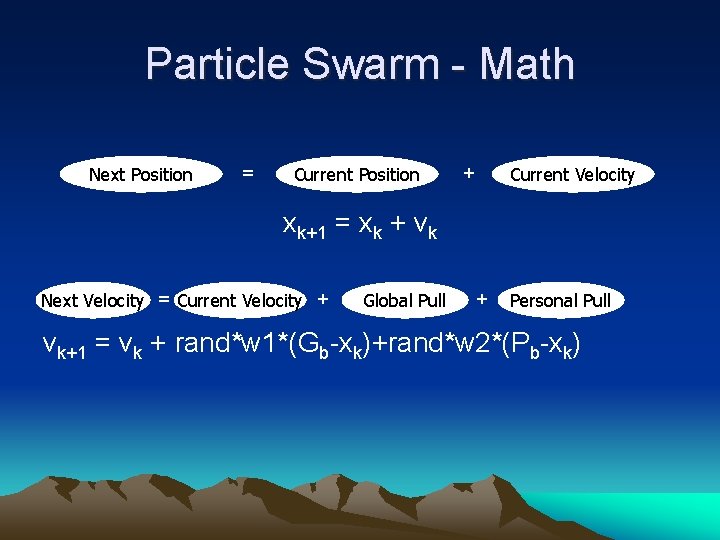

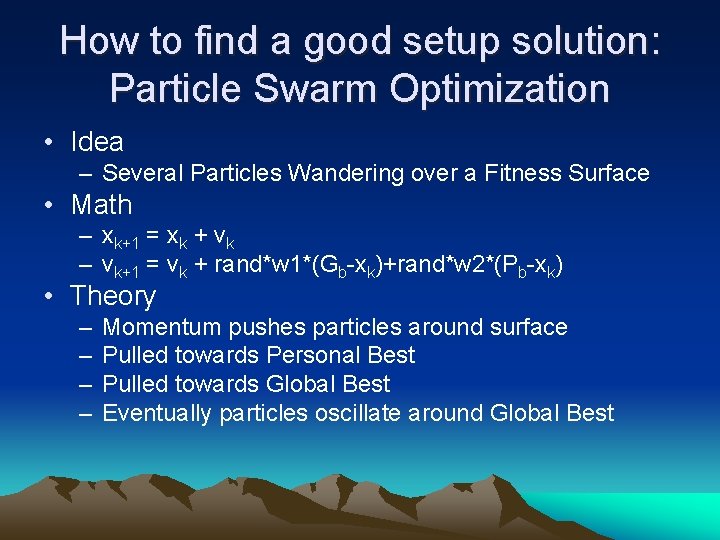

How to find a good setup solution: Particle Swarm Optimization • Idea – Several Particles Wandering over a Fitness Surface • Math – xk+1 = xk + vk – vk+1 = vk + rand*w 1*(Gb-xk)+rand*w 2*(Pb-xk) • Theory – – Momentum pushes particles around surface Pulled towards Personal Best Pulled towards Global Best Eventually particles oscillate around Global Best

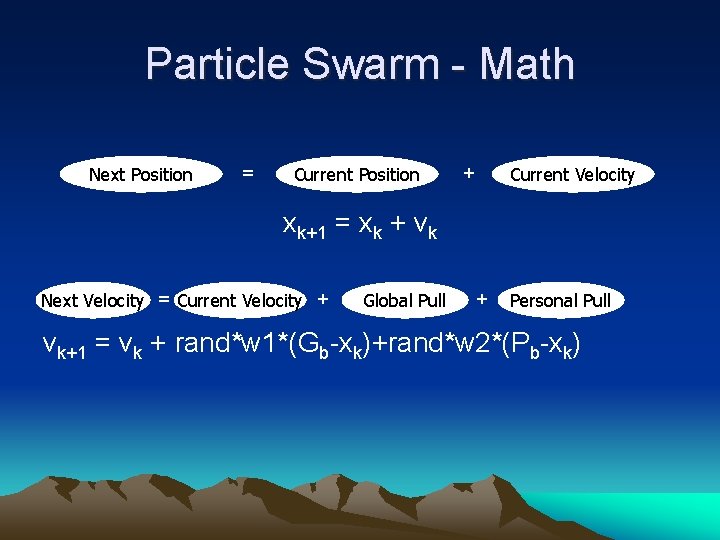

Particle Swarm - Math Next Position = Current Position + Current Velocity xk+1 = xk + vk Next Velocity = Current Velocity + Global Pull + Personal Pull vk+1 = vk + rand*w 1*(Gb-xk)+rand*w 2*(Pb-xk)

Particle Swarm in Operation Link to Particle Swarm file – in Quicktime

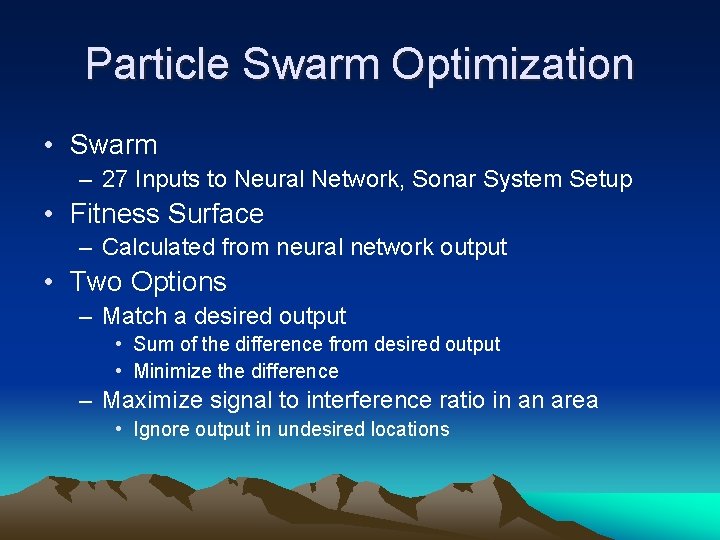

Particle Swarm Optimization • Swarm – 27 Inputs to Neural Network, Sonar System Setup • Fitness Surface – Calculated from neural network output • Two Options – Match a desired output • Sum of the difference from desired output • Minimize the difference – Maximize signal to interference ratio in an area • Ignore output in undesired locations

Particle Swarm in Operation Link to Particle Swarm file – in Quicktime

New Problem Enters • Time for 100 k step particle swarm using a 2. 2 Ghz Pentium: nearly 2 minutes • Desire a real time version • Solution: Implement the neural network and particle swarm optimization in parallel on reconfigurable hardware

Three Design Stages • Activation Function Design – Sigmoid not efficient to calculate • Neural Network Design – Parallel Design • Particle Swarm Optimization – Hardware Implementation

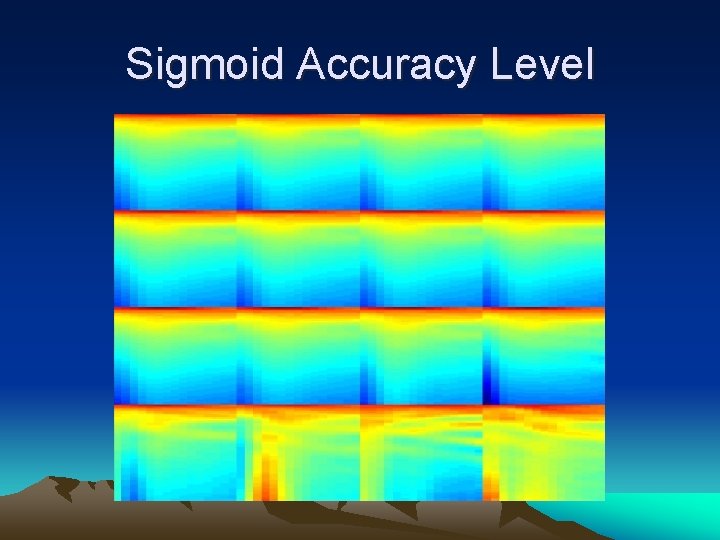

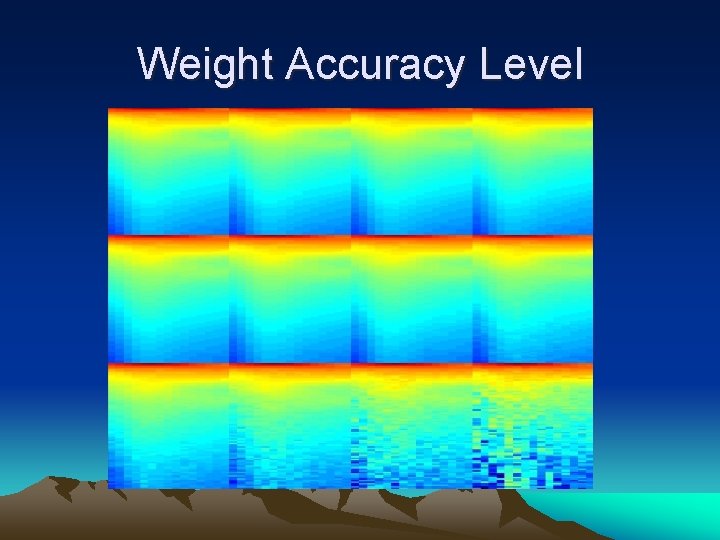

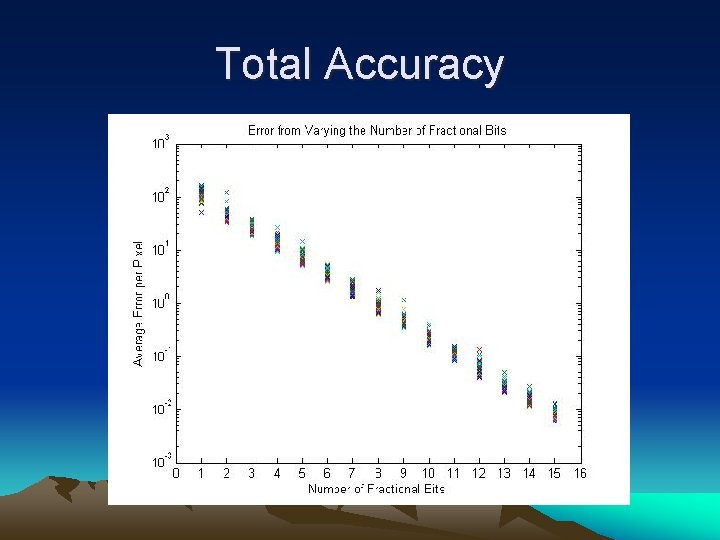

Activation Function Design • Fixed Point Design • Sigmoid Accuracy Level • Weight Accuracy Level

Fixed Point Design • VS Floating Point – Easier – Less Area – Faster • Data Range of -50 to 85 – 2’s Complement – 7 integer bits – 1 sign bit • Fractional Portion – Sigmoid outputs less than 1 – Some number of fractional bits

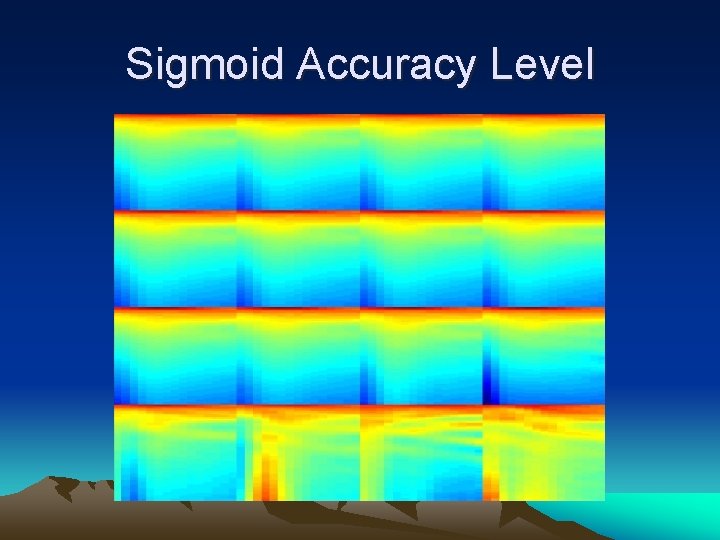

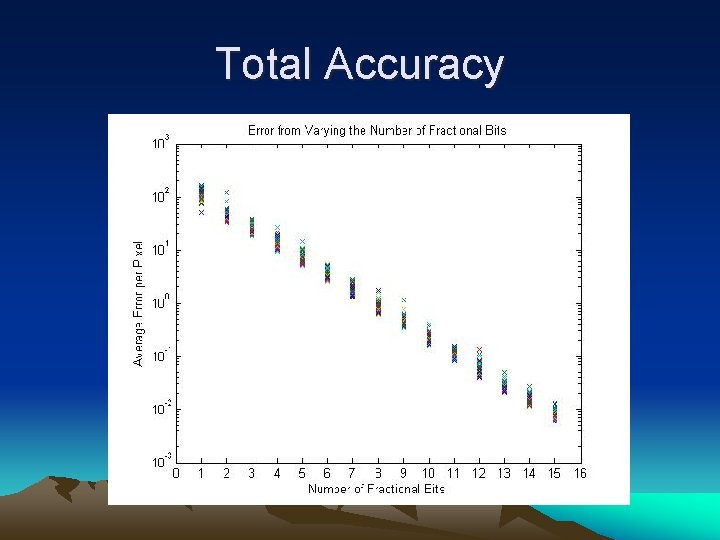

Sigmoid Accuracy Level

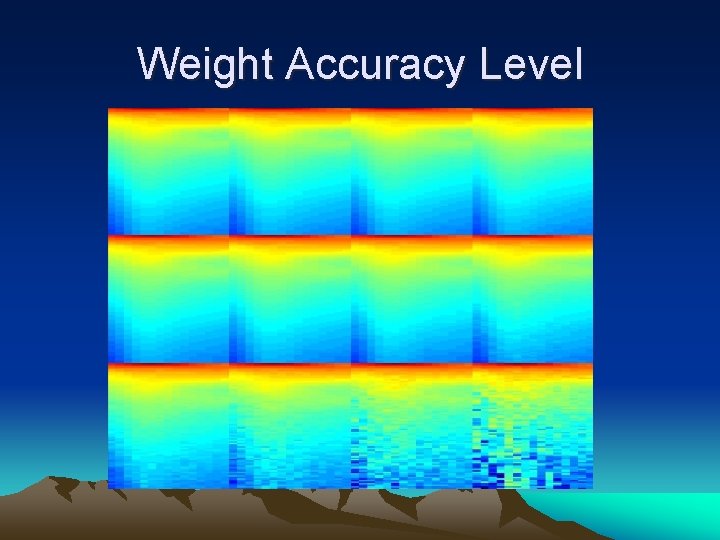

Weight Accuracy Level

Total Accuracy

Fixed Point Results • 16 -bit Number – 1 Sign Bit – 7 Integer Bits – 8 Fractional Bits • Advantages – 18 x 18 multipliers – 64 -bit input banks

Activation Function Approximation • Compared 4 Designs – – Look-up Table Shift and Add CORDIC Taylor Series

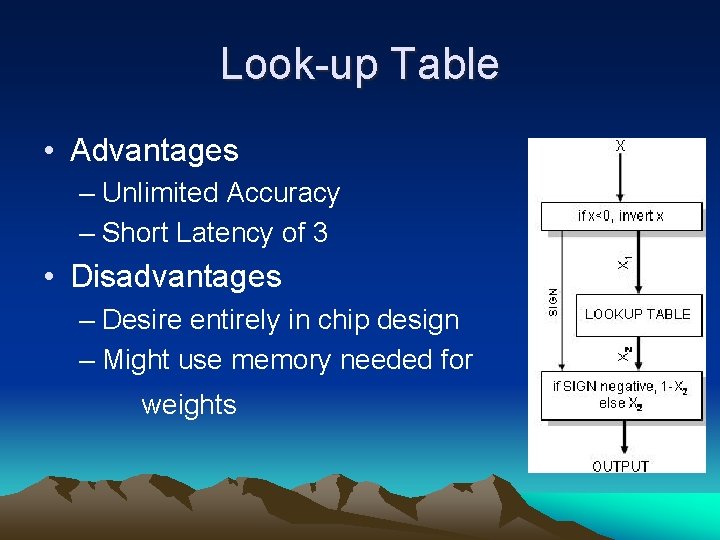

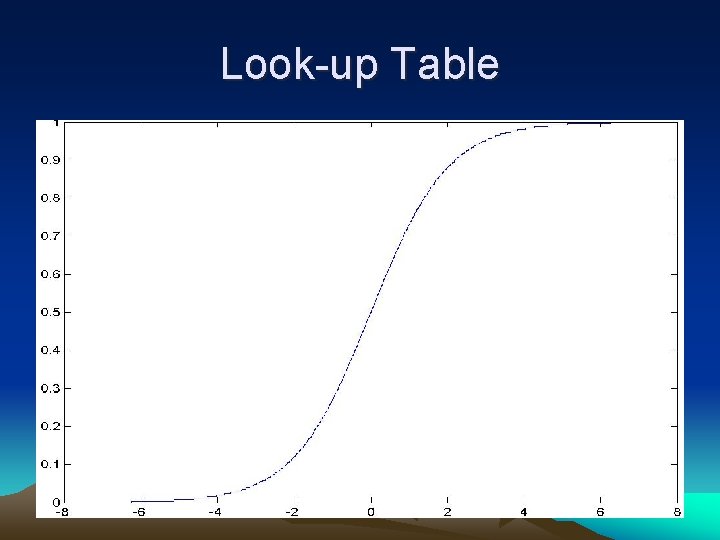

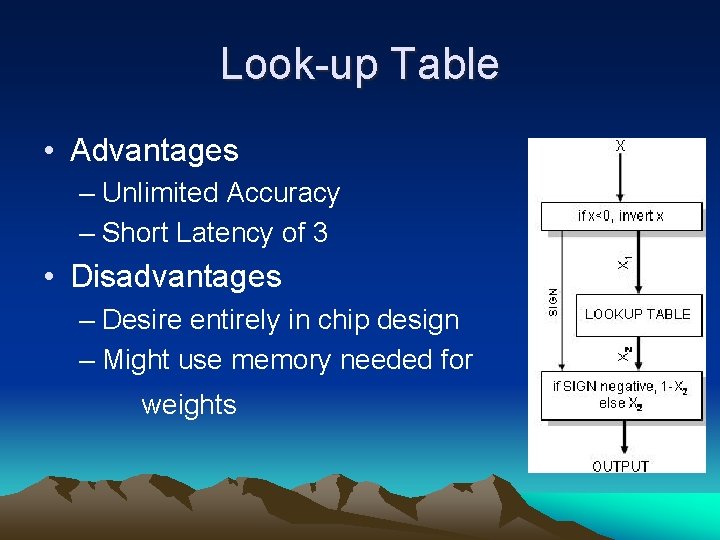

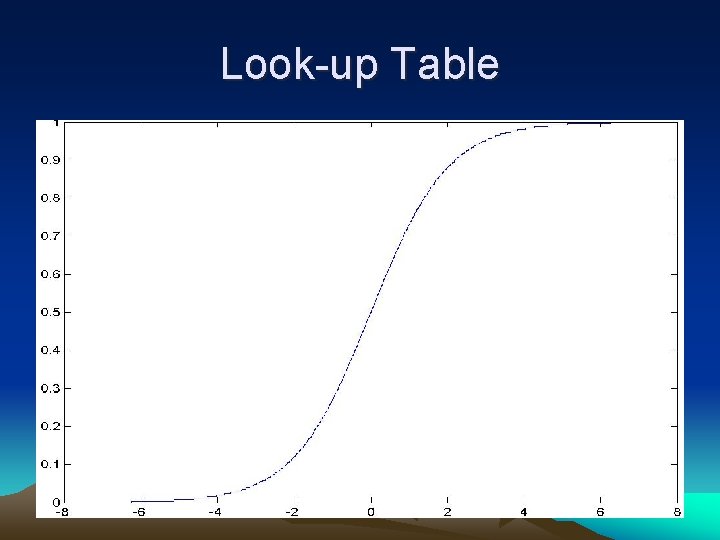

Look-up Table • Advantages – Unlimited Accuracy – Short Latency of 3 • Disadvantages – Desire entirely in chip design – Might use memory needed for weights

Look-up Table

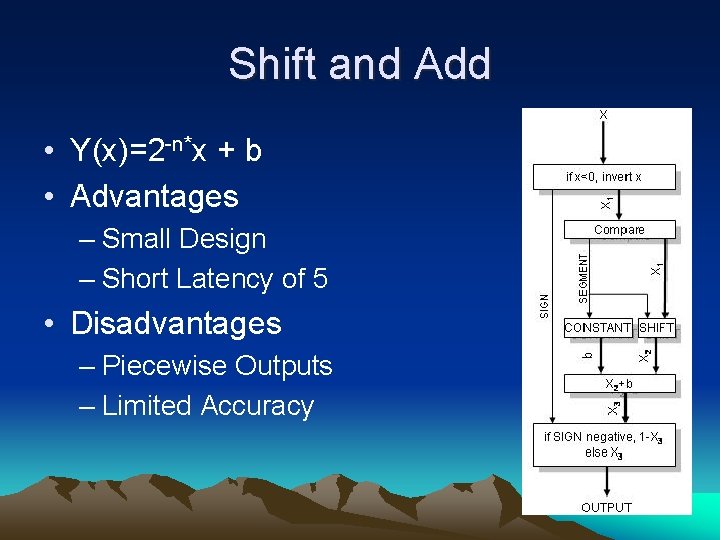

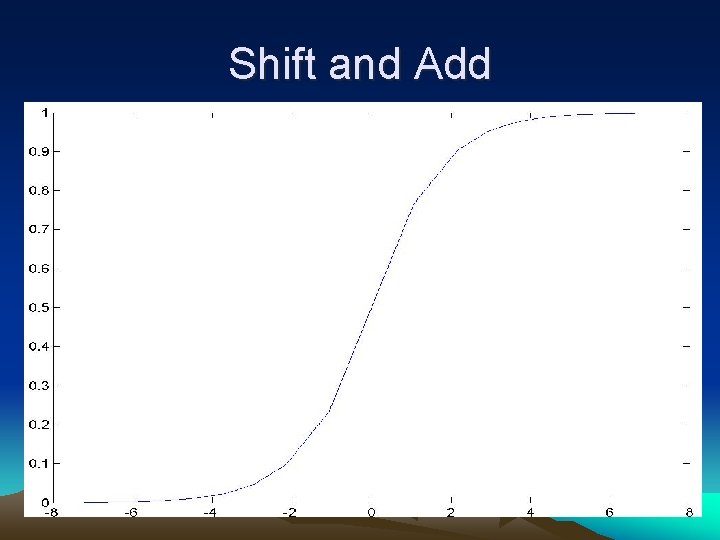

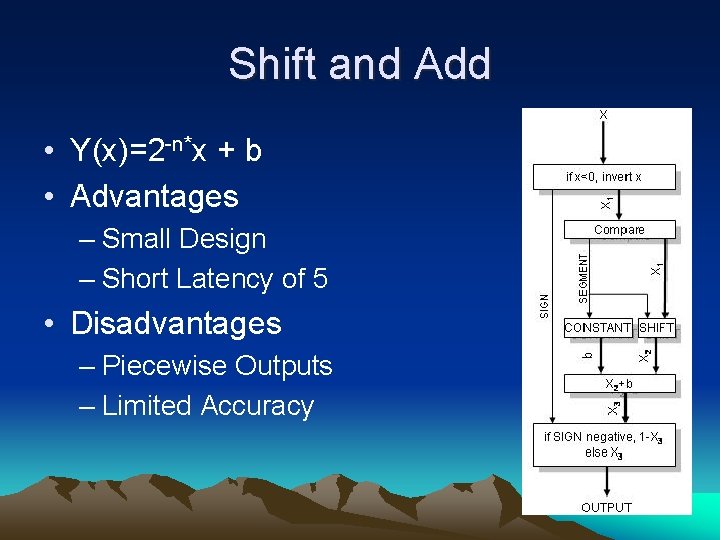

Shift and Add • Y(x)=2 -n*x + b • Advantages – Small Design – Short Latency of 5 • Disadvantages – Piecewise Outputs – Limited Accuracy

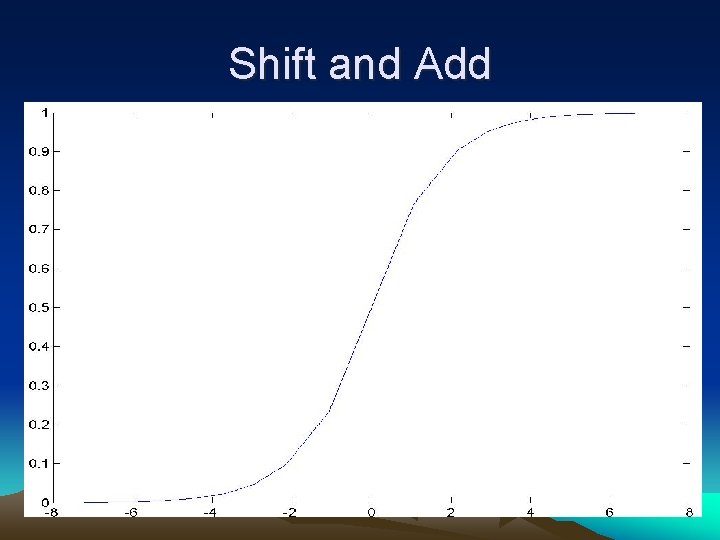

Shift and Add

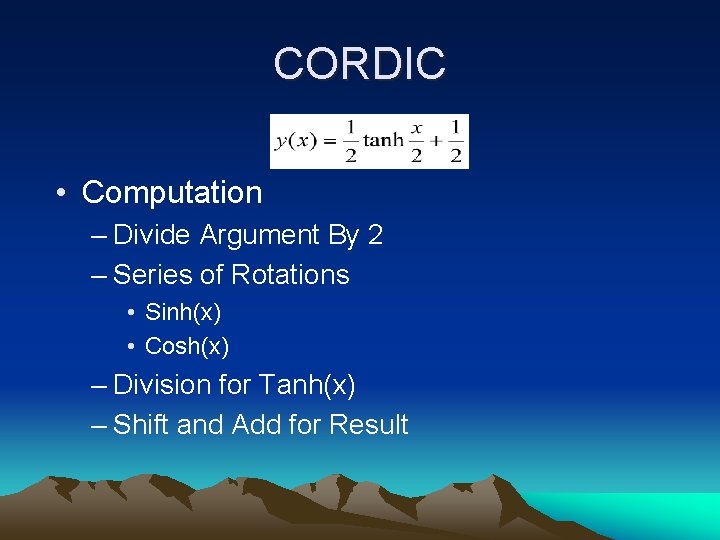

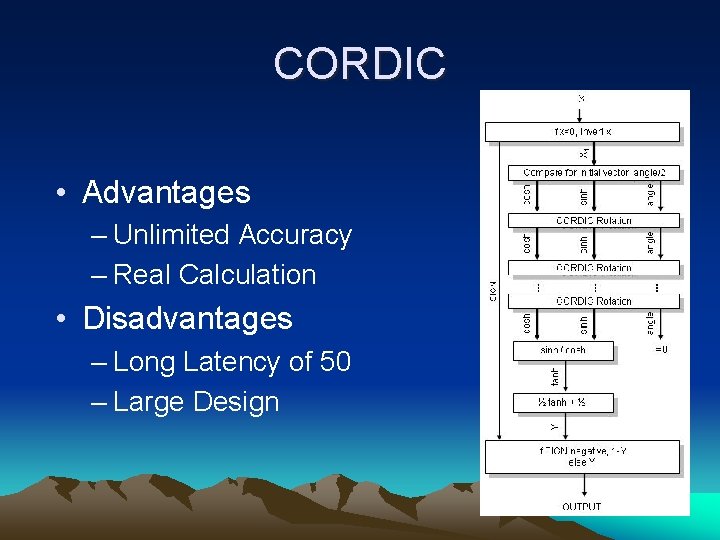

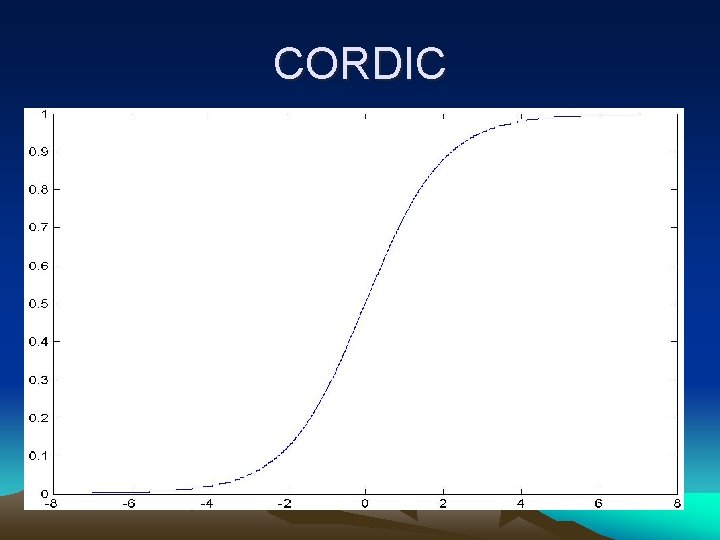

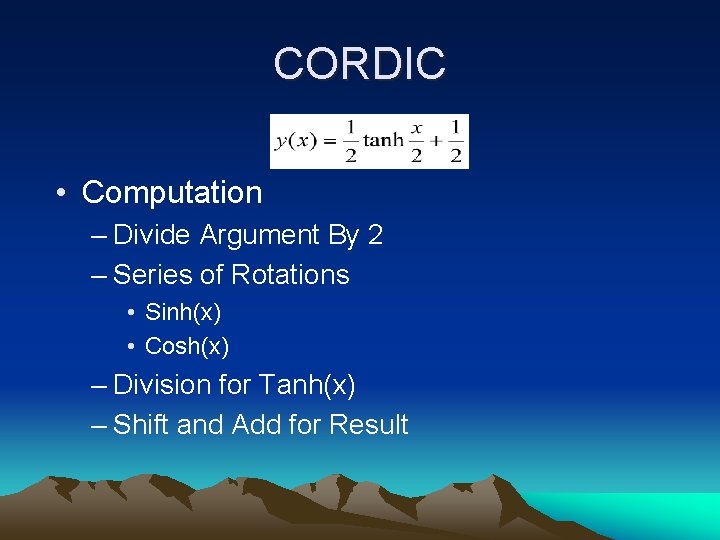

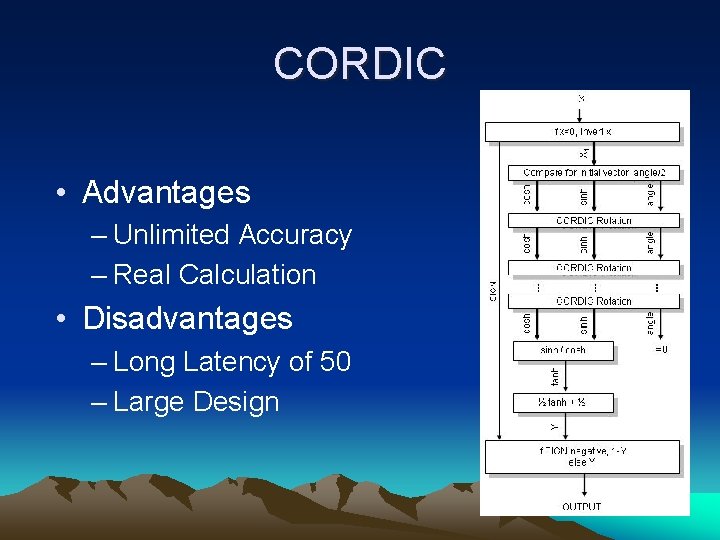

CORDIC • Computation – Divide Argument By 2 – Series of Rotations • Sinh(x) • Cosh(x) – Division for Tanh(x) – Shift and Add for Result

CORDIC • Advantages – Unlimited Accuracy – Real Calculation • Disadvantages – Long Latency of 50 – Large Design

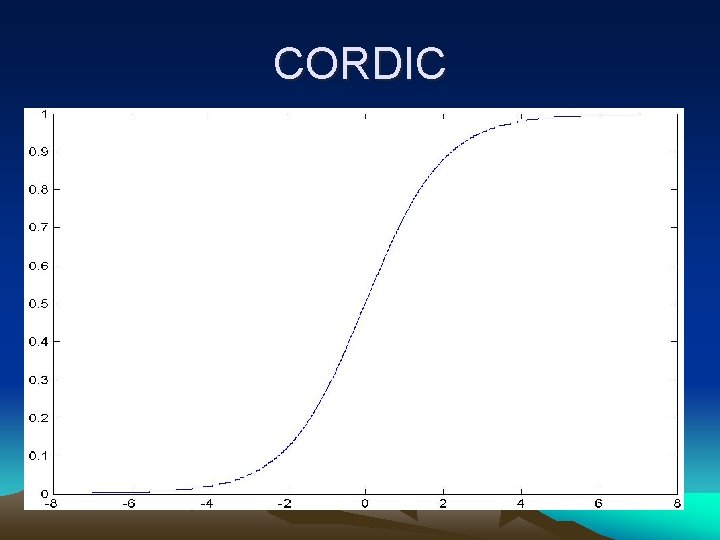

CORDIC

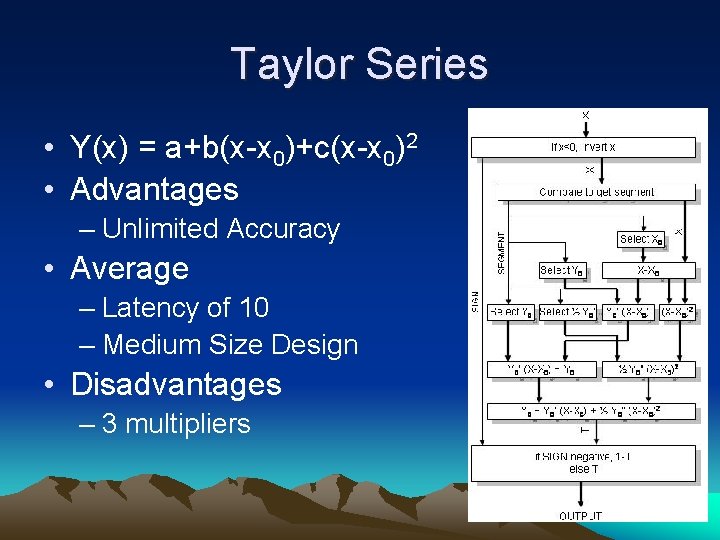

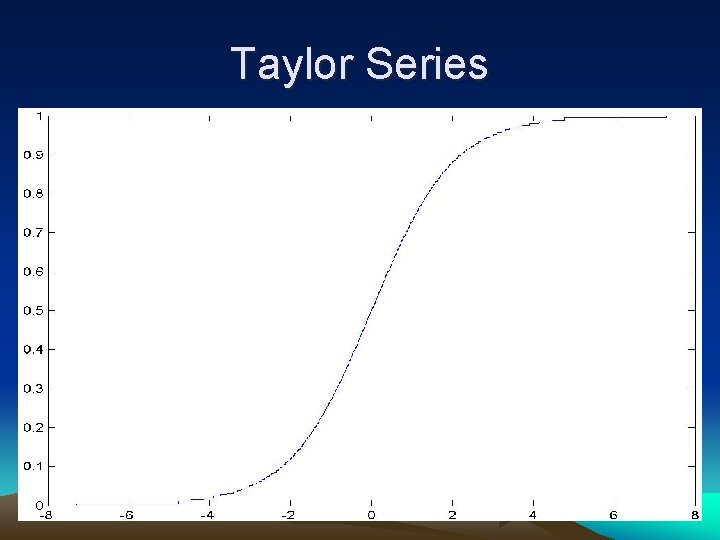

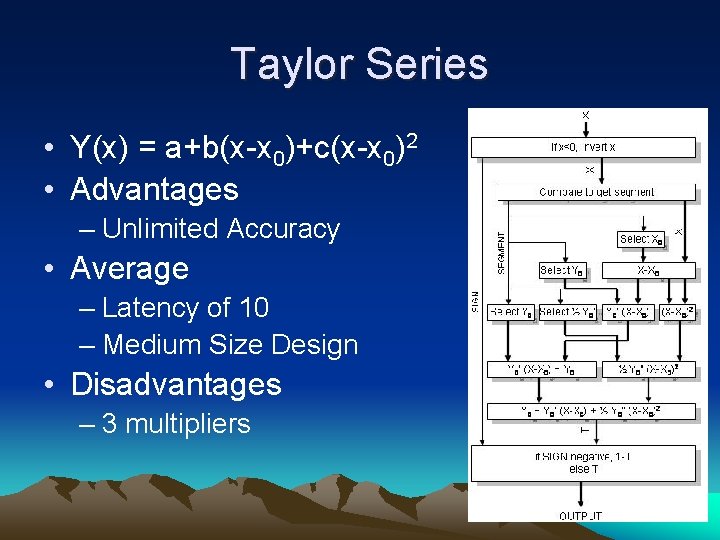

Taylor Series • Y(x) = a+b(x-x 0)+c(x-x 0)2 • Advantages – Unlimited Accuracy • Average – Latency of 10 – Medium Size Design • Disadvantages – 3 multipliers

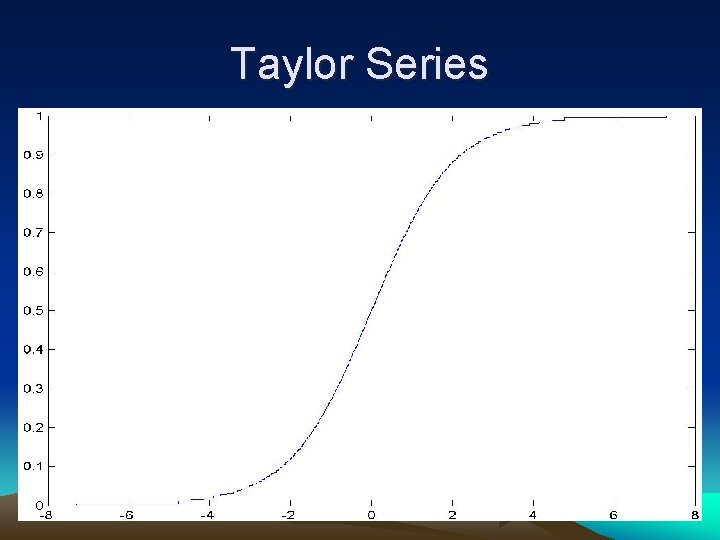

Taylor Series

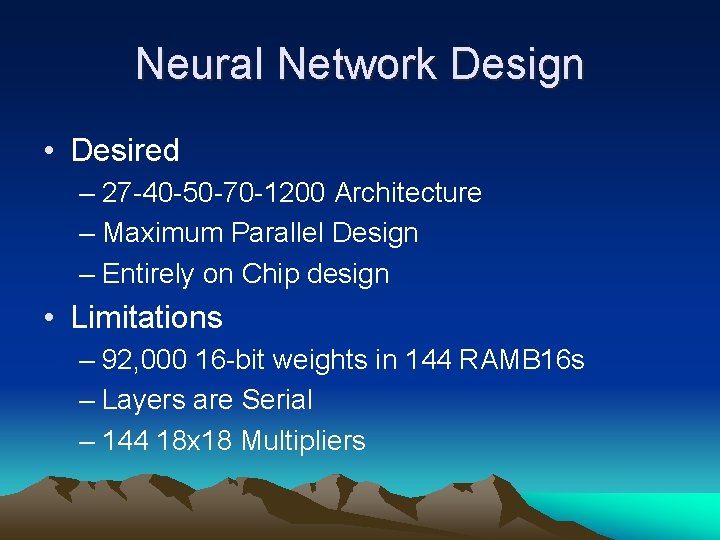

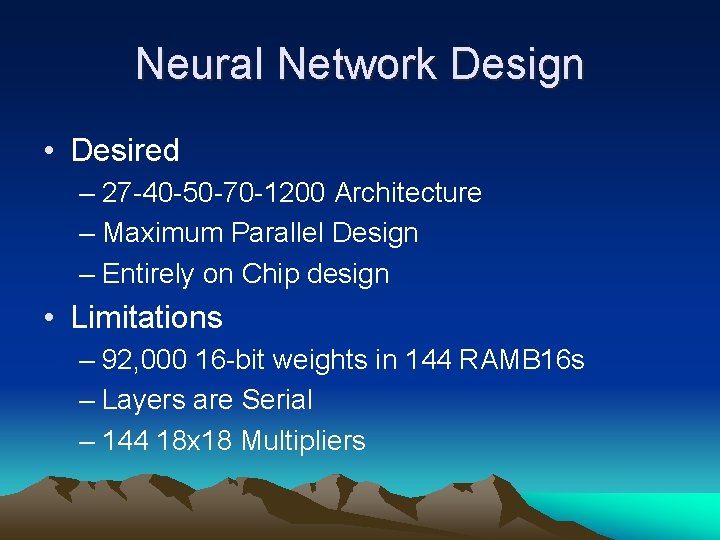

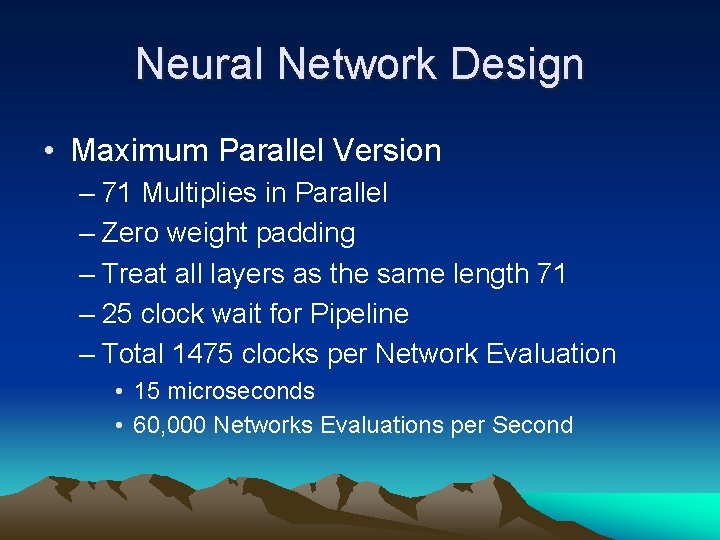

Neural Network Design • Desired – 27 -40 -50 -70 -1200 Architecture – Maximum Parallel Design – Entirely on Chip design • Limitations – 92, 000 16 -bit weights in 144 RAMB 16 s – Layers are Serial – 144 18 x 18 Multipliers

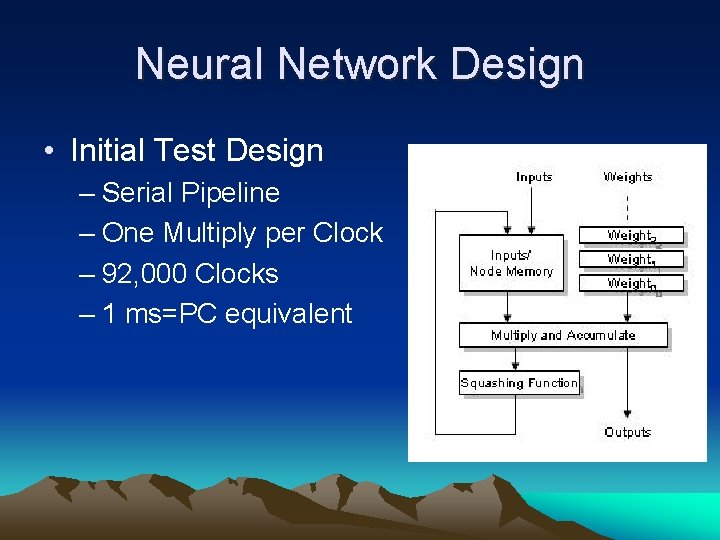

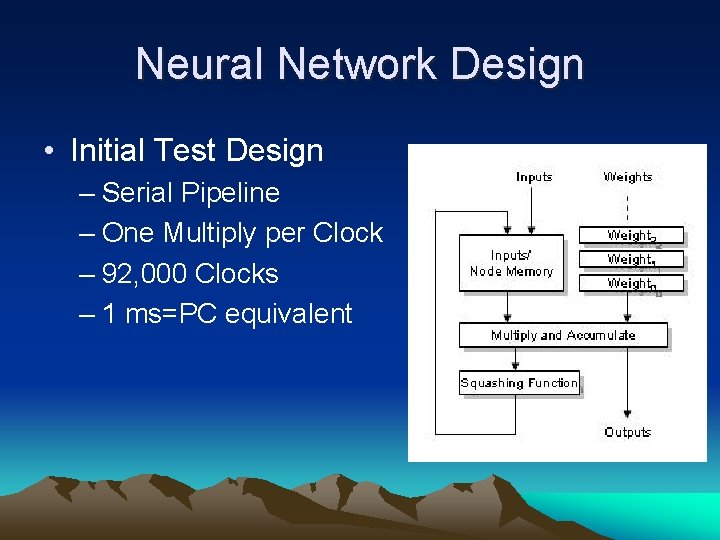

Neural Network Design • Initial Test Design – Serial Pipeline – One Multiply per Clock – 92, 000 Clocks – 1 ms=PC equivalent

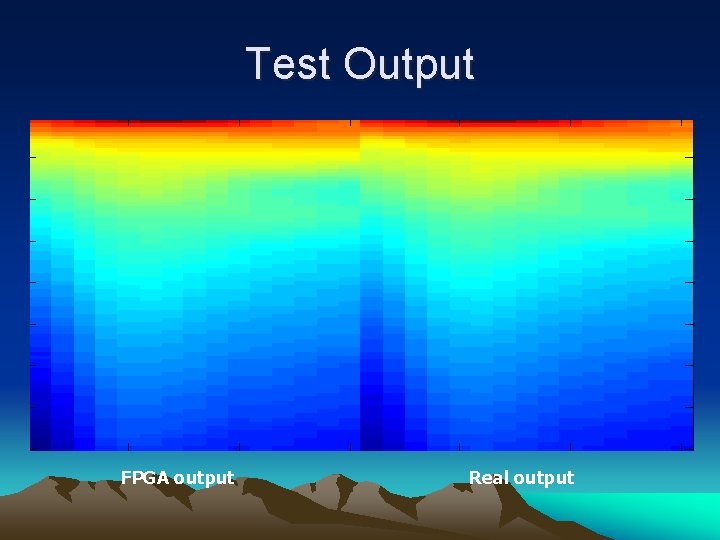

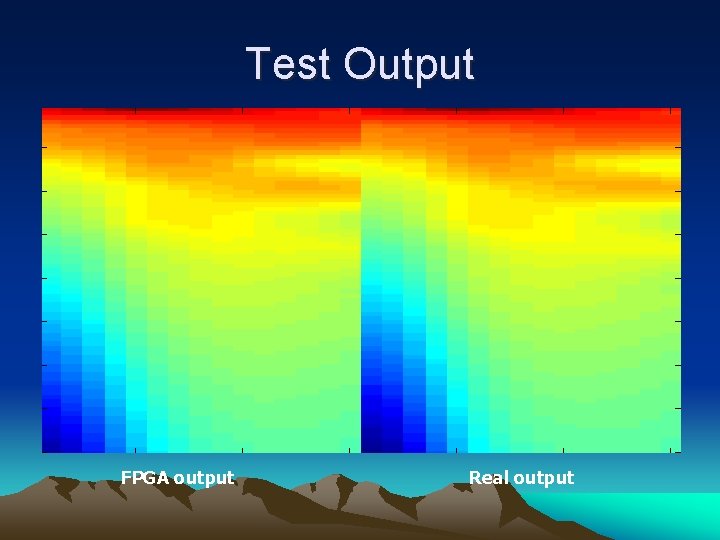

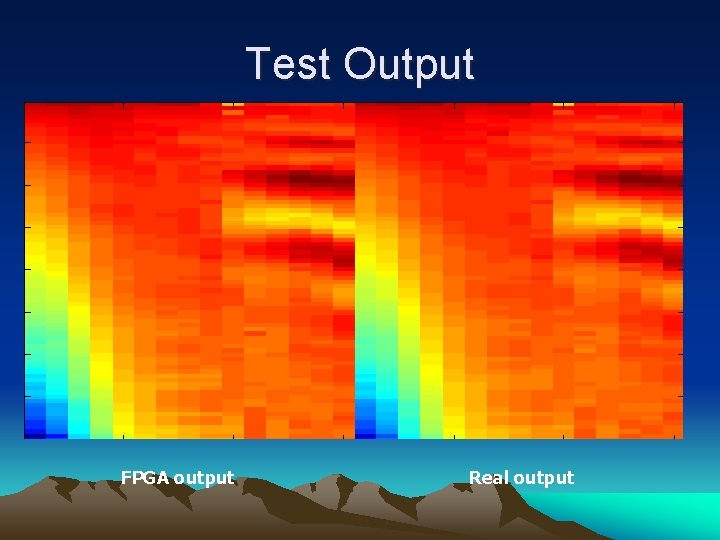

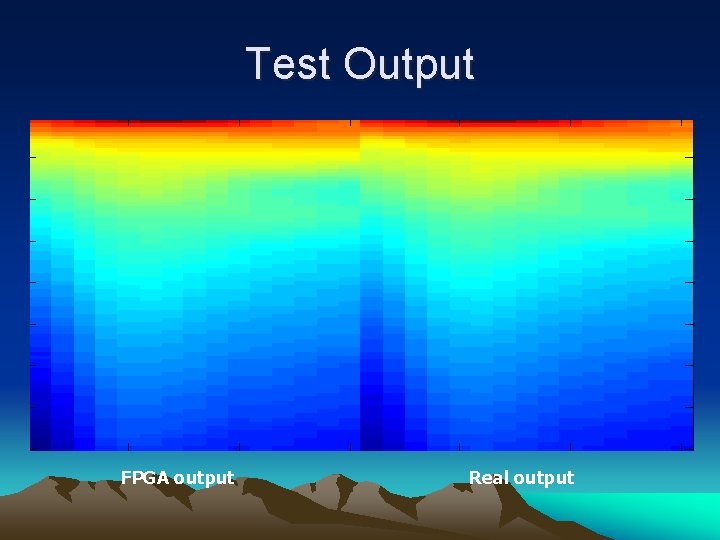

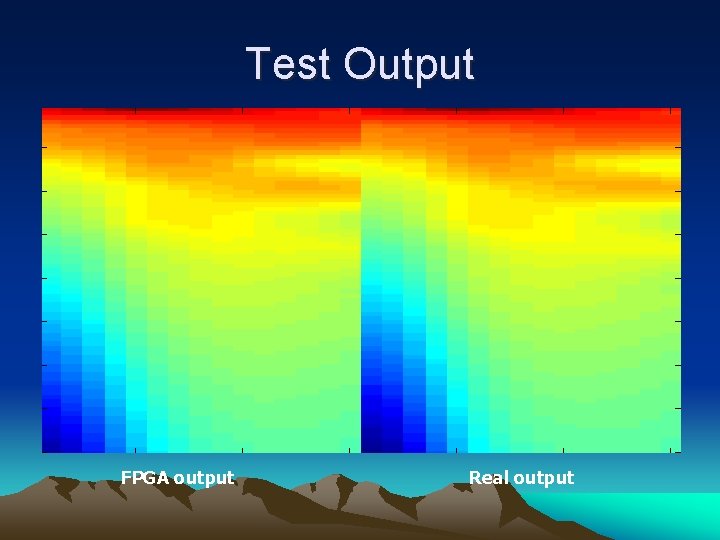

Test Output FPGA output Real output

Test Output FPGA output Real output

Test Output FPGA output Real output

Neural Network Design • Maximum Parallel Version – 71 Multiplies in Parallel – Zero weight padding – Treat all layers as the same length 71 – 25 clock wait for Pipeline – Total 1475 clocks per Network Evaluation • 15 microseconds • 60, 000 Networks Evaluations per Second

Neural Network Design

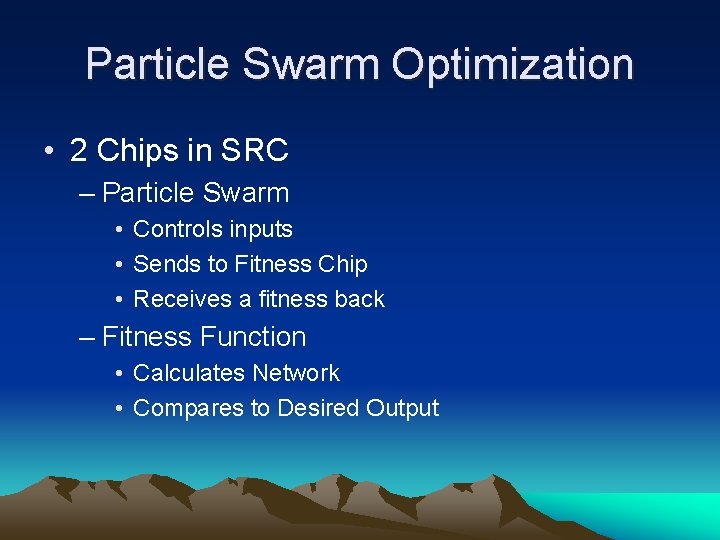

Particle Swarm Optimization • 2 Chips in SRC – Particle Swarm • Controls inputs • Sends to Fitness Chip • Receives a fitness back – Fitness Function • Calculates Network • Compares to Desired Output

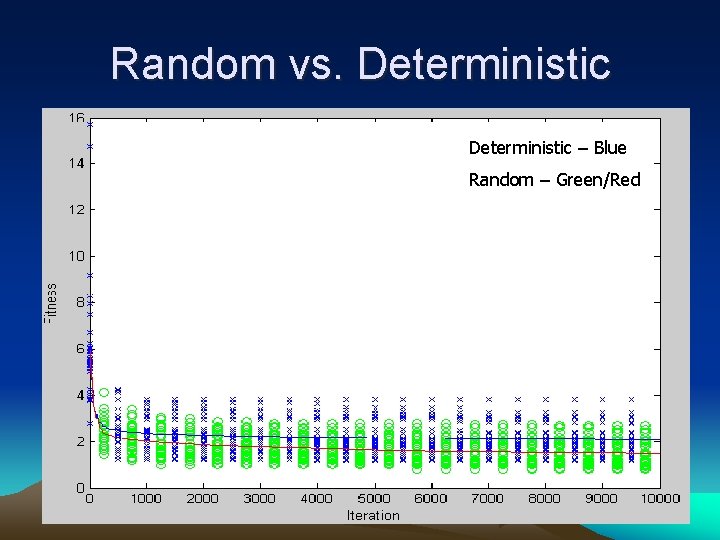

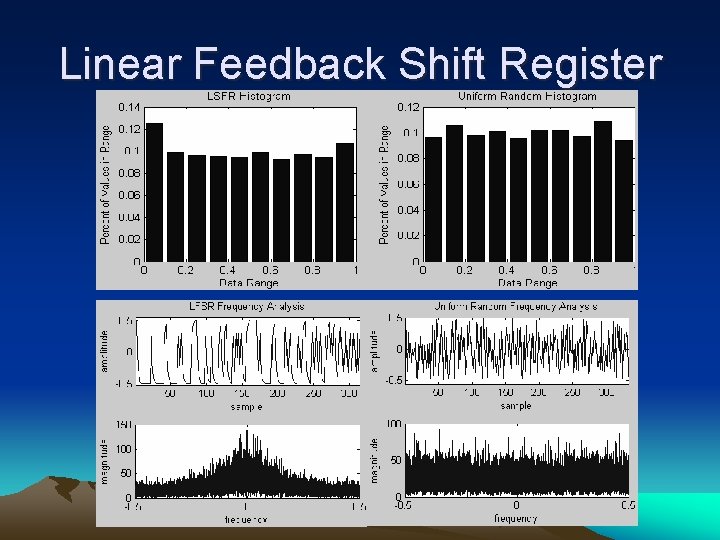

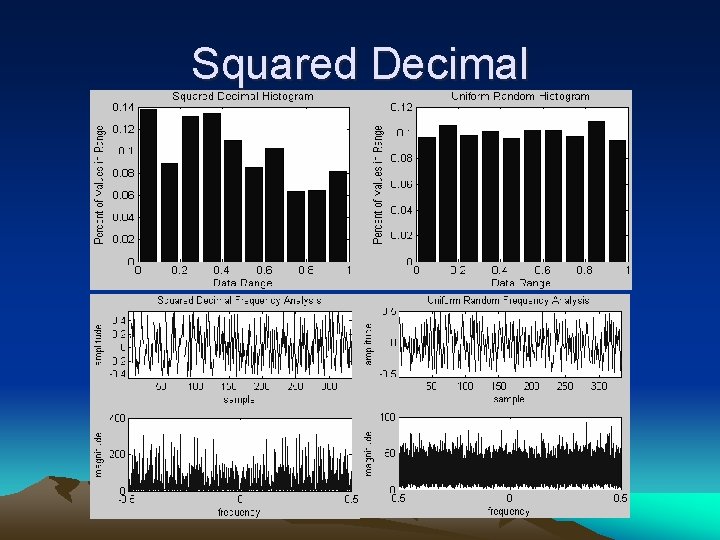

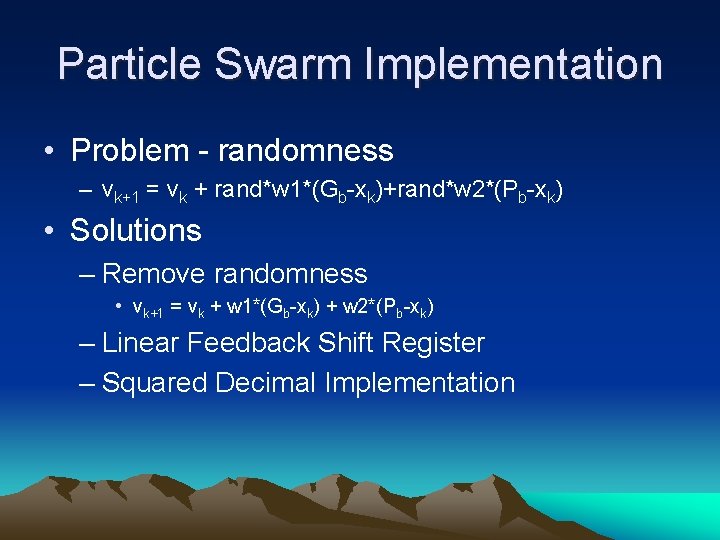

Particle Swarm Implementation • Problem - randomness – vk+1 = vk + rand*w 1*(Gb-xk)+rand*w 2*(Pb-xk) • Solutions – Remove randomness • vk+1 = vk + w 1*(Gb-xk) + w 2*(Pb-xk) – Linear Feedback Shift Register – Squared Decimal Implementation

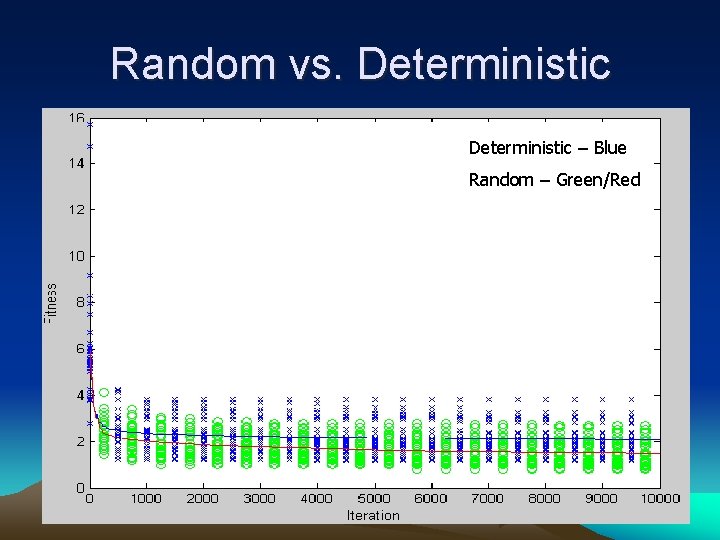

Random vs. Deterministic – Blue Random – Green/Red

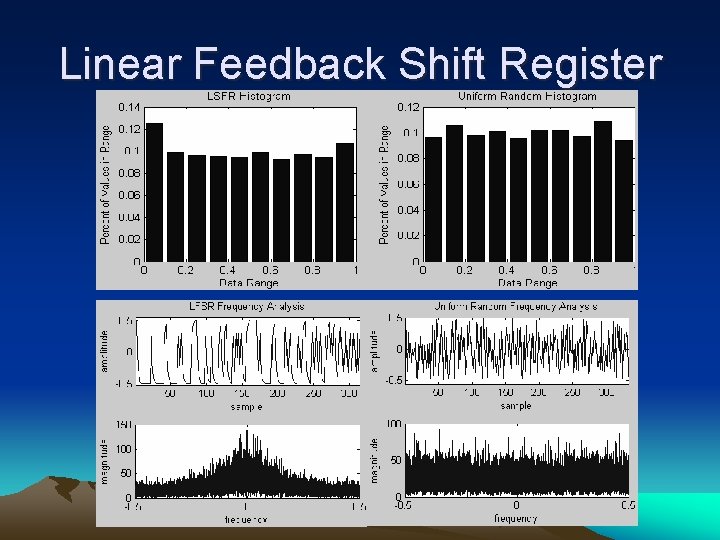

Linear Feedback Shift Register

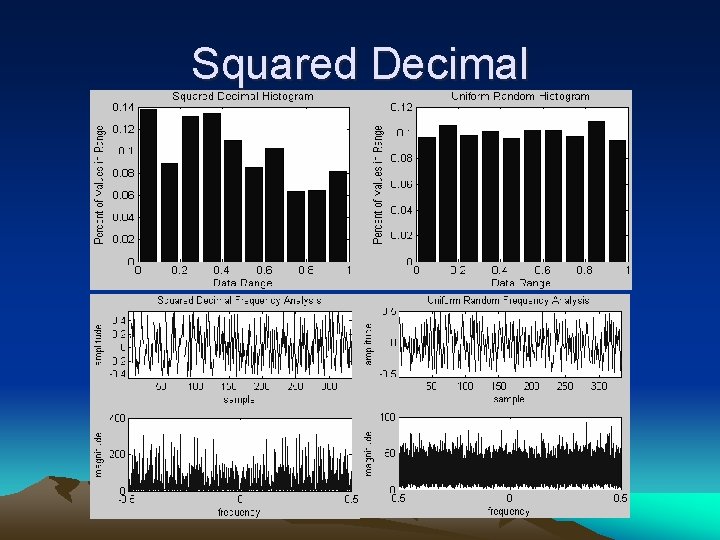

Squared Decimal

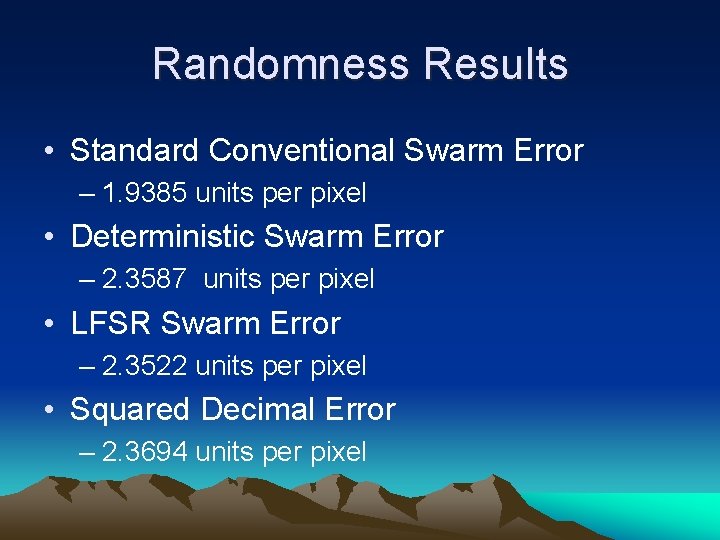

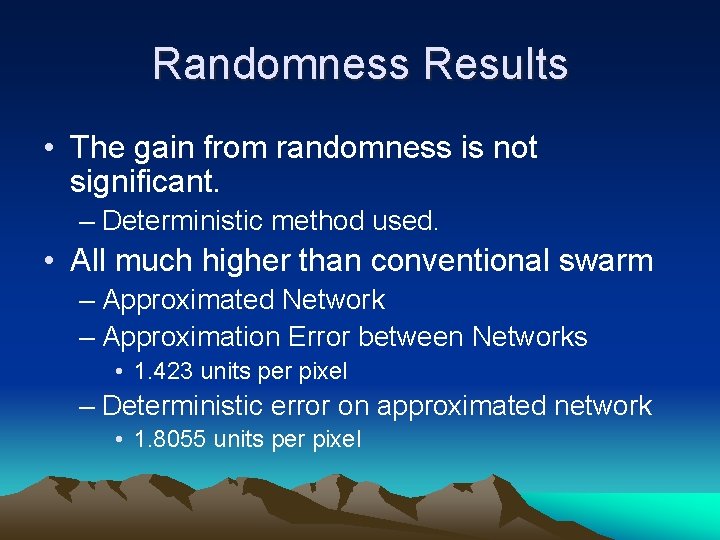

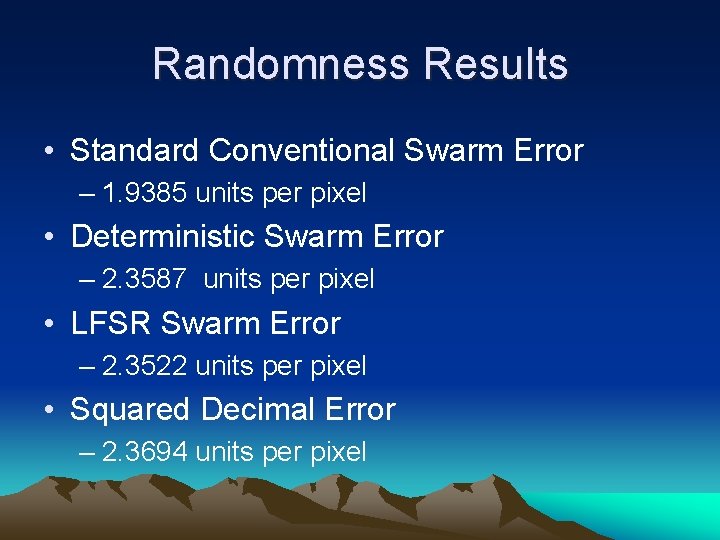

Randomness Results • Standard Conventional Swarm Error – 1. 9385 units per pixel • Deterministic Swarm Error – 2. 3587 units per pixel • LFSR Swarm Error – 2. 3522 units per pixel • Squared Decimal Error – 2. 3694 units per pixel

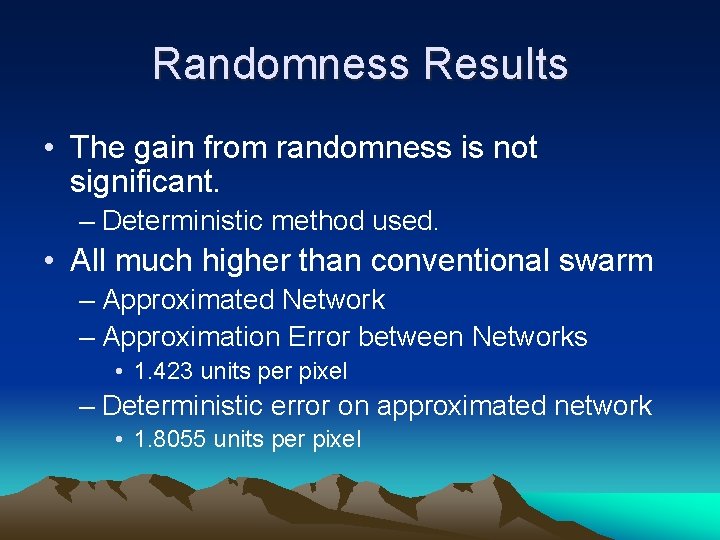

Randomness Results • The gain from randomness is not significant. – Deterministic method used. • All much higher than conventional swarm – Approximated Network – Approximation Error between Networks • 1. 423 units per pixel – Deterministic error on approximated network • 1. 8055 units per pixel

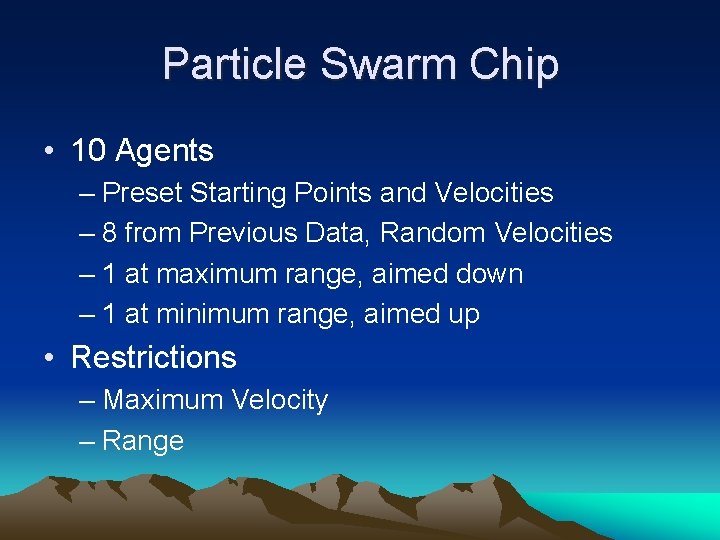

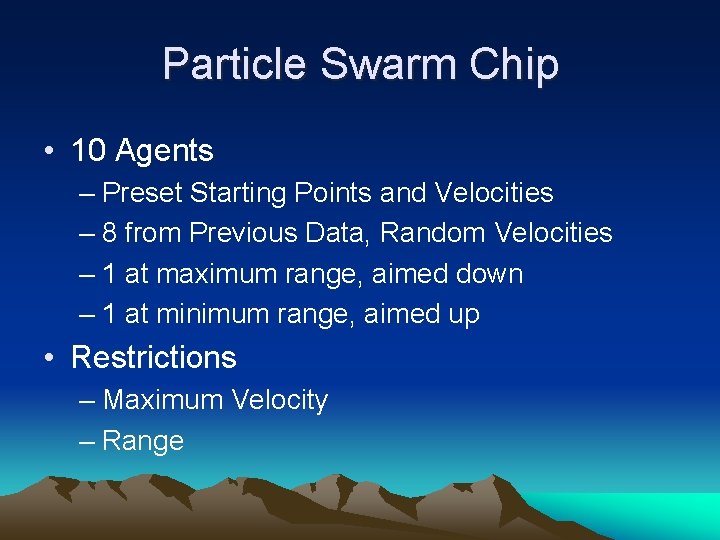

Particle Swarm Chip • 10 Agents – Preset Starting Points and Velocities – 8 from Previous Data, Random Velocities – 1 at maximum range, aimed down – 1 at minimum range, aimed up • Restrictions – Maximum Velocity – Range

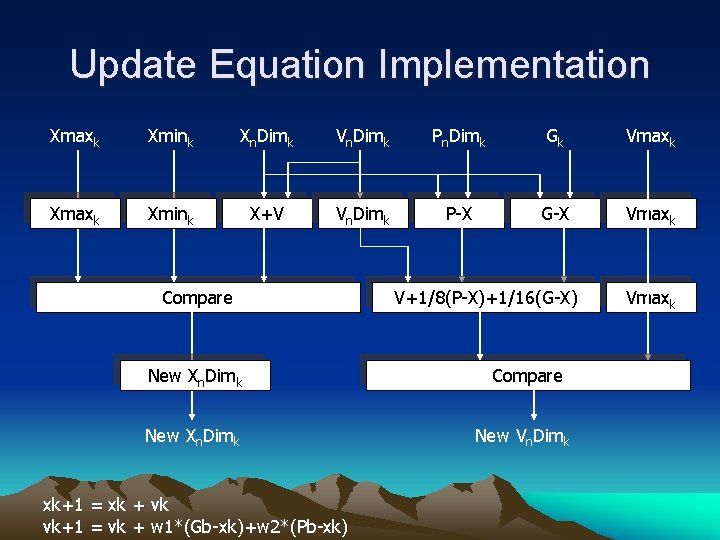

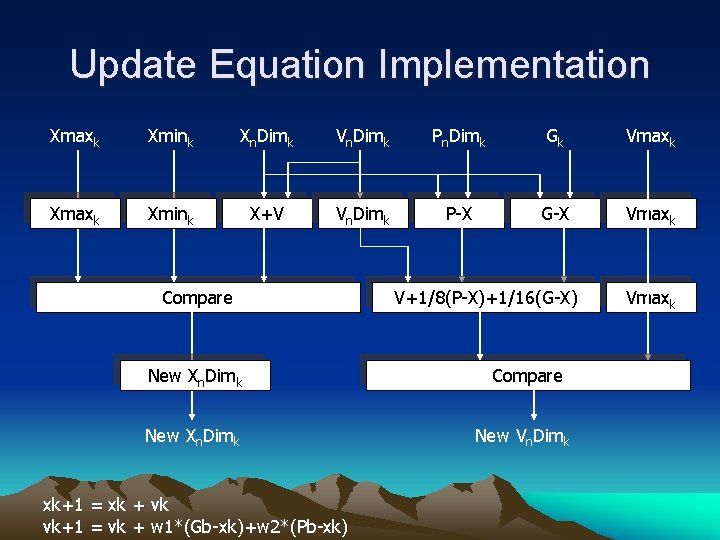

Update Equation Implementation Xmaxk Xmink Xn. Dimk Vn. Dimk Pn. Dimk Gk Vmaxk Xmink X+V Vn. Dimk P-X G-X Vmaxk Compare V+1/8(P-X)+1/16(G-X) New Xn. Dimk Compare New Xn. Dimk New Vn. Dimk xk+1 = xk + vk vk+1 = vk + w 1*(Gb-xk)+w 2*(Pb-xk) Vmaxk

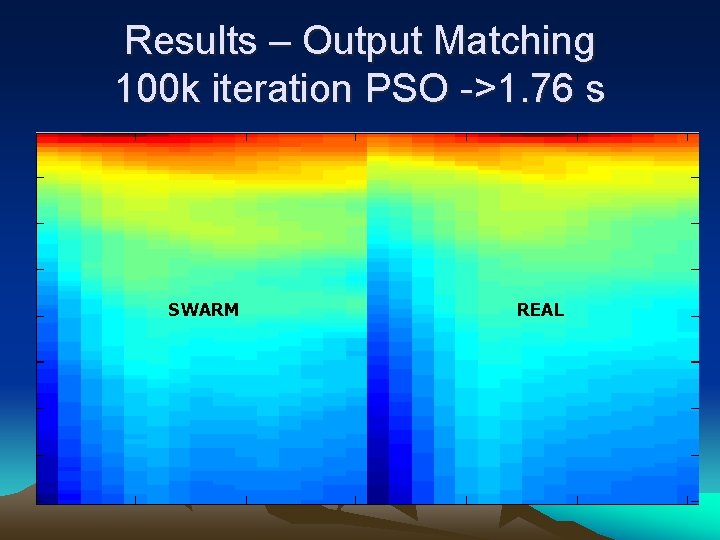

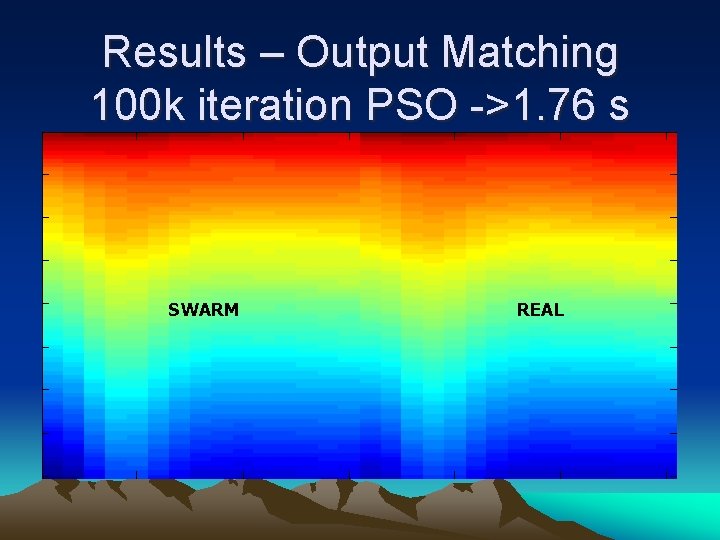

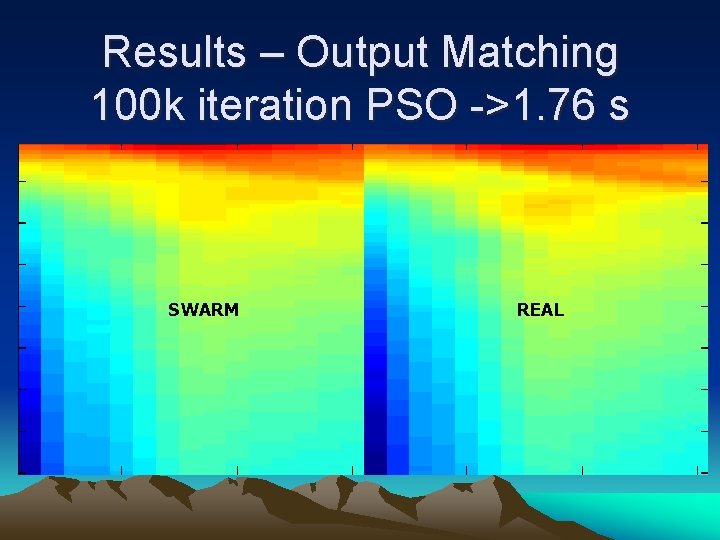

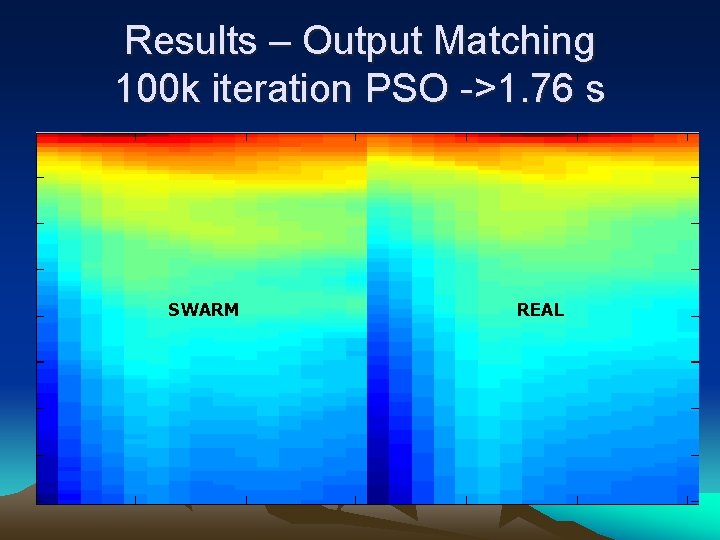

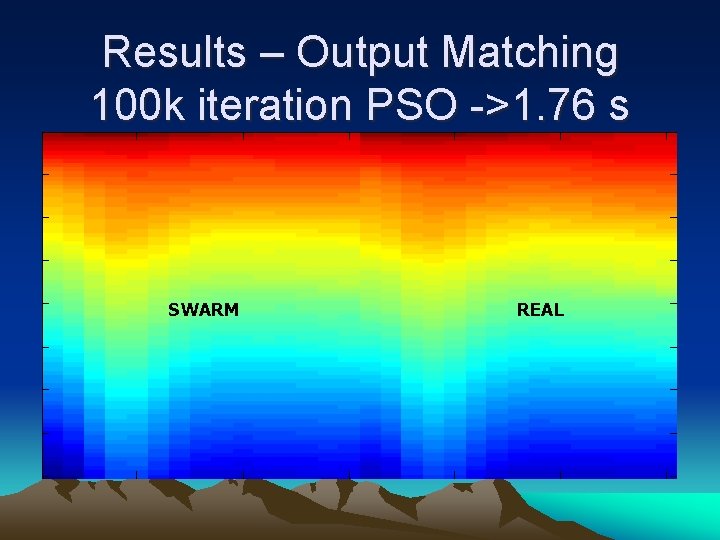

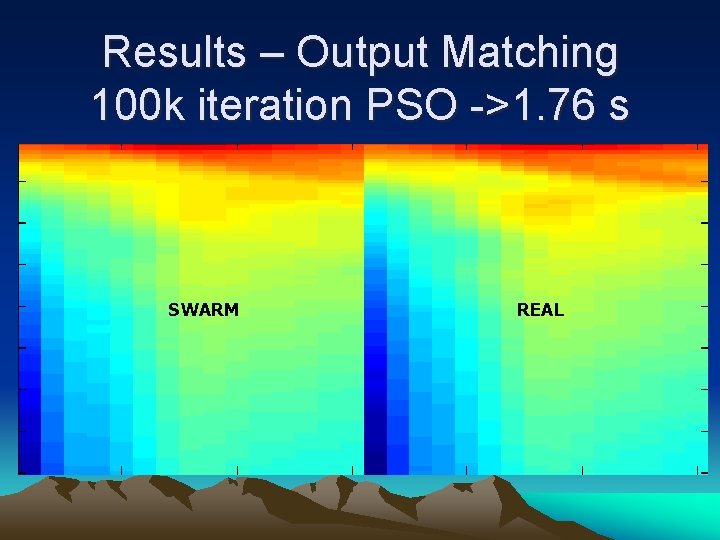

Results – Output Matching 100 k iteration PSO ->1. 76 s SWARM REAL

Results – Output Matching 100 k iteration PSO ->1. 76 s SWARM REAL

Results – Output Matching 100 k iteration PSO ->1. 76 s SWARM REAL

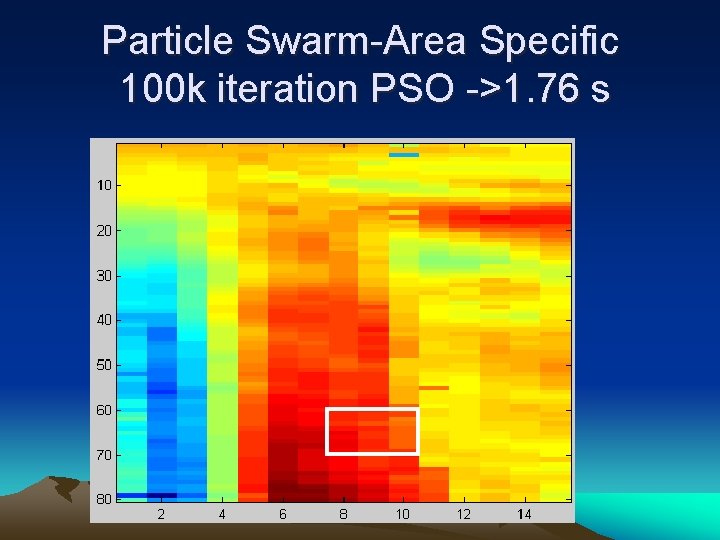

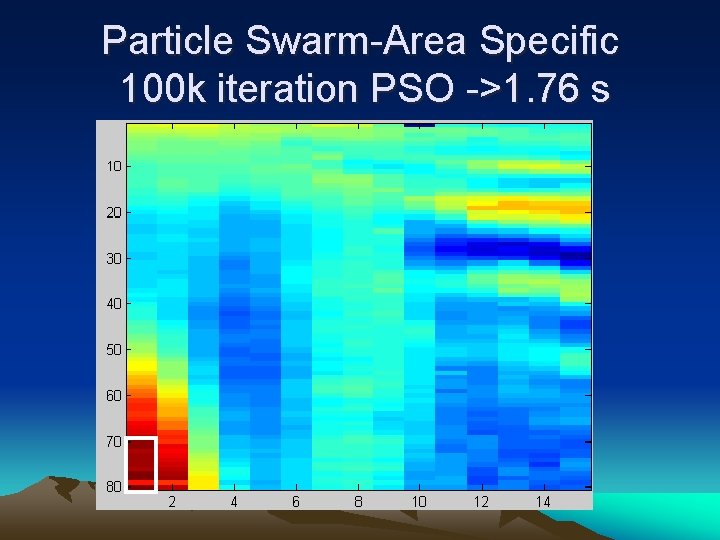

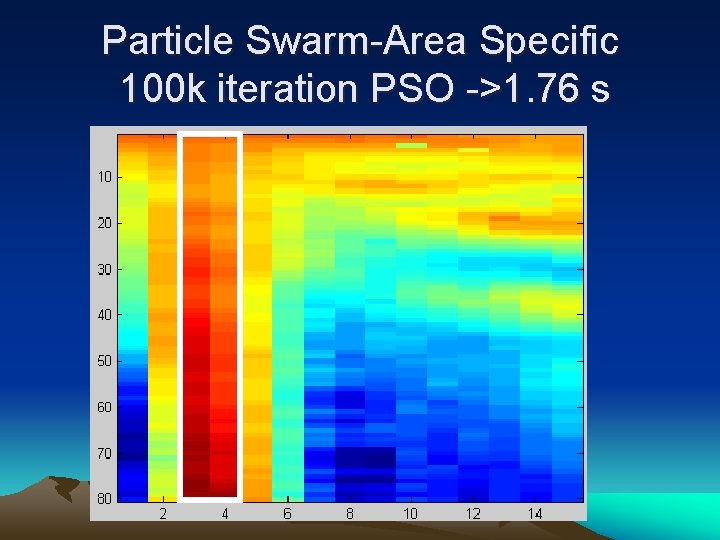

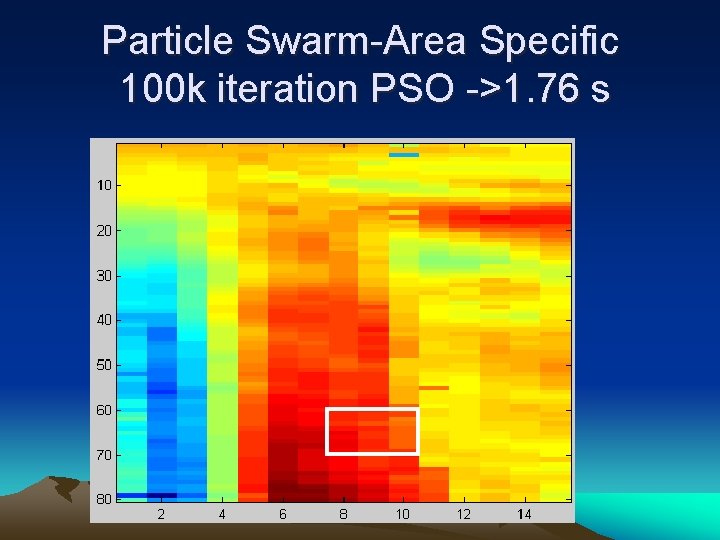

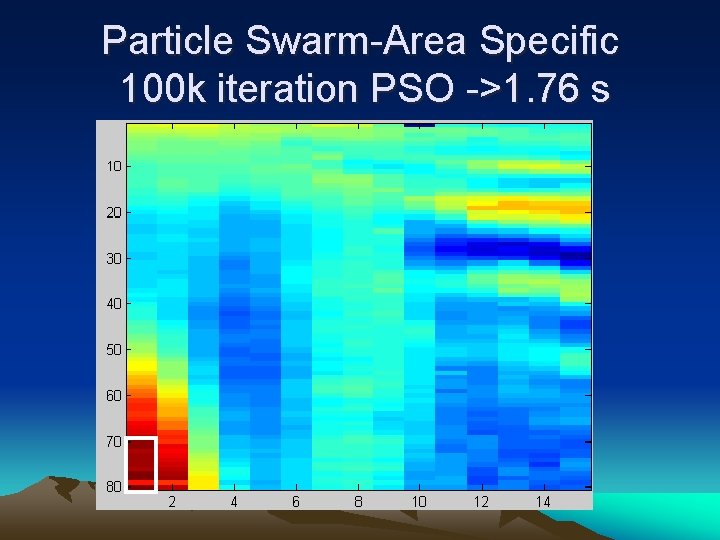

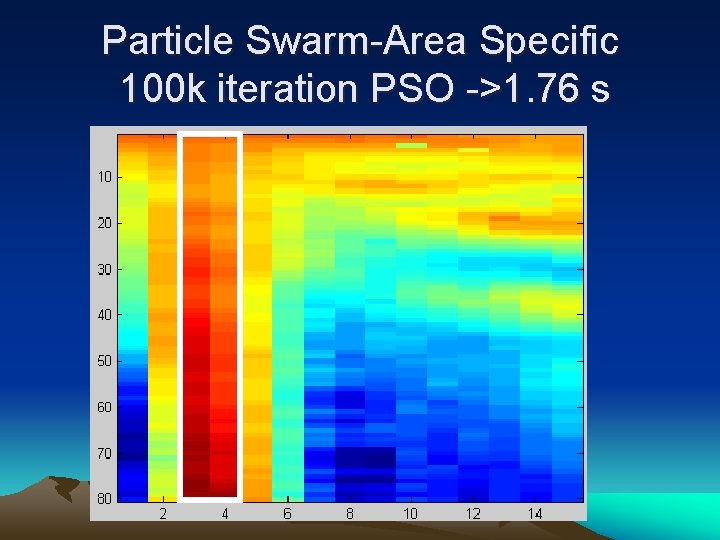

Particle Swarm-Area Specific 100 k iteration PSO ->1. 76 s

Particle Swarm-Area Specific 100 k iteration PSO ->1. 76 s

Particle Swarm-Area Specific 100 k iteration PSO ->1. 76 s

ANY QUESTIONS?