Algorithm Design Techniques Greedy Method Knapsack Problem Job

Algorithm Design Techniques, Greedy Method – Knapsack Problem, Job Sequencing, Divide and Conquer Method – Quick Sort, Finding Maximum and Minimum, Dynamic Programming - Matrix chain multiplication

INTRODUCTION � What is an algorithm? Algorithm is a set of steps to complete a task. � For example, Task: to make a cup of tea. Algorithm: 1. add water and milk to the kettle, 2. Boil it, add tea powder, 3. Add sugar, and then serve it in cup.

What is Computer algorithm � ‘’a set of steps to accomplish or complete a task that is described precisely enough that a computer can run it’’. � Characteristics of an algorithm: - 1. 2. 3. 4. 5. 6. Must take an input. Must give some output (yes/no, value etc. ) Definiteness –each instruction is clear and unambiguous. Finiteness –algorithm terminates after a finite number of steps. Effectiveness –every instruction must be basic i. e. simple instruction. Eg: algorithm to sort a set of values

Given two algorithms for a task, how do we find out which one is better? � � � One naive way of doing this is – implement both the algorithms and run the two programs on your computer for different inputs and see which one takes less time. There are many problems with this approach for analysis of algorithms. 1) It might be possible that for some inputs, first algorithm performs better than the second. And for some inputs second performs better. 2) It might also be possible that for some inputs, first algorithm perform better on one machine and the second works better on other machine for some other inputs.

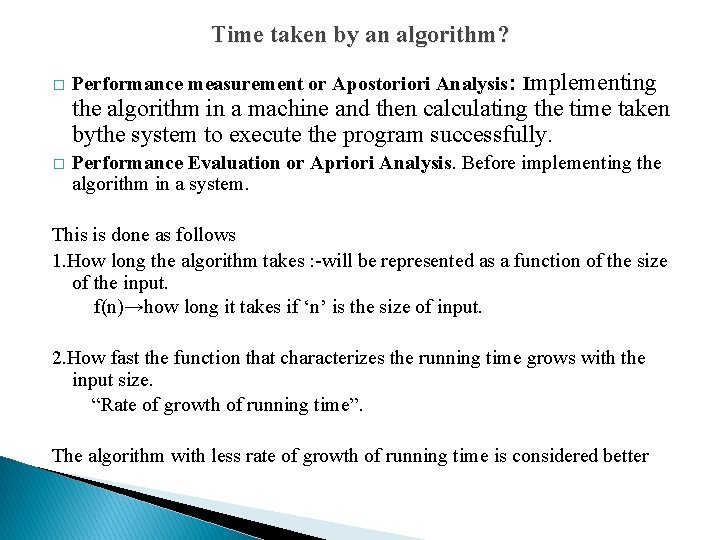

Time taken by an algorithm? � Performance measurement or Apostoriori Analysis: Implementing the algorithm in a machine and then calculating the time taken bythe system to execute the program successfully. � Performance Evaluation or Apriori Analysis. Before implementing the algorithm in a system. This is done as follows 1. How long the algorithm takes : -will be represented as a function of the size of the input. f(n)→how long it takes if ‘n’ is the size of input. 2. How fast the function that characterizes the running time grows with the input size. “Rate of growth of running time”. The algorithm with less rate of growth of running time is considered better

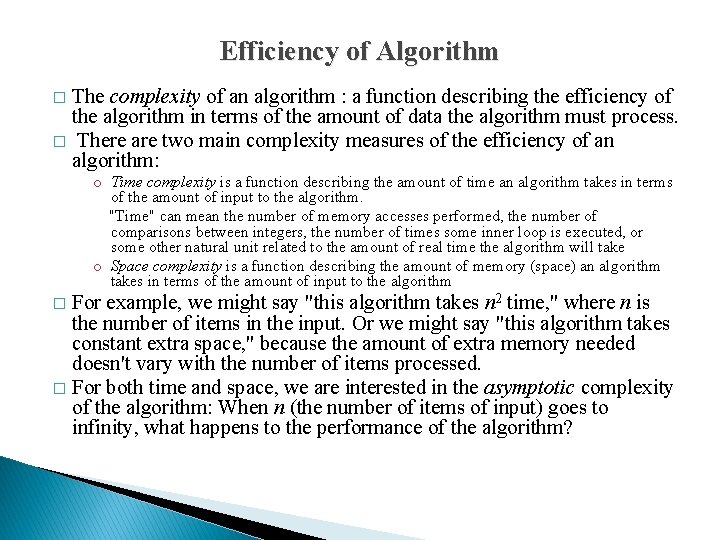

Efficiency of Algorithm The complexity of an algorithm : a function describing the efficiency of the algorithm in terms of the amount of data the algorithm must process. � There are two main complexity measures of the efficiency of an algorithm: � o Time complexity is a function describing the amount of time an algorithm takes in terms of the amount of input to the algorithm. "Time" can mean the number of memory accesses performed, the number of comparisons between integers, the number of times some inner loop is executed, or some other natural unit related to the amount of real time the algorithm will take o Space complexity is a function describing the amount of memory (space) an algorithm takes in terms of the amount of input to the algorithm For example, we might say "this algorithm takes n 2 time, " where n is the number of items in the input. Or we might say "this algorithm takes constant extra space, " because the amount of extra memory needed doesn't vary with the number of items processed. � For both time and space, we are interested in the asymptotic complexity of the algorithm: When n (the number of items of input) goes to infinity, what happens to the performance of the algorithm? �

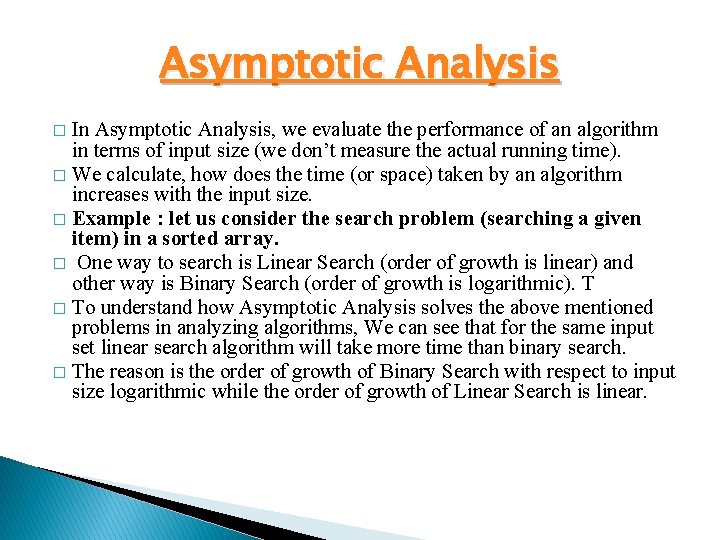

Asymptotic Analysis In Asymptotic Analysis, we evaluate the performance of an algorithm in terms of input size (we don’t measure the actual running time). � We calculate, how does the time (or space) taken by an algorithm increases with the input size. � Example : let us consider the search problem (searching a given item) in a sorted array. � One way to search is Linear Search (order of growth is linear) and other way is Binary Search (order of growth is logarithmic). T � To understand how Asymptotic Analysis solves the above mentioned problems in analyzing algorithms, We can see that for the same input set linear search algorithm will take more time than binary search. � The reason is the order of growth of Binary Search with respect to input size logarithmic while the order of growth of Linear Search is linear. �

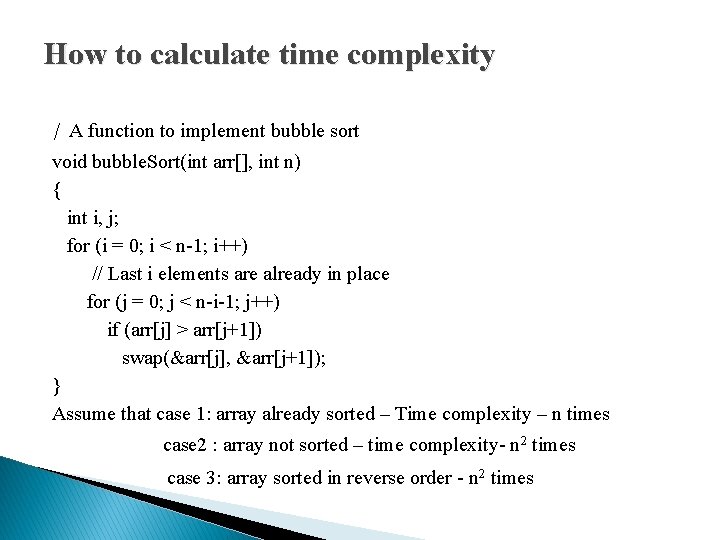

How to calculate time complexity / A function to implement bubble sort void bubble. Sort(int arr[], int n) { int i, j; for (i = 0; i < n-1; i++) // Last i elements are already in place for (j = 0; j < n-i-1; j++) if (arr[j] > arr[j+1]) swap(&arr[j], &arr[j+1]); } Assume that case 1: array already sorted – Time complexity – n times case 2 : array not sorted – time complexity- n 2 times case 3: array sorted in reverse order - n 2 times

Analysis of Algorithm � � We can have three cases to analyze an algorithm: 1) Worst Case 2) Average Case 3) Best Case Worst Case Analysis (Usually Done) In the worst case analysis, we calculate upper bound on running time of an algorithm. We must know the case that causes maximum number of operations to be executed. Average Case Analysis (Sometimes done) In average case analysis, we take all possible inputs and calculate computing time for all of the inputs. Sum all the calculated values and divide the sum by total number of inputs. We must know (or predict) distribution of cases. Best Case Analysis (Bogus) In the best case analysis, we calculate lower bound on running time of an algorithm. We must know the case that causes minimum number of operations to be executed.

Asymptotic notations � Asymptotic notations are mathematical tools to represent time complexity of algorithms for asymptotic analysis. � The following 3 asymptotic notations are mostly used to represent time complexity of algorithms. 1) Θ Notation 2) Big O Notation 3) Ω Notation Assignment: Upload notes on Asymptotic Notations with example (http: //ignou. ac. in/userfiles/Unit 2 finalversion_Asymptotic%20 Bouneds. pdf)

Asymptotic notations � O(expression) is the set of functions that grow slower than or at the same rate as expression. � Omega(expression) is the set of functions that grow faster than or at the same rate as expression. � Theta(expression) consist of all the functions that lie in both O(expression) and Omega(expression).

Amortized Analysis 6. Amortized Cost Analysis Time Analysis Demo – You. Tube ▶ 10: 08 : https: //www. youtube. com/watch? v=uso 8 zq 5 Ztz. Q � is used for algorithms where an occasional operation is very slow, but most of the other operations are faster. � In Amortized Analysis, we analyze a sequence of operations and guarantee a worst case average time which is lower than the worst case time of a particular expensive operation. � The example data structures whose operations are analyzed using Amortized Analysis are Hash Tables, Disjoint Sets and Splay Trees. � In an amortized analysis, the time required to perform a sequence of data structure operations is average over all operation performed.

Amortized Analysis, � Let us consider an example of a simple hash table insertions. How do we decide table size? -- There is a trade-off between space and time, if we make hash-table size big, search time becomes fast, but space required becomes high. � The solution to this trade-off problem is to use Dynamic Table (or Arrays). o The idea is to increase size of table whenever it becomes full. o Following are the steps to follow when table becomes full. 1) Allocate memory for a larger table of size, typically twice the old table. 2) Copy the contents of old table to new table. 3) Free the old table. o If the table has space available, we simply insert new item in available space.

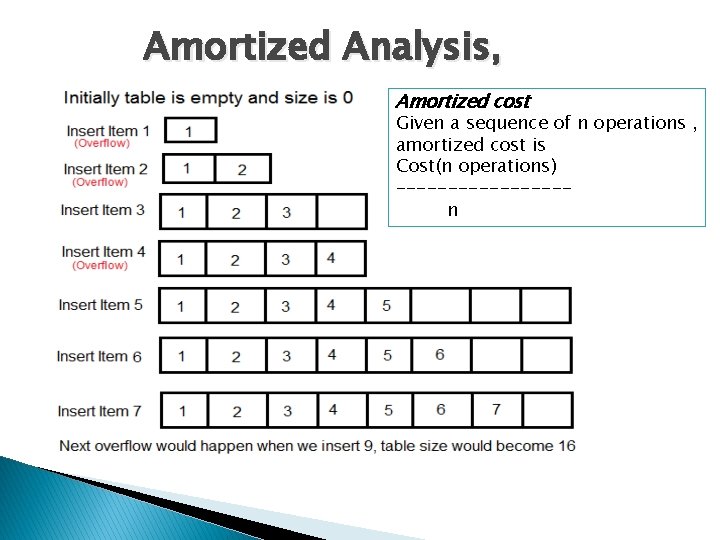

Amortized Analysis, Amortized cost Given a sequence of n operations , amortized cost is Cost(n operations) --------n

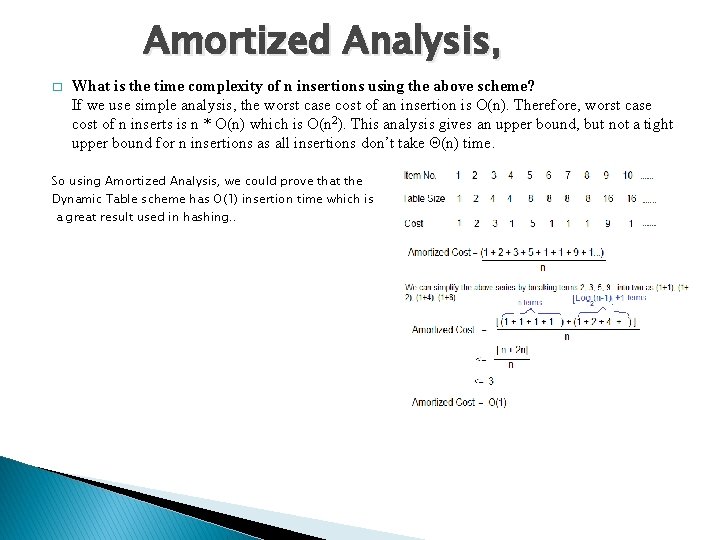

Amortized Analysis, � What is the time complexity of n insertions using the above scheme? If we use simple analysis, the worst case cost of an insertion is O(n). Therefore, worst case cost of n inserts is n * O(n) which is O(n 2). This analysis gives an upper bound, but not a tight upper bound for n insertions as all insertions don’t take Θ(n) time. So using Amortized Analysis, we could prove that the Dynamic Table scheme has O(1) insertion time which is a great result used in hashing. .

Amortized Analysis � Three main techniques used for amortized analysis: 1. 2. 3. The aggregate method, where the total running time for a sequence of operations is analyzed. The accounting (or banker's) method, where we impose an extra charge on inexpensive operations and use it to pay for expensive operations later on. The potential (or physicist's) method, in which we derive a potential function characterizing the amount of extra work we can do in each step. This potential either increases or decreases with each successive operation, but cannot be negative.

Amortized Analysis - aggregate method The aggregate method: determines an upper bound T(n)on the total cost of a sequence of N operations. The amortized cost per operation is then T(n)=n The accounting method: overcharges some operations n early in the sequence, storing the overcharge as “prepaid credit” on specific objects in the data structure. The credit is used later in the sequence to pay for operations that are charged less than they actually cost. The potential method: determines the amortized cost of each operation (like the accounting method) and may overcharge operations early on to compensate for undercharges later. This method maintains the credit as the potential energy of the data structure instead of associating the credit with individual objects within the data.

Implement a QUEUE with two STACKs having constant a mortized cost for each QUEUE operation Name the two STACKs as Stack 1 and Stack 2, we can implement the QUEUE s follows: • ENQUEUE(x): PUSH x into Stack DEQUEUE(x): If Stack 2 is not empty, then simply POP from Stack 2 and return the element. If Stack 2 is empty, POP all the elements of Stack 1, PUSH them into Stack 2, then POP from tack 2 and return the result. B)Choose any two from the three methods to prove the amortized cost or each QUEUE operation is O(1) • Aggregate method Consider a sequence of n operations. The sequence of operations will involve at most n lements. The cost associated with each element will be at most 4 i. e. (pushed into Stack 1 , popped from Stack 1, pushed to Stack 2, and popped from Stack 2). Hence, the actual cost of n operations will be upper bounded by T(n)= 4 n. Hence, the amortized cost of each operation can be T(n)/n = 4 n / n = 4 = O(1).

- Slides: 18