A-JUMP Message Passing Framework for Globally Interconnected Clusters

Mr. Sajjad Asghar
Advance Scientific Computing Group, National Centre for Physics, Islamabad, Pakistan.

Research Papers
- Mehnaz Hafeez, Sajjad Asghar, Usman A. Malik, Adeel-ur-Rehman, and Naveed Riaz, “Message Passing Framework for Globally Interconnected Clusters”, CHEP 2010, Taipei, Taiwan (Accepted).
- Sajjad Asghar, Mehnaz Hafeez, Usman A. Malik, Adeel-ur-Rehman, and Naveed Riaz, “A Framework for Parallel Code Execution using Java”, IEEE International Conference TENCON 2010, Fukuoka, Japan (Accepted).
- Mehnaz Hafeez, Sajjad Asghar, Usman A. Malik, Adeel-ur-Rehman, and Naveed Riaz, “Survey of MPI Implementations delimited by Java”, IEEE International Conference TENCON 2010, Fukuoka, Japan (Accepted).
- Adeel-ur-Rehman, Sajjad Asghar, Usman A. Malik, Mehnaz Hafeez, and Naveed Riaz, “Multi-lingual Binding of MPI Code on A-JUMP”, IEEE International Conference on Frontiers of Information Technology, Islamabad, Pakistan (Submitted).
- Sajjad Asghar, Mehnaz Hafeez, Usman A. Malik, Adeel-ur-Rehman, and Naveed Riaz, “A-JUMP, Architecture for Java Universal Message Passing”, IEEE International Conference on Frontiers of Information Technology, Islamabad, Pakistan (Submitted).

CHEP 2010 9/1/2021

Layout
- Introduction
- Related Work
- A-JUMP Framework
- Message Passing for Interconnected Clusters
- Performance Analysis
- Future Work & Conclusion
- References

Message Passing Interface
- De facto standard; defines interfaces for C/C++ and Fortran.
- The programmer defines:
  - the work and data distribution;
  - how and when communication has to be done, according to the underlying algorithm.
- Synchronization is implicit (it can also be user-defined).
- Supports distributed memory architectures.
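To make the point about explicit work and data distribution concrete, here is a minimal Java sketch of a block decomposition, the kind of rank-to-data mapping an MPI programmer writes by hand. `BlockDistribution` and `blockRange` are hypothetical names for illustration, not part of MPI, mpiJava, or A-JUMP.

```java
/**
 * Hypothetical helper showing an explicit block decomposition: the programmer,
 * not the runtime, decides which slice of the data each rank owns.
 */
final class BlockDistribution {

    private BlockDistribution() {}

    /** Returns {start, endExclusive} of the block owned by {@code rank} out of {@code size} ranks. */
    static int[] blockRange(int n, int size, int rank) {
        if (n < 0 || size <= 0 || rank < 0 || rank >= size) {
            throw new IllegalArgumentException("invalid n, size or rank");
        }
        int base = n / size;   // every rank gets at least this many items
        int extra = n % size;  // the first 'extra' ranks get one item more
        int start = rank * base + Math.min(rank, extra);
        int length = base + (rank < extra ? 1 : 0);
        return new int[] {start, start + length};
    }

    public static void main(String[] args) {
        int n = 10;
        int size = 4;
        for (int rank = 0; rank < size; rank++) {
            int[] r = blockRange(n, size, rank);
            System.out.println("rank " + rank + " -> [" + r[0] + ", " + r[1] + ")");
        }
    }
}
```

With n = 10 and 4 ranks, ranks 0 and 1 own three items each and ranks 2 and 3 own two each, so the load differs by at most one item between ranks.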

Message Passing Interface
Features:
1. Standardization
2. Portability
3. Performance
4. Efficient implementations
5. Availability
6. Functionality
7. Scalability

The MPI Forum identified some critical shortcomings of existing message passing systems in areas such as complex data layouts or support for modularity and safe communication.

Java and MPI
- Java is an excellent basis for implementing a message passing framework:
  - Java supports a multi-paradigm communications environment.
  - Java bytecode can be executed securely on many platforms.
- It allows integrating many technologies that are used in existing software development.
- Java provides many desired features for building a wide range of applications: standalone, client-server, semantic web, enterprise, mobile, graphical, or distributed.
- Thus, Java is an excellent basis for achieving productivity in parallel applications.


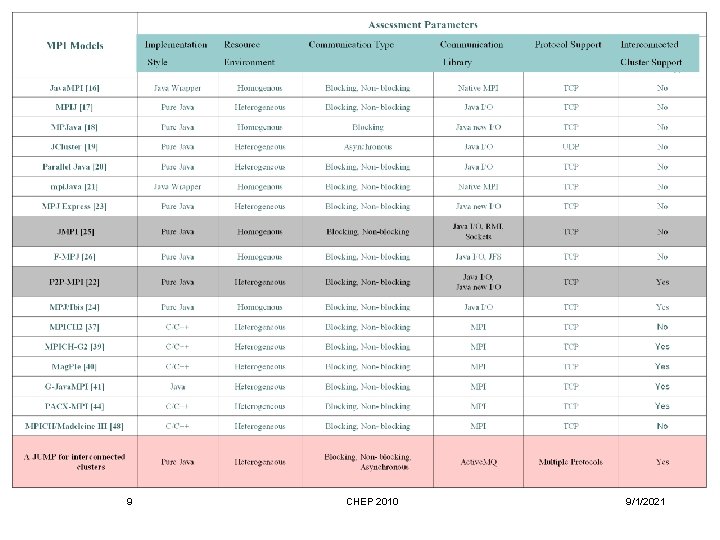


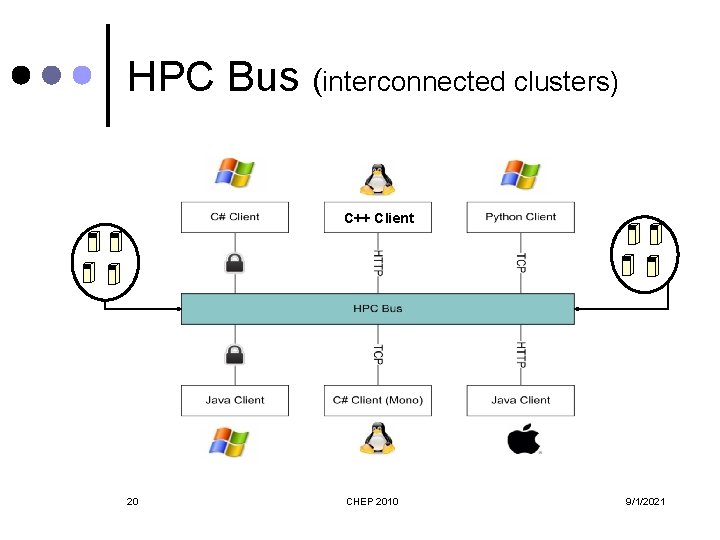

Architecture for Java Universal Message Passing (A-JUMP) Framework
- A-JUMP is an HPC framework built for distributed memory architectures to facilitate explicit parallelism.
- A-JUMP is written purely in Java.
- A-JUMP is based on the mpiJava 1.2 specification.
- The A-JUMP backbone is called the High Performance Computing Bus (HPC Bus).

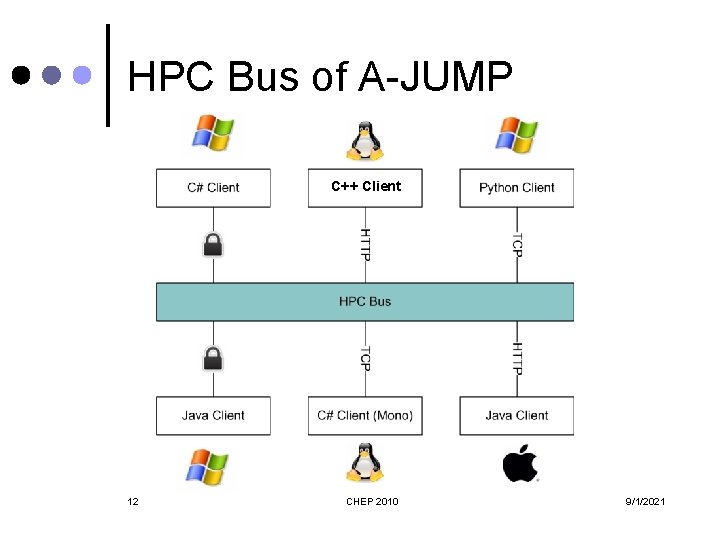

HPC Bus of A-JUMP
- Communication backbone of A-JUMP
  - Based on Apache ActiveMQ
- Includes inter-process communication, monitoring, and propagation of Registry information.
- Provides interoperability between hardware resources and communication protocols.
- Ensures that any changes in communication protocols and network topologies remain transparent to end users.
- Ensures loosely coupled services and components.
- Segregates the application and communication layers.
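The layer separation described for the HPC Bus can be sketched as follows, assuming a hypothetical `MessageBus` interface: application code addresses ranks only through the interface, so the in-memory stand-in here could be replaced by a broker-backed implementation (for example one built on ActiveMQ) without touching the application class. None of these names come from A-JUMP itself.

```java
import java.nio.charset.StandardCharsets;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.function.Consumer;

/** Hypothetical transport abstraction: the application layer sees only this. */
interface MessageBus {
    void publish(String destination, byte[] payload);

    void subscribe(String destination, Consumer<byte[]> handler);
}

/** In-memory stand-in for a message broker; delivers synchronously on publish. */
final class InMemoryBus implements MessageBus {
    private final Map<String, List<Consumer<byte[]>>> subscribers = new ConcurrentHashMap<>();

    @Override
    public void publish(String destination, byte[] payload) {
        for (Consumer<byte[]> handler : subscribers.getOrDefault(destination, List.of())) {
            handler.accept(payload.clone()); // each subscriber gets its own copy
        }
    }

    @Override
    public void subscribe(String destination, Consumer<byte[]> handler) {
        subscribers.computeIfAbsent(destination, d -> new CopyOnWriteArrayList<>()).add(handler);
    }
}

/** Application-layer code: it names ranks, never protocols, hosts, or ports. */
final class RankMessenger {
    private final MessageBus bus;

    RankMessenger(MessageBus bus) {
        this.bus = bus;
    }

    void sendTo(int rank, String text) {
        bus.publish("rank." + rank, text.getBytes(StandardCharsets.UTF_8));
    }

    void onMessage(int myRank, Consumer<String> handler) {
        bus.subscribe("rank." + myRank,
                bytes -> handler.accept(new String(bytes, StandardCharsets.UTF_8)));
    }
}
```

Swapping transports then means constructing `RankMessenger` with a different `MessageBus`; the destination naming scheme `rank.<n>` is an illustrative assumption.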

HPC Bus of A-JUMP (diagram label: C++ Client)

Architecture for Java Universal Message Passing (A-JUMP) Framework
A-JUMP is designed to provide the following features for writing parallel applications:
- Message passing for interconnected clusters
- Multiple languages
- Interoperability
- Portability
- Heterogeneous resource environments
- Network topology independence
- Multiple network protocol support
- Communication mode autonomy (i.e., asynchronous communication)
- Different communication libraries

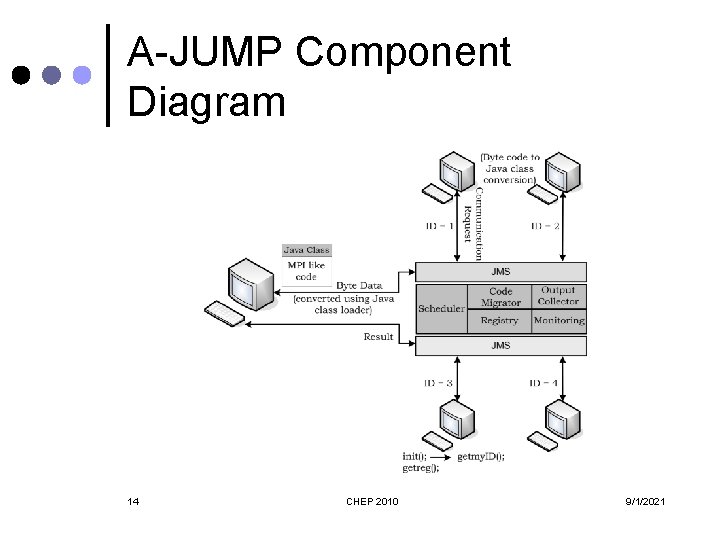

A-JUMP Component Diagram


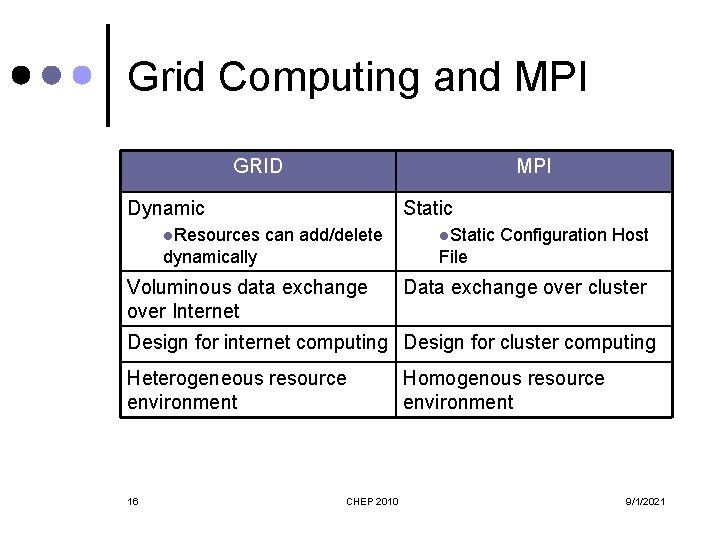

Grid Computing and MPI

| Grid | MPI |
|---|---|
| Dynamic: resources can be added/deleted dynamically | Static: static configuration (host file) |
| Voluminous data exchange over the Internet | Data exchange within a cluster |
| Designed for Internet computing | Designed for cluster computing |
| Heterogeneous resource environment | Homogeneous resource environment |

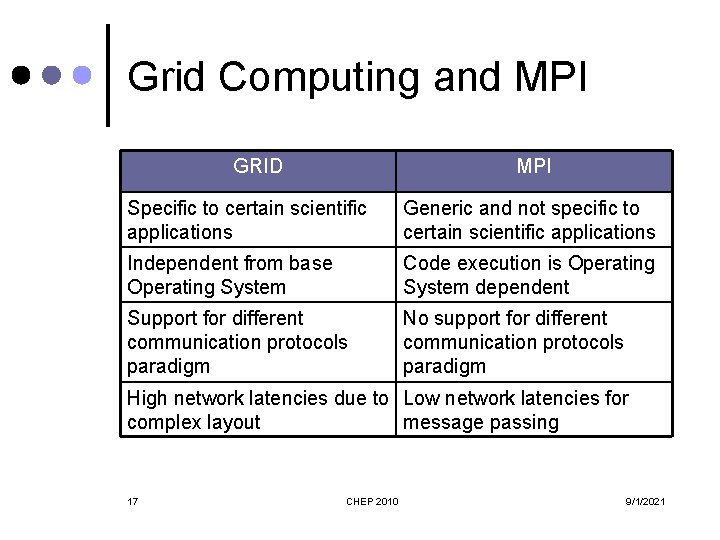

Grid Computing and MPI

| Grid | MPI |
|---|---|
| Specific to certain scientific applications | Generic; not specific to certain scientific applications |
| Independent of the base operating system | Code execution is operating-system dependent |
| Supports different communication protocol paradigms | No support for different communication protocol paradigms |
| High network latencies due to complex layout | Low network latencies for message passing |

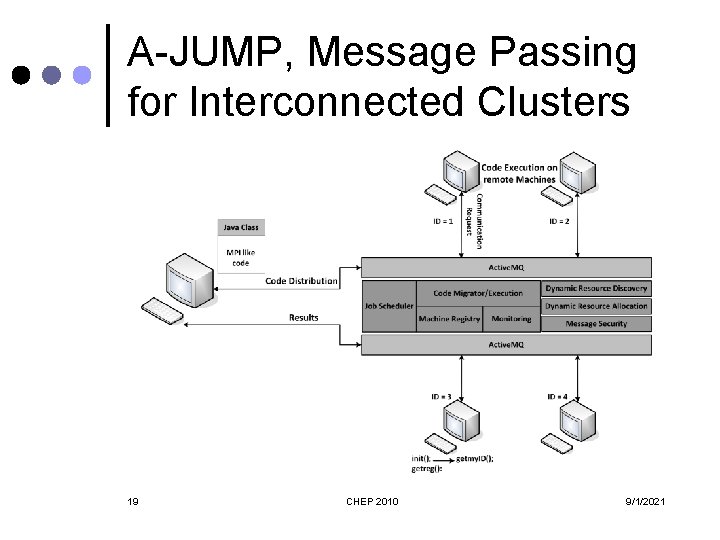

A-JUMP, Message Passing for Interconnected Clusters
- It supports the dynamic behaviour of interconnected clusters with MPI functionality.
- It is written in pure Java and does not incorporate any native code or libraries.
- It provides an asynchronous mode of communication for both message passing and code distribution over the clusters.
- It provides sub-clustering of resources for different jobs to utilize free resources efficiently.
- It is designed for both Internet and cluster computing.

A-JUMP, Message Passing for Interconnected Clusters

HPC Bus (interconnected clusters) (diagram label: C++ Client)

A-JUMP, Message Passing for Interconnected Clusters
- It segregates the communication layer from the application code.
- New communication technologies can be adopted without changing the application code.
- It supports both homogeneous and heterogeneous clusters for parallel code execution.
- A set of easy-to-use APIs is part of the framework.

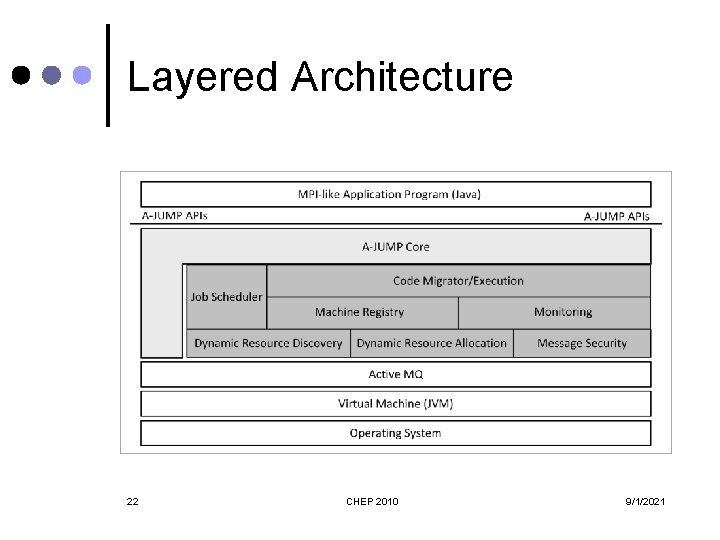

Layered Architecture

A-JUMP Framework Components
- Machine Registry
- Dynamic Resource Discovery
- Dynamic Resource Allocation
- Message Security
- Job Scheduler
- Monitoring
- Code Migrator/Execution
- API Classes

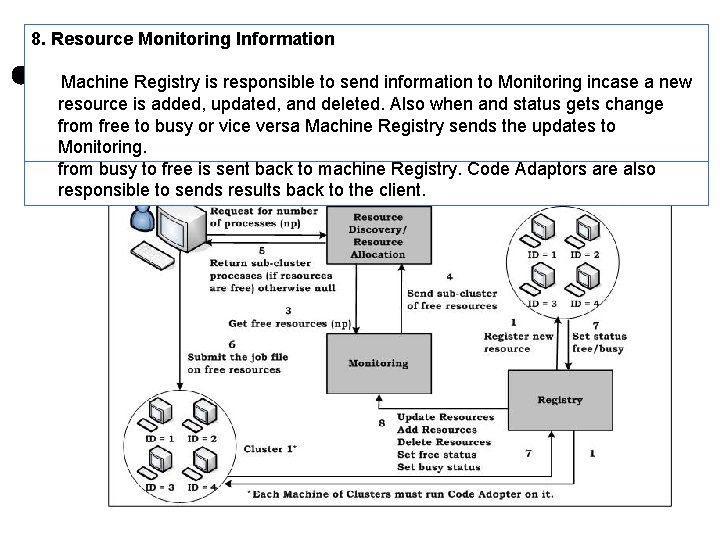

Machine Registry
- The Machine Registry provides a list of machines that are dynamically added to or removed from the framework.
- To become part of the framework, every worker machine has to run the Code Migrator/Execution service.
  - The Code Migrator/Execution service sends a request to the Machine Registry for a unique rank.
- The Machine Registry assigns unique ranks to newly added machines.
- The Machine Registry is also responsible for informing the Monitoring module about newly added resources.
- In future, it will record static information about each registered machine, i.e., the number of CPUs and the number of cores per CPU.
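A minimal sketch of the registration step, assuming ranks are handed out in arrival order and never reused after a machine leaves. `MachineRegistry` and its `Listener` callback (standing in for the Monitoring module) are hypothetical names; the slides do not describe the actual rank-assignment policy.

```java
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical Machine Registry sketch: unique ranks plus change notifications. */
final class MachineRegistry {

    /** Hypothetical callback standing in for the Monitoring module. */
    interface Listener {
        void machineAdded(String host, int rank);

        void machineRemoved(String host, int rank);
    }

    private final AtomicInteger nextRank = new AtomicInteger();
    private final Map<String, Integer> ranks = new ConcurrentHashMap<>();
    private final Listener monitoring;

    MachineRegistry(Listener monitoring) {
        this.monitoring = monitoring;
    }

    /** Called by a Code Migrator/Execution service; re-registering returns the same rank. */
    int register(String host) {
        boolean[] added = {false};
        int rank = ranks.computeIfAbsent(host, h -> {
            added[0] = true;                 // this call created the entry
            return nextRank.getAndIncrement();
        });
        if (added[0]) {
            monitoring.machineAdded(host, rank);
        }
        return rank;
    }

    /** Removes a machine; its rank is not handed out again. */
    void unregister(String host) {
        Integer rank = ranks.remove(host);
        if (rank != null) {
            monitoring.machineRemoved(host, rank);
        }
    }

    Optional<Integer> rankOf(String host) {
        return Optional.ofNullable(ranks.get(host));
    }

    int size() {
        return ranks.size();
    }
}
```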

Dynamic Resource Discovery (DRD)
- It keeps track of the resources that are part of the A-JUMP clusters (system size).
- It gets information from the Machine Registry to index the machines registered with it.
- DRD fetches information about the state of the machines from Monitoring.
  - The states are defined as free and busy.

Dynamic Resource Allocation (DRA)
- DRA finds free resources on the A-JUMP clusters.
- The client sends a request for the number of free processors needed to run the job.
- DRA searches for free resources in the index maintained by DRD and Monitoring.
- It creates a list of machines that could be used to define a sub-cluster from the available machines and returns this list to the client APIs.
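DRD and DRA together can be sketched as an index of machine states plus an allocation query. This is a minimal illustration under assumptions: machines are keyed by rank, the lowest free ranks are chosen first, and allocation is all-or-nothing. `ResourceAllocator` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.TreeMap;

/** Hypothetical DRD/DRA sketch over an index of rank -> free/busy. */
final class ResourceAllocator {
    enum State { FREE, BUSY }

    // DRD: index of registered machines (ordered by rank) and their last known state.
    private final Map<Integer, State> index = new TreeMap<>();

    void update(int rank, State state) {
        index.put(rank, state);
    }

    void remove(int rank) {
        index.remove(rank);
    }

    /** Number of registered machines, i.e. the current system size. */
    int systemSize() {
        return index.size();
    }

    /**
     * DRA: returns the ranks of a sub-cluster of {@code required} free machines,
     * or empty if not enough machines are free. It does not change any state.
     */
    Optional<List<Integer>> allocate(int required) {
        if (required <= 0) {
            throw new IllegalArgumentException("required must be positive");
        }
        List<Integer> picked = new ArrayList<>();
        for (Map.Entry<Integer, State> e : index.entrySet()) {
            if (picked.size() == required) {
                break;
            }
            if (e.getValue() == State.FREE) {
                picked.add(e.getKey());
            }
        }
        return picked.size() == required ? Optional.of(picked) : Optional.empty();
    }
}
```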

Message Security
- It provides the facility to encrypt outgoing messages.
- The current implementation is based on a simple symmetric encryption technique.
- It requires further performance analysis.
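The slides do not name the cipher used. As one possible choice, the sketch below encrypts each outgoing message with AES-GCM from the standard JDK (`javax.crypto`), which also detects tampering. `MessageCipher` is a hypothetical helper, and distributing the shared key between machines is left out.

```java
import java.nio.ByteBuffer;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

/** Hypothetical symmetric message-encryption helper (AES-GCM, JDK only). */
final class MessageCipher {
    private static final int IV_BYTES = 12;   // recommended nonce length for GCM
    private static final int TAG_BITS = 128;  // authentication tag length
    private static final SecureRandom RANDOM = new SecureRandom();

    private final SecretKey key;

    MessageCipher(SecretKey key) {
        this.key = key;
    }

    static SecretKey newKey() throws GeneralSecurityException {
        KeyGenerator gen = KeyGenerator.getInstance("AES");
        gen.init(128);
        return gen.generateKey();
    }

    /** Returns iv || ciphertext+tag; a fresh IV is drawn for every message. */
    byte[] encrypt(byte[] plaintext) throws GeneralSecurityException {
        byte[] iv = new byte[IV_BYTES];
        RANDOM.nextBytes(iv);
        Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
        cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, iv));
        byte[] ct = cipher.doFinal(plaintext);
        return ByteBuffer.allocate(iv.length + ct.length).put(iv).put(ct).array();
    }

    /** Throws AEADBadTagException if the message was altered in transit. */
    byte[] decrypt(byte[] message) throws GeneralSecurityException {
        if (message.length < IV_BYTES) {
            throw new IllegalArgumentException("message too short");
        }
        Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
        cipher.init(Cipher.DECRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, message, 0, IV_BYTES));
        return cipher.doFinal(message, IV_BYTES, message.length - IV_BYTES);
    }
}
```

Each message grows by 28 bytes (12-byte IV plus 16-byte tag); this overhead is one of the performance costs the slide says still needs analysis.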

Job Scheduler (JS)
- JS distributes incoming jobs to the cluster.
- Jobs are sent to JS by the client APIs through ActiveMQ.
- It uses a queuing mechanism that keeps incoming jobs queued until free resources are available for execution.
  - If the number of available resources is less than the number required, the job is not submitted.
  - Otherwise, JS creates a sub-cluster according to the requirement.
  - The remaining free machines stay available for new job requests.
- P2P-MPI is the only other implementation that provides sub-clustering.
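The queuing rule can be sketched as a FIFO scheduler over a pool of ranks. This is a hypothetical illustration, not A-JUMP's scheduler: `JobScheduler` and `Job` are invented names, sub-clusters take the lowest free ranks, and a job's machines return to the pool when it finishes.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeSet;

/** Hypothetical FIFO job scheduler that carves sub-clusters out of a pool of ranks. */
final class JobScheduler {

    static final class Job {
        final String id;
        final int cpus;

        Job(String id, int cpus) {
            if (cpus <= 0) throw new IllegalArgumentException("cpus must be positive");
            this.id = id;
            this.cpus = cpus;
        }
    }

    private final Deque<Job> queue = new ArrayDeque<>();
    private final TreeSet<Integer> free = new TreeSet<>();
    private final Map<String, List<Integer>> running = new HashMap<>();

    JobScheduler(int machines) {
        for (int rank = 0; rank < machines; rank++) free.add(rank);
    }

    /** Jobs arrive from the client APIs and wait in FIFO order. */
    void submit(Job job) {
        queue.addLast(job);
        dispatch();
    }

    /** Starts head-of-queue jobs while enough machines are free for them. */
    private void dispatch() {
        while (!queue.isEmpty() && queue.peekFirst().cpus <= free.size()) {
            Job job = queue.pollFirst();
            List<Integer> subCluster = new ArrayList<>();
            for (int i = 0; i < job.cpus; i++) subCluster.add(free.pollFirst());
            running.put(job.id, subCluster); // the other free machines stay available
        }
    }

    /** A finished job's machines return to the pool, which may unblock the queue. */
    void finished(String jobId) {
        List<Integer> ranks = running.remove(jobId);
        if (ranks != null) {
            free.addAll(ranks);
            dispatch();
        }
    }

    List<Integer> subClusterOf(String jobId) { return running.get(jobId); }

    int queued() { return queue.size(); }

    int freeMachines() { return free.size(); }
}
```

Because the queue is strictly FIFO, a job that asks for more CPUs than are currently free blocks the jobs behind it; a production scheduler might add backfilling, but the slides do not say whether A-JUMP does.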

Monitoring
- The Job Scheduler gets information from the Monitoring component.
- Monitoring records dynamic information about the computational resources that are part of the framework.
  - It records the number of busy and free CPUs.
  - It helps the Job Scheduler find the best match for the task at hand.
- Monitoring maintains two distinct states for the machines available in the cluster: free and busy.
  - As soon as a machine starts executing a job, it informs Monitoring to change its state from free to busy.
  - Monitoring is also responsible for setting the machine's state back to free as soon as the job finishes.
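The free/busy bookkeeping can be sketched with atomic compare-and-set transitions, so a machine cannot be marked busy twice or freed when it is not busy. `Monitoring` here is a hypothetical class, and method names such as `jobStarted` are assumptions rather than A-JUMP's API.

```java
import java.util.EnumMap;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical Monitoring sketch: two states per machine, free and busy. */
final class Monitoring {
    enum State { FREE, BUSY }

    private final Map<Integer, State> states = new ConcurrentHashMap<>();

    /** A newly registered machine starts out free. */
    void machineAdded(int rank) {
        states.putIfAbsent(rank, State.FREE);
    }

    /** Reported by a machine when it starts executing a job. */
    void jobStarted(int rank) {
        transition(rank, State.FREE, State.BUSY);
    }

    /** Reported when the job on that machine finishes. */
    void jobFinished(int rank) {
        transition(rank, State.BUSY, State.FREE);
    }

    private void transition(int rank, State from, State to) {
        // replace(key, old, new) succeeds only if the current state is 'from'
        if (!states.replace(rank, from, to)) {
            throw new IllegalStateException("rank " + rank + " is not " + from);
        }
    }

    /** Counts of free and busy machines, as consumed by the Job Scheduler. */
    Map<State, Integer> summary() {
        Map<State, Integer> counts = new EnumMap<>(State.class);
        for (State s : State.values()) counts.put(s, 0);
        for (State s : states.values()) counts.merge(s, 1, Integer::sum);
        return counts;
    }
}
```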

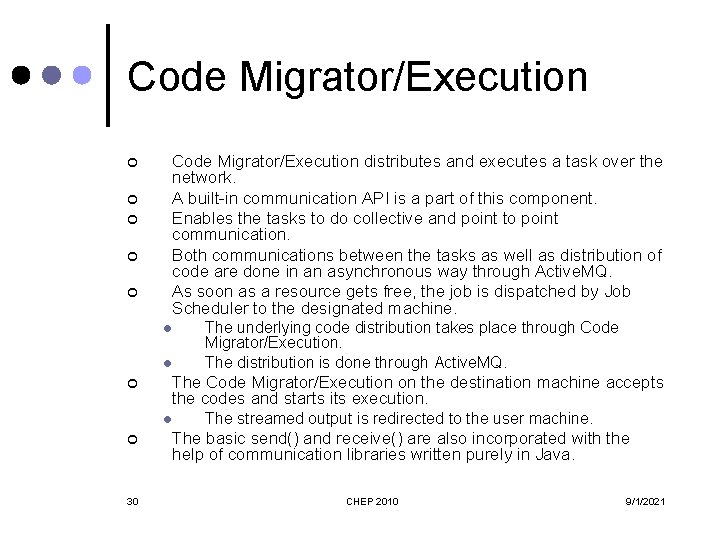

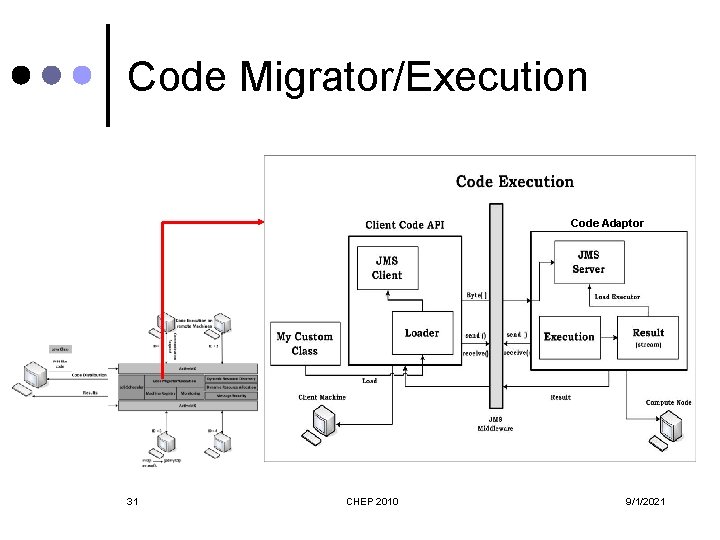

Code Migrator/Execution
- Code Migrator/Execution distributes and executes tasks over the network.
- A built-in communication API is part of this component.
  - It enables tasks to perform collective and point-to-point communication.
  - Both communication between tasks and code distribution are done asynchronously through ActiveMQ.
- As soon as a resource becomes free, the Job Scheduler dispatches the job to the designated machine.
  - The underlying code distribution takes place through Code Migrator/Execution.
  - The distribution is done through ActiveMQ.
- The Code Migrator/Execution on the destination machine accepts the code and starts its execution.
  - The streamed output is redirected to the user's machine.
- The basic send() and receive() operations are implemented with communication libraries written purely in Java.
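The asynchronous send and blocking receive can be sketched in-process, with one `LinkedBlockingQueue` per rank standing in for the broker queues. `Mailboxes` is a hypothetical class; unlike MPI receives, it delivers the next message for a rank without matching on source or tag.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

/** Hypothetical in-JVM stand-in for broker queues: one mailbox per rank. */
final class Mailboxes {

    /** A message tagged with its source rank and a user tag. */
    static final class Message {
        final int source;
        final int tag;
        final Object payload;

        Message(int source, int tag, Object payload) {
            this.source = source;
            this.tag = tag;
            this.payload = payload;
        }
    }

    private final List<BlockingQueue<Message>> boxes = new ArrayList<>();

    Mailboxes(int size) {
        for (int rank = 0; rank < size; rank++) boxes.add(new LinkedBlockingQueue<>());
    }

    /** Asynchronous send: enqueues and returns at once (the queue is unbounded). */
    void send(int source, int dest, int tag, Object payload) {
        boxes.get(dest).add(new Message(source, tag, payload));
    }

    /** Blocking receive of the next message addressed to {@code rank}. */
    Message receive(int rank) throws InterruptedException {
        return boxes.get(rank).take();
    }

    /** Ping-pong: rank 0 sends a counter, rank 1 increments it and sends it back. */
    static int pingPong(int rounds) throws InterruptedException {
        Mailboxes mb = new Mailboxes(2);
        Thread rank1 = new Thread(() -> {
            try {
                for (int i = 0; i < rounds; i++) {
                    Message m = mb.receive(1);
                    mb.send(1, m.source, m.tag, (Integer) m.payload + 1);
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        rank1.start();
        int value = 0;
        for (int i = 0; i < rounds; i++) {
            mb.send(0, 1, i, value);
            value = (Integer) mb.receive(0).payload;
        }
        rank1.join();
        return value;
    }
}
```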

Code Migrator/Execution (diagram label: Code Adaptor)

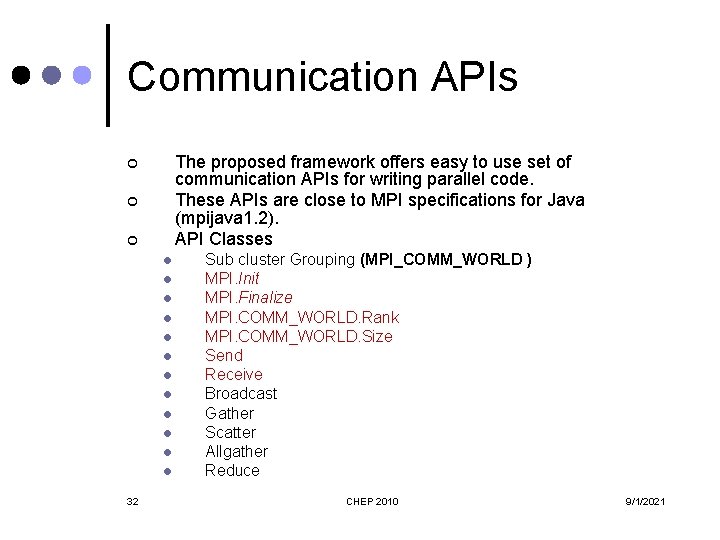

Communication APIs
- The proposed framework offers an easy-to-use set of communication APIs for writing parallel code.
- These APIs are close to the MPI specification for Java (mpiJava 1.2).
- API classes:
  - Sub-cluster grouping (MPI_COMM_WORLD)
  - MPI.Init
  - MPI.Finalize
  - MPI.COMM_WORLD.Rank
  - MPI.COMM_WORLD.Size
  - Send
  - Receive
  - Broadcast
  - Gather
  - Scatter
  - Allgather
  - Reduce
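As an illustration of what Scatter followed by Reduce computes, the sketch below simulates ranks as threads and implements a sum reduction as a binomial tree over point-to-point messages. It does not use the mpiJava or A-JUMP API; `TreeReduce` is a hypothetical name, and the single inbox per rank is only correct because addition is commutative.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

/** Hypothetical sketch: Scatter + binomial-tree Reduce(SUM), ranks simulated as threads. */
final class TreeReduce {

    static long scatterReduceSum(long[] data, int size) throws InterruptedException {
        List<BlockingQueue<Long>> inbox = new ArrayList<>();
        for (int r = 0; r < size; r++) inbox.add(new LinkedBlockingQueue<>());
        long[] result = new long[1];
        List<Thread> ranks = new ArrayList<>();
        for (int r = 0; r < size; r++) {
            final int rank = r;
            Thread t = new Thread(() -> {
                // "Scatter": each rank sums its own contiguous block of the input.
                int base = data.length / size;
                int extra = data.length % size;
                int start = rank * base + Math.min(rank, extra);
                int end = start + base + (rank < extra ? 1 : 0);
                long local = 0;
                for (int i = start; i < end; i++) local += data[i];
                try {
                    // Binomial tree: at step 'mask', ranks with that bit set send to
                    // rank - mask and stop; the others absorb one child's partial sum.
                    for (int mask = 1; mask < size; mask <<= 1) {
                        if ((rank & mask) != 0) {
                            inbox.get(rank - mask).put(local);
                            return;
                        }
                        if (rank + mask < size) local += inbox.get(rank).take();
                    }
                    result[0] = local; // only rank 0 completes the loop
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
            ranks.add(t);
            t.start();
        }
        for (Thread t : ranks) t.join(); // join() makes result[0] visible here
        return result[0];
    }
}
```

The tree finishes in about log2(size) message steps instead of size - 1 sequential receives at rank 0, which is why collective operations are usually built this way.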

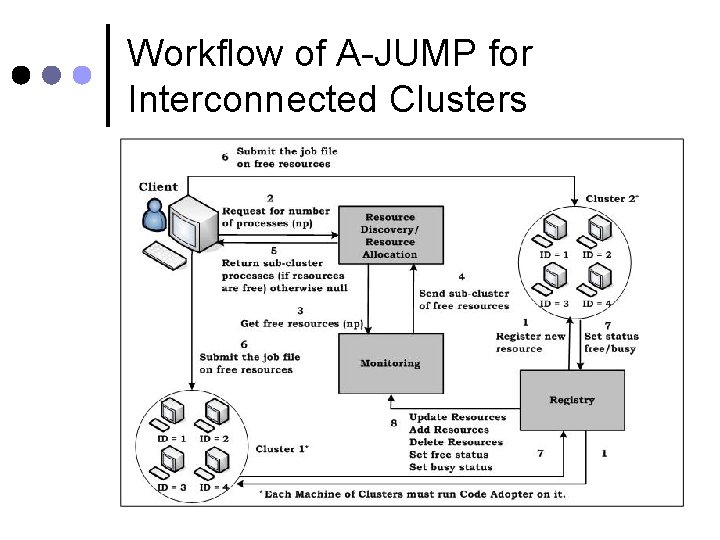

Workflow of A-JUMP for Interconnected Clusters

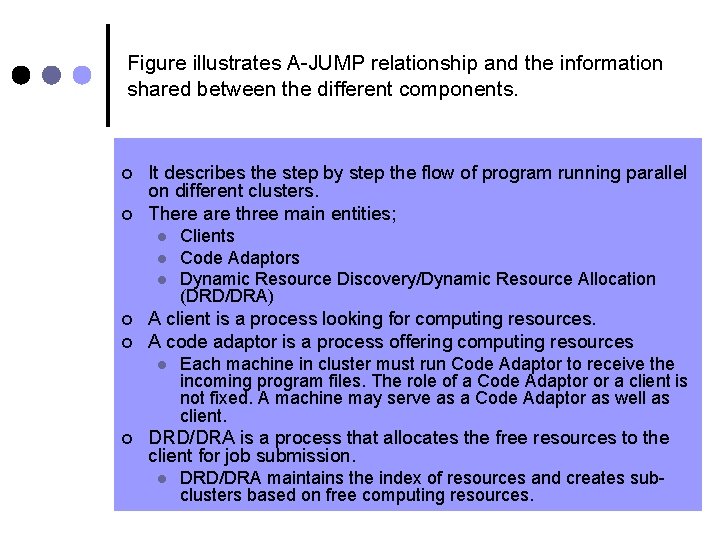

The figure illustrates the relationships between A-JUMP components and the information shared between them. It describes, step by step, the flow of a program running in parallel on different clusters. There are three main entities:
- Clients: a client is a process looking for computing resources.
- Code Adaptors: a code adaptor is a process offering computing resources.
  - Each machine in a cluster must run a Code Adaptor to receive incoming program files.
  - The role of a Code Adaptor or a client is not fixed; a machine may serve as both a Code Adaptor and a client.
- Dynamic Resource Discovery/Dynamic Resource Allocation (DRD/DRA): a process that allocates free resources to the client for job submission.
  - DRD/DRA maintains the index of resources and creates sub-clusters based on free computing resources.

Workflow of A-JUMP for Multi-Clusters (diagram components: Client, DRD/DRA, Monitoring, Machine Registry, Code Adaptors)
1. Registration of a new machine: each new resource in the cluster is assigned a unique rank by the Machine Registry. Once the new resource is registered, this information is propagated to Monitoring.
2. The client sends a request to DRD/DRA for the number of processes required to run the job.
3. DRD/DRA seeks information about the available free resources from Monitoring.
4. DRD/DRA creates a sub-cluster from the free resources and returns it to the client APIs. If the required resources are not available, DRD/DRA sends the client a message about the unavailability of resources.
5. The client submits the job to the specific machines identified by DRD/DRA. These machines may reside on different clusters connected over the Internet, or on a single cluster.
6. When the Code Adaptors start executing the submitted job, they inform the Machine Registry to change their status from free to busy.
7. The Machine Registry is responsible for forwarding information to Monitoring whenever a resource is added, updated, or deleted, and whenever a machine's status changes from free to busy or vice versa.
8. As soon as the submitted job finishes, a request to change the status from busy to free is sent back to the Machine Registry. Code Adaptors are also responsible for sending results back to the client.


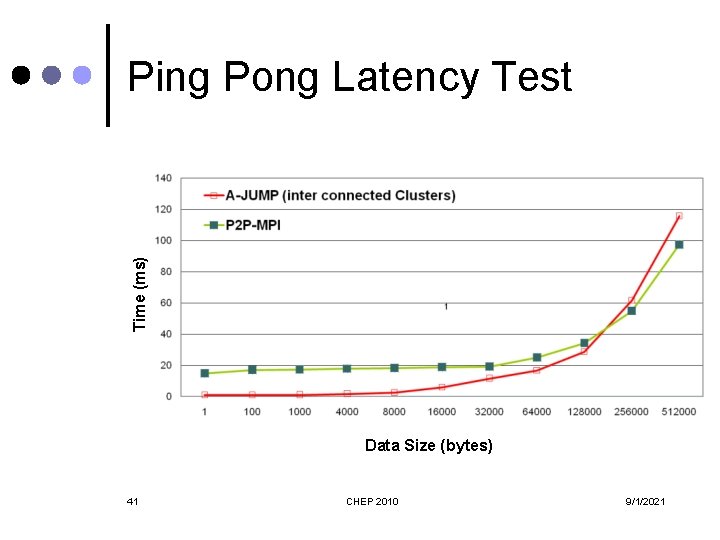

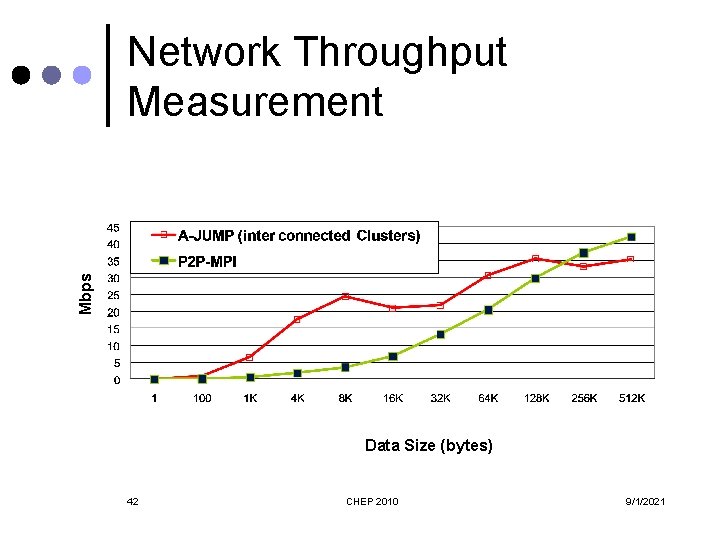

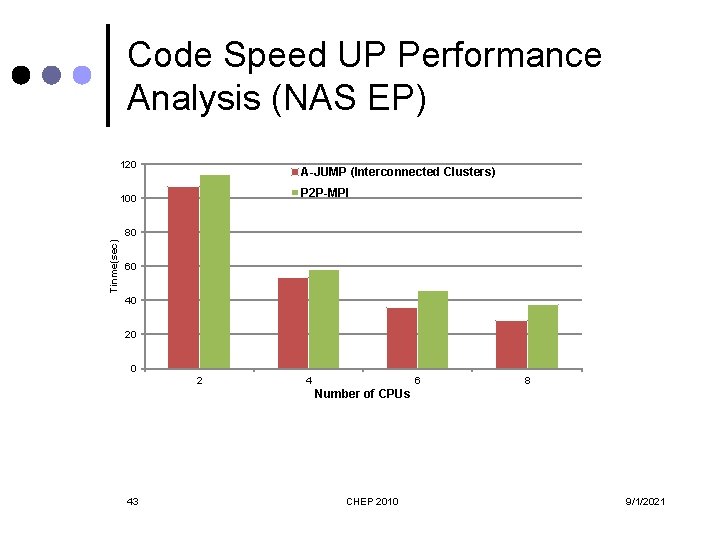

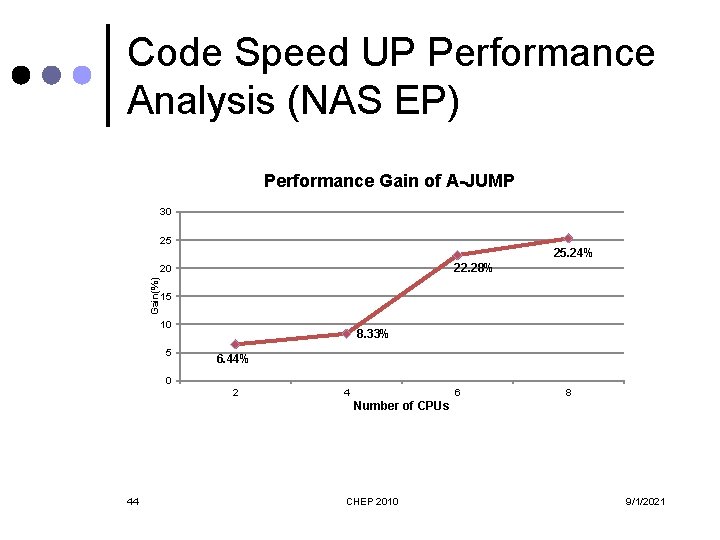

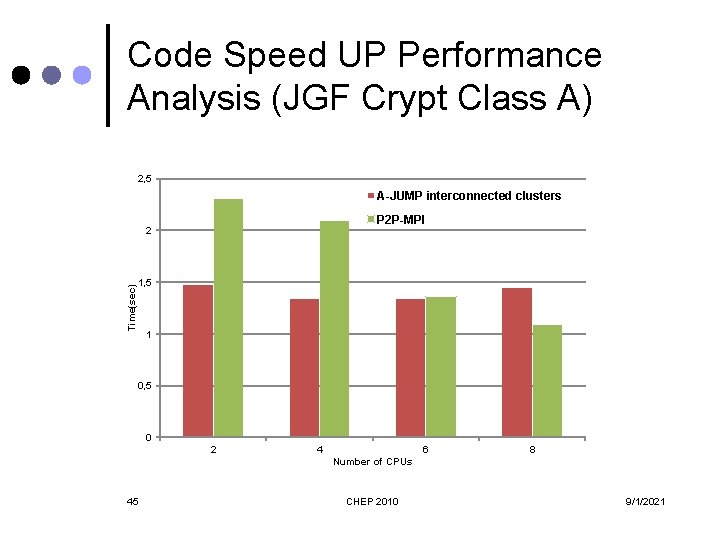

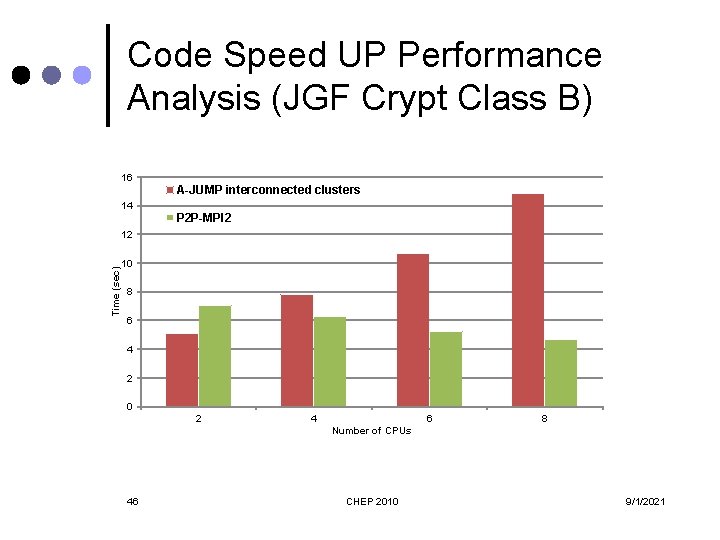

Performance Analysis
- Communication performance measurement
  - Ping Pong Latency Test
  - Network Throughput Measurement
- Code speed-up performance analysis
  - Embarrassingly Parallel (EP) Benchmark
  - JGF Crypt Benchmark

P2P-MPI
- S. Genaud and C. Rattanapoka, “P2P-MPI: A Peer-to-Peer Framework for Robust Execution of Message Passing Parallel Programs,” Journal of Grid Computing, 5(1): 27–42, 2007.
- Strengths
  - Pure Java
  - Supports distributed memory models
  - Supports heterogeneous environments
- Weaknesses
  - No support for high-speed networks
  - Slow performance

Test Environment
- Results are collected on an environment that simulates globally interconnected clusters.
- The hardware used for the tests consists of 9 single-core machines divided into two clusters.
  - The clusters are connected with 1 Gbps bandwidth.
- The machines run Windows Server 2003 (SP2) and Windows XP (SP3).
- The TCP window size is left at its default on all machines in the clusters.
- No optimization is done at the OS or hardware level.

Figure: Ping Pong Latency Test (y-axis: time in ms; x-axis: data size in bytes)

Figure: Network Throughput Measurement (y-axis: throughput in Mbps; x-axis: data size in bytes)
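The slides do not give the formulas behind the latency and throughput charts. A common convention, assumed here, is to take one-way latency as half the mean round-trip time of the ping-pong loop and throughput as bits delivered per one-way time, with 1 Mbps = 10^6 bit/s. `PingPongMetrics` is a hypothetical helper.

```java
/** Hypothetical helpers turning ping-pong timings into latency and throughput figures. */
final class PingPongMetrics {

    private PingPongMetrics() {}

    /** One-way latency in ms, taken as half of the mean round-trip time. */
    static double oneWayLatencyMs(double totalRoundTripMs, int iterations) {
        if (iterations <= 0) throw new IllegalArgumentException("iterations must be positive");
        return totalRoundTripMs / iterations / 2.0;
    }

    /** Throughput in Mbps (10^6 bit/s) for {@code bytes} delivered in {@code oneWayMs}. */
    static double throughputMbps(long bytes, double oneWayMs) {
        if (oneWayMs <= 0) throw new IllegalArgumentException("time must be positive");
        double bits = bytes * 8.0;
        double seconds = oneWayMs / 1000.0;
        return bits / seconds / 1e6;
    }
}
```

For example, 100 round trips totalling 200 ms give a one-way latency of 1 ms, and 1,000,000 bytes delivered in 8 ms correspond to about 1000 Mbps, the nominal capacity of the 1 Gbps link between the two test clusters.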

Figure: Code Speed-up Performance Analysis (NAS EP): execution time (sec) vs. number of CPUs (2, 4, 6, 8) for A-JUMP (interconnected clusters) and P2P-MPI

Figure: Code Speed-up Performance Analysis (NAS EP): performance gain of A-JUMP (%) vs. number of CPUs (2, 4, 6, 8); data labels 25.24%, 22.28%, 8.33%, and 6.44%
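The slides do not define "performance gain". One plausible reading, assumed here, is the relative reduction in execution time of A-JUMP with respect to P2P-MPI for the same number of CPUs $p$:

```latex
\text{Gain}(p) = \frac{T_{\text{P2P-MPI}}(p) - T_{\text{A-JUMP}}(p)}{T_{\text{P2P-MPI}}(p)} \times 100\%
```

where $T(p)$ is the measured execution time on $p$ CPUs. Under this reading, a hypothetical run taking 10 s on P2P-MPI and 7.5 s on A-JUMP would show a gain of $(10 - 7.5)/10 \times 100\% = 25\%$.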

Figure: Code Speed-up Performance Analysis (JGF Crypt Class A): execution time (sec) vs. number of CPUs (2, 4, 6, 8) for A-JUMP (interconnected clusters) and P2P-MPI

Figure: Code Speed-up Performance Analysis (JGF Crypt Class B): execution time (sec) vs. number of CPUs (2, 4, 6, 8) for A-JUMP (interconnected clusters) and P2P-MPI


Conclusion & Future Work
- A-JUMP for interconnected clusters is loosely coupled with its communication layer.
- It supports sub-clustering.
- It provides dynamic resource management.
- It supports multiple communication protocols.
- It supports asynchronous message passing.
- It supports heterogeneous environments.
- Performance analysis results of A-JUMP:
  - for small message sizes, better than P2P-MPI;
  - for large message sizes, promising and comparable with P2P-MPI.

Conclusion & Future Work
- The communication library will be improved for message sizes of 256 KB and above.
- Support for different security techniques.
- Another area of focus will be to improve network latencies and reduce communication overheads.
- Multilingual support for interconnected clusters.
- More NAS and JGF benchmarks.


References
- I. Foster, “The Anatomy of the Grid: Enabling Scalable Virtual Organizations,” in Proc. 7th International Euro-Par Conference on Parallel Processing, Manchester, 2001, pp. 1–4.
- LCG homepage [Online]. Available: http://lcg.web.cern.ch/lcg
- EGEE homepage [Online]. Available: http://www.eu-egee.org
- S. Haug, F. Ould-Saada, K. Pajchel, and A. L. Read, “Data management for the world’s largest machine,” in Applied Parallel Computing. State of the Art in Scientific Computing, vol. 4699, Springer Berlin/Heidelberg, 2007, pp. 480–488.
- P. Eerola et al., “The NorduGrid production grid infrastructure, status and plans,” in IEEE/ACM Grid, Nov. 2003, pp. 158–165.
- TeraGrid Project homepage [Online]. Available: http://www.teragrid.org
- F. Cappello et al., “Grid’5000: a large scale and highly reconfigurable experimental grid testbed,” in Proc. 6th IEEE/ACM International Workshop on Grid Computing, 2005, pp. 99–106.
- J. Dongarra, D. Gannon, G. Fox, and K. Kennedy, “The Impact of Multicore on Computational Science Software,” CTWatch Quarterly, 2007 [Online]. Available: http://www.ctwatch.org/quarterly/articles/2007/02/the-impact-of-multicore-on-computational-science-software
- S. Genaud and C. Rattanapoka, “P2P-MPI: A Peer-to-Peer Framework for Robust Execution of Message Passing Parallel Programs,” Journal of Grid Computing, 5(1): 27–42, 2007.
- S. Schulz, W. Blochinger, and M. Poths, “A Network Substrate for Peer-to-Peer Grid Computing beyond Embarrassingly Parallel Applications,” in Proc. WRI International Conference on Communications and Mobile Computing, 2009, vol. 3, pp. 60–68.
- M. Humphrey and M. R. Thompson, “Security Implications of Typical Grid Usage Scenarios,” Cluster Computing, vol. 5, no. 3, 2002, pp. 257–264.
- P. Phinjaroenphan, S. Bevinakoppa, and P. Zeephongsekul, “A Method for Estimating the Execution Time of a Parallel Task on a Grid Node,” in Proc. European Grid Conference, Amsterdam, 2005, pp. 226–236.
- A. Nelisse, J. Maassen, T. Kielmann, and H. Bal, “CCJ: Object-Based Message Passing and Collective Communication in Java,” Concurrency and Computation: Practice & Experience, 15(3–5): 341–369, 2003.
- J. Al-Jaroodi, N. Mohamed, H. Jiang, and D. Swanson, “JOPI: a Java object-passing interface: Research Articles,” Concurrency and Computation: Practice & Experience, vol. 17, no. 7–8, pp. 775–795, 2005.
- P. Martin, L. M. Silva, and J. G. Silva, “A Java Interface to WMPI,” in 5th European PVM/MPI Users’ Group Meeting, EuroPVM/MPI’98, Liverpool, UK, Lecture Notes in Computer Science, vol. 1497, pp. 121–128, Springer, 1998.
- S. Mintchev and V. Getov, “Towards Portable Message Passing in Java: Binding MPI,” in 4th European PVM/MPI Users’ Group Meeting, EuroPVM/MPI’97, Cracow, Poland, Lecture Notes in Computer Science, vol. 1332, pp. 135–142, Springer, 1997.
- G. Judd, M. Clement, and Q. Snell, “DOGMA: Distributed Object Group Metacomputing Architecture,” Concurrency and Computation: Practice and Experience, 10(11–13): 977–983, 1998.

- B. Pugh and J. Spacco, “MPJava: High-Performance Message Passing in Java using Java.nio,” in Proc. 16th Intl. Workshop on Languages and Compilers for Parallel Computing (LCPC'03), Lecture Notes in Computer Science, vol. 2958, pp. 323–339, College Station, TX, USA, 2003.
- B. Y. Zhang, G. W. Yang, and W.-M. Zheng, “Jcluster: an Efficient Java Parallel Environment on a Large-scale Heterogeneous Cluster,” Concurrency and Computation: Practice and Experience, 18(12): 1541–1557, 2006.
- A. Kaminsky, “Parallel Java: A Unified API for Shared Memory and Cluster Parallel Programming in 100% Java,” in Proc. 9th Intl. Workshop on Java and Components for Parallelism, Distribution and Concurrency (IWJacPDC'07), p. 196a (8 pages), Long Beach, CA, USA, 2007.
- M. Baker, B. Carpenter, G. Fox, S. Ko, and S. Lim, “mpiJava: an Object-Oriented Java Interface to MPI,” in 1st Int. Workshop on Java for Parallel and Distributed Computing (IPPS/SPDP 1999 Workshop), San Juan, Puerto Rico, Lecture Notes in Computer Science, vol. 1586, pp. 748–762, Springer, 1999.
- A. Shafi, B. Carpenter, and M. Baker, “Nested Parallelism for Multi-core HPC Systems using Java,” Journal of Parallel and Distributed Computing, 69(6): 532–545, 2009.
- M. Bornemann, R. V. v. Nieuwpoort, and T. Kielmann, “MPJ/Ibis: A Flexible and Efficient Message Passing Platform for Java,” in Proc. 12th European PVM/MPI Users’ Group Meeting (EuroPVM/MPI’05), Lecture Notes in Computer Science, vol. 3666, pp. 217–224, Sorrento, Italy, 2005.
- S. Bang and J. Ahn, “Implementation and Performance Evaluation of Socket and RMI based Java Message Passing Systems,” in Proc. 5th Intl. Conf. on Software Engineering Research, Management and Applications (SERA'07), pp. 153–159, Busan, Korea, 2007.
- G. L. Taboada, J. Tourino, and R. Doallo, “F-MPJ: scalable Java message-passing communications on parallel systems,” Journal of Supercomputing, 2009.
- V. Getov, G. v. Laszewski, M. Philippsen, and I. Foster, “Multiparadigm communications in Java for grid computing,” Communications of the ACM, vol. 44, no. 10, 2001, pp. 118–125.
- H. Schildt, “Java Beginner’s Guide,” 4th ed., Tata McGraw-Hill, 2007.
- D. Balkanski, M. Trams, and W. Rehm, “Heterogeneous Computing With MPICH/Madeleine and PACX MPI: a Critical Comparison,” Technische Universität Chemnitz, 2003.
- S. Asghar, M. Hafeez, U. A. Malik, A. Rehman, and N. Riaz, “A-JUMP, A Universal Parallel Framework,” unpublished.
- MPI: A Message Passing Interface Standard. Message Passing Interface Forum [Online]. Available: http://www.mpiforum.org/docs/mpi-11-html/mpi-report.html
- G. L. Taboada, J. Tourino, and R. Doallo, “Java for High Performance Computing: Assessment of current research & practice,” in Proc. 7th International Conference on Principles and Practice of Programming in Java, Calgary, Alberta, Canada, 2009, pp. 30–39.

- G. L. Taboada, J. Tourino, and R. Doallo, “Performance Analysis of Java Message-Passing Libraries on Fast Ethernet, Myrinet and SCI Clusters,” in Proc. 5th IEEE International Conference on Cluster Computing (Cluster'03), Hong Kong, China, 2003, pp. 118–126.
- S. Asghar, M. Hafeez, U. A. Malik, A. Rehman, and N. Riaz, “Survey of MPI Implementations delimited by Java for HPC,” unpublished.
- R. V. v. Nieuwpoort et al., “Ibis: an Efficient Java based Grid Programming Environment,” Concurrency and Computation: Practice and Experience, 17(7–8): 1079–1107, 2005.
- W. Gropp, E. Lusk, N. Doss, and A. Skjellum, “High-performance, portable implementation of the MPI Message Passing Interface Standard,” Parallel Computing, 22(6): 789–828, Sept. 1996.
- MPICH2 [Online]. Available: http://www.mcs.anl.gov/research/projects/mpich2
- MPICH-G2 [Online]. Available: http://www3.niu.edu/mpi
- N. Karonis, B. Toonen, and I. Foster, “MPICH-G2: A Grid-Enabled Implementation of the Message Passing Interface,” Journal of Parallel and Distributed Computing, 2003.
- T. Kielmann, R. F. H. Hofman, H. E. Bal, A. Plaat, and R. A. F. Bhoedjang, “MagPIe: MPI's collective communication operations for clustered wide area systems,” ACM SIGPLAN Notices, vol. 34, no. 8, 1999, pp. 131–140.
- L. Chen, C. Wang, F. C. M. Lau, and R. K. K. Ma, “A Grid Middleware for Distributed Java Computing with MPI Binding and Process Migration Supports,” Journal of Computer Science and Technology, vol. 18, no. 4, 2003, pp. 505–514.
- V. Welch, F. Siebenlist, I. Foster, J. Bresnahan, K. Czajkowski, J. Gawor, C. Kesselman, S. Meder, L. Pearlman, and S. Tuecke, “Security for Grid Services,” Twelfth International Symposium on High Performance Distributed Computing (HPDC 12), IEEE Press, 2003.
- Globus Toolkit homepage [Online]. Available: http://www.globus.org/toolkit
- PACX-MPI [Online]. Available: http://www.hlrs.de/organization/av/amt/research/pacx-mpi
- Version 1.2 of MPI [Online]. Available: http://www.mpi-forum.org/docs/mpi-20-html/node28.htm
- O. Aumage and G. Mercier, “MPICH/Madeleine: a True Multi-Protocol MPI for High Performance Networks,” in 15th International Parallel and Distributed Processing Symposium (IPDPS'01), 2001, vol. 1, p. 10051a.
- W. Gropp and E. Lusk, “MPICH working note: The second-generation ADI for the MPICH implementation of MPI,” 1996.
- O. Aumage, “Heterogeneous multi-cluster networking with the Madeleine III communication library,” in 16th IEEE International Parallel and Distributed Processing Symposium (IPDPS '02 (IPPS & SPDP)), p. 85, Washington - Brussels - Tokyo, Apr. 2002.
- O. Aumage, L. Bougé, A. Denis, J.-F. Méhaut, G. Mercier, R. Namyst, and L. Prylli, “A portable and efficient communication library for high-performance cluster computing,” in IEEE Intl. Conf. on Cluster Computing (Cluster 2000), pp. 78–87, Technische Universität Chemnitz, Saxony, Germany, Nov. 2000.
- ActiveMQ homepage [Online]. Available: http://activemq.apache.org

