
Air quality model performance evaluation
By J. C. Chang and S. R. Hanna

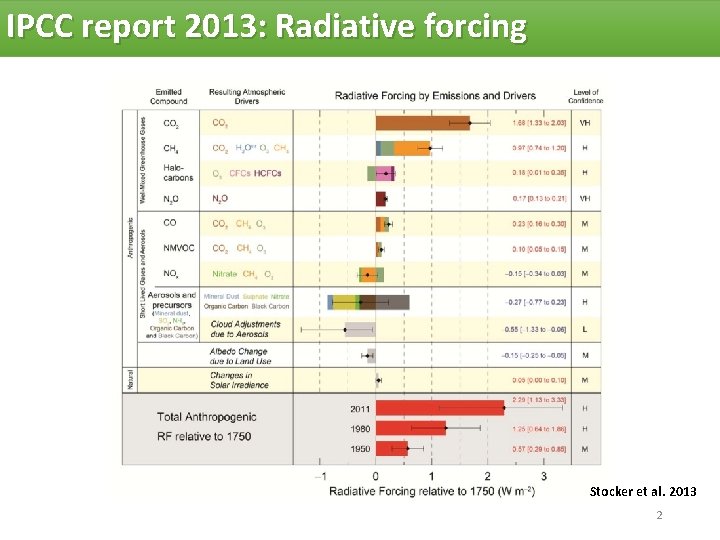

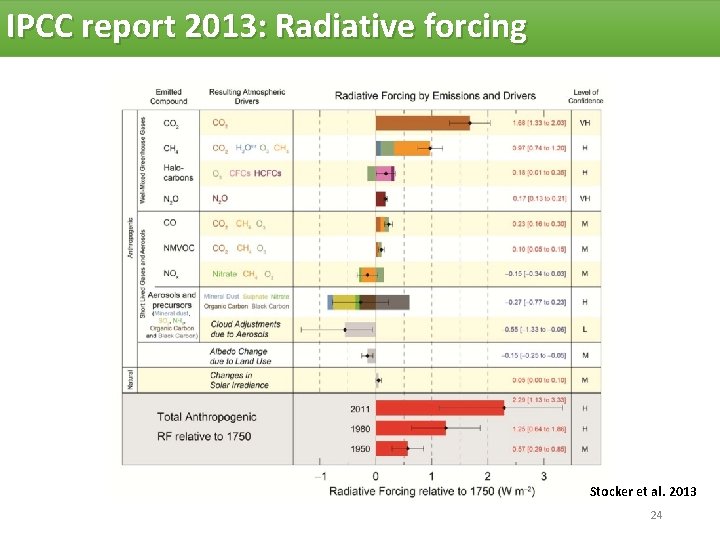

IPCC report 2013: Radiative forcing (Stocker et al. 2013)

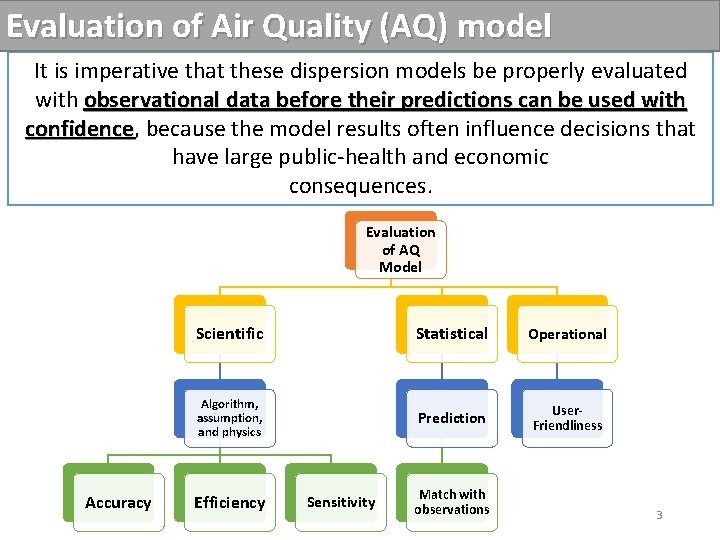

Evaluation of Air Quality (AQ) model
It is imperative that these dispersion models be properly evaluated with observational data before their predictions can be used with confidence, because the model results often influence decisions that have large public-health and economic consequences.

Evaluation of an AQ model:
• Scientific: algorithms, assumptions, and physics (accuracy, efficiency, sensitivity)
• Statistical: match of predictions with observations
• Operational: user-friendliness

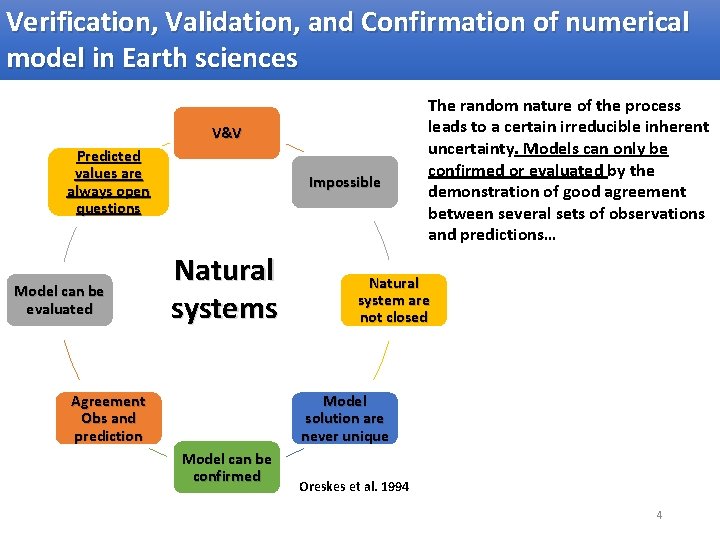

Verification, Validation, and Confirmation of numerical models in Earth sciences (Oreskes et al. 1994)
• Verification and validation (V&V) of models of natural systems is impossible: natural systems are not closed, model solutions are never unique, and predicted values are always open to question.
• The random nature of the process leads to a certain irreducible inherent uncertainty.
• A model can be confirmed or evaluated, but only by the demonstration of good agreement between several sets of observations and predictions.

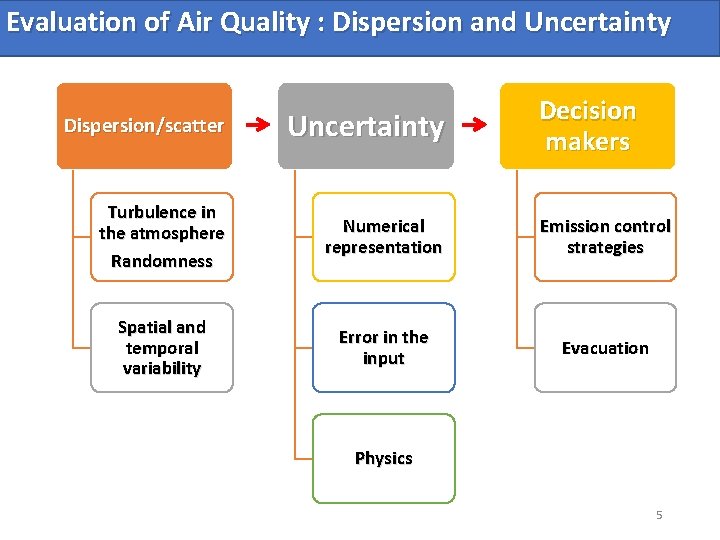

Evaluation of Air Quality: Dispersion and Uncertainty
• Dispersion/scatter: turbulence in the atmosphere, randomness, spatial and temporal variability
• Uncertainty: numerical representation, error in the input, physics
• Decision makers: emission control strategies, evacuation

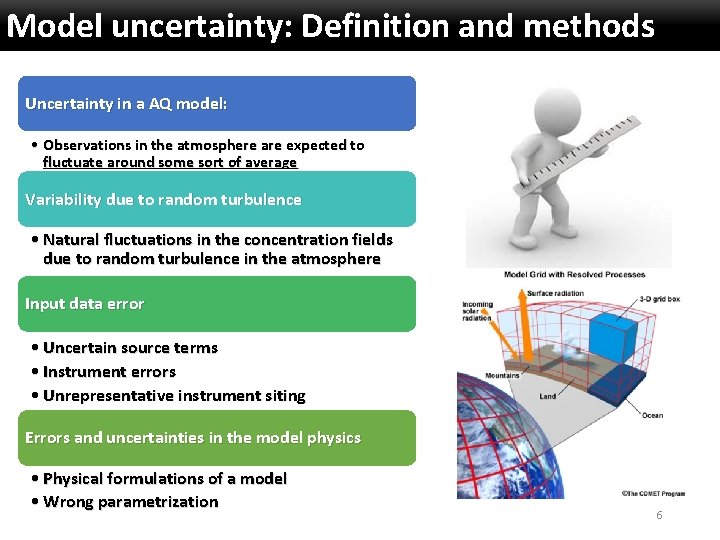

Model uncertainty: Definition and methods
Uncertainty in an AQ model:
• Variability due to random turbulence
  - Observations in the atmosphere are expected to fluctuate around some sort of average
  - Natural fluctuations in the concentration fields due to random turbulence in the atmosphere
• Input data error
  - Uncertain source terms
  - Instrument errors
  - Unrepresentative instrument siting
• Errors and uncertainties in the model physics
  - Physical formulations of a model
  - Wrong parameterization

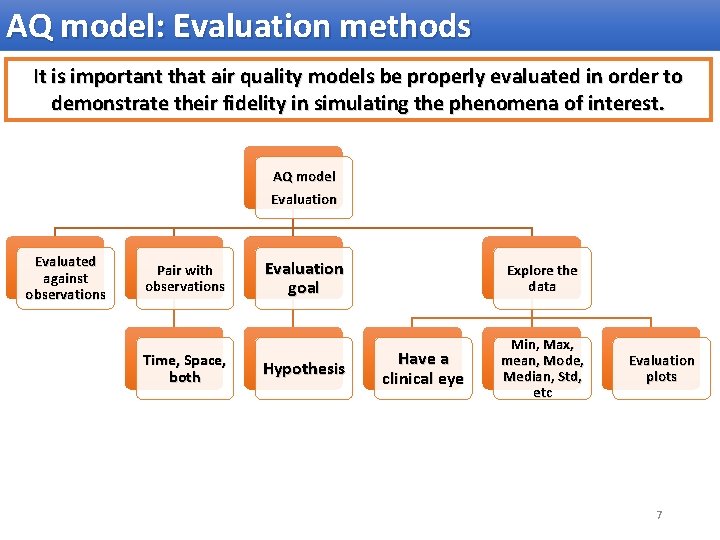

AQ model: Evaluation methods
It is important that air quality models be properly evaluated in order to demonstrate their fidelity in simulating the phenomena of interest.
• Evaluation goal: state the hypothesis.
• Evaluate against observations: pair predictions with observations in time, in space, or in both.
• Explore the data: min, max, mean, mode, median, standard deviation, etc. Have a clinical eye.
• Evaluation plots.

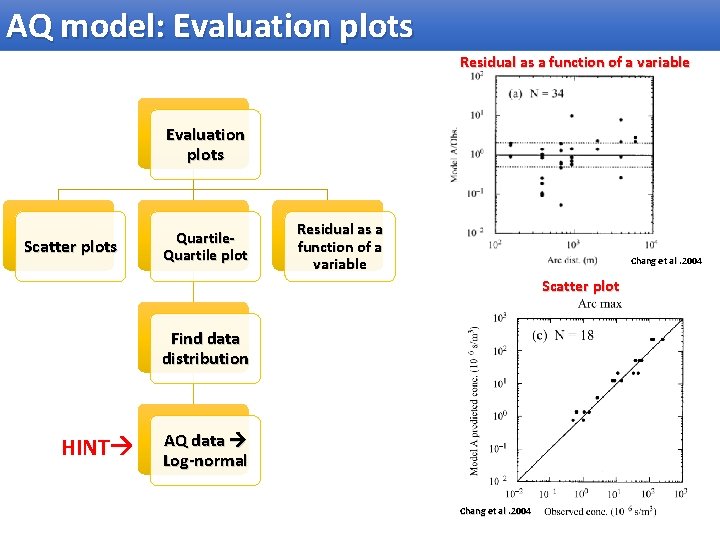

AQ model: Evaluation plots (Chang and Hanna 2004)
• Scatter plots
• Quantile-quantile plots
• Residuals as a function of a variable
Find the data distribution. Hint: AQ data are log-normal.

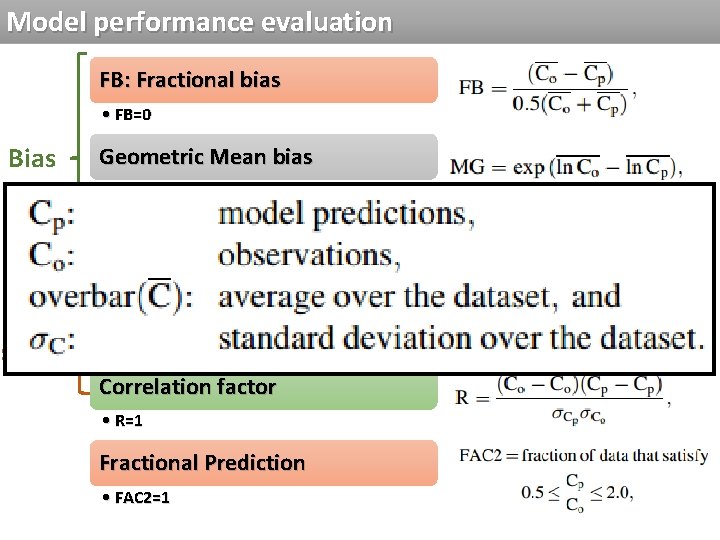

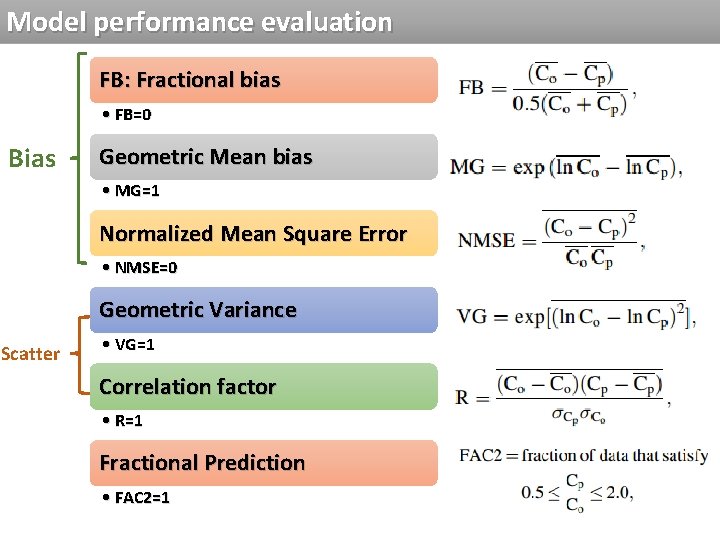

Model performance evaluation
Values for a perfect model:
• Bias
  - Fractional bias: FB = 0
  - Geometric mean bias: MG = 1
• Scatter
  - Normalized mean square error: NMSE = 0
  - Geometric variance: VG = 1
• Correlation coefficient: R = 1
• Fraction of predictions within a factor of two of the observations: FAC2 = 1
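The measures listed on this slide can be sketched in a few lines of NumPy. The formulas below follow the usual definitions for paired observed (`obs`) and predicted (`pred`) values as I understand them from Chang and Hanna (2004); the function name `performance_measures` and the sample arrays are illustrative assumptions, not part of the original slides.

```python
import numpy as np

def performance_measures(obs, pred):
    """Return FB, MG, NMSE, VG, R and FAC2 for paired, strictly positive data."""
    co = np.asarray(obs, dtype=float)
    cp = np.asarray(pred, dtype=float)
    ln_diff = np.log(co) - np.log(cp)   # needs co > 0 and cp > 0
    ratio = cp / co
    return {
        # Fractional bias: difference of the means over their average
        "FB": (co.mean() - cp.mean()) / (0.5 * (co.mean() + cp.mean())),
        # Geometric mean bias
        "MG": float(np.exp(ln_diff.mean())),
        # Normalized mean square error
        "NMSE": float(np.mean((co - cp) ** 2) / (co.mean() * cp.mean())),
        # Geometric variance
        "VG": float(np.exp(np.mean(ln_diff ** 2))),
        # Linear correlation coefficient
        "R": float(np.corrcoef(co, cp)[0, 1]),
        # Fraction of predictions within a factor of two of the observations
        "FAC2": float(np.mean((ratio >= 0.5) & (ratio <= 2.0))),
    }

# Illustrative (made-up) values: a perfect model and one that doubles everything
obs = np.array([0.10, 0.20, 0.40, 0.80])
perfect = performance_measures(obs, obs)
doubled = performance_measures(obs, 2.0 * obs)
```

With `pred` equal to `obs`, every measure takes its perfect-model value. Doubling the predictions leaves R at 1 while FB and MG move away from 0 and 1, which is one reason to look at several measures together.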


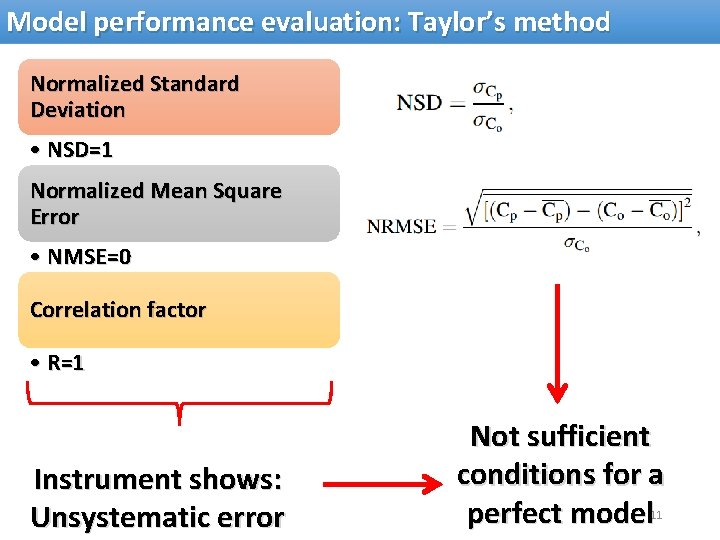

Model performance evaluation: Taylor’s method
Values for a perfect model:
• Normalized standard deviation: NSD = 1
• Normalized mean square error: NMSE = 0
• Correlation coefficient: R = 1
The diagram shows unsystematic error. These values are not sufficient conditions for a perfect model.
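A minimal sketch of why these values are not sufficient, assuming the standard Taylor-diagram quantities: NSD as the ratio of predicted to observed standard deviation, R as the linear correlation, and a centered (bias-removed) RMS difference normalized by the observed standard deviation. The function name and the sample data are illustrative assumptions.

```python
import numpy as np

def taylor_statistics(obs, pred):
    """Return NSD, R and the normalized centered RMS difference."""
    co = np.asarray(obs, dtype=float)
    cp = np.asarray(pred, dtype=float)
    sigma_o = co.std()
    sigma_p = cp.std()
    r = float(np.corrcoef(co, cp)[0, 1])
    # Centered RMS difference: the means are removed first, so a constant
    # (systematic) bias does not contribute to it.
    crmsd = np.sqrt(np.mean(((cp - cp.mean()) - (co - co.mean())) ** 2))
    return {
        "NSD": float(sigma_p / sigma_o),
        "R": r,
        "CRMSD_norm": float(crmsd / sigma_o),
    }

# Made-up data: predictions equal the observations plus a constant offset
obs = np.array([0.10, 0.15, 0.30, 0.45])
biased = taylor_statistics(obs, obs + 0.5)
```

Here the biased model still returns NSD = 1, R = 1 and a centered difference of 0, so a bias measure such as FB or MG is needed alongside the diagram.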

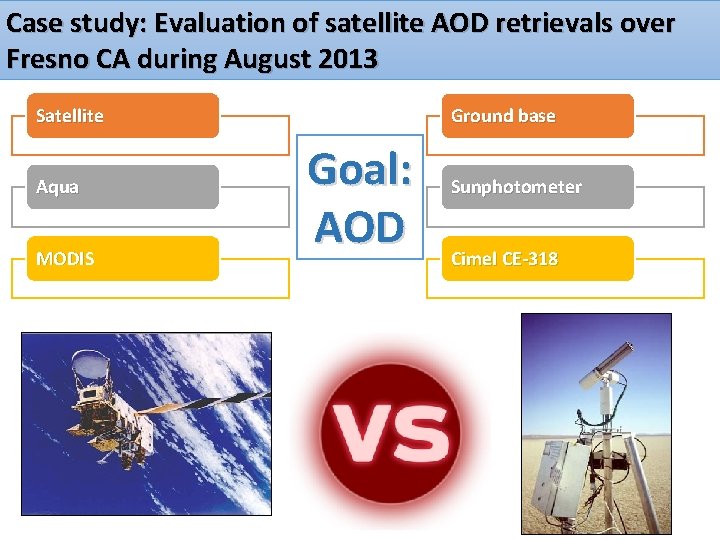

Case study: Evaluation of satellite AOD retrievals over Fresno, CA during August 2013
• Goal: AOD
• Satellite: Aqua MODIS
• Ground-based: Cimel CE-318 sunphotometer

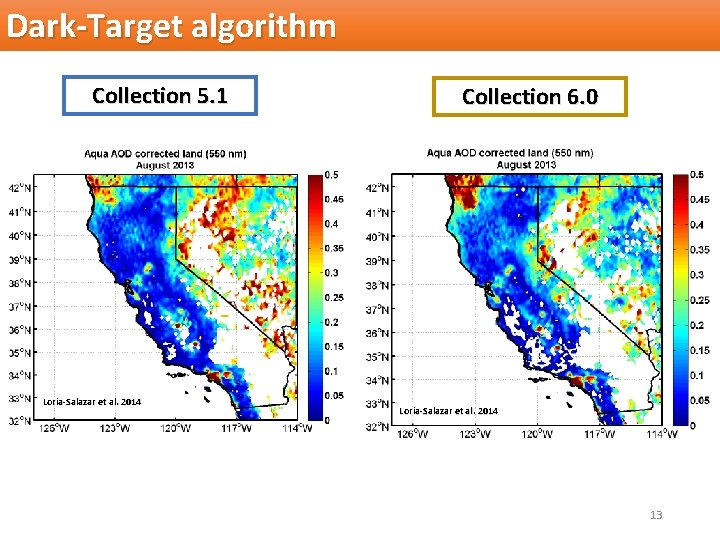

Dark-Target algorithm
Panels: Collection 5.1 and Collection 6.0 (Loria-Salazar et al. 2014)

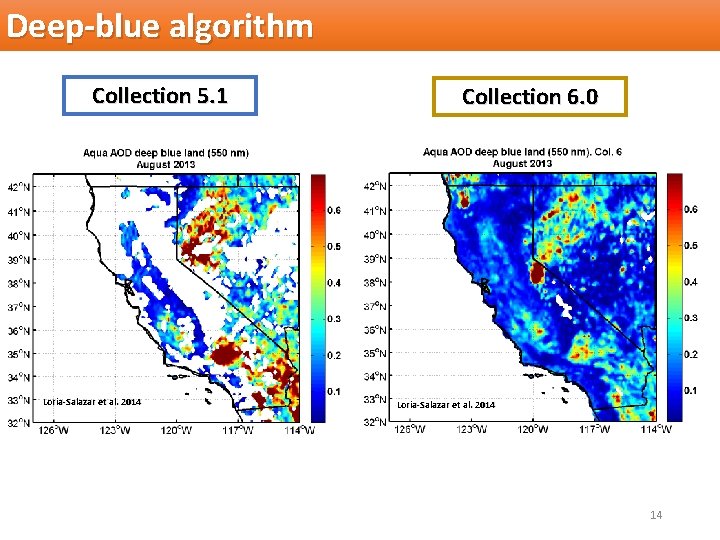

Deep-Blue algorithm
Panels: Collection 5.1 and Collection 6.0 (Loria-Salazar et al. 2014)

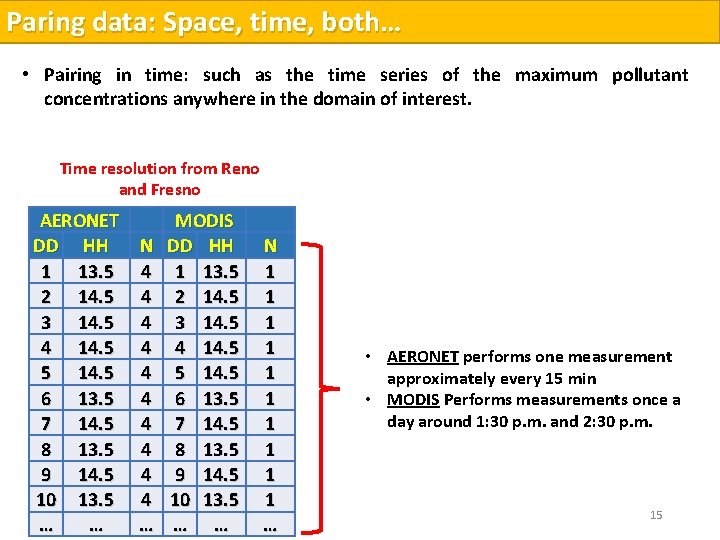

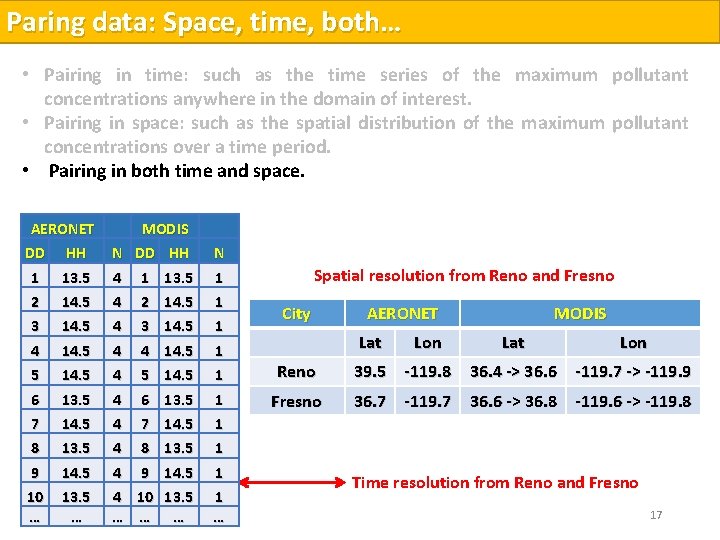

Pairing data: Space, time, both…
• Pairing in time: such as the time series of the maximum pollutant concentrations anywhere in the domain of interest.
• Pairing in space: such as the spatial distribution of the maximum pollutant concentrations over a time period.
• Pairing in both time and space.

Time resolution from Reno and Fresno (DD: day, HH: hour of day, N: number of measurements)

| AERONET DD | AERONET HH | AERONET N | MODIS DD | MODIS HH | MODIS N |
|---|---|---|---|---|---|
| 1 | 13.5 | 4 | 1 | 13.5 | 1 |
| 2 | 14.5 | 4 | 2 | 14.5 | 1 |
| 3 | 14.5 | 4 | 3 | 14.5 | 1 |
| 4 | 14.5 | 4 | 4 | 14.5 | 1 |
| 5 | 14.5 | 4 | 5 | 14.5 | 1 |
| 6 | 13.5 | 4 | 6 | 13.5 | 1 |
| 7 | 14.5 | 4 | 7 | 14.5 | 1 |
| 8 | 13.5 | 4 | 8 | 13.5 | 1 |
| 9 | 14.5 | 4 | 9 | 14.5 | 1 |
| 10 | 13.5 | 4 | 10 | 13.5 | 1 |
| … | … | … | … | … | … |

• AERONET performs one measurement approximately every 15 min.
• MODIS performs one measurement per day, at around 1:30 p.m. or 2:30 p.m.
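Pairing in time can be sketched as selecting the ground-based measurements that fall inside a window around the satellite overpass and averaging them. The window half-width of 0.5 h, the function name `pair_in_time`, and the synthetic 15-minute series below are assumptions for illustration; the slides do not state which window was used.

```python
import numpy as np

def pair_in_time(ground_hours, ground_values, overpass_hour, half_window=0.5):
    """Average the ground measurements within +/- half_window hours of the overpass.

    Returns (number of measurements used, their mean); the mean is NaN if none match.
    """
    hours = np.asarray(ground_hours, dtype=float)
    values = np.asarray(ground_values, dtype=float)
    mask = np.abs(hours - overpass_hour) <= half_window
    n = int(mask.sum())
    mean = float(values[mask].mean()) if n else float("nan")
    return n, mean

# Synthetic ground series: one value every 15 min from 12:00 to 15:45
hours = np.arange(12.0, 16.0, 0.25)
aod = np.linspace(0.10, 0.25, hours.size)

# Overpass at 13.5 (1:30 p.m.), as in the HH column of the table
n, mean_aod = pair_in_time(hours, aod, overpass_hour=13.5, half_window=0.5)
```

The returned count plays the role of the N column: several ground measurements are reduced to one value that is compared with the single satellite retrieval for that day.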

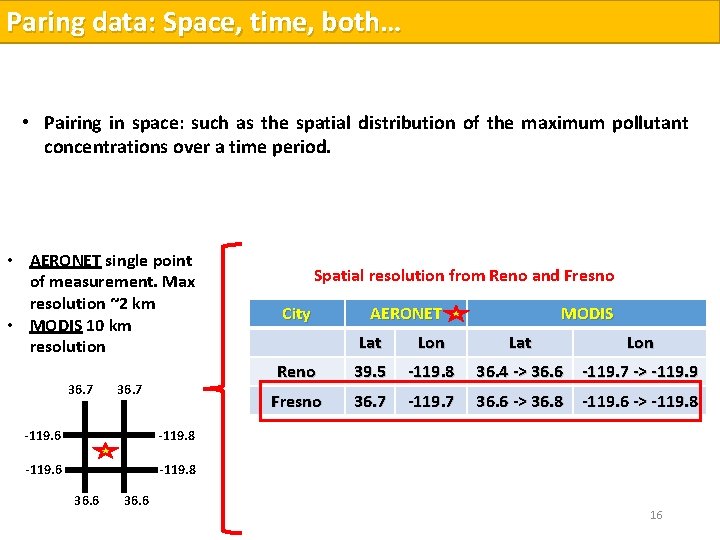

Pairing data: Space, time, both…
• Pairing in time: such as the time series of the maximum pollutant concentrations anywhere in the domain of interest.
• Pairing in space: such as the spatial distribution of the maximum pollutant concentrations over a time period.
• Pairing in both time and space.
• AERONET: single point of measurement. Max resolution ~2 km.
• MODIS: 10 km resolution.

Spatial resolution from Reno and Fresno

| City | AERONET Lat | AERONET Lon | MODIS Lat | MODIS Lon |
|---|---|---|---|---|
| Reno | 39.5 | -119.8 | 39.4 -> 39.6 | -119.7 -> -119.9 |
| Fresno | 36.7 | -119.7 | 36.6 -> 36.8 | -119.6 -> -119.8 |
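Pairing in space can be sketched as keeping the satellite pixels whose centers fall inside a latitude/longitude box around the ground station, matching the 0.1 degree half-width implied by the table. The function name `pair_in_space`, the small numerical tolerance, and the pixel values are illustrative assumptions.

```python
import numpy as np

def pair_in_space(lat, lon, values, site_lat, site_lon, half_width=0.1):
    """Average the pixels inside a box of +/- half_width degrees around the site.

    Returns (number of pixels used, their mean); the mean is NaN if none match.
    """
    lat = np.asarray(lat, dtype=float)
    lon = np.asarray(lon, dtype=float)
    values = np.asarray(values, dtype=float)
    tol = 1e-9  # guards against floating-point round-off at the box edges
    mask = (np.abs(lat - site_lat) <= half_width + tol) & (
        np.abs(lon - site_lon) <= half_width + tol
    )
    n = int(mask.sum())
    mean = float(values[mask].mean()) if n else float("nan")
    return n, mean

# Made-up pixel centers and AOD values around the Fresno site (36.7, -119.7)
pix_lat = np.array([36.50, 36.65, 36.75, 36.90])
pix_lon = np.array([-119.70, -119.65, -119.75, -119.70])
pix_aod = np.array([0.30, 0.20, 0.40, 0.50])

n_pix, box_aod = pair_in_space(pix_lat, pix_lon, pix_aod, 36.7, -119.7)
```

Only the two pixels inside the 36.6 to 36.8 latitude band are averaged; the pixels at 36.50 and 36.90 are excluded.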

Pairing data: Space, time, both…
• Pairing in time: such as the time series of the maximum pollutant concentrations anywhere in the domain of interest.
• Pairing in space: such as the spatial distribution of the maximum pollutant concentrations over a time period.
• Pairing in both time and space.

Time resolution from Reno and Fresno (DD: day, HH: hour of day, N: number of measurements)

| AERONET DD | AERONET HH | AERONET N | MODIS DD | MODIS HH | MODIS N |
|---|---|---|---|---|---|
| 1 | 13.5 | 4 | 1 | 13.5 | 1 |
| 2 | 14.5 | 4 | 2 | 14.5 | 1 |
| 3 | 14.5 | 4 | 3 | 14.5 | 1 |
| 4 | 14.5 | 4 | 4 | 14.5 | 1 |
| 5 | 14.5 | 4 | 5 | 14.5 | 1 |
| 6 | 13.5 | 4 | 6 | 13.5 | 1 |
| 7 | 14.5 | 4 | 7 | 14.5 | 1 |
| 8 | 13.5 | 4 | 8 | 13.5 | 1 |
| 9 | 14.5 | 4 | 9 | 14.5 | 1 |
| 10 | 13.5 | 4 | 10 | 13.5 | 1 |
| … | … | … | … | … | … |

Spatial resolution from Reno and Fresno

| City | AERONET Lat | AERONET Lon | MODIS Lat | MODIS Lon |
|---|---|---|---|---|
| Reno | 39.5 | -119.8 | 39.4 -> 39.6 | -119.7 -> -119.9 |
| Fresno | 36.7 | -119.7 | 36.6 -> 36.8 | -119.6 -> -119.8 |

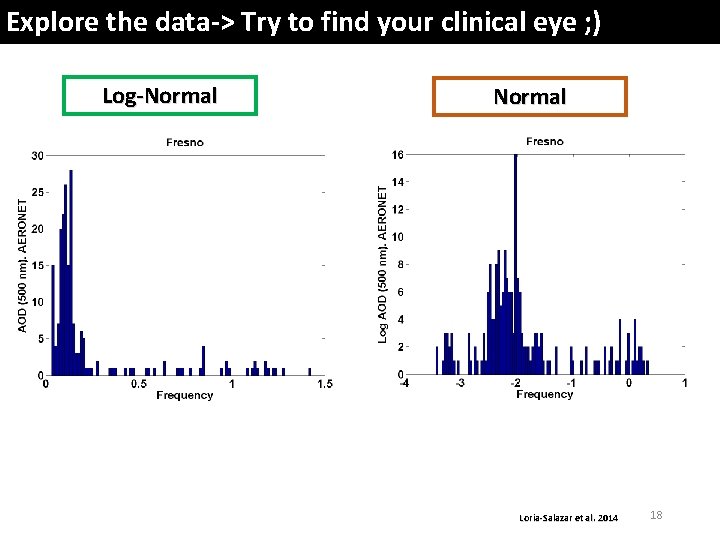

Explore the data: try to find your clinical eye ;)
Distribution: log-normal (Loria-Salazar et al. 2014)

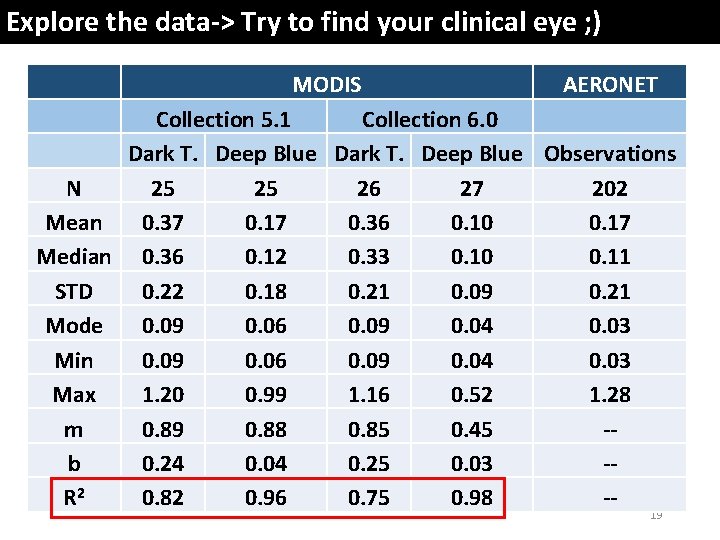

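Explore the data: try to find your clinical eye ;)

| Statistic | MODIS Collection 5.1, Dark Target | MODIS Collection 5.1, Deep Blue | MODIS Collection 6.0, Dark Target | MODIS Collection 6.0, Deep Blue | AERONET observations |
|---|---|---|---|---|---|
| N | 25 | 25 | 26 | 27 | 202 |
| Mean | 0.37 | 0.17 | 0.36 | 0.10 | 0.17 |
| Median | 0.36 | 0.12 | 0.33 | 0.10 | 0.11 |
| STD | 0.22 | 0.18 | 0.21 | 0.09 | 0.21 |
| Mode | 0.09 | 0.06 | 0.09 | 0.04 | 0.03 |
| Min | 0.09 | 0.06 | 0.09 | 0.04 | 0.03 |
| Max | 1.20 | 0.99 | 1.16 | 0.52 | 1.28 |
| m | 0.89 | 0.88 | 0.85 | 0.45 | - |
| b | 0.24 | 0.04 | 0.25 | 0.03 | - |
| R² | 0.82 | 0.96 | 0.75 | 0.98 | - |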
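A table of this kind can be produced with a short exploratory routine. The sketch below assumes that m and b are the slope and intercept of a least-squares line of the retrievals against the paired observations, and that R² is the squared linear correlation; the slides do not define them, and the function names and data are illustrative. The mode is left out because it is not well defined for continuous values without binning.

```python
import numpy as np

def summary_statistics(values):
    """Basic descriptive statistics of one data set."""
    v = np.asarray(values, dtype=float)
    return {
        "N": int(v.size),
        "Mean": float(v.mean()),
        "Median": float(np.median(v)),
        "STD": float(v.std(ddof=1)),  # sample standard deviation
        "Min": float(v.min()),
        "Max": float(v.max()),
    }

def linear_fit(obs, pred):
    """Slope m, intercept b and R^2 of pred = m * obs + b (least squares)."""
    co = np.asarray(obs, dtype=float)
    cp = np.asarray(pred, dtype=float)
    m, b = np.polyfit(co, cp, 1)
    r2 = np.corrcoef(co, cp)[0, 1] ** 2
    return float(m), float(b), float(r2)

# Made-up paired data: retrievals that overestimate by a fixed offset
obs = np.array([0.10, 0.20, 0.30, 0.40])
retrieved = 0.9 * obs + 0.2

stats_obs = summary_statistics(obs)
m, b, r2 = linear_fit(obs, retrieved)
```

A slope near 1 with a clearly nonzero intercept, as in this synthetic case, points to an offset rather than a scaling problem.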

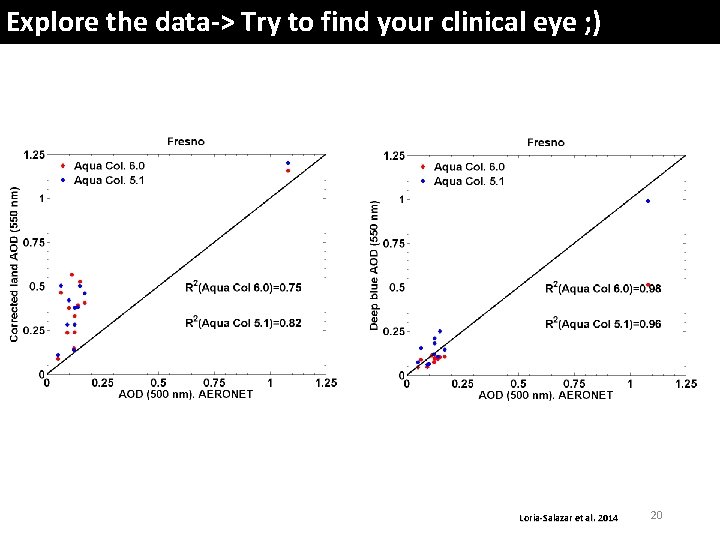

Explore the data: try to find your clinical eye ;) (Loria-Salazar et al. 2014)

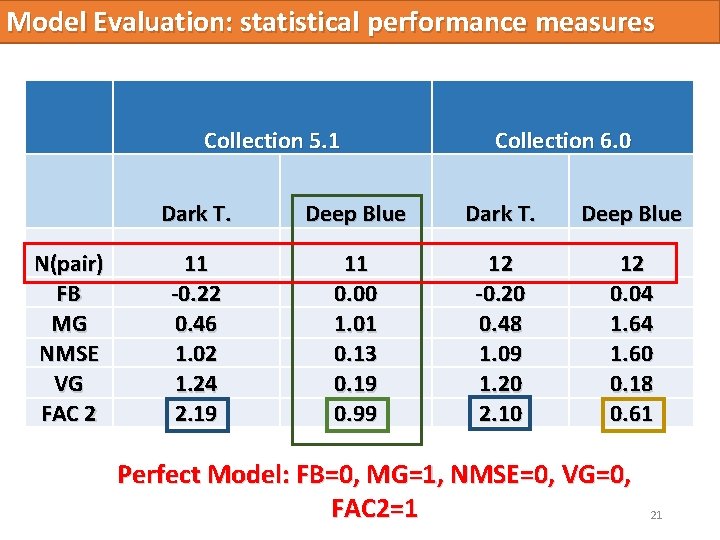

Model Evaluation: statistical performance measures
Measures: N(pair), FB, MG, NMSE, VG, FAC2
• Collection 5.1, Dark Target: 11, -0.22, 0.46, 1.02, 1.24, 2.19
• Collection 5.1, Deep Blue: 11, 0.00, 1.01, 0.13, 0.19, 0.99
• Collection 6.0, Dark Target: 12, -0.20, 0.48, 1.09, 1.20, 2.10
• Collection 6.0, Deep Blue: 12, 0.04, 1.60, 0.18, 0.61
Perfect model: FB = 0, MG = 1, NMSE = 0, VG = 1, FAC2 = 1

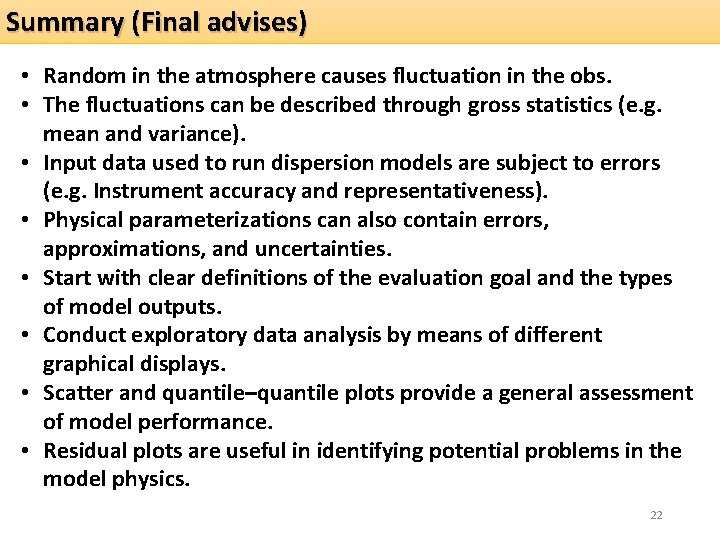

Summary (final advice)
• Random turbulence in the atmosphere causes fluctuations in the observations.
• The fluctuations can be described through gross statistics (e.g. mean and variance).
• Input data used to run dispersion models are subject to errors (e.g. instrument accuracy and representativeness).
• Physical parameterizations can also contain errors, approximations, and uncertainties.
• Start with clear definitions of the evaluation goal and the types of model outputs.
• Conduct exploratory data analysis by means of different graphical displays.
• Scatter and quantile-quantile plots provide a general assessment of model performance.
• Residual plots are useful in identifying potential problems in the model physics.

Summary (final advice, continued)
• FAC2 is the more robust measure, because it is not sensitive to the distribution of the data.
• For dispersion modeling, where concentrations or dosages often vary by several orders of magnitude, MG and VG are better than FB and NMSE.
• A lower threshold is suggested when calculating MG and VG, because these two measures are strongly influenced by very low values.
• R is not a very robust measure.
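The effect of a lower threshold on MG and VG can be illustrated with a small sketch. Here the threshold is applied by raising every value below it to the threshold itself before taking logarithms; that particular handling, the function name `mg_vg`, and the sample values are assumptions for illustration.

```python
import numpy as np

def mg_vg(obs, pred, threshold=None):
    """Geometric mean bias (MG) and geometric variance (VG) of paired data.

    If a threshold is given, values below it are raised to the threshold first.
    """
    co = np.asarray(obs, dtype=float)
    cp = np.asarray(pred, dtype=float)
    if threshold is not None:
        co = np.maximum(co, threshold)
        cp = np.maximum(cp, threshold)
    ln_diff = np.log(co) - np.log(cp)
    mg = float(np.exp(ln_diff.mean()))
    vg = float(np.exp(np.mean(ln_diff ** 2)))
    return mg, vg

# Made-up data: three perfect pairs and one pair with a very low observation
obs = np.array([1.0, 2.0, 4.0, 0.001])
pred = np.array([1.0, 2.0, 4.0, 0.1])

mg_raw, vg_raw = mg_vg(obs, pred)                 # dominated by the low pair
mg_thr, vg_thr = mg_vg(obs, pred, threshold=0.1)  # low values raised to 0.1
```

Without the threshold, the single low pair drags MG far below 1 and inflates VG by more than two orders of magnitude, even though the other three pairs match exactly.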


References
• Chang, J. C., and S. R. Hanna (2004), Air quality model performance evaluation, Meteorol. Atmos. Phys., 87, 167-196, doi:10.1007/s00703-003-0070-7.
• Loria-Salazar, M., H. Holmes, and P. Arnott (2014), Spatial Investigation of Columnar AOD and Near-Surface PM2.5 Concentrations During the 2013 American and Yosemite Rim Fires, poster presentation, AGU Fall Meeting, San Francisco, California.
• Oreskes, N., K. Shrader-Frechette, and K. Belitz (1994), Verification, validation, and confirmation of numerical models in the earth sciences, Science, 263, 641-646.
• Stocker, T. F., D. Qin, G.-K. Plattner, M. Tignor, S. K. Allen, J. Boschung, A. Nauels, Y. Xia, et al. (2013), IPCC, 2013: Climate Change 2013: The Physical Science Basis. Contribution of Working Group I to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change, Cambridge University Press, Cambridge, United Kingdom and New York, NY, USA, 1535 pp, doi:10.1017/CBO9781107415324.

Time for fun: question session
1. How can you apply this to your own work?
2. What is the distribution of AQ data?
3. For the evaluation equations: should I use the median or the mean for AQ models?
4. For a normally distributed data set: should I use the median or the mean for AQ models?
5. What are the repercussions for society if you do not evaluate your results correctly?
6. How can we improve this method? Suggestions?

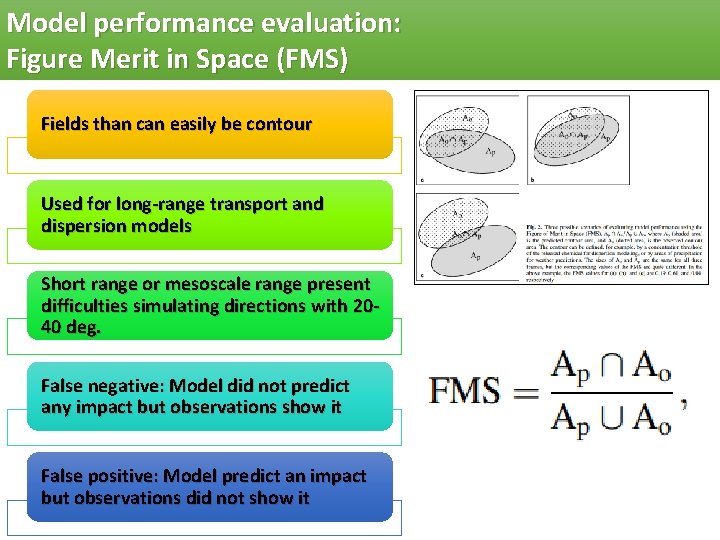

Model performance evaluation: Figure of Merit in Space (FMS)
• Applies to fields that can easily be contoured.
• Used for long-range transport and dispersion models.
• At short range or mesoscale range it is difficult to simulate directions to within 20-40 deg.
• False negative: the model did not predict any impact, but the observations show one.
• False positive: the model predicts an impact, but the observations do not show one.
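As a rough sketch, the FMS is commonly computed as the overlap of the predicted and observed areas above a threshold divided by their union, and the false negative and false positive areas fall out of the same masks. The version below assumes both fields are on the same grid with equal-area cells; the function name, the threshold, and the toy fields are illustrative assumptions.

```python
import numpy as np

def figure_of_merit_in_space(obs_field, pred_field, threshold):
    """Return (FMS, false-negative cell count, false-positive cell count)."""
    obs_area = np.asarray(obs_field, dtype=float) >= threshold
    pred_area = np.asarray(pred_field, dtype=float) >= threshold
    overlap = int(np.logical_and(obs_area, pred_area).sum())
    union = int(np.logical_or(obs_area, pred_area).sum())
    # Observed impact that the model missed
    false_negative = int(np.logical_and(obs_area, ~pred_area).sum())
    # Predicted impact that was not observed
    false_positive = int(np.logical_and(~obs_area, pred_area).sum())
    fms = overlap / union if union else float("nan")
    return fms, false_negative, false_positive

# Toy 3x3 fields: the predicted plume is shifted one column to the right
observed = np.array([[1.0, 1.0, 0.0],
                     [1.0, 1.0, 0.0],
                     [0.0, 0.0, 0.0]])
predicted = np.array([[0.0, 1.0, 1.0],
                      [0.0, 1.0, 1.0],
                      [0.0, 0.0, 0.0]])

fms, fn, fp = figure_of_merit_in_space(observed, predicted, threshold=0.5)
```

A one-cell shift of an otherwise identical plume already reduces the FMS to one third here, which illustrates why direction errors of a few tens of degrees make this measure hard to satisfy at short range.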
