AI system: current situation and outlook
Presented by Mingliang Zeng, USTC, ADSL
July 17th, 2019
2021/6/15 ADSL

Outline
• Introduction
• AI system: current situation
• AI system: outlook
• AI system: an example

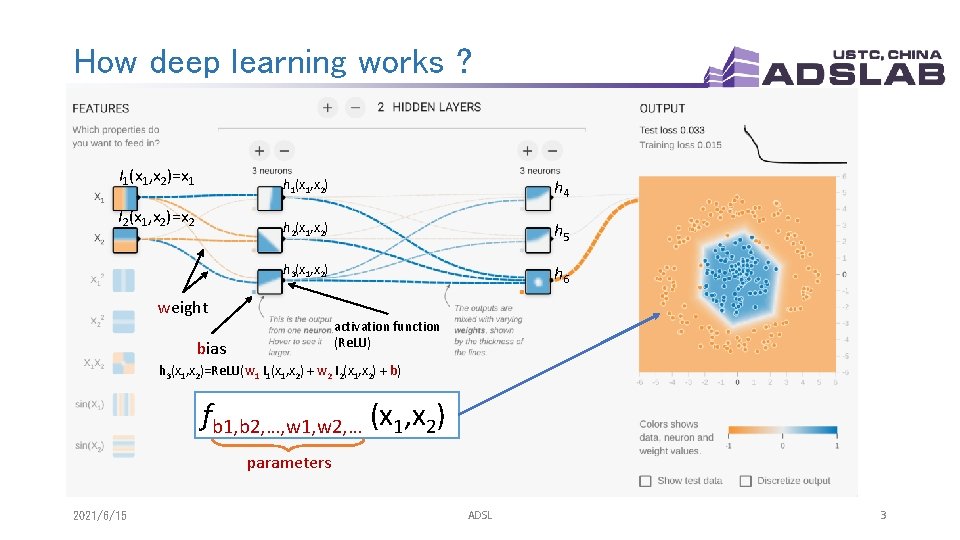

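How does deep learning work?
• A small network with inputs $x_1, x_2$: input nodes $I_1(x_1, x_2) = x_1$ and $I_2(x_1, x_2) = x_2$, a first hidden layer $h_1, h_2, h_3$, and a second hidden layer $h_4, h_5, h_6$.
• Each hidden node combines weights, a bias, and an activation function (ReLU), e.g. $h_3(x_1, x_2) = \mathrm{ReLU}(w_1 I_1(x_1, x_2) + w_2 I_2(x_1, x_2) + b)$.
• The whole network computes $f_{b_1, b_2, \dots, w_1, w_2, \dots}(x_1, x_2)$, where $b_1, b_2, \dots, w_1, w_2, \dots$ are its parameters.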
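
A minimal forward-pass sketch of such a network in plain Python. Assumptions: the 2-3-3 layer sizes are read off the figure, every hidden node uses ReLU, the parameter values in the usage are made up, and the function returns $h_4, h_5, h_6$ because the slide does not show an output layer.

```python
def relu(z):
    return max(0.0, z)

def neuron(inputs, weights, bias):
    """One hidden node: ReLU(w_1*I_1 + w_2*I_2 + ... + b)."""
    return relu(sum(w * i for w, i in zip(weights, inputs)) + bias)

def forward(x1, x2, params):
    """Evaluate a 2-3-3 ReLU network; params holds (weights, bias) per node."""
    inputs = [x1, x2]                                              # I_1 = x_1, I_2 = x_2
    layer1 = [neuron(inputs, w, b) for w, b in params["layer1"]]  # h_1, h_2, h_3
    layer2 = [neuron(layer1, w, b) for w, b in params["layer2"]]  # h_4, h_5, h_6
    return layer2
```

Changing any entry of `params` changes the function the network computes, which is why training is a search over these numbers.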

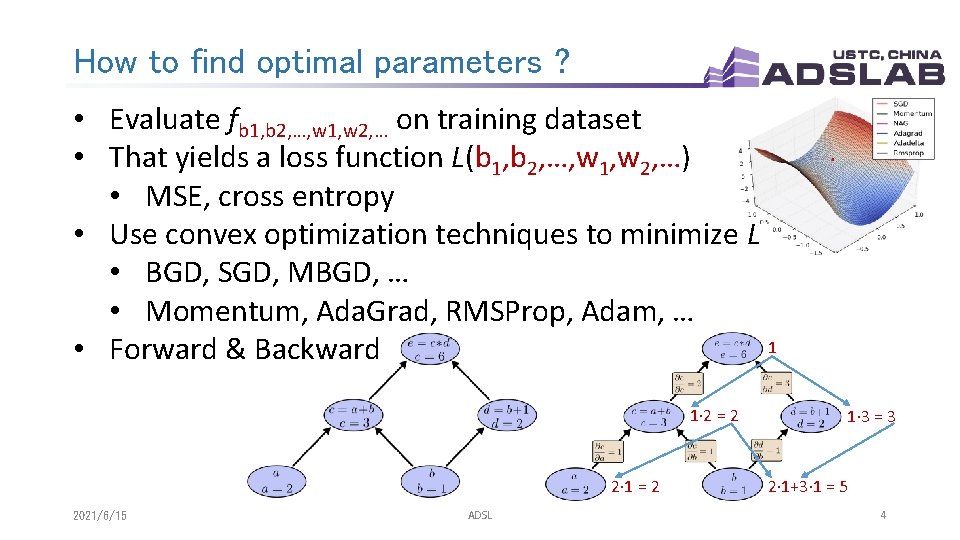

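How to find optimal parameters?
• Evaluate $f_{b_1, b_2, \dots, w_1, w_2, \dots}$ on the training dataset.
• That yields a loss function $L(b_1, b_2, \dots, w_1, w_2, \dots)$, e.g. MSE or cross entropy.
• Minimize $L$ with gradient-based optimization techniques borrowed from convex optimization (even though $L$ is generally non-convex for deep networks):
  • BGD, SGD, MBGD, …
  • Momentum, AdaGrad, RMSProp, Adam, …
• Gradients come from a forward pass followed by a backward pass (chain rule). Worked example from the figure: $1 \cdot 2 = 2$, $2 \cdot 1 = 2$, $1 \cdot 3 = 3$, $2 \cdot 1 + 3 \cdot 1 = 5$, where the last step sums contributions arriving along two paths.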
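
The training loop described above can be sketched in plain Python. This is a hypothetical minimal example: it fits a one-variable linear model (chosen only so the gradients can be written by hand) with mini-batch SGD plus momentum on an MSE loss. The forward pass computes the prediction error; the backward pass applies the chain rule.

```python
import random

def mse_grad(w, b, batch):
    """Return the MSE loss and its gradients dL/dw, dL/db over one mini-batch."""
    n = len(batch)
    loss = gw = gb = 0.0
    for x, y in batch:
        err = (w * x + b) - y      # forward pass: prediction error
        loss += err * err / n
        gw += 2 * err * x / n      # backward pass: dL/dw by the chain rule
        gb += 2 * err / n          # backward pass: dL/db
    return loss, gw, gb

def train_sgd_momentum(data, lr=0.05, beta=0.9, batch_size=4, epochs=200, seed=0):
    """Mini-batch SGD with (heavy-ball) momentum; returns the fitted (w, b)."""
    rng = random.Random(seed)
    data = list(data)
    w = b = 0.0
    vw = vb = 0.0                  # momentum buffers
    for _ in range(epochs):
        rng.shuffle(data)
        for i in range(0, len(data), batch_size):
            _, gw, gb = mse_grad(w, b, data[i:i + batch_size])
            vw = beta * vw + gw
            vb = beta * vb + gb
            w -= lr * vw
            b -= lr * vb
    return w, b
```

With noise-free samples of $y = 2x + 1$, the loop should recover $w \approx 2$, $b \approx 1$.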

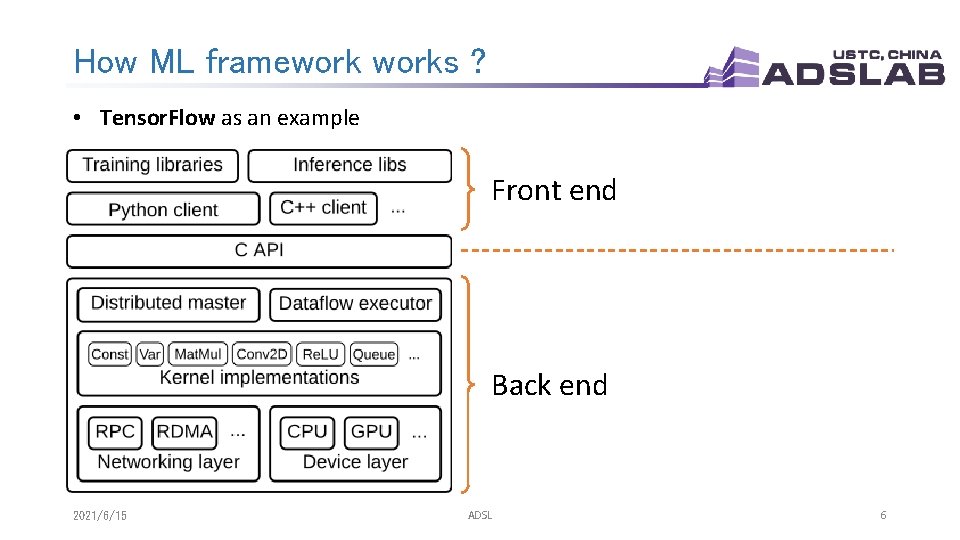

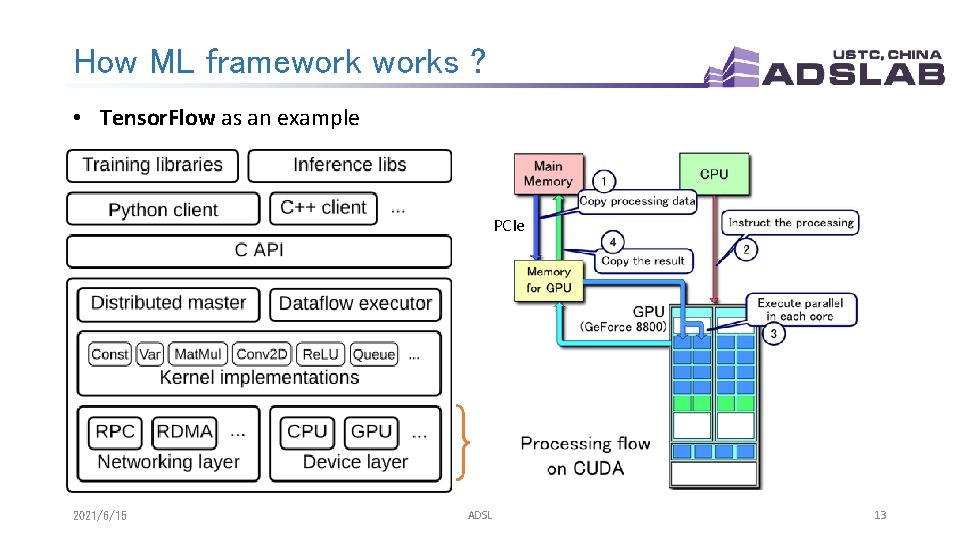

How do ML frameworks work?
• TensorFlow as an example: front end and back end

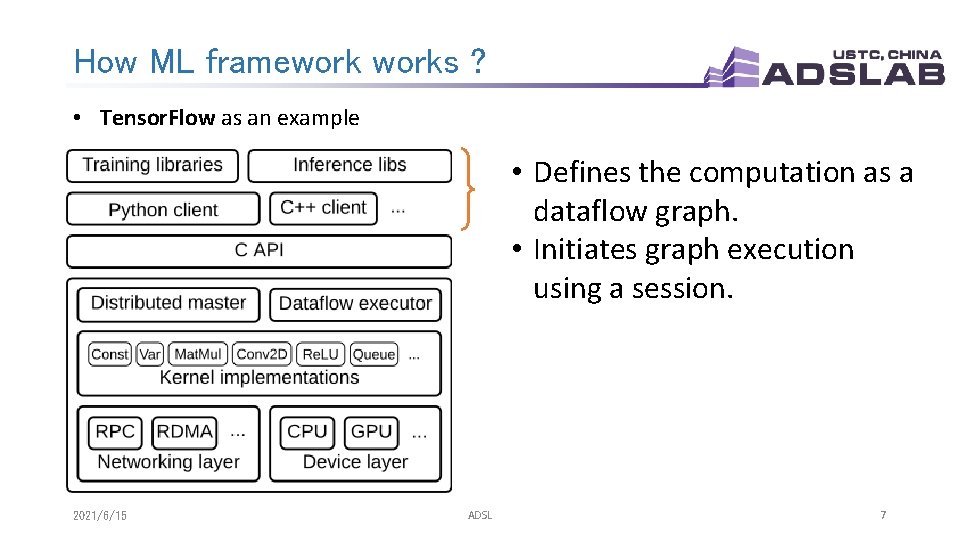

How do ML frameworks work?
• TensorFlow as an example:
  • The client defines the computation as a dataflow graph.
  • It then initiates graph execution using a session.
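
The "define first, run later" model can be illustrated with a toy dataflow graph. This is a hypothetical sketch that only mimics the idea of TensorFlow 1.x graphs and sessions; `Node`, `placeholder`, `add`, `mul`, and `Session.run` are illustrative names, not TensorFlow's API.

```python
class Node:
    """A graph node: an operation plus the nodes that feed it."""
    def __init__(self, op=None, inputs=(), name=None):
        self.op = op              # None marks a placeholder fed at run time
        self.inputs = list(inputs)
        self.name = name

def placeholder(name):
    return Node(name=name)

def add(a, b):
    return Node(lambda x, y: x + y, [a, b])

def mul(a, b):
    return Node(lambda x, y: x * y, [a, b])

class Session:
    """Executes the part of the graph needed for `fetch`, given fed values."""
    def run(self, fetch, feed_dict):
        cache = {}

        def evaluate(node):
            if node in cache:
                return cache[node]
            if node.op is None:
                value = feed_dict[node]
            else:
                value = node.op(*(evaluate(i) for i in node.inputs))
            cache[node] = value
            return value

        return evaluate(fetch)
```

Building `y = add(mul(w, x), b)` computes nothing; only `Session().run(y, {x: 3, w: 2, b: 1})` evaluates the graph, which gives the framework a complete program to optimize before execution.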

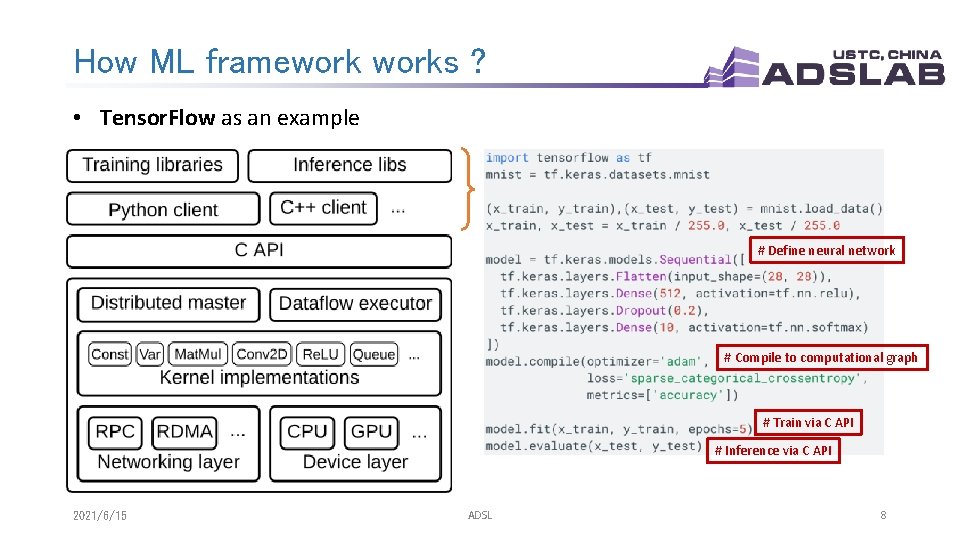

How do ML frameworks work?
• TensorFlow as an example (annotations from the code figure):
  • Define the neural network
  • Compile it to a computational graph
  • Train via the C API
  • Run inference via the C API

How do ML frameworks work?
• TensorFlow as an example: a computational graph (figure)

How do ML frameworks work?
• TensorFlow as an example
• Distributed master:
  • Prunes a specific subgraph from the graph
  • Partitions the subgraph into pieces
  • Distributes the graph pieces to workers
  • Initiates execution of the graph pieces by the workers
• Worker services (one for each task):
  • Schedule the execution of graph operations using kernel implementations
  • Send and receive operation results to and from other worker services
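
A simplified sketch of the master's prune and partition steps. Assumptions: the graph is a dict from node name to input names, each node already has a device assignment, and cross-device edges are replaced by a Send/Recv pair (the idea TensorFlow uses); the naming scheme and functions are hypothetical, not TensorFlow's implementation.

```python
def prune(graph, fetches):
    """Keep only the nodes that the fetched outputs transitively depend on."""
    needed, stack = set(), list(fetches)
    while stack:
        node = stack.pop()
        if node not in needed:
            needed.add(node)
            stack.extend(graph[node])
    return needed

def partition(graph, needed, device_of):
    """Split the pruned graph into one piece per device.

    Each cross-device edge src -> node becomes a send node on src's device
    and a matching recv node on node's device.
    """
    pieces = {}
    for node in needed:
        dev = device_of[node]
        piece = pieces.setdefault(dev, {})
        inputs = []
        for src in graph[node]:
            if device_of[src] == dev:
                inputs.append(src)
            else:
                send = f"send_{src}_to_{dev}"
                recv = f"recv_{src}_on_{dev}"
                pieces.setdefault(device_of[src], {})[send] = [src]
                piece[recv] = []   # filled by the matching send at run time
                inputs.append(recv)
        piece[node] = inputs
    return pieces
```

Each resulting piece is what a worker service would schedule locally; the send/recv nodes are where results cross between workers.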

How do ML frameworks work?
• TensorFlow as an example

How do ML frameworks work?
• TensorFlow as an example
• The runtime contains over 200 standard operations, including mathematical, array manipulation, control flow, and state management operations. Each operation can have kernel implementations optimized for a variety of devices.
• (Figure labels: MatMul, cuBLAS, CUDA Driver, GPU)
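
The "one operation, many kernels" idea can be sketched as a registry keyed by (operation, device). This is a hypothetical illustration: `register_kernel` and `run_op` are made-up names, and the "GPU" kernel simply reuses the CPU code so the example stays runnable.

```python
KERNELS = {}

def register_kernel(op, device):
    """Decorator that records fn as the kernel for (op, device)."""
    def decorator(fn):
        KERNELS[(op, device)] = fn
        return fn
    return decorator

@register_kernel("MatMul", "CPU")
def matmul_cpu(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

@register_kernel("MatMul", "GPU")
def matmul_gpu(a, b):
    # Placeholder: a real GPU kernel would call cuBLAS through the CUDA
    # driver; here it falls back to the CPU code.
    return matmul_cpu(a, b)

def run_op(op, device, *args):
    """Dispatch an operation to the kernel registered for the device."""
    try:
        kernel = KERNELS[(op, device)]
    except KeyError:
        raise NotImplementedError(f"no {op} kernel registered for {device}") from None
    return kernel(*args)
```

A new operation is only usable on a device once someone writes and registers a kernel for it there, which is the gap the following slides discuss.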

How do ML frameworks work?
• TensorFlow as an example (figure label: PCIe)

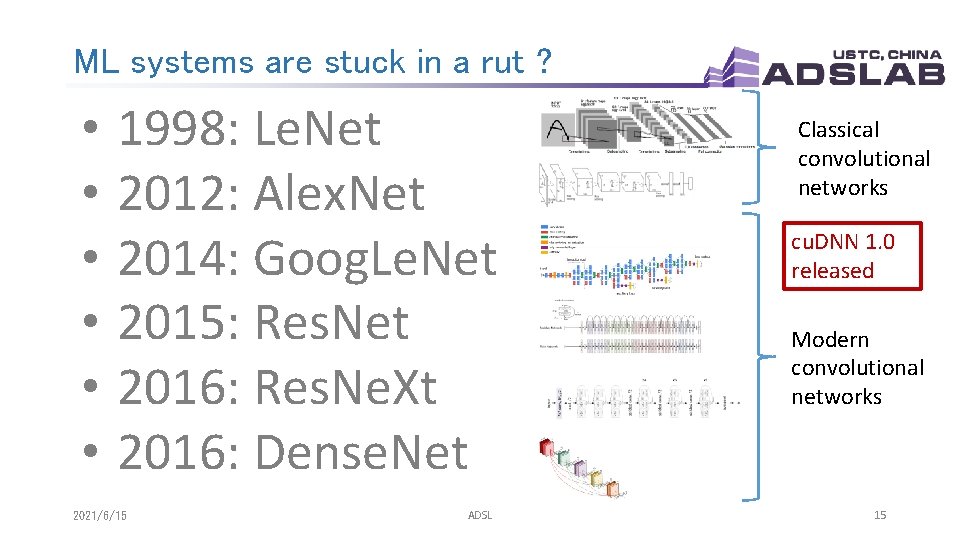

ML systems are stuck in a rut?
• Timeline: 1998: LeNet; 2012: AlexNet; 2014: GoogLeNet; 2015: ResNet; 2016: ResNeXt; 2016: DenseNet
• (Figure labels: classical convolutional networks; cuDNN 1.0 released; modern convolutional networks)

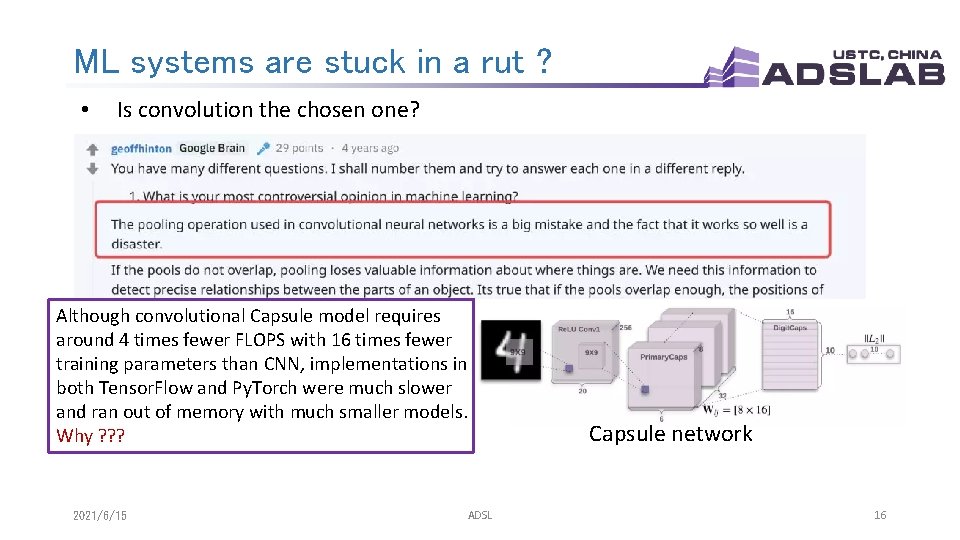

ML systems are stuck in a rut?
• Is convolution the chosen one?
• Although a convolutional Capsule model requires around 4 times fewer FLOPs and 16 times fewer trainable parameters than a CNN, its implementations in both TensorFlow and PyTorch were much slower and ran out of memory with much smaller models. Why?
• (Figure: Capsule network)

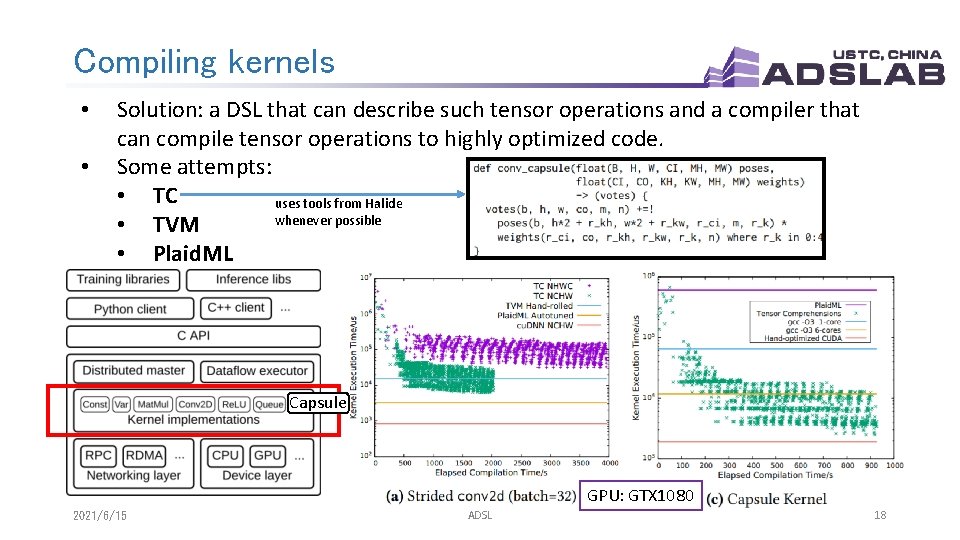

Compiling kernels
• New ideas often require new primitives.
• The code for Conv2D is little more than 7 nested loops around a multiply-accumulate operation, but array layout, vectorization, parallelization, and caching are extremely important for its performance.
• It is hard for researchers to write a highly optimized custom library for each new primitive.
• (Figure: the Conv2D formula is built on scalar multiplication and is served by cuDNN; the Capsule formula is built on matrix multiplication and has no equivalent library.)
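
The "7 nested loops" can be written out directly. A minimal sketch assuming NCHW input, KCRS weights, stride 1, and no padding; it is deliberately naive, and none of the layout, vectorization, parallelization, or caching work that makes cuDNN fast is present.

```python
def conv2d(inp, weight):
    """Direct Conv2D (stride 1, no padding).

    inp:    nested lists of shape [N][C][H][W]
    weight: nested lists of shape [K][C][R][S]
    returns nested lists of shape [N][K][H-R+1][W-S+1]
    """
    N, C, H, W = len(inp), len(inp[0]), len(inp[0][0]), len(inp[0][0][0])
    K, R, S = len(weight), len(weight[0][0]), len(weight[0][0][0])
    P, Q = H - R + 1, W - S + 1
    out = [[[[0.0] * Q for _ in range(P)] for _ in range(K)] for _ in range(N)]
    for n in range(N):                          # 1: batch
        for k in range(K):                      # 2: output channel
            for p in range(P):                  # 3: output row
                for q in range(Q):              # 4: output column
                    for c in range(C):          # 5: input channel
                        for r in range(R):      # 6: kernel row
                            for s in range(S):  # 7: kernel column
                                out[n][k][p][q] += inp[n][c][p + r][q + s] * weight[k][c][r][s]
    return out
```

For a Capsule-style layer the innermost multiply-accumulate would become a small matrix product, which is exactly the kind of change a fixed library cannot absorb.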

Compiling kernels
• Solution: a DSL that can describe such tensor operations, plus a compiler that turns them into highly optimized code.
• Some attempts:
  • TC (Tensor Comprehensions), which uses tools from Halide whenever possible
  • TVM
  • PlaidML
• (Figure: Capsule results; GPU: GTX 1080)
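
A toy version of "DSL plus compiler", written in the spirit of TC or TVM but using none of their syntax. Assumptions: the DSL is an einsum-like index string (indices missing from the output are summed), and "code generation" emits Python source that is `exec`'d as a stand-in for emitting machine code or IR. A real compiler would also choose loop order, tiling, and vectorization; this sketch does none of that.

```python
def _shape(t):
    dims = []
    while isinstance(t, list):
        dims.append(len(t))
        t = t[0]
    return dims

def _zeros(dims):
    if len(dims) == 1:
        return [0] * dims[0]
    return [_zeros(dims[1:]) for _ in range(dims[0])]

def compile_index_expr(spec):
    """Compile e.g. "ij,jk->ik" into a loop-nest kernel over nested lists."""
    lhs, out_idx = spec.replace(" ", "").split("->")
    in_idx = lhs.split(",")
    if not out_idx:
        raise ValueError("this sketch needs at least one output index")
    loop_idx = []                       # loop order: first appearance
    for term in in_idx + [out_idx]:
        for ch in term:
            if ch not in loop_idx:
                loop_idx.append(ch)
    args = [f"t{n}" for n in range(len(in_idx))]

    def access(name, idx):
        return name + "".join(f"[{c}]" for c in idx)

    lines = [f"def kernel(out, sizes, {', '.join(args)}):"]
    for depth, ch in enumerate(loop_idx, start=1):
        lines.append("    " * depth + f"for {ch} in range(sizes[{ch!r}]):")
    product = " * ".join(access(a, idx) for a, idx in zip(args, in_idx))
    lines.append("    " * (len(loop_idx) + 1) + f"{access('out', out_idx)} += {product}")
    source = "\n".join(lines)
    namespace = {}
    exec(source, namespace)             # "code generation" step
    kernel = namespace["kernel"]

    def run(*tensors):
        sizes = {}
        for t, idx in zip(tensors, in_idx):
            for ch, n in zip(idx, _shape(t)):
                sizes[ch] = n
        out = _zeros([sizes[ch] for ch in out_idx])
        kernel(out, sizes, *tensors)
        return out

    run.source = source                 # generated code, for inspection
    return run
```

For example, `compile_index_expr("ij,jk->ik")` yields a matrix multiplication and `compile_index_expr("ij->i")` a row sum, both from one-line descriptions rather than hand-written kernels.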

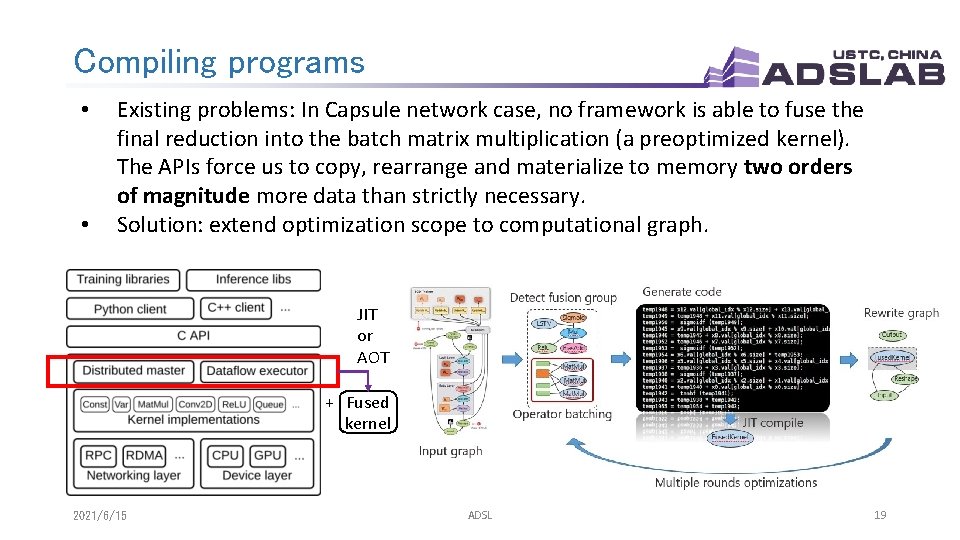

Compiling programs
• Existing problem: in the Capsule network case, no framework is able to fuse the final reduction into the batch matrix multiplication (a pre-optimized kernel). The APIs force us to copy, rearrange, and materialize to memory two orders of magnitude more data than strictly necessary.
• Solution: extend the optimization scope to the computational graph, using JIT or AOT compilation to generate fused kernels.
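
The cost of not fusing can be shown with a batch matrix multiplication followed by a sum over the batch dimension, a simplified stand-in (assumption) for the Capsule case. The unfused version materializes the full `[B][M][N]` product before reducing; the fused version accumulates straight into the `[M][N]` result. Both functions also return how many output elements they materialize.

```python
def batch_matmul(a, b):
    """a: [B][M][K], b: [B][K][N] -> fully materialized [B][M][N]."""
    return [[[sum(a[i][m][k] * b[i][k][n] for k in range(len(b[i])))
              for n in range(len(b[i][0]))]
             for m in range(len(a[i]))]
            for i in range(len(a))]

def unfused(a, b):
    """Pre-built kernel, then a separate reduction over the batch axis."""
    prod = batch_matmul(a, b)                       # B*M*N elements in memory
    B, M, N = len(prod), len(prod[0]), len(prod[0][0])
    out = [[sum(prod[i][m][n] for i in range(B)) for n in range(N)] for m in range(M)]
    return out, B * M * N

def fused(a, b):
    """One loop nest: the batch reduction is folded into the matmul."""
    B, M, K, N = len(a), len(a[0]), len(b[0]), len(b[0][0])
    out = [[0] * N for _ in range(M)]               # only M*N elements
    for i in range(B):
        for m in range(M):
            for n in range(N):
                acc = 0
                for k in range(K):
                    acc += a[i][m][k] * b[i][k][n]
                out[m][n] += acc
    return out, M * N
```

The results are identical; the difference is the B-fold larger intermediate, which is the kind of traffic a graph-level compiler can remove.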

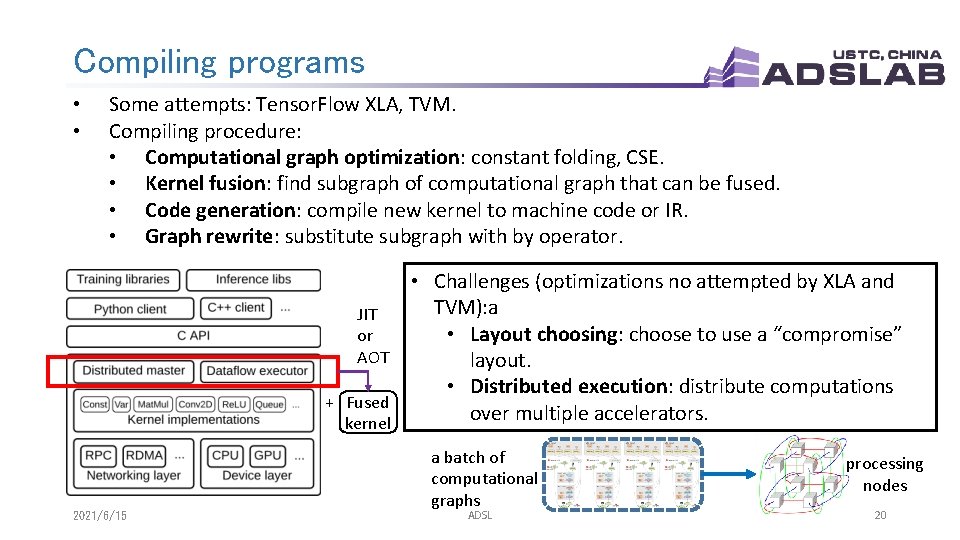

Compiling programs
• Some attempts: TensorFlow XLA, TVM.
• Compiling procedure (JIT or AOT, producing fused kernels):
  • Computational graph optimization: constant folding, common subexpression elimination (CSE).
  • Kernel fusion: find subgraphs of the computational graph that can be fused.
  • Code generation: compile each new kernel to machine code or an IR.
  • Graph rewrite: substitute each fused subgraph with the new operator.
• Challenges (optimizations not attempted by XLA and TVM):
  • Layout choice: choosing a "compromise" layout.
  • Distributed execution: distributing computations over multiple accelerators.
• (Figure labels: a batch of computational graphs; processing nodes)
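
The first step, graph optimization, can be sketched on a tiny expression language. Assumptions: nodes are tuples `("const", v)`, `("var", name)`, or `(op, lhs, rhs)` with `op` in `{"add", "mul"}`; CSE is done by interning structurally identical nodes, and constant folding evaluates ops whose inputs are both constants. `GraphBuilder` is a hypothetical name; XLA and TVM do far more than this.

```python
OPS = {"add": lambda x, y: x + y, "mul": lambda x, y: x * y}

class GraphBuilder:
    """Builds a DAG from an expression tree with constant folding and CSE."""

    def __init__(self):
        self.nodes = []   # node id -> node tuple (children referenced by id)
        self.memo = {}    # node tuple -> node id (this dict is the CSE table)

    def _intern(self, node):
        if node not in self.memo:
            self.memo[node] = len(self.nodes)
            self.nodes.append(node)
        return self.memo[node]

    def build(self, expr):
        kind = expr[0]
        if kind in ("const", "var"):
            return self._intern(expr)
        lhs, rhs = self.build(expr[1]), self.build(expr[2])
        l, r = self.nodes[lhs], self.nodes[rhs]
        if l[0] == "const" and r[0] == "const":          # constant folding
            return self._intern(("const", OPS[kind](l[1], r[1])))
        return self._intern((kind, lhs, rhs))            # CSE via interning
```

For `x*(2+3) + x*(2+3)`, the `2+3` folds to `5` and the two identical products share one `mul` node. The now-unused constants `2` and `3` remain in `nodes`; a dead-code elimination pass would drop them.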

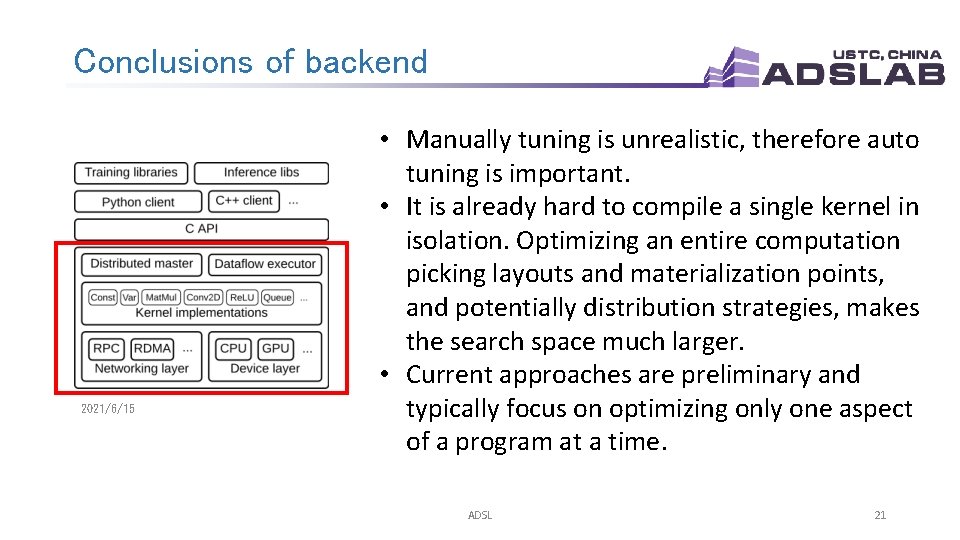

Conclusions on the back end
• Manual tuning is unrealistic, so auto-tuning is important.
• It is already hard to compile a single kernel in isolation. Optimizing an entire computation, picking layouts and materialization points and potentially distribution strategies, makes the search space much larger.
• Current approaches are preliminary and typically optimize only one aspect of a program at a time.
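
The core loop of auto-tuning (enumerate candidate schedules, measure each, keep the best) in a minimal sketch. Assumptions: the only tuning knob is the tile size of a blocked matrix multiplication, and the search is exhaustive over a small candidate list; an optional `cost` function lets a deterministic cost model replace wall-clock measurement.

```python
import time

def blocked_matmul(a, b, tile):
    """Matrix multiply with square tiles over the i, k, j loops."""
    n, m, p = len(a), len(b), len(b[0])
    c = [[0] * p for _ in range(n)]
    for i0 in range(0, n, tile):
        for k0 in range(0, m, tile):
            for j0 in range(0, p, tile):
                for i in range(i0, min(i0 + tile, n)):
                    for k in range(k0, min(k0 + tile, m)):
                        aik = a[i][k]
                        for j in range(j0, min(j0 + tile, p)):
                            c[i][j] += aik * b[k][j]
    return c

def measure(fn, repeats=3):
    """Best-of-N wall-clock time of fn()."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        best = min(best, time.perf_counter() - start)
    return best

def autotune(a, b, candidates, cost=None):
    """Return the candidate tile size with the lowest cost, plus all costs."""
    if cost is None:
        cost = lambda tile: measure(lambda: blocked_matmul(a, b, tile))
    results = {tile: cost(tile) for tile in candidates}
    return min(results, key=results.get), results
```

Pure-Python timings will not show real cache effects; the point is the search structure, which grows much larger once layouts, materialization points, and distribution strategies join the tile sizes.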

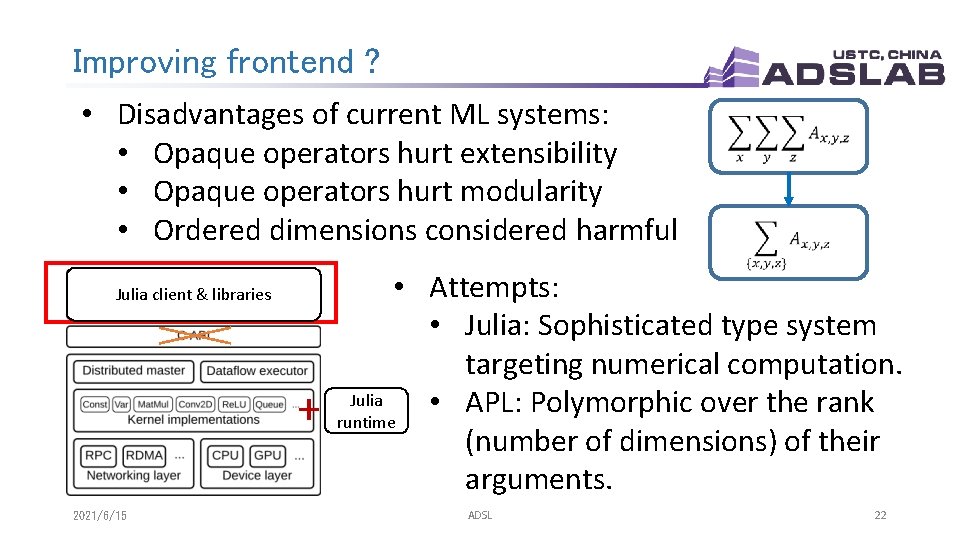

Improving the front end?
• Disadvantages of current ML systems:
  • Opaque operators hurt extensibility
  • Opaque operators hurt modularity
  • Ordered dimensions considered harmful
• Attempts:
  • Julia: a sophisticated type system targeting numerical computation (figure labels: Julia client & libraries; Julia runtime)
  • APL: operators are polymorphic over the rank (number of dimensions) of their arguments
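
APL-style rank polymorphism can be illustrated by lifting a scalar operation so the same function accepts scalars, vectors, matrices, or deeper nestings. A minimal sketch: `rank_poly` is a hypothetical helper, arrays are nested Python lists, a scalar is extended against an array, and two arrays must have matching lengths at every level.

```python
def rank_poly(op):
    """Lift a scalar binary op to nested lists of any rank (APL-style)."""
    def apply(x, y):
        x_arr, y_arr = isinstance(x, list), isinstance(y, list)
        if not x_arr and not y_arr:
            return op(x, y)                        # scalar op scalar
        if x_arr and y_arr:
            if len(x) != len(y):
                raise ValueError("length mismatch")
            return [apply(a, b) for a, b in zip(x, y)]
        if x_arr:
            return [apply(a, y) for a in x]        # extend scalar y
        return [apply(x, b) for b in y]            # extend scalar x
    return apply

add = rank_poly(lambda a, b: a + b)
```

One definition of `add` then works at every rank, instead of one opaque operator per shape.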

Tofu: an AI system example
• Program compiling
• Operator-level parallelism
• Supports very large models
• Distributes computations over multiple accelerators
• Uses TDL, a domain-specific language inspired by Halide
• Partitioning is totally transparent to users
• Targets reducing communication cost

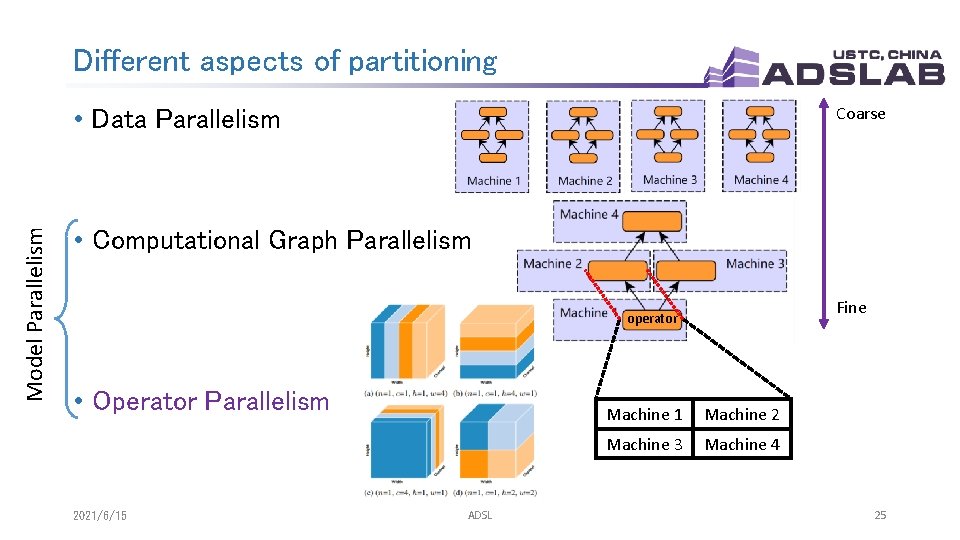

Different aspects of partitioning, from coarse to fine:
• Data parallelism
• Computational graph parallelism
• Operator parallelism
• (Figure labels: model parallelism; Machines 1 to 4)
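
Two of these granularities, applied to a single matrix multiplication `Y = X W`, in a minimal sketch. Assumptions: "workers" are simulated by list slices in one process, data parallelism splits the batch (rows of `X`) and replicates `W`, and operator parallelism splits the operator itself by giving each worker a slice of `W`'s columns. Computational graph parallelism (placing different operators on different machines) is not shown.

```python
def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def data_parallel(x, w, workers):
    """Each worker gets a slice of the batch and a full copy of w."""
    chunk = -(-len(x) // workers)                  # ceiling division
    parts = [matmul(x[i:i + chunk], w) for i in range(0, len(x), chunk)]
    return [row for part in parts for row in part]

def operator_parallel(x, w, workers):
    """Each worker owns some columns of w and produces those output columns."""
    cols = len(w[0])
    chunk = -(-cols // workers)
    parts = [matmul(x, [row[j:j + chunk] for row in w]) for j in range(0, cols, chunk)]
    return [sum((part[i] for part in parts), []) for i in range(len(x))]
```

Operator parallelism never needs a full copy of `w` on any worker, which is why it matters for models too large for a single device.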

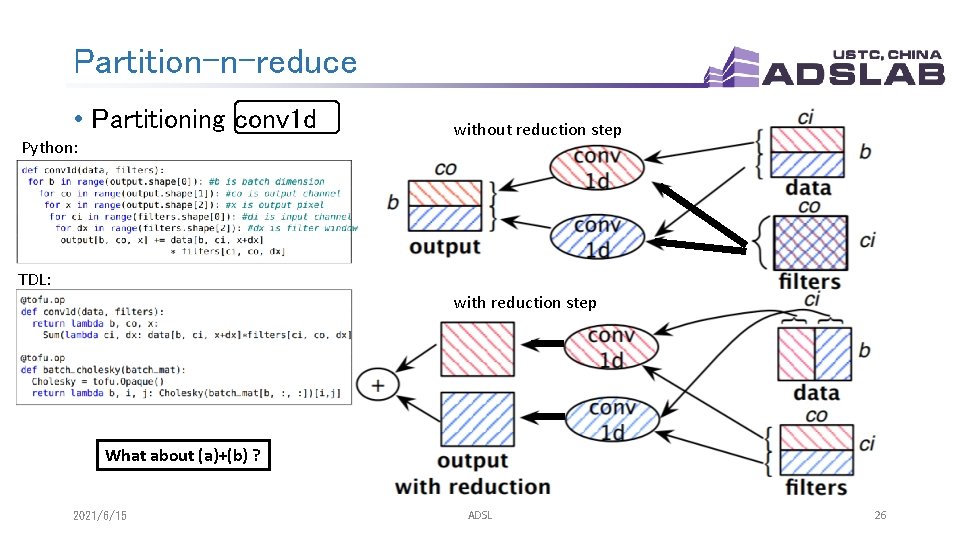

Partition-n-reduce
• Example: partitioning conv1d (figure panels: Python, without a reduction step; TDL, with a reduction step)
• What about (a) + (b)?
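
Two ways to partition conv1d, one without and one with a reduction step, in a minimal sketch. Assumptions: a single output channel, stride 1, no padding, cross-correlation as in DL frameworks, and workers simulated by slices; the mapping onto the figure's panels (a) and (b) is not implied.

```python
def conv1d(x, w):
    """x: [C][L], w: [C][K] -> y: [L-K+1], summed over channels and taps."""
    C, L, K = len(x), len(x[0]), len(w[0])
    return [sum(x[c][i + k] * w[c][k] for c in range(C) for k in range(K))
            for i in range(L - K + 1)]

def partition_output(x, w, workers):
    """Split output positions: each worker reads a halo of K-1 extra inputs;
    results are concatenated, no reduction needed."""
    K = len(w[0])
    n_out = len(x[0]) - K + 1
    chunk = -(-n_out // workers)
    y = []
    for start in range(0, n_out, chunk):
        stop = min(start + chunk, n_out)
        y.extend(conv1d([row[start:stop + K - 1] for row in x], w))
    return y

def partition_channels(x, w, workers):
    """Split input channels: each worker yields a full-length partial sum,
    then a reduction step adds the partials."""
    chunk = -(-len(x) // workers)
    partials = [conv1d(x[c:c + chunk], w[c:c + chunk]) for c in range(0, len(x), chunk)]
    return [sum(vals) for vals in zip(*partials)]
```

Both reproduce the unpartitioned result; they differ in what must move between workers (halo inputs versus full-length partial sums).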

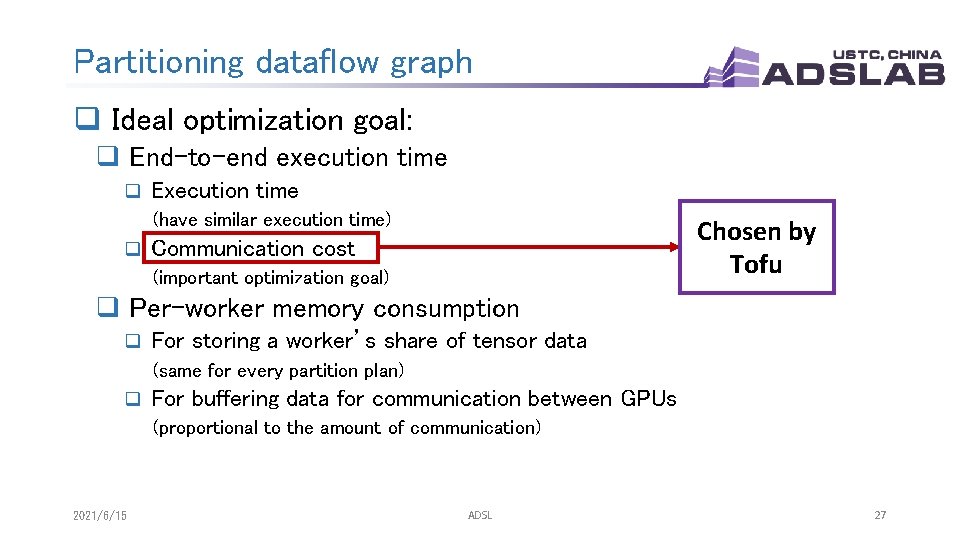

Partitioning dataflow graph
• Ideal optimization goal: end-to-end execution time, which depends on:
  • Execution time of the operators: similar across partition plans
  • Communication cost: the goal chosen by Tofu (an important optimization goal)
• Per-worker memory consumption:
  • For storing a worker's share of tensor data (the same for every partition plan)
  • For buffering data for communication between GPUs (proportional to the amount of communication)

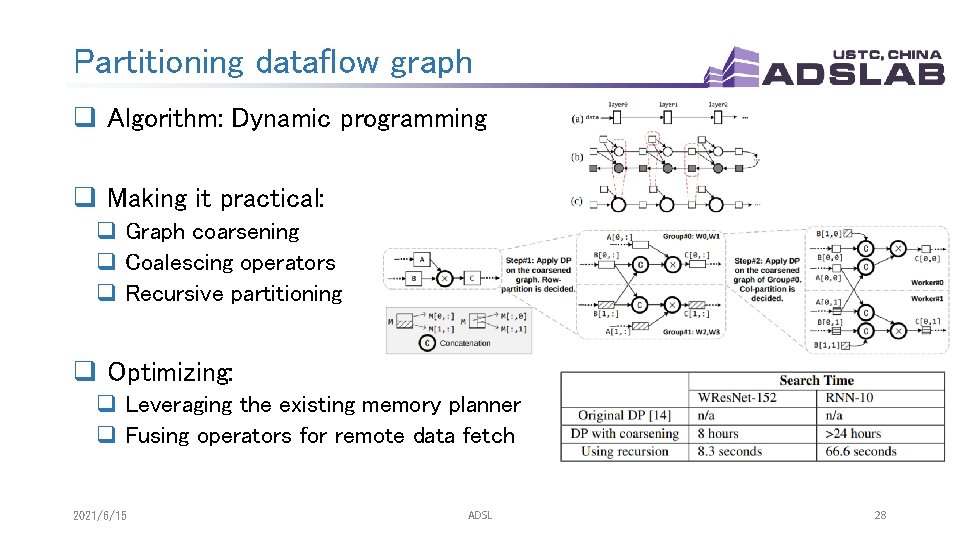

Partitioning dataflow graph
• Algorithm: dynamic programming
• Making it practical:
  • Graph coarsening
  • Coalescing operators
  • Recursive partitioning
• Optimizing:
  • Leveraging the existing memory planner
  • Fusing operators for remote data fetch
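
The dynamic-programming idea, reduced to its simplest form. This is a hypothetical sketch, not Tofu's algorithm: it assumes the coarsened graph is a linear chain, each operator has a few named partition strategies with a made-up communication cost, and switching strategy between consecutive operators adds a re-partitioning cost. `plan_chain` keeps, for every strategy of the current operator, the cheapest plan that ends in it.

```python
def plan_chain(op_costs, transition_cost):
    """Minimum-communication partition plan for a chain of operators.

    op_costs[i][s]: communication cost of running operator i with strategy s.
    transition_cost(prev_s, s): cost of re-partitioning operator i-1's output
    from strategy prev_s to what strategy s expects.
    Returns (total_cost, list_of_strategies).
    """
    best = {s: (c, [s]) for s, c in op_costs[0].items()}
    for costs in op_costs[1:]:
        new_best = {}
        for s, c in costs.items():
            prev_cost, prev_plan = min(
                ((pc + transition_cost(ps, s), plan) for ps, (pc, plan) in best.items()),
                key=lambda t: t[0],
            )
            new_best[s] = (prev_cost + c, prev_plan + [s])
        best = new_best
    return min(best.values(), key=lambda t: t[0])
```

The work is linear in the chain length and quadratic in the number of strategies per operator, which is why coarsening the graph into a chain-like shape matters.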

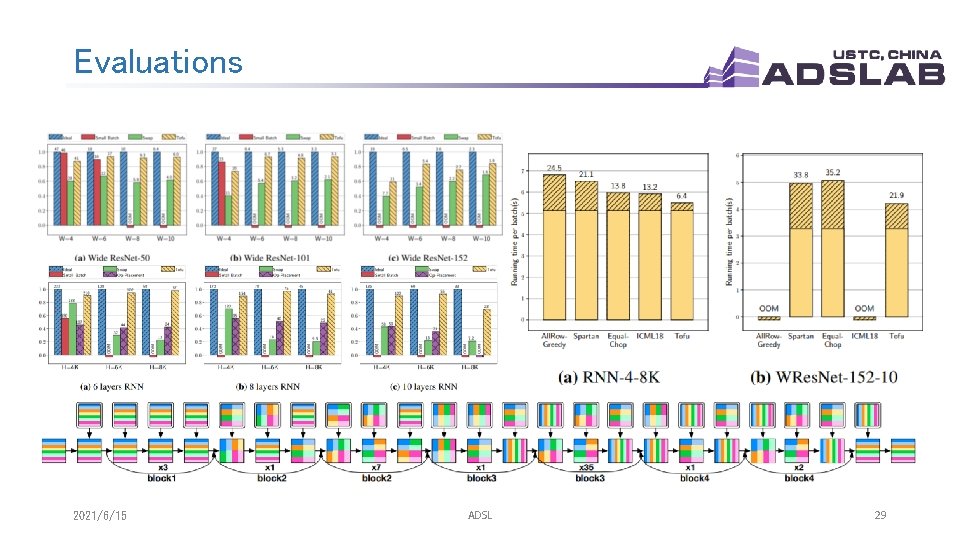

Evaluations

More improvements?
• Limitations of partition-n-reduce:
  • It restricts each worker to performing a coarse-grained task identical to the original computation.
  • Its strategies do not necessarily minimize communication.
  • It does not take advantage of the underlying interconnect topology.
• Limitations of TDL:
  • It does not support sparse tensor operations well.
  • It does not support recursive operations.
• Limitations of the partitioning strategy:
  • It does not support leaving some operators unpartitioned.

Final conclusion
• Research on AI systems is hard and promising.

Thanks & Q&A!
