AGI Through Large-Scale, Multimodal Bayesian Learning
Brian Milch, MIT, March 2, 2008

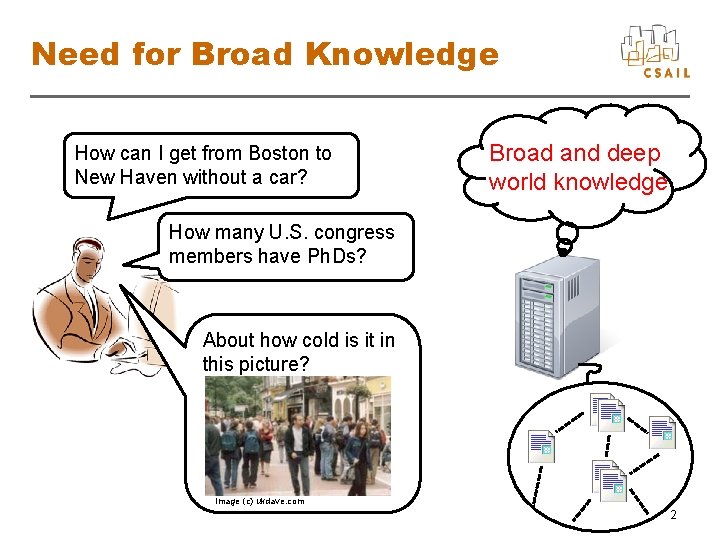

Need for Broad Knowledge
Answering questions like these requires broad and deep world knowledge:
• How can I get from Boston to New Haven without a car?
• How many U.S. Congress members have Ph.D.s?
• About how cold is it in this picture? (Image © ukdave.com)

Acquiring Such Knowledge
• Proposal: learn knowledge from large amounts of online text, images, and video
• Learn by Bayesian belief updating, maintaining a probability distribution over:
  – Models of how the world tends to work
  – Past, current, and future states of the world
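Bayesian belief updating over a finite set of candidate models can be sketched in a few lines: the posterior for each hypothesis is proportional to its prior times the likelihood of the new observation, and each posterior then serves as the prior for the next observation. The hypotheses here (three candidate probabilities `theta` of a binary event) and the function names are hypothetical; the proposal itself would maintain distributions over far richer spaces of models and world states.

```python
from fractions import Fraction

def bayes_update(prior, likelihood, observation):
    # Posterior: P(h | obs) is proportional to P(obs | h) * P(h), then normalized.
    unnormalized = {h: p * likelihood(observation, h) for h, p in prior.items()}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation is impossible under every hypothesis")
    return {h: w / total for h, w in unnormalized.items()}

def bernoulli_likelihood(observation, theta):
    # Hypothetical model: each hypothesis is the probability theta that a binary event occurs.
    return theta if observation == 1 else 1 - theta

def run_example():
    # Uniform prior over three candidate "tendencies" (exact fractions, no rounding).
    hypotheses = [Fraction(1, 4), Fraction(1, 2), Fraction(3, 4)]
    belief = {h: Fraction(1, len(hypotheses)) for h in hypotheses}
    for obs in [1, 1, 0]:
        belief = bayes_update(belief, bernoulli_likelihood, obs)  # posterior becomes new prior
    return belief

if __name__ == "__main__":
    for theta, p in run_example().items():
        print(f"theta={theta!s}: posterior={p!s}")
    # theta=1/4: posterior=3/20
    # theta=1/2: posterior=2/5
    # theta=3/4: posterior=9/20
```

Because the observations are modeled as independent given the hypothesis, updating one observation at a time yields the same posterior as conditioning on all three at once, which is what allows learning to proceed incrementally as data streams in.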

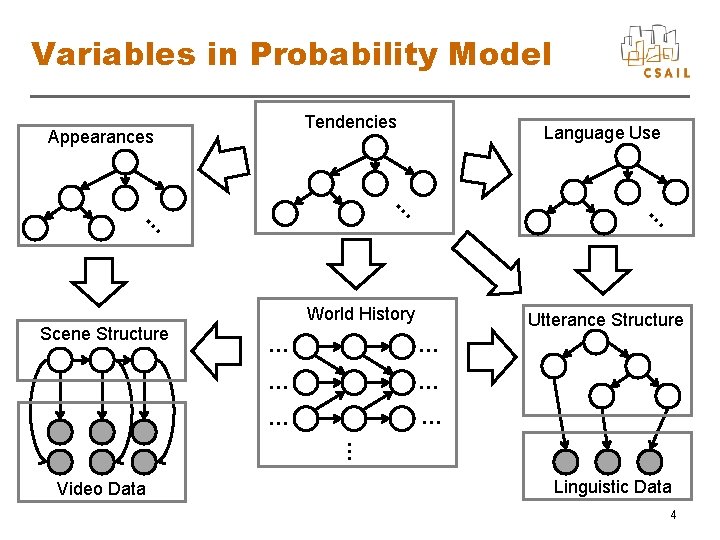

Variables in Probability Model
[Figure: levels of variables: Tendencies, Appearances, Language Use, …; World History; Scene Structure, Utterance Structure, …; Video Data, Linguistic Data]
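The slide lists these levels without saying what each variable contains. As a purely hypothetical illustration, the toy model below assigns one made-up variable to each level (daily snowfall, photos of it, captions about it) and samples the levels top-down, a technique known as ancestral sampling. Learning in the proposal's sense would run the other way: condition on the observed Video Data and Linguistic Data and update beliefs about the levels above. All names and numbers here are assumptions.

```python
import random
from dataclasses import dataclass
from typing import List

@dataclass
class WorldSample:
    snow_rate: float                # Tendencies (hypothetical): chance of snow on any given day
    snowed: List[bool]              # World History: did it snow on day t?
    scene_shows_snow: List[bool]    # Scene Structure: does day t's photo show snow?
    caption_says_snow: List[bool]   # Utterance Structure: does day t's caption mention snow?
    brightness: List[float]         # Video Data: noisy brightness reading per photo
    captions: List[str]             # Linguistic Data: the caption text itself

def sample_world(num_days: int, rng: random.Random) -> WorldSample:
    """Ancestral sampling: draw each level given the levels above it."""
    # Tendencies: drawn once from a uniform prior and shared by every day.
    snow_rate = rng.random()
    # Appearances / Language Use: fixed, made-up parameters to keep the sketch small.
    p_visible, p_mentioned = 0.9, 0.7
    # World History depends on Tendencies.
    snowed = [rng.random() < snow_rate for _ in range(num_days)]
    # Scene and Utterance Structure depend on World History plus Appearances / Language Use.
    scene = [s and rng.random() < p_visible for s in snowed]
    said = [s and rng.random() < p_mentioned for s in snowed]
    # Observed data depend on the structure level directly above them.
    brightness = [(0.8 if v else 0.3) + rng.gauss(0.0, 0.05) for v in scene]
    captions = ["a snowy day" if m else "a day outside" for m in said]
    return WorldSample(snow_rate, snowed, scene, said, brightness, captions)

if __name__ == "__main__":
    sample = sample_world(5, random.Random(0))
    print(sample.snowed)
    print(sample.captions)
```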

Data to Learn From
• Text?
  + Lots available; broad coverage
  − No connection with sensory input
• Experience of physical or virtual robot(s)?
  + Multimodal; can actively manipulate the world
  − Physical: hard to get broad experience
  − Virtual: may not generalize to the physical world
• Multimodal data on the Web
  + Broad coverage; both linguistic and sensory
  − Disjointed; sometimes not factual; passive

Built-In Components
• Why built-in components?
  – Children don’t learn from scratch
  – Why not exploit known algorithms and data structures (rendering, parse trees, …)?
• Modules for reasoning about:
  – Space, time, physical objects, shape
  – Language
  – Other agents

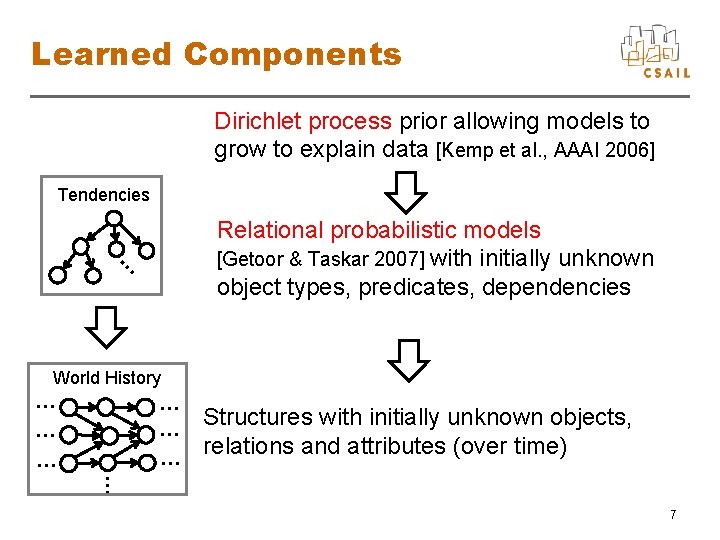

Learned Components
• Dirichlet process prior allowing models to grow to explain the data [Kemp et al., AAAI 2006]
• Tendencies: relational probabilistic models [Getoor & Taskar 2007] with initially unknown object types, predicates, and dependencies
• World History: structures with initially unknown objects, relations, and attributes (over time)
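A Dirichlet process prior can be illustrated through its Chinese restaurant process (CRP) representation: each new item joins an existing cluster with probability proportional to that cluster's size, or starts a new cluster with probability proportional to a concentration parameter `alpha`. The sketch below samples cluster assignments this way, showing how the number of clusters (for example, object types) can grow with the data instead of being fixed in advance. It is a generic sampler, not the specific model of Kemp et al.; the function name and parameters are illustrative.

```python
import random
from typing import List

def sample_crp(num_items: int, alpha: float, rng: random.Random) -> List[int]:
    """Sample cluster labels for num_items items from a Chinese restaurant process."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    assignments: List[int] = []
    counts: List[int] = []  # counts[k] = number of items already in cluster k
    for n in range(num_items):
        # Existing cluster k has weight counts[k]; a brand-new cluster has weight alpha.
        # Normalizing by (n + alpha) gives the CRP probabilities.
        weights = counts + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(counts):
            counts.append(1)  # open a new cluster
        else:
            counts[k] += 1
        assignments.append(k)
    return assignments

if __name__ == "__main__":
    rng = random.Random(0)
    for n in (10, 100, 1000):
        labels = sample_crp(n, alpha=1.0, rng=rng)
        print(n, "items ->", len(set(labels)), "clusters")
```

Under this process the expected number of clusters after n items is the sum of alpha / (alpha + i) over i = 0, …, n − 1, which grows roughly like alpha · log n: slowly, but without bound as more data arrives.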


Algorithms
• Probabilistic inference
  – Markov chain Monte Carlo [Gilks et al. 1996]
  – Variational methods [Jordan et al. 1999]
  – Belief propagation [Yedidia et al. 2001]
  – Hybrids with logical inference [Sang et al. 2005; Poon & Domingos 2006]
• Parallelize interpretation of documents, images, and videos
• Still unclear how to scale up sufficiently
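Of the inference methods listed, Markov chain Monte Carlo is the simplest to sketch. The example below is random-walk Metropolis, one basic MCMC algorithm; the slide does not say which variant the proposal would use. It needs the target density only up to a normalizing constant, which is the usual situation for a posterior. The target here is a standard normal, chosen only so the result is easy to check; the function names are illustrative.

```python
import math
import random
from statistics import fmean, pvariance
from typing import Callable, List

def metropolis(log_target: Callable[[float], float], x0: float,
               num_samples: int, step: float, rng: random.Random) -> List[float]:
    """Random-walk Metropolis sampler for an unnormalized 1-D log density."""
    x, log_px = x0, log_target(x0)
    samples: List[float] = []
    for _ in range(num_samples):
        # Symmetric Gaussian proposal, so the Hastings correction term cancels.
        proposal = x + rng.gauss(0.0, step)
        log_pp = log_target(proposal)
        # Accept with probability min(1, p(proposal) / p(x)).
        if rng.random() < math.exp(min(0.0, log_pp - log_px)):
            x, log_px = proposal, log_pp
        samples.append(x)  # on rejection the current state is repeated
    return samples

def standard_normal_log_density(x: float) -> float:
    # Unnormalized: the constant -0.5 * log(2 * pi) is deliberately omitted.
    return -0.5 * x * x

if __name__ == "__main__":
    draws = metropolis(standard_normal_log_density, x0=3.0, num_samples=20000,
                       step=1.0, rng=random.Random(42))
    kept = draws[1000:]  # discard burn-in while the chain moves away from x0
    print(f"mean={fmean(kept):.3f}, variance={pvariance(kept):.3f}")
```

The step size trades off acceptance rate against how far each accepted move travels, so in practice it is tuned for each problem.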

Measures of Progress
• Should be able to show steady improvement on real data sets (object recognition, coreference, entailment, …)
• Serve as a resource for shallower, hand-built systems (replacing Cyc, WordNet)
• Spin off challenges for researchers in specialty areas

Conclusions
• Potential path to AGI: Bayesian learning on large amounts of multimodal data
• Attractive features:
  – Exploits well-understood principles
  – Learns broad, real-world knowledge
  – Connected to mainstream AI research
