Advanced Association Rule Mining and Beyond

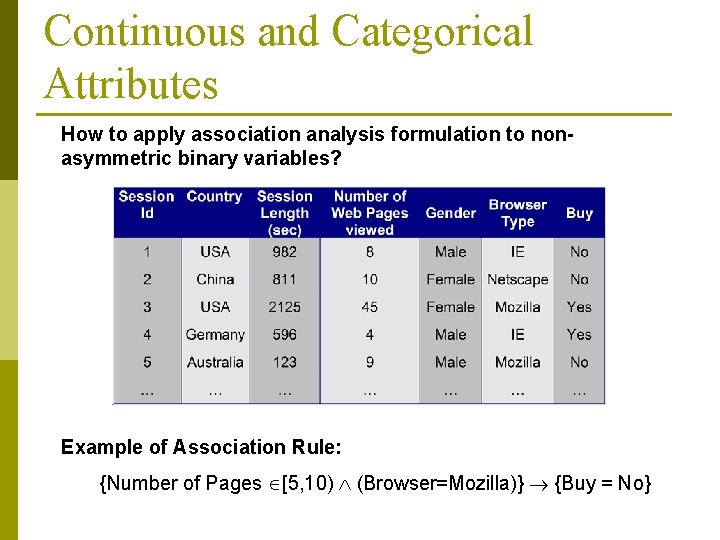

Continuous and Categorical Attributes

How can the association analysis formulation be applied to attributes that are not asymmetric binary variables?

Example of an association rule: {Number of Pages ∈ [5, 10) ∧ (Browser = Mozilla)} → {Buy = No}

Handling Categorical Attributes

- Transform each categorical attribute into asymmetric binary variables
- Introduce a new “item” for each distinct attribute-value pair
  - Example: replace the Browser Type attribute with
    - Browser Type = Internet Explorer
    - Browser Type = Mozilla
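A minimal sketch of this transformation, assuming each record is a plain dictionary of attribute values. The function name `binarize` and the sample records are illustrative only and do not come from the slides.

```python
def binarize(records):
    """Turn categorical records into asymmetric binary transactions."""
    # One item per distinct attribute-value pair, e.g. "Browser Type=Mozilla".
    transactions = [{f"{attr}={val}" for attr, val in rec.items()} for rec in records]
    items = sorted(set().union(*transactions))
    # Binary matrix: 1 if the item is present in the transaction, else 0.
    matrix = [[int(item in t) for item in items] for t in transactions]
    return items, matrix


# Hypothetical sample data for illustration.
records = [
    {"Browser Type": "Internet Explorer", "Buy": "No"},
    {"Browser Type": "Mozilla", "Buy": "No"},
    {"Browser Type": "Mozilla", "Buy": "Yes"},
]
items, matrix = binarize(records)
for row in matrix:
    print(row)
```

Each column of `matrix` is one asymmetric binary variable, so a standard frequent itemset algorithm can be run on it unchanged.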

Handling Categorical Attributes

- Potential issues
  - What if an attribute has many possible values?
    - Example: the attribute country has more than 200 possible values
    - Many of the attribute values may have very low support
    - Potential solution: aggregate the low-support attribute values
  - What if the distribution of attribute values is highly skewed?
    - Example: 95% of the visitors have Buy = No
    - Most of the items will be associated with the (Buy = No) item
    - Potential solution: drop the highly frequent items

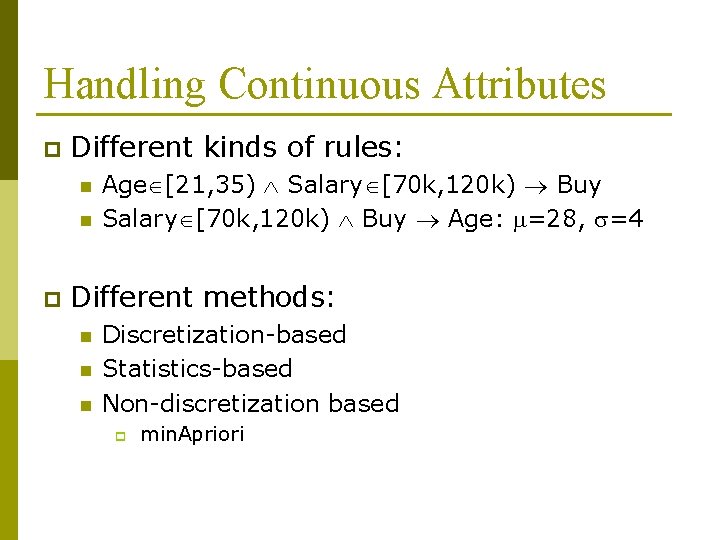

Handling Continuous Attributes

- Different kinds of rules:
  - Age ∈ [21, 35) ∧ Salary ∈ [70k, 120k) → Buy
  - Rules whose consequent describes a continuous attribute by its statistics, e.g. Age: μ = 28, σ = 4
- Different methods:
  - Discretization-based
  - Statistics-based
  - Non-discretization based
    - minApriori

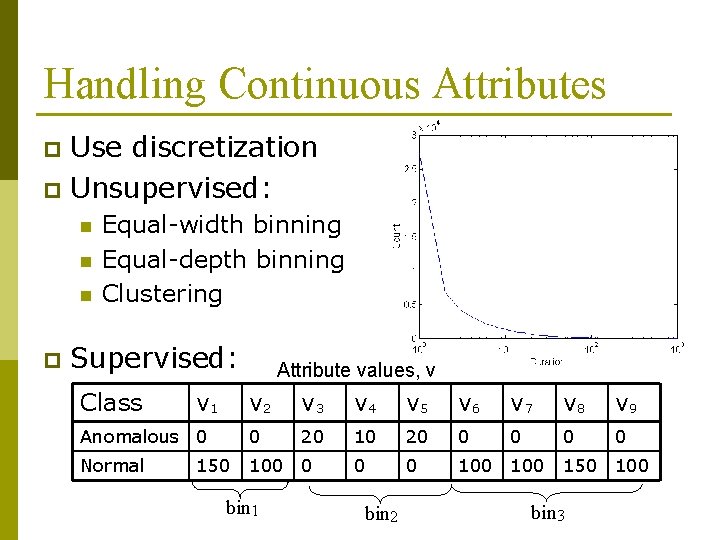

Handling Continuous Attributes

Use discretization

- Unsupervised:
  - Equal-width binning
  - Equal-depth binning
  - Clustering
- Supervised:
  - Example table: class counts per attribute value v1 … v9, grouped into bin1, bin2 and bin3 (Anomalous: 0 0 20 10 20 0 0; Normal: 100 0 100 150 150 100)
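The two unsupervised binning schemes can be sketched as follows. This is an illustrative sketch only: the function names and the sample ages are assumptions, and the equal-width version assumes the values are not all identical.

```python
def equal_width_bins(values, k):
    """Assign each value to one of k intervals of equal width."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / k  # assumes hi > lo
    # The maximum value is clamped into the last bin.
    return [min(int((v - lo) / width), k - 1) for v in values]


def equal_depth_bins(values, k):
    """Assign each value to one of k bins holding roughly equal counts."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    bins = [0] * len(values)
    for rank, i in enumerate(order):
        bins[i] = rank * k // len(values)
    return bins


ages = [21, 22, 25, 30, 35, 60]  # hypothetical sample
print(equal_width_bins(ages, 3))  # bin index per value
print(equal_depth_bins(ages, 3))
```

Equal-width bins are sensitive to outliers (the single value 60 gets a bin to itself here), while equal-depth bins keep the counts balanced at the cost of uneven interval widths.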

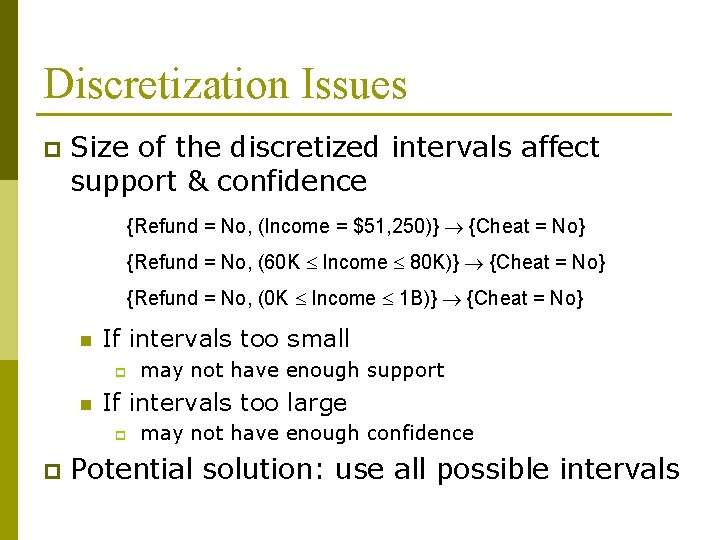

Discretization Issues

- Size of the discretized intervals affects support and confidence
  - {Refund = No, (Income = $51,250)} → {Cheat = No}
  - {Refund = No, (60K ≤ Income ≤ 80K)} → {Cheat = No}
  - {Refund = No, (0K ≤ Income ≤ 1B)} → {Cheat = No}
- If intervals are too small
  - the rule may not have enough support
- If intervals are too large
  - the rule may not have enough confidence
- Potential solution: use all possible intervals

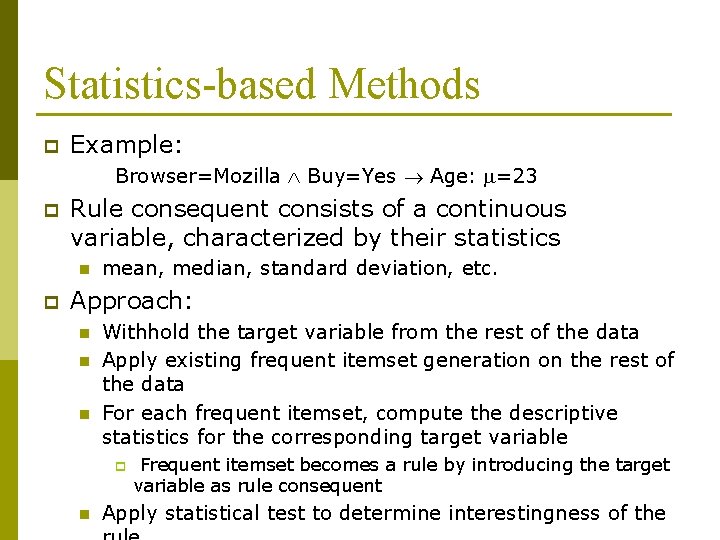

Statistics-based Methods

- Example: Browser = Mozilla ∧ Buy = Yes → Age: μ = 23
- The rule consequent consists of a continuous variable, characterized by its statistics
  - mean, median, standard deviation, etc.
- Approach:
  - Withhold the target variable from the rest of the data
  - Apply existing frequent itemset generation on the rest of the data
  - For each frequent itemset, compute the descriptive statistics for the corresponding target variable
    - A frequent itemset becomes a rule by introducing the target variable as rule consequent
  - Apply a statistical test to determine the interestingness of the rule

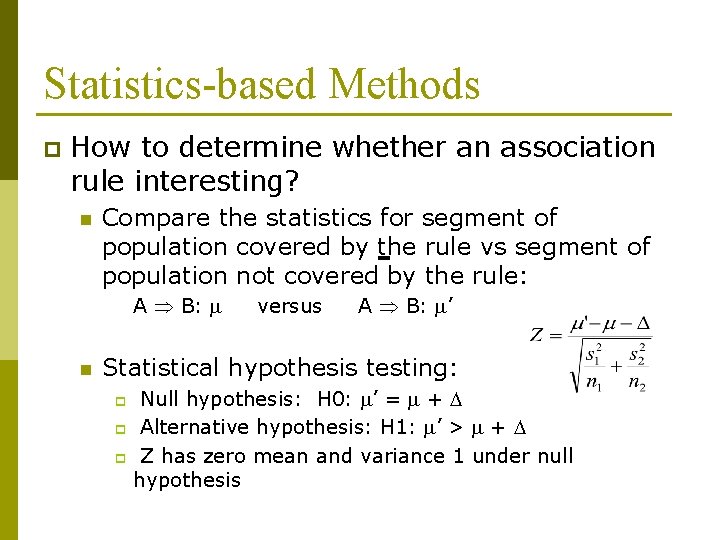

Statistics-based Methods

- How to determine whether an association rule is interesting?
  - Compare the statistics for the segment of the population covered by the rule vs. the segment not covered by the rule: A → B: μ versus Ā → B: μ′
  - Statistical hypothesis testing:
    - Null hypothesis: H0: μ′ = μ + Δ
    - Alternative hypothesis: H1: μ′ > μ + Δ
    - Test statistic: Z = (μ′ − μ − Δ) / √(s1²/n1 + s2²/n2), where n1, s1 and n2, s2 are the size and standard deviation of the covered and uncovered segments
    - Z has zero mean and variance 1 under the null hypothesis

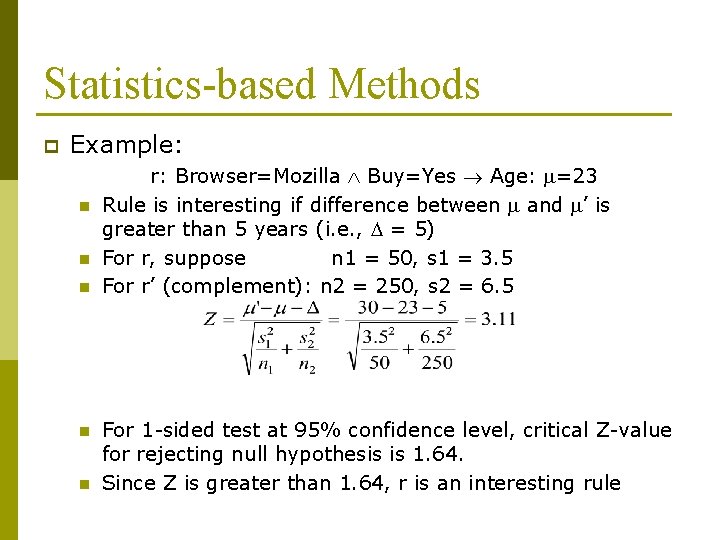

Statistics-based Methods

- Example:
  - r: Browser = Mozilla ∧ Buy = Yes → Age: μ = 23
  - The rule is interesting if the difference between μ and μ′ is greater than 5 years (i.e., Δ = 5)
  - For r, suppose n1 = 50, s1 = 3.5
  - For r′ (complement): n2 = 250, s2 = 6.5
  - For a 1-sided test at the 95% confidence level, the critical Z-value for rejecting the null hypothesis is 1.64
  - Since Z is greater than 1.64, r is an interesting rule
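The test can be reproduced numerically with the usual two-sample statistic Z = (μ′ − μ − Δ) / √(s1²/n1 + s2²/n2). The mean age of the complement segment, μ′, is not legible in the text above, so the value `mu_prime=30` below is purely an assumption for illustration. The other numbers are the ones given in the example.

```python
from math import sqrt


def z_statistic(mu, mu_prime, delta, n1, s1, n2, s2):
    """Z statistic for H0: mu' = mu + delta against H1: mu' > mu + delta."""
    return (mu_prime - mu - delta) / sqrt(s1**2 / n1 + s2**2 / n2)


# mu_prime=30 is an ASSUMED value; mu, delta, n1, s1, n2, s2 come from the example.
z = z_statistic(mu=23, mu_prime=30, delta=5, n1=50, s1=3.5, n2=250, s2=6.5)
is_interesting = z > 1.64  # critical value for a 1-sided test at 95% confidence
print(round(z, 2), is_interesting)
```

Under that assumed μ′ the statistic comes out at roughly 3.11, which exceeds 1.64, so the null hypothesis would be rejected.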

Multi-level Association Rules

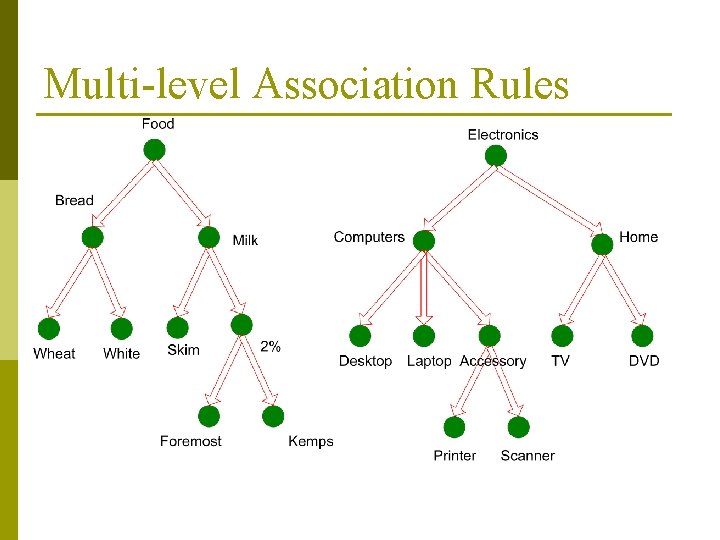

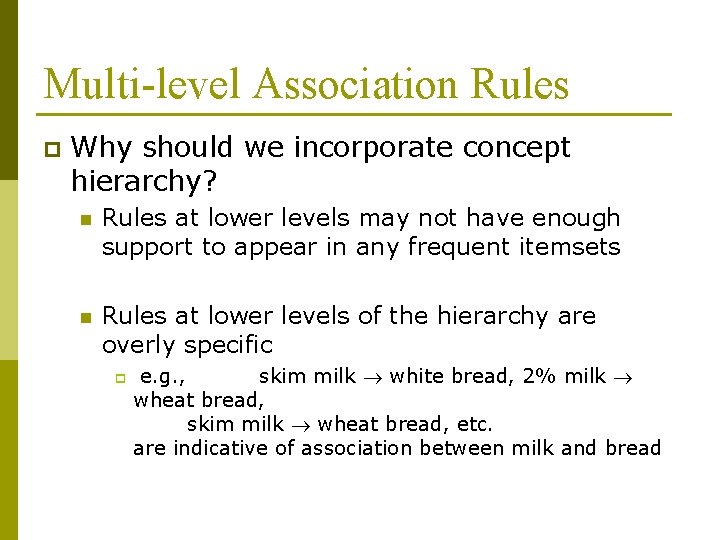

Multi-level Association Rules

- Why should we incorporate a concept hierarchy?
  - Rules at lower levels may not have enough support to appear in any frequent itemsets
  - Rules at lower levels of the hierarchy are overly specific
    - e.g., skim milk → white bread, 2% milk → wheat bread, skim milk → wheat bread, etc. are indicative of an association between milk and bread

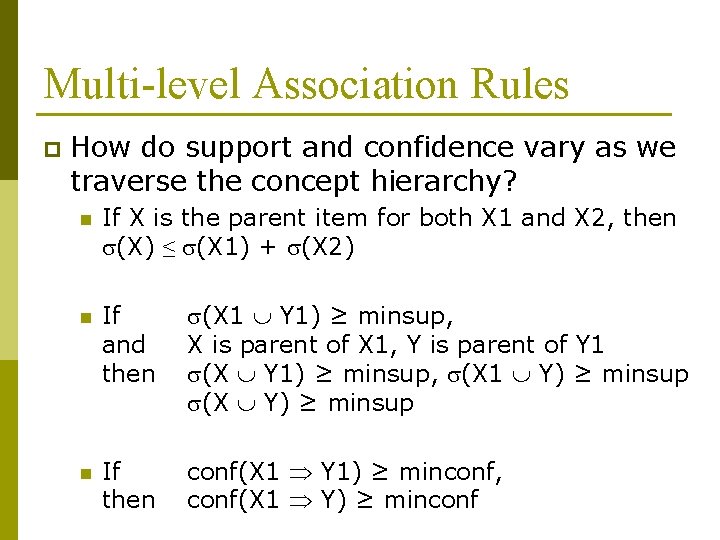

Multi-level Association Rules

- How do support and confidence vary as we traverse the concept hierarchy?
  - If X is the parent item for both X1 and X2, then σ(X) ≤ σ(X1) + σ(X2)
  - If σ(X1 ∪ Y1) ≥ minsup, and X is parent of X1, Y is parent of Y1, then σ(X ∪ Y1) ≥ minsup, σ(X1 ∪ Y) ≥ minsup, σ(X ∪ Y) ≥ minsup
  - If conf(X1 → Y1) ≥ minconf, then conf(X1 → Y) ≥ minconf

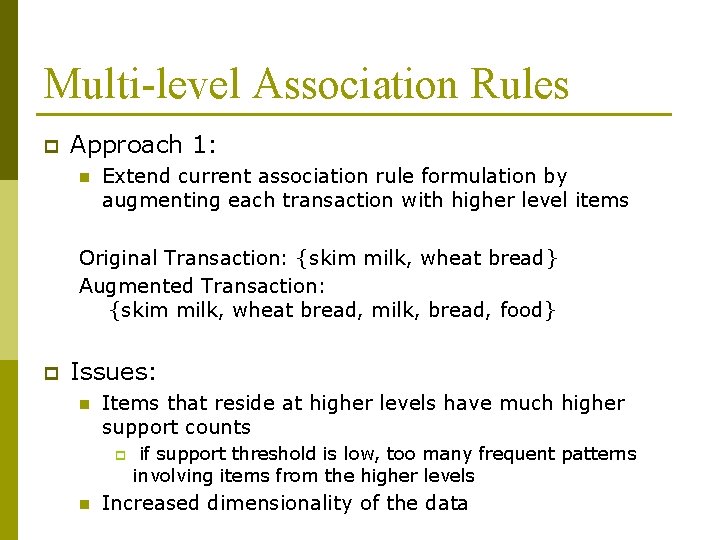

Multi-level Association Rules

- Approach 1:
  - Extend the current association rule formulation by augmenting each transaction with higher-level items
    - Original transaction: {skim milk, wheat bread}
    - Augmented transaction: {skim milk, wheat bread, milk, bread, food}
- Issues:
  - Items that reside at higher levels have much higher support counts
    - if the support threshold is low, there are too many frequent patterns involving items from the higher levels
  - Increased dimensionality of the data
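The augmentation step of Approach 1 can be sketched as below. The `PARENT` mapping is a hypothetical encoding of the milk/bread/food hierarchy used in the example, and the function name `augment` is illustrative.

```python
# Hypothetical child -> parent encoding of the concept hierarchy.
PARENT = {
    "skim milk": "milk",
    "2% milk": "milk",
    "wheat bread": "bread",
    "white bread": "bread",
    "milk": "food",
    "bread": "food",
}


def augment(transaction, parent=PARENT):
    """Add every ancestor of every item to the transaction."""
    augmented = set(transaction)
    for item in transaction:
        while item in parent:  # walk up until the root is reached
            item = parent[item]
            augmented.add(item)
    return augmented


print(sorted(augment({"skim milk", "wheat bread"})))
```

Because every transaction now also carries its higher-level items, an ordinary frequent itemset miner finds patterns at all levels at once, at the cost of wider transactions.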

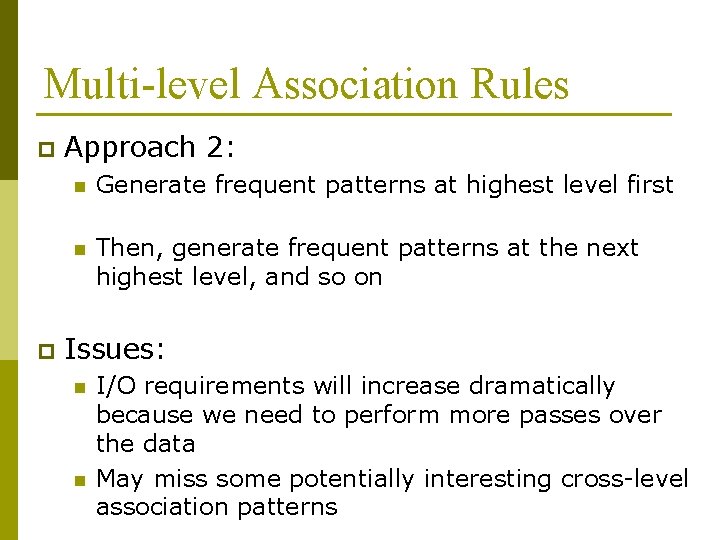

Multi-level Association Rules

- Approach 2:
  - Generate frequent patterns at the highest level first
  - Then, generate frequent patterns at the next highest level, and so on
- Issues:
  - I/O requirements will increase dramatically because we need to perform more passes over the data
  - May miss some potentially interesting cross-level association patterns

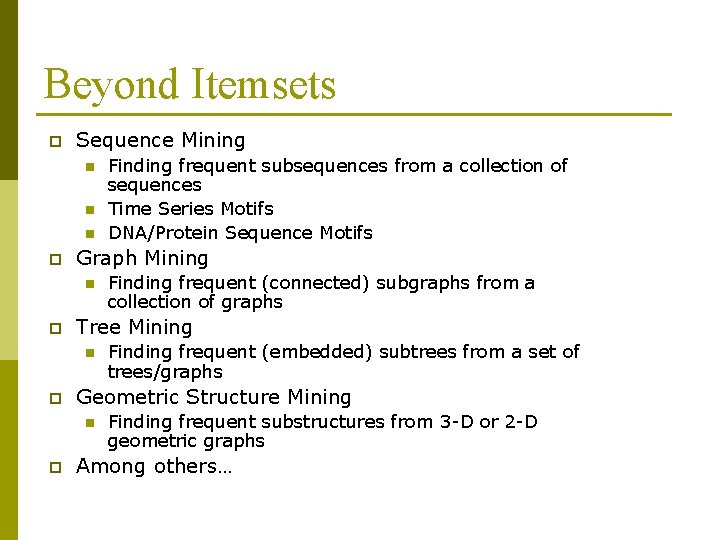

Beyond Itemsets

- Sequence Mining
  - Finding frequent subsequences from a collection of sequences
  - Time Series Motifs
  - DNA/Protein Sequence Motifs
- Graph Mining
  - Finding frequent (connected) subgraphs from a collection of graphs
- Tree Mining
  - Finding frequent (embedded) subtrees from a set of trees/graphs
- Geometric Structure Mining
  - Finding frequent substructures from 3-D or 2-D geometric graphs
- Among others…

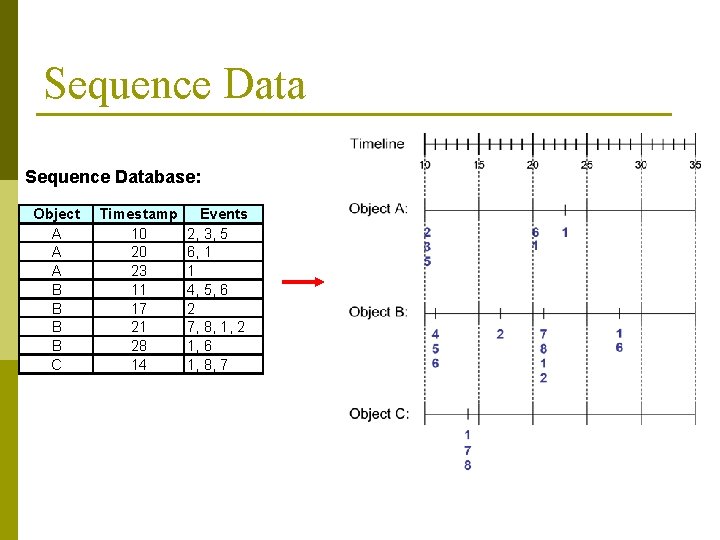

Sequence Database:

| Object | Timestamp | Events |
|---|---|---|
| A | 10 | 2, 3, 5 |
| A | 20 | 6, 1 |
| A | 23 | 1 |
| B | 11 | 4, 5, 6 |
| B | 17 | 2 |
| B | 21 | 7, 8, 1, 2 |
| B | 28 | 1, 6 |
| C | 14 | 1, 8, 7 |

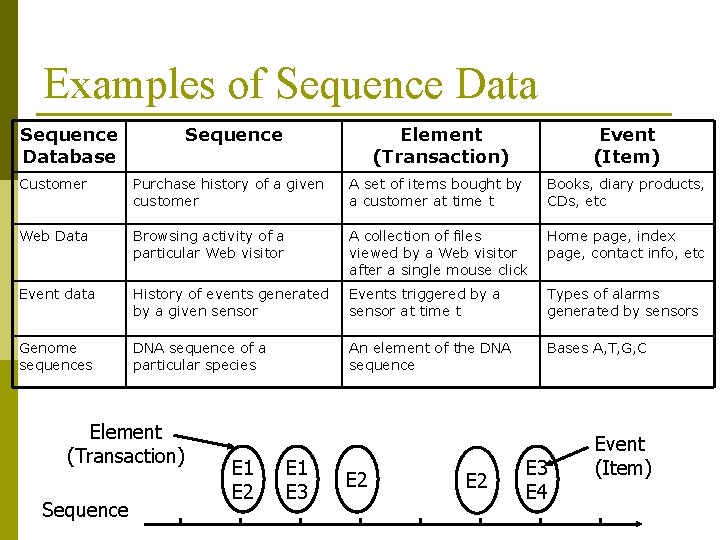

Examples of Sequence Database

| Sequence Database | Sequence | Element (Transaction) | Event (Item) |
|---|---|---|---|
| Customer | Purchase history of a given customer | A set of items bought by a customer at time t | Books, dairy products, CDs, etc. |
| Web Data | Browsing activity of a particular Web visitor | A collection of files viewed by a Web visitor after a single mouse click | Home page, index page, contact info, etc. |
| Event data | History of events generated by a given sensor | Events triggered by a sensor at time t | Types of alarms generated by sensors |
| Genome sequences | DNA sequence of a particular species | An element of the DNA sequence | Bases A, T, G, C |

Figure: a sequence drawn as a series of elements (transactions), each holding events (items) E1 to E4.

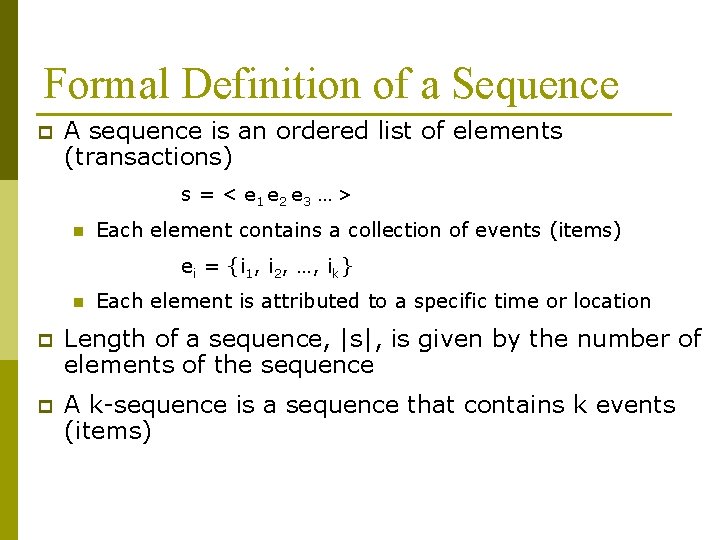

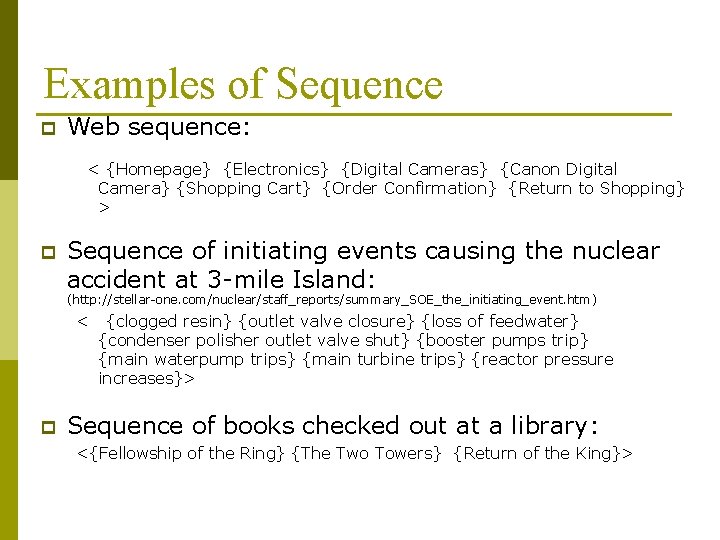

Formal Definition of a Sequence

- A sequence is an ordered list of elements (transactions): s = < e1 e2 e3 … >
  - Each element contains a collection of events (items): ei = {i1, i2, …, ik}
  - Each element is attributed to a specific time or location
- The length of a sequence, |s|, is given by the number of elements of the sequence
- A k-sequence is a sequence that contains k events (items)

Examples of Sequence

- Web sequence: < {Homepage} {Electronics} {Digital Cameras} {Canon Digital Camera} {Shopping Cart} {Order Confirmation} {Return to Shopping} >
- Sequence of initiating events causing the nuclear accident at 3-mile Island (http://stellar-one.com/nuclear/staff_reports/summary_SOE_the_initiating_event.htm): < {clogged resin} {outlet valve closure} {loss of feedwater} {condenser polisher outlet valve shut} {booster pumps trip} {main waterpump trips} {main turbine trips} {reactor pressure increases} >
- Sequence of books checked out at a library: < {Fellowship of the Ring} {The Two Towers} {Return of the King} >

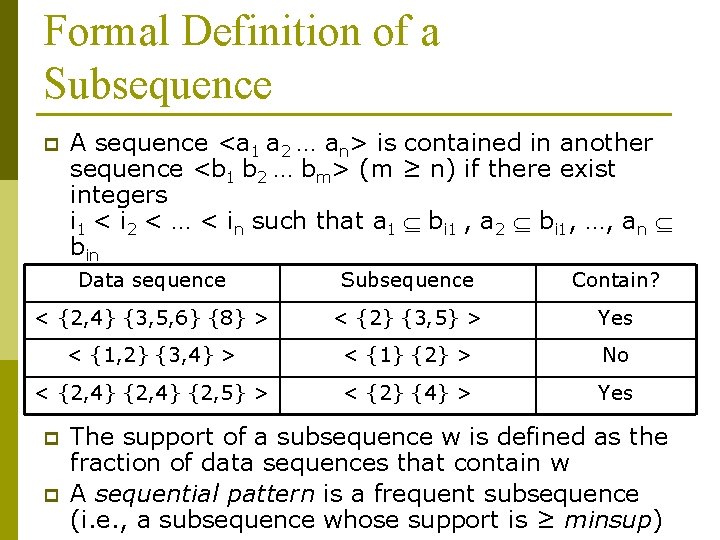

Formal Definition of a Subsequence

- A sequence <a1 a2 … an> is contained in another sequence <b1 b2 … bm> (m ≥ n) if there exist integers i1 < i2 < … < in such that a1 ⊆ bi1, a2 ⊆ bi2, …, an ⊆ bin

| Data sequence | Subsequence | Contain? |
|---|---|---|
| < {2, 4} {3, 5, 6} {8} > | < {2} {3, 5} > | Yes |
| < {1, 2} {3, 4} > | < {1} {2} > | No |
| < {2, 4} {2, 4} {2, 5} > | < {2} {4} > | Yes |

- The support of a subsequence w is defined as the fraction of data sequences that contain w
- A sequential pattern is a frequent subsequence (i.e., a subsequence whose support is ≥ minsup)
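The containment test and the support definition can be sketched as follows, assuming a sequence is represented as a list of sets. Without timing constraints, matching each pattern element to the earliest possible data element is sufficient. The names `contains` and `support` are illustrative.

```python
def contains(data, sub):
    """True if `sub` is a subsequence of `data` (both are lists of sets)."""
    pos = 0
    for element in sub:
        # Advance to the earliest data element that includes this pattern element.
        while pos < len(data) and not set(element) <= set(data[pos]):
            pos += 1
        if pos == len(data):
            return False
        pos += 1  # the next pattern element must match a strictly later element
    return True


def support(database, sub):
    """Fraction of data sequences in `database` that contain `sub`."""
    return sum(contains(seq, sub) for seq in database) / len(database)


database = [
    [{2, 4}, {3, 5, 6}, {8}],
    [{1, 2}, {3, 4}],
    [{2, 4}, {2, 4}, {2, 5}],
]
print(contains(database[0], [{2}, {3, 5}]))
print(contains(database[1], [{1}, {2}]))
print(support(database, [{2}, {4}]))
```

Note that < {1} {2} > is not contained in < {1, 2} {3, 4} > because the events 1 and 2 would have to come from two different elements, in that order.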

Sequential Pattern Mining: Definition

- Given:
  - a database of sequences
  - a user-specified minimum support threshold, minsup
- Task:
  - Find all subsequences with support ≥ minsup

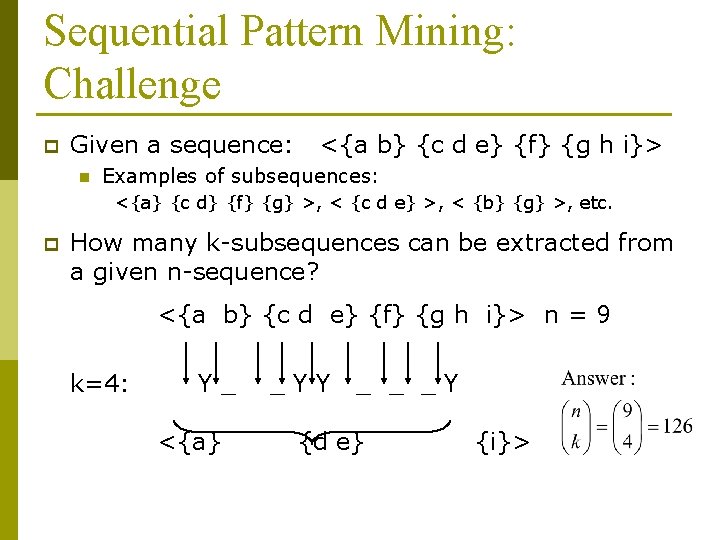

Sequential Pattern Mining: Challenge

- Given a sequence: <{a b} {c d e} {f} {g h i}>
  - Examples of subsequences: <{a} {c d} {f} {g}>, <{c d e}>, <{b} {g}>, etc.
- How many k-subsequences can be extracted from a given n-sequence?
  - <{a b} {c d e} {f} {g h i}> has n = 9 events
  - For k = 4, the selection pattern Y _ _ Y Y _ _ _ Y picks the events a, d, e, i and gives <{a} {d e} {i}>
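Since each k-subsequence corresponds to choosing k of the n events while keeping their elements and order, the count is the binomial coefficient C(n, k) when all events are distinct. The enumeration below is a small sketch of that argument, with `k_subsequences` being an illustrative helper name.

```python
from itertools import combinations
from math import comb

sequence = [("a", "b"), ("c", "d", "e"), ("f",), ("g", "h", "i")]


def k_subsequences(seq, k):
    """Enumerate all subsequences of `seq` that contain exactly k events."""
    # Flatten to (element index, event) pairs, then choose k of them.
    flat = [(i, ev) for i, element in enumerate(seq) for ev in element]
    result = []
    for chosen in combinations(flat, k):
        grouped = {}
        for i, ev in chosen:
            grouped.setdefault(i, []).append(ev)
        # Elements that lost all their events simply disappear.
        result.append(tuple(tuple(evs) for _, evs in sorted(grouped.items())))
    return result


subs = k_subsequences(sequence, 4)
print(len(subs), comb(9, 4))
print((("a",), ("d", "e"), ("i",)) in subs)
```

The count grows combinatorially with n and k, which is why candidate generation and pruning matter so much for sequence mining.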

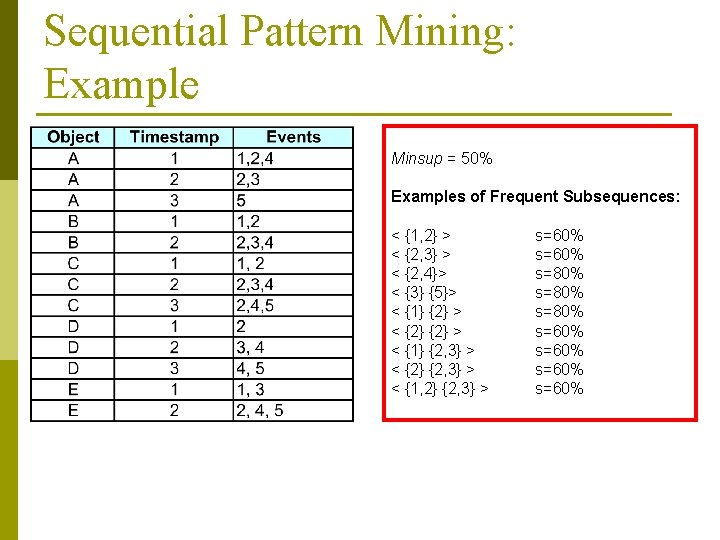

Sequential Pattern Mining: Example

Minsup = 50%

Examples of frequent subsequences:

- < {1, 2} >
- < {2, 3} >
- < {2, 4} >
- < {3} {5} >
- < {1} {2} >
- < {1} {2, 3} >
- < {2} {2, 3} >
- < {1, 2} {2, 3} >

Supports as listed: s = 60%, s = 80%, s = 60%

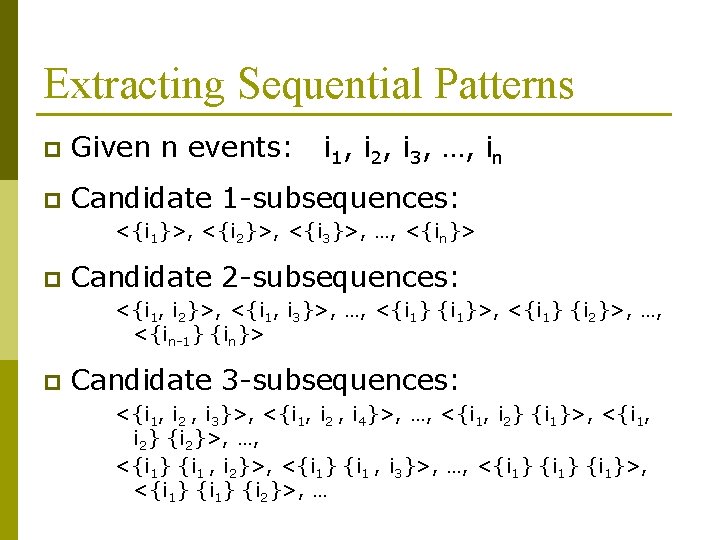

Extracting Sequential Patterns

- Given n events: i1, i2, i3, …, in
- Candidate 1-subsequences: <{i1}>, <{i2}>, <{i3}>, …, <{in}>
- Candidate 2-subsequences: <{i1, i2}>, <{i1, i3}>, …, <{i1} {i1}>, <{i1} {i2}>, …, <{in-1} {in}>
- Candidate 3-subsequences: <{i1, i2, i3}>, <{i1, i2, i4}>, …, <{i1, i2} {i1}>, <{i1, i2} {i2}>, …, <{i1} {i1, i2}>, <{i1} {i1, i3}>, …, <{i1} {i1} {i1}>, <{i1} {i1} {i2}>, …

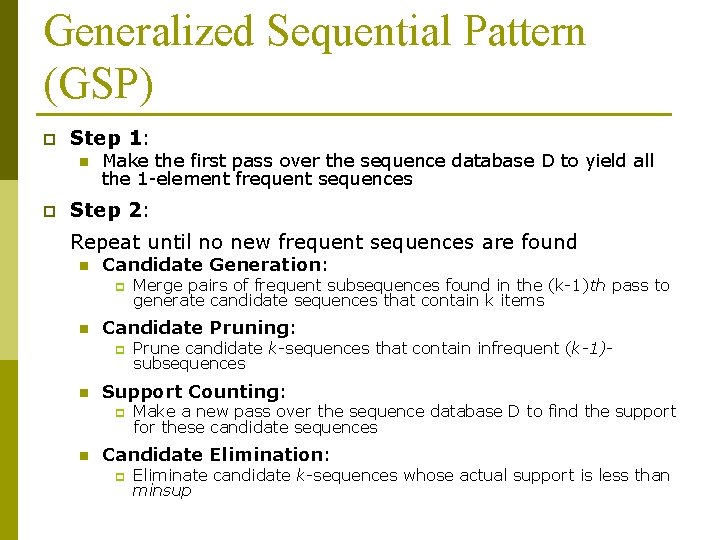

Generalized Sequential Pattern (GSP)

- Step 1:
  - Make the first pass over the sequence database D to yield all the 1-element frequent sequences
- Step 2: Repeat until no new frequent sequences are found
  - Candidate Generation:
    - Merge pairs of frequent subsequences found in the (k-1)th pass to generate candidate sequences that contain k items
  - Candidate Pruning:
    - Prune candidate k-sequences that contain infrequent (k-1)-subsequences
  - Support Counting:
    - Make a new pass over the sequence database D to find the support for these candidate sequences
  - Candidate Elimination:
    - Eliminate candidate k-sequences whose actual support is less than minsup

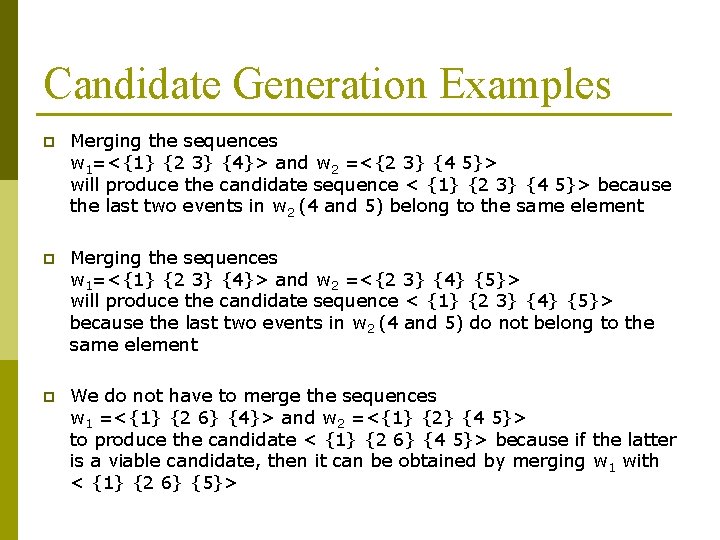

Candidate Generation Examples

- Merging the sequences w1 = <{1} {2 3} {4}> and w2 = <{2 3} {4 5}> will produce the candidate sequence <{1} {2 3} {4 5}> because the last two events in w2 (4 and 5) belong to the same element
- Merging the sequences w1 = <{1} {2 3} {4}> and w2 = <{2 3} {4} {5}> will produce the candidate sequence <{1} {2 3} {4} {5}> because the last two events in w2 (4 and 5) do not belong to the same element
- We do not have to merge the sequences w1 = <{1} {2 6} {4}> and w2 = <{1} {2} {4 5}> to produce the candidate <{1} {2 6} {4 5}> because if the latter is a viable candidate, then it can be obtained by merging w1 with <{2 6} {4 5}>
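The merge step can be sketched under the standard GSP rule: w1 is merged with w2 only if dropping the first event of w1 gives the same sequence as dropping the last event of w2. The sketch assumes sequences are tuples of tuples with events sorted inside each element. The helper names are illustrative, and the special case of merging two 1-sequences is omitted.

```python
def drop_first(seq):
    """Remove the first event of the first element."""
    head = seq[0][1:]
    return ((head,) if head else ()) + seq[1:]


def drop_last(seq):
    """Remove the last event of the last element."""
    tail = seq[-1][:-1]
    return seq[:-1] + ((tail,) if tail else ())


def merge(w1, w2):
    """Return the GSP candidate obtained from w1 and w2, or None if they do not join."""
    if drop_first(w1) != drop_last(w2):
        return None
    last_event = w2[-1][-1]
    if len(w2[-1]) > 1:
        # Last two events of w2 share an element: extend w1's last element.
        return w1[:-1] + (w1[-1] + (last_event,),)
    # Otherwise the last event becomes a new element appended to w1.
    return w1 + ((last_event,),)


w1 = ((1,), (2, 3), (4,))
print(merge(w1, ((2, 3), (4, 5))))
print(merge(w1, ((2, 3), (4,), (5,))))
print(merge(((1,), (2, 6), (4,)), ((1,), (2,), (4, 5))))
```

The third call returns `None`: those two sequences do not satisfy the join condition, so no candidate is generated from that pair.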

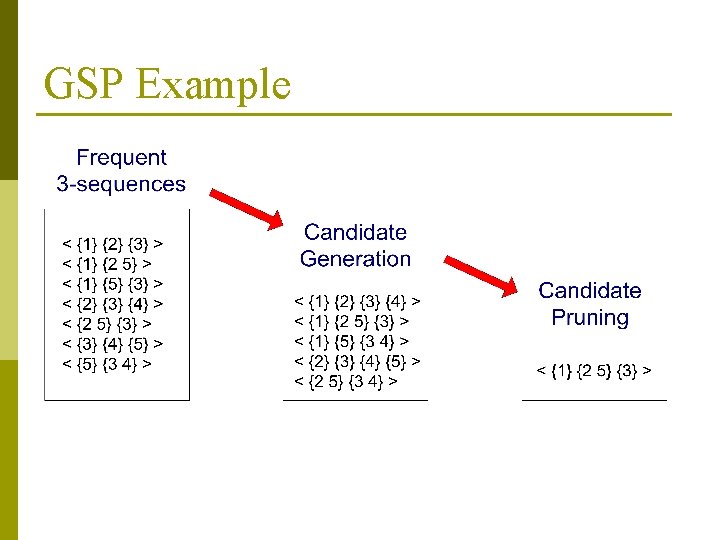

GSP Example

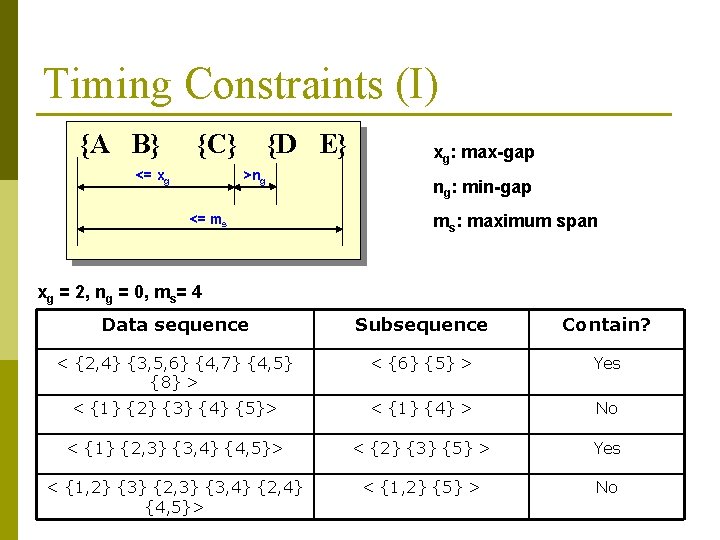

Timing Constraints (I)

Pattern sketch: {A B} {C} {D E}, where the gap between consecutive elements must be ≤ xg and > ng, and the whole pattern must span ≤ ms.

- xg: max-gap
- ng: min-gap
- ms: maximum span

With xg = 2, ng = 0, ms = 4:

| Data sequence | Subsequence | Contain? |
|---|---|---|
| < {2, 4} {3, 5, 6} {4, 7} {4, 5} {8} > | < {6} {5} > | Yes |
| < {1} {2} {3} {4} {5} > | < {1} {4} > | No |
| < {1} {2, 3} {3, 4} {4, 5} > | < {2} {3} {5} > | Yes |
| < {1, 2} {3} {2, 3} {3, 4} {2, 4} {4, 5} > | < {1, 2} {5} > | No |
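A containment check that honours the three constraints can be sketched with a small backtracking search. It assumes that the timestamp of each element is simply its position in the data sequence, which is consistent with the four examples above. The function name and signature are illustrative.

```python
def contains_with_timing(data, pattern, max_gap, min_gap, max_span):
    """True if `pattern` occurs in `data` subject to max-gap, min-gap and max-span."""
    data = [set(e) for e in data]
    pattern = [set(e) for e in pattern]

    def extend(k, prev, first):
        # k: next pattern element to match; prev/first: positions of the
        # previously matched and the first matched data element (None at start).
        if k == len(pattern):
            return True
        start = 0 if prev is None else prev + 1
        for pos in range(start, len(data)):
            if prev is not None:
                gap = pos - prev
                if gap > max_gap or pos - first > max_span:
                    break  # later positions only make the gap or span larger
                if gap <= min_gap:
                    continue
            if pattern[k] <= data[pos]:
                if extend(k + 1, pos, pos if first is None else first):
                    return True
        return False

    return extend(0, None, None)


seq = [{2, 4}, {3, 5, 6}, {4, 7}, {4, 5}, {8}]
print(contains_with_timing(seq, [{6}, {5}], max_gap=2, min_gap=0, max_span=4))
print(contains_with_timing([{1}, {2}, {3}, {4}, {5}], [{1}, {4}], 2, 0, 4))
```

Backtracking is needed here because, unlike the unconstrained case, matching a pattern element at its earliest position can make a later element violate max-gap even though a later match would have worked.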

Mining Sequential Patterns with Timing Constraints

- Approach 1:
  - Mine sequential patterns without timing constraints
  - Postprocess the discovered patterns
- Approach 2:
  - Modify GSP to directly prune candidates that violate timing constraints
  - Question:
    - Does the Apriori principle still hold?

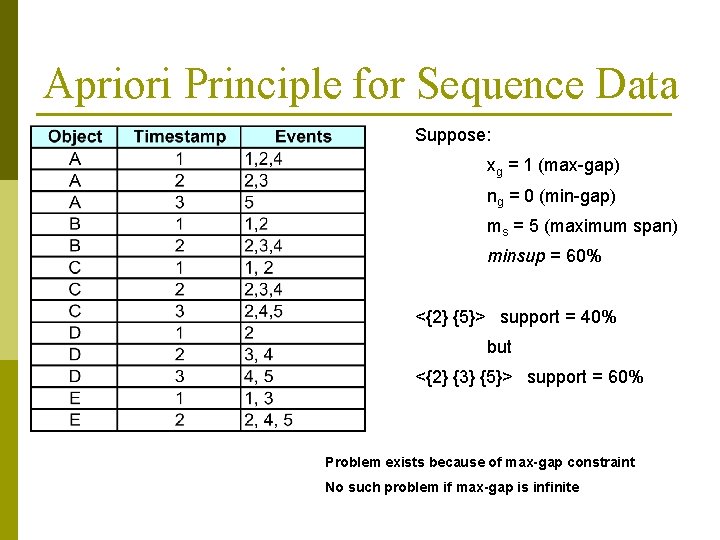

Apriori Principle for Sequence Data

Suppose:

- xg = 1 (max-gap)
- ng = 0 (min-gap)
- ms = 5 (maximum span)
- minsup = 60%

<{2} {5}> has support = 40%, but <{2} {3} {5}> has support = 60%.

The problem exists because of the max-gap constraint. There is no such problem if max-gap is infinite.

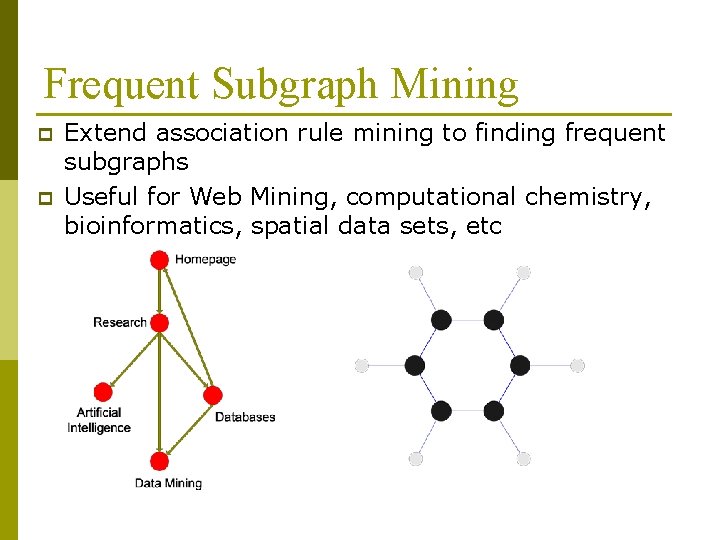

Frequent Subgraph Mining

- Extend association rule mining to finding frequent subgraphs
- Useful for Web mining, computational chemistry, bioinformatics, spatial data sets, etc.

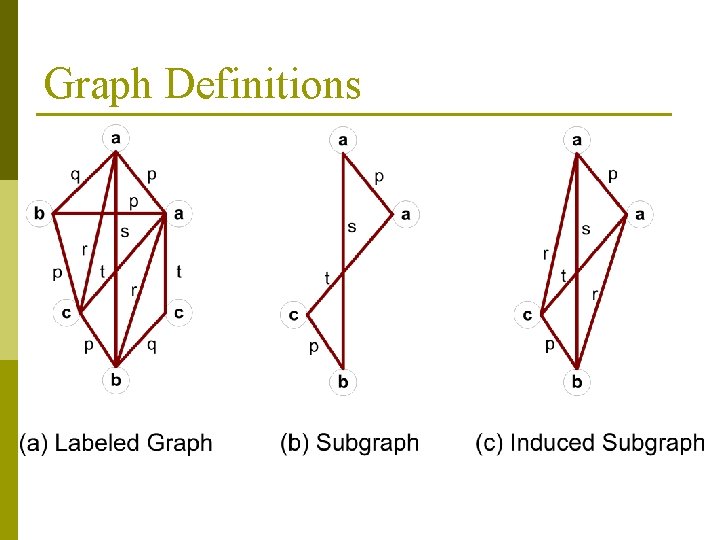

Graph Definitions

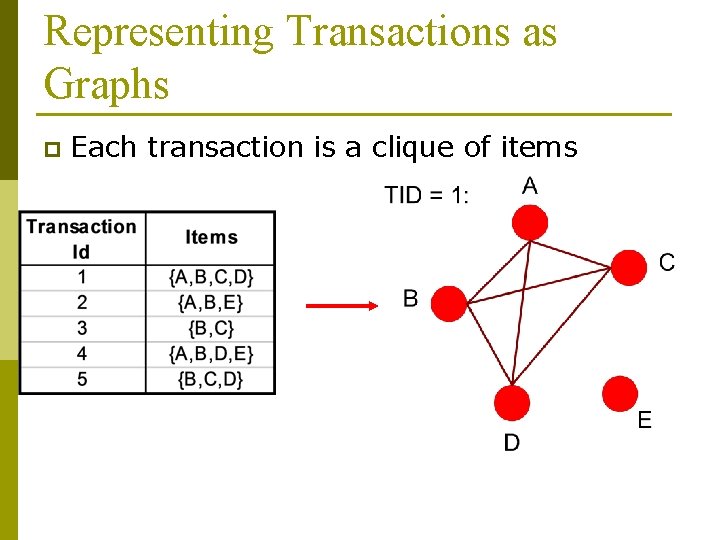

Representing Transactions as Graphs

- Each transaction is a clique of items
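As a small illustration, a transaction can be turned into a clique by making every item a vertex and connecting every pair of items. The function name and the sample transaction are hypothetical.

```python
from itertools import combinations


def transaction_to_clique(transaction):
    """Return (vertices, edges) of the complete graph over the transaction's items."""
    vertices = sorted(transaction)
    edges = list(combinations(vertices, 2))  # one edge per unordered pair
    return vertices, edges


vertices, edges = transaction_to_clique({"A", "B", "C"})
print(vertices)
print(edges)
```

A transaction with n items yields n(n-1)/2 edges, so this representation grows quadratically with transaction width.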

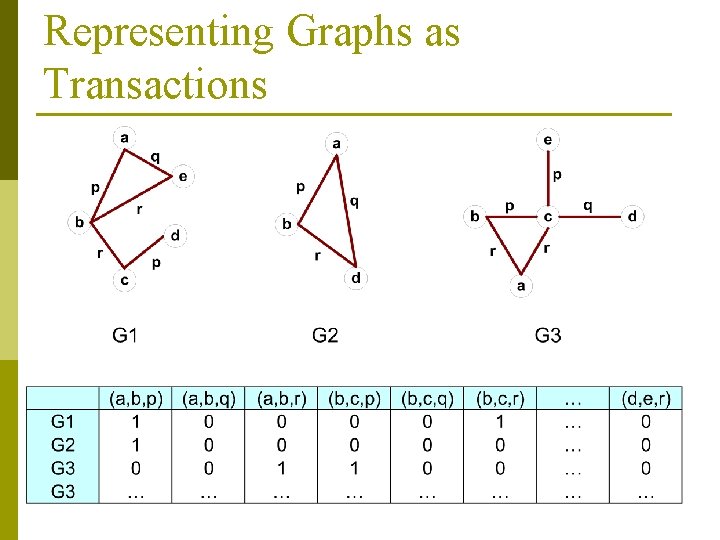

Representing Graphs as Transactions

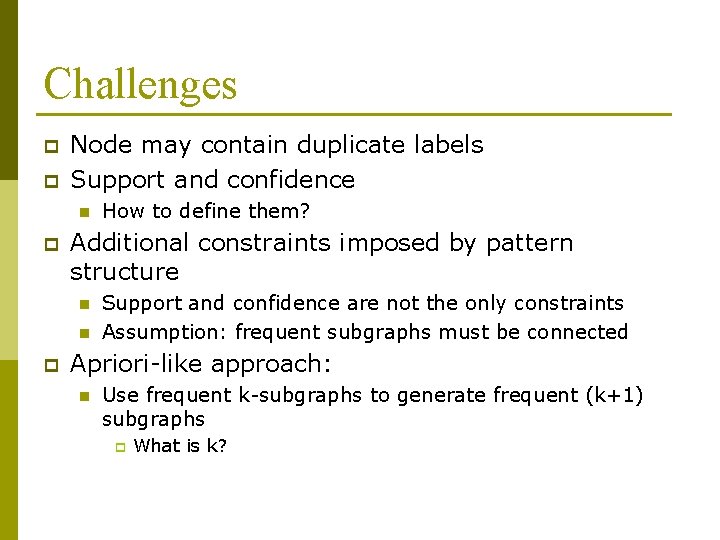

Challenges

- Node may contain duplicate labels
- Support and confidence
  - How to define them?
- Additional constraints imposed by pattern structure
  - Support and confidence are not the only constraints
  - Assumption: frequent subgraphs must be connected
- Apriori-like approach:
  - Use frequent k-subgraphs to generate frequent (k+1)-subgraphs
    - What is k?

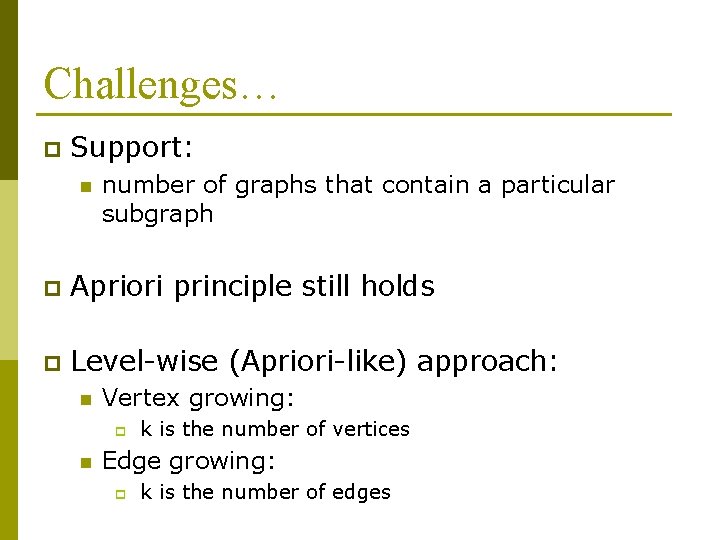

Challenges…

- Support:
  - number of graphs that contain a particular subgraph
- Apriori principle still holds
- Level-wise (Apriori-like) approach:
  - Vertex growing:
    - k is the number of vertices
  - Edge growing:
    - k is the number of edges

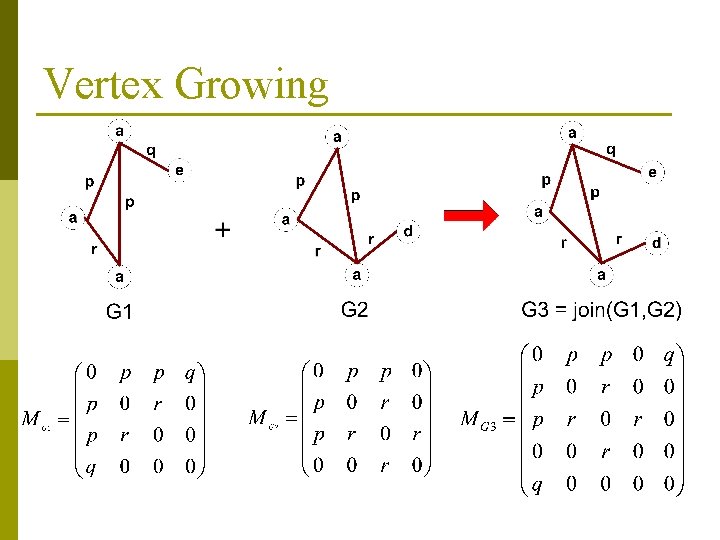

Vertex Growing

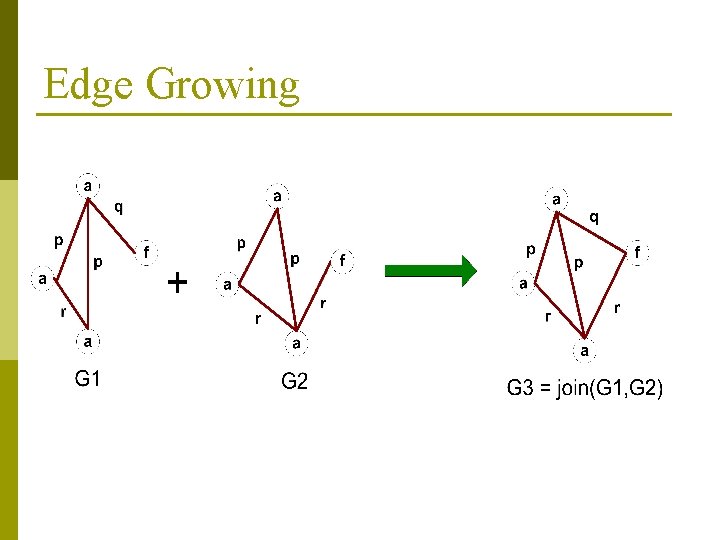

Edge Growing

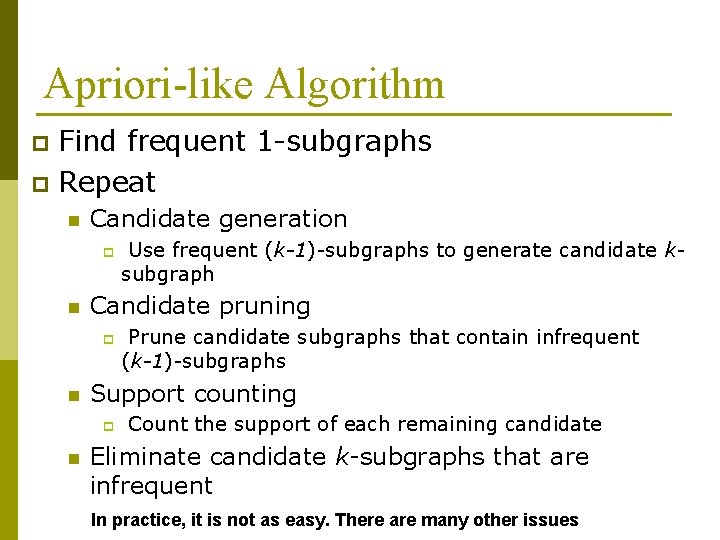

Apriori-like Algorithm

- Find frequent 1-subgraphs
- Repeat
  - Candidate generation
    - Use frequent (k-1)-subgraphs to generate candidate k-subgraphs
  - Candidate pruning
    - Prune candidate subgraphs that contain infrequent (k-1)-subgraphs
  - Support counting
    - Count the support of each remaining candidate
  - Eliminate candidate k-subgraphs that are infrequent

In practice, it is not as easy. There are many other issues.

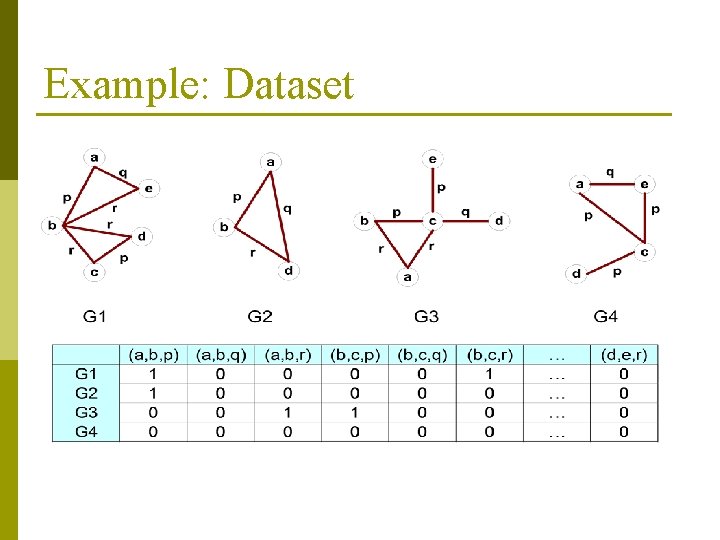

Example: Dataset

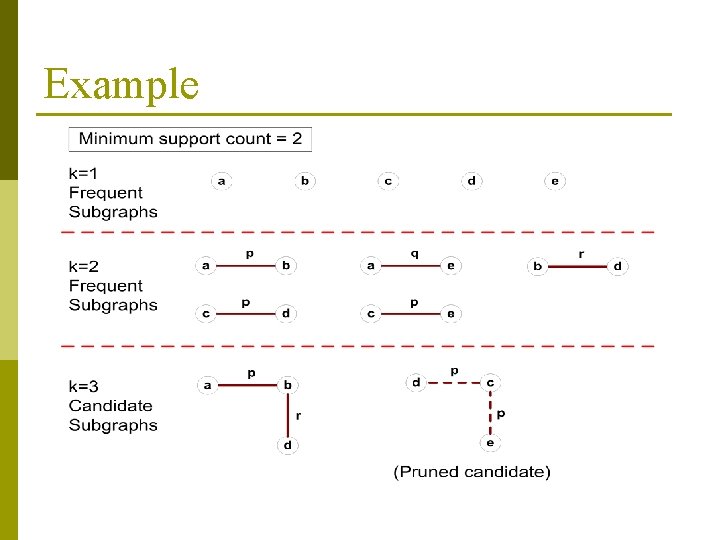

Example

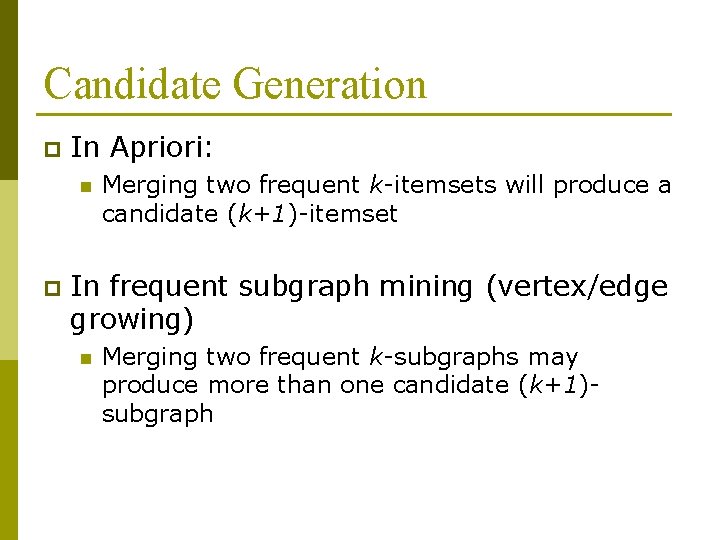

Candidate Generation

- In Apriori:
  - Merging two frequent k-itemsets will produce a candidate (k+1)-itemset
- In frequent subgraph mining (vertex/edge growing):
  - Merging two frequent k-subgraphs may produce more than one candidate (k+1)-subgraph

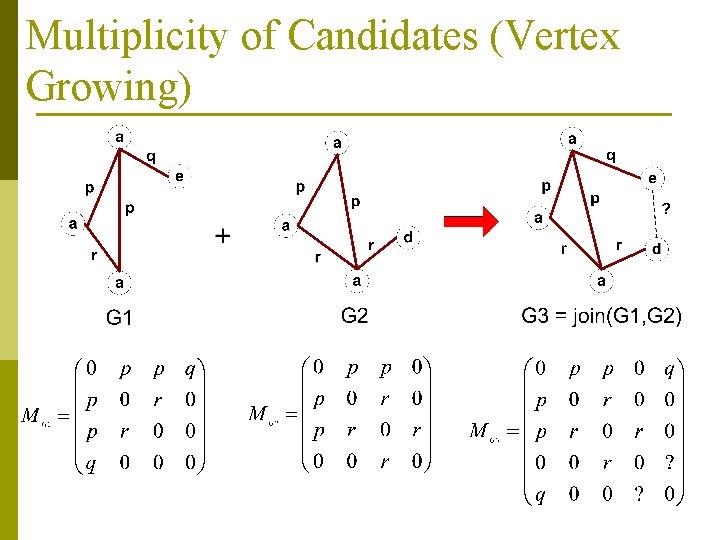

Multiplicity of Candidates (Vertex Growing)

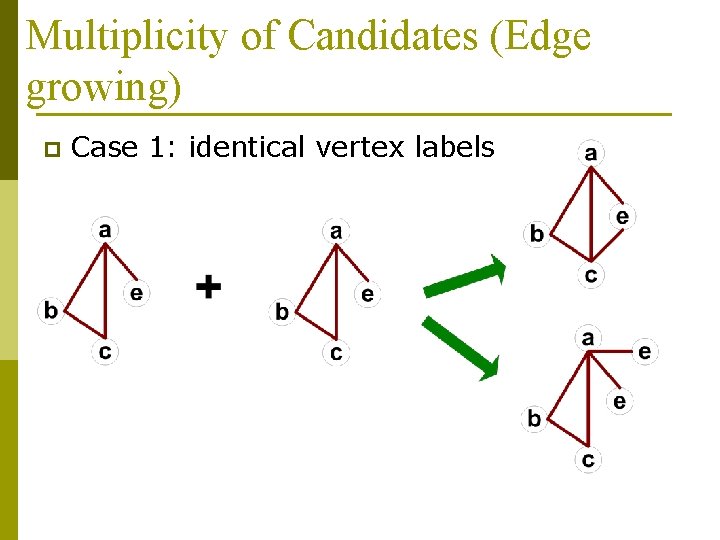

Multiplicity of Candidates (Edge growing)

- Case 1: identical vertex labels

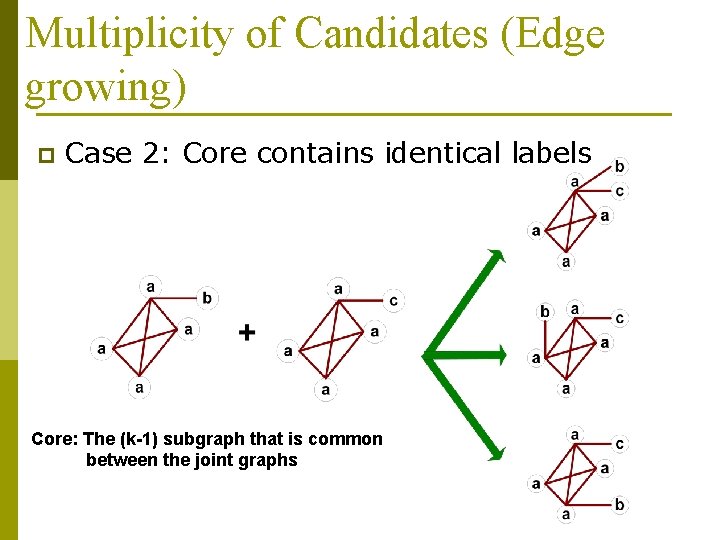

Multiplicity of Candidates (Edge growing)

- Case 2: Core contains identical labels

Core: the (k-1)-subgraph that is common between the joint graphs

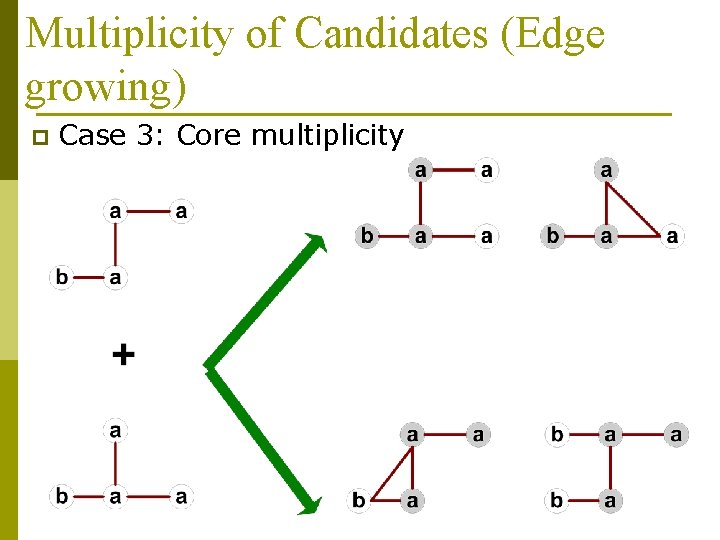

Multiplicity of Candidates (Edge growing)

- Case 3: Core multiplicity

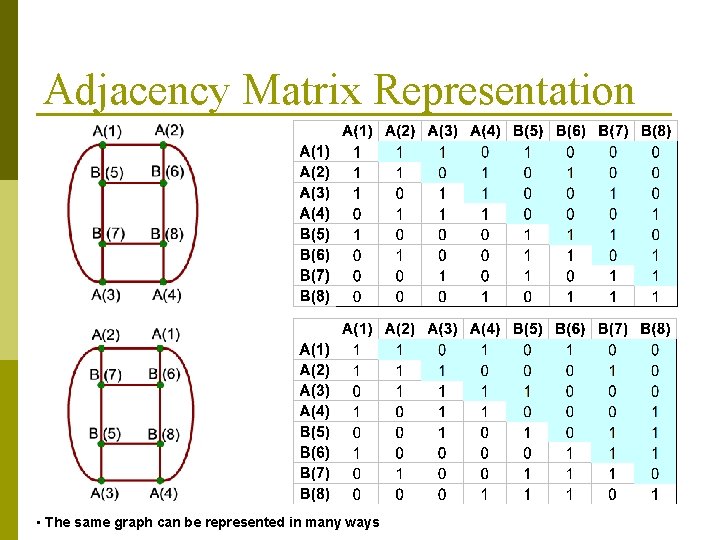

Adjacency Matrix Representation

- The same graph can be represented in many ways

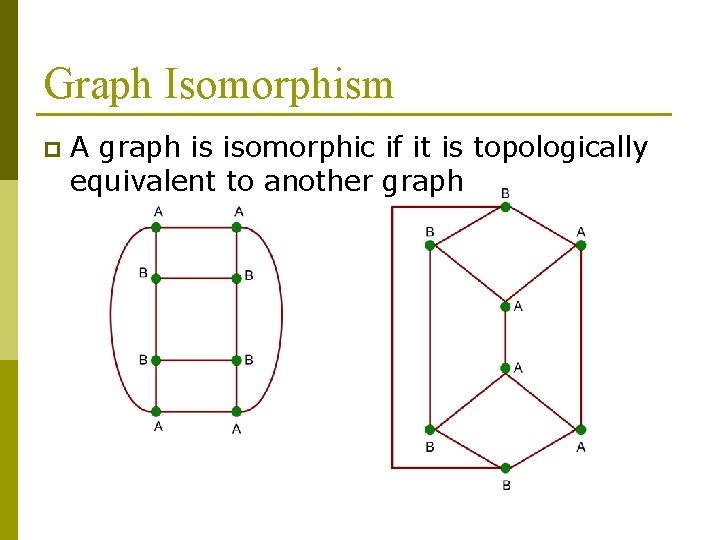

Graph Isomorphism

- Two graphs are isomorphic if they are topologically equivalent, i.e. there is a one-to-one mapping between their vertices that preserves the edges

Graph Isomorphism

- A test for graph isomorphism is needed:
  - During the candidate generation step, to determine whether a candidate has already been generated
  - During the candidate pruning step, to check whether its (k-1)-subgraphs are frequent
  - During candidate counting, to check whether a candidate is contained within another graph

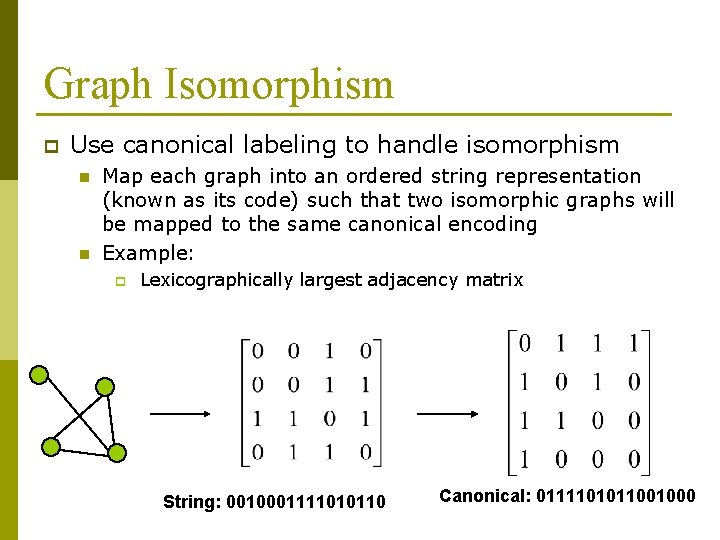

Graph Isomorphism

- Use canonical labeling to handle isomorphism
  - Map each graph into an ordered string representation (known as its code) such that two isomorphic graphs will be mapped to the same canonical encoding
  - Example:
    - Lexicographically largest adjacency matrix
    - String: 0010001111010110
    - Canonical: 0111101011001000
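The "lexicographically largest adjacency matrix" code can be illustrated with a brute-force sketch that tries every vertex ordering. This is only a demonstration for tiny graphs, since it enumerates n! permutations, and real miners use far more efficient canonical labeling. The function names are illustrative.

```python
from itertools import permutations


def matrix_from_string(code, n):
    """Read an n x n 0/1 adjacency matrix from its row-by-row string."""
    return [[int(code[i * n + j]) for j in range(n)] for i in range(n)]


def canonical_code(adj):
    """Lexicographically largest row-by-row string over all vertex orderings."""
    n = len(adj)
    best = ""
    for perm in permutations(range(n)):
        code = "".join(str(adj[perm[i]][perm[j]]) for i in range(n) for j in range(n))
        if code > best:
            best = code
    return best


adj = matrix_from_string("0010001111010110", 4)
print(canonical_code(adj))
```

Two graphs are isomorphic exactly when this function returns the same string for both, so duplicate candidates can be detected by comparing codes.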

Frequent Subgraph Mining Approaches

- Apriori-based approach
  - AGM/AcGM: Inokuchi, et al. (PKDD’00)
  - FSG: Kuramochi and Karypis (ICDM’01)
  - PATH#: Vanetik and Gudes (ICDM’02, ICDM’04)
  - FFSM: Huan, et al. (ICDM’03)
- Pattern growth approach
  - MoFa: Borgelt and Berthold (ICDM’02)
  - gSpan: Yan and Han (ICDM’02)
  - Gaston: Nijssen and Kok (KDD’04)

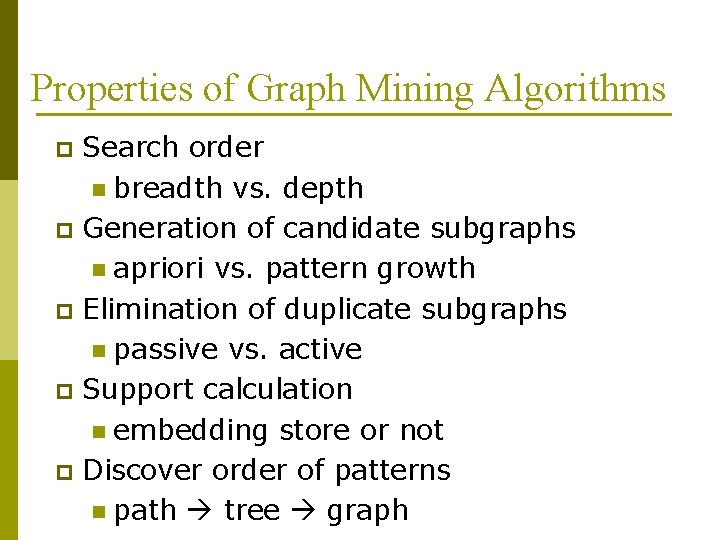

Properties of Graph Mining Algorithms

- Search order
  - breadth vs. depth
- Generation of candidate subgraphs
  - apriori vs. pattern growth
- Elimination of duplicate subgraphs
  - passive vs. active
- Support calculation
  - embedding store or not
- Discover order of patterns
  - path → tree → graph

Mining Frequent Subgraphs in a Single Graph

- A large graph is more interesting
  - Software, social network, Internet, biological networks
- What are the frequent subgraphs in a single graph?
  - How to define the frequency concept?
  - Apriori property

Challenge

- Can we define and detect building blocks of networks?
- We use the notion of motifs from biology
- Motifs:
  - recurring sequences
  - more than random sequences
- Here, we extend this to the level of networks.

- Network motifs: recurring patterns that occur significantly more than in randomized nets
- Do motifs have specific roles in the network?
- Many possible distinct subgraphs

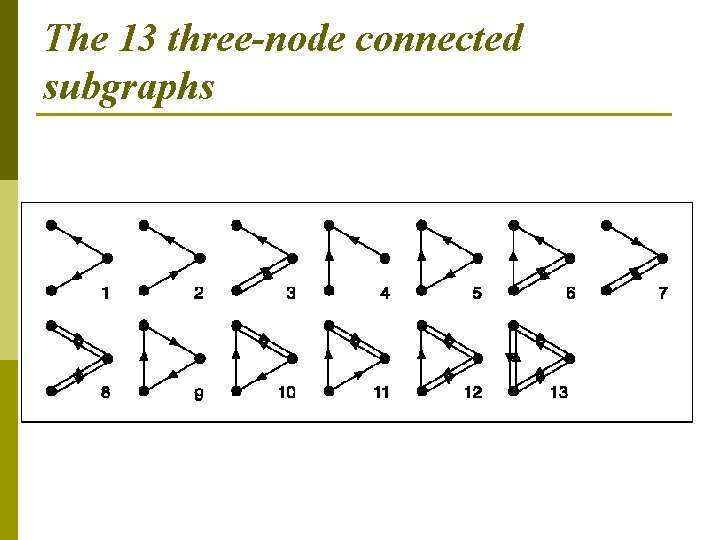

The 13 three-node connected subgraphs

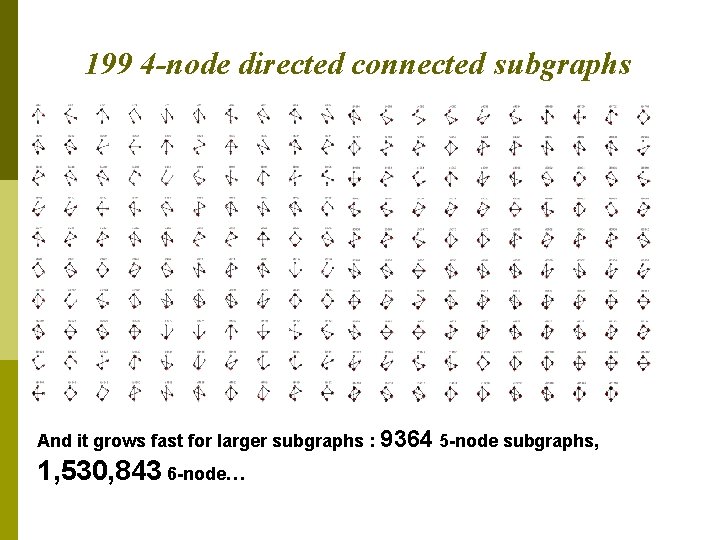

199 4-node directed connected subgraphs

And it grows fast for larger subgraphs: 9364 5-node subgraphs, 1,530,843 6-node…

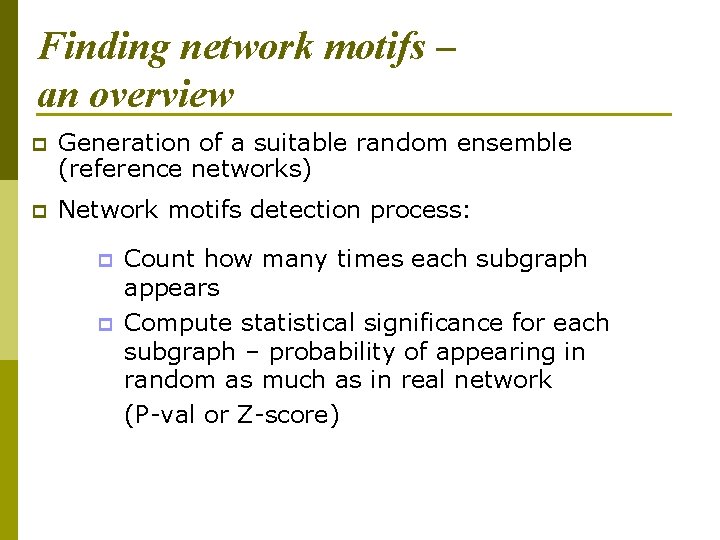

Finding network motifs – an overview

- Generation of a suitable random ensemble (reference networks)
- Network motifs detection process:
  - Count how many times each subgraph appears
  - Compute statistical significance for each subgraph – probability of appearing in random as much as in real network (P-val or Z-score)

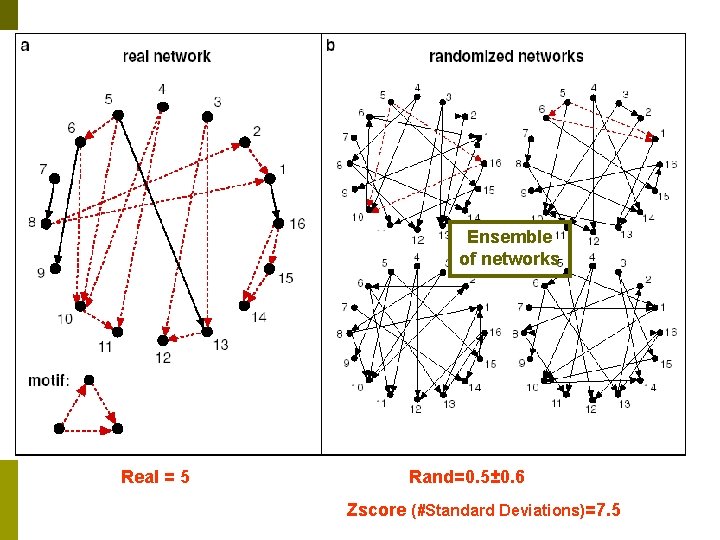

Ensemble of networks

Real = 5, Rand = 0.5 ± 0.6, Z-score (# standard deviations) = 7.5
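The Z-score on this slide is the number of standard deviations by which the real count exceeds the mean count in the randomized ensemble. A minimal sketch follows. The function names and the four-network ensemble are hypothetical, while the figures 5, 0.5 and 0.6 are the ones quoted above.

```python
from statistics import mean, pstdev


def z_score(real_count, rand_mean, rand_std):
    """How many standard deviations the real count lies above the random mean."""
    return (real_count - rand_mean) / rand_std


def z_score_from_ensemble(real_count, random_counts):
    """Same score, with mean and spread estimated from counts in random networks."""
    return z_score(real_count, mean(random_counts), pstdev(random_counts))


print(round(z_score(5, 0.5, 0.6), 2))  # figures from the slide
print(z_score_from_ensemble(5, [0, 1, 0, 1]))  # hypothetical ensemble counts
```

A large positive score means the subgraph appears far more often than chance would explain, which is the criterion used to call it a motif.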

References

- Homepage for Mining structured data: http://hms.liacs.nl/graphs.html
- Milo, R., Shen-Orr, S., Itzkovitz, S., Kashtan, N., et al. Network Motifs: Simple Building Blocks of Complex Networks. Science (2002).
- Michihiro Kuramochi, George Karypis. Finding Frequent Patterns in a Large Sparse Graph. SDM’03 (2003).
