Advanced topics in Hidden Markov Models

• Posterior probabilities
• Parameter estimation: the Baum-Welch algorithm (Leonard E. Baum, Lloyd R. Welch)

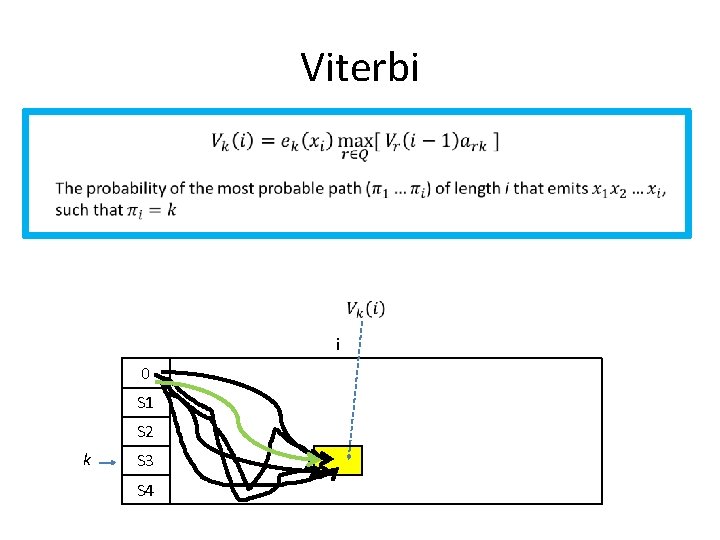

Viterbi

(Trellis diagram: begin state 0, states S1 to S4, with state k at position i.)

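Viterbi - example (observed sequence x = 6 2 6)

| state | 0 | 6 | 2 | 6 |
|---|---|---|---|---|
| 0 | 1 | | | |
| F | 0 | 1/12 ≈ 0.08 | 0.01375 | 0.00226 |
| L | 0 | 1/4 | 0.02 | 0.08 |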

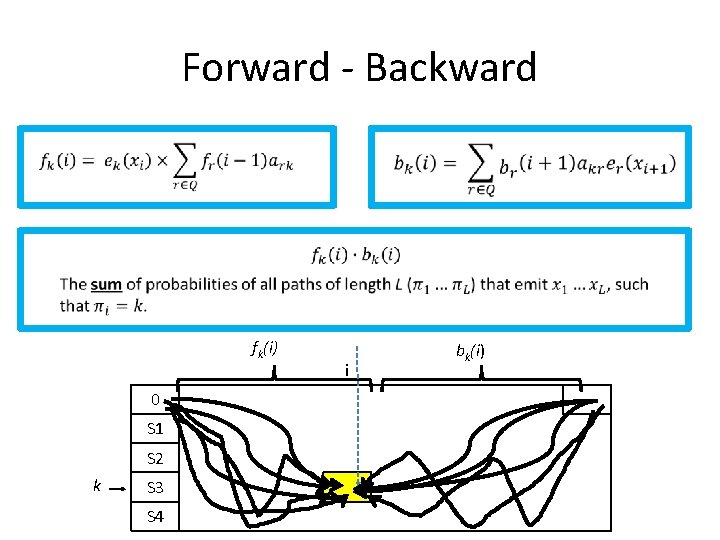
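The Viterbi recursion, $v_l(i+1) = e_l(x_{i+1}) \max_k v_k(i)\, a_{kl}$, can be sketched in a few lines of Python. The slides do not list the model parameters, so the start, transition and emission probabilities below are assumptions, chosen only to be consistent with the 1/12 and 1/4 entries of the example; the function and variable names are likewise illustrative.

```python
# Hypothetical two-state model (F = fair die, L = loaded die).
# All numbers below are assumptions, not taken from the slides.
STATES = ("F", "L")
START = {"F": 0.5, "L": 0.5}
TRANS = {"F": {"F": 0.99, "L": 0.01}, "L": {"F": 0.2, "L": 0.8}}
EMIT = {
    "F": {s: 1 / 6 for s in "123456"},
    "L": {**{s: 0.1 for s in "12345"}, "6": 0.5},
}


def viterbi(x, states, start, trans, emit):
    """Return the Viterbi table and the most probable state path for x."""
    # v[i][k]: probability of the best path that emits x[:i+1] and ends in k
    v = [{k: start[k] * emit[k][x[0]] for k in states}]
    back = []  # back[i][l]: best predecessor of state l at position i + 1
    for sym in x[1:]:
        prev = v[-1]
        col, ptr = {}, {}
        for l in states:
            best = max(states, key=lambda k: prev[k] * trans[k][l])
            ptr[l] = best
            col[l] = emit[l][sym] * prev[best] * trans[best][l]
        v.append(col)
        back.append(ptr)
    # Trace back from the most probable final state.
    path = [max(states, key=lambda k: v[-1][k])]
    for ptr in reversed(back):
        path.append(ptr[path[-1]])
    path.reverse()
    return v, "".join(path)


v, path = viterbi("626", STATES, START, TRANS, EMIT)
for k in STATES:
    print(k, [round(col[k], 5) for col in v])
print("best path:", path)
```

For long sequences the products underflow, so a practical version would work with sums of logarithms instead.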

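Forward - Backward

Viterbi is not always useful because it relies only on the single most probable path. The forward-backward approach sums over all paths instead: $f_k(i)$ covers the prefix $x_1 \dots x_i$ ending in state k, and $b_k(i)$ covers the suffix $x_{i+1} \dots x_L$ given state k at position i.

(Trellis diagram: begin state 0, states S1 to S4, with $f_k(i)$ and $b_k(i)$ meeting at state k, position i.)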

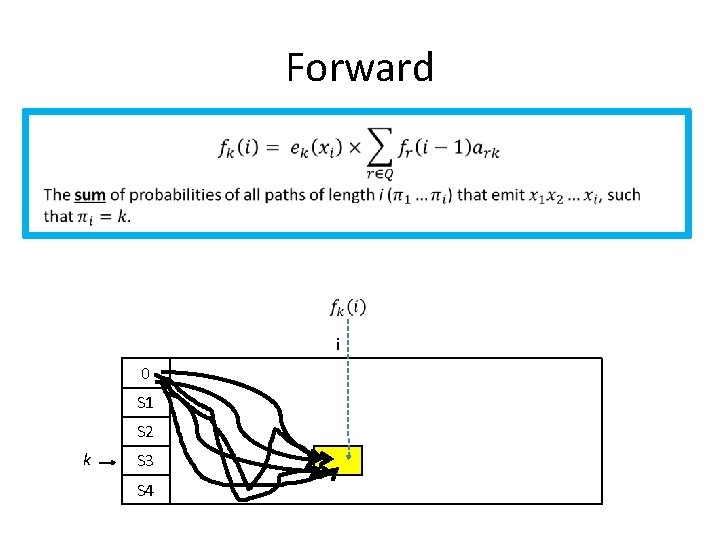

Forward

(Trellis diagram: begin state 0, states S1 to S4, with state k at position i.)

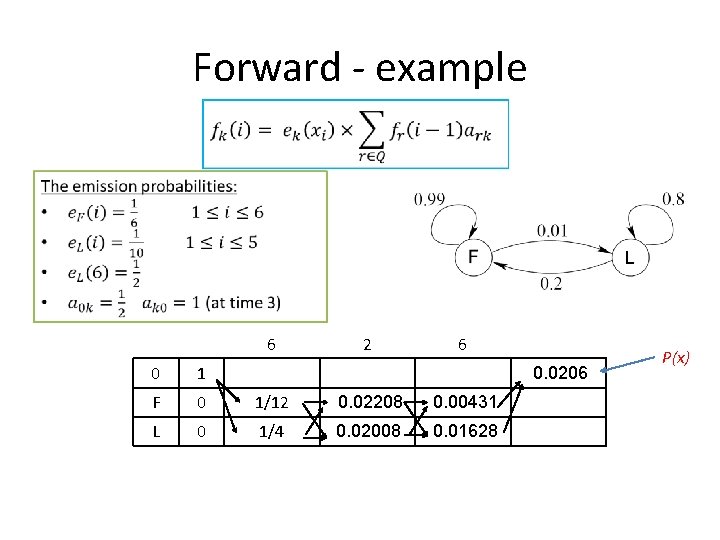

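Forward - example (observed sequence x = 6 2 6)

| state | 0 | 6 | 2 | 6 |
|---|---|---|---|---|
| 0 | 1 | | | |
| F | 0 | 1/12 | 0.02208 | 0.00431 |
| L | 0 | 1/4 | 0.02008 | 0.01628 |

P(x) = 0.0206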
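The forward recursion replaces the maximum in Viterbi with a sum: $f_l(i+1) = e_l(x_{i+1}) \sum_k f_k(i)\, a_{kl}$. The sketch below assumes a hypothetical two-state die model; its parameters are not given in the slides and are not necessarily the ones behind the table, and $P(x)$ is taken as the sum of the last column, which assumes no explicit end state.

```python
# Hypothetical model parameters (assumptions, not taken from the slides).
STATES = ("F", "L")
START = {"F": 0.5, "L": 0.5}
TRANS = {"F": {"F": 0.99, "L": 0.01}, "L": {"F": 0.2, "L": 0.8}}
EMIT = {
    "F": {s: 1 / 6 for s in "123456"},
    "L": {**{s: 0.1 for s in "12345"}, "6": 0.5},
}


def forward(x, states, start, trans, emit):
    """Return the forward table f and the sequence probability P(x)."""
    # f[i][k] = P(x[0..i], state at position i is k)
    f = [{k: start[k] * emit[k][x[0]] for k in states}]
    for sym in x[1:]:
        prev = f[-1]
        f.append({
            l: emit[l][sym] * sum(prev[k] * trans[k][l] for k in states)
            for l in states
        })
    # Without an end state, P(x) is the sum over the last column.
    return f, sum(f[-1].values())


f, px = forward("626", STATES, START, TRANS, EMIT)
for k in STATES:
    print(k, [round(col[k], 5) for col in f])
print("P(x) =", px)
```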

Backward

(Trellis diagram: begin state 0, states S1 to S4, with state k at position i.)

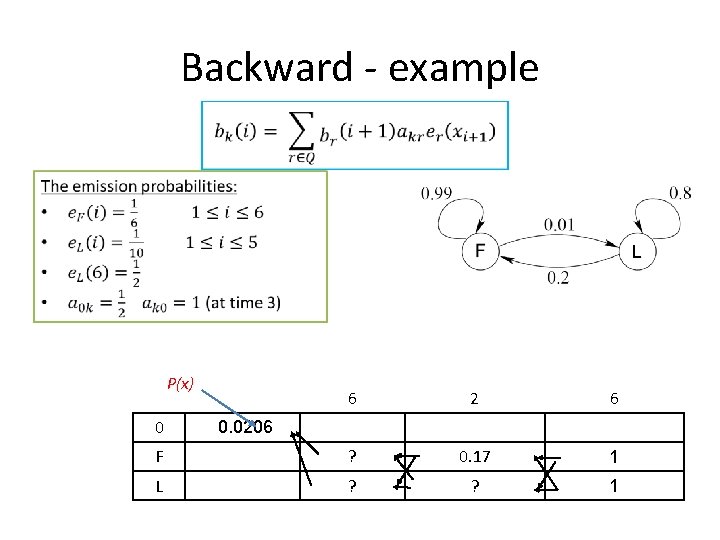

Backward - example (observed sequence x = 6 2 6)

| state | 6 | 2 | 6 |
|---|---|---|---|
| F | ? | 0.17 | 1 |
| L | ? | ? | 1 |

State 0: P(x) = 0.0206
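The backward table is filled from right to left with $b_k(i) = \sum_l a_{kl}\, e_l(x_{i+1})\, b_l(i+1)$, starting from $b_k(L) = 1$. The sketch below uses hypothetical parameters (assumptions, chosen so that $b_F(2) = 0.17$ as in the table); the remaining cells follow from the same assumptions and are not claimed to be the slide's intended answers.

```python
# Hypothetical model parameters (assumptions, not taken from the slides).
STATES = ("F", "L")
START = {"F": 0.5, "L": 0.5}
TRANS = {"F": {"F": 0.99, "L": 0.01}, "L": {"F": 0.2, "L": 0.8}}
EMIT = {
    "F": {s: 1 / 6 for s in "123456"},
    "L": {**{s: 0.1 for s in "12345"}, "6": 0.5},
}


def backward(x, states, trans, emit):
    """Return the backward table b, one column per position of x."""
    # b[i][k] = P(x[i+1..] | state at position i is k); last column is 1.
    b = [{k: 1.0 for k in states}]
    for sym in reversed(x[1:]):
        nxt = b[0]
        b.insert(0, {
            k: sum(trans[k][l] * emit[l][sym] * nxt[l] for l in states)
            for k in states
        })
    return b


x = "626"
b = backward(x, STATES, TRANS, EMIT)
# P(x) recovered from the first column and the start probabilities.
px_b = sum(START[k] * EMIT[k][x[0]] * b[0][k] for k in STATES)
for k in STATES:
    print(k, [round(col[k], 5) for col in b])
print("P(x) =", px_b)
```

Computing $P(x)$ from the backward table and comparing it with the forward result is a cheap consistency check on both implementations.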

Forward - Backward

(Trellis diagram: begin state 0, states S1 to S4, with $f_k(i)$ and $b_k(i)$ meeting at state k, position i.)

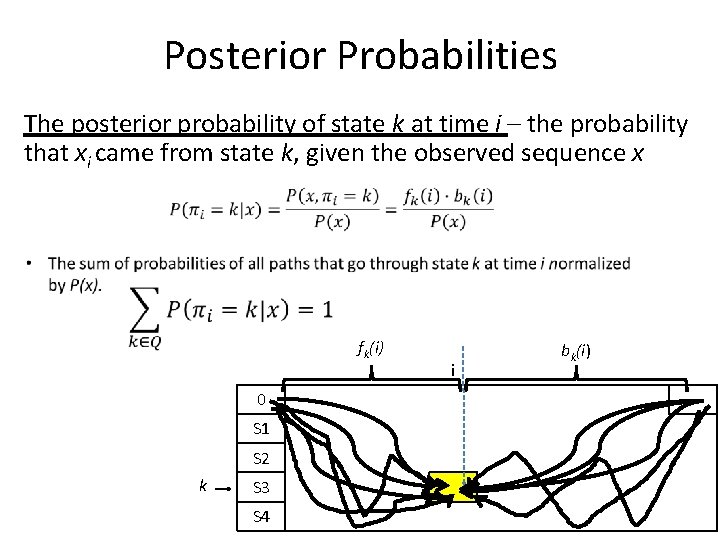

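Posterior Probabilities

The posterior probability of state k at time i is the probability that $x_i$ came from state k, given the observed sequence x: $P(\pi_i = k \mid x) = f_k(i)\, b_k(i) / P(x)$.

(Trellis diagram: begin state 0, states S1 to S4, with $f_k(i)$ and $b_k(i)$ meeting at state k, position i.)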

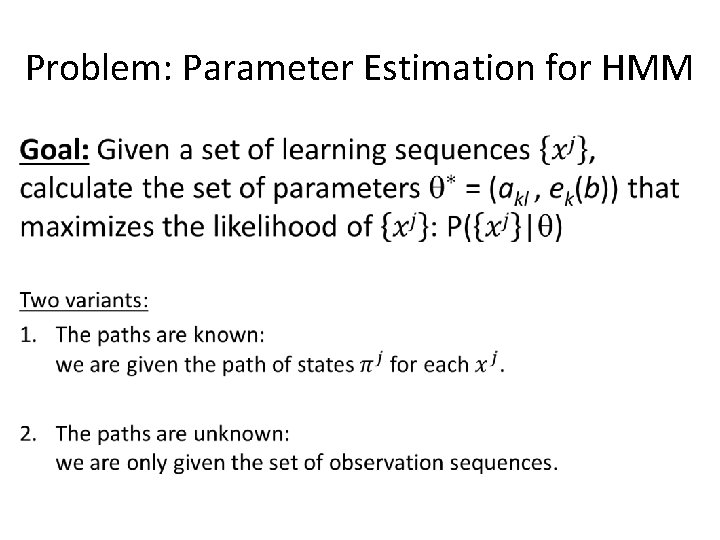
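Combining the two tables gives the posterior for every position, since $f_k(i)\, b_k(i)$ is the joint probability of x and of being in state k at position i. A minimal sketch, again under hypothetical model parameters that are not taken from the slides:

```python
# Hypothetical model parameters (assumptions, not taken from the slides).
STATES = ("F", "L")
START = {"F": 0.5, "L": 0.5}
TRANS = {"F": {"F": 0.99, "L": 0.01}, "L": {"F": 0.2, "L": 0.8}}
EMIT = {
    "F": {s: 1 / 6 for s in "123456"},
    "L": {**{s: 0.1 for s in "12345"}, "6": 0.5},
}


def forward(x, states, start, trans, emit):
    f = [{k: start[k] * emit[k][x[0]] for k in states}]
    for sym in x[1:]:
        prev = f[-1]
        f.append({l: emit[l][sym] * sum(prev[k] * trans[k][l] for k in states)
                  for l in states})
    return f, sum(f[-1].values())


def backward(x, states, trans, emit):
    b = [{k: 1.0 for k in states}]
    for sym in reversed(x[1:]):
        nxt = b[0]
        b.insert(0, {k: sum(trans[k][l] * emit[l][sym] * nxt[l] for l in states)
                     for k in states})
    return b


def posterior(x, states, start, trans, emit):
    """post[i][k] = P(state at position i is k | x) = f_k(i) b_k(i) / P(x)."""
    f, px = forward(x, states, start, trans, emit)
    b = backward(x, states, trans, emit)
    return [{k: f[i][k] * b[i][k] / px for k in states} for i in range(len(x))]


post = posterior("626", STATES, START, TRANS, EMIT)
for i, col in enumerate(post, start=1):
    print(i, {k: round(p, 4) for k, p in col.items()})
```

Each column sums to 1, which distinguishes posterior decoding from the Viterbi table, whose columns are not normalized.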

Problem: Parameter Estimation for HMM

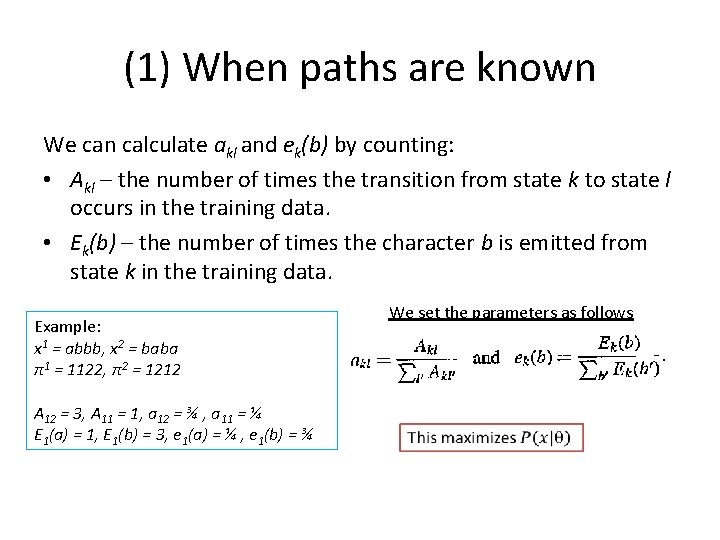

(1) When paths are known

We can calculate $a_{kl}$ and $e_k(b)$ by counting:
• $A_{kl}$: the number of times the transition from state k to state l occurs in the training data.
• $E_k(b)$: the number of times the character b is emitted from state k in the training data.

Example: $x^1$ = abbb, $x^2$ = baba; $\pi^1$ = 1122, $\pi^2$ = 1212
$A_{12} = 3$, $A_{11} = 1$, so $a_{12} = 3/4$, $a_{11} = 1/4$
$E_1(a) = 1$, $E_1(b) = 3$, so $e_1(a) = 1/4$, $e_1(b) = 3/4$

We set the parameters as follows:

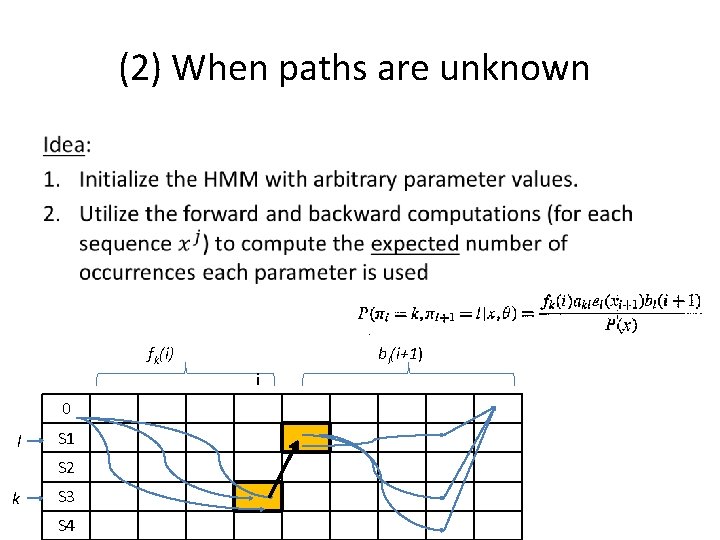
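The counting procedure can be sketched directly on the example sequences and paths. The function name `count_estimate` is illustrative, and the sketch normalizes raw counts without pseudocounts, so a state or symbol that never occurs in the training data gets no estimate at all.

```python
from collections import Counter


def count_estimate(seqs, paths):
    """Count transitions and emissions along known paths, then normalize."""
    A, E = Counter(), Counter()
    for x, pi in zip(seqs, paths):
        for sym, k in zip(x, pi):
            E[k, sym] += 1          # state k emitted symbol sym
        for k, l in zip(pi, pi[1:]):
            A[k, l] += 1            # transition k -> l
    # Row totals: all transitions leaving k, all emissions from k.
    a_tot, e_tot = Counter(), Counter()
    for (k, _), n in A.items():
        a_tot[k] += n
    for (k, _), n in E.items():
        e_tot[k] += n
    a = {kl: n / a_tot[kl[0]] for kl, n in A.items()}
    e = {kb: n / e_tot[kb[0]] for kb, n in E.items()}
    return A, E, a, e


A, E, a, e = count_estimate(["abbb", "baba"], ["1122", "1212"])
print("A12 =", A["1", "2"], " A11 =", A["1", "1"])
print("a12 =", a["1", "2"], " a11 =", a["1", "1"])
print("E1(a) =", E["1", "a"], " E1(b) =", E["1", "b"])
print("e1(a) =", e["1", "a"], " e1(b) =", e["1", "b"])
```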

(2) When paths are unknown

(Trellis diagram: begin state 0, states S1 to S4, with $f_k(i)$ at state k, position i, and $b_l(i+1)$ at state l, position i+1.)

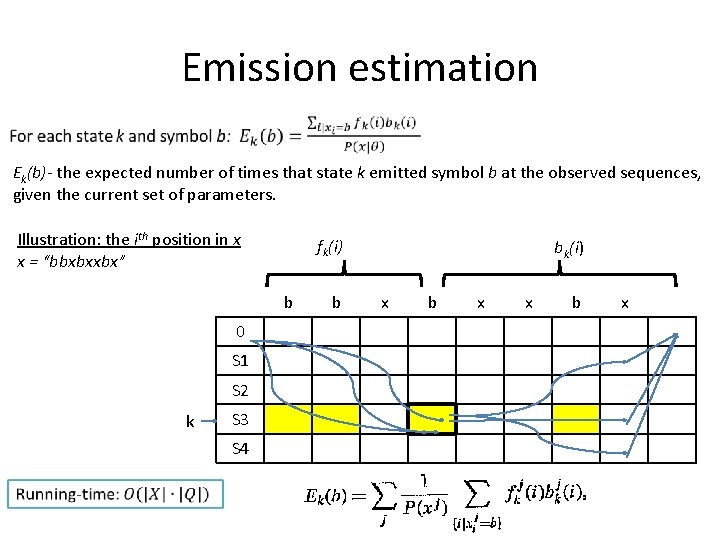

Emission estimation

$E_k(b)$ is the expected number of times that state k emits symbol b in the observed sequences, given the current set of parameters.

Illustration: x = "bbxbxxbx". Only the positions i with $x_i = b$ contribute to $E_k(b)$, each weighted by $f_k(i)\, b_k(i)$.

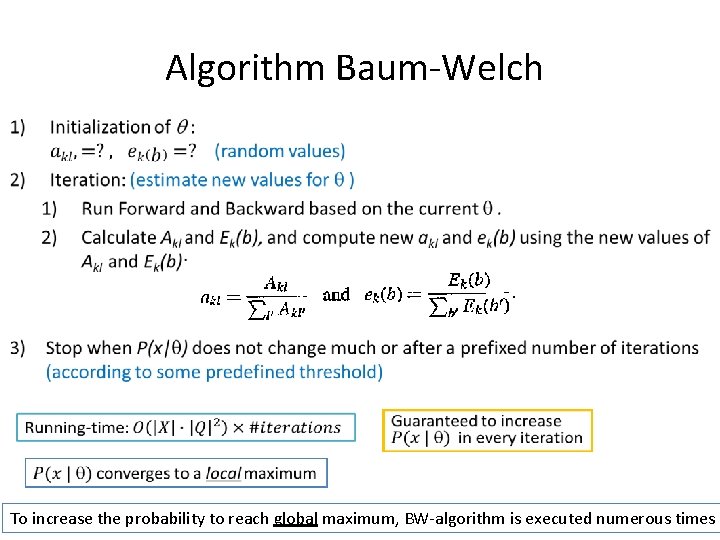
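When the paths are unknown, the counts become expectations computed from the forward and backward tables: $A_{kl} = \sum_i f_k(i)\, a_{kl}\, e_l(x_{i+1})\, b_l(i+1) / P(x)$ and $E_k(b) = \sum_{i: x_i = b} f_k(i)\, b_k(i) / P(x)$. The sketch below evaluates both for the illustration sequence; the two-state model and all of its parameter values are hypothetical.

```python
# Hypothetical two-state model over the alphabet {b, x} (assumed values).
S = ("1", "2")
START = {"1": 0.5, "2": 0.5}
TRANS = {"1": {"1": 0.7, "2": 0.3}, "2": {"1": 0.4, "2": 0.6}}
EMIT = {"1": {"b": 0.8, "x": 0.2}, "2": {"b": 0.3, "x": 0.7}}


def forward(x, states, start, trans, emit):
    f = [{k: start[k] * emit[k][x[0]] for k in states}]
    for sym in x[1:]:
        prev = f[-1]
        f.append({l: emit[l][sym] * sum(prev[k] * trans[k][l] for k in states)
                  for l in states})
    return f, sum(f[-1].values())


def backward(x, states, trans, emit):
    b = [{k: 1.0 for k in states}]
    for sym in reversed(x[1:]):
        nxt = b[0]
        b.insert(0, {k: sum(trans[k][l] * emit[l][sym] * nxt[l] for l in states)
                     for k in states})
    return b


def expected_counts(x, states, start, trans, emit):
    """Expected transition counts A[k][l] and emission counts E[k][symbol]."""
    f, px = forward(x, states, start, trans, emit)
    b = backward(x, states, trans, emit)
    A = {k: {l: 0.0 for l in states} for k in states}
    E = {k: {} for k in states}
    for i, sym in enumerate(x):
        for k in states:
            # Posterior probability that position i was emitted by state k.
            E[k][sym] = E[k].get(sym, 0.0) + f[i][k] * b[i][k] / px
            if i + 1 < len(x):
                for l in states:
                    A[k][l] += (f[i][k] * trans[k][l]
                                * emit[l][x[i + 1]] * b[i + 1][l] / px)
    return A, E


A, E = expected_counts("bbxbxxbx", S, START, TRANS, EMIT)
print("E_k(b):", {k: round(E[k]["b"], 4) for k in S})
print("A_kl:", {k: {l: round(A[k][l], 4) for l in S} for k in S})
```

A useful sanity check is that the expected emissions of b summed over states equal the number of b symbols in x, and that all expected transitions sum to the sequence length minus one.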

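Baum-Welch algorithm

• Baum-Welch is guaranteed to converge only to a local maximum of the likelihood. To increase the chance of reaching the global maximum, the algorithm is run many times from different initial parameters, and the run with the highest likelihood is kept.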
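The restart strategy can be sketched as follows for a single training sequence. Everything here is an illustrative assumption rather than the slides' own procedure: the random initialization, the fixed iteration count, the small pseudocount that guards against empty rows, and the names `baum_welch` and `best_of_restarts`. The sketch also works with raw probabilities, which is adequate only for short sequences.

```python
import random


def forward(x, S, start, a, e):
    f = [{k: start[k] * e[k][x[0]] for k in S}]
    for sym in x[1:]:
        prev = f[-1]
        f.append({l: e[l][sym] * sum(prev[k] * a[k][l] for k in S) for l in S})
    return f, sum(f[-1].values())


def backward(x, S, a, e):
    b = [{k: 1.0 for k in S}]
    for sym in reversed(x[1:]):
        nxt = b[0]
        b.insert(0, {k: sum(a[k][l] * e[l][sym] * nxt[l] for l in S) for k in S})
    return b


def normalize(row):
    total = sum(row.values())
    return {key: val / total for key, val in row.items()}


def random_params(S, alphabet, rng):
    # Random positive weights, normalized into probability distributions.
    draw = lambda keys: normalize({key: rng.random() + 0.1 for key in keys})
    return draw(S), {k: draw(S) for k in S}, {k: draw(alphabet) for k in S}


def baum_welch(x, S, alphabet, rng, iters=50, pseudo=1e-3):
    """One Baum-Welch run from a random start; returns (P(x), parameters)."""
    start, a, e = random_params(S, alphabet, rng)
    for _ in range(iters):
        f, px = forward(x, S, start, a, e)
        b = backward(x, S, a, e)
        # Expected counts, initialized with a small pseudocount (assumption).
        A = {k: {l: pseudo for l in S} for k in S}
        E = {k: {c: pseudo for c in alphabet} for k in S}
        for i, sym in enumerate(x):
            for k in S:
                E[k][sym] += f[i][k] * b[i][k] / px
                if i + 1 < len(x):
                    for l in S:
                        A[k][l] += (f[i][k] * a[k][l]
                                    * e[l][x[i + 1]] * b[i + 1][l] / px)
        # Re-estimate all parameters from the expected counts.
        start = normalize({k: f[0][k] * b[0][k] for k in S})
        a = {k: normalize(A[k]) for k in S}
        e = {k: normalize(E[k]) for k in S}
    return forward(x, S, start, a, e)[1], (start, a, e)


def best_of_restarts(x, S, alphabet, restarts=10, seed=0):
    rng = random.Random(seed)  # fixed seed so the runs are reproducible
    runs = [baum_welch(x, S, alphabet, rng) for _ in range(restarts)]
    return max(runs, key=lambda run: run[0]), [run[0] for run in runs]


(best_px, best_params), all_px = best_of_restarts("bbxbxxbx", ("1", "2"), "bx")
print("likelihood per restart:", [round(p, 5) for p in all_px])
print("best likelihood:", best_px)
```

Printing the likelihood of every restart makes the spread between local maxima visible, which is the reason for running the algorithm more than once.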

- Slides: 15