
ADIC / CASPUR / CERN / DataDirect / ENEA / IBM / RZ Garching / SGI

New results from CASPUR Storage Lab

Andrei Maslennikov, CASPUR Consortium, May 2004

A. Maslennikov - May 2004 - SLAB update

Participated:

- ADIC Software: E. Eastman
- CASPUR: A. Maslennikov(*), M. Mililotti, G. Palumbo
- CERN: C. Curran, J. Garcia Reyero, M. Gug, A. Horvath, J. Iven, P. Kelemen, G. Lee, I. Makhlyueva, B. Panzer-Steindel, R. Többicke, L. Vidak
- DataDirect Networks: L. Thiers
- ENEA: G. Bracco, S. Pecoraro
- IBM: F. Conti, S. De Santis, S. Fini
- RZ Garching: H. Reuter
- SGI: L. Bagnaschi, P. Barbieri, A. Mattioli

(*) Project Coordinator

Sponsors for these test sessions:

- ACAL Storage Networking: Loaned a 16-port Brocade switch
- ADIC Software: Provided the StorNext file system product, actively participated in tests
- DataDirect Networks: Loaned an S2A 8000 disk system, actively participated in tests
- E4 Computer Engineering: Loaned 10 assembled biprocessor nodes
- Emulex Corporation: Loaned 16 fibre channel HBAs
- IBM: Loaned a FAStT900 disk system and the SANFS product complete with 2 MDS units, actively participated in tests
- Infortrend-Europe: Sold 4 EonStor disk systems at discount price
- INTEL: Donated 10 motherboards and 20 CPUs

Contents

• Goals
• Components under test
• Measurements:
  - SATA/FC systems
  - SAN File Systems
  - AFS Speedup
  - Lustre (preliminary)
  - LTO-2
• Final remarks

Goals for these test series

1. Performance of low-cost SATA/FC disk systems
2. Performance of SAN File Systems
3. AFS Speedup options
4. Lustre
5. Performance of LTO-2 tape drive

Disk systems: Components

- 4 x Infortrend EonStor A16F-G1A2 16-bay SATA-to-FC arrays: Maxtor Maxline Plus II 250 GB SATA disks (7200 rpm); dual Fibre Channel outlet at 2 Gbit; cache: 1 GB
- 2 x IBM FAStT900 dual controller arrays with SATA expansion units: 4 x EXP100 expansion units with 14 Maxtor SATA disks of the same type; dual Fibre Channel outlet at 2 Gbit; cache: 1 GB
- 1 x StorCase InfoStation 12-bay array: same Maxtor SATA disks; dual Fibre Channel outlet at 2 Gbit; cache: 256 MB
- 1 x DataDirect S2A 8000 system: 2 controllers with 74 FC disks of 146 GB; 8 Fibre Channel outlets at 2 Gbit; cache: 2.56 GB

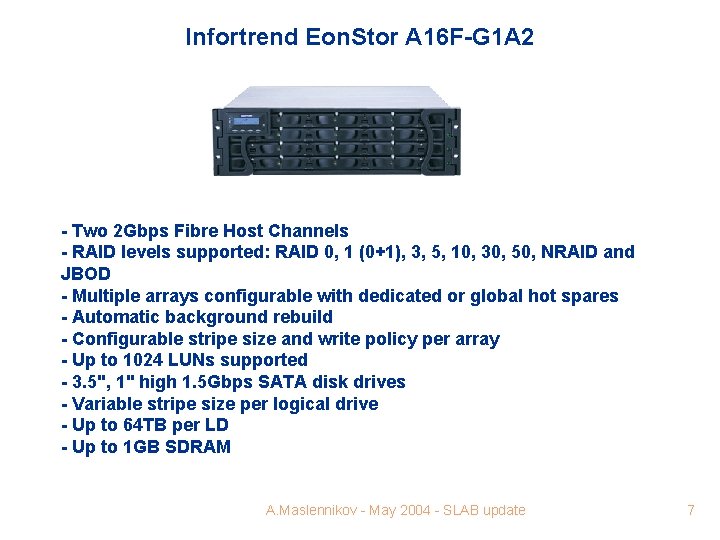

Infortrend EonStor A16F-G1A2

- Two 2 Gbps Fibre Host Channels
- RAID levels supported: RAID 0, 1 (0+1), 3, 5, 10, 30, 50, NRAID and JBOD
- Multiple arrays configurable with dedicated or global hot spares
- Automatic background rebuild
- Configurable stripe size and write policy per array
- Up to 1024 LUNs supported
- 3.5", 1" high 1.5 Gbps SATA disk drives
- Variable stripe size per logical drive
- Up to 64 TB per LD
- Up to 1 GB SDRAM

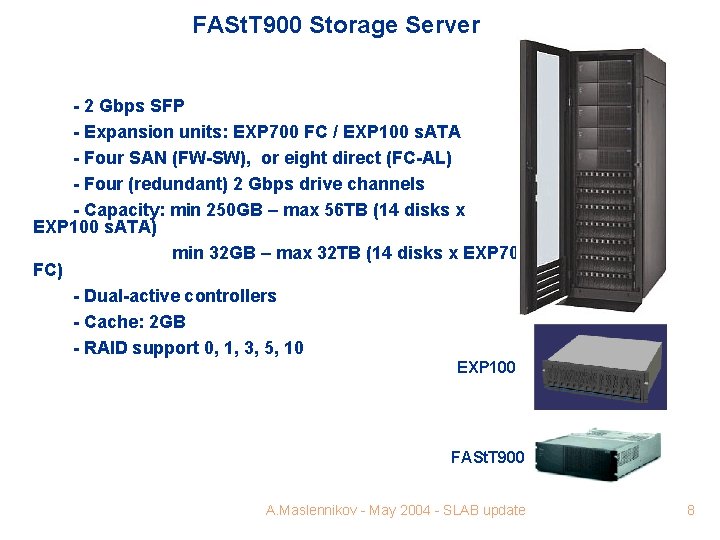

FAStT900 Storage Server

- 2 Gbps SFP
- Expansion units: EXP700 FC / EXP100 SATA
- Four SAN (FW-SW), or eight direct (FC-AL)
- Four (redundant) 2 Gbps drive channels
- Capacity: min 250 GB – max 56 TB (14 disks x EXP100 SATA); min 32 GB – max 32 TB (14 disks x EXP700 FC)
- Dual-active controllers
- Cache: 2 GB
- RAID support: 0, 1, 3, 5, 10

Pictured: EXP100, FAStT900

STORCase Fibre-to-SATA

- SATA and Ultra ATA/133 Drive Interface
- 12 hot swappable drives
- Switched or FC-AL host connections
- RAID levels: 0, 1, 0+1, 3, 5, 30, 50 and JBOD
- Dual Fibre 2 Gbps host ports
- Support up to 8 arrays and 128 LUNs
- Up to 1 GB PC200 DDR cache memory

DataDirect S²A 8000

- Single 2U S2A 8000 with Four 2 Gb/s Ports or Dual 4U with Eight 2 Gb/s Ports
- Up to 1120 Disk Drives; 8192 LUNs supported
- 5 TB to 130 TB with FC Disks, 20 TB to 250 TB with SATA disks
- Sustained Performance well over 1 GB/s (1.6 GB/s theoretical)
- Full Fibre-Channel Duplex Performance on every port
- PowerLUN™ 1 GB/s+ individual LUNs without host-based striping
- Up to 20 GB of Cache, LUN-in-Cache Solid State Disk functionality
- Real time Any to Any Virtualization
- Very fast rebuild rate

Components

- High-end Linux units for both servers and clients: biprocessor Pentium IV Xeon 2.4+ GHz, 1 GB RAM; Qlogic QLA2300 2 Gbit or Emulex LP9xxx Fibre Channel HBAs
- Network: 2 x Dell 5224 GigE switches
- SAN: Brocade 3800 switch, 16 ports (test series 1); Qlogic SANbox 5200, 32 ports (test series 2)
- Tapes: 2 x IBM Ultrium LTO 2 (3580-TD2, Rev: 36U3)

Qlogic SANbox 5200 Stackable Switch

- 8, 12 or 16 auto-detecting 2 Gb/1 Gb device ports with 4-port incremental upgrade
- Stacking of up to 4 units for 64 available user ports
- Interoperable with all FC-SW-2 compliant Fibre Channel switches
- Full-fabric, public-loop or switch-to-switch connectivity on 2 Gb or 1 Gb front ports
- "No-Wait" routing: guaranteed maximum performance independent of data traffic
- Support traffic between switches, servers and storage at up to 10 Gb/s
- Low cost: the 5200/16p costs at most half as much as the Brocade 3800/16p

IBM LTO Ultrium 2 Tape Drive Features

- 200 GB Native Capacity (400 GB compressed)
- 35 MB/s native (70 MB/s compressed)
- Read/Write LTO 1 Cartridge
- Native 2 Gb FC Interface
- Backward read/write with Ultrium 1 cartridge
- 64 MB buffer (vs 32 MB buffer in Ultrium 1)
- Speed Matching, Channel Calibration
- 512 Tracks vs. 384 Tracks in Ultrium 1
- 64 MB Buffer vs. 32 MB in Ultrium 1
- Enhanced Capacity (200 GB)
- Enhanced Performance (35 MB/s)
- Backward Compatible
- Faster Load/Unload Time, Data Access Time, Rewind Time

SATA / FC Systems

SATA / FC Systems – hw details

Typical array features:

- single or dual (active-active) controller
- up to 1 GB of RAID cache
- battery to keep the cache alive during power cuts
- 8 through 16 drive slots
- cost: 4-6 KUSD per 12/16 bay unit (Infortrend, Storcase)

Case and backplane directly affect the disks' lifetime:

- protection against inrush currents
- protection against rotational vibration
- orientation (H better than V – remark by A. Sansum)

Infortrend EonStor: well engineered (removable controller module, lower vibration, H orientation)

Storcase: special protection against inrush currents ("soft-start" drive power circuitry), low vibration

SATA / FC Systems – hw details

High capacity ATA/SATA disk drives:

- 250 GB (Maxtor, IBM), 400 GB (Hitachi)
- RPM: 7200
- improved quality: warranty 3 years, component design lifetime: 5 years

CASPUR experience with Maxtor drives:

- In 1.5 years we lost 5 drives out of ~100, 2 of them due to power cuts
- Factory quality of recent Maxtor Maxline Plus II 250 GB disks: out of 66 disks purchased, 4 had to be replaced shortly after delivery. The others stand the stress very well

Learned during this meeting:

- RAL annual failure rate is 21 out of 920 Maxtor Maxline
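To compare the two drive-loss figures on this slide, they can be put on a common annual footing. This is a minimal sketch, assuming a constant failure rate over the observation period and taking the CASPUR population as exactly 100 drives (the slide says ~100); the function name is illustrative only.

```python
def annual_failure_rate(failed: int, population: int, years: float) -> float:
    """Fraction of the drive population lost per year, assuming a constant rate."""
    return failed / population / years


# CASPUR: 5 drives lost out of ~100 in 1.5 years (population of 100 is an assumption)
caspur = annual_failure_rate(failed=5, population=100, years=1.5)
# RAL: 21 drives lost out of 920 in one year
ral = annual_failure_rate(failed=21, population=920, years=1.0)

print(f"CASPUR: {caspur:.1%} per year, RAL: {ral:.1%} per year")
```

Note that the CASPUR figure includes the two drives lost to power cuts, so the two rates are not strictly like for like.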

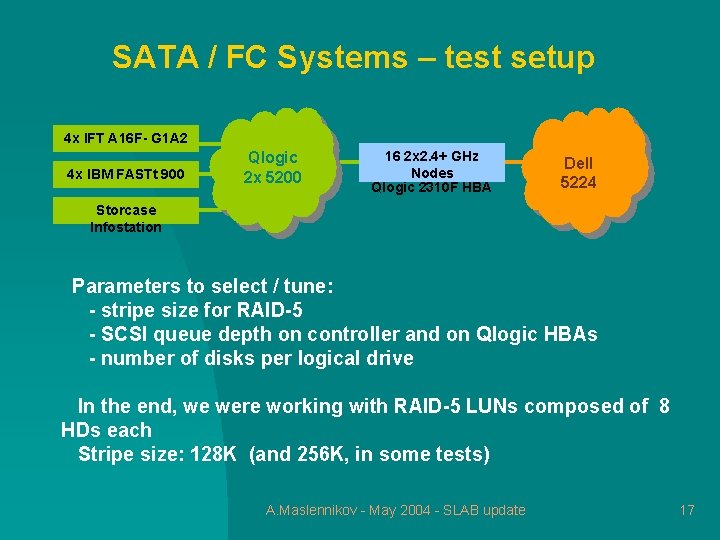

SATA / FC Systems – test setup

Setup diagram labels: 4 x IFT A16F-G1A2; 4 x IBM FAStT900; Storcase InfoStation; 2 x Qlogic 5200; 16 nodes (2 x 2.4+ GHz) with Qlogic 2310F HBAs; Dell 5224

Parameters to select / tune:

- stripe size for RAID-5
- SCSI queue depth on controller and on Qlogic HBAs
- number of disks per logical drive

In the end, we were working with RAID-5 LUNs composed of 8 HDs each. Stripe size: 128 K (and 256 K, in some tests)

SATA / FC tests – kernel and fs details

Kernel settings:

- Kernels: 2.4.20-30.9smp, 2.4.20-20.9.XFS1.3.1smp
- vm.bdflush: "2 500 0 0 500 1000 20 10 0"
- vm.max(min)-readahead: 256(127) for large streaming writes; 4(3) for random reads with small blksize

File Systems:

- EXT3 (128 k RAID-5 stripe size): fs options: "-m 0 -j -J size=128 -R stride=32 -T largefile4"; mount options: "data=writeback"
- XFS 1.3.1 (128 k RAID-5 stripe size): fs options: "-i size=512 -d agsize=4g,su=128k,sw=7,unwritten=0 -l su=128k"; mount options: "logbsize=262144,logbufs=8"
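The stride=32 and sw=7 values in the file system options are consistent with the RAID-5 geometry used in these tests (8 disks per LUN, 128 KB stripe unit). The arithmetic can be sketched as follows, assuming a 4 KB EXT3 block size (not stated on the slide) and one disk's worth of parity per RAID-5 set; the function names are illustrative.

```python
def ext3_stride(stripe_unit_kb: int, block_size_kb: int = 4) -> int:
    """File system blocks per RAID stripe unit (the mke2fs 'stride' value).

    The 4 KB default block size is an assumption, not taken from the slide.
    """
    return stripe_unit_kb // block_size_kb


def xfs_stripe_width(disks_in_lun: int, parity_disks: int = 1) -> int:
    """Data-bearing stripe units per full stripe (the mkfs.xfs 'sw' value)."""
    return disks_in_lun - parity_disks


print(ext3_stride(128))     # 128 KB stripe unit / 4 KB blocks
print(xfs_stripe_width(8))  # 8-disk RAID-5 LUN, one disk of parity
```

With the 256 K stripe size used in some tests, the same assumptions would give a stride of 64.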

SATA / FC tests – benchmarks used

Large serial writes and reads:

- "lmdd" from the "lmbench" suite: http://sourceforge.net/projects/lmbench
- typical invocation: lmdd of=/fs/file bs=1000k count=8000 fsync=1

Random reads:

- Pileup benchmark (Rainer.Toebbicke@cern.ch), designed to emulate the disk activity of multiple data analysis jobs:
  1) a series of 2 GB files is created in the destination directory
  2) these files are then read in a random way, in many threads
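The two Pileup phases described on this slide can be illustrated with a small stand-in. This is not the Pileup benchmark itself, whose source and parameters are not given here: the file sizes, block size, thread count and all function names below are assumptions chosen to keep the sketch quick to run.

```python
import os
import random
import tempfile
import threading


def create_files(directory, n_files, file_size):
    """Phase 1: create a series of data files in the destination directory."""
    paths = []
    for i in range(n_files):
        path = os.path.join(directory, f"pileup_{i}.dat")
        with open(path, "wb") as f:
            f.write(os.urandom(file_size))
        paths.append(path)
    return paths


def random_read_worker(paths, n_reads, block_size, seed, totals, index):
    """Phase 2 worker: read blocks at random offsets from randomly chosen files."""
    rng = random.Random(seed)
    done = 0
    for _ in range(n_reads):
        path = rng.choice(paths)
        size = os.path.getsize(path)
        # Pick an offset that leaves room for one full block.
        offset = rng.randrange(0, max(1, size - block_size + 1))
        with open(path, "rb") as f:
            f.seek(offset)
            done += len(f.read(block_size))
    totals[index] = done


def run_pileup_like(paths, n_threads, n_reads, block_size):
    """Run the random-read phase in n_threads threads; return total bytes read."""
    totals = [0] * n_threads
    threads = [
        threading.Thread(
            target=random_read_worker,
            args=(paths, n_reads, block_size, i, totals, i),
        )
        for i in range(n_threads)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(totals)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        files = create_files(d, n_files=4, file_size=1 << 20)
        total = run_pileup_like(files, n_threads=4, n_reads=100, block_size=4096)
        print(total, "bytes read")
```

A real measurement would use files much larger than the page cache (the slide mentions 2 GB files) and time the read phase to obtain MB/sec.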

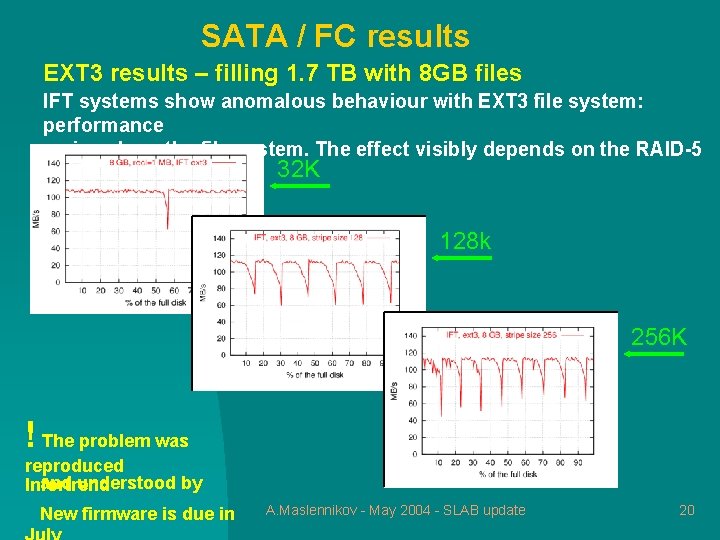

SATA / FC results

EXT3 results – filling 1.7 TB with 8 GB files

IFT systems show anomalous behaviour with the EXT3 file system: performance varies along the file system. The effect visibly depends on the RAID-5 stripe size (plot labels: 32 K, 128 K, 256 K). The problem was reproduced and understood by Infortrend. New firmware is due in

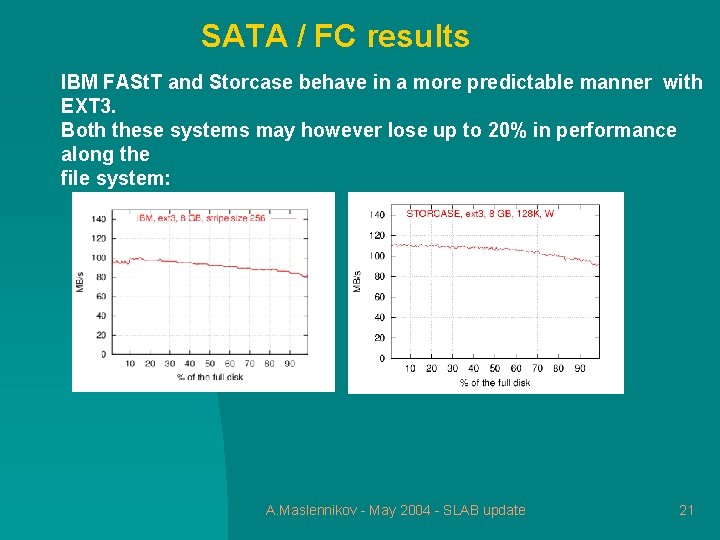

SATA / FC results

IBM FAStT and Storcase behave in a more predictable manner with EXT3. Both these systems may however lose up to 20% in performance along the file system:

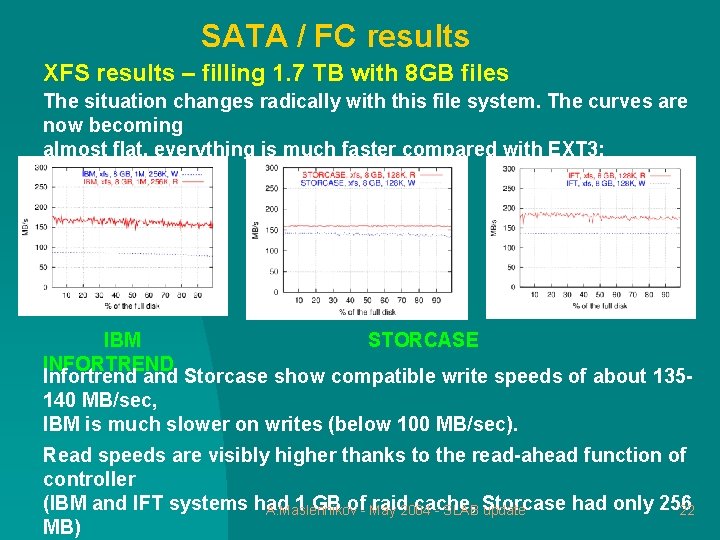

SATA / FC results

XFS results – filling 1.7 TB with 8 GB files

The situation changes radically with this file system. The curves become almost flat, and everything is much faster than with EXT3 (plot labels: IBM, STORCASE, INFORTREND).

Infortrend and Storcase show comparable write speeds of about 135-140 MB/sec; IBM is much slower on writes (below 100 MB/sec). Read speeds are visibly higher thanks to the read-ahead function of the controller (IBM and IFT systems had 1 GB of RAID cache, Storcase had only 256 MB).

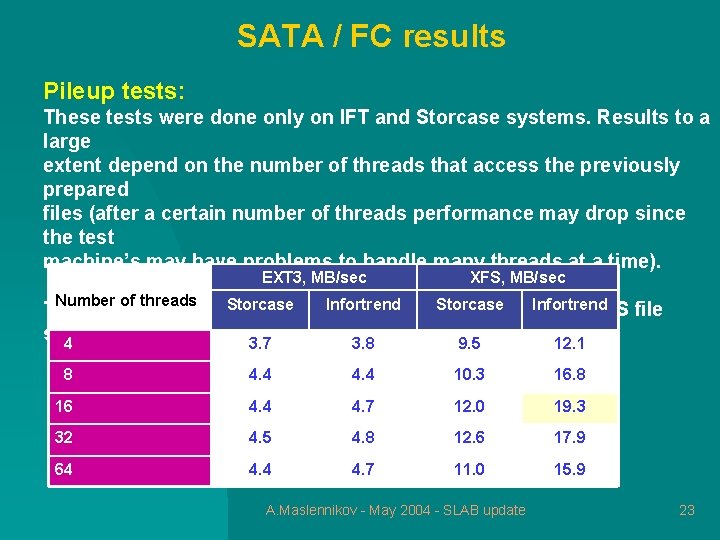

SATA / FC results

Pileup tests: These tests were done only on IFT and Storcase systems. Results depend to a large extent on the number of threads that access the previously prepared files (beyond a certain number of threads performance may drop, since the test machine may have problems handling many threads at a time). The best result was obtained with the Infortrend array for the XFS file system.

Number of threads, followed by the EXT3 and then the XFS results in MB/sec (Infortrend and Storcase columns for each file system):

- 4: 3.7 3.8 9.5 12.1
- 8: 4.4 10.3 16.8
- 16: 4.4 4.7 12.0 19.3
- 32: 4.5 4.8 12.6 17.9
- 64: 4.7 11.0 15.9

SATA / FC results

Operation in degraded mode: We tried it on a single Infortrend LUN of 5 HDs with EXT3. One of the disks was removed, and the rebuild process was started.

- The write speed went down from 105 to 91 MB/sec
- The read speed went down from 105 to 28 MB/sec and even less

SATA / FC results - conclusions

1) The recent low-cost SATA-to-FC disk arrays (Infortrend, Storcase) operate very well and are able to deliver excellent I/O speeds far exceeding that of Gigabit Ethernet. The cost of such systems may be as low as 2.5 USD per raw GB. The quality of these systems is dominated by the quality of the SATA disks.

2) The choice of local file system is fundamental. XFS easily outperforms EXT3. On one occasion we observed an XFS hang under a very heavy load. "xfs_repair" was run, and the error never reappeared. We are now planning to investigate this in depth. CASPUR AFS and NFS servers are all XFS-based, and there was only one XFS-related

SAN File Systems

SAN File Systems

SAN FS Placement

These advanced distributed file systems allow clients to operate directly with block devices (block-level file access). Metadata traffic: via GigE. Required: Storage Area Network.

Current cost of a single fibre channel connection > 1000 USD:

- Switch port, min ~500 USD including GBIC
- Host Bus Adapter, min ~800 USD

Special discounts for massive purchases are not impossible, but it is very hard to imagine that the cost of a connection will drop below 600-700 USD in the near future.

SAN FS with native fibre channel connection is still not an option for large farms. SAN FS with iSCSI connection
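The per-connection cost argument on this slide scales linearly with the number of hosts. A rough sketch of that arithmetic, using the minimum prices quoted above; the farm size of 500 nodes is purely hypothetical and the function name is illustrative.

```python
SWITCH_PORT_USD = 500  # minimum per-port cost including GBIC, as quoted on the slide
HBA_USD = 800          # minimum host bus adapter cost, as quoted on the slide


def fc_connection_cost(n_hosts: int,
                       port_usd: float = SWITCH_PORT_USD,
                       hba_usd: float = HBA_USD) -> float:
    """Total cost of attaching n_hosts to the SAN, one switch port and one HBA each."""
    return n_hosts * (port_usd + hba_usd)


print(fc_connection_cost(1))    # a single connection
print(fc_connection_cost(500))  # a hypothetical 500-node farm
```

Under these assumptions a single connection comes to 1300 USD, which matches the "> 1000 USD" figure, and the connection cost alone for a large farm quickly reaches several hundred thousand USD.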

SAN File Systems

Where SAN File Systems with FC connection may be used:

1) High Performance Computing – fast parallel I/O, faster sequential I/O
2) Hybrid SAN / NAS systems: relatively small number of SAN clients acting as (also redundant) NAS servers
3) HA Clusters with file locking: Mail (shared pool), Web etc.

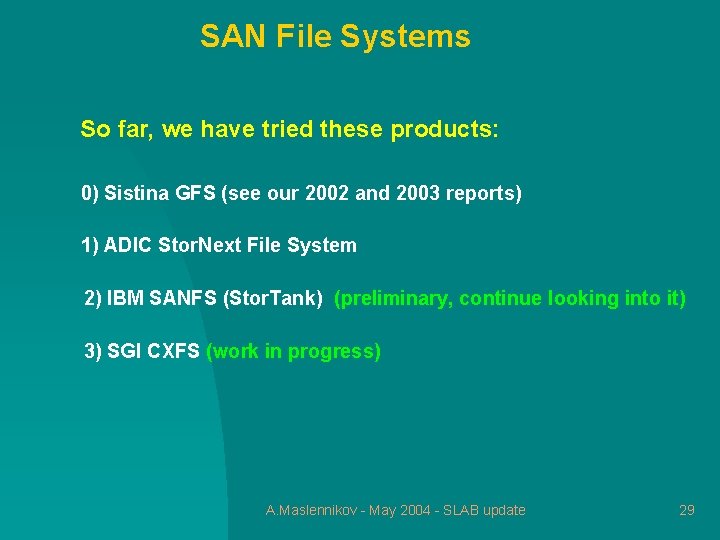

SAN File Systems

So far, we have tried these products:

0) Sistina GFS (see our 2002 and 2003 reports)
1) ADIC StorNext File System
2) IBM SANFS (StorTank) (preliminary, continue looking into it)
3) SGI CXFS (work in progress)

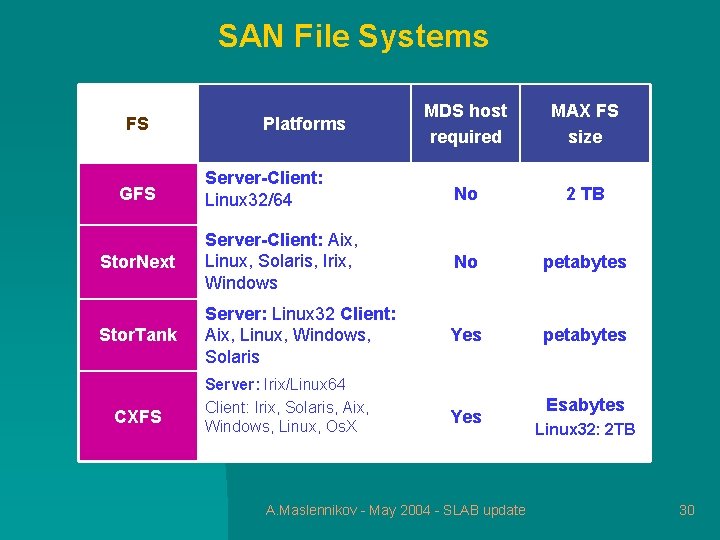

SAN File Systems

| FS | Platforms | MDS host required | MAX FS size |
|---|---|---|---|
| GFS | Server-Client: Linux 32/64 | No | 2 TB |
| StorNext | Server-Client: Aix, Linux, Solaris, Irix, Windows | No | petabytes |
| StorTank | Server: Linux 32; Client: Aix, Linux, Windows, Solaris | Yes | petabytes |
| CXFS | Server: Irix/Linux 64; Client: Irix, Solaris, Aix, Windows, Linux, OsX | Yes | exabytes (Linux 32: 2 TB) |

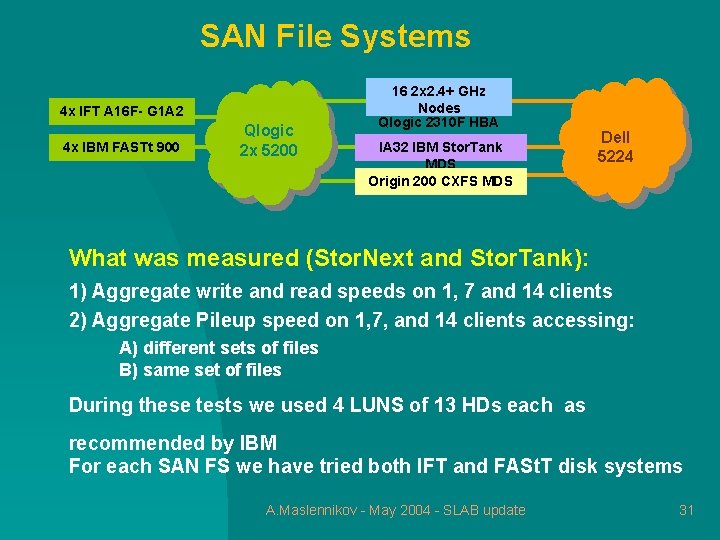

SAN File Systems

Setup diagram labels: 4 x IFT A16F-G1A2; 4 x IBM FAStT900; 2 x Qlogic 5200; 16 nodes (2 x 2.4+ GHz) with Qlogic 2310F HBAs; IA32 IBM StorTank MDS; Origin 200 CXFS MDS; Dell 5224

What was measured (StorNext and StorTank):

1) Aggregate write and read speeds on 1, 7 and 14 clients
2) Aggregate Pileup speed on 1, 7 and 14 clients accessing: A) different sets of files; B) the same set of files

During these tests we used 4 LUNs of 13 HDs each, as recommended by IBM. For each SAN FS we tried both IFT and FAStT disk systems.

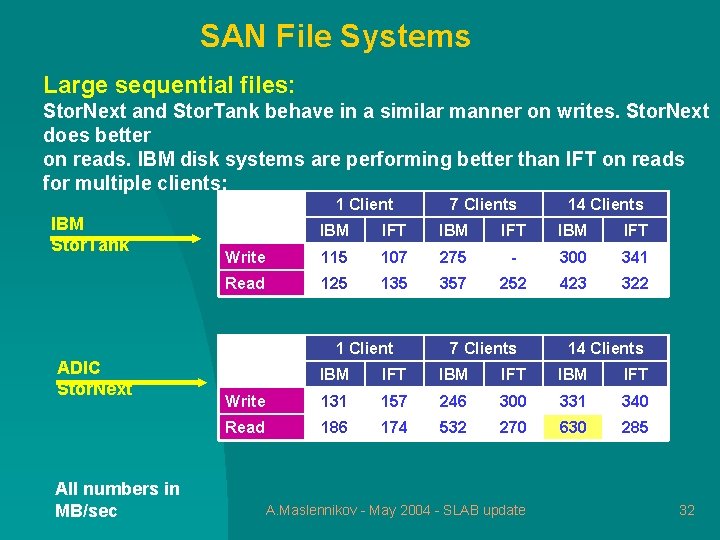

SAN File Systems

Large sequential files: StorNext and StorTank behave in a similar manner on writes. StorNext does better on reads. IBM disk systems perform better than IFT on reads for multiple clients.

All numbers in MB/sec:

| File system | Operation | 1 client, IBM | 1 client, IFT | 7 clients, IBM | 7 clients, IFT | 14 clients, IBM | 14 clients, IFT |
|---|---|---|---|---|---|---|---|
| IBM StorTank | Write | 115 | 107 | 275 | - | 300 | 341 |
| IBM StorTank | Read | 125 | 135 | 357 | 252 | 423 | 322 |
| ADIC StorNext | Write | 131 | 157 | 246 | 300 | 331 | 340 |
| ADIC StorNext | Read | 186 | 174 | 532 | 270 | 630 | 285 |
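One way to read the aggregate figures on this slide is to convert them into per-client throughput and into a scaling efficiency relative to the single-client result. The sketch below does this for the StorNext write numbers on the IFT arrays (157, 300 and 340 MB/sec for 1, 7 and 14 clients); the two metrics and their names are an illustration, not something defined in the slides.

```python
def per_client(aggregate_mb_s: float, n_clients: int) -> float:
    """Average throughput seen by each client."""
    return aggregate_mb_s / n_clients


def scaling_efficiency(aggregate_mb_s: float, n_clients: int, single_mb_s: float) -> float:
    """Aggregate throughput as a fraction of perfect linear scaling from one client."""
    return aggregate_mb_s / (n_clients * single_mb_s)


# StorNext on IFT arrays, sequential write, MB/sec (from the table on this slide)
stornext_ift_write = {1: 157, 7: 300, 14: 340}
single = stornext_ift_write[1]

for n, aggregate in stornext_ift_write.items():
    print(n, round(per_client(aggregate, n), 1),
          round(scaling_efficiency(aggregate, n, single), 2))
```

The efficiency falls well below 1 as clients are added, which is what one would expect if the aggregate is bounded by the disk hardware rather than by the clients.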

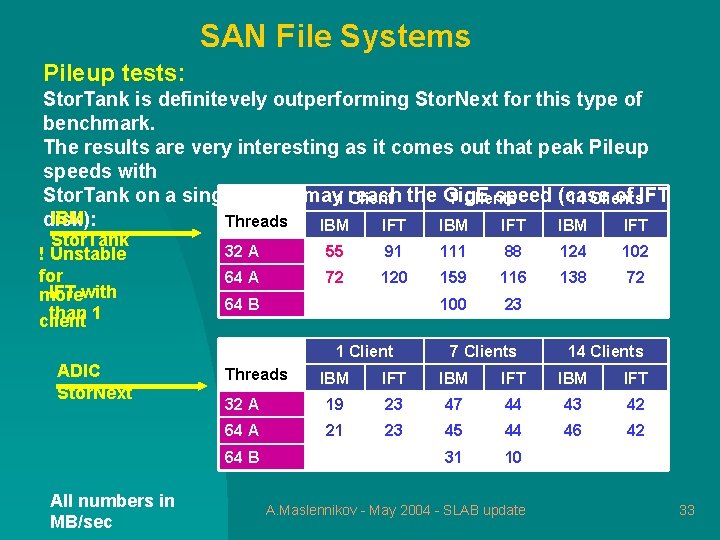

SAN File Systems

Pileup tests: StorTank definitely outperforms StorNext for this type of benchmark. The results are very interesting, as it turns out that peak Pileup speeds with StorTank on a single client may reach the GigE speed (case of IFT disk).

All numbers in MB/sec. A: different sets of files; B: same set of files.

| File system | Threads | 1 client, IBM | 1 client, IFT | 7 clients, IBM | 7 clients, IFT | 14 clients, IBM | 14 clients, IFT |
|---|---|---|---|---|---|---|---|
| IBM StorTank | 32 A | 55 | 91 | 111 | 88 | 124 | 102 |
| IBM StorTank | 64 A | 72 | 120 | 159 | 116 | 138 | 72 |
| ADIC StorNext | 32 A | 19 | 23 | 47 | 44 | 43 | 42 |
| ADIC StorNext | 64 A | 21 | 23 | 45 | 44 | 46 | 42 |

64 B results: 100 and 23 for StorTank, 31 and 10 for StorNext.

StorTank: unstable for IFT with more than 1 client!

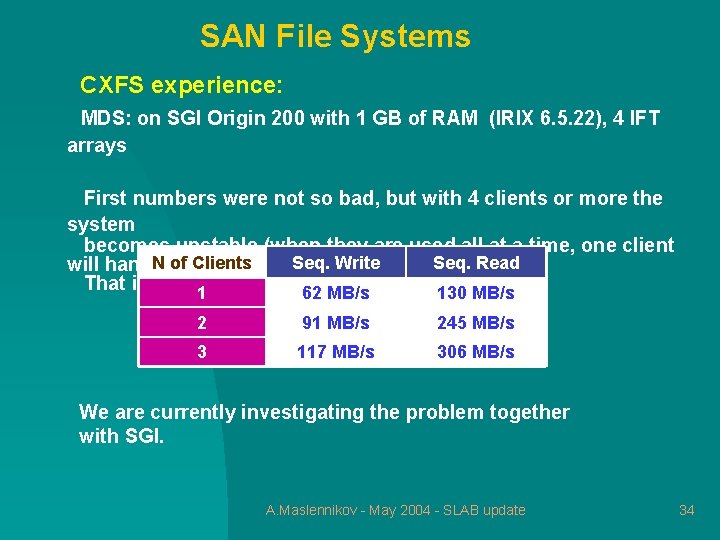

SAN File Systems

CXFS experience: MDS on SGI Origin 200 with 1 GB of RAM (IRIX 6.5.22), 4 IFT arrays.

The first numbers were not so bad, but with 4 clients or more the system becomes unstable (when they are all used at the same time, one client will hang). This is what we have observed so far:

| N of Clients | Seq. Write | Seq. Read |
|---|---|---|
| 1 | 130 MB/s | 62 MB/s |
| 2 | 91 MB/s | 245 MB/s |
| 3 | 117 MB/s | 306 MB/s |

We are currently investigating the problem together with SGI.

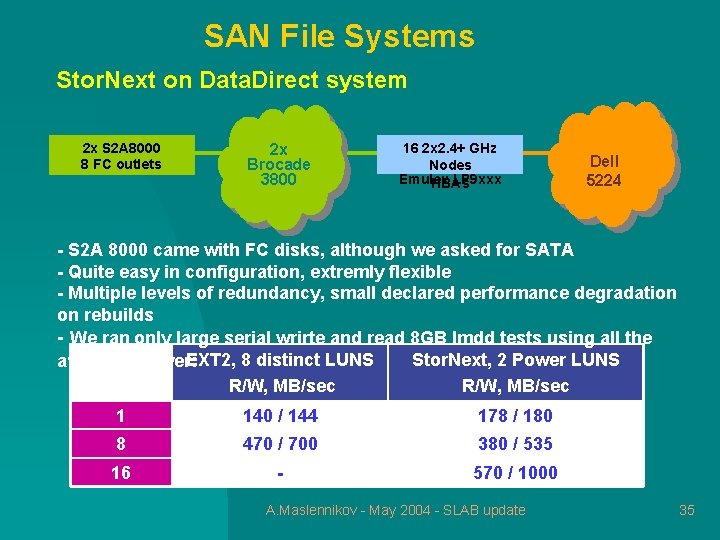

SAN File Systems

StorNext on DataDirect system

Setup diagram labels: 2 x S2A 8000, 8 FC outlets; 2 x Brocade 3800; 16 nodes (2 x 2.4+ GHz) with Emulex LP9xxx HBAs; Dell 5224

- S2A 8000 came with FC disks, although we asked for SATA
- Quite easy to configure, extremely flexible
- Multiple levels of redundancy, small declared performance degradation on rebuilds
- We ran only large serial write and read 8 GB lmdd tests, using all the available power:

| R/W, MB/sec | EXT2, 8 distinct LUNs | StorNext, 2 PowerLUNs |
|---|---|---|
| 1 | 140 / 144 | 178 / 180 |
| 8 | 470 / 700 | 380 / 535 |
| 16 | - | 570 / 1000 |

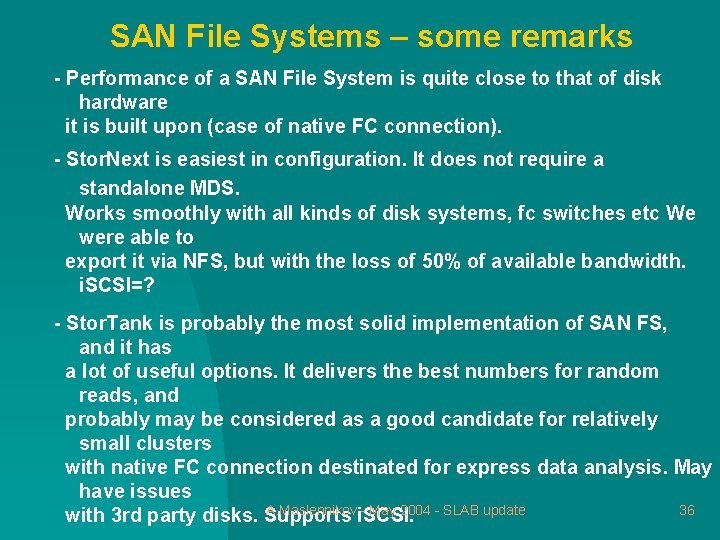

SAN File Systems – some remarks

- The performance of a SAN File System is quite close to that of the disk hardware it is built upon (case of native FC connection).
- StorNext is the easiest to configure. It does not require a standalone MDS. It works smoothly with all kinds of disk systems, FC switches etc. We were able to export it via NFS, but with a loss of 50% of the available bandwidth. iSCSI=?
- StorTank is probably the most solid implementation of SAN FS, and it has a lot of useful options. It delivers the best numbers for random reads, and may be considered a good candidate for relatively small clusters with native FC connection intended for express data analysis. May have issues with 3rd party disks. Supports iSCSI.

AFS Speedup

AFS speedup options

- AFS performance for large files is quite poor (max 35-40 MB/sec even on very fast hardware). To a large extent this is due to the limitations of the Rx RPC protocol, and to the suboptimal implementation of the file server.
- One possible workaround is to replace the Rx protocol with an alternative one in all cases where it is used for file serving. We were evaluating two such experimental implementations:

1) AFS with OSD support (Rainer Toebbicke). Rainer stores AFS data inside Object-based Storage Devices (OSDs), which do not necessarily have to reside inside the AFS File Servers. The OSD performs basic space management and access control and is

AFS speedup options

Both methods worked!

The AFS/OSD scheme was tested during the Fall 2003 test session; the tests were done with DataDirect's S2A 8000 system. In one particular test we were able to achieve 425 MB/sec write speed for both native EXT2 and AFS/OSD configurations.

The Reuter AFS was evaluated during the Spring 2004 session. The StorNext SAN File System was used to distribute a /vicepX partition among several clients. As in the previous case, AFS/Reuter performance was practically equal to the native performance of StorNext for large files.

To learn more on the DataDirect system and the Fall 2003 session,

Lustre!

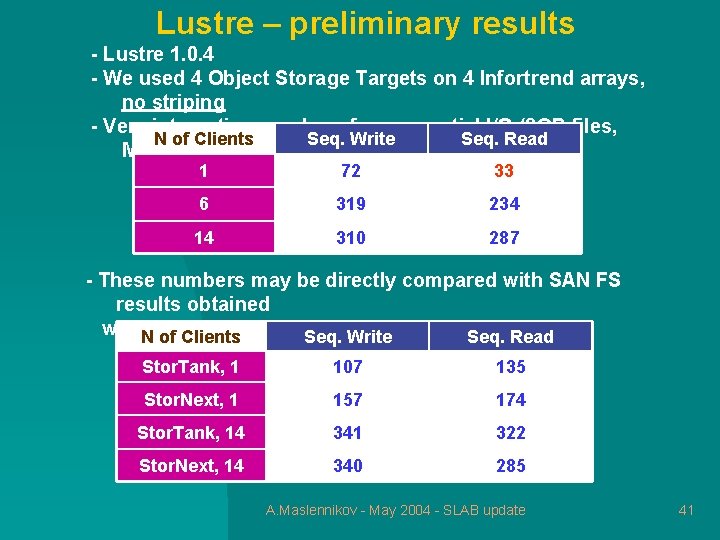

Lustre – preliminary results

- Lustre 1.0.4
- We used 4 Object Storage Targets on 4 Infortrend arrays, no striping
- Very interesting numbers for sequential I/O (8 GB files, MB/sec):

| N of Clients | Seq. Write | Seq. Read |
|---|---|---|
| 1 | 72 | 33 |
| 6 | 319 | 234 |
| 14 | 310 | 287 |

- These numbers may be directly compared with SAN FS results obtained with the same disk arrays:

| N of Clients | Seq. Write | Seq. Read |
|---|---|---|
| StorTank, 1 | 107 | 135 |
| StorNext, 1 | 157 | 174 |
| StorTank, 14 | 341 | 322 |
| StorNext, 14 | 340 | 285 |

LTO-2 Tape Drive

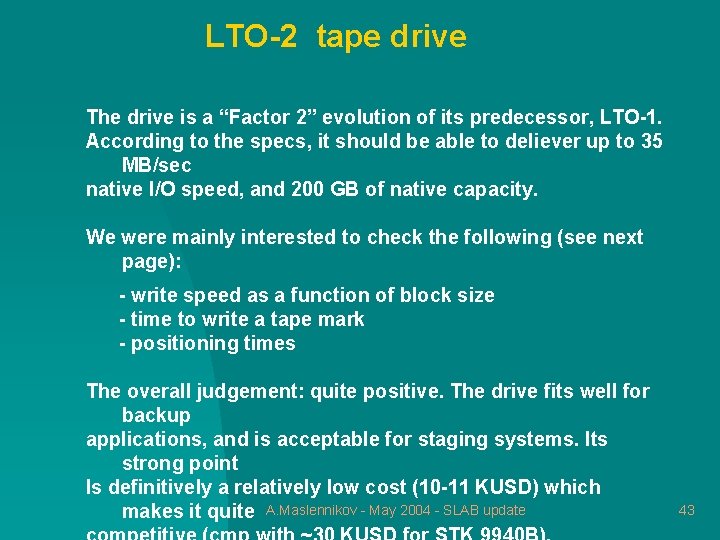

LTO-2 tape drive

The drive is a "Factor 2" evolution of its predecessor, LTO-1. According to the specs, it should be able to deliver up to 35 MB/sec native I/O speed, and 200 GB of native capacity.

We were mainly interested in checking the following (see next page):

- write speed as a function of block size
- time to write a tape mark
- positioning times

The overall judgement: quite positive. The drive fits well for backup applications, and is acceptable for staging systems. Its strong point is definitely a relatively low cost (10-11 KUSD) which makes it quite

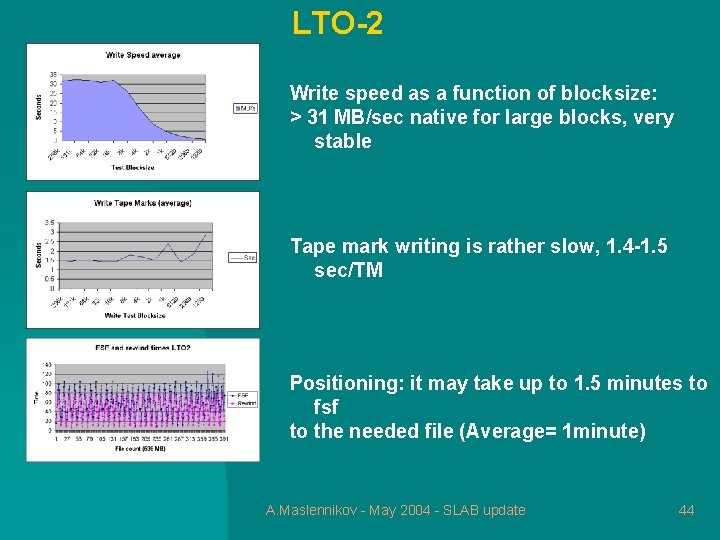

LTO-2

- Write speed as a function of blocksize: > 31 MB/sec native for large blocks, very stable
- Tape mark writing is rather slow, 1.4-1.5 sec/TM
- Positioning: it may take up to 1.5 minutes to fsf to the needed file (average = 1 minute)
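The slow tape mark matters mostly for small files. A simple model of the effective write rate can be sketched as follows, assuming the drive streams at 31 MB/sec between marks, one tape mark is written after every file, the mark takes 1.45 sec (the midpoint of the measured range), and there is no other overhead; the function name and the file sizes are illustrative.

```python
def effective_write_rate(file_mb: float,
                         stream_mb_s: float = 31.0,
                         tape_mark_s: float = 1.45) -> float:
    """Average MB/sec when each file of file_mb megabytes is followed by one tape mark."""
    return file_mb / (file_mb / stream_mb_s + tape_mark_s)


# Hypothetical file sizes in MB, chosen only to show the trend
for size_mb in (10, 100, 1000, 8000):
    print(size_mb, round(effective_write_rate(size_mb), 1))
```

Under these assumptions the tape mark overhead dominates for files of a few tens of MB and becomes negligible for files in the GB range.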

Final remarks

Our immediate plans include:

- Further investigation of StorTank, CXFS and yet another SAN file system (Veritas), including NFS export
- Evaluation of iSCSI-enabled SATA RAID arrays in combination with SAN file systems
- Further Lustre testing on IFT and IBM hardware (new version: 1.2, striping, other benchmarks)

Feel free to join us at any moment!
