
Adaptive History-Based Memory Schedulers

Ibrahim Hur and Calvin Lin
IBM Austin
The University of Texas at Austin

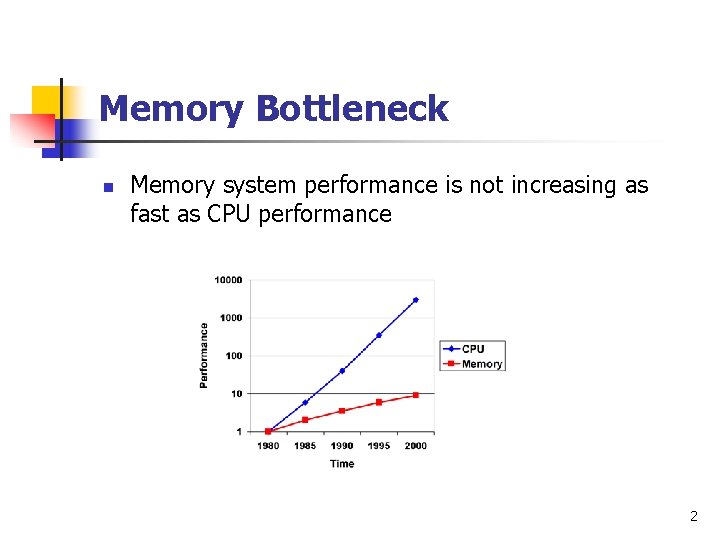

Memory Bottleneck

- Memory system performance is not increasing as fast as CPU performance
- Latency: use caches, prefetching, …
- Bandwidth: use parallelism inside the memory system

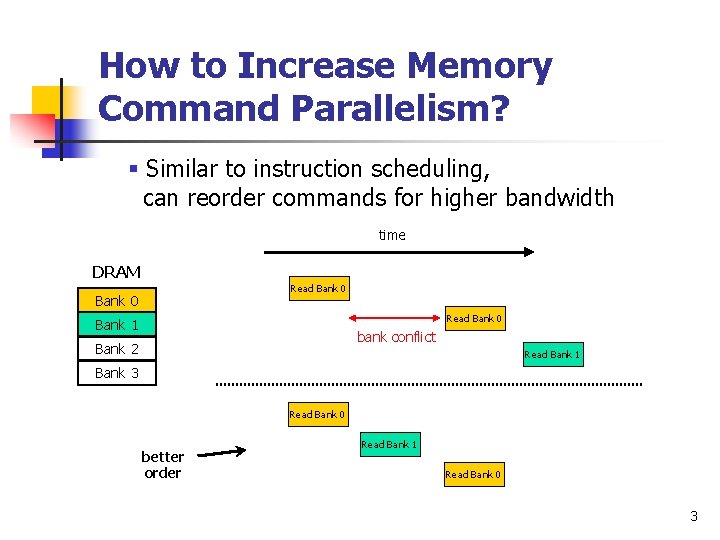

How to Increase Memory Command Parallelism?

- Similar to instruction scheduling, memory commands can be reordered for higher bandwidth

[Figure: timeline of reads to DRAM Banks 0 through 3. One command order incurs a bank conflict; a better order (Read Bank 1, then Read Bank 0) avoids it.]
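The effect of reordering can be illustrated with a toy timing model. This is only a sketch: the function name `finish_time`, the 4-cycle bank busy time, and the one-command-per-cycle issue rule are assumptions made for illustration, not Power5 or DDR2 timing parameters.

```python
def finish_time(order, bank_busy=4):
    """Return the cycle at which the last command completes.

    Toy model (an assumption, not real DRAM timing): one command issues per
    cycle, in order, and each access keeps its bank busy for `bank_busy` cycles.
    """
    free_at = {}  # bank -> first cycle at which that bank can start a new access
    next_issue = 0
    done = 0
    for bank in order:
        start = max(next_issue, free_at.get(bank, 0))  # stall while the bank is busy
        free_at[bank] = start + bank_busy
        done = max(done, free_at[bank])
        next_issue = start + 1  # in-order issue: later commands wait behind this one
    return done


conflicting = [0, 0, 1, 1]  # back-to-back reads to the same bank
interleaved = [0, 1, 0, 1]  # the same reads, reordered across banks
print(finish_time(conflicting), finish_time(interleaved))  # 13 9
```

In this model the same four reads finish in 9 cycles instead of 13 once accesses to the two banks overlap.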

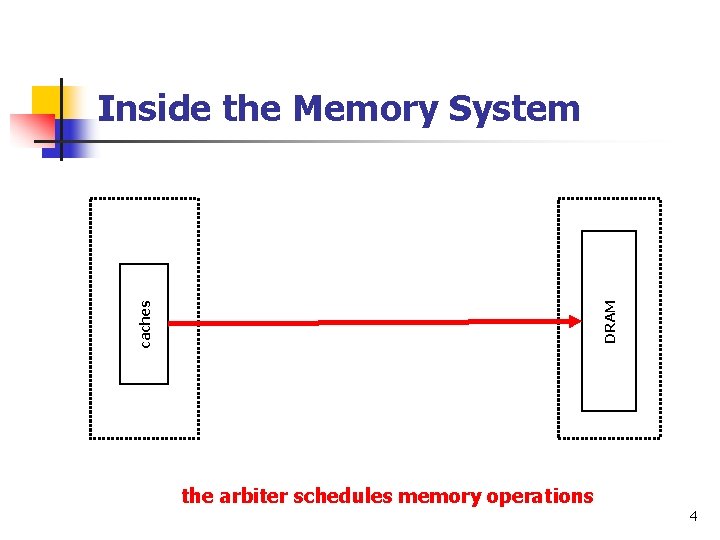

Inside the Memory System

[Figure: the caches feed the Read Queue and Write Queue (not FIFO) inside the Memory Controller; the arbiter moves commands from these queues into the Memory Queue (FIFO), which feeds DRAM.]

- The arbiter schedules memory operations

Our Work

- Study memory command scheduling in the context of the IBM Power5
- Present new memory arbiters
  - 20% increased bandwidth
  - Very little cost: 0.04% increase in chip area

Outline

- The Problem
  - Characteristics of DRAM
  - Previous Scheduling Methods
- Our approach
  - History-based schedulers
  - Adaptive history-based schedulers
- Results
- Conclusions

Understanding the Problem: Characteristics of DRAM

- Multi-dimensional structure
  - Banks, rows, and columns
  - IBM Power5: ranks and ports as well
- Access time is not uniform
  - Bank-to-bank conflicts
  - Read after Write to the same rank conflict
  - Write after Read to different port conflict
  - …

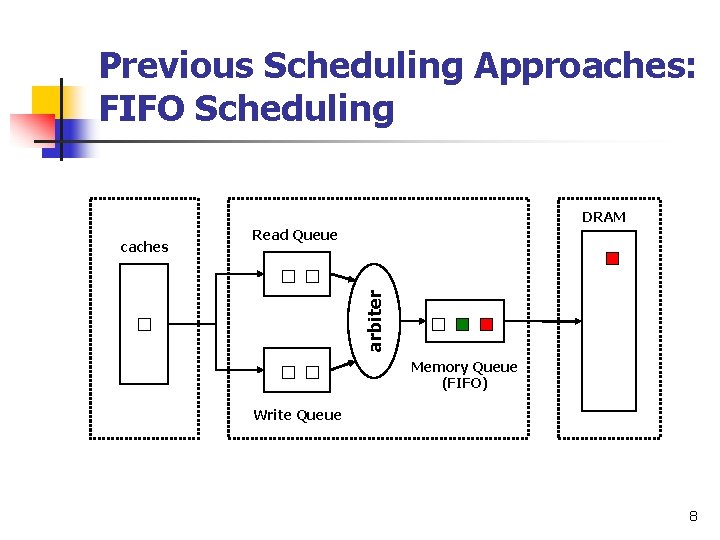

Previous Scheduling Approaches: FIFO Scheduling

[Figure: caches, Read Queue and Write Queue, arbiter, Memory Queue (FIFO), DRAM.]

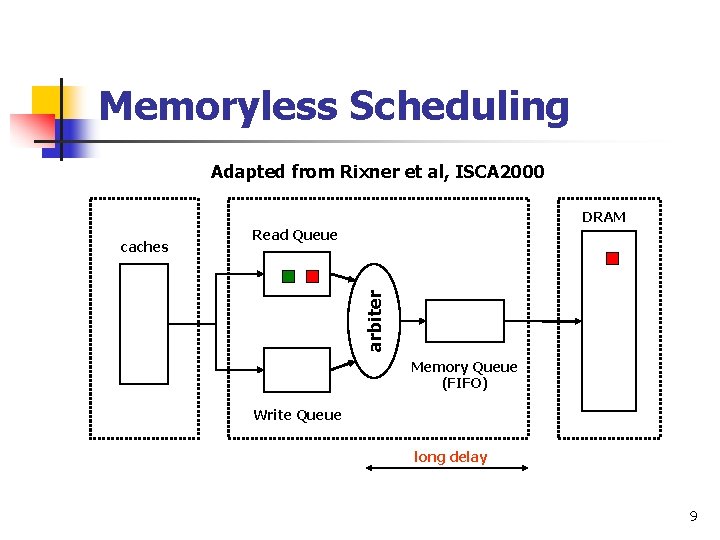

Memoryless Scheduling

Adapted from Rixner et al., ISCA 2000

[Figure: caches, Read Queue and Write Queue, arbiter, Memory Queue (FIFO), DRAM; the diagram is annotated with "long delay".]

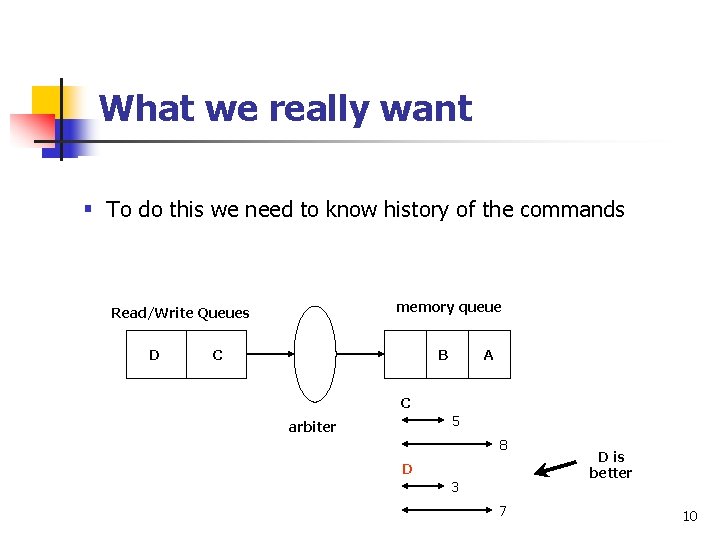

What we really want

- Keep the pipeline full; don't hold commands in the reorder queues until conflicts are totally resolved
- Forward them to the memory queue in an order that minimizes future conflicts
- To do this, we need to know the history of the commands

[Figure: Read/Write Queues, arbiter, and memory queue holding commands A through D; of the candidate commands C and D, D is better.]

Another Goal: Match Application's Memory Command Behavior

- The arbiter should select commands from the queues roughly in the ratio in which the application generates them
- Otherwise, the read or write queue may become congested
- Command history is useful here too

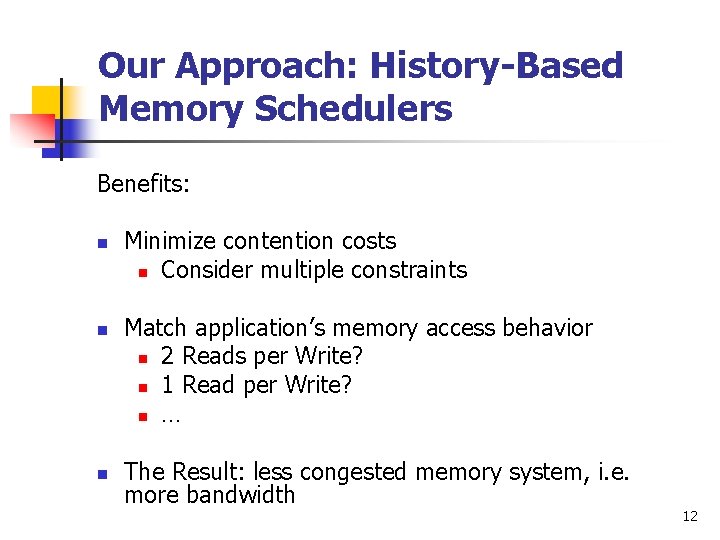

Our Approach: History-Based Memory Schedulers

Benefits:

- Minimize contention costs
  - Consider multiple constraints
- Match application's memory access behavior
  - 2 Reads per Write?
  - 1 Read per Write?
  - …

The Result: a less congested memory system, i.e., more bandwidth

How does it work?

- Use a Finite State Machine (FSM)
- Each state in the FSM represents one possible history
- Transitions out of a state are prioritized
- At any state, the scheduler selects the available command with the highest priority
- The FSM is generated at design time
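The FSM described above can be sketched in a few lines. Everything specific here is an assumption for illustration: the class name `HistoryArbiter`, the two command kinds `"R"` and `"W"`, the history length of 2, and the preference table `PREFS`, which is hand-written rather than generated the way the paper generates it.

```python
from collections import deque


class HistoryArbiter:
    """Each state is the tuple of the most recent commands sent to memory."""

    def __init__(self, preferences, initial_history):
        self.preferences = preferences  # state -> command kinds, best first
        self.history = deque(initial_history, maxlen=len(initial_history))

    def select(self, available):
        """Pick the highest-priority available kind and move to the next state."""
        state = tuple(self.history)
        for kind in self.preferences[state]:
            if kind in available:
                self.history.append(kind)  # oldest entry drops out of the history
                return kind
        return None  # nothing to schedule; the state is unchanged


# Hypothetical table, fixed "at design time": prefer a write only after two reads.
PREFS = {
    ("R", "R"): ["W", "R"],
    ("R", "W"): ["R", "W"],
    ("W", "R"): ["R", "W"],
    ("W", "W"): ["R", "W"],
}

arbiter = HistoryArbiter(PREFS, ("R", "R"))
print([arbiter.select({"R", "W"}) for _ in range(4)])  # ['W', 'R', 'R', 'W']
```

When the preferred kind is not in the queues, the arbiter falls through to the next preference, so a command is issued whenever one is available.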

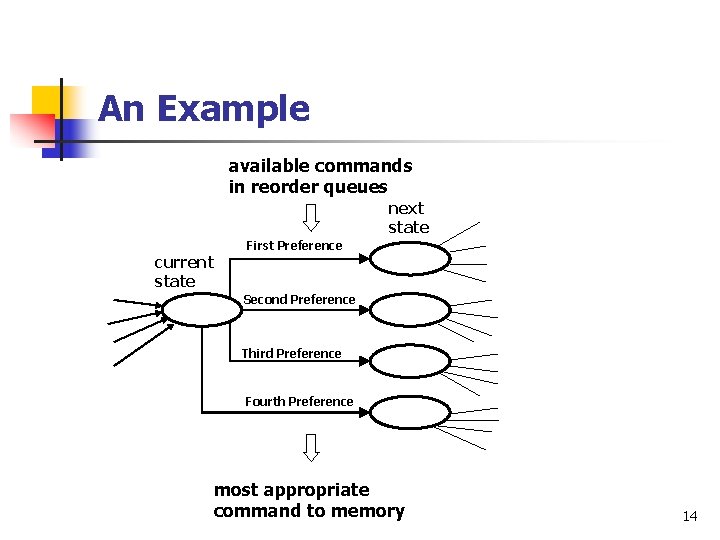

An Example

[Figure: FSM example. From the current state, the available commands in the reorder queues are checked against the first, second, third, and fourth preferences; the most appropriate command is sent to memory and determines the next state.]

How to determine priorities?

- Two criteria:
  - A: Minimize contention costs
  - B: Satisfy program's Read/Write command mix
- First Method: Use A, break ties with B
- Second Method: Use B, break ties with A
- Which method to use?
  - Combine the two methods probabilistically (details in the paper)
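The two tie-breaking methods amount to lexicographic sorts with the criteria swapped. The sketch below assumes hypothetical per-command scores (`cost` for criterion A, `mix_error` for criterion B, lower is better); the names and numbers are invented for illustration, and the probabilistic combination is not shown because the slides defer it to the paper.

```python
def rank(candidates, cost, mix_error, method):
    """Order candidates best-first under one of the two methods.

    method 1: sort by contention cost (A), break ties with mix error (B)
    method 2: sort by mix error (B), break ties with contention cost (A)
    """
    if method == 1:
        key = lambda c: (cost[c], mix_error[c])
    else:
        key = lambda c: (mix_error[c], cost[c])
    return sorted(candidates, key=key)


# Hypothetical scores for four candidate commands.
cost = {"R0": 1, "R1": 3, "W0": 1, "W1": 2}
mix_error = {"R0": 2, "R1": 2, "W0": 1, "W1": 1}

print(rank(cost, cost, mix_error, method=1))  # ['W0', 'R0', 'W1', 'R1']
print(rank(cost, cost, mix_error, method=2))  # ['W0', 'W1', 'R0', 'R1']
```

The two methods agree on the first choice here but diverge on the second, which is where the choice of method starts to matter.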

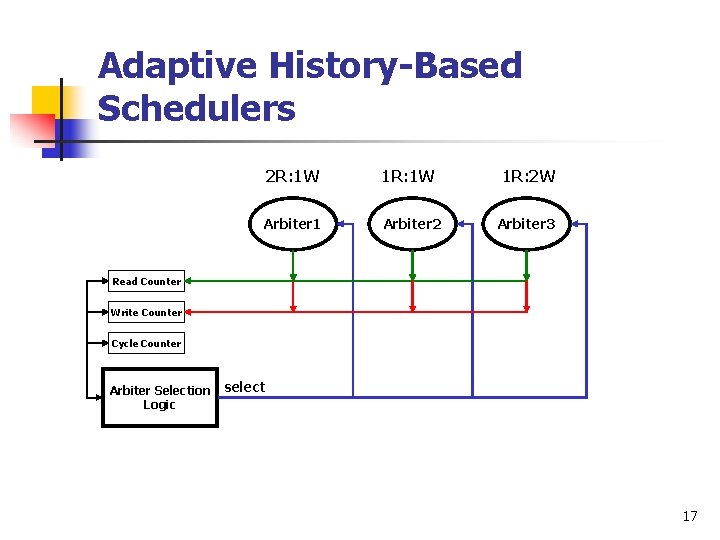

Limitation of the History-Based Approach

- Designed for one particular mix of Reads/Writes
- Solution: Adaptive History-Based Schedulers
  - Create multiple state machines: one for each Read/Write mix
  - Periodically select the most appropriate state machine

Adaptive History-Based Schedulers

[Figure: three arbiters (Arbiter 1, Arbiter 2, Arbiter 3), with labels 2R:1W and 1R:2W; the Arbiter Selection Logic reads the Read Counter, Write Counter, and Cycle Counter and drives a select signal.]
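A minimal sketch of the selection logic follows, assuming a nearest-ratio rule: at the end of each epoch, pick the arbiter whose target read fraction is closest to the observed one. The class name `ArbiterSelector`, the epoch length, the nearest-ratio rule, and the middle `1R:1W` arbiter are assumptions for illustration; the slides only name the three counters and the select signal.

```python
class ArbiterSelector:
    """Counts reads, writes, and cycles; reselects an arbiter once per epoch."""

    def __init__(self, targets, epoch_cycles, initial):
        self.targets = targets  # arbiter name -> target fraction of reads
        self.epoch_cycles = epoch_cycles
        self.selected = initial
        self.reads = self.writes = self.cycles = 0

    def tick(self, reads=0, writes=0):
        """Advance one cycle and return the currently selected arbiter."""
        self.reads += reads
        self.writes += writes
        self.cycles += 1
        if self.cycles == self.epoch_cycles:
            total = self.reads + self.writes
            if total:  # keep the old choice if the epoch saw no commands
                observed = self.reads / total
                self.selected = min(
                    self.targets, key=lambda name: abs(self.targets[name] - observed)
                )
            self.reads = self.writes = self.cycles = 0
        return self.selected


# Hypothetical arbiter set; "1R:1W" is assumed, not read from the figure.
TARGETS = {"2R:1W": 2 / 3, "1R:1W": 1 / 2, "1R:2W": 1 / 3}
selector = ArbiterSelector(TARGETS, epoch_cycles=4, initial="1R:1W")
for reads, writes in [(1, 0), (1, 0), (1, 0), (0, 1)]:
    choice = selector.tick(reads, writes)
print(choice)  # 2R:1W
```

Resetting the counters at each epoch boundary lets the selection track phase changes in the application's read/write mix.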

Evaluation

- Used a cycle-accurate simulator for the IBM Power5
  - 1.6 GHz, 266-DDR2, 4-rank, 4-bank, 2-port
- Evaluated and compared our approach with previous approaches using data-intensive applications: Stream, NAS, and microbenchmarks

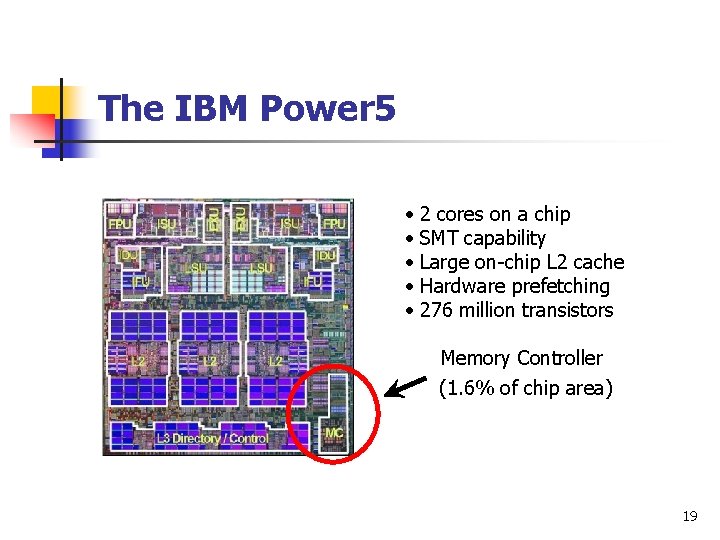

The IBM Power5

- 2 cores on a chip
- SMT capability
- Large on-chip L2 cache
- Hardware prefetching
- 276 million transistors

[Figure label: Memory Controller (1.6% of chip area)]

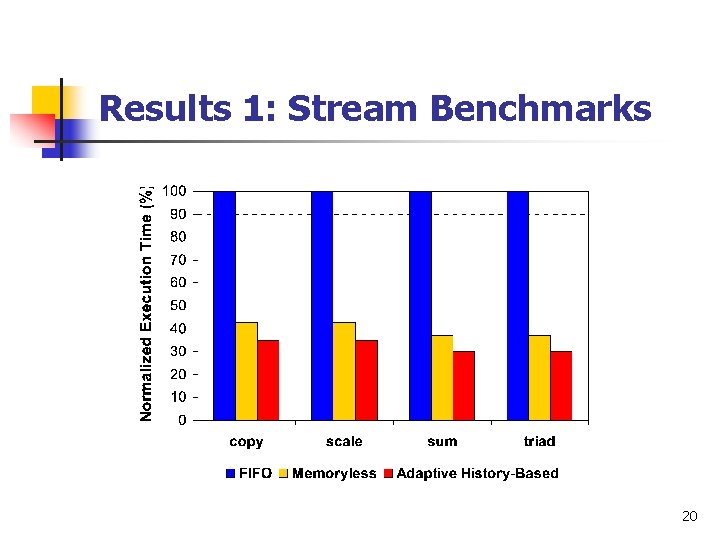

Results 1: Stream Benchmarks

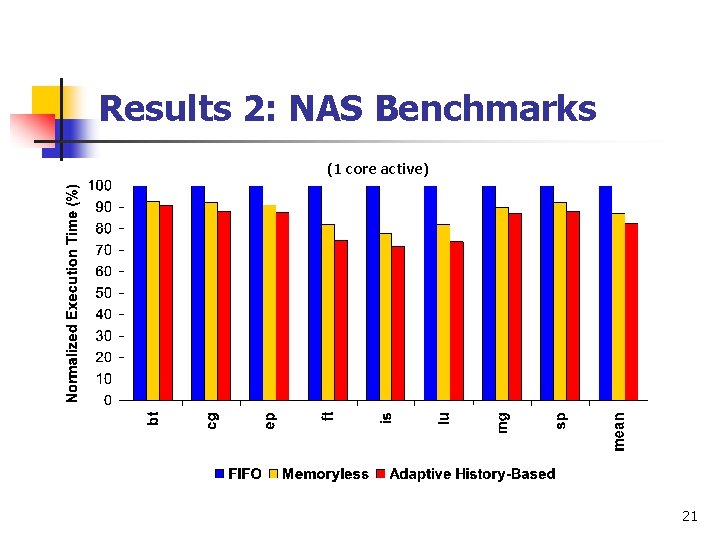

Results 2: NAS Benchmarks (1 core active)

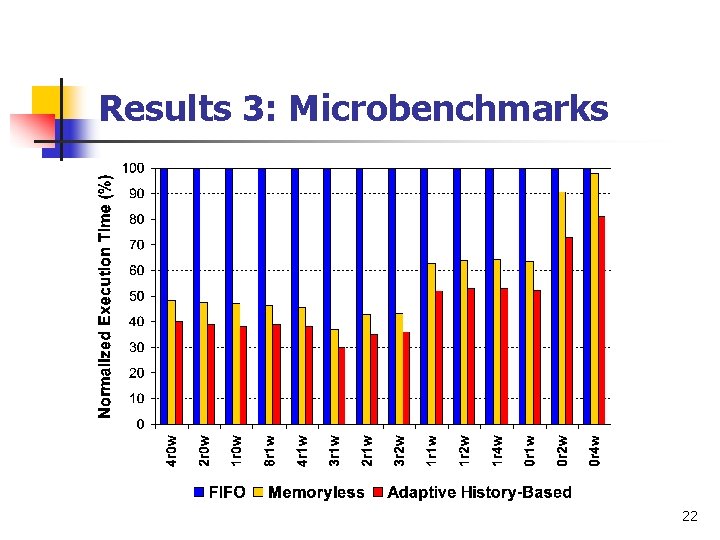

Results 3: Microbenchmarks

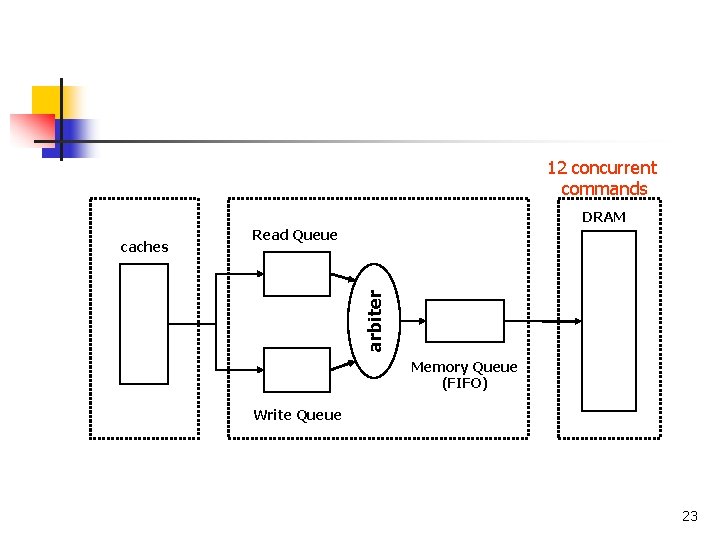

12 concurrent commands

[Figure: caches, Read Queue and Write Queue, arbiter, Memory Queue (FIFO), DRAM.]

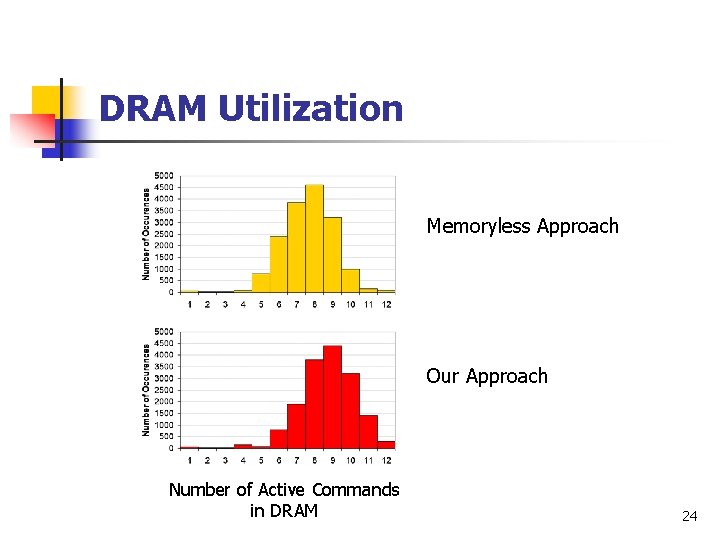

DRAM Utilization

[Figure: two plots, Memoryless Approach and Our Approach; axis label: Number of Active Commands in DRAM.]

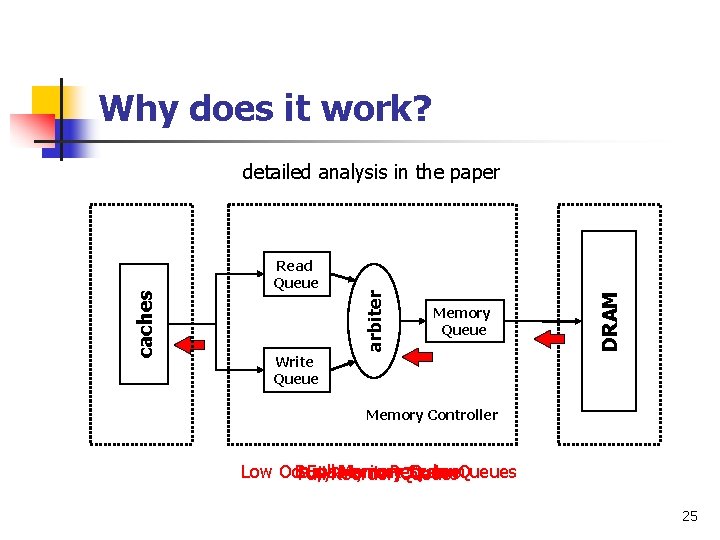

Why does it work?

[Figure: caches, Read Queue and Write Queue, arbiter, Memory Queue, and DRAM inside the Memory Controller. Recoverable annotations: Low Occupancy in Reorder Queues, Busy Memory System, Full Reorder Queues.]

- Detailed analysis in the paper

Other Results

- We obtain >95% of the performance of the perfect DRAM configuration (no conflicts)
- Results with higher frequency and with no data prefetching are in the paper
- History size of 2 works well

Conclusions

- Introduced adaptive history-based schedulers
- Evaluated on a highly tuned system, IBM Power5
- Performance improvement
  - Over FIFO: Stream 63%, NAS 11%
  - Over Memoryless: Stream 19%, NAS 5%
- Little cost: 0.04% chip area increase

Conclusions (cont.)

- Similar arbiters can be used in other places as well, e.g., cache controllers
- Can optimize for other criteria, e.g., power or power+performance

Thank you
