
ACCURACY OF MEASUREMENT VALIDITY AND RELIABILITY

ACCURACY OF MEASUREMENT
Having dealt with many sources of measurement error, you may wish to know how successful you have been in eliminating or reducing it. How would you assess the accuracy of a measurement instrument? For example, …
QUESTION: How would you decide whether your bathroom scale is operating accurately (free of measurement error)?

ACCURACY OF MEASUREMENT
There are two yardsticks against which we judge the accuracy/precision of a measurement instrument or procedure and its relative success in measuring a variable: validity and reliability.

ACCURACY OF MEASUREMENT
Validity? The degree to which a measurement instrument actually measures what it is supposed/designed to measure.
Reliability? The degree of dependability, stability, consistency, and predictability of a measurement instrument.

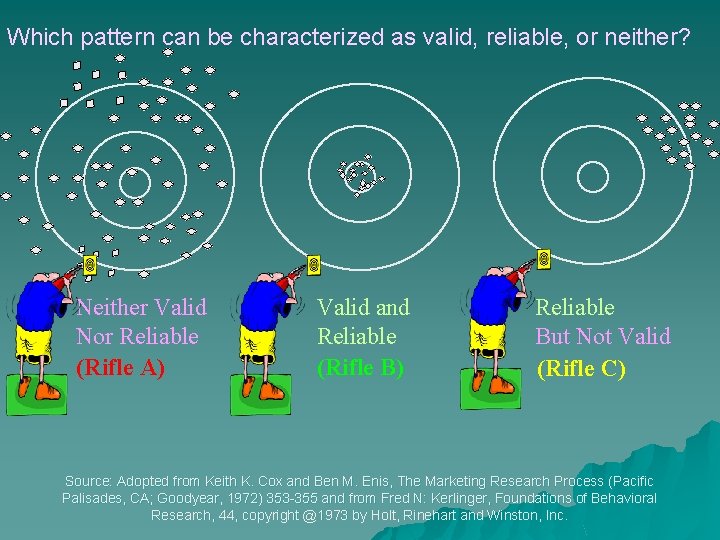

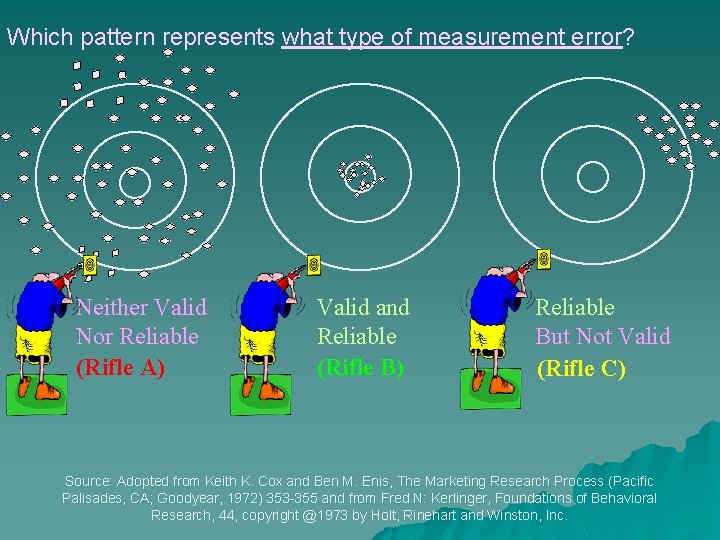

Which pattern can be characterized as valid, reliable, or neither? Rifle A: neither valid nor reliable. Rifle B: valid and reliable. Rifle C: reliable but not valid.
Source: Adapted from Keith K. Cox and Ben M. Enis, The Marketing Research Process (Pacific Palisades, CA: Goodyear, 1972), pp. 353-355, and from Fred N. Kerlinger, Foundations of Behavioral Research, p. 44, copyright © 1973 by Holt, Rinehart and Winston, Inc.

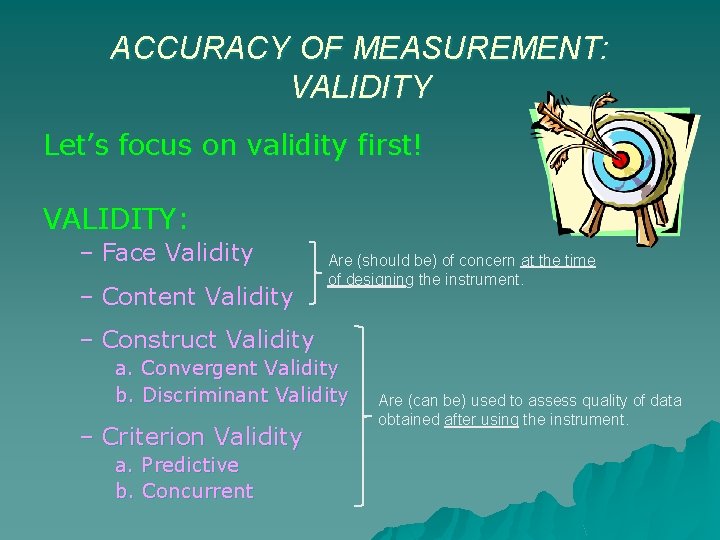

ACCURACY OF MEASUREMENT: VALIDITY
Let's focus on validity first!
VALIDITY:
– Face Validity
– Content Validity
   These are (should be) of concern at the time of designing the instrument.
– Construct Validity
   a. Convergent Validity
   b. Discriminant Validity
– Criterion Validity
   a. Predictive
   b. Concurrent
   These are (can be) used to assess the quality of data obtained after using the instrument.

ACCURACY OF MEASUREMENT: FACE VALIDITY
• FACE VALIDITY:
– The most subjective of all types of validity.
– The measurement instrument is intuitively judged for its presumed relevance to the attribute being measured.

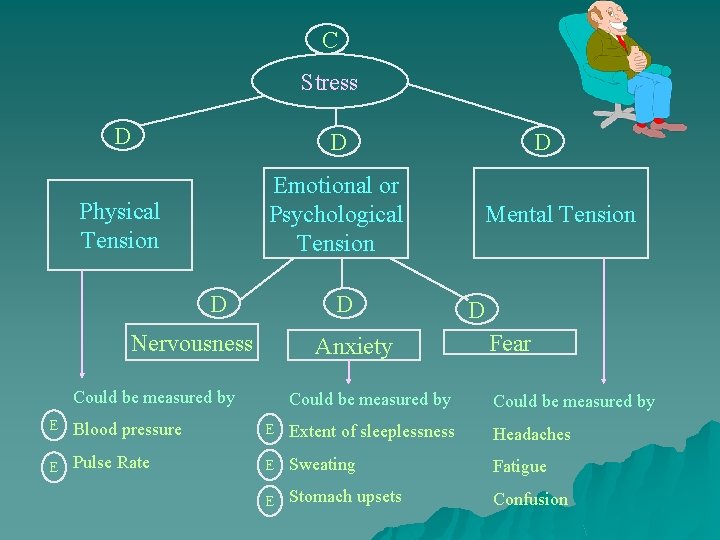

ACCURACY OF MEASUREMENT: CONTENT VALIDITY
• CONTENT VALIDITY:
– Many constructs represent complex, abstract, and elusive qualities that cannot be directly observed or measured but have to be inferred from their multiple indicators.
– Content validity is concerned with whether or not the measurement instrument contains a fair sampling of the construct's content domain, i.e., the universe of issues it is supposed to represent.
Examples: a course exam; stress or anxiety. What are some of the symptoms of stress? (See next slide…)

Figure (C = concept, D = dimension, E = element): the concept stress (C) has dimensions (D) such as physical tension, emotional or psychological tension, nervousness, anxiety, mental tension, and fear, which could be measured by elements (E) such as blood pressure, pulse rate, sweating, stomach upsets, extent of sleeplessness, headaches, fatigue, and confusion.

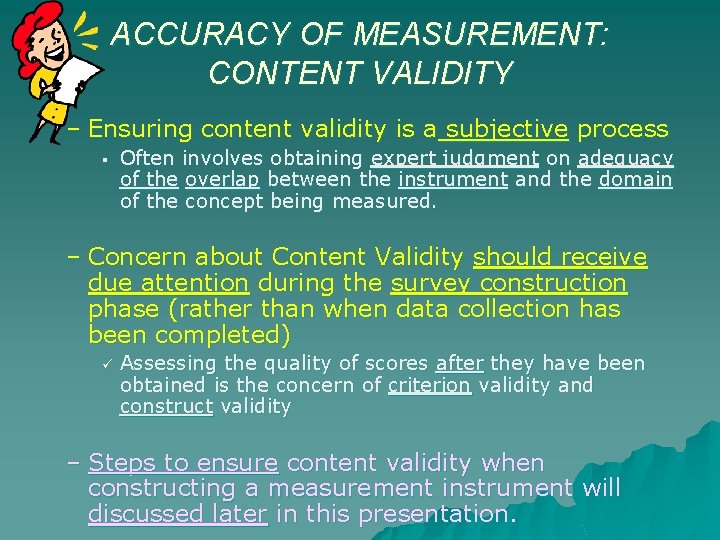

ACCURACY OF MEASUREMENT: CONTENT VALIDITY
– Ensuring content validity is a subjective process.
   § It often involves obtaining expert judgment on the adequacy of the overlap between the instrument and the domain of the concept being measured.
– Content validity should receive due attention during the survey construction phase, rather than after data collection has been completed. Assessing the quality of scores after they have been obtained is the concern of criterion validity and construct validity.
– Steps to ensure content validity when constructing a measurement instrument will be discussed later in this presentation.

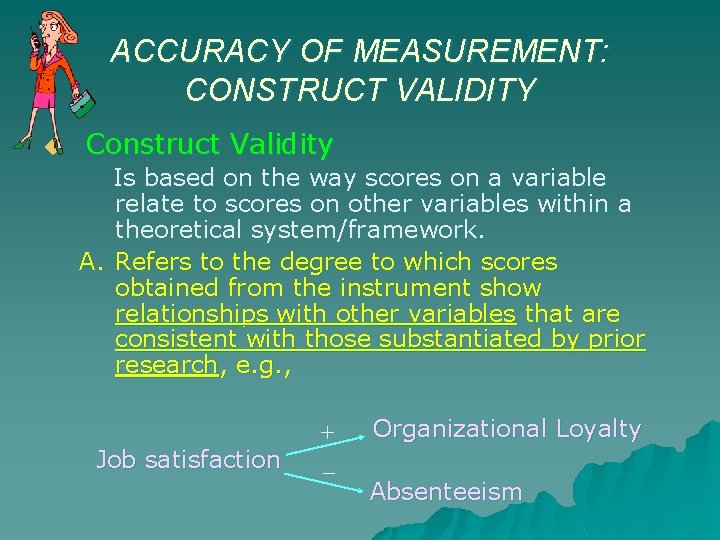

ACCURACY OF MEASUREMENT: CONSTRUCT VALIDITY
• Construct validity is based on the way scores on a variable relate to scores on other variables within a theoretical system/framework.
A. It refers to the degree to which scores obtained from the instrument show relationships with other variables that are consistent with those substantiated by prior research. For example, job satisfaction should relate positively (+) to organizational loyalty and negatively (-) to absenteeism.

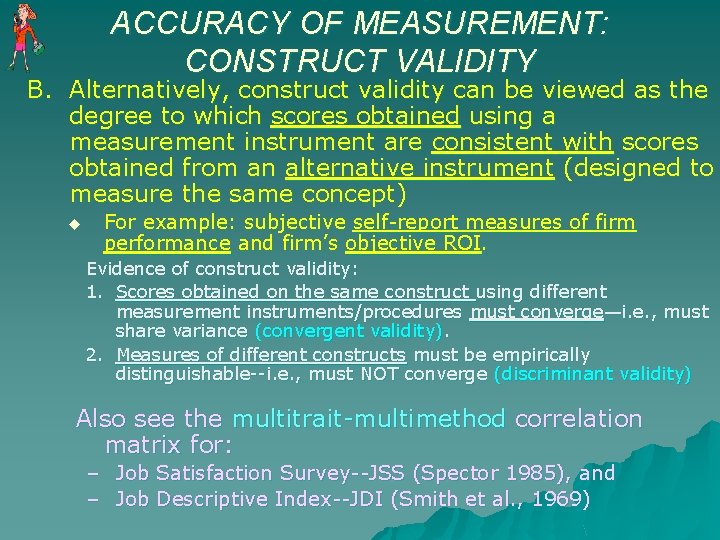

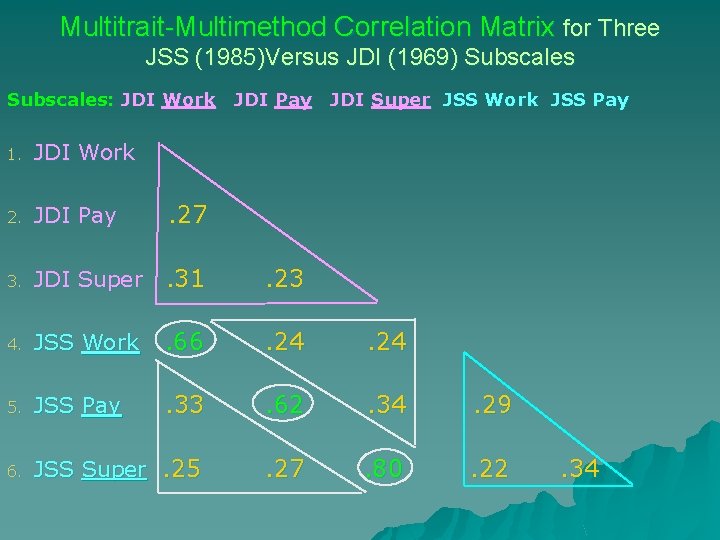

ACCURACY OF MEASUREMENT: CONSTRUCT VALIDITY
B. Alternatively, construct validity can be viewed as the degree to which scores obtained using a measurement instrument are consistent with scores obtained from an alternative instrument designed to measure the same concept.
• For example: subjective self-report measures of firm performance and the firm's objective ROI.
Evidence of construct validity:
1. Scores obtained on the same construct using different measurement instruments/procedures must converge, i.e., must share variance (convergent validity).
2. Measures of different constructs must be empirically distinguishable, i.e., must NOT converge (discriminant validity).
Also see the multitrait-multimethod correlation matrix for:
– Job Satisfaction Survey (JSS; Spector, 1985), and
– Job Descriptive Index (JDI; Smith et al., 1969)

Multitrait-Multimethod Correlation Matrix for Three JSS (1985) Versus JDI (1969) Subscales

| | 1. JDI Work | 2. JDI Pay | 3. JDI Super | 4. JSS Work | 5. JSS Pay |
|---|---|---|---|---|---|
| 1. JDI Work | | | | | |
| 2. JDI Pay | .27 | | | | |
| 3. JDI Super | .31 | .23 | | | |
| 4. JSS Work | .66 | .24 | | | |
| 5. JSS Pay | .33 | .62 | .34 | .29 | |
| 6. JSS Super | .25 | .27 | .80 | .22 | .34 |
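A simplified reading of this matrix can be scripted: each convergent correlation (same trait, different instrument) should exceed the correlations between different traits. The sketch uses only cells whose position is unambiguous in the matrix (the JSS Work row is incomplete in the source, so its cross-instrument values are left out). The function name `mtmm_check` and its single "ceiling" rule are illustrative simplifications, not a formal multitrait-multimethod test.

```python
# Convergent correlations: same trait measured by JSS and by JDI.
convergent = {"Work": 0.66, "Pay": 0.62, "Supervision": 0.80}

# Different trait, different instrument (JSS Pay and JSS Super rows vs. JDI).
heterotrait_heteromethod = [0.33, 0.34, 0.25, 0.27]

# Different trait, same instrument (JDI vs. JDI, and JSS vs. JSS).
heterotrait_monomethod = [0.27, 0.31, 0.23, 0.29, 0.22, 0.34]


def mtmm_check(convergent, hetero_hetero, hetero_mono):
    """For each trait, report whether its convergent r exceeds every discriminant r."""
    ceiling = max(hetero_hetero + hetero_mono)
    return {trait: r > ceiling for trait, r in convergent.items()}


result = mtmm_check(convergent, heterotrait_heteromethod, heterotrait_monomethod)
print(result)
```

Here the convergent values (.62 to .80) clearly exceed the largest discriminant value (.34), which is the pattern the slide describes as evidence of both convergent and discriminant validity.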

ACCURACY OF MEASUREMENT: CRITERION VALIDITY
• Criterion validity: the degree to which scores obtained from an instrument can predict a related practical outcome (e.g., GMAT scores and academic performance in an MBA program: r = .48 with first-year GPA).
• Predictive validity: the prediction involves a future outcome (e.g., GMAT).
• Concurrent validity: the prediction involves a present outcome or state of affairs (e.g., a score on a political liberalism/conservatism scale predicting political party affiliation).

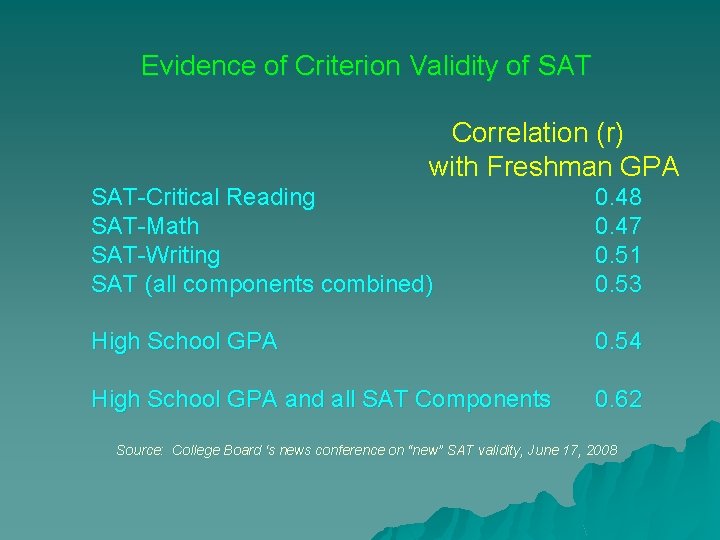

Evidence of Criterion Validity of the SAT

| Predictor | Correlation (r) with freshman GPA |
|---|---|
| SAT-Critical Reading | 0.48 |
| SAT-Math | 0.47 |
| SAT-Writing | 0.51 |
| SAT (all components combined) | 0.53 |
| High school GPA | 0.54 |
| High school GPA and all SAT components | 0.62 |

Source: College Board's news conference on the "new" SAT validity, June 17, 2008.

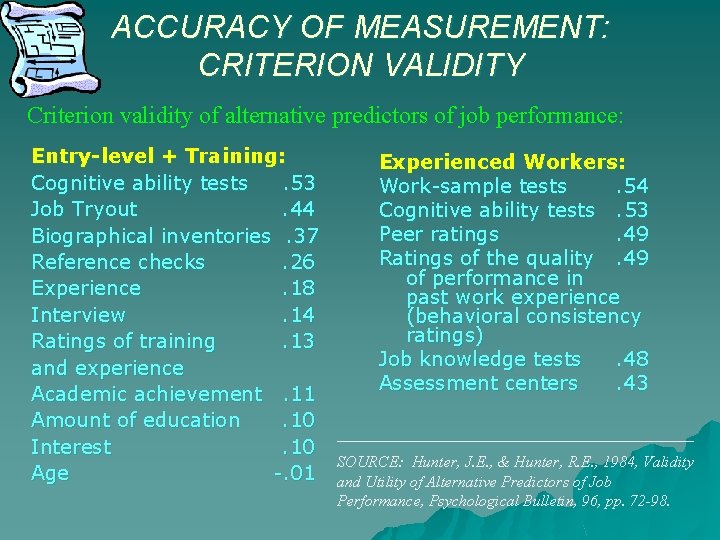

ACCURACY OF MEASUREMENT: CRITERION VALIDITY
Criterion validity of alternative predictors of job performance:

Entry-level + training:

| Predictor | Validity |
|---|---|
| Cognitive ability tests | .53 |
| Job tryout | .44 |
| Biographical inventories | .37 |
| Reference checks | .26 |
| Experience | .18 |
| Interview | .14 |
| Ratings of training and experience | .13 |
| Academic achievement | .11 |
| Amount of education | .10 |
| Interest | .10 |
| Age | -.01 |

Experienced workers:

| Predictor | Validity |
|---|---|
| Work-sample tests | .54 |
| Cognitive ability tests | .53 |
| Peer ratings | .49 |
| Ratings of the quality of performance in past work experience (behavioral consistency ratings) | .49 |
| Job knowledge tests | .48 |
| Assessment centers | .43 |

SOURCE: Hunter, J. E., & Hunter, R. F., 1984, Validity and Utility of Alternative Predictors of Job Performance, Psychological Bulletin, 96, pp. 72-98.

ACCURACY OF MEASUREMENT: RELIABILITY
Reliability: dependability, consistency, stability, predictability
– Stability
   a. Test-Retest Reliability
   b. Parallel-Form Reliability
– Internal Consistency
   a. Split-Half Reliability
   b. Inter-Item Consistency
   c. Inter-Rater Reliability
To better understand RELIABILITY, let's first review different types of measurement error…

ACCURACY OF MEASUREMENT: RELIABILITY
• Measurement, especially in the social sciences, is often not exact; it involves approximating and estimating, i.e., it involves measurement error:
   Measurement Error = Observed Score - True Score (equivalently, Observed Score = True Score + Measurement Error)
• Measurement error can be:
– Constant (systematic) error: error that appears consistently in repeated measurements.
– Random (unsystematic) error: error that appears sporadically in repeated measurements.
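To make the two kinds of error concrete, the sketch simulates readings from two hypothetical bathroom scales under the model observed = true + constant error + random error. The true weight, the 2 kg bias, and the noise levels are arbitrary assumptions chosen for illustration.

```python
import random
import statistics

random.seed(42)     # fixed seed so the simulation is repeatable
TRUE_WEIGHT = 70.0  # hypothetical true weight (kg)


def weigh(constant_error, random_sd, n=1000):
    """Simulate n readings: observed = true score + constant error + random error."""
    return [TRUE_WEIGHT + constant_error + random.gauss(0.0, random_sd) for _ in range(n)]


scales = {
    "consistent but biased": weigh(constant_error=2.0, random_sd=0.1),
    "unbiased but noisy": weigh(constant_error=0.0, random_sd=3.0),
}

summary = {}
for name, readings in scales.items():
    mean_error = statistics.mean(readings) - TRUE_WEIGHT  # estimates the constant error
    spread = statistics.stdev(readings)                    # reflects the random error
    summary[name] = (mean_error, spread)
    print(f"{name}: mean error {mean_error:+.2f} kg, SD {spread:.2f} kg")
```

The first scale behaves like the "reliable but not valid" pattern: its readings barely vary, yet they sit about 2 kg too high. The second is centered on the true weight but scatters widely, so it is unreliable.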

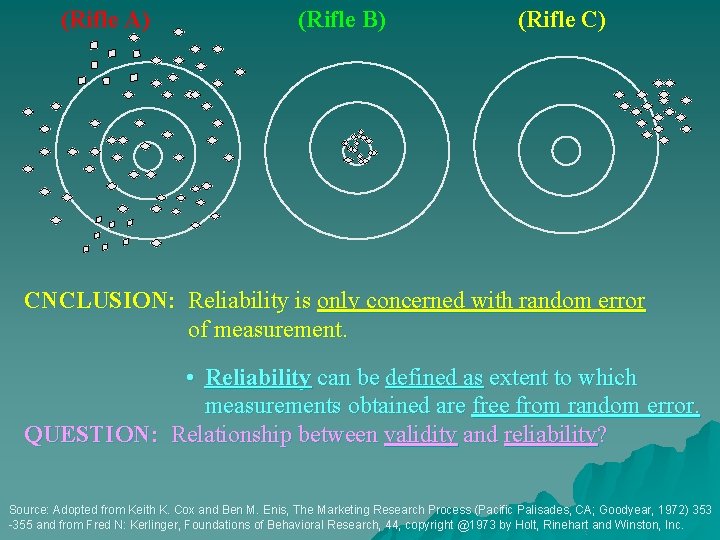

Which pattern represents which type of measurement error? Rifle A: neither valid nor reliable. Rifle B: valid and reliable. Rifle C: reliable but not valid.

CONCLUSION: Reliability is concerned only with random error of measurement. A pattern like Rifle C's, consistent but off target, reflects constant error and is still reliable.
• Reliability can be defined as the extent to which the measurements obtained are free from random error.
QUESTION: What is the relationship between validity and reliability?

ACCURACY OF MEASUREMENT: RELIABILITY
How would you assess the reliability of a measurement instrument (say, a bathroom scale)?
A. Measure the same person many times and use the standard deviation of the scores as an index of (in)stability over repeated measures (e.g., of weight): the smaller the standard deviation, the more stable the instrument.
Another way?
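Approach A can be sketched in a few lines. The readings below are invented for two hypothetical scales, each used to weigh the same person ten times; only the comparison of standard deviations matters.

```python
import statistics

# Hypothetical readings (kg): the same person weighed ten times on each scale.
scale_a = [70.1, 70.0, 70.2, 69.9, 70.1, 70.0, 70.0, 70.1, 69.9, 70.1]
scale_b = [68.5, 71.9, 70.4, 69.0, 72.3, 70.8, 67.9, 71.1, 69.6, 70.2]

# A smaller standard deviation means more stable (more reliable) readings.
sd_a = statistics.stdev(scale_a)
sd_b = statistics.stdev(scale_b)
print(f"scale A: SD = {sd_a:.2f} kg")
print(f"scale B: SD = {sd_b:.2f} kg")

more_stable = "A" if sd_a < sd_b else "B"
print("more stable scale:", more_stable)
```

Note that a small standard deviation says nothing about whether the readings are centered on the person's true weight; that is a question of validity.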

ACCURACY OF MEASUREMENT: RELIABILITY
B. Measure many individuals twice using the same instrument and look for stability of scores; that is, compute the correlation coefficient r (test-retest reliability).
– Most applicable for fairly stable attributes (e.g., personality traits).
(See next slide for how to compute the correlation coefficient r.)

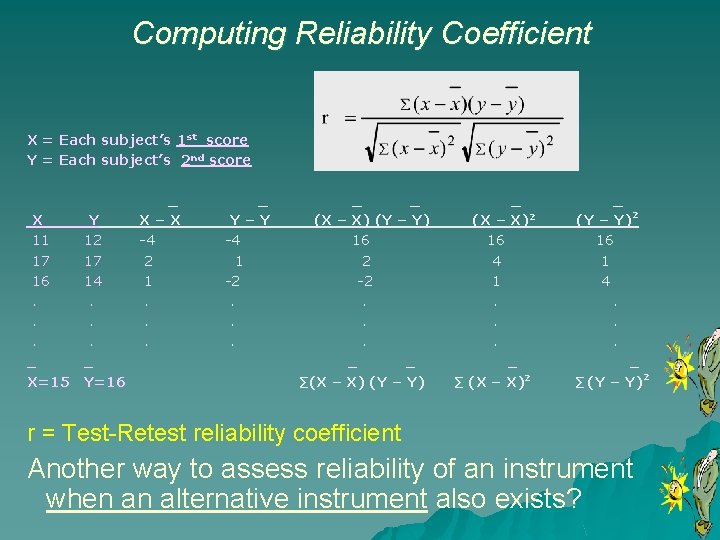

Computing Reliability Coefficient
X = each subject's 1st score; Y = each subject's 2nd score

| X | Y | X - X̄ | Y - Ȳ | (X - X̄)(Y - Ȳ) | (X - X̄)² | (Y - Ȳ)² |
|---|---|---|---|---|---|---|
| 11 | 12 | -4 | -4 | 16 | 16 | 16 |
| 17 | 17 | 2 | 1 | 2 | 4 | 1 |
| 16 | 14 | 1 | -2 | -2 | 1 | 4 |
| ... | ... | ... | ... | ... | ... | ... |
| X̄ = 15 | Ȳ = 16 | | | Σ(X - X̄)(Y - Ȳ) | Σ(X - X̄)² | Σ(Y - Ȳ)² |

$$r = \frac{\sum (X-\bar{X})(Y-\bar{Y})}{\sqrt{\sum (X-\bar{X})^2 \, \sum (Y-\bar{Y})^2}}$$

r = test-retest reliability coefficient

Another way to assess the reliability of an instrument when an alternative instrument also exists?
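The deviation-score computation can be sketched directly. The first three score pairs are the rows shown on the slide; the last three are hypothetical, chosen only so that the means come out to X̄ = 15 and Ȳ = 16. The function name `pearson_r` is illustrative.

```python
import math


def pearson_r(x, y):
    """Correlation coefficient r computed with the deviation-score formula."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be equal-length lists of at least two scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    dev_x = [xi - mean_x for xi in x]                      # X - X̄
    dev_y = [yi - mean_y for yi in y]                      # Y - Ȳ
    sum_cross = sum(a * b for a, b in zip(dev_x, dev_y))   # Σ(X - X̄)(Y - Ȳ)
    sum_sq_x = sum(a * a for a in dev_x)                   # Σ(X - X̄)²
    sum_sq_y = sum(b * b for b in dev_y)                   # Σ(Y - Ȳ)²
    return sum_cross / math.sqrt(sum_sq_x * sum_sq_y)


# First three pairs from the table; last three are hypothetical.
first_scores = [11, 17, 16, 14, 18, 14]
second_scores = [12, 17, 14, 15, 19, 19]

r = pearson_r(first_scores, second_scores)
print(f"test-retest r = {r:.3f}")
```

The same function applies unchanged to the parallel-form and split-half layouts that follow; only the meaning of X and Y changes.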

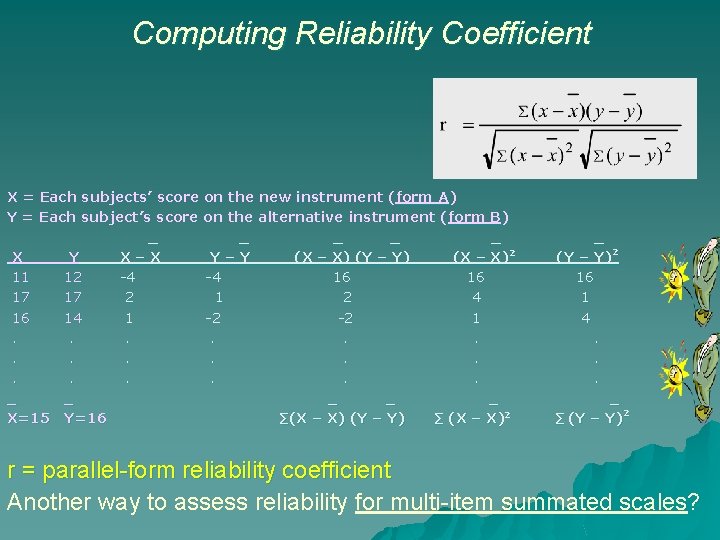

ACCURACY OF MEASUREMENT: RELIABILITY
C. Measure many individuals with two instruments (the focal instrument as well as an alternative instrument) and look for consistency of scores across the two instruments, i.e., compute r (parallel-form reliability).
(See next slide for how to compute the correlation coefficient r.)

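Computing Reliability Coefficient
X = each subject's score on the new instrument (form A); Y = each subject's score on the alternative instrument (form B)

| X | Y | X - X̄ | Y - Ȳ | (X - X̄)(Y - Ȳ) | (X - X̄)² | (Y - Ȳ)² |
|---|---|---|---|---|---|---|
| 11 | 12 | -4 | -4 | 16 | 16 | 16 |
| 17 | 17 | 2 | 1 | 2 | 4 | 1 |
| 16 | 14 | 1 | -2 | -2 | 1 | 4 |
| ... | ... | ... | ... | ... | ... | ... |
| X̄ = 15 | Ȳ = 16 | | | Σ(X - X̄)(Y - Ȳ) | Σ(X - X̄)² | Σ(Y - Ȳ)² |

$$r = \frac{\sum (X-\bar{X})(Y-\bar{Y})}{\sqrt{\sum (X-\bar{X})^2 \, \sum (Y-\bar{Y})^2}}$$

r = parallel-form reliability coefficient

Another way to assess reliability for multi-item summated scales?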

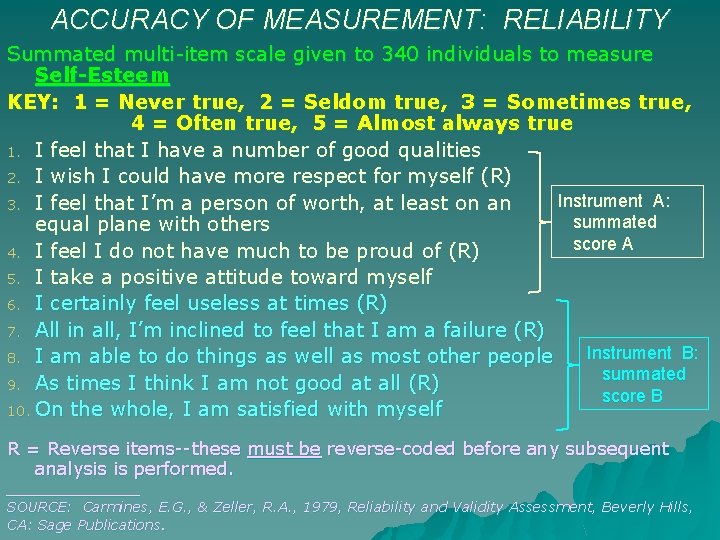

ACCURACY OF MEASUREMENT: RELIABILITY
D. In the case of multi-item summated rating scales, you can artificially create two alternative instruments (parallel forms) by splitting the items into two halves and then looking for consistency of scores across the two halves.
• Compute r between the pairs of summated scores (split-half reliability). Let's see an EXAMPLE.

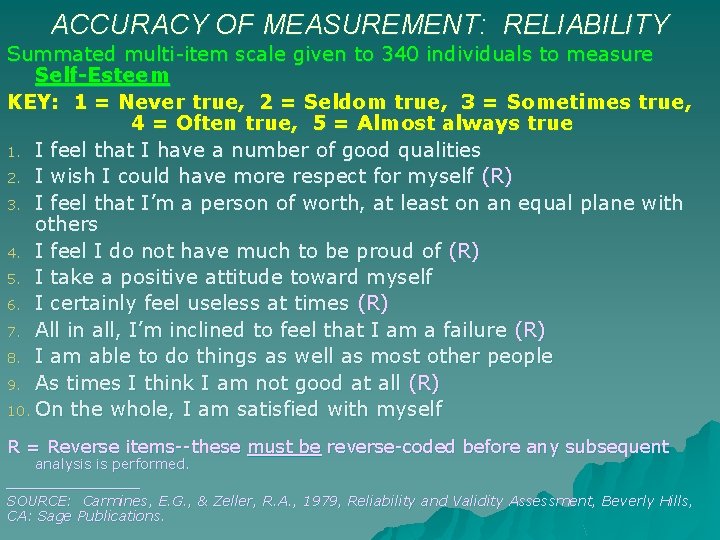

ACCURACY OF MEASUREMENT: RELIABILITY
Summated multi-item scale given to 340 individuals to measure self-esteem
KEY: 1 = Never true, 2 = Seldom true, 3 = Sometimes true, 4 = Often true, 5 = Almost always true
1. I feel that I have a number of good qualities
2. I wish I could have more respect for myself (R)
3. I feel that I'm a person of worth, at least on an equal plane with others
4. I feel I do not have much to be proud of (R)
5. I take a positive attitude toward myself
6. I certainly feel useless at times (R)
7. All in all, I'm inclined to feel that I am a failure (R)
8. I am able to do things as well as most other people
9. At times I think I am not good at all (R)
10. On the whole, I am satisfied with myself
R = reverse items; these must be reverse-coded before any subsequent analysis is performed.
SOURCE: Carmines, E. G., & Zeller, R. A., 1979, Reliability and Validity Assessment, Beverly Hills, CA: Sage Publications.

ACCURACY OF MEASUREMENT: RELIABILITY
The same 10-item self-esteem scale (340 respondents, same key), split into two halves:
• Instrument A: items 1-5, giving summated score A
• Instrument B: items 6-10, giving summated score B
R = reverse items; these must be reverse-coded before any subsequent analysis is performed.

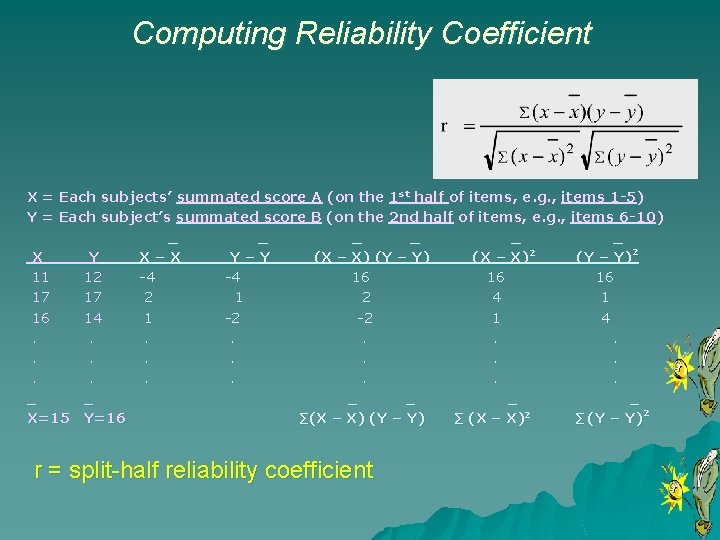

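Computing Reliability Coefficient
X = each subject's summated score A (on the 1st half of the items, e.g., items 1-5)
Y = each subject's summated score B (on the 2nd half of the items, e.g., items 6-10)

| X | Y | X - X̄ | Y - Ȳ | (X - X̄)(Y - Ȳ) | (X - X̄)² | (Y - Ȳ)² |
|---|---|---|---|---|---|---|
| 11 | 12 | -4 | -4 | 16 | 16 | 16 |
| 17 | 17 | 2 | 1 | 2 | 4 | 1 |
| 16 | 14 | 1 | -2 | -2 | 1 | 4 |
| ... | ... | ... | ... | ... | ... | ... |
| X̄ = 15 | Ȳ = 16 | | | Σ(X - X̄)(Y - Ȳ) | Σ(X - X̄)² | Σ(Y - Ȳ)² |

$$r = \frac{\sum (X-\bar{X})(Y-\bar{Y})}{\sqrt{\sum (X-\bar{X})^2 \, \sum (Y-\bar{Y})^2}}$$

r = split-half reliability coefficient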

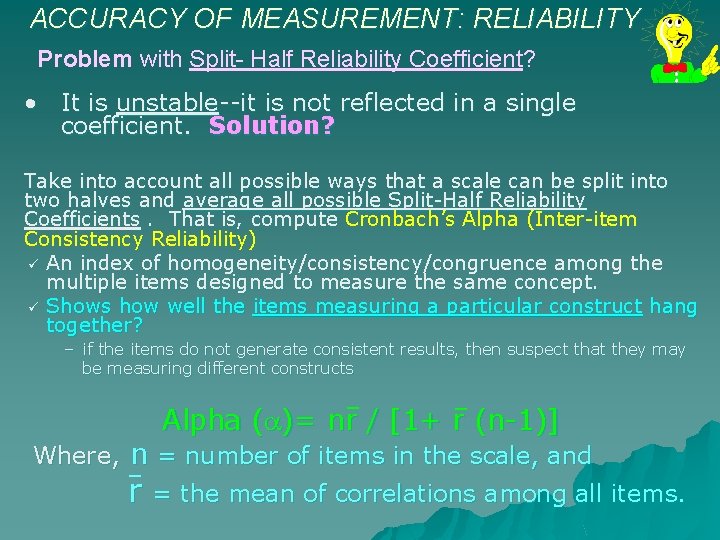

ACCURACY OF MEASUREMENT: RELIABILITY
Problem with the split-half reliability coefficient? It is unstable: it is not reflected in a single coefficient, because different ways of splitting the items into two halves can give different values.
Solution? Take into account all possible ways that a scale can be split into two halves and average all the possible split-half reliability coefficients. That is, compute Cronbach's alpha (inter-item consistency reliability):
• An index of homogeneity/consistency/congruence among the multiple items designed to measure the same concept.
• It shows how well the items measuring a particular construct hang together.
– If the items do not generate consistent results, suspect that they may be measuring different constructs.

$$\alpha = \frac{n\bar{r}}{1 + \bar{r}(n-1)}$$

where n = the number of items in the scale and r̄ = the mean of the correlations among all items.

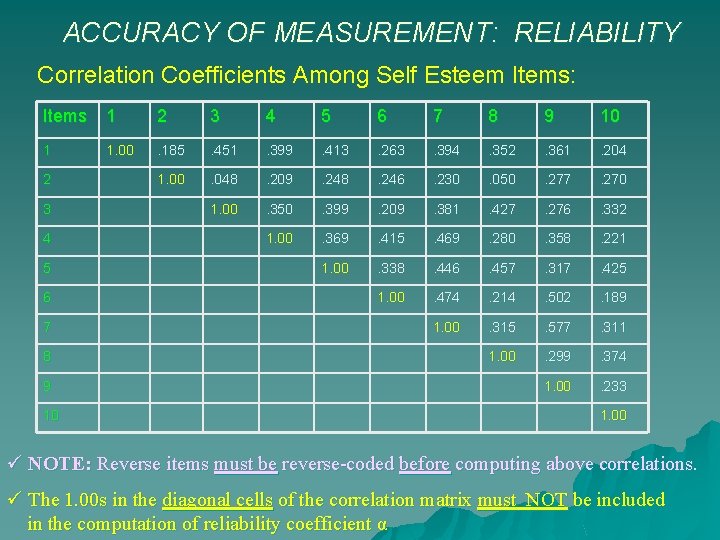

ACCURACY OF MEASUREMENT: RELIABILITY
Correlation Coefficients Among Self-Esteem Items:

| Item | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 1.00 | .185 | .451 | .399 | .413 | .263 | .394 | .352 | .361 | .204 |
| 2 | | 1.00 | .048 | .209 | .248 | .246 | .230 | .050 | .277 | .270 |
| 3 | | | 1.00 | .350 | .399 | .209 | .381 | .427 | .276 | .332 |
| 4 | | | | 1.00 | .369 | .415 | .469 | .280 | .358 | .221 |
| 5 | | | | | 1.00 | .338 | .446 | .457 | .317 | .425 |
| 6 | | | | | | 1.00 | .474 | .214 | .502 | .189 |
| 7 | | | | | | | 1.00 | .315 | .577 | .311 |
| 8 | | | | | | | | 1.00 | .299 | .374 |
| 9 | | | | | | | | | 1.00 | .233 |
| 10 | | | | | | | | | | 1.00 |

NOTE: Reverse items must be reverse-coded before computing the above correlations. The 1.00s in the diagonal cells of the correlation matrix must NOT be included in the computation of the reliability coefficient α.

![Computation of Cronbach's alpha for the self-esteem scale](http://slidetodoc.com/presentation_image/4f05443080579c5ac1ad512bd59ed3e9/image-32.jpg)

ACCURACY OF MEASUREMENT: RELIABILITY

$$\alpha = \frac{n\bar{r}}{1 + \bar{r}(n-1)}$$

$$\bar{r} = \frac{.185 + .451 + .399 + \dots + .299 + .374 + .233}{45} = .32$$

Note: The 1.00s in the diagonal cells of the correlation matrix should NOT be included in the above computation.

$$\alpha = \frac{10(.32)}{1 + .32(9)} = .82$$

NOTE: Cronbach's alpha can be computed and reported ONLY for summated multi-item scales. REMEMBER: it shows how well the multiple items measuring a particular construct hang together.
EXAMPLE: Let's see the SPSS OUTPUT for a 4-item measure of organizational loyalty.
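The arithmetic above can be reproduced in a few lines. The sketch stores the 45 off-diagonal correlations from the self-esteem matrix (upper triangle only, diagonal 1.00s excluded) and applies the alpha formula; the helper name `standardized_alpha` is illustrative. Without rounding r̄ to .32 first, the result comes out at about .83 rather than .82. The last call checks the 4-item organizational loyalty figure (r̄ = .6728, n = 4).

```python
# Upper triangle of the self-esteem inter-item correlation matrix
# (after reverse-coding; diagonal 1.00s excluded).
# Row i holds r(i, j) for items j = i + 1 .. 10.
UPPER = [
    [.185, .451, .399, .413, .263, .394, .352, .361, .204],
    [.048, .209, .248, .246, .230, .050, .277, .270],
    [.350, .399, .209, .381, .427, .276, .332],
    [.369, .415, .469, .280, .358, .221],
    [.338, .446, .457, .317, .425],
    [.474, .214, .502, .189],
    [.315, .577, .311],
    [.299, .374],
    [.233],
]


def standardized_alpha(n_items, mean_r):
    """Alpha = n * r_bar / (1 + r_bar * (n - 1))."""
    return n_items * mean_r / (1 + mean_r * (n_items - 1))


pairs = [r for row in UPPER for r in row]
n_items = len(UPPER) + 1
assert len(pairs) == n_items * (n_items - 1) // 2  # 45 distinct item pairs

mean_r = sum(pairs) / len(pairs)
alpha = standardized_alpha(n_items, mean_r)
alpha_rounded_r = standardized_alpha(n_items, 0.32)  # slide rounds r_bar to .32 first
alpha_loyalty = standardized_alpha(4, 0.6728)        # 4-item loyalty example

print(f"mean r = {mean_r:.3f}")
print(f"alpha = {alpha:.3f} (with r_bar rounded to .32: {alpha_rounded_r:.3f})")
print(f"4-item loyalty alpha = {alpha_loyalty:.4f}")
```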

![Cronbach's alpha for the 4-item organizational loyalty measure](http://slidetodoc.com/presentation_image/4f05443080579c5ac1ad512bd59ed3e9/image-33.jpg)

$$\bar{r} = .6728, \quad n = 4, \quad \alpha = \frac{4(.6728)}{1 + 3(.6728)} = .8916$$

The item-level statistics in this output can be used for item analysis, i.e., for determining the quality of individual items.

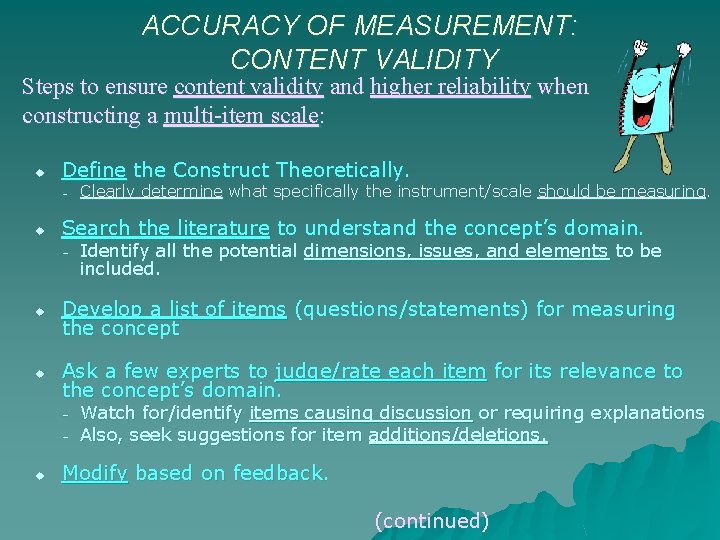

ACCURACY OF MEASUREMENT: CONTENT VALIDITY
Steps to ensure content validity and higher reliability when constructing a multi-item scale:
• Define the construct theoretically.
   – Clearly determine what specifically the instrument/scale should be measuring.
• Search the literature to understand the concept's domain.
   – Identify all the potential dimensions, issues, and elements to be included.
• Develop a list of items (questions/statements) for measuring the concept.
• Ask a few experts to judge/rate each item for its relevance to the concept's domain.
   – Watch for/identify items causing discussion or requiring explanations.
   – Also, seek suggestions for item additions/deletions.
• Modify based on feedback.
(continued)

ACCURACY OF MEASUREMENT: CONTENT VALIDITY
Steps to ensure content validity and higher reliability when constructing a multi-item scale:
• Pretest the scale.
   – Test it on a group similar to the population being studied.
   – Encourage respondents to think aloud (indicate their thoughts) as they consider each instruction/item, to identify problematic items. Encourage suggestions and criticisms; don't get defensive.
• Do reliability analysis to identify items that don't hang together with the rest of the items.
   – Examine descriptive statistics for means too close to the minimum/maximum values; they may signal range restriction and can be candidates for modification.
   – For each item, compare (a) Cronbach's alpha of the scale if that particular item were deleted, with (b) the scale's overall Cronbach's alpha when all items are included. Whenever alpha increases as a result of deleting an item (i.e., a > b), that item is a candidate for deletion/revision.
• Revise/delete ambiguous/problematic items/instructions.
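The item-deletion rule can be sketched with the same alpha formula used for the self-esteem scale: for each item, recompute alpha from the mean correlation among the remaining nine items and flag the item if alpha rises. This is an illustration on the self-esteem correlation matrix, not the SPSS procedure. Packages such as SPSS typically report an unstandardized "alpha if item deleted" computed from item variances and covariances, so their figures may differ somewhat. The helper names are hypothetical.

```python
# Upper triangle of the self-esteem correlation matrix (diagonal excluded).
UPPER = [
    [.185, .451, .399, .413, .263, .394, .352, .361, .204],
    [.048, .209, .248, .246, .230, .050, .277, .270],
    [.350, .399, .209, .381, .427, .276, .332],
    [.369, .415, .469, .280, .358, .221],
    [.338, .446, .457, .317, .425],
    [.474, .214, .502, .189],
    [.315, .577, .311],
    [.299, .374],
    [.233],
]


def corr(i, j):
    """Correlation between items i and j (0-based, i != j) from the upper triangle."""
    a, b = min(i, j), max(i, j)
    return UPPER[a][b - a - 1]


def alpha_for(items):
    """Alpha = k * r_bar / (1 + r_bar * (k - 1)) over the given items."""
    pairs = [corr(i, j) for i in items for j in items if i < j]
    k = len(items)
    mean_r = sum(pairs) / len(pairs)
    return k * mean_r / (1 + mean_r * (k - 1))


all_items = list(range(len(UPPER) + 1))
overall = alpha_for(all_items)
candidates = []
for item in all_items:
    without = alpha_for([i for i in all_items if i != item])
    print(f"item {item + 1}: alpha if deleted = {without:.3f}")
    if without > overall:  # rule (a) > (b): deletion would raise alpha
        candidates.append(item + 1)

print(f"overall alpha = {overall:.3f}; candidates for revision: {candidates}")
```

On this matrix only item 2 ("I wish I could have more respect for myself") is flagged; it has the weakest correlations with the other items (for example, .048 with item 3 and .050 with item 8).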

QUESTIONS OR COMMENTS?
