Accenture Delivery Data Cubes IDC Business Process Performance

Accenture Delivery Data Cubes – IDC (Business Process Performance Baseline Presentation) Q1 FY17

BPPB (Accenture Delivery Data Cubes – IDC)
Ø The Business Process Performance Baseline (BPPB) Report represents the organization's capability in terms of schedule, effort, cost and quality.
Ø BPPBs are indicators of the performance of DC for T, India, in the industry.
Ø BPPB data is also used:
• as input to projects when they set project goals
• as improvement targets for CI initiatives
• as input data for prediction analysis to understand future performance
Ø The organization BPPB is based on stable data from:
• closed releases for AD and Testing projects
• monthly data for AM projects
Ø BPPBs are established at various levels: Organization, Project Category, Industry Group, Industry Segment, Technology, ADM 100 (Yes/No), Solution Factory and Tailoring (Yes/No).
Ø BPPBs are released every quarter based on the data submitted by projects over the past 18 rolling months. The Metrics team is responsible for the analysis and release of the BPPB.
Ø Organization BPPBs are available at https://analyticsondemand2013.accenture.com/Pages/Business.Benchmarks.aspx
Ø Details on measures and metrics are available in the Measurement Guidelines at https://idcquality.accenture.com/#/metricsscope/measurementguidelines
Ø Organization goals are available on QMS at https://idcquality.accenture.com/#/metricsprocessbaseline/metricsgoals
Copyright © 2013 Accenture All rights reserved.

Why BPPB?
The Business Process Performance Baseline (BPPB) represents the organization's performance in terms of schedule, quality and cost of delivery. BPPBs can be used:
At Organization Level
• Analyze the organization's performance trends on a quarterly basis
• Compare the organization's performance baselines against the organization goals
At Project Level
• Use organization performance to set initial metrics goals
• Use organization performance to set goals at the interim sub-process level
• Compare the project performance baselines with the organization performance baselines
• Use baseline values to identify the distribution of the data for Monte Carlo prediction using Crystal Ball

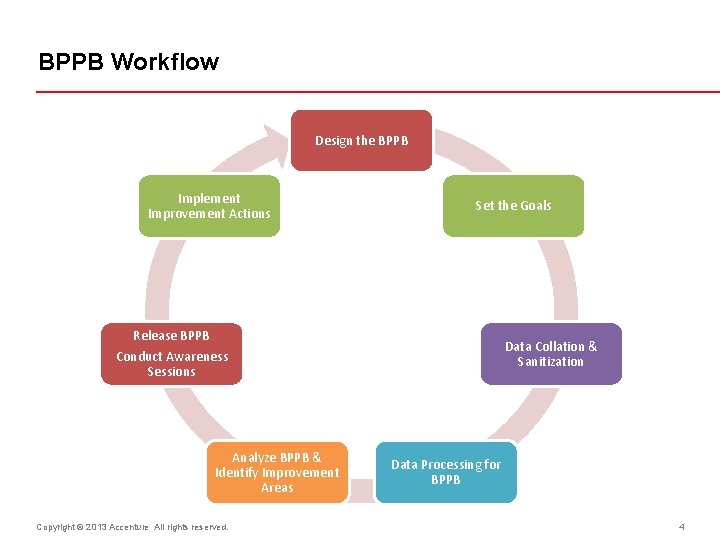

BPPB Workflow
• Design the BPPB
• Set the Goals
• Data Collation & Sanitization
• Data Processing for BPPB
• Analyze BPPB & Identify Improvement Areas
• Release BPPB
• Conduct Awareness Sessions
• Implement Improvement Actions

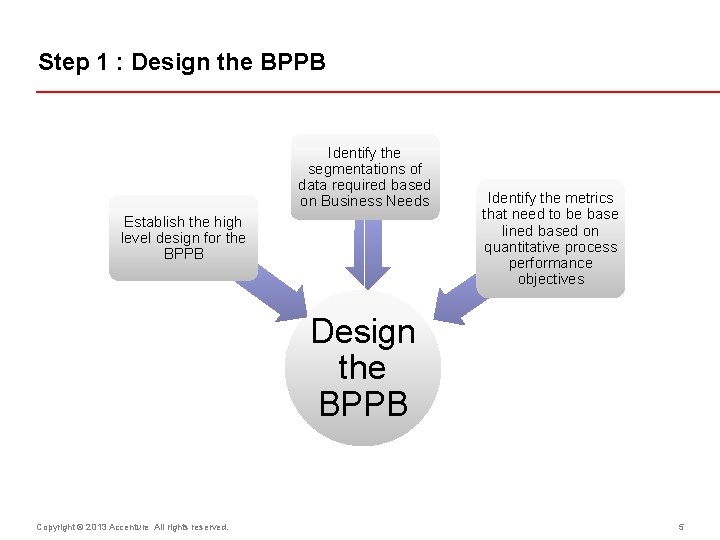

Step 1: Design the BPPB
• Identify the segmentations of data required based on business needs
• Establish the high-level design for the BPPB
• Identify the metrics that need to be baselined based on quantitative process performance objectives

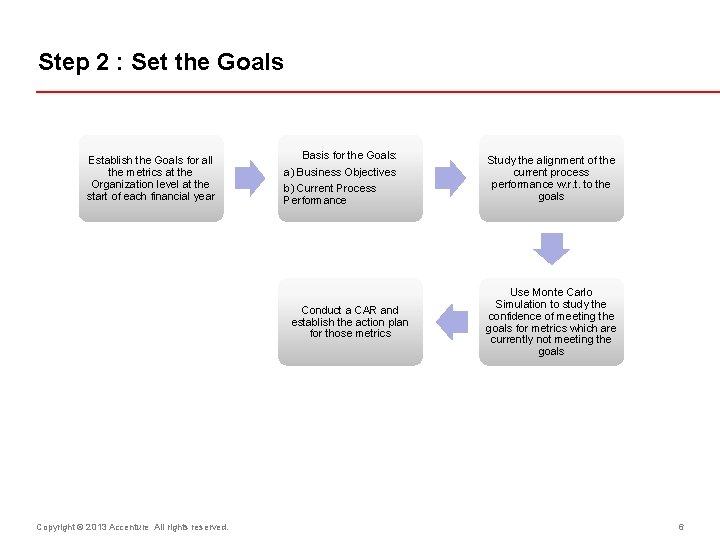

Step 2: Set the Goals
• Establish the goals for all the metrics at the Organization level at the start of each financial year
• Basis for the goals: a) Business Objectives b) Current Process Performance
• Study the alignment of the current process performance with respect to the goals
• Use Monte Carlo simulation to study the confidence of meeting the goals for metrics that are currently not meeting them
• Conduct a CAR and establish the action plan for those metrics
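The deck names Crystal Ball for Monte Carlo simulation. As a minimal, tool-independent sketch of the same idea, the Python below estimates the confidence of meeting a goal by sampling a metric from an assumed normal distribution built from its baseline mean and standard deviation. The normal model, the function name `confidence_of_meeting_goal` and the example numbers are hypothetical; in practice the distribution would be fitted to the baseline data.

```python
import random

def confidence_of_meeting_goal(mean, stdev, goal, higher_is_better,
                               trials=100_000, seed=42):
    """Estimate P(metric meets goal) by Monte Carlo sampling.

    Assumption: the metric follows a normal distribution with the given
    baseline mean and standard deviation (a hypothetical model).
    """
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    hits = 0
    for _ in range(trials):
        value = rng.gauss(mean, stdev)
        met = value >= goal if higher_is_better else value <= goal
        if met:
            hits += 1
    return hits / trials

# Hypothetical metric currently missing a "maximum" goal of 0.14:
# baseline mean 0.16, standard deviation 0.03.
p = confidence_of_meeting_goal(0.16, 0.03, goal=0.14, higher_is_better=False)
print(f"Estimated confidence of meeting the goal: {p:.1%}")
```

A low estimate would point to the metrics that need a CAR and an action plan.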

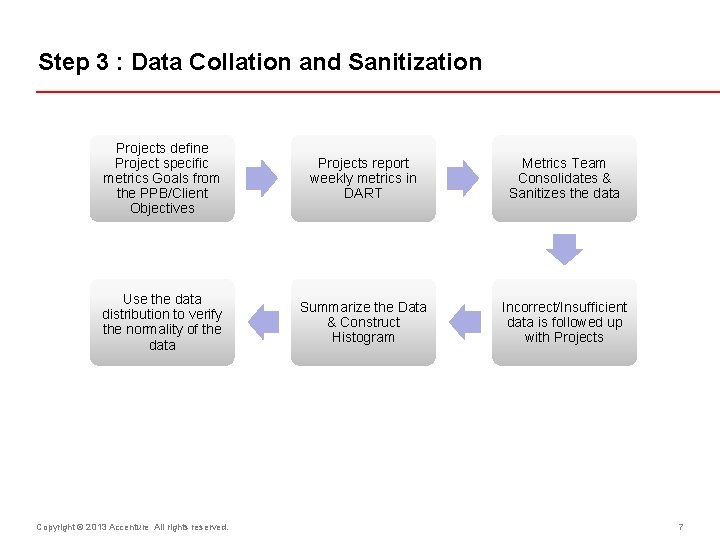

Step 3: Data Collation and Sanitization
• Projects define project-specific metric goals from the PPB/client objectives
• Projects report weekly metrics in DART
• The Metrics team consolidates & sanitizes the data
• Incorrect/insufficient data is followed up with projects
• Summarize the data & construct a histogram
• Use the data distribution to verify the normality of the data
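Step 3 summarizes the data, constructs a histogram and checks normality. A stdlib-only Python sketch of those three activities follows. The normality screen here is only a rough empirical-rule heuristic (about 68% of normal data lies within one standard deviation of the mean and about 95% within two), not the formal normality test a statistics package would run; function names and sample data are hypothetical.

```python
import statistics

def summarize(values):
    """Basic descriptive statistics for one metric's data points."""
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "stdev": statistics.stdev(values),
        "min": min(values),
        "max": max(values),
    }

def histogram(values, bins=5):
    """Count values into equal-width bins; returns a list of (low, high, count)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # guard against all-equal data
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)  # max value goes in the last bin
        counts[idx] += 1
    return [(lo + i * width, lo + (i + 1) * width, c) for i, c in enumerate(counts)]

def empirical_rule_check(values):
    """Rough normality screen: share of points within 1 and 2 standard deviations."""
    m, s = statistics.mean(values), statistics.stdev(values)
    n = len(values)
    within1 = sum(abs(v - m) <= s for v in values) / n
    within2 = sum(abs(v - m) <= 2 * s for v in values) / n
    return within1, within2

sample = [1, 2, 2, 3, 3, 3, 4, 4, 5]  # hypothetical data points for one metric
print(summarize(sample))
for low, high, count in histogram(sample, bins=4):
    print(f"{low:5.2f} to {high:5.2f} | {'#' * count}")
within1, within2 = empirical_rule_check(sample)
print(f"within 1 sd: {within1:.0%}, within 2 sd: {within2:.0%}")
```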

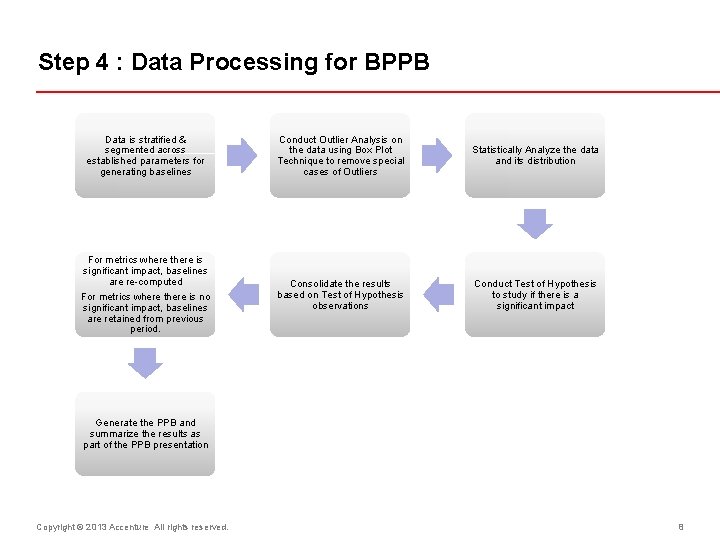

Step 4: Data Processing for BPPB
• Data is stratified & segmented across established parameters for generating baselines
• Conduct outlier analysis on the data using the box plot technique to remove special-case outliers
• Statistically analyze the data and its distribution
• Conduct a test of hypothesis to study whether there is a significant impact
• Consolidate the results based on the test of hypothesis observations:
  • For metrics where there is a significant impact, baselines are re-computed
  • For metrics where there is no significant impact, baselines are retained from the previous period
• Generate the PPB and summarize the results as part of the PPB presentation
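The box plot outlier technique in Step 4 is commonly implemented with Tukey fences: points below Q1 - 1.5 × IQR or above Q3 + 1.5 × IQR are flagged. The Python sketch below assumes that convention and Python's `statistics.quantiles` with the `inclusive` method; the deck does not state the multiplier or quartile method, and other tools may compute quartiles slightly differently.

```python
import statistics

def box_plot_fences(values, k=1.5):
    """Return (lower, upper) Tukey fences; k=1.5 is the common box plot default."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

def split_outliers(values, k=1.5):
    """Separate data points inside the fences from those flagged as outliers."""
    lower, upper = box_plot_fences(values, k)
    kept = [v for v in values if lower <= v <= upper]
    outliers = [v for v in values if v < lower or v > upper]
    return kept, outliers

# Hypothetical metric values; 100 is an obvious special case.
data = [10, 12, 12, 13, 12, 11, 14, 13, 15, 10, 10, 100]
kept, outliers = split_outliers(data)
print("fences:", box_plot_fences(data))
print("outliers:", outliers)
```

Only the kept points would then feed the statistical analysis and baseline computation.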

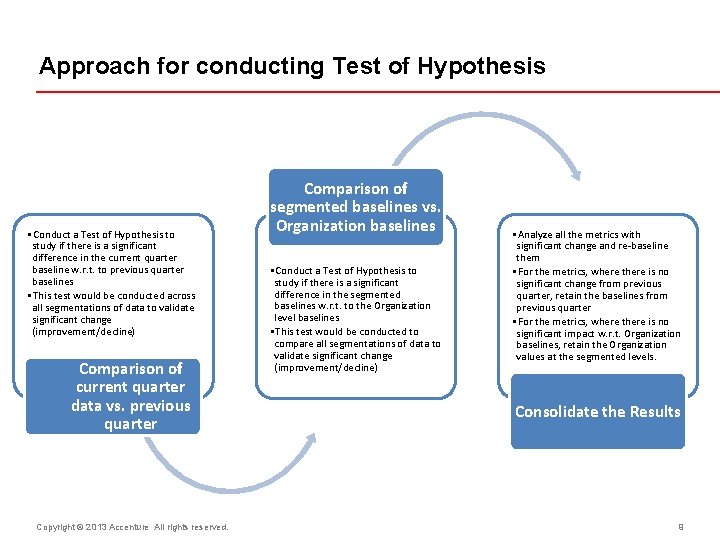

Approach for Conducting the Test of Hypothesis
Comparison of current quarter data vs. previous quarter
• Conduct a test of hypothesis to study whether there is a significant difference between the current quarter baselines and the previous quarter baselines
• This test is conducted across all segmentations of data to validate a significant change (improvement/decline)
Comparison of segmented baselines vs. Organization baselines
• Conduct a test of hypothesis to study whether there is a significant difference between the segmented baselines and the Organization-level baselines
• This test is conducted for all segmentations of data to validate a significant change (improvement/decline)
Consolidate the Results
• Analyze all the metrics with significant change and re-baseline them
• For metrics with no significant change from the previous quarter, retain the baselines from the previous quarter
• For metrics with no significant difference from the Organization baselines, retain the Organization values at the segmented levels
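The deck does not name the specific hypothesis test. As one possible choice, the Python sketch below uses a two-sample permutation test on the difference in means (a swapped-in, distribution-free technique) and then applies the consolidation rule above: re-baseline when the change is significant, otherwise retain the earlier baseline. Using the mean as the baseline statistic, the 0.05 significance level and the function names are assumptions. The same test could compare a segment's data with the Organization data.

```python
import random

def _mean(xs):
    return sum(xs) / len(xs)

def permutation_p_value(sample_a, sample_b, trials=10_000, seed=7):
    """Two-sided permutation test on the difference in means.

    Returns an estimated p-value: the share of random relabellings whose
    mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(_mean(sample_a) - _mean(sample_b))
    pooled = list(sample_a) + list(sample_b)
    n_a = len(sample_a)
    extreme = 0
    for _ in range(trials):
        rng.shuffle(pooled)
        diff = abs(_mean(pooled[:n_a]) - _mean(pooled[n_a:]))
        if diff >= observed - 1e-12:  # tolerance for floating-point rounding
            extreme += 1
    return (extreme + 1) / (trials + 1)  # add-one keeps the estimate above zero

def consolidate(current, previous, previous_baseline, alpha=0.05):
    """Re-baseline only on a significant change; otherwise retain the old baseline."""
    p = permutation_p_value(current, previous)
    if p < alpha:
        return _mean(current), "re-baselined", p
    return previous_baseline, "retained", p

# Hypothetical quarterly data points for one metric in one segment.
previous_q = [6.0, 6.2, 5.9, 6.1, 6.3, 6.0, 5.8, 6.2]
current_q = [5.0, 5.2, 4.9, 5.1, 5.3, 5.0, 4.8, 5.2]
print(consolidate(current_q, previous_q, previous_baseline=6.06))
```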

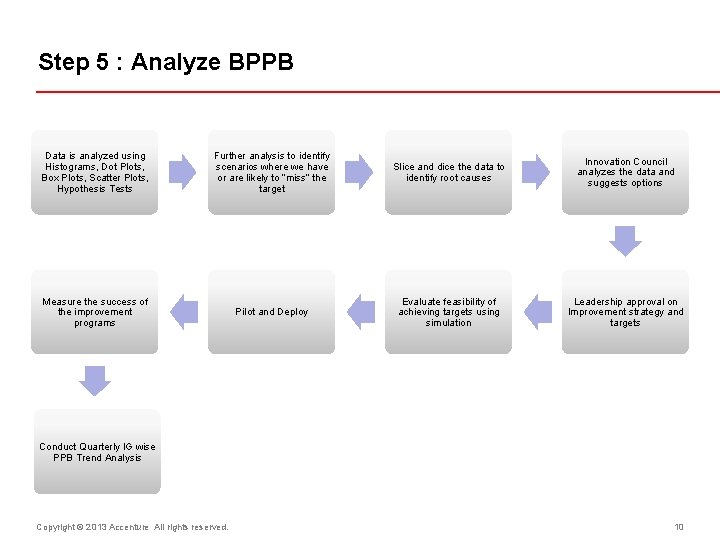

Step 5: Analyze BPPB
• Data is analyzed using histograms, dot plots, box plots, scatter plots and hypothesis tests
• Conduct quarterly IG-wise PPB trend analysis
• Perform further analysis to identify scenarios where we have missed, or are likely to miss, the target
• Slice and dice the data to identify root causes
• The Innovation Council analyzes the data and suggests options
• Evaluate the feasibility of achieving targets using simulation
• Obtain leadership approval on the improvement strategy and targets
• Pilot and deploy
• Measure the success of the improvement programs

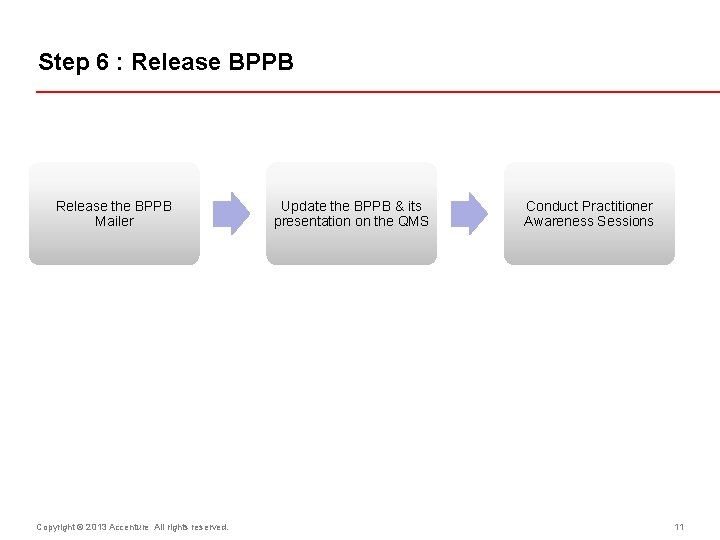

Step 6: Release BPPB
• Release the BPPB mailer
• Update the BPPB & its presentation on the QMS
• Conduct practitioner awareness sessions

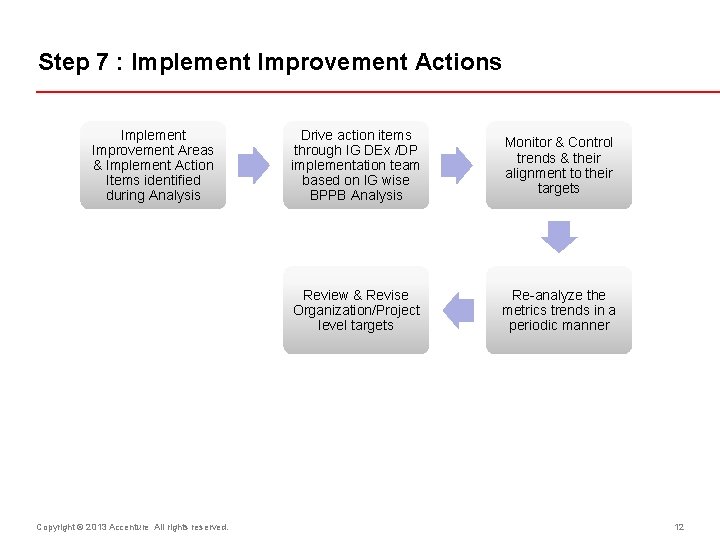

Step 7: Implement Improvement Actions
• Implement the improvement areas and action items identified during analysis
• Drive action items through the IG DEx/DP implementation team based on IG-wise BPPB analysis
• Monitor & control trends and their alignment to targets
• Review & revise Organization/project-level targets
• Re-analyze the metric trends periodically

BPPB tool – MSBI (Microsoft Business Intelligence tool)
• Business Benchmarks are now available in MSBI.
• Baselines are available for Application Development, Application Maintenance, Testing and CTP Productivity related metrics.
• Data for the past 8 quarters is visible in the MSBI tool.

Key Highlights – Application Development
• End Date Variance and Defect Removal Effectiveness have been stable for the last 8 quarters.
• Delivered Defect Rate is stable and within the IDC goal (0.14 defects per 1000 hours).
• Stage-wise defect rate (Analyze) and stage-wise defect rate (Build) have been stable for the last 8 quarters.
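The Delivered Defect Rate goal is expressed in defects per 1000 hours. A minimal Python sketch of that normalization and the goal check follows; it assumes the numerator is post-delivery defects and the denominator is total effort hours, which the deck implies but does not define (the Measurement Guidelines are the authoritative source). The function names are hypothetical.

```python
IDC_DDR_GOAL = 0.14  # maximum defects per 1000 hours, from the Key Highlights slide

def delivered_defect_rate(post_delivery_defects, effort_hours):
    """Post-delivery defects normalized per 1000 hours of effort (assumed definition)."""
    if effort_hours <= 0:
        raise ValueError("effort_hours must be positive")
    return post_delivery_defects / effort_hours * 1000

def meets_ddr_goal(post_delivery_defects, effort_hours, goal=IDC_DDR_GOAL):
    """True when the release's rate is at or below the goal."""
    return delivered_defect_rate(post_delivery_defects, effort_hours) <= goal

# Hypothetical release: 3 post-delivery defects over 25,000 effort hours.
print(delivered_defect_rate(3, 25_000), meets_ddr_goal(3, 25_000))
```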

Key Highlights – Application Development
• Testing effectiveness: Assembly Test metrics have been below the specified minimum goal since Q1 FY16.
• All peer review effectiveness metrics are stable for this quarter.
• Cost of Quality is stable but above the IDC goal of 30%.
• Cost of Rework is stable and within the IDC goal of 5%.
• Overall Defect Rate is stable and within the IDC goal.

Areas for improvement - Application Development
• Cost of Quality – Projects need to pay closer attention to COQ numbers, i.e. how much effort is spent on quality-related activities. Spending either too much or too little effort is not recommended.
• Change Request Impact – Indicates the effectiveness of the requirements gathering process and shows the additional effort spent above the planned effort due to requirement changes.
• Cost of Rework – Projects need to spend the right amount of effort on review activities so that less rework is observed.
• Phase-wise Peer Review Effectiveness and Test Effectiveness – Projects are expected to spend a good amount of effort on review and testing activities so that the maximum number of defects is identified internally, which minimizes defects detected by the client (i.e. a lower Delivered Defect Rate).
• Overall and Delivered Defect Rate – Indicate the effectiveness of the review and testing activities, domain/technical expertise and the use of various tools, ensuring that fewer defects are found both in the release made and in the delivered release, i.e. 'Defects detected in DC' and 'Post delivery defects'.

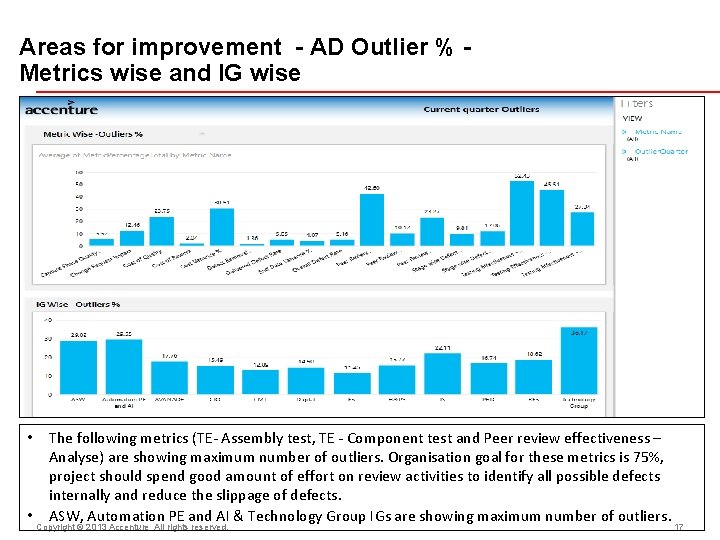

Areas for improvement - AD: Outlier % Metric-wise and IG-wise
• The following metrics show the highest number of outliers: TE - Assembly Test, TE - Component Test and Peer Review Effectiveness - Analyze. The organization goal for these metrics is 75%; projects should spend a good amount of effort on review activities to identify all possible defects internally and reduce defect slippage.
• The ASW, Automation PE and AI & Technology Group IGs show the highest number of outliers.

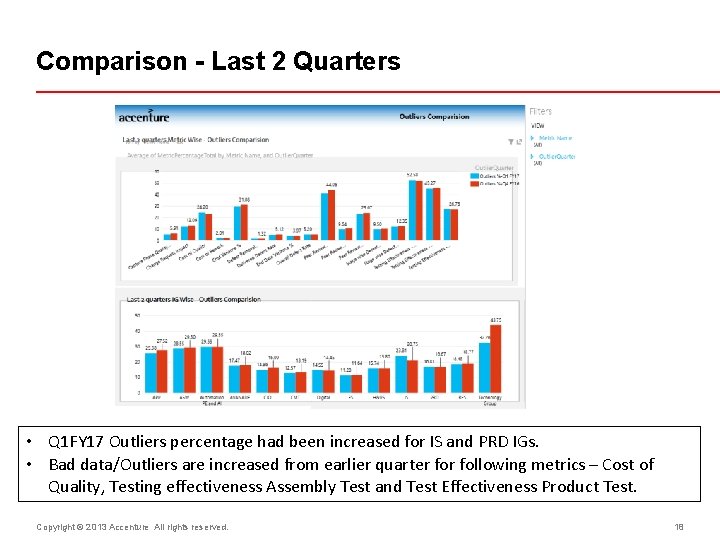

Comparison - Last 2 Quarters
• In Q1 FY17, the outlier percentage increased for the IS and PRD IGs.
• Bad data/outliers increased from the earlier quarter for the following metrics: Cost of Quality, Test Effectiveness - Assembly Test and Test Effectiveness - Product Test.

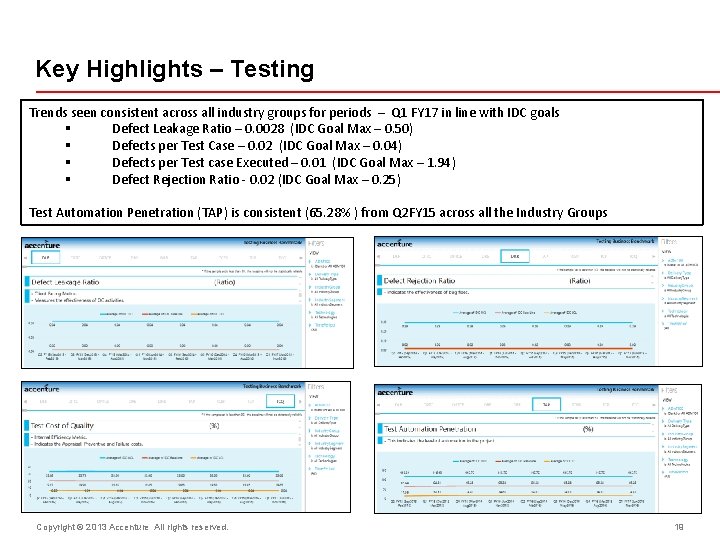

Key Highlights – Testing
Trends for Q1 FY17 are consistent across all industry groups and in line with IDC goals:
§ Defect Leakage Ratio – 0.0028 (IDC Goal Max – 0.50)
§ Defects per Test Case – 0.02 (IDC Goal Max – 0.04)
§ Defects per Test Case Executed – 0.01 (IDC Goal Max – 1.94)
§ Defect Rejection Ratio – 0.02 (IDC Goal Max – 0.25)
Test Automation Penetration (TAP) has been consistent (65.28%) since Q2 FY15 across all Industry Groups.
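Each testing highlight pairs a Q1 FY17 value with an IDC maximum goal. The short Python sketch below encodes those four pairs exactly as reported and checks that each value is at or below its maximum; the `MetricResult` class is a hypothetical helper, not part of any Accenture tool.

```python
from dataclasses import dataclass

@dataclass
class MetricResult:
    name: str
    value: float
    goal_max: float  # IDC goal expressed as a maximum

    @property
    def within_goal(self) -> bool:
        return self.value <= self.goal_max

# Values and maximum goals as reported for Q1 FY17.
Q1_FY17_TESTING = [
    MetricResult("Defect Leakage Ratio", 0.0028, 0.50),
    MetricResult("Defects per Test Case", 0.02, 0.04),
    MetricResult("Defects per Test Case Executed", 0.01, 1.94),
    MetricResult("Defect Rejection Ratio", 0.02, 0.25),
]

for m in Q1_FY17_TESTING:
    status = "within goal" if m.within_goal else "exceeds goal"
    print(f"{m.name}: {m.value} (max {m.goal_max}) -> {status}")
```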

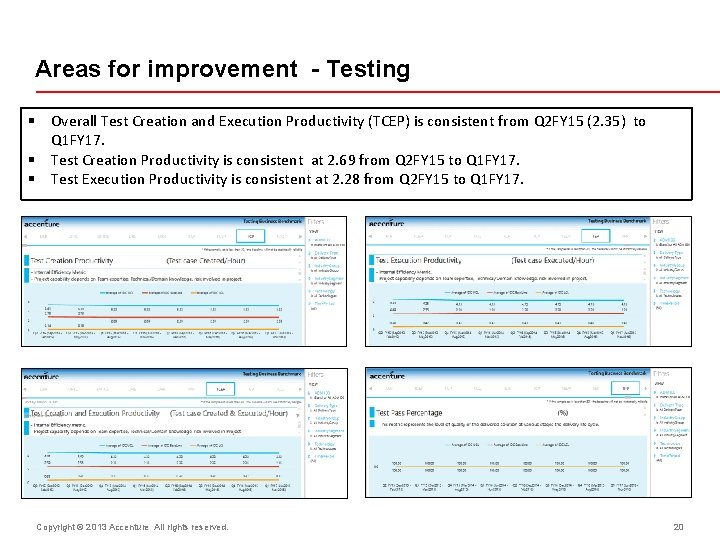

Areas for improvement - Testing
§ Overall Test Creation and Execution Productivity (TCEP) has remained consistent at 2.35 from Q2 FY15 to Q1 FY17.
§ Test Creation Productivity has remained consistent at 2.69 from Q2 FY15 to Q1 FY17.
§ Test Execution Productivity has remained consistent at 2.28 from Q2 FY15 to Q1 FY17.

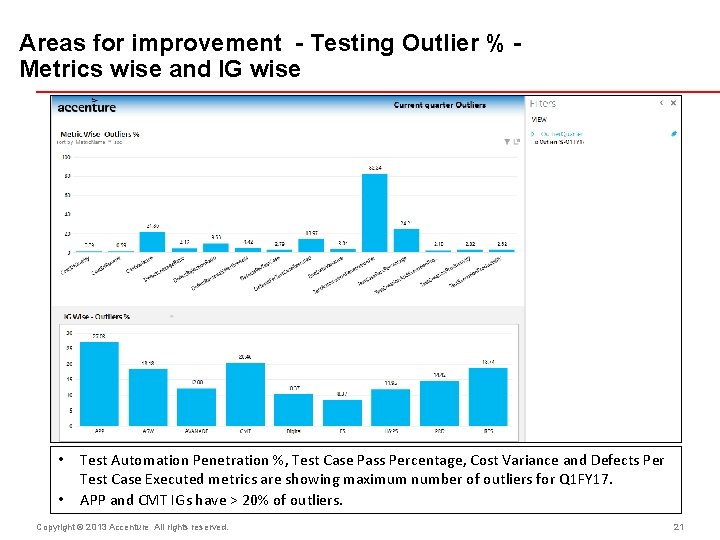

Areas for improvement - Testing: Outlier % Metric-wise and IG-wise
• Test Automation Penetration %, Test Case Pass Percentage, Cost Variance and Defects per Test Case Executed show the highest number of outliers for Q1 FY17.
• The APP and CMT IGs have more than 20% outliers.
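The outlier percentages on these slides are shares of each Industry Group's data points flagged as outliers. The Python sketch below computes that share per group and flags groups above the 20% level mentioned for APP and CMT; the outlier flags would come from a box plot screen like the one described in Step 4. The function name, group names and counts in the example are placeholders, not reported data.

```python
from collections import defaultdict

def outlier_share_by_group(records, threshold=0.20):
    """records: iterable of (industry_group, is_outlier) pairs.

    Returns ({group: outlier share}, sorted list of groups above threshold).
    """
    totals = defaultdict(int)
    outlier_counts = defaultdict(int)
    for group, is_outlier in records:
        totals[group] += 1
        if is_outlier:
            outlier_counts[group] += 1
    shares = {g: outlier_counts[g] / totals[g] for g in totals}
    flagged = sorted(g for g, share in shares.items() if share > threshold)
    return shares, flagged

# Placeholder data: IG-A has 3 outliers in 10 points, IG-B has 1 in 10.
records = [("IG-A", i < 3) for i in range(10)] + [("IG-B", i < 1) for i in range(10)]
shares, flagged = outlier_share_by_group(records)
print(shares, flagged)
```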

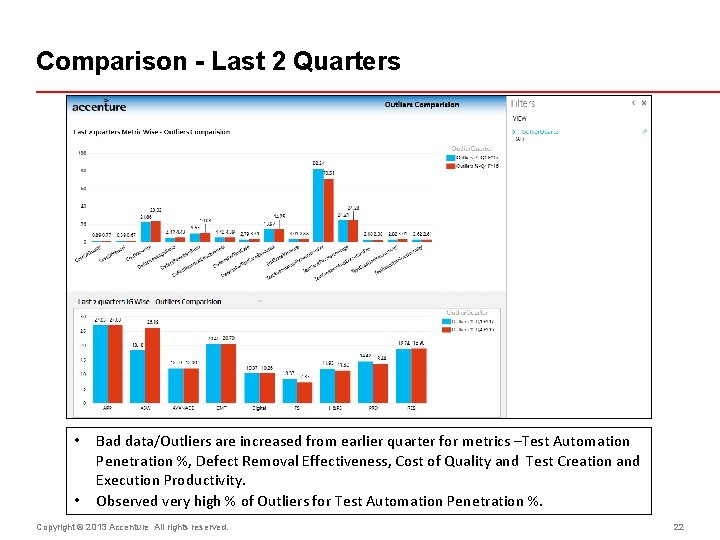

Comparison - Last 2 Quarters
• Bad data/outliers increased from the earlier quarter for the following metrics: Test Automation Penetration %, Defect Removal Effectiveness, Cost of Quality and Test Creation and Execution Productivity.
• A very high percentage of outliers was observed for Test Automation Penetration %.

Key Highlights – Application Maintenance - Managed Delivery
§ Critical SLA Compliance and SLA Compliance are maintained at 100%.
§ The majority of baselines are retained from earlier quarters since there is no significant change. Other baselines are improving quarter on quarter (e.g. Average Effort of P5 Problem Requests and Average Effort of Service Requests).
Productivity: Average Effort/Ticket (Incidents, Work Requests, Service Requests, Problem Requests)
• Baselines that retained the previous quarter's baselines: Organization - 57%, Industry Group - 81%, Technology - 82%
• Baselines that have shown improvement: Organization - 29%, Industry Group - 10%, Technology - 9%
Backlog Processing Efficiency (Incidents, Work Requests, Problem Requests)
• Baselines that retained the previous quarter's baselines: Organization - 77%, Industry Group - 77%, Technology - 81%
• Baselines that have shown improvement: Organization - 15%, Industry Group - 13%, Technology - 11%
Schedule: Response Time Performance (Incident, Problem & Work Request)
• Baselines that retained the previous quarter's baselines: Organization - 100%, Industry Group - 92%, Technology - 97%
• Baselines that have shown improvement: Industry Group - 5%, Technology - 2%
Resolution Time Performance (Incident, Problem & Work Request)
• Baselines that retained the previous quarter's baselines: Organization - 100%, Industry Group - 94%, Technology - 93%
• Baselines that have shown improvement: Industry Group - 3%, Technology - 5%
Quality: Reopened metric for Incidents, Problems & Work Requests
• Baselines that retained the previous quarter's baselines: Organization - 100%, Industry Group - 94%, Technology - 96%
• Baselines that have shown improvement: Industry Group - 3%, Technology - 1%
Copyright © 2016 Accenture All Rights Reserved.
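The retained and improved percentages above are shares of the baselines at each level, classified by the outcome of the quarter-on-quarter comparison. A small Python sketch of that tally follows; the function name, the 'declined' label for any remaining baselines and the example counts are assumptions, not reported figures.

```python
from collections import Counter

def outcome_shares(outcomes):
    """outcomes: one label per baseline, e.g. 'retained', 'improved', 'declined'.

    Returns each label's share of all baselines as a whole-number percentage.
    """
    counts = Counter(outcomes)
    total = sum(counts.values())
    return {label: round(100 * n / total) for label, n in counts.items()}

# Hypothetical level with 12 baselines.
level_outcomes = ["retained"] * 8 + ["improved"] * 3 + ["declined"]
print(outcome_shares(level_outcomes))
```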

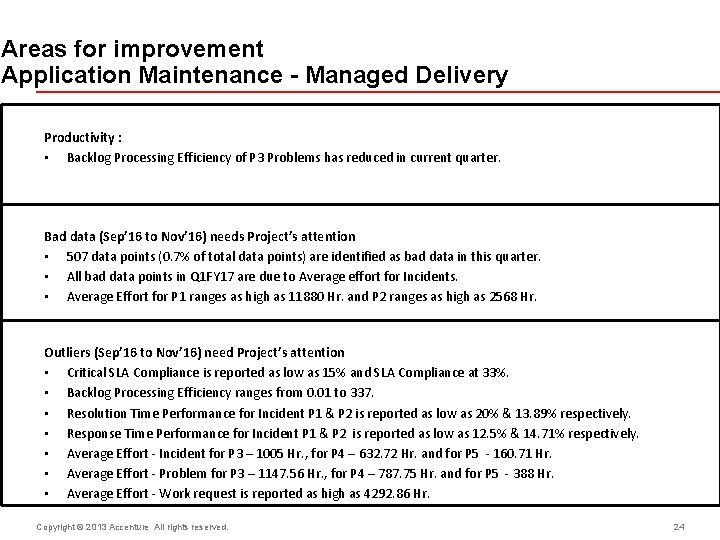

Areas for improvement – Application Maintenance - Managed Delivery
Productivity:
• Backlog Processing Efficiency of P3 Problems has reduced in the current quarter.
Bad data (Sep'16 to Nov'16) needs projects' attention
• 507 data points (0.7% of total data points) were identified as bad data in this quarter.
• All bad data points in Q1 FY17 are due to Average Effort for Incidents.
• Average Effort for P1 ranges as high as 11880 hr and for P2 as high as 2568 hr.
Outliers (Sep'16 to Nov'16) need projects' attention
• Critical SLA Compliance is reported as low as 15% and SLA Compliance as low as 33%.
• Backlog Processing Efficiency ranges from 0.01 to 337.
• Resolution Time Performance for Incident P1 & P2 is reported as low as 20% & 13.89% respectively.
• Response Time Performance for Incident P1 & P2 is reported as low as 12.5% & 14.71% respectively.
• Average Effort - Incident: P3 – 1005 hr, P4 – 632.72 hr and P5 – 160.71 hr.
• Average Effort - Problem: P3 – 1147.56 hr, P4 – 787.75 hr and P5 – 388 hr.
• Average Effort - Work Request is reported as high as 4292.86 hr.

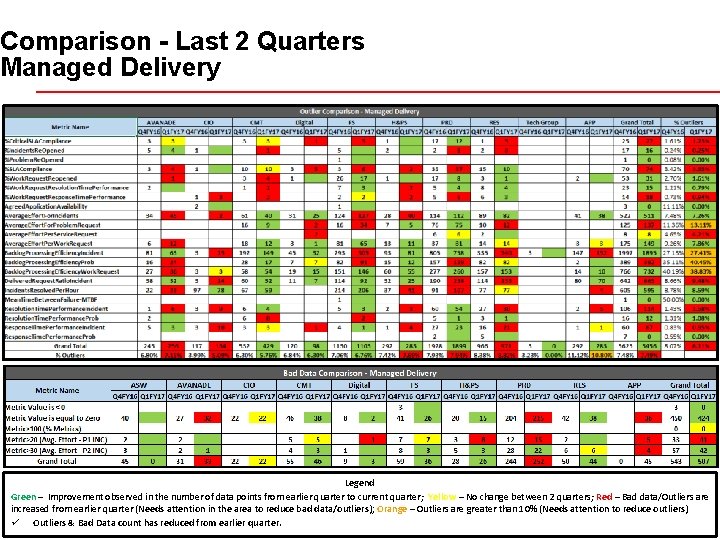

Comparison - Last 2 Quarters: Managed Delivery
Legend
• Green – Improvement observed in the number of data points from the earlier quarter to the current quarter
• Yellow – No change between the 2 quarters
• Red – Bad data/outliers have increased from the earlier quarter (needs attention to reduce bad data/outliers)
• Orange – Outliers are greater than 10% (needs attention to reduce outliers)
✓ The outlier and bad data counts have reduced from the earlier quarter.

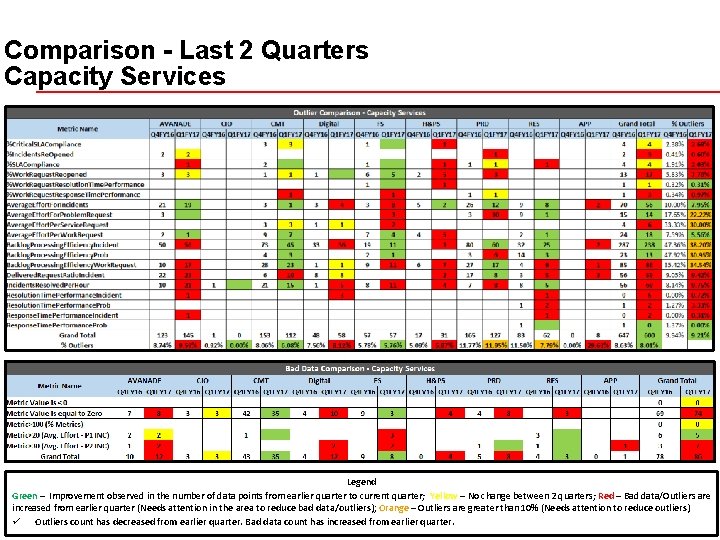

Comparison - Last 2 Quarters: Capacity Services
Legend
• Green – Improvement observed in the number of data points from the earlier quarter to the current quarter
• Yellow – No change between the 2 quarters
• Red – Bad data/outliers have increased from the earlier quarter (needs attention to reduce bad data/outliers)
• Orange – Outliers are greater than 10% (needs attention to reduce outliers)
✓ The outlier count has decreased from the earlier quarter. The bad data count has increased from the earlier quarter.
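The legend on the two comparison slides is a simple rule set, sketched below in Python. Two interpretations are assumptions: "improvement" (Green) is read as fewer bad data/outlier points than in the earlier quarter, and Orange (outliers above 10%) is given precedence when it overlaps another colour. The function name `legend_colour` is hypothetical.

```python
def legend_colour(prev_count, curr_count, outlier_pct):
    """Classify one metric's bad data/outlier count using the slide legend.

    prev_count / curr_count: bad data or outlier points in the earlier and
    current quarter. outlier_pct: current outliers as a percentage.
    Assumption: Orange takes precedence over the count comparison.
    """
    if outlier_pct > 10:
        return "Orange"  # outliers greater than 10%
    if curr_count < prev_count:
        return "Green"   # fewer bad data/outlier points than last quarter
    if curr_count == prev_count:
        return "Yellow"  # no change between the 2 quarters
    return "Red"         # bad data/outliers increased

print(legend_colour(prev_count=10, curr_count=8, outlier_pct=5.0))
```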

Follow-ups, Questions and Answers

- Slides: 27