Accelerating an N-Body Simulation

Anuj Kalia, Maxeler Technologies

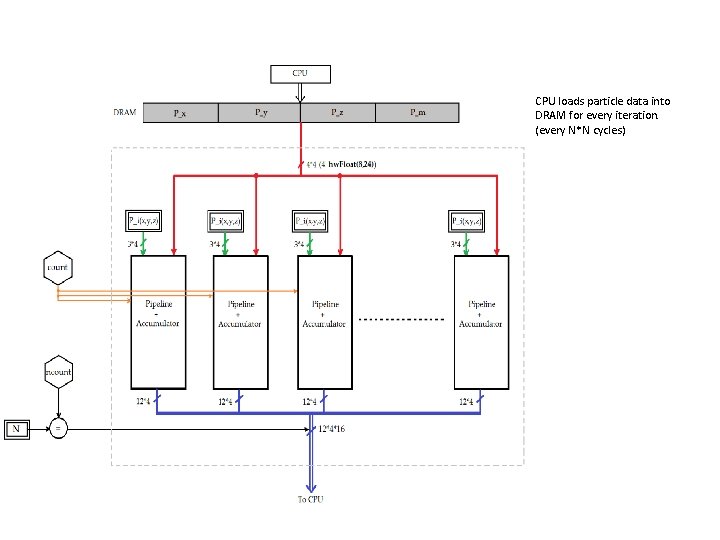

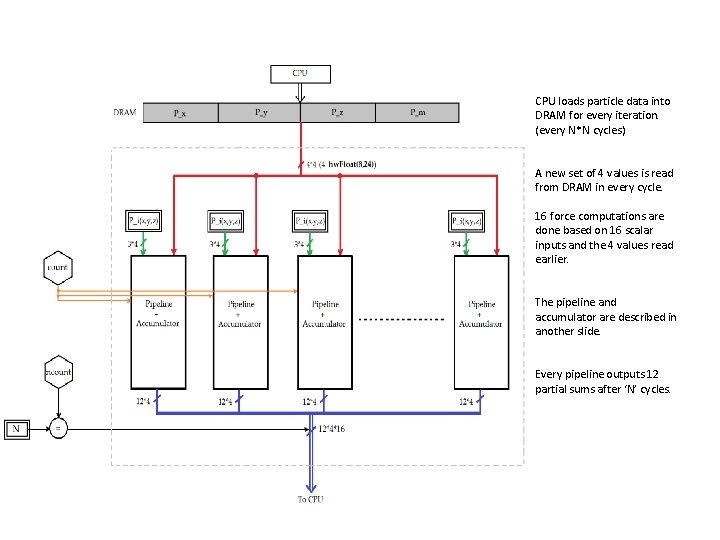

The CPU loads particle data into DRAM for every iteration (every N*N cycles).

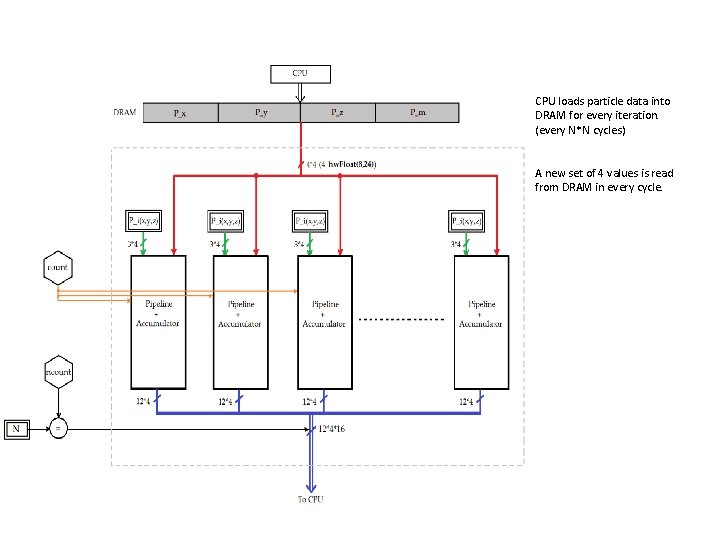

A new set of 4 values is read from DRAM in every cycle.

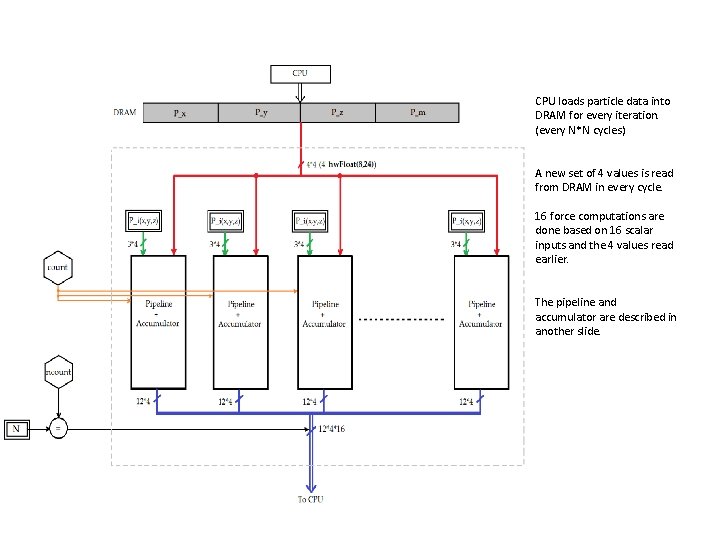

16 force computations are done based on 16 scalar inputs and the 4 values read earlier. The pipeline and accumulator are described in another slide.

Every pipeline outputs 12 partial sums after N cycles.

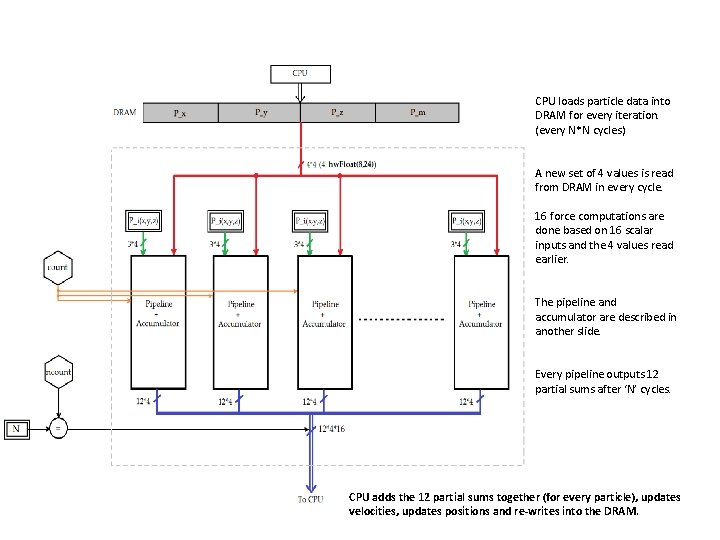

The CPU adds the 12 partial sums together (for every particle), updates velocities, updates positions and rewrites the data into DRAM.

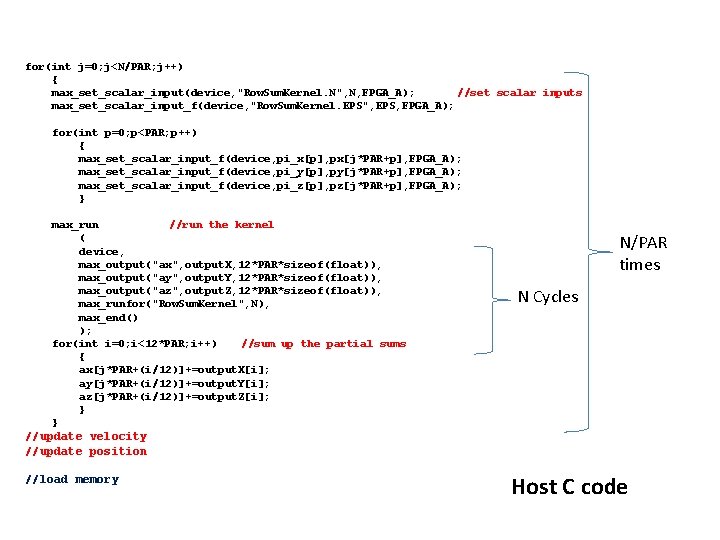

Host C code:

```c
// Outer loop: executed N/PAR times, one batch of PAR particles per pass.
for (int j = 0; j < N / PAR; j++) {
    // Set scalar inputs.
    max_set_scalar_input(device, "RowSumKernel.N", N, FPGA_A);
    max_set_scalar_input_f(device, "RowSumKernel.EPS", EPS, FPGA_A);
    for (int p = 0; p < PAR; p++) {
        max_set_scalar_input_f(device, pi_x[p], px[j * PAR + p], FPGA_A);
        max_set_scalar_input_f(device, pi_y[p], py[j * PAR + p], FPGA_A);
        max_set_scalar_input_f(device, pi_z[p], pz[j * PAR + p], FPGA_A);
    }

    // Run the kernel for N cycles.
    max_run(
        device,
        max_output("ax", outputX, 12 * PAR * sizeof(float)),
        max_output("ay", outputY, 12 * PAR * sizeof(float)),
        max_output("az", outputZ, 12 * PAR * sizeof(float)),
        max_runfor("RowSumKernel", N),
        max_end()
    );

    // Sum up the partial sums: 12 per particle.
    for (int i = 0; i < 12 * PAR; i++) {
        ax[j * PAR + (i / 12)] += outputX[i];
        ay[j * PAR + (i / 12)] += outputY[i];
        az[j * PAR + (i / 12)] += outputZ[i];
    }
}

// update velocity
// update position
// load memory
```
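The velocity and position updates appear on the slide only as placeholders. One way they could be filled in is sketched below, assuming a semi-implicit Euler step with a fixed time step. The integrator choice, the `dt` parameter, the `vx`/`vy`/`vz` arrays and the function name are all assumptions, not taken from the slides. Because the host loop accumulates accelerations with `+=`, the sketch also clears them for the next iteration.

```c
#include <assert.h>
#include <stddef.h>

/* Advances n particles by one time step dt.
   Assumption: semi-implicit Euler, i.e. velocities are updated first and
   the new velocities are then used to move the positions.
   The acceleration arrays are zeroed afterwards, because the host loop
   accumulates into them with += on the next iteration. */
static void integrate(size_t n, float dt,
                      float *ax, float *ay, float *az,
                      float *vx, float *vy, float *vz,
                      float *px, float *py, float *pz)
{
    assert(dt > 0.0f);

    for (size_t i = 0; i < n; i++) {
        /* update velocity */
        vx[i] += ax[i] * dt;
        vy[i] += ay[i] * dt;
        vz[i] += az[i] * dt;

        /* update position */
        px[i] += vx[i] * dt;
        py[i] += vy[i] * dt;
        pz[i] += vz[i] * dt;

        /* reset accumulators for the next iteration */
        ax[i] = 0.0f;
        ay[i] = 0.0f;
        az[i] = 0.0f;
    }
}
```

After this step the updated `px`, `py` and `pz` would be written back to DRAM, which corresponds to the "load memory" placeholder.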

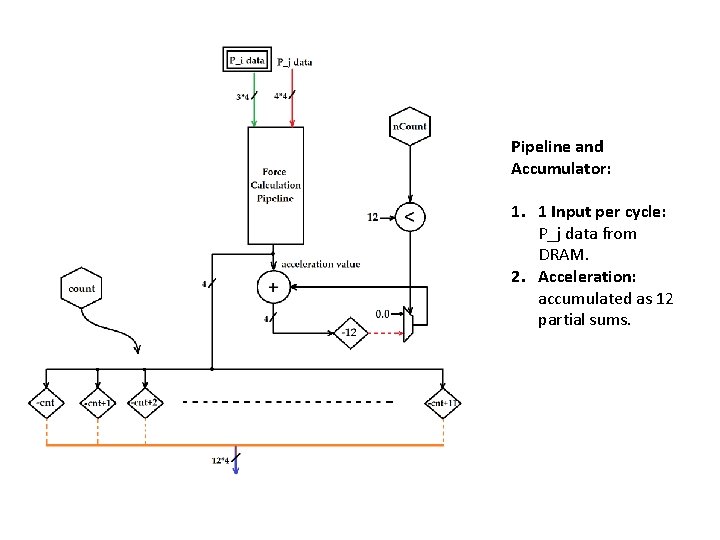

Pipeline and Accumulator:

1. 1 input per cycle: P_j data from DRAM.
2. Acceleration: accumulated as 12 partial sums.
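The accumulator can be modelled in software as below, assuming the 12 partial sums are filled round-robin, so that the term arriving in cycle k goes into slot k mod 12. The slides do not say why there are 12 slots. A common reason for such a design is that a pipelined floating-point adder cannot feed its result back within one cycle, but that is an assumption here, as are the function names.

```c
#include <assert.h>
#include <stddef.h>

#define NUM_PARTIALS 12

/* Software model of a round-robin accumulator (assumed scheme):
   the term arriving in cycle k is added into slot k % NUM_PARTIALS. */
static void accumulate_partials(const float *terms, size_t n,
                                float partial[NUM_PARTIALS])
{
    assert(terms != NULL || n == 0);

    for (size_t s = 0; s < NUM_PARTIALS; s++) {
        partial[s] = 0.0f;
    }
    for (size_t k = 0; k < n; k++) {
        partial[k % NUM_PARTIALS] += terms[k];
    }
}

/* Final reduction, done on the CPU in the host code. */
static float reduce_partials(const float partial[NUM_PARTIALS])
{
    float sum = 0.0f;
    for (size_t s = 0; s < NUM_PARTIALS; s++) {
        sum += partial[s];
    }
    return sum;
}
```

Summing in 12 interleaved groups changes the order of the floating-point additions, so the result can differ in the last bits from a plain sequential sum.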

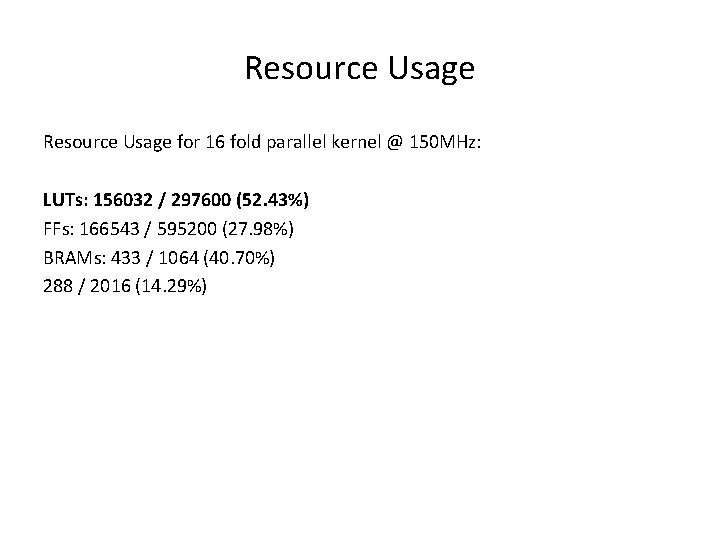

Resource Usage for 16-fold parallel kernel @ 150 MHz:

- LUTs: 156032 / 297600 (52.43%)
- FFs: 166543 / 595200 (27.98%)
- BRAMs: 433 / 1064 (40.70%)
- 288 / 2016 (14.29%)
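The clock rate and parallelism give a rough estimate of kernel time per iteration. The sketch below uses the counts from the host code (N/PAR kernel runs of N cycles each) and assumes one cycle per clock tick. It ignores pipeline fill, scalar-input setup, output transfer and the CPU-side update, so it is a lower bound rather than a measured figure. The function name is hypothetical.

```c
#include <assert.h>

/* Estimated kernel time for one simulation iteration:
   (n / par) kernel runs of n cycles each, at clock_hz cycles per second.
   Host-side overheads are not included. */
static double kernel_seconds_per_iteration(unsigned long long n,
                                           unsigned par,
                                           double clock_hz)
{
    assert(par > 0 && n % par == 0);
    assert(clock_hz > 0.0);

    unsigned long long cycles = (n / par) * n;
    return (double)cycles / clock_hz;
}
```

For example, `kernel_seconds_per_iteration(38400, 16, 150e6)` corresponds to 2400 runs of 38400 cycles, i.e. 92,160,000 cycles, or about 0.61 seconds per iteration.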

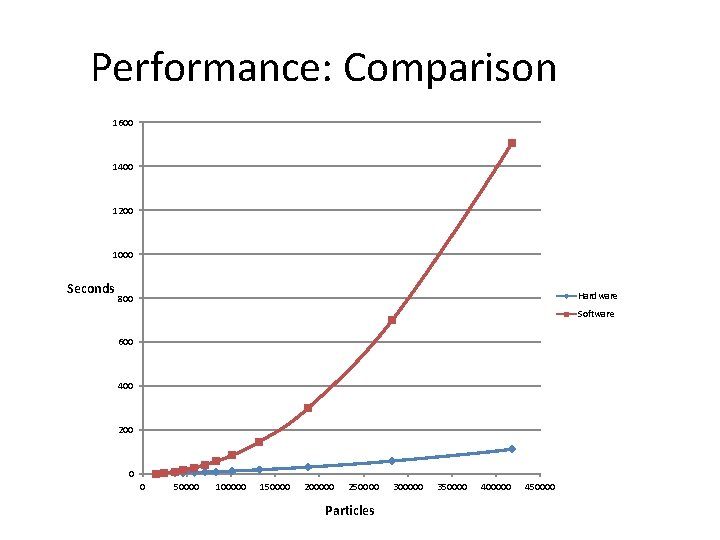

Performance: Comparison. Plot of run time in seconds (axis 0 to 1600) against number of particles (axis 0 to 450000), with one curve for hardware and one for software.

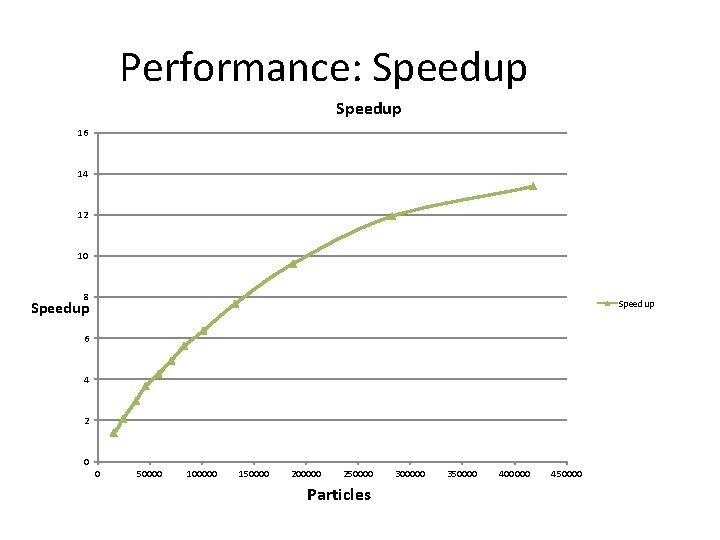

Performance: Speedup. Plot of speedup (axis 0 to 16) against number of particles (axis 0 to 450000).

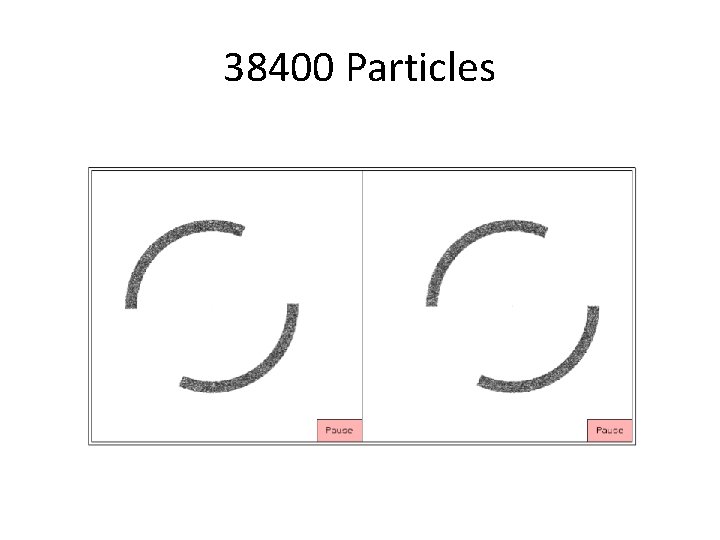

38400 Particles
