Absorbing Markov Chains

Thrasyvoulos Spyropoulos / spyropou@eurecom.fr, Eurecom, Sophia-Antipolis, 15 October 2012

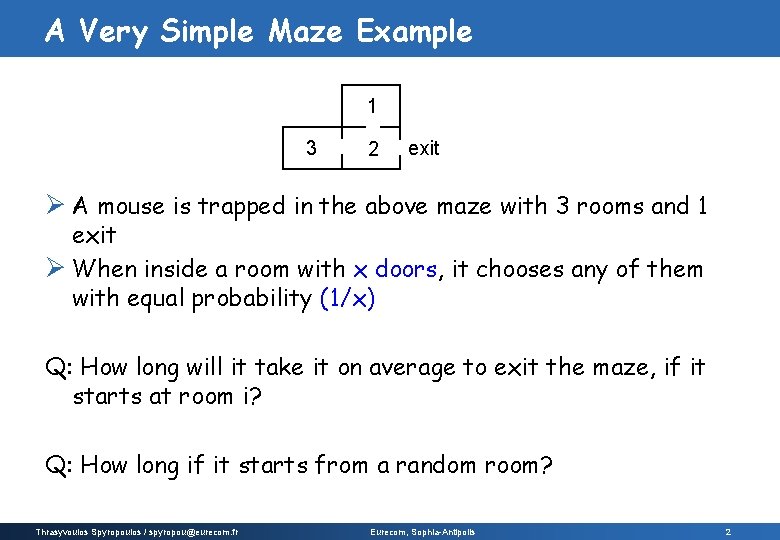

A Very Simple Maze Example

- A mouse is trapped in the above maze with 3 rooms and 1 exit.
- When inside a room with x doors, it chooses any of them with equal probability (1/x).

Q: How long will it take on average to exit the maze, if it starts at room i?

Q: How long if it starts from a random room?
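The mouse's behaviour can be estimated empirically with a short simulation. This is only a sketch: the door layout in `DOORS` (room 2 leads to rooms 1 and 3 and to the exit, rooms 1 and 3 lead only to room 2) is an assumption consistent with the first-step equations on the next slide, and the names `DOORS`, `steps_to_exit` and `estimate_mean` are illustrative.

```python
import random

# Assumed layout: each room maps to the places its doors lead to.
DOORS = {1: [2], 2: [1, 3, "exit"], 3: [2]}


def steps_to_exit(start, rng):
    """Simulate one walk and return the number of door crossings until exit."""
    room, steps = start, 0
    while room != "exit":
        room = rng.choice(DOORS[room])  # each of the x doors has probability 1/x
        steps += 1
    return steps


def estimate_mean(start, trials=100_000, seed=0):
    """Average the exit time over many independent simulated walks."""
    rng = random.Random(seed)
    return sum(steps_to_exit(start, rng) for _ in range(trials)) / trials


if __name__ == "__main__":
    for room in (1, 2, 3):
        print("room", room, "estimated mean exit time:", round(estimate_mean(room), 2))
```

The estimates are noisy, so they only approximate the exact expectations.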

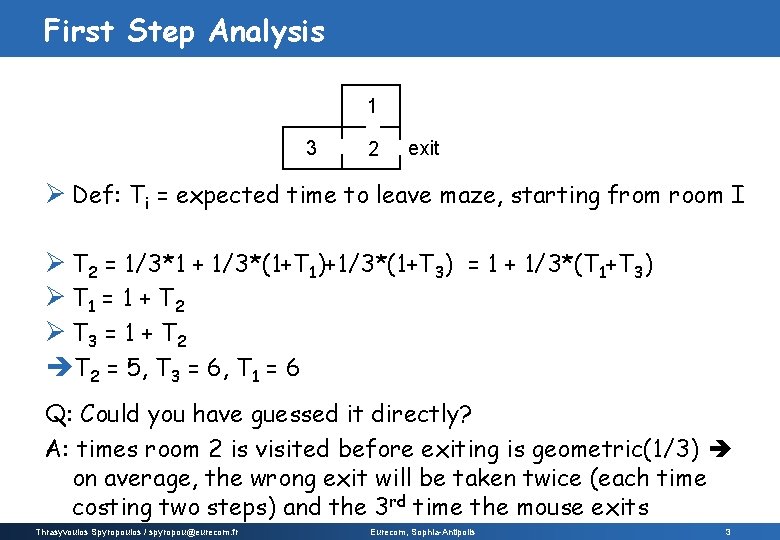

First Step Analysis

- Def: $T_i$ = expected time to leave the maze, starting from room $i$
- $T_2 = \frac{1}{3} \cdot 1 + \frac{1}{3}(1 + T_1) + \frac{1}{3}(1 + T_3) = 1 + \frac{1}{3}(T_1 + T_3)$
- $T_1 = 1 + T_2$
- $T_3 = 1 + T_2$

Solution: $T_2 = 5$, $T_3 = 6$, $T_1 = 6$

Q: Could you have guessed it directly?

A: The number of times room 2 is visited before exiting is geometric(1/3), with mean 3. On average, the wrong door is taken twice (each time costing two steps), and on the 3rd visit the mouse exits: $2 \cdot 2 + 1 = 5$ steps starting from room 2.
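Spelled out, the substitution behind these values is short. The last line is an addition that assumes the "random room" of the earlier question is chosen uniformly among the three rooms, which the slides do not state.

$$
\begin{aligned}
T_2 &= 1 + \tfrac{1}{3}\big[(1 + T_2) + (1 + T_2)\big] = \tfrac{5}{3} + \tfrac{2}{3}\,T_2 \;\Rightarrow\; T_2 = 5,\\
T_1 &= T_3 = 1 + T_2 = 6,\\
\tfrac{1}{3}\,(T_1 + T_2 + T_3) &= \tfrac{17}{3} \approx 5.67 .
\end{aligned}
$$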

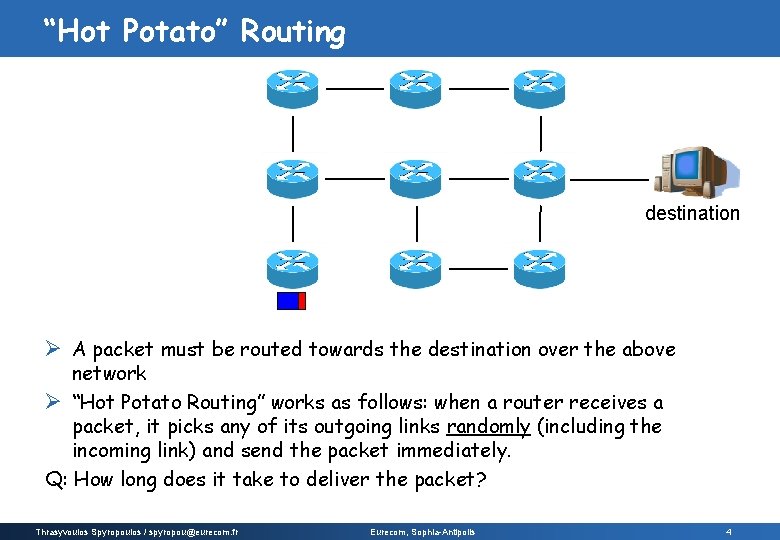

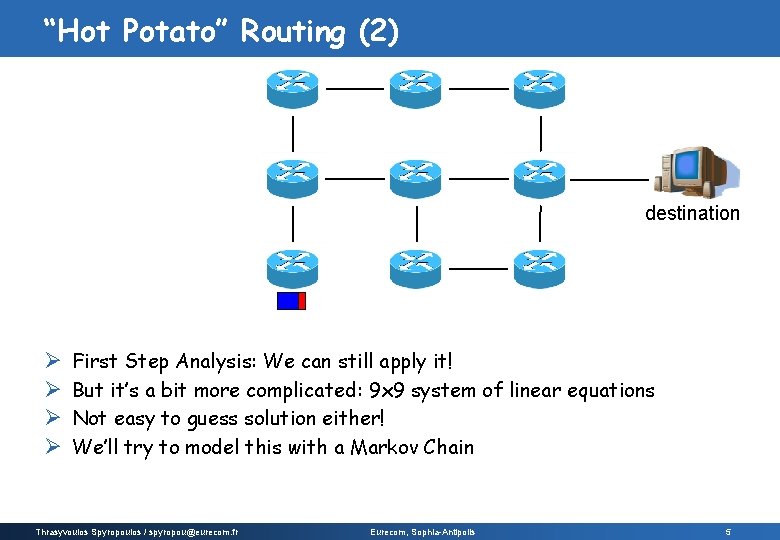

“Hot Potato” Routing

- A packet must be routed towards the destination over the above network.
- “Hot Potato Routing” works as follows: when a router receives a packet, it picks any of its outgoing links at random (including the incoming link) and sends the packet immediately.

Q: How long does it take to deliver the packet?

“Hot Potato” Routing (2)

- First Step Analysis: we can still apply it!
- But it’s a bit more complicated: a 9 x 9 system of linear equations.
- Not easy to guess the solution either!

We’ll try to model this with a Markov chain.

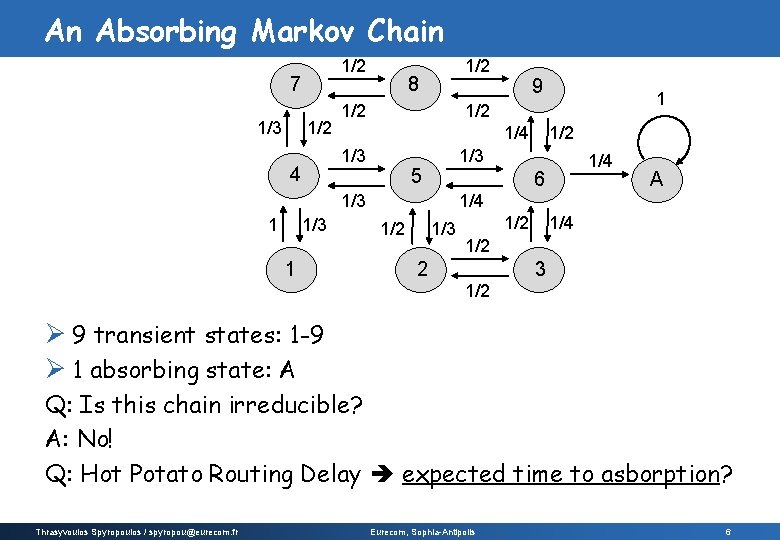

An Absorbing Markov Chain

- 9 transient states: 1-9
- 1 absorbing state: A

Q: Is this chain irreducible?

A: No!

Q: Does the hot potato routing delay correspond to the expected time to absorption?

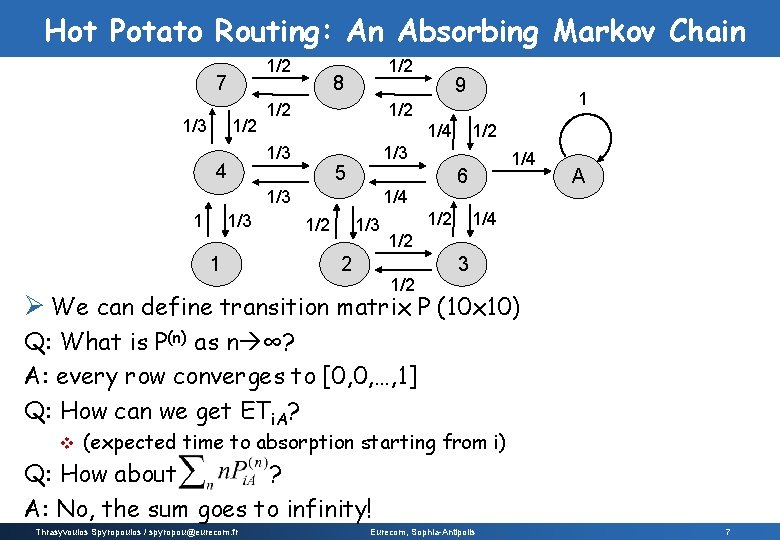

Hot Potato Routing: An Absorbing Markov Chain

- We can define the transition matrix $P$ (10 x 10).

Q: What is $P^{(n)}$ as $n \to \infty$?

A: Every row converges to $[0, 0, \dots, 1]$.

Q: How can we get $E[T_{iA}]$ (the expected time to absorption starting from $i$)?

Q: How about [expression missing]?

A: No, the sum goes to infinity!

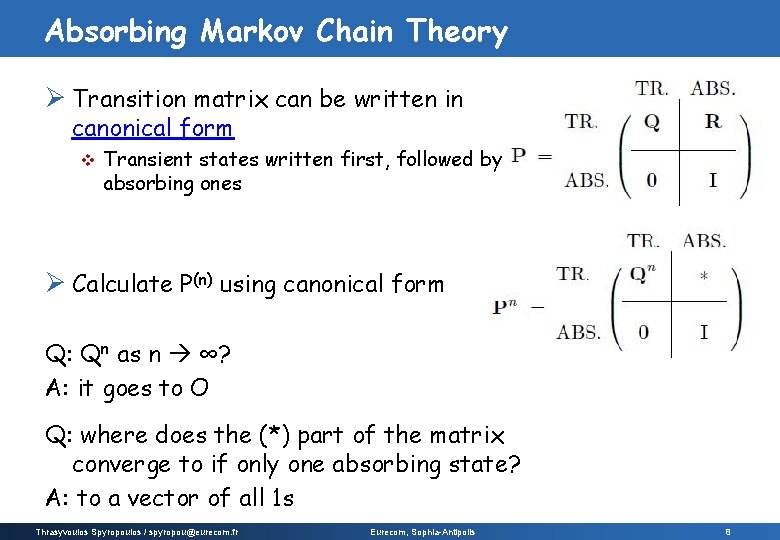

Absorbing Markov Chain Theory

- The transition matrix can be written in canonical form
  - Transient states are written first, followed by absorbing ones

$$P = \begin{pmatrix} Q & R \\ 0 & I \end{pmatrix}$$

- Calculate $P^{(n)}$ using the canonical form

$$P^{(n)} = \begin{pmatrix} Q^n & * \\ 0 & I \end{pmatrix}$$

Q: What happens to $Q^n$ as $n \to \infty$?

A: It goes to the zero matrix.

Q: Where does the $(*)$ part of the matrix converge to if there is only one absorbing state?

A: To a vector of all 1s.
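As a small numerical illustration, the maze chain from the earlier slides can be written in canonical form, with rooms 1, 2, 3 as transient states and the exit as the single absorbing state. The sketch below raises that matrix to increasing powers; the helper names `matmul` and `matpow` are illustrative, and the door layout is the one implied by the first-step equations.

```python
from fractions import Fraction as F

# Maze chain in canonical form: transient rooms 1, 2, 3 first, then the exit.
P = [
    [F(0),    F(1), F(0),    F(0)],     # room 1 -> room 2
    [F(1, 3), F(0), F(1, 3), F(1, 3)],  # room 2 -> room 1, room 3, or exit
    [F(0),    F(1), F(0),    F(0)],     # room 3 -> room 2
    [F(0),    F(0), F(0),    F(1)],     # the exit is absorbing
]


def matmul(A, B):
    """Plain matrix product of two lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def matpow(A, n):
    """n-th power of a square matrix by repeated multiplication."""
    size = len(A)
    result = [[F(int(i == j)) for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = matmul(result, A)
    return result


if __name__ == "__main__":
    for n in (1, 10, 50):
        Pn = matpow(P, n)
        # Largest entry of the Q^n block (top-left 3 x 3) and the (*) column.
        q_max = max(float(Pn[i][j]) for i in range(3) for j in range(3))
        star = [round(float(Pn[i][3]), 4) for i in range(3)]
        print("n =", n, "max entry of Q^n:", round(q_max, 6), "(*) column:", star)
```

The top-left block shrinks towards zero while the last column approaches all ones, matching the two answers above.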

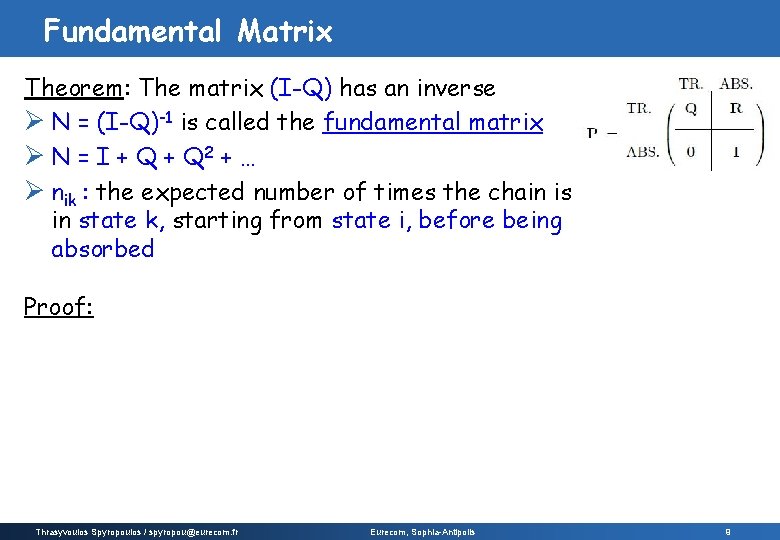

Fundamental Matrix

Theorem: The matrix $(I - Q)$ has an inverse.

- $N = (I - Q)^{-1}$ is called the fundamental matrix
- $N = I + Q + Q^2 + \dots$
- $n_{ik}$: the expected number of times the chain is in state $k$, starting from state $i$, before being absorbed

Proof:
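As a numerical illustration rather than a proof, the sketch below computes the fundamental matrix for the maze example (rooms 1, 2, 3 as transient states) in two ways: by inverting $I - Q$ exactly, and by summing the first terms of the series $I + Q + Q^2 + \dots$. The helpers `inverse`, `fundamental_matrix` and `series_sum` are illustrative names, and Gauss-Jordan elimination is just one convenient way to invert a small matrix.

```python
from fractions import Fraction as F

# Transient part of the maze chain (rooms 1, 2, 3).
Q = [[F(0),    F(1), F(0)],
     [F(1, 3), F(0), F(1, 3)],
     [F(0),    F(1), F(0)]]


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def inverse(A):
    """Invert a square matrix by Gauss-Jordan elimination (exact with Fractions)."""
    n = len(A)
    aug = [list(row) + [F(int(i == j)) for j in range(n)] for i, row in enumerate(A)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        piv = aug[col][col]
        aug[col] = [x / piv for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def fundamental_matrix(Q):
    """N = (I - Q)^(-1)."""
    n = len(Q)
    return inverse([[F(int(i == j)) - Q[i][j] for j in range(n)] for i in range(n)])


def series_sum(Q, terms):
    """Partial sum I + Q + Q^2 + ... with the given number of terms."""
    n = len(Q)
    power = [[F(int(i == j)) for j in range(n)] for i in range(n)]  # Q^0
    total = [[F(0)] * n for _ in range(n)]
    for _ in range(terms):
        total = [[t + p for t, p in zip(tr, pr)] for tr, pr in zip(total, power)]
        power = matmul(power, Q)
    return total


if __name__ == "__main__":
    N = fundamental_matrix(Q)
    approx = series_sum(Q, 100)
    for exact_row, approx_row in zip(N, approx):
        print([str(x) for x in exact_row], [round(float(x), 4) for x in approx_row])
```

Each entry of the result can be read as an expected visit count: for instance, the middle column gives the expected number of visits to room 2 from each starting room.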

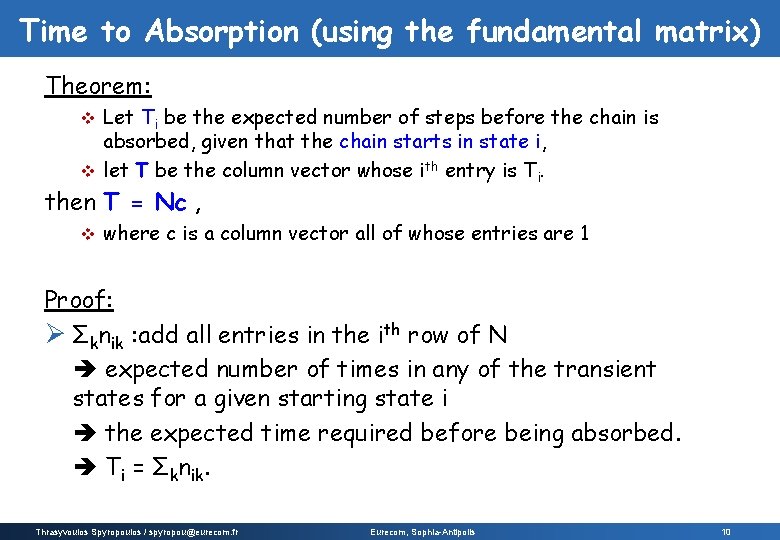

Time to Absorption (using the fundamental matrix)

Theorem: Let $T_i$ be the expected number of steps before the chain is absorbed, given that the chain starts in state $i$.

- Let $T$ be the column vector whose $i$th entry is $T_i$.
- Then $T = Nc$,
- where $c$ is a column vector all of whose entries are 1.

Proof:

- $\sum_k n_{ik}$: add all entries in the $i$th row of $N$.
- This is the expected number of times the chain is in any of the transient states, for a given starting state $i$.
- That is the expected time required before being absorbed: $T_i = \sum_k n_{ik}$.
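For the maze example, the theorem can be checked in a few lines. The matrix `N` below was worked out by hand for this illustration (it is not given in the slides), so the sketch first verifies that it really is the inverse of $I - Q$ before taking row sums. The function names are illustrative.

```python
from fractions import Fraction as F

# Transient part of the maze chain (rooms 1, 2, 3).
Q = [[F(0),    F(1), F(0)],
     [F(1, 3), F(0), F(1, 3)],
     [F(0),    F(1), F(0)]]

# Candidate fundamental matrix for the maze (hand-computed; verified below).
N = [[2, 3, 1],
     [1, 3, 1],
     [1, 3, 2]]


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def is_fundamental_matrix(N, Q):
    """Check that (I - Q) N equals the identity matrix."""
    n = len(Q)
    identity = [[int(i == j) for j in range(n)] for i in range(n)]
    i_minus_q = [[identity[i][j] - Q[i][j] for j in range(n)] for i in range(n)]
    return matmul(i_minus_q, N) == identity


def absorption_times(N):
    """T = N c with c the all-ones column vector, i.e. the row sums of N."""
    return [sum(row) for row in N]


if __name__ == "__main__":
    print("N is the inverse of I - Q:", is_fundamental_matrix(N, Q))
    print("T =", absorption_times(N))
```

The row sums reproduce the values obtained by first step analysis for rooms 1, 2 and 3.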

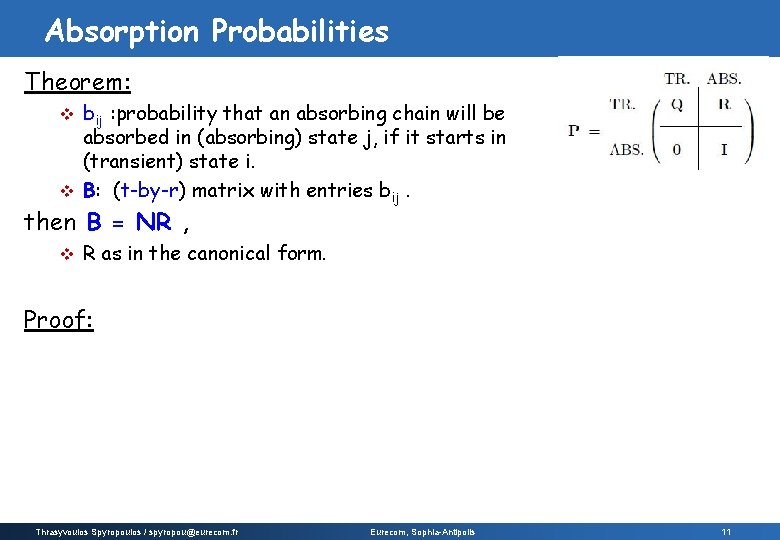

Absorption Probabilities

Theorem: Let $b_{ij}$ be the probability that an absorbing chain will be absorbed in (absorbing) state $j$, if it starts in (transient) state $i$.

- Let $B$ be the ($t$-by-$r$) matrix with entries $b_{ij}$.
- Then $B = NR$,
- with $R$ as in the canonical form.

Proof:
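As an illustration rather than a proof, the result needs a chain with more than one absorbing state, which the maze does not have. The sketch below therefore uses a hypothetical example that is not in the slides: a symmetric random walk on positions 0 to 4, where 0 and 4 are absorbing. Instead of inverting $I - Q$, it approximates $B = NR$ by iterating $B \leftarrow R + QB$ from $B = 0$, which accumulates the series $(I + Q + Q^2 + \dots)R$. All names are illustrative.

```python
# Hypothetical example (not from the slides): symmetric random walk on 0..4.
# Transient states are positions 1, 2, 3; absorbing states are 0 and 4.
Q = [[0.0, 0.5, 0.0],
     [0.5, 0.0, 0.5],
     [0.0, 0.5, 0.0]]
R = [[0.5, 0.0],   # from 1: absorbed at 0 with probability 1/2
     [0.0, 0.0],   # from 2: no direct absorption
     [0.0, 0.5]]   # from 3: absorbed at 4 with probability 1/2


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def absorption_probabilities(Q, R, iterations=200):
    """Approximate B = N R by iterating B <- R + Q B, starting from B = 0."""
    B = [[0.0] * len(R[0]) for _ in R]
    for _ in range(iterations):
        QB = matmul(Q, B)
        B = [[r + qb for r, qb in zip(r_row, qb_row)] for r_row, qb_row in zip(R, QB)]
    return B


if __name__ == "__main__":
    for start, row in zip((1, 2, 3), absorption_probabilities(Q, R)):
        print("start", start, "-> P(absorbed at 0), P(absorbed at 4):",
              [round(x, 4) for x in row])
```

Each row of the result sums to 1, since the walk is absorbed somewhere with probability 1.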

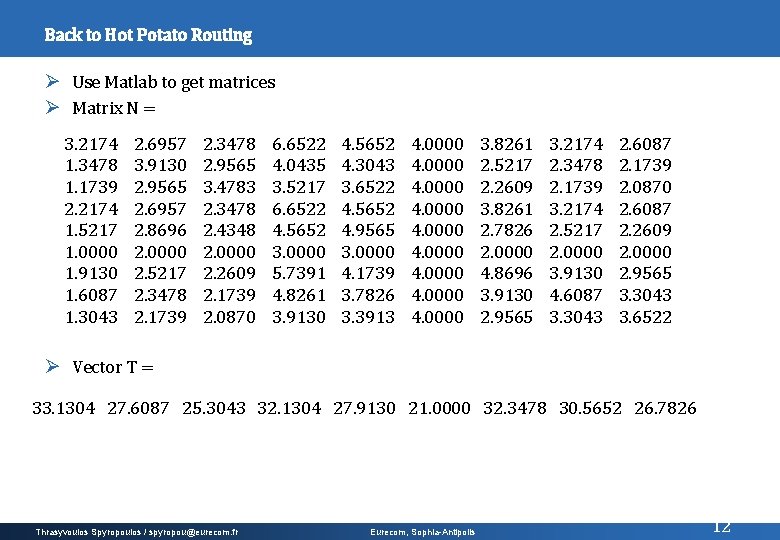

Back to Hot Potato Routing

- Use Matlab to get the matrices
- Matrix N =

$$
\begin{pmatrix}
3.2174 & 1.3478 & 1.1739 & 2.2174 & 1.5217 & 1.0000 & 1.9130 & 1.6087 & 1.3043 \\
2.6957 & 3.9130 & 2.9565 & 2.6957 & 2.8696 & 2.0000 & 2.5217 & 2.3478 & 2.1739 \\
2.3478 & 2.9565 & 3.4783 & 2.3478 & 2.4348 & 2.0000 & 2.2609 & 2.1739 & 2.0870 \\
6.6522 & 4.0435 & 3.5217 & 6.6522 & 4.5652 & 3.0000 & 5.7391 & 4.8261 & 3.9130 \\
4.5652 & 4.3043 & 3.6522 & 4.5652 & 4.9565 & 3.0000 & 4.1739 & 3.7826 & 3.3913 \\
4.0000 & 4.0000 & \dots \\
3.8261 & 2.5217 & 2.2609 & 3.8261 & 2.7826 & 2.0000 & 4.8696 & 3.9130 & 2.9565 \\
3.2174 & 2.3478 & 2.1739 & 3.2174 & 2.5217 & 2.0000 & 3.9130 & 4.6087 & 3.3043 \\
2.6087 & 2.1739 & 2.0870 & 2.6087 & 2.2609 & 2.0000 & 2.9565 & 3.3043 & 3.6522
\end{pmatrix}
$$

- Vector T =

$$
\begin{pmatrix}
33.1304 & 27.6087 & 25.3043 & 32.1304 & 27.9130 & 21.0000 & 32.3478 & 30.5652 & 26.7826
\end{pmatrix}^{\top}
$$

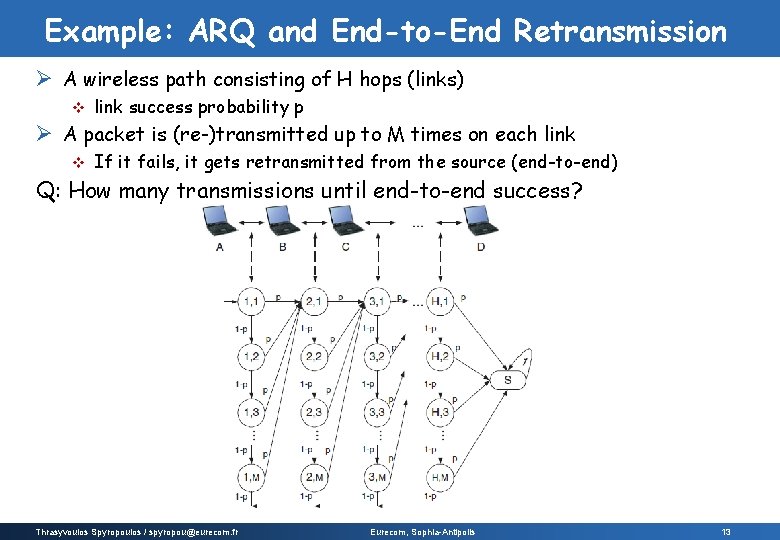

Example: ARQ and End-to-End Retransmission

- A wireless path consisting of H hops (links)
  - link success probability p
- A packet is (re-)transmitted up to M times on each link
  - If it fails, it gets retransmitted from the source (end-to-end)

Q: How many transmissions until end-to-end success?
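The slide only states the setup, so the following is one possible reading, not necessarily the author's model. It assumes that M counts all attempts on a link (first try included), that link outcomes are independent, and that after the M-th failure on any link the packet restarts at the source. A transient state is a hypothetical pair (h, m): the packet sits at the sender of link h after m failed attempts on that link. Delivery is the absorbing state, and each step of the chain is one transmission, so the answer is the expected time to absorption from state (0, 0). All function names are illustrative.

```python
from fractions import Fraction as F


def build_Q(H, M, p):
    """Transient block Q; state (h, m) has index h * M + m (an assumed encoding)."""
    size = H * M
    Q = [[F(0)] * size for _ in range(size)]
    for h in range(H):
        for m in range(M):
            i = h * M + m
            if h + 1 < H:
                Q[i][(h + 1) * M] += p       # success: move on to the next link
            # Success on the last link goes to the absorbing "delivered" state.
            if m + 1 < M:
                Q[i][h * M + m + 1] += 1 - p  # failure: retry on the same link
            else:
                Q[i][0] += 1 - p              # M-th failure: restart at the source
    return Q


def solve(A, b):
    """Solve A x = b by Gauss-Jordan elimination (exact with Fractions)."""
    n = len(A)
    aug = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        piv = aug[col][col]
        aug[col] = [x / piv for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[n] for row in aug]


def expected_transmissions(H, M, p):
    """Expected transmissions until delivery: first entry of T, where (I - Q) T = c."""
    Q = build_Q(H, M, p)
    n = len(Q)
    i_minus_q = [[F(int(i == j)) - Q[i][j] for j in range(n)] for i in range(n)]
    return solve(i_minus_q, [F(1)] * n)[0]


if __name__ == "__main__":
    for H, M in ((1, 3), (2, 1), (4, 3)):
        print("H =", H, "M =", M, "p = 1/2 ->", expected_transmissions(H, M, F(1, 2)))
```

Solving $(I - Q)T = c$ directly is equivalent to $T = Nc$ and avoids forming $N$ explicitly; with a single hop the result reduces to $1/p$ under these assumptions.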

- Slides: 13