A Strong Programming Model Bridging Distributed and MultiCore

A Strong Programming Model Bridging Distributed and Multi-Core Computing
Denis Caromel (Denis.Caromel@inria.fr)
1. Background: INRIA, Univ. Nice, OASIS Team
2. Programming: Parallel Programming Models:
a) Asynchronous Active Objects, Futures, Typed Groups
b) High-Level Abstractions (OO SPMD, Components, Skeletons)
3. Optimizing
4. Deploying

1. Background and Team at INRIA

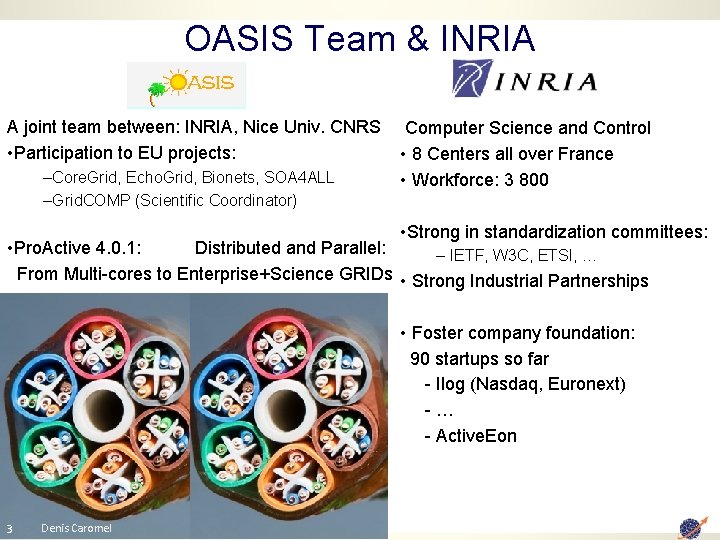

OASIS Team & INRIA
OASIS: a joint team between INRIA, Nice Univ., and CNRS
• Participation in EU projects: CoreGrid, EchoGrid, Bionets, SOA4ALL, GridCOMP (Scientific Coordinator)
• ProActive 4.0.1: Distributed and Parallel: From Multi-cores to Enterprise+Science GRIDs
INRIA: Computer Science and Control
• 8 Centers all over France
• Workforce: 3,800
• Strong in standardization committees: IETF, W3C, ETSI, …
• Strong Industrial Partnerships
• Foster company foundation: 90 startups so far: Ilog (Nasdaq, Euronext), …, ActiveEon

Startup Company Born of INRIA
Co-developing and providing support for the Open Source ProActive Parallel Suite
Worldwide Customers (EU, Boston USA, etc.)

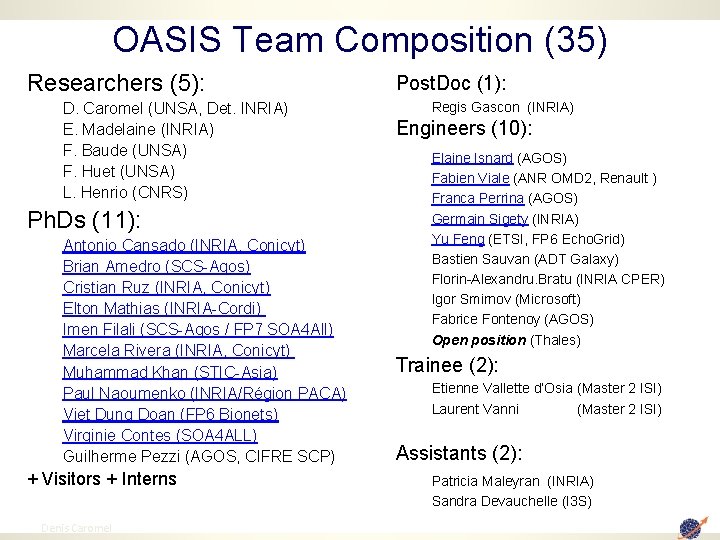

OASIS Team Composition (35)
Researchers (5): D. Caromel (UNSA, Det. INRIA), E. Madelaine (INRIA), F. Baude (UNSA), F. Huet (UNSA), L. Henrio (CNRS)
PhDs (11): Antonio Cansado (INRIA, Conicyt), Brian Amedro (SCS-Agos), Cristian Ruz (INRIA, Conicyt), Elton Mathias (INRIA-Cordi), Imen Filali (SCS-Agos / FP7 SOA4All), Marcela Rivera (INRIA, Conicyt), Muhammad Khan (STIC-Asia), Paul Naoumenko (INRIA/Région PACA), Viet Dung Doan (FP6 Bionets), Virginie Contes (SOA4ALL), Guilherme Pezzi (AGOS, CIFRE SCP)
PostDoc (1): Regis Gascon (INRIA)
Engineers (10): Elaine Isnard (AGOS), Fabien Viale (ANR OMD2, Renault), Franca Perrina (AGOS), Germain Sigety (INRIA), Yu Feng (ETSI, FP6 EchoGrid), Bastien Sauvan (ADT Galaxy), Florin-Alexandru Bratu (INRIA CPER), Igor Smirnov (Microsoft), Fabrice Fontenoy (AGOS), Open position (Thales)
Trainees (2): Etienne Vallette d’Osia (Master 2 ISI), Laurent Vanni (Master 2 ISI)
Assistants (2): Patricia Maleyran (INRIA), Sandra Devauchelle (I3S)
+ Visitors + Interns

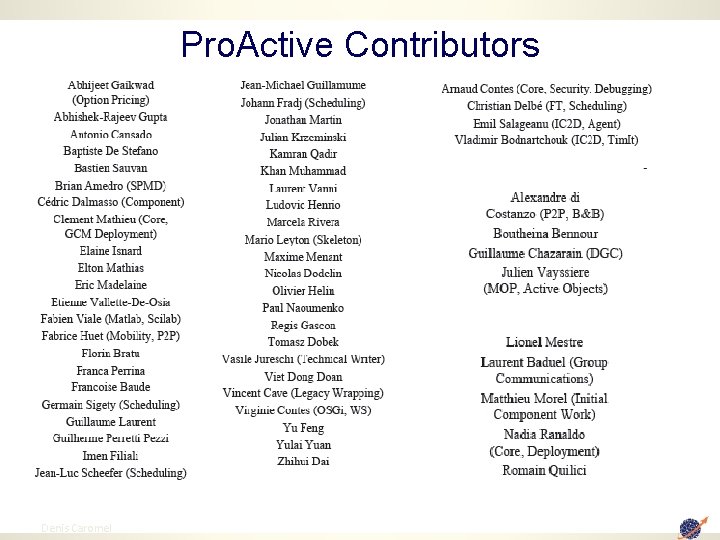

ProActive Contributors

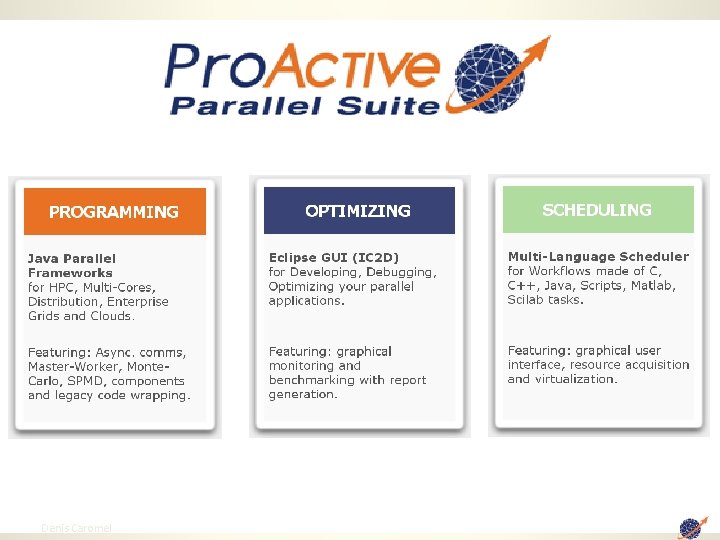

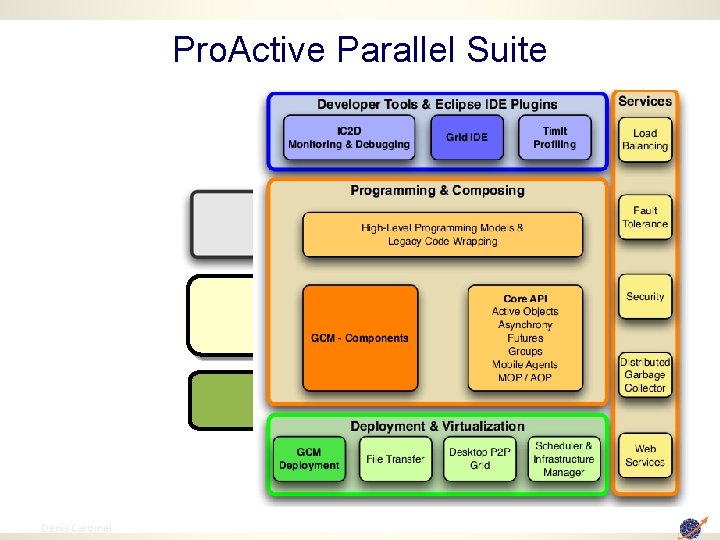

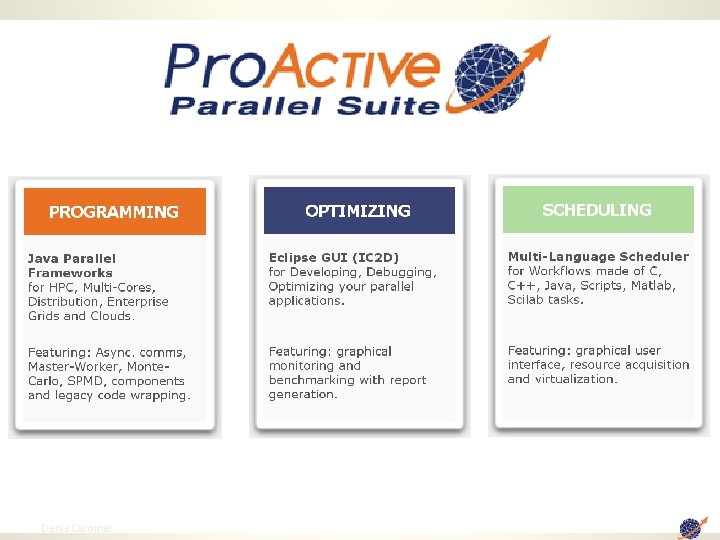

ProActive Parallel Suite: Architecture


ProActive Parallel Suite: Physical Infrastructure

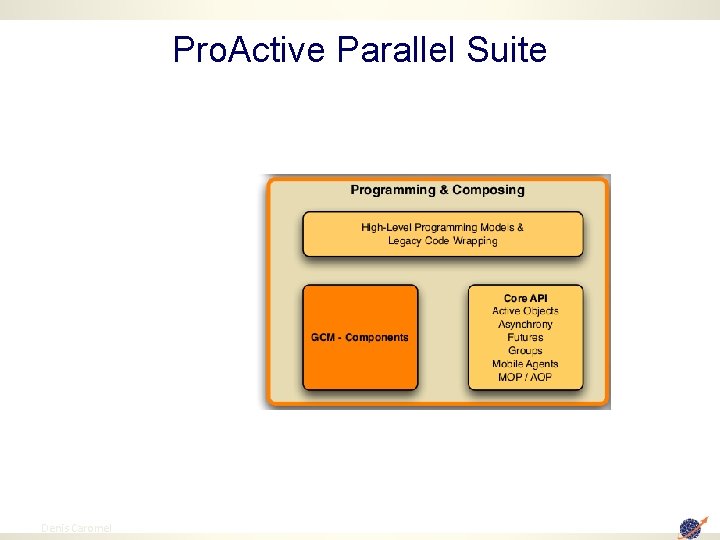

ProActive Parallel Suite

2. Programming Models for Parallel & Distributed

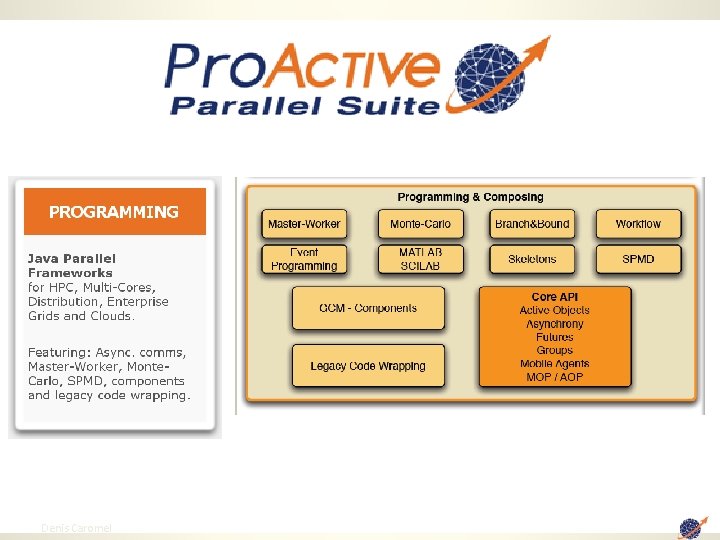

ProActive Parallel Suite

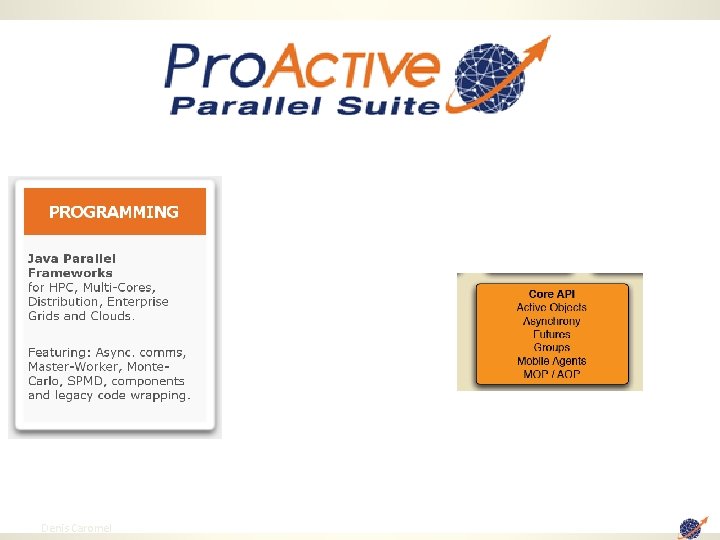

ProActive Parallel Suite

Distributed and Parallel Active Objects

![ProActive: Active objects](http://slidetodoc.com/presentation_image/09592cfe7b9e831494d679600eea0595/image-15.jpg)

ProActive: Active objects
A ag = newActive("A", […], VirtualNode)
V v1 = ag.foo(param);
V v2 = ag.bar(param);
...
v1.bar(); // Wait-By-Necessity
Diagram legend: Java Object, Active Object, Future Object, Proxy, Request Queue, Request, Thread
Wait-By-Necessity is a Dataflow Synchronization
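The asynchronous calls and the wait-by-necessity on this slide can be approximated in plain Java. The sketch below is only an illustration built on `java.util.concurrent`, not the ProActive API: the class names `ActiveObjectSketch` and `ActiveA`, and the bodies of `foo` and `bar`, are assumptions. ProActive futures are transparent (the caller sees a `V`), whereas here the future is explicit and `join()` marks the point where the caller needs the value.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical sketch: emulates an active object with plain java.util.concurrent.
// This is NOT the ProActive API; ProActive futures are transparent, these are explicit.
class ActiveObjectSketch {

    // An "active object": one private thread serves a FIFO queue of requests.
    static class ActiveA {
        private final ExecutorService requestQueue = Executors.newSingleThreadExecutor();

        // Asynchronous call: enqueues the request and returns a future at once.
        CompletableFuture<Integer> foo(int param) {
            return CompletableFuture.supplyAsync(() -> param * 2, requestQueue);
        }

        CompletableFuture<Integer> bar(int param) {
            return CompletableFuture.supplyAsync(() -> param + 1, requestQueue);
        }

        void terminate() {
            requestQueue.shutdown();
        }
    }

    static int run(int param) {
        ActiveA ag = new ActiveA();
        try {
            CompletableFuture<Integer> v1 = ag.foo(param); // does not block
            CompletableFuture<Integer> v2 = ag.bar(param); // does not block
            // The caller blocks only where a value is actually needed
            // (the explicit counterpart of wait-by-necessity).
            return v1.join() + v2.join();
        } finally {
            ag.terminate();
        }
    }

    public static void main(String[] args) {
        System.out.println(run(10)); // (10 * 2) + (10 + 1)
    }
}
```

The single-threaded executor plays the role of the request queue and its serving thread, so requests to one object never run concurrently with each other.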

First-Class Futures Update

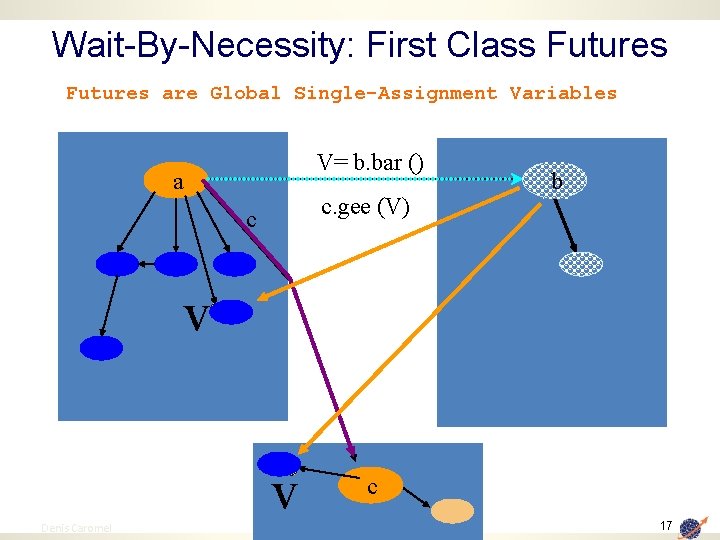

Wait-By-Necessity: First-Class Futures are Global Single-Assignment Variables
V = b.bar();
c.gee(V);
Diagram labels: active objects a, b, c; future value v
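A first-class future can be handed to another object before its value exists. The following sketch illustrates that idea with `CompletableFuture`; it is an assumption-laden emulation, not ProActive code, and the names `FirstClassFutureSketch`, `b`, `c` and the string values are hypothetical.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical sketch (not the ProActive API): a future created by a call on b
// is handed to c before its value exists; it is assigned exactly once.
class FirstClassFutureSketch {

    static String run() {
        ExecutorService b = Executors.newSingleThreadExecutor(); // request thread of b
        ExecutorService c = Executors.newSingleThreadExecutor(); // request thread of c
        try {
            // a calls b.bar(): a future V comes back immediately.
            CompletableFuture<String> v =
                    CompletableFuture.supplyAsync(() -> "value-from-b", b);

            // a calls c.gee(V) without waiting for V: the future itself is the argument.
            // The body runs on c's thread once V has been updated with b's result.
            CompletableFuture<String> result =
                    v.thenApplyAsync(value -> "c.gee(" + value + ")", c);

            return result.join(); // a waits only here, where it needs the final value
        } finally {
            b.shutdown();
            c.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```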

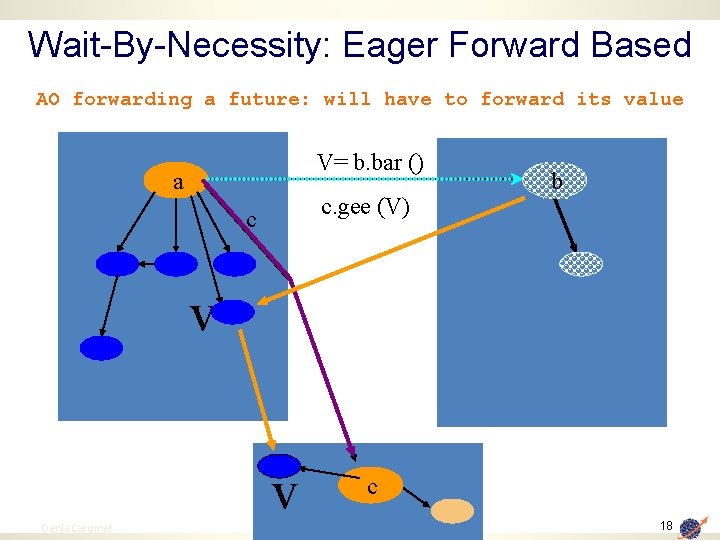

Wait-By-Necessity: Eager Forward Based
An AO forwarding a future will have to forward its value
V = b.bar();
c.gee(V);
Diagram labels: active objects a, b, c; future value v

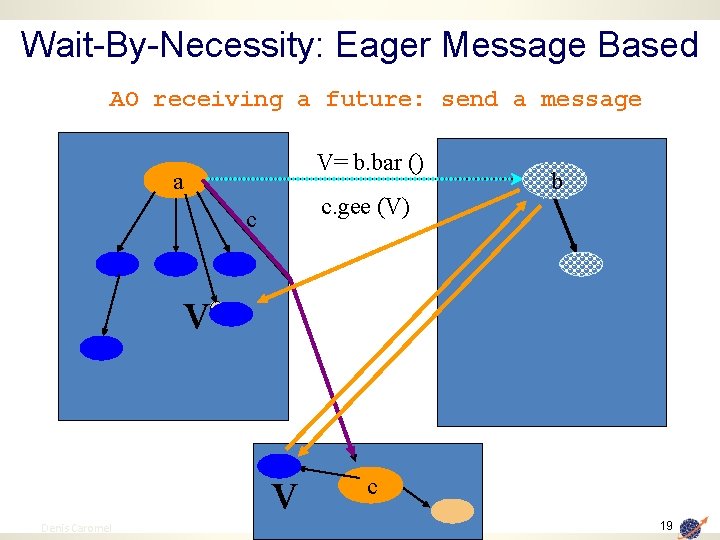

Wait-By-Necessity: Eager Message Based
AO receiving a future: send a message
V = b.bar();
c.gee(V);
Diagram labels: active objects a, b, c; future value v

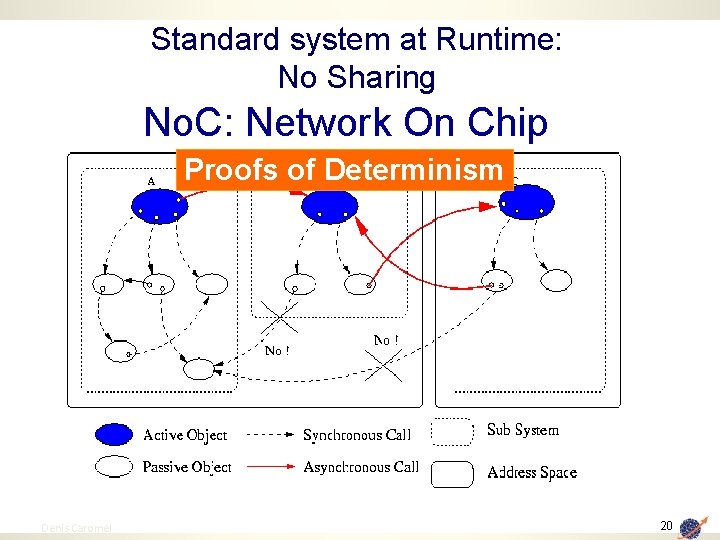

Standard system at Runtime: No Sharing
NoC: Network On Chip
Proofs of Determinism

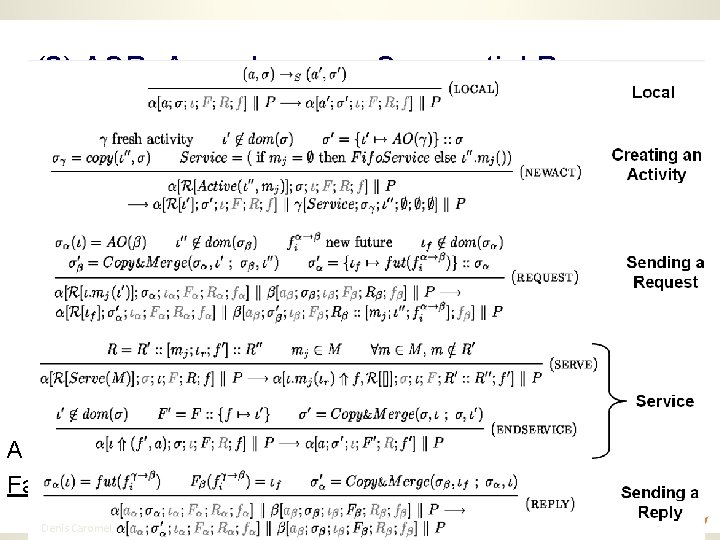

(2) ASP: Asynchronous Sequential Processes
ASP Confluence and Determinacy:
• Future updates can occur at any time
• Execution characterized by the order of request senders
• Determinacy of programs communicating over trees, …
A strong guide for implementation, Fault-Tolerance and checkpointing, Model-Checking, …

No Sharing even for Multi-Cores
Related Talks at PDP 2009, SS6 Session, today at 16:00:
• Impact of the Memory Hierarchy on Shared Memory Architectures in Multicore Programming Models. Rosa M. Badia, Josep M. Perez, Eduard Ayguade, and Jesus Labarta
• Realities of Multi-Core CPU Chips and Memory Contention. David P. Barker

TYPED ASYNCHRONOUS GROUPS

![Creating AO and Groups](http://slidetodoc.com/presentation_image/09592cfe7b9e831494d679600eea0595/image-24.jpg)

Creating AO and Groups
A ag = newActiveGroup("A", […], VirtualNode)
V v = ag.foo(param);
...
v.bar(); // Wait-by-necessity
Diagram legend: Typed Group, Java or Active Object
Group, Type, and Asynchrony are crucial for Composition

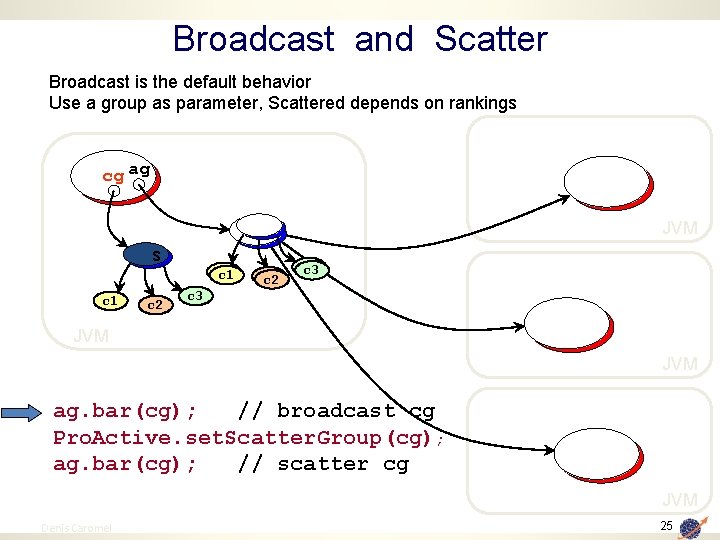

Broadcast and Scatter
Broadcast is the default behavior.
Use a group as parameter; scattering depends on rankings.
ag.bar(cg); // broadcast cg
ProActive.setScatterGroup(cg);
ag.bar(cg); // scatter cg
Diagram labels: ag, cg (c1, c2, c3), JVMs
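The difference between the two modes can be shown with ordinary lists. This is a minimal sketch under stated assumptions, not the ProActive group API: `GroupDispatchSketch`, `broadcast` and `scatter` are hypothetical names, and the wrap-around rule used when there are more members than parameters is an assumption made only for the example.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch (not the ProActive API) of the two parameter-passing modes
// of a group call ag.bar(cg), where ag has n members and cg is a group of parameters.
class GroupDispatchSketch {

    // Broadcast (default): every member receives the whole parameter group.
    static <P> List<List<P>> broadcast(int members, List<P> cg) {
        List<List<P>> received = new ArrayList<>();
        for (int rank = 0; rank < members; rank++) {
            received.add(new ArrayList<>(cg));
        }
        return received;
    }

    // Scatter: the member of rank i receives the parameter of rank i
    // (wrapping around if there are more members than parameters; this
    // wrap-around rule is an assumption made for the sketch).
    static <P> List<List<P>> scatter(int members, List<P> cg) {
        List<List<P>> received = new ArrayList<>();
        for (int rank = 0; rank < members; rank++) {
            received.add(List.of(cg.get(rank % cg.size())));
        }
        return received;
    }

    public static void main(String[] args) {
        List<String> cg = List.of("c1", "c2", "c3");
        System.out.println(broadcast(3, cg)); // each member gets the whole group
        System.out.println(scatter(3, cg));   // member i gets the i-th element
    }
}
```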

Static Dispatch Group
ag.bar(cg);
Diagram labels: cg (c0 … c9), ag, JVMs ranging from Slowest to Fastest; the Fastest ends with an empty queue

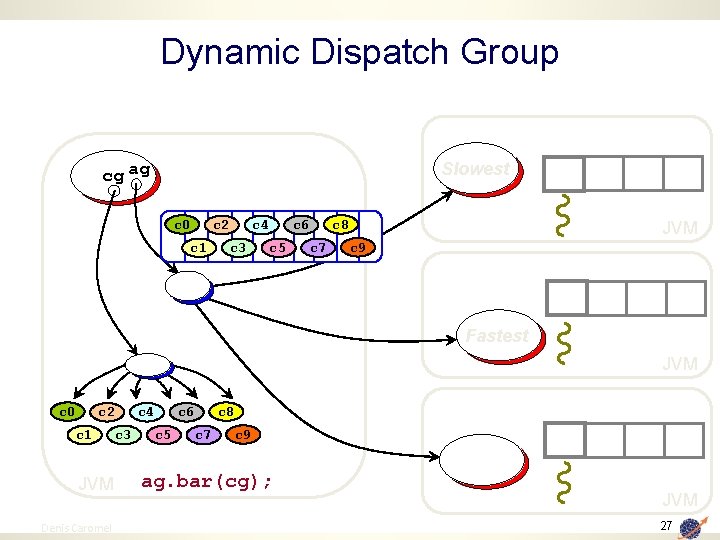

Dynamic Dispatch Group
ag.bar(cg);
Diagram labels: cg (c0 … c9), ag, JVMs ranging from Slowest to Fastest
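The contrast between static and dynamic dispatch can be made concrete with a small deterministic simulation. It is a sketch under assumptions, not ProActive code: the round-robin rule for static dispatch, the "first free worker" rule for dynamic dispatch, and the per-task costs are all hypothetical choices for illustration.

```java
import java.util.Arrays;

// Hypothetical simulation (not the ProActive API): parameters c0..c9 are
// dispatched to workers whose cost per task differs (a slow and a fast member).
class DispatchModeSketch {

    // Static dispatch: task i is bound to worker i % n before execution starts.
    static int[] staticDispatch(int tasks, int[] costPerTask) {
        int[] handled = new int[costPerTask.length];
        for (int i = 0; i < tasks; i++) {
            handled[i % costPerTask.length]++;
        }
        return handled;
    }

    // Dynamic dispatch: each task goes to the worker that becomes free first,
    // so faster workers end up handling more tasks.
    static int[] dynamicDispatch(int tasks, int[] costPerTask) {
        int[] handled = new int[costPerTask.length];
        long[] freeAt = new long[costPerTask.length]; // time at which each worker is idle
        for (int i = 0; i < tasks; i++) {
            int next = 0;
            for (int w = 1; w < freeAt.length; w++) {
                if (freeAt[w] < freeAt[next]) {
                    next = w;
                }
            }
            freeAt[next] += costPerTask[next];
            handled[next]++;
        }
        return handled;
    }

    // Completion time of the whole group call: the busiest worker decides.
    static long makespan(int[] handled, int[] costPerTask) {
        long max = 0;
        for (int w = 0; w < handled.length; w++) {
            max = Math.max(max, (long) handled[w] * costPerTask[w]);
        }
        return max;
    }

    public static void main(String[] args) {
        int[] cost = {4, 1}; // worker 0 is four times slower than worker 1
        int[] s = staticDispatch(10, cost);
        int[] d = dynamicDispatch(10, cost);
        System.out.println("static  " + Arrays.toString(s) + " makespan " + makespan(s, cost));
        System.out.println("dynamic " + Arrays.toString(d) + " makespan " + makespan(d, cost));
    }
}
```

With one slow and one fast worker, the static split leaves the fast worker idle while the slow one finishes, which is the situation the "Fastest: empty queue" label on the static slide points at.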

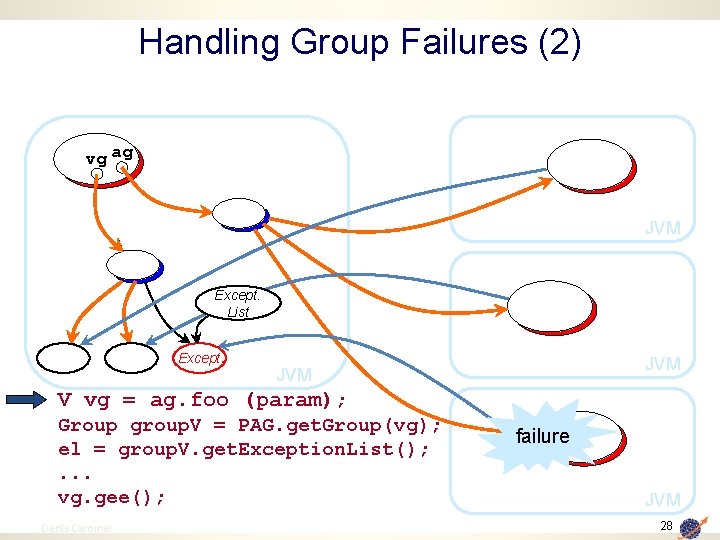

Handling Group Failures (2)
V vg = ag.foo(param);
Group groupV = PAG.getGroup(vg);
el = groupV.getExceptionList();
...
vg.gee();
Diagram labels: ag, vg, ExceptionList, JVMs, failure
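The idea of collecting failures per member, instead of aborting the whole group call, can be sketched as follows. The classes `GroupFailureSketch` and `GroupResult` and the method `callOnGroup` are hypothetical; they only mirror the shape of the slide (a result group plus an exception list) and are not the ProActive API.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

// Hypothetical sketch (not the ProActive API): a group call collects one result
// per member, and failures are gathered in a list instead of aborting the call.
class GroupFailureSketch {

    static class GroupResult<V> {
        final List<V> values = new ArrayList<>();
        final List<Exception> exceptionList = new ArrayList<>();
    }

    // Applies 'call' to every member; a failing member only adds to exceptionList.
    static <M, V> GroupResult<V> callOnGroup(List<M> members, Function<M, V> call) {
        GroupResult<V> result = new GroupResult<>();
        for (M member : members) {
            try {
                result.values.add(call.apply(member));
            } catch (RuntimeException e) {
                result.exceptionList.add(e);
            }
        }
        return result;
    }

    static GroupResult<Integer> demo() {
        // The member "0" fails with ArithmeticException; the others succeed.
        return callOnGroup(List.of(1, 0, 4), m -> 100 / m);
    }

    public static void main(String[] args) {
        GroupResult<Integer> vg = demo();
        System.out.println(vg.values + " failures: " + vg.exceptionList.size());
    }
}
```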

Abstractions for Parallelism: The right Tool to execute the Task

Object-Oriented SPMD

![OO SPMD](http://slidetodoc.com/presentation_image/09592cfe7b9e831494d679600eea0595/image-31.jpg)

OO SPMD
A ag = newSPMDGroup("A", […], VirtualNode)
// In each member
myGroup.barrier("2D"); // Global Barrier
myGroup.barrier("vertical"); // Any Barrier
myGroup.barrier("north", "south", "east", "west");
Still, not based on raw messages, but Typed Method Calls ==> Components
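A global barrier between two phases of an SPMD program can be illustrated with `java.util.concurrent.CyclicBarrier`. This is only a sketch of the synchronization pattern: it does not use the ProActive SPMD API, it covers the global barrier only (not the named or neighbor barriers of the slide), and `SpmdBarrierSketch` is a hypothetical name.

```java
import java.util.concurrent.BrokenBarrierException;
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical sketch (not the ProActive API): every member runs the same code
// (SPMD) and synchronizes on a global barrier between two phases.
class SpmdBarrierSketch {

    // Returns true if no member entered phase 2 before all finished phase 1.
    static boolean run(int members) {
        CyclicBarrier globalBarrier = new CyclicBarrier(members);
        AtomicInteger phase1Done = new AtomicInteger();
        AtomicInteger violations = new AtomicInteger();

        Thread[] group = new Thread[members];
        for (int rank = 0; rank < members; rank++) {
            group[rank] = new Thread(() -> {
                try {
                    phase1Done.incrementAndGet();     // phase 1: local work
                    globalBarrier.await();            // wait for the whole group
                    if (phase1Done.get() != members) {
                        violations.incrementAndGet(); // phase 2 started too early
                    }
                } catch (InterruptedException | BrokenBarrierException e) {
                    violations.incrementAndGet();
                }
            });
            group[rank].start();
        }
        for (Thread member : group) {
            try {
                member.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return false;
            }
        }
        return violations.get() == 0;
    }

    public static void main(String[] args) {
        System.out.println(run(4));
    }
}
```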

Object-Oriented SPMD: Single Program Multiple Data
Motivation: use Enterprise technology (Java, Eclipse, etc.) for Parallel Computing
Able to express in Java MPI’s Collective Communications: broadcast, scatter, gather, reduce, allscatter, allgather
Together with Barriers, Topologies.

MPI Communication primitives
For some (historical) reasons, MPI has many communication primitives:
• MPI_Send: standard
• MPI_Ssend: synchronous
• MPI_Bsend: buffered
• MPI_Rsend: ready
• MPI_Isend: immediate, async/future
• MPI_Ibsend, …
• MPI_Recv: receive
• MPI_Irecv: immediate
• … (any) source, (any) tag
I’d rather put the burden on the implementation, not the programmers! How to do adaptive implementation in that context?
Not talking about:
• the combinatorics that occur between send and receive
• the semantic problems that occur in distributed implementations

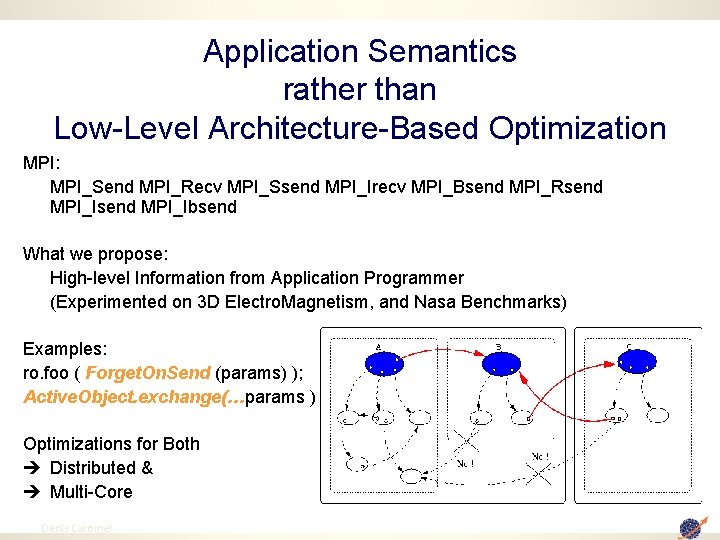

Application Semantics rather than Low-Level Architecture-Based Optimization
MPI: MPI_Send, MPI_Recv, MPI_Ssend, MPI_Irecv, MPI_Bsend, MPI_Rsend, MPI_Ibsend
What we propose: High-level Information from the Application Programmer (experimented on 3D ElectroMagnetism and NASA Benchmarks)
Examples:
ro.foo(ForgetOnSend(params));
ActiveObject.exchange(…params);
Optimizations for Both:
• Distributed
• Multi-Core

NAS Parallel Benchmarks
• Designed by NASA to evaluate benefits of high performance systems
• Strongly based on CFD
• 5 benchmarks (kernels) to test different aspects of a system
• 2 categories or focus variations: communication intensive and computation intensive

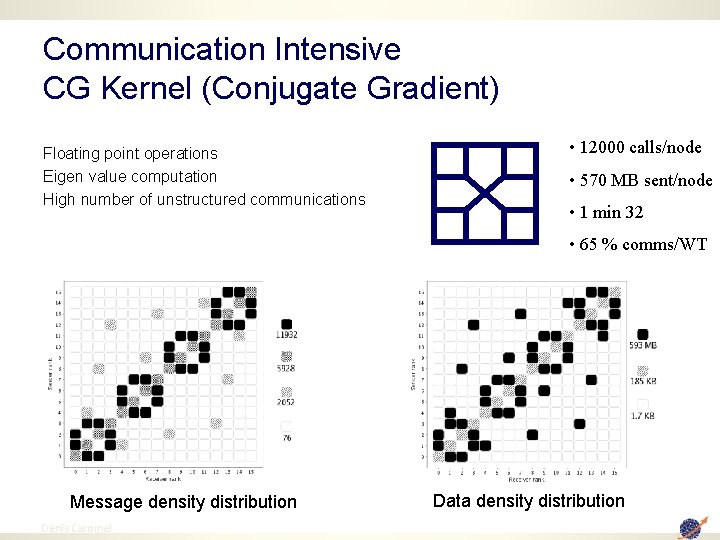

Communication Intensive: CG Kernel (Conjugate Gradient)
• Floating point operations
• Eigenvalue computation
• High number of unstructured communications
• 12000 calls/node
• 570 MB sent/node
• 1 min 32
• 65 % comms/WT
Figures: message density distribution; data density distribution

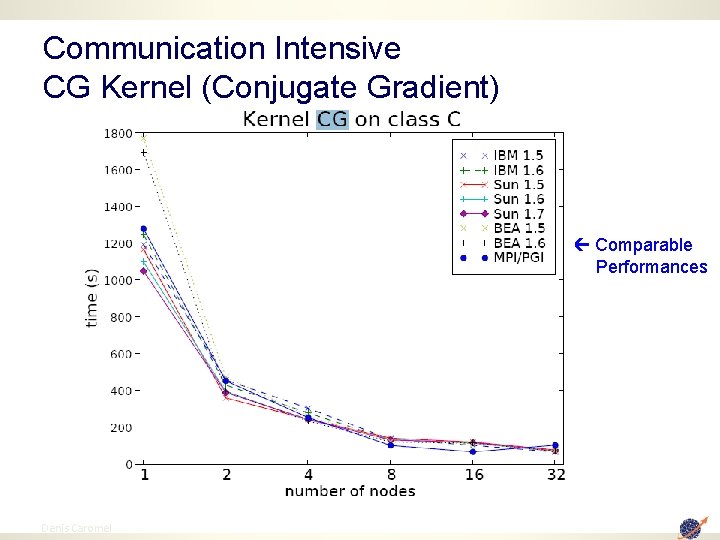

Communication Intensive: CG Kernel (Conjugate Gradient)
Comparable Performances

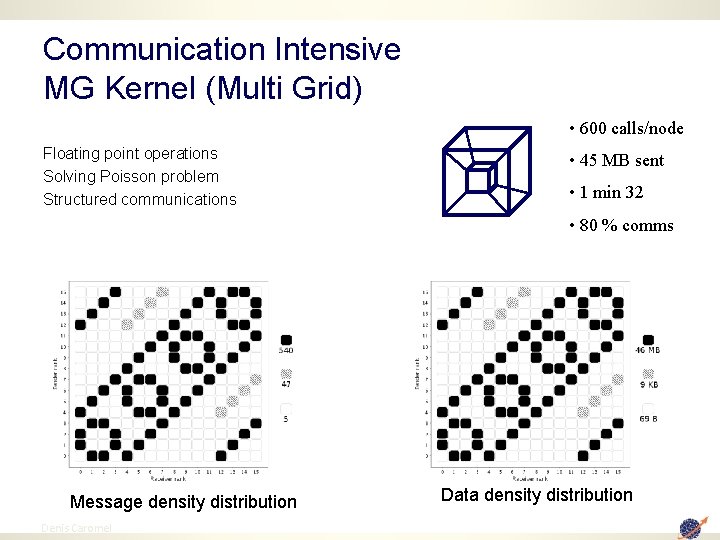

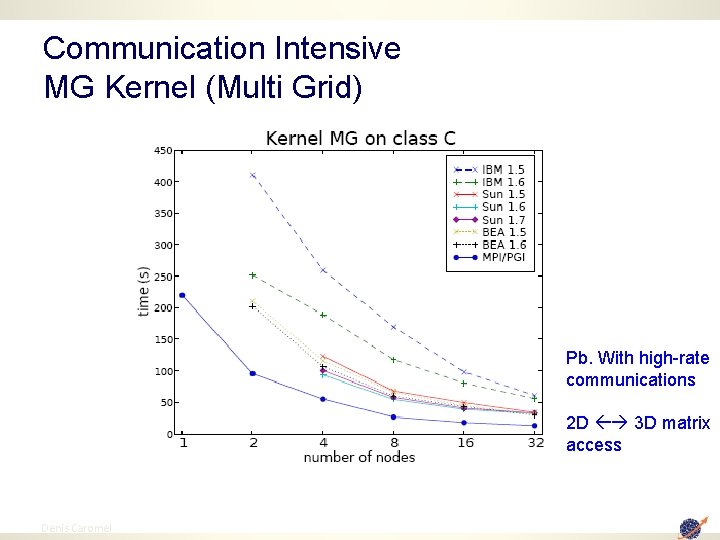

Communication Intensive: MG Kernel (Multi Grid)
• Floating point operations
• Solving Poisson problem
• Structured communications
• 600 calls/node
• 45 MB sent
• 1 min 32
• 80 % comms
Figures: message density distribution; data density distribution

Communication Intensive: MG Kernel (Multi Grid)
Problem with high-rate communications
2D/3D matrix access

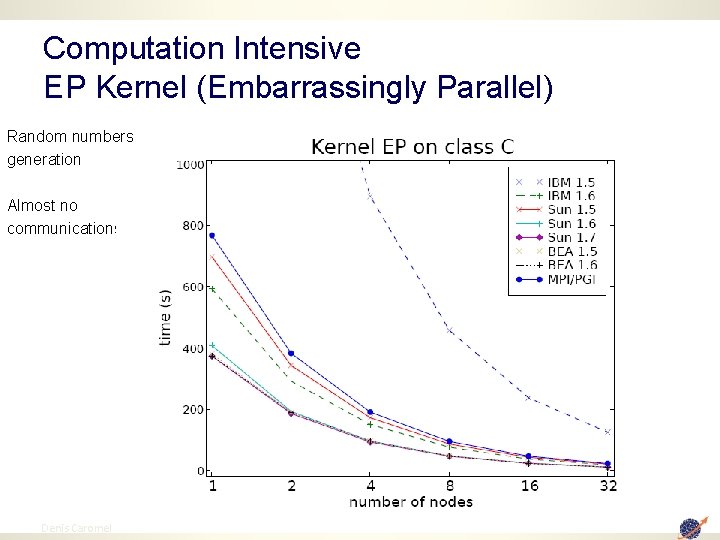

Computation Intensive: EP Kernel (Embarrassingly Parallel)
• Random number generation
• Almost no communications
This is Java!!!

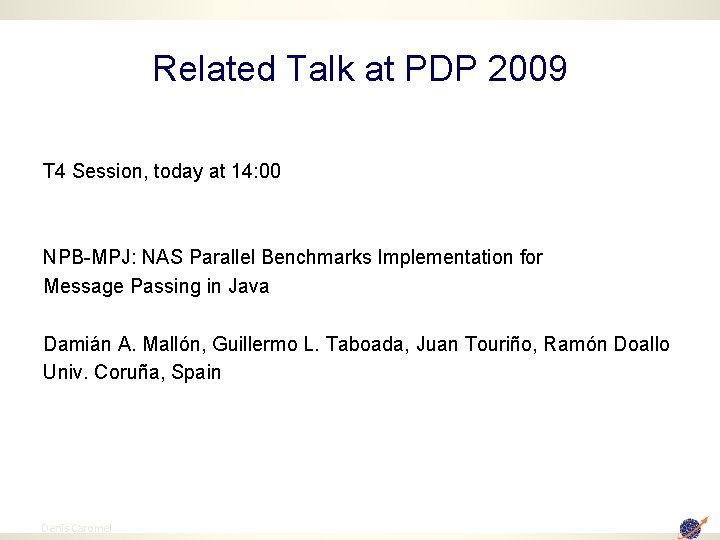

Related Talk at PDP 2009, T4 Session, today at 14:00
NPB-MPJ: NAS Parallel Benchmarks Implementation for Message Passing in Java
Damián A. Mallón, Guillermo L. Taboada, Juan Touriño, Ramón Doallo (Univ. Coruña, Spain)

Parallel Components

GridCOMP Partners

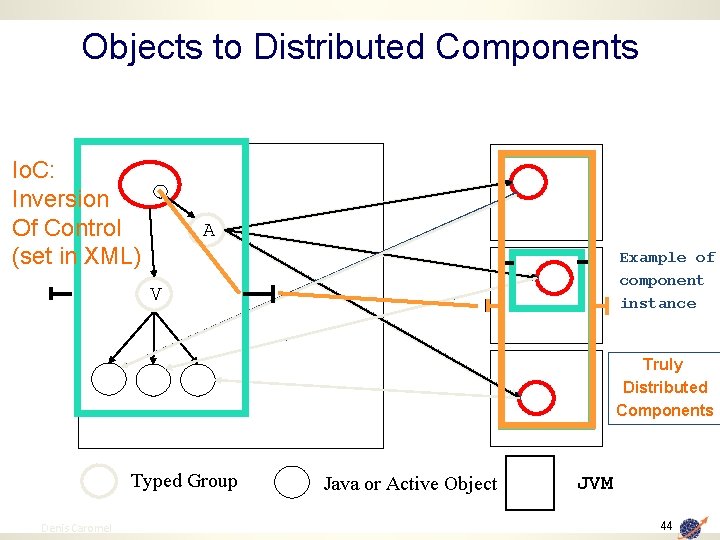

Objects to Distributed Components
IoC: Inversion of Control (set in XML)
Example of component instance
Truly Distributed Components
Diagram legend: Typed Group, Java or Active Object, JVM

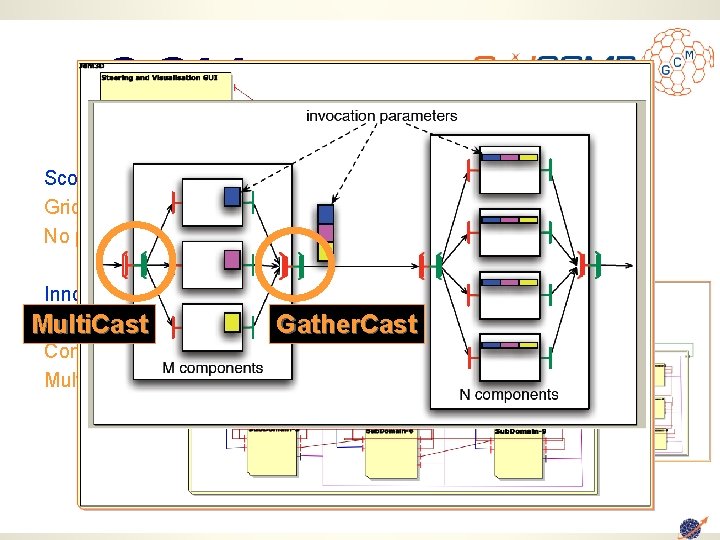

GCM Scopes and Objectives: Grid Codes that Compose and Deploy
No programming, No Scripting, … No Pain
Innovation:
• Abstract Deployment
• Composite Components
• Multicast and GatherCast

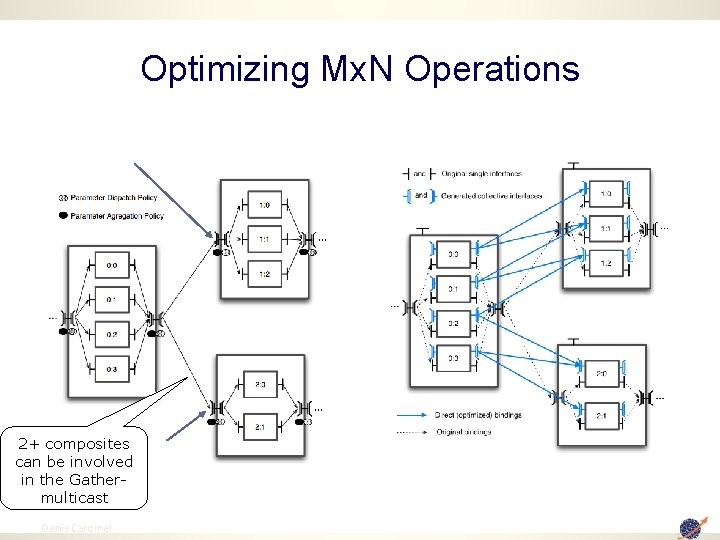

Optimizing MxN Operations
2+ composites can be involved in the Gather-multicast

Related Talk at PDP 2009, T1 Session, yesterday at 11:30
Towards Hierarchical Management of Autonomic Components: a Case Study
Marco Aldinucci, Marco Danelutto, and Peter Kilpatrick

Skeleton

![Algorithmic Skeletons for Parallelism](http://slidetodoc.com/presentation_image/09592cfe7b9e831494d679600eea0595/image-49.jpg)

Algorithmic Skeletons for Parallelism
• High Level Programming Model [Cole 89]
• Hides the complexity of parallel/distributed programming
• Exploits nestable parallelism patterns
Parallelism Patterns:
• Task: farm, pipe, if, for, while
• Data: map, fork, divide & conquer
BLAST Skeleton Program (diagram): d&c(fb, fd, fc) nesting pipe, fork, seq(f1), seq(f2), seq(f3)

![Algorithmic Skeletons for Parallelism: condition function of the BLAST skeleton](http://slidetodoc.com/presentation_image/09592cfe7b9e831494d679600eea0595/image-50.jpg)

Algorithmic Skeletons for Parallelism
• High Level Programming Model [Cole 89]
• Hides the complexity of parallel/distributed programming
• Exploits nestable parallelism patterns
Condition function used by the BLAST skeleton program:
public boolean condition(BlastParams param) {
File file = param.dbFile;
return file.length() > param.maxDBSize;
}
Parallelism Patterns:
• Task: farm, pipe, if, for, while
• Data: map, fork, divide & conquer
BLAST Skeleton Program (diagram): d&c(fb, fd, fc) nesting pipe, fork, seq(f1), seq(f2), seq(f3)
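A divide-and-conquer skeleton takes the user's functions as parameters and owns the recursion and the parallelism. The sketch below is a generic illustration, assuming a four-function form (condition, divide, leaf, conquer); the name `dac` and its signature are hypothetical and are not taken from the ProActive skeleton library. The `condition` argument plays the same role as the `condition(BlastParams)` method on the slide: it decides whether a problem is still too large and must be split.

```java
import java.util.List;
import java.util.function.Function;
import java.util.function.Predicate;
import java.util.stream.Collectors;

// Hypothetical sketch of a divide-and-conquer skeleton; the names and signature
// are illustrative and are not taken from the ProActive skeleton library.
class DivideAndConquerSketch {

    // condition: should the problem be split? divide: splits a problem;
    // leaf: solves a small problem; conquer: merges partial results.
    static <P, R> R dac(P problem,
                        Predicate<P> condition,
                        Function<P, List<P>> divide,
                        Function<P, R> leaf,
                        Function<List<R>, R> conquer) {
        if (!condition.test(problem)) {
            return leaf.apply(problem);
        }
        List<R> partial = divide.apply(problem)
                .parallelStream() // sub-problems may run in parallel
                .map(sub -> dac(sub, condition, divide, leaf, conquer))
                .collect(Collectors.toList());
        return conquer.apply(partial);
    }

    // Example instance: sum a list by halving it while it is longer than maxSize.
    static int sum(List<Integer> data, int maxSize) {
        return DivideAndConquerSketch.<List<Integer>, Integer>dac(
                data,
                d -> d.size() > maxSize,
                d -> List.of(d.subList(0, d.size() / 2), d.subList(d.size() / 2, d.size())),
                d -> d.stream().mapToInt(Integer::intValue).sum(),
                parts -> parts.stream().mapToInt(Integer::intValue).sum());
    }

    public static void main(String[] args) {
        System.out.println(sum(List.of(1, 2, 3, 4, 5, 6, 7, 8), 2));
    }
}
```

The user code contains no threads or synchronization; swapping the parallel stream for a distributed executor would not change the four functions.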

3. Optimizing

Programming & Optimizing: Monitoring, Debugging, Optimizing

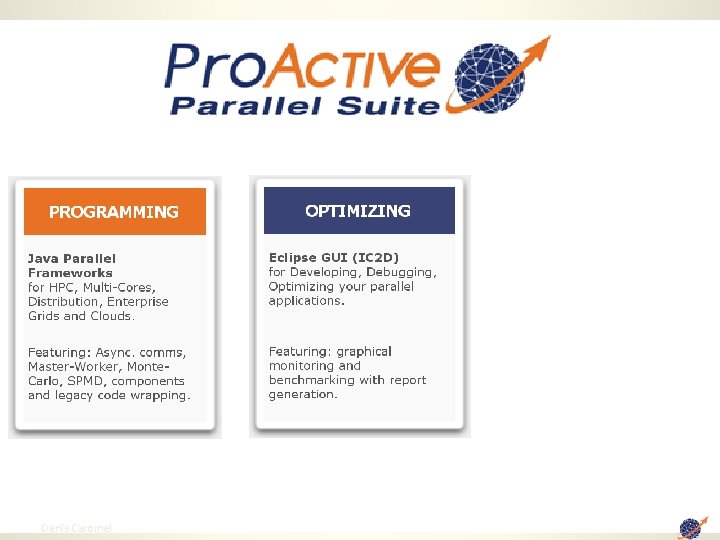


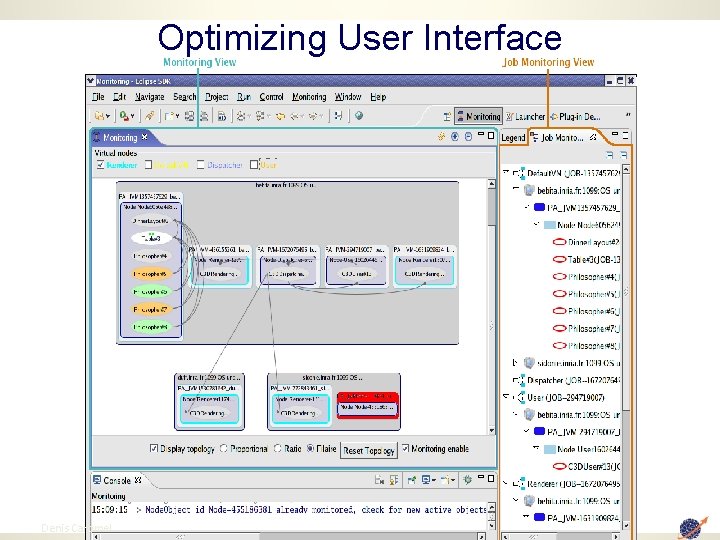

Optimizing User Interface

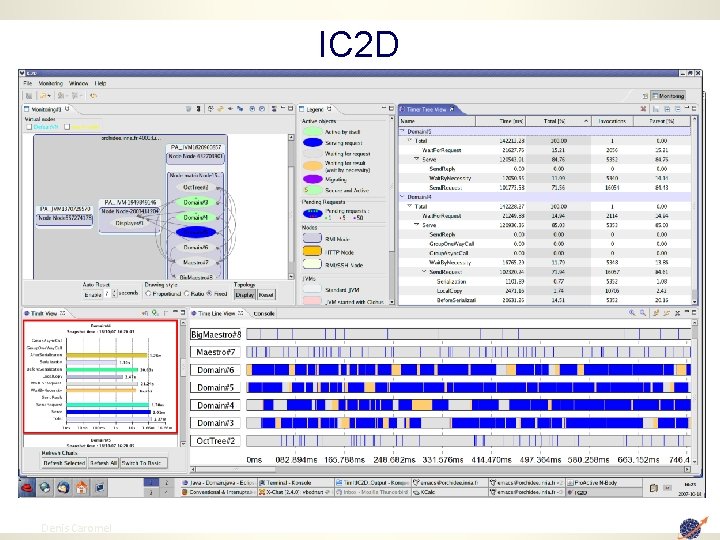

IC2D

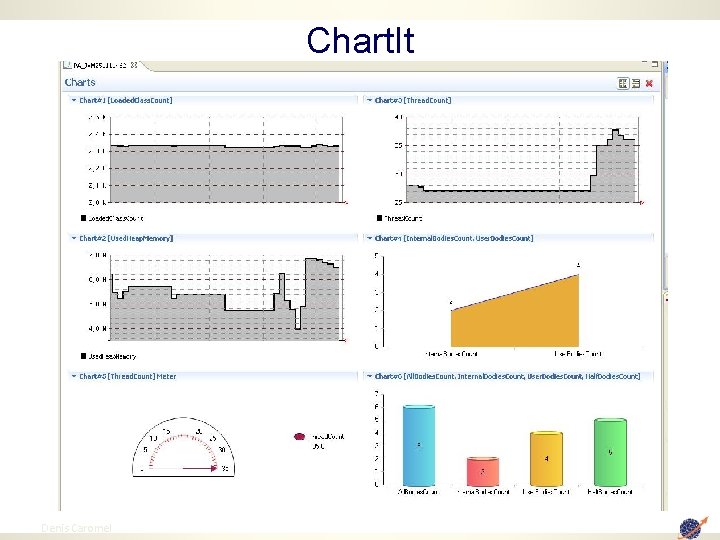

ChartIt

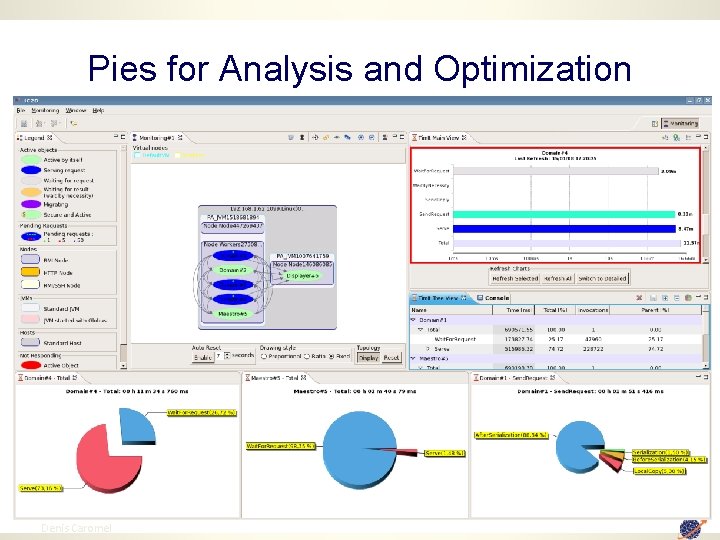

Pies for Analysis and Optimization

Video 1: IC2D Optimizing: Monitoring, Debugging, Optimizing


4. Deploying & Scheduling


Deploying

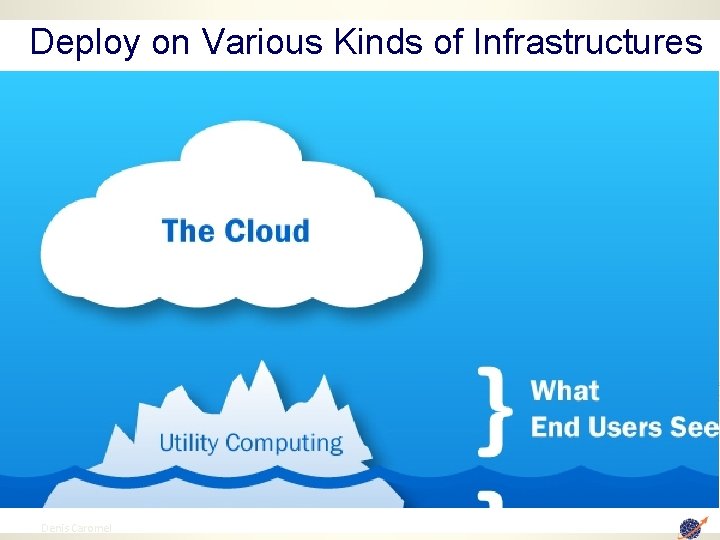

Deploy on Various Kinds of Infrastructures
Diagram labels: Internet, Servlets, EJBs, Databases, Clusters, Large Equipment, Parallel Machine
Job management for embarrassingly parallel applications (e.g. SETI)

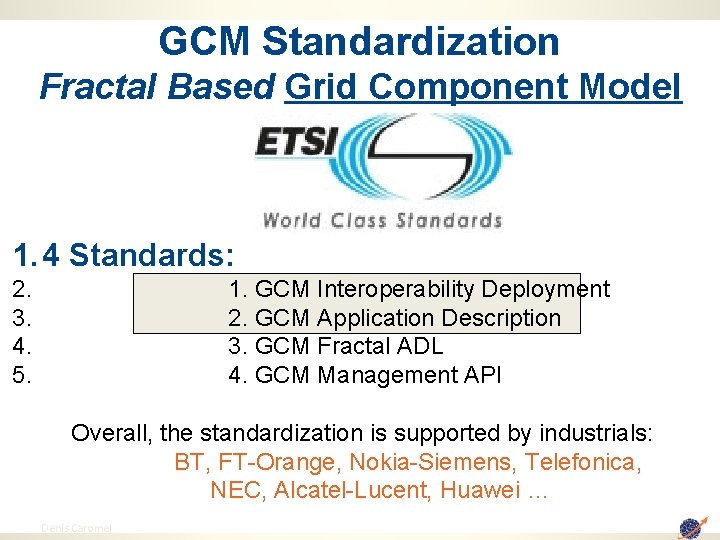

GCM Standardization: Fractal Based Grid Component Model
4 Standards:
1. GCM Interoperability Deployment
2. GCM Application Description
3. GCM Fractal ADL
4. GCM Management API
Overall, the standardization is supported by industry: BT, FT-Orange, Nokia-Siemens, Telefonica, NEC, Alcatel-Lucent, Huawei …

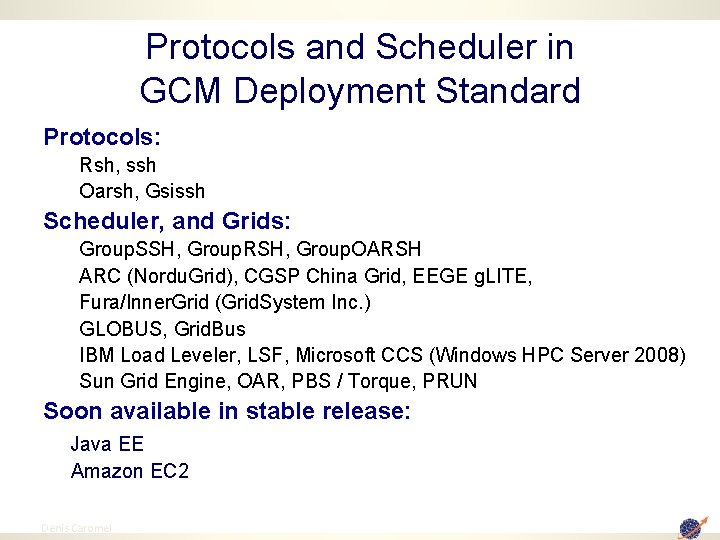

Protocols and Scheduler in GCM Deployment Standard
Protocols: rsh, ssh, oarsh, gsissh
Scheduler and Grids:
• GroupSSH, GroupRSH, GroupOARSH
• ARC (NorduGrid), CGSP China Grid, EGEE gLite, Fura/InnerGrid (GridSystem Inc.)
• GLOBUS, GridBus
• IBM Load Leveler, LSF, Microsoft CCS (Windows HPC Server 2008)
• Sun Grid Engine, OAR, PBS / Torque, PRUN
Soon available in stable release: Java EE, Amazon EC2

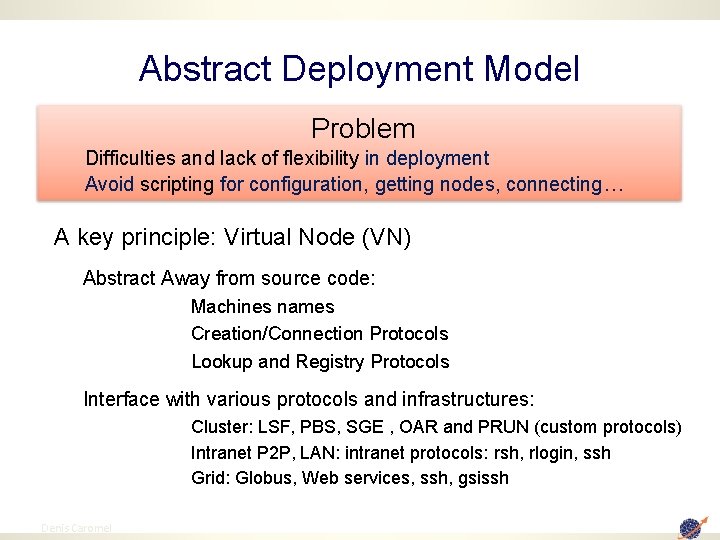

Abstract Deployment Model
Problem: difficulties and lack of flexibility in deployment. Avoid scripting for configuration, getting nodes, connecting…
A key principle: Virtual Node (VN)
Abstract away from source code:
• Machine names
• Creation/Connection Protocols
• Lookup and Registry Protocols
Interface with various protocols and infrastructures:
• Cluster: LSF, PBS, SGE, OAR and PRUN (custom protocols)
• Intranet P2P, LAN: intranet protocols: rsh, rlogin, ssh
• Grid: Globus, Web services, ssh, gsissh

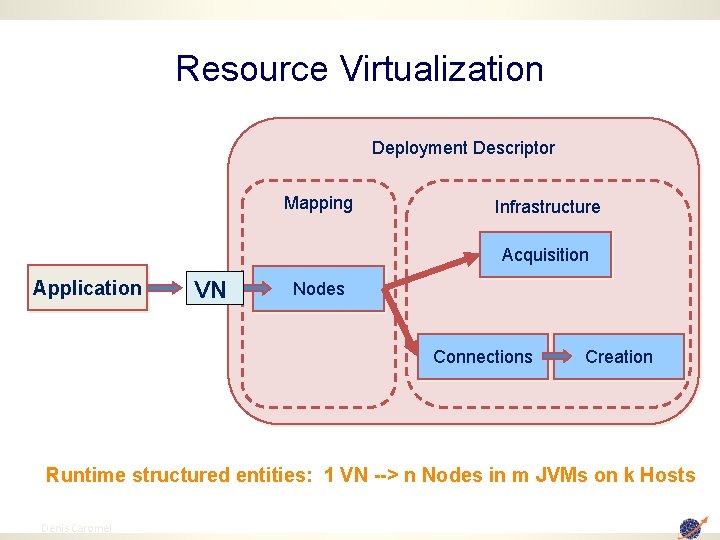

Resource Virtualization
Diagram labels: Application, VN, Deployment Descriptor, Mapping, Nodes, Infrastructure, Acquisition, Creation, Connections
Runtime structured entities: 1 VN --> n Nodes in m JVMs on k Hosts
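The relation "1 VN --> n Nodes in m JVMs on k Hosts" can be pictured with a small data model. This is a hypothetical illustration only: the classes below are not the GCM descriptor format nor ProActive types, and the host, JVM and node names are made up for the example.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical data model (not the GCM descriptor format or the ProActive API):
// the application only names a virtual node; the mapping to nodes, JVMs and
// hosts lives outside the source code.
class VirtualNodeSketch {

    static class Node {
        final String host;
        final String jvm;
        final String name;

        Node(String host, String jvm, String name) {
            this.host = host;
            this.jvm = jvm;
            this.name = name;
        }
    }

    static class VirtualNode {
        final String name;
        final List<Node> nodes;

        VirtualNode(String name, List<Node> nodes) {
            this.name = name;
            this.nodes = nodes;
        }

        int nodeCount() {
            return nodes.size();
        }

        int jvmCount() {
            Set<String> jvms = new HashSet<>();
            for (Node n : nodes) {
                jvms.add(n.host + "/" + n.jvm); // a JVM is identified by host + JVM name
            }
            return jvms.size();
        }

        int hostCount() {
            Set<String> hosts = new HashSet<>();
            for (Node n : nodes) {
                hosts.add(n.host);
            }
            return hosts.size();
        }
    }

    // Made-up mapping: 4 nodes spread over 3 JVMs on 2 hosts.
    static VirtualNode example() {
        return new VirtualNode("VN1", List.of(
                new Node("hostA", "jvm1", "node1"),
                new Node("hostA", "jvm1", "node2"),
                new Node("hostA", "jvm2", "node3"),
                new Node("hostB", "jvm1", "node4")));
    }

    public static void main(String[] args) {
        VirtualNode vn = example();
        System.out.println(vn.name + " --> " + vn.nodeCount() + " nodes in "
                + vn.jvmCount() + " JVMs on " + vn.hostCount() + " hosts");
    }
}
```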

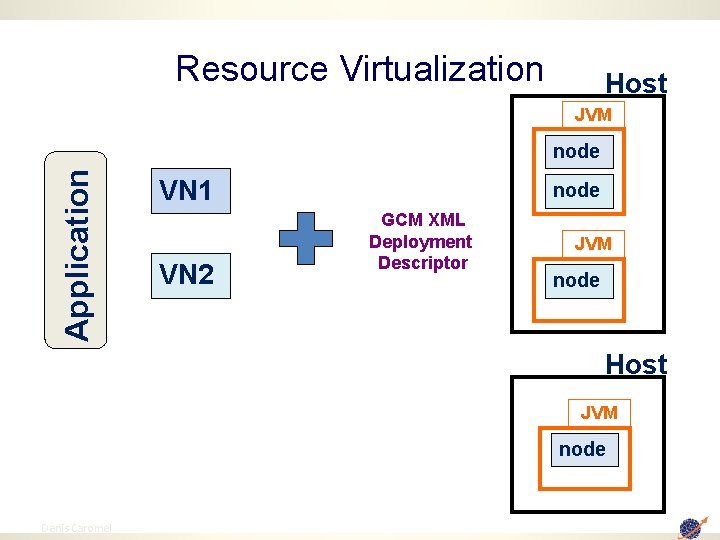

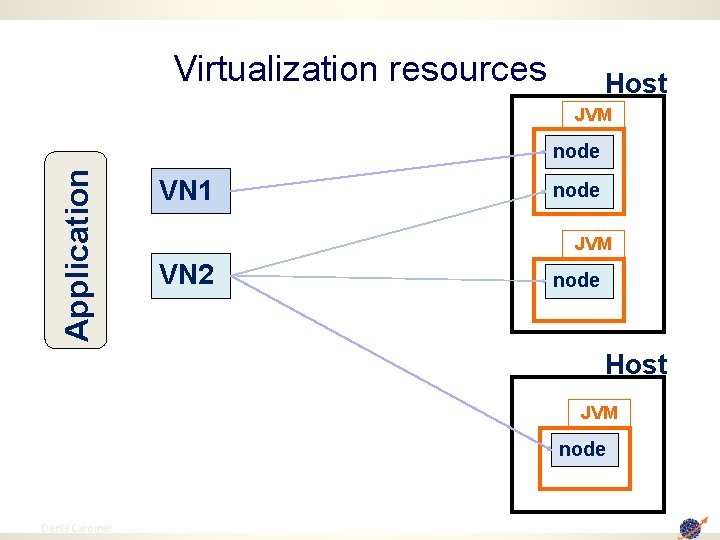

Resource Virtualization
Diagram: the GCM XML Deployment Descriptor maps the Application's virtual nodes (VN1, VN2) to nodes running in JVMs on Hosts

Virtualization resources
Diagram labels: Application, VN1, VN2, node, JVM, Host

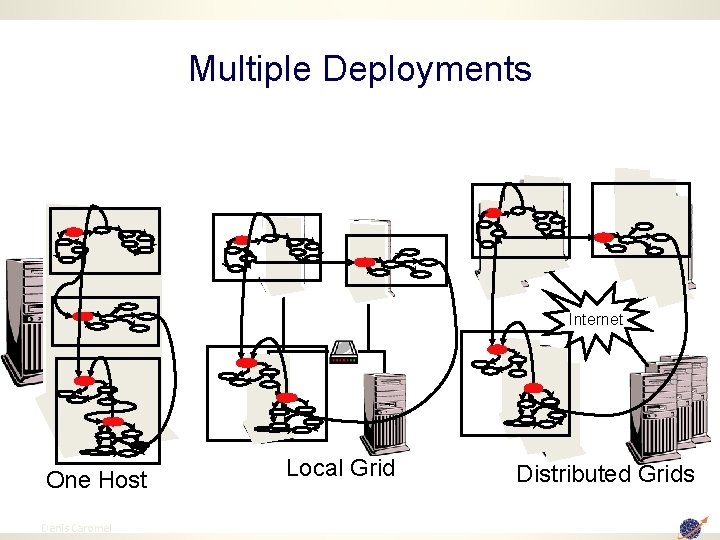

Multiple Deployments
Diagram labels: One Host, Local Grid, Distributed Grids, Internet

Scheduling Mode

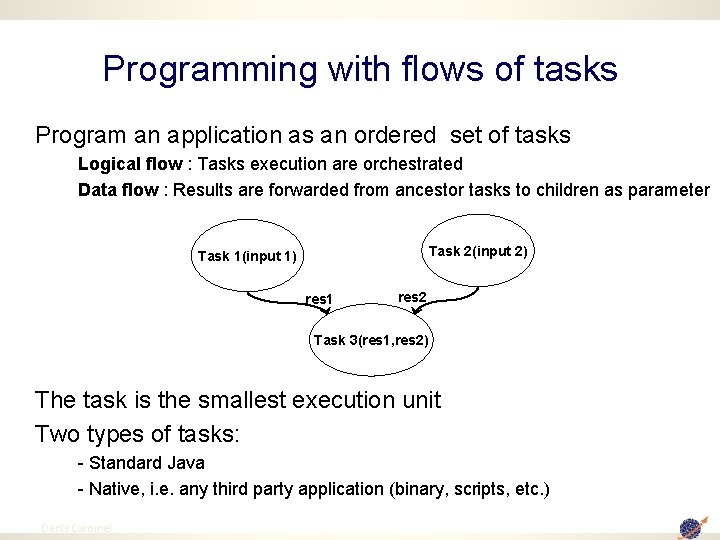

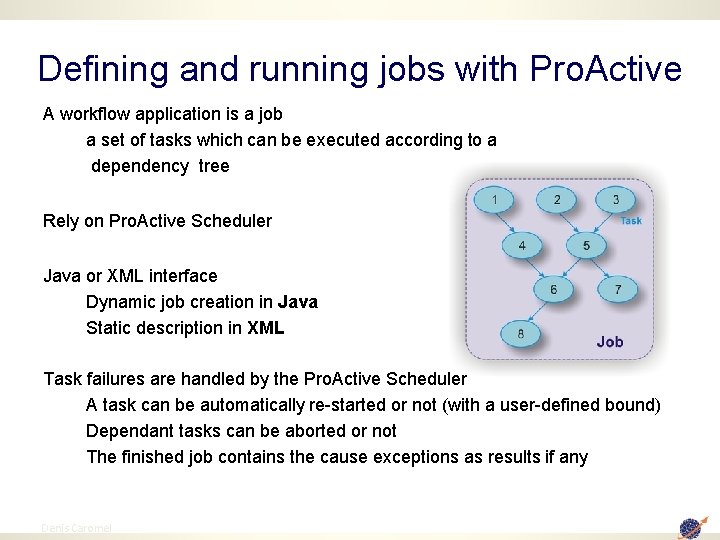

Programming with flows of tasks
Program an application as an ordered set of tasks:
• Logical flow: task executions are orchestrated
• Data flow: results are forwarded from ancestor tasks to their children as parameters
Example: Task1(input1) produces res1, Task2(input2) produces res2, then Task3(res1, res2)
The task is the smallest execution unit. Two types of tasks:
- Standard Java
- Native, i.e. any third-party application (binary, scripts, etc.)
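The three-task flow of this slide can be sketched with `CompletableFuture`, where the data dependencies themselves drive the execution order. This is an illustration only, not the ProActive Scheduler API; the class `TaskFlowSketch` and the arithmetic inside each task are assumptions.

```java
import java.util.concurrent.CompletableFuture;

// Hypothetical sketch (not the ProActive Scheduler API): Task1 and Task2 are
// independent; Task3 depends on both and receives their results as parameters.
class TaskFlowSketch {

    static int task1(int input1) { return input1 + 1; }
    static int task2(int input2) { return input2 * 2; }
    static int task3(int res1, int res2) { return res1 + res2; }

    static int runJob(int input1, int input2) {
        CompletableFuture<Integer> res1 = CompletableFuture.supplyAsync(() -> task1(input1));
        CompletableFuture<Integer> res2 = CompletableFuture.supplyAsync(() -> task2(input2));
        // Data flow: Task3 is started only when both ancestors have produced a result.
        CompletableFuture<Integer> res3 = res1.thenCombine(res2, TaskFlowSketch::task3);
        return res3.join();
    }

    public static void main(String[] args) {
        System.out.println(runJob(1, 2));
    }
}
```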

Defining and running jobs with ProActive
A workflow application is a job: a set of tasks which can be executed according to a dependency tree
Rely on the ProActive Scheduler, with a Java or XML interface:
• Dynamic job creation in Java
• Static description in XML
Task failures are handled by the ProActive Scheduler:
• A task can be automatically re-started or not (with a user-defined bound)
• Dependent tasks can be aborted or not
• The finished job contains the cause exceptions as results, if any
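The failure policy described here (re-start with a user-defined bound, and keep the cause exception as the result when the task finally fails) can be sketched in a few lines. The names `RetrySketch`, `TaskResult`, `runWithRetry` and `flakyTask` are hypothetical; this is not the ProActive Scheduler API.

```java
import java.util.function.Supplier;

// Hypothetical sketch (not the ProActive Scheduler API): a task is re-started
// after a failure, up to a user-defined number of executions.
class RetrySketch {

    // Outcome of a task: either a value or the exception that caused the failure.
    static class TaskResult<T> {
        final T value;
        final RuntimeException cause;
        final int executions;

        TaskResult(T value, RuntimeException cause, int executions) {
            this.value = value;
            this.cause = cause;
            this.executions = executions;
        }

        boolean failed() {
            return cause != null;
        }
    }

    static <T> TaskResult<T> runWithRetry(Supplier<T> task, int maxExecutions) {
        if (maxExecutions < 1) {
            throw new IllegalArgumentException("maxExecutions must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxExecutions; attempt++) {
            try {
                return new TaskResult<>(task.get(), null, attempt);
            } catch (RuntimeException e) {
                last = e; // remember the cause and try again if allowed
            }
        }
        return new TaskResult<>(null, last, maxExecutions);
    }

    // A task that fails on its first 'failures' executions, then returns 42.
    static Supplier<Integer> flakyTask(int failures) {
        int[] calls = {0};
        return () -> {
            if (calls[0]++ < failures) {
                throw new IllegalStateException("execution " + calls[0] + " failed");
            }
            return 42;
        };
    }

    public static void main(String[] args) {
        TaskResult<Integer> ok = runWithRetry(flakyTask(2), 3);
        TaskResult<Integer> ko = runWithRetry(flakyTask(2), 2);
        System.out.println(ok.value + " after " + ok.executions + " executions");
        System.out.println("failed: " + ko.failed() + ", cause: " + ko.cause.getMessage());
    }
}
```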

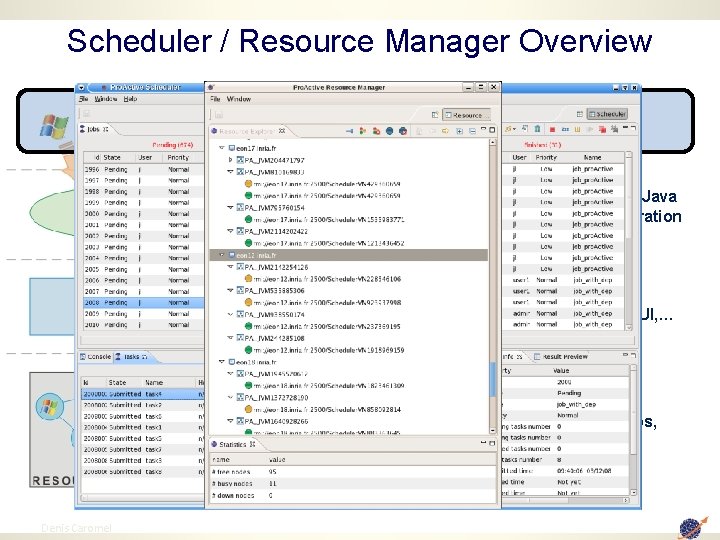

Scheduler / Resource Manager Overview
• Multi-platform Graphical Client (RCP)
• File-based or LDAP authentication
• Static Workflow Job Scheduling, Native and Java tasks, Retry on Error, Priority Policy, Configuration Scripts, …
• Dynamic and Static node sources, Resource Selection by script, Monitoring and Control GUI, …
• ProActive Deployment capabilities: Desktops, Clusters, ProActive P2P, …

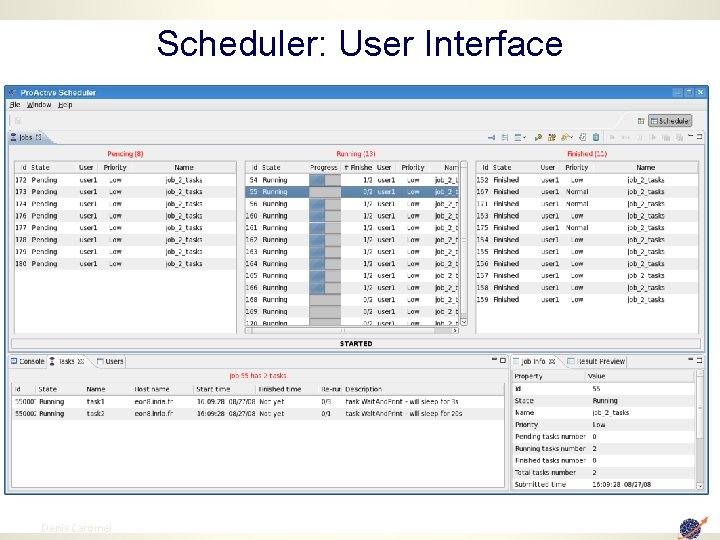

Scheduler: User Interface

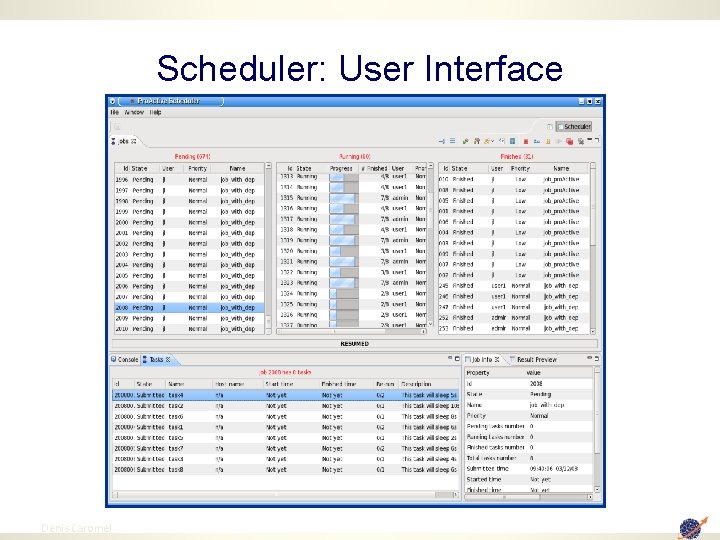

Scheduler: User Interface

Video 2: Scheduling
Scheduler and Resource Manager: see the video at http://proactive.inria.fr/userfiles/media/videos/Scheduler_RM_Short.mpg


Summary

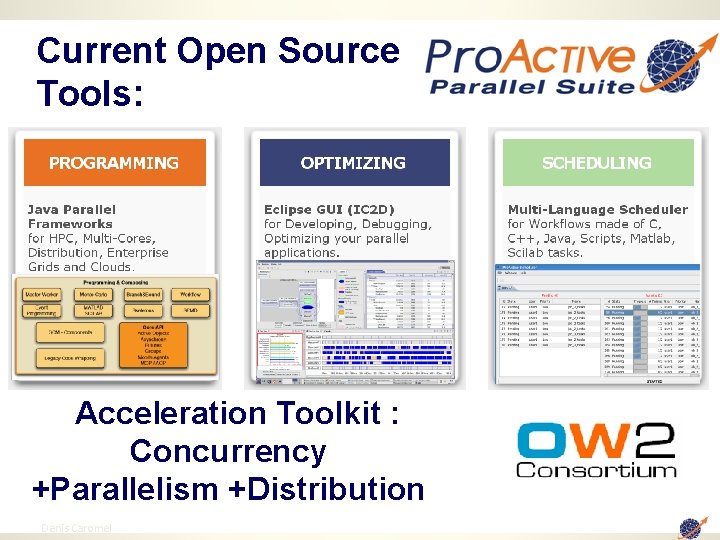

Current Open Source Tools: Acceleration Toolkit: Concurrency + Parallelism + Distribution

Conclusion
Summary:
• Programming: OO
  – Asynchrony, First-Class Futures, No Sharing
  – Higher-level Abstractions (SPMD, Skel., …)
• Composing: Hierarchical Components
• Optimizing: IC2D Eclipse GUI
• Deploying: ssh, Globus, LSF, PBS, …, WS
Applications:
• 3D Electromagnetism SPMD on 300 machines at once
• Groups of over 1000!
• Record: 4,000 Nodes
Our next target: 10,000 Core Applications

Conclusion: Why does it scale?
Thanks to a few key features:
• Connection-less, RMI+JMS unified
• Messages rather than long-living interactions

Conclusion: Why does it Compose?
Thanks to a few key features:
• Because it Scales: asynchrony!
• Because it is Typed: RMI with interfaces!
• First-Class Futures: No unstructured Call Backs and Ports

Perspectives for Parallelism & Distribution
• A need for several, coherent, Programming Models for different applications:
– Actors (Functional Parallelism) + Active Objects + Futures
– OO SPMD: optimizations away from low-level optimizations
– Parallel Component: Codes and Synchronizations:
  • MultiCast, GatherCast: Capturing // Behavior at Interfaces!
– Adaptive Parallel Skeletons
– Event Processing (Reactive Systems)
Efficient Implementations are needed to prove Ideas!
Proofs of Programming Model Properties are Needed for Scalability!
Our Community never had a greater Future!
Thank You for your attention!


Extra Material
• Grid + SOA
• J2EE + Grid
• Amazon EC2: ProActive Image and Deployment

Perspective: SOA + Grid

SOA Integration: Web Services, BPEL Workflow

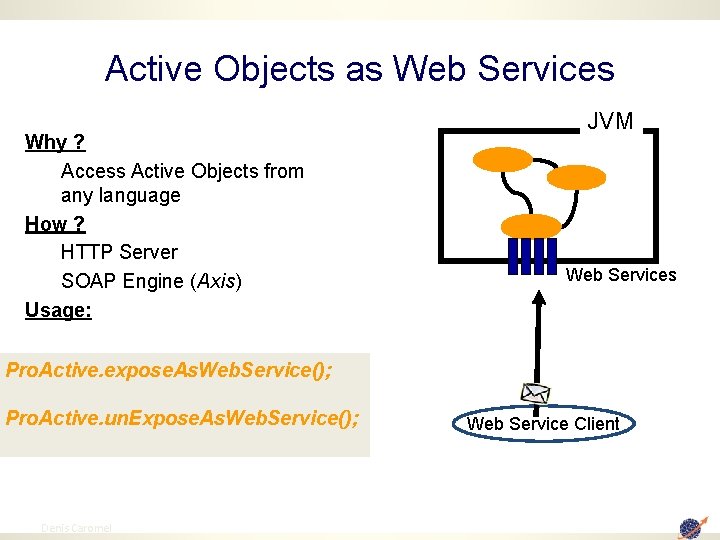

Active Objects as Web Services
Why? Access Active Objects from any language
How? HTTP Server, SOAP Engine (Axis)
Usage:
ProActive.exposeAsWebService();
ProActive.unExposeAsWebService();
Diagram labels: JVM, Web Services, Web Service Client

ProActive + Services + Workflows
Principles: 3 kinds of Parallel Services
3. Domain Specific Parallel Services (e.g. Monte Carlo Pricing)
2. Typical Parallel Computing Services (Parameter Sweeping, D&C, …)
1. Basic Job Scheduling Services (parallel execution on the Grid)

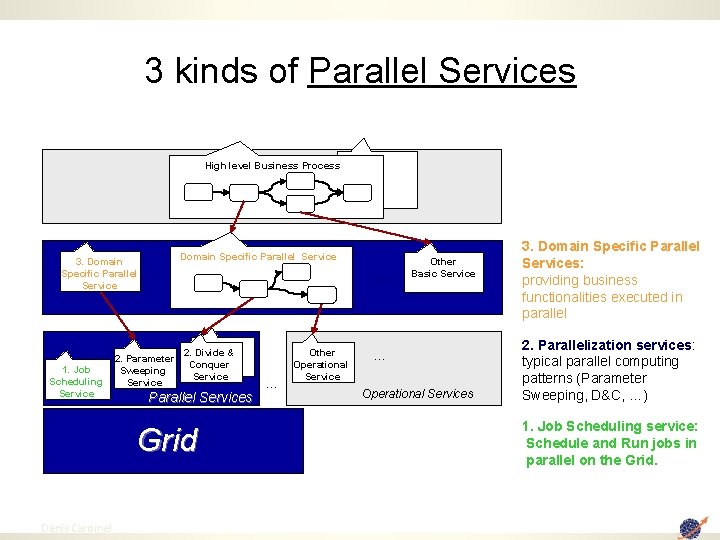

3 kinds of Parallel Services
Diagram: a High-level Business Process calls Parallel Services (Domain Specific Parallel Service, Parameter Sweeping Service, Divide & Conquer Service, Job Scheduling Service, …) and Operational Services (Other Operational Service, Other Basic Service, …), on top of the Grid
3. Domain Specific Parallel Services: providing business functionalities executed in parallel
2. Parallelization services: typical parallel computing patterns (Parameter Sweeping, D&C, …)
1. Job Scheduling service: Schedule and Run jobs in parallel on the Grid.

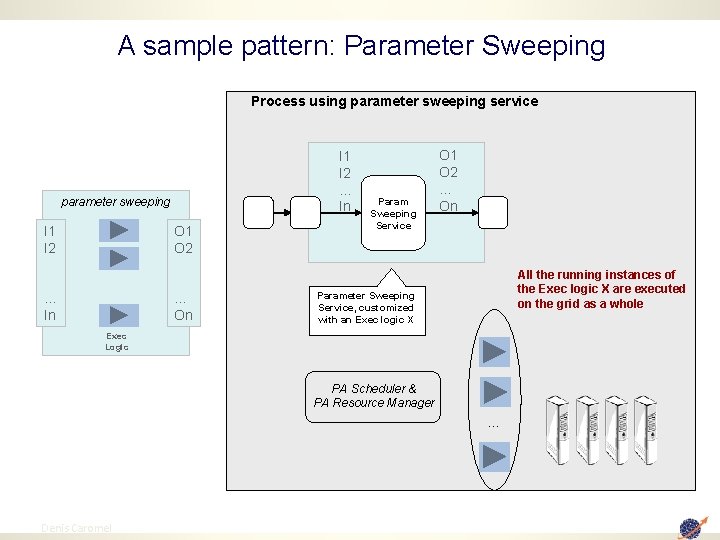

A sample pattern: Parameter Sweeping
A process using the parameter sweeping service sends inputs I1, I2, …, In and receives outputs O1, O2, …, On
Parameter Sweeping Service, customized with an Exec logic X
All the running instances of the Exec logic X are executed on the grid as a whole
Underlying layer: PA Scheduler & PA Resource Manager
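The pattern amounts to applying one function to every input and returning the outputs in the same order. A minimal local sketch, assuming a parallel stream stands in for the grid and with `ParameterSweepSketch` and `sweep` as hypothetical names (this is not the ProActive service interface):

```java
import java.util.List;
import java.util.function.Function;
import java.util.stream.Collectors;

// Hypothetical sketch (not the ProActive service API): the same Exec logic X is
// applied to every input I1..In, and outputs O1..On come back in input order.
class ParameterSweepSketch {

    static <I, O> List<O> sweep(List<I> inputs, Function<I, O> execLogic) {
        return inputs.parallelStream()         // instances may run concurrently
                .map(execLogic)
                .collect(Collectors.toList()); // encounter order is preserved
    }

    public static void main(String[] args) {
        System.out.println(sweep(List.of(1, 2, 3, 4), x -> x * x));
    }
}
```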

Video: SOA Integration: Web Services, BPEL Workflow

6. J2EE Integration
Florin Alexandru Bratu, OASIS Team - INRIA

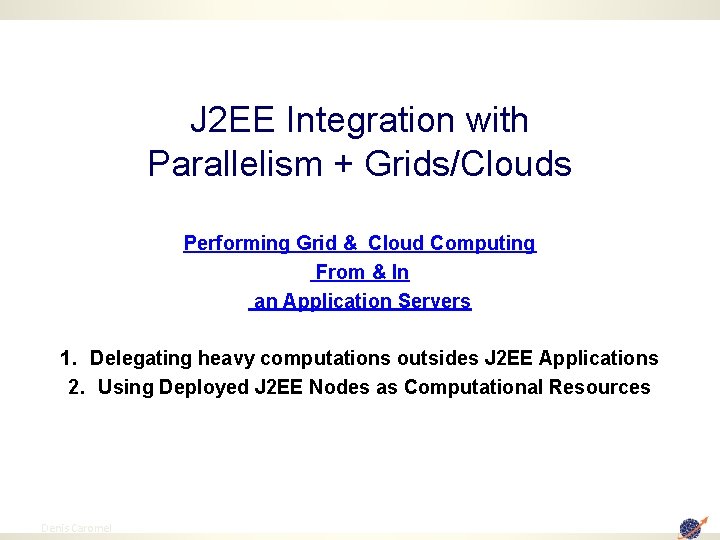

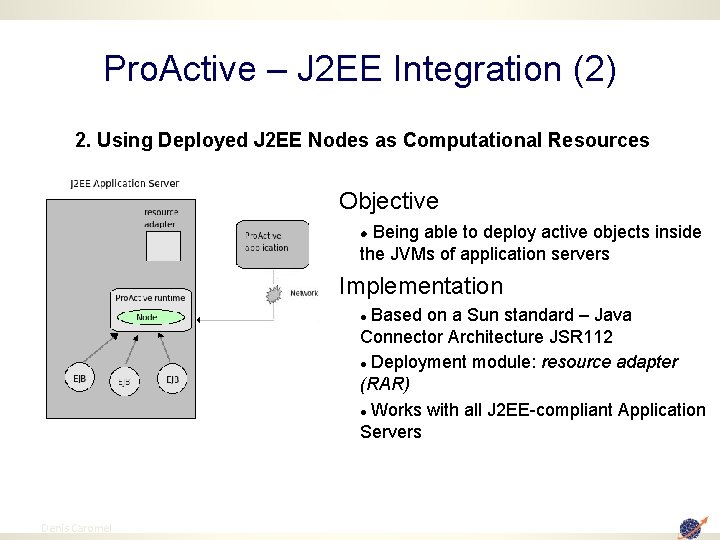

J2EE Integration with Parallelism + Grids/Clouds
Performing Grid & Cloud Computing From & In Application Servers:
1. Delegating heavy computations outside J2EE Applications
2. Using Deployed J2EE Nodes as Computational Resources

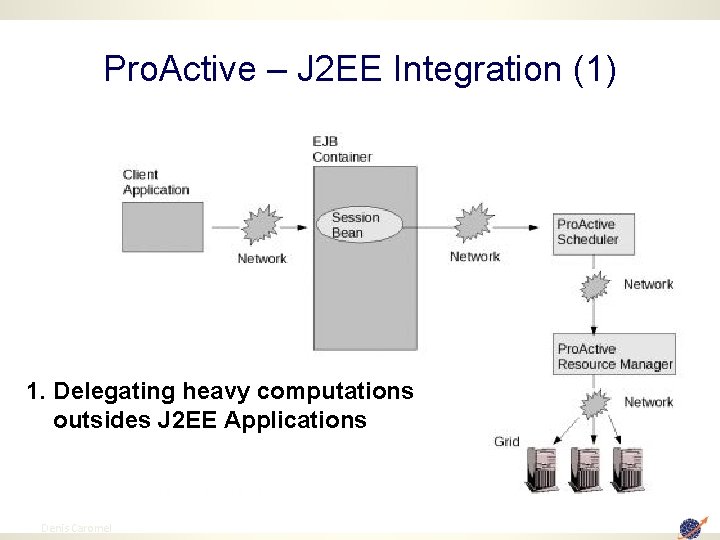

ProActive – J2EE Integration (1)
1. Delegating heavy computations outside J2EE Applications

ProActive – J2EE Integration (2)
2. Using Deployed J2EE Nodes as Computational Resources
Objective: being able to deploy active objects inside the JVMs of application servers
Implementation:
• Based on a Sun standard: Java Connector Architecture, JSR 112
• Deployment module: resource adapter (RAR)
• Works with all J2EE-compliant Application Servers

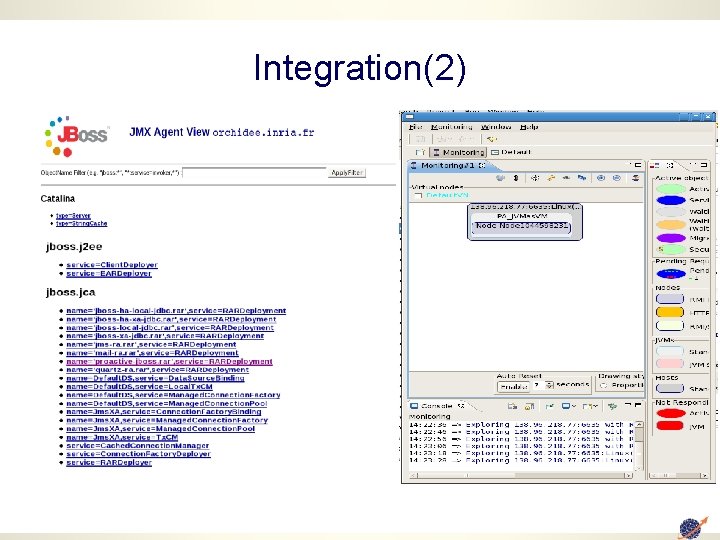

ProActive – J2EE Integration (2)

Grids & Clouds: Amazon EC2 Deployment

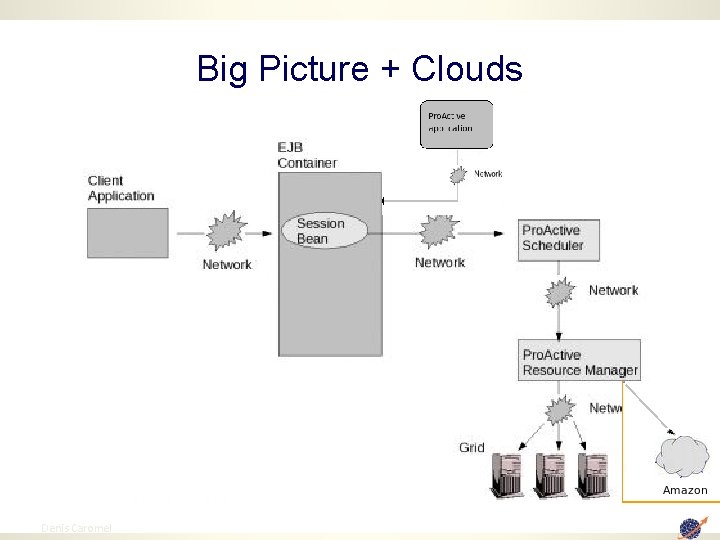

Big Picture + Clouds

Clouds: ProActive Amazon EC2 Deployment
Principles & Achievements:
• ProActive Amazon Images (AMI) on EC2
• So far up to 128 EC2 Instances (indeed the maximum on the EC2 platform, … ready to try 4,000 AMI)
• Seamless Deployment: no application change, no scripting, no pain
Opens the road to: In-house Enterprise Cluster and Grid + Scale out on EC2

ProActive Deployment on Amazon EC2: Video


P2P: Programming Models on Overlay Networks


